Zero Volume Keywords in Academic Publishing: The Untapped SEO Strategy for Researchers

Abstract

This article demystifies zero-volume keywords for researchers, scientists, and drug development professionals. It explores the foundational concept of these overlooked search terms, provides a methodological guide for discovering and applying them in an academic context, addresses common challenges in implementation, and validates their effectiveness through strategic comparison with traditional high-volume keywords. The goal is to equip scholars with the tools to enhance the online visibility and impact of their published work, protocols, and datasets by targeting highly specific, low-competition search queries.

Beyond the Metrics: Defining Zero Volume Keywords for the Academic Audience

What Are Zero-Volume Keywords? Demystifying the 'Zero' in Search Data

In the competitive landscape of academic publishing, visibility is a critical determinant of a research paper's impact. This technical guide examines zero-volume keywords (search queries that tools report as having no monthly search volume) as an untapped strategic asset for researchers, scientists, and drug development professionals. We argue that these terms represent highly specific, low-competition opportunities to connect with target audiences, counteracting the prevalent "zero-click" trend in search and enhancing the discoverability of scholarly work in an increasingly digital-first environment.

Zero-volume keywords are search terms that keyword research tools (e.g., Ahrefs, SEMrush) report as having little to no monthly search volume [1]. Contrary to initial assumptions, this classification does not necessarily mean these queries are never searched. Rather, it often indicates they are:

- Highly specific long-tail phrases too niche for tools to capture reliable data [2]

- Emerging scientific terminology not yet established in public search databases [1]

- Ultra-specific research queries used by specialists seeking precise information [3]

For the research community, these keywords often represent the exact language of scientific inquiry—specific methodology names, compound identifiers, technical problem statements, or nascent field jargon. This guide provides a systematic framework for identifying and leveraging these terms to increase the reach and citation potential of academic publications.

The Academic Context: Why Zero Search Volume Matters for Researchers

The Strategic Value Proposition

| Strategic Advantage | Mechanism | Research Application Example |
|---|---|---|
| Lower Competition | Fewer websites target these keywords, making ranking easier [1] | Ranking for "CD19 CAR-T cell persistence in pediatric B-ALL" vs. "CAR-T therapy" |
| High Relevance | Matches precise user intent [1] | Researcher seeking specific protocol troubleshooting |
| Niche Audience Targeting | Attracts specialized researchers in specific sub-fields [1] | Connecting with scientists studying "tau protein aggregation in chronic TBI" |
| Early Trend Adoption | Positions work before terms gain popularity [4] | Publishing on emerging topics like "agentic AI for literature review" before volume appears |

Addressing the Modern Search Paradigm

The academic search environment has been transformed by two significant developments:

- Zero-Click Searches: Approximately 60% of Google searches now end without a click to external websites, rising to 77% on mobile devices [5]. Users frequently find answers directly in search results.

- AI Overview Impact: Google's AI Overviews appear for 13.14% of queries (as of 2025), with click-through rates dropping 47% when these AI summaries are present [5].

This paradigm makes targeting precise, answer-oriented queries essential for research visibility. Zero-volume keywords often represent the specific questions that AI Overviews are designed to address, creating opportunities for citation within these summaries.

Methodologies: Systematic Discovery of Zero-Volume Keywords

Experimental Protocol 1: Digital Tool Analysis

Objective: Identify zero-volume keywords using automated tools and data analysis.

Table: Digital Discovery Tools and Applications

| Tool | Primary Function | Research Application | Output Metrics |
|---|---|---|---|
| Google Search Console | Shows actual search terms driving impressions to your domain [1] | Identify queries already generating interest in your published work | Queries with impressions but low reported volume |
| AnswerThePublic | Generates questions related to seed keywords [1] | Discover research questions being asked in your field | Question variations, prepositions, comparisons |
| Google Trends | Identifies emerging search patterns [2] [3] | Spot rising interest in novel methodologies or discoveries | Relative interest over time, related topics |
| Pinterest Trends | Reveals visual search patterns [2] | Understand how complex concepts are discovered visually | Visual search patterns, emerging topics |

Procedure:

- Export search query data from Google Search Console for your institutional repository

- Filter for queries with >10 impressions but low click-through rates

- Cross-reference these terms with keyword tools to identify reported "zero-volume" queries

- Cluster identified terms by research topic or methodology

- Prioritize based on alignment with your publication portfolio
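The filtering and cross-referencing steps above can be sketched in Python. This is a minimal illustration, not a tool described by the cited sources: it assumes a CSV export with Search Console-style column names (`Top queries`, `Clicks`, `Impressions`), treats a "low click-through rate" as anything below 2% (an arbitrary threshold, since the procedure does not specify one), and represents keyword-tool volumes as a hypothetical dictionary built from your own tool's export.

```python
import csv
import io

# Column names are an assumption modelled on a Search Console "Queries" export;
# rename them to match the headers in your own file.
QUERY_COL = "Top queries"
CLICKS_COL = "Clicks"
IMPRESSIONS_COL = "Impressions"


def to_int(value):
    """Parse counts that may contain thousands separators, e.g. '1,200'."""
    return int(value.replace(",", "").strip())


def low_ctr_queries(csv_text, min_impressions=10, max_ctr=0.02):
    """Return (query, impressions, ctr) for queries with more than
    `min_impressions` impressions and a click-through rate below `max_ctr`."""
    selected = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        impressions = to_int(row[IMPRESSIONS_COL])
        if impressions <= min_impressions:
            continue
        # Recompute CTR from the raw counts instead of parsing the "2.5%" string.
        ctr = to_int(row[CLICKS_COL]) / impressions
        if ctr < max_ctr:
            selected.append((row[QUERY_COL], impressions, ctr))
    # Most-seen queries first, as a rough priority order.
    return sorted(selected, key=lambda item: item[1], reverse=True)


def zero_volume_candidates(queries, tool_volumes):
    """Keep queries that a keyword tool reports as 0 or does not list at all.
    `tool_volumes` is a hypothetical {query: monthly_volume} mapping."""
    return [q for q, _, _ in queries if tool_volumes.get(q.lower(), 0) == 0]


# Hypothetical export, for illustration only.
export = """Top queries,Clicks,Impressions,CTR,Position
cd19 car-t persistence pediatric b-all,0,48,0%,12.3
car-t therapy,5,900,0.56%,8.1
tau aggregation chronic tbi,1,15,6.67%,9.0
"""

low_ctr = low_ctr_queries(export)
print(zero_volume_candidates(low_ctr, {"car-t therapy": 12000}))
```

Here the broad query is dropped because the keyword tool reports volume for it, while the long-tail query survives as a candidate to cluster and prioritize in the final two steps.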

Experimental Protocol 2: Human Intelligence Gathering

Objective: Leverage domain expertise and community knowledge to uncover zero-volume keywords.

Procedure:

- Internal Knowledge Harvesting:

  - Conduct structured interviews with research team members

  - Document terminology used in lab meetings and scholarly discussions

  - Compile questions from peer reviewers and conference presentations

- Academic Community Monitoring:

  - Analyze discussions on platforms like ResearchGate and discipline-specific forums (note that PubMed Commons was discontinued in 2018)

  - Monitor questions asked following seminar and conference presentations

  - Track search terms used in institutional repository analytics

- Literature Gap Analysis:

  - Identify limitations sections in recent high-impact publications

  - Document future research directions suggested in review articles

  - Note methodological challenges described in methods sections

Table: Essential Research Reagents for Keyword Discovery

| Tool/Category | Specific Examples | Research Function |
|---|---|---|
| Analytical Tools | Google Search Console, Google Trends [1] [3] | Quantitative analysis of existing search patterns |
| Community Platforms | ResearchGate, lab forums (PubMed Commons was discontinued in 2018) [2] [3] | Source of authentic researcher language and questions |
| Question Databases | "People Also Ask" boxes, AnswerThePublic [1] [3] | Repository of common research questions |
| Internal Sources | Peer review comments, Conference Q&A [2] | Direct feedback on knowledge gaps |

Implementation Framework: Optimizing Academic Content

Content Integration Strategies

Semantic Optimization Protocol:

- Create comprehensive content addressing specific research questions

- Naturally incorporate zero-volume keywords in:

  - Article titles and subtitles

  - Abstract and keyword sections

  - Methods descriptions

  - Discussion limitations

  - Future research directions

Content Cluster Model:

- Develop pillar pages covering broad research areas

- Create cluster content targeting specific zero-volume queries

- Implement cross-linking to establish topical authority

Technical Optimization for Academic Platforms

Institutional Repository Optimization:

- Ensure metadata includes zero-volume keyword variations

- Optimize PDF alt-text for figures and tables

- Implement schema markup for research documentation
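For the schema markup step, one common option is schema.org's `ScholarlyArticle` type embedded in the landing page as JSON-LD. The sketch below assumes that choice and uses hypothetical article metadata (title, author names, date, and a placeholder DOI); repositories may already emit their own metadata, so check for duplicates before adding markup.

```python
import json

# Hypothetical metadata for illustration; replace with your article's details.
article = {
    "title": "CD19 CAR-T cell persistence in pediatric B-ALL",
    "authors": ["A. Researcher", "B. Collaborator"],
    "date_published": "2024-05-01",
    "doi": "10.1234/example.5678",  # placeholder, not a real DOI
    "keywords": [
        "CD19 CAR-T cell persistence",
        "pediatric B-ALL",
        "CAR-T therapy",
    ],
}


def scholarly_article_jsonld(meta):
    """Build a schema.org ScholarlyArticle object and return it as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "ScholarlyArticle",
        "headline": meta["title"],
        "author": [{"@type": "Person", "name": name} for name in meta["authors"]],
        "datePublished": meta["date_published"],
        "identifier": f"https://doi.org/{meta['doi']}",
        # schema.org accepts keywords as a comma-separated string.
        "keywords": ", ".join(meta["keywords"]),
    }
    return json.dumps(data, indent=2)


# The output would be placed inside <script type="application/ld+json"> ... </script>
print(scholarly_article_jsonld(article))
```

Listing both the zero-volume phrase and the broader term in `keywords` mirrors the metadata step above: the niche variant is present without dropping the general one.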

Case Studies and Experimental Validation

Documented Success Metrics

Table: Zero-Volume Keyword Implementation Results

| Implementation Context | Strategy | Outcome | Timeframe |
|---|---|---|---|
| Sustainable Product Research [1] | Targeted "organic bamboo sleepwear benefits" | 45% increase in niche traffic | 6 months |
| SaaS Startup [3] | Focused on zero-volume technical queries | 1,300+ signups | 6 months |
| B2B Agency Project [3] | Comprehensive zero-volume keyword strategy | Strong conversion results | 2 months |

Academic Research Applications

A neuroscience research group targeting "tau protein aggregation mechanisms in chronic traumatic encephalopathy" (a zero-volume keyword) rather than only "Alzheimer's research" experienced:

- Higher-quality collaboration requests

- More specific citation contexts

- Increased invitations for methodology-focused presentations

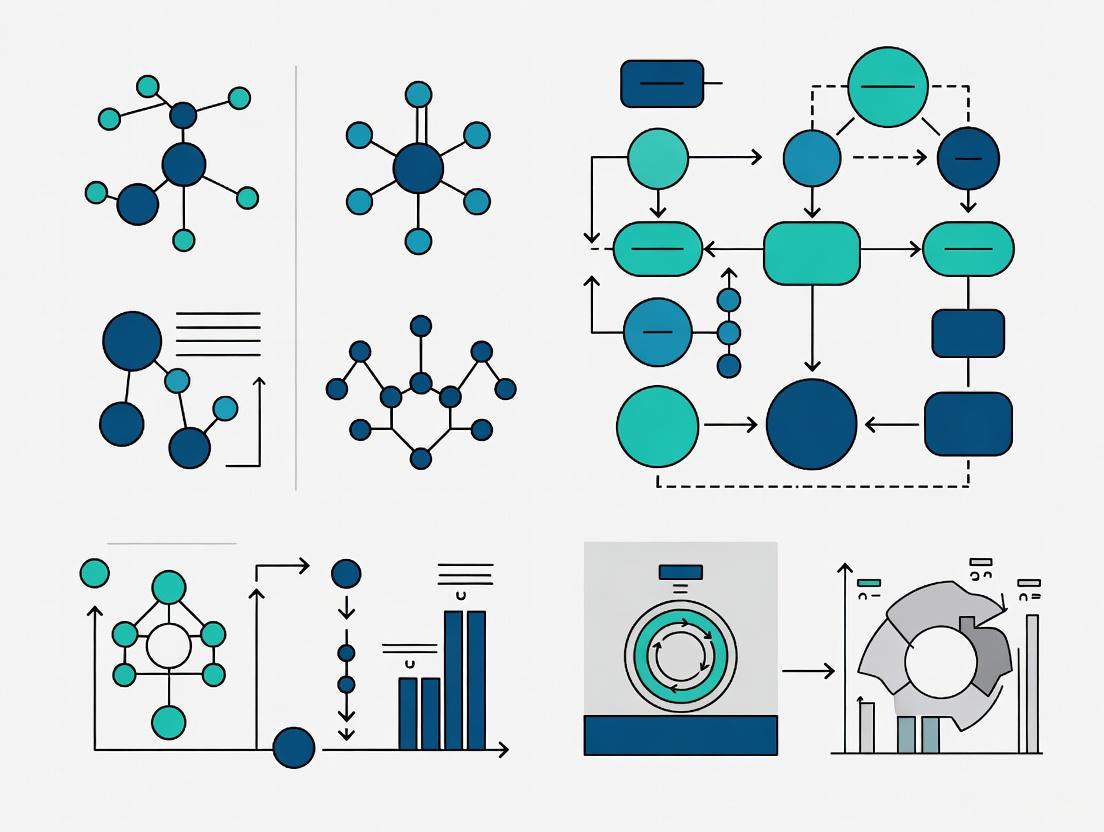

Visualizing the Zero-Volume Keyword Research Workflow

Zero-volume keywords represent a strategic opportunity for researchers to enhance the discoverability of their work in an increasingly competitive digital landscape. By systematically identifying and incorporating these highly specific terms into publication strategies, research teams can connect with their most relevant audiences despite the challenges posed by zero-click search trends and AI-generated summaries. The methodologies presented herein provide a reproducible framework for implementing this approach across diverse research domains, potentially increasing both the reach and impact of scholarly communications.

Future research should quantify the citation impact of such strategies and develop discipline-specific protocols for major research areas. As search technologies continue to evolve, maintaining the visibility of specialized research will require ongoing adaptation of these fundamental principles.

In the specialized realm of academic and scientific publishing, traditional keyword research tools frequently report zero search volume for highly specific research terms. This phenomenon does not indicate a lack of scholarly interest but rather stems from fundamental limitations in data sampling methodologies used by commercial tools, which are optimized for broad, high-volume consumer searches rather than precise, niche academic queries. This technical guide deconstructs the algorithmic and data-processing pipelines responsible for these reporting gaps and provides experimental protocols for validating true search demand within research communities, empowering scientists, researchers, and publishers to accurately map the landscape of scholarly inquiry.

For researchers and drug development professionals, disseminating findings to the correct audience is paramount. The pursuit of keywords such as "ferritic nitrocarburizing for EV components" or specific "gene editing pipelines" often leads to a dead end in conventional keyword tools, which report zero monthly search volume [6]. This creates a significant discrepancy between perceived and actual relevance in academic publishing.

These tools, including Semrush and Ahrefs, primarily draw data from the Google Ads API, an ecosystem designed for commercial advertising, not scholarly traffic analysis [6]. Consequently, their data collection is biased toward high-volume, transactional queries and systematically under-reports or aggregates the long-tail, specific phrases characteristic of academic research. Approximately 15% of searches Google processes daily are entirely new, further ensuring that emerging scientific terms are absent from historical datasets [2]. This whitepaper investigates the technical foundations of this gap and provides a rigorous methodology for uncovering genuine scholarly search intent.

Technical Limitations of Keyword Data Aggregation

The reporting of zero search volume is not a measure of zero interest but an artifact of how commercial tools sample and process internet-wide search data. The core limitations can be categorized as follows.

Data Sampling and Aggregation Artifacts

Commercial keyword tools do not have access to the full firehose of search data. They rely on sampled data and often group highly similar queries into a single, more general term, a process that obscures niche, long-tail phrases [7]. For instance, a specific query like "in-vivo efficacy of PD-1 inhibitors in triple-negative breast cancer" might be rolled up into a broader category like "PD-1 inhibitor research," causing the original, precise term to disappear from volume metrics [1].

Table 1: Primary Data Sources and Their Limitations for Academic Research

| Data Source | Primary Function | Inherent Limitation for Niche Research |
|---|---|---|
| Google Keyword Planner | Advertising Bid Tool | Groups similar keywords, inflating volume for general terms and hiding niche ones [7]. |
| Google Search Console | Site Performance Tool | Only shows data for keywords a site already ranks for; useless for discovering new topics [7]. |
| Google Trends | Interest Over Time Tool | Provides relative interest (0-100 scale) but no absolute search volume, and filters out low-volume searches [7]. |
| Tool Proprietary Blends | SEO Keyword Metrics | Combine the above flawed sources, perpetuating sampling gaps in niche fields [7]. |

Temporal and Geographic Data Lags

Keyword research tools estimate volume based on historical data and may not capture emerging trends or newly published terms for months [1]. In fast-moving fields like drug development, a new compound's name will not appear in these tools until long after research interest has begun. Furthermore, data is often aggregated at a national level, diluting the visible demand for specialized research that may be concentrated in specific geographic hubs (e.g., "Basel pharmaceutical research") [2].

Experimental Protocol: Validating Search Demand for Niche Academic Terms

To overcome the limitations of commercial tools, a systematic, multi-method validation protocol is required. The following workflow provides a replicable methodology for researchers to ascertain genuine search interest in their field's specific terminology.

Diagram 1: Search Demand Validation Workflow

Phase 1: Internal Data Mining

Objective: To uncover the precise language used by the target academic community through direct and indirect feedback.

- Method - Internal Search Log Analysis: Scrutinize the internal search function of your own institutional or publisher website. The queries users enter are explicit indicators of unmet information needs and are often composed of highly specific, zero-volume terminology [8].

- Method - Analysis of Support & Correspondence: Mine emails, chat logs, and inquiry forms from your research institution's library, communications department, or corporate partnership office. The questions posed by students, collaborators, and journalists are a goldmine of authentic search language [2]. For example, a question like "What are the latest CAR-T cell trials for pediatric AML?" is a direct reflection of search intent.

- Expected Outcome: A list of candidate keywords and question phrases that reflect the genuine, unfiltered language of your academic audience.
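A minimal sketch of the internal search log analysis follows. It assumes your site-search tool can export each query together with the number of results it returned; that log format is hypothetical, so adapt it to whatever your platform records. Queries that are searched repeatedly but return nothing are the clearest signal of unmet information needs.

```python
import re
from collections import Counter


def normalize(query):
    """Lowercase and collapse whitespace so trivial variants are counted together."""
    return re.sub(r"\s+", " ", query.strip().lower())


def summarize_site_search(log):
    """Summarize a site-search log given as (query, result_count) pairs.

    Returns (query, times_searched, times_with_no_results) tuples,
    most frequently searched first."""
    searches = Counter()
    no_results = Counter()
    for query, result_count in log:
        key = normalize(query)
        searches[key] += 1
        if result_count == 0:
            no_results[key] += 1
    # Counter returns 0 for queries that never came back empty.
    return [(q, n, no_results[q]) for q, n in searches.most_common()]


# Hypothetical log entries, for illustration only.
log = [
    ("CAR-T trials pediatric AML", 0),
    ("car-t  trials pediatric aml", 0),
    ("PD-1 inhibitor TNBC in vivo", 3),
    ("car-t trials pediatric AML", 2),
]
for row in summarize_site_search(log):
    print(row)
```

Normalizing before counting matters here: the three spellings of the CAR-T query collapse into one candidate keyword searched three times, twice without results.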

Phase 2: External Community Analysis

Objective: To observe terminology and questions being discussed in open, specialized academic forums.

- Method - Forum Scraping and Analysis: Systematically collect data from specialized online communities where researchers congregate, such as:

  - Reddit: Subreddits like r/science, r/biotech, and r/PhD.

  - Q&A Sites: Stack Exchange communities (e.g., Biology and Bioinformatics Stack Exchange) and similar Q&A sites such as Biostars and ResearchGate.

  - Professional Networks: Specific groups on LinkedIn or Twitter/X [2].

- Protocol:

  - Identify 3-5 key forums relevant to your research domain.

  - Use advanced search operators (e.g., site:reddit.com "immunotherapy resistance") to find discussion threads.

  - Extract recurring questions, terminology, and problem statements. The frequency of a topic's appearance is a proxy for search demand, even if that demand is invisible to keyword tools [8].

- Expected Outcome: Validation of candidate keywords and discovery of new, related terms based on live community discourse.
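The frequency proxy in the protocol can be computed as the number of collected threads that mention each candidate term. The sketch assumes you have already gathered thread text by hand or through a platform's own export tools; the thread snippets and terms below are invented for illustration, and plain substring matching will miss synonyms and abbreviations.

```python
from collections import Counter


def thread_frequency(threads, candidate_terms):
    """Count how many threads mention each candidate term at least once.

    Matching is a case-insensitive substring test. Counting threads rather
    than raw mentions keeps one long discussion from dominating the tally."""
    counts = Counter()
    for text in threads:
        lowered = text.lower()
        for term in candidate_terms:
            if term.lower() in lowered:
                counts[term] += 1
    # Terms never seen are reported with a count of zero.
    return {term: counts[term] for term in candidate_terms}


# Hypothetical thread snippets and terms, for illustration only.
threads = [
    "Anyone seen immunotherapy resistance linked to B2M loss?",
    "Immunotherapy resistance in MSS colorectal: what models do you use?",
    "Best pipeline for CRISPR off-target detection?",
]
terms = ["immunotherapy resistance", "CRISPR off-target", "base editing"]
print(thread_frequency(threads, terms))
```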

Phase 3: Trend and SERP Analysis

Objective: To use alternative tools to gauge interest and analyze the content landscape for a given term.

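- Method - Google Trends Analysis:

  - Input candidate keywords into Google Trends.

  - While it provides no absolute volume, a score above 0 indicates detectable interest. A rising trend line can signal an emerging field of study before it registers in volume-based tools [2].

- Method - Analysis of Search Engine Results Pages (SERPs):

  - Search each candidate term and record the "People Also Ask" questions and related searches shown alongside the results, together with the kinds of pages that currently rank for it.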

- Expected Outcome: A qualitative measure of interest and a network of related terms to target.
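To make the Trends checks concrete, the sketch below takes a series of 0-100 relative-interest values (for example, copied from the CSV that Google Trends offers for download) and reports whether any point is above zero, together with the least-squares slope of the series. Treating a positive slope as "rising" is a simplifying assumption; Trends sometimes reports values such as "<1", which would need converting to numbers first.

```python
def trend_summary(values):
    """Summarize a Google Trends relative-interest series (0-100 scale).

    Returns (detectable, slope): `detectable` is True if any point is above 0,
    and `slope` is the ordinary least-squares slope per time step, a rough
    signal of rising (> 0) or falling (< 0) interest."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two points to estimate a trend")
    mean_x = (n - 1) / 2          # time steps are 0, 1, ..., n-1
    mean_y = sum(values) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    var = sum((x - mean_x) ** 2 for x in range(n))
    return any(v > 0 for v in values), cov / var


# Hypothetical weekly values for an emerging method name.
print(trend_summary([0, 0, 10, 20, 30]))
```

A term with `detectable` False is not necessarily unsearched, for the reasons given in Table 1; it only means Trends does not surface it.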

The Scientist's Toolkit: Research Reagent Solutions

To effectively implement the validation protocol, researchers should leverage the following digital tools and resources.

Table 2: Essential Digital Toolkit for Academic Keyword Research

| Tool / Resource | Function | Application in Academic Context |
|---|---|---|
| Google Search Console | Performance Reporting | Analyze which academic search queries already drive traffic to your lab or publisher site, revealing niche terms [1]. |
| Google Trends | Interest Trend Analysis | Track the relative rise of new methodologies (e.g., "CRISPR prime editing") over time, even without volume data [2]. |
| AnswerThePublic | Question Aggregation | Visualizes questions people ask about a topic, uncovering specific research problems and knowledge gaps [1]. |
| PubMed / Google Scholar | Citation Analysis | While not a source of search volume, high citation counts for papers using specific terms indicate high academic relevance and discourse. |
| Reddit & ResearchGate | Community Listening | Provides direct access to the language, questions, and problems discussed by active researchers [2]. |

Strategic Implementation for Academic Visibility

Once validated, zero-volume keywords must be strategically deployed. This involves creating content that aligns perfectly with the searcher's intent and building topical authority.

Content Optimization for Specific Search Intent

The intent behind academic searches is predominantly informational or navigational (seeking a specific known entity or researcher) [9]. Content must be crafted to satisfy this intent directly.

- Create Dedicated Pages for Specific Concepts: Instead of only writing about "cancer immunotherapy," create deep-dive pages or blog posts targeting precise phrases like "mechanisms of PD-L1 upregulation in NSCLC" [1].

- Leverage the "People Also Ask" Section: Use the PAA questions discovered during SERP analysis as subheadings (H2s, H3s) within your articles, directly answering the questions your peers are asking [6].

- Incorporate QPFF-MAGIC Framework: Structure content around a user persona's Questions, Problems, Frustrations, Fears, Myths, Alternatives, Goals, Interests, and Concerns. This ensures comprehensive coverage of a topic [6].

Building Topical Authority

Search engines increasingly prioritize websites that demonstrate expertise on a specific topic cluster. For researchers and academic publishers, this means creating a network of interlinked content that thoroughly covers a research domain.

Diagram 2: Topical Authority Cluster Model

- Strategy - Content Clustering:

  - Identify Pillar Topic: A broad, core research area (e.g., "Gene Editing").

  - Create Cluster Content: Develop multiple pieces of content (articles, notes, videos) that cover specific subtopics and answer long-tail questions (e.g., "CRISPR off-target effects detection methods," "base editing vs prime editing") [9].

  - Interlinking: Robustly interlink these cluster pages to the pillar page and to each other. This creates a semantic network that signals to search engines your deep expertise on the overarching topic [10].
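The interlinking rule can be checked mechanically once you have a map of which pages link to which. The sketch below assumes such a map (for example, built from a site crawl) and uses hypothetical URLs; it reports missing pillar-to-cluster and cluster-to-pillar links, plus cluster pages that link to no sibling cluster page.

```python
def missing_links(pillar, clusters, links):
    """Audit a pillar/cluster content model.

    `links` maps each page URL to the set of URLs it links to. Returns the
    sorted (source, target) links the model expects but the pages lack:
    pillar -> cluster and cluster -> pillar, for every cluster page."""
    missing = []
    for page in clusters:
        if page not in links.get(pillar, set()):
            missing.append((pillar, page))
        if pillar not in links.get(page, set()):
            missing.append((page, pillar))
    return sorted(missing)


def isolated_clusters(clusters, links):
    """Cluster pages that do not link to any other cluster page."""
    cluster_set = set(clusters)
    return sorted(p for p in clusters if not (links.get(p, set()) & (cluster_set - {p})))


# Hypothetical site structure, for illustration only.
pillar = "/gene-editing"
clusters = ["/crispr-off-target-detection", "/base-vs-prime-editing"]
links = {
    "/gene-editing": {"/crispr-off-target-detection"},
    "/crispr-off-target-detection": {"/gene-editing", "/base-vs-prime-editing"},
    "/base-vs-prime-editing": set(),
}
print(missing_links(pillar, clusters, links))
print(isolated_clusters(clusters, links))
```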

The "zero search volume" designation in keyword tools is a significant data gap, not a reflection of a term's true value in academic research. This gap arises from the commercial biases and sampling limitations inherent in the data sources these tools rely upon. For scientists, researchers, and academic publishers, the path forward requires a paradigm shift: away from reliance on flawed volume metrics and toward a multi-method validation approach that prioritizes the authentic language of their scholarly community. By employing the experimental protocols and strategic implementations outlined in this guide, professionals can cut through the noise of inaccurate data, ensure their critical research is discovered by the right audience, and ultimately accelerate the pace of scientific collaboration and innovation.

In the rapidly evolving landscape of academic publishing, a paradigm shift is underway toward targeting highly specialized search queries that conventional keyword tools report as having zero search volume. These ultra-specific queries—often long-tail, niche, or emerging terms—represent significant, unmet needs in scholarly communication, offering a mechanism to connect specialized research with precisely seeking audiences. This whitepaper delineates the critical role of zero-volume keywords in academic research, provides data-driven methodologies for their identification, and presents experimental protocols for their integration into research dissemination strategies. By leveraging these approaches, researchers, scientists, and drug development professionals can enhance the discoverability of their work, target niche audiences with precision, and systematically address gaps in the scientific literature.

Zero-volume keywords are search terms that keyword research tools report as having little to no monthly search volume [1] [11]. In academic contexts, these often represent highly specific research concepts, emerging methodologies, or niche specializations that fall below the detection thresholds of commercial keyword databases. The reporting of zero search volume frequently stems from methodological limitations in data aggregation rather than a genuine absence of researcher interest [12] [6]. Approximately 15% of daily Google searches are entirely new [6], suggesting a vast landscape of unmet information needs, particularly in fast-moving scientific fields.

The strategic value of these terms lies in their specificity and alignment with precise researcher intent. Unlike broad disciplinary terms, zero-volume keywords typically function as academic long-tail keywords with three distinguishing characteristics:

- High Precision: They mirror the exact terminology used in specialized research domains [1]

- Low Competition: They face significantly less competition in search engine results pages (SERPs) [8] [13]

- Contextual Relevance: They reflect the authentic language of specific research communities [2]

Quantitative analysis shows that author-selected keywords in scientific publications are distributed in distinct patterns across the channels that influence keyword choice, as shown in Table 1, which has implications for choosing keywords that support discoverability.

Quantitative Analysis of Author Keyword Behavior

Empirical research on author keyword selection behavior provides critical insights into how researchers conceptualize and tag their work. Analysis of scholarly publications reveals three primary channels that influence keyword selection: content channels, prior knowledge channels, and background channels [14].

Table 1: Distribution of Author Keywords Across Influence Channels

| Influence Channel | Definition | Average Percentage of Author Keywords | Correlation with Citation Impact |
|---|---|---|---|
| Content Channel | Keywords appearing in the paper's title and/or abstract | 56.7% | Negative correlation |
| Prior Knowledge Channel | Keywords appearing in references | 41.6% | Positive correlation for core authors |
| Background Channel | Keywords appearing in high-frequency disciplinary keywords | 56.1% | Positive correlation |

The data reveal that core authors (productive researchers) show distinct keyword selection behavior: their chosen keywords appear less frequently in the immediate content channel (title/abstract) but are better represented in the prior knowledge and background channels [14]. This pattern correlates with higher citation impact, particularly when keywords align with high-frequency disciplinary terms (background channel), where a positive relationship with citation counts is observed [14]. Because a single keyword can appear in more than one channel, the percentages in Table 1 do not sum to 100%.
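To make the three channel definitions concrete, the following sketch computes, for a single paper, the share of its author keywords found in each channel. It assumes a simple case-insensitive substring match and a precompiled list of high-frequency disciplinary keywords; the cited study [14] may operationalize the channels differently, and the example paper is invented.

```python
def channel_shares(author_keywords, title, abstract, reference_titles, frequent_keywords):
    """Fraction of a paper's author keywords found in each influence channel.

    content: keyword occurs in the title or abstract;
    prior_knowledge: keyword occurs in the reference titles;
    background: keyword is in the high-frequency disciplinary list.
    A keyword can fall in several channels, so the shares need not sum to 1."""
    content_text = f"{title} {abstract}".lower()
    reference_text = " ".join(reference_titles).lower()
    frequent = {k.lower() for k in frequent_keywords}
    hits = {"content": 0, "prior_knowledge": 0, "background": 0}
    for kw in author_keywords:
        k = kw.lower()
        hits["content"] += int(k in content_text)
        hits["prior_knowledge"] += int(k in reference_text)
        hits["background"] += int(k in frequent)
    n = len(author_keywords)
    return {channel: count / n for channel, count in hits.items()}


# Hypothetical paper, for illustration only.
shares = channel_shares(
    author_keywords=["tau aggregation", "chronic traumatic encephalopathy", "neuroinflammation", "TBI"],
    title="Tau aggregation mechanisms in chronic traumatic encephalopathy",
    abstract="We model tau aggregation after repeated head impacts.",
    reference_titles=["Neuroinflammation after TBI", "Tau pathology in CTE"],
    frequent_keywords=["TBI", "neuroinflammation", "Alzheimer disease"],
)
print(shares)
```

The shares sum to 1.5 in this example because two keywords sit in both the prior knowledge and background channels, the same overlap that makes Table 1's percentages exceed 100% in total.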

Methodological Framework: Identifying Academic Zero-Volume Keywords

Systematic Discovery Protocols

Implementing structured methodologies for identifying valuable zero-volume keywords helps ensure comprehensive coverage of potential research queries. The protocols that follow provide a replicable workflow for academic researchers.

Experimental Validation Techniques

Each methodology requires specific validation approaches to assess potential impact:

Protocol 3.2.1: Reference List Snowball Analysis

- Objective: Identify terminology gaps in existing literature

- Materials: Key papers in target domain, citation databases

- Procedure:

  - Select 5-10 seminal papers in research domain

  - Extract all references using bibliometric software

  - Analyze keyword frequency across reference lists

  - Identify conceptual connections missing from seminal papers

  - Compile list of under-represented terms for content development

- Validation: Cross-reference with Google Scholar citation patterns
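The frequency-analysis and gap-identification steps can be approximated as follows, assuming each reference's keywords have already been exported from a citation database (the keyword lists here are hypothetical). A term counts as under-represented when several references use it but none of the seminal papers do; the `min_refs` threshold is an arbitrary choice.

```python
from collections import Counter


def underrepresented_terms(reference_keywords, seminal_keywords, min_refs=2):
    """Terms used by at least `min_refs` references but by none of the seminal papers.

    `reference_keywords`: one keyword list per reference.
    `seminal_keywords`: one keyword list per seminal paper.
    Returns (term, reference_count) pairs, most widely used first."""
    # Lowercase, then count each term at most once per reference.
    ref_counts = Counter(
        term for kws in reference_keywords for term in {t.lower() for t in kws}
    )
    in_seminal = {t.lower() for kws in seminal_keywords for t in kws}
    gaps = [(t, c) for t, c in ref_counts.items() if c >= min_refs and t not in in_seminal]
    # Sort by descending count, then alphabetically for stable output.
    return sorted(gaps, key=lambda item: (-item[1], item[0]))


# Hypothetical keyword exports, for illustration only.
references = [["Tau", "Microglia", "TBI"], ["tau", "microglia"], ["Microglia", "Exosomes"], ["Exosomes", "TBI"]]
seminal = [["Tau", "TBI"], ["CTE"]]
print(underrepresented_terms(references, seminal))
```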

Protocol 3.2.2: Community Discourse Mining

- Objective: Extract researcher needs from academic forums

- Materials: Academic social platforms (ResearchGate, disciplinary forums)

- Procedure:

  - Identify 3-5 relevant community platforms

  - Extract question threads using platform APIs where available, or by manual export

  - Categorize questions by research phase (design, methodology, analysis)

  - Cluster similar questions to identify knowledge gaps

  - Translate common questions into target keyword phrases

- Validation: Frequency analysis of question patterns across platforms
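The categorization and clustering steps might look like the sketch below. Both techniques are simple stand-ins chosen for illustration: phases are assigned using hypothetical cue-word lists, and questions are grouped greedily by the Jaccard similarity of their word sets with an assumed threshold of 0.3. Embedding-based clustering would likely handle paraphrases better.

```python
import re

# Hypothetical cue words for each research phase; tune them for your field.
PHASE_CUES = {
    "design": {"design", "power", "sample", "cohort", "control"},
    "methodology": {"protocol", "assay", "staining", "pipeline", "kit"},
    "analysis": {"normalize", "normalization", "statistics", "p-value", "regression"},
}


def tokens(text):
    """Lowercase word set; hyphenated terms such as 'p-value' stay whole."""
    return set(re.findall(r"[a-z0-9-]+", text.lower()))


def phase_of(question):
    """Assign the phase whose cue words overlap the question most ('other' if none)."""
    words = tokens(question)
    best, best_hits = "other", 0
    for phase, cues in PHASE_CUES.items():
        hits = len(words & cues)
        if hits > best_hits:
            best, best_hits = phase, hits
    return best


def jaccard(a, b):
    return len(a & b) / len(a | b) if a | b else 0.0


def cluster_questions(questions, threshold=0.3):
    """Greedy single pass: a question joins the first cluster whose seed
    question has Jaccard similarity >= threshold, else starts a new cluster."""
    clusters = []  # list of (seed_tokens, member_questions)
    for q in questions:
        t = tokens(q)
        for seed, members in clusters:
            if jaccard(t, seed) >= threshold:
                members.append(q)
                break
        else:
            clusters.append((t, [q]))
    return [members for _, members in clusters]


# Hypothetical forum questions, for illustration only.
questions = [
    "How do I normalize qPCR data across plates?",
    "Best way to normalize qPCR data between plates?",
    "Which staining protocol works for tau in mouse brain?",
]
for group in cluster_questions(questions):
    print(phase_of(group[0]), group)
```

Each resulting cluster is a candidate keyword phrase for the final translation step; the cluster size doubles as the frequency measure used for validation.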

Protocol 3.2.3: Semantic Search Expansion

- Objective: Leverage LLMs for keyword ideation

- Materials: ChatGPT or similar LLM, seed keyword list

- Procedure:

  - Input 5-10 core research terms into LLM

  - Request "similar keywords" and "related research questions"

  - Generate conceptual variations using different prompting strategies

  - Filter results for academic relevance and specificity

  - Cross-validate with existing literature searches

- Validation: Manual assessment by domain experts

Integration Framework: Implementing Zero-Volume Keywords in Research Workflows

Strategic Content Development

Zero-volume keywords demand specific content development approaches tailored to academic contexts:

Table 2: Content Strategy Alignment for Academic Zero-Volume Keywords

| Keyword Type | Content Format | Academic Implementation | Expected Outcome |
|---|---|---|---|
| Methodological Queries | Technical notes, protocol papers | Detailed methodology sections, replication packages | Citations from researchers facing similar methodological challenges |
| Conceptual Gaps | Review articles, theoretical frameworks | Systematic reviews addressing specific conceptual connections | Recognition as authoritative source in emerging research areas |
| Application-Specific | Case studies, applied research papers | Detailed documentation of novel applications | Adoption by practitioners and interdisciplinary researchers |
| Problem-Centered | Research articles targeting specific gaps | Focused studies addressing precise research questions | Direct impact on research communities facing identical problems |

Technical Integration Protocol

Protocol 4.2.1: Keyword-Optimized Academic Writing

- Objective: Integrate zero-volume keywords without compromising scholarly tone

- Materials: Draft manuscript, target keyword list

- Procedure:

  - Identify 3-5 primary zero-volume keywords during outline development

  - Naturally incorporate keywords in title, abstract, and keyword section

  - Use semantic variations throughout manuscript body

  - Structure headings to address specific researcher questions

  - Employ keyword-rich figure and table descriptions

- Quality Control: Peer review for natural language flow and academic rigor
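Before peer review, a quick automated check can confirm where each target keyword actually appears. The sketch below is one way to do it, assuming the title, abstract, keyword section, and body are available as plain text; the manuscript snippets are hypothetical. It uses whole-phrase matching so that, for example, "PD-L1" is not counted inside "PD-L10".

```python
import re


def keyword_placement(keywords, title, abstract, keyword_section, body):
    """Report how often each target keyword appears in each manuscript section.

    Matching is case-insensitive and whole-phrase. Returns {keyword: {section: count}}."""
    sections = {
        "title": title,
        "abstract": abstract,
        "keywords": keyword_section,
        "body": body,
    }
    report = {}
    for kw in keywords:
        # Reject matches preceded or followed by a word character or hyphen.
        pattern = re.compile(r"(?<![\w-])" + re.escape(kw) + r"(?![\w-])", re.IGNORECASE)
        report[kw] = {name: len(pattern.findall(text)) for name, text in sections.items()}
    return report


# Hypothetical manuscript fragments, for illustration only.
report = keyword_placement(
    ["PD-L1", "NSCLC", "PD-L1 upregulation"],
    title="Mechanisms of PD-L1 upregulation in NSCLC",
    abstract="PD-L1 upregulation was measured in 40 NSCLC samples.",
    keyword_section="PD-L1; NSCLC; immune evasion",
    body="We compared NSCLC cell lines. PD-L10 is an unrelated identifier.",
)
for kw, counts in report.items():
    print(kw, counts)
```

A zero in the title, abstract, or keyword column flags a primary keyword that the second procedure step has not yet covered.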

Protocol 4.2.2: Supplemental Material Optimization

- Objective: Leverage supplementary files for additional keyword targeting

- Materials: Research data, methodology details, extended analysis

- Procedure:

  - Develop detailed methodology descriptions targeting specific protocols

  - Create extended data tables with specific analytical approaches

  - Produce replication guides with step-by-step instructions

  - Optimize file names and descriptions with target keywords

  - Cross-link between main text and supplemental materials

- Impact Assessment: Track downloads and citations of supplemental materials
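For the file-naming step, a small helper can turn target keywords into a consistent, URL-safe file name. This is a sketch under assumptions: lowercase, hyphen-separated names and an 80-character cap are conventions chosen here, not requirements of any repository.

```python
import re
import unicodedata


def keyword_filename(keywords, extension, max_length=80):
    """Build a descriptive, URL-safe file name from target keywords."""
    text = " ".join(keywords)
    # Fold accented characters to ASCII where possible (e.g. 'é' -> 'e').
    text = unicodedata.normalize("NFKD", text).encode("ascii", "ignore").decode("ascii")
    # Replace every run of non-alphanumeric characters with a single hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")
    slug = slug[:max_length].rstrip("-")
    return f"{slug}.{extension.lstrip('.')}"


print(keyword_filename(["CD19 CAR-T", "persistence protocol"], "pdf"))
```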

The Researcher's Toolkit: Essential Solutions for Keyword Optimization

Implementing an effective zero-volume keyword strategy requires specific tools and methodologies adapted for academic contexts:

Table 3: Research Reagent Solutions for Keyword Discovery and Implementation

| Tool Category | Specific Solutions | Academic Application | Implementation Guidance |
|---|---|---|---|
| Keyword Discovery | Google Scholar, PubMed Central, Disciplinary databases | Identifying emerging terminology in recent publications | Focus on "cited by" and "similar articles" patterns for expansion |
| Trend Analysis | Google Trends, Journal citation reports, Conference proceedings | Tracking rising concepts before they achieve mainstream attention | Set alerts for specific methodological terms in table of contents |
| Community Intelligence | ResearchGate, Academia.edu, Disciplinary forums, Slack workspaces | Discovering unanswered questions from fellow researchers | Monitor discussion threads for recurring methodology questions |
| Content Optimization | Google Search Console, Plaudit.pub, Citation alerts | Tracking discoverability and impact of published content | Set up search appearance reports for specific keyword queries |
| Competitor Analysis | Reference list analysis, Citation mapping, Bibliographic coupling | Identifying conceptual gaps in competitor literature | Use VOSviewer or CitNetExplorer for visualization of literature gaps |

Impact Assessment: Measuring Success and Refinement

Evaluating the effectiveness of zero-volume keyword strategies requires academic-specific metrics beyond conventional web analytics:

Protocol 6.1: Academic Impact Assessment

- Objective: Quantify the scholarly impact of keyword optimization

- Materials: Citation data, download statistics, reader feedback

- Procedure:

  - Establish baseline citation rates for similar publications

  - Monitor citation patterns for keyword-optimized content

  - Track download frequency from repository platforms

  - Solicit reader feedback through academic social platforms

  - Run a follow-up citation analysis to identify influence pathways

- Analysis: Compare performance against non-optimized comparable publications
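The final comparison can start with something as simple as comparing median citation counts. The sketch assumes you have assembled citation counts for keyword-optimized papers and for comparable non-optimized ones; the numbers below are invented. With samples this small, a difference in medians is descriptive only, and a significance test (for example, a Mann-Whitney U test) would be needed before drawing conclusions.

```python
from statistics import median


def compare_citations(optimized, comparison):
    """Compare citation counts of keyword-optimized papers with comparable
    non-optimized ones (ideally matched on venue, year, and article type).

    Returns (median_optimized, median_comparison, relative_difference).
    Medians are used because citation counts are typically highly skewed."""
    m_opt, m_cmp = median(optimized), median(comparison)
    if m_cmp == 0:
        raise ValueError("comparison median is zero; relative difference undefined")
    return m_opt, m_cmp, (m_opt - m_cmp) / m_cmp


# Hypothetical citation counts, for illustration only.
print(compare_citations([4, 9, 12, 30], [3, 5, 8, 10, 40]))
```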

The cumulative impact of multiple zero-volume keywords can be substantial. Case studies show that content targeting seemingly niche queries can collectively attract significant attention: one documented sustainable-product site reported a 45% increase in niche traffic within six months [1], and a B2B agency project reported strong conversion results [3]. These examples come from commercial settings, so their transfer to scholarly metrics such as citations still needs to be measured. This approach is particularly valuable for early-career researchers and those working in emerging, interdisciplinary fields where establishing scholarly presence is challenging.

Zero-volume keywords represent a sophisticated strategy for addressing critical gaps in academic discoverability. By systematically identifying and targeting these highly specific queries, researchers can enhance the visibility of their work, connect with precisely relevant audiences, and establish authority in specialized research domains. The methodologies and protocols outlined in this whitepaper provide a replicable framework for integrating zero-volume keyword strategies into existing research workflows, offering a powerful mechanism for maximizing academic impact in an increasingly competitive scholarly landscape.

Within the competitive landscape of academic publishing, achieving visibility for research outputs is paramount. This whitepaper explores the strategic application of zero-volume keywords (ZVKs)—highly specific, long-tail search queries that often register no measurable search volume in standard keyword tools—as a mechanism to enhance discoverability for scholarly work. By targeting these overlooked terms, researchers and publishers can connect with niche audiences, circumvent intense competition for broad terms, and systematically address the precise information needs of the global scientific community. This guide provides a structured framework for the identification, validation, and implementation of ZVKs within academic publishing, complete with actionable protocols and data visualization tools.

Zero-volume keywords (ZVKs) are typically defined as search terms that keyword research tools report as having little to no monthly search volume [13] [1]. In an academic context, these are not insignificant queries; rather, they represent the highly specific language of experts—such as the name of a novel experimental protocol, a rare disease subtype, or a specific protein interaction [6] [15]. The conventional approach to SEO, which prioritizes high-search-volume terms, often creates a significant disconnect in scientific fields. SEO tools, frequently designed for commercial markets, fail to grasp how scientists genuinely search for information [15]. This leads to content optimized for generic terms that miss the intended, specialized audience.

The strategic pursuit of ZVKs is not about chasing empty metrics. It is founded on several compelling advantages:

- Low Competition: The specialized nature of ZVKs means few websites are actively competing for these terms, allowing quality content to rank more quickly and without extensive backlink campaigns [8] [1].

- High Relevance and Intent: A researcher searching for "ferritic nitrocarburizing for EV components" possesses a clear and advanced intent that generic searches for "automotive heat treating" lack [6]. This specificity translates to a more engaged audience and higher potential for collaboration or citation.

- Foundation for Authority: By consistently producing content that answers the community's most precise questions, a research lab or journal can establish itself as a thought leader and authoritative resource in its niche [13] [16].

A Framework for Classifying Academic Zero-Volume Keywords

ZVKs in research can be systematically categorized to streamline the content creation process. The following taxonomy outlines the primary types and their functions.

Table 1: A Taxonomy of Zero-Volume Keywords in Academic Publishing

| Keyword Type | Description | Academic Context & Examples | Primary Utility |
|---|---|---|---|
| Specific Methodologies | Highly detailed names of experimental protocols, assays, or techniques. | "CRISPR-Cas9 knock-in protocol for primary neurons," "LC-MS/MS quantification of lipid peroxides in plasma" [15]. | Targets researchers seeking exact technical replication or troubleshooting. |
| Emerging Disease Nomenclature | Newly identified diseases, rare genetic variants, or specific disease subtypes. | "Post-COVID-19 tachycardia syndrome management," "Treatment-resistant TRPV1-related neuropathic pain" [6]. | Captures traffic at the forefront of clinical research and rare disease studies. |
| Advanced Reagent & Tool Applications | Queries about using specific research tools (antibodies, cell lines, software) in novel contexts. | "Anti-SATB2 antibody validation in murine chondrocytes," "Analyzing single-cell RNA-seq data with Scanpy for plant cells" [1]. | Addresses the practical, day-to-day problems faced in the laboratory. |
| Comparative & "Versus" Queries | Direct comparisons between two techniques, drugs, or diagnostic tools. | "Lifelock vs. Experian for intellectual property protection" (adapted for academia as "RNA-Seq vs. Microarray for biomarker discovery in oncology") [8]. | Intercepts researchers in the evaluation and decision-making phase. |
| Problem-Solution Formulations | Queries phrased as specific problems or error messages encountered during research. | "High background in immunohistochemistry with FFPE tissue," "UPLC pressure spike during gradient method" (Gathered from lab forums and internal logs) [8] [1]. | Provides immediate value by solving critical, blocking issues for peers. |
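The taxonomy can be applied to a raw candidate list with simple pattern matching as a first pass. The sketch below is a hypothetical, rule-based tagger for three of the categories in Table 1. The trigger words are illustrative assumptions, not a validated classifier, and anything it leaves unclassified or ambiguous still needs manual review.

```python
import re

# Hypothetical trigger patterns for three of the Table 1 categories.
# Order matters: the first matching category wins.
PATTERNS = [
    ('Comparative & "Versus" Queries', re.compile(r"\b(vs\.?|versus)\b", re.I)),
    ("Problem-Solution Formulations",
     re.compile(r"\b(high background|spike|error|fail(s|ed|ure)?|troubleshoot\w*)\b", re.I)),
    ("Specific Methodologies",
     re.compile(r"\b(protocol|assay|quantification|knock-in)\b", re.I)),
]

def classify_keyword(keyword: str) -> str:
    """Return the first matching taxonomy category, or 'Unclassified'."""
    for category, pattern in PATTERNS:
        if pattern.search(keyword):
            return category
    return "Unclassified"

for kw in [
    "RNA-Seq vs. Microarray for biomarker discovery in oncology",
    "UPLC pressure spike during gradient method",
    "CRISPR-Cas9 knock-in protocol for primary neurons",
    "Anti-SATB2 antibody validation in murine chondrocytes",
]:
    print(f"{classify_keyword(kw):35} <- {kw}")
```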

Experimental Protocols: Identifying and Validating ZVKs

Implementing a ZVK strategy requires a move beyond traditional keyword tools to a more nuanced, researcher-centric methodology.

Keyword Discovery Workflow

This protocol outlines a multi-source approach to uncover potential ZVKs relevant to your research domain.

Diagram 1: ZVK Discovery and Validation Workflow. This diagram outlines the process from initial seed keyword to a finalized list of target zero-volume keywords.

Protocol 1: Multi-Source ZVK Discovery

- Objective: To generate a comprehensive list of candidate ZVKs by tapping into the authentic language and queries of the research community.

- Materials: Access to Google Search Console, internal site search data, forums (Reddit, ResearchGate), keyword tools (Semrush, Ahrefs), and competitor URLs.

- Procedure:

- Internal Data Mining: Export search query data from Google Search Console for your domain. Analyze the "Impressions" and "Position" columns to identify queries with low search volume but high relevance for which your site is already gaining visibility [12] [1]. Similarly, review your website's internal search logs to see what visiting researchers are trying to find [8].

- Community and Forum Analysis: Navigate to relevant online communities (e.g., Reddit's r/labrats, ResearchGate Q&A, discipline-specific forums). Use the search operator `site:reddit.com [your keyword]` to find discussions [6]. Compile a list of specific questions, troubleshooting issues, and terminology used by researchers.

- Search Engine Mining: Use Google's native features. Enter a seed keyword and record the suggestions from Google Autocomplete. Subsequently, perform a full search and note the questions in the "People Also Ask" (PAA) box and the "Related Searches" at the bottom of the results page [1] [16]. These are direct indicators of user curiosity.

- Competitor Gap Analysis: Input the URLs of key competitor labs or journals into an SEO tool like Semrush's Keyword Gap tool. Identify keywords for which they rank that are missing from your own content strategy, paying particular attention to long-tail, low-volume terms [13] [17].

- Output: A raw, unrefined list of potential ZVKs.
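The internal data mining and consolidation steps can be sketched in a few lines. The code below filters a Search Console performance export for queries that already earn impressions but do not yet rank near the top, then merges them with candidates from other sources into one de-duplicated list tagged by source. It assumes a CSV export with columns named "Top queries", "Impressions", and "Position"; check the headers of your own export, as they may differ. The thresholds and sample rows are hypothetical.

```python
import csv
import io

def gsc_candidates(csv_text: str, min_impressions: int = 10,
                   min_position: float = 8.0, max_position: float = 40.0) -> list[str]:
    """Queries with some visibility that are not yet ranking near the top.

    Column names and thresholds are assumptions, not a fixed export format.
    """
    picked = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        impressions = int(row["Impressions"].replace(",", ""))
        position = float(row["Position"])
        if impressions >= min_impressions and min_position <= position <= max_position:
            picked.append(row["Top queries"])
    return picked

def normalize(query: str) -> str:
    """Lower-case and collapse whitespace so near-duplicates merge."""
    return " ".join(query.lower().split())

def merge_sources(sources: dict[str, list[str]]) -> dict[str, set[str]]:
    """Map each normalized candidate to the set of sources it came from."""
    merged: dict[str, set[str]] = {}
    for source, queries in sources.items():
        for q in queries:
            merged.setdefault(normalize(q), set()).add(source)
    return merged

# Hypothetical export excerpt (illustrative values, not real data).
export = """Top queries,Clicks,Impressions,CTR,Position
lc-ms/ms quantification of lipid peroxides in plasma,1,42,2.38%,12.4
lipid peroxidation,30,5000,0.6%,3.1
ffpe ihc high background,0,6,0%,25.0
"""

candidates = merge_sources({
    "search_console": gsc_candidates(export),
    "forums": ["High background in immunohistochemistry with FFPE tissue",
               "LC-MS/MS  quantification of lipid peroxides in plasma"],
})
for kw, found_in in sorted(candidates.items()):
    print(kw, sorted(found_in))
```

Keywords that surface in more than one source are natural first candidates for Protocol 2.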

ZVK Validation and Prioritization

Following discovery, candidate keywords must be rigorously evaluated for strategic value.

Protocol 2: Validating ZVK Candidates

- Objective: To filter the raw candidate list and prioritize ZVKs based on relevance, intent, and potential impact.

- Materials: List of candidate ZVKs, access to a search engine, and the QPFF-MAGIC framework [6].

- Procedure:

- Manual SERP Inspection: For each candidate keyword, execute a Google search. Critically analyze the top 10 results.

- Content Type: Are the results from academic sources (journals, university websites), commercial vendors, or general education sites? For highly technical terms, a results page dominated by general education sites often indicates a mismatch in intent [15].

- Content Quality: Is the existing content comprehensive and authoritative, or is it superficial and lacking depth? A "weak" SERP page is a prime opportunity [8] [12].

- Intent Analysis with QPFF-MAGIC: Evaluate the candidate keyword against the QPFF-MAGIC framework—Questions, Problems, Frustrations, Fears, Myths, Alternatives, Goals, Interests, and Concerns of your target researcher persona [6]. A strong candidate will directly align with one or more of these elements.

- Trend Spotting: For keywords related to emerging topics, use Google Trends to verify a positive trendline, even if current reported volume is zero [12].


- Output: A prioritized list of validated ZVKs ready for content creation.
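Protocol 2 leaves the final ranking to judgment. One way to make that judgment explicit and repeatable is a weighted score over the three checks. The criteria scales, the cap, and the weights below are hypothetical choices for illustration; they are not part of the QPFF-MAGIC framework or the cited sources.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    keyword: str
    serp_weakness: int     # 0 = strong, authoritative SERP; 2 = thin or off-intent results
    qpff_magic_hits: int   # number of QPFF-MAGIC elements the keyword addresses (0-9)
    trend_rising: bool     # positive Google Trends trendline for the term or its parent topic

# Hypothetical weights; tune them to your own priorities.
WEIGHTS = {"serp": 2.0, "intent": 1.0, "trend": 1.5}

def score(c: Candidate) -> float:
    """Combine the three Protocol 2 checks into one sortable number."""
    intent = min(c.qpff_magic_hits, 3)  # cap so intent alone cannot dominate the score
    return (WEIGHTS["serp"] * c.serp_weakness
            + WEIGHTS["intent"] * intent
            + WEIGHTS["trend"] * (1 if c.trend_rising else 0))

candidates = [
    Candidate("UPLC pressure spike during gradient method", 2, 2, False),
    Candidate("post-COVID-19 tachycardia syndrome management", 1, 3, True),
    Candidate("automotive heat treating", 0, 1, False),
]

for c in sorted(candidates, key=score, reverse=True):
    print(f"{score(c):4.1f}  {c.keyword}")
```

Writing the weights down makes the prioritization auditable: a colleague can disagree with a weight rather than with an opaque ranking.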

The Scientist's Toolkit: Essential Research Reagents for ZVK Strategy

Executing a successful ZVK strategy relies on a suite of digital tools and conceptual frameworks that function as the modern researcher's "reagent solutions" for discoverability.

Table 2: Key Research Reagent Solutions for a ZVK Strategy

| Tool / Framework Category | Specific Tool / Method | Primary Function in ZVK Strategy |
|---|---|---|
| Keyword Discovery Tools | Google Search Console [12] [1] | Identifies queries that already bring users to your site, often revealing ZVKs with real, unmeasured traffic. |
| Keyword Discovery Tools | AnswerThePublic [8] [1] | Visualizes question-based queries related to a seed keyword, uncovering niche questions and prepositions (e.g., "for," "with," "without"). |
| Intent Analysis Framework | QPFF-MAGIC [6] | A persona-based framework to ensure keywords reflect the real Questions, Problems, Fears, and Goals of the target research audience. |
| Competitive Intelligence | Semrush/Ahrefs Keyword Gap [13] [17] | Surfaces relevant, low-volume keywords that competitors rank for but your site does not, revealing direct content opportunities. |
| Community Language Sources | Reddit & ResearchGate [6] [1] | Provides unfiltered access to the specific language, problems, and questions used by active researchers in your field. |

Data Presentation: Quantitative Insights on ZVK Performance

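The theoretical benefits of ZVKs are supported by some measurable outcomes. The following comparison, synthesized from case studies and an illustrative traffic calculation, indicates their potential impact. Note that the documented case studies come from commercial sites rather than academic publishers.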

Table 3: Quantitative Impact of Targeting Low- and Zero-Volume Keywords

| Metric | High-Volume Keyword Strategy (for comparison) | Low-/Zero-Volume Keyword Strategy (Documented Outcomes) |
|---|---|---|
| Organic Traffic Potential | Fights for a single, highly competitive term (e.g., 10,000 searches/month) [8]. | Owning the #1 rank for 100 keywords at 100 searches/month each yields the same total search volume (10,000/month), typically with less effort [8]. |
| Ranking Timeline | Can take months or years due to intense competition [8]. | Often ranks within weeks due to minimal competition [8] [12]. |
| Backlink Requirement | Often requires extensive, high-authority backlinks to compete [8]. | Can frequently achieve top rankings with minimal or no backlinks [8]. |
| Conversion Quality | Broad intent can lead to high traffic but low engagement from the target audience [15]. | Case Study: A targeted page attracted 600 highly targeted visitors, converting 67 into customers with high lifetime value [8]. |
| Traffic Growth Example | N/A | Case Study: A sustainable brand targeting a niche ZVK saw a 45% increase in niche traffic [1]. |
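The traffic-equivalence row in Table 3 is simple multiplication, and it compares total search volume rather than actual visits. The sketch below makes that arithmetic explicit, converts volume to estimated visits with a click-through rate (the 25% CTR is a hypothetical assumption, since real CTRs vary with position, SERP features, and query type), and expresses the case-study conversion figure as a ratio.

```python
def total_volume(keywords: int, searches_per_keyword: int) -> int:
    """Aggregate monthly search volume across a set of keywords."""
    return keywords * searches_per_keyword

def expected_visits(volume: int, ctr: float) -> float:
    """Estimated monthly visits for a given assumed click-through rate (CTR)."""
    return volume * ctr

head_term = total_volume(1, 10_000)   # one competitive term at 10,000 searches/month
long_tail = total_volume(100, 100)    # 100 niche terms at 100 searches/month each
print(head_term == long_tail)         # True: equal total search volume

# Hypothetical CTR for a #1 position. Equal volume only means equal visits if the
# real CTRs match; SERP features and AI overviews can lower either one.
ASSUMED_CTR = 0.25
print(expected_visits(head_term, ASSUMED_CTR))   # 2500.0
print(expected_visits(long_tail, ASSUMED_CTR))   # 2500.0

# Conversion ratio from the Table 3 case study: 67 customers out of 600 visitors.
conversion_rate = 67 / 600
print(f"{conversion_rate:.1%}")                  # 11.2%
```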

Strategic Content Optimization and Technical Implementation

Creating the content is only half the battle; its technical presentation is critical for both search engines and human readers. The following diagram outlines the optimal structure for a ZVK-optimized article.

Diagram 2: Semantic Structure of a ZVK-Optimized Article. This structure ensures content comprehensively covers the topic and aligns with both user intent and search engine understanding.

Key Optimization Tactics:

- Strategic Keyword Placement: Incorporate the primary ZVK naturally in the page's title tag, H1 heading, and early in the introduction [13]. Use semantically related terms and synonyms throughout the body to reinforce topical authority [18].

- Comprehensive Question Answering: Structure subheadings (H2, H3) to directly answer questions found in the "People Also Ask" box and from forum research [13] [16]. This aligns your content with the conversational, question-style phrasing that users actually type or speak.

- Leverage E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness): For scientific content, particularly in "Your Money or Your Life" (YMYL) categories like medicine, demonstrating expertise is non-negotiable [15]. Cite peer-reviewed literature, list author credentials and affiliations, and transparently discuss your data and methodological limitations to build trust with users and search engines alike [15] [18].
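The placement tactic can be audited by parsing a page and checking whether the primary ZVK appears in the title tag, the H1, and the opening paragraph. The sketch below uses Python's standard-library HTML parser. Treating the first `<p>` as "the introduction" and using exact, case-insensitive substring matching are simplifying assumptions; the check will miss synonyms and inflected forms.

```python
from html.parser import HTMLParser

class PlacementParser(HTMLParser):
    """Collect text from <title>, the first <h1>, and the first <p>."""

    def __init__(self):
        super().__init__()
        self.text = {"title": "", "h1": "", "p": ""}
        self._done = set()     # tags whose first occurrence has closed
        self._current = None   # tag currently being captured, if any

    def handle_starttag(self, tag, attrs):
        if tag in self.text and tag not in self._done:
            self._current = tag

    def handle_endtag(self, tag):
        if tag == self._current:
            self._done.add(tag)
            self._current = None

    def handle_data(self, data):
        # Text inside nested inline tags (e.g. <em>) is still captured.
        if self._current:
            self.text[self._current] += data

def check_placement(html: str, keyword: str) -> dict[str, bool]:
    """Report whether the keyword occurs in each location (whitespace-normalized)."""
    parser = PlacementParser()
    parser.feed(html)
    kw = keyword.lower()
    return {where: kw in " ".join(text.lower().split())
            for where, text in parser.text.items()}

page = """<html><head><title>LC-MS/MS quantification of lipid peroxides in plasma</title></head>
<body><h1>LC-MS/MS Quantification of Lipid Peroxides in Plasma: A Protocol</h1>
<p>This protocol describes sample preparation for <em>lipid peroxide</em> measurement in plasma.</p></body></html>"""

print(check_placement(page, "LC-MS/MS quantification of lipid peroxides in plasma"))
```

Here the keyword is present in the title and H1 but absent from the opening paragraph, which is the kind of gap this check is meant to surface.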

In the evolving ecosystem of academic search, where AI overviews and conversational queries are becoming commonplace, the principles of discoverability remain anchored in relevance and specificity [8] [18]. A strategic focus on zero-volume keywords is not a peripheral tactic but a core component of a modern academic dissemination strategy. By systematically identifying and creating best-in-class content for these highly specific queries, researchers, institutions, and publishers can effectively bridge the gap between groundbreaking scientific work and its intended, specialized audience. This approach ensures that even the most niche findings can achieve the visibility and impact they deserve.

In the rapidly evolving landscape of academic search visibility, a sophisticated understanding of keyword strategy is paramount. For researchers, scientists, and drug development professionals, the traditional paradigm of targeting only high-volume search terms is insufficient. This whitepaper delineates the critical, often-overlooked distinction between traditional long-tail keywords and zero-volume keywords—terms that report no monthly search volume in standard tools but hold immense potential for targeting niche audiences, capturing emerging trends, and establishing topical authority in specialized scientific fields [2] [11]. Framed within the context of academic publishing research, this guide provides experimental protocols for identifying and validating these hidden gems, offering a data-driven methodology to enhance the discoverability of scholarly work in an era increasingly dominated by AI-powered search.

Search Engine Optimization (SEO) for academic publishing is not about attracting massive, general traffic. It is about connecting with the right audience: fellow researchers, grant review committees, industry collaborators, and clinical practitioners. This requires a shift from competing for broad, high-volume terms like "cancer research" to targeting hyper-specific phrases that reflect genuine scholarly and professional inquiry [19].

The core challenge is that many of these highly specific phrases are classified as zero-volume keywords by conventional keyword research tools [2] [11]. This does not mean they are never searched; rather, their search frequency falls below the tool's reporting threshold, they may represent emerging nomenclature, or they are queries that tools simply fail to capture accurately [11]. In the life sciences and drug development, where terminology is precise and rapidly evolving, relying solely on keyword volume is a critical strategic error. The goal is to attract a highly targeted, relevant audience, where even a handful of qualified visitors can be more valuable than thousands of unqualified ones [19].

Definitions and Core Differences

Understanding the hierarchy of keywords is essential for effective strategy.

Traditional Long-Tail Keywords

These are multi-word (typically three or more), specific phrases with a lower, but measurable, monthly search volume [20] [21]. They reside in the "long tail" of the search demand curve and are less competitive than their short-tail counterparts.

- Example: "CRISPR gene editing protocols" [19]

- Characteristics: Measurable search volume, lower competition than short-tail keywords, and clear user intent [21].

Zero-Volume Keywords

These are highly specific search queries that keyword tools report as having zero monthly searches [2] [11]. They are often a subset of long-tail keywords, representing the most niche and emerging segments.

- Example: "protein expression analysis kit for mitochondrial proteomics" [19]

- Characteristics: No reported search volume in tools, very low competition, often associated with emerging trends or hyper-specialized topics [2] [11].

The critical difference is not just the reported metric but the strategic implication. Traditional long-tail keywords target existing, measurable demand. Zero-volume keyword strategies often involve anticipating, creating, or capturing nascent and unmeasured demand, a common scenario in cutting-edge academic research.

Comparative Analysis: Long-Tail vs. Zero-Volume Keywords

Table 1: A quantitative and functional comparison of keyword types relevant to academic publishing.

| Feature | Traditional Long-Tail Keywords | Zero-Volume Keywords |
|---|---|---|
| Reported Search Volume | Low to medium, but measurable [21] | Zero in standard tools [11] |
| Competition Level | Low to moderate | Very low to negligible [11] |
| User Intent | Specific and clear [20] | Highly specific, often exploratory or early-research stage |
| Ideal Use Case | Attracting researchers seeking established methodologies | Targeting nascent fields, highly specific reagents, or novel drug mechanisms [19] |
| Example in Life Sciences | "FDA regulations for CAR-T cell therapies" [19] | "FDA regulations for allogeneic CAR-NK cell therapies in solid tumors" |

The Imperative for Zero-Volume Strategies in Academic and Drug Development SEO

The unique characteristics of scientific research make it exceptionally suited for a zero-volume keyword approach.

- Targeting a Niche Audience: The life sciences industry is highly technical, and specialized terminology can make it difficult for non-experts to identify potential keywords. Translating complex scientific concepts into searchable phrases requires deep domain knowledge and granular keyword research [19].

- Capturing Emerging Trends: The scientific landscape evolves rapidly, with new technologies, treatments, and regulations appearing continually, so keeping keyword research up to date is an ongoing challenge [19]. Zero-volume keywords allow you to establish authority on a topic before it becomes mainstream [11].

- Alignment with E-E-A-T: Google's guidelines for Experience, Expertise, Authoritativeness, and Trustworthiness are paramount for YMYL (Your Money or Your Life) topics like health and science. Creating content that answers highly specific, complex questions directly demonstrates expertise and builds authority [20] [19].

- Resilience to AI Overviews: With the rise of AI-powered search, where 60% of Google searches may end without a click to a website, the goal of SEO is shifting from mere traffic acquisition to visibility and citation within AI responses [5]. Being the definitive source for a hyper-specific query increases the likelihood of being cited as a source by an AI, building brand and institutional authority even in a zero-click environment [5].

Experimental Protocol: Researching and Validating Zero-Volume Keywords

A rigorous, multi-method approach is required to identify valuable zero-volume keywords.

Keyword Discovery and Ideation

This phase focuses on generating a large pool of potential keyword candidates without regard for reported volume.

- Internal Data Mining:

- Methodology: Interview sales, customer service, and product development teams. Analyze frequently asked questions from conference presentations, grant applications, and peer-review feedback. Scour internal communication channels for the language experts use to describe their work [2].

- Protocol: Conduct structured interviews with a minimum of five domain experts, recording and transcribing sessions to identify recurring phrases and questions.

- Analysis of Academic and Digital Communities:

- Methodology: Systematically monitor Q&A platforms and professional forums like ResearchGate, Reddit (e.g., r/labrats, r/bioinformatics), and specialized Slack or Discord channels [2] [20].

- Protocol: Use advanced search operators on these platforms to find questions related to your research area. Track the frequency and engagement around specific topics to gauge latent interest.

- Interrogation of Scholarly Databases:

- Methodology: Utilize trend analysis features in databases like PubMed, Scopus, and arXiv. Analyze "most cited" and "most read" articles in leading journals to identify emerging terminology [2] [19].

- Protocol: Set up alerts for new publications containing specific technical terms. Use citation analysis tools to track the growth of new conceptual clusters in the literature.

- Leveraging Large Language Models (LLMs):

- Methodology: Use AI platforms like ChatGPT to brainstorm related keywords, ask for questions a researcher might have about a specific technique, or generate lists of potential research gaps [2] [20].

- Protocol: Use prompts such as, "Generate a list of highly specific research questions a scientist might have about [Your Specific Drug Target]'s role in [Specific Disease Pathway]."
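For the interview and forum steps, recurring phrases can be surfaced by counting word n-grams across transcripts or collected posts. The sketch below counts three-word phrases and keeps those that appear in at least two documents. The tokenization rule, stopword list, and thresholds are simplifying assumptions, and the transcript snippets are hypothetical.

```python
import re
from collections import Counter

STOPWORDS = {"the", "a", "an", "of", "in", "for", "and", "to", "is", "we", "our", "with", "on"}

def ngrams(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Distinct n-grams in one document, skipping those that start or end with a stopword."""
    tokens = re.findall(r"[a-z0-9][a-z0-9\-/]*", text.lower())
    grams = set()
    for i in range(len(tokens) - n + 1):
        gram = tuple(tokens[i:i + n])
        if gram[0] not in STOPWORDS and gram[-1] not in STOPWORDS:
            grams.add(gram)
    return grams

def recurring_phrases(documents: list[str], n: int = 3,
                      min_docs: int = 2) -> list[tuple[str, int]]:
    """Phrases occurring in at least `min_docs` documents, most widespread first."""
    doc_freq = Counter()
    for doc in documents:
        doc_freq.update(ngrams(doc, n))  # each document counts a phrase at most once
    return [(" ".join(g), c) for g, c in doc_freq.most_common() if c >= min_docs]

transcripts = [
    "We keep seeing high background staining on FFPE sections after antigen retrieval.",
    "Our biggest issue is high background staining when the primary antibody is too concentrated.",
    "Antigen retrieval time matters; high background staining drops with shorter incubation.",
]
print(recurring_phrases(transcripts))
```

Counting document frequency rather than raw occurrences keeps one talkative interviewee from dominating the list. Running it with `n=2` would also surface shorter terms such as "antigen retrieval".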

Keyword Validation and Prioritization

Once a list of candidate terms is generated, the following protocol validates their potential merit.

- Google Autocomplete & SERP Analysis:

- Methodology: Manually type candidate keywords into Google's search bar and observe autocomplete suggestions. Perform the search and analyze the "People Also Ask" and "Related Searches" sections [20] [21].

- Validation Metric: If a zero-volume keyword triggers rich autocomplete suggestions or appears in "People Also Ask," it indicates underlying search patterns and relevance.

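- Trend Analysis:

- Methodology: Use Google Trends to examine interest over time for the candidate keyword or its broader parent topic [2] [11].

- Validation Metric: A positive trendline indicates growing interest, even when the keyword's current reported volume is zero.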

- Competitor and Authority Source Analysis:

- Methodology: Use SEO tools like Semrush or Ahrefs to analyze the content of leading academic institutions, research journals, or industry competitors. Identify which low-volume keywords they are ranking for [19] [17].

- Validation Metric: If an authoritative source has a page dedicated to a topic with zero search volume, it signals a strategic choice to cover that niche.

The following workflow diagram illustrates the integrated experimental protocol for a zero-volume keyword strategy.

Table 2: A catalog of essential tools and resources for implementing a zero-volume keyword strategy in an academic context.

| Tool/Resource Category | Specific Examples | Function in Keyword Research |
|---|---|---|
| Community & Forum Platforms | ResearchGate, Reddit (r/labrats, r/science), StackExchange (Bioinformatics), LinkedIn Groups | Uncovers real-world questions, terminology, and pain points from the target audience [2]. |
| Academic & Database Alerts | PubMed, Scopus, arXiv, bioRxiv | Identifies emerging terminology and trending research topics before they achieve mainstream volume [2] [19]. |
| Trend Analysis Tools | Google Trends | Validates the growing interest in a topic over time, even for phrases with low absolute search volume [2] [11]. |
| SEO & Keyword Research Tools | Semrush, Ahrefs, Google Keyword Planner | Analyzes competitor strategies, generates related keyword ideas, and provides search volume data (where available) [19] [17]. |
| AI-Powered Language Models | ChatGPT, Google Gemini | Brainstorms potential research questions, generates semantic keyword variations, and aids in content ideation at scale [2] [20]. |

Implementation: Integrating Zero-Volume Keywords into Academic Content

Successful implementation requires strategic placement and a focus on comprehensive topic coverage.

- Content Clustering: Group related zero-volume and long-tail keywords into thematic clusters. Create a pillar page on a broad topic (e.g., "CAR-T Cell Therapy for Solid Tumors") and link it to cluster pages targeting highly specific queries (e.g., "strategies to overcome T-cell exhaustion in CAR-T solid tumor therapy") [19]. This architecture signals topical depth to search engines.

- Natural Language Integration: Incorporate keywords naturally into the title, headings, body copy, and meta descriptions. Avoid "keyword stuffing." Write for the human reader first, ensuring the content is a genuine and helpful answer to the implied query [19].

- Leverage Schema Markup: Use structured data (schema.org) to provide explicit context to search engines about your content; for example, tag a page as a `ScholarlyArticle` and specify its `author`, `citation`, and `about` properties. This added context is especially valuable for complex scientific concepts [19].
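A minimal JSON-LD sketch of the schema markup tactic is shown below, generated with Python's `json` module. `ScholarlyArticle`, `headline`, `author`, `affiliation`, `about`, `citation`, and `keywords` are schema.org vocabulary. Every value (title, author, affiliation, cited URL, topics) is a hypothetical placeholder. Validate the output with a structured-data testing tool before deploying it.

```python
import json

def scholarly_article_jsonld(title, authors, cited_works, topics, keywords):
    """Build a schema.org ScholarlyArticle object and return it as a JSON-LD string.

    `authors` is a list of (name, organization) pairs; `topics` become `about` Things.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "ScholarlyArticle",
        "headline": title,
        "author": [
            {"@type": "Person", "name": name,
             "affiliation": {"@type": "Organization", "name": org}}
            for name, org in authors
        ],
        "about": [{"@type": "Thing", "name": t} for t in topics],
        "citation": cited_works,
        "keywords": ", ".join(keywords),  # schema.org accepts comma-separated text
    }
    return json.dumps(data, indent=2, ensure_ascii=False)

# Hypothetical placeholder values, for illustration only.
markup = scholarly_article_jsonld(
    title="Strategies to overcome T-cell exhaustion in CAR-T solid tumor therapy",
    authors=[("Jane Doe", "Example University")],
    cited_works=["https://example.org/papers/related-car-t-review"],
    topics=["CAR-T cell therapy", "T-cell exhaustion", "solid tumors"],
    keywords=["CAR-T solid tumor therapy", "T-cell exhaustion"],
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Listing the targeted zero-volume phrase in both `headline` and `keywords` keeps the markup consistent with the visible page content.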

In the specialized realm of academic publishing and drug development, the strategic use of zero-volume keywords is not a fringe tactic but a core component of a modern SEO strategy. It represents a shift from chasing outdated, volume-based metrics to a focus on precision, authority, and future-proofing research visibility. By adopting the experimental protocols and validation methods outlined in this whitepaper, researchers and institutions can effectively map the uncharted territory of scholarly search, connecting their vital work with the global audience that needs it most. As search continues to evolve with AI, the ability to demonstrate deep expertise through hyper-specific content is likely to become an increasingly important ranking factor.

A Researcher's Guide to Finding and Targeting Zero Volume Keywords

In the evolving landscape of academic publishing, particularly within drug discovery and development, the strategic use of zero-volume keywords represents a significant opportunity to enhance research visibility. These keywords—terms that tools report as having no monthly search volume but which capture highly specific concepts—are crucial for targeting niche audiences with precision. This whitepaper provides a comprehensive framework for researchers to systematically brainstorm seed keywords from their expertise, identify untapped zero-volume opportunities and translate them into a robust Academic Search Engine Optimization (ASEO) strategy. By adopting the detailed protocols and toolkits outlined herein, scientists can effectively increase their publications' discoverability, ensuring their work reaches the most relevant peers and practitioners.

Zero-volume keywords are search terms that keyword research tools report as having little to no monthly search volume [1] [13]. In academic publishing, these often correspond to highly specific, long-tail queries such as "ferritic nitrocarburizing for EV components" instead of a broader term like "automotive heat treating" [6]. The reported "zero" volume is frequently a misleading metric; it may indicate an emerging trend, a highly niche query, or a term whose search frequency falls below the reporting threshold of tools designed primarily for commercial advertising data [6] [11].

Targeting these keywords is not about chasing high traffic but about attracting the right traffic. For researchers, this means connecting with the small, highly specialized audience most likely to read, apply, and cite their work. The benefits are substantial: lower competition for ranking in academic search engines like Google Scholar, higher relevance for a targeted academic audience, and ultimately, an increased likelihood of citation because the content directly addresses a very specific research need or question [1] [22] [13].

The Strategic Foundation: From Research Concepts to Search Intent

The process begins with a shift in mindset, from focusing solely on popular keywords to understanding the specific problems, questions, and conversations within your research domain.

Understanding Searcher Intent in Academia

Academic searches are typically driven by a need to solve a problem, understand a method, or find specific research findings. Zero-volume keywords often have a very clear intent [23]. For a query like "inhibiting protein aggregation in Parkinson's model using CRISPR-Cas9", the searcher's intent is deeply specific, indicating they are likely further along in their research process and seeking highly targeted information.

The QPFF-MAGIC Framework for Ideation

A powerful methodology for uncovering these intent-rich concepts is the QPFF-MAGIC framework, which structures the core concerns of your audience [6]. This acronym stands for:

- Questions

- Problems

- Frustrations

- Fears

- Myths they may believe & misunderstandings they may have

- Alternatives a user may be considering

- Goals

- Interests

- Concerns

Applying this framework to your research area forces a deep consideration of what your peers are actively grappling with, providing a fertile ground for seed keyword generation before any tool is used.
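To run the framework as a repeatable brainstorming exercise, each element can be turned into a prompt about a chosen research area. The prompt wording below is a hypothetical template, not part of the published framework; only the nine element names come from the list above.

```python
# Hypothetical prompt templates, one per QPFF-MAGIC element (in acronym order).
QPFF_MAGIC = {
    "Questions": "What questions do peers ask about {topic}?",
    "Problems": "What problems block progress in {topic}?",
    "Frustrations": "What is frustrating or slow about working on {topic}?",
    "Fears": "What risks or failures worry researchers in {topic}?",
    "Myths": "What myths or misunderstandings circulate about {topic}?",
    "Alternatives": "What alternative methods or tools compete with {topic}?",
    "Goals": "What outcomes are researchers trying to achieve with {topic}?",
    "Interests": "What adjacent topics do people working on {topic} follow?",
    "Concerns": "What practical, ethical, or regulatory concerns surround {topic}?",
}

# Sanity check: the element initials spell out the acronym.
assert "".join(k[0] for k in QPFF_MAGIC) == "QPFFMAGIC"

def worksheet(topic: str) -> list[str]:
    """Return one brainstorming prompt per QPFF-MAGIC element, in acronym order."""
    return [f"{element}: {template.format(topic=topic)}"
            for element, template in QPFF_MAGIC.items()]

for line in worksheet("alpha-synuclein aggregation assays"):
    print(line)
```

The answers collected for each prompt become the raw seed list used in the protocol that follows.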

Experimental Protocol: Brainstorming and Qualifying Seed Keywords

The following step-by-step protocol provides a reproducible methodology for generating and validating a list of potent seed keywords.

Step 1: Internal Expertise and Data Mining

- Objective: To extract keyword ideas from your team's inherent expertise and existing data.

- Procedure:

- Conduct a Brainstorming Session: Gather key members of your research team. Using the QPFF-MAGIC framework as a prompt, list all the questions, problems, and goals related to your research domain. For example: "What are the biggest bottlenecks in de novo drug design using LLMs?" or "What are the common misconceptions about a drug's safety profile in early-stage trials?"

- Mine Internal Communications: Analyze emails, chat logs, and presentation notes from your lab meetings, conferences, and student supervision. Pay close attention to the precise language used to describe complex concepts [1] [8].

- Review Grant Proposals and Manuscripts: Extract key phrases from the introductions, methodology sections, and discussions of your own unpublished drafts and successful grant applications. This terminology is likely to mirror the language your peers use when searching for related work.

Step 2: External Trend and Community Analysis

- Objective: To discover the language and queries used by the broader scientific community.

- Procedure:

- Analyze Scholarly Forums: Search platforms like Reddit (e.g., r/science, r/bioinformatics), ResearchGate, and specialized community forums using the syntax `site:reddit.com [your broad topic]` [6]. Look for recurring questions and terminology.

- Monitor Academic Social Media: Follow leading researchers and institutions on Twitter/X and LinkedIn. Observe the language used in posts discussing new pre-prints or findings.

- Utilize Trend Analysis Tools: Use tools like Google Trends to identify emerging topics within broader fields. While not specific to academia, they can signal growing public or cross-disciplinary interest that may later be reflected in scholarly search.

Step 3: Seed Keyword Qualification and Prioritization

- Objective: To filter and prioritize the generated list of seed keywords for further investigation.

- Procedure:

- Compile a Master List: Consolidate all keywords from Steps 1 and 2 into a single spreadsheet.

- Apply the "Jobs-to-Be-Done" Filter: Evaluate each keyword against the question: "What job is a researcher hiring this piece of content to do?" [13]. Does it help them solve a problem, understand a method, or validate a finding?

- Categorize by Intent and Specificity: Tag each keyword as broad, medium-tail, or long-tail based on its length and specificity. The long-tail, specific phrases are your primary candidates for zero-volume keyword exploration.
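The tagging step can be partly automated. The sketch below tags each keyword in the master list by word count and keeps the long-tail phrases as zero-volume candidates. The default cut-offs (two words or fewer as broad, four or more as long-tail) are hypothetical and should be adjusted to your field. Word count is only a rough proxy for specificity, so the tags still need a manual pass.

```python
def specificity_tag(keyword: str, broad_max: int = 2, long_tail_min: int = 4) -> str:
    """Tag a keyword as broad, medium-tail, or long-tail by word count.

    The cut-offs are hypothetical defaults, not an established standard.
    """
    words = len(keyword.split())
    if words <= broad_max:
        return "broad"
    if words >= long_tail_min:
        return "long-tail"
    return "medium-tail"

master_list = [
    "cancer research",
    "CRISPR gene editing protocols",
    "protein expression analysis kit for mitochondrial proteomics",
    "gene editing protocols",
]

tagged = {kw: specificity_tag(kw) for kw in master_list}
zvk_candidates = [kw for kw, tag in tagged.items() if tag == "long-tail"]
print(tagged)
print(zvk_candidates)
```

The resulting candidates are then checked in a volume tool; the ones reporting zero volume move forward for zero-volume keyword exploration.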

The following workflow diagram illustrates this integrated experimental protocol.

Data Presentation: Analytical Frameworks and Toolkits

Table 1: Keyword Research Tools for Academic SEO

This table compares common tools and their utility in the context of academic keyword research.

| Tool Name | Primary Function | Utility for Academic Zero-Volume Keywords | Key Metric to Assess |
|---|---|---|---|
| Google Search Console | Shows actual queries leading to your site/publications [1]. | High; reveals real, long-tail academic queries even if volume is zero [1] [23]. | Impressions for low-click queries |
| AnswerThePublic | Visualizes search questions and prepositions [1] [8]. | Medium-High; uncovers specific "how", "what", "why" questions in your field. | Question variations with no volume data |
| Google Trends | Shows interest over time for broad topics [1]. | Medium; identifies seasonal or emerging trends (e.g., a new virus strain). | Rising trend percentage |
| Ahrefs / SEMrush | Provides search volume and keyword difficulty [1] [13]. | Medium; use to filter for low/zero volume and low difficulty terms [22]. | Keyword Difficulty (KD) score |
| Internal Site Search | Reveals what visitors search for on your lab/institution site [1]. | High; shows hyper-specific, unmet content needs of your audience. | Frequency of unique queries |
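For the Google Search Console row, the useful signal is queries that earn impressions but few or no clicks. A minimal Python sketch, assuming the performance report has been exported to CSV with columns named "Top queries", "Clicks", and "Impressions" (check your own file's header row and adjust the constants); the sample rows are invented for illustration.

```python
import csv
import io

# Assumed header names for a Search Console queries export; adjust to your file.
QUERY_COL, CLICKS_COL, IMPRESSIONS_COL = "Top queries", "Clicks", "Impressions"


def to_int(value: str) -> int:
    """Parse counts such as '1,204' that may contain thousands separators."""
    return int(value.replace(",", "").strip())


def low_click_queries(csv_text: str, min_impressions: int = 1, max_clicks: int = 0):
    """Return (query, impressions, clicks) rows that are seen but rarely clicked."""
    hits = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        impressions = to_int(row[IMPRESSIONS_COL])
        clicks = to_int(row[CLICKS_COL])
        if impressions >= min_impressions and clicks <= max_clicks:
            hits.append((row[QUERY_COL], impressions, clicks))
    # Most-seen queries first: visible demand the page is not yet satisfying.
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


# Invented sample rows, for illustration only.
SAMPLE_EXPORT = """Top queries,Clicks,Impressions,CTR,Position
lm-2025 alpha-synuclein aggregation assay,0,14,0%,8.2
parkinson's in vitro model,3,120,2.5%,11.0
thioflavin t kinetics lm-2025,0,5,0%,6.1
"""
```

Raising `max_clicks` widens the net from strictly unclicked queries to merely underperforming ones.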

Table 2: The Researcher's Toolkit for Keyword Discovery & Optimization

This toolkit details essential "reagents" for conducting effective academic keyword research.

| Tool / Resource Category | Specific Examples | Function in Keyword Process |
|---|---|---|
| Seed Keyword Generator | QPFF-MAGIC Framework [6], Internal Team Brainstorming | Provides the initial, unfiltered list of concepts and terminology directly from domain expertise. |
| Query Suggestion Tools | Google Autocomplete, Google's "People Also Ask" [1] [6] | Automatically generates long-tail, conversational question variants based on a seed keyword. |
| Community Language Sources | Reddit, ResearchGate, Twitter/X, Specialist Forums [1] [6] | Provides unfiltered access to the real-world language, questions, and problems of the target community. |
| Volume & Competition Analyzers | SEMrush, Ahrefs, Google Keyword Planner [1] [13] | Qualifies seed keywords by providing estimated search volume and competition metrics (focus on low/zero volume). |
| ASEO Optimization Targets | Manuscript Title, Abstract, Keywords, Full-Text PDF [24] | The core elements of a scholarly publication where identified zero-volume keywords should be strategically placed for maximum discoverability. |

Advanced Implementation: From Keywords to Academic SEO

Identifying keywords is only the first step. Integration into your scholarly works is critical.

The title is the most vital element for discoverability [24]. Incorporate the most important seed keywords as early as possible in the title. Avoid "hiding" key terms in the middle or end of a long title, as search engines and readers may truncate it [24]. For example, instead of "A Study on the Effects of a Novel Compound: Lm-2025, on In-Vitro Models of a Neurodegenerative Disease", a more discoverable title would be "Lm-2025 inhibits alpha-synuclein aggregation in Parkinson's in-vitro models". The abstract should naturally repeat these key terms and their variants to reinforce relevance for both algorithms and readers [24].
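Whether a key term sits "early" in a title can be checked mechanically before submission. The sketch below is a Python helper that reports where a term first appears; the 60-character `visible_chars` cutoff is a hypothetical parameter chosen for illustration, not a documented truncation limit of any search engine or database.

```python
def keyword_prominence(title: str, keyword: str, visible_chars: int = 60) -> dict:
    """Locate the first case-insensitive occurrence of keyword in title.

    visible_chars is an assumed display cutoff for illustration only.
    """
    position = title.lower().find(keyword.lower())
    if position == -1:
        return {"position": None, "relative_position": None, "fully_visible": False}
    return {
        "position": position,
        # Fraction of the title a reader scans before reaching the term.
        "relative_position": round(position / len(title), 2),
        "fully_visible": position + len(keyword) <= visible_chars,
    }


# The two example titles from the paragraph above.
ORIGINAL = ("A Study on the Effects of a Novel Compound: Lm-2025, on In-Vitro "
            "Models of a Neurodegenerative Disease")
REVISED = "Lm-2025 inhibits alpha-synuclein aggregation in Parkinson's in-vitro models"
```

Applied to the two example titles, "Lm-2025" moves from character 44 to the opening position, and "neurodegenerative" in the original title falls past the assumed cutoff.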

Strategic Placement of Keywords

Search engine algorithms assign relevance based on the frequency and position of search terms [24]. To take advantage of this, place your target zero-volume keywords strategically in:

- The Main Title (highest weight)

- The Abstract (high weight)

- The Author-Provided Keywords metadata

- Section Headings within the paper

- The Conclusion section

- The Alt-Text for any figures or diagrams

This multi-layered approach signals strong topical relevance to academic search engines.
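The placement list works well as a pre-submission checklist. This Python sketch assumes each part of the manuscript is available as plain text (with the keyword metadata and section headings joined into single strings); the field names and the draft content are hypothetical, and the check is a simple case-insensitive substring match rather than a model of how any search engine weighs these locations.

```python
# The six placement targets listed above, in the order given in the text.
PLACEMENTS = ("title", "abstract", "keywords", "headings", "conclusion", "alt_text")


def placement_gaps(keyword: str, manuscript: dict[str, str]) -> list[str]:
    """Return the placement targets whose text does not mention the keyword.

    Missing targets count as gaps, since an absent field cannot carry the term.
    """
    needle = keyword.lower()
    return [part for part in PLACEMENTS if needle not in manuscript.get(part, "").lower()]


# Hypothetical draft manuscript; alt text has not been written yet.
draft = {
    "title": "Lm-2025 inhibits alpha-synuclein aggregation in Parkinson's in-vitro models",
    "abstract": "We report that Lm-2025 slows alpha-synuclein aggregation in cell models.",
    "keywords": "Parkinson's disease; alpha-synuclein; Lm-2025",
    "headings": "Introduction; Methods; Results; Discussion",
    "conclusion": "Lm-2025 reduced aggregation in both models tested.",
}
```
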

In an era of information overload, a strategic approach to discoverability is no longer optional for researchers. By leveraging deep domain expertise to brainstorm seed keywords and systematically targeting the long-tail, zero-volume landscape, scientists can cut through the noise. The methodologies outlined in this whitepaper—from the QPFF-MAGIC framework to the detailed experimental protocol—provide a replicable path to enhancing academic visibility. By focusing on the highly specific, intent-rich queries that define advanced research, you ensure your work reaches the audience that will find it most valuable, thereby accelerating the impact and citation potential of your contributions to drug discovery and beyond.

In the vast and ever-expanding universe of academic publishing, the efficiency of literature discovery hinges on the precision of search strategies. Traditional approaches often prioritize high-frequency keywords, potentially overlooking a critical segment of the research landscape: zero-volume keywords. In the context of academic research, these are specialized search terms, queries, or conceptual phrases that do not appear in keyword frequency analysis tools or show no recorded usage metrics in academic databases, yet may represent novel research niches, emerging interdisciplinary concepts, or highly specific methodological approaches that are not yet widely indexed [3] [2]. The strategic identification and utilization of these keywords can enable researchers, particularly those in fast-moving fields like drug development, to uncover seminal work, identify emerging trends before they become mainstream, and construct more comprehensive systematic reviews [25] [24].

This guide provides a technical framework for mining PubMed, Scopus, and arXiv to build a robust keyword strategy that integrates both established and zero-volume terms. By adopting the methodologies outlined herein, scientists can enhance the discoverability of their own work and systematically navigate the frontier of scientific knowledge.

Defining Zero-Volume Keywords in Academic Research

The concept of "zero-volume keywords" is adapted from commercial search engine optimization (SEO), where it describes search terms that tools report as having no measurable search volume but which can nonetheless generate valuable traffic [3] [2]. In an academic context, this translates to:

- Highly Specific Conceptual Phrases: These are often long-tail combinations of terms that describe a very precise research problem, material, or method. For example, "allosteric modulation of G-protein coupled receptors for pain management" is a complex concept that might not be a frequently searched string but is of critical importance to a specialized researcher.

- Emerging Nomenclature: Newly discovered compounds, nascent technologies, or recently classified phenomena that have not yet permeated the broader literature or database indexing.

- Interdisciplinary Bridges: Terms that connect established concepts from different fields, which may not be captured by the controlled vocabulary of a single database.

The core challenge and opportunity lie in the fact that academic database tools, much like their commercial counterparts, cannot capture every nuance of search behavior [3]. Relying solely on keyword popularity can introduce a selection bias, potentially limiting the comprehensiveness of a literature review or systematic review [25]. Therefore, a multi-faceted, tool-assisted approach is necessary to uncover these hidden gems.

Database-Specific Mining Methodologies

PubMed: Leveraging MeSH and Proximity Searching

PubMed, with its foundation in Medical Subject Headings (MeSH), offers a structured environment for keyword discovery. The Weightage Identified Network of Keywords (WINK) technique provides a rigorous, multi-step methodology for selecting keywords to perform systematic reviews more efficiently [25].

Experimental Protocol: The WINK Technique

- Initial Search Formulation: Begin with a broad search using key concepts from your research question. For a question like "How do environmental pollutants affect endocrine function?" an initial search might use the MeSH terms "endocrine disruptors"[MeSH] AND "thyroid diseases"[MeSH] [25].

- MeSH Term Expansion: Use PubMed's "MeSH on Demand" tool and the "Similar Articles" feature on relevant abstracts to identify additional, related controlled vocabulary. For the example, this might expand the list to include "particulate matter"[MeSH], "environmental exposure"[MeSH], and "pesticides"[MeSH] [25].

- Network Visualization and Weightage Analysis: Export the titles and abstracts of the resulting articles. Use a scientific data visualization tool like VOSviewer to generate a network map of keywords. The map shows the interconnections and co-occurrence strength among terms within the domain [25].

- Expert-Informed Selection: Integrate subject expert insights to analyze the network visualization. Keywords with limited networking strength to the core concepts (Q1) may be candidates for exclusion, while strongly connected clusters reveal the most relevant terminology [25].

- Search String Refinement: Build the final search string using the high-weightage MeSH terms identified. The application of this technique has been shown to yield 69.81% more articles for a given query compared to conventional approaches [25].
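Steps 3 to 5 can be approximated offline once MeSH headings for each retrieved article have been exported. This Python sketch is a simplified stand-in for the weightage analysis, not VOSviewer itself or the published WINK implementation: it counts how many articles each pair of headings shares, sums each candidate term's co-occurrence with a hypothetical core set, drops terms below an assumed threshold, and assembles a PubMed search string. The records, the core set, the threshold of 1, and the grouping of terms into concept groups are illustrative assumptions; in the technique described above, those decisions rest with subject experts.

```python
from collections import Counter
from itertools import combinations


def cooccurrence(records: list[set[str]]) -> Counter:
    """Count how many articles share each unordered pair of terms."""
    pairs: Counter = Counter()
    for terms in records:
        for a, b in combinations(sorted(terms), 2):
            pairs[(a, b)] += 1
    return pairs


def strength_to_core(records: list[set[str]], core: set[str]) -> dict[str, int]:
    """Sum each non-core term's co-occurrence weights with the core concepts."""
    candidates = set().union(*records) - core
    strength = {term: 0 for term in candidates}
    for (a, b), weight in cooccurrence(records).items():
        if a in core and b in strength:
            strength[b] += weight
        elif b in core and a in strength:
            strength[a] += weight
    return strength


def select_terms(records: list[set[str]], core: set[str], min_strength: int = 1) -> list[str]:
    """Keep candidates meeting the threshold, strongest first (ties alphabetical)."""
    strength = strength_to_core(records, core)
    kept = [t for t in strength if strength[t] >= min_strength]
    return sorted(kept, key=lambda t: (-strength[t], t))


def mesh_query(groups: list[list[str]]) -> str:
    """OR terms within each concept group and AND the groups together."""
    return " AND ".join(
        "(" + " OR ".join(f'"{term}"[MeSH]' for term in group) + ")" for group in groups
    )


# Hypothetical per-article MeSH assignments standing in for an exported result set.
RECORDS = [
    {"Endocrine Disruptors", "Thyroid Diseases", "Pesticides"},
    {"Endocrine Disruptors", "Particulate Matter", "Environmental Exposure"},
    {"Thyroid Diseases", "Pesticides", "Environmental Exposure"},
    {"Endocrine Disruptors", "Pesticides"},
    {"Sleep", "Diet"},  # off-topic record with no link to the core concepts
]
CORE = {"Endocrine Disruptors", "Thyroid Diseases"}

# Hypothetical expert grouping: exposures in one group, outcome in another.
FINAL_QUERY = mesh_query([["Endocrine Disruptors", *select_terms(RECORDS, CORE)], ["Thyroid Diseases"]])
```

Here the off-topic "Sleep" and "Diet" record scores zero and is excluded, while "Pesticides" ranks highest because it co-occurs with both core headings.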

Advanced Technique: Proximity Searching

PubMed's proximity search finds terms that appear near each other, capturing concepts not yet in the phrase index. The syntax is "term1 term2"[Title/Abstract:~N], where N is the maximum number of words allowed between the terms [26].

- Example: A search for "cognitive impairment multiple sclerosis"[Title/Abstract:~0] retrieves citations where these terms appear directly next to each other in any order, narrowing results to highly specific contexts [26].
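A proximity query can be generated and passed to NCBI's E-utilities ESearch endpoint. This Python sketch only builds the query and the request URL, so it runs offline; the set of fields accepted for proximity searching is an assumption based on PubMed's help pages and should be verified, and the `retmax` default of 20 is arbitrary. NCBI's usage guidelines limit request rates, so any live use should throttle calls.

```python
from urllib.parse import urlencode

ESEARCH_URL = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"
# Assumed set of fields that accept proximity searching (full names and tags).
PROXIMITY_FIELDS = {"Title", "ti", "Title/Abstract", "tiab", "Affiliation", "ad"}


def proximity_query(terms: str, field: str = "Title/Abstract", max_gap: int = 0) -> str:
    """Build '"term1 term2"[field:~N]', N being the maximum number of intervening words."""
    if field not in PROXIMITY_FIELDS:
        raise ValueError(f"proximity search is not supported for field {field!r}")
    if max_gap < 0:
        raise ValueError("max_gap must be non-negative")
    return f'"{terms.strip()}"[{field}:~{max_gap}]'


def esearch_url(query: str, retmax: int = 20) -> str:
    """Encode the query as an ESearch request against the PubMed database."""
    params = {"db": "pubmed", "term": query, "retmax": retmax, "retmode": "json"}
    return ESEARCH_URL + "?" + urlencode(params)
```
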

Scopus: Citation Tracking and Bibliometric Analysis

Scopus's strength lies in its extensive citation data and curated content from over 7,000 publishers, governed by a transparent Content Selection and Advisory Board (CSAB) [27]. The mining process is iterative and data-driven.

Experimental Protocol: Bibliometric Snowball Sampling

- Seed Document Identification: Use a foundational paper or a small set of highly cited reviews in your field as a starting point.

- Forward and Backward Citation Tracking:

- Backward Sampling: Examine the reference list of the seed document to identify prior foundational research and its associated keywords.

- Forward Sampling: Use Scopus's "Cited by" feature to find newer papers that have cited the seed document. This reveals how the research field has evolved and what new terminology is being used.

- Analyze "Author Keywords" and "Indexed Keywords": Scopus provides author-generated and database-indexed keywords for articles. Compile these from the most relevant papers found through citation tracking to identify both common and unusual terms.

- Journal and Author Profiling: Identify the top-performing journals and authors in your research area within Scopus. Analyze the language and keywords consistently used in their abstracts and titles, as these often set the standard for the field.
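The forward and backward tracking step is a breadth-first traversal of a citation graph. This Python sketch assumes reference lists and "Cited by" results have already been exported from Scopus into a simple dictionary; it does not call any Scopus API, and the paper IDs and keywords are invented. Keywords that appear in only one collected paper are surfaced as candidates for zero-volume exploration.

```python
from collections import Counter

# Hypothetical citation records standing in for exported Scopus data:
# "refs" are backward links (reference list), "cited_by" are forward links.
PAPERS = {
    "seed": {"refs": ["p1", "p2"], "cited_by": ["p3", "p4"],
             "keywords": ["kinase inhibitor", "selectivity profiling"]},
    "p1": {"refs": [], "cited_by": ["seed"], "keywords": ["kinase inhibitor", "ATP-binding pocket"]},
    "p2": {"refs": [], "cited_by": ["seed"], "keywords": ["selectivity profiling"]},
    "p3": {"refs": ["seed"], "cited_by": ["p5"],
           "keywords": ["Kinase inhibitor", "covalent fragment tethering"]},
    "p4": {"refs": ["seed"], "cited_by": [], "keywords": ["selectivity profiling", "kinase inhibitor"]},
    "p5": {"refs": ["p3"], "cited_by": [], "keywords": ["resistance mutations"]},
}


def snowball(papers: dict, seeds: list[str], depth: int = 1) -> set[str]:
    """Breadth-first expansion along backward (refs) and forward (cited_by) links."""
    visited = set(seeds)
    frontier = set(seeds)
    for _ in range(depth):
        next_frontier = set()
        for pid in frontier:
            record = papers.get(pid, {})
            for neighbour in record.get("refs", []) + record.get("cited_by", []):
                if neighbour not in visited:
                    next_frontier.add(neighbour)
        visited |= next_frontier
        frontier = next_frontier
    return visited


def keyword_tally(papers: dict, ids: set[str]) -> Counter:
    """Count keyword occurrences (case-insensitive) across the collected papers."""
    return Counter(kw.lower() for pid in ids for kw in papers.get(pid, {}).get("keywords", []))


def rare_keywords(tally: Counter, max_count: int = 1) -> list[str]:
    """Terms used by very few papers, sorted alphabetically."""
    return sorted(kw for kw, n in tally.items() if n <= max_count)
```

Increasing `depth` widens the sample one citation generation at a time, which keeps the growth of the collected set under manual control.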

arXiv: Tapping into the Preprint Frontier

arXiv hosts preprints, which represent the very cutting edge of research, often employing terminology before it becomes standardized. Mining it requires a different, more dynamic approach.

Experimental Protocol: Trend Analysis in Preprints

- Semantic Search and Regular Monitoring: Use arXiv's search functionality, which is less reliant on formal indexing, to run broad semantic queries. Subscribe to RSS feeds for specific categories (e.g., cs.AI, physics.med-ph) to monitor new submissions.

- Abstract and Introduction Mining: Focus on the abstract and introduction sections of recent preprints. These sections often state the novelty of the work and may use phrases like "we introduce the concept of..." or "termed as," which are prime sources for zero-volume keywords that describe new ideas.

- Cross-Reference with Published Literature: When a preprint on arXiv is subsequently published in a peer-reviewed journal, compare the language between the two versions. The changes often reveal how the community and peer review process are refining the nomenclature, providing insight into the adoption of new terms.
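The monitoring and mining steps can be sketched against the public arXiv API. The Python below builds a newest-first query URL for one category and scans abstract text for the cue phrases mentioned above; it does not fetch or parse the Atom feed the API returns. The regular expression is a crude heuristic assumed for illustration (it keeps up to four words after a cue and stops at punctuation), so its output is a shortlist to read, not a vocabulary. The category and phrase in the usage are placeholders.

```python
import re
from urllib.parse import urlencode

ARXIV_API = "http://export.arxiv.org/api/query"

# Cue phrases from the text; the capture keeps up to four following words,
# skips an optional opening quote, and stops at punctuation.
COINAGE = re.compile(
    r"\b(?:we introduce the concept of|termed(?: as)?)\s+"
    r"[\"'\u201c]?([a-z][\w-]*(?:\s+[a-z][\w-]*){0,3})",
    re.IGNORECASE,
)


def arxiv_query_url(category: str, phrase: str, max_results: int = 50) -> str:
    """Newest-first arXiv API query for a phrase in abstracts within one category."""
    params = {
        "search_query": f'cat:{category} AND abs:"{phrase}"',
        "sortBy": "submittedDate",
        "sortOrder": "descending",
        "start": 0,
        "max_results": max_results,
    }
    return ARXIV_API + "?" + urlencode(params)


def coined_terms(abstract: str) -> list[str]:
    """Return lowercased candidate terms introduced by the cue phrases."""
    return [m.group(1).lower() for m in COINAGE.finditer(abstract)]
```
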

Quantitative Comparison of Keyword Mining Strategies

The table below summarizes the core functionalities, strengths, and ideal use cases for mining keyword ideas across the three databases.

Table 1: Comparative Analysis of Keyword Mining Strategies in Major Databases

| Database | Core Mining Methodology | Primary Strength | Key Metric / Outcome |
|---|---|---|---|
| PubMed | WINK Technique, MeSH Analysis, Proximity Search | Rigorous, controlled vocabulary for biomedical fields | Up to 69.81% more articles retrieved in systematic reviews [25] |
| Scopus | Citation Tracking, Bibliometric Analysis, Author/Journal Profiling | Interdisciplinary coverage and powerful citation analysis | Identification of foundational and emerging literature via citation networks |
| arXiv | Preprint Trend Analysis, Semantic Search | Access to nascent terminology before peer-reviewed publication | Early detection of emerging concepts and nomenclature in fast-moving fields |

The Scientist's Toolkit: Essential Research Reagents for Literature Mining

Effective keyword mining relies on a suite of digital tools and resources that function as the essential "research reagents" for this process.

Table 2: Key Research Reagent Solutions for Literature Mining

| Tool / Resource | Function | Application in Keyword Discovery |
|---|---|---|
| VOSviewer | Scientific Data Visualization Software | Generates network visualization charts to analyze keyword interconnections and strength, as used in the WINK technique [25]. |