Temporal Validation in Hormone Sampling: Protocols for Precision in Clinical Research and Drug Development

Abstract

This article provides a comprehensive framework for establishing temporally valid hormone sampling protocols, a critical component for data integrity in clinical trials and endocrine research. It addresses the foundational importance of timing in capturing dynamic hormone fluctuations, outlines robust methodological approaches for protocol design and implementation, and presents strategies for troubleshooting common analytical and logistical challenges. The content further delves into validation techniques to ensure protocol robustness and comparative analysis of different methodological frameworks. Aimed at researchers, scientists, and drug development professionals, this guide synthesizes current best practices and emerging trends to enhance the accuracy, reliability, and regulatory compliance of hormonal data.

The Critical Role of Timing: Understanding Hormone Dynamics and the Imperative for Temporal Validation

Defining Temporal Validation in the Context of Endocrine Biomarkers

Temporal validation represents a critical phase in the endocrine biomarker development pipeline, ensuring that biomarker-disease relationships remain stable and predictive across different time points and populations. In the context of endocrine research, this process must account for rhythmic hormonal fluctuations, longitudinal biological changes, and evolving environmental factors that influence biomarker performance. This comprehensive guide examines temporal validation frameworks through the lens of comparative experimental approaches, analyzing methodological rigor across study designs, statistical tools, and analytical platforms. By synthesizing current regulatory standards with emerging validation technologies, we provide researchers with evidence-based protocols for establishing temporally robust endocrine biomarkers that withstand the challenges of clinical implementation across diverse physiological states and temporal contexts.

Temporal validation confirms that a biomarker's predictive accuracy and clinical utility remain consistent when applied to data collected at different time points or from populations sampled in distinct temporal eras [1]. For endocrine biomarkers, this process is particularly complex due to the inherent rhythmicity of hormonal systems and the dynamic interplay between endocrine functions and physiological states. The fundamental premise of temporal validation rests on demonstrating that biological relationships identified during discovery phases persist despite temporal shifts in environmental exposures, assay technologies, and population characteristics.

The critical importance of temporal validation has been underscored by recent research documenting significant temporal trends in endocrine parameters. A comprehensive 2025 systematic review analyzing data from over 1 million subjects revealed a significant progressive decline in serum testosterone and luteinizing hormone (LH) levels in healthy men between 1970 and 2024, independent of age and BMI [1]. This finding demonstrates how population-level hormonal shifts can potentially compromise biomarker performance if not accounted for during validation. Similarly, research on menstrual cycle dynamics has shown that hormonal milieu influences brain structure [2], highlighting the need to validate biomarkers across different cyclic phases in female populations.

Within regulatory frameworks, temporal validation represents a specialized component of the "fit-for-purpose" approach endorsed by the FDA's 2025 Bioanalytical Method Validation for Biomarkers guidance [3]. This guidance recognizes that biomarker validation must be tailored to the specific Context of Use (COU), with temporal stability being paramount for biomarkers intended for longitudinal monitoring or population screening. The remarkably low success rate of biomarker development—with only approximately 0.1% of potentially clinically relevant cancer biomarkers progressing to routine clinical use [4]—further emphasizes the necessity of rigorous temporal validation protocols.

Methodological Framework for Temporal Validation

Core Validation Parameters and Metrics

Temporal validation of endocrine biomarkers requires assessment across multiple analytical and clinical parameters, with specific acceptance criteria tailored to the biomarker's intended context of use. The key validation parameters with corresponding evaluation metrics are detailed in Table 1.

Table 1: Key Parameters and Metrics for Temporal Validation of Endocrine Biomarkers

| Validation Parameter | Evaluation Metrics | Temporal Considerations |
|---|---|---|
| Analytical Stability | Intra-assay & inter-assay CV; Signal drift assessment; Reference material stability | Instrument calibration consistency over time; Reagent lot-to-lot variability; Long-term sample storage effects |
| Biological Consistency | Coefficient of variation across biological cycles; Intraclass correlation coefficient (ICC) | Hormonal cycle effects (circadian, menstrual); Seasonal variations; Age-dependent changes |
| Clinical Performance Stability | Sensitivity, specificity, PPV, NPV; ROC-AUC with confidence intervals; Calibration curves | Maintenance of predictive values across sampling epochs; Consistency of optimal decision thresholds |
| Population Trend Resistance | Meta-regression analysis; Multivariate adjustment for temporal confounders | Resistance to population-level hormonal shifts; Consistency across generational cohorts |

The analytical validity of a biomarker measurement must be established first, ensuring the assay itself produces reproducible results across multiple time points [4]. For endocrine biomarkers, this includes demonstrating minimal diurnal and cyclical variation unrelated to the pathological condition being assessed. The 2025 FDA Biomarker Method Validation guidance emphasizes that, unlike pharmacokinetic assays, biomarker validation typically cannot rely on spike-recovery of reference standards identical to the endogenous analyte [3]. Instead, parallelism assessments that demonstrate similar behavior between calibrators and endogenous analytes across dilutions become crucial for establishing relative accuracy.
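One way to picture a parallelism assessment is as a check that dilution-corrected concentrations of an endogenous sample agree across a dilution series. The sketch below is illustrative only: the function name `parallelism_cv`, the example readings, and the 30% CV limit are assumptions made for this example and are not taken from the guidance.

```python
from statistics import mean, stdev


def parallelism_cv(measured, dilution_factors):
    """Return dilution-corrected concentrations and their %CV across dilutions."""
    if len(measured) != len(dilution_factors) or len(measured) < 2:
        raise ValueError("need at least two matched dilution points")
    # Multiply each reading by its dilution factor to recover the neat concentration.
    corrected = [m * d for m, d in zip(measured, dilution_factors)]
    cv_percent = 100.0 * stdev(corrected) / mean(corrected)
    return corrected, cv_percent


# Hypothetical serial dilution of one endogenous sample (arbitrary units).
measured = [50.0, 26.0, 12.0, 6.5]
factors = [1, 2, 4, 8]
corrected, cv = parallelism_cv(measured, factors)
is_parallel = cv <= 30.0  # assumed acceptance limit; set per context of use
```

A low CV across the corrected values suggests that the endogenous analyte dilutes in the same way as the calibrators; the appropriate limit depends on the biomarker's context of use.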

Statistical methodologies for temporal validation extend beyond conventional biomarker performance metrics. Researchers must employ longitudinal correlation analyses, mixed-effects models accounting for repeated measures, and time-series analyses to differentiate true biological rhythms from random fluctuations [5]. For biomarkers intended to track disease progression or treatment response, trajectory analyses and growth curve modeling establish whether the biomarker captures meaningful temporal patterns rather than random biological noise.

Experimental Designs for Temporal Assessment

Robust temporal validation employs specific experimental designs that capture biomarker behavior across relevant timeframes:

Dense Sampling Designs: Intensive longitudinal sampling captures biological rhythms essential for endocrine biomarker validation. A 2025 neuroimaging study exemplifies this approach, conducting 25-30 test sessions across menstrual cycles to map structural brain changes to daily hormonal fluctuations [2]. Such designs enable researchers to distinguish cycle-dependent variations from persistent pathological patterns.

Multi-Cohort Temporal Alignment: Studying biomarker performance across cohorts recruited in different eras tests temporal transportability. The systematic review on male testosterone trends effectively implemented this approach by analyzing 1,256 papers with data spanning 1970-2024 [1]. Such designs identify secular trends that might limit biomarker longevity.

Split-Time Validation: Dividing longitudinal data into temporally distinct discovery and validation sets provides the most direct assessment of temporal performance. This approach mirrors cross-validation principles but with time as the splitting criterion rather than random sampling.
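A minimal sketch of split-time validation follows, assuming each record carries a collection year, a biomarker score, and a binary outcome label. The record layout, the cutoff year, and the data are hypothetical; the AUC is computed with the rank-sum formulation rather than any particular library.

```python
def auc(scores, labels):
    """ROC-AUC as the fraction of positive/negative pairs ranked correctly (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("both classes are required")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def split_by_time(records, cutoff_year):
    """Split records into an earlier discovery set and a later validation set."""
    earlier = [r for r in records if r["year"] < cutoff_year]
    later = [r for r in records if r["year"] >= cutoff_year]
    return earlier, later


# Hypothetical records: (collection year, biomarker score, outcome label).
raw = [
    (2015, 0.9, 1), (2016, 0.2, 0), (2017, 0.8, 1),
    (2018, 0.3, 0), (2019, 0.4, 0), (2019, 0.7, 1),
    (2021, 0.6, 1), (2021, 0.5, 0), (2022, 0.4, 1),
    (2023, 0.3, 0), (2023, 0.8, 1), (2024, 0.7, 0),
]
records = [{"year": y, "score": s, "label": l} for y, s, l in raw]

discovery, validation = split_by_time(records, cutoff_year=2020)
auc_discovery = auc([r["score"] for r in discovery], [r["label"] for r in discovery])
auc_validation = auc([r["score"] for r in validation], [r["label"] for r in validation])
```

In this toy data the AUC falls from the discovery era to the validation era, which is the kind of degradation a split-time design is meant to expose.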

Table 2: Comparison of Experimental Designs for Temporal Validation

| Design Type | Key Features | Advantages | Limitations |
|---|---|---|---|
| Dense Sampling | High-frequency measurements across biological cycles | Captures rhythmic patterns; Maps acute responses | Resource-intensive; Participant burden |
| Longitudinal Cohort | Repeated measures over extended duration | Assesses long-term stability; Tracks progression | Attrition; Technology obsolescence |
| Multi-Era Meta-Analysis | Aggregates data collected across different time periods | Assesses secular trend effects; Large sample sizes | Heterogeneous methods; Confounding by era |

Comparative Analysis of Validation Approaches

Regulatory Standards and Methodological Frameworks

The evolving regulatory landscape for biomarker validation reflects growing recognition of the unique challenges posed by temporal factors. The 2025 FDA Bioanalytical Method Validation for Biomarkers (BMVB) guidance explicitly differentiates biomarker validation from pharmacokinetic assay validation, endorsing a "fit-for-purpose" approach that should be tailored to the biomarker's specific context of use [3]. This represents a significant advancement from the 2018 guidance that treated biomarkers similarly to drug concentration assays.

Compared to traditional ELISA-based validation, advanced platforms offer distinct advantages for temporal validation studies. Meso Scale Discovery (MSD) electrochemiluminescence technology provides up to 100 times greater sensitivity than traditional ELISA, enabling detection of lower abundance biomarkers and providing a broader dynamic range critical for capturing hormonal fluctuations [4]. Liquid chromatography tandem mass spectrometry (LC-MS/MS) further surpasses ELISA in sensitivity and specificity while allowing simultaneous analysis of hundreds to thousands of proteins in a single run, facilitating comprehensive biomarker panels rather than single-analyte assessments.

The economic case for advanced validation platforms is compelling. A direct cost comparison demonstrated that measuring four inflammatory biomarkers (IL-1β, IL-6, TNF-α and IFN-γ) using individual ELISAs costs approximately $61.53 per sample, while MSD's multiplex assay reduces the cost to $19.20 per sample—a saving of $42.33 per sample [4]. For long-term temporal validation studies requiring repeated measures, these cost differences become substantial while simultaneously improving data quality.
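To see how the per-sample difference scales in a repeated-measures design, the arithmetic can be sketched as below. The per-sample costs are the figures quoted above [4]; the participant and time-point counts are hypothetical values chosen only for illustration.

```python
ELISA_COST_PER_SAMPLE = 61.53  # four individual ELISAs, as quoted above
MSD_COST_PER_SAMPLE = 19.20    # one four-plex MSD assay, as quoted above


def cumulative_saving(n_participants, n_timepoints):
    """Total saving when every participant is sampled at every time point."""
    per_sample = ELISA_COST_PER_SAMPLE - MSD_COST_PER_SAMPLE
    return round(per_sample * n_participants * n_timepoints, 2)


# Hypothetical dense-sampling study: 50 participants, 28 time points each.
saving = cumulative_saving(n_participants=50, n_timepoints=28)
```

Under these assumed study dimensions (1,400 samples), the $42.33 per-sample difference accumulates to roughly $59,000.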

Analytical Performance Across Technology Platforms

The selection of analytical platforms significantly influences temporal validation outcomes through their differential susceptibility to technological drift and analytical variability. MSD's U-PLEX multiplexed immunoassay platform allows researchers to design custom biomarker panels and measure multiple analytes simultaneously within a single sample, reducing both inter-assay variability and temporal inconsistencies [4]. This multiplexing capability is particularly valuable for endocrine biomarkers that often function as panels rather than isolated measurements.

Mass spectrometry-based approaches offer orthogonal advantages for temporal validation. LC-MS/MS provides superior specificity for distinguishing structurally similar hormones and their metabolites, potentially detecting degradation products or subtle structural modifications that might accumulate during long-term sample storage [4]. This analytical precision directly enhances temporal comparability by minimizing assay-derived variability.

A critical methodological consideration in temporal validation is the handling of batch effects—systematic technical variations introduced when samples are processed in different batches across time. The biomarker validation literature emphasizes that "randomization in biomarker discovery should be carried out to control for non-biological experimental effects due to changes in reagents, technicians, machine drift, etc. that can result in batch effects" [5]. Advanced normalization algorithms and statistical correction methods have been developed specifically to address these temporal technical artifacts.
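As a minimal sketch of the randomization idea, samples from all participants and time points can be shuffled before being assigned to assay batches, so that collection time is not systematically confounded with batch. The function name `randomize_batches`, the sample ID format, and the fixed seed are assumptions for this example; blocked or stratified schemes may be preferable in practice.

```python
import random


def randomize_batches(sample_ids, n_batches, seed=0):
    """Shuffle sample IDs reproducibly and deal them into batches round-robin."""
    rng = random.Random(seed)  # fixed seed so the allocation can be audited later
    shuffled = list(sample_ids)
    rng.shuffle(shuffled)
    return [shuffled[i::n_batches] for i in range(n_batches)]


# Hypothetical study: 4 participants sampled at 6 time points each.
samples = [f"P{p:02d}_T{t}" for p in range(1, 5) for t in range(1, 7)]
batches = randomize_batches(samples, n_batches=3, seed=42)
```

Recording the seed alongside the batch allocation makes the assignment reproducible if the run order is later questioned.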

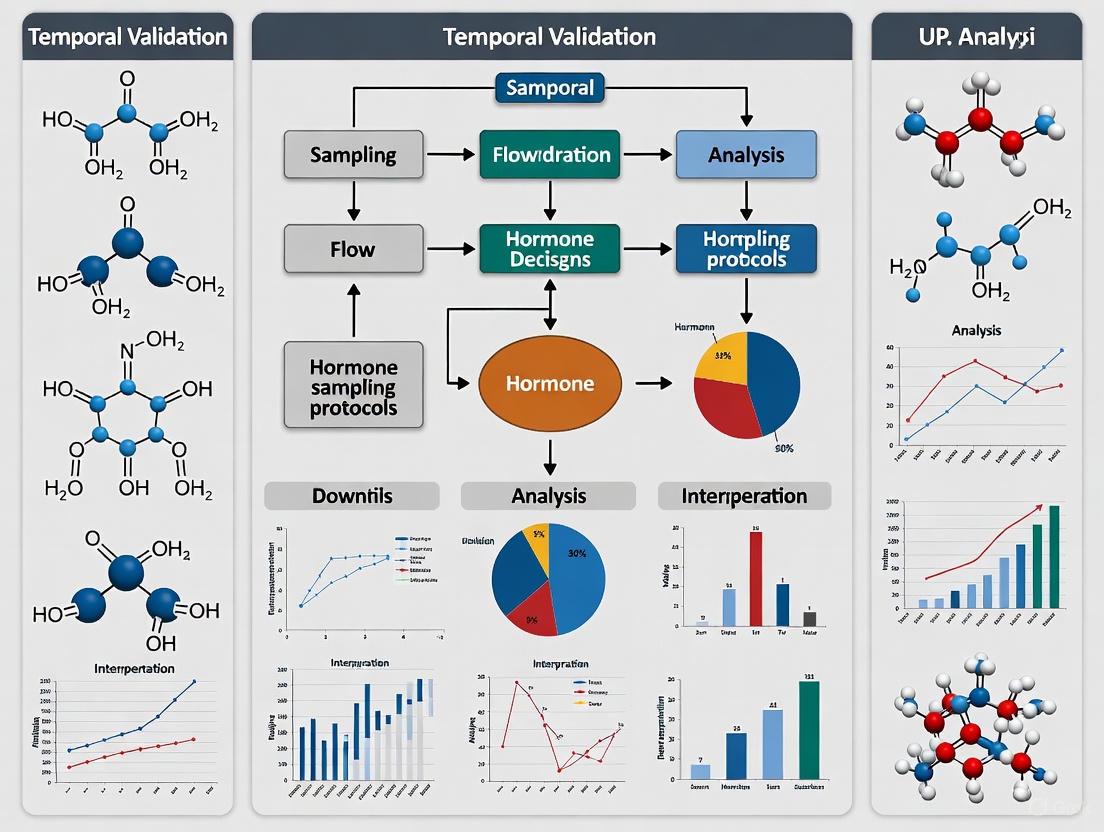

Diagram 1: Temporal Validation Framework in Biomarker Development. This workflow illustrates the integration of temporal assessment throughout the biomarker development pipeline, highlighting key components specific to endocrine biomarkers.

Experimental Protocols for Temporal Validation

Protocol for Dense Longitudinal Hormonal Sampling

Objective: To validate the temporal stability of endocrine biomarkers across relevant biological cycles (circadian, menstrual, seasonal).

Methodology Summary: Based on the dense sampling approach implemented in a 2025 neuroendocrine study [2]:

- Participant Selection: Recruit participants representing target physiological states (typical cycles, endocrine disorders, hormonal contraceptive use)

- Sampling Frequency: Conduct 25-30 test sessions across the menstrual cycle, covering the follicular phase (approximately days 1-13), ovulation (around day 14), and the luteal phase (approximately days 15-28) of a nominal 28-day cycle

- Temporal Alignment: Standardize sampling times to control for diurnal variation (e.g., 8-10 AM for cortisol measurements)

- Multimodal Data Collection: Simultaneously collect biomarker samples (serum, plasma), hormonal assessments (estradiol, progesterone), and clinical phenotyping

- Sample Processing: Implement standardized processing protocols with temporal tracking of sample storage conditions

Analytical Approach:

- Use singular value decomposition (SVD) analyses to generate spatiotemporal patterns of biomarker changes

- Apply linear mixed-effects models with random intercepts for participants to account for within-subject correlation

- Conduct cross-correlation analyses between hormone levels and biomarker concentrations

- Calculate intraclass correlation coefficients (ICC) to quantify temporal stability
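The ICC step in the list above can be sketched as follows. Several ICC forms exist and the source does not state which one was used; this example assumes the one-way random-effects form, ICC(1,1), on balanced data, with made-up measurements.

```python
from statistics import mean


def icc_oneway(data):
    """ICC(1,1) for balanced data: rows are participants, columns are repeat measurements."""
    n, k = len(data), len(data[0])
    if n < 2 or k < 2 or any(len(row) != k for row in data):
        raise ValueError("need a balanced table with at least 2 rows and 2 columns")
    grand = mean(v for row in data for v in row)
    row_means = [mean(row) for row in data]
    # Between-participant and within-participant mean squares from one-way ANOVA.
    ms_between = k * sum((m - grand) ** 2 for m in row_means) / (n - 1)
    ms_within = sum(
        (v - m) ** 2 for row, m in zip(data, row_means) for v in row
    ) / (n * (k - 1))
    return (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)


# Hypothetical hormone levels: 3 participants, 3 sessions each.
levels = [[10, 11, 12], [20, 21, 22], [30, 31, 32]]
icc = icc_oneway(levels)
```

Here the between-participant spread dominates the session-to-session spread, so the ICC is close to 1; values nearer 0 would indicate that repeat measurements on the same person are no more alike than measurements on different people.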

This protocol successfully identified that in typical menstrual cycles, spatiotemporal patterns of brain volume changes were associated with serum progesterone levels, while during oral contraceptive use, patterns were associated with serum estradiol levels [2]. Such cycle-phase specific associations are critical for temporal validation of female endocrine biomarkers.

Protocol for Multi-Era Temporal Transportability Assessment

Objective: To evaluate biomarker performance consistency across temporal epochs and account for population-level hormonal shifts.

Methodology Summary: Adapted from the systematic review on temporal trends in male hormones [1]:

- Data Collection: Identify published and unpublished datasets measuring the target biomarker across multiple time periods

- Era Stratification: Group data by collection year (e.g., 1970-1979, 1980-1989, etc.)

- Covariate Adjustment: Extract and harmonize data on potential temporal confounders (age, BMI, assay methodology, geographical location)

- Meta-Regression Analysis: Perform weighted regression with collection year as independent variable and biomarker levels as dependent variable

- Performance Comparison: Calculate biomarker performance metrics (sensitivity, specificity, AUC) within each temporal stratum

Analytical Approach:

- Apply mixed-effect meta-regression models using restricted maximum likelihood estimation

- Adjust for subjects' age, BMI, and assay methodology as covariates

- Test for autocorrelation using Durbin-Watson analysis

- Calculate bias ratios (BR) to compare reference interval limits across eras
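The regression and autocorrelation steps above can be illustrated with a deliberately simplified sketch. It fits a weighted least-squares line of study-level mean hormone level on collection year and computes the Durbin-Watson statistic on the time-ordered residuals. It is not a mixed-effects meta-regression with restricted maximum likelihood, it omits covariate adjustment, and the study means and weights are hypothetical.

```python
def weighted_linear_fit(x, y, w):
    """Weighted least-squares intercept and slope for y = a + b*x."""
    sw = sum(w)
    xbar = sum(wi * xi for wi, xi in zip(w, x)) / sw
    ybar = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxx = sum(wi * (xi - xbar) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - xbar) * (yi - ybar) for wi, xi, yi in zip(w, x, y))
    slope = sxy / sxx
    return ybar - slope * xbar, slope


def durbin_watson(residuals):
    """Durbin-Watson statistic; values near 2 suggest little first-order autocorrelation."""
    num = sum((residuals[i] - residuals[i - 1]) ** 2 for i in range(1, len(residuals)))
    return num / sum(r ** 2 for r in residuals)


# Hypothetical study-level data: mid-year of collection, mean level, study weight.
years = [1975, 1985, 1995, 2005, 2015]
mean_levels = [20.0, 19.0, 18.5, 17.0, 16.5]
weights = [1, 2, 2, 3, 3]  # e.g. proportional to inverse variance (assumed)

intercept, slope = weighted_linear_fit(years, mean_levels, weights)
residuals = [y - (intercept + slope * x) for x, y in zip(years, mean_levels)]
dw = durbin_watson(residuals)
```

A negative slope corresponds to a decline over calendar time; the Durbin-Watson statistic ranges from 0 to 4 and should be computed on residuals sorted by collection year.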

This approach demonstrated its utility by revealing a significant negative linear relationship between testosterone levels and year of measurement (p=0.033) even after adjusting for age, BMI, and assay methodology [1]. Such findings necessitate periodic recalibration of endocrine biomarker reference intervals.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Research Reagents and Platforms for Temporal Validation Studies

| Reagent/Platform | Function in Temporal Validation | Key Features for Temporal Studies |
|---|---|---|
| U-PLEX Multiplex Immunoassay (MSD) | Simultaneous measurement of biomarker panels | Customizable panels; Reduced sample volume; Minimized inter-assay variability |
| LC-MS/MS Platforms | High-specificity quantification of hormones and metabolites | Superior sensitivity; Structural confirmation; Multiplexing capabilities |
| Stable Isotope-Labeled Internal Standards | Analytical precision normalization | Corrects for instrument drift; Compensates for matrix effects |
| Multiplexed Electrochemiluminescence Detection | Sensitive biomarker quantification | Wide dynamic range (up to 100x ELISA sensitivity); Low abundance detection |
| Automated Sample Preparation Systems | Standardized pre-analytical processing | Reduced technical variability; Improved reproducibility across batches |

The selection of appropriate research reagents and platforms significantly influences the temporal robustness of validation data. Multiplexed platforms like MSD's U-PLEX system provide distinct advantages for temporal studies by enabling consistent measurement of biomarker panels across multiple time points while minimizing technical variability [4]. The platform's electrochemiluminescence detection provides a wide dynamic range essential for capturing physiological hormonal fluctuations that might exceed the limits of traditional ELISA.

Liquid chromatography tandem mass spectrometry (LC-MS/MS) offers orthogonal validation for immunoassay-based findings, providing structural specificity that can distinguish true biomarker changes from analytical interference that might vary across time points [4]. The incorporation of stable isotope-labeled internal standards further enhances temporal comparability by normalizing for analytical drift and matrix effects that can differ between sample batches processed at different times.

Diagram 2: Comparative Workflow for Temporal Validation Methodologies. This diagram contrasts validation approaches across different analytical platforms, highlighting the integration of quality control measures essential for temporal studies.

Data Presentation and Statistical Analysis

Performance Metrics Across Validation Studies

The temporal validation of endocrine biomarkers requires comprehensive statistical assessment using multiple complementary metrics. Table 4 summarizes the key performance indicators from representative temporal validation studies, demonstrating the range of acceptable performance across biomarker classes.

Table 4: Comparative Performance Metrics in Temporal Validation Studies

| Biomarker Category | Temporal Stability Metric | Typical Acceptance Threshold | Exemplary Study Findings |
|---|---|---|---|
| Reproductive Hormones | Intraclass Correlation Coefficient (ICC) | >0.7 for clinical use | Menstrual cycle studies: ICC=0.65-0.89 across phases [2] |
| Metabolic Biomarkers | Coefficient of Variation (CV) across time | <15% analytical; <30% biological | Metabolomics studies: 12-28% biological CV in psychiatric disorders [6] |
| Thyroid Hormones | Reference Interval Stability | <10% shift in limits over decade | Data mining algorithms: <7% change in TSH RIs using EM method [7] |
| Stress Hormones | Diurnal Pattern Consistency | Peak:trough ratio >2.0 | Cortisol research: 2.3-3.1 ratio maintained across seasons |

Statistical methodologies for temporal validation must account for both fixed and random sources of variation. Mixed-effects models with random intercepts for participants effectively capture within-subject correlation across repeated measures, while fixed effects for temporal parameters (collection year, seasonal period, cycle phase) quantify systematic trends. For biomarkers with established rhythmic patterns, cosinor analysis provides robust parameterization of period, amplitude, and phase of biological cycles.
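A single-component cosinor fit can be sketched as an ordinary least-squares problem, because the model y = M + A·cos(ωt − θ) is linear in M, A·cos θ, and A·sin θ. The sketch below assumes a fixed, known period of 24 hours and uses synthetic data; the function names `solve3` and `cosinor_fit` are illustrative and do not refer to any published package.

```python
import math


def solve3(a, b):
    """Solve a 3x3 linear system by Gauss-Jordan elimination with partial pivoting."""
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        if abs(m[col][col]) < 1e-12:
            raise ValueError("singular design: sampling times do not identify the rhythm")
        for r in range(3):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [vr - f * vc for vr, vc in zip(m[r], m[col])]
    return [m[i][3] / m[i][i] for i in range(3)]


def cosinor_fit(times_h, values, period_h=24.0):
    """Return (mesor, amplitude, peak time in hours) for a fixed-period cosinor model."""
    omega = 2 * math.pi / period_h
    rows = [(1.0, math.cos(omega * t), math.sin(omega * t)) for t in times_h]
    # Normal equations X'X b = X'y for the three linear coefficients.
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    xty = [sum(r[i] * y for r, y in zip(rows, values)) for i in range(3)]
    mesor, beta, gamma = solve3(xtx, xty)
    amplitude = math.hypot(beta, gamma)
    peak_time_h = (math.atan2(gamma, beta) / omega) % period_h
    return mesor, amplitude, peak_time_h


# Synthetic hourly profile: mean level 10, amplitude 4, peak at 08:00.
times = list(range(24))
profile = [10 + 4 * math.cos(2 * math.pi * (t - 8) / 24) for t in times]
mesor, amplitude, peak_time = cosinor_fit(times, profile)
```

On this noise-free series the fit recovers the generating parameters; with real data the residual variance and confidence limits for amplitude and acrophase would also need to be estimated.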

The handling of missing temporal data requires special consideration in validation studies. Multiple imputation methods preserving the temporal structure of the data are preferred over complete-case analysis, which may introduce bias if missingness correlates with temporal factors. Sensitivity analyses comparing results from different missing data approaches strengthen the robustness of temporal validation conclusions.

Temporal validation represents a non-negotiable component of endocrine biomarker development, ensuring that biomarker-disease relationships persist despite hormonal rhythms, population trends, and technological evolution. The comparative analysis presented in this guide demonstrates that advanced multiplexed platforms and mass spectrometry-based methods provide distinct advantages over traditional approaches for temporal studies, offering enhanced sensitivity, specificity, and multiplexing capabilities while simultaneously reducing per-sample costs.

The field of temporal validation continues to evolve with several promising directions. First, the integration of computational biology and machine learning approaches for modeling complex temporal patterns may enhance our ability to distinguish pathological deviations from normal physiological rhythms. Second, the development of standardized reference materials for endocrine biomarkers would significantly improve longitudinal comparability across studies and eras. Finally, regulatory frameworks continue to mature, with the 2025 FDA BMVB guidance representing an important step toward recognizing the unique validation requirements for biomarkers compared to pharmacokinetic assays [3].

For researchers embarking on temporal validation of endocrine biomarkers, the evidence-based protocols and comparative platform analyses provided herein offer a rigorous foundation. By implementing dense sampling designs, controlling for technological drift, and applying appropriate statistical models for temporal data, the scientific community can enhance the translational success of endocrine biomarkers from discovery to clinical implementation.

The endocrine system orchestrates hormonal signals with distinct temporal patterns: circadian rhythms (approximately 24 hours), menstrual cycle-dependent variations (approximately 28 days), and ultradian, pulsatile secretion (recurring over minutes to hours). These rhythms are fundamental to maintaining physiological homeostasis, influencing everything from metabolic processes to reproductive function. A deeper understanding of these patterns is crucial for developing accurate diagnostic protocols and effective therapeutic interventions. Disruptions to these rhythms, as seen in shift work or sleep disorders, are increasingly linked to significant health consequences, including menstrual cycle disruption, increased early pregnancy loss, infertility, and metabolic diseases [8] [9].

The study of hormonal rhythms requires specialized methodologies to distinguish endogenous circadian patterns from responses to external stimuli like sleep, light, and food intake. The constant routine (CR) protocol, where subjects are kept in a state of constant wakefulness, posture, and caloric intake in dim light, is considered the gold standard for unmasking endogenous circadian rhythms [9]. This review synthesizes current knowledge on the multifaceted temporal secretion patterns of key hormones, providing a comparative analysis of their regulation and the experimental approaches used to study them, all within the critical context of temporal validation for hormone sampling protocols.

Circadian Hormonal Regulation

The suprachiasmatic nucleus (SCN) of the hypothalamus serves as the body's central pacemaker, synchronizing peripheral clocks throughout the body with the external light-dark cycle. This master clock receives light input via the retinohypothalamic tract (RHT) and coordinates rhythmic physiological processes [8] [10]. On a molecular level, circadian rhythms are generated by a transcriptional-translational feedback loop (TTFL) involving core clock genes. The CLOCK and BMAL1 proteins form a heterodimer that activates the transcription of Period (Per) and Cryptochrome (Cry) genes. PER and CRY proteins then accumulate, complex together, and translocate back to the nucleus to inhibit their own transcription, completing a cycle that takes approximately 24 hours [8] [10]. This cellular clockwork drives the daily oscillations of numerous hormones.

Key Circadian Hormones

- Melatonin: Produced by the pineal gland, melatonin is a quintessential circadian hormone and a potent zeitgeber (time-giver). Its secretion is tightly restricted to the night, peaking in darkness to promote sleep in humans. The SCN regulates its production, and melatonin, in turn, feeds back to the SCN to help synchronize circadian phases. It also acts on peripheral tissues via MT1 and MT2 receptors to coordinate local rhythms [10].

- Glucocorticoids (Cortisol): These steroids exhibit a robust circadian rhythm with a peak around wake-up time (the cortisol awakening response). Their secretion is regulated by a triad of mechanisms: the hypothalamic-pituitary-adrenal (HPA) axis, which receives input from the SCN; autonomic innervation of the adrenal gland; and the intrinsic adrenal clock, which gates the organ's sensitivity to ACTH. Glucocorticoids act as both rhythm drivers, regulating rhythmic gene expression via glucocorticoid response elements (GREs), and zeitgebers, resetting peripheral clocks by affecting Per gene expression [10].

- Thyroid-Stimulating Hormone (TSH): TSH secretion demonstrates a clear circadian pattern, with its highest pulse amplitude and frequency occurring during the night. Interestingly, its rhythm is modulated by sleep-wake states. Sleep deprivation has been shown to augment nightly TSH secretion, while sleep recovery after deprivation can suppress its circadian variation, primarily by altering pulse amplitude rather than frequency [11].

The following diagram illustrates the core molecular mechanism of the circadian clock and its relationship with key hormonal outputs.

Figure 1: The Central and Molecular Circadian Clock Regulating Hormonal Outputs. The suprachiasmatic nucleus (SCN) integrates light input to synchronize molecular clocks in cells, which in turn drive the rhythmic secretion of hormones like melatonin, cortisol, and TSH. These hormones can also provide feedback, acting as zeitgebers to fine-tune timing. Abbreviations: RHT, Retinohypothalamic Tract; TTFL, Transcriptional-Translational Feedback Loop.

Menstrual Cycle and Hormonal Phases

The menstrual cycle represents a dramatic example of a longer-period endocrine rhythm, primarily orchestrated by the interplay of gonadotropins (FSH and LH) and ovarian hormones (estradiol and progesterone). Research has revealed that the expression of circadian rhythms in female reproductive hormones is not constant but varies significantly across the different phases of the menstrual cycle.

Phase-Dependent Circadian Regulation

A critical study utilizing the constant routine (CR) protocol demonstrated that endogenous circadian regulation of reproductive hormones is more robust during the follicular phase compared to the luteal phase [9]. Under CR conditions, significant 24-hour rhythms were detected for estradiol (E2), progesterone (P4), LH, and FSH in the follicular phase. In contrast, during the luteal phase, only FSH and sex hormone-binding globulin (SHBG) maintained significant circadian rhythms [9]. This suggests that the hormonal milieu of the luteal phase may suppress the circadian expression of other key reproductive hormones.

The timing of peak secretion (acrophase) also differs between hormones, as detailed in Table 1. For instance, under both normal and CR conditions, the acrophase for progesterone occurs in the morning, while estradiol peaks during the night, and LH and FSH peak in the afternoon [9]. This complex, phase-shifted pattern of secretion ensures the precise timing of events such as ovulation.

Table 1: Circadian Rhythm Characteristics of Female Reproductive Hormones Across the Menstrual Cycle

| Hormone | Significant 24-h Rhythm in Follicular Phase (under CR) | Significant 24-h Rhythm in Luteal Phase (under CR) | Acrophase (Time of Peak) |
|---|---|---|---|
| Estradiol (E2) | Yes [9] | No [9] | Night [9] |
| Progesterone (P4) | Yes [9] | No [9] | Morning [9] |
| Luteinizing Hormone (LH) | Yes [9] | No [9] | Afternoon [9] |
| Follicle-Stimulating Hormone (FSH) | Yes [9] | Yes [9] | Afternoon [9] |
| Sex Hormone-Binding Globulin (SHBG) | Yes [9] | Yes [9] | Afternoon [9] |

Pulsatile Secretion Patterns

In addition to circadian and monthly rhythms, many hormones are secreted in a pulsatile or ultradian manner. This pattern is characterized by brief, repetitive bursts of secretion separated by periods of relative quiescence. Pulsatility is not merely noise; it is a fundamental feature of hormonal communication, often essential for maintaining target tissue sensitivity and preventing receptor desensitization.

Thyroid-Stimulating Hormone (TSH) Pulsatility

Thyroid-Stimulating Hormone (TSH) exemplifies a hormone under combined circadian and pulsatile control. Computer-assisted analysis of blood samples taken every 10 minutes for 24 hours reveals that TSH is secreted in approximately 9-11 pulses per 24-hour period [11]. These pulses are not randomly distributed; over 50% occur between 2000 h and 0400 h, aligning with the nocturnal rise in TSH levels [11]. The modulation of the circadian TSH rhythm by sleep appears to be achieved primarily through changes in pulse amplitude rather than alterations in pulse frequency or timing. Sleep deprivation increases the amplitude of nocturnal pulses, thereby elevating overall TSH levels, while sleep suppresses them [11].

Experimental Protocols for Temporal Validation

Accurately characterizing hormonal rhythms demands rigorous experimental designs that can isolate endogenous rhythms from confounding environmental factors.

The Constant Routine (CR) Protocol

The Constant Routine (CR) protocol is a rigorous experimental design used to unmask endogenous circadian rhythms by eliminating or uniformly distributing external time cues (zeitgebers) [9].

- Purpose: To measure pure endogenous circadian rhythmicity, free from the masking effects of sleep, activity, postural changes, and light-dark cycles.

- Key Methodology: Participants remain awake in a semi-recumbent posture for an extended period (e.g., ~50 hours) under dim light conditions. Caloric intake is distributed evenly across the 24-hour cycle as small, isocaloric snacks. Blood sampling is performed at regular intervals (e.g., hourly or more frequently) to measure hormone concentrations.

- Application: This protocol was pivotal in demonstrating the phase-dependent circadian regulation of estradiol, progesterone, and LH, proving that their rhythms are endogenously generated and not simply a response to the sleep-wake cycle [9].
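The timing logic of a constant routine can be sketched as a simple schedule generator. The 50-hour duration and hourly blood sampling follow the examples given above; the 2-hour snack interval, the start time, and the function name `constant_routine_schedule` are assumptions for illustration, since the source does not specify them.

```python
from datetime import datetime, timedelta


def constant_routine_schedule(start, duration_h=50, sample_every_min=60, snack_every_min=120):
    """Return evenly spaced blood-sampling and snack times over the protocol window."""
    end = start + timedelta(hours=duration_h)

    def times(step_min):
        out, t = [], start
        while t <= end:
            out.append(t)
            t += timedelta(minutes=step_min)
        return out

    return {"blood_samples": times(sample_every_min), "snacks": times(snack_every_min)}


# Hypothetical start time for one participant.
schedule = constant_routine_schedule(datetime(2025, 1, 6, 8, 0))
```

Keeping both event streams on fixed intervals is what distributes the masking effects of feeding evenly across circadian phase.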

High-Density Pulsatility Sampling

To capture rapid, pulsatile hormone secretion, a very different sampling strategy is required.

- Purpose: To characterize the frequency, amplitude, and timing of ultradian hormonal pulses.

- Key Methodology: Blood samples are collected at frequent intervals (e.g., every 10 minutes) over a sustained period, typically 24 hours, to adequately capture multiple pulse events [11]. The resulting time series data is analyzed using specialized computer algorithms (e.g., Cluster analysis, DESADE) to objectively identify and quantify pulses.

- Application: This method was used to establish the detailed pulsatile and circadian profile of TSH secretion in men and women, revealing roughly 9-11 pulses per 24 hours (a mean inter-pulse interval of about 2-3 hours) and nocturnal amplification [11].
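To make the pulse-identification step concrete, here is a minimal sketch in Python. It is a simple threshold rule on local maxima, not Cluster analysis or DESADE; the function name `detect_pulses`, the `min_rise` threshold, and the synthetic profile are all assumptions made for illustration.

```python
SAMPLE_INTERVAL_MIN = 10  # sampling interval used in the protocol above


def detect_pulses(conc, min_rise):
    """Return indices of local maxima rising at least min_rise above the preceding trough."""
    peaks = []
    trough = conc[0]
    for i in range(1, len(conc) - 1):
        # Lowest value seen since the last accepted peak (or since the start).
        trough = min(trough, conc[i - 1])
        is_local_max = conc[i] > conc[i - 1] and conc[i] >= conc[i + 1]
        if is_local_max and conc[i] - trough >= min_rise:
            peaks.append(i)
            trough = conc[i]  # restart trough tracking after a peak
    return peaks


# Synthetic 24 h profile (144 samples, arbitrary units): flat baseline of 1.0
# with 10 triangular pulses. This is made-up data, not a measured TSH series.
centres = [7 + 14 * k for k in range(10)]
series = [1.0 + max(max(0.0, 1.0 - 0.5 * abs(j - c)) for c in centres)
          for j in range(144)]

found = detect_pulses(series, min_rise=0.5)
intervals_min = [(b - a) * SAMPLE_INTERVAL_MIN for a, b in zip(found, found[1:])]
print(len(found), sum(intervals_min) / len(intervals_min))
```

On this synthetic profile the detector should recover all 10 pulses, spaced 140 minutes apart. Real series are noisy, so a fixed `min_rise` would normally be replaced by a threshold scaled to assay imprecision, which is what the dedicated algorithms address.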

The Scientist's Toolkit: Research Reagent Solutions

Studying hormonal rhythms relies on a suite of specialized tools and reagents, from advanced analytical instruments to novel sampling technologies. The table below details key solutions used in the field.

Table 2: Key Research Reagent Solutions for Hormone Rhythm Analysis

| Tool / Reagent | Function | Example Application |
|---|---|---|
| LC-MS/MS (Liquid Chromatography-Tandem Mass Spectrometry) | Gold-standard method for highly specific and sensitive quantification of hormones and their metabolites [12]. | Used in the DUTCH Test for comprehensive profiling of sex and adrenal hormones from dried urine [12]. |
| High-Sensitivity Immunoassays | Antibody-based tests (e.g., ELISA) for measuring low-abundance hormones in blood, serum, or saliva. | Employed in large-scale clinical studies to establish population-specific reference intervals (RIs) for thyroid hormones [13]. |
| Conductometric Biosensor | An electrochemical sensor that detects antibody-antigen reactions via changes in solution conductivity [14]. | Proof-of-concept for rapid, electronic quantification of Follicle-Stimulating Hormone (FSH) in urine samples [14]. |
| Smart Samplers (Immunoaffinity) | Paper-based samplers with immobilized antibodies that selectively capture target analytes during sample collection [15]. | Selective capture of Growth Hormone-Releasing Hormone (GHRH) analogs from serum for doping control analysis [15]. |
| Dried Serum/Urine Spots (DSS, DUS) | Minimally invasive sampling technique where blood or urine is spotted onto filter paper for stable transport and storage [15] [12]. | Enables convenient at-home collection for longitudinal hormone monitoring (e.g., DUTCH Test) [12]. |

The workflow for a comprehensive hormonal rhythm assessment, integrating various sampling and analytical techniques, is visualized below.

Figure 2: Experimental Workflow for Hormonal Rhythm Analysis. The process begins with selecting a sampling strategy appropriate for the rhythm of interest (e.g., Constant Routine for circadian, high-density for pulsatile), followed by collection using standard or advanced materials (e.g., dried spots, smart samplers). Analysis is performed via specific platforms, with data finally processed to characterize the rhythm's parameters.

The intricate temporal landscape of hormonal secretion—encompassing circadian, menstrual cycle, and pulsatile patterns—is a critical determinant of health and disease. The comparative analysis presented herein underscores that a "one-size-fits-all" approach to hormone sampling is inadequate for both research and clinical practice. The choice of sampling protocol—be it the Constant Routine for endogenous circadian profiling, high-density sampling for pulsatility, or longitudinal phase-specific sampling for menstrual cycle dynamics—must be precisely tailored to the biological question at hand.

The implications for drug development and diagnostic testing are profound. Ignoring hormonal rhythms can lead to misinterpretation of biomarker levels, inaccurate diagnoses, and suboptimal timing of therapies. Future directions must include the development and widespread adoption of temporally validated sampling protocols that account for these rhythms. Furthermore, the integration of novel technologies like smart samplers and biosensors holds the promise of making dense, longitudinal hormone monitoring more feasible in real-world settings. Ultimately, by embracing the temporal dimension of endocrinology, researchers and clinicians can unlock a deeper level of physiological understanding and pave the way for more personalized and effective medical interventions.

Impact of Temporal Variance on Data Integrity and Clinical Endpoints

Temporal variance—the natural fluctuation in physiological parameters over time—presents a significant challenge in clinical research and drug development. In the specific context of hormone sampling protocols, unaccounted temporal variability can compromise data integrity, distort research findings, and ultimately lead to invalid clinical endpoints. The reliability of endocrine research hinges on robust methodological standardization that acknowledges and controls for these inherent biological rhythms and measurement inconsistencies. This guide compares the impact of different temporal variance sources on data quality, providing researchers with evidence-based protocols to enhance the validity of hormone-related biomarkers and clinical outcomes. A systematic approach to temporal validation is no longer optional but essential for generating reproducible, clinically meaningful results in an era of increasingly precise digital health technologies [16].

Quantitative Impact of Temporal Variance on Data Quality

Temporal variability introduces significant noise and bias into physiological measurements, potentially obscuring true treatment effects and compromising clinical trial outcomes. The tables below synthesize empirical findings on key temporal variance sources and their quantified impacts on endocrine and clinical data.

Table 1: Documented Impacts of Temporal Variance on Hormone Measurement Reliability

| Variance Source | Measured Impact | Experimental Context | Citation |
|---|---|---|---|
| Single Timepoint Sampling | Non-consistent relationships with fitness metrics (positive, negative, or no correlation) | Repeated hormone sampling in free-living sparrows across breeding stages | [17] |
| Seasonal Hormone Variation | Significant repeatability in male stress-induced corticosterone over 3 months; no repeatability in baseline levels | Longitudinal study of mountain white-crowned sparrows | [17] |
| Long-term Hormone Trends | Significant negative linear regression between serum testosterone and year of measurement (p=0.033) | Systematic review of 1,256 papers (1971-2024) including 1,064,891 subjects | [1] |
| Sampling Region Variance | Higher cortisol/cortisone in occipital region vs. posterior vertex for hair analysis | Comparison of 53 participants providing 12 hair samples across two time points | [18] |

Table 2: Clinical Consequences of Timing Errors and Measurement Variability

| Error Source | Impact on Clinical Endpoints | Magnitude/Precision Range | Citation |
|---|---|---|---|
| Unsynchronized Medical Device Clocks | Erroneous event sequences affecting causality assessment | Conceptual accuracy estimates spanning 8 orders of magnitude | [19] |
| Risk Factor Measurement Error | Attenuation of logistic parameter estimates; reduced statistical power | Well-approximated by reliability coefficient for normal random errors | [20] |
| Digital Health Technology Gaps | Invalid, inaccurate, and unreliable derived endpoints | Focus on exclusion where missing data exceeds threshold | [16] |
| Hormone Assay Temporal Variability | Misestimation of disease probabilities in intervention groups | Impact on control vs. experimental group probability estimation | [20] |
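The attenuation noted in the "Risk Factor Measurement Error" row can be written compactly under the classical measurement error model. This is the standard textbook approximation, offered here as an assumption rather than the specific derivation in [20]; the symbols W, X, U, and λ are introduced for illustration only.

```latex
W = X + U, \qquad U \sim \mathcal{N}(0, \sigma_U^2), \qquad
\lambda = \frac{\sigma_X^2}{\sigma_X^2 + \sigma_U^2}, \qquad
\beta^{*} \approx \lambda \, \beta
```

Here X is the true hormone level, W the measured level, λ the reliability coefficient, β the true logistic coefficient, and β* the coefficient estimated from W. As a hypothetical example, a between-subject SD of 3 units and an error SD of 2 units give λ = 9/13 ≈ 0.69, so the estimated coefficient would be shrunk by roughly 31%.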

Experimental Protocols for Assessing Temporal Variance

Protocol for Longitudinal Hormone Sampling and Repeatability Analysis

Objective: To quantify intra-individual and inter-individual variance in hormone levels across biologically relevant timeframes to establish appropriate sampling frequencies [17].

Materials:

- Laboratory Equipment: Liquid chromatography-tandem mass spectrometry (LC-MS/MS) system for hormone quantification [18]

- Sampling Kits: Standardized blood collection tubes or hair sampling kits with defined anatomical landmarks (e.g., posterior vertex vs. occipital region) [18]

- Data Analysis Software: Statistical packages capable of mixed-effect modeling and repeatability calculations (R, Python, or Kaleidagraph/SigmaPlot for graphing) [21] [1]

Methodology:

- Study Design: Implement a repeated-measures design with sampling across multiple time points (e.g., different stages of breeding season, multiple times per day, or across years) [17].

- Sample Collection: Collect samples (blood, hair, feathers, or feces) using consistent protocols. For hair cortisol analysis, clearly define scalp regions using anatomical landmarks and maintain consistency across all participants [18].

- Hormone Assay: Process samples using standardized protocols. For testosterone and luteinizing hormone (LH), utilize consistent laboratory methodology across all samples to minimize analytical variability [1].

- Data Analysis:

- Calculate repeatability (R) using the Lessells and Boag method: the ratio of among-individual variance to total variance [17].

- Compute profile repeatability (PR) to assess intra-individual variance in stress response patterns, where PR=1 indicates high consistency and PR=0 indicates low consistency [17].

- Apply meta-regression analysis using restricted maximum likelihood estimator (REML) to evaluate temporal trends across studies, adjusting for covariates such as subject age, BMI, and assay methodology [1].
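The repeatability calculation in the first analysis step can be sketched as follows. This is a minimal illustration of the one-way ANOVA estimator usually attributed to Lessells and Boag, written in plain Python; the function name and the example values are hypothetical, and it is not the analysis code used in [17].

```python
def repeatability(groups):
    """Among-individual variance over total variance, from a one-way ANOVA.

    groups: one list of repeated hormone measurements per individual.
    """
    a = len(groups)                                   # number of individuals
    sizes = [len(g) for g in groups]
    n_total = sum(sizes)
    grand_mean = sum(sum(g) for g in groups) / n_total
    means = [sum(g) / len(g) for g in groups]

    ss_among = sum(n * (m - grand_mean) ** 2 for n, m in zip(sizes, means))
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    ms_among = ss_among / (a - 1)
    ms_within = ss_within / (n_total - a)

    # n0 corrects for unequal numbers of measurements per individual.
    n0 = (n_total - sum(n * n for n in sizes) / n_total) / (a - 1)
    var_among = (ms_among - ms_within) / n0
    return var_among / (var_among + ms_within)


# Hypothetical data: three individuals, three samples each (arbitrary units).
samples = [[10, 12, 11], [20, 21, 19], [15, 14, 16]]
r = repeatability(samples)
print(round(r, 3))
```

For these made-up values the among-individual variance component is 20 and the within-individual mean square is 1, so R = 20/21 ≈ 0.952. When individuals differ little, the estimated among-individual variance can come out negative, in which case R is usually reported as zero.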

Protocol for Quantifying Temporal Uncertainty in Clinical Timestamps

Objective: To identify, quantify, and correct temporal uncertainties in clinical data collection systems [19].

Materials:

- Master Clock: Synchronized time source approximating Coordinated Universal Time (UTC) [19]

- Data Logging System: Capable of recording timestamps with millisecond precision from multiple independent devices [19]

- Analysis Software: Custom scripts for modeling temporal uncertainty and systematic error correction

Methodology:

- System Mapping: Identify all timekeeping devices in the clinical environment (ventilators, cardiac monitors, laboratory equipment) and their synchronization mechanisms [19].

- Data Collection: Continuously record timestamps from all devices alongside a master clock reference for a defined period (e.g., 1 million patient-hours) [19].

- Error Classification:

- Uncertainty Quantification:

- Implementation: Apply corrective algorithms to timestamps and propagate uncertainty estimates through downstream analytical models [19].
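The source does not specify the corrective algorithm, so the following is only one plausible sketch. It assumes each device clock differs from the master clock by a fixed offset plus a constant drift rate, fits that linear model by ordinary least squares on paired readings, and inverts it. All names and numbers are hypothetical.

```python
import math


def fit_clock_model(master, device):
    """Least-squares fit of device = intercept + slope * master (times in seconds)."""
    n = len(master)
    mean_m = sum(master) / n
    mean_d = sum(device) / n
    sxx = sum((m - mean_m) ** 2 for m in master)
    sxy = sum((m - mean_m) * (d - mean_d) for m, d in zip(master, device))
    slope = sxy / sxx
    intercept = mean_d - slope * mean_m
    return intercept, slope


def residual_sd(master, device, intercept, slope):
    """Residual standard deviation of the fit, usable as a timestamp uncertainty."""
    resid = [d - (intercept + slope * m) for m, d in zip(master, device)]
    return math.sqrt(sum(e * e for e in resid) / (len(resid) - 2))


def correct_timestamp(device_time, intercept, slope):
    """Map a device timestamp back onto the master-clock scale."""
    return (device_time - intercept) / slope


# Hypothetical paired readings: device runs 2 s ahead and gains 100 ppm.
master_s = [0.0, 3600.0, 7200.0, 10800.0]
device_s = [2.0 + 1.0001 * t for t in master_s]

b0, b1 = fit_clock_model(master_s, device_s)
sd = residual_sd(master_s, device_s, b0, b1)
corrected = correct_timestamp(device_s[2], b0, b1)
print(round(b0, 3), round(b1, 6), round(corrected, 3))
```

The residual standard deviation gives a per-device uncertainty that can be carried into downstream models. Devices that are reset or re-synchronized partway through the recording would need a piecewise fit rather than a single line.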

Signaling Pathways and Methodological Workflows

Conceptual Framework of Temporal Variance Impact on Clinical Endpoints

The following diagram illustrates how temporal variance propagates from source mechanisms through measurement systems to ultimately impact clinical endpoint validity, highlighting critical control points for researchers.

Temporal Validation Workflow for Hormone Sampling Protocols

This workflow provides a systematic approach for validating hormone sampling protocols against temporal variance, integrating both biological and methodological considerations.

Research Reagent Solutions for Temporal Validation Studies

The following toolkit details essential materials and methodologies for implementing robust temporal validation in hormone research protocols.

Table 3: Essential Research Toolkit for Temporal Validation of Hormone Sampling

| Tool Category | Specific Products/Methods | Function in Temporal Validation | Protocol Considerations |
|---|---|---|---|
| Hormone Assay Platforms | Liquid chromatography-tandem mass spectrometry (LC-MS/MS) | High-precision quantification of steroid hormones (cortisol, testosterone) in various matrices | Maintain consistent methodology across longitudinal samples; document batch variations [18] [1] |
| Sampling Materials | Standardized hair sampling kits with anatomical landmarks | Consistent collection from defined scalp regions (posterior vertex, occipital) | Document sampling region using precise anatomical descriptors; maintain consistency [18] |
| Time Synchronization | UTC-referenced master clocks; synchronized data loggers | Reference for quantifying and correcting device clock drift | Implement across all medical devices and sampling systems; record synchronization metadata [19] |
| Data Analysis Tools | Mixed-effect modeling (REML); repeatability calculations | Partition variance components (inter-/intra-individual); quantify temporal trends | Use restricted maximum likelihood estimator for meta-regressions of temporal trends [17] [1] |
| Visualization Software | Kaleidagraph, SigmaPlot, specialized R/Python packages | Create publication-quality graphs of temporal patterns | Avoid default software settings; ensure clear axis labels with units; use appropriate scale breaks [21] |

Temporal variance presents a multifaceted challenge to data integrity and clinical endpoint validity in hormone research, manifesting through biological rhythms, measurement errors, and protocol limitations. The comparative analysis presented demonstrates that unaccounted temporal variability can significantly attenuate treatment effects, reduce statistical power, and compromise clinical decision-making. However, through implementation of rigorous validation protocols—including longitudinal sampling designs, synchronized timekeeping systems, and appropriate statistical methods that quantify and adjust for temporal uncertainty—researchers can enhance the reliability of their findings. The standardized methodologies and toolkit presented here provide a framework for developing temporally robust hormone sampling protocols, ultimately strengthening the validity of clinical endpoints in drug development and endocrine research. As the field moves toward more continuous monitoring via digital health technologies, proactively addressing these temporal challenges will become increasingly critical for generating clinically meaningful evidence [16] [19].

In endocrine research, the validity of study findings is inextricably linked to the rigor of the experimental protocols employed, particularly for hormone sampling and analysis. Temporal validation of these protocols ensures that measurements remain accurate, comparable, and meaningful over time, despite evolving laboratory techniques and environmental influences. The growing recognition of a "reproducibility crisis" in scientific research underscores the critical importance of robust methodology [22]. This guide objectively compares approaches to protocol implementation by examining the supporting experimental data and regulatory frameworks that govern them, providing researchers and drug development professionals with an evidence-based perspective on ensuring study validity.

The Critical Link Between Protocol Rigor and Data Validity

The Foundation of Good Clinical Practice (GCP)

The principles of Good Clinical Practice (GCP) provide a foundational framework for achieving rigor, reproducibility, and transparency in research [22]. According to the World Health Organization (WHO), GCP principles most relevant to rigor and transparency include:

- Scientific Justification: Research involving humans must be scientifically justified and described in a clear, detailed protocol.

- Protocol Compliance: Research must be conducted in compliance with the approved protocol.

- Personnel Qualification: Every individual involved in conducting a trial must be qualified by education, training, and experience.

- Accurate Recording: All clinical trial information must be recorded, handled, and stored to allow accurate reporting, interpretation, and verification.

- Quality Systems: Systems with procedures that assure the quality of every aspect of the trial should be implemented [22].

These principles are not merely administrative hurdles but are essential manifestations of scientific rigor. Large multi-site clinical trials often operationalize these principles through a clinical trial coordinating center, which maintains blinding, standardizes procedures across sites, ensures staff competence, oversees data management, and implements quality assurance protocols [22].

Consequences of Methodological Variation in Hormone Assays

The impact of protocol variation is particularly acute in endocrine research, where methodological differences can significantly affect patient diagnosis and management. Assay discordance arises from multiple factors, including differences in calibration, reference intervals, and the efficacy of removing binding proteins prior to measurement [23].

Table: Impact of Assay Variability on Endocrine Management

| Endocrine Area | Source of Variability | Impact on Diagnosis/Management |
|---|---|---|
| Growth Hormone (GH) Axis | Differences in IGF-1 assay calibration and binding protein removal | Discordant interpretation in GH deficiency and excess [23] |
| Thyroid Disorders | Lack of full harmonization of TSH and fT4 immunoassays; proportional bias between platforms | Substantial discordance in diagnosis and management of subclinical hypothyroidism [23] |
| Male Reproductive Health | Variation in testosterone assay methodology over time | Challenges in tracking temporal population trends [1] |

For instance, a study comparing Abbott and Roche platforms for thyroid function tests found median TSH and fT4 results on the Roche platform were 40% and 16% higher than Abbott's, respectively. When combined with differences in manufacturer-provided reference intervals, this led to substantial discordance in the diagnosis and management of subclinical hypothyroidism [23]. Similarly, variable ability of different immunoassay kits to separate insulin-like growth factor 1 (IGF-1) from its binding proteins causes significant differences in measurement capabilities, creating challenges for clinicians monitoring patients with GH excess [23].
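A small worked example shows how a proportional bias of this size can flip a classification. The 40% figure comes from the text above; the sample value and both upper reference limits are hypothetical placeholders, not manufacturer values, and applying the median bias uniformly to a single sample is a simplifying assumption.

```python
def above_upper_limit(tsh_miu_l, upper_limit_miu_l):
    """True if a TSH result exceeds the platform's upper reference limit."""
    return tsh_miu_l > upper_limit_miu_l


PROPORTIONAL_BIAS = 1.40   # platform B median TSH 40% higher than platform A (from the text)
UPPER_LIMIT_A = 4.0        # hypothetical upper reference limit, mIU/L
UPPER_LIMIT_B = 4.2        # hypothetical upper reference limit, mIU/L

tsh_a = 3.2                                   # hypothetical result on platform A, mIU/L
tsh_b = tsh_a * PROPORTIONAL_BIAS             # same sample, assumed uniform bias
flag_a = above_upper_limit(tsh_a, UPPER_LIMIT_A)
flag_b = above_upper_limit(tsh_b, UPPER_LIMIT_B)
print(round(tsh_b, 2), flag_a, flag_b)
```

Under these assumptions the same sample reads 3.2 mIU/L (within range) on one platform and about 4.48 mIU/L (above range) on the other, which is the kind of discordance the study describes.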

Experimental Evidence: Case Studies in Protocol Rigor

Temporal Trends in Male Hormone Research

A 2025 systematic review on temporal trends in serum testosterone provides a compelling case study on the importance of accounting for methodological evolution in longitudinal research. The study analyzed 1,256 papers including 1,064,891 subjects to evaluate trends in testosterone levels from 1971 to 2024 [1].

Experimental Protocol Highlights:

- Inclusion Criteria: Healthy, eugonadal males >18 years; testosterone measurements reported; blood collection time-frame ≤10 years

- Exclusion Criteria: Participants selected based on testosterone levels; conditions affecting testosterone production; athletes/trained men

- Quality Control: Dual independent reviewers; data extraction by four reviewers; explicit permissible values in digital spreadsheets; environmental and demographic data linkage

- Statistical Analysis: Meta-regression using mixed-effect model with restricted maximum likelihood estimator; adjustment for assay methodology, age, and BMI [1]

This study discovered a significant negative linear regression between testosterone serum levels and year of measurement, with declines in both testosterone and luteinizing hormone (LH) suggesting a resetting of the hypothalamic-pituitary-testicular axis [1]. Crucially, the comprehensive decline remained significant even after adjusting for the assay method used for testosterone measurement, demonstrating how proper methodological accounting strengthens longitudinal findings.

Menopausal Hormone Therapy Guidelines

The 2024 Menopausal Hormone Therapy (MHT) Guidelines from the Korean Society of Menopause illustrate the evolution of evidence-based protocols in women's health. These guidelines emphasize thorough pre-therapy evaluation, including comprehensive medical history, physical examination, and relevant diagnostic investigations personalized based on each patient's risk profile [24].

Assessment Protocol Components:

- Basic Assessment: Lifestyle factors, mental health conditions, personal/familial history of relevant conditions

- Physical Examination: Height, weight, blood pressure, pelvis, breast, and thyroid assessments

- Laboratory Testing: Liver and renal function, hemoglobin, fasting glucose, lipid panels

- Imaging: Mammography, bone mineral density assessment, pelvic ultrasonography

- Follow-up: Repeat assessments every 1-2 years based on clinical status [24]

These detailed protocols ensure that MHT is appropriately targeted to patients who will benefit, while avoiding those with contraindications, thereby validating study outcomes through rigorous participant characterization.

Predictive Model Development in Thyroid Research

A 2025 study on factors influencing 131I-refractory Graves' hyperthyroidism demonstrates rigorous protocol implementation in predictive model development. Researchers developed a nomogram prediction model using LASSO regression analysis on 16 potential variables from 272 patients [25].

Methodological Rigor Elements:

- Clear Diagnostic Criteria: Standardized outcomes (euthyroidism, hypothyroidism, partial remission, no response) assessed 3-6 months post-treatment

- Structured Data Collection: 16 variables including clinical characteristics, laboratory, and imaging examinations

- Validation Framework: Random split into training (70%) and validation (30%) groups

- Model Assessment: Evaluation of discrimination (ROC curves), calibration (Hosmer-Lemeshow), and clinical validity (decision curve analysis) [25]

The resulting model showed excellent predictive accuracy with AUCs of 0.943 and 0.926 in training and validation groups, respectively, providing clinicians with a quantitative tool for assessing 131I treatment efficacy prospectively [25].
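The validation framework described above (a random 70/30 split followed by a discrimination check) can be sketched in plain Python. This is an illustration only: the seed, the function names, and the four toy scores are hypothetical, and the study's own LASSO and nomogram steps are not reproduced here.

```python
import random


def split_indices(n, train_fraction, seed):
    """Randomly partition range(n) into training and validation index lists."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    cut = int(n * train_fraction)
    return idx[:cut], idx[cut:]


def auc(scores, labels):
    """Area under the ROC curve as the fraction of (positive, negative) pairs
    in which the positive case has the higher score; ties count as one half."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0
               for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


train_idx, valid_idx = split_indices(272, 0.70, seed=0)   # 272 patients, as in the study
toy_auc = auc([0.1, 0.4, 0.35, 0.8], [0, 0, 1, 1])        # toy scores, not study data
print(len(train_idx), len(valid_idx), toy_auc)
```

With this rounding the split yields 190 training and 82 validation indices, and the toy example gives an AUC of 0.75. Reporting the AUC separately on the held-out group, as the study does, is what guards against an optimistic estimate from the training data.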

Regulatory Frameworks and Quality Assurance

The Role of Real-World Evidence and Regulatory Science

Regulatory science is increasingly recognizing the value of real-world evidence (RWE) across the total product life cycle, including regulatory assessment. Organizations like ISPOR, FDA, and the Medical Device Innovation Consortium (MDIC) are collaborating to advance approaches that promote patient access to safe, innovative medical technologies while ensuring rigorous evidence generation [26].

The MDIC, as a public-private partnership, has been assessing how to apply RWE regulatory science to medical devices, with a key goal of ensuring "a high level of rigor and integrity of RWE necessary for regulatory use cases" [26]. This evolving framework highlights the growing sophistication of regulatory approaches to evidence generation, emphasizing methodological rigor throughout the product lifecycle.

Quality Assurance Protocols in Practice

The Advanced Cognitive Training for Independent and Vital Elderly (ACTIVE) trial provides a concrete example of GCP implementation in a multi-site setting. Key elements included:

- Manualized Protocol: A comprehensive Manual of Procedures (MOP) describing scientific rationale, study protocol, organization, policies, standardized data forms, and quality assurance protocols

- Standardization: Uniform procedures across six field sites with assurance of staff competence

- Oversight Bodies: Coordinating center, Data Safety and Monitoring Board (DSMB), and funding agency supervision

- Data Management: Transparent coding, entry, transmittal, and regular quality assurance [22]

These structures, while resource-intensive, provide a template for how methodological rigor can be institutionalized within research programs to maximize validity.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table: Key Reagents and Materials for Hormone Sampling Protocols

| Reagent/Material | Function in Protocol | Considerations for Validity |
|---|---|---|
| Serum Collection Tubes | Sample acquisition and preservation | Batch consistency; additive compatibility with downstream assays |
| Reference Standards | Calibration of analytical instruments | Traceability to international standards; stability documentation |
| Hormone Immunoassays | Quantitative measurement of hormone levels | Lot-to-lot validation; cross-reactivity profiles; reference intervals [23] |
| Binding Protein Blockers | Improvement of assay accuracy | Efficacy in separating hormones from binding proteins [23] |
| Quality Control Materials | Monitoring assay performance | Commutability with patient samples; multiple concentration levels |
| Automated Platform Reagents | High-throughput hormone testing | Platform-specific performance characteristics; manufacturer-provided reference intervals [23] |

Visualizing the Relationship Between Protocol Rigor and Study Validity

Protocol Rigor to Validity Relationship

HPG Axis and Research Variables

The regulatory and scientific rationale for linking protocol rigor to study validity is clear and compelling. From the detailed guidelines of GCP to the methodological precision required in hormone assay standardization, each element of research protocol contributes to the ultimate validity and utility of study findings. The experimental data presented demonstrates that rigorous methodology enables detection of subtle temporal trends, development of accurate predictive models, and generation of clinically meaningful evidence. As endocrine research continues to evolve, maintaining this focus on protocol rigor will be essential for advancing our understanding of endocrine health and disease, developing effective interventions, and ensuring that research findings withstand the test of time and replication.

The accurate measurement of testosterone is a cornerstone of endocrinological research and clinical practice. However, establishing reliable reference ranges and interpreting individual measurements are complicated by a compelling and growing body of evidence: serum testosterone levels in male populations are declining over time. This secular trend presents a significant challenge for the development and validation of hormone sampling protocols, as the very benchmarks against which samples are compared are shifting. This case study synthesizes recent evidence documenting these temporal trends and explores their profound implications for research design, data interpretation, and the critical need for temporal validation in hormonal sampling protocols. A thorough understanding of these dynamics is essential for researchers, scientists, and drug development professionals to ensure that their methodologies remain robust and their findings accurate in a changing physiological landscape.

Documented Secular Trends in Testosterone Levels

Evidence from Recent Large-Scale Analyses

Recent high-quality studies and systematic reviews have consistently reported a significant decline in testosterone levels among men, independent of aging or changes in body mass index (BMI).

2025 Systematic Review: A comprehensive systematic review and meta-analysis published in 2025, which included 1,256 papers (accounting for 1,504 study groups) and over 1.06 million subjects, detected a significant negative linear regression between serum testosterone levels and the year of measurement (p=0.033). This analysis confirmed a comprehensive decline in testosterone serum levels over the years, which persisted after adjusting for the number of subjects, age, BMI, and the assay method used. Crucially, the study also found a parallel significant decline in Luteinizing Hormone (LH) levels, suggesting an ongoing resetting of the entire hypothalamic-pituitary-gonadal (HPG) axis rather than a primary testicular issue [27].

Large Israeli Cohort Study (2020): A study examining 102,334 men in Israel between 2006 and 2019 found a highly significant (p < 0.001) age-independent decline in total testosterone levels across most age groups. For example, at the age of 21, peak testosterone levels declined from 19.68 nmol/L in 2006-2009 to 17.76 nmol/L in 2016-2019. The study concluded that this decline was unlikely to be explained by increasing rates of obesity, as there was little variation in age-specific BMI over the study period [28].

The following table summarizes the key findings from these and other critical studies:

Table 1: Summary of Key Studies on Temporal Trends in Testosterone

| Study / Author | Study Period | Sample Size | Key Finding | Adjustment for Confounders |
|---|---|---|---|---|
| Santi et al. (2025) [27] | 1971 - 2024 | 1,256 papers (≈1.06 million subjects) | Significant decline in serum testosterone and LH over time. | Age, BMI, assay method, sample size. |
| Levine et al. (2020) [28] | 2006 - 2019 | 102,334 men | Significant age-independent decline in total testosterone; peak levels fell from ~19.7 to ~17.8 nmol/L. | Age; obesity trends ruled out as primary cause. |
| Travison et al. (2007) [28] | 1982 - 2002 | 991 men (from MMAS) | Testosterone decreased more than expected by aging alone; decline evident even in men without weight gain. | Aging, lifestyle factors. |

Implications of Declining LH and the HPG Axis

The finding of a concomitant decline in LH is perhaps one of the most significant insights from recent research. It shifts the potential cause from a primary testicular failure to a central regulatory mechanism. This implies that environmental, lifestyle, or other systemic factors may be suppressing the entire HPG axis, leading to a lower "set point" for testosterone production in more recent populations [27]. For research design, this means that comparing testosterone levels of contemporary cohorts with reference ranges established decades ago could lead to a systematic over-diagnosis of hypogonadism or a miscalibration of inclusion criteria for clinical trials.

Critical Implications for Sampling Design and Research Methodology

The documented temporal trends necessitate a rigorous reassessment of hormonal sampling protocols. A study's validity can be compromised if its design does not account for the variability and confounders that affect testosterone measurement.

Factors Introducing Variability in Testosterone Measurement

The following diagram illustrates the key factors that must be controlled for in sampling design to ensure accurate and interpretable testosterone measurements:

The factors outlined in the diagram can be summarized as follows:

Biological Variability: Testosterone exhibits a pronounced circadian rhythm, with peak levels in the early morning and a nadir in the evening. Samples should ideally be taken between 7 and 10 AM to ensure consistency [29] [30]. There is also significant intra-individual variation, so a single measurement may be misleading: up to 50% of men with an initial T level <300 ng/dL will have a level >300 ng/dL on repeat testing. Averaging 2-3 tests can reduce this variability by 30-43% [29].
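One way to see where a reduction of this size could come from is the standard error of a mean. The sketch below assumes repeat tests are independent draws with a common within-person SD, which is an assumption made for illustration and not necessarily the model used in [29].

```python
import math


def sd_reduction(n_tests):
    """Fractional reduction in SD when averaging n independent tests
    instead of using a single test (SD of the mean is SD / sqrt(n))."""
    return 1.0 - 1.0 / math.sqrt(n_tests)


for n in (2, 3):
    print(n, round(100 * sd_reduction(n), 1))
```

Under that assumption, averaging two tests reduces the SD by about 29% and three tests by about 42%, close to the 30-43% range quoted above.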

Assay Methodology: The choice of assay introduces substantial variability. Immunoassays (IA) can show high variability, especially at low testosterone concentrations, where results can differ by 2.7- to 14.3-fold, and can deviate from mass spectrometry results by -14.1% to +19.2% [29]. Liquid chromatography-tandem mass spectrometry (LC-MS/MS) is considered the gold standard for total testosterone measurement due to its high specificity and accuracy [29] [31] [30]. The measurement of free testosterone is even more complex: equilibrium dialysis is the gold standard, though calculated empirical estimates are commonly used and accepted [29] [30].

Population and Health Status: Obesity has a profound inverse relationship with testosterone levels; a 4-5 point increase in BMI is associated with a T decline equivalent to 10 years of aging [29]. Acute illness can cause a transient 10-30% decline in young men, while chronic disease and medication use are associated with a more rapid age-related decline [29]. This is critical for subject selection, as including ill individuals can mask true age-related declines, a finding supported by early meta-analyses [32].

Lessons from Major Clinical Trials: The Testosterone Trials

The Testosterone Trials (TTrials) serve as a prime example of meticulous sampling design in the context of low testosterone in aging men. Key methodological considerations from these trials include [33]:

- Stringent Eligibility Criteria: To ensure that enrolled subjects were unequivocally hypogonadal, researchers required two consecutive morning total testosterone measurements below a specific threshold (first test <275 ng/dL, second test <300 ng/dL, average <275 ng/dL). This approach accounted for biological intra-individual variation.

- Standardized Timing: The requirement for morning blood draws controlled for diurnal variation.

- Use of Total Testosterone: Although free testosterone is the biologically active fraction, total testosterone was chosen for screening because its assays were more accurate and better standardized at the time.

- Logistical Coordination: The trials' design as a coordinated set of seven studies allowed for uniform recruitment, screening, treatment, and safety monitoring, ensuring consistency across a large dataset.

This rigorous design, which required screening approximately 30 men to randomize one subject, highlights the intensive effort needed to create a well-defined and homogeneous study population in testosterone research [33].
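
As a minimal sketch, the two-measurement screening rule described above can be written as a single predicate. The function name is hypothetical, the thresholds are the ones quoted in the list, and the sketch assumes both values are morning total testosterone in ng/dL.

```python
def meets_ttrials_screening(first_ng_dl: float, second_ng_dl: float) -> bool:
    """Apply the three screening thresholds quoted in the text.

    first test < 275 ng/dL, second test < 300 ng/dL, average < 275 ng/dL.
    """
    average = (first_ng_dl + second_ng_dl) / 2
    return first_ng_dl < 275 and second_ng_dl < 300 and average < 275


# Hypothetical screening results for three candidates.
candidates = {
    "A": (260.0, 285.0),  # passes all three thresholds
    "B": (270.0, 290.0),  # each test passes, but the average is 280
    "C": (280.0, 250.0),  # first test is not below 275
}
eligible = [name for name, (t1, t2) in candidates.items() if meets_ttrials_screening(t1, t2)]
```

Candidate B shows why the averaging condition matters: both individual values fall under their thresholds, yet the pair is still rejected.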

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key reagents and materials essential for conducting high-quality research on testosterone, based on methodologies cited in the literature.

Table 2: Key Research Reagent Solutions for Testosterone Analysis

| Item / Reagent | Function / Application | Key Considerations & Examples from Literature |
|---|---|---|
| Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) | Gold-standard method for highly specific and accurate quantification of total testosterone in serum/plasma. | Used for precise hormone measurement in large-scale studies [31]. Preferred over immunoassays due to superior accuracy [29] [30]. |
| Equilibrium Dialysis Kit | Considered the reference method for direct measurement of free (unbound) testosterone. | Complex and time-consuming, but provides the most accurate assessment of bioavailable hormone [29] [30]. |
| Validated Immunoassay Kits | Alternative method for total testosterone measurement; more accessible but less specific than MS. | Can show significant variability compared to MS, especially at low concentrations. Requires careful validation [29]. |
| Calculated Free Testosterone Algorithms | Software or formulae (e.g., the Vermeulen equation) to estimate free testosterone based on total T, SHBG, and albumin. | Commonly used and accepted in clinical practice and research when direct measurement is not feasible [29] [30]. |
| Sex Hormone Binding Globulin (SHBG) Assay | Measurement of SHBG levels, which is critical for calculating free or bioavailable testosterone. | An essential component in the calculation of free testosterone and for understanding hormonal bioactivity [29]. |
| Standardized Sample Collection Kits | For consistent biological sample (serum, saliva) acquisition and stabilization. | Includes specific tubes, protocols for time-of-day collection, and handling instructions to minimize pre-analytical variability [29] [34]. |
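
To illustrate how a calculated free testosterone estimate of the kind listed in the table can be derived from total T, SHBG, and albumin, the sketch below solves the mass-action binding balance that underlies Vermeulen-style calculations. The association constants, the albumin molar mass, the default albumin concentration, and the function name are assumptions chosen for illustration. A validated laboratory implementation should be used for any real analysis.

```python
import math

# Association constants commonly quoted for this calculation (assumed here, in L/mol).
K_SHBG = 1.0e9   # testosterone binding to SHBG
K_ALB = 3.6e4    # testosterone binding to albumin
ALBUMIN_G_PER_MOL = 69_000.0  # approximate molar mass of albumin (assumption)


def free_testosterone_nmol_l(total_t_nmol_l: float, shbg_nmol_l: float,
                             albumin_g_l: float = 43.0) -> float:
    """Estimate free testosterone (nmol/L) from total T, SHBG, and albumin.

    Mass balance: total = free + albumin-bound + SHBG-bound, which reduces to a
    quadratic in the free concentration; the positive root is returned.
    """
    tt = total_t_nmol_l * 1e-9             # mol/L
    shbg = shbg_nmol_l * 1e-9              # mol/L
    alb = albumin_g_l / ALBUMIN_G_PER_MOL  # mol/L
    n = 1.0 + K_ALB * alb                  # free plus albumin-bound, per unit of free T
    b = n + K_SHBG * (shbg - tt)
    free = (-b + math.sqrt(b * b + 4.0 * n * K_SHBG * tt)) / (2.0 * n * K_SHBG)
    return free * 1e9


# Hypothetical inputs: total T 15 nmol/L, SHBG 40 nmol/L, default albumin.
ft = free_testosterone_nmol_l(15.0, 40.0)
```

Because the estimate depends directly on the SHBG and albumin inputs, any timing or assay variability in those measurements propagates into the calculated free fraction.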

The unequivocal evidence of a secular decline in testosterone levels, potentially due to a resetting of the hypothalamic-pituitary-gonadal axis, demands a paradigm shift in how researchers approach hormone sampling design. Relying on static, historical reference ranges is no longer tenable. Future research must adopt temporally validated protocols that explicitly account for this trend. This involves implementing rigorous methodological controls, including standardized timing of sample collection, the use of gold-standard mass spectrometry assays, multiple measurements to account for intra-individual variability, and careful selection and characterization of study cohorts. By integrating these principles, the scientific community can ensure that future studies on testosterone remain accurate, reproducible, and clinically relevant in the face of a changing endocrinological landscape.

Building Robust Sampling Frameworks: From Protocol Design to Practical Execution

Key Components of a Comprehensive Hormone Sampling Protocol

Hormone sampling is a foundational tool in clinical diagnostics and research, providing critical insights into endocrine function. However, the accuracy and reliability of hormone measurement are profoundly influenced by pre-analytical variables. A comprehensive sampling protocol that accounts for temporal biological rhythms, sample matrix selection, and standardized handling procedures is essential for generating valid, reproducible data. This guide examines the core components of hormone sampling protocols, comparing methodologies and their applications to support rigorous scientific investigation.

Critical Pre-Sampling Considerations

Proper planning before sample collection is crucial for accurate hormone measurement, as numerous biological and lifestyle factors can significantly influence results.

Biological Rhythms and Timing

Hormone secretion follows predictable temporal patterns that must guide sampling schedules. Cortisol exhibits a pronounced diurnal rhythm, with peak levels in the early morning and a gradual decline throughout the day, so samples should be collected within a specific window, ideally before 10 a.m. [35]. Reproductive hormones in women vary significantly across the menstrual cycle: progesterone is best measured seven days before expected menstruation (around day 21 of a 28-day cycle), while FSH and LH are typically assessed on days 2-4 of the cycle [35]. Even seasonal variations can affect hormone levels, as demonstrated in fish studies where scale cortisol concentration was significantly lower in winter than in spring and summer [36].
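
The cycle-based timing rules can be turned into concrete draw dates. The sketch below is illustrative only: the function name and the convention that the first day of menstruation is cycle day 1 are assumptions, and the expected cycle length has to be supplied by the investigator.

```python
from datetime import date, timedelta


def cycle_sampling_dates(cycle_start: date, cycle_length_days: int = 28) -> dict[str, list[date]]:
    """Suggest draw dates, counting the first day of menstruation as cycle day 1."""
    # FSH and LH: cycle days 2-4.
    fsh_lh = [cycle_start + timedelta(days=day - 1) for day in (2, 3, 4)]
    # Progesterone: seven days before the expected start of the next menstruation.
    next_menses = cycle_start + timedelta(days=cycle_length_days)
    progesterone = [next_menses - timedelta(days=7)]
    return {"FSH/LH": fsh_lh, "progesterone": progesterone}


# Hypothetical participant whose cycle started on 1 January 2024.
schedule_28 = cycle_sampling_dates(date(2024, 1, 1))
schedule_35 = cycle_sampling_dates(date(2024, 1, 1), cycle_length_days=35)
```

Counting back from the expected next menstruation, rather than forward to a fixed day 21, moves the progesterone draw later for longer cycles, which is the point of the "seven days before" rule.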

Subject Preparation and Lifestyle Factors

Standardizing participant preparation minimizes confounding variables. Subjects should typically fast for 10-12 hours before sampling for certain hormones, consuming only water while avoiding coffee, tea, juice, and alcohol [35]. Strenuous physical activity and sexual activity should be avoided for at least 48 hours prior to sampling, as they can elevate hormones like prolactin and cortisol [35]. Medications and supplements require careful management; biotin can interfere with thyroid function tests and should be discontinued 48-72 hours beforehand, while thyroid medications should be taken after sampling when possible [35]. Stress management through relaxation techniques and adequate sleep before testing further stabilizes hormone levels [35].

Table 1: Standardized Preparation Requirements for Hormone Sampling

| Factor | Requirement | Rationale | Examples of Affected Hormones |
|---|---|---|---|
| Fasting | 10-12 hours before test | Prevents dietary interference with hormone levels | Insulin, glucose, gut hormones [35] |
| Physical Activity | Avoid 48 hours prior | Prevents exercise-induced stress hormone elevation | Cortisol, prolactin [35] |
| Time of Day | Morning collection (before 10 a.m.) | Aligns with circadian rhythms | Cortisol [35] |
| Medication Adjustments | Biotin cessation 48-72 hours prior | Prevents assay interference | Thyroid hormones [35] |
| Menstrual Timing | Cycle days 2-4 or 21-23 | Corresponds to the early follicular baseline (FSH, LH) and the mid-luteal progesterone peak | FSH, LH, progesterone [35] |
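
One way to operationalize these preparation requirements is a pre-draw compliance check. The sketch below is a hypothetical example: the record schema, the field names, and the choice to enforce the lower bound of each stated range (10 hours of fasting, 48 hours since biotin) are assumptions, not part of the cited guidance.

```python
from dataclasses import dataclass
from datetime import time
from typing import List, Optional


@dataclass
class PrepRecord:
    """Self-reported preparation details for one participant (hypothetical schema)."""
    fasting_hours: float
    hours_since_strenuous_activity: float
    hours_since_biotin: Optional[float]  # None if the participant takes no biotin
    draw_time: time


def preparation_deviations(record: PrepRecord) -> List[str]:
    """List which preparation requirements the record fails to meet."""
    issues = []
    if record.fasting_hours < 10:
        issues.append("fasted less than 10 hours")
    if record.hours_since_strenuous_activity < 48:
        issues.append("strenuous activity within 48 hours")
    # Using the lower bound of the 48-72 hour biotin window is an assumption.
    if record.hours_since_biotin is not None and record.hours_since_biotin < 48:
        issues.append("biotin taken within 48 hours")
    if record.draw_time >= time(10, 0):
        issues.append("draw at or after 10 a.m.")
    return issues


# Hypothetical participant who exercised 30 hours before the draw.
record = PrepRecord(fasting_hours=11, hours_since_strenuous_activity=30,
                    hours_since_biotin=None, draw_time=time(9, 30))
deviations = preparation_deviations(record)
```

Returning a list of deviations, instead of a single pass/fail flag, lets a study record protocol deviations per sample and decide later whether to exclude or adjust.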

Sampling Matrices and Methodologies

The choice of sampling matrix significantly influences what hormonal information can be obtained, with each matrix offering distinct advantages and limitations for different research applications.

Blood-Based Sampling

Serum and plasma remain the gold standard for most clinical hormone assessments, providing systemic hormone levels at a single timepoint. Blood collection allows for the measurement of both free and protein-bound hormone fractions and is suitable for a wide range of analytes including thyroid hormones, cortisol, testosterone, and estradiol [35]. Standard venipuncture protocols require specific timing relative to circadian rhythms, menstrual cycles, and medication schedules [24] [35]. Limitations include the invasive nature of collection and the potential for stress-induced acute hormone fluctuations during phlebotomy.

Non-Invasive Sampling Methods

Salivary sampling provides a practical approach for measuring free, biologically active hormone fractions and is particularly valuable for assessing diurnal patterns, especially for cortisol [37]. Its non-invasive nature enables frequent sampling in naturalistic settings. Cutaneous sampling using specialized adhesive tapes (e.g., Sebutape) captures hormones secreted in skin surface lipids, offering unique insights into local steroidogenesis relevant to dermatological conditions [38]. Water-borne hormone collection has emerged as a valuable non-invasive technique for aquatic species, allowing measurement of corticosterone metabolites in tadpoles and fish without handling stress [39]. Scale cortisol measurement in fish serves as an integrated biomarker of chronic stress, with carefully developed extraction protocols required for accurate quantification [36].

Table 2: Comparison of Hormone Sampling Matrices and Applications

| Matrix | Primary Applications | Advantages | Limitations |
|---|---|---|---|
| Serum/Plasma | Clinical diagnostics, endocrine assessment | Gold standard, comprehensive hormone panels | Invasive, single timepoint, requires clinical setting [24] [35] |
| Saliva | Diurnal rhythm studies, stress research | Non-invasive, free hormone fraction, home collection | Limited analytes, sensitive to collection procedure [37] |
| Cutaneous (Sebutape) | Dermatological research, local steroidogenesis | Site-specific hormone measurement, non-invasive | Limited to skin surface, specialized analysis [38] |
| Water-Borne | Aquatic species research | Truly non-invasive, integrated measurement | Species-specific validation required [39] |
| Scales (Fish) | Chronic stress assessment in teleosts | Retrospective analysis, archive potential | Complex extraction protocol [36] |

Experimental Protocols and Workflows

Detailed methodological documentation ensures experimental reproducibility across studies and enables valid cross-study comparisons.

Cutaneous Hormone Sampling Protocol

The Sebutape method for measuring skin surface hormones involves applying silicone-coated adhesive patches to clean skin sites. The sampling area should be free from detergents, antimicrobial soaps, emollients, and creams for 24 hours before application [38]. Tapes are typically applied for a fixed duration (often 30-90 minutes) to collect sebum and skin secretions [38]. Following collection, hormones are extracted from the tapes using organic solvents such as methanol, with subsequent analysis by mass spectrometry or immunoassays [38]. This approach is particularly valuable for investigating hormonal influences in conditions like acne vulgaris, atopic dermatitis, and hidradenitis suppurativa [38].

Water-Borne Hormone Collection in Amphibians

This non-invasive method for aquatic species involves placing individual tadpoles in containers with a known volume of clean water for a standardized incubation period (typically 30-60 minutes) [39]. Water samples are then collected and passed through solid-phase extraction columns to concentrate hormone metabolites [39]. Extracted samples are analyzed using immunoassays, with CORT release rates calculated accounting for water volume, collection time, and individual mass [39]. This protocol enabled the detection of significant time-by-treatment responses in Gulf Coast toad tadpoles exposed to chronic heat stress [39].
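
The release-rate calculation mentioned above can be sketched as follows. The exact formula used in [39] is not given here, so this assumes one plausible normalization: total hormone in the water sample divided by body mass and incubation time. The function name, units, and example values are hypothetical.

```python
def cort_release_rate(conc_pg_per_ml: float, water_volume_ml: float,
                      body_mass_g: float, incubation_min: float) -> float:
    """Return a corticosterone release rate in pg per gram per hour.

    Assumed normalization: (concentration x water volume) / (mass x time).
    """
    if body_mass_g <= 0 or incubation_min <= 0:
        raise ValueError("mass and incubation time must be positive")
    total_pg = conc_pg_per_ml * water_volume_ml  # hormone released into the container
    hours = incubation_min / 60.0
    return total_pg / (body_mass_g * hours)


# Hypothetical tadpole: 12 pg/mL measured in 50 mL after a 60-minute incubation, 0.5 g body mass.
rate = cort_release_rate(conc_pg_per_ml=12.0, water_volume_ml=50.0,
                         body_mass_g=0.5, incubation_min=60.0)
```

Normalizing by mass and time is what makes values comparable across individuals of different size and across incubations of different length within the 30-60 minute range.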

Longitudinal Hormone Assessment in Athletic Performance

Tracking hormone levels in conjunction with performance metrics requires systematic longitudinal design. A study of transgender women athletes incorporated both retrospective and prospective data collection, with blood markers (testosterone, estrogen, and haemoglobin) self-reported alongside verified competition results [40]. Blood tests were coordinated with athletic performance assessment at multiple timepoints before and after gender-affirming hormone therapy, with results converted to standardized international units for consistency [40]. This approach revealed that performance decrements following testosterone suppression were greater in longer-duration events (>240 seconds) than in shorter events [40].

Signaling Pathways and Hormonal Regulation

Understanding the endocrine pathways governing hormone secretion provides critical context for interpreting sampling results and their physiological significance.

Hypothalamic-Pituitary-Adrenal (HPA) Axis

The HPA axis regulates the physiological stress response through a coordinated neuroendocrine cascade. This pathway activates in response to physical and psychological stressors, culminating in cortisol release from the adrenal cortex. In aquatic species like tadpoles, the homologous Hypothalamic-Pituitary-Interrenal (HPI) axis performs this function, secreting corticosterone to mediate responses to environmental challenges such as thermal stress [39].

Hypothalamic-Pituitary-Gonadal (HPG) Axis

The HPG axis regulates reproductive function and sex hormone production through a complex feedback system. This neuroendocrine pathway begins with hypothalamic secretion of gonadotropin-releasing hormone (GnRH), which stimulates pituitary release of luteinizing hormone (LH) and follicle-stimulating hormone (FSH). These gonadotropins then act on the gonads to stimulate production of sex steroids including testosterone, estrogen, and progesterone.

Essential Research Reagent Solutions

Specific laboratory materials and analytical tools are fundamental to implementing robust hormone sampling protocols across different experimental systems.

Table 3: Essential Research Reagents for Hormone Sampling and Analysis

| Reagent/Material | Application | Function | Example Use Cases |

|---|---|---|---|

| Sebutape | Cutaneous hormone sampling | Collects skin surface secretions for hormone analysis | Dermatological research, local steroidogenesis studies [38] |

| Solid-Phase Extraction Columns | Water-borne hormone collection | Concentrates hormone metabolites from water samples | Amphibian and fish stress physiology [39] |