Strategies for Minimizing Analytical Variability in Hormone Immunoassays: From Foundational Principles to Advanced Validation

Abstract

This article provides a comprehensive analysis of the sources and solutions for analytical variability in hormone immunoassays, a critical challenge for researchers and drug development professionals. It explores the fundamental causes of inaccuracy, including cross-reactivity, heterophilic antibody interference, and reagent variability. The content evaluates methodological advancements in immunoassay technology and the growing role of mass spectrometry as a reference standard. A dedicated troubleshooting section offers practical protocols for identifying and mitigating common interference issues. Finally, the article presents a rigorous framework for method validation and comparison, emphasizing the need for standardized practices to ensure data reliability in both research and clinical decision-making.

Understanding the Sources and Impact of Hormone Immunoassay Variability

Core Concepts and Definitions

Analytical variability in hormone immunoassays refers to the variation in measurement results introduced by the analytical method itself, rather than by true biological differences. Controlling this variability is paramount in research and drug development to ensure data reliability and reproducibility. This guide focuses on its three fundamental components: precision, accuracy, and specificity [1] [2].

Precision describes the closeness of agreement between independent test results obtained under stipulated conditions. It is the inverse of imprecision and is typically broken down into:

- Repeatability: Variability when all factors (e.g., analyst, instrument, day) are held constant. Also called within-run precision [1] [2].

- Intermediate Precision: Variability observed within a single laboratory when factors like different days, different analysts, or different equipment are introduced [1] [2].

Accuracy is the closeness of agreement between a measured value and the true value of the analyte. It is often reported as a percent bias from the nominal concentration. A claim of accuracy is only meaningful when accompanied by acceptable precision [1].

Specificity is the ability of the immunoassay to detect the target hormone unequivocally in the presence of interfering substances that may be expected to be present in the sample. Common interferents include cross-reacting molecules, heterophile antibodies, and biotin [1] [3].

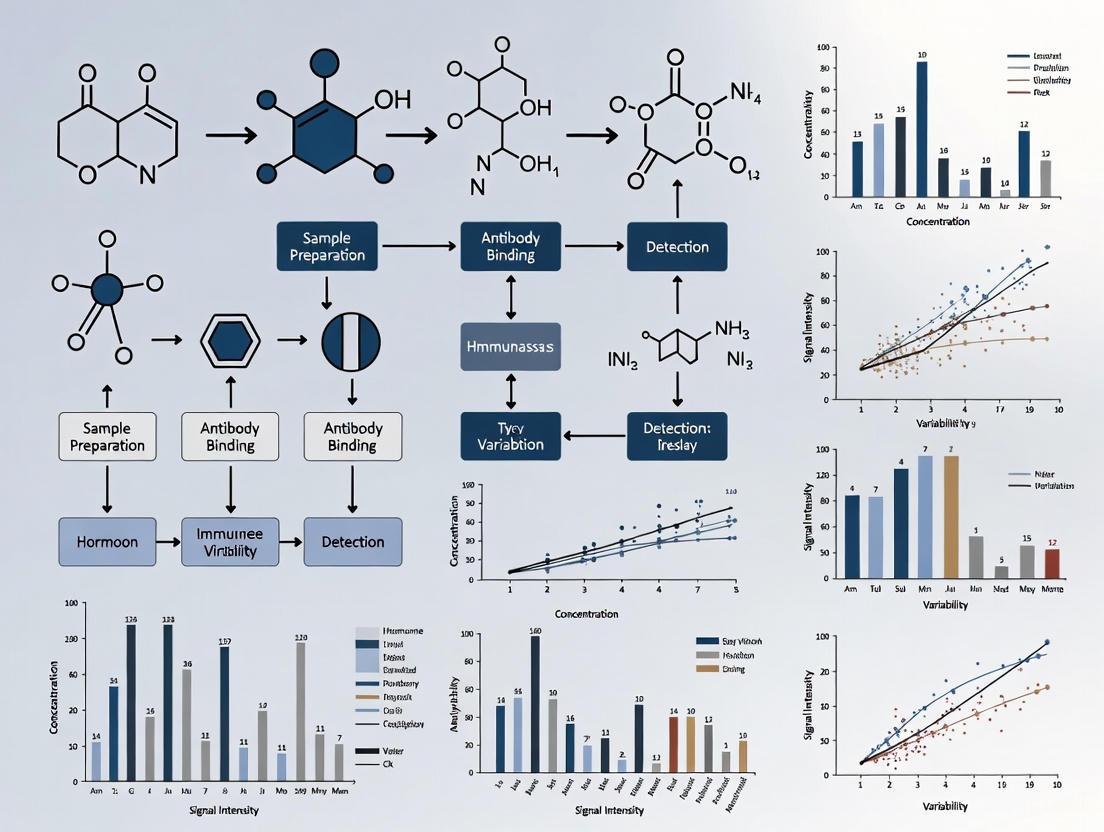

The relationship between these concepts and the broader scope of method validation is outlined in the diagram below.

Troubleshooting Guides & FAQs

This section addresses common challenges researchers face when working with hormone immunoassays.

Frequently Asked Questions

Q1: My assay shows high imprecision (High %CV) between duplicate wells. What are the primary causes? High within-run imprecision is often related to technical execution or sample handling.

- Cause: Inconsistent pipetting technique or poorly calibrated pipettes.

- Solution: Calibrate pipettes regularly and use the reverse pipetting technique for viscous liquids like serum. Ensure the pipette is held at the same angle each time [4].

- Cause: Inadequate mixing of reagents or samples.

- Solution: Always vortex all reagents and thawed samples thoroughly before use. Use an orbital shaker designed for microplates to ensure consistent mixing during incubations (approximately 500-800 rpm without splashing) [4].

- Cause: Particulate matter in samples.

- Solution: Centrifuge thawed, turbid, or viscous samples (e.g., plasma, tissue lysates) at a minimum of 10,000 × g for 5-10 minutes to remove debris and lipids [4].

Q2: I suspect my assay is inaccurate. How can I distinguish a bias (accuracy) problem from a precision problem?

- Assessment: Analyze the precision first by calculating the %CV of replicates. High %CV indicates an imprecision problem that must be resolved before assessing accuracy.

- Assessment: To assess accuracy (bias), run a set of samples with known concentrations (e.g., spiked quality controls or a certified reference material). A consistent, directional deviation from the known values indicates a bias issue [1] [5].

- Common Source of Bias: Non-commutability between the calibrators (standards) and patient samples. The matrix of the calibrator can cause a different assay response than the complex biological matrix of the sample, leading to inaccurate results [6] [5].
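
The two-stage check described in Q2 can be sketched in Python. This is a minimal illustration rather than a validated procedure: the function name `assess_qc`, the 10% CV limit, and the 15% bias limit are assumptions chosen to resemble the typical targets quoted in this article, and the replicate values are invented.

```python
from statistics import mean, stdev

def assess_qc(replicates, nominal, cv_limit=10.0, bias_limit=15.0):
    """Classify one QC level as an imprecision problem, a bias problem, or acceptable.

    replicates: measured concentrations of one QC sample
    nominal:    known (assigned) concentration of that sample
    The limits are illustrative assumptions, not regulatory requirements.
    """
    m = mean(replicates)
    cv = stdev(replicates) / m * 100        # %CV using the sample SD (n-1)
    bias = (m - nominal) / nominal * 100    # % bias from the nominal value
    if cv > cv_limit:
        verdict = "imprecision"             # resolve this before judging accuracy
    elif abs(bias) > bias_limit:
        verdict = "bias"
    else:
        verdict = "acceptable"
    return round(cv, 1), round(bias, 1), verdict

# Hypothetical replicates for a QC with a nominal value of 100 (arbitrary units)
print(assess_qc([121.0, 119.0, 120.0], nominal=100.0))  # tight but shifted
print(assess_qc([80.0, 100.0, 120.0], nominal=100.0))   # scattered
```

Precision is checked first on purpose: with widely scattered replicates the mean is too uncertain for a bias estimate to be meaningful.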

Q3: What are the most common interferents affecting the specificity of hormone immunoassays? Immunoassays are susceptible to various interferences due to the complexity of the antigen-antibody interaction in a biological matrix [3].

- Heterophile Antibodies: Human antibodies that can bind to animal-derived assay antibodies, causing false-positive or false-negative results [3].

- Biotin: High circulating levels of biotin (from high-dose supplements) can interfere with assays using a biotin-streptavidin detection system. This typically causes a negative interference in sandwich assays and a positive interference in competitive assays [3].

- Cross-reactivity: Structurally similar molecules (e.g., hormone precursors, metabolites, or certain drugs) can be unintentionally recognized by the assay antibodies. For example, fulvestrant (a breast cancer drug) can cross-react in estradiol assays, and synthetic steroids such as prednisolone and methyltestosterone can cross-react in cortisol and testosterone assays, respectively [8] [3].

Q4: My sample result seems clinically implausible. What steps should I take to investigate potential interference?

- Step 1: Re-run the sample. Check if the result is reproducible (precision).

- Step 2: Perform a serial dilution. If the sample result is not proportional to the dilution (non-linear), it suggests interference or a matrix effect [2].

- Step 3: Re-analyze using a different method. If possible, test the sample with an alternative immunoassay platform or a more specific method like LC-MS/MS. A discrepancy between methods is a strong indicator of interference [3] [5].

- Step 4: Use a blocking agent. Re-test the sample after adding a heterophile antibody blocking reagent. A change in the measured concentration suggests heterophile antibody interference [3].

Performance Criteria and Acceptance Limits

To minimize analytical variability, set and adhere to predefined performance criteria. The table below summarizes typical acceptance targets for key validation parameters.

Table 1: Typical Performance Acceptance Criteria for Hormone Immunoassays

| Parameter | Description | Recommended Acceptance Target | Key Experimental Design |
|---|---|---|---|
| Precision | Closeness of agreement between independent results [2]. | | |
| Repeatability | Within-run imprecision. | %CV < 10% (Often stricter for low-concentration analytes). | Minimum of 3 replicates at 3 concentrations over 5 days [1] [2]. |
| Intermediate Precision | Between-run imprecision (different days, analysts). | %CV < 15% (Often stricter for low-concentration analytes). | Minimum of 2 analysts, 2 days, 3 concentrations [1]. |
| Accuracy | Closeness to the true value [1]. | | |
| Percent Recovery | (Measured Concentration / Spiked Concentration) × 100. | 85-115% recovery [1]. | Test a minimum of 3 replicates at 3-5 concentrations across the assay range [1]. |
| Specificity | Ability to measure analyte in presence of interferents [1] [3]. | | |
| Cross-reactivity | Signal from interferent vs. signal from analyte. | Typically < 1-5%, depending on clinical need [3]. | Spike known interferents (metabolites, common drugs) and measure apparent analyte concentration [3]. |
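
The cross-reactivity row can be made concrete with a short calculation. The sketch below uses one common convention: the apparent gain in analyte concentration divided by the concentration of interferent added, as a percentage. The function name and the example concentrations are hypothetical.

```python
def percent_cross_reactivity(measured_with_interferent, measured_baseline, interferent_added):
    """Apparent analyte gained per unit of interferent added, as a percentage.

    All three concentrations must be in the same units (ideally molar).
    """
    if interferent_added <= 0:
        raise ValueError("interferent_added must be positive")
    apparent_gain = measured_with_interferent - measured_baseline
    return apparent_gain / interferent_added * 100

# Hypothetical example: the baseline sample reads 200 nmol/L; after spiking
# 1000 nmol/L of a candidate cross-reactant the assay reads 260 nmol/L.
xr = percent_cross_reactivity(260.0, 200.0, 1000.0)
print(f"{xr:.1f}% cross-reactivity")
```

With these invented numbers the result is about 6%, which would exceed a 1-5% target and call for confirmation by a more specific method.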

Experimental Protocols for Minimizing Variability

This section provides detailed methodologies for key experiments to characterize and control analytical variability.

Protocol 1: Precision Profile Experiment

Objective: To determine the repeatability and intermediate precision of the hormone immunoassay across its measuring range.

Materials:

- Quality Control (QC) materials at low, medium, and high concentrations within the assay range.

- Assay reagents, buffers, and instruments as per standard protocol.

Procedure:

- Prepare aliquots of the three QC pools.

- Over the course of a minimum of 5 days, one analyst runs two separate analytical runs per day.

- In each run, analyze each QC level in a minimum of 3 replicates.

- If assessing intermediate precision, involve a second analyst who repeats the above procedure on a different set of days, using a different reagent lot if possible.

Data Analysis:

- Calculate the mean, standard deviation (SD), and percent coefficient of variation (%CV) for each QC level within each run (repeatability).

- Pool all data for each QC level across all runs and analysts and calculate the overall mean, SD, and %CV (intermediate precision).
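
The data analysis above can be sketched with Python's standard library. The run labels and values are invented, and `percent_cv` is a helper name introduced only for this illustration.

```python
from statistics import mean, stdev

def percent_cv(values):
    """Percent coefficient of variation using the sample SD (n-1)."""
    return stdev(values) / mean(values) * 100

# Hypothetical results for ONE QC level: run label -> replicate values
runs = {
    "day1_run1": [10.1, 9.9, 10.0],
    "day1_run2": [10.4, 10.6, 10.5],
    "day2_run1": [9.6, 9.4, 9.5],
}

# Repeatability: %CV within each run
within_run_cv = {run: percent_cv(vals) for run, vals in runs.items()}

# Intermediate precision as described above: pool every result for the level
pooled = [v for vals in runs.values() for v in vals]
pooled_cv = percent_cv(pooled)

for run, cv in within_run_cv.items():
    print(f"{run}: {cv:.2f}% CV")
print(f"pooled: {pooled_cv:.2f}% CV")
```

Pooling all results is the simplest estimate. A variance-components (ANOVA) analysis is a common alternative when the within-run and between-run contributions need to be reported separately.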

Protocol 2: Accuracy (Recovery) Experiment

Objective: To determine the bias of the assay by measuring the recovery of a known quantity of hormone added to a sample.

Materials:

- A pool of native sample matrix (e.g., hormone-stripped serum or a patient sample with a low, known baseline concentration).

- A standard of known high concentration of the target hormone.

Procedure:

- Spike the native sample pool with the hormone standard to create at least 3 different concentrations spanning the assay's range.

- Also analyze the unspiked native sample pool to determine the baseline concentration.

- Analyze each spiked sample and the unspiked pool in a minimum of 3 replicates.

Data Analysis:

- Calculate the recovery for each spiked sample using the formula: Recovery (%) = [(Measured Concentration of Spiked Sample - Measured Concentration of Unspiked Sample) / Theoretical Spike Concentration] × 100

- The mean recovery across all levels should meet the pre-defined acceptance criteria (e.g., 85-115%).
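
As a worked illustration of the recovery formula, the sketch below applies it to three spike levels and checks the mean against the 85-115% window. All concentrations are hypothetical, and `percent_recovery` is a name chosen for this example.

```python
from statistics import mean

def percent_recovery(measured_spiked, measured_unspiked, spike_added):
    """Recovery (%) = (spiked - unspiked) / theoretical spike x 100."""
    return (measured_spiked - measured_unspiked) / spike_added * 100

# Replicates of the unspiked pool give the baseline (about 5.0 here)
baseline = mean([5.0, 5.2, 4.8])

# Theoretical spike concentration -> replicate measurements of the spiked pool
spiked_levels = {
    10.0: [14.5, 15.1, 14.8],
    50.0: [53.0, 54.5, 54.5],
    200.0: [215.0, 225.0, 220.0],
}

recoveries = {
    spike: percent_recovery(mean(vals), baseline, spike)
    for spike, vals in spiked_levels.items()
}
mean_recovery = mean(list(recoveries.values()))
passes = 85.0 <= mean_recovery <= 115.0

for spike, rec in recoveries.items():
    print(f"spike {spike}: {rec:.1f}% recovery")
print(f"mean recovery {mean_recovery:.1f}%, within 85-115%: {passes}")
```

Reporting the per-level recoveries alongside the mean is worthwhile, because a good mean can hide a poor result at one end of the range.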

Protocol 3: Specificity and Interference Investigation

Objective: To demonstrate the assay's ability to measure the hormone specifically in the presence of potential interferents.

Materials:

- Patient sample with a known concentration of the hormone.

- Potential interfering substances (e.g., cross-reactants from [3], biotin, heterophile antibody blocking reagent).

Procedure [3]:

- Split the patient sample into several aliquots.

- Spike one aliquot with a relevant concentration of the potential interferent.

- Spike another aliquot with an equal volume of the interferent's solvent (as a control).

- If investigating heterophile antibodies, treat one aliquot with a blocking reagent.

- Analyze all aliquots in duplicate in the same run.

Data Analysis:

- Compare the measured concentration of the interferent-spiked sample to the solvent-control sample.

- A significant change (e.g., > ±10-15%) indicates potential interference. The effect of a blocking agent can confirm heterophile interference.
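
The comparison against the solvent control reduces to a percent-change calculation. The following is a minimal sketch in which the 10% limit, the function names, and the duplicate values are all assumptions made for illustration.

```python
def interference_percent(spiked_result, control_result):
    """Percent change of the interferent-spiked aliquot relative to the solvent control."""
    return (spiked_result - control_result) / control_result * 100

def flag_interference(spiked_dups, control_dups, limit=10.0):
    """Return (percent change, flagged?) from duplicate measurements.

    limit is an assumed acceptance threshold in percent.
    """
    spiked = sum(spiked_dups) / len(spiked_dups)
    control = sum(control_dups) / len(control_dups)
    change = interference_percent(spiked, control)
    return change, abs(change) > limit

# Hypothetical duplicates: interferent-spiked aliquot vs. solvent-control aliquot
change, flagged = flag_interference([78.0, 82.0], [99.0, 101.0])
print(f"{change:+.1f}% vs. control, interference suspected: {flagged}")
```

The same function can be reused for the blocking-reagent aliquot by passing the blocked result in place of the spiked one.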

The workflow for conducting these core validation experiments is summarized in the following diagram.

The Scientist's Toolkit: Essential Reagents and Materials

Proper execution of immunoassays relies on high-quality reagents and consistent materials. The table below lists key solutions used in the featured experiments and troubleshooting.

Table 2: Key Research Reagent Solutions for Hormone Immunoassays

| Item | Function / Purpose | Critical Notes for Use |
|---|---|---|
| Calibrators / Standards | Define the analytical curve and assign concentration values to unknown samples. | Must be prepared in a matrix as close as possible to the sample matrix (commutability) to minimize bias [5]. |
| Quality Control (QC) Pools | Monitor assay precision and accuracy over time. | Use at least two levels (low and high); prepare from a source independent of the calibrators [1]. |
| Assay Buffer | Serves as the diluent for reagents and samples; maintains optimal pH and ionic strength. | Do not substitute with wash buffer, as it may lack necessary proteins, leading to low recovery [4]. |
| Wash Buffer | Removes unbound reagents, reducing background signal. Typically contains a detergent like Tween 20. | Prevents bead clumping. Incomplete washing is a major source of high background and variability [4]. |
| Blocking Agent | Coats unused binding sites on the solid phase to prevent non-specific binding (e.g., BSA, animal sera). | Essential for minimizing background noise and improving assay specificity [7]. |
| Heterophile Blocking Reagent | Contains irrelevant animal antibodies to neutralize human heterophile antibodies in samples. | A crucial tool for investigating and resolving erroneous results due to this common interference [3]. |
| Magnetic Bead Separator | Facilitates the separation of bead-bound complexes in wash steps. | For handheld magnets, ensure the plate is firmly attached and decant gently to avoid losing beads [4]. |

Frequently Asked Questions (FAQs)

Cross-Reactivity

1. What is cross-reactivity in hormone immunoassays? Cross-reactivity occurs when substances in a sample, other than the target hormone, are recognized by the assay's antibodies due to structural similarity. This can lead to falsely elevated results. These interfering molecules can be structurally related endogenous compounds (like hormone precursors or metabolites) or drugs (such as synthetic glucocorticoids or anabolic steroids) [8] [3].

2. In which clinical or research scenarios is cross-reactivity a significant concern? Cross-reactivity is particularly problematic in specific contexts:

- Certain Disease States: In 21-hydroxylase deficiency, 21-deoxycortisol can cross-react in cortisol assays. In 11β-hydroxylase deficiency or after a metyrapone challenge, 11-deoxycortisol can cause similar interference [8].

- Drug Administration: Prednisolone and 6-methylprednisolone show high cross-reactivity in some cortisol immunoassays. Several anabolic steroids, like methyltestosterone, can cause false positives in testosterone assays [8].

- Therapy Impact: Norethindrone therapy can interfere with testosterone measurement in women [8].

3. How can I identify and mitigate cross-reactivity in my experiments?

- Consult Manufacturer Data: Always review the package insert for the specific immunoassay, which typically lists known cross-reacting substances and their degree of interference [8].

- Use Alternative Methods: If cross-reactivity is suspected, confirm results using a more specific method like liquid chromatography-tandem mass spectrometry (LC-MS/MS) [8] [3].

- Computational Prediction: Emerging techniques use two-dimensional molecular similarity calculations to predict potential cross-reactants, helping to triage compounds for testing [8].
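
To give a sense of what a two-dimensional similarity calculation involves, the sketch below ranks candidate compounds by the Tanimoto (Jaccard) coefficient. Using this particular coefficient is an assumption: the text above says only that 2D molecular similarity is calculated, and Tanimoto on structural fingerprints is one widely used measure. The feature sets are invented placeholders, not real fingerprints, which would normally be generated from chemical structures by a cheminformatics toolkit.

```python
def tanimoto(features_a, features_b):
    """Tanimoto (Jaccard) coefficient between two sets of fingerprint features."""
    a, b = set(features_a), set(features_b)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)

# Placeholder feature sets (hypothetical bit indices, NOT real fingerprints)
target = {1, 4, 7, 9, 12, 15, 18, 21}
candidates = {
    "compound_A": {1, 4, 7, 9, 12, 15, 18, 30},  # shares 7 of 9 distinct features
    "compound_B": {2, 4, 33, 40},                # shares 1 of 11 distinct features
}

# Rank candidates from most to least similar to the target hormone
ranked = sorted(candidates, key=lambda name: tanimoto(target, candidates[name]), reverse=True)
for name in ranked:
    print(f"{name}: similarity {tanimoto(target, candidates[name]):.2f}")
```

A high similarity score only prioritizes a compound for testing. Actual cross-reactivity still has to be confirmed experimentally.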

Heterophilic Antibodies

4. What are heterophilic antibodies and how do they interfere? Heterophilic antibodies are human antibodies that can bind to immunoglobulins from other species (e.g., mouse, goat). In sandwich immunoassays, they can bridge the capture and detection antibodies even when the target analyte is absent, producing a falsely high signal. They can also block antibody binding, leading to falsely low results [9] [10] [11].

5. Which types of immunoassays are most susceptible? Sandwich (or immunometric) immunoassays are the most prone to interference from heterophilic antibodies because their design uses two antibodies, which a heterophilic antibody can bridge even when no analyte is present [10] [11].

6. What strategies can be used to detect and prevent heterophilic antibody interference?

- Serial Dilution: A true analyte concentration will typically change linearly with serial dilution. A non-linear response can suggest interference, though this method is not fool-proof [11].

- Use Blocking Reagents: Commercially available blocking tubes or reagents containing animal serum can be used to pre-treat samples. These reagents bind to heterophilic antibodies, neutralizing them [10].

- Use Alternative Assays: Repeating the test with a different immunoassay platform that uses antibodies from a different species or antibody fragments (Fab) can often resolve the issue [10].

- Sample Pre-treatment: Methods like polyethylene glycol (PEG) precipitation can be used to remove interfering immunoglobulins from the sample [11].

Hook Effect

7. What is the high-dose "hook effect"? The hook effect is a phenomenon in one-step sandwich immunoassays where an extremely high concentration of the analyte saturates both the capture and detection antibodies. This prevents the formation of the necessary "sandwich" complex, leading to a falsely low or normal reported result [12] [13] [14].

8. For which analytes is the hook effect a known risk? The hook effect has been reported for several analytes that can reach very high concentrations, including:

- Prolactin (PRL): Particularly in patients with large prolactin-secreting pituitary macroadenomas [13].

- Human Chorionic Gonadotropin (hCG): In conditions like molar pregnancy [13] [14].

- Other Analytes: Growth hormone, TSH, various tumor markers (e.g., PSA, CA 125, calcitonin) [14].

9. How can I detect and correct for the hook effect in the laboratory?

- Serial Dilution: The primary method for detection and correction is to analyze the sample both undiluted and at a significant dilution (e.g., 1:100). If the dilution-corrected concentration (diluted result × dilution factor) is substantially higher than the undiluted result, the hook effect is confirmed [13] [14].

- Assay Design: Modern automated analyzers often incorporate features to minimize this effect, but it remains a consideration, especially with point-of-care tests [14].

Experimental Protocols for Error Investigation

Protocol 1: Detecting and Correcting the Hook Effect via Serial Dilution

Purpose: To confirm suspected hook effect interference and obtain an accurate analyte concentration.

Materials:

- Patient sample (e.g., serum or plasma)

- Appropriate assay diluent (as specified by the immunoassay manufacturer)

- Pipettes and sterile tips

- Dilution tubes

- Access to the immunoassay analyzer

Method:

- Analyze the patient sample following the standard, undiluted protocol.

- In parallel, prepare a 1:100 dilution of the sample by adding 10 µL of sample to 990 µL of the recommended assay diluent. Mix thoroughly.

- Analyze the diluted sample using the same immunoassay.

- Multiply the result from the diluted sample by the dilution factor (100) to obtain the corrected concentration.

Interpretation: A corrected concentration that is significantly higher (e.g., >2-3 times) than the undiluted result is indicative of the hook effect. The corrected value should be reported [13] [14].
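
The back-calculation and comparison in this protocol can be sketched as follows. The function name `hook_check`, the default ratio limit of 2, and the example results are assumptions for illustration only.

```python
def hook_check(undiluted_result, diluted_result, dilution_factor=100, ratio_limit=2.0):
    """Back-calculate the diluted result and compare it with the undiluted one.

    ratio_limit is an assumed decision threshold (the protocol suggests >2-3x).
    Returns (corrected concentration, corrected/undiluted ratio, hook suspected?).
    """
    corrected = diluted_result * dilution_factor
    ratio = corrected / undiluted_result
    return corrected, ratio, ratio > ratio_limit

# Hypothetical results in the same units: 150 undiluted, 85 at a 1:100 dilution
corrected, ratio, hooked = hook_check(150.0, 85.0)
print(f"corrected = {corrected:.0f}, ratio = {ratio:.1f}, hook effect suspected: {hooked}")
```

In this invented case the corrected value is more than 50 times the undiluted result, so the corrected concentration would be the one to report.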

Protocol 2: Investigating Heterophilic Antibody Interference

Purpose: To determine if heterophilic antibodies are causing interference in an immunoassay result.

Materials:

- Patient sample

- Heterophilic antibody blocking tubes or reagent (commercially available)

- Control sample (if available)

- Pipettes and tips

Method:

- Divide the patient sample into two aliquots.

- Treat one aliquot according to the instructions provided with the heterophilic blocking reagent (e.g., incubate with the reagent).

- Leave the second aliquot untreated.

- Analyze both the treated and untreated aliquots on the immunoassay platform.

- Compare the results.

Interpretation: A significant difference (>30% or as determined by laboratory validation) between the treated and untreated sample results suggests the presence of heterophilic antibody interference. The result from the blocked sample is more reliable [10] [11].

Research Reagent Solutions

The following table details key reagents used to troubleshoot common immunoassay errors.

Table 1: Key Research Reagents for Troubleshooting Immunoassay Interference

| Reagent | Function | Specific Application |
|---|---|---|
| Heterophilic Blocking Reagents | Contains excess animal immunoglobulins that bind to and neutralize heterophilic antibodies in patient samples. | Added to samples prior to analysis to prevent false positive/negative results in sandwich immunoassays [10] [11]. |
| Polyethylene Glycol (PEG) | Precipitates large molecules, including macrocomplexes and immunoglobulins. | Used to pre-treat samples suspected of containing macroprolactin or heterophilic antibodies. The supernatant is then analyzed [13]. |
| Species-Specific Sera | Serum from the same species as the assay antibodies (e.g., mouse serum). | Can be used as an alternative or supplement to commercial blocking reagents to mitigate heterophilic interference [10]. |
| Assay Diluent/Buffer | A defined matrix used to dilute samples while maintaining analyte stability. | Essential for performing serial dilutions to investigate the hook effect or to bring high-concentration samples within the assay's analytical range [13] [14]. |

Workflow Diagrams

Diagram 1: Hook Effect Mechanism and Detection

Diagram 2: Heterophilic Antibody Interference and Resolution

Table 2: Clinically Significant Cross-Reactivity Examples [8] [3]

| Target Assay | Cross-Reacting Compound | Clinical/Research Context |
|---|---|---|
| Cortisol | Prednisolone, 6-Methylprednisolone | Patients on corticosteroid therapy |
| Cortisol | 21-Deoxycortisol | 21-Hydroxylase deficiency |
| Cortisol | 11-Deoxycortisol | 11β-Hydroxylase deficiency, post-metyrapone |
| Testosterone | Methyltestosterone, other anabolic steroids | Anabolic steroid use |
| Testosterone | Norethindrone | Hormone therapy in women |
| Estradiol | Fulvestrant, Exemestane metabolites | Breast cancer therapy |

Table 3: Troubleshooting Guide for Major Immunoassay Errors

| Error Type | Primary Effect on Result | Key Detection Methods | Common Resolution Strategies |
|---|---|---|---|
| Cross-Reactivity | Falsely Elevated | Review package insert; Use LC-MS/MS for confirmation; Computational similarity prediction [8] [3]. | Use a more specific assay (e.g., LC-MS/MS); Switch to a different immunoassay with lower cross-reactivity. |
| Heterophilic Antibodies | Falsely Elevated or Falsely Low | Serial dilution (non-linearity); Re-test with blocking reagent; Use alternative assay platform [10] [11]. | Pre-treat sample with heterophilic blocking reagent; Use species-specific sera; Use Fab antibody fragments. |
| Hook Effect | Falsely Low | Serial dilution of sample (1:100) [13] [14]. | Re-analyze with significant sample dilution; Use a two-step assay design. |

Impact of Sample Matrix and Pre-analytical Variables on Assay Performance

Pre-analytical and sample matrix variables are significant contributors to variability in hormone immunoassay results, profoundly impacting the reliability and interpretation of scientific data. In laboratory medicine, the pre-analytical phase encompasses all steps from test ordering and patient preparation to sample collection, processing, and storage. Evidence suggests that pre-analytical errors account for approximately 60-70% of all laboratory errors, with some estimates reaching as high as 93% of total errors encountered within diagnostic processes [15] [16]. For researchers conducting hormone immunoassays, understanding and controlling these variables is paramount for generating meaningful, reproducible results, particularly in metabolic and endocrine research using rodent models [15]. This guide addresses the most common troubleshooting challenges and provides practical methodologies to minimize pre-analytical variability in your experiments.

Troubleshooting Guides

FAQ: Addressing Common Pre-analytical Challenges

1. How does blood collection site affect hormone measurements in rodent studies? The blood collection site can significantly influence measured hormone concentrations. Studies demonstrate that sampling from different sites in mice produces markedly different results for metabolic hormones like insulin. For instance, plasma insulin concentrations are clearly lower when blood is collected from the retrobulbar sinus compared to the tail vein [15]. This variability arises from differences in local tissue metabolism, blood flow rates, and potential stress responses. To minimize this bias, consistently use the same sampling site across all experimental groups and precisely document the procedure for later interpretation [15].

2. What is the impact of anesthesia on hormone measurements? Inhalation anesthetics such as isoflurane significantly affect metabolic parameters. Research shows that plasma insulin concentrations are significantly lower when blood is collected under isoflurane anesthesia compared to conscious sampling [15]. Anesthetics influence intestinal motility, gastric emptying, and glucose metabolism, thereby introducing unwanted biological variability [15]. For reliable results, maintain consistent anesthesia protocols throughout an experiment and avoid comparing samples collected with different anesthesia methods.

3. Why do hemolyzed samples produce unreliable hormone results? Hemolysis, the in-vitro breakdown of red blood cells, is a primary source of poor blood sample quality, accounting for 40-70% of pre-analytical errors [16]. Hemolysis causes spurious release of intracellular analytes and can interfere with spectral absorbance measurements used in various immunoassays [16]. Additionally, for specific hormones like insulin, hemolysis can directly interfere with the immunoassay measurement itself [3]. Always visually inspect samples for pink/red discoloration and implement proper phlebotomy and sample handling techniques to prevent mechanical hemolysis.

4. How do heterophile antibodies interfere with immunoassays? Heterophile antibodies are endogenous human antibodies that can bind to animal-derived antibodies used in immunoassay reagents. This binding can create false bridges between capture and detection antibodies in sandwich immunoassays, leading to falsely elevated results, or it can block the assay antibodies from binding the analyte, causing falsely low results [3]. These interferences are not detectable by standard quality control procedures and can produce seemingly coherent but erroneous hormonal profiles [3]. Suspect heterophile interference when clinical findings contradict laboratory results, and use blocking reagents or alternative methodologies for confirmation.

5. What is the "hook effect" and how can I detect it? The high-dose hook effect occurs in sandwich immunoassays when exceedingly high analyte concentrations saturate both capture and detection antibodies, preventing the formation of the antibody-hormone-antibody "sandwich" [17]. This results in falsely low or normal results despite very high actual hormone levels. The hook effect is particularly problematic with large pituitary tumors (e.g., macroprolactinomas), malignant tumors, and measurements of hCG, thyroglobulin, or PSA [17]. Detection involves analyzing the sample at one or more dilutions (e.g., 1:100 or more); if the dilution-corrected concentration (measured value × dilution factor) is markedly higher than the undiluted result, the hook effect is likely present.

6. How does sample matrix differ between serum and plasma? The choice between serum and plasma involves important matrix considerations. Serum is generally the preferred matrix for many analytes as additives in plasma tubes can interfere with assays [3]. For instance, EDTA can chelate metallic ions used as labels (e.g., europium) or cofactors required for enzyme activity, while azide preservatives can destroy peroxidase labels [3]. Heparin can also interfere in certain assays. Always validate your immunoassay for the specific matrix used and maintain consistency throughout your study.

Experimental Protocols for Validating Pre-analytical Conditions

Protocol 1: Establishing Sample Stability Under Different Storage Conditions

- Purpose: To determine the stability of your target analyte under various storage temperatures and durations.

- Materials: Pooled plasma/serum sample aliquots, microcentrifuge tubes, freezer boxes, -80°C freezer, -20°C freezer, 4°C refrigerator.

- Procedure:

  - Prepare a large volume of pooled sample from your experimental model (e.g., rodent plasma) and aliquot into multiple tubes.

  - Baseline Measurement: Immediately analyze one set of aliquots (n=5) to establish baseline concentration.

  - Storage Conditions: Store aliquots under different conditions:

    - Room temperature (e.g., 22°C) for 2, 4, 8, and 24 hours

    - Refrigerated (4°C) for 1, 3, 7, and 14 days

    - Frozen (-20°C and -80°C) for 1, 3, 6, and 12 months

  - Analysis: After each time point, thaw frozen samples completely, mix gently, and analyze all samples in the same assay run to minimize inter-assay variability.

- Data Interpretation: Compare stored sample results to baseline. A change of less than 10-15% is generally considered acceptable stability, though this may vary by analyte.
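
The comparison against baseline can be sketched as a percent-change calculation per time point. The values below are invented, and the 15% limit is an assumption taken from the upper end of the range quoted above.

```python
from statistics import mean

def percent_change(stored_mean, baseline_mean):
    """Percent change of a stored condition relative to the baseline mean."""
    return (stored_mean - baseline_mean) / baseline_mean * 100

# Baseline from n=5 aliquots analyzed immediately (hypothetical values)
baseline = mean([100.0, 102.0, 98.0, 101.0, 99.0])

# Hypothetical room-temperature series: hours stored -> replicate results
room_temp = {
    2: [99.0, 101.0],
    4: [97.0, 99.0],
    8: [91.0, 93.0],
    24: [78.0, 82.0],
}

limit = 15.0  # assumed acceptance limit in percent
stability = {
    hours: percent_change(mean(vals), baseline)
    for hours, vals in room_temp.items()
}
# Time points whose mean stays within the limit, and the longest of them
stable_hours = [h for h, chg in stability.items() if abs(chg) <= limit]
max_stable = max(stable_hours) if stable_hours else None

for hours, chg in stability.items():
    print(f"{hours:>2} h: {chg:+.1f}%")
print(f"longest time point within ±{limit:.0f}%: {max_stable} h")
```

The same structure applies to the refrigerated and frozen series by swapping in the corresponding time points.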

Protocol 2: Evaluating Matrix Effects and Spike Recovery

- Purpose: To assess whether sample matrix components interfere with the accurate quantification of your analyte.

- Materials: Test samples, analyte standard, appropriate matrix (e.g., hormone-stripped serum), pipettes, microcentrifuge tubes.

- Procedure:

  - Prepare Spiked Samples:

    - Low Spike: Add a known low amount of analyte standard to a sample with low endogenous levels.

    - High Spike: Add a known high amount of analyte standard to a different aliquot of the same sample.

  - Prepare Baseline: Analyze the unspiked sample to determine endogenous analyte level.

  - Calculation of Expected Values:

    - Expected = (Endogenous concentration) + (Spiked concentration)

  - Measurement: Analyze all samples (unspiked, low spike, high spike) in duplicate.

  - Recovery Calculation:

    - % Recovery = [(Measured concentration - Endogenous concentration) / (Spiked concentration)] × 100

- Interpretation: Recovery between 85-115% generally indicates minimal matrix interference. Consistent recovery outside this range suggests matrix effects that may require sample dilution or alternative sample preparation [18].

Protocol 3: Assessing Linearity and Hook Effect

- Purpose: To determine the assay's linear range and potential for high-dose hook effect.

- Materials: Sample with known high analyte concentration, assay calibrators, appropriate diluent.

- Procedure:

  - Prepare a series of dilutions (e.g., undiluted, 1:2, 1:10, 1:50, 1:100) of the high-concentration sample using the recommended assay diluent.

  - Analyze all dilutions in the same assay run.

  - Multiply the measured concentration of each dilution by its dilution factor to obtain the calculated original concentration.

- Interpretation: If the calculated concentrations are relatively constant across dilutions, the assay is performing linearly. If calculated concentrations significantly increase with higher dilutions (creating a "hook" pattern when graphed), a high-dose hook effect is present, and results from undiluted samples are unreliable [17].
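
The interpretation step can be sketched in code. This version compares every back-calculated concentration with the value from the highest dilution. Both that choice of reference and the 20% tolerance are assumptions, and the highest dilution is only a sensible reference if its reading is still within the assay's measuring range. The dilution data are invented.

```python
def back_calculate(dilution_results):
    """Map each dilution factor to measured concentration x dilution factor."""
    return {factor: measured * factor for factor, measured in dilution_results.items()}

def assess_dilution_series(dilution_results, tolerance=20.0):
    """Compare each back-calculated value with the one from the highest dilution.

    Returns (back-calculated values, percent of reference per dilution, verdict).
    tolerance is an assumed limit in percent.
    """
    calc = back_calculate(dilution_results)
    reference = calc[max(calc)]  # highest dilution, assumed free of hook effect
    pct = {f: c / reference * 100 for f, c in calc.items()}
    if all(abs(p - 100) <= tolerance for p in pct.values()):
        verdict = "linear"
    elif pct[min(pct)] < 100 - tolerance:
        verdict = "possible hook effect"  # least-diluted sample reads too low
    else:
        verdict = "non-linear"
    return calc, pct, verdict

# Hypothetical series: dilution factor -> measured concentration
series = {1: 180.0, 2: 400.0, 10: 900.0, 50: 200.0, 100: 100.0}
calc, pct, verdict = assess_dilution_series(series)
for factor in sorted(calc):
    print(f"1:{factor:<3} back-calculated {calc[factor]:>8.0f} ({pct[factor]:.1f}% of reference)")
print(verdict)
```

In the invented series the back-calculated value climbs from 180 undiluted to 10,000 at 1:50 and 1:100, which is the rising pattern the protocol describes for a hook effect.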

Essential Research Reagent Solutions

Table: Key Materials for Controlling Pre-analytical Variables

| Reagent/Material | Function | Key Considerations |
|---|---|---|
| Stabilized Blood Collection Tubes | Preserve sample integrity from collection to processing [19]. | Allows room-temperature storage, batch processing, and reduces variability from immediate processing requirements. |
| Low-Binding Microcentrifuge Tubes | Minimize adsorptive losses of proteins and peptides [20]. | Surface treatments reduce analyte binding to tube walls, improving recovery for low-abundance biomarkers. |
| Protein-Stabilized Reference Materials | Provide reliable calibrators for immunoassays. | Matrix-matched stabilizers maintain analyte integrity and improve accuracy of calibration curves. |
| Heterophile Antibody Blocking Reagents | Mitigate interference from human anti-animal antibodies [3]. | Added to assay buffer to prevent false binding that causes erroneous results. |
| Matrix-Matched Quality Controls | Monitor assay performance in appropriate biological fluid. | Controls prepared in the same matrix as samples (e.g., rodent serum) better detect matrix-related issues. |

Visual Guide to Pre-analytical Workflow

Figure: Pre-analytical Control Points. Critical control points in the pre-analytical workflow where variability can be introduced and must be managed.

Table: Impact of Common Pre-analytical Variables on Assay Performance

| Pre-analytical Variable | Effect on Assay | Magnitude of Effect | Recommended Control Measure |

|---|---|---|---|

| Blood Collection Site (Retrobulbar vs. Tail vein) | Lower measured insulin concentrations [15] | Significant difference (p<0.05) in all mice tested [15] | Consistent sampling site within experiment; precise documentation |

| Inhalation Anesthesia (Isoflurane) | Suppresses insulin secretion [15] | Significant reduction (p<0.05) vs. conscious sampling [15] | Consistent anesthesia protocol; avoid mixing anesthetized/conscious samples |

| Hemolysis | Interferes with spectrophotometric detection; releases intracellular analytes [16] | Accounts for 40-70% of pre-analytical errors [16] | Proper phlebotomy technique; visual inspection; reject hemolyzed samples |

| Inappropriate Sample Volume | Incorrect anticoagulant-to-blood ratio; clot formation [16] | Accounts for 10-20% of pre-analytical errors [16] | Follow tube manufacturer fill volumes; implement training |

| Wrong Collection Container | Additive interference; improper preservation [16] | Accounts for 5-15% of pre-analytical errors [16] | Validate tube type for each analyte; use laboratory-defined protocols |

| Clotted Sample | Analyte entrapment; inaccurate pipetting [16] | Accounts for 5-10% of pre-analytical errors [16] | Proper mixing immediately after collection; check for clots before analysis |

Vigilant management of sample matrix and pre-analytical variables is not merely a preparatory step but a fundamental component of robust experimental design in hormone immunoassay research. By implementing standardized operating procedures, systematically validating pre-analytical conditions, and maintaining meticulous documentation, researchers can significantly reduce unwanted variability, enhance data reliability, and draw more meaningful biological conclusions from their immunoassay measurements.

Structural Heterogeneity of Analytes and its Effect on Immunoassay Consistency

This section helps researchers, scientists, and drug development professionals troubleshoot one of the most persistent challenges in hormone immunoassay research: analytical variability introduced by the structural heterogeneity of analytes.

Structural heterogeneity refers to the natural variations in protein structure, including differences in post-translational modifications (e.g., glycosylation patterns, deamidation, oxidation), the presence of protein fragments, and the formation of aggregates [21] [22]. For monoclonal antibodies (mAbs) and other protein therapeutics, this heterogeneity is a critical quality attribute that must be monitored [21]. In the context of immunoassays, this heterogeneity can significantly impact the accuracy, precision, and reproducibility of your results because different structural variants may be recognized and bound by assay antibodies with varying efficiency [3] [23].

The guides and FAQs that follow apply these concepts to the broader goal of minimizing analytical variability, because consistent and reliable immunoassay data are foundational for robust drug development and credible research outcomes.

Troubleshooting Guides

Guide 1: Diagnosing Inconsistent Results Between Assay Lots

Problem: Your experimental results show significant shifts in measured analyte concentration or a high rate of outlier samples when a new lot of an immunoassay kit is introduced.

Explanation: This is often a symptom of Lot-to-Lot Variance (LTLV), a common issue where different production batches of an immunoassay kit yield different results for the same sample [24]. A primary cause is fluctuations in the quality of critical raw materials, particularly antibodies and antigens, which can be sensitive to minor changes in production or storage [24]. The structural heterogeneity of your analyte may interact differently with these slightly varied reagents.

Investigation and Resolution Workflow:

Detailed Steps:

- Run Quality Control (QC) Materials: Use the kit's QC materials and, if available, leftover calibrators from the previous lot. Also, include a panel of well-characterized internal samples that cover the assay's dynamic range.

- Check Assay Performance: Calculate the precision (CV%) and percent recovery for the QC and internal samples. Compare the calibration curves of the old and new lots for significant shifts in slope or background signal.

- Interpret Findings:

- If the kit's QC and calibrators are out of specification, the issue likely lies with the new lot. Proceed to the "Contact the Manufacturer" step.

- If the kit QC passes but your internal samples show poor recovery, the problem may be sample-specific. The new lot's antibodies may be differentially affected by the structural variants (e.g., glycosylation, aggregates) present in your real samples [21] [24]. Proceed to the "Investigate Sample Interferences" step.

- Investigate Sample Interferences: Subject problematic samples to forced degradation studies (e.g., freeze-thaw cycles, brief incubation at room temperature) and re-analyze. A significant change in measured concentration suggests the presence of unstable or heterogeneous analyte forms [21].

- Contact the Manufacturer: Provide them with your detailed comparative data. Inquire about any changes in the sourcing or formulation of critical reagents, especially the capture and detection antibodies.

- Implement a Bridging Study: If you must switch lots, perform a formal method comparison using 20-40 of your study samples. Establish new baseline values and, if necessary, apply a correction factor for longitudinal studies [23].
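The lot comparison in the final step can be summarized numerically. The sketch below is one possible approach, assuming paired results for the same samples on the old and new lots: it reports the mean percent difference and a simple ratio-based correction factor. The 10% acceptance limit, the function name, and the data are hypothetical, and a ratio-based factor is only reasonable when the bias is proportional across the measuring range.

```python
from statistics import mean


def lot_bridging_summary(old_lot, new_lot, max_mean_diff_pct=10.0):
    """Compare paired results from two reagent lots.

    max_mean_diff_pct is an assumed acceptance limit, not a value from the text.
    """
    if len(old_lot) != len(new_lot) or not old_lot:
        raise ValueError("old_lot and new_lot must be non-empty and equal in length")
    if any(v <= 0 for v in list(old_lot) + list(new_lot)):
        raise ValueError("all results must be positive")
    pct_diff = [(n - o) / o * 100.0 for o, n in zip(old_lot, new_lot)]
    mean_diff = mean(pct_diff)
    # Multiplying a new-lot result by this factor expresses it on the old-lot scale.
    correction = mean(o / n for o, n in zip(old_lot, new_lot))
    return {
        "mean_diff_pct": mean_diff,
        "correction_factor": correction,
        "acceptable": abs(mean_diff) <= max_mean_diff_pct,
    }


# Hypothetical paired results (a real bridging study would use 20-40 samples).
summary = lot_bridging_summary([10.0, 20.0, 40.0], [10.5, 21.0, 42.0])
print(summary)
```

If the percent differences vary with concentration, a regression-based comparison is likely a better basis for any correction than a single factor.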

Guide 2: Addressing Discrepancies Between Different Immunoassay Platforms

Problem: You are collaborating with another lab or have switched clinical testing platforms, and the numeric values for the same hormone (e.g., Thyroglobulin, IGF-1) are not comparable, leading to potential misclassification of patient results.

Explanation: Different immunoassay platforms use different pairs of antibodies (with distinct epitope specificities), different detection methods, and different calibrators. The structural heterogeneity of an analyte means that various assays may detect a different "mix" of the analyte's structural variants [25] [23]. For example, an assay's antibodies might have reduced affinity for a deamidated or oxidized form of the protein, leading to underestimation [21].

Investigation and Resolution Workflow:

Detailed Steps:

- Perform a Concordance Study: If results from two platforms must be compared, run a set of 40-100 patient samples on both assays. Use statistical methods like Passing-Bablok regression and Bland-Altman plots to quantify the correlation and systematic bias [23].

- Stratify by Clinical Decision Levels: Analyze the agreement at clinically critical ranges (e.g., Thyroglobulin < 0.2 ng/mL for "excellent response" in thyroid cancer) [23]. Disagreements are most common at these extremes.

- Evaluate Clinical Significance: Determine if the measured bias would change clinical decision-making (e.g., initiating or stopping a treatment).

- Do Not Mix Platforms: For longitudinal patient monitoring, use the same analytical platform and method throughout the entire follow-up period [23].

- Re-Baseline if Switching is Unavoidable: If a platform change is mandatory, a formal bridging study is essential to re-baseline all patient values and establish new clinical decision limits for the new assay [23].

- Harmonize Reporting: Always report results alongside the name of the assay used and its specific reference intervals or clinical decision limits.
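Agreement at a clinical decision level can be checked by classifying each paired result against the cutoff. The following is a minimal sketch under the assumption that both platforms are judged against the same cutoff; the function name and the sample values are hypothetical, and the 0.2 ng/mL threshold is taken from the thyroglobulin example above.

```python
def classification_agreement(results_a, results_b, cutoff):
    """Count how often two platforms place a sample on the same side of a cutoff."""
    if len(results_a) != len(results_b) or not results_a:
        raise ValueError("inputs must be non-empty and equal in length")
    both_below = both_at_or_above = discordant = 0
    for a, b in zip(results_a, results_b):
        below_a, below_b = a < cutoff, b < cutoff
        if below_a and below_b:
            both_below += 1
        elif not below_a and not below_b:
            both_at_or_above += 1
        else:
            discordant += 1
    n = len(results_a)
    return {
        "n": n,
        "both_below": both_below,
        "both_at_or_above": both_at_or_above,
        "discordant": discordant,
        "agreement_pct": 100.0 * (both_below + both_at_or_above) / n,
    }


# Hypothetical paired thyroglobulin results (ng/mL) from two platforms.
platform_a = [0.10, 0.15, 0.30, 1.20, 0.19]
platform_b = [0.12, 0.25, 0.28, 1.00, 0.10]
print(classification_agreement(platform_a, platform_b, cutoff=0.2))
```

The discordant samples are the ones worth reviewing individually, since they are the cases in which a platform switch could change a clinical classification.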

Frequently Asked Questions (FAQs)

Q1: What are the most common structural modifications in protein therapeutics that can affect immunoassay performance?

A: The table below summarizes key modifications and their potential impacts [21]:

| Structural Modification | Description | Potential Impact on Immunoassay |

|---|---|---|

| Deamidation | Conversion of asparagine (Asn) to aspartic acid, often in NG motifs. Increases acidic charge variants. | Can reduce antibody binding affinity, leading to underestimation of potency and concentration [21]. |

| Oxidation | Modification of methionine (Met) or tryptophan (Trp) residues by reactive oxygen species. | Can cause conformational changes, reducing binding affinity and leading to inaccurate quantification [21]. |

| Glycosylation Heterogeneity | Variations in glycan structures attached to asparagine (N-linked) or serine/threonine (O-linked) residues. | Different glycoforms may be recognized differently by assay antibodies, affecting measured concentration and functional assessment [21] [22]. |

| C-terminal Lysine Clipping | Variability in the presence of C-terminal lysine on antibody heavy chains. | Contributes to charge heterogeneity; typically does not impact safety/efficacy but can cause variability in charge-based analytical methods [21]. |

| Aggregation | Formation of high molecular weight (HMW) species. | Aggregates may cause non-specific binding or hook effects, leading to both over- and under-estimation [21] [24]. |

| Fragmentation | Cleavage of the protein backbone, generating low molecular weight (LMW) species. | Fragments may be detected incompletely or not at all, leading to inaccurate quantification of the intact molecule [22]. |

Q2: How can I assess the "developability" of a monoclonal antibody (mAb) to minimize risks from structural heterogeneity?

A: A proactive, three-step developability assessment workflow is recommended for early-stage candidate screening [21]:

- Step 1: In Silico Evaluation. Analyze the amino acid sequence to identify degradation hot spots (e.g., unstable motifs like NG for deamidation), unpaired cysteines, and aggregation-prone regions [21].

- Step 2: Extended Characterization. Use a panel of analytical methods to assess key biophysical and biochemical properties with limited material consumption. This includes measuring:

- Charge Variants: via imaged capillary isoelectric focusing (icIEF) or cation-exchange chromatography (CEX).

- Size Variants: via size-exclusion chromatography (SEC) for aggregates and fragments.

- Hydrophobicity: via hydrophobic interaction chromatography (HIC).

- Detailed Characterization: Advanced techniques like capillary electrophoresis-mass spectrometry (CE-MS) can efficiently resolve and assign isomeric variants and glycoforms at the intact and middle-up level [22].

- Step 3: Forced Degradation Studies. Stress the candidate mAbs under controlled conditions (e.g., elevated temperature, light exposure, oxidative stress) to rapidly reveal candidate-specific degradation pathways and confirm the hot spots identified in Steps 1 and 2 [21].

Q3: Our lab is validating an in-house immunoassay. What are the critical validation parameters to ensure it is robust against analyte heterogeneity?

A: A thorough method validation is crucial. The table below outlines the essential parameters based on international guidelines [26]:

| Validation Parameter | Objective | Recommended Experiment |

|---|---|---|

| Precision | Measure closeness of agreement between repeated measurements. | Run within-run and between-run experiments using controls at low, mid, and high concentrations. Calculate CV% [26]. |

| Accuracy / Recovery | Determine the closeness of the measured value to the true value. | Spike a known amount of pure analyte into a relevant biological matrix and measure the recovery percentage [27] [26]. |

| Linearity / Dilutional Integrity | Verify that samples with high analyte concentrations can be reliably diluted into the assay's measuring range. | Serially dilute a high-concentration sample in the appropriate matrix. The measured concentration should be proportional to the dilution factor [26]. |

| Selectivity / Specificity | Ensure the assay accurately measures the target analyte in the presence of other components (e.g., metabolites, related proteins, interfering antibodies). | Test cross-reactivity with structurally similar compounds. Assess interference from hemolyzed, lipemic, or icteric samples [3] [26]. |

| Calibrator Stability | Confirm that calibrators and critical reagents are stable under defined storage conditions and over the claimed lifetime. | Periodically test old calibrator sets against a new set to detect signal drift [24]. |

| Robustness | Evaluate the method's resilience to small, deliberate variations in procedural parameters (e.g., incubation time, temperature). | Systematically vary key protocol parameters one at a time and assess the impact on the final result [26]. |

Q4: What are the primary causes of lot-to-lot variance in immunoassay reagents, and how can they be controlled?

A: The main causes and mitigation strategies are [24]:

- Causes:

- Antibody Quality: Variations in antibody affinity, specificity, purity, and aggregation between production batches. Aggregates can cause high background or abrupt, spurious signal increases [24].

- Antigen/Calibrator Quality: Inconsistent purity, stability, or exact composition of the antigen used for calibration and standardization [24].

- Enzyme Conjugates: Fluctuations in enzymatic activity or labeling efficiency of antibody-enzyme conjugates.

- Buffer Formulations: Minor, uncontrolled changes in pH, ionic strength, or stabilizing components.

- Control Strategies:

- Rigorous QC on Raw Materials: Implement strict specifications for antibodies and antigens, using methods like SDS-PAGE, SEC-HPLC, and CE-SDS to monitor purity and aggregation [24].

- Use of Master Calibrators: Maintain a large, well-characterized master lot of calibrator to standardize all new production batches against.

- Comprehensive Lot-to-Lot Testing: Before release, new reagent lots must be tested against the previous lot and the master calibrator using a predefined panel of quality control samples.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Managing Heterogeneity |

|---|---|

| High-Quality Monoclonal Antibodies | Affinity-purified antibodies with well-defined specificity and low aggregation are critical for consistent assay performance. Recombinant antibodies can offer superior lot-to-lot consistency [24]. |

| Stable Reference Standards | Well-characterized, stable calibrators that are standardized against international reference materials (e.g., CRM-457 for thyroglobulin) are essential for harmonizing results across labs and platforms [23]. |

| Heterophilic Blocking Reagents (HBR) | A mixture of irrelevant antibodies and inert proteins that blocks interference from human anti-animal antibodies (HAAA) and other heterophilic antibodies in patient samples [27]. |

| Stabilized Buffer Systems | Consistent buffer formulations with appropriate stabilizers (e.g., BSA, sucrose) are vital for maintaining reagent and analyte stability, thereby reducing variability [24]. |

| Matrix-Matched Controls & Calibrators | Using the same biological matrix (e.g., serum, plasma) for controls and calibrators as for the unknown samples helps account for matrix effects that can differentially affect heterogeneous analyte forms [27] [26]. |

The Clinical and Research Consequences of Unreliable Hormone Data

Troubleshooting Guides and FAQs

FAQ: Recognizing Unreliable Data

Q: My hormone immunoassay results are clinically implausible. What are the most common causes?

A: Clinically implausible results often stem from specific, identifiable issues. The most frequent causes include interference from heterophile antibodies or human anti-animal antibodies in the sample, cross-reactivity with structurally similar molecules or medications, the high-dose "hook effect," and high biotin levels from supplement intake [3] [28]. Spurious results can also arise from pre-analytical conditions like improper sample handling or the use of incorrect sample types (e.g., serum vs. plasma) [29].

Q: How can I distinguish a genuinely low or normal prolactin result from an artifact caused by the high-dose hook effect?

A: The high-dose hook effect occurs in sandwich immunoassays when extremely elevated analyte levels saturate both the capture and detection antibodies, leading to a falsely low reported concentration [28]. This is a known issue with prolactin in patients with macroadenomas. To detect it, request that the laboratory perform the assay on a series of dilutions of the original sample. If the measured concentration increases with dilution, the hook effect is confirmed, and the dilution-corrected result from a sufficiently diluted sample is the accurate one [28].

Q: Why do my results differ from another lab using a different immunoassay kit?

A: Different immunoassays for the same hormone may use antibodies that recognize different epitopes or hormone isoforms, have varying susceptibilities to cross-reactants and interferents, and may be calibrated differently [3] [29]. This is a well-documented source of variability. For example, different growth hormone assays may specifically measure the 22K isoform or also detect 20K and other non-22K isoforms, leading to different results [28]. Standardizing with a single method and batch for a study is crucial [29].

Troubleshooting Guide: Common Interferences

Heterophile Antibody Interference

- Problem: Falsely elevated or depressed hormone levels.

- Recognition: Results are inconsistent with the clinical picture or other biochemical data. Interference is often erratic and not reproducible across different assay platforms [3] [28].

- Solution:

- Use a proprietary heterophile blocking tube (HBT) to pre-treat the sample.

- Re-measure the sample on a different immunoassay platform.

- Re-test a new sample collected at a later date [28].

Biotin Interference

- Problem: High concentrations of biotin (vitamin B7) from supplements can cause significant interference, particularly in assays using biotin-streptavidin chemistry [3].

- Recognition: In sandwich immunoassays, biotin typically causes falsely low results, while in competitive assays, it can cause falsely high results [3].

- Solution: The patient should discontinue biotin supplements for at least 48-72 hours before a new sample is collected [3].

Sample Matrix Effects

- Problem: Differences between serum and plasma matrices can lead to different results for the same analyte [29].

- Recognition: This is a known issue; reference ranges often differ between serum and plasma.

- Solution: Consistent use of the same sample type (serum or plasma) throughout a study is critical. Note that serum has a lower protein content than plasma, which can affect analyte stability and measurement [29].

Experimental Protocols for Identifying Interference

Protocol 1: Serial Dilution to Detect Hook Effect or Interference

Purpose: To determine if a clinically suspicious result is due to the high-dose hook effect or a non-linear interference [28].

- Prepare Dilutions: Create a series of dilutions (e.g., 1:2, 1:10, 1:100) of the patient's sample using the appropriate assay diluent.

- Re-assay: Measure the analyte concentration in each dilution.

- Interpretation:

- No Hook Effect/Interference: The measured concentration will decrease proportionally with the dilution factor (e.g., a 1:10 dilution yields a result ~10% of the original).

- Hook Effect Present: The measured concentration will increase with dilution until the prozone region is escaped, after which results will become linear.

- Interference Present: Non-linearity in recovery upon dilution suggests the presence of an interfering substance [28].

Protocol 2: Pre-Treatment with Heterophile Blocking Reagents

Purpose: To confirm if heterophile antibodies are causing interference [28].

- Split Sample: Divide the patient sample into two aliquots.

- Treat: Add a heterophile blocking reagent to one aliquot. The other aliquot serves as an untreated control.

- Re-assay: Measure the analyte concentration in both aliquots using the same immunoassay.

- Interpretation: A substantial difference between the treated and untreated results (commonly taken as more than 30-50%) indicates the presence of heterophile antibody interference.
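The comparison in Protocol 2 reduces to a percent change between the two aliquots. The sketch below assumes the change is expressed relative to the untreated result and uses the lower bound of the 30-50% range as a default threshold; both choices, and the function names and example values, are assumptions for illustration.

```python
def blocking_change_percent(untreated, treated):
    """Percent change after blocking, relative to the untreated aliquot."""
    if untreated <= 0:
        raise ValueError("untreated result must be positive")
    return (treated - untreated) / untreated * 100.0


def suggests_heterophile_interference(untreated, treated, threshold_pct=30.0):
    """True when the absolute change exceeds the chosen threshold.

    threshold_pct defaults to the lower end of the 30-50% range in the text.
    """
    return abs(blocking_change_percent(untreated, treated)) > threshold_pct


# Hypothetical results before and after treatment with a blocking reagent.
change = blocking_change_percent(untreated=58.0, treated=12.0)
print(f"change = {change:.1f}%, flagged = {suggests_heterophile_interference(58.0, 12.0)}")
```

Using the absolute change covers both directions of interference, since heterophile antibodies can produce falsely high or falsely low results.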

Data Presentation

Table 1: Common Immunoassay Interferences and Their Effects

| Interference Type | Mechanism | Typical Effect on Result | Common Examples |

|---|---|---|---|

| Heterophile Antibodies [3] [28] | Antibodies that bind assay reagents (e.g., animal antibodies) | Falsely high or low (both possible) | Human anti-mouse antibodies (HAMA) |

| Biotin [3] | Interferes with biotin-streptavidin separation | Falsely low (sandwich); Falsely high (competitive) | High-dose supplement use |

| Cross-reactivity [3] [28] | Antibody binds to structurally similar molecules | Falsely high | DHEA-S in testosterone assays; steroid precursors in cortisol assays |

| High-Dose Hook Effect [28] | Analyte excess prevents sandwich formation | Falsely low | Prolactin, hCG, Calcitonin in tumor patients |

| Sample Hemolysis [28] | Release of proteolytic enzymes or cellular contents | Falsely high or low | Insulin, other peptide hormones |

| Macrocomplexes [28] | Analyte bound to autoantibody (e.g., macroprolactin) | Falsely high (but biologically inactive) | Macroprolactin |

Table 2: Key Research Reagent Solutions for Method Standardization

| Reagent / Material | Function in Hormone Immunoassay | Key Consideration |

|---|---|---|

| Low-Binding Tubes/Vials [30] | Minimizes adsorptive losses of the analyte, improving recovery and reproducibility. | Essential for proteins and peptides at low concentrations. |

| Heterophile Blocking Reagents [28] | Added to patient samples to neutralize heterophile antibody interference. | A critical tool for confirming and resolving a common source of error. |

| Standardized Calibrators [29] | Used to create the calibration curve, defining the relationship between signal and concentration. | Using calibrators from the same batch is vital for inter-assay consistency. |

| Matrix-Matched Controls [29] | Quality control samples (e.g., pooled serum) used to monitor assay performance over time. | Should cover the concentration range of interest (low, medium, high). |

| Appropriate Filters [30] | Remove particulates from samples before analysis. | Can cause adsorptive losses; discarding the first mL of filtrate is recommended. |

Workflow and Relationship Visualizations

Diagram 1: Hormone Immunoassay Interference Troubleshooting

Diagram 2: Pre-analytical Variability Factors

Advanced Methodologies and Evolving Immunoassay Technologies

The evolution of immunoassay platforms from traditional Enzyme-Linked Immunosorbent Assays (ELISA) to automated chemiluminescent systems has revolutionized clinical diagnostics. Among these, Chemiluminescence Immunoassay (CLIA), Electrochemiluminescence Immunoassay (ECLIA), and Chemiluminescent Microparticle Immunoassay (CMIA) represent significant technological advancements. However, their widespread adoption in hormone research introduces critical challenges in analytical variability that can compromise data integrity and reproducibility.

For researchers and drug development professionals, understanding and controlling this variability is paramount. Differences in antibody affinity, reagent composition, signal detection mechanisms, and platform-specific methodologies create substantial inter-assay discrepancies, even when measuring identical biomarkers. Recent studies demonstrate that these variations persist despite strong correlation coefficients between platforms, highlighting the necessity for rigorous standardization protocols and systematic troubleshooting approaches in research settings.

Comparative Performance Characteristics of Modern Immunoassay Platforms

Diagnostic Accuracy Across Platforms

Table 1: Comparison of Diagnostic Performance for COVID-19 Serological Assays [31]

| Assay Platform | Manufacturer | Target | Method | Pooled DOR | Relative Performance |

|---|---|---|---|---|---|

| Elecsys Anti-SARS-CoV-2 total | Roche | Total Ab | ECLIA | 1701.56 | Highest accuracy |

| Elecsys Anti-SARS-CoV-2 N | Roche | Anti-N | ECLIA | 1022.34 | Superior performance |

| Abbott SARS-CoV-2 IgG | Abbott | IgG | CMIA | 542.81 | High accuracy |

| LIAISON SARS-CoV-2 S1/S2 IgG | DiaSorin | IgG | CLIA | 178.73 | Moderate accuracy |

| Euroimmun Anti-SARS-CoV-2 S1-IgG | Euroimmun | IgG | ELISA | 190.45 | Moderate accuracy |

| Euroimmun Anti-SARS-CoV-2 N-IgG | Euroimmun | IgG | ELISA | 82.63 | Lower accuracy |

| Euroimmun Anti-SARS-CoV-2 IgA | Euroimmun | IgA | ELISA | 45.91 | Lowest accuracy |

The diagnostic odds ratio (DOR) represents the odds of a positive test result in diseased individuals relative to the odds of a positive result in non-diseased individuals, making it a key metric for overall test accuracy. This meta-analysis of 57 studies revealed that ECLIA and CMIA methods demonstrated superior diagnostic performance compared with conventional CLIA and ELISA, with no significant difference between ECLIA and CMIA platforms [31]. Total antibody assays showed the highest accuracy, followed by IgG-specific assays.
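For readers who want to see how a DOR is obtained, the sketch below computes it from a 2x2 table and, equivalently, from sensitivity and specificity. The counts are hypothetical and unrelated to the pooled estimates in Table 1; the function names are illustrative.

```python
def diagnostic_odds_ratio(tp, fn, fp, tn):
    """DOR = (TP / FN) / (FP / TN) = (TP * TN) / (FP * FN)."""
    if fn == 0 or fp == 0:
        raise ValueError("DOR is undefined when FN or FP is zero")
    return (tp * tn) / (fp * fn)


def dor_from_sens_spec(sensitivity, specificity):
    """Equivalent form: odds of a positive in diseased / odds in non-diseased."""
    if not (0 < sensitivity < 1 and 0 < specificity < 1):
        raise ValueError("sensitivity and specificity must be strictly between 0 and 1")
    odds_pos_diseased = sensitivity / (1 - sensitivity)
    odds_pos_healthy = (1 - specificity) / specificity
    return odds_pos_diseased / odds_pos_healthy


# Hypothetical 2x2 table: 90 true positives, 10 false negatives,
# 5 false positives, 95 true negatives.
print(diagnostic_odds_ratio(tp=90, fn=10, fp=5, tn=95))
print(round(dor_from_sens_spec(0.90, 0.95), 6))
```

Because the DOR grows very quickly as sensitivity and specificity approach 1, large differences in DOR between highly accurate assays can correspond to small differences in the underlying error rates.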

Inter-Assay Variability in Hormone Measurement

Table 2: Inter-Assay Variability in Thyroid Hormone and Related Testing [32] [33] [34]

| Analyte | Platforms Compared | Correlation Coefficient (ρ) | Key Variability Findings |

|---|---|---|---|

| Thyroglobulin | Beckman (Tg-B) vs. Diasorin (Tg-L) | 0.89 (overall) | Significant negative bias for Tg-L vs. Tg-B; moderate correlation (ρ=0.42) at Tg<2 ng/mL [32] |

| Thyroglobulin | Beckman (Tg-B) vs. Siemens (Tg-A) | 0.92 (overall) | No significant bias; agreement declined at concentrations >50 ng/mL [32] |

| Thyroid Stimulating Hormone | Multiple EQA participants | N/A | Achieved desirable harmonization [33] |

| Free Thyroxine (FT4) | Multiple EQA participants | N/A | Failed to reach minimum harmonization (HI: 1.1-1.9) [33] |

| Vitamin D | Six major platforms | R²: 0.9756-0.9994 | Significant numerical discrepancies despite high correlations [34] |

Harmonization Index (HI) values >1 indicate unsatisfactory harmonization. The finding that FT4 failed to reach minimum harmonization levels across platforms highlights the significant challenge in obtaining comparable results for thyroid function tests [33].
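A harmonization index of this kind can be sketched as a ratio of observed total error to a biological-variation-based limit, consistent with the HI formula given in the harmonization protocol later in this article. The sketch assumes the commonly used expressions TE = |bias| + 1.65 x CV for observed total error and 1.65 x 0.5 x CVi + 0.25 x sqrt(CVi^2 + CVg^2) for the desirable limit; whether the cited study used exactly these expressions is not confirmed by the text, and all numeric inputs below are hypothetical.

```python
import math


def desirable_tea(cv_within, cv_between):
    """Desirable allowable total error (%) from biological variation (assumed formula)."""
    return 1.65 * 0.5 * cv_within + 0.25 * math.sqrt(cv_within**2 + cv_between**2)


def total_error(bias_pct, cv_pct):
    """Observed total error (%) from laboratory bias and imprecision (assumed formula)."""
    return abs(bias_pct) + 1.65 * cv_pct


def harmonization_index(te_lab, tea_desirable):
    """HI = laboratory total error / desirable limit; values > 1 are unsatisfactory."""
    if tea_desirable <= 0:
        raise ValueError("tea_desirable must be positive")
    return te_lab / tea_desirable


# Hypothetical inputs: within-subject CV 5%, between-subject CV 8%,
# laboratory bias 2%, laboratory CV 3%.
limit = desirable_tea(cv_within=5.0, cv_between=8.0)
hi = harmonization_index(total_error(bias_pct=2.0, cv_pct=3.0), limit)
print(f"limit = {limit:.2f}%, HI = {hi:.2f}")
```

The same ratio can be formed against the minimum or optimal limits by swapping in the corresponding specification.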

Research Reagent Solutions and Essential Materials

Table 3: Key Research Reagents and Materials for Immunoassay Experiments

| Reagent/Material | Function | Application Example | Critical Considerations |

|---|---|---|---|

| CRM-457 international reference material | Standardization for thyroglobulin assays | Calibrating Tg measurements across platforms [32] | Essential for minimizing inter-assay variability |

| Bio-Rad quality control materials | Quality assurance and precision monitoring | Intra-assay variability assessment [32] [35] | Must cover clinically relevant concentration ranges |

| Manufacturer-specific calibrators | Platform-specific calibration | Routine calibration of Abbott, Roche, Siemens systems [34] | Not interchangeable between platforms |

| Luminol-based substrates | Signal generation in CLIA systems | Chemiluminescent detection in galactomannan (GM) and (1,3)-β-D-glucan (BDG) assays [36] | Batch-to-batch consistency affects signal stability |

| Monoclonal antibodies with defined epitope specificity | Antigen capture and detection | SARS-CoV-2 N protein vs. S protein antibody detection [31] | Epitope selection significantly impacts sensitivity |

| Serum/plasma matrix materials | Sample dilution and recovery studies | Linearity evaluation in islet autoantibody assays [35] | Must match patient sample matrix |

Experimental Protocols for Method Comparison and Validation

Protocol for Cross-Platform Method Comparison Studies

Objective: To evaluate the concordance between different immunoassay platforms for measuring specific hormones or biomarkers.

Materials:

- Residual serum samples (minimum n=100 recommended)

- Multiple immunoassay platforms (e.g., Abbott ARCHITECT, Roche Cobas, Siemens Atellica)

- Manufacturer-specific reagents and calibrators

- Quality control materials spanning assay measurement ranges

Procedure:

- Sample Selection and Preparation: Collect residual serum samples covering the clinically relevant range of the analyte. Exclude samples with hemolysis, icterus, or lipemia. Store aliquots at -80°C to maintain stability [32].

- Instrument Calibration: Calibrate all platforms according to manufacturer specifications using proprietary calibrators. Do not interchange calibrators between systems.

- Sample Analysis: Measure all samples in duplicate on each platform within a narrow time window to minimize pre-analytical variation.

- Quality Control: Run quality control materials at three concentrations (low, medium, high) at the beginning and end of each batch.

- Data Analysis:

- Perform correlation analysis using Spearman's coefficient (ρ)

- Assess agreement with Bland-Altman plots

- Calculate concordance rates for clinically relevant thresholds

- Evaluate bias using Passing-Bablok regression [35]

Troubleshooting Tip: When comparing methods, include samples with concentrations near clinical decision points, as agreement is often poorest at these critical levels [32].
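The Passing-Bablok step in the data analysis can be illustrated with a compact implementation. The sketch below returns point estimates only (shifted-median slope and median intercept); it omits the confidence intervals and the linearity test that a full analysis would report, and the function name and data are hypothetical. Validated statistical software is preferable for reported results.

```python
import math
from statistics import median


def passing_bablok(x, y):
    """Point estimates (slope, intercept) of Passing-Bablok regression of y on x."""
    if len(x) != len(y) or len(x) < 3:
        raise ValueError("x and y must have equal length of at least 3")
    slopes = []
    n = len(x)
    for i in range(n - 1):
        for j in range(i + 1, n):
            dx, dy = x[j] - x[i], y[j] - y[i]
            if dx == 0 and dy == 0:
                continue  # identical points carry no slope information
            if dx == 0:
                slopes.append(math.copysign(math.inf, dy))
                continue
            s = dy / dx
            if s == -1:
                continue  # slopes of exactly -1 are excluded by the method
            slopes.append(s)
    slopes.sort()
    n_slopes = len(slopes)
    k = sum(1 for s in slopes if s < -1)  # offset that shifts the median
    if n_slopes % 2:
        slope = slopes[(n_slopes - 1) // 2 + k]
    else:
        slope = 0.5 * (slopes[n_slopes // 2 - 1 + k] + slopes[n_slopes // 2 + k])
    intercept = median(yi - slope * xi for xi, yi in zip(x, y))
    return slope, intercept


# Hypothetical paired results: platform Y reads 1.2 * X + 0.5.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [1.7, 2.9, 4.1, 5.3, 6.5]
slope, intercept = passing_bablok(x, y)
print(f"slope = {slope:.3f}, intercept = {intercept:.3f}")
```

A slope different from 1 points to proportional bias and an intercept different from 0 to constant bias; whether either is statistically significant depends on the confidence intervals that this sketch does not compute.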

Protocol for Assessing Assay Precision and Linearity

Objective: To determine intra-assay precision and linearity of automated immunoassay platforms.

Materials:

- High-titer serum samples

- Assay diluent specified by manufacturer

- Automated immunoassay analyzer with precision software

Procedure:

- Precision Evaluation:

- Prepare two serum pools with analyte concentrations below and above the clinical cut-off

- Perform ten replicate measurements of each pool in a single run

- Calculate coefficients of variation (CVs) and compare with manufacturer claims [35]

- Linearity Assessment:

- Prepare serial dilutions (ranging from 1:10 to 10:1) of high-titer serum samples

- Analyze diluted samples in duplicate

- Compare measured values with expected values based on dilution factors

- Determine the assay's linear range and any observed hook effect [35]

Troubleshooting Tip: If the CV exceeds the acceptable limit (typically 15%), check reagent stability, pipette calibration, and environmental conditions.
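The precision calculation amounts to a coefficient of variation over the replicate measurements. A minimal sketch follows, using the sample standard deviation; the replicate values are hypothetical, and the 15% limit is the typical figure mentioned in the tip above.

```python
from statistics import mean, stdev


def cv_percent(replicates):
    """Coefficient of variation (%) = sample SD / mean * 100."""
    if len(replicates) < 2:
        raise ValueError("at least two replicates are required")
    m = mean(replicates)
    if m == 0:
        raise ValueError("mean of replicates is zero; CV is undefined")
    return stdev(replicates) / m * 100.0


# Hypothetical ten replicates of one serum pool measured in a single run.
pool = [4.8, 5.1, 5.0, 4.9, 5.2, 5.0, 4.7, 5.1, 5.0, 4.9]
cv = cv_percent(pool)
print(f"intra-assay CV = {cv:.2f}% ({'within' if cv <= 15.0 else 'outside'} the 15% limit)")
```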

Troubleshooting Guides for Common Experimental Challenges

FAQ 1: How should we manage platform switching during longitudinal studies?

Challenge: Changing immunoassay platforms in ongoing research studies introduces variability that compromises data comparability.

Solution:

- Conduct a method comparison study with at least 40 patient samples spanning the measurable range prior to platform transition [32]

- Establish individual patient baselines on both old and new platforms using split-sample testing

- Implement a crossover period where samples are run on both platforms simultaneously

- Apply mathematical recalibration using Passing-Bablok regression equations when direct comparison is necessary [35]

Preventive Measure: When designing longitudinal studies, secure commitments for consistent platform availability or budget for method comparison studies if platform transition becomes unavoidable.

FAQ 2: Why do we observe strong correlations but poor agreement between platforms?

Challenge: Platforms show high correlation (R² > 0.95) yet report significantly different numerical values for the same biomarker.

Root Cause: Correlation measures the strength of relationship between methods, not agreement. Constant or proportional biases cause numerical discrepancies despite strong correlations [34].

Diagnostic Steps:

- Perform Bland-Altman analysis to quantify average bias and limits of agreement

- Check for concentration-dependent bias using difference plots

- Verify calibration traceability to international reference materials

- Assess differences in antibody epitope specificity and assay design [32]

Solution: Establish platform-specific reference ranges and clinical decision limits. Do not use results from different platforms interchangeably without first establishing comparability.
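A minimal Bland-Altman calculation, assuming paired results from the same samples on two platforms, might look like the following. The function name and data are illustrative, and the 1.96 multiplier assumes the differences are approximately normally distributed.

```python
import statistics


def bland_altman(method_a, method_b):
    """Return (mean bias, lower limit, upper limit) of agreement.

    Differences are computed as method_a - method_b for each paired sample.
    """
    diffs = [a - b for a, b in zip(method_a, method_b)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Hypothetical paired results: the two platforms track each other closely,
# yet platform B reads consistently higher.
platform_a = [10.0, 20.0, 30.0, 40.0]
platform_b = [11.0, 23.0, 32.0, 46.0]
bias, lower, upper = bland_altman(platform_a, platform_b)
print(round(bias, 2), round(lower, 2), round(upper, 2))
```

Plotting each difference against the mean of the pair then shows whether the bias is constant or grows with concentration.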

FAQ 3: How can we minimize variability in low-concentration hormone measurements?

Challenge: Relative variability is typically highest at low analyte concentrations, near the functional sensitivity of the assay.

Optimization Strategies:

- Concentrate samples when possible (e.g., using centrifugal filters)

- Use assays with superior functional sensitivity (e.g., <0.1 ng/mL for thyroglobulin) [32]

- Increase replicate measurements for low-concentration samples

- Implement longer signal incubation times if platform protocols allow

- Verify that dilutional linearity extends to low concentrations

Quality Control: Include low-concentration quality control materials near clinical decision points in each run, with tighter acceptance criteria than manufacturer recommendations.
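The benefit of additional replicates can be estimated with a short calculation. The sketch assumes replicate errors are independent and random, so the CV of the mean of n replicates is the single-measurement CV divided by the square root of n; it does not address bias. The function names and example values are hypothetical.

```python
import math


def cv_of_mean(single_cv_pct, n_replicates):
    """CV (%) of the mean of n independent replicates."""
    return single_cv_pct / math.sqrt(n_replicates)


def replicates_needed(single_cv_pct, target_cv_pct):
    """Smallest number of replicates whose mean reaches the target CV."""
    return math.ceil((single_cv_pct / target_cv_pct) ** 2)


# Hypothetical low-concentration sample: 20% single-measurement CV,
# 10% target CV for the reported mean.
print(replicates_needed(20.0, 10.0), cv_of_mean(20.0, 4))
```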

Advanced Harmonization Assessment Protocol

Objective: To quantitatively assess harmonization status across multiple testing systems using external quality assessment (EQA) data.

Materials:

- EQA data from at least 3 consecutive cycles

- Biological variation-based quality specifications

- Statistical software (R, SPSS, or MedCalc)

Procedure:

- Data Collection: Gather EQA data for the analyte of interest across multiple cycles [33]

- Calculate Total Allowable Error (TEa): Compute TEa for your laboratory (TEa-Lab) and peer groups (TEa-peer) using bias and coefficient of variation data

- Determine Bias: Calculate average bias from the reference method or peer group mean

- Compute Harmonization Index (HI): Compare TEa values against biological variation thresholds (minimum, desirable, optimal) using the formula: HI = TEa-lab / TEa-desirable [33]

- Interpretation: HI values ≤1 indicate satisfactory harmonization, while HI>1 requires corrective action
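The procedure above can be sketched as follows. The text does not state how the laboratory's total error is derived from bias and CV, so the common linear model |bias| + 1.65 x CV is used here as an assumption; the function names and all numeric values are illustrative only.

```python
def total_error(bias_pct, cv_pct, z=1.65):
    """Laboratory total error (%) under the assumed model |bias| + z * CV."""
    return abs(bias_pct) + z * cv_pct


def harmonization_index(te_lab_pct, te_desirable_pct):
    """HI = TEa-lab / TEa-desirable, as defined in the procedure."""
    return te_lab_pct / te_desirable_pct


def needs_corrective_action(hi):
    """HI values above 1 call for corrective action."""
    return hi > 1.0


# Hypothetical EQA summary: 3% average bias, 4% CV, 12% desirable limit.
te_lab = total_error(bias_pct=3.0, cv_pct=4.0)
hi = harmonization_index(te_lab, te_desirable_pct=12.0)
print(round(te_lab, 2), round(hi, 2), needs_corrective_action(hi))
```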

Corrective Actions for HI>1:

- Review calibration traceability

- Implement additional quality control measures

- Optimize reagent lot validation procedures

- Participate in additional EQA programs with commutable materials

The evolution from CLIA to ECLIA and CMIA platforms has delivered substantial improvements in automation, throughput, and analytical sensitivity. However, as demonstrated by comparative studies across multiple biomarkers, significant inter-platform variability persists despite technological advancements. This variability presents substantial challenges for multi-center research studies and longitudinal monitoring of hormonal biomarkers.

A strategic approach to minimizing analytical variability must include rigorous method validation before platform implementation, continuous monitoring through EQA programs, and systematic troubleshooting when harmonization issues arise. Researchers should prioritize platform consistency throughout study durations and establish protocol-based approaches for managing necessary platform transitions. Furthermore, the reporting of research findings should explicitly state the analytical platforms and reagent lots used, enabling proper interpretation of results and facilitating future meta-analyses.

By implementing the troubleshooting guides, experimental protocols, and harmonization strategies outlined in this technical support document, researchers can significantly enhance the reliability and comparability of hormone immunoassay data, thereby strengthening the scientific validity of their research outcomes.

The Role of High-Specificity Antibodies and Improved Calibrators

Understanding and Troubleshooting Common Immunoassay Issues

This section addresses frequent challenges encountered during hormone immunoassay experiments and provides evidence-based solutions to minimize analytical variability.

FAQ: My immunoassay shows inconsistent absorbances across the plate. What could be the cause?

Inconsistent absorbances usually stem from technical or procedural errors rather than reagent failure. The table below summarizes common causes and solutions. [37]

Table: Troubleshooting Inconsistent Absorbance in Immunoassays

| Issue | Impact on Results | Corrective Action |
|---|---|---|
| Plate stacking during incubation | Uneven temperature distribution across wells | Avoid stacking plates; ensure plates are incubated singly on a flat surface |
| Inconsistent pipetting | Variable liquid volumes between wells/replicates | Calibrate pipettes regularly; ensure proper tip sealing; avoid reusing tips |
| Inadequate reagent mixing | Non-uniform antibody/analyte concentration | Mix all reagents and samples thoroughly; equilibrate to room temperature before use |
| Wells drying out | Disruption of the solid-phase antibody matrix | Do not leave plates unattended for prolonged periods after washing |
| Insufficient washing | Variable amounts of unbound antibody remain | Follow washing protocol meticulously; ensure washer nozzles are not clogged |

FAQ: I observe very weak or no color development. What steps should I take?

Weak color development indicates a failure in the signal-generation phase of the assay. A systematic check of reagents and conditions is required. [37] [38]

Table: Troubleshooting Weak or No Color Development

| Category | Possible Cause | Solution |
|---|---|---|
| Reagent Conditions | Reagents not at room temperature | Allow all reagents to warm to ~25°C before starting the assay |
| Reagent Conditions | Incorrect storage of components | Store all kit components as directed (often 2-8°C); check expiration dates |
| Reagent Conditions | Incompatible buffer (e.g., contains sodium azide) | Avoid azide, which inhibits Horseradish Peroxidase (HRP) activity |
| Assay Execution | Incorrect incubation time/temperature | Adhere strictly to protocol-specified incubation times and temperatures |
| Assay Execution | Contaminated or inactivated TMB substrate | Dispense TMB into a clean, disposable trough; discard if it appears blue prematurely |
| Assay Execution | Plate read at incorrect wavelength | Read TMB substrate at 450 nm |
| Sample & Matrix | Analyte measurement in a non-optimized matrix (e.g., plasma vs. serum) | Use only the sample matrices validated for the assay |

Immunoassay interference remains a significant source of analytical variability. Major interferents can be exogenous or endogenous, leading to both false-positive and false-negative results. [3]

Diagram: A logical workflow for investigating suspected immunoassay interference.

Table: Common Immunoassay Interferents and Mitigation Strategies

| Interferent | Mechanism | Primarily Affects | Detection/Mitigation Strategies |
|---|---|---|---|
| Cross-reactivity | Structurally similar molecules (metabolites, drugs) are recognized by the antibody. [3] | Competitive immunoassays (e.g., for steroids, T3, T4). [3] | Use a more specific assay (e.g., LC-MS/MS); check for known cross-reactants in patient history. [39] [40] |
| Heterophile Antibodies | Endogenous human antibodies that bind assay antibodies, causing false signals. [3] | Both competitive and sandwich assays. | Use blocking reagent tubes; re-analyze with a different platform/assay design; sample pre-treatment. [3] |
| Biotin | High circulating biotin interferes with biotin-streptavidin separation. [3] | Assays using biotin-streptavidin technology. | Check patient biotin intake; re-test after sufficient biotin washout period. |
| Rheumatoid Factor (RF) | An IgM antibody that can bridge capture and detection antibodies. [3] | Sandwich immunoassays. | Similar mitigation strategies as for heterophile antibodies. |
| High-Dose Hook Effect | Extremely high analyte levels saturate antibodies, leading to falsely low results. [3] | Sandwich immunoassays (e.g., for prolactin, TSH). | Re-test the sample at multiple dilutions; modern assays often have a high hook threshold. |

Experimental Protocols for Validation and Harmonization

Protocol: Harmonization Assessment Using External Quality Assessment (EQA) Data

Objective: To quantitatively evaluate the harmonization level of a thyroid hormone testing system against peer groups and biological variation-based quality standards. [33]

Methodology: [33]

- Data Collection: Gather EQA data for target hormones (e.g., T3, T4, FT3, FT4, TSH) over a defined period.

- Calculate Total Allowable Error (TEa): For your lab (TEa-Lab) and peer groups (TEa-peer), calculate TEa using the formula based on bias and coefficient of variation (CV) data.

- Determine Allowable Limits: Define three performance limits based on biological variation: optimal, desirable, and minimum.

- Calculate Harmonization Index (HI): Derive the HI by comparing the TEa values against the biological variation thresholds:

HI = TEa / Allowable Limit

- Interpretation: An HI value ≤ 1 indicates satisfactory harmonization. Values > 1 signify a failure to meet the respective quality level.

Application: This protocol allows labs to identify specific assays requiring improvement. For example, one study found TSH achieved desirable harmonization, while T3, T4, FT3, and FT4 failed to reach the minimum level (HI = 1.1-1.9). [33]
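The three allowable limits in the methodology can be derived from within-subject (CVi) and between-subject (CVg) biological variation. The sketch below assumes the widely used tiered model in which allowable imprecision is 0.25, 0.50, or 0.75 of CVi and allowable bias is 0.125, 0.250, or 0.375 of the combined biological variation; the cited study may have used different specifications. Function names and input values are hypothetical and do not represent any real analyte.

```python
import math

# (imprecision factor, bias factor) for each quality level (assumed model).
FACTORS = {
    "optimal": (0.25, 0.125),
    "desirable": (0.50, 0.250),
    "minimum": (0.75, 0.375),
}


def allowable_limits(cv_within, cv_between, z=1.65):
    """Allowable total error (%) at each quality level."""
    combined = math.hypot(cv_within, cv_between)  # sqrt(CVi^2 + CVg^2)
    return {
        level: z * f_cv * cv_within + f_bias * combined
        for level, (f_cv, f_bias) in FACTORS.items()
    }


def achieved_level(te_pct, cv_within, cv_between):
    """Strictest level with HI = TE / limit <= 1, or None if none is met."""
    limits = allowable_limits(cv_within, cv_between)
    for level in ("optimal", "desirable", "minimum"):
        if te_pct / limits[level] <= 1.0:
            return level
    return None


# Hypothetical biological variation: CVi = 6%, CVg = 8%.
print(allowable_limits(6.0, 8.0))
print(achieved_level(7.0, 6.0, 8.0))
```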

Protocol: Validating Immunoassay Results Using Mass Spectrometry

Objective: To confirm the accuracy of immunoassay results, especially for low-concentration analytes or when interference is suspected.

- Sample Selection: Identify samples with immunoassay results that are clinically discordant or suspicious.

- Sample Preparation: Perform liquid-liquid or solid-phase extraction to isolate steroids from the serum matrix. Derivatization may be applied to enhance sensitivity.

- Liquid Chromatography (LC): Separate the extracted steroids using a high-performance LC system with a C18 column. This resolves structurally similar steroids that immunoassays cannot distinguish.

- Tandem Mass Spectrometry (MS/MS):

- Ionization: The eluted steroids are ionized, typically using Electrospray Ionization (ESI).

- First Mass Filter (Q1): Selects the precursor ion (parent mass) of the target steroid.

- Collision Cell (Q2): Fragments the precursor ion into specific product ions using an inert gas.

- Second Mass Filter (Q3): Selects a specific product ion unique to the target steroid.

- Quantification: The intensity of the specific product ion signal is measured against a calibration curve made from certified reference materials, providing a highly specific and accurate concentration.

Application: This protocol is critical for measuring low-level estradiol in postmenopausal women, diagnosing pediatric endocrine disorders, and accurately quantifying testosterone for PCOS diagnosis, where immunoassay precision is often insufficient. [39] [40]
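The quantification step of this protocol can be sketched as a calibration-curve calculation. The example assumes that a stable isotope-labeled internal standard is used, so the response is the analyte-to-internal-standard peak-area ratio, and that an unweighted linear fit is adequate. Routine methods often apply weighted regression, which is not shown here. All names and values are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def quantify(analyte_area, internal_std_area, slope, intercept):
    """Convert a peak-area ratio into a concentration via the curve."""
    ratio = analyte_area / internal_std_area
    return (ratio - intercept) / slope


# Hypothetical calibrators: concentration vs. analyte/IS area ratio.
calibrator_conc = [1.0, 2.0, 4.0, 8.0]
calibrator_ratio = [0.1, 0.2, 0.4, 0.8]
slope, intercept = fit_line(calibrator_conc, calibrator_ratio)

# Hypothetical patient sample: product-ion peak areas for analyte and IS.
print(round(quantify(500.0, 1000.0, slope, intercept), 3))
```

Using the area ratio rather than the raw analyte signal is what lets the internal standard compensate for extraction losses and ion suppression, because both species are affected in the same way.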

The Scientist's Toolkit: Key Reagent Solutions

The reliability of hormone immunoassays is fundamentally dependent on the quality and specificity of core reagents.

Table: Essential Research Reagents for High-Quality Hormone Immunoassays

| Reagent / Material | Critical Function | Impact on Assay Performance & Variability |
|---|---|---|
| High-Specificity Monoclonal Antibodies (mAbs) | Engineered to bind a single, unique epitope on the target hormone with high affinity. [41] | Reduces cross-reactivity with metabolites and structurally similar hormones, directly improving assay specificity and accuracy. [3] |
| Matrix-Matched Calibrators | Calibrators prepared in a synthetic matrix that closely mimics the patient sample (e.g., serum). | Minimizes matrix effects, ensuring the antibody-binding kinetics in calibrators and patient samples are equivalent, leading to more accurate quantification. |
| Reference Standards | Highly purified hormones with concentrations traceable to international reference materials (e.g., from NIST or CDC). [39] | Enables assay standardization and harmonization across different laboratories and platforms, allowing for comparable results. [33] |
| Stable Isotope-Labeled Internal Standards (for LC-MS/MS) | A known quantity of the target hormone with some atoms replaced by heavy isotopes (e.g., ¹³C, ²H). | Compensates for sample-specific losses and ion suppression during sample preparation and MS analysis, vastly improving precision and accuracy in mass spectrometry. [40] |
| Blocking Reagents | A mixture of non-specific antibodies (e.g., animal serums) or proprietary proteins. | Neutralizes heterophile antibodies and other interfering substances in patient samples, reducing a major source of false results. [3] |

Harmonization of Thyroid Hormone Testing Systems

Data derived from EQA programs provides a quantitative measure of how well different laboratories and methods agree.

Table: Harmonization Assessment of Thyroid Hormone Assays Based on EQA Data [33]