Parallelism Recovery Assay Validation in Hormone Measurement: Principles, Methods, and Best Practices for Robust Bioanalytical Data


Abstract

This article provides a comprehensive guide to parallelism recovery assay validation, a critical process for ensuring the accuracy and reliability of hormone measurements in biological matrices. Tailored for researchers and drug development professionals, it covers the foundational principles of assay validation, detailed methodological workflows, advanced troubleshooting strategies for common pitfalls, and robust frameworks for comparative analysis and final assay acceptance. By synthesizing current research and best practices, this resource aims to equip scientists with the knowledge to generate high-quality, clinically meaningful hormone data, ultimately supporting robust diagnostic and therapeutic development.

Core Principles: Understanding Parallelism and Recovery in Hormone Assay Validation

In the rigorous world of bioanalysis, particularly for hormone measurement, the validity of experimental data hinges on the demonstration of two critical methodological pillars: parallelism and recovery. These validation parameters are not mere formalities; they provide objective evidence that an immunoassay accurately measures the intended analyte in a complex biological matrix, such as serum, saliva, or urine. For researchers and drug development professionals, a failure to adequately assess parallelism and recovery can lead to systematically inaccurate results, jeopardizing scientific conclusions and clinical decision-making. This guide delves into the definitions, experimental protocols, and acceptance criteria for these foundational concepts, providing a framework for robust assay validation within hormone research.

Core Concepts: Parallelism and Recovery

Parallelism and spike-and-recovery are distinct but related validation parameters that probe different aspects of assay performance. The table below summarizes their key characteristics.

Table 1: Fundamental Characteristics of Parallelism and Recovery

| Parameter | Definition | Primary Question | Sample Type Used |
|---|---|---|---|
| Parallelism | Assesses the similarity of immunoreactivity between the endogenous analyte in a sample and the standard/calibrator analyte [1] [2]. | Does the real sample, with its endogenous analyte, behave in the same way as the purified standard in the assay? [1] | Samples with high levels of the endogenous analyte of interest. |
| Recovery | Determines the ability to accurately measure a known quantity of analyte spiked into the sample matrix [1] [2]. | Can the assay accurately detect an analyte added to the complex sample matrix, or does the matrix interfere? [1] | Sample matrix spiked with a known concentration of the standard analyte. |

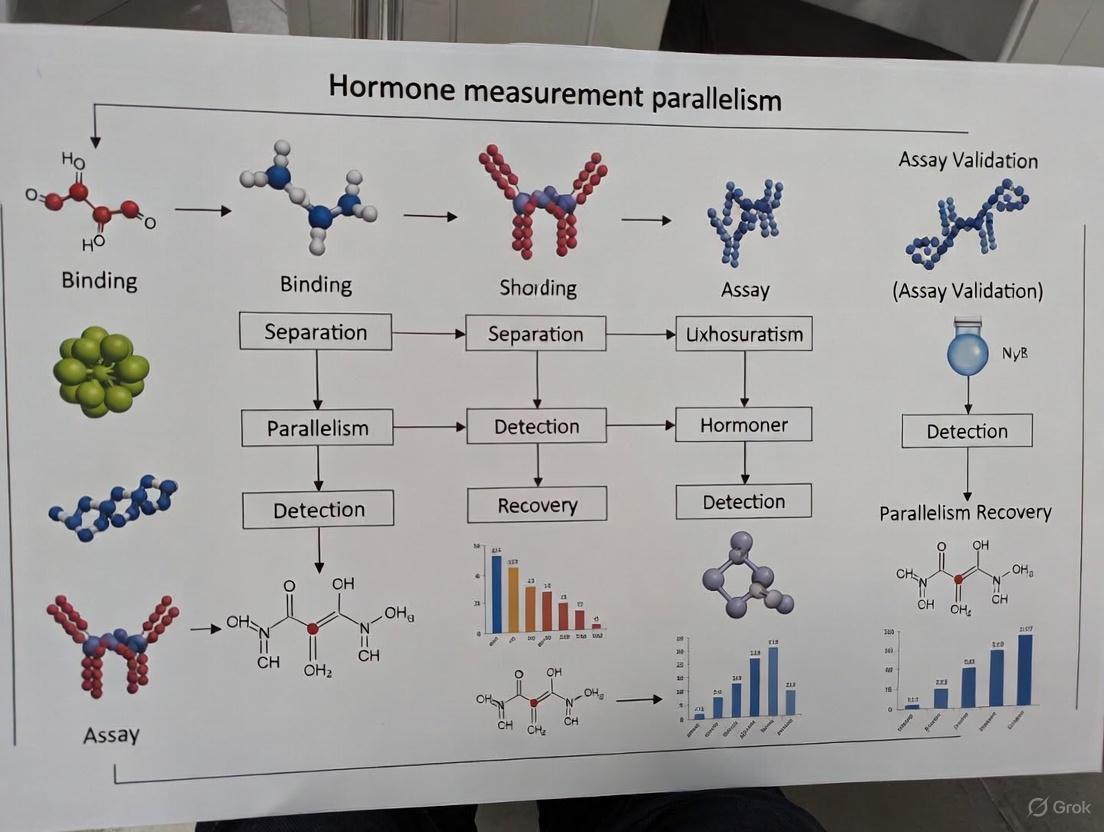

The following diagram illustrates the logical relationship and purpose of these two validation pillars in ensuring assay accuracy.

Experimental Protocols and Data Interpretation

A clear, step-by-step methodology is essential for reliably evaluating parallelism and recovery. The protocols below outline the general principles for conducting these experiments [1].

Protocol for Parallelism Testing

- Sample Identification: Identify at least three independent samples that contain high concentrations of the endogenous analyte. The undiluted concentration should sit high in the assay's measurable range without exceeding the upper limit of quantification [1].

- Serial Dilution: Perform a series of dilutions (e.g., 1:2 serial dilutions) of each sample using the appropriate sample diluent. Continue diluting until the predicted concentration falls below the assay's lower limit of quantification [1].

- Assay and Calculation: Analyze the neat and diluted samples in the assay. Calculate the observed concentration for each dilution, then multiply by the dilution factor to obtain the "back-calculated" concentration [1].

- Data Analysis: Determine the mean concentration from all dilutions that fell within the working range of the standard curve. Calculate the percentage coefficient of variation (%CV) across these back-calculated concentrations [1].

Table 2: Interpretation of Parallelism Results

| Observation | Interpretation | Recommended Action |
|---|---|---|
| %CV at or below the user-defined acceptance threshold (commonly set at 20-30%) [1] | Successful parallelism. Indicates comparable immunoreactivity between the endogenous analyte and the standard. | Assay is suitable for the sample type. |
| %CV above the acceptance threshold | Loss of parallelism. Suggests significant difference in immunoreactivity, potentially due to post-translational modifications, matrix effects, or interfering substances [1]. | Investigate sample composition; may require assay optimization or sample pre-treatment. |
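The calculation described in the parallelism protocol can be sketched in a few lines of Python. This is a minimal illustration, not a validated implementation: the readings, the quantification limits, and the 20% threshold are hypothetical values chosen for the example, and `parallelism_cv` is a helper name introduced here.

```python
from statistics import mean, stdev

def parallelism_cv(observed, dilution_factors, lloq, uloq):
    """Return (back-calculated values, their mean, %CV) for in-range dilutions."""
    back_calc = [
        obs * factor
        for obs, factor in zip(observed, dilution_factors)
        if lloq <= obs <= uloq  # keep only readings inside the working range
    ]
    if len(back_calc) < 2:
        raise ValueError("need at least two in-range dilutions to compute a CV")
    avg = mean(back_calc)
    cv = stdev(back_calc) / avg * 100
    return back_calc, avg, cv

# Hypothetical readings (ng/mL) for one sample run neat and at 1:2, 1:4, 1:8, 1:16
observed = [80.0, 41.0, 19.5, 10.4, 0.4]
factors = [1, 2, 4, 8, 16]

values, avg, cv = parallelism_cv(observed, factors, lloq=1.0, uloq=100.0)
passes = cv <= 20.0  # user-defined threshold, assumed to be 20% here
print(f"mean = {avg:.1f} ng/mL, %CV = {cv:.1f}, parallelism acceptable: {passes}")
```

The 1:16 reading falls below the assumed lower limit of quantification, so it is excluded before the mean and %CV are computed, mirroring the Data Analysis step.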

Protocol for Spike-and-Recovery Testing

- Spiking: Introduce a known quantity of the standard analyte into the sample matrix of interest. The spike should result in a concentration within the standard curve's range. Perform the same spiking procedure into the standard diluent (the assay's buffer matrix) [1].

- Assay and Calculation: Run both the spiked sample matrix and the spiked standard diluent in the assay to obtain observed concentrations.

- % Recovery Calculation: Calculate the percent recovery using the formula: % Recovery = (Observed Concentration in Spiked Matrix / Observed Concentration in Spiked Diluent) × 100% [1].

Table 3: Interpretation of Spike-and-Recovery Results

| Observation | Interpretation | Recommended Action |
|---|---|---|
| Recovery ~100% (typically 80-120% is acceptable) [1] | Ideal recovery. Suggests minimal matrix interference and high confidence in assay compatibility. | No action needed; assay performs well with the matrix. |
| Recovery outside 80-120% range [1] | Significant matrix interference. Components in the sample are inhibiting or enhancing the assay signal. | Optimize sample dilution factor, use an alternative diluent, or pre-treat samples to remove interferents. |
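As a worked illustration of the recovery formula and the 80-120% window, the short Python sketch below computes percent recovery for one spiked pair. The concentrations are hypothetical, and the function names are introduced only for this example.

```python
def percent_recovery(observed_matrix, observed_diluent):
    """Percent recovery of a spike read in sample matrix relative to diluent."""
    if observed_diluent <= 0:
        raise ValueError("spiked diluent reading must be positive")
    return 100 * observed_matrix / observed_diluent

def recovery_acceptable(recovery, low=80.0, high=120.0):
    """Apply the 80-120% acceptance window; adjust the limits if yours differ."""
    return low <= recovery <= high

# Hypothetical readings (ng/mL) for the same spike in matrix and in diluent
recovery = percent_recovery(observed_matrix=46.0, observed_diluent=50.0)
print(f"recovery = {recovery:.1f}%, acceptable: {recovery_acceptable(recovery)}")
```

This sketch assumes the unspiked matrix contributes negligible endogenous analyte, which is why Table 4 recommends matrices with low or known endogenous levels for recovery studies.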

The following workflow diagram maps the experimental process from sample preparation to data interpretation for both validation types.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful validation requires careful selection of reagents and materials. The following table details key components used in parallelism and recovery experiments.

Table 4: Essential Research Reagent Solutions for Validation Experiments

| Item | Function in Validation | Key Considerations |
|---|---|---|
| Sample Matrix | The biological fluid (e.g., serum, plasma, urine, saliva) being validated for the assay [1] [3]. | Source, collection method, and storage conditions can significantly impact matrix effects. Use matrices with low or known endogenous analyte levels for recovery studies [1]. |
| Standard/Calibrator Analyte | The highly purified reference material used to create the standard curve and for spiking in recovery experiments [1]. | Purity and integrity are critical. The source (recombinant vs. natural) should be considered, as it can affect antibody binding affinity compared to the endogenous analyte [1]. |
| Sample Diluent | The buffer solution used to dilute samples for parallelism and to prepare spiked standards for recovery [1]. | Must be optimized to closely mimic the sample matrix and minimize interference; a poor choice can cause non-parallelism or poor recovery [1]. |
| Immunoassay Kit | The core components, including plates, capture/detection antibodies, and detection reagents specific to the analyte [1]. | Antibody pairs must be specific and have high affinity for the analyte. The epitopes they recognize are a major factor in determining parallelism [1] [4]. |
| Quality Control (QC) Samples | Samples with known concentrations used to monitor assay performance during the validation runs [2] [5]. | Should be run in parallel to ensure the assay itself is performing within established precision and accuracy parameters during the critical validation experiment. |

Application in Hormone Measurement Research

The principles of parallelism and recovery are acutely relevant in fields like reproductive endocrinology and clinical diagnostics, where measuring hormones in alternative matrices is increasingly common.

- Salivary and Urinary Hormones: A scoping review highlighted the complexities and inconsistencies in methodologies for detecting salivary estradiol and progesterone, and urinary luteinizing hormone (LH). The review noted a general scarcity of reported validity and precision measures, making study comparisons challenging and underscoring the need for rigorous validation like parallelism testing in these matrices [3].

- Validation of At-Home Monitors: A 2025 study validating the quantitative Mira fertility monitor against the established ClearBlue Fertility Monitor (CBFM) for urinary hormones in postpartum and perimenopausal women is a practical example. The study demonstrated strong agreement between the two methods for detecting the LH surge, which indirectly supports, although it does not directly test, the parallel behavior of the analyte detected by both systems [6].

- Assay Standardization Challenges: The lack of standardization in parathyroid hormone (PTH) immunoassays is a classic example of the consequences of differing antibody specificities. Successive "generations" of PTH assays detect different fragments of the hormone with varying cross-reactivity, leading to poor inter-method comparability [4]. This directly impacts both parallelism (if a standard differs from endogenous hormone fragments) and recovery, complicating clinical decision-making in chronic kidney disease [4].

For researchers and scientists dedicated to generating reliable and meaningful data, a thorough understanding and implementation of parallelism and recovery tests are non-negotiable. These pillars of assay validation provide the foundational evidence that an immunoassay is not only sensitive and precise but also specific and accurate for its intended biological sample. As the field moves towards more complex biomarkers and novel sample matrices, adhering to these rigorous validation principles will be paramount for advancing scientific discovery and ensuring the efficacy and safety of drug development.

The accurate measurement of hormone concentrations represents a cornerstone of both drug development and clinical diagnostics, forming a critical bridge between biomedical research and patient care. In the complex journey from laboratory discovery to therapeutic application, the reliability of hormone data directly impacts decision-making at every stage. Hormone assays provide essential biomarkers for understanding disease mechanisms, evaluating drug efficacy and safety, and establishing diagnostic criteria. However, the path to obtaining valid, reproducible hormone data is fraught with methodological challenges that can compromise data integrity and subsequent clinical interpretations [7].

The process of technology development in medicine follows a complex, non-linear pathway influenced by both scientific capabilities and market forces. This development continuum encompasses pharmaceuticals, medical devices, and clinical procedures, each with distinct yet overlapping evaluation requirements [8]. Within this ecosystem, hormone measurement serves as a critical tool for generating the clinical evidence necessary for regulatory approvals and treatment guidelines. The transition from preclinical research to clinical application demands rigorous validation of analytical methods to ensure their reliability for human subject testing and eventual clinical implementation [9]. This article examines the critical role of hormone measurement across this spectrum, with particular focus on assay validation methodologies that underpin data credibility in both research and diagnostic contexts.

Hormone Assay Methodologies: A Comparative Landscape

Dominant Analytical Platforms

The current landscape of hormone testing is dominated by two principal methodological approaches: immunoassays and liquid chromatography-tandem mass spectrometry (LC-MS/MS). Each platform offers distinct advantages and limitations that must be carefully considered based on application requirements [7].

Immunoassays, including enzyme-linked immunosorbent assays (ELISAs), employ antibody-antigen interactions to detect and quantify hormones. These methods are widely used in clinical and research settings due to their relatively low cost, high throughput capacity, and technical accessibility. However, immunoassays suffer from significant limitations, particularly concerning specificity. The structural similarity among steroid hormones frequently leads to antibody cross-reactivity, resulting in overestimation of target analyte concentrations. For example, dehydroepiandrosterone sulfate (DHEAS) demonstrates substantial cross-reactivity in many testosterone immunoassays, disproportionately affecting results in female patients where testosterone levels are naturally lower [7]. Additional matrix effects, particularly from binding proteins like sex hormone-binding globulin (SHBG) and corticosteroid-binding globulin (CBG), further compromise accuracy, especially in patient populations with altered binding protein concentrations such as pregnant women, oral contraceptive users, and critically ill patients [7].

Liquid chromatography-tandem mass spectrometry (LC-MS/MS) has emerged as a superior alternative for steroid hormone quantification, offering enhanced specificity, sensitivity, and multiplexing capabilities. This technique physically separates analytes chromatographically before mass-based detection, virtually eliminating cross-reactivity concerns. LC-MS/MS simultaneously measures multiple analytes in a single run while requiring smaller sample volumes—particularly advantageous for pediatric studies or small animal research [7]. Despite these advantages, LC-MS/MS is not infallible; significant interlaboratory variability has been documented even with this advanced methodology. A comparative study analyzing serum samples from women with polycystic ovary syndrome revealed poor correlation between testosterone measurements from different reference laboratories using LC-MS/MS, highlighting the importance of methodological rigor and standardization regardless of platform [7].

Comparative Method Performance

Table 1: Comparison of Major Hormone Assay Methodologies

| Parameter | Immunoassays | LC-MS/MS |
|---|---|---|
| Specificity | Moderate to low (cross-reactivity concerns, especially for steroids) | High (physical separation before detection) |
| Sensitivity | Variable; often insufficient for low hormone concentrations | Excellent, particularly for steroid hormones |
| Throughput | High (automated platforms available) | Moderate (increasing with automation) |
| Multiplexing Capability | Limited (typically single analyte) | Excellent (multiple hormones in single run) |
| Sample Volume | Generally low to moderate | Low (especially important for pediatric/small animal studies) |
| Equipment Cost | Moderate | High |
| Technical Expertise | Moderate | High |
| Susceptibility to Matrix Effects | High (affected by binding proteins) | Low |
| Standardization | Variable between kits and manufacturers | Improving with reference methods |

For peptide hormones, immunoassays remain the predominant methodology, though LC-MS/MS applications are rapidly expanding. The larger molecular size of peptides facilitates immunometric (sandwich) assay formats that generally demonstrate better specificity than competitive immunoassays used for steroids. However, novel challenges are emerging as LC-MS/MS methods identify previously unrecognized protein variants. For instance, the IGF1 variant A70T-IGF1, present in approximately 0.6% of the population, is detected by standard immunoassays but leads to falsely low concentrations when measured by certain LC-MS/MS methods [7]. Such discrepancies underscore the complex interplay between methodological choice and biological variability.

Assay Validation: The Bedrock of Data Credibility

Core Validation Parameters

The transition from research assay to clinically applicable method requires rigorous validation to ensure data reliability. Several key parameters must be established during validation, each addressing specific aspects of analytical performance [7] [10].

Parallelism assesses whether diluted samples behave comparably to the standard curve, confirming that the assay accurately measures the endogenous substance despite matrix differences. This is typically evaluated by serially diluting a sample with high analyte concentration and checking whether the measured values decrease in proportion to the dilution. Lack of parallelism indicates that matrix interference, or a difference in immunoreactivity between the endogenous analyte and the standard, is compromising assay accuracy [10].

Recovery experiments evaluate accuracy by spiking known quantities of the pure analyte into sample matrix and measuring the percentage recovered. This identifies matrix effects that may enhance or suppress the analytical signal. Acceptable recovery (typically 85-115%) confirms the assay's accuracy within that specific matrix [10].

Precision encompasses both within-run (intra-assay) and between-run (inter-assay) variability, determining measurement reproducibility. Precision is usually expressed as coefficient of variation (CV%), with lower values indicating better reproducibility. Regulatory frameworks such as the Clinical Laboratory Improvement Amendments (CLIA) establish precision requirements for clinical assays [11].
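To make the distinction between intra-assay and inter-assay precision concrete, the following Python sketch computes both from replicate measurements of one quality control sample across several runs. It is one common convention, stated here as an assumption: intra-assay CV is taken as the mean of the per-run CVs, and inter-assay CV as the CV of the run means. The data are hypothetical.

```python
from statistics import mean, stdev

def cv_percent(values):
    """Coefficient of variation, expressed as a percentage."""
    return stdev(values) / mean(values) * 100

def intra_assay_cv(runs):
    """Average of the per-run %CVs of replicate measurements."""
    return mean([cv_percent(replicates) for replicates in runs])

def inter_assay_cv(runs):
    """%CV of the run means, reflecting between-run variability."""
    return cv_percent([mean(replicates) for replicates in runs])

# Hypothetical QC readings: three runs, three replicates each
runs = [
    [10.1, 9.9, 10.0],
    [10.6, 10.4, 10.5],
    [9.6, 9.4, 9.5],
]
intra = intra_assay_cv(runs)
inter = inter_assay_cv(runs)
print(f"intra-assay CV = {intra:.2f}%, inter-assay CV = {inter:.2f}%")
```

In this example the replicates within each run agree closely while the run means drift, so the inter-assay CV is several times larger than the intra-assay CV.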

Selectivity confirms that the assay specifically measures the intended analyte without interference from structurally similar compounds or matrix components. For immunoassays, this primarily involves evaluating cross-reactivity with known related compounds [7].

Table 2: Key Assay Validation Parameters and Methodologies

| Validation Parameter | Experimental Approach | Acceptance Criteria | Purpose |
|---|---|---|---|
| Parallelism | Serial dilution of high-concentration sample | Linear response proportional to dilution | Confirms accurate measurement in sample matrix |
| Recovery | Spike known analyte amounts into matrix | 85-115% recovery | Identifies matrix effects on accuracy |
| Precision | Repeated measurements of quality control samples | CV% <15% (varies by analyte) | Determines measurement reproducibility |
| Selectivity/Specificity | Cross-reactivity testing with related compounds | <1% cross-reactivity with major metabolites | Ensures measurement of intended analyte only |
| Sensitivity | Repeated measurement of zero standard | Signal significantly different from blank | Determines lowest reliably measurable concentration |
| Matrix Effects | Compare measurements in different matrices | Consistent recovery across matrices | Identifies matrix-specific interference |

Method Verification and Standardization

Simply purchasing commercial assay kits does not guarantee valid results. Each laboratory must perform on-site verification to confirm that published performance claims are achievable in their specific environment with their personnel. This verification should address precision, accuracy, reportable range, and reference intervals [7]. The Centers for Disease Control and Prevention (CDC) Hormone Standardization Program (HoSt) provides a robust framework for improving and certifying analytical performance for testosterone and estradiol measurements. The program includes two phases: Phase 1 focuses on assessment and improvement using samples with reference value assignments, while Phase 2 involves quarterly challenges with blinded samples to verify performance against strict criteria [11].

The CDC HoSt program establishes rigorous performance targets based on biological variability. For testosterone, the current certification requires mean bias within ±6.4% and precision better than 5.3% CV. For estradiol, acceptable bias is within ±12.5% for concentrations >20 pg/mL or ±2.5 pg/mL for concentrations ≤20 pg/mL, with precision better than 11.4% CV [11]. These standardization efforts are critical for ensuring consistency across laboratories and longitudinal studies.

Experimental Protocols: Methodologies in Practice

Sample Preparation and Extraction

Proper sample handling is foundational to reliable hormone measurement. Keratin-based samples (fur, claws) require meticulous cleaning, drying, and pulverization before methanol extraction [10]. For blood samples, consideration of binding protein concentrations is essential, particularly when using direct immunoassays without extraction steps. Conditions affecting binding protein levels (pregnancy, oral contraceptive use, critical illness) may necessitate methodological adjustments to maintain accuracy [7].

The validation of novel sample matrices represents an important advancement in non-invasive monitoring. In wildlife endocrinology, researchers have successfully validated progesterone measurements in American marten claws using ELISA kits, establishing correlation with reproductive tract tissues. This approach enables longitudinal monitoring of reproductive status without sacrificing animals, demonstrating the potential for minimally invasive sampling in research and clinical contexts [10].

Quality Control Practices

Robust quality control systems are essential for generating reliable data. Internal quality controls (IQCs) should span the assay's reportable range and include independent materials from different sources than the calibration standards. These controls must be included in every run to monitor assay performance over time [7]. For research laboratories, implementing procedures based on ISO 15189 (the international standard for medical laboratory quality and competence) significantly enhances data credibility, even when the laboratory itself is not formally accredited [7].

Method Comparison Studies

When implementing new methodologies or comparing assay performance, appropriate experimental design is critical. The Clinical and Laboratory Standards Institute (CLSI) EP9-A2 guideline "Method Comparison and Bias Estimation Using Patient Samples" provides a standardized approach for evaluating measurement procedures [11]. These studies should include samples that span the clinically relevant range and represent the intended patient population, so that method performance is evaluated across the relevant concentrations and matrix types.
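The guideline's own procedures are not reproduced here. As a hedged illustration of bias estimation from paired patient samples, the Python sketch below computes a Bland-Altman style mean difference with 95% limits of agreement, a widely used summary for method comparison. The paired values are hypothetical.

```python
from statistics import mean, stdev

def bland_altman(method_a, method_b):
    """Return (mean difference, lower limit, upper limit) for paired results."""
    diffs = [a - b for a, b in zip(method_a, method_b)]
    bias = mean(diffs)
    spread = 1.96 * stdev(diffs)  # approximate 95% limits of agreement
    return bias, bias - spread, bias + spread

# Hypothetical paired results from a candidate and a comparison method
candidate = [10.0, 20.0, 30.0, 40.0]
comparison = [9.0, 21.0, 28.0, 39.0]

bias, lower, upper = bland_altman(candidate, comparison)
print(f"bias = {bias:.2f}, limits of agreement = ({lower:.2f}, {upper:.2f})")
```

A real comparison would use far more than four pairs, and differences that grow with concentration would call for analysing percent differences instead of absolute ones.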

The Translation from Preclinical to Clinical Applications

The Drug Development Pipeline

The drug development process systematically progresses from preclinical discovery to clinical application, with hormone measurements playing critical roles at each stage. Preclinical research encompasses target identification, compound screening, and safety assessment using in vitro systems and animal models. These studies aim to characterize pharmacokinetic and pharmacodynamic profiles, identify potential toxicities, and establish safe starting doses for human trials [9].

The transition to clinical studies represents a critical juncture where methodological rigor becomes paramount. Regulatory agencies require extensive preclinical safety data before approving first-in-human trials. This includes toxicity studies in at least two species (typically one rodent and one non-rodent) following Good Laboratory Practice (GLP) standards [9]. Historical tragedies like the 1937 Elixir Sulfanilamide incident (resulting in over 100 deaths) and the thalidomide catastrophe of the late 1950s and early 1960s (causing more than 10,000 birth defects) underscore the vital importance of rigorous preclinical testing [9].

Clinical Trial Progression

Clinical development proceeds through phased trials with progressively expanding scope. Phase I studies focus primarily on safety and pharmacokinetics in small cohorts of healthy volunteers or patients. Phase II trials explore therapeutic efficacy and dose-response relationships in larger patient groups. Phase III confirmatory trials establish comprehensive safety and efficacy profiles in hundreds to thousands of patients across multiple sites [9].

Throughout this progression, hormone measurements serve as critical biomarkers for target engagement, pharmacological activity, and safety monitoring. However, the high attrition rate in drug development—with only approximately 6.7% of Phase I candidates ultimately achieving regulatory approval—highlights the continued challenges in translating preclinical findings to clinical success [9]. Methodological flaws in biomarker measurement, including hormone assays, contribute to this attrition by generating misleading data that informs faulty decisions.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagent Solutions for Hormone Analysis

| Reagent/Category | Function & Application | Performance Considerations |
|---|---|---|
| ELISA Kits (e.g., Progesterone, Cortisol, Testosterone) | Quantitative measurement in various matrices including serum, fur, claws | Require matrix-specific validation; check for cross-reactivity; assess parallelism and recovery [10] |
| Reference Materials | Calibration and method standardization | Certified reference materials ensure metrological traceability; CDC HoSt programs provide materials with assigned values [11] |
| Quality Control Samples | Monitoring assay precision and accuracy | Should be independent of calibration system; multiple concentrations spanning reportable range; monitor both intra- and inter-assay performance [7] |
| Mass Spectrometry Reagents | LC-MS/MS method development and application | High-purity standards and stable isotope-labeled internal standards essential for accurate quantification [7] |
| Sample Preparation Materials | Extraction and purification of hormones from complex matrices | Matrix-specific optimization required; methanol extraction effective for keratin samples; solid-phase extraction may improve specificity [10] |
| Binding Protein Controls | Assessing matrix effects in immunoassays | Critical for populations with altered binding protein concentrations (pregnancy, oral contraceptive use, critical illness) [7] |

The critical role of hormone measurement in drug development and clinical diagnostics extends far beyond technical analytical performance. Reliable hormone data underpins decision-making throughout the therapeutic development pipeline, from initial target validation to post-market safety monitoring. The complex, interactive nature of medical technology development—influenced by scientific capability, regulatory frameworks, clinical practice patterns, and healthcare economics—demands rigorous attention to assay validation and standardization [8].

The methodological considerations discussed in this article—including platform selection, validation parameters, quality control practices, and standardization programs—collectively form a foundation for generating credible data that reliably informs clinical decisions. As technological advances introduce increasingly sophisticated analytical capabilities, the fundamental principles of assay validation remain essential for distinguishing genuine progress from methodological artifact. By adhering to these principles and actively participating in standardization initiatives, researchers and clinicians can ensure that hormone measurements fulfill their critical role in advancing patient care through rigorous science.

The accurate quantification of hormone levels is a cornerstone of endocrine research, clinical diagnostics, and drug development. The selection of an appropriate analytical method is paramount, as it directly impacts the reliability, reproducibility, and biological relevance of the data generated. Among the available techniques, immunoassays (IA) and liquid chromatography-tandem mass spectrometry (LC-MS/MS) represent two fundamentally different approaches, each with distinct advantages and limitations. Immunoassays, including enzyme-linked immunosorbent assays (ELISA) and chemiluminescent immunoassays (CLIA), leverage the binding specificity of antibodies for hormone detection. In contrast, LC-MS/MS separates hormones based on their physical and chemical properties before detection, offering exceptional specificity and sensitivity. This guide provides an objective, data-driven comparison of these two key platforms, focusing on their performance characteristics, methodological requirements, and suitability for different research applications within the context of hormone assay validation.

Performance Comparison: Analytical and Diagnostic Metrics

Direct comparisons of IA and LC-MS/MS across various hormones and sample matrices reveal critical differences in their performance. The data below, synthesized from recent studies, highlight trends in correlation, bias, and diagnostic accuracy.

Table 1: Comparative Analytical Performance of Immunoassays vs. LC-MS/MS

| Hormone & Sample Type | IA Platform(s) | Correlation with LC-MS/MS (Spearman's r) | Observed Bias | Reference |
|---|---|---|---|---|
| Urinary Free Cortisol (Diagnosing Cushing's Syndrome) | Autobio A6200, Mindray CL-1200i, Snibe MAGLUMI X8, Roche e801 | 0.950 - 0.998 | Proportional positive bias for all IAs | [12] [13] |
| Salivary Sex Hormones (Estradiol, Progesterone, Testosterone) | Salimetrics ELISA | Strong for testosterone only; poor for estradiol and progesterone | Not specified | [14] |
| Serum Cortisol (Post-Dexamethasone Suppression Test) | Roche Elecsys Gen I, Beckman Access | Not specified | Elecsys overestimated by 6.1%; Access underestimated by 5.9% | [15] [16] |
| Plasma Methotrexate (Therapeutic Drug Monitoring) | EMIT, EIA | > 0.93 | Positive bias due to metabolite cross-reactivity | [17] |

The diagnostic performance of an assay is as crucial as its analytical metrics. Research shows that method-specific cut-off values are often necessary when using immunoassays.

Table 2: Diagnostic Performance for Hypercortisolism Screening

| Assay Method | Standard Cut-off (50 nmol/L) | Optimal Method-Specific Cut-off | Sensitivity at Optimal Cut-off | Specificity at Optimal Cut-off |
|---|---|---|---|---|
| LC-MS/MS | Reference Standard | 50 nmol/L | (Reference) | (Reference) |
| Roche Elecsys Gen I | Under-detection | 41 nmol/L | 97.7% | 80.8% |
| Beckman Access | Under-detection | 33 nmol/L | 97.5% | 78.3% |
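The effect of moving a cut-off on sensitivity and specificity can be shown with a short Python sketch. The cortisol values and disease labels below are invented for illustration and are not taken from the cited studies; treating a result as positive when it exceeds the cut-off is also an assumption of this example.

```python
def sensitivity_specificity(values, has_condition, cutoff):
    """Return (sensitivity, specificity), calling a result positive if value > cutoff."""
    tp = fn = tn = fp = 0
    for value, affected in zip(values, has_condition):
        positive = value > cutoff
        if affected and positive:
            tp += 1
        elif affected:
            fn += 1
        elif positive:
            fp += 1
        else:
            tn += 1
    return tp / (tp + fn), tn / (tn + fp)

# Hypothetical post-dexamethasone cortisol results (nmol/L) and true status
cortisol = [30, 45, 60, 43, 52, 20, 44, 70]
affected = [False, True, True, False, True, False, True, True]

for cutoff in (50, 41):
    sens, spec = sensitivity_specificity(cortisol, affected, cutoff)
    print(f"cut-off {cutoff}: sensitivity = {sens:.2f}, specificity = {spec:.2f}")
```

Lowering the cut-off in this toy data set recovers the affected cases that the higher threshold missed, at the cost of one false positive, which is the same trade-off the method-specific cut-offs in Table 2 are balancing.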

Core Methodologies and Validation Protocols

A rigorous validation protocol is essential to ensure that any hormone assay, regardless of format, provides accurate and precise results. The following workflow, adapted from a standardized protocol for validating immunoassays in fish plasma, outlines the key stages for establishing a reliable hormone measurement method [18].

Experimental Protocols from Cited Research

- Samples: 337 residual 24-hour urine samples from 94 Cushing's syndrome patients and 243 non-CS patients.

- Immunoassays: Four direct (extraction-free) immunoassays (Autobio A6200, Mindray CL-1200i, Snibe MAGLUMI X8, Roche e801) were performed per manufacturers' instructions.

- LC-MS/MS Reference Method: Urine samples were diluted 20-fold with water, mixed with cortisol-d4 internal standard, centrifuged, and the supernatant was injected into a SCIEX Triple Quad 6500+ mass spectrometer. Separation used a UPLC BEH C8 column with a water/methanol mobile phase gradient.

- Analysis: Method comparison via Passing-Bablok regression and Bland-Altman plots. Diagnostic accuracy was assessed by ROC analysis.

- Parallelism: Pooled plasma samples are serially diluted and the dilution curve is compared to the standard curve. The curves must be parallel (demonstrating linearity with R² > 0.97) to confirm the antibody recognizes the native and standard hormone similarly.

- Accuracy (Recovery): A known amount of the pure standard hormone is spiked into the sample matrix (e.g., plasma). The measured concentration is compared to the expected concentration, with recovery rates ideally between 80-120%.

- Precision: Both analytical (multiple measurements of the same sample in one run) and biological (measurements across different samples) replicates are analyzed to determine the coefficient of variation (CV%), assessing the assay's reproducibility.
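The precision step above reduces to one calculation applied to different groups of measurements. The following is a minimal sketch of the coefficient of variation, assuming the sample standard deviation (n - 1 denominator) is used; the replicate values are hypothetical.

```python
from statistics import mean, stdev


def cv_percent(replicates):
    """Coefficient of variation (%) = sample standard deviation / mean x 100."""
    if len(replicates) < 2:
        raise ValueError("at least two replicates are needed")
    return stdev(replicates) / mean(replicates) * 100.0


# Hypothetical readings of one sample measured three times in a single run
analytical_replicates = [98.0, 100.0, 102.0]
# Hypothetical readings from different individuals (biological replicates)
biological_replicates = [80.0, 100.0, 120.0]

print(f"analytical CV = {cv_percent(analytical_replicates):.1f}%")
print(f"biological CV = {cv_percent(biological_replicates):.1f}%")
```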

Decision Framework: Selecting the Appropriate Assay Platform

The choice between IA and LC-MS/MS depends on the research question, available resources, and required data quality. The following decision pathway aids in selecting the most suitable method.

Immunoassays (IA)

- Strengths: High throughput, lower instrumental cost and operational complexity, excellent for well-defined targets with validated kits [12] [19].

- Weaknesses: Susceptible to cross-reactivity with structurally similar metabolites (e.g., leading to overestimation of methotrexate [17] or cortisol [15]), potential for antibody drift, may require method-specific cut-off values for clinical interpretation [15] [16].

Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS)

- Strengths: Superior specificity and sensitivity, ability to multiplex (measure multiple hormones simultaneously), less susceptible to matrix effects, considered a reference method for steroid hormones [12] [14] [17].

- Weaknesses: High capital and maintenance costs, requires significant technical expertise, slower sample throughput, complex method development [18] [17].

Essential Research Reagent Solutions

Successful hormone quantification relies on a suite of specific reagents and tools. The following table details key solutions used in the experiments cited in this guide.

Table 3: Key Research Reagents and Their Applications

| Reagent / Kit / Instrument | Function in Hormone Analysis | Research Context |
|---|---|---|
| Arbor Assays DetectX ELISA Kits (Progesterone, Cortisol, Testosterone) | Quantify hormones in non-traditional matrices like fur, claws, and saliva via antibody-antigen binding. | Validated for measuring reproductive and stress hormones in American marten claw and fur samples [19]. |
| Commercial EIA Kits (e.g., Salimetrics) | Enable rapid, cost-effective measurement of steroid hormones in saliva and plasma without radioactive materials. | Used for salivary sex hormone measurement, though with poorer performance for estradiol/progesterone vs. LC-MS/MS [14]. |
| SCIEX Triple Quad 6500+ Mass Spectrometer | Detects and quantifies hormones with high specificity based on mass-to-charge ratio after LC separation. | Used as the reference method for urinary free cortisol measurement [12] [13]. |
| Stable Isotope-Labeled Internal Standards (e.g., Cortisol-d4) | Correct for sample loss and matrix effects during sample preparation and ionization in LC-MS/MS. | Added to urine samples prior to UFC analysis to ensure quantification accuracy [12] [13]. |
| Vitamin D Standardization Program (VDSP) Reference Materials | Calibrate assays to ensure standardized results across different methods and laboratories. | Used to evaluate the measurement uncertainty of 25-hydroxyvitamin D immunoassays and LC-MS/MS methods [20]. |

Both immunoassays and LC-MS/MS are powerful tools for hormone measurement, yet they serve different needs within the research ecosystem. Immunoassays offer a practical solution for high-throughput screening where extreme specificity is not critical, provided that thorough validation of parallelism, accuracy, and precision is performed [18] and method-specific cut-offs are established [15]. In contrast, LC-MS/MS is the unequivocal choice for research requiring the highest level of specificity, multiplexing capability, and traceability to a reference method, particularly for challenging matrices like saliva [14] or for monitoring drugs with toxic metabolites [17]. The decision between these platforms should be guided by a clear understanding of the analytical requirements, the biological question at hand, and the available resources. As the field advances, the trend towards leveraging the strengths of both techniques—such as using validated immunoassays for initial screening and LC-MS/MS for confirmation—will continue to enhance the accuracy and reliability of hormone data in scientific research and drug development.

Accurate hormone measurement is fundamental to biomedical research and clinical diagnostics, yet the accuracy of immunoassays is consistently challenged by various sources of interference. This guide objectively compares the performance of different methodologies, focusing on their susceptibility to and management of matrix effects, cross-reactivity, and macromolecular interference, providing supporting experimental data relevant to parallelism recovery assay validation.

The Interference Triad in Hormone Immunoassays

Interference in immunoassays can be defined as the effect of a substance present in the sample that alters the correct value of the result [21]. These interferences are typically categorized into three primary mechanisms:

- Matrix Effects: Occur when components of the sample matrix (e.g., lipids, proteins, salts) non-specifically interact with assay components, altering the antigen-antibody reaction [21] [22]. In microfluidic systems, matrix interference has been shown to be significantly influenced by antibody surface coverage, with low-affinity serum components competing for immobilized antibodies [23].

- Cross-Reactivity: Arises when an antibody binds to structurally similar molecules other than the target analyte, such as hormone metabolites, precursor molecules, or administered drugs [21] [24]. This is a particular problem for steroids and drugs of abuse testing [21].

- Macromolecular Interference: Caused by the formation of large complexes, such as when analytes bind to endogenous immunoglobulins (e.g., macrocomplexes) or binding proteins, which can block antibody binding sites or alter assay kinetics [25] [21]. This can lead to persistently elevated results that do not align with the clinical picture [25].

Table 1: Characteristics and Impact of Common Interfering Substances

| Interference Type | Common Sources | Typical Effect on Results | Affected Assay Types |
|---|---|---|---|
| Matrix Effects | Lipids, heterophilic antibodies, albumin, lysozyme, fibrinogen, sample viscosity [23] [21] | Falsely elevated or lowered values [22] | All immunoassays, particularly microfluidic POC tests [23] |
| Cross-Reactivity | Hormone metabolites (e.g., cortisol vs. fludrocortisone), structurally similar drugs (e.g., digoxin-like factors) [21] [24] | Falsely elevated values (false positives) [21] | Competitive and sandwich immunoassays |
| Macromolecules | Immunoglobulin complexes (e.g., macrotroponin, macroprolactin), hormone-binding globulins [25] [21] | Falsely elevated values (most common) [25] | Immunometric assays (IMA) |

Methodological Comparison: Immunoassay vs. Mass Spectrometry

The choice of analytical platform significantly impacts vulnerability to interference. A direct comparison of chemiluminescent immunoassay (CLIA) and liquid chromatography-tandem mass spectrometry (LC-MS/MS) reveals critical performance differences.

A 2025 study on hypertensive patients demonstrated that CLIA-measured plasma aldosterone concentration (PAC) showed a median value 46.0% higher than that measured by LC-MS/MS [26]. Furthermore, in patients with renal dysfunction, PAC measured by CLIA was significantly elevated, whereas the PAC measured by LC-MS/MS did not show this difference, suggesting that the immunoassay was susceptible to interference from factors related to renal impairment that did not affect the mass spectrometry method [26].

Table 2: Comparative Analytical Performance of CLIA and LC-MS/MS for Aldosterone Measurement

| Performance Parameter | CLIA (Chemiluminescent Immunoassay) | LC-MS/MS (Liquid Chromatography-Tandem Mass Spectrometry) |
|---|---|---|
| Plasma Aldosterone (PAC) | Median 46.0% higher than LC-MS/MS [26] | Lower, more accurate results; reference method [26] |
| Specificity | Susceptible to cross-reactivity; lacks high specificity [26] | High specificity; physically separates analytes [27] [26] |
| Matrix Effect Management | Challenging; requires blocking agents or sample dilution [26] [22] | Robust; sample preparation (e.g., SPE) reduces interferences [27] |
| Result in Renal Dysfunction | Falsely elevated PAC [26] | No significant difference from controls [26] |
| Throughput & Cost | High-throughput, routine, cost-effective | Requires technical expertise, higher equipment cost [26] |

For salivary steroid measurement, a 2025 study detailed a high-throughput 96-well solid-phase extraction (SPE) LC-MS/MS method with UniSpray ionization that achieved optimal recovery (77%) and minimal matrix effects (33%), with detection limits between 1.1 and 3.0 pg/mL [27]. This highlights how advanced sample preparation combined with MS detection can minimize interference in complex matrices like saliva.

Essential Experimental Protocols for Interference Detection

Validation of hormone assays requires specific experiments to identify and quantify interference.

Parallelism (Linearity-of-Dilution) Experiment

This test is critical for assessing matrix effects and is fundamental to parallelism recovery assay validation [28].

- Purpose: To verify that the analyte, when present in the sample matrix, behaves identically to the standard in buffer across a range of dilutions.

- Protocol:

  - Prepare a sample with a high concentration of the endogenous analyte.

  - Create a series of dilutions (e.g., 1:2, 1:4, 1:8) using the appropriate sample dilution buffer. The same buffer should be used for diluting standards.

  - Assay the diluted samples alongside the standard curve.

  - Plot the measured concentration of each diluted sample against its relative sample concentration (the reciprocal of the dilution factor).

- Interpretation: The plot should produce a straight line through the origin; equivalently, the dilution-corrected concentrations should agree across the series. Significant deviation from linearity indicates the presence of matrix interference [28].
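A linearity-of-dilution check of this kind can be sketched with an ordinary least-squares fit. The example below regresses measured concentration on the reciprocal of the dilution factor, a choice assumed here because ideal dilution then gives a straight line. The helper name and the readings are hypothetical.

```python
def linear_fit(x, y):
    """Ordinary least-squares fit; returns (slope, intercept, r_squared)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = sxy ** 2 / (sxx * syy)
    return slope, intercept, r_squared


# Hypothetical readings for a sample diluted 1:2, 1:4, 1:8 and 1:16
dilution_factors = [2, 4, 8, 16]
measured = [50.0, 25.5, 12.4, 6.3]

relative_concentration = [1 / d for d in dilution_factors]
slope, intercept, r_squared = linear_fit(relative_concentration, measured)
print(f"slope={slope:.1f}, intercept={intercept:.2f}, R^2={r_squared:.4f}")
```

The slope estimates the concentration of the undiluted sample, and an intercept far from zero or a low R² would prompt a closer look for matrix interference.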

Spike-and-Recovery Experiment

This protocol quantitatively measures the extent of matrix interference.

- Purpose: To determine if the assay can accurately detect an analyte that has been added ("spiked") into the sample matrix [22].

- Protocol:

  - Divide the sample matrix (e.g., pooled serum) into three aliquots:

    - A: Unspiked sample.

    - B: Sample spiked with a known concentration of the standard analyte.

    - C: The same quantity of standard analyte in a clean dilution buffer.

  - Assay all three aliquots and calculate the percent recovery using the formula:

    Percent Recovery = ([Spiked Sample] - [Unspiked Sample]) / [Spiked Standard Diluent] × 100 [22]

    Here, [Spiked Sample] is aliquot B, [Unspiked Sample] is aliquot A, and [Spiked Standard Diluent] is aliquot C.

- Interpretation: Acceptable recovery typically ranges between 80-120%. Recovery below 80% suggests matrix interference is suppressing the signal, while recovery over 120% may indicate cross-reactivity or other enhancing interference [22].
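The recovery formula and the 80-120% interpretation above can be expressed as a short sketch. The function names and the example concentrations are hypothetical; the thresholds are the ones quoted in the text and may need adjusting for a given assay.

```python
def percent_recovery(spiked_sample, unspiked_sample, spiked_diluent):
    """Percent recovery = (B - A) / C x 100 for aliquots A, B and C."""
    return 100.0 * (spiked_sample - unspiked_sample) / spiked_diluent


def interpret_recovery(recovery, low=80.0, high=120.0):
    """Classify a recovery value against the acceptance window."""
    if recovery < low:
        return "below range: signal suppression by the matrix suspected"
    if recovery > high:
        return "above range: cross-reactivity or enhancing interference suspected"
    return "acceptable"


# Hypothetical concentrations in ng/mL
a_unspiked = 12.0
b_spiked_sample = 30.0
c_spiked_diluent = 20.0

recovery = percent_recovery(b_spiked_sample, a_unspiked, c_spiked_diluent)
print(f"recovery = {recovery:.1f}% ({interpret_recovery(recovery)})")
```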

Protocol for Investigating Macromolecular Interference

Macromolecule interference should be suspected when laboratory results are inconsistent with the clinical presentation [25].

- Purpose: To confirm the presence of high-molecular-weight complexes, such as macrocomplexes.

- Protocol (PEG Precipitation):

  - Mix the patient sample with an equal volume of a polyethylene glycol (PEG) solution (e.g., 25% PEG 6000).

  - Incubate to allow precipitation of high-molecular-weight species.

  - Centrifuge the sample to pellet the precipitates.

  - Assay the supernatant and compare the analyte concentration to the original, untreated sample.

- Interpretation: A recovery of <40% in the supernatant after PEG precipitation is indicative of significant macromolecular interference, as the complexed analyte has been precipitated out [25].
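As a rough sketch of the comparison in the last step, the code below computes post-PEG recovery. It assumes the supernatant reading is multiplied by 2 to correct for the equal-volume PEG addition, a correction the text does not spell out; the 40% threshold is the one quoted above, and the concentrations are hypothetical.

```python
def peg_recovery_percent(original, supernatant, dilution_factor=2.0):
    """Recovery (%) of analyte left in the supernatant after PEG precipitation.

    `dilution_factor` corrects for the PEG solution added to the sample
    (2.0 assumes equal volumes of sample and PEG solution).
    """
    return 100.0 * supernatant * dilution_factor / original


def macromolecular_interference_suspected(recovery, threshold=40.0):
    """True when recovery falls below the threshold quoted in the text."""
    return recovery < threshold


# Hypothetical concentrations in arbitrary units
original = 100.0
supernatant = 15.0

recovery = peg_recovery_percent(original, supernatant)
print(f"post-PEG recovery = {recovery:.0f}%")
print("interference suspected:", macromolecular_interference_suspected(recovery))
```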

Interference Investigation Workflow

The Scientist's Toolkit: Key Reagents and Materials

Successful management of interference relies on the use of specific reagents and methodologies.

Table 3: Essential Research Reagent Solutions for Interference Management

| Tool / Reagent | Primary Function | Application in Interference Management |
|---|---|---|
| Solid-Phase Extraction (SPE) | Selective extraction and purification of analytes from complex matrices [27] | Reduces matrix effects prior to LC-MS/MS analysis; achieved 77% recovery for salivary steroids [27] |
| Polyethylene Glycol (PEG) | Non-specific precipitation of high-molecular-weight species [25] | Used in precipitation protocols to identify macromolecular interference (e.g., macrotroponin) [25] |
| Protein A/G Beads | Binds to the Fc fragment of immunoglobulins [25] | Pull-down experiments to confirm antibody-based macromolecular complexes (limited to IgG) [25] |
| Blocking Buffers (e.g., BSA) | Block nonspecific binding sites on solid phases and assay components [29] [28] | Reduces nonspecific matrix interactions; cross-reactivity may require non-mammalian blockers [28] |
| Matched Antibody Pairs | Pre-validated antibody sets for sandwich ELISA targeting different epitopes [28] | Minimizes cross-reactivity and ensures robust assay development [28] |
| Surfactants (e.g., Tween 20) | Mild non-ionic detergent added to buffers [28] | Minimizes hydrophobic interactions in wash and blocking buffers (typically at 0.05% v/v) [28] |

Strategies for Mitigation and Future Directions

Several practical strategies can be employed to overcome interference challenges:

- Sample Dilution: The simplest and most common method to reduce the concentration of interfering components, though it also reduces sensitivity [24] [22].

- Alternative Platforms: When interference is suspected, retesting the specimen using a different assay methodology or antibody set can provide accurate results [25]. LC-MS/MS is often the preferred alternative due to its high specificity [27] [26].

- Optimized Surface Coverage: In microfluidic systems, increasing antibody surface coverage on the solid phase has been shown to reduce serum matrix interference by outcompeting low-affinity interferents [23].

- Miniaturization and Automation: Platforms like the Gyrolab system use miniaturized, automated flow-through immunoassays that reduce contact times between samples and reagents, thereby favoring specific high-affinity binding and minimizing low-affinity interference [24].

Interference Mitigation Strategies

Matrix effects, cross-reactivity, and macromolecules represent a significant challenge to the accuracy of hormone measurements. While immunoassays like CLIA are vulnerable to these interferences, LC-MS/MS has demonstrated superior performance as a more specific and reliable reference method, though with trade-offs in accessibility and throughput [26]. A rigorous validation process incorporating parallelism and spike-and-recovery experiments is non-negotiable for generating reliable data. For researchers and drug development professionals, a systematic approach to identifying interference—combined with strategic mitigation techniques such as sample dilution, platform switching, and advanced sample preparation—is essential for ensuring data integrity in both preclinical and clinical studies.

Methodological Workflows: Implementing Parallelism and Recovery Testing for Hormone Assays

Parallelism is a critical validation parameter that determines whether samples containing high endogenous analyte concentrations, once serially diluted, are detected to the same degree as the standard curve [1]. The test reveals differences in antibody binding affinity between the endogenous analyte and the standard/calibration analyte, making it essential for ensuring accurate quantification of hormones and other biomarkers in biological samples. For researchers and drug development professionals, proper parallelism testing validates that an assay maintains proportional response across the expected concentration range, confirming that matrix effects do not interfere with accurate measurement. This guide compares experimental approaches and establishes clear acceptance criteria for evaluating assay performance in hormone measurement research.

Core Principles and Experimental Protocols

Distinguishing Parallelism from Related Concepts

Parallelism is often confused with dilutional linearity and spike-and-recovery, though these tests address distinct validation aspects [1]:

- Parallelism utilizes samples containing high levels of endogenous analyte diluted to demonstrate similar immunoreactivity between endogenous and standard analytes

- Dilutional linearity determines whether sample matrices spiked with analyte above the upper limit of quantification provide reliable quantification after dilution

- Spike-and-recovery assesses the difference in percent recovery between sample matrices and standard diluent

Experimental Protocol for Parallelism Testing

A robust parallelism testing protocol involves these critical steps [1]:

- Sample Selection: Identify at least 3 samples displaying high concentration of endogenous analyte, but not exceeding the upper limit of quantification in the standard curve

- Serial Dilution: Perform 1:2 serial dilutions using appropriate sample diluent until the predicted concentration falls below the lower limit of quantification

- Analysis: Obtain absorbance readings and calculate mean concentrations only for sample ranges within the standard curve limits

- Calculation: Determine mean concentrations of samples with dilutions factored in and calculate percentage coefficient of variation (%CV)
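The analysis and calculation steps above can be sketched as follows: readings outside the standard curve limits are dropped, the rest are multiplied by their dilution factor, and the %CV of the corrected values is reported. The function name, quantification limits, and readings are hypothetical.

```python
from statistics import mean, stdev


def parallelism_cv(measured, dilution_factors, lloq, uloq):
    """Return (%CV, dilution-corrected concentrations) for in-range readings."""
    corrected = [
        conc * factor
        for conc, factor in zip(measured, dilution_factors)
        if lloq <= conc <= uloq  # keep only readings within the standard curve
    ]
    if len(corrected) < 2:
        raise ValueError("need at least two in-range dilutions")
    return stdev(corrected) / mean(corrected) * 100.0, corrected


# Hypothetical 1:2 serial dilution of one high-concentration sample (pg/mL)
dilution_factors = [2, 4, 8, 16, 32]
measured = [400.0, 210.0, 98.0, 52.0, 4.0]  # last reading falls below the LLOQ

cv, corrected = parallelism_cv(measured, dilution_factors, lloq=10.0, uloq=500.0)
print("dilution-corrected concentrations:", corrected)
print(f"%CV across dilutions = {cv:.1f}%")
```

The resulting %CV is then compared with whatever acceptance threshold the laboratory has set for the assay.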

Figure 1: Parallelism testing workflow demonstrating the key experimental steps from sample selection through final validation assessment.

Serial Dilution Methodology

Serial dilution is a fundamental laboratory technique where the dilution factor stays the same for each step [30]. For parallelism testing:

- Dilution Factor: Commonly use 2-fold or 10-fold serial dilution depending on precision requirements

- Diluent Selection: Choose proper diluent compatible with the sample matrix and analyte

- Calculations: Final dilution factor is calculated by multiplying dilution factors of every step

- Volume Considerations: Equalize liquid volumes across tubes when using plate readers for analysis

The 2-fold serial dilution provides greater precision for determining minimum effective concentrations compared to 10-fold dilutions [30].
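The dilution arithmetic described above is simple to sketch. The helper names below are hypothetical; the volume calculation assumes each tube is prepared to the same final volume before the next transfer.

```python
def cumulative_dilution_factors(step_factors):
    """Multiply the step factors together to get the total factor at each tube."""
    totals = []
    running = 1
    for factor in step_factors:
        running *= factor
        totals.append(running)
    return totals


def step_volumes(step_factor, final_volume):
    """Return (transfer volume, diluent volume) for a single dilution step."""
    transfer = final_volume / step_factor
    return transfer, final_volume - transfer


print(cumulative_dilution_factors([2, 2, 2, 2]))   # 2-fold series
print(cumulative_dilution_factors([10, 10, 10]))   # 10-fold series
print(step_volumes(2, 200.0))    # volumes in microlitres for a 1:2 step
print(step_volumes(10, 1000.0))  # volumes in microlitres for a 1:10 step
```

A 2-fold series covers the concentration range in smaller increments than a 10-fold series, which is why it locates a threshold concentration more finely.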

Acceptance Criteria and Data Interpretation

Establishing Acceptance Criteria

Acceptance criteria for parallelism should be established based on the assay's intended use and precision requirements [1] [31]:

- %CV Threshold: A %CV of the dilution-corrected concentrations at or below a threshold of 20-30% generally indicates successful parallelism, though the exact threshold should be set by the end user

- Statistical Evaluation: Assess consistency across the dilution series through linear regression analysis

- Tolerance-Based Criteria: Method error should be evaluated relative to the tolerance for two-sided specification limits

Quantitative Data Presentation

Table 1: Example Parallelism Recovery Data Across Different Sample Matrices

| Sample Matrix | Spike Concentration (ng/mL) | % Recovery | Minimum Recommended Dilution |
|---|---|---|---|
| Human Serum Extracted | 2.0 | 102% | Neat |
| Human Serum Extracted | 1.0 | 83% | Neat |
| Human Serum Extracted | 0.5 | 124% | Neat |
| Mouse Serum Extracted | 1.0 | 90.9% | 1:2 |
| Mouse Serum Extracted | 0.5 | 105.8% | 1:2 |
| Mouse Serum Extracted | 0.25 | 115.6% | 1:2 |
| Human Saliva Extracted | 5.0 | 83.3% | 1:2 |
| Human Saliva Extracted | 2.5 | 98.7% | 1:2 |
| Human Saliva Extracted | 1.25 | 108.4% | 1:2 |

Table 2: Inter-assay and Intra-assay CV Profiles for Parallelism Assessment

| Corticosterone (pg/mL) | Intra-assay %CV | Inter-assay %CV |
|---|---|---|
| Low (171) | 8.0 | 13.1 |
| Medium (403) | 8.4 | 8.2 |
| High (780) | 6.6 | 7.8 |

Interpretation of Results

Successful parallelism demonstrates comparable selectivity between analyte and antibody from endogenous sample and standard/calibration analyte [1]:

- Acceptable Performance: A %CV at or below the chosen threshold (commonly 20-30%) indicates acceptable parallelism

- Problematic Results: %CV values above the threshold indicate loss of parallelism and suggest significant differences in immunoreactivity between the endogenous and standard analytes

- Common Causes: Post-translational modifications or unspecified matrix effects often contribute to failed parallelism tests

Figure 2: Parallelism assessment decision tree with acceptance criteria and investigation pathways for problematic results.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagent Solutions for Parallelism Testing

| Reagent/Material | Function | Application Notes |
|---|---|---|
| Sample Diluent | Matrix for serial dilutions | Should align closely with proposed sample matrix; may require optimization for different sample types |
| Reference Standard | Calibration curve preparation | High purity analyte for standard curve generation |
| Quality Control Materials | Monitoring assay performance | Should span measurement range; used for intra and inter-assay CV determination |
| Coated Plate Systems | Solid phase for binding assays | 96-well formats most common for high-throughput applications |
| Detection Antibodies | Analyte recognition | Conjugated with enzymes, fluorophores, or other detection molecules |
| Washing Buffers | Removing unbound materials | Critical for reducing background signal and improving precision |
| Substrate/Chromogen | Signal generation | Enzymatic, chemiluminescent, or fluorescent detection systems |
| Blocking Buffers | Reducing nonspecific binding | Protein-based solutions to minimize background interference |

Statistical Analysis and Data Quality Assurance

Statistical Approaches for Parallelism Assessment

Robust statistical analysis is essential for reliable parallelism assessment [32]:

- Standard Curve Generation: Establish relationship between known concentrations and assay responses using linear regression models

- %CV Calculation: Determine both intra-assay (within run) and inter-assay (between runs) coefficients of variation

- Regression Analysis: Utilize Deming or Passing-Bablok regression for method comparison studies
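For the method comparison item above, Deming regression has a closed-form solution that is easy to sketch; Passing-Bablok is rank-based and is not shown here. The example assumes `delta`, the ratio of the error variance of y to that of x, equals 1 (orthogonal regression), and the paired results are hypothetical.

```python
from math import sqrt


def deming_regression(x, y, delta=1.0):
    """Deming regression allowing measurement error in both methods.

    `delta` is the assumed ratio of the error variance of y to that of x.
    Returns (slope, intercept).
    """
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x) / (n - 1)
    syy = sum((yi - mean_y) ** 2 for yi in y) / (n - 1)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y)) / (n - 1)
    diff = syy - delta * sxx
    slope = (diff + sqrt(diff ** 2 + 4 * delta * sxy ** 2)) / (2 * sxy)
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical paired results: reference method (x) vs. immunoassay (y)
reference = [10.0, 20.0, 40.0, 80.0, 160.0]
immunoassay = [14.0, 27.0, 50.0, 99.0, 190.0]

slope, intercept = deming_regression(reference, immunoassay)
print(f"slope={slope:.3f}, intercept={intercept:.2f}")
```

A slope above 1 with a small intercept, as in this toy data, would point to a proportional positive bias of the immunoassay relative to the reference method.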

Data Quality Assurance

Quality assurance measures for parallelism testing include [33]:

- Normality Testing: Assess distribution of data using kurtosis and skewness measurements (±2 indicates normality)

- Outlier Identification: Detect anomalies deviating from expected patterns

- Reliability Assessment: Establish psychometric properties with Cronbach's alpha >0.7 considered acceptable

- Data Cleaning: Exclude records that exceed a predefined threshold of missing data and check for duplicate entries

Proper experimental design for parallelism testing requires careful attention to serial dilution methodology, appropriate acceptance criteria, and robust statistical analysis. The protocols outlined in this guide provide researchers with a framework for validating that immunoassays maintain proportional response across sample dilutions, ensuring accurate hormone measurement in research and drug development applications. By implementing these standardized approaches and maintaining consistent quality control measures, scientists can generate reliable, reproducible data that meets rigorous scientific standards for assay validation.

In hormone measurement and parallelism recovery assay validation, the precision and accuracy of results are fundamentally dependent on the efficacy of sample preparation. This initial step is crucial for removing matrix interferences that can compromise data quality in Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) analysis. Solid-Phase Extraction (SPE) and Protein Precipitation (PPT) are two widely employed techniques for matrix cleanup, each with distinct mechanisms, advantages, and limitations. Within clinical and bioanalytical research, particularly for quantifying low-abundance biomarkers like steroids, hormones, and peptides such as oxytocin, selecting an appropriate sample cleanup strategy is paramount for achieving the required sensitivity and specificity [34] [35] [36]. This guide provides an objective comparison of SPE and PPT, supported by experimental data and detailed protocols, to inform method development in drug discovery and clinical research.

Fundamental Principles and Comparison

Solid-Phase Extraction (SPE)

SPE is a partitioning process where analytes are separated from a liquid sample by transferring them to a solid stationary phase. The classic SPE procedure involves four main steps: conditioning the sorbent to solvate the stationary phase, loading the sample, rinsing away interferences, and eluting the analytes of interest [37]. SPE sorbents are available in a variety of chemistries, including bonded silicas and polymeric phases.

- Polymeric Sorbents: Materials like polystyrene-divinylbenzene (PS-DVB) are popular due to their wide pH stability, higher sample capacity, and absence of residual silanol groups that can cause irreversible secondary interactions. A key advantage is that their performance remains unaffected if the sorbent dries out between steps, enhancing reproducibility [37].

- Ion-Exchange Sorbents: These sorbents utilize a mixed-mode mechanism, combining hydrophobic interactions with strong ionic interactions between charged groups on the sorbent and the analyte. This allows for highly selective extractions of ionizable substances [37].

Protein Precipitation (PPT)

PPT is one of the most straightforward and rapid sample preparation techniques. It involves adding an organic solvent (e.g., acetonitrile or methanol) to a biological sample such as plasma or serum, causing proteins to denature and precipitate. The precipitated proteins are then removed by filtration or centrifugation, yielding a protein-free sample [38]. However, while PPT effectively removes proteins, it often fails to eliminate other matrix components, such as phospholipids, which can cause significant issues in subsequent LC-MS/MS analysis [38].

Direct Technique Comparison

The table below summarizes a direct experimental comparison of PPT and a specialized Phospholipid Removal (PLR) plate—a form of SPE—for preparing plasma samples for LC-MS/MS analysis [38].

Table 1: Experimental Comparison of Protein Precipitation vs. Phospholipid Removal (PLR) SPE

| Parameter | Protein Precipitation (PPT) | Phospholipid Removal (PLR) SPE |
|---|---|---|
| Phospholipid Removal Efficiency | Incomplete; high phospholipid peak area (1.42 × 10⁸) observed [38]. | Highly effective; minimal phospholipid signal (5.47 × 10⁴ peak area) [38]. |
| Matrix Effects (Ion Suppression) | Significant ion suppression (~75% signal reduction for procainamide) observed due to co-eluting phospholipids [38]. | No significant ion suppression; analyte ionization was unaffected throughout the chromatographic run [38]. |
| Impact on Instrumentation | Leads to source contamination and HPLC column fouling due to phospholipid accumulation, increasing maintenance and costs [38]. | Protects the instrument by removing phospholipids, reducing downtime and extending column lifetime [38]. |
| Analyte Recovery & Linearity | Not quantified in the study, but ion suppression implies compromised accuracy and precision. | Excellent; demonstrated clear linearity (r² = 0.9995) for procainamide across a range of 10-1500 ng/mL [38]. |
| Protocol Complexity | Rapid and straightforward, involving minimal steps [38]. | Similarly straightforward protocol to PPT, but incorporates a specific sorbent to capture phospholipids [38]. |
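Ion suppression figures such as the ~75% signal reduction in the table are commonly expressed by comparing the analyte response in extracted matrix with the response in neat solvent. The sketch below assumes that post-extraction comparison; the function names and peak areas are hypothetical and are not values from the cited study.

```python
def matrix_effect_percent(response_in_matrix, response_in_solvent):
    """Analyte response in matrix as a percentage of the response in neat solvent."""
    return 100.0 * response_in_matrix / response_in_solvent


def ion_suppression_percent(response_in_matrix, response_in_solvent):
    """Signal lost to the matrix (%); negative values indicate enhancement."""
    return 100.0 - matrix_effect_percent(response_in_matrix, response_in_solvent)


# Hypothetical peak areas for the same spiked concentration
area_solvent = 200_000
area_after_ppt = 50_000    # extract still containing co-eluting phospholipids
area_after_plr = 196_000   # extract after phospholipid removal

print(f"PPT suppression: {ion_suppression_percent(area_after_ppt, area_solvent):.0f}%")
print(f"PLR suppression: {ion_suppression_percent(area_after_plr, area_solvent):.0f}%")
```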

Experimental Protocols in Practice

Solid-Phase Extraction Protocol for Oxytocin Quantification

The development of a highly sensitive LC-MS/MS method for the quantification of oxytocin in plasma showcases a robust SPE application.

- Objective: To achieve a lower limit of quantification (LLOQ) of 1 ng/L for oxytocin in human plasma, a challenging goal given its remarkably low endogenous levels [36].

- Extraction Procedure: Oxytocin was extracted from plasma using an Oasis HLB 30 mg plate, a polymeric reversed-phase sorbent. A surrogate matrix (PBS-0.1% BSA) was used to prepare calibration standards to avoid endogenous interference [36].

- Outcome: The method was fully validated, achieving an LLOQ of 1 ng/L with precision (coefficient of variation) below 10% and accuracy ranging from 94% to 108%. This demonstrates SPE's capability for highly sensitive and precise quantification of low-abundance peptides in complex biological matrices [36].

Automated Protein Precipitation with Online SPE for Steroid Analysis

A fully automated method for determining steroids in serum combines the simplicity of PPT with the clean-up power of online SPE.

- Objective: To develop a fully automated, specific, and high-throughput method for determining a panel of five steroids in serum to diagnose endocrine diseases [35].

- Extraction Procedure:

  - Automated Protein Precipitation: The CLAM-2030 automated sample preparation module pipetted 30 µL of serum into a preconditioned PTFE filter vial. It then added 60 µL of internal standard solution in acetonitrile, mixed the solution, and filtered it under vacuum [35].

  - Online SPE and Analysis: The deproteinized extract was automatically injected into a 2D-UHPLC system. The first dimension used a perfusion column to trap and concentrate the steroids while washing away matrix compounds. The analytes were then back-flushed to the analytical column (Raptor Biphenyl) for chromatographic separation and MS/MS detection [35].

- Outcome: The method was successfully validated according to European Medicines Agency guidelines. The automation improved traceability and resulted in significant savings in cost and time, highlighting the efficiency gains from integrating PPT with online SPE [35].

Advanced Precipitation: ZnCl2 Precipitation-Assisted Sample Preparation (ZASP)

An advanced precipitation method has been developed for proteomic analysis, demonstrating the evolution of precipitation techniques.

- Objective: To develop a cost-effective, simple, and widely applicable sample preparation method to efficiently remove LC-MS-incompatible detergents like SDS prior to analysis [39].

- Extraction Procedure: Proteins are recovered by incubating the sample lysate with an equal volume of ZASP precipitation buffer (ZPB), containing 100 mM ZnCl₂ and 50% methanol, at room temperature for 10 minutes. Zinc ions cause protein precipitation by binding to surface residues and altering solubility. The precipitate is then processed for in-solution digestion [39].

- Outcome: ZASP achieved a protein recovery rate of over 90% even from harsh detergent-containing lysates. It outperformed other common methods like filter-aided sample preparation (FASP) and SP3 in terms of protein/peptide identifications, missed cleavage rates, and reproducibility, all at a low cost per sample [39].

The Scientist's Toolkit: Essential Research Reagents

The following table lists key reagents and materials used in the featured experiments, which are essential for developing robust sample preparation workflows in hormone and biomarker research.

Table 2: Key Research Reagent Solutions for Sample Preparation

| Reagent / Material | Function in Sample Preparation | Example Application |
|---|---|---|
| Oasis HLB SPE Plate | A hydrophilic-lipophilic balanced polymeric sorbent for broad-spectrum retention of analytes; excellent for polar compounds [37] [36]. | Extraction of the peptide oxytocin from plasma [36]. |
| Microlute PLR Plate | A specialized SPE sorbent with an active component designed to capture phospholipids without retaining analytes of interest [38]. | Removal of phospholipids from plasma to prevent ion suppression in LC-MS/MS [38]. |
| Polymeric Sorbents (e.g., PS-DVB) | Provide a wide pH stability, high capacity, and are not susceptible to "dewetting," improving reproducibility for acidic, basic, and neutral compounds [37]. | General-purpose cleanup of complex biological samples. |
| Raptor Biphenyl Column | An analytical column with biphenyl stationary phase that offers unique selectivity for separating structurally similar compounds via π-π interactions [35]. | Chromatographic separation of steroids like testosterone and androstenedione [35]. |
| ZASP Precipitation Buffer | A solution of ZnCl₂ in methanol used to precipitate proteins and efficiently remove interfering detergents like SDS from protein lysates [39]. | Proteomic sample preparation from cells and tissues prior to LC-MS analysis [39]. |
| CLAM-2030 Module | An automated sample preparation system that performs tasks like pipetting, mixing, and filtration, enhancing traceability and throughput [35]. | Fully automated protein precipitation and filtration for steroid analysis in serum [35]. |

Workflow and Decision Pathway

The following diagram illustrates a logical workflow for selecting and applying sample preparation techniques in a bioanalytical context, based on the experimental data and protocols discussed.

The choice between Solid-Phase Extraction and Protein Precipitation is dictated by the specific analytical requirements. Protein Precipitation offers unmatched speed and simplicity, making it suitable for high-throughput screens where some matrix effects are acceptable. However, as the experimental data shows, PPT's inability to remove phospholipids can lead to significant ion suppression and instrument maintenance issues [38]. In contrast, SPE provides superior sample cleanup, minimizes matrix effects, and enables the high sensitivity and precision required for low-abundance biomarkers like oxytocin and steroids [35] [36]. The emergence of advanced techniques like ZASP [39] and the trend towards full automation integrating PPT with online SPE [35] point to a future where researchers do not have to choose exclusively between speed and quality. For critical applications such as hormone measurement parallelism recovery assay validation, where data integrity is non-negotiable, SPE-based methods provide the robust and reliable foundation necessary for generating credible results.

The accurate quantification of steroid hormones is a cornerstone of endocrinological diagnostics, essential for diagnosing a wide array of adrenal-related diseases such as adrenal insufficiency, hyperaldosteronism, adrenal tumors, and congenital adrenal hyperplasia [40]. For decades, traditional methods like chemiluminescence immunoassay (CLIA) and radioimmunoassay (RIA) have dominated clinical laboratories. However, these techniques are increasingly recognized as limited by significant drawbacks, including cross-reactivity, matrix interference, and narrow detection ranges, which compromise accuracy, particularly at low and extremely high hormone concentrations [40]. Liquid chromatography-tandem mass spectrometry (LC-MS/MS) has emerged as the recommended method, offering superior specificity, sensitivity, and the unique capability to simultaneously profile multiple steroids in a single analysis [40] [41]. This case study details the validation of a robust, high-throughput LC-MS/MS method for a comprehensive multi-steroid panel, employing solid-phase extraction (SPE) to meet the demanding needs of modern clinical and research settings.

Method Comparison: LC-MS/MS vs. Immunoassay

The transition from immunoassays to LC-MS/MS is driven by the need for more reliable and comprehensive diagnostic data. Table 1 summarizes a comparative analysis, underscoring the analytical advantages of the LC-MS/MS platform.

Table 1: Comparative Analytical Performance of LC-MS/MS versus Immunoassay

| Analytical Parameter | LC-MS/MS Method | Traditional Immunoassay |
|---|---|---|
| Specificity | High; resolves structurally similar steroids [40] | Limited; suffers from antibody cross-reactivity [40] [41] |
| Sensitivity (LLOQ) | Suitable for low-level steroids (e.g., estradiol) [41] | Often inadequate for low concentrations [41] |
| Multiplexing Capability | 15-19 analytes in a single run [40] [41] | Typically single-analyte or limited panels |
| Trueness/Accuracy | Verified with reference materials; recovery 87-116% [41] | Variable and often biased; mean bias >+65% for some steroids [41] |
| Precision (Interday) | Generally <15% [41] | Can be higher and less consistent |
| Dynamic Range | Broad, linear range covering physiological levels [40] | Narrow, requiring sample dilution [40] |
| Matrix Versatility | Validated for serum, plasma, urine [40] [42] | Can be highly matrix-sensitive |

A direct in-house comparison against IVD-CE-certified immunoassays for steroids like 17-hydroxyprogesterone (17P) and androstenedione (ANDRO) revealed substantial inaccuracies in the immunoassays, with mean biases exceeding +65% [41]. Furthermore, immunoassays demonstrated significant limitations at lower concentrations for progesterone (PROG), estradiol (E2), and testosterone (TES) [41]. These findings confirm that LC-MS/MS delivers a level of analytical reliability that immunoassays cannot consistently provide.
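
To make the bias figure concrete, the sketch below shows one common way a mean percentage bias between a test method and a reference method can be computed from paired results. The paired values are invented purely for illustration and are not data from [41]; the function name is likewise hypothetical.

```python
from statistics import mean


def mean_percent_bias(test_values, reference_values):
    """Mean of per-sample relative differences (test vs. reference), in percent."""
    if len(test_values) != len(reference_values):
        raise ValueError("paired results are required")
    biases = [100.0 * (t - r) / r for t, r in zip(test_values, reference_values)]
    return mean(biases)


# Illustrative paired results (nmol/L); NOT taken from the cited study.
lcms_results = [2.0, 4.0, 8.0]          # reference method (LC-MS/MS)
immunoassay_results = [3.4, 6.8, 12.8]  # test method (immunoassay)

bias = mean_percent_bias(immunoassay_results, lcms_results)
print(f"Mean bias of immunoassay vs LC-MS/MS: {bias:+.1f}%")
```

A positive value means the immunoassay reads higher than the reference on average, which is the direction of the bias reported above for 17P and ANDRO.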

Experimental Protocol: A High-Throughput Workflow

Sample Preparation: Automated Solid-Phase Extraction

The developed method employs a high-throughput SPE protocol designed for efficiency and consistency, making it suitable for routine laboratory use [40]. The multi-step process can be visualized in the following workflow diagram.

Diagram 1: High-Throughput SPE Sample Preparation Workflow.

The specific protocol is as follows:

- Protein Precipitation: A 100-500 μL aliquot of serum or plasma is mixed with an internal standard solution and a protein precipitant, such as methanol or a methanol/zinc sulfate mixture [40] [43]. After vortexing and centrifugation, the supernatant is collected.

- Solid-Phase Extraction: The supernatant is loaded onto a conditioned Oasis HLB 96-well μElution plate [40] [43]. This step is amenable to automation using systems like the Tecan Freedom EVO workstation, which significantly improves throughput and frees up staff time [44].

- Washing and Elution: The SPE plate is washed with a solution like ice-cold 50% methanol to remove impurities [43]. The target analytes are then eluted with a strong solvent like pure methanol.

- Evaporation and Reconstitution: The eluate is dried under a gentle nitrogen stream and subsequently reconstituted in a small volume of mobile phase compatible with the LC-MS/MS system, thereby concentrating the sample and enhancing sensitivity [43].

LC-MS/MS Analysis and Instrumentation

Chromatography: Separation is achieved using reversed-phase chromatography, typically with an ACQUITY UPLC BEH C18 column (e.g., 2.1 mm × 100 mm, 1.7 μm) maintained at 30°C [40] [41]. A gradient elution is employed over less than 8 minutes to resolve the 17-19 steroids, optimizing speed and resolution [40] [41].

Mass Spectrometry: Detection uses a triple quadrupole mass spectrometer (e.g., TSQ Endura, Shimadzu 8060) operating in scheduled Multiple Reaction Monitoring (sMRM) mode [40] [45] [41]. This mode maximizes dwell times and ensures sufficient data points across peaks. Ionization is primarily via electrospray ionization (ESI). The use of additives like ammonium fluoride (e.g., 0.2 mmol/L) can significantly enhance ionization efficiency, particularly for challenging analytes in negative mode [41]. Key mass spectrometry parameters are fine-tuned for each steroid, including declustering potential and collision energy, to generate optimal precursor-to-fragment ion transitions [41].
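
The benefit of scheduling can be illustrated with a rough dwell-time budget. The sketch below assumes a simple model in which the cycle time is shared equally among the transitions monitored at the same moment, minus a fixed inter-channel pause. The peak width, target number of points per peak, transition counts, and pause time are all hypothetical values, not parameters from the cited methods.

```python
def cycle_time_s(peak_width_s, points_per_peak):
    """Longest cycle time that still gives the desired number of points per peak."""
    return peak_width_s / points_per_peak


def dwell_time_ms(cycle_s, concurrent_transitions, pause_ms):
    """Dwell time available per transition when the cycle is shared equally."""
    return cycle_s * 1000.0 / concurrent_transitions - pause_ms


# Hypothetical values for illustration only.
PEAK_WIDTH_S = 6.0     # chromatographic peak width at base
POINTS_PER_PEAK = 12   # target data points across each peak
PAUSE_MS = 3.0         # inter-channel pause between transitions

cycle = cycle_time_s(PEAK_WIDTH_S, POINTS_PER_PEAK)

# Unscheduled: all 40 transitions are monitored for the whole run.
unscheduled_dwell = dwell_time_ms(cycle, 40, PAUSE_MS)
# Scheduled: only the 8 transitions eluting in the current window are monitored.
scheduled_dwell = dwell_time_ms(cycle, 8, PAUSE_MS)

print(f"Cycle time: {cycle:.2f} s")
print(f"Dwell per transition, unscheduled: {unscheduled_dwell:.1f} ms")
print(f"Dwell per transition, scheduled:   {scheduled_dwell:.1f} ms")
```

In this simplified model, restricting each transition to its retention-time window leaves several times more dwell time per transition at the same sampling rate.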

Calibration and Quantification Strategies

Accurate quantification of endogenous steroids is challenging due to the absence of a true analyte-free matrix. The preferred strategy identified in recent literature is surrogate calibration [43]. This method involves using stable-isotope-labeled (SIL) analogues of the target analytes as calibrants. These surrogate calibrants are spiked into the true biological matrix, creating a calibration curve that closely mimics the behavior of the endogenous analytes, thereby controlling for matrix effects [43]. After establishing a response factor between the SIL calibrant and the native analyte, the endogenous concentration is determined with high accuracy. This approach is more robust and efficient than alternatives like the standard addition method, which is time-consuming and requires larger sample volumes [43]. For less complex applications, a single-point calibration has also been demonstrated to be feasible, producing results comparable to a full multi-point curve and improving laboratory efficiency [45].
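
The surrogate calibration idea can be sketched numerically as follows. This is a minimal illustration, assuming a linear, unweighted calibration of the SIL surrogate's response ratio in matrix and a single response factor (native response divided by surrogate response at equal concentration). All concentrations, response ratios, and the response factor are hypothetical, and validated methods may use weighted regression instead.

```python
def fit_line(x, y):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def endogenous_concentration(native_ratio, slope, intercept, response_factor):
    """Read the native analyte off the surrogate curve after response-factor correction."""
    surrogate_equivalent_ratio = native_ratio / response_factor
    return (surrogate_equivalent_ratio - intercept) / slope


# Hypothetical surrogate calibrants spiked into real matrix (nmol/L) and
# their peak-area ratios relative to the internal standard.
surrogate_conc = [1.0, 2.0, 5.0, 10.0]
surrogate_ratio = [0.2, 0.4, 1.0, 2.0]

slope, intercept = fit_line(surrogate_conc, surrogate_ratio)

# Hypothetical response factor: the native analyte responds 10% more strongly.
RESPONSE_FACTOR = 1.1
native_ratio_in_sample = 0.88

conc = endogenous_concentration(native_ratio_in_sample, slope, intercept, RESPONSE_FACTOR)
print(f"slope={slope:.3f}, intercept={intercept:.3f}, endogenous = {conc:.2f} nmol/L")
```

Because the surrogate curve is built in the real matrix, the calibrants and the endogenous analyte experience the same matrix effects, which is the rationale given above for preferring this approach.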

Validation Data and Analytical Performance

The multi-steroid LC-MS/MS method was rigorously validated according to established bioanalytical principles. Table 2 presents key performance metrics for a selection of steroids from the panel, demonstrating the method's robustness.

Table 2: Analytical Performance Data for a Multi-Steroid Panel

| Analyte | Linear Range (nmol/L) | Lower LOQ | Interday Precision (% CV) | Accuracy (Recovery %) |
|---|---|---|---|---|
| Cortisol (CL) | Wide dynamic range [41] | Meets clinical needs [40] | <15% [41] | 87-116% [41] |
| Testosterone (TES) | Wide dynamic range [41] | Meets clinical needs [40] | <15% [41] | 87-116% [41] |
| Estradiol (E2) | Wide dynamic range [41] | Low-level suitable [41] | <15% [41] | 87-116% [41] |
| Aldosterone (ALDO) | Wide dynamic range [41] | Meets clinical needs [40] | <15% [41] | 87-116% [41] |
| 17-Hydroxyprogesterone (17P) | Wide dynamic range [41] | Meets clinical needs [40] | <15% [41] | 87-116% [41] |
| 11-Deoxycortisol | Wide dynamic range [40] | Meets clinical needs [40] | Data validated [40] | Data validated [40] |
| Dexamethasone | Wide dynamic range [40] | Meets clinical needs [40] | Data validated [40] | Data validated [40] |

The method validation confirmed good interday precision, with coefficients of variation generally below 15% for all analytes [41]. Trueness was demonstrated through recovery experiments using ISO 17034-certified reference materials and proficiency testing (e.g., UK NEQAS) [41]. The combination of high-throughput SPE and a fast LC-MS/MS run enables the processing of a full 96-well plate (~80 patient samples plus standards and controls) in approximately 90 minutes of preparation time [44].
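
For readers who want to reproduce these two metrics, the sketch below computes an interday coefficient of variation and a percentage recovery from replicate quality-control results. The QC values and nominal concentration are hypothetical, and the 15% and 87-116% figures are used only as illustrative limits taken from the performance reported above, not as formal acceptance criteria from a guideline.

```python
from statistics import mean, stdev


def percent_cv(values):
    """Coefficient of variation (sample standard deviation / mean), in percent."""
    return 100.0 * stdev(values) / mean(values)


def percent_recovery(measured_mean, nominal):
    """Measured concentration as a percentage of the nominal (certified) value."""
    return 100.0 * measured_mean / nominal


# Hypothetical QC results for one analyte measured on three different days (nmol/L).
qc_results = [9.5, 10.0, 10.5]
NOMINAL = 10.0  # hypothetical certified concentration of the reference material

cv = percent_cv(qc_results)
recovery = percent_recovery(mean(qc_results), NOMINAL)

# Illustrative limits based on the performance figures quoted in the text.
within_limits = cv < 15.0 and 87.0 <= recovery <= 116.0

print(f"Interday CV: {cv:.1f}%, recovery: {recovery:.1f}%, within limits: {within_limits}")
```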

The Scientist's Toolkit: Essential Research Reagents and Materials

The successful implementation of this validated method relies on a set of key reagents and materials. The following table details these essential components.

Table 3: Key Research Reagent Solutions for LC-MS/MS Steroid Analysis

| Item | Function / Application | Specific Examples / Specifications |
|---|---|---|
| Stable Isotope-Labeled Internal Standards (SIL-IS) | Correct for matrix effects & preparation losses; enable surrogate calibration [43] | 13C- or 2H-labeled analogues for each steroid (e.g., cortisone-d8, E1-13C6) [43] |
| SPE μElution Plates | High-throughput sample clean-up and analyte concentration | Oasis HLB 96-well μElution Plates (2 mg sorbent) [40] [43] |
| UPLC Chromatography Column | High-resolution separation of complex steroid mixtures | ACQUITY UPLC BEH C18 (2.1 mm × 100 mm, 1.7 μm) [40] |
| Ionization Enhancer | Boosts signal intensity, especially for low-abundance steroids | Ammonium fluoride (NH4F) additive in mobile phase [41] |
| Derivatization Reagent | Improves sensitivity for estrogens and other poorly ionizing steroids | DMIS (1,2-dimethylimidazole-5-sulfonyl chloride) [43] |
| Automated Liquid Handler | Enables walk-away automation of SPE for improved reproducibility & throughput | Tecan Freedom EVO workstation [44] |

This case study validates a high-throughput LC-MS/MS method coupled with SPE for the comprehensive analysis of a multi-steroid panel. The data show that this approach surpasses traditional immunoassays in specificity, sensitivity, and accuracy. The implementation of automated SPE and efficient chromatographic separation makes this robust method suitable for both clinical diagnostics and advanced research, providing reliable and comprehensive steroid profiles that are critical for precise endocrinological decision-making.

The emergence of direct-to-consumer at-home fertility monitors represents a significant shift in reproductive health management, enabling individuals to track their fertile window with unprecedented convenience. These devices primarily rely on the quantitative measurement of key urinary hormones and hormone metabolites, namely luteinizing hormone (LH), estrone-3-glucuronide (E3G), and pregnanediol glucuronide (PdG), to predict and confirm ovulation [46] [47]. Unlike serum-based laboratory tests, these monitors utilize lateral flow assays paired with optical readers to provide quantitative hormone data outside clinical settings [46]. However, their application in novel physiological contexts such as postpartum recovery, perimenopause, and conditions like polycystic ovary syndrome (PCOS) presents unique validation challenges that extend beyond traditional laboratory method verification [6] [48]. This review systematically compares the performance of leading at-home fertility monitors against established reference methods and examines the experimental protocols required to validate their measurements across diverse physiological states, with a specific focus on parallelism recovery assays that ensure analytical validity despite variable urine matrices and metabolite concentrations.