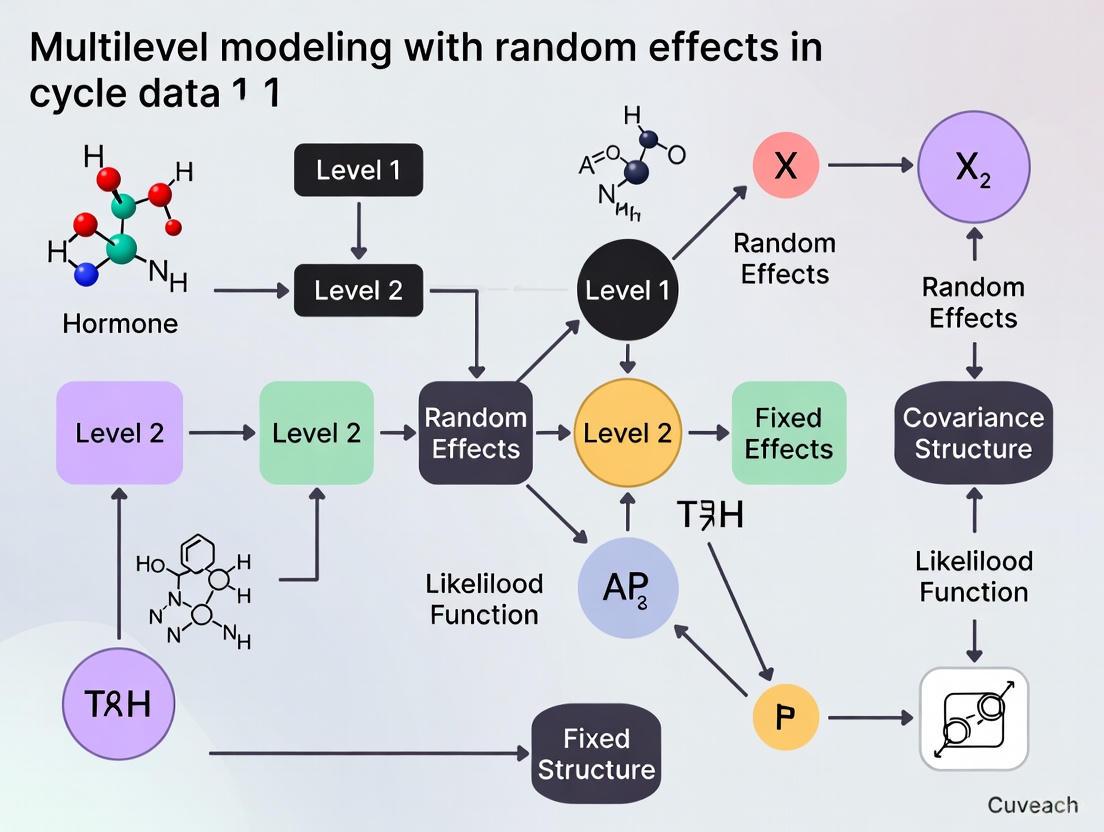

Multilevel Modeling with Random Effects for Longitudinal Data in Biomedical Research: From Foundational Concepts to Clinical Applications


Abstract

This article provides a comprehensive guide to multilevel modeling (MLM) with random effects for the analysis of longitudinal data in biomedical and drug development research. It covers foundational concepts, including the necessity of MLM for handling correlated data structures and the interpretation of random effects. The article details methodological applications, from specifying growth models to integrating time-varying covariates, using real-world examples from pharmacology and clinical trials. It further addresses common troubleshooting issues, such as model convergence problems and the selection of covariance structures, and offers comparative validation against traditional methods like repeated-measures ANOVA. Aimed at researchers, scientists, and drug development professionals, this resource bridges statistical theory with practical application to enhance the rigor and interpretability of longitudinal studies.

Understanding the Why: Core Principles of Multilevel Models for Correlated Data

FAQs: Understanding Random Effects

1. What are random effects, and why are they crucial in multilevel modeling for longitudinal research?

Random effects are components of a multilevel model that account for the variability in your outcome variable that exists at different grouping levels (e.g., different research sites, different individual subjects). They are crucial because longitudinal data, such as repeated measurements nested within individual patients, violate the standard statistical assumption that all observations are independent. Using a model that ignores this nested or "clustered" data structure can lead to incorrect standard errors and false positive results [1]. Multilevel models, which include random effects, provide a flexible framework for analyzing such data correctly [2].

2. What is the practical difference between a random intercept and a random slope?

- Random Intercept: This allows the baseline or starting value of the outcome to vary across groups. For example, in a drug trial across multiple clinics, a random intercept model acknowledges that the average health outcome at the start of the study might be different for each clinic, even before any treatment is applied [1].

- Random Slope: This allows the effect or the rate of change of a predictor variable to vary across groups. In a longitudinal study, this often means that the trajectory or growth curve over time is different for each subject. For instance, a random slope for time would indicate that patients are responding to a drug at different rates [1].
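As a sketch of how the two ideas combine, a linear growth model with both a random intercept and a random slope for time can be written as below. The notation is an assumption chosen to match the null-model notation used later in this article: occasion *i* within subject *j*, γ for fixed effects, U for subject-level deviations, and R for the residual.

```latex
\begin{aligned}
Y_{ij} &= \beta_{0j} + \beta_{1j}\,\mathrm{Time}_{ij} + R_{ij} \\
\beta_{0j} &= \gamma_{00} + U_{0j} \quad \text{(random intercept)} \\
\beta_{1j} &= \gamma_{10} + U_{1j} \quad \text{(random slope for time)}
\end{aligned}
```

Setting \( U_{1j} = 0 \) for every subject recovers the random-intercept-only model, in which all subjects share the same rate of change \( \gamma_{10} \).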

3. How do I know if I need to include a random slope in my model?

You should consider a random slope when you have a theoretical or empirical reason to believe that the relationship between a predictor (especially a Level 1 predictor like time) and the outcome is not constant across your groups (e.g., individuals or clinics). Exploratory data analysis, such as plotting individual growth curves, can often suggest whether these relationships vary. Furthermore, model selection tools like AIC (Akaike Information Criterion) or BIC (Bayesian Information Criterion) can be used to compare a model with only a random intercept to one that also includes a random slope. The model with the lower AIC or BIC is generally preferred [3].

4. What does the "variance component" of a random effect tell me?

Variance components quantify the amount of variability in your data that is attributable to different random effects. In a simple random intercept model, you get two key variance components:

- Between-group variance: The variance of the random intercepts, indicating how much the baseline levels differ across groups.

- Within-group variance: The residual variance, indicating how much the observations vary within each group.

A useful statistic derived from these is the Intraclass Correlation Coefficient (ICC). The ICC tells you the proportion of the total variance in the outcome that is explained by the grouping structure. A high ICC suggests that a multilevel model is necessary [1].

5. My model fails to converge. What are the most common troubleshooting steps?

Model convergence issues are often related to the model's complexity relative to the data. Common steps to resolve this include:

- Simplifying the model: Consider removing the random effect with the smallest variance, especially if it is highly correlated with another random effect.

- Checking your data structure: Ensure you have a sufficient number of groups (Level 2 units). Having very few groups (e.g., fewer than 5) can make it difficult to reliably estimate variance components.

- Re-scaling predictors: Grand-mean centering continuous predictors (subtracting the overall mean from each value) can make the model easier to fit and the intercept more interpretable [1].
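Grand-mean centering itself is a one-line transformation. The following is a minimal sketch in plain Python; the function name `grand_mean_center` and the age values are hypothetical and used only for illustration.

```python
from statistics import mean


def grand_mean_center(values):
    """Subtract the overall (grand) mean from every value."""
    overall_mean = mean(values)
    return [v - overall_mean for v in values]


# Hypothetical baseline ages for five patients (illustrative values only).
ages = [42.0, 55.0, 61.0, 48.0, 69.0]
centered_ages = grand_mean_center(ages)
```

After centering, a value of 0 corresponds to a patient of average age, so the model intercept describes the expected outcome for that average patient rather than for an age of zero.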

Troubleshooting Guides

Issue 1: Selecting the Appropriate Random Effects Structure

Problem: A researcher is unsure whether to model a predictor (e.g., Time) as a fixed effect only, or also as a random slope.

Solution: A data-driven model comparison approach is recommended [3].

Experimental Protocol:

- Define Candidate Models: Formulate a set of nested models.

  - Model A: `Outcome ~ Time + (1 | SubjectID)` (random intercept only)

  - Model B: `Outcome ~ Time + (1 + Time | SubjectID)` (random intercept and random slope for Time)

- Fit the Models: Use statistical software (e.g., the `lme4` package in R) to fit both models to your data.

- Compare Models: Use information criteria (AIC or BIC) to compare the models. The model with the lower value offers the better balance between fit and complexity. A BIC difference of more than about 10 is conventionally taken as strong evidence for the model with the lower BIC [3].
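The comparison step can be sketched numerically. The example below uses the standard definitions AIC = 2k − 2·logLik and BIC = k·ln(n) − 2·logLik; the log-likelihoods, the number of observations, and the parameter counts are hypothetical values, not output from a real fit. It assumes both models were fitted to the same data with the same estimation method.

```python
import math


def aic(log_lik, n_params):
    """Akaike Information Criterion: 2k - 2*logLik."""
    return 2 * n_params - 2 * log_lik


def bic(log_lik, n_params, n_obs):
    """Bayesian Information Criterion: k*ln(n) - 2*logLik."""
    return n_params * math.log(n_obs) - 2 * log_lik


# Hypothetical fit summaries (illustrative numbers only).
n_obs = 500
# Model A: fixed intercept, fixed slope, intercept variance, residual variance.
model_a = {"log_lik": -1250.0, "n_params": 4}
# Model B: Model A plus slope variance and intercept-slope covariance.
model_b = {"log_lik": -1230.0, "n_params": 6}

aic_a = aic(model_a["log_lik"], model_a["n_params"])
aic_b = aic(model_b["log_lik"], model_b["n_params"])
bic_a = bic(model_a["log_lik"], model_a["n_params"], n_obs)
bic_b = bic(model_b["log_lik"], model_b["n_params"], n_obs)

# Positive differences favor Model B (the random-slope model).
delta_aic = aic_a - aic_b
delta_bic = bic_a - bic_b
```

With these made-up numbers both criteria favor Model B, and the BIC difference exceeds 10, so the random slope would be retained.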

The following workflow outlines this model selection process:

Issue 2: Interpreting Variance Components and the ICC

Problem: After running an "empty" model (with no predictors), a researcher needs to understand the partition of variance.

Solution: Calculate and interpret the variance components and the Intraclass Correlation Coefficient (ICC) [1].

Experimental Protocol:

- Fit a Null Model: Estimate a model with only a random intercept for the grouping variable (e.g., `Outcome ~ (1 | ClinicID)`).

- Extract Variance Components: From the model output, obtain:

  - \( \sigma^2_0 \): The variance of the random intercepts (between-group variance).

  - \( \sigma^2_\epsilon \): The residual variance (within-group variance).

- Calculate the ICC: Use the formula:

  \[ ICC = \frac{\sigma^2_0}{\sigma^2_0 + \sigma^2_\epsilon} \]

  This value represents the proportion of total variance that lies between groups. An ICC of 0 would indicate no clustering, while an ICC of 0.2 (20%) is often considered substantial enough to warrant a multilevel modeling approach.

The table below summarizes the key outputs from a hypothetical null model:

Table 1: Example Output and Interpretation from a Null Multilevel Model

| Variance Component | Symbol | Example Value | Interpretation |
|---|---|---|---|
| Between-Clinic Variance | \( \sigma^2_0 \) | 4.5 | There is variability in the baseline outcome level across different clinics. |
| Within-Clinic Variance | \( \sigma^2_\epsilon \) | 15.5 | There is considerable variability among patients within the same clinic. |
| Intraclass Correlation (ICC) | \( \frac{\sigma^2_0}{\sigma^2_0 + \sigma^2_\epsilon} \) | 0.225 | 22.5% of the total variance in the outcome is at the clinic level. Multilevel modeling is appropriate. |
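The arithmetic behind Table 1 can be checked with a few lines of Python. This is a minimal sketch: the helper name `icc_from_variances` is hypothetical, and the inputs are the example values from the table rather than output from a fitted model.

```python
def icc_from_variances(between_var, within_var):
    """Proportion of total variance that lies between groups."""
    total_var = between_var + within_var
    if total_var <= 0:
        raise ValueError("Total variance must be positive.")
    return between_var / total_var


# Example values from Table 1 (hypothetical null-model output).
icc_clinic = icc_from_variances(between_var=4.5, within_var=15.5)
```

Here `icc_clinic` evaluates to 4.5 / 20.0 = 0.225, matching the 22.5% reported in the table.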

Issue 3: Handling Convergence Warnings in Complex Models

Problem: A model with multiple random effects (e.g., random intercepts and random slopes) fails to converge and returns a warning.

Solution: Follow a structured protocol to simplify the model and diagnose the issue.

Experimental Protocol:

- Check Scaling: Grand-mean center all continuous predictors. This reduces correlation between the intercept and slopes, making the model easier to fit [1].

- Simplify Random Effects: If the complex model has a random correlation parameter, try fitting a model without it (e.g., in R, `lme4` uses `(1 + Time || SubjectID)` to remove the correlation). High correlation between random effects can cause problems.

- Increase Iterations: As a temporary diagnostic, increase the number of iterations the optimizer uses.

- Reduce Complexity: If the above fails, the data may not support a highly complex random effects structure. Consider removing the random effect with the smallest variance and re-fitting the model. The ultimate goal is to find the most complex model that is justifiable and supported by the data without causing convergence issues.

The Scientist's Toolkit

Table 2: Essential Reagents & Resources for Multilevel Modeling Research

| Tool / Reagent | Function / Purpose |
|---|---|
| R Statistical Software | A flexible, open-source programming environment with extensive packages for multilevel modeling, such as `lme4` and `nlme` [2]. |
| `lme4` R Package | The primary package for fitting linear and generalized linear mixed-effects models. It allows for the specification of complex random effects structures [3]. |
| Information Criteria (AIC/BIC) | Metrics used for model selection. They balance model fit with complexity, helping to identify the model that best explains the data without overfitting [3]. |
| Grand-Mean Centering | A data preprocessing technique where a variable's mean is subtracted from each of its values. This makes the intercept more interpretable and can resolve model convergence issues [1]. |
| Exploratory Data Analysis (EDA) Plots | Visualization techniques, such as spaghetti plots of individual trajectories, used to form hypotheses about the need for random intercepts and slopes before formal modeling. |

Understanding the Intraclass Correlation Coefficient (ICC)

What is the ICC, and why is it fundamental to multilevel modeling research?

The Intraclass Correlation Coefficient (ICC) is a descriptive statistic used to measure the degree of agreement or similarity among units within the same group or cluster [4]. In the context of multilevel modeling (MLM) with random effects, it quantifies the proportion of the total variance in the outcome that is attributable to systematic differences between clusters, as opposed to variation within clusters [5] [4]. This is crucial because it determines whether MLM is necessary and informs the structure of the random effects.

Mathematically, in its simplest form for a one-way random effects model, the ICC is calculated as the ratio of the between-cluster variance to the total variance [4]:

\[ ICC = \frac{\sigma^2_\alpha}{\sigma^2_\alpha + \sigma^2_\epsilon} \]

Where:

- \( \sigma^2_\alpha \) is the variance between clusters (the random effect variance).

- \( \sigma^2_\epsilon \) is the variance within clusters (the residual or error variance).

The ICC ranges from 0 to 1. An ICC of 0 indicates no shared variance within clusters; members of a group are no more similar than members of different groups. An ICC of 1 indicates perfect agreement within clusters; all the variance is between clusters, with no variance within them [6] [4]. In longitudinal data analysis, a high ICC indicates that a large portion of the variability in the outcome is due to stable, time-invariant differences between individuals, justifying the use of individual growth models [5].

ICC Selection Framework

How do I select the correct form of the ICC for my study design?

Selecting the appropriate ICC form is critical, as different forms are based on distinct assumptions and yield different interpretations [7]. The choice is guided by three key decisions related to your experimental design, which can be visualized in the following workflow and are detailed in the table below.

Decision Workflow for Selecting an Intraclass Correlation Coefficient Form

| Selection Aspect | Available Options | Guidance for Selection |
|---|---|---|
| 1. Statistical Model [7] [8] | One-Way Random Effects: Each subject is rated by a different, random set of raters. | Use for designs where each subject is rated by a different set of randomly selected raters (e.g., different teachers in different schools). Rare in clinical studies. |
| | Two-Way Random Effects: A random sample of raters is selected, and all subjects are rated by the same set of these raters. | Use if you wish to generalize your reliability results to any raters from the larger population with similar characteristics. Common for inter-rater reliability. |
| | Two-Way Mixed Effects: A specific, fixed set of raters is used, and all subjects are rated by them. | Use if the raters in your study are the only raters of interest, and results should not be generalized to other raters. |
| 2. Unit of Measurement ("Type") [7] [8] | Single Rater/Measurement | Use if the measurement protocol in your actual application will rely on a score from a single rater. |
| | Average of k Raters/Measurements | Use if the measurement protocol will use the mean score from multiple raters as the basis for assessment. |
| 3. Definition of Relationship ("Definition") [7] [8] | Absolute Agreement | Use when systematic differences between raters (e.g., one rater consistently scoring 2 points higher) are considered relevant and should be included in the reliability estimate. |
| | Consistency | Use when only the rank order of subjects is important, and systematic differences between raters are considered irrelevant. |

Experimental Protocols & Calculation

What are the standard methodologies for calculating the ICC in a multilevel context?

The ICC can be derived from a null model (or intercept-only model) in multilevel modeling, which partitions the variance into its between-cluster and within-cluster components [5].

Protocol 1: Estimating ICC from a Multilevel Null Model

Model Specification: The null model is specified as follows:

- Level 1 (Within-cluster): \( Y_{ij} = \beta_{0j} + R_{ij} \)

- Level 2 (Between-cluster): \( \beta_{0j} = \gamma_{00} + U_{0j} \)

- Combined: \( Y_{ij} = \gamma_{00} + U_{0j} + R_{ij} \)

Here, \( Y_{ij} \) is the outcome for individual *i* in cluster *j*, \( \gamma_{00} \) is the overall grand mean, \( U_{0j} \) is the cluster-specific random effect (deviation from the grand mean), and \( R_{ij} \) is the individual-level residual [5].

Variance Component Estimation: Using statistical software (e.g., R, SPSS, SAS), fit the null model using Maximum Likelihood (ML) or Restricted Maximum Likelihood (REML) estimation. The output will provide estimates for:

- \( \tau_0^2 \): The variance of \( U_{0j} \) (between-cluster variance).

- \( \sigma^2 \): The variance of \( R_{ij} \) (within-cluster variance).

ICC Calculation: Compute the ICC using the formula: \( ICC = \frac{\tau_0^2}{\tau_0^2 + \sigma^2} \) [5] [4].
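One way to see why this ratio measures non-independence: under the null model, and under the usual assumption that the cluster effects and the residuals are mutually independent, two different observations *i* and *i'* from the same cluster *j* share only the cluster term. A short derivation, offered as a sketch under those assumptions:

```latex
\begin{aligned}
\operatorname{Cov}(Y_{ij}, Y_{i'j}) &= \operatorname{Var}(U_{0j}) = \tau_0^2, \\
\operatorname{Var}(Y_{ij}) &= \tau_0^2 + \sigma^2, \\
\operatorname{Corr}(Y_{ij}, Y_{i'j}) &= \frac{\tau_0^2}{\tau_0^2 + \sigma^2} = \mathrm{ICC}.
\end{aligned}
```

The ICC is therefore also the expected correlation between any two measurements taken from the same cluster.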

Example from Longitudinal Research: In a study of testosterone levels in female athletes measured over time, the null model output was [5]:

- Variance Between Individuals (\( \tau_0^2 \)): 252.9

- Variance Within Individuals (\( \sigma^2 \)): 186.1

- ICC Calculation: 252.9 / (252.9 + 186.1) = 0.576

This ICC of 0.576 indicates that 57.6% of the total variance in testosterone levels is attributable to stable differences between individuals, confirming the need for a multilevel modeling approach that accounts for this non-independence of measurements within the same person [5].

Protocol 2: Calculating ICC via Analysis of Variance (ANOVA)

For simpler designs, the ICC can be calculated using mean squares (MS) from a one-way ANOVA [6] [7].

- Formula (One-Way Random, Single Rater, Absolute Agreement): \( ICC(1,1) = \frac{MSR - MSW}{MSR + (k - 1)\,MSW} \) [7], where MSR is the mean square for rows (between subjects), MSW is the mean square within subjects (residual), and k is the number of raters per subject.
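The mean squares can be computed directly from a balanced subjects-by-raters table. The sketch below uses the standard one-way form, with (k − 1) multiplying MSW in the denominator. The function name `icc_one_way` and the tiny rating tables are hypothetical, and the code assumes no missing ratings.

```python
def icc_one_way(ratings):
    """ICC(1,1) from a balanced subjects x raters table (no missing values)."""
    n = len(ratings)       # number of subjects (rows)
    k = len(ratings[0])    # number of raters per subject (columns)
    grand_mean = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]

    # Between-subjects mean square (MSR), with n - 1 degrees of freedom.
    msr = k * sum((m - grand_mean) ** 2 for m in row_means) / (n - 1)

    # Within-subjects mean square (MSW), with n * (k - 1) degrees of freedom.
    ss_within = sum(
        (x - m) ** 2 for row, m in zip(ratings, row_means) for x in row
    )
    msw = ss_within / (n * (k - 1))

    return (msr - msw) / (msr + (k - 1) * msw)


# Raters agree perfectly on every subject.
perfect = icc_one_way([[1, 1], [2, 2], [3, 3]])

# Subjects have identical means, but raters disagree within each subject.
disagree = icc_one_way([[1, 2], [2, 1]])
```

The first table gives an ICC of 1. The second gives a negative estimate, because all of the observed variation lies within subjects. This is the situation in which negative sample ICCs arise.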

The following diagram illustrates the workflow for these two primary calculation methods.

Workflow for Two Primary ICC Calculation Methods

Interpretation and Reporting Standards

How should I interpret the magnitude of the ICC, and what are the best practices for reporting it?

Interpretation Guidelines

While context is critical, a common guideline for interpreting the reliability of measurements is as follows [7] [8] [9]:

| ICC Value | Interpretation |

|---|---|

| Below 0.50 | Poor reliability |

| 0.50 to 0.75 | Moderate reliability |

| 0.75 to 0.90 | Good reliability |

| Above 0.90 | Excellent reliability |

Best Practices for Reporting

To ensure reproducibility and clarity, your report should include [7] [9]:

- Software and Version: The statistical software and package used (e.g., R `psych` package, version X.X.X).

- ICC Form Specification: Explicitly state the "model," "type," and "definition" used (e.g., "a two-way random-effects model for absolute agreement using a single rater (ICC2,1)").

- Point Estimate and Confidence Interval: Report both the ICC estimate and its 95% confidence interval. The CI provides crucial information about the precision of your estimate [6] [8] [9].

- Descriptive Statistics: Include means and standard deviations for the raters or measurement occasions.

- Variance Components: When relevant, report the estimated variance components (between and within) used to calculate the ICC.

The Scientist's Toolkit

What are the essential research reagent solutions for implementing ICC analysis?

In the context of statistical analysis, "research reagents" refer to the core software tools and functions required to conduct the analysis.

| Tool / Package | Function / Use-Case | Key Features |
|---|---|---|
| R `psych` package [8] | `ICC()` function | Calculates multiple forms of ICC simultaneously. Handles missing data and unbalanced designs using `lmer`. |
| R `irr` package [6] [8] | `icc()` function | Allows detailed specification of model, type, and definition for a single ICC form. |
| Python `pingouin` package [9] | `intraclass_corr()` function | Computes all ICC forms in a Pandas DataFrame, common in Python-based data science workflows. |
| SPSS [6] [10] | Scale > Reliability Analysis | Provides a menu-driven interface for calculating ICC, often accompanied by an ANOVA table. |
| SAS [10] | `PROC MIXED` | A powerful procedure for fitting multilevel models, from which variance components for ICC can be extracted. |

Frequently Asked Questions (FAQs)

My ICC is negative. What does this mean and what should I do?

A negative ICC can occur, particularly with Fisher's original unbiased formula, when the underlying population ICC is very low (close to 0) and sampling variation produces an estimate where the between-cluster variance is effectively negative [4]. In the framework of variance components, the between-cluster variance is constrained to be non-negative. In practice, a negative ICC is generally interpreted as evidence of very low reliability, effectively equivalent to an ICC of 0 [6] [4].

What is the difference between "consistency" and "absolute agreement," and why does it matter?

This is a critical distinction [8]:

- Absolute Agreement considers both the correlation between ratings and any systematic, additive biases between raters. It is sensitive to differences in means. Use this when the exact numerical score is important.

- Consistency only considers if the raters' scores are correlated in an additive manner. It ignores systematic biases. Use this when only the rank ordering of subjects is important.

For test-retest and intra-rater reliability, the two-way mixed-effects model with absolute agreement is recommended [8].

How does the ICC relate to the design of my longitudinal study?

In longitudinal studies, the ICC from a null model indicates how much of the total variation is due to stable differences between individuals versus fluctuations within individuals over time [5] [10]. A high ICC suggests that a major source of variation is the individuals' different starting points (intercepts), guiding you to focus on level-2 (time-invariant) predictors to explain why individuals start at different levels. A low ICC suggests that most of the variation is within persons over time, guiding you to focus on level-1 (time-varying) predictors [5].

Multilevel Models as a Framework for Pharmacometrics and Systems Pharmacology

Troubleshooting Guide: Common Multilevel Model Issues

This guide addresses specific issues you might encounter when developing and estimating multilevel models (MLMs) with pharmacological data.

1. Problem: Model Fails to Converge (Non-Convergence)

- Description: The optimization algorithm cannot find a parameter set that maximizes the likelihood of your observed data. You will typically receive a warning such as "Model failed to converge" or a non-zero convergence code, depending on your software; a convergence code of 0 conventionally indicates success [11].

- Causes: Highly complex models (e.g., too many random effects), poorly scaled variables, or insufficient data, especially at higher levels (e.g., too few groups) [12] [11].

- Solutions:

- Increase iterations: Allow the optimizer more attempts to find a solution [11].

- Change the optimizer: Switch to a different optimization algorithm (e.g., from `NLOPT` to `BOBYQA` in R) [11].

- Simplify the model: Remove unnecessary random effects, especially correlations between them. Start with a random intercepts model before adding random slopes [12] [11].

- Check and scale predictors: Center or standardize continuous predictors to improve model stability [11].

2. Problem: Singular Fit or Boundary Fit

- Description: The model estimates that a variance component is zero or that random effects are perfectly correlated (|r| = 1). In R, you may see "boundary (singular) fit: see help('isSingular')" [11].

- Causes: The model is overfitted, meaning it is trying to estimate random effects for which there is little or no variance in your data [11].

- Solutions:

- Examine variance-covariance estimates: Look for variances near zero or correlations at -1 or 1 [11].

- Simplify the random effects structure: The most common solution is to remove the random effect that is causing the singularity (e.g., remove a random slope) [11].

- Use a simpler model: A model with only random intercepts is often sufficient and more stable than one with both random intercepts and slopes [12].

3. Problem: Insufficient Statistical Power

- Description: The study lacks the ability to detect significant effects, particularly higher-level (Level 2) effects or cross-level interactions [12].

- Causes: Primarily, an insufficient number of Level 2 units (e.g., clinics, studies). While more Level 1 observations per group help, power for Level 2 effects depends more on the total number of groups [12].

- Solutions:

- Increase the number of groups: Where possible, design studies to maximize the number of Level 2 units. A minimum of 20 groups is often recommended for detecting cross-level interactions [12].

- Use prior information: In a Bayesian framework, using informative priors can help with power in data-limited scenarios [12].

Frequently Asked Questions (FAQs)

Q1: When should I use a multilevel model in pharmacometric or systems pharmacology research?

Use an MLM when your data has a hierarchical or nested structure. Common scenarios in this field include [13] [12]:

- Repeated measurements clustered within individual patients or animals.

- Patients clustered within different clinical trial sites or research centers.

- In vitro experiments where multiple technical replicates are nested within a single experimental run.

MLMs correctly handle the non-independence of observations within these clusters, preventing biased standard errors and inaccurate conclusions [12].

Q2: What is the difference between Fixed Effects and Random Effects?

- Fixed Effects: These are parameters (like intercepts and slopes) that are assumed to be constant across all groups or levels in your data. They estimate the average effect of a predictor across the entire population [12] [14].

- Random Effects: These are parameters that are allowed to vary across groups. They account for the variability between groups (e.g., how the baseline response or the effect of a drug varies from one clinic to another) [12] [14].

Q3: My research involves both short-term drug response and long-term disease progression. Can multilevel models handle multiple timescales?

Yes. The multilevel framework is highly flexible. You can model individual short-term trajectories (Level 1) that are nested within a long-term disease progression model (Level 2). This aligns with the need in Quantitative Systems Pharmacology (QSP) to integrate models across different biological scales and timescales, from cellular mechanisms to whole-body physiology [13].

Q4: How do I choose between FIML and REML estimation?

- Use REML (Restricted Maximum Likelihood): When your primary interest is in obtaining accurate estimates of the variance components (random effects). REML applies a degrees-of-freedom correction, leading to less biased variance estimates. This is the default for most final models [11].

- Use FIML (Full Information Maximum Likelihood): When you need to compare the fit of two models that have different fixed effects. FIML should be used for model comparison, as models with different fixed effects are not comparable under REML [11].
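The degrees-of-freedom correction is easiest to see in the simplest possible case: a model with only an overall mean and no grouping. There, the ML variance estimate divides the sum of squares by n, whereas the REML estimate divides by n − 1, because one degree of freedom is used to estimate the mean. The sketch below uses Python's standard library and made-up data. It illustrates the principle only and is not a mixed-model fit.

```python
from statistics import pvariance, variance

# Hypothetical outcome values (illustrative only).
y = [4.0, 7.0, 6.0, 3.0, 5.0]

# ML-style estimate: sum of squared deviations divided by n.
ml_var = pvariance(y)

# REML-style estimate: sum of squared deviations divided by n - 1.
reml_var = variance(y)
```

With five observations the sum of squared deviations is 10, so the ML estimate is 2.0 and the REML estimate is 2.5. The downward bias of ML grows with the number of fixed effects relative to the sample size.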

Q5: What is a cross-level interaction and why is it important?

A cross-level interaction occurs when a variable at a higher level (e.g., clinic size, a genetic factor common to a strain of animals) moderates the relationship between a variable at a lower level (e.g., drug dose) and the outcome. For example, the efficacy of a drug dose (Level 1) might depend on the patient's genotype (Level 2). Modeling these interactions is key to understanding context-dependent treatment effects [12] [14].
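The dose-by-genotype example can be written out to show where the interaction term comes from. The equations below are a sketch in assumed notation: occasion *i* within patient *j*, with Dose varying within patients and Genotype coded as a patient-level variable.

```latex
\begin{aligned}
\text{Level 1:}\quad Y_{ij} &= \beta_{0j} + \beta_{1j}\,\mathrm{Dose}_{ij} + R_{ij} \\
\text{Level 2:}\quad \beta_{0j} &= \gamma_{00} + \gamma_{01}\,\mathrm{Genotype}_{j} + U_{0j} \\
\beta_{1j} &= \gamma_{10} + \gamma_{11}\,\mathrm{Genotype}_{j} + U_{1j} \\
\text{Combined:}\quad Y_{ij} &= \gamma_{00} + \gamma_{01}\,\mathrm{Genotype}_{j} + \gamma_{10}\,\mathrm{Dose}_{ij}
  + \gamma_{11}\,\mathrm{Genotype}_{j}\,\mathrm{Dose}_{ij} \\
  &\quad + U_{0j} + U_{1j}\,\mathrm{Dose}_{ij} + R_{ij}
\end{aligned}
```

The coefficient \( \gamma_{11} \) is the cross-level interaction. It appears because the Level 1 dose slope \( \beta_{1j} \) is itself modeled as a function of the Level 2 predictor.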

Protocol 1: Fitting a Multilevel Model with Random Intercepts and Slopes

This protocol outlines the steps for implementing a common MLM using statistical software like R.

Protocol 2: Integrating QSP and Pharmacometrics via Multilevel Modeling

This protocol describes a sequential integration approach for combining different modeling philosophies [15].

Table 1: Summary of Key Multilevel Model Types and Applications

| Model Type | Key Characteristics | Ideal Use Case in Pharmacometrics |

|---|---|---|

| Random Intercepts | Intercepts vary across groups; slopes are fixed. | Accounting for baseline differences between clinical sites or patient subgroups when the effect of a drug (slope) is assumed constant [12]. |

| Random Slopes | Slopes vary across groups; intercepts are fixed. | Modeling situations where the response to a drug (dose-effect slope) is expected to differ across individuals or centers [12]. |

| Random Intercepts & Slopes | Both intercepts and slopes vary across groups. | The most realistic model for personalized medicine; accounts for both baseline variability and individual differences in treatment response [12]. |

Table 2: Essential "Research Reagent Solutions" for Multilevel Modeling

| Item / Concept | Function & Explanation |

|---|---|

| Optimizer Algorithm | The computational engine that finds parameter estimates maximizing the likelihood. Different algorithms (e.g., NLOPT, BOBYQA) can help resolve convergence issues [11]. |

| Likelihood-Ratio Test (LRT) | A statistical test to compare the fit of two nested models. Used to determine if adding parameters (e.g., a random effect) significantly improves the model [12]. |

| Intraclass Correlation Coefficient (ICC) | Measures the proportion of total variance in the data that is accounted for by the grouping structure. Helps decide if a multilevel model is necessary (ICC > 0.05 suggests it is) [12]. |

| Variance-Covariance Matrix (Tau) | Summarizes the estimated variances of the random effects and their correlations. Diagnosing issues here (e.g., correlations of ±1) is key to troubleshooting singularity [11]. |

| Shrinkage Estimate | The process where multilevel models pull estimates for groups with little data towards the overall mean. This provides more robust, generalizable estimates than analyzing groups separately [12]. |
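Shrinkage can be illustrated for the random-intercept case. In the sketch below, each group's estimate is a weighted average of its own mean and the grand mean, with weight τ² / (τ² + σ² / n) for a group with n observations. The function name, the clinic means, and the variance components are hypothetical, and the variance components are treated as known rather than estimated.

```python
def shrunken_mean(group_mean, n_obs, grand_mean, between_var, within_var):
    """Shrinkage estimate of a group mean under a random-intercept model."""
    # Weight approaches 1 as the group contributes more observations.
    weight = between_var / (between_var + within_var / n_obs)
    return weight * group_mean + (1 - weight) * grand_mean


# Hypothetical values: two clinics with the same observed mean (60.0)
# but very different sample sizes; the grand mean is 50.0.
small_clinic = shrunken_mean(60.0, n_obs=2, grand_mean=50.0,
                             between_var=4.5, within_var=15.5)
large_clinic = shrunken_mean(60.0, n_obs=50, grand_mean=50.0,
                             between_var=4.5, within_var=15.5)
```

The clinic with only two observations is pulled much closer to the grand mean than the clinic with fifty, even though both have the same raw mean.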

FAQs on Data Structure Fundamentals

What is the core difference between long and wide formats?

The core difference lies in how repeated measurements are structured. In the wide format, each subject or entity occupies a single row, and their repeated responses over time are spread across multiple columns—one column for each time point. In the long format, each subject has multiple rows—one for each time point—leading to repeated values in the identifier column [16] [17].

| Feature | Wide Format | Long Format |
|---|---|---|
| Rows per Subject | One single row [18]. | Multiple rows (one per time point) [16]. |
| Time Representation | Multiple columns (e.g., `time1`, `time2`) [17]. | A single `Time` column [17]. |
| Data Redundancy | Low; efficient for human reading [17]. | High; identifiers repeat [16]. |
| Primary Use Case | Simple descriptive analysis and data lookup [16]. | Multilevel modeling, visualization, and flexible analysis [16] [19]. |

Which format is required for multilevel (mixed-effects) models?

For conducting multilevel or mixed-effects longitudinal models, you must use the long data format. These models are designed to handle within-subject dependencies, and the algorithm requires the data to be structured with multiple rows per subject to estimate the within-person and between-person effects accurately [19] [20]. Statistical software for mixed models (e.g., lme4 in R, MIXED in SPSS) will expect data in this long structure.

Troubleshooting Guide: Common Data Structure Issues

My statistical software returns an error about "correlated observations." What's wrong?

Problem: This error often occurs when data destined for a multilevel model is in wide format. The model cannot identify the repeated measures for each subject, failing to account for the non-independence of observations within the same individual [20].

Solution: Reshape your data from wide to long format. This creates the explicit structure the model needs to estimate random effects, such as the random intercepts that capture each subject's baseline deviation from the population average [20].
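The reshaping step is conceptually simple: every measurement column becomes its own row, tagged with the subject identifier and a time index. The following is a minimal plain-Python sketch. The function `wide_to_long` and the column names are hypothetical, and in practice a dedicated reshaping function in your statistical software would normally be used instead.

```python
def wide_to_long(wide_rows, id_col, time_cols, value_name="score"):
    """Turn one-row-per-subject records into one-row-per-measurement records."""
    long_rows = []
    for row in wide_rows:
        for time_index, col in enumerate(time_cols):
            long_rows.append({
                id_col: row[id_col],    # identifier repeats on every row
                "time": time_index,     # 0 = first measurement occasion
                value_name: row[col],   # the measurement itself
            })
    return long_rows


# Hypothetical wide-format data: two subjects, three time points each.
wide = [
    {"subject_id": 1, "time1": 10.0, "time2": 12.5, "time3": 15.0},
    {"subject_id": 2, "time1": 8.0, "time2": 8.5, "time3": 9.0},
]
long_data = wide_to_long(wide, "subject_id", ["time1", "time2", "time3"])
```

Two subjects with three time points each produce six long-format rows, each with the same three fields: identifier, time, and score.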

My plot fails to show individual trajectories over time. How do I fix this?

Problem: Visualization packages, particularly ggplot2 in R, require data in a long format to map a single variable (e.g., score) to the y-axis and a time variable to the x-axis. In a wide format, each time point is a separate column, making it impossible to connect measurements into a single line per subject [16] [19].

Solution: Convert your wide-format data to long format. This groups all measurement values into a single column, allowing you to use the group aesthetic in your plotting code to draw a separate line for each subject.

When should I consider using a wide format?

Despite the long format's utility for complex modeling, the wide format has specific advantages:

- Descriptive Statistics and Data Exploration: Calculating summary statistics (e.g., mean, standard deviation) for each time point is more straightforward when each time point is its own column [16].

- Data Lookup and Review: The wide format is often more human-readable and intuitive for quickly checking all data points for a specific subject, as all their information is on one row [17].

- Certain Statistical Procedures: Some analyses, like repeated-measures ANOVA in some software packages, might initially require data in a wide format.

The Researcher's Toolkit: Essential Software & Functions

The following tools are essential for managing and analyzing longitudinal data structures.

| Tool/Function | Primary Use | Key Function for Longitudinal Data |

|---|---|---|

| R with tidyr | Data Wrangling | pivot_longer(): Converts wide data to long format. pivot_wider(): Converts long data to wide format [19]. |

| ggplot2 | Data Visualization | Creates spaghetti plots and growth curves. Requires long format [16] [19]. |

| lme4 / nlme | Multilevel Modeling | Fits linear and nonlinear mixed-effects models (e.g., lmer()). Requires long format [20]. |

| SPSS | Statistical Analysis | "VARSTOCASES" procedure in syntax to reshape wide data to long. Mixed Models procedure analyzes long-format data [21]. |

From Theory to Practice: Specifying and Applying Random Effects Models

Frequently Asked Questions

1. What is the core difference between treating time as a fixed effect versus a random effect in a growth model?

The core difference lies in what the model estimates. When time is a categorical fixed effect, the model estimates a separate mean for each time point (e.g., baseline, 3 weeks, 6 weeks). This is appropriate when the time points themselves are of specific interest, or when the study includes every time level of interest (e.g., only five specific measurement occasions) [22] [23]. When time is given a random effect (specifically, a random slope), the model still estimates an average effect of time but also lets that effect vary across individuals, who are treated as a sample from a larger population. This allows you to model and understand individual differences in growth trajectories [24].

2. I have only five specific measurement time points (e.g., Baseline, 3 weeks, 6 weeks, 3 months, 6 months). Should I model time as fixed or random?

In this common scenario, you should typically model time as a fixed effect [22]. Since your five time points represent all the levels of interest for your study and are not a random sample from a universe of possible time points, they fit the definition of a fixed factor. You would still model the participant-specific intercepts as random effects to account for the correlation between repeated measures on the same individual. A model with a random intercept for subjects and fixed effects for your five time points is often an appropriate starting point.

3. What are the consequences of incorrectly specifying time as a random effect?

Modeling time as a random effect when the number of time points is small (e.g., five) can lead to estimation problems. The model may have difficulty reliably estimating the variance of the time slopes across individuals, potentially leading to convergence issues or biased estimates [24]. Furthermore, treating time as random when its levels are not exchangeable (i.e., your specific time points have unique, meaningful interpretations) can make the results of your hypothesis tests difficult to interpret correctly.

4. How does the choice between fixed and random time effects influence the research questions I can answer?

This choice directly shapes your analysis focus. A fixed effect model for time answers: "What are the specific average outcome values at each of my measured time points, and how do they differ?" It is ideal for testing the overall effect of an intervention at these specific times [25]. A random slope for time answers: "How much do individuals vary in their rate of change over time, and can other variables predict this variation?" This approach is suited for studying individual differences in growth [24].

Experimental Protocol for Model Specification

This protocol provides a step-by-step methodology for building a multilevel growth model, focusing on the specification of fixed and random effects for time.

1. Research Question Formulation:

- Fixed Time Focus: Define your hypothesis in terms of mean differences at specific time points (e.g., "The intervention group will show a significantly higher mean score at 6 months compared to the control group.").

- Random Time Focus: Define your hypothesis in terms of variability in change (e.g., "There is significant variability in individual slopes over time, and participant age predicts this variability.").

2. Data Structure Preparation:

- Ensure your dataset is in long format, where each row represents a single observation for a single participant at a specific time.

- Variables must include a participant ID, a continuous time metric (e.g., 0, 1, 2, 3, 4), the categorical time factor (e.g., "Baseline," "3weeks"), the outcome variable, and any covariates (e.g., intervention group).

3. Exploratory Data Analysis (EDA):

- Plot individual growth trajectories for a sample of participants to visually assess the general form of change (linear, quadratic) and the degree of variation in intercepts and slopes.

- Calculate and plot the mean of the outcome variable at each time point by group. This initial visualization helps justify the use of fixed time effects.

4. Model Building and Comparison: Follow a logical progression from simpler to more complex models. The table below outlines key models to estimate and compare.

Table: Model Specification and Comparison Protocol

| Model Name | Specification | Research Question | Key Output |

|---|---|---|---|

| 1. Null Model | Outcome ~ 1 + (1 \| Participant_ID) | What is the overall mean of the outcome, and how much of its variance lies between participants? | Intraclass Correlation Coefficient (ICC) |

| 2. Random Intercept, Fixed Time | Outcome ~ Time_Factor + (1 \| Participant_ID) | Controlling for individual starting points, do the means of the outcome differ across specific time points? | F-test for Time_Factor; ICC |

| 3. Random Intercept, Random Slope | Outcome ~ Time_Continuous + (1 + Time_Continuous \| Participant_ID) | On average, does the outcome change over time, and do participants vary in their individual rates of change? | Variance of random slopes; p-value for fixed slope of Time_Continuous |
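For reference, the three specifications in the table can also be written as equations. The notation is an assumption introduced here rather than taken from the cited sources: \(y_{ti}\) is the outcome for participant \(i\) at occasion \(t\), \(D_{kti}\) is an indicator for the \(k\)-th level of the categorical time factor, and \(\text{time}_{ti}\) is the continuous time metric.

```latex
\begin{aligned}
\text{Model 1:}\quad & y_{ti} = \beta_0 + u_{0i} + e_{ti} \\
\text{Model 2:}\quad & y_{ti} = \beta_0 + \sum_{k=2}^{K} \beta_k D_{kti} + u_{0i} + e_{ti} \\
\text{Model 3:}\quad & y_{ti} = \beta_0 + \beta_1\,\text{time}_{ti} + u_{0i} + u_{1i}\,\text{time}_{ti} + e_{ti} \\[4pt]
& u_{0i} \sim N(0, \tau_{00}), \qquad e_{ti} \sim N(0, \sigma^2), \qquad
\text{ICC} = \frac{\tau_{00}}{\tau_{00} + \sigma^2}\ \text{(from Model 1)}
\end{aligned}
```

In Model 3 the pair \((u_{0i}, u_{1i})\) is usually assumed bivariate normal with variances \(\tau_{00}\), \(\tau_{11}\) and covariance \(\tau_{01}\), so moving from a random intercept to a random slope adds two parameters.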

5. Model Estimation and Diagnostics:

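- Estimate models using maximum likelihood (ML) rather than restricted maximum likelihood (REML) when comparing models that differ in their fixed effects; REML likelihoods are comparable only between models with identical fixed effects.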

- Check for convergence issues.

- Examine residuals for normality and homoscedasticity.

6. Statistical Inference:

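- Use likelihood ratio tests (LRTs) to compare nested models. For example, to test whether adding random slopes significantly improves model fit, compare Model 3 with a random-intercept-only model that has the same fixed effects (Outcome ~ Time_Continuous + (1|Participant_ID)). Models 2 and 3 code time differently (categorical versus continuous) and neither is nested in the other, so compare them with information criteria such as AIC or BIC rather than an LRT.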

- Interpret p-values for fixed effects and confidence intervals for variance components.
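The likelihood ratio test in step 6 reduces to simple arithmetic once the two log-likelihoods are available. The sketch below assumes hypothetical log-likelihood values (they are not results from any cited study) and uses closed-form chi-square tail probabilities, which exist only for 1 and 2 degrees of freedom. Because a variance cannot be negative, the naive chi-square reference is conservative when testing a random slope; a 50:50 mixture of chi-square(1) and chi-square(2) is a commonly used adjustment for this particular comparison.

```python
import math


def chi2_sf(x, df):
    """Upper-tail probability of a chi-square variable (closed forms, df 1 or 2 only)."""
    if x <= 0:
        return 1.0
    if df == 1:
        return math.erfc(math.sqrt(x / 2.0))
    if df == 2:
        return math.exp(-x / 2.0)
    raise ValueError("this sketch only supports df = 1 or df = 2")


# Hypothetical log-likelihoods from two fits with identical fixed effects.
loglik_random_intercept = -1250.4  # Outcome ~ Time_Continuous + (1 | Participant_ID)
loglik_random_slope = -1243.1      # adds a slope variance and an intercept-slope covariance

lrt_statistic = 2.0 * (loglik_random_slope - loglik_random_intercept)

# Naive reference distribution: chi-square with 2 df (two added parameters).
p_naive = chi2_sf(lrt_statistic, df=2)
# Boundary-adjusted reference: equal mixture of chi-square(1) and chi-square(2).
p_mixture = 0.5 * chi2_sf(lrt_statistic, df=1) + 0.5 * chi2_sf(lrt_statistic, df=2)

print(f"LRT = {lrt_statistic:.2f}, naive p = {p_naive:.5f}, mixture p = {p_mixture:.5f}")
```

The mixture p-value is never larger than the naive one, so the adjustment matters most for borderline results.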

Decision Workflow for Specifying Time Effects

The following diagram illustrates the logical process for deciding how to handle time in your growth model.

Table: Key Reagents and Tools for Multilevel Growth Modeling

| Item | Function / Purpose |

|---|---|

| Statistical Software (R/Python) | Provides the computational environment and specialized packages (e.g., lme4, nlme in R; statsmodels in Python) for estimating multilevel models. |

| Multilevel Model Formulation | The mathematical blueprint of your model, defining the levels of nesting, fixed effects, and random effects. It is the most critical "reagent" for a valid analysis [26]. |

| Intraclass Correlation (ICC) | A diagnostic metric calculated from the null model. It quantifies the proportion of total variance in the outcome that is due to differences between clusters (or individuals), determining if multilevel modeling is necessary [26]. |

| Likelihood Ratio Test (LRT) | A statistical "tool" used to compare the goodness-of-fit between two nested models, helping to determine if adding a parameter (like a random slope) significantly improves the model. |

| Visualization Package (e.g., ggplot2) | Software library used to create spaghetti plots and other diagnostic graphics essential for exploratory data analysis and presenting results. |

Modeling Individual Drug Response Trajectories in Clinical Trials

Frequently Asked Questions (FAQs)

Q1: What are individual drug response trajectories and why are they important? Individual drug response trajectories refer to the time-based paths of patient outcomes (e.g., symptom scores) during a clinical trial [27]. Modeling these trajectories is crucial because patients often show significant heterogeneity in their treatment response; for example, some may respond well to a drug while others may not respond or even do worse than those on a placebo [28]. Identifying these distinct patterns helps in understanding the true effect of a treatment, improving trial efficiency, and enabling personalized medicine.

Q2: How do multilevel models improve the analysis of clinical trial data? Clinical trial data often has a hierarchical or "clustered" structure—for instance, repeated measurements are nested within patients, and patients may be nested within different clinical sites [26] [29]. Multilevel models (also known as hierarchical linear models or mixed models) account for this structure by partitioning variance into different levels (e.g., within-patient and between-patient) [26]. Using traditional methods like ordinary least squares (OLS) regression on such data violates the assumption of independent observations and can lead to erroneous conclusions, such as incorrect p-values and standard errors [26] [30].

Q3: What is the key difference between a fixed effect and a random effect? In the context of multilevel modeling:

- A fixed effect applies to a factor where the levels are all the specific, reproducible types of interest to the researcher (e.g., treatment group: drug vs. placebo, or sex: male vs. female) [26].

- A random effect applies to a factor where the levels represent a random sample from a larger population (e.g., different clinics in a trial or different patients), and the goal is to generalize the findings to the population from which these levels were drawn [26] [29].

Q4: What is the Intraclass Correlation Coefficient (ICC) and why does it matter? The Intraclass Correlation Coefficient (ICC) measures the extent to which individuals within the same group (e.g., patients within the same clinic) are more similar to each other than to individuals in different groups [26]. It quantifies the proportion of the total variance in the outcome that is due to the grouping structure. An ICC value above 0 indicates that data are clustered, necessitating the use of multilevel models. Ignoring a high ICC during study planning can lead to a severely underpowered trial, as it affects the required sample size [26].
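As a rough illustration of what the ICC measures, the sketch below computes it with the classical one-way ANOVA estimator for balanced clusters. This is a stand-in for the variance components a fitted null model would report, not the mixed-model estimate itself, and the clinic data are invented for the example.

```python
from statistics import mean


def icc_oneway(groups):
    """ANOVA estimator of the ICC; assumes every cluster has the same size."""
    sizes = {len(values) for values in groups.values()}
    if len(sizes) != 1:
        raise ValueError("this sketch assumes equal cluster sizes")
    k = sizes.pop()   # observations per cluster
    g = len(groups)   # number of clusters
    grand_mean = mean(v for values in groups.values() for v in values)
    ms_between = k * sum((mean(vals) - grand_mean) ** 2 for vals in groups.values()) / (g - 1)
    ms_within = sum(
        (v - mean(vals)) ** 2 for vals in groups.values() for v in vals
    ) / (g * (k - 1))
    return (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)


# Hypothetical outcome scores for three patients in each of three clinics.
clinic_scores = {
    "clinic_A": [10.0, 12.0, 11.0],
    "clinic_B": [20.0, 19.0, 21.0],
    "clinic_C": [15.0, 14.0, 16.0],
}
icc = icc_oneway(clinic_scores)
print(f"ICC = {icc:.3f}")
```

Here almost all of the variance lies between clinics, so the estimate is close to 1; real trial data usually give far smaller values.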

Q5: What is Growth Mixture Modeling (GMM) and when is it used? Growth Mixture Modeling (GMM) is a trajectory-based method that identifies distinct classes (or clusters) of patients following similar response trajectories over time [28]. Unlike standard multilevel models that assume a single mean trajectory for all patients, GMM can uncover hidden subpopulations, such as "treatment responders" and "treatment non-responders" within the group receiving an active drug [28]. This provides deeper insights into heterogeneous treatment effects.

Troubleshooting Guides

Problem 1: Lack of Model Convergence or Model Fitting Errors

- Problem: Your statistical software fails to fit a multilevel model, returning errors about convergence.

- Solution:

- Check your data structure: Ensure you have a sufficient number of higher-level units (e.g., clinics). A common rule of thumb is to have at least 20-30 groups for reliable estimation [29].

- Simplify the model: Start with a simpler model (e.g., a random intercept model) before attempting more complex models with random slopes. Overly complex models with many random effects can be too much for the data to support.

- Check software documentation: In R, packages such as lmerTest can provide more robust p-value calculations for fixed effects. If you are using lme4, note that the pvals.fnc function is no longer supported [29].

Problem 2: No Apparent Trajectory Classes are Found

- Problem: When using trajectory-based methods like GMM, the analysis suggests only a single class of responders, failing to identify expected sub-groups.

- Solution:

- Review model fit indices: Do not rely on a single fit index. Use a combination of criteria like the Schwarz Bayesian Information Criterion (BIC), the Lo-Mendell-Rubin (LMR) test, and clinical interpretability to select the number of classes [28].

- Ensure clinical relevance: A class should be both statistically supported and clinically meaningful. The literature suggests that a class should contain at least 5% of the sample to be considered stable and meaningful [28].

- Consider pre-processing: Ensure your longitudinal data is properly pre-processed. This may involve using interpolation to handle missing data points and create uniform time intervals, but be cautious not to introduce bias when time gaps are too large [27].
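Part of the class-selection logic above can be made explicit. The sketch below computes BIC from a log-likelihood and applies the 5% minimum class size as a filter. All fit results are hypothetical, and the LMR test and the judgment of clinical interpretability are deliberately left out because they cannot be reduced to a one-line rule.

```python
import math


def bic(log_likelihood, n_parameters, n_subjects):
    """Bayesian Information Criterion; lower values are preferred."""
    return -2.0 * log_likelihood + n_parameters * math.log(n_subjects)


n_subjects = 300  # hypothetical sample size

# Hypothetical results from fitting 1-, 2-, and 3-class models.
candidates = [
    {"classes": 1, "loglik": -2105.0, "n_params": 5, "smallest_class_share": 1.00},
    {"classes": 2, "loglik": -2050.0, "n_params": 9, "smallest_class_share": 0.31},
    {"classes": 3, "loglik": -2040.0, "n_params": 13, "smallest_class_share": 0.03},
]

for candidate in candidates:
    candidate["bic"] = bic(candidate["loglik"], candidate["n_params"], n_subjects)

# Keep only solutions whose smallest class holds at least 5% of the sample.
eligible = [c for c in candidates if c["smallest_class_share"] >= 0.05]
best = min(eligible, key=lambda c: c["bic"])

for candidate in candidates:
    print(candidate["classes"], round(candidate["bic"], 1), candidate["smallest_class_share"])
print("Selected number of classes:", best["classes"])
```

In this invented example the 3-class solution is excluded by the class-size rule, and the 2-class solution has the lowest BIC among the remaining models.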

Problem 3: High Placebo Response is Obscuring Drug Effect

- Problem: A high response rate in the placebo arm is masking the signal from the active treatment, a common issue in psychiatric and other clinical trials [28].

- Solution:

- Use trajectory-based analysis: Re-analyze the data using Growth Mixture Modeling. Research has shown that while placebo-treated patients may be characterized by a single response trajectory, active drug arms may contain distinct trajectories of responders and non-responders. Comparing these subgroups to the placebo trajectory can reveal a significant drug effect that was hidden in a traditional analysis [28].

- Incorporate covariates: Use baseline characteristics (e.g., disease severity, demographics) in the model to help explain some of the variance and improve the precision of treatment effect estimates.

Problem 4: Standard Regression Applied to Clustered Data

- Problem: You have analyzed data from patients clustered within different clinics using a standard linear regression and found a significant effect, but a colleague suggests the analysis may be invalid.

- Solution:

- Test for clustering: First, calculate the Intraclass Correlation Coefficient (ICC). If the ICC is significantly greater than zero, a multilevel model is required [26].

- Switch to a multilevel model: Fit a multilevel model that includes a random intercept for clinic. This accounts for the shared variance within clinics. This step is crucial because published comparisons show that conclusions can differ when data are analyzed with multilevel methods rather than traditional linear regression [26] [30].

- Re-evaluate sample size: When planning a study with clustered data, use power calculation methods designed for multilevel designs, as the required sample size increases with both the ICC and the number of patients per cluster [26].
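The dependence of sample size on the ICC and on cluster size can be illustrated with the standard design effect, 1 + (m - 1) × ICC, for clusters of equal size m. This is a simplified sketch, not a replacement for a full multilevel power analysis, and the input numbers are hypothetical.

```python
import math


def design_effect(icc, cluster_size):
    """Variance inflation from clustering, assuming equal cluster sizes."""
    return 1.0 + (cluster_size - 1) * icc


def inflated_sample_size(n_independent, icc, cluster_size):
    """Sample size needed under clustering, rounded up to a whole participant."""
    # The small tolerance guards against floating-point noise pushing ceil() up by one.
    return math.ceil(n_independent * design_effect(icc, cluster_size) - 1e-9)


# Hypothetical planning inputs: 200 patients would suffice if observations were independent.
for icc in (0.01, 0.05, 0.10):
    for cluster_size in (10, 20):
        n_required = inflated_sample_size(200, icc, cluster_size)
        print(f"ICC={icc:.2f}, patients per clinic={cluster_size}: n={n_required}")
```

Even a modest ICC of 0.05 nearly doubles the required sample when each clinic contributes 20 patients.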

Quantitative Data in Clinical Trajectory Analysis

The table below summarizes key quantitative concepts and metrics from the literature that are essential for designing and interpreting studies on drug response trajectories.

Table 1: Key Quantitative Concepts for Modeling Response Trajectories

| Concept | Description | Impact & Consideration |

|---|---|---|

| Intraclass Correlation (ICC) | Measures proportion of total variance due to clustering [26]. | An ICC > 0 necessitates multilevel modeling. Sample size requirements increase dramatically with ICC and cluster size [26]. |

| Z'-factor | A metric for assessing the quality and robustness of an assay, considering both the assay window and the data variability [31]. | A Z'-factor > 0.5 is considered suitable for screening. A large assay window with high noise may be less robust than a small window with low noise [31]. |

| Schwarz Bayesian Information Criterion (BIC) | A statistical criterion for model selection, where a lower value indicates a better model fit [28]. | Used alongside the LMR test to determine the optimal number of trajectories in mixture models [28]. |

| Lo-Mendell-Rubin (LMR) Test | A likelihood ratio test that determines if a model with k classes fits significantly better than a model with k-1 classes [28]. | A significant p-value (< 0.05) suggests that the model with more classes provides a superior fit [28]. |

| Entropy | A measure of how well a model classifies individuals into trajectories, ranging from 0 to 1 [28]. | Values closer to 1 indicate clearer separation and more accurate classification of patients into their most likely trajectory [28]. |
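To make the entropy row concrete, the sketch below computes one commonly used normalized form of classification entropy from posterior class probabilities. The formula choice and the example probabilities are assumptions made for illustration; specific software may report a slightly different variant.

```python
import math


def relative_entropy(posteriors):
    """Normalized classification entropy: 1 = perfect separation, 0 = no separation.

    `posteriors` holds one row per patient with that patient's class probabilities.
    """
    n_patients = len(posteriors)
    n_classes = len(posteriors[0])
    uncertainty = 0.0
    for row in posteriors:
        for p in row:
            if p > 0.0:  # the limit of p * log(p) as p -> 0 is 0
                uncertainty += -p * math.log(p)
    return 1.0 - uncertainty / (n_patients * math.log(n_classes))


# Hypothetical posterior probabilities for four patients and two trajectory classes.
posterior_probs = [
    [0.95, 0.05],
    [0.90, 0.10],
    [0.10, 0.90],
    [0.60, 0.40],
]
print(f"Entropy = {relative_entropy(posterior_probs):.3f}")
```

The fourth patient, with probabilities of 0.60 and 0.40, contributes most of the uncertainty and pulls the value away from 1.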

Experimental Protocol: Constructing Patient Trajectories

The following protocol outlines the methodology for constructing and analyzing individual patient trajectories from longitudinal clinical trial data, as adapted from recent research [27].

Objective: To model individual patient trajectories from longitudinal clinical data for the purpose of predicting outcomes and identifying distinct response patterns.

Materials: De-identified longitudinal clinical trial dataset (e.g., MGTX trial for myasthenia gravis [27]), statistical software (e.g., R, MPlus), standard interpolation tools.

Methodology:

Data Preprocessing:

- Input: Raw longitudinal data with patient visits ( V_0, V_1, ..., V_q ) and feature vectors ( \vec{X}_{ij} ) for patient ( i ) at visit ( j ) [27].

- Handle Missing Data & Irregular Intervals: Use standard interpolation techniques to project state variables onto a uniform time series. Discard a patient's dataset if time gaps are too large to be filled reliably without exceeding the observed noise level in the data [27].

- Denoising (Optional): Apply a standard filtering technique to smooth the continuous time-dependent trajectory ( P_i(t) ), while preserving step changes associated with singular events like hospitalizations [27].
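The interpolation step can be sketched as follows. Linear interpolation and a fixed maximum-gap threshold are simplifying assumptions made here; the cited protocol ties the discard decision to the observed noise level, which this sketch does not attempt to estimate.

```python
def interpolate_to_grid(times, values, grid, max_gap):
    """Linearly interpolate one patient's series onto `grid`.

    Returns None when any gap between consecutive visits exceeds `max_gap`,
    signalling that the patient's series should be discarded.
    """
    if any(later - earlier > max_gap for earlier, later in zip(times, times[1:])):
        return None
    points = list(zip(times, values))
    result = []
    for t in grid:
        if t < times[0] or t > times[-1]:
            raise ValueError("grid point lies outside the observed time range")
        for (t0, v0), (t1, v1) in zip(points, points[1:]):
            if t0 <= t <= t1:
                result.append(v0 + (v1 - v0) * (t - t0) / (t1 - t0))
                break
    return result


# Hypothetical visits at weeks 0, 2, and 5 with a symptom score at each visit.
visit_weeks = [0.0, 2.0, 5.0]
scores = [10.0, 14.0, 8.0]
weekly_grid = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]

print(interpolate_to_grid(visit_weeks, scores, weekly_grid, max_gap=4.0))
print(interpolate_to_grid(visit_weeks, scores, weekly_grid, max_gap=2.0))
```

The second call returns None because the three-week gap between the last two visits exceeds the allowed maximum.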

Trajectory Clustering:

- Define Proximity: Select a norm (e.g., ( L_p ) norm) to calculate the distance between two patient trajectories [27].

- Cluster Identification: Apply a clustering algorithm (e.g., K-means) to partition the ( N ) trajectories ( P_i(t) ) into ( N_c ) clusters ( C_k ). The goal is to group patients with the most similar temporal profiles [27].

- Cluster Validation: Evaluate the quality of the separation between clusters using an index like the Silhouette value ( S(i) ). Values close to 1 indicate that patients are well-matched to their own cluster and poorly-matched to neighboring clusters [27]. Optimize the clustering by running the algorithm iteratively to maximize the separation index.
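The distance and validation steps can be illustrated without a clustering library. The sketch below assumes the cluster labels are already available (for example from K-means, which is not implemented here) and that all trajectories share the same uniform time grid; the trajectories themselves are invented.

```python
def lp_distance(a, b, p=2):
    """L_p distance between two trajectories sampled on the same time grid."""
    return sum(abs(x - y) ** p for x, y in zip(a, b)) ** (1.0 / p)


def silhouette_values(trajectories, labels, p=2):
    """Silhouette value S(i) for each trajectory, given its cluster label."""
    values = []
    for i, trajectory in enumerate(trajectories):
        distances_by_cluster = {}
        for j, other in enumerate(trajectories):
            if i != j:
                distances_by_cluster.setdefault(labels[j], []).append(
                    lp_distance(trajectory, other, p)
                )
        own = distances_by_cluster.pop(labels[i], None)
        if not own or not distances_by_cluster:
            values.append(0.0)  # singleton cluster or single-cluster solution
            continue
        a = sum(own) / len(own)  # mean distance within own cluster
        b = min(sum(d) / len(d) for d in distances_by_cluster.values())  # nearest other cluster
        values.append(0.0 if max(a, b) == 0 else (b - a) / max(a, b))
    return values


# Hypothetical symptom trajectories: two improving patients and two stable patients.
trajectories = [[10, 8, 6], [11, 9, 7], [10, 10, 10], [11, 11, 10]]
labels = [0, 0, 1, 1]

scores = silhouette_values(trajectories, labels)
print([round(s, 3) for s in scores])
print("Mean silhouette:", round(sum(scores) / len(scores), 3))
```

Repeating this calculation for different numbers of clusters and keeping the solution with the highest mean value is one simple way to carry out the optimization described above.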

Model Fitting & Trajectory Analysis:

- Model Selection: For statistical trajectory modeling (e.g., using MPlus), fit models with different numbers of classes and trends (linear, quadratic). Select the best model based on BIC, the LMR test, and clinical interpretability, ensuring each class contains at least 5% of the sample [28].

- Outcome Analysis: Once trajectories are established, use mixed models to compare outcomes (e.g., HAM-D scores) between the identified trajectory classes and control groups (e.g., placebo), potentially using propensity scores to control for baseline confounding [28].

Workflow Visualization

The following diagram illustrates the logical workflow for constructing and analyzing patient trajectories.

The Scientist's Toolkit

Table 2: Key Analytical Models and Software for Trajectory Research

| Tool / Model | Function | Application Note |

|---|---|---|

| Multilevel Model (HLM) | Analyzes nested data (e.g., repeated measures within patients) by partitioning variance across levels [26] [29]. | Use to account for clinic-to-clinic variability and correctly model the standard errors in multi-site trials. Implementable in R (lme4, lmerTest), SPSS (MIXED), and SAS (PROC MIXED) [29]. |

| Growth Mixture Model (GMM) | Identifies unobserved subpopulations (latent classes) characterized by distinct trajectories of change over time [28]. | Use to discover "responder" and "non-responder" subgroups within a treatment arm. Software like MPlus is specifically designed for this [28]. |

| K-means Clustering | A partitioning algorithm that groups patient trajectories into a pre-specified number (k) of clusters based on a distance metric [27]. | Useful for an empirical, data-driven grouping of trajectories. Quality of separation should be validated with indices like the Silhouette value [27]. |

| Silhouette Value (S(i)) | Measures how similar a patient's trajectory is to its own cluster compared to other clusters (range: -1 to 1) [27]. | A value close to 1 indicates the trajectory is well-matched to its own cluster and poorly-matched to others, validating the clustering structure [27]. |

Incorporating Time-Varying and Time-Invariant Covariates

Frequently Asked Questions

1. What is the fundamental difference between time-varying and time-invariant covariates?

A time-invariant covariate is a variable whose value remains constant for each individual throughout the study period. Examples include gender, ethnicity, or baseline genetic markers. In contrast, a time-varying covariate is a variable whose value can change for an individual across different measurement occasions [32]. Examples in clinical research could include fluctuating biomarkers, drug dosage adjustments, or patient-reported outcomes like pain scores collected at multiple time points [33].

2. When should I consider using a multilevel model with time-varying covariates?

You should consider this approach when analyzing longitudinal data where both the outcome variable and at least one predictor variable are measured repeatedly over time [33]. This is common in studies tracking disease progression, therapeutic response, or any developmental process where predictors are expected to change dynamically alongside the outcome.

3. How does incorrectly modeling the level-1 growth trajectory affect my results?

Failing to correctly model the shape of the level-1 growth trajectory represents a serious specification error [32]. This can lead to:

- Incorrect parameter estimates in the level-1 model.

- Serious errors of inference for the effects of level-2 variables on the slope and intercept.

- Incorrect parameter estimates for other time-varying covariates [32].

4. My model fails to converge or shows a singularity warning. What should I do?

Non-convergence or singularity often stems from over-specified models or extreme multicollinearity [11]. To troubleshoot:

- Simplify the model: Remove random effects, especially correlations between them, to see if the model converges.

- Change the optimizer: Increase the number of iterations, or try a different optimization algorithm [11].

- Investigate the warning: For singularity, check the output for correlations of exactly ±1 between random effects or variances estimated as zero [11].

Troubleshooting Guide

Problem: Model Fails to Converge

Possible Causes and Solutions:

Table: Troubleshooting Model Convergence

| Cause | Diagnostic Check | Solution |

|---|---|---|

| Overly Complex Random Effects | Check whether random-slope variances are near zero or correlations are ±1 [11]. | Simplify the random effects structure (e.g., remove random slopes or correlations). |

| Insufficient Iterations | Review model output for iteration limit warnings. | Increase the maximum number of iterations in the optimizer. |

| Incorrect Scaling of Variables | Check the scale of time-varying covariates and time metrics. | Center or rescale variables (e.g., set the initial time point to 0) [33]. |

Problem: Interpreting a Time-Varying Covariate Effect

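Background: The coefficient for a time-varying covariate in a multilevel model is usually interpreted as the within-person effect [33]. It answers the question: "When a person's value on the predictor is higher than their own average, how does this relate to their outcome value?" Strictly, this interpretation holds only when the covariate is expressed as a deviation from each person's own mean; an uncentered covariate blends within-person and between-person associations.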

Solution: To properly isolate this effect, it can be helpful to decompose the time-varying covariate into two parts:

- Between-Person Component: The individual's mean over all time points.

- Within-Person Component: The deviation from their personal mean at each time point.

Including both components in the model allows you to separately estimate the effect of stable differences between people and the effect of time-specific fluctuations within a person [33].
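The decomposition amounts to person-mean centering. The sketch below shows it in plain Python; the patient identifiers, the `biomarker` variable, and the names of the derived columns are hypothetical.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical long-format records: one row per patient per visit.
records = [
    {"patient_id": "P1", "visit": 0, "biomarker": 4.0},
    {"patient_id": "P1", "visit": 1, "biomarker": 6.0},
    {"patient_id": "P1", "visit": 2, "biomarker": 5.0},
    {"patient_id": "P2", "visit": 0, "biomarker": 9.0},
    {"patient_id": "P2", "visit": 1, "biomarker": 7.0},
]


def add_between_within(rows, id_col, x_col):
    """Add the person mean (between) and the deviation from it (within) to each row."""
    values_by_person = defaultdict(list)
    for row in rows:
        values_by_person[row[id_col]].append(row[x_col])
    person_means = {pid: mean(vals) for pid, vals in values_by_person.items()}
    return [
        {
            **row,
            f"{x_col}_between": person_means[row[id_col]],
            f"{x_col}_within": row[x_col] - person_means[row[id_col]],
        }
        for row in rows
    ]


decomposed = add_between_within(records, id_col="patient_id", x_col="biomarker")
for row in decomposed:
    print(row)
```

Both derived columns then enter the model as separate predictors: the coefficient on `biomarker_within` estimates the within-person effect, and the coefficient on `biomarker_between` estimates the between-person effect.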

Problem: Modeling Discontinuous or Non-Linear Growth

Background: Many developmental and biological processes, such as reading fluency in children, do not follow a straight-line trajectory. They may show gains during treatment periods and decline or stagnation during off-periods [32]. Forcing a linear model on such data is a serious misspecification.

Solution: Use coding schemes with time-varying covariates to capture discontinuities.

- Example: To model "summer slump" in reading skills, create a time-varying covariate (TVC) that allows the growth rate during the summer to differ from the growth rate during the school year [32].

- Alternative Approaches: Consider piecewise growth models or polynomial models, though the latter may require more time points and stronger assumptions [32].
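One possible coding scheme for the "summer slump" example is sketched below. It assumes a single summer period with known start and end months and assessments timed in months since study start; these choices, and the function name, are illustrative rather than taken from the cited source.

```python
def piecewise_time_codes(assessment_months, summer_start, summer_end):
    """Return (elapsed_months, summer_months) pairs for each assessment.

    `summer_months` counts how many months of the summer period have elapsed
    by each assessment, so its coefficient captures the change in growth rate
    during summer relative to the school-year rate.
    """
    codes = []
    for month in assessment_months:
        summer_elapsed = min(max(month - summer_start, 0), summer_end - summer_start)
        codes.append((month, summer_elapsed))
    return codes


# Hypothetical design: assessments every 3 months, summer running from month 9 to month 12.
codes = piecewise_time_codes([0, 3, 6, 9, 12, 15], summer_start=9, summer_end=12)
for elapsed, summer in codes:
    print(f"elapsed={elapsed:>2}  summer_months={summer}")
```

With both variables in the level-1 model, the slope for elapsed months is the school-year growth rate, and the school-year slope plus the summer coefficient gives the growth rate during summer.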

Experimental Protocols & Data Presentation

Protocol: Implementing a Basic Multilevel Model with Time-Varying Covariates in R

This protocol uses the lme4 package in R, a common tool for multilevel modeling [33].

1. Data Preparation

- Ensure your data is in long format, with one row per measurement occasion per subject.

- Create a time variable where the initial assessment is coded as 0 for easier interpretation of the intercept [33].

2. Model Specification

Fit a model with a time-varying covariate (e.g., logincome) using the lmer() function.

3. Model Interpretation

- (1 + wave0 | pidp) allows each subject (pidp) to have their own random intercept and random slope for time (wave0).

- The fixed effect for logincome represents the within-person association between income and the mental health outcome (sf12mcs) [33].
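Written as an equation, one model consistent with the terms described above is shown below. The exact set of fixed effects is an assumption, since only the random-effects term and the logincome effect are described here; \(t\) indexes measurement occasions and \(i\) indexes subjects (pidp).

```latex
\begin{aligned}
\text{sf12mcs}_{ti} &= \beta_0 + \beta_1\,\text{wave0}_{ti} + \beta_2\,\text{logincome}_{ti}
                      + u_{0i} + u_{1i}\,\text{wave0}_{ti} + e_{ti}, \\
\begin{pmatrix} u_{0i} \\ u_{1i} \end{pmatrix}
  &\sim N\!\left( \begin{pmatrix} 0 \\ 0 \end{pmatrix},
     \begin{pmatrix} \tau_{00} & \tau_{01} \\ \tau_{01} & \tau_{11} \end{pmatrix} \right),
\qquad e_{ti} \sim N(0, \sigma^2)
\end{aligned}
```

Here \(\beta_0\) is the expected outcome at the initial assessment (wave0 = 0) for a subject with logincome equal to zero, and \(u_{0i}\) and \(u_{1i}\) are the subject-specific deviations in intercept and slope.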

Table: Key R Packages and Functions

| Package/Function | Purpose | Use Case |

|---|---|---|

| lme4::lmer() | Fits linear mixed-effects models. | Primary function for multilevel models with normal outcomes [33]. |

| tidyverse | Data manipulation and visualization. | Cleaning data, creating summary statistics, and plotting [33]. |

Table: Characteristics of Covariate Types in Multilevel Models

| Characteristic | Time-Invariant Covariate | Time-Varying Covariate |

|---|---|---|

| Definition | Value constant for an individual over time. | Value can change for an individual over time [32]. |

| Level in Model | Level 2 (Between-person). | Level 1 (Within-person). |

| Example Research Question | Do males and females differ in their baseline status or rate of change? | When a patient's biomarker is higher than usual, is their symptom severity also higher? [33] |

| Common Examples | Sex, genotype, randomized treatment group. | Weekly drug dosage, monthly blood pressure, session engagement [34]. |

The Scientist's Toolkit

Table: Essential Reagents & Resources for Multilevel Modeling Research

| Tool or Resource | Function | Application in Research |

|---|---|---|

| MLwiN Software | Specialized software for fitting complex multilevel models [35]. | Models with multiple membership, cross-classified structures, and discrete responses [35]. |

| R package lme4 | Fits linear and generalized linear mixed-effects models [33]. | Accessible, powerful tool for standard hierarchical models; good for beginners. |

| Stan / rstan | Probabilistic programming language for full Bayesian inference. | Highly complex models (e.g., with specific time-varying covariance structures), custom distributions [36]. |

| "A User's Guide to MLwiN" | Comprehensive software manual [35]. | Step-by-step guidance for implementing a wide range of models in MLwiN. |

| Longitudinal Data in Long Format | A data structure with one row per time point per subject [33]. | Necessary for using most statistical software to fit longitudinal multilevel models. |

Workflow and Logical Relationships

The following diagram illustrates the key decision points and processes for successfully incorporating time-varying covariates into a multilevel model.

Workflow for Incorporating Time-Varying Covariates: This diagram outlines the process for building a multilevel model with time-varying covariates, highlighting key steps from data preparation to interpretation, with integrated troubleshooting paths for common issues like non-convergence and singularity.

Frequently Asked Questions (FAQs)

FAQ 1: Why use multilevel modeling for longitudinal testosterone data in athletes? Multilevel modeling (MLM) is essential because it accounts for the nested structure of your data. In a study of athletes, repeated testosterone measurements (Level 1) are nested within individual athletes (Level 2), who may further be nested within teams or training groups (Level 3). MLM handles unbalanced data and unequal time intervals between measurements common in real-world sports research, and allows you to model individual differences in testosterone trajectories over time [37].

FAQ 2: My model shows a correlation between testosterone and performance, but it's not statistically significant. What could be wrong? This is a common challenge. A study on young professional track and field athletes found no significant correlation between testosterone concentration and power/speed measures in female athletes, despite a known physiological relationship. This could be due to:

- Small sample size: The study had 68 athletes, which may lack power to detect an effect [38].

- Moderating variables: The relationship may be influenced by other factors not included in your model, such as genetic variation. For instance, the CAG repeat length in the Androgen Receptor (AR) gene modifies testosterone's effect on other traits like vitality; shorter repeats are linked to greater testosterone sensitivity [39].

- Measurement precision: Ensure you use the most reliable methods, like Liquid Chromatography-Mass Spectrometry (LC-MS), for testosterone assessment [38].

FAQ 3: How should I account for circadian variation when sampling testosterone? Testosterone exhibits a pronounced circadian rhythm. To control for this:

- Standardize the time of sample collection for all participants. Studies often use statistical methods, like multilevel modeling, to predict testosterone levels at a standard time (e.g., 12 PM) based on the actual time of venipuncture [40].

- Collect multiple samples on non-consecutive days to establish a reliable baseline, as done in studies of salivary testosterone [39].

FAQ 4: What is the best way to quantify an athlete's overall exposure to testosterone over time? A common method is to calculate the area under the curve (AUC) of the testosterone trajectory. This involves:

- Plotting longitudinal testosterone measurements against time.

- Using a spline-interpolation function to generate a curve through the data points.

- Calculating the integral (area under the curve) between the first and last measurement. This AUC provides a single value representing the athlete's cumulative exposure to testosterone during the study period [40].
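The cumulative-exposure calculation can be approximated as follows. This sketch uses the trapezoidal rule in place of the spline interpolation described above, which is a deliberate simplification, and the ages and testosterone values are hypothetical.

```python
def trapezoid_auc(times, values):
    """Area under a piecewise-linear curve through the (time, value) points."""
    if len(times) != len(values) or len(times) < 2:
        raise ValueError("need at least two matching time/value pairs")
    points = list(zip(times, values))
    return sum(
        (t1 - t0) * (v0 + v1) / 2.0
        for (t0, v0), (t1, v1) in zip(points, points[1:])
    )


# Hypothetical measurements for one athlete: age in years, testosterone in nmol/L.
ages = [9.0, 11.0, 13.0, 15.0, 17.0]
testosterone = [0.5, 2.0, 8.0, 14.0, 18.0]

auc = trapezoid_auc(ages, testosterone)
print(f"Cumulative exposure (nmol/L x years): {auc:.1f}")
```

A spline-based curve would give a slightly different area when the trajectory bends sharply between visits; the trapezoidal version is easier to verify by hand.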

Troubleshooting Guide: Common Errors and Solutions

| Problem Description | Potential Cause | Solution |

|---|---|---|

| Model convergence failure | Overly complex model structure (too many random effects) for the available data. | Simplify the model. Start with a random-intercept-only model, e.g., lmer(popular ~ 1 + (1 \| class), data). Then cautiously add random slopes only if supported by theory and data [37]. |

| Highly correlated random effects | Individual trajectories are too similar or the model is misspecified. | Check the correlation between the random intercepts and slopes. If it's near ±1, consider simplifying the random effects structure or using a Bayesian approach with informative priors. |

| No significant fixed effect of testosterone | High within-athlete variability or a true absence of a direct linear relationship. | Increase measurement frequency. Explore non-linear growth models (e.g., cubic splines). Investigate interaction effects with moderators like age, sex, or genetic markers [38] [39] [41]. |

| Unexplained variance between athletes | Omitted predictor variables at the athlete level (Level 2). | Collect and include athlete-level covariates such as genetic data (e.g., AR-CAG repeat length [39]), training history, body composition [41], or age at peak height velocity [40]. |

Summarized Quantitative Data

Table 1: Testosterone Levels and Physical Performance in Young Professional Athletes (n = 68). Source: Adapted from Bezuglov et al., 2023 [38]

| Variable | Male Athletes (Mean) | Female Athletes (Mean) | P-value (Sex Difference) | Correlation with Testosterone (Males) | Correlation with Testosterone (Females) |

|---|---|---|---|---|---|

| Testosterone (nmol/L) | 17.9 | 1.02 | < 0.001 | - | - |

| 30m Sprint (s) | 4.28 | 4.71 | < 0.001 | r = -0.32 | r = 0.10 |

| CMJ Height (cm) | 40.2 | 32.8 | < 0.001 | r = 0.33 | r = -0.09 |

| Body Fat (%) | 11.7 | 19.5 | < 0.001 | r = -0.25 | r = -0.10 |

| Muscle Mass (kg) | 34.5 | 22.7 | < 0.001 | r = 0.19 | r = 0.12 |

CMJ: Countermovement Jump. Significant correlations are in bold.

Table 2: Testosterone Association with Muscle Mass and Strength in Adult Males (NHANES 2011-2014, n ≈ 4,495). Source: Adapted from Frontiers in Physiology, 2025 [41]

| Outcome Measure | Association with log2(Testosterone) (β coefficient or OR) | 95% Confidence Interval | P-value |

|---|---|---|---|

| Appendicular Lean Mass (ALM)/BMI | β: 0.05 | 0.03 to 0.07 | < 0.001 |

| Low Muscle Mass (Odds Ratio) | OR: 0.40 | 0.24 to 0.67 | 0.006 |

| Grip Strength | β: 1.16 | -0.26 to 2.58 | 0.086 |

| Low Muscle Strength (Odds Ratio) | OR: 0.51 | 0.25 to 1.04 | 0.059 |
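Because testosterone enters these models on a log2 scale, each coefficient in Table 2 describes the expected change per doubling of testosterone. The sketch below works through that arithmetic for other fold changes. It assumes log2(testosterone) enters the model linearly, and the helper names are illustrative.

```python
import math


def beta_for_fold_change(beta_per_doubling, fold):
    """Expected change in a continuous outcome for a given fold change in testosterone."""
    return beta_per_doubling * math.log2(fold)


def odds_ratio_for_fold_change(or_per_doubling, fold):
    """Odds ratio for a given fold change, from the per-doubling odds ratio."""
    return or_per_doubling ** math.log2(fold)


# Table 2 reports OR = 0.40 for low muscle mass per doubling of testosterone.
# A four-fold difference is two doublings, so the odds ratio is 0.40 squared.
print(odds_ratio_for_fold_change(0.40, 4))

# ALM/BMI: beta = 0.05 per doubling, rescaled to a 1.5-fold difference.
print(beta_for_fold_change(0.05, 1.5))
```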

Experimental Protocol: Longitudinal Testosterone Assessment

Objective: To track testosterone trajectories in adolescent male athletes over time and model their relationship with growth and performance metrics.

Materials:

- See "Research Reagent Solutions" below.

- Hologic QDR-4500A fan-beam densitometer or equivalent for DXA scans [41].

- Calibrated dynamometer for grip strength testing [41].

- Stadiometer for accurate height measurement.

Methodology:

- Participant Recruitment: Recruit a cohort of adolescent male athletes (e.g., aged 9-17) [40].

- Longitudinal Sampling: Collect blood plasma samples at multiple, predefined visits (e.g., at ages 9, 11, 13, 15, and 17). Record the exact time of venipuncture for each sample [40].

- Hormone Assay: Quantify total testosterone and Sex Hormone Binding Globulin (SHBG) using enzyme-linked immunosorbent assays (ELISA). Run 10% of samples in duplicate for quality control. For values below the detection limit, impute with the lower bound value (e.g., 0.28 nmol/L) [40].

- Performance & Anthropometry: At each visit, record height to calculate Age at Peak Height Velocity (APHV) as a proxy for pubertal timing [40]. Conduct DXA scans to determine appendicular lean mass and grip strength tests [41].

- Data Adjustment: Use multilevel modeling to adjust all testosterone measurements to a standard time of day (e.g., 12:00 noon) to account for circadian variation [40].
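Two of the data-handling steps in this methodology, imputing values below the detection limit and drawing a 10% subset for duplicate assays, can be sketched as follows. The 0.28 nmol/L bound is the example value from the protocol; the function names, the sample IDs, and the fixed random seed are assumptions for illustration.

```python
import math
import random


def impute_below_limit(values, lower_bound=0.28):
    """Replace readings below the detection limit with the lower-bound value."""
    return [lower_bound if v < lower_bound else v for v in values]


def pick_duplicates(sample_ids, fraction=0.10, seed=0):
    """Choose a reproducible random subset of samples to assay in duplicate."""
    ids = list(sample_ids)
    # Small tolerance guards against floating-point error pushing ceil up by one.
    n_dup = max(1, math.ceil(fraction * len(ids) - 1e-9))
    return sorted(random.Random(seed).sample(ids, n_dup))


# Hypothetical assay readings in nmol/L; 0.10 and 0.05 fall below the limit.
readings = [0.10, 0.28, 3.4, 0.05, 12.7]
print(impute_below_limit(readings))

# Hypothetical sample IDs for one assay batch.
batch = [f"S{i:03d}" for i in range(1, 21)]
print(pick_duplicates(batch))
```

Substituting the bound is the simple approach the protocol describes; keeping a flag for which values were imputed makes it possible to run a sensitivity analysis later.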

Workflow and Relationship Diagrams

Diagram: Multilevel Data Structure in Athletic Research

Diagram: Analytical Workflow for Testosterone Modeling

Research Reagent Solutions

| Item | Function in Research | Example Application in Testosterone Studies |

|---|---|---|

| LC-MS/MS (Liquid Chromatography-Tandem Mass Spectrometry) | Gold-standard method for precise and accurate quantification of total serum testosterone. | Used in large-scale studies like NHANES for highly reliable hormone measurement [41]. |

| ELISA Kits (Enzyme-Linked Immunosorbent Assay) | A practical and accessible immunoassay for quantifying testosterone and SHBG from plasma/saliva. | Used in longitudinal cohort studies (e.g., ALSPAC) to process hundreds of samples; requires quality control with duplicate samples [40]. |

| SRM/PowerTap Mobile Ergometers | Devices that measure power output (watts) during cycling, providing objective data on external training load. | Critical for quantifying exercise intensity and volume as a covariate in models, as training load can influence testosterone levels [42]. |

| DXA Scanner (Dual-Energy X-ray Absorptiometry) | Precisely measures body composition, including appendicular lean mass (ALM), a key outcome for testosterone's anabolic effects. | Used to determine the relationship between testosterone levels and muscle mass in cross-sectional studies [41]. |

| Genetic Analysis Tools | For genotyping polymorphisms like the CAG repeat in the Androgen Receptor (AR) gene. | Used to investigate why the relationship between testosterone levels and outcomes (e.g., vitality, performance) varies between individuals [39]. |

Troubleshooting Guide: Frequently Asked Questions

Host-Pathogen Interaction Modeling

Q1: Our 2D intestinal monolayer model fails to accurately predict in vivo pathogen behavior. What key microenvironmental factors are we missing?

A: Traditional 2D monolayers lack essential physiological features that pathogens encounter in vivo. Your model likely misses these critical elements [43]:

- Three-dimensional (3-D) architecture: Pathogens respond to structural cues absent in flat monolayers [43].

- Multicellular complexity: The native intestine contains diverse epithelial (enterocytes, goblet, Paneth cells) and immune cells working in synergy [43].

- Physiologically relevant biomechanical forces: Factors like fluid shear stress can reprogram bacterial gene expression and virulence [43].

- Host cell polarity: The distinct biochemical composition of apical and basolateral surfaces influences pathogen attachment and invasion strategies [43].

- Commensal microbiota: The native microbial community is a major component pathogens interact with [43].

Solution: Implement advanced 3-D model systems summarized in Table 1.

Table 1: Comparison of Advanced 3-D Intestinal Model Systems

| Model Type | Key Features | Advantages | Limitations | Primary Applications |

|---|---|---|---|---|

| Rotating Wall Vessel (RWV) Bioreactor [43] | Low-fluid-shear conditions; 3-D organotypic structure; facilitates cellular differentiation and polarization | In vivo-like morphology and function; model for host-pathogen interactions | Requires specialized bioreactor equipment; can be complex to establish | Studying infection mechanisms in a more physiological context |

| ECM-Embedded/Organoid Models [43] | Cells embedded in extracellular matrix (ECM); self-organizing 3-D structures; recapitulates crypt-villus architecture | High biological relevance; contains multiple cell types; can be derived from patient cells | High complexity and variability; can be challenging to infect uniformly (e.g., due to internal lumen) | Personalized disease modeling; investigating cell-pathogen interactions in a tissue-like context |

| Gut-on-a-Chip (OAC) Models [43] | Microfluidic channels; application of cyclic strain (peristalsis-like); controlled fluid flow and shear stress | Incorporates dynamic biomechanical forces; can co-culture microbes and host cells; potential for real-time monitoring | Technically complex and expensive; still an emerging technology | Investigating the role of biomechanical forces in infection; long-term studies of host-microbe dynamics |

Q2: What is the detailed protocol for establishing a baseline 3-D intestinal model using the RWV bioreactor?

A: The following methodology provides a foundation for building a physiologically relevant model for host-pathogen studies [43].

Experimental Protocol: Establishing a 3-D Intestinal Model in the RWV Bioreactor

- Cell Seeding: Seed human intestinal epithelial cells (e.g., Caco-2, HCT-8) onto microcarrier beads within the RWV bioreactor.

- Bioreactor Culture: Culture cells in the RWV bioreactor. The vessel is rotated along the horizontal axis, creating a low-fluid-shear, low-turbulence environment that allows cells to aggregate in three dimensions.

- Model Maturation: Culture for 1-2 weeks. During this time, cells spontaneously differentiate and form 3-D organotypic structures that exhibit in vivo-like characteristics, including:

  - Development of distinct apical and basolateral polarity.

  - Formation of tight junctions and other intercellular connections.

  - Enhanced expression of differentiation markers.

- Model Validation: Prior to infection, confirm the success of the 3-D culture by:

  - Histology: Assess 3-D structure and architecture (e.g., via H&E staining).

  - Immunofluorescence: Confirm the presence and correct localization of polarity markers (e.g., brush border enzymes on the apical surface) and junctional proteins (e.g., E-cadherin, ZO-1).

  - Transepithelial Electrical Resistance (TEER): Measure TEER to verify the formation of a functional, tight barrier.

- Pathogen Infection: Introduce the pathogen of interest to the mature 3-D model. The low-fluid-shear environment of the RWV has been shown to modulate bacterial virulence pathways [43].

- Analysis: Post-infection, analyze outcomes via transcriptomics, proteomics, immunohistochemistry, or measurements of barrier integrity and cytokine production.

Drug-Channel Kinetics & Cardiac Arrhythmia

Q1: Our cellular-level model of an ion channel drug shows antiarrhythmic potential, but it fails to predict proarrhythmic effects in tissue. Why does this happen?

A: This is a classic example of an emergent property that arises from the complex interactions within a higher-order system. Antiarrhythmic drugs often exhibit complex, state-dependent kinetics that interact bidirectionally with the cardiac action potential (AP) [44].

- Cellular-Level Limitations: While a single cell model can predict changes in AP duration (APD) or other cellular metrics, it cannot capture the spatial and dynamic phenomena central to arrhythmias, such as re-entry and spiral wave breakup [44].

- Bidirectional Feedback in Tissue: A drug alters the AP waveform, which in turn affects the drug's potency (e.g., use-dependent block). In tissue, this feedback is modified by electrotonic coupling between cells, leading to unpredictable emergent responses that are not apparent in isolated cell simulations [44].

- The Reentry Problem: Reentrant arrhythmias are fundamentally a tissue-level phenomenon, requiring spatial dimensions and cell-to-cell coupling to manifest [44].

Solution: A multiscale modeling approach is required, where the drug-channel model is integrated into computationally reconstructed tissue.

Table 2: Multiscale Modeling Approach for Predicting Drug Effects

| Modeling Scale | Key Predictions & Parameters | Utility in Drug Assessment | How it Addresses the Proarrhythmic Gap |

|---|---|---|---|

| Atomic/Molecular (e.g., Molecular Dynamics, Docking) [44] | Drug-receptor binding sites; specific molecular interactions | Aids in rational drug design; identifies key interaction sites | Provides a structural basis for drug-channel kinetics, informing higher-scale models. |

| Cellular (e.g., Cardiac Myocyte Models) [44] | Action Potential Duration (APD) | Screens for overt proarrhythmic potential (e.g., excessive APD prolongation) | Identifies drug effects on single-cell behavior, which is a necessary input for tissue models. |

| 1D/2D Tissue (e.g., Cable, Sheet Models) [44] | Conduction Velocity (CV); CV restitution; wavelength for reentry; spiral wave dynamics | Predicts vulnerability to reentrant arrhythmias; tests for spiral wave initiation and stability | Reveals how drug-induced changes at the cellular level (e.g., altered APD restitution) impact wave propagation and stability in tissue. |

| 3D Organ (e.g., Human Virtual Ventricles) [44] | Complex reentrant pathways; interaction with anatomical structures; ECG biomarkers (e.g., T-wave morphology) | Provides the most clinically relevant prediction of proarrhythmic risk in a human context. | Incorporates the full anatomical and physiological complexity, allowing for the prediction of drug effects on the whole organ rhythm. |
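The "wavelength for reentry" entry in the tissue row can be made concrete with the standard approximation that wavelength equals conduction velocity times refractory period: a reentrant circuit can persist only if its path is longer than that wavelength. The sketch below uses hypothetical numbers, and using the effective refractory period (ERP) rather than APD is an assumption of this example.

```python
def wavelength_cm(cv_cm_per_s, erp_ms):
    """Wavelength of excitation: conduction velocity times effective refractory period."""
    return cv_cm_per_s * erp_ms / 1000.0


def reentry_can_fit(path_length_cm, cv_cm_per_s, erp_ms):
    """A circuit can sustain reentry only if the path exceeds the wavelength."""
    return path_length_cm > wavelength_cm(cv_cm_per_s, erp_ms)


# Hypothetical 12 cm circuit around an anatomical obstacle.
path_cm = 12.0

# Baseline tissue: CV 50 cm/s and ERP 300 ms give a wavelength longer than the path.
print(wavelength_cm(50.0, 300.0), reentry_can_fit(path_cm, 50.0, 300.0))

# Hypothetical drug that slows conduction to 30 cm/s without changing refractoriness.
print(wavelength_cm(30.0, 300.0), reentry_can_fit(path_cm, 30.0, 300.0))
```

Conduction slowing shortens the wavelength, so a drug can look benign in a single cell and still make room for reentry in tissue. That is why CV appears as a tissue-scale output rather than a cellular one.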

Q2: What is a robust workflow for developing and validating a multiscale model for cardiac drug safety assessment?

A: A predictive workflow integrates models across scales, moving from the molecular to the organ level.

Experimental & Modeling Protocol: A Multiscale Workflow for Cardiac Drug Safety

- Characterize Drug-Channel Kinetics: Use voltage-clamp experiments on expressed ion channels to develop a mathematical model of the drug's interaction (e.g., state-dependent block). Key parameters include association/dissociation rates (kon, koff) and affinity for different channel states [44].

- Integrate into Cellular Model: Incorporate the drug-channel model into a well-established computational model of a human ventricular myocyte. Simulate effects on the action potential and calcium handling. Key outputs: APD, restitution, and propensity for alternans [44].

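- Simulate in 1D/2D Tissue: Embed the drug-affected cell model into 1D (cable) and 2D (sheet) tissue models. Key outputs, as listed in Table 2: conduction velocity (CV), CV restitution, wavelength for reentry, and spiral wave dynamics [44].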

- Validate in 3D Organ Model: Incorporate the model into a high-resolution, anatomically accurate reconstruction of the human ventricles. Simulate the ECG and test the drug's effect on the vulnerability to tachyarrhythmias under simulated pathological conditions (e.g., heart failure, ischemia) [44].

- Iterate and Refine: Compare simulation predictions against experimental and clinical data (e.g., from animal models or early-phase clinical trials) to validate and refine the model.
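The state-dependent block in the first step of this workflow can be illustrated with a deliberately simplified two-phase model: the drug binds only while the channel is open and unbinds at all times. This is a sketch under stated assumptions, not the kinetic scheme of any specific drug in [44]. The parameter values and the function name are hypothetical, and each phase is solved analytically instead of being integrated numerically.

```python
import math


def block_after_beats(kon, koff, drug_conc, open_ms, cycle_ms, n_beats):
    """Blocked fraction at the end of each open phase during regular pacing.

    kon: binding rate (per uM per ms); koff: unbinding rate (per ms);
    drug_conc: drug concentration (uM); open_ms: channel-open time per beat;
    cycle_ms: pacing cycle length.
    """
    if not 0 < open_ms < cycle_ms:
        raise ValueError("open_ms must be positive and shorter than cycle_ms")
    rate_open = kon * drug_conc + koff
    b_inf = kon * drug_conc / rate_open  # equilibrium block if always open
    closed_ms = cycle_ms - open_ms

    blocked = 0.0
    peaks = []
    for _ in range(n_beats):
        # Open phase: block relaxes exponentially toward b_inf.
        blocked = b_inf + (blocked - b_inf) * math.exp(-rate_open * open_ms)
        peaks.append(blocked)
        # Closed phase: no binding, drug unbinds with rate koff.
        blocked *= math.exp(-koff * closed_ms)
    return peaks


# Hypothetical parameters: same drug, slow (1000 ms) versus fast (300 ms) pacing.
slow = block_after_beats(0.01, 0.002, 10.0, open_ms=5.0, cycle_ms=1000.0, n_beats=20)
fast = block_after_beats(0.01, 0.002, 10.0, open_ms=5.0, cycle_ms=300.0, n_beats=20)
print(f"block at slow rate: {slow[-1]:.2f}, at fast rate: {fast[-1]:.2f}")
```

Block accumulates more at the faster rate because less drug unbinds between beats. This is the use-dependent behavior that couples drug potency to the action potential waveform.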

The Scientist's Toolkit: Research Reagent Solutions