Multilevel Modeling in Biomedical Research: A Complete Guide to Analyzing Hierarchical Data Across the Drug Development Cycle


Abstract

This comprehensive article provides researchers, scientists, and drug development professionals with an in-depth exploration of multilevel modeling (MLM) applications throughout the biomedical research cycle. Covering everything from foundational concepts to advanced implementation, it demonstrates how MLM handles hierarchical data structures in settings ranging from single-case experimental designs to large-scale clinical trials. The content explores methodological frameworks within Model-Informed Drug Development (MIDD), offers practical troubleshooting strategies, compares MLM performance against alternative statistical approaches, and provides validation techniques for ensuring robust analytical outcomes in pharmaceutical and clinical research settings.

Understanding Multilevel Modeling: Core Principles for Hierarchical Data in Biomedical Research

What is Multilevel Modeling? Defining Hierarchical Structures in Biomedical Data

Multilevel models (MLMs), also known as hierarchical linear models or mixed-effects models, are a class of statistical techniques designed to analyze data with inherent hierarchical or nested structures [1]. In biomedical research, such data structures are the rule rather than the exception [2]. Patients are naturally nested within physicians, who are nested within clinics, which in turn may be nested within hospitals or geographic regions [3]. Similarly, longitudinal studies feature repeated measurements nested within individual patients [1]. Multilevel modeling provides an appropriate analytical framework for these complex data structures by explicitly modeling variability at each level of the hierarchy [4].

Multilevel modeling rests on the recognition that observations clustered within the same higher-level unit (e.g., patients treated by the same physician) are often more similar to each other than to observations from different units [3]. This within-cluster similarity violates the standard statistical assumption of independent observations. Multilevel models correct for this non-independence while allowing researchers to investigate how factors at different levels of the hierarchy interact to influence outcomes [5]. For example, MLMs can examine how patient-level characteristics (level 1) and physician-level practices (level 2) jointly affect treatment outcomes [3].

The application of multilevel modeling in biomedical research has grown immensely over the past decade [4]. A systematic review of literature from 2010-2020 found that 46.2% of applied multilevel modeling studies were in health/epidemiology, with 78.5% being two-level models [4]. This growth reflects increasing recognition that biomedical phenomena are inherently multilevel, influenced by factors ranging from molecular processes to healthcare system characteristics [4] [2].

Key Concepts and Statistical Foundations

Hierarchical Data Structures

Multilevel models organize data into levels. The finest scale at which the response variable is measured is the lowest level (level 1), while aggregate scales are referred to as higher levels [4]. In a typical biomedical example with patients nested within clinics:

- Level 1: Patients (individual measurements)

- Level 2: Clinics (groups of patients)

More complex hierarchies are possible, such as patients (level 1) within physicians (level 2) within hospitals (level 3) [3]. Longitudinal studies represent another common hierarchical structure where repeated measurements (level 1) are nested within patients (level 2) [1].

The Multilevel Equation System

Multilevel models are characterized by their hierarchical equation system. For a simple two-level model with one level-1 predictor [1]:

Level 1 (within-group) equation:

$$Y_{ij} = \beta_{0j} + \beta_{1j}X_{ij} + e_{ij}$$

Level 2 (between-group) equations:

$$\beta_{0j} = \gamma_{00} + \gamma_{01}w_j + u_{0j}$$

$$\beta_{1j} = \gamma_{10} + \gamma_{11}w_j + u_{1j}$$

Where:

- $Y_{ij}$ is the outcome for individual $i$ in group $j$

- $X_{ij}$ is a level-1 predictor for individual $i$ in group $j$

- $\beta_{0j}$ and $\beta_{1j}$ are the intercept and slope that vary across groups

- $\gamma_{00}$ and $\gamma_{10}$ are the average intercept and slope across groups (for groups with $w_j = 0$)

- $w_j$ is a level-2 predictor

- $\gamma_{01}$ and $\gamma_{11}$ are the effects of the level-2 predictor on the intercept and slope

- $u_{0j}$ and $u_{1j}$ are level-2 residuals (random effects)

- $e_{ij}$ is the level-1 residual
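Substituting the two level-2 equations into the level-1 equation gives the single combined equation that mixed-model software actually estimates. Writing it out makes explicit that $\gamma_{11}$ is a cross-level interaction between $w_j$ and $X_{ij}$, and that the random slope $u_{1j}$ multiplies $X_{ij}$, so the unexplained variance of $Y_{ij}$ can change with $X_{ij}$ when slopes vary:

$$
\begin{aligned}
Y_{ij} &= (\gamma_{00} + \gamma_{01} w_j + u_{0j}) + (\gamma_{10} + \gamma_{11} w_j + u_{1j}) X_{ij} + e_{ij} \\
       &= \underbrace{\gamma_{00} + \gamma_{01} w_j + \gamma_{10} X_{ij} + \gamma_{11} w_j X_{ij}}_{\text{fixed part}}
        + \underbrace{u_{0j} + u_{1j} X_{ij} + e_{ij}}_{\text{random part}}
\end{aligned}
$$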

Types of Multilevel Models

Table 1: Types of Multilevel Models and Their Applications

| Model Type | Key Characteristics | Biomedical Application Example |
|---|---|---|
| Random Intercepts | Intercepts vary across groups; slopes are fixed | Examining baseline blood pressure variation across clinics while assuming the effect of medication dosage is constant |
| Random Slopes | Slopes vary across groups; intercepts are fixed | Modeling how the relationship between exercise duration and weight loss varies across different rehabilitation centers |
| Random Intercepts and Slopes | Both intercepts and slopes vary across groups | Studying how both baseline cholesterol levels and the effect of dietary intervention vary across hospitals |
| Cross-Classified | Units nested in multiple non-hierarchical classifications | Patients nested within both physicians and neighborhoods [2] |

Key Assumptions

Multilevel models operate under several key statistical assumptions [1]:

- Linearity: Relationships between variables are linear (though nonlinear extensions exist)

- Normality: Residuals at each level are normally distributed

- Homoscedasticity: Residuals exhibit constant variance

- Independence: Level-1 and level-2 residuals are uncorrelated

Violations of these assumptions may require transformations, different distributional specifications, or robust standard errors [1].

Applications in Biomedical Research

Table 2: Application of Multilevel Models Across Biomedical Fields (2010-2020) [4]

| Field of Application | Percentage of Articles | Common Model Types | Study Designs |
|---|---|---|---|
| Health/Epidemiology | 46.2% | Two-level (78.5%), Multivariate | Cross-sectional (83.1%) |
| Social Life | 16.9% | Random intercept, Random slope | Longitudinal (9.2%) |
| Education and Psychology | 15.4% | Cross-classified | Repeated measures (6.2%) |
| Other Fields | 21.5% | Mixed effects | Mixed designs |

Specific Biomedical Applications

Multilevel models have been employed across diverse biomedical domains. In epidemiology, they have been used to estimate associations involving conditions such as smoking, asthma, mental health problems, and cancer while accounting for geographic clustering [4]. In pharmacology, physiologically based pharmacokinetic/pharmacodynamic (PBPK/PD) modeling uses multilevel frameworks to understand drug response variability across individuals and populations [6].

Physical activity research has used multilevel modeling to validate measurement approaches. A 2025 study compared ecological momentary assessment (EMA) with traditional physical activity measures, using multilevel modeling to account for repeated measurements nested within participants [7]. In these models, EMA and the Bouchard Physical Activity Record (BAR) performed better than the Global Physical Activity Questionnaire (GPAQ) in modeling accelerometer data (EMA daily: β=.387, P<.001; BAR daily: β=.394, P<.001; GPAQ: β=.281, P<.001) [7].

In health services research, multilevel models disentangle the heterogeneity in prescribing behaviors among physicians. A Scottish study used mixed effects modeling to explore whether "high-risk-prescribing culture" was driven by individual physicians or practice-level culture, finding that high-risk prescribing was more of an individual-physician issue than a practice-level phenomenon [2].

Experimental Protocols and Implementation

Protocol: Implementing a Two-Level Model for Physical Activity Assessment Validation

Objective: To validate smartphone-delivered Ecological Momentary Assessment (EMA) against accelerometer data and traditional physical activity questionnaires using multilevel modeling.

Materials and Equipment:

- ActiGraph GT3X+ accelerometer

- Smartphones with survey capability

- GPAQ (Global Physical Activity Questionnaire)

- BAR (Bouchard Physical Activity Record) adaptation

Procedure:

- Participant Recruitment: Recruit adult participants (18+) capable of daily physical activity with access to mobile phones [7].

- Baseline Assessment: On day 0, measure body composition and handgrip strength; administer GPAQ.

- Accelerometer Deployment: Instruct participants to wear the ActiGraph GT3X+ on the right waist, in line with the anterior axillary line, for 7 consecutive days (days 1-6), removing it only for water activities.

- EMA Implementation: Deliver physical activity questionnaires via SMS at 2-hour intervals between 8 AM and 10 PM on 1 weekday and 1 weekend day.

- BAR Administration: Participants complete the adapted Bouchard Physical Activity Record daily on days 1-6.

- Data Processing:
  - Process accelerometer data using the Freedson algorithm to estimate METs
  - Apply GPAQ analysis guidelines for data cleaning
  - Exclude EMA data for days with fewer than 6 reports

- Multilevel Modeling:
  - Specify a two-level model with repeated observations (level 1) nested within participants (level 2)
  - Include fixed effects for assessment type and covariates
  - Include random intercepts for participants
  - Estimate using maximum likelihood or Bayesian methods

Statistical Analysis:

- Use multilevel modeling to account for the hierarchical data structure

- Compare parameter estimates (β coefficients) across assessment methods

- Evaluate model fit using information criteria (AIC, BIC)
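A minimal sketch of the modeling step in Python, assuming numpy, pandas, and statsmodels are available. The data are simulated, and the column names (`participant`, `day`, `ema_score`, `accel_mets`, `n_reports`) are hypothetical. Only an EMA model is shown; in the protocol, an analogous model per assessment method (or assessment type as a factor) would supply the β coefficients being compared. The model is fitted by ML so that the AIC, computed by hand from the log-likelihood, is comparable across fixed-effect specifications.

```python
import numpy as np
import pandas as pd
import statsmodels.formula.api as smf

rng = np.random.default_rng(42)

# Simulated long-format data: 60 hypothetical participants x 6 days.
n_participants, n_days = 60, 6
participant = np.repeat(np.arange(n_participants), n_days)
day = np.tile(np.arange(1, n_days + 1), n_participants)
person_effect = rng.normal(0.0, 1.0, n_participants)[participant]  # level-2 deviation
ema_score = rng.normal(0.0, 1.0, participant.size)
accel_mets = 2.0 + 0.4 * ema_score + person_effect + rng.normal(0.0, 0.8, participant.size)
n_reports = rng.integers(3, 9, participant.size)  # EMA reports completed that day (3-8)

data = pd.DataFrame({
    "participant": participant, "day": day, "ema_score": ema_score,
    "accel_mets": accel_mets, "n_reports": n_reports,
})

# Data processing: drop participant-days with fewer than 6 EMA reports.
data = data[data["n_reports"] >= 6].copy()

# Two-level model: days (level 1) nested in participants (level 2), fixed
# effect for the EMA measure, random intercept per participant, fitted by ML.
result = smf.mixedlm("accel_mets ~ ema_score", data, groups="participant").fit(reml=False)

beta_ema = result.fe_params["ema_score"]
between_var = result.cov_re.iloc[0, 0]  # participant-level intercept variance
within_var = result.scale               # day-level residual variance

# AIC = 2k - 2 ln(L): k counts the fixed effects plus the two variance parameters.
k = len(result.fe_params) + 2
aic = 2 * k - 2 * result.llf
print(f"beta_ema = {beta_ema:.3f}, AIC = {aic:.1f}")
```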

Protocol: Analyzing Physician Practice Patterns in Prescribing

Objective: To examine the effect of physician advice on patient outcomes while accounting for clustering of patients within physicians and practices.

Materials:

- Electronic health records or survey data

- Statistical software with multilevel modeling capabilities

Procedure:

- Data Structure Setup: Organize data with patients (level 1) nested within physicians (level 2) nested within practices (level 3).

- Variable Definition:
  - Level 1 (Patient): Outcome variable (e.g., alcohol-free weeks), patient characteristics, hours of physician advice
  - Level 2 (Physician): Physician characteristics, years of experience
  - Level 3 (Practice): Practice setting (urban/rural), practice size

- Model Building:
  - Start with an unconditional (null) model with no predictors to calculate intraclass correlations
  - Add level-1 predictors (e.g., patient characteristics, hours of advice)
  - Add level-2 and level-3 predictors
  - Consider cross-level interactions (e.g., between practice setting and physician advice)

- Model Estimation: Use restricted maximum likelihood or Bayesian estimation

- Interpretation: Partition variance across levels; interpret fixed effects and variance components
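The first model-building steps can be sketched with statsmodels, under stated assumptions: the data are simulated, the variable names are hypothetical, and only one patient-level predictor is added. Because `smf.mixedlm` accepts a single `groups` variable, practices are used as the top-level groups and physicians nested within practices enter through `vc_formula`, following the variance-components pattern in the statsmodels documentation.

```python
import numpy as np
import pandas as pd
import statsmodels.formula.api as smf

rng = np.random.default_rng(7)

# Hypothetical design: 20 practices x 5 physicians x 12 patients each.
n_prac, n_phys, n_pat = 20, 5, 12
practice = np.repeat(np.arange(n_prac), n_phys * n_pat)
physician = np.repeat(np.arange(n_prac * n_phys), n_pat)  # globally unique IDs
advice_hours = rng.uniform(0.0, 3.0, practice.size)

# Simulated outcome with practice-, physician-, and patient-level variation.
u_practice = rng.normal(0.0, 1.0, n_prac)[practice]
u_physician = rng.normal(0.0, 1.5, n_prac * n_phys)[physician]
alcohol_free_weeks = (10.0 + 1.2 * advice_hours + u_practice + u_physician
                      + rng.normal(0.0, 2.0, practice.size))

data = pd.DataFrame({
    "practice": practice, "physician": physician,
    "advice_hours": advice_hours, "alcohol_free_weeks": alcohol_free_weeks,
})


def fit_three_level(formula):
    """Random intercept for practices; physicians as a nested variance component."""
    model = smf.mixedlm(formula, data, groups="practice", re_formula="1",
                        vc_formula={"physician": "0 + C(physician)"})
    return model.fit(reml=True)


def variance_shares(result):
    """Share of the (residual) variance at the practice, physician, and patient levels."""
    parts = {
        "practice": result.cov_re.iloc[0, 0],
        "physician": result.vcomp[0],
        "patient": result.scale,
    }
    total = sum(parts.values())
    return {level: value / total for level, value in parts.items()}


# Step 1: unconditional model -> partition of variance across the three levels.
null_result = fit_three_level("alcohol_free_weeks ~ 1")
null_shares = variance_shares(null_result)

# Step 2: add a level-1 predictor (level-2/3 predictors would follow the same way).
full_result = fit_three_level("alcohol_free_weeks ~ advice_hours")
advice_effect = full_result.fe_params["advice_hours"]

print({level: round(share, 3) for level, share in null_shares.items()})
print(f"advice_hours effect: {advice_effect:.2f}")
```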

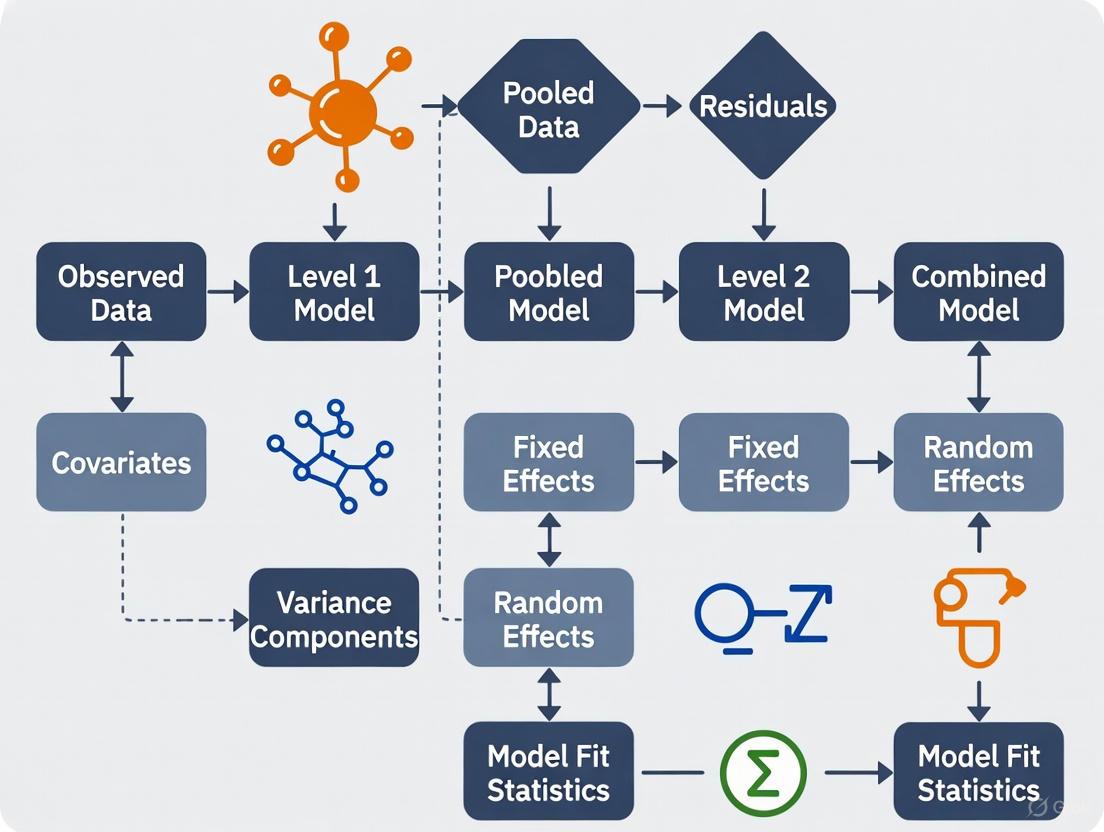

Visualization of Multilevel Structures

[Figures: Hierarchical Data Structure in Biomedical Research; Multilevel Model Estimation Workflow]

The Scientist's Toolkit

Research Reagent Solutions for Multilevel Modeling

Table 3: Essential Software Tools for Multilevel Modeling in Biomedical Research

| Software Tool | Primary Function | Key Features for Multilevel Modeling |
|---|---|---|
| R (lme4, nlme) | Statistical computing | Extensive package ecosystem, flexible model specification, open-source |
| Stata (mixed) | Statistical analysis | Straightforward syntax, comprehensive survey weight handling |
| IBM SPSS (MIXED) | Statistical analysis | GUI and syntax options, accessible for beginners |
| Mplus | Structural equation modeling | Advanced multilevel capabilities, latent variable modeling |
| MLwiN | Multilevel modeling | Specialized for multilevel analysis, Bayesian estimation |
| SAS (PROC MIXED) | Statistical analysis | Robust enterprise solution, handling complex covariance structures |

Handling Complex Survey Weights

When working with complex survey data that include design weights, analysts typically scale the weights using one of two primary methods [8]:

- Method A: Scale weights so they sum to the cluster sample size

- Method B: Scale weights so they sum to the effective cluster size

Current recommendations suggest fitting MLMs using both scaled-weighted and unweighted data, with comparisons across methods providing greater confidence in results [8].
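The two scaling methods reduce to simple per-cluster arithmetic. The pure-Python sketch below assumes level-1 (within-cluster) design weights and uses the usual formulas: Method A multiplies each weight by $n_j / \sum_i w_{ij}$, and Method B multiplies it by $\sum_i w_{ij} / \sum_i w_{ij}^2$, so Method B weights sum to the effective cluster size $(\sum_i w_{ij})^2 / \sum_i w_{ij}^2$. The function name and example data are hypothetical.

```python
from collections import defaultdict


def scale_level1_weights(clusters, weights):
    """Return (method_a, method_b) scaled versions of level-1 design weights.

    Method A: w * n_j / sum(w)         -> weights sum to the cluster sample size n_j
    Method B: w * sum(w) / sum(w ** 2) -> weights sum to the effective cluster size
    """
    n = defaultdict(int)
    sum_w = defaultdict(float)
    sum_w2 = defaultdict(float)
    for cluster, w in zip(clusters, weights):
        n[cluster] += 1
        sum_w[cluster] += w
        sum_w2[cluster] += w * w

    method_a = [w * n[c] / sum_w[c] for c, w in zip(clusters, weights)]
    method_b = [w * sum_w[c] / sum_w2[c] for c, w in zip(clusters, weights)]
    return method_a, method_b


# Hypothetical example: two clusters with unequal design weights.
clusters = ["A", "A", "A", "B", "B"]
weights = [1.0, 2.0, 3.0, 4.0, 4.0]
method_a, method_b = scale_level1_weights(clusters, weights)
# method_a: cluster A -> [0.5, 1.0, 1.5] (sums to 3); cluster B -> [1.0, 1.0]
# method_b: cluster A sums to 36/14 (about 2.57), its effective cluster size
```

In line with the recommendation above, each scaled set (and the unweighted data) would then be used to fit the same model, and the resulting estimates compared.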

Multilevel modeling represents an essential statistical approach for biomedical researchers working with hierarchical data structures. By properly accounting for nested data relationships, these models enable accurate parameter estimation and appropriate inferences that would be compromised using traditional statistical methods. As biomedical research continues to recognize the multifaceted nature of health determinants across biological, clinical, and social levels, multilevel modeling provides the necessary analytical framework to investigate these complex relationships. Future directions include greater integration of spatial effects into multilevel models, improved handling of causal inference in hierarchical data, and continued development of accessible software implementations [4] [2].

Multilevel modeling (MLM), also known as mixed-effects modeling or hierarchical linear modeling, provides a robust statistical framework for analyzing data with nested or clustered structures commonly encountered in scientific research [1] [9]. These models are particularly valuable in drug development and biomedical research where data often exhibit hierarchical organization—such as repeated measurements within patients, patients within clinical sites, or observations within experimental batches. Unlike traditional statistical methods that assume independence of observations, multilevel models explicitly account for the dependency inherent in clustered data, leading to more accurate estimates and inferences [9] [10].

The fundamental components of multilevel models include random intercepts, random slopes, and variance components, which together enable researchers to partition variability across different levels of the data hierarchy [11] [1]. This partitioning allows for more nuanced research questions that extend beyond traditional "fixed effect" analyses, enabling investigators to simultaneously examine relationships between variables and variability across contextual units [11]. For researchers working with cyclical data or longitudinal interventions, these models offer particular advantages in modeling change over time while accounting for individual differences in response patterns [12].

Core Terminology and Definitions

Random Intercepts

Random intercepts capture group-specific deviations from the overall average response, allowing the baseline outcome level to vary across clusters or subjects [11]. In a random intercept model, each group (e.g., school, clinical site, patient) has its own regression line that is parallel to the overall average line but shifted upward or downward by that group's random intercept [11]. Formally, the random intercept model extends the standard linear model by including a group-specific random effect:

$$y_{ij} = \beta_0 + \beta_1 x_{ij} + u_j + e_{ij}$$ [9]

Where:

- $y_{ij}$ represents the outcome for observation $i$ in group $j$
- $\beta_0$ is the overall intercept (fixed effect)
- $\beta_1$ is the slope coefficient for the predictor $x$ (fixed effect)
- $u_j$ is the random intercept for group $j$ (representing the deviation of group $j$ from the overall intercept)
- $e_{ij}$ is the residual error term

As explained in the presentation by Pillinger, "for the random intercept model, the intercept for the overall regression line is still β0 but for each group line the intercept is β0 + uj" [11]. This terminology can sometimes be confusing, as sometimes the entire group-specific intercept (β0 + uj) is referred to as the random intercept, while other times only the deviation uj is called the random intercept [11].

Random Slopes

Random slopes extend this concept by allowing the relationship between predictors and outcomes to vary across groups, recognizing that effects may not be uniform across all clusters [12]. While random intercept models assume parallel regression lines for different groups, random slope models permit these lines to have different slopes, representing differential effects of explanatory variables across groups [12].

The random slope model incorporates an additional random effect for the slope:

$$y_{ij} = \beta_0 + u_{0j} + (\beta_1 + u_{1j}) x_{ij} + e_{ij}$$ [9]

Where:

- $u_{1j}$ is the random slope for group $j$ (representing the deviation of group $j$'s slope from the overall slope $\beta_1$)
- $u_{0j}$ is the random intercept for group $j$
- Other terms are as defined previously

As Pillinger explains in her presentation on random slope models, "unlike a random intercept model, a random slope model allows each group line to have a different slope and that means that the random slope model allows the explanatory variable to have a different effect for each group" [12]. This flexibility is particularly valuable when researching treatment effects or biological processes that may operate differently across contexts or populations.

Variance Components

Variance components represent the variances of the random terms in a mixed effects model and quantify how much of the total variation in the response can be attributed to each level of the hierarchy [13]. In a basic two-level random intercept model, there are two variance components: the variance of the group-level random intercepts ($\sigma^2_u$) and the variance of the residual errors ($\sigma^2_e$) [11] [13].

These variance components allow researchers to answer questions about the distribution of variability across levels. For example, in a study of patients within hospitals, the variance components would indicate how much variation in outcomes exists between hospitals versus between patients within the same hospital [11]. The intraclass correlation coefficient (ICC), calculated as $\sigma^2_u / (\sigma^2_u + \sigma^2_e)$, quantifies the proportion of total variance that lies between groups [9].

Table 1: Interpretation of Variance Components in a Mixed Effects Model

| Component | Symbol | Interpretation | Research Question Example |
|---|---|---|---|
| Level 2 Variance | $\sigma^2_u$ | Unexplained variation between groups after controlling for explanatory variables | How much variation in patient outcomes is between clinical sites? |
| Level 1 Variance | $\sigma^2_e$ | Unexplained variation within groups after controlling for explanatory variables | How much variation in patient outcomes remains within each clinical site? |
| Total Variance | $\sigma^2_u + \sigma^2_e$ | Total unexplained variation in the response variable | What is the overall unexplained variability in the treatment response? |

Analytical Protocols for Multilevel Modeling

Model Specification Workflow

The process of developing multilevel models follows a systematic sequence to ensure appropriate model specification and interpretation [1] [9]. The workflow begins with assessing whether the data structure necessitates multilevel modeling, proceeds through model specification and estimation, and concludes with model diagnostics and interpretation.

Protocol 1: Random Intercept Model Implementation

Purpose: To implement a random intercept model that accounts for group-level variability while estimating the effects of explanatory variables.

Materials and Software Requirements:

- Statistical software capable of fitting mixed effects models (R with lme4 package, SAS PROC MIXED, Stata mixed command, Python statsmodels)

- Dataset with appropriate nested structure

- Computational resources sufficient for maximum likelihood estimation

Procedure:

- Data Preparation: Structure the data in long format with explicit identifiers for grouping variables. Ensure the response variable is examined at the lowest level of analysis [1].

- Fit a Null Model: Begin by fitting a null model (variance components model) with no predictors to partition variance between levels:

$$y_{ij} = \beta_0 + u_j + e_{ij}$$

- Calculate Intraclass Correlation: Compute $\text{ICC} = \sigma^2_u / (\sigma^2_u + \sigma^2_e)$ to determine the proportion of variance at the group level [9].

- Add Fixed Effects: Introduce explanatory variables to the fixed part of the model while maintaining random intercepts:

$$y_{ij} = \beta_0 + \beta_1 x_{ij} + u_j + e_{ij}$$

- Estimation: Use Restricted Maximum Likelihood (REML) estimation for variance components and Maximum Likelihood (ML) for comparing models with different fixed effects [1].

- Diagnostics: Examine residuals at each level, check normality assumptions, and assess model fit using information criteria (AIC, BIC) [9].
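A minimal Python sketch of this protocol, assuming numpy, pandas, scipy, and statsmodels, and using simulated data with hypothetical names (`site`, `x`, `y`). It mirrors the steps above: a REML null model for the ICC, ML fits for a likelihood ratio test of the added fixed effect, and a final REML refit from which the group-specific intercepts $\beta_0 + u_j$ are read off the predicted random effects.

```python
import numpy as np
import pandas as pd
import statsmodels.formula.api as smf
from scipy import stats

rng = np.random.default_rng(2024)

# Hypothetical data: 30 clinical sites x 20 patients, one predictor x.
n_sites, n_per_site = 30, 20
site = np.repeat(np.arange(n_sites), n_per_site)
x = rng.normal(0.0, 1.0, site.size)
u = rng.normal(0.0, 1.0, n_sites)[site]                   # true sigma^2_u = 1
y = 5.0 + 0.5 * x + u + rng.normal(0.0, 2.0, site.size)   # true sigma^2_e = 4
data = pd.DataFrame({"site": site, "x": x, "y": y})

# Step 1: null (variance components) model, fitted by REML.
null_fit = smf.mixedlm("y ~ 1", data, groups="site").fit(reml=True)
sigma2_u = null_fit.cov_re.iloc[0, 0]
sigma2_e = null_fit.scale
icc = sigma2_u / (sigma2_u + sigma2_e)

# Step 2: compare models that differ in fixed effects using ML, not REML.
ml_null = smf.mixedlm("y ~ 1", data, groups="site").fit(reml=False)
ml_x = smf.mixedlm("y ~ x", data, groups="site").fit(reml=False)
lr_stat = 2.0 * (ml_x.llf - ml_null.llf)
p_value = stats.chi2.sf(lr_stat, df=1)  # one additional fixed effect

# Step 3: refit the retained model by REML for the final variance components.
final_fit = smf.mixedlm("y ~ x", data, groups="site").fit(reml=True)
# Group-specific intercepts beta_0 + u_j, using the predicted u_j (BLUPs).
site_intercepts = {
    g: final_fit.fe_params["Intercept"] + effect.iloc[0]
    for g, effect in final_fit.random_effects.items()
}

print(f"ICC = {icc:.3f}; LR = {lr_stat:.1f}, p = {p_value:.2g}")
```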

Interpretation Guidelines:

- Fixed effects (β coefficients) are interpreted similarly to standard regression coefficients, representing the average relationship between predictors and the outcome across all groups [11].

- Variance components ($\sigma^2_u$ and $\sigma^2_e$) indicate how much variability remains at each level after accounting for the fixed effects [13].

- The random intercepts ($u_j$) represent group-specific deviations from the overall average; their spread, summarized by $\sigma^2_u$, indicates how heterogeneous the groups are [11].

Protocol 2: Random Slope Model Implementation

Purpose: To specify and fit a random slope model that allows the effect of explanatory variables to vary across groups.

Procedure:

- Model Specification: Extend the random intercept model by adding random effects for slopes:

$$y_{ij} = \beta_0 + u_{0j} + (\beta_1 + u_{1j}) x_{ij} + e_{ij}$$

- Covariance Structure: Specify the covariance structure between random intercepts and slopes. The full model estimates three parameters at level 2: the variances of the intercepts ($\sigma^2_{u0}$) and slopes ($\sigma^2_{u1}$), and their covariance ($\sigma_{u01}$) [12].

- Model Comparison: Use likelihood ratio tests or information criteria to compare the random slope model with the nested random intercept model [1].

- Estimation: Employ REML estimation for more accurate variance component estimates [1].

- Visualization: Plot group-specific regression lines to illustrate the variability in slopes across groups [12].
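The comparison and interpretation steps can be sketched in Python, assuming numpy, pandas, scipy, and statsmodels and using simulated data with hypothetical names. Both models share the same fixed part, so their REML log-likelihoods are comparable. Because the slope variance is tested at the boundary of its parameter space (zero), the sketch reports both the naive $\chi^2_2$ p-value and a 50:50 mixture of $\chi^2_1$ and $\chi^2_2$, a commonly used correction; it also extracts the estimated covariance terms and the group-specific slopes $\beta_1 + u_{1j}$ that the visualization step would plot.

```python
import numpy as np
import pandas as pd
import statsmodels.formula.api as smf
from scipy import stats

rng = np.random.default_rng(11)

# Hypothetical data: 40 groups x 25 observations with correlated
# random intercepts (var 1.0) and slopes (var 0.25), covariance 0.2.
n_groups, n_per = 40, 25
group = np.repeat(np.arange(n_groups), n_per)
x = rng.normal(0.0, 1.0, group.size)
cov_true = np.array([[1.0, 0.2], [0.2, 0.25]])
u = rng.multivariate_normal([0.0, 0.0], cov_true, size=n_groups)
y = 2.0 + u[group, 0] + (0.8 + u[group, 1]) * x + rng.normal(0.0, 1.0, group.size)
data = pd.DataFrame({"group": group, "x": x, "y": y})

# Same fixed part in both models, so their REML log-likelihoods are comparable.
ri_fit = smf.mixedlm("y ~ x", data, groups="group").fit(reml=True)
rs_fit = smf.mixedlm("y ~ x", data, groups="group", re_formula="~x").fit(reml=True)

# The random slope model adds sigma^2_u1 and sigma_u01 (2 parameters). With
# sigma^2_u1 = 0 on the boundary, the naive chi2(2) p-value is conservative.
lr = 2.0 * (rs_fit.llf - ri_fit.llf)
p_naive = stats.chi2.sf(lr, 2)
p_mixture = 0.5 * stats.chi2.sf(lr, 1) + 0.5 * stats.chi2.sf(lr, 2)

# Estimated level-2 covariance matrix: rows/columns are intercept and x.
sigma2_u1 = rs_fit.cov_re.iloc[1, 1]
sigma_u01 = rs_fit.cov_re.iloc[0, 1]

# Group-specific slopes beta_1 + u_1j, e.g. for plotting group regression lines.
group_slopes = {
    g: rs_fit.fe_params["x"] + effect.iloc[1]
    for g, effect in rs_fit.random_effects.items()
}
print(f"LR = {lr:.1f}, mixture p = {p_mixture:.2g}, slope var = {sigma2_u1:.3f}")
```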

Interpretation Guidelines:

- σ²_u1 represents the variance in slopes between groups, indicating the extent to which the relationship between the predictor and outcome differs across groups [12].

- The covariance between intercepts and slopes (σ_u01) indicates whether groups with higher baseline levels (intercepts) show stronger or weaker relationships between the predictor and outcome [12].

- For predictor values above zero (so the pattern depends on how the predictor is centered), a positive covariance indicates a fanning-out pattern of group-specific regression lines, while a negative covariance indicates a fanning-in pattern [12].

Table 2: Decision Framework for Random Effects Specification

| Research Goal | Recommended Model | Key Parameters | Interpretation Focus |
|---|---|---|---|
| Control for group effects | Random Intercept | $\sigma^2_u$, ICC | Proportion of variance between groups |
| Test differential effects across groups | Random Slope | $\sigma^2_{u1}$, $\sigma_{u01}$ | Variability in predictor-outcome relationships |
| Full exploration of group differences | Random Intercept and Slope | $\sigma^2_{u0}$, $\sigma^2_{u1}$, $\sigma_{u01}$ | Both baseline and relationship differences |
| Simple fixed effects only | Single-level Model | β coefficients | Average effects ignoring grouping |

Application to Research Design

Signaling Pathways in Multilevel Analysis

The conceptual relationships between model components in multilevel analysis can be visualized as a signaling pathway that illustrates how variability flows through the different levels of the model structure.

Research Reagent Solutions for Multilevel Modeling

Table 3: Essential Analytical Tools for Multilevel Modeling Research

| Research Reagent | Function | Example Implementation |
|---|---|---|
| lme4 Package (R) | Fitting linear mixed-effects models | `lmer(response ~ predictor + (1 \| group), data)` |
| PROC MIXED (SAS) | Estimating mixed models with various covariance structures | `PROC MIXED; CLASS group; MODEL y = x; RANDOM INT / SUBJECT=group; RUN;` |
| mixed Command (Stata) | Fitting multilevel mixed models | `mixed y x \|\| group:` |
| statsmodels (Python) | Estimating mixed effects models in Python | `MixedLM.from_formula("y ~ x", data, groups=data["group"])` |
| REML Estimation | Reducing the downward bias of ML variance component estimates | Default in many packages (e.g., lme4, SAS PROC MIXED); typically used for final models |
| ML Estimation | Enabling comparison of models with different fixed effects | Used for model comparison via likelihood ratio tests |
| AIC/BIC Criteria | Comparing non-nested models and penalizing complexity | AIC = 2k - 2 ln(L), where k is the number of estimated parameters and L is the maximized likelihood [9] |
| Intraclass Correlation | Measuring proportion of group-level variance | ICC = $\sigma^2_u / (\sigma^2_u + \sigma^2_e)$ [9] |

Advanced Applications and Considerations

Covariance Between Intercepts and Slopes

In random slope models, the covariance between intercepts and slopes (σ_u01) provides important information about the relationship between baseline levels and treatment effects or predictor relationships across groups [12]. This parameter can reveal systematic patterns in how interventions operate across different contexts or populations.

Three possible scenarios exist for this covariance:

- Positive covariance: Groups with higher intercepts show stronger relationships between predictors and outcomes (fanning-out pattern)

- Negative covariance: Groups with higher intercepts show weaker relationships between predictors and outcomes (fanning-in pattern)

- Zero covariance: No systematic relationship between intercepts and slopes across groups [12]
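These scenarios follow from the variance of the group-specific lines at a given predictor value, $\mathrm{Var}(u_{0j} + u_{1j}x) = \sigma^2_{u0} + 2\sigma_{u01}x + \sigma^2_{u1}x^2$. The pure-Python sketch below, with hypothetical variance components, shows that a positive covariance widens the spread as $x$ increases from zero, whereas a negative covariance first narrows it. It also shows why the reading depends on centering: with a negative covariance the spread is smallest at $x = -\sigma_{u01}/\sigma^2_{u1}$, and beyond that point the lines cross and fan out again.

```python
def between_group_variance(x, var_u0, var_u1, cov_u01):
    """Variance of the group-specific lines u_0j + u_1j * x at predictor value x."""
    return var_u0 + 2.0 * cov_u01 * x + var_u1 * x ** 2


xs = [0.0, 1.0, 2.0]
# Hypothetical variance components; only the sign of the covariance differs.
fan_out = [between_group_variance(x, 1.0, 0.25, 0.3) for x in xs]   # about [1.0, 1.85, 3.2]
fan_in = [between_group_variance(x, 1.0, 0.25, -0.3) for x in xs]   # about [1.0, 0.65, 0.8]

# With cov_u01 = -0.3 and var_u1 = 0.25, the spread is smallest at x = 1.2.
narrowest_x = -(-0.3) / 0.25
```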

Understanding these patterns is particularly valuable in drug development research where differential treatment effects across sites or patient subgroups may inform personalized medicine approaches or implementation strategies.

Assumptions and Diagnostic Procedures

Multilevel models share many assumptions with general linear models but require additional considerations due to the hierarchical structure [1]:

- Linearity: Relationships between predictors and outcomes are linear at each level of the hierarchy

- Normality: Residuals at each level follow normal distributions

- Homoscedasticity: Constant variance of residuals within levels

- Independence: Observations are independent conditional on the random effects

Diagnostic procedures should include examination of level-specific residuals, checking for normality and constant variance, and assessing potential influential cases using measures such as Cook's distance adapted for multilevel models [9]. For random slope models, it is particularly important to check the distribution of group-specific slopes and their relationship with intercepts.
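A minimal sketch of level-specific residual checks, assuming numpy, pandas, scipy, and statsmodels, with simulated data and hypothetical names. Level-1 residuals come from `resid` (observed minus fitted values that include the predicted random effects), and level-2 residuals are the predicted random intercepts. Because those predictions are shrunk toward zero, formal tests on them are only approximate and are best read alongside Q-Q plots. Influence measures such as a multilevel Cook's distance are not shown here.

```python
import numpy as np
import pandas as pd
import statsmodels.formula.api as smf
from scipy import stats

rng = np.random.default_rng(3)

# Hypothetical two-level data: 25 groups x 15 observations.
n_groups, n_per = 25, 15
group = np.repeat(np.arange(n_groups), n_per)
x = rng.normal(0.0, 1.0, group.size)
y = 1.0 + 0.5 * x + rng.normal(0.0, 0.8, n_groups)[group] + rng.normal(0.0, 1.0, group.size)
data = pd.DataFrame({"group": group, "x": x, "y": y})

fit = smf.mixedlm("y ~ x", data, groups="group").fit(reml=True)

# Level-1 residuals: observed y minus fitted values (fixed part + predicted random effects).
level1_resid = np.asarray(fit.resid)
fitted = np.asarray(fit.fittedvalues)

# Level-2 residuals: predicted random intercepts (BLUPs), one per group.
level2_resid = np.array([effect.iloc[0] for effect in fit.random_effects.values()])

# Normality at each level (Shapiro-Wilk); inspect Q-Q plots as well.
_, p_norm_level1 = stats.shapiro(level1_resid)
_, p_norm_level2 = stats.shapiro(level2_resid)

# Rough homoscedasticity check: does residual spread track the fitted values?
spread_corr = np.corrcoef(fitted, np.abs(level1_resid))[0, 1]

print(f"Shapiro p (level 1) = {p_norm_level1:.3f}, (level 2) = {p_norm_level2:.3f}")
print(f"corr(fitted, |resid|) = {spread_corr:.3f}")
```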

Power and Sample Size Considerations

Statistical power in multilevel models depends differently on level 1 and level 2 sample sizes [1]. Power for detecting level 1 effects is primarily determined by the total number of individual observations, while power for level 2 effects depends more strongly on the number of groups [1]. To detect cross-level interactions, recommendations suggest at least 20 groups, though fewer may suffice when focusing solely on fixed effects [1].

Table 4: Variance Component Interpretation in Research Context

| Variance Pattern | Interpretation | Research Implications |
|---|---|---|
| High $\sigma^2_u$, low $\sigma^2_e$ | Substantial between-group variation relative to within-group variation | Focus interventions on group-level factors; consider contextual effects |
| Low $\sigma^2_u$, high $\sigma^2_e$ | Minimal between-group variation relative to within-group variation | Focus interventions on individual-level factors; group context less important |
| Significant $\sigma^2_{u1}$ | Important variability in predictor-outcome relationships across groups | Consider moderated implementation; personalized approaches based on group characteristics |
| Nonsignificant variance components | Minimal evidence for group-level variability | Consider simplifying model by removing random effects |

Random intercepts, random slopes, and variance components form the foundational framework of multilevel modeling approaches that are essential for analyzing nested data structures in biomedical and pharmaceutical research. These methodological tools enable researchers to address complex questions about variability across organizational levels while appropriately accounting for the dependency inherent in clustered data. The protocols and applications outlined in this document provide researchers with practical guidance for implementing these techniques within the context of drug development and scientific research, supporting more accurate and nuanced investigation of hierarchical data structures.

In biomedical research, data are frequently hierarchically organized, creating nested structures that violate the fundamental statistical assumption of data independence. This nesting occurs when experimental units are clustered within higher-level groups, such as multiple cells nested within individual subjects, repeated measurements nested within experimental animals, or patients nested within different clinical centers in a multicenter trial [14] [15]. Ignoring this inherent data structure can lead to substantially inflated Type I error rates, underestimated standard errors, and ultimately, incorrect conclusions about intervention effects [16] [15].

The growing complexity of preclinical and clinical research designs has increased the prevalence of nested data structures, particularly with advances in measurement technologies that generate massive amounts of data at multiple biological levels. For instance, spectroscopic microscopy studies in lung cancer research may collect data from hundreds of thousands of pixels nested within cells, which are in turn nested within individual subjects, who are finally grouped by diagnostic category [14]. This multi-level nesting presents both analytical challenges and opportunities for researchers who employ appropriate statistical methods that explicitly account for these dependencies.

Multilevel modeling (MLM), also known as hierarchical linear modeling, provides a comprehensive statistical framework for analyzing nested data by simultaneously modeling variation at each level of the hierarchy [1]. These models allow researchers to partition variance components across different levels, test hypotheses about cross-level effects, and obtain accurate parameter estimates that properly account for the clustered nature of the data. The application of MLM has expanded substantially with increased computing power and software availability, making these techniques accessible to researchers across various biomedical disciplines [17] [1].

Statistical Foundations of Multilevel Modeling

Variance Partitioning in Nested Designs

The fundamental concept underlying multilevel modeling is the partitioning of total variance into components attributable to different levels of the data hierarchy. In a simple two-level design with measurements nested within subjects, the total variance in the outcome variable is decomposed into between-subject variance and within-subject variance [14] [1]. This partitioning provides crucial information about the extent to which observed variability is due to differences between higher-level units versus differences within those units.

The intraclass correlation coefficient (ICC) quantifies the degree of dependency among observations within the same cluster by representing the proportion of total variance that lies between clusters [1] [15]. ICC values range from 0 to 1, with higher values indicating greater similarity among observations within the same cluster and thus stronger nesting effects that must be accounted for analytically. The formula for ICC in a two-level model is:

ICC = σ²between / (σ²between + σ²within)

where σ²between represents the between-cluster variance and σ²within represents the within-cluster variance [15]. The ICC directly influences the effective sample size in clustered data designs, with higher ICC values substantially reducing the effective independent sample size and statistical power for detecting intervention effects.
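The link between the ICC and effective sample size can be made concrete with Kish's design effect, DEFF = 1 + (m − 1) × ICC, for clusters of equal size m. The formula is a standard approximation rather than one given in [15], and the variance components below are hypothetical. This is a minimal sketch, not a power-analysis tool.

```python
# Sketch: how the ICC shrinks the effective sample size of clustered data.
# Assumes equal cluster sizes and Kish's design effect approximation
# DEFF = 1 + (m - 1) * ICC; variance components are hypothetical.


def icc(var_between: float, var_within: float) -> float:
    """Two-level ICC: between-cluster variance as a share of total variance."""
    return var_between / (var_between + var_within)


def design_effect(icc_value: float, cluster_size: int) -> float:
    """Variance inflation of a mean relative to simple random sampling."""
    return 1.0 + (cluster_size - 1) * icc_value


def effective_n(n_clusters: int, cluster_size: int, icc_value: float) -> float:
    """Number of independent observations carrying equivalent information."""
    return n_clusters * cluster_size / design_effect(icc_value, cluster_size)


rho = icc(var_between=0.5, var_within=9.5)  # hypothetical components, ICC = 0.05
for m in (5, 20, 50, 200):
    n_eff = effective_n(n_clusters=20, cluster_size=m, icc_value=rho)
    print(f"m = {m:3d}: total n = {20 * m:4d}, effective n = {n_eff:6.1f}")
# With 20 clusters, effective n can never exceed 20 / ICC = 400, however large m gets.
```

With these assumed values, 4000 observations spread over 20 clusters carry roughly the information of 365 independent observations. Adding observations within clusters therefore does little for cluster-level effects.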

Table 1: Variance Components in a Three-Level Nesting Structure

| Variance Component | Description | Interpretation |
|---|---|---|
| Between-Subject Variance (σ²betweenSubjects) | Variability in outcomes attributable to differences between subjects | Represents the variance of subject-level means around the grand mean |
| Between-Cell Variance (σ²betweenCells) | Variability in outcomes attributable to differences between cells within the same subject | Represents the variance of cell-level means around their subject-level mean |
| Between-Pixel Variance (σ²betweenPixels) | Variability in outcomes attributable to differences between pixels within the same cell | Represents the variance of pixel-level measurements around their cell-level mean |

Mathematical Formulation of Multilevel Models

The multilevel model is specified through a series of linked equations that represent relationships at each level of the data hierarchy. For a two-level model with level-1 observations (denoted with subscript i) nested within level-2 clusters (denoted with subscript j), the level-1 model represents the relationship within each cluster [1]:

Yij = β0j + β1jXij + eij

where Yij is the outcome for observation i in cluster j, β0j is the intercept for cluster j, β1j is the slope for cluster j, Xij is the predictor value for observation i in cluster j, and eij is the level-1 residual error term [1]. The unique feature of multilevel models is that the level-1 coefficients (β0j and β1j) become outcome variables in the level-2 models:

β0j = γ00 + γ01Wj + u0j

β1j = γ10 + γ11Wj + u1j

where γ00 and γ10 are level-2 intercepts, γ01 and γ11 are level-2 slopes, Wj is a level-2 predictor, and u0j and u1j are level-2 residual error terms [1]. This formulation allows researchers to model systematically varying intercepts and slopes across clusters and to test hypotheses about cross-level interactions.
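Substituting the level-2 equations into the level-1 equation gives the single combined equation in which these models are usually fitted. The step below is standard algebra in the notation above, shown to make the origin of the cross-level interaction explicit; it is not reproduced from [1].

```latex
\begin{aligned}
Y_{ij} &= (\gamma_{00} + \gamma_{01}W_j + u_{0j}) + (\gamma_{10} + \gamma_{11}W_j + u_{1j})X_{ij} + e_{ij} \\
       &= \underbrace{\gamma_{00} + \gamma_{01}W_j + \gamma_{10}X_{ij} + \gamma_{11}W_jX_{ij}}_{\text{fixed part}}
        + \underbrace{u_{0j} + u_{1j}X_{ij} + e_{ij}}_{\text{random part}}
\end{aligned}
```

The coefficient γ11 on the product WjXij is the cross-level interaction. It describes how the within-cluster slope of X changes with the cluster-level predictor W.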

Practical Consequences of Ignoring Data Nesting

Statistical and Interpretive Errors

Failure to account for data nesting in analytical approaches leads to several serious statistical errors with potentially significant scientific consequences. The most critical problem is the underestimation of standard errors for parameter estimates, particularly for higher-level predictors, which in turn inflates Type I error rates and increases the likelihood of false positive findings [15]. This occurs because conventional statistical tests assume independence of observations, and when this assumption is violated, the effective sample size is substantially smaller than the apparent sample size.

Statistical power in nested designs is influenced differently for level-1 versus level-2 effects. Power for detecting level-1 effects is primarily determined by the total number of individual observations, whereas power for detecting level-2 effects is primarily determined by the number of clusters [1] [15]. This distinction has crucial implications for study design, as increasing the number of observations within clusters does little to improve power for cluster-level effects when the number of clusters is small.

Table 2: Consequences of Ignoring Data Nesting in Different Research Scenarios

| Research Scenario | Primary Nesting Structure | Consequences of Ignoring Nesting |
|---|---|---|
| Multicenter Clinical Trial | Patients nested within clinical centers | Underestimated standard errors for treatment effects; inflated Type I error rates |
| Preclinical Animal Study | Repeated measurements nested within animals; cells nested within animals | Spurious findings of significance; overconfidence in treatment effect estimates |
| In Vitro Experiment | Multiple cells nested within treatment batches; technical replicates | Incorrect conclusions about dose-response relationships; improper variance estimation |

Impact on Sample Size and Research Reproducibility

Inappropriately ignoring nested data structures has direct consequences for sample size requirements and research reproducibility. When lower-level sample sizes are inadequate, the total variability of subject-level means increases, potentially requiring substantial increases in the number of subjects needed to maintain statistical power [14]. This relationship can be quantified through the inflation ratio (IR), which represents the proportional increase in total variance due to inadequate sampling at lower nested levels.

In a three-level nesting structure (e.g., pixels within cells within subjects), the variance of a group mean computed from ns subjects (e.g., the mean for one diagnostic category) can be expressed as [14]:

Var(X̄subject) = σ²betweenSubjects/ns + σ²betweenCells/(ns×nc) + σ²betweenPixels/(ns×nc×np)

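where ns, nc, and np represent the number of subjects, cells per subject, and pixels per cell, respectively. The inflation ratio, obtained by multiplying this variance by ns and dividing by σ²betweenSubjects, quantifies how much the total variance increases due to limited sampling at lower levels:

IR = [σ²betweenSubjects + σ²betweenCells/nc + σ²betweenPixels/(nc×np)] / σ²betweenSubjects

Because the number of subjects required for a given power is proportional to this variance, IR − 1 is the proportional increase in subjects needed compared with exhaustive sampling of cells and pixels.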

Research has demonstrated that with only 3 observations per lower level, the subject-level sample size may need to be increased by 208% to maintain equivalent power, while with 10 observations per lower level, the increase drops to approximately 23.8% [14]. These findings highlight the critical importance of appropriate sampling at all levels of nested designs to optimize resource allocation and research efficiency.
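The two formulas above can be evaluated directly to see how quickly the inflation ratio falls as lower-level sampling increases. This is a minimal sketch. The variance components are hypothetical placeholders and are not the values behind the figures reported in [14].

```python
# Sketch: variance of a group mean and the inflation ratio (IR) for a
# three-level design (pixels within cells within subjects).
# The variance components are hypothetical placeholders.


def var_group_mean(v_subj, v_cell, v_pix, n_s, n_c, n_p):
    """Variance of the mean over n_s subjects, each with n_c cells of n_p pixels."""
    return v_subj / n_s + v_cell / (n_s * n_c) + v_pix / (n_s * n_c * n_p)


def inflation_ratio(v_subj, v_cell, v_pix, n_c, n_p):
    """Per-subject variance relative to the between-subject variance alone."""
    return (v_subj + v_cell / n_c + v_pix / (n_c * n_p)) / v_subj


# Hypothetical components with large pixel-level noise.
V_SUBJ, V_CELL, V_PIX = 1.0, 0.5, 10.0

for n in (3, 10, 30):
    ir = inflation_ratio(V_SUBJ, V_CELL, V_PIX, n_c=n, n_p=n)
    # Required subjects scale with the per-subject variance, so IR - 1 is the
    # proportional increase in subjects relative to exhaustive lower-level sampling.
    print(f"n_c = n_p = {n:2d}: IR = {ir:.3f}, extra subjects = {100 * (ir - 1):.1f}%")
```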

Experimental Protocols for Nested Data Collection

Protocol 1: Optimal Allocation and Randomization in Preclinical Studies

Purpose: To implement a matching-based modeling approach for optimal intervention group allocation that accounts for complex animal characteristics at baseline, thereby normalizing confounding variability and increasing statistical power [16].

Materials and Reagents:

- Experimental Animals: Appropriate model organisms (e.g., immune deficient mice for xenograft studies)

- Test Articles: Therapeutic interventions (e.g., ARN-509, MDV3100 for prostate cancer models)

- Characterization Equipment: PSA measurement systems, body weight scales, RNA-seq profiling technology

- Analysis Software: R package 'hamlet' or web-based graphical interface (http://rvivo.tcdm.fi/)

Procedural Steps:

- Baseline Characterization: Measure all relevant baseline variables (e.g., body weight, PSA levels, genetic markers, cage conditions) for the entire animal pool prior to intervention [16].

- Optimal Matching: Apply non-bipartite matching algorithms to identify optimal submatches (pairs, triplets, or quadruplets) of animals that minimize the sum of all pairwise distances between members based on multiple baseline characteristics [16].

- Randomized Allocation: Within each optimal submatch, randomly assign members to different treatment arms using a fully blinded procedure to prevent experimenter bias [16].

- Intervention Administration: Implement the planned interventions according to standardized protocols, maintaining blinding throughout the intervention period.

- Response Monitoring: Collect longitudinal response data using consistent measurement protocols and time intervals across all treatment groups.

- Matched Analysis: Analyze intervention responses using mixed-effects models that incorporate the baseline matching information, testing for treatment effects through paired comparisons within optimal submatches [16].
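The matching and within-match randomization steps can be sketched as follows. This sketch deliberately swaps in greedy nearest-neighbour pairing as a simple stand-in for optimal non-bipartite matching. It is not the algorithm implemented in the hamlet package. Animal IDs, arm names, and baseline values are hypothetical.

```python
# Sketch: greedy matched pairs followed by randomization within each pair.
# Greedy pairing approximates, but is NOT, optimal non-bipartite matching.
import math
import random


def standardize(rows):
    """Z-score each baseline column so no single variable dominates the distance."""
    cols = list(zip(*rows))
    means = [sum(c) / len(c) for c in cols]
    sds = []
    for c, m in zip(cols, means):
        sd = math.sqrt(sum((x - m) ** 2 for x in c) / (len(c) - 1))
        sds.append(sd if sd > 0 else 1.0)  # guard against constant columns
    return [[(x - m) / s for x, m, s in zip(r, means, sds)] for r in rows]


def greedy_pairs(baseline):
    """Repeatedly take the closest remaining pair (Euclidean distance on z-scores).

    With an odd number of animals, one animal is left unpaired.
    """
    ids = list(baseline)
    z = dict(zip(ids, standardize([baseline[i] for i in ids])))
    candidates = sorted(
        (math.dist(z[a], z[b]), a, b) for k, a in enumerate(ids) for b in ids[k + 1:]
    )
    used, pairs = set(), []
    for _, a, b in candidates:
        if a not in used and b not in used:
            pairs.append((a, b))
            used.update((a, b))
    return pairs


def randomize_within_pairs(pairs, arms=("vehicle", "treatment"), seed=None):
    """Randomly assign the two members of each matched pair to different arms."""
    rng = random.Random(seed)
    allocation = {}
    for pair in pairs:
        shuffled = list(arms)
        rng.shuffle(shuffled)
        allocation.update(zip(pair, shuffled))
    return allocation


# Hypothetical baseline: (body weight in g, log PSA)
baseline = {
    "A1": (24.1, 1.2), "A2": (23.8, 1.3), "A3": (27.5, 2.4),
    "A4": (27.9, 2.2), "A5": (25.0, 0.8), "A6": (25.3, 0.9),
}
pairs = greedy_pairs(baseline)
allocation = randomize_within_pairs(pairs, seed=2024)
print(pairs)
print(allocation)
```

Greedy pairing is easy to audit, but it can leave poorly matched animals at the end of the list. Optimal matching minimizes the total distance across all submatches jointly.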

Validation Measures:

- Confirm that treatment groups show no significant differences in baseline characteristics following matched randomization (target: <0.1% of simulations showing baseline differences) [16].

- Verify that matching distance matrices at baseline correlate with post-intervention molecular profiling (e.g., RNA-seq data) to confirm that baseline differences capture meaningful biological variation [16].

Protocol 2: Variance Component Analysis for Sample Size Optimization

Purpose: To quantify variance components at different nesting levels and determine optimal sample sizes at each level that minimize total variance while considering research costs [14].

Materials:

- Measurement Systems: Technology appropriate for level-specific data collection (e.g., spectroscopic microscopy for pixel-level data)

- Statistical Software: Capable of variance component estimation (e.g., R, SAS, SPSS)

- Cost Assessment Tools: Documentation of resource requirements for each level of data collection

Procedural Steps:

- Pilot Data Collection: Collect preliminary data with sufficient sampling at all nested levels to estimate variance components.

- Variance Component Estimation: Use restricted maximum likelihood (REML) estimation or ANOVA-based methods to quantify variance at each level of the nesting structure [14].

- Inflation Ratio Calculation: Compute the inflation ratio for different combinations of level-specific sample sizes to understand how limited sampling at lower levels increases total variability [14].

- Cost Assessment: Document the relative costs of sampling at each level, including materials, personnel time, and processing requirements.

- Optimal Sample Size Determination: Apply optimization procedures to identify the combination of level-specific sample sizes that minimizes total variance given budget constraints, using the formulas [14]:

  - nc = √(costs × σ²betweenCells / (costc × σ²betweenSubjects))

  - np = √(costc × σ²betweenPixels / (costp × σ²betweenCells))

  where costs, costc, and costp are the costs of sampling one additional subject, cell, and pixel, respectively.

- Power Analysis: Conduct formal power analysis for the planned experimental design incorporating the estimated variance components and planned sample sizes at all levels.
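The allocation formulas in the optimization step translate directly into code. This is a minimal sketch. It assumes the total budget follows the cost model ns × (costs + nc × (costc + np × costp)), which is an assumption and not taken from [14]. All numeric inputs are hypothetical.

```python
# Sketch: cost-optimal cells per subject and pixels per cell, then the number
# of subjects affordable under an ASSUMED linear cost model.
import math


def optimal_allocation(v_subj, v_cell, v_pix, cost_s, cost_c, cost_p):
    """Continuous optimal cells per subject (n_c) and pixels per cell (n_p)."""
    n_c = math.sqrt(cost_s * v_cell / (cost_c * v_subj))
    n_p = math.sqrt(cost_c * v_pix / (cost_p * v_cell))
    return n_c, n_p


def subjects_for_budget(budget, n_c, n_p, cost_s, cost_c, cost_p):
    """Whole subjects affordable if each costs cost_s + n_c * (cost_c + n_p * cost_p)."""
    per_subject = cost_s + n_c * (cost_c + n_p * cost_p)
    return math.floor(budget / per_subject)


n_c, n_p = optimal_allocation(v_subj=1.0, v_cell=0.5, v_pix=2.0,
                              cost_s=100.0, cost_c=10.0, cost_p=1.0)
# Round up to whole units; comparing neighbouring integers is also reasonable.
n_c_int, n_p_int = math.ceil(n_c), math.ceil(n_p)
n_s = subjects_for_budget(5000.0, n_c_int, n_p_int, 100.0, 10.0, 1.0)
print(f"n_c = {n_c:.2f} -> {n_c_int}, n_p = {n_p:.2f} -> {n_p_int}, n_s = {n_s}")
```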

Validation Measures:

- Compare estimated variance components from pilot data with previously published values for similar experimental systems.

- Verify that the planned design achieves sufficient power (typically ≥80%) for detecting the minimally important effect size.

Analytical Approaches for Nested Data

Multilevel Modeling Techniques

Multilevel models can be specified with different combinations of fixed and random effects depending on the research questions and data structure. The three primary types of multilevel models are:

Random Intercepts Models: These models allow intercepts to vary across clusters while holding slopes constant, effectively accounting for baseline differences between clusters while assuming consistent effects of predictors across all clusters [1]. These models are particularly useful for estimating intraclass correlations and determining the proportion of variance attributable to cluster-level differences.

Random Slopes Models: These models allow slopes (the effects of predictors) to vary across clusters while holding intercepts constant, testing whether the relationship between predictors and outcomes differs across clusters [1]. These models are appropriate when researchers hypothesize that the effect of an intervention or predictor variable differs across contexts or clusters.

Random Intercepts and Slopes Models: These comprehensive models allow both intercepts and slopes to vary across clusters, representing the most realistic but also most complex modeling approach [1]. These models partition variance in both initial status and growth trajectories across clusters and require sufficient Level-2 sample sizes for stable estimation.
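One consequence of letting slopes vary is easy to check numerically. The between-cluster variance, and therefore the ICC, is no longer a single number but depends on the predictor value x. For Yij = γ00 + γ10x + u0j + u1jx + eij, the total variance at x is τ00 + 2xτ01 + x²τ11 + σ², where τ00 = Var(u0j), τ11 = Var(u1j), τ01 = Cov(u0j, u1j), and σ² = Var(eij). The sketch below uses hypothetical parameter values.

```python
# Sketch: total variance and ICC as functions of x in a random-intercepts-
# and-slopes model. Parameter values are hypothetical.


def marginal_variance(x, tau00, tau11, tau01, sigma2):
    """Total variance of Y at predictor value x."""
    return tau00 + 2 * x * tau01 + x ** 2 * tau11 + sigma2


def icc_at(x, tau00, tau11, tau01, sigma2):
    """Share of the variance at x that lies between clusters."""
    between = tau00 + 2 * x * tau01 + x ** 2 * tau11
    return between / (between + sigma2)


for x in (0.0, 1.0, 2.0, 4.0):
    v = marginal_variance(x, tau00=1.0, tau11=0.25, tau01=-0.1, sigma2=4.0)
    r = icc_at(x, tau00=1.0, tau11=0.25, tau01=-0.1, sigma2=4.0)
    print(f"x = {x:.0f}: Var(Y) = {v:.2f}, ICC = {r:.3f}")
```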

Alternative Approaches for Nested Data

While multilevel modeling represents the most comprehensive approach for analyzing nested data, several alternative methods may be appropriate in specific research contexts:

Cluster-Robust Standard Errors: This approach uses conventional regression models but adjusts standard errors to account for clustering, providing valid inference without explicitly modeling the multilevel structure [15]. This method is particularly useful when the primary interest is in fixed effects and the cluster structure is not of substantive interest.
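The sandwich idea behind cluster-robust standard errors fits in a few lines for a simple regression y = a + b·x. This is a minimal sketch of the basic CR0 estimator, with no small-sample correction. Statistical packages typically apply such corrections, so their values will differ somewhat. The toy data are hypothetical.

```python
# Sketch: OLS slope with naive and CR0 cluster-robust (sandwich) standard errors.
# Pure-Python 2x2 algebra; no small-sample correction is applied.
import math


def ols_with_cluster_se(x, y, cluster):
    n = len(x)
    # (X'X) and its inverse for the design matrix with columns [1, x].
    xtx = [[n, sum(x)], [sum(x), sum(v * v for v in x)]]
    det = xtx[0][0] * xtx[1][1] - xtx[0][1] * xtx[1][0]
    inv = [[xtx[1][1] / det, -xtx[0][1] / det], [-xtx[1][0] / det, xtx[0][0] / det]]
    xty = [sum(y), sum(a * b for a, b in zip(x, y))]
    beta = [inv[0][0] * xty[0] + inv[0][1] * xty[1],
            inv[1][0] * xty[0] + inv[1][1] * xty[1]]
    resid = [yi - beta[0] - beta[1] * xi for xi, yi in zip(x, y)]

    # Naive SE treats every observation as independent.
    sigma2 = sum(r * r for r in resid) / (n - 2)
    naive_se = math.sqrt(sigma2 * inv[1][1])

    # "Meat": sum over clusters of the outer product of summed scores X_g' e_g.
    scores = {}
    for xi, ri, g in zip(x, resid, cluster):
        s = scores.setdefault(g, [0.0, 0.0])
        s[0] += ri
        s[1] += xi * ri
    meat = [[sum(s[i] * s[j] for s in scores.values()) for j in range(2)] for i in range(2)]

    # Sandwich: inv @ meat @ inv; the slope variance is element [1][1].
    left = [[sum(inv[i][k] * meat[k][j] for k in range(2)) for j in range(2)] for i in range(2)]
    vcov = [[sum(left[i][k] * inv[k][j] for k in range(2)) for j in range(2)] for i in range(2)]
    return beta, naive_se, math.sqrt(vcov[1][1])


# Hypothetical data: x is a cluster-level exposure shared by all members of a site.
site = ["A"] * 3 + ["B"] * 3 + ["C"] * 3 + ["D"] * 3
x = [0.0] * 3 + [1.0] * 3 + [2.0] * 3 + [3.0] * 3
y = [1.0, 1.2, 0.9, 3.1, 2.9, 3.0, 2.2, 2.4, 2.1, 4.8, 5.1, 4.9]
beta, naive_se, robust_se = ols_with_cluster_se(x, y, site)
print(f"slope = {beta[1]:.3f}, naive SE = {naive_se:.3f}, cluster-robust SE = {robust_se:.3f}")
```

When every observation forms its own cluster, CR0 reduces to the heteroscedasticity-consistent HC0 estimator. This gives a convenient sanity check.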

Generalized Estimating Equations (GEE): GEE models population-average effects while accounting for within-cluster correlation using a working correlation matrix, providing robust inference for clustered data without requiring full specification of the random effects distribution [15].

Fixed Effects Models: These models control for cluster-level effects by including cluster indicators as predictors, effectively removing all between-cluster variation from the estimation of predictor effects [15]. While this approach provides consistent control for cluster-level confounding, it cannot estimate the effects of cluster-level predictors.
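The fixed-effects estimator can be computed by demeaning x and y within each cluster, which is equivalent to including one indicator per cluster. The same transformation shows why cluster-level predictors drop out: a variable that is constant within clusters becomes identically zero. This is a minimal sketch with hypothetical variable names and data.

```python
# Sketch: within-cluster (fixed-effects) slope via cluster demeaning.
from collections import defaultdict


def demean(values, cluster):
    """Subtract each cluster's mean from its members' values."""
    sums, counts = defaultdict(float), defaultdict(int)
    for v, g in zip(values, cluster):
        sums[g] += v
        counts[g] += 1
    return [v - sums[g] / counts[g] for v, g in zip(values, cluster)]


def within_slope(x, y, cluster):
    """Slope estimated only from within-cluster variation in x and y."""
    xd, yd = demean(x, cluster), demean(y, cluster)
    sxx = sum(v * v for v in xd)
    if sxx == 0:
        raise ValueError("x has no within-cluster variation; its effect is not identified")
    return sum(a * b for a, b in zip(xd, yd)) / sxx


cluster = ["s1", "s1", "s1", "s2", "s2", "s2"]
dose = [1.0, 2.0, 3.0, 1.0, 2.0, 3.0]             # varies within sites
site_size = [50.0, 50.0, 50.0, 80.0, 80.0, 80.0]  # constant within sites
response = [2.1, 3.9, 6.0, 7.2, 9.1, 10.8]

print(within_slope(dose, response, cluster))
print(demean(site_size, cluster))  # all zeros: the site-level effect is not estimable
```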

Table 3: Comparison of Analytical Approaches for Nested Data

| Method | Key Features | Advantages | Limitations |
|---|---|---|---|
| Multilevel Models | Explicitly models variance components at multiple levels | Allows cross-level interactions; estimates both within-cluster and between-cluster effects | Computational complexity; distributional assumptions |
| Cluster-Robust Standard Errors | Adjusts standard errors for clustering without changing point estimates | Simple implementation; minimal assumptions | Does not model between-cluster variance; requires a reasonably large number of clusters |
| Generalized Estimating Equations (GEE) | Models marginal means with correlated data | Robust to misspecification of correlation structure | Population-average rather than cluster-specific interpretations |
| Fixed Effects Models | Controls for cluster effects using cluster indicators | Eliminates confounding by cluster-level variables | Cannot estimate cluster-level predictor effects |

Table 4: Research Reagent Solutions for Nested Data Studies

| Resource Category | Specific Tools | Function | Application Context |
|---|---|---|---|
| Statistical Software | R (lme4, nlme, hamlet packages), SAS PROC MIXED, HLM, Mplus | Implementation of multilevel models and variance component analysis | General nested data analysis across research domains |
| Optimal Allocation Tools | hamlet R package, web-based GUI (http://rvivo.tcdm.fi/) | Matching-based intervention group allocation | Preclinical studies with multiple baseline characteristics |
| Variance Component Estimation | REML estimation procedures, ANOVA-based methods | Quantifying variance at different nesting levels | Sample size planning and optimization |
| Power Analysis Tools | simr package for R, PINT, Optimal Design | Power calculations for multilevel designs | Study planning and grant applications |
| Data Visualization | ggplot2 with faceting, specialized multilevel plotting functions | Visualizing nested data structures and model results | Exploratory data analysis and results presentation |

Properly accounting for nested data structures in clinical and preclinical research provides a critical advantage by ensuring appropriate statistical inference, optimizing resource allocation, and enhancing research reproducibility. The explicit modeling of variance components across hierarchical levels enables researchers to distinguish true intervention effects from artifactual findings arising from data non-independence, while also providing insights into the levels at which interventions exert their effects.

The integration of optimal design principles with appropriate analytical approaches represents a fundamental advancement in biomedical research methodology. By implementing the protocols and considerations outlined in this article, researchers can substantially strengthen the validity and efficiency of their studies, accelerating the discovery of meaningful therapeutic interventions and enhancing the translation of research findings into clinical practice.

In drug development, data inherently possesses a multilevel, clustered, or nested structure. This hierarchy arises from the fundamental organization of research and clinical activities. Common examples include repeated biological measurements nested within individual laboratory samples, patients clustered within different clinical trial sites, or preclinical efficacy data grouped by research institutions or experimental batches [4] [3]. The presence of this hierarchy violates the core assumption of independence in traditional statistical models like standard linear regression. Multilevel modeling (MLM), also known as hierarchical linear modeling (HLM), is a statistical technique specifically designed to account for this nested data structure. Its application ensures accurate parameter estimation, prevents the underestimation of standard errors, and ultimately leads to more valid and reliable inferences, which is critical for decision-making in the high-stakes drug development pipeline [4] [3].

Identifying Hierarchical Patterns for MLM Application

Key Indicators of Hierarchical Data Structure

Recognizing when to use MLM begins with identifying the hierarchical patterns in your dataset. The following indicators signal that a multilevel analysis is necessary:

- Non-Independence of Observations: Measurements from the same group (e.g., the same lab, clinic, or batch) are more similar to each other than to measurements from different groups. This intra-group correlation is the primary hallmark of hierarchical data [4] [3].

- Clustered Data Collection: The research design inherently involves clusters. In clinical trials, this is patients within sites; in preclinical research, it could be replicates within an experiment or experiments conducted by different technicians [3].

- Variables at Different Levels: The analysis involves predictors or variables that are measured at different levels of the hierarchy. For instance, a patient-level variable (e.g., genotype) and a site-level variable (e.g., geographic location or standard of care) may both influence the outcome [4].

- Interest in Contextual Effects: The research aims to understand how the context (e.g., the specific clinic environment or lab protocol) influences the outcome variable and its relationship with individual-level predictors [3].

Quantifying Data Hierarchy: The Intraclass Correlation Coefficient (ICC)

The Intraclass Correlation Coefficient (ICC) is a crucial metric that quantifies the degree of similarity among observations within the same cluster. It measures the proportion of the total variance in the outcome that is accounted for by the between-cluster variance [3].

Interpreting the ICC:

- ICC = 0: Indicates no correlation within clusters; observations are independent, and MLM may not be necessary.

- ICC > 0: Indicates that observations within clusters are correlated. Even a small ICC (e.g., 0.05) can justify the use of MLM, as ignoring it can lead to inflated Type I errors [3].
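For balanced data, the ICC can also be estimated in closed form with the one-way ANOVA (method-of-moments) estimator. This estimator matches the REML variance estimates of the null random-intercept model whenever the between-cluster estimate is positive. The sketch assumes equal cluster sizes and truncates a negative between-cluster variance at zero. It is an illustration, not a replacement for fitting the model.

```python
# Sketch: ANOVA estimator of the ICC for balanced clustered data.


def anova_icc(groups):
    """ICC from a list of equal-length clusters of observations."""
    k = len(groups)
    m = len(groups[0])
    if any(len(g) != m for g in groups):
        raise ValueError("this sketch assumes equal cluster sizes")
    means = [sum(g) / m for g in groups]
    grand = sum(means) / k
    ms_between = m * sum((mu - grand) ** 2 for mu in means) / (k - 1)
    ms_within = sum((y - mu) ** 2 for g, mu in zip(groups, means) for y in g) / (k * (m - 1))
    var_between = max((ms_between - ms_within) / m, 0.0)  # truncate at zero
    return var_between / (var_between + ms_within)


print(anova_icc([[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]))  # strongly clustered
print(anova_icc([[1.0, 2.0, 3.0], [1.0, 2.0, 3.0]]))  # identical clusters -> 0.0
```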

Table 1: Prevalence of MLM Application Across Disciplines (2010-2020)

| Field of Application | Percentage of Articles | Common Use Cases |
|---|---|---|
| Health / Epidemiology | 46.2% | Analyzing patient outcomes clustered within clinics or hospitals; epidemiological studies with geographic clustering [4]. |
| Social Sciences | 16.9% | Studying individual behaviors within social or organizational structures [4]. |
| Education / Psychology | 15.4% | Assessing student performance nested within schools or repeated measures within subjects [4]. |

Consequences of Ignoring Hierarchical Structure

Applying standard statistical models that assume independence to hierarchical data can result in several critical errors:

- Inaccurate Standard Errors: The standard errors of parameter estimates are typically underestimated, making effects appear more statistically significant than they truly are (increased Type I error rate) [4] [3].

- Inefficient Parameter Estimates: The model estimates may be inefficient, meaning they do not make optimal use of the information available in the data [4].

- Misleading Inferences: Ultimately, these inaccuracies can lead to incorrect scientific conclusions and poor decision-making in the drug development process, such as advancing a drug candidate based on flawed evidence [4] [3].

Application Notes and Protocols for Drug Development

Protocol 1: Assessing the Need for MLM in a Preclinical Dataset

1. Objective: To determine if the hierarchical structure of a preclinical efficacy study necessitates the use of multilevel modeling.

2. Experimental Context: A study testing the effect of a new chemical entity (NCE) on tumor growth in animal models, where multiple tumors are measured within each animal, and animals are housed in different cages (batches) [18].

3. Workflow for Hierarchical Pattern Identification: The following diagram outlines the logical decision process for determining the appropriate statistical model.

4. Methodology:

- Data Collection: Record tumor volume for each tumor, along with a unique identifier for the animal and the cage/batch.

- Software Implementation: Use statistical software capable of MLM (e.g., R `lme4`, Python `statsmodels`, SAS `PROC MIXED`).

- ICC Calculation: Fit a null model (a random-intercept model with no predictors) to partition the variance.

  - Model Formula: `lmer(Tumor_Volume ~ 1 + (1 | Animal_ID), data = tumor_data)`, where `tumor_data` is an illustrative data-frame name. Because animals are also housed in cages, replacing the random term with `(1 | Cage_ID/Animal_ID)` adds the cage level.

  - ICC: ICC = (Variance between Animals) / (Variance between Animals + Variance within Animals), using the variance components reported by `VarCorr()`.

5. Decision Criteria:

- Proceed with MLM if the ICC is substantially greater than 0 or if the experimental design includes predictors at multiple levels (e.g., drug dose at the animal level and technician experience at the batch level).

Protocol 2: Implementing a Two-Level MLM for a Multi-Site Clinical Trial

1. Objective: To correctly analyze a continuous clinical outcome (e.g., reduction in biomarker level) from a multi-site trial, accounting for patient-level and site-level effects.

2. Experimental Context: A Phase IIa clinical proof-of-concept study conducted across 20 clinical sites with varying levels of experience and patient demographics [19] [18].

3. Workflow for Multi-Site Analysis: This diagram illustrates the flow of a multilevel analysis from data collection to the interpretation of cross-level effects.

4. Methodology:

- Model Specification:

  - Level 1 (Patient-level): Y_ij = β_0j + β_1j(Treatment_ij) + e_ij, where Y_ij is the biomarker reduction for patient i at site j, β_0j is the intercept for site j, β_1j is the slope (treatment effect) for site j, and e_ij is the patient-level error term.

  - Level 2 (Site-level): β_0j = γ_00 + γ_01(Site_Experience_j) + u_0j and β_1j = γ_10 + γ_11(Site_Experience_j) + u_1j, where γ_00 and γ_10 are the average intercept and slope, γ_01 and γ_11 represent the effect of site experience on the intercept and slope, and u_0j and u_1j are the site-level random effects.

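- Software Code Example (R): `lmer(Biomarker_Reduction ~ Treatment * Site_Experience + (1 + Treatment | Site_ID), data = trial_data)`. This call is consistent with the equations above; the variable and data-frame names are illustrative, and the `Treatment:Site_Experience` coefficient estimates γ_11.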

5. Interpretation:

- Fixed Effects: The γ coefficients represent the average effects across all sites. For example, γ_11 indicates how the treatment effect varies with site experience.

- Random Effects: The variances of u_0j and u_1j indicate how much the intercepts and treatment slopes vary across sites after accounting for site experience.
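To see what γ_11 means in data, the two-level model can be simulated for 20 sites and the cross-level interaction recovered with a simple two-stage ("slopes-as-outcomes") approach. This sketch is not a mixed-model fit. Stage 1 takes a difference in means per site, and stage 2 regresses those site effects on site experience. It ignores the shrinkage and unequal precision that a proper MLM (e.g., `lme4::lmer`) handles. All parameter values are hypothetical.

```python
# Sketch: simulate the two-level trial model and recover gamma_11 in two stages.
import random
import statistics

GAMMA = {"00": 10.0, "01": 0.2, "10": 2.0, "11": 0.1}  # hypothetical fixed effects
SD_U0, SD_U1, SD_E = 1.0, 0.2, 1.0                      # hypothetical SDs


def simulate_site(experience, n_patients, rng):
    """Draw site random effects, then patient outcomes from the level-1 model."""
    u0, u1 = rng.gauss(0, SD_U0), rng.gauss(0, SD_U1)
    beta0 = GAMMA["00"] + GAMMA["01"] * experience + u0
    beta1 = GAMMA["10"] + GAMMA["11"] * experience + u1
    rows = []
    for i in range(n_patients):
        treatment = i % 2  # alternate arms for a balanced design
        rows.append((treatment, beta0 + beta1 * treatment + rng.gauss(0, SD_E)))
    return rows


def site_effect(rows):
    """Stage 1: treated minus control mean within one site."""
    treated = [y for t, y in rows if t == 1]
    control = [y for t, y in rows if t == 0]
    return statistics.fmean(treated) - statistics.fmean(control)


def simple_slope(x, y):
    """Stage 2: ordinary least-squares slope of y on x."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    return sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)


rng = random.Random(7)
experience = list(range(20))  # hypothetical: 0-19 years of site experience
effects = [site_effect(simulate_site(e, 200, rng)) for e in experience]
gamma11_hat = simple_slope(experience, effects)
print(f"estimated gamma_11 = {gamma11_hat:.3f} (true value {GAMMA['11']})")
```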

Table 2: Essential Research Reagent Solutions for MLM Analysis

| Tool / Reagent | Function in Analysis |
|---|---|
| Statistical Software (R/Python/SAS) | Provides the computational environment and specialized packages (e.g., lme4, statsmodels) for fitting multilevel models and calculating metrics like the ICC [4]. |
| Intraclass Correlation (ICC) | A key diagnostic metric that quantifies the proportion of total variance due to clustering, informing the necessity and structure of the MLM [3]. |
| Domain Expertise | Critical for correctly specifying the levels of hierarchy, selecting relevant variables at each level, and interpreting the contextual effects meaningfully within the drug development context [19]. |
| High-Quality, Structured Metadata | Accurate and consistent data on cluster-level variables (e.g., site ID, batch number, technician ID) is indispensable for building a valid multilevel model [19]. |

Identifying hierarchical patterns is a prerequisite for robust statistical analysis in drug development. The systematic application of MLM, guided by the assessment of clustering through the ICC and a clear understanding of the data's multilevel structure, prevents analytical pitfalls. It allows researchers to draw accurate conclusions about drug efficacy and safety, accounting for the complex, nested reality of their data from preclinical studies to clinical trials. This approach is fundamental to strengthening the evidence base for advancing new therapeutic entities through the development pipeline.

Multilevel models (MLMs), also known as linear mixed models, are powerful statistical tools for analyzing hierarchical or clustered data structures common in longitudinal clinical trials, organizational studies, and biomedical research. These models extend traditional general linear models but introduce additional complexity in their assumptions due to the nested nature of the data [1]. The fundamental assumptions of MLMs can be categorized into three critical areas: independence, normality, and homoscedasticity, though these requirements manifest differently across the levels of the hierarchy compared to standard regression approaches [20] [1].

Proper verification of these assumptions is crucial for obtaining valid inferences in pharmaceutical research and drug development, where multilevel data structures frequently arise from repeated patient measurements, multicenter clinical trials, or longitudinal biomarker studies. Violations can lead to biased standard errors, incorrect confidence intervals, and ultimately flawed scientific conclusions regarding treatment efficacy and safety [21].

Comprehensive Assumptions Framework

Core Assumptions of Multilevel Models

Table 1: Fundamental Assumptions of Multilevel Models

| Assumption Category | Level | Key Requirement | Consequence of Violation |
|---|---|---|---|
| Independence | Level-1 | Residuals are independent within clusters | Biased standard errors, Type I/II errors |
| | Level-2 | Random effects are independent between clusters | Incorrect variance components |
| | Cross-Level | Residuals at different levels are unrelated | Invalid hypothesis tests |
| Normality | Level-1 | Level-1 residuals are normally distributed | Less accurate standard errors and confidence intervals (fixed-effect point estimates are fairly robust) |
| | Level-2 | Random effects are multivariate normal | Incorrect random effects inferences |
| Homoscedasticity | Level-1 | Constant variance of level-1 residuals | Inefficient parameter estimates |
| | Level-2 | Constant variance of random effects across groups | Biased random effects estimates |
| Functional Form | Model Structure | Correct linear relationship specification | Model misspecification bias |

Beyond the standard assumptions of general linear models, MLMs introduce level-specific requirements for residuals and random effects. The independence assumption is modified to account for the expected correlation within clusters while maintaining independence between clusters [1] [5]. The normality assumption extends to the distribution of random effects at higher levels, while homoscedasticity must be verified separately for each level of the hierarchy [20].

Mathematical Foundation

The assumptions can be understood through the mathematical formulation of a 2-level null model:

Level 1: \( Y_{ij} = \beta_{0j} + R_{ij} \), where \( R_{ij} \sim N(0, \sigma^2) \)

Level 2: \( \beta_{0j} = \gamma_{00} + U_{0j} \), where \( U_{0j} \sim N(0, \tau_{00}) \)

Combined: \( Y_{ij} = \gamma_{00} + U_{0j} + R_{ij} \)

The critical assumptions require that \( R_{ij} \) and \( U_{0j} \) are independent of each other, predictors at one level are unrelated to errors at another level, and both residual terms follow normal distributions with constant variances [20] [21].

Experimental Validation Protocols

Workflow for Assumption Checking

Protocol 1: Verifying Independence Assumptions

Objective: Confirm that residuals are independent within clusters and random effects are independent between clusters.

Procedure:

- Extract Level-1 Residuals: Obtain conditional residuals from the fitted MLM using statistical software (e.g., `resid(model)` in R) [20].

- Extract Level-2 Random Effects: Retrieve random intercepts and slopes using `ranef(model)$GROUP`, where `GROUP` is the name of the grouping factor [20].

- Assess Cross-Level Independence: Create scatterplots of level-2 residuals against level-2 predictors and calculate correlation coefficients:

  - Code: `cor.test(l2_data$predictor, l2_data$intercept_resid)`, where `l2_data` holds one row per level-2 unit [20]

  - Interpretation: Non-significant correlations (p > 0.05) are consistent with, but do not prove, independence

- Evaluate Random Effects Independence: Check the correlation between different random effects (e.g., intercepts and slopes) by examining the estimated variance-covariance matrix (e.g., `VarCorr(model)` in R).
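It helps to know what the extracted random effects are. For a linear mixed model, `ranef()` in lme4 returns conditional modes. In a random-intercept model, these reduce to cluster-mean deviations shrunk toward zero by the reliability τ00 / (τ00 + σ²/nj). The sketch below computes them by hand. It assumes the variance components are already known (in practice they come from the fitted model) and uses the mean of cluster means as γ00, which is the GLS estimate only for balanced data. The data are hypothetical.

```python
# Sketch: empirical Bayes (BLUP) random intercepts with known variance components.
import statistics


def blup_intercepts(groups, tau00, sigma2):
    """Shrunken deviations of each cluster mean from the overall intercept."""
    means = [statistics.fmean(g) for g in groups]
    gamma00 = statistics.fmean(means)  # GLS estimate only when clusters are balanced
    out = []
    for g, mu in zip(groups, means):
        shrink = tau00 / (tau00 + sigma2 / len(g))  # reliability of the cluster mean
        out.append(shrink * (mu - gamma00))
    return out


groups = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [2.5, 3.0, 3.5], [5.0, 6.5, 7.0]]
print([round(u, 3) for u in blup_intercepts(groups, tau00=4.0, sigma2=1.0)])
```

Because of this shrinkage, the spread of estimated random effects understates the spread of the true ones, especially for small clusters. Keep this in mind when plotting or testing them.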

Acceptance Criteria: Non-significant correlations (p > 0.05) between residuals and predictors at corresponding levels, and absence of patterned residual plots.

Protocol 2: Assessing Normality Requirements

Objective: Verify normal distribution of level-1 residuals and multivariate normality of level-2 random effects.

Procedure:

- Level-1 Residual Normality:

  - Create Q-Q plots of standardized level-1 residuals

  - Perform Shapiro-Wilk test: `shapiro.test(residuals(model))` (R's implementation accepts between 3 and 5000 values)

  - Alternative: Kolmogorov-Smirnov test for distribution fit [22]

- Level-2 Random Effects Normality:

  - Generate Q-Q plots for each set of random effects

  - Use Mahalanobis distance to assess multivariate normality of random effects

  - Conduct formal tests on empirical Bayes estimates of random effects, bearing in mind that these estimates are shrunken toward zero and only approximate the distribution of the true random effects

- Influence Diagnostics:

  - Calculate Cook's distance for level-2 units

  - Compute covariance ratio statistics to identify influential clusters

Acceptance Criteria: Points approximately follow reference line in Q-Q plots, formal tests non-significant (p > 0.05), and no extreme outliers in influence diagnostics.
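The Q-Q plots used in this protocol pair each sorted, standardized residual with a theoretical normal quantile. The sketch below computes those coordinates with Python's standard library, using plotting positions (i − 0.5)/n. This is one common convention; R's `qqnorm()` uses `ppoints()`, which applies a slightly different offset when n ≤ 10. The residual values are hypothetical.

```python
# Sketch: coordinates of a normal Q-Q plot for residuals or random effects.
from statistics import NormalDist, fmean, stdev


def qq_points(values):
    """Return (theoretical normal quantile, standardized sample quantile) pairs."""
    n = len(values)
    m, s = fmean(values), stdev(values)
    ordered = sorted((v - m) / s for v in values)
    theo = [NormalDist().inv_cdf((i - 0.5) / n) for i in range(1, n + 1)]
    return list(zip(theo, ordered))


residuals = [-1.2, 0.3, 0.8, -0.4, 2.9, 0.1, -0.6, 0.5]
for t, q in qq_points(residuals):
    print(f"{t:6.2f}  {q:6.2f}")
# Points well away from the line q = t (here the 2.9 residual) suggest non-normality.
```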

Protocol 3: Evaluating Homoscedasticity

Objective: Confirm constant variance of residuals at all levels across predicted values and predictors.

Procedure:

- Level-1 Homoscedasticity:

  - Plot standardized residuals versus fitted values

  - Create residual plots against each predictor variable

  - Use the Breusch-Pagan test for heteroscedasticity

- Level-2 Homoscedasticity:

  - Plot random effects against level-2 predictors

  - Assess variability of cluster-specific residuals across groups

  - Apply Levene's test to level-1 residuals grouped by cluster to check whether residual variance is constant across clusters

- Variance Function Modeling:

  - Specify variance functions if heteroscedasticity is detected

  - Consider weighted multilevel models for unequal variances

Acceptance Criteria: Random scatter in residual plots without systematic patterns, non-significant formal tests for heteroscedasticity (p > 0.05).
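A Levene-type check of equal residual variance across clusters is a one-way ANOVA on absolute deviations from each cluster's mean. The sketch below implements the mean-centred (original) Levene statistic W with the standard library. It returns only the statistic. The p-value requires an F(k − 1, N − k) reference distribution, available for example through `scipy.stats.levene(..., center="mean")`. The residuals are hypothetical.

```python
# Sketch: mean-centred Levene statistic for residuals grouped by cluster.
from statistics import fmean


def levene_w(groups):
    """Levene's W; larger values indicate more unequal spread across groups."""
    # Absolute deviations from each cluster's own mean.
    z = [[abs(y - fmean(g)) for y in g] for g in groups]
    k = len(z)
    n_total = sum(len(g) for g in z)
    z_means = [fmean(g) for g in z]
    z_grand = sum(sum(g) for g in z) / n_total
    between = sum(len(g) * (zm - z_grand) ** 2 for g, zm in zip(z, z_means))
    within = sum((v - zm) ** 2 for g, zm in zip(z, z_means) for v in g)
    return (n_total - k) / (k - 1) * between / within


resid_by_cluster = [[-0.5, 0.2, 0.4, -0.1], [-2.1, 1.8, 0.3, -0.2], [0.1, -0.3, 0.2, 0.0]]
print(round(levene_w(resid_by_cluster), 3))
```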

Visualization and Diagnostic Tools

Diagnostic Plot Framework

Implementation in Statistical Software

Table 2: Diagnostic Procedures by Software Platform

| Software | Residual Extraction | Random Effects Extraction | Diagnostic Plots | Formal Tests |
|---|---|---|---|---|
| R/lme4 | `resid(model)` | `ranef(model)` | `plot(model)` | `shapiro.test()` |
| SPSS | /SAVE = RESID | /SAVE = PRED FIXPRED (random-effect contribution = PRED − FIXPRED) | Chart Builder | EXAMINE VARIABLES |
| Stata | `predict r, residuals` | `predict u, reffects` | `lgraph` | `swilk r` |
| Mplus | SAVEDATA: FILE | SAVEDATA: FILE | PLOT: TYPE = PLOT1 | MODEL FIT: CHISQ |

Research Reagent Solutions

Table 3: Essential Analytical Tools for MLM Diagnostics

| Tool Category | Specific Implementation | Function in Diagnostic Process |
|---|---|---|
| Residual Extraction | R: `resid()`, lme4 package | Extracts level-1 conditional residuals for normality checks |
| Random Effects Extraction | R: `ranef()`, Stata: `predict u, reffects` | Obtains BLUPs for level-2 assumption verification |
| Normality Testing | Shapiro-Wilk, Kolmogorov-Smirnov | Formal tests for distributional assumptions |
| Influence Diagnostics | Cook's Distance, DFBETAS | Identifies influential level-2 units |
| Variance-Covariance Examination | `VarCorr()` in R, `estat icc` in Stata | Assesses random effects covariance structure |
| Visualization Packages | ggplot2, lattice in R | Creates diagnostic plots for assumption checking |

Remediation Strategies for Violations

When assumption violations are detected, several remediation strategies are available:

Non-Normal Residuals:

- Transformation of dependent variable (log, square root)

- Robust estimation methods with heavy-tailed distributions

- Bootstrap confidence intervals for fixed effects

Heteroscedasticity:

- Variance function modeling in the level-1 error structure

- Weighted multilevel models with explicit variance specifications

- Heteroscedasticity-consistent standard errors

Dependent Errors:

- Alternative covariance structures for residuals (e.g., first-order autoregressive [AR(1)], Toeplitz [TOEP])

- Additional random effects to account for unmeasured clustering

- Spatial or temporal correlation structures for longitudinal data

Functional Form Misspecification:

- Polynomial terms for nonlinear relationships

- Spline functions or generalized additive mixed models

- Interaction terms to capture moderated relationships
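For the bootstrap option listed under non-normal residuals, the essential design choice is to resample whole level-2 units rather than individual observations, so that each bootstrap sample preserves the within-cluster dependence. The sketch below assumes a list of clusters, each a list of outcome values, and uses the unweighted mean of cluster means as a simple stand-in for the fixed intercept; in a real analysis the mixed model would be refitted in every replicate. The function names are hypothetical, and the percentile interval shown is only one of several bootstrap interval types.

```python
import random
import statistics


def cluster_bootstrap_ci(clusters, statistic, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap CI that resamples whole clusters with replacement.

    Each replicate draws len(clusters) clusters, keeping every cluster's
    level-1 observations together, and recomputes `statistic` on them.
    """
    rng = random.Random(seed)
    k = len(clusters)
    estimates = sorted(
        statistic([clusters[rng.randrange(k)] for _ in range(k)])
        for _ in range(n_boot)
    )
    lo_idx = round((alpha / 2) * (n_boot - 1))
    hi_idx = round((1 - alpha / 2) * (n_boot - 1))
    return estimates[lo_idx], estimates[hi_idx]


def mean_of_cluster_means(clusters):
    """Unweighted mean of cluster means (stand-in for a fixed intercept)."""
    return statistics.fmean(statistics.fmean(c) for c in clusters)


# Illustrative data: 30 clusters with a random intercept (SD 2) around 10.
rng = random.Random(7)
clusters = []
for _ in range(30):
    u = rng.gauss(0, 2)
    size = rng.randint(3, 8)
    clusters.append([10 + u + rng.gauss(0, 1) for _ in range(size)])

low, high = cluster_bootstrap_ci(clusters, mean_of_cluster_means)
print(f"95% cluster-bootstrap CI for the intercept: [{low:.2f}, {high:.2f}]")
```

Resampling individual observations instead would treat correlated measurements as independent and typically understate the width of the interval.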

Reporting Standards and Documentation

Comprehensive reporting of assumption checks is essential for reproducible research. The LEVEL (Logical Explanations & Visualizations of Estimates in Linear mixed models) guidelines recommend documenting [21] [23]:

- Variance Partitioning: Report variance components and intraclass correlation coefficients

- Diagnostic Procedures: Explicitly describe methods used for checking assumptions

- Visual Evidence: Include diagnostic plots in supplementary materials

- Remediation Actions: Document any corrections applied for assumption violations

- Software Implementation: Specify software, packages, and code used for diagnostics

Proper documentation ensures transparency and enables other researchers to evaluate the validity of multilevel modeling results in scientific publications, particularly in regulatory submissions for drug development.

Rigorous attention to the fundamental assumptions of independence, normality, and homoscedasticity in multilevel modeling is essential for valid statistical inference in biomedical and pharmaceutical research. The protocols and diagnostic frameworks presented here provide researchers with comprehensive tools for verifying these requirements and implementing appropriate corrections when violations occur. By systematically applying these validation procedures, scientists can enhance the reliability of their conclusions regarding drug efficacy, patient outcomes, and longitudinal biomarker patterns in complex multilevel data structures.

Implementing Multilevel Models: Methodological Frameworks for Drug Development Applications

MLM within the Model-Informed Drug Development (MIDD) Paradigm

Model-Informed Drug Development (MIDD) employs a wide array of quantitative approaches to streamline drug development and inform regulatory and internal decision-making. Among these, multilevel modeling (MLM) stands out as a powerful statistical framework for analyzing data with inherent hierarchical or clustered structures. MLM, also known as hierarchical linear modeling or mixed-effects modeling, accounts for correlations among observations nested within higher-level units, such as repeated measurements within patients, patients within clinical sites, or measurements collected across successive biological cycles [24] [25].

Within the MIDD paradigm, MLM provides a robust methodology for understanding complex, layered data generated throughout the drug development lifecycle. Its application is crucial for deriving meaningful insights from multi-source data, ensuring accurate parameter estimation, and ultimately, for making efficient and informed decisions during drug development programs [25].

Theoretical and Methodological Foundations

Multilevel modeling is fundamentally designed to handle non-independence in data, a common feature in biomedical research where observations are nested within larger groups [25].

Core Concepts of MLM

- Data Hierarchy: A common hierarchical structure in clinical research involves repeated observations (Level-1) nested within individual patients (Level-2), who may further be nested within clinical sites (Level-3) [25]. For example, in a longitudinal pharmacokinetic (PK) study, drug concentration measurements (Level-1) are nested within subjects (Level-2).

- Fixed and Random Effects: Fixed effects represent the average relationship between predictors and the outcome across the entire population (e.g., the average effect of a drug dose on concentration). Random effects capture the variability in these relationships across higher-level units (e.g., how the baseline drug concentration or the slope of the dose-response relationship varies randomly from patient to patient) [24] [25].

- Variance Partitioning: MLM partitions the total variance in the outcome into different components attributable to each level of the hierarchy (e.g., within-patient variance and between-patient variance) [25].
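As a worked illustration of variance partitioning, the intraclass correlation for a two-level random-intercept model is ICC = τ² / (τ² + σ²), where τ² is the between-cluster (for example, between-patient) variance and σ² is the within-cluster variance. The sketch below assumes plain lists of outcomes per cluster and uses the classical one-way ANOVA (method-of-moments) estimator with the usual adjusted cluster size for unbalanced data; mixed-model software would normally report REML estimates instead, which can differ. The function name `anova_icc` is hypothetical.

```python
def anova_icc(groups):
    """One-way ANOVA estimates of (tau2, sigma2, icc) for a random-intercept
    model. `groups` is a list of clusters, each a list of numeric outcomes.
    Negative tau2 estimates are truncated at zero."""
    k = len(groups)
    sizes = [len(g) for g in groups]
    n_total = sum(sizes)
    if k < 2 or n_total <= k:
        raise ValueError("need at least 2 clusters and more observations than clusters")
    grand_mean = sum(sum(g) for g in groups) / n_total
    means = [sum(g) / len(g) for g in groups]
    ss_between = sum(n * (m - grand_mean) ** 2 for n, m in zip(sizes, means))
    ss_within = sum((y - m) ** 2 for g, m in zip(groups, means) for y in g)
    ms_between = ss_between / (k - 1)
    ms_within = ss_within / (n_total - k)
    # Adjusted average cluster size; equals n when all clusters have n members.
    n0 = (n_total - sum(n * n for n in sizes) / n_total) / (k - 1)
    tau2 = max((ms_between - ms_within) / n0, 0.0)
    sigma2 = ms_within
    total = tau2 + sigma2
    if total == 0:
        raise ValueError("outcome has no variance")
    return tau2, sigma2, tau2 / total


# Three patients with three repeated measurements each (toy numbers).
tau2, sigma2, icc = anova_icc([[1, 2, 3], [4, 5, 6], [7, 8, 9]])
print(f"tau2={tau2:.3f}, sigma2={sigma2:.3f}, ICC={icc:.3f}")
# prints: tau2=8.667, sigma2=1.000, ICC=0.897
```

Here about 90% of the total variance lies between patients, so treating the nine measurements as independent would badly overstate the effective sample size.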

The Multilevel Modeling Cycle for Research

Applying MLM within MIDD follows a cyclical, iterative process that aligns with the full-cycle research methodology, which emphasizes dynamic interaction between observation, theory building, and experimentation [26]. The process is not linear; insights from later stages often necessitate returning to earlier steps to refine the model, reflecting the iterative nature of scientific discovery and model-informed development [26].

The following diagram illustrates this iterative research cycle, which integrates the multidisciplinary nature of MIDD.

Application Notes: Utilizing MLM for Analyzing Cyclical Data in MIDD

Biological rhythms, such as circadian cycles or menstrual cycles, can significantly influence drug pharmacokinetics and pharmacodynamics [27]. MLM provides an ideal framework for analyzing this type of cyclical data.

Key Considerations for Cyclical Data

- Within-Person Process: Cyclical influences are fundamentally within-person processes. Analyzing them as between-subject variables conflates within-subject variance with between-subject variance and lacks validity [27]. MLM naturally handles this by modeling within-cycle and between-cycle variations simultaneously.

- Phase Coding: Accurately coding the phase of a cycle (e.g., follicular vs. luteal phase in a menstrual cycle) is critical. This requires precise measurement of cycle start dates and, ideally, confirmation of ovulation for hormonal cycles [27].

- Modeling Hormonal Interactions: Complex interactions, such as between estradiol (E2) and progesterone (P4), can be modeled using MLM to predict outcomes like heart rate variability across different cycle phases [27].
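One common way to avoid conflating within-person and between-person variance, the problem flagged in the first consideration, is person-mean (within-cluster) centering of a time-varying predictor. Each person's mean enters the level-2 model as a between-person predictor, and the deviation from that mean enters the level-1 model as the within-person predictor. The sketch assumes long-format records of (participant ID, value), such as daily P4 measurements; the function name and the toy values are hypothetical.

```python
from collections import defaultdict


def person_mean_center(records):
    """Split a time-varying predictor into between- and within-person parts.

    records: list of (person_id, value) pairs in long format.
    Returns (person_id, value, person_mean, within_deviation) tuples; the
    person mean is the level-2 predictor and the deviation the level-1 one.
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for pid, value in records:
        totals[pid] += value
        counts[pid] += 1
    centered = []
    for pid, value in records:
        mean = totals[pid] / counts[pid]
        centered.append((pid, value, mean, value - mean))
    return centered


# Toy hormone values for two participants (hypothetical units).
rows = person_mean_center([("A", 2.0), ("A", 4.0), ("B", 10.0), ("B", 14.0)])
for row in rows:
    print(row)
# first row printed: ('A', 2.0, 3.0, -1.0)
```

Because the within-person deviations sum to zero for each participant, they carry no between-person information, which is what lets the two effects be estimated separately.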

Exemplar Application: Modeling Circadian Influence on Drug Clearance

Background: A drug for hypertension is suspected to have varying clearance rates due to circadian rhythms. Understanding this variation is crucial for optimizing dosing schedules.

MLM Approach: A two-level model is constructed with repeated PK samples (Level-1) nested within patients (Level-2).

- Level-1 Model (Within-Patient):

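$$\text{Clearance}_{ij} = \beta_{0j} + \beta_{1j}\,\text{TimeOfDay}_{ij} + e_{ij}$$

Here, $\text{Clearance}_{ij}$ is the observed clearance for patient $j$ at time $i$; $\beta_{0j}$ is the intercept for patient $j$ (the patient's average clearance when time of day is centered); $\beta_{1j}$ captures the within-patient effect of time of day on clearance for patient $j$; and $e_{ij}$ is the within-patient residual.

- Level-2 Model (Between-Patient):

$$\beta_{0j} = \gamma_{00} + \gamma_{01}\,\text{Age}_j + u_{0j}$$

$$\beta_{1j} = \gamma_{10} + u_{1j}$$

The patient-specific average clearance ($\beta_{0j}$) is modeled as a function of the overall average clearance ($\gamma_{00}$, interpretable as such when age is centered), the effect of the patient's age ($\gamma_{01}$), and a random patient-level deviation ($u_{0j}$). The circadian slope ($\beta_{1j}$) is allowed to vary randomly across patients ($u_{1j}$) around an average effect ($\gamma_{10}$).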

MIDD Insight: This model quantifies the average circadian effect on clearance ($\gamma_{10}$) and shows whether this effect is consistent across patients (the variance of $u_{1j}$). If the circadian effect is significant but highly variable across patients, a personalized dosing approach might be warranted.
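To make the roles of $\gamma_{10}$ and the variance of $u_{1j}$ concrete, the sketch below simulates data from the two-level clearance model with hypothetical parameter values and then recovers them with a simple two-stage approach (a separate least-squares line per patient). This is not the maximum likelihood or REML fit that mixed-model software performs; it is shown only because it makes the variance decomposition visible: the observed spread of per-patient slopes equals the true between-patient slope variance plus sampling noise, so the average sampling variance is subtracted. All function names, the centered clock times, and the parameter values are assumptions for illustration.

```python
import random
import statistics


def simulate_patients(n_patients, times, gamma_00, gamma_01, gamma_10,
                      sd_u0, sd_u1, sd_e, seed=0):
    """Draw (time, clearance) pairs per patient from the two-level model."""
    rng = random.Random(seed)
    patients = []
    for _ in range(n_patients):
        age_c = rng.uniform(-20, 20)  # centered age (hypothetical)
        b0 = gamma_00 + gamma_01 * age_c + rng.gauss(0, sd_u0)  # beta_0j
        b1 = gamma_10 + rng.gauss(0, sd_u1)                     # beta_1j
        patients.append([(t, b0 + b1 * t + rng.gauss(0, sd_e)) for t in times])
    return patients


def ols_slope(points):
    """Least-squares slope for one patient and its estimated sampling variance."""
    xs = [t for t, _ in points]
    ys = [y for _, y in points]
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in points) / sxx
    resid = [y - my - slope * (x - mx) for x, y in points]
    s2 = sum(r * r for r in resid) / (len(points) - 2)
    return slope, s2 / sxx


def two_stage_summary(patients):
    """Estimate gamma_10 and Var(u_1j) from per-patient slopes."""
    fits = [ols_slope(p) for p in patients]
    slopes = [s for s, _ in fits]
    mean_sampling_var = statistics.fmean(v for _, v in fits)
    gamma_10_hat = statistics.fmean(slopes)
    # Observed slope variance = Var(u_1j) + sampling noise, so subtract it.
    var_u1_hat = max(statistics.variance(slopes) - mean_sampling_var, 0.0)
    return gamma_10_hat, var_u1_hat


hours = [-9, -6, -3, 0, 3, 6, 9]  # clock time centered at midday (assumed)
data = simulate_patients(200, hours, gamma_00=5.0, gamma_01=-0.02,
                         gamma_10=0.05, sd_u0=0.5, sd_u1=0.03, sd_e=0.3, seed=1)
g10, var_u1 = two_stage_summary(data)
print(f"gamma_10 ~ {g10:.3f}, Var(u_1j) ~ {var_u1:.5f} (true 0.05, 0.00090)")
```

A linear time-of-day term is kept here only to mirror the model as written; it describes a trend across the sampling window rather than a full periodic rhythm.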

Experimental Protocols

Protocol: A Multilevel Analysis of Drug Response Across Clinical Sites

1. Objective: To quantify the between-site variability in drug response for a Phase III clinical trial and identify site-level characteristics (e.g., regional practices, patient demographics) that explain this variability.

2. Experimental Design:

- Design: Retrospective analysis of a multicenter, randomized controlled trial.

- Participants: Patients from all investigative sites included in the trial.

- Primary Outcome: Clinical response measured by a pre-specified continuous or binary endpoint at week 12.

3. Data Collection:

- Collect patient-level data: outcome, treatment arm, baseline covariates (e.g., disease severity, age, sex).

- Collect site-level data: geographic region, site type (academic vs. private), average experience of investigators.

4. Statistical Analysis - MLM Specification:

- Model for Continuous Outcome:

- Level-1 (Patient):

$$Y_{ij} = \beta_{0j} + \beta_{1j}\,\text{Treatment}_{ij} + \beta_{2}\,\text{Covariate1}_{ij} + \cdots + e_{ij}$$

- Level-2 (Site):

$$\beta_{0j} = \gamma_{00} + \gamma_{01}\,\text{SiteType}_j + u_{0j}$$

$$\beta_{1j} = \gamma_{10} + u_{1j}$$

- Where: $Y_{ij}$ is the outcome for patient $i$ at site $j$; $\beta_{0j}$ is the intercept for site $j$ (e.g., the average control-group response); $\beta_{1j}$ is the treatment effect at site $j$; $u_{0j}$ and $u_{1j}$ are the random effects for the intercept and slope, respectively, capturing site-level variance; and $e_{ij}$ is the patient-level residual.

5. Interpretation and Reporting:

- Report the fixed effects ($\gamma_{10}$ for the average treatment effect; $\gamma_{01}$ for the effect of site type).

- Report the variance components (the variances of $u_{0j}$ and $u_{1j}$). A significant variance of $u_{1j}$ indicates heterogeneity of the treatment effect across sites.

- Use the model to shrink site-specific estimates toward the overall effect, providing more reliable estimates than analyzing each site separately [25].
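The shrinkage described in the last step can be illustrated for site intercepts. Under a random-intercept model with known variance components, the empirical Bayes (BLUP-type) estimate for site j pulls the raw site mean toward the overall mean by the reliability weight λ_j = τ² / (τ² + σ²/n_j), so small sites borrow more strength from the rest of the trial. This is a minimal sketch that treats τ², σ², and the overall mean as already estimated and ignores covariates; the function name, site labels, and numbers are hypothetical. Analogous weighting applies to site-specific treatment effects, with the slope variance in place of τ².

```python
def shrink_site_means(site_data, grand_mean, tau2, sigma2):
    """Empirical Bayes shrinkage of raw site means toward the grand mean.

    site_data: dict mapping site -> (n_patients, raw_mean).
    Returns dict mapping site -> (reliability weight, shrunken mean).
    """
    shrunken = {}
    for site, (n, raw_mean) in site_data.items():
        weight = tau2 / (tau2 + sigma2 / n)  # reliability lambda_j
        shrunken[site] = (weight, grand_mean + weight * (raw_mean - grand_mean))
    return shrunken


# Two hypothetical sites with the same raw mean but different sizes.
sites = {"large_site": (40, 14.0), "small_site": (2, 14.0)}
results = shrink_site_means(sites, grand_mean=10.0, tau2=1.0, sigma2=4.0)
for site, (w, est) in results.items():
    print(f"{site}: weight={w:.2f}, shrunken mean={est:.2f}")
# prints: large_site: weight=0.91, shrunken mean=13.64
#         small_site: weight=0.33, shrunken mean=11.33
```

Both sites report the same raw mean, but the two-patient site is pulled much closer to the overall mean because its raw estimate is far less reliable.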

Protocol: Intensive Longitudinal Design for Cyclical Exposure-Response

1. Objective: To characterize the within-patient relationship between drug exposure and a biomarker response across a biological cycle (e.g., menstrual cycle) in a Phase I study.

2. Experimental Design:

- Design: Repeated measures within subjects over multiple cycles.

- Participants: Healthy volunteers or patients with regular cycles.

- Sampling: Intensive pharmacokinetic and pharmacodynamic (PK/PD) sampling at key phases of the cycle (e.g., at least three observations per cycle phase) [27].

3. Data Collection:

- Record precise cycle start dates and confirm phases with ovulation tests or hormone measurements [27].

- Collect PK samples (e.g., for drug concentration) and PD measurements (e.g., biomarker levels) at each visit.

- Use daily diaries or ecological momentary assessment (EMA) for subjective outcomes [27].
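Before fitting the model, the sampling rule from the design step (at least three observations per cycle phase) can be checked on the collected data. The sketch below assumes each visit has been reduced to a (subject, cycle, phase) tuple and that the expected phase labels are known in advance; the function name and the two-phase labeling are hypothetical.

```python
from collections import Counter


def undersampled_cells(observations, phases, min_per_phase=3):
    """List (subject, cycle, phase, count) cells below the sampling target.

    observations: iterable of (subject, cycle, phase) tuples, one per visit.
    phases: the phase labels every observed subject-cycle should contain.
    Cells with zero visits are reported as well.
    """
    counts = Counter(observations)
    subject_cycles = sorted({(subj, cyc) for subj, cyc, _ in counts})
    short = []
    for subj, cyc in subject_cycles:
        for phase in phases:
            n = counts.get((subj, cyc, phase), 0)
            if n < min_per_phase:
                short.append((subj, cyc, phase, n))
    return short


visits = ([("S1", 1, "follicular")] * 3 + [("S1", 1, "luteal")] * 2
          + [("S2", 1, "follicular")] * 4)
for cell in undersampled_cells(visits, phases=("follicular", "luteal")):
    print(cell)
```

Reporting empty cells explicitly matters here: a subject-cycle with no luteal visits at all would otherwise be silently absent from a simple count.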

4. Statistical Analysis - MLM Specification:

- Level-1 (Within-Cycle/Within-Subject):

$$\text{Biomarker}_{ij} = \beta_{0j} + \beta_{1j}\,\text{DrugConcentration}_{ij} + \beta_{2j}\,\text{CyclePhase}_{ij} + e_{ij}$$

- Level-2 (Between-Subject):

$$\beta_{0j} = \gamma_{00} + u_{0j}$$

$$\beta_{1j} = \gamma_{10} + u_{1j}$$

$$\beta_{2j} = \gamma_{20}$$

- This model assesses whether the exposure-response relationship ($\beta_{1j}$) varies across individuals and whether the biomarker level differs by cycle phase ($\beta_{2j}$, which is fixed at $\gamma_{20}$ for all subjects).
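Substituting the level-2 equations into the level-1 equation gives the combined (single-equation) form of this model, which separates the fixed part, estimated by the γ coefficients, from the random part. Writing τ_00 = Var(u_0j), τ_11 = Var(u_1j), τ_01 = Cov(u_0j, u_1j), and σ² = Var(e_ij) (notation introduced here for illustration) and assuming the residuals are independent of the random effects, the second line shows a consequence worth checking in diagnostics: with a random exposure slope, the outcome's variance depends on drug concentration even when the level-1 residual variance is constant.

$$\text{Biomarker}_{ij} = \underbrace{\gamma_{00} + \gamma_{10}\,\text{DrugConcentration}_{ij} + \gamma_{20}\,\text{CyclePhase}_{ij}}_{\text{fixed part}} + \underbrace{u_{0j} + u_{1j}\,\text{DrugConcentration}_{ij} + e_{ij}}_{\text{random part}}$$

$$\operatorname{Var}\left(\text{Biomarker}_{ij} \mid \text{DrugConcentration}_{ij}, \text{CyclePhase}_{ij}\right) = \tau_{00} + 2\,\tau_{01}\,\text{DrugConcentration}_{ij} + \tau_{11}\,\text{DrugConcentration}_{ij}^{2} + \sigma^{2}$$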

5. Interpretation:

- The model provides an average exposure-response ($\gamma_{10}$) and its between-subject variability (the variance of $u_{1j}$).

- A significant $\gamma_{20}$ indicates an effect of cycle phase on the biomarker, adjusted for drug exposure.

The Scientist's Toolkit: Essential Reagents and Materials

Table 1: Key Research Reagent Solutions for Implementing MLM in MIDD

| Item Name | Type (Software/Resource) | Primary Function in MLM/MIDD |
|---|---|---|
| Mplus [25] | Statistical Software | Flexible software for fitting a wide range of multilevel regression and structural equation models. Handles complex latent variable models and diverse data types. |
| SAS PROC MIXED [25] | Statistical Software (Procedure) | A standard procedure within SAS for fitting linear mixed models (multilevel models). Widely used in pharmaceutical statistics and clinical trial analysis. |
| HLM Software [28] | Statistical Software | Specialized software dedicated to fitting hierarchical linear models. Known for its intuitive interface that mirrors the multilevel model structure. |
| R with lme4/nlme packages [24] | Statistical Software (Packages) | Open-source environment with powerful packages (lme4, nlme) for fitting linear and nonlinear mixed-effects models. Highly flexible and customizable. |
| Carolina Premenstrual Assessment Scoring System (C-PASS) [27] | Methodological Tool | A standardized system for diagnosing premenstrual disorders based on daily symptom ratings. Exemplifies the rigorous prospective data collection needed for cyclical analysis. |