Mastering Systematic Review Keyword Research: A Comprehensive Guide for Biomedical Professionals



Abstract

This article provides a comprehensive framework for conducting exhaustive keyword research in systematic reviews for biomedical and clinical research. Covering foundational principles to advanced optimization techniques, it addresses how to identify key concepts, leverage controlled vocabularies like MeSH and Emtree, employ innovative methods such as the WINK technique, troubleshoot common pitfalls, and validate search strategies. Designed for researchers, scientists, and drug development professionals, this guide ensures methodological rigor, enhances search sensitivity and specificity, and ultimately supports the creation of robust, reproducible, and comprehensive systematic reviews.

Understanding the Core Principles of Systematic Search Strategies

The systematic review process represents a cornerstone of evidence-based practice, providing a structured methodology for synthesizing existing research to inform clinical and policy decisions. The foundation of any high-quality systematic review is a precisely formulated research question, which guides every subsequent step from literature search to data synthesis. This protocol details the application of the PICO framework—a mnemonic encompassing Patient, Intervention, Comparison, and Outcome—as a rigorous methodology for developing focused research questions and extracting strategic keywords for comprehensive literature retrieval. We present experimental protocols for translating PICO elements into search strategies, visualization methodologies for representing the systematic review workflow, and reagent solutions for implementing search syntax across major biomedical databases. Proper application of this framework ensures research questions are both clinically relevant and structured to facilitate efficient, reproducible evidence retrieval, thereby establishing a robust foundation for systematic reviews that validly address their intended clinical or scientific inquiries.

The PICO framework is a systematic tool used to formulate focused, answerable clinical questions in evidence-based practice. By deconstructing a clinical scenario into its core components, PICO provides structure for developing research questions that are both directly relevant to patient problems and phrased to direct searches toward precise, relevant evidence [1]. This framework addresses the fundamental challenge that natural language questions often lack the specificity required for efficient literature searching, leading to potentially incomplete or biased evidence retrieval [1]. The modest investment of time required to construct a PICO question yields significant returns through more effective and efficient evidence searches, enabling researchers and clinicians to more rapidly locate the best available evidence to inform clinical decision-making [1].

Originally developed for interventional questions, the PICO framework has evolved to accommodate various types of clinical inquiries, including those related to diagnosis, prognosis, etiology, and prevention [1] [2]. Its utility has been recognized as potentially universal for scientific endeavors beyond clinical settings, with some proponents arguing it can be applied to all research designs and disciplines [2]. This broader application conceptualizes PICO elements as inherent components of all research: the research object (Problem), application of a theory or method (Intervention), alternative theories or methods (Comparison), and knowledge generation (Outcome) [2]. Within systematic reviews specifically, PICO serves dual purposes: framing the clinical question and developing comprehensive literature search strategies [2].

PICO Components and Question Formulation

Core PICO Elements

The PICO framework comprises four core components that systematically define the key elements of a clinical research question. These components provide the structural foundation for developing focused, answerable questions suitable for systematic inquiry:

P (Patient, Problem, or Population): This element refers to the individual or group of patients or the clinical problem being addressed. When defining this component, researchers should consider not only the specific health condition but also relevant demographic factors (age, sex), comorbid conditions, clinical setting, and other characteristics that define the population of interest [1]. A crucial consideration is defining a population that balances clinical specificity with practical research feasibility—while a patient might be a "73-year-old woman with hypertension," the research population would more appropriately be "older adults with hypertension" or "post-menopausal women with hypertension" to align with how study populations are typically defined in clinical research [1].

I (Intervention or Investigated Condition): This component specifies the main intervention, diagnostic test, exposure, or prognostic factor under investigation. The intervention should be described with sufficient detail to enable precise searching, including specifics such as dosage, frequency, duration, or intensity when applicable [1]. For non-interventional questions, this element might encompass exposures, risk factors, prognostic factors, or diagnostic tests depending on the question type [2].

C (Comparison or Control): The comparison element represents the alternative against which the intervention is evaluated. This may be a standard treatment, placebo, different intervention, or even no intervention [1]. In some question types (particularly prognosis or etiology), this component may be less relevant or inapplicable [1]. The comparison provides essential context for interpreting the relative benefits or harms of the intervention.

O (Outcome(s)): Outcomes are the measurable effects, consequences, or endpoints of interest that determine the effectiveness or impact of the intervention. Outcomes should be clinically important rather than surrogate markers whenever possible [1]. Examples include mortality rates, disease incidence, symptom resolution, functional status improvements, or test performance characteristics (sensitivity, specificity) [1]. Defining relevant, patient-centered outcomes enhances the clinical utility of the systematic review.

PICO Variations by Question Type

The application and emphasis of PICO elements vary significantly depending on the type of clinical question being investigated. The table below illustrates how each PICO component is operationalized across major question domains:

Table 1: PICO Elements Across Different Question Types

| Question Type | Patient/Population | Intervention/Investigation | Comparison | Outcomes |
|---|---|---|---|---|
| Therapy | Patient's disease or condition | Therapeutic intervention (drug, surgery, advice) | Standard care, another intervention, or placebo | Mortality, complications, disease recurrence, quality of life |
| Diagnosis | Target disease or condition | Diagnostic test or procedure | Current reference standard test | Test utility (sensitivity, specificity, accuracy) |
| Prognosis | Prognostic factor or clinical problem | Disease, drug, or time exposure | Standard care or no exposure (may be inapplicable) | Survival rates, disease progression, recovery time |
| Etiology/Harm | Risk factors or general health condition | Exposure of interest (including dose and duration) | Absence of exposure or alternative exposure | Disease incidence, rates of disease progression |
| Prevention | Risk factors and general health condition | Preventive measure (medication, lifestyle change) | Absence of preventive measure | Disease incidence, mortality, morbidity rates |

Formulating the Question Statement

After identifying the key PICO elements, researchers synthesize them into a formal question statement. The specific structure and verb tense vary by question type, but all should maintain clarity, focus, and directness. The following examples illustrate properly structured PICO questions:

Therapy: "In [Population], does [Intervention] result in [Outcome] compared with [Comparison]?" Example: "In patients with hypertension and at least one additional cardiovascular disease risk factor, does tight systolic blood pressure control lead to lower rates of myocardial infarction, stroke, heart failure, and cardiovascular mortality compared to conservative control?" [1]

Diagnosis: "In [Population], is [Intervention] as accurate as [Comparison] for diagnosing [Outcome]?" Example: "Among asymptomatic adults at low risk of colon cancer, is fecal immunochemical testing (FIT) as sensitive and specific for diagnosing colon cancer as colonoscopy?" [1]

Prognosis: "In [Population], do those with [Intervention] have different [Outcome] than those without [Intervention]?" Example: "Among adults with pneumonia, do those with chronic kidney disease (CKD) have a higher mortality rate than those without CKD?" [1]

Etiology/Harm: "Are [Population] with [Intervention] at higher risk of [Outcome] than [Comparison]?" Example: "Are women with a history of pelvic inflammatory disease (PID) at higher risk for gynecological cancers than women with no history of PID?" [1]

Prevention: "In [Population], does [Intervention] reduce [Outcome] compared with [Comparison]?" Example: "Among adults with a history of myocardial infarction, does adherence to a Mediterranean diet lower risk of a second myocardial infarction compared to those who do not adopt a Mediterranean diet?" [1]
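The question templates above lend themselves to a small fill-in helper. The following is a minimal sketch in Python; the dictionary keys and the function name `pico_question` are illustrative assumptions, and the template strings are copied from the patterns listed above.

```python
# Templates taken from the question patterns above; the keys are illustrative.
TEMPLATES = {
    "therapy": "In {P}, does {I} result in {O} compared with {C}?",
    "diagnosis": "In {P}, is {I} as accurate as {C} for diagnosing {O}?",
    "prognosis": "In {P}, do those with {I} have different {O} than those without {I}?",
    "etiology": "Are {P} with {I} at higher risk of {O} than {C}?",
    "prevention": "In {P}, does {I} reduce {O} compared with {C}?",
}


def pico_question(question_type, **elements):
    """Fill the template for one question type with P, I, C, O strings."""
    template = TEMPLATES[question_type]
    try:
        return template.format(**elements)
    except KeyError as missing:
        # Raised when the template needs an element that was not supplied.
        raise ValueError(f"missing PICO element: {missing}") from None


question = pico_question(
    "prevention",
    P="adults with a history of myocardial infarction",
    I="adherence to a Mediterranean diet",
    C="no Mediterranean diet",
    O="risk of a second myocardial infarction",
)
print(question)
```

Because the prognosis template has no comparison slot, it can be filled without `C`, which mirrors the note that the comparison may be inapplicable for some question types.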

Experimental Protocol: Translating PICO to Search Strategy

Keyword Extraction and Vocabulary Mapping

The initial phase of transforming a PICO question into an executable search strategy involves systematic keyword extraction and vocabulary mapping. This protocol ensures comprehensive coverage of relevant terminology across biomedical databases:

Deconstruct PICO Elements: List all relevant terms for each PICO component separately. For the population element, include specific diagnoses, demographic terms, clinical settings, and related conditions. For interventions, include generic and brand names, procedure terminology, and technical specifications.

Expand Terminology: Utilize controlled vocabularies such as Medical Subject Headings (MeSH) in MEDLINE or Emtree in Embase to identify standardized terms for each concept. Include synonyms, related terms, acronyms, plural forms, and British/American spelling variations.

Structure Search Blocks: Organize search terms into conceptual blocks corresponding to each PICO element. Combine terms within each block using Boolean OR operators, then combine the conceptual blocks using Boolean AND operators to ensure results address all PICO components.

Implement Syntax and Truncation: Apply appropriate search syntax for each database, including truncation symbols for word stemming, phrase searching with quotation marks, and proximity operators where available to refine retrieval.
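The block structure described in these steps (OR within a concept, AND between concepts) can be sketched in a few lines of Python. This is an illustrative sketch only: the function names are assumptions, the example terms are placeholders, and the output is a generic Boolean string that still needs database-specific field codes and syntax.

```python
def quote(term):
    """Wrap multi-word terms in quotation marks for phrase searching."""
    term = term.strip()
    if " " in term and not term.startswith('"'):
        return f'"{term}"'
    return term


def or_block(terms):
    """Join the synonyms for one concept with OR, dropping case-insensitive duplicates."""
    seen, unique = set(), []
    for term in terms:
        key = term.strip().lower()
        if key and key not in seen:
            seen.add(key)
            unique.append(quote(term))
    return "(" + " OR ".join(unique) + ")"


def build_search(concepts):
    """AND the OR-blocks together; concepts with no terms are skipped."""
    blocks = [or_block(terms) for terms in concepts.values() if terms]
    return " AND ".join(blocks)


# Placeholder terms for two concept blocks.
concepts = {
    "population": ["hypertension", "high blood pressure", "hypertens*"],
    "intervention": ["diet", "dietary intervention", "Diet"],
}
query = build_search(concepts)
print(query)
# (hypertension OR "high blood pressure" OR hypertens*) AND (diet OR "dietary intervention")
```

Keeping each concept as its own list makes it easy to add or remove a synonym during later iterations without touching the Boolean structure.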

Table 2: Search Strategy Development Reagents

| Research Reagent | Function | Implementation Example |
|---|---|---|
| Boolean Operators | Logical connectors that define relationships between search terms | AND: narrows search (hypertension AND diet); OR: broadens search (hypertension OR high blood pressure); NOT: excludes terms (hypertension NOT pulmonary) |
| Controlled Vocabulary | Pre-defined standardized terms used to index database content | MeSH (Medical Subject Headings) in PubMed; Emtree in Embase; CINAHL Headings in CINAHL |
| Truncation Symbols | Wildcards that retrieve variant word endings | * (asterisk) for multiple characters: hypertens* retrieves hypertension, hypertensive; ? (question mark) for single character: wom?n retrieves woman, women |
| Proximity Operators | Specify distance between search terms within documents | NEAR/x in some platforms: (diet NEAR/5 hypertension) finds terms within 5 words of each other |
| Field Codes | Restrict search to specific database fields | [ti] for title; [au] for author; [mh] for MeSH terms; [tw] for text words |
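To make the truncation and wildcard semantics in Table 2 concrete, the sketch below translates the two symbols into regular expressions and checks which words they would match. It is an approximation for illustration: the function names are assumptions, and real platforms differ in which symbols they support and how they tokenize text.

```python
import re


def pattern_to_regex(pattern):
    """Translate '*' (any run of word characters) and '?' (exactly one
    character) into a case-insensitive regular expression."""
    parts = []
    for ch in pattern:
        if ch == "*":
            parts.append(r"\w*")
        elif ch == "?":
            parts.append(r"\w")
        else:
            parts.append(re.escape(ch))
    return re.compile("".join(parts), re.IGNORECASE)


def matches(pattern, words):
    """Return the words that the search pattern matches in full."""
    regex = pattern_to_regex(pattern)
    return [w for w in words if regex.fullmatch(w)]


words = ["hypertension", "hypertensive", "hypotension", "woman", "women", "womn"]
print(matches("hypertens*", words))  # ['hypertension', 'hypertensive']
print(matches("wom?n", words))       # ['woman', 'women']
```

Running candidate patterns against a word list like this is a quick way to spot truncation that is too aggressive before the strategy is executed in a database.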

Search Strategy Optimization and Validation

After constructing the initial search strategy, systematic optimization and validation are essential to ensure comprehensive retrieval while maintaining precision:

Pilot Testing: Execute the preliminary search strategy and review initial results for relevance. Analyze the search terms used in highly relevant articles to identify potentially missing terminology.

Recall and Precision Assessment: Calculate preliminary recall (proportion of relevant articles retrieved from a known set) and precision (proportion of relevant articles in search results) using a validated set of key articles identified through known-source searching.

Search Iteration: Refine the search strategy based on pilot results, adding newly identified terms and adjusting syntax to improve retrieval. Document all search iterations with dates and results for transparency and reproducibility.

Peer Review: Utilize the PRESS (Peer Review of Electronic Search Strategies) framework to have an information specialist or subject expert review the search strategy for completeness, syntax errors, and logical structure.

Database Translation: Adapt the refined search strategy for additional databases, accounting for differences in controlled vocabularies, search syntax, and available fields. Maintain conceptual consistency while respecting database-specific requirements.
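The recall and precision checks in the validation steps above reduce to set arithmetic on record identifiers. The sketch below assumes records are identified by strings such as PMIDs; the identifiers shown are made up, and the function names are illustrative.

```python
def recall(retrieved, known_relevant):
    """Share of the known relevant set that the search retrieved."""
    if not known_relevant:
        raise ValueError("known_relevant must not be empty")
    return len(retrieved & known_relevant) / len(known_relevant)


def precision(retrieved, judged_relevant):
    """Share of retrieved records that were judged relevant on screening."""
    if not retrieved:
        raise ValueError("retrieved must not be empty")
    return len(retrieved & judged_relevant) / len(retrieved)


# Hypothetical identifiers, for illustration only.
retrieved = {"A", "B", "C", "D", "E", "F", "G", "H"}
known_relevant = {"A", "B", "C", "Z"}   # key articles identified beforehand
judged_relevant = {"A", "B", "C", "D"}  # relevant after screening the results

print(recall(retrieved, known_relevant))      # 0.75
print(precision(retrieved, judged_relevant))  # 0.5
print(sorted(known_relevant - retrieved))     # ['Z'] -> inspect for missing terms
```

The records in `known_relevant - retrieved` are the useful output during iteration: their titles, abstracts, and index terms show which terminology the strategy is still missing.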

Data Analysis and Synthesis Methodology

Systematic Review Classification and Approach

Systematic reviews can incorporate qualitative, quantitative, or mixed-method approaches depending on the nature of the included studies and the research question. The decision between these approaches significantly influences the data analysis plan:

Table 3: Systematic Review Classification by Methodology

| Review Type | Research Questions | Data Type | Analysis Methods | Results Presentation |
|---|---|---|---|---|
| Qualitative Systematic Review | Open-ended questions to understand concepts or formulate hypotheses | Words, concepts, themes from observations, interviews, literature | Content analysis, thematic analysis, discourse analysis | Textual summary identifying patterns, themes, meanings |
| Quantitative Systematic Review | Test or confirm existing hypotheses or theories | Numerical data from measurements, counts, ratings | Statistical analysis, meta-analysis | Numbers, graphs, statistical summaries with effect sizes |
| Mixed-Methods Systematic Review | Complex questions requiring both exploratory and confirmatory approaches | Both textual and numerical data | Separate qualitative and quantitative synthesis followed by integration | Integrated presentation explaining how qualitative results contextualize quantitative findings |

Quantitative Synthesis (Meta-Analysis) Protocol

When studies are sufficiently homogeneous in design, quality, and measured outcomes, quantitative synthesis through meta-analysis provides a statistical approach to combining results across studies:

Effect Size Calculation: Extract or calculate appropriate effect sizes from each included study based on outcome type. For continuous outcomes, use mean differences or standardized mean differences; for dichotomous outcomes, use risk ratios, odds ratios, or risk differences [3].

Weighting Strategy: Assign weights to individual studies based on precision, typically using inverse variance weighting where larger studies with smaller standard errors contribute more to the pooled estimate [3].

Model Selection: Choose between fixed-effect models (assuming a single true effect size across studies) and random-effects models (assuming effect sizes vary across studies due to both sampling error and genuine differences) [3]. The choice should be based on clinical and methodological considerations alongside statistical heterogeneity assessment.

Heterogeneity Quantification: Calculate I² statistics and Cochran's Q to quantify between-study heterogeneity, with I² values of 25%, 50%, and 75% typically representing low, moderate, and high heterogeneity respectively [3].

Sensitivity and Subgroup Analyses: Conduct planned sensitivity analyses to assess the robustness of findings to methodological choices, and subgroup analyses to explore potential sources of heterogeneity [3].

Bias Assessment: Evaluate potential for publication bias and small-study effects using funnel plots, Egger's test, or other appropriate statistical methods [3].
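The weighting and heterogeneity steps above can be illustrated with a short fixed-effect calculation. This is a minimal sketch using only the standard library: the effect sizes and standard errors are hypothetical (for example, log risk ratios), the function name is an assumption, and a real analysis would normally rely on a dedicated meta-analysis package and consider a random-effects model.

```python
import math


def fixed_effect_pool(effects, std_errors):
    """Inverse-variance fixed-effect pooling with Cochran's Q and I^2.

    weight_i = 1 / SE_i^2
    pooled   = sum(w_i * y_i) / sum(w_i)
    Q        = sum(w_i * (y_i - pooled)^2)
    I^2      = max(0, (Q - df) / Q) * 100, with df = k - 1
    """
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    i_squared = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return {
        "pooled": pooled,
        "se": pooled_se,
        "ci95": (pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se),
        "Q": q,
        "df": df,
        "I2": i_squared,
    }


# Hypothetical study-level estimates, for illustration only.
effects = [-0.30, -0.10, -0.45, -0.20]
std_errors = [0.12, 0.20, 0.25, 0.15]
result = fixed_effect_pool(effects, std_errors)
for name, value in result.items():
    print(name, value)
```

Studies with smaller standard errors receive larger weights, which is why the pooled estimate sits closer to the most precise studies than a simple average would.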

For heterogeneous studies where statistical pooling is inappropriate, narrative synthesis following a systematic approach is recommended, collecting major findings by study type and categorizing results as positive, negative, mixed, or inconclusive based on frequency and consistency of findings [4].

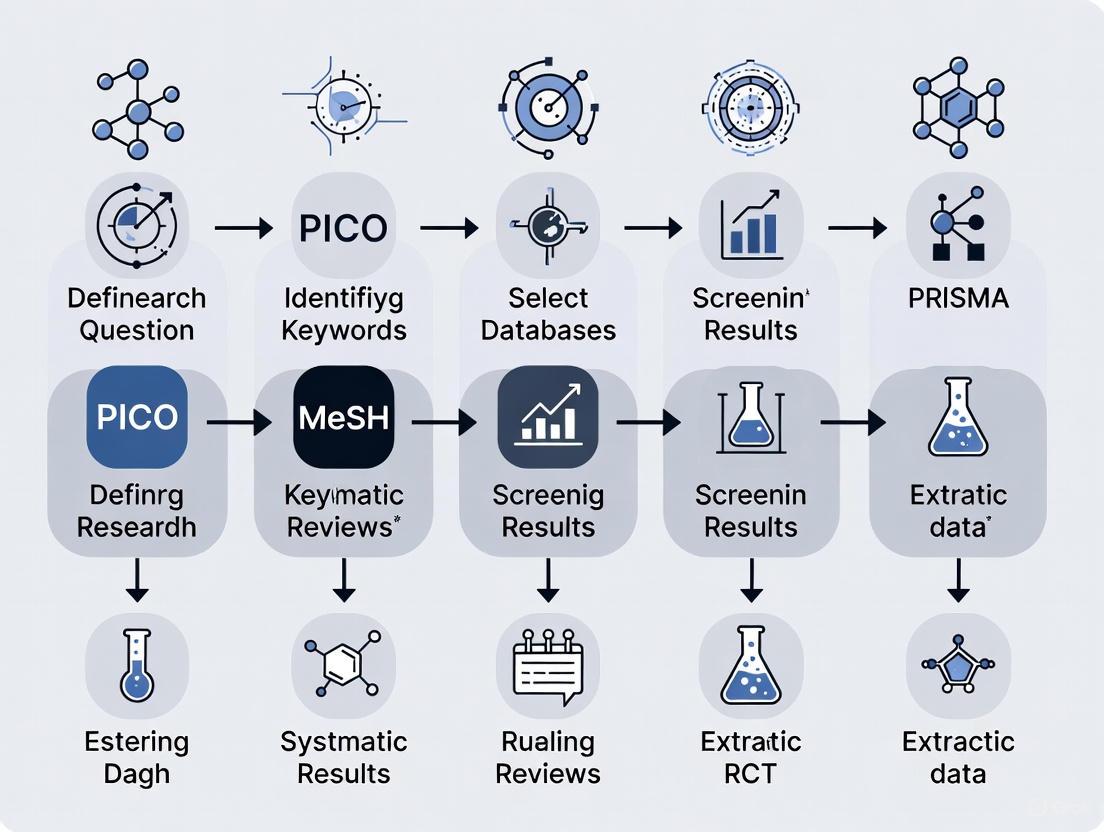

Visualization of Systematic Review Workflow

The following diagram illustrates the complete systematic review process from question formulation through evidence synthesis, highlighting the central role of the PICO framework:

Systematic Review Workflow from Question to Synthesis

PICO to Keywords Transformation Protocol

The translation of PICO elements into searchable concepts requires systematic mapping of clinical concepts to database terminology. The following protocol ensures comprehensive keyword development:

Conceptual Expansion: For each PICO element, brainstorm related terms, synonyms, acronyms, and variations in terminology. Consult domain experts, textbooks, and relevant articles to identify additional terminology.

Vocabulary Mapping: Identify controlled vocabulary terms (MeSH, Emtree) for each key concept. Include both broader and narrower terms to ensure appropriate scope.

Syntax Application: Structure the search using Boolean logic, with OR operations within concepts and AND operations between PICO concepts. Apply appropriate truncation and field codes based on database capabilities.

Iterative Refinement: Test search sensitivity and precision using known relevant articles. Modify strategy based on results, adding missing terms and removing non-productive terms.

The following diagram illustrates the transformation of PICO elements into executable search strategies:

PICO Elements to Search Strategy Transformation

Application Example: Therapy Question

To illustrate the complete process from question formulation to search strategy development, consider the following therapy question example:

Clinical Scenario: A clinician seeks evidence regarding the effectiveness of cognitive behavioral therapy versus medication for treating depression in adolescents.

PICO Elements:

- P: Adolescents with major depressive disorder

- I: Cognitive behavioral therapy

- C: Selective serotonin reuptake inhibitors (SSRIs)

- O: Reduction in depressive symptoms, remission rates

PICO Question: "In adolescents with major depressive disorder, does cognitive behavioral therapy result in greater reduction in depressive symptoms and higher remission rates compared to treatment with SSRIs?"

Keyword Development:

Table 4: Search Terms for Depression Therapy Example

| PICO Element | Conceptual Terms | Specific Search Terms | MeSH Terms |
|---|---|---|---|
| Population | Adolescents with depression | teen, adolescent, youth, young person, "major depressive disorder", depression, depressive | "Depressive Disorder", "Adolescent" |
| Intervention | Cognitive behavioral therapy | "cognitive behavioral therapy", CBT, "cognitive therapy", "behavior therapy" | "Cognitive Behavioral Therapy" |
| Comparison | SSRIs | SSRI, "selective serotonin reuptake inhibitor", fluoxetine, sertraline, citalopram, escitalopram | "Serotonin Uptake Inhibitors" |
| Outcome | Symptom reduction, remission | "depressive symptoms", remission, response, "Hamilton Depression Rating Scale", "Beck Depression Inventory", improvement | "Treatment Outcome", "Remission Induction" |

Sample PubMed Search Strategy:
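One way to assemble a strategy of this kind from the Table 4 terms is sketched below in Python. Several choices here are assumptions rather than part of the protocol: the population is split into an age block and a condition block, every free-text term is given the [tw] field code and every MeSH term the [mh] field code, and all blocks are combined with AND. The resulting string should be checked in PubMed before use.

```python
def field(term, tag):
    """Quote multi-word terms and append a PubMed field code, e.g. CBT[tw]."""
    text = f'"{term}"' if " " in term else term
    return f"{text}[{tag}]"


def block(mesh_terms, text_words):
    """OR together the MeSH terms and free-text terms for one concept."""
    parts = [field(t, "mh") for t in mesh_terms]
    parts += [field(t, "tw") for t in text_words]
    return "(" + " OR ".join(parts) + ")"


# (MeSH terms, free-text terms) per concept, taken from Table 4.
BLOCKS = [
    (["Adolescent"], ["teen", "adolescent", "youth", "young person"]),
    (["Depressive Disorder"], ["major depressive disorder", "depression", "depressive"]),
    (["Cognitive Behavioral Therapy"],
     ["cognitive behavioral therapy", "CBT", "cognitive therapy", "behavior therapy"]),
    (["Serotonin Uptake Inhibitors"],
     ["SSRI", "selective serotonin reuptake inhibitor", "fluoxetine",
      "sertraline", "citalopram", "escitalopram"]),
    (["Treatment Outcome", "Remission Induction"],
     ["depressive symptoms", "remission", "response",
      "Hamilton Depression Rating Scale", "Beck Depression Inventory", "improvement"]),
]

strategy = " AND ".join(block(mesh, text) for mesh, text in BLOCKS)
print(strategy)
```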

This example demonstrates the systematic translation of a clinical question into an executable search strategy, illustrating the practical application of the PICO framework for evidence retrieval.

The Critical Role of Sensitivity vs. Specificity in Systematic Reviews

In the rigorous process of conducting a systematic review, the development of a search strategy is a foundational step that directly impacts the validity and comprehensiveness of the findings. The principle of evidence synthesis requires that reviewers identify as many relevant studies as possible to minimize bias and provide reliable conclusions [5]. This introduces a fundamental tension in search strategy design: the balance between sensitivity (the ability to identify all relevant records) and precision (the proportion of retrieved records that are relevant) [6]. For systematic reviews, the scale overwhelmingly tips in favor of sensitivity, accepting that a large volume of irrelevant records will be retrieved to ensure that nearly all pertinent studies are captured [6]. This application note delineates detailed protocols for constructing highly sensitive search strategies, framed within the broader context of methodological rigor in systematic reviews.

Theoretical Framework: Sensitivity and Specificity in Retrieval

Defining the Core Concepts

- Sensitivity (Recall): In search terminology, sensitivity refers to the number of relevant records identified divided by the total number of relevant records in existence. A highly sensitive search aims to maximize this proportion.

- Precision: Precision is the number of relevant records identified divided by the total number of records (both relevant and irrelevant) retrieved by the search.

- The Trade-off: In practice, maximizing sensitivity involves broadening search parameters, which invariably decreases precision by retrieving more irrelevant records [6]. This trade-off is an inherent characteristic of information retrieval. For systematic reviews, which aim to be as comprehensive as possible, it is methodologically sound to favor sensitivity [6].
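Written as ratios, the two measures share a numerator and differ only in the denominator. The worked numbers below are hypothetical and serve only to show the direction of the trade-off between a narrow and a broad search.

```latex
\[
\text{sensitivity} = \frac{\text{relevant records retrieved}}{\text{all relevant records in existence}},
\qquad
\text{precision} = \frac{\text{relevant records retrieved}}{\text{all records retrieved}}
\]

% Hypothetical example: 100 relevant records exist.
% Narrow search: 400 records retrieved, 60 of them relevant.
\[
\text{sensitivity} = \frac{60}{100} = 0.60,
\qquad
\text{precision} = \frac{60}{400} = 0.15
\]

% Broad search: 3000 records retrieved, 96 of them relevant.
\[
\text{sensitivity} = \frac{96}{100} = 0.96,
\qquad
\text{precision} = \frac{96}{3000} = 0.032
\]
```

In this illustration the broad search finds 36 more relevant records at the cost of screening 2,600 additional records, which is the exchange a systematic review is usually willing to make.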

The Role of Keyword Research

Keyword research serves as the primary mechanism for controlling the sensitivity-precision balance. A poorly constructed search strategy risks missing key studies, introducing selection bias and potentially invalidating the review's conclusions [7]. The goal is to create a search strategy that functions as a wide net, capturing the vast majority of relevant literature, with the understanding that subsequent screening phases will filter out irrelevant results [6].

Quantitative Evidence: Impact of Search Strategy Techniques

The following table summarizes empirical findings on the performance of different search strategy approaches, highlighting their impact on sensitivity.

Table 1: Comparative Performance of Search Strategy Techniques

| Technique | Description | Impact on Sensitivity | Key Findings |
|---|---|---|---|
| Conventional Keyword Selection | Relies on a limited set of keywords and controlled vocabulary terms derived from initial subject expert input. | Baseline | Retrieved 74 and 197 articles for two test queries [7]. |
| WINK (Weightage Identified Network of Keywords) Technique | Uses network visualization charts (e.g., VOSviewer) to analyze keyword interconnections, excluding terms with limited networking strength [7]. | Significantly Increased | Yielded 69.81% and 26.23% more articles for the same test queries compared to the conventional approach [7]. |
| Combining Keywords & Controlled Vocabulary | Uses both free-text keywords (for title/abstract) and standardized index terms (e.g., MeSH, Emtree) [6] [8]. | Increased | Index terms find studies based on conceptual relevance, not just word presence, capturing records missed by keywords alone [6]. |
| Systematic Grey Literature Search | Searching beyond bibliographic databases (e.g., trial registries, theses, conference abstracts) [6]. | Increased | Mitigates publication bias by identifying unpublished or non-journal studies that database searches may miss [6]. |

Experimental Protocols for Search Strategy Development

Protocol 1: Foundational Search Strategy Formulation

This protocol outlines the standard methodology for building a comprehensive search strategy.

I. Materials and Reagents

- Bibliographic Databases: Access to relevant databases (e.g., MEDLINE/PubMed, Embase, Cochrane Central Register of Controlled Trials, PsycINFO) [8].

- Grey Literature Sources: Access to clinical trial registries (e.g., ClinicalTrials.gov), institutional repositories, and conference proceedings [6].

- Search Strategy Tools: Reference management and screening software (e.g., EndNote, Covidence) for storing and deduplicating results [6].
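Deduplication is normally handled by the tools listed above, but the underlying idea can be sketched briefly. The example below is a simplified illustration: the record fields (`doi`, `title`, `year`) and the sample records are hypothetical, and matching on DOI with a normalized-title fallback is one possible rule, not the method any particular tool uses.

```python
import re


def normalize_title(title):
    """Lowercase and collapse punctuation so formatting differences
    between databases do not hide duplicates."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def record_key(record):
    """Prefer the DOI as the identity of a record; fall back to title and year."""
    doi = (record.get("doi") or "").strip().lower()
    if doi:
        return ("doi", doi)
    return ("title", normalize_title(record["title"]), record.get("year"))


def deduplicate(records):
    """Keep the first record seen for each key, preserving input order."""
    seen = {}
    for record in records:
        seen.setdefault(record_key(record), record)
    return list(seen.values())


# Hypothetical exports from four databases.
records = [
    {"source": "MEDLINE", "doi": "10.1000/example.1", "title": "CBT for adolescent depression", "year": 2020},
    {"source": "Embase", "doi": "10.1000/EXAMPLE.1", "title": "CBT for Adolescent Depression.", "year": 2020},
    {"source": "CENTRAL", "doi": None, "title": "Fluoxetine in Teens: a trial", "year": 2019},
    {"source": "PsycINFO", "doi": "", "title": "Fluoxetine in teens - a trial", "year": 2019},
]
unique = deduplicate(records)
print([r["source"] for r in unique])  # ['MEDLINE', 'CENTRAL']
```

A known limitation of this rule is that a record exported with a DOI and the same record exported without one will not be matched, so manual checking of near-duplicates remains necessary.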

II. Methodology

- Define Core Concepts: Deconstruct the research question into its fundamental components using a framework like PICO (Population, Intervention, Comparison, Outcome) [8].

- Generate Synonym List: For each PICO concept, compile an exhaustive list of synonyms, acronyms, spelling variants, and related terms [8].

- Incorporate Controlled Vocabulary: Identify the corresponding controlled vocabulary terms (e.g., MeSH in PubMed, Emtree in Embase) for each concept [6] [8].

- Apply Search Syntax:

  - Combine all synonymous terms for a single concept with the Boolean operator `OR` to broaden the search [6].

  - Use truncation (`*` or `$`) to capture word variations (e.g., `therap*` finds therapy, therapies, therapist) [6].

  - Use wildcards (`?` or `#`) to account for spelling variations (e.g., `wom#n` finds woman and women) [6].

  - Combine the different concepts (P, I, C, O) with the Boolean operator `AND` [6].

- Translate and Test: Translate the search strategy for the syntax requirements of other databases. Test the search performance by verifying if known key studies are retrieved [6] [8].

Protocol 2: Application of the WINK Technique

This advanced protocol utilizes a network analysis approach to objectively refine keyword selection, thereby enhancing sensitivity.

I. Materials and Reagents

- Primary Tool: VOSviewer software for constructing and visualizing bibliometric networks [7].

- Data Source: A preliminary set of relevant articles or a Scopus/PubMed search on the broad topic.

- Validation Database: PubMed (for its robust MeSH indexing) [7].

II. Methodology

- Data Extraction: Extract keywords (author keywords, Index Keywords) from the preliminary article set.

- Network Visualization: Input the keywords into VOSviewer to generate a network visualization chart. This chart maps the interconnections and co-occurrence strength between keywords within the research domain [7].

- Keyword Weightage Analysis: Analyze the network to identify keywords with high "weightage," indicated by strong links to multiple core concepts. Keywords with limited or weak networking strength are candidates for exclusion [7].

- MeSH Term Identification: Utilize the high-weightage keywords and the National Library of Medicine's "MeSH on Demand" tool to identify the most relevant and comprehensive set of MeSH terms [7].

- Search String Construction: Build the final search string using the identified MeSH terms and high-weightage keywords, combining them with Boolean operators.

- Performance Validation: Execute the search and compare the yield against a conventionally developed search string to quantify the improvement in sensitivity [7].
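The keyword weightage step can be approximated without VOSviewer by counting keyword co-occurrences directly. The sketch below is a simplified stand-in, not the WINK authors' implementation: it treats "weightage" as total link strength (the summed co-occurrence counts of a keyword), the threshold is arbitrary, and the keyword lists are hypothetical.

```python
from collections import Counter
from itertools import combinations


def link_strengths(keyword_sets):
    """Count, for every keyword pair, the number of articles listing both."""
    links = Counter()
    for keywords in keyword_sets:
        for a, b in combinations(sorted(set(keywords)), 2):
            links[(a, b)] += 1
    return links


def total_link_strength(links):
    """Sum the co-occurrence counts of each keyword across all its links.
    Keywords that never co-occur with another keyword do not appear."""
    totals = Counter()
    for (a, b), count in links.items():
        totals[a] += count
        totals[b] += count
    return totals


def split_by_strength(totals, threshold):
    """Separate keywords to keep from weakly connected candidates for exclusion."""
    keep = sorted(k for k, v in totals.items() if v >= threshold)
    drop = sorted(k for k, v in totals.items() if v < threshold)
    return keep, drop


# Hypothetical author keywords from four preliminary articles.
articles = [
    ["periodontitis", "diabetes", "inflammation"],
    ["periodontitis", "diabetes"],
    ["periodontitis", "inflammation", "cytokines"],
    ["diabetes", "zebrafish"],
]
totals = total_link_strength(link_strengths(articles))
keep, drop = split_by_strength(totals, threshold=2)
print(dict(totals))
print("keep:", keep)  # ['cytokines', 'diabetes', 'inflammation', 'periodontitis']
print("drop:", drop)  # ['zebrafish']
```

Excluded keywords are worth a manual look before they are discarded, since a weakly connected term in a small preliminary set may still be a legitimate synonym.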

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Resources for Systematic Review Search Strategies

| Item | Function / Application |
|---|---|
| Medical Subject Headings (MeSH) | The NLM's controlled vocabulary thesaurus used for indexing articles in PubMed/MEDLINE. Using MeSH terms ensures studies are found by concept, not just author terminology [7] [6]. |
| Boolean Operators (AND, OR, NOT) | Logical commands used to combine search terms. OR broadens a search (increases sensitivity), while AND narrows it (increases precision) [6]. |
| Truncation and Wildcards | Symbols (e.g., *, ?, #) that account for word variations and plurals, ensuring different spellings and endings of a word are captured [6]. |
| Bibliographic Databases | Multidisciplinary and subject-specific databases (e.g., PubMed, Embase, Scopus, CINAHL, PsycINFO) that must be searched comprehensively to avoid database-specific bias [8]. |
| Grey Literature Sources | Trial registries, dissertations, and conference proceedings. Searching these is critical to minimize publication bias, as they contain studies not published in commercial journals [6]. |
| Covidence | A web-based software platform that facilitates collaboration during the systematic review process, including storing search strategies, screening results, and data extraction [6]. |
| PRISMA-S Checklist | An extension of the PRISMA statement that provides reporting standards for the search process, ensuring transparency and reproducibility [8]. |

The critical role of sensitivity in systematic review search strategies cannot be overstated. A methodologically sound approach prioritizes a comprehensive, sensitive search to capture the breadth of existing evidence, accepting the subsequent burden of a low-precision, high-volume result set. The protocols outlined herein—from the foundational use of controlled vocabulary and Boolean logic to the advanced, data-driven WINK technique—provide researchers with a structured pathway to achieve this goal. By rigorously applying these methods and transparently reporting the process, researchers can fortify the integrity of their systematic reviews, ensuring that their conclusions are built upon the most complete evidence base possible.

In the realm of evidence-based medicine and systematic reviews, comprehensive literature searching is paramount. Controlled vocabularies, also known as subject headings, thesauri, or descriptor terms, provide an organized, standardized approach to classifying knowledge across scientific databases [9]. These pre-defined, carefully selected words and phrases solve two major challenges in literature retrieval: the problem of synonyms, where multiple terms describe the same concept, and ambiguity, where the same term has different meanings across contexts [10].

For researchers, scientists, and drug development professionals conducting systematic reviews, mastering controlled vocabularies is not optional—it is essential for methodological rigor. These vocabularies bring uniformity to how publications are indexed within databases, creating consistency and precision that transcends the variable terminology authors might use [11]. This guide provides detailed application notes and protocols for the three predominant controlled vocabularies in the health sciences: Medical Subject Headings (MeSH), Emtree, and CINAHL Headings, framing their use within a robust keyword research methodology for systematic reviews.

Core Concepts and Definitions

What are Controlled Vocabularies?

Controlled vocabularies are structured, hierarchical lists of terms used by bibliographic databases to tag records based on their subject matter [12] [9]. Indexers, who are often specially trained, read the full text of articles and assign the most relevant terms from the vocabulary to represent the concepts covered [11]. This process transforms diverse natural language into a consistent, searchable language.

- Textwords (Keywords): Terms you choose yourself to search parts of an article's record, such as the title, abstract, and author-provided keywords [10]. This is often called "free-text" searching.

- Subject Headings: The pre-assigned terms from a controlled vocabulary used to label articles by their core concepts [10].

- Hierarchical Structure: Most vocabularies are organized in trees from broader to narrower terms, allowing searchers to explore related concepts [12].

The Imperative for Comprehensive Searching

A comprehensive systematic review search strategy must incorporate both subject headings and textwords for each concept [10]. Relying solely on one method risks missing critical evidence. Subject headings can be missing from records, and new or highly specific concepts may not yet have a dedicated subject heading [12]. Conversely, textword searching alone is vulnerable to synonyms and variations in author terminology [10].

Table 1: Comparison of Search Term Types

| Feature | Subject Headings | Textwords (Keywords) |

|---|---|---|

| Definition | Pre-assigned, standardized terms from a database's controlled vocabulary [10] | Natural language terms chosen by the searcher [10] |

| Consistency | High; uniform across all indexed records [11] | Low; depends on author's word choice |

| Searches | The entire record, regardless of where the concept is discussed | Specific fields (e.g., Title, Abstract) [10] |

| Advantages | Solves synonym and ambiguity problems [10] | Captures new concepts not yet in vocabularies |

| Disadvantages | Requires learning each database's system; indexing may be delayed | Requires guessing all possible term variations |

The Scientist's Toolkit: Database Vocabularies and Research Reagents

Systematic reviewers must be equipped with knowledge of the key "research reagents"—the databases and their associated vocabularies. Each database uses a unique controlled vocabulary tailored to its disciplinary focus [12] [10].

Table 2: Essential Research Reagents: Major Databases and Their Controlled Vocabularies

| Database | Primary Discipline | Controlled Vocabulary | Vocabulary Characteristics |

|---|---|---|---|

| MEDLINE | Biomedicine and Life Sciences | Medical Subject Headings (MeSH) [13] | One of the oldest, best-known health thesauri; hierarchical structure [12] |

| Embase | Pharmacology and Biomedicine | Emtree [14] | Extensive coverage of drugs and medical devices; updated 3 times yearly [14] |

| CINAHL | Nursing and Allied Health | CINAHL Subject Headings [15] | Modeled on MeSH but adapted for nursing and allied health literature [9] |

| APA PsycInfo | Psychology and Behavioral Sciences | APA Thesaurus [11] | Focus on psychological concepts and processes |

| Cochrane Library | Evidence-Based Medicine | MeSH | Uses MeSH for indexing systematic reviews and trials |

Experimental Protocols for Vocabulary-Driven Search Strategy

The following protocols provide a step-by-step methodology for integrating controlled vocabularies into a systematic review search strategy.

Protocol 1: Foundational Search Strategy Development

This protocol outlines the core process of building a search strategy using controlled vocabularies and textwords.

Materials:

- Database Interface: (e.g., Ovid, EBSCOhost, PubMed)

- Thesaurus Browser: MeSH Browser [16], Emtree, CINAHL Headings [15]

- Documentation Tool: Spreadsheet or dedicated systematic review software

Procedure:

- Deconstruct Research Question: Break down your primary research question into discrete core concepts (e.g., for "How do environmental pollutants affect endocrine function?", the concepts are "environmental pollutants" and "endocrine function") [7].

- Identify Keywords (Textwords): For each concept, brainstorm a comprehensive list of synonyms, related terms, and spelling variations. Use truncation (* or ?) and wildcards to account for word variations [15].

- Map to Subject Headings: For each database to be searched, use the built-in thesaurus or term finder tool to identify the corresponding controlled vocabulary terms for your concepts [12] [9].

- Combine Search Sets: Within each concept, combine all identified keywords and subject headings using the Boolean operator OR. This creates a comprehensive search set for each concept.

- Combine Concepts: Combine the different concept search sets using the Boolean operator AND to finalize the search strategy.

- Test and Refine: Run the search and review a sample of results. Iteratively refine the strategy by adding missing terms or removing sources of noise.
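The combination steps above (OR within a concept, AND across concepts) can be sketched in a few lines of Python. This is a minimal illustration, not a validated strategy: the function names are hypothetical, the PubMed-style tags `[mh]` and `[tiab]` are assumed as the target syntax, and the example headings and textwords are placeholders that should be checked against the MeSH Browser before use.

```python
def or_group(terms):
    """Join all terms for one concept with OR and wrap them in parentheses."""
    return "(" + " OR ".join(terms) + ")"


def build_search(concepts):
    """Combine the per-concept OR groups with AND."""
    return " AND ".join(or_group(terms) for terms in concepts.values())


# Illustrative terms only: one subject heading plus textwords per concept.
concepts = {
    "environmental pollutants": [
        '"Environmental Pollutants"[mh]',
        "pollutant*[tiab]",
        "contaminant*[tiab]",
    ],
    "endocrine function": [
        '"Endocrine System"[mh]',
        "endocrine[tiab]",
        "hormon*[tiab]",
    ],
}

search_string = build_search(concepts)
print(search_string)
```

Keeping each concept as its own list makes the Test and Refine step easier, because a term can be added to or removed from one concept without touching the rest of the string.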

Protocol 2: Advanced Vocabulary Techniques (Explode, Focus, Qualifiers)

This protocol details the application of advanced database features to enhance search precision and recall.

Materials:

- A subject heading identified via Protocol 1.

- Database interface supporting advanced features (Ovid, EBSCOhost).

Procedure:

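- 'Explode' a Subject Heading:

- Purpose: To retrieve records indexed with the subject heading itself or with any of the narrower headings beneath it in the hierarchy. This increases search sensitivity.

- Method: Use the platform's explode syntax (see Table 3).

- 'Focus' a Subject Heading (Major Concept):

- Purpose: To restrict results to records where the subject heading is considered a major point of the article, not just a minor mention. This increases search specificity [12].

- When to Use: When a topic has a large body of literature and you need to narrow results to the most relevant, in-depth studies [12].

- Method: Use the platform's focus or major-heading syntax (see Table 3).

- Apply Qualifiers (Subheadings):

- Purpose: To restrict the search to a specific aspect of a subject heading, such as adverse effects, drug therapy, or psychology [12].

- When to Use: To narrow a broad subject heading to a more precise research question aspect.

- Method: Select the relevant qualifier from the subheadings the database offers for the chosen subject heading.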

Data Presentation: Comparative Syntax and Functionality

The implementation of advanced features varies significantly across databases and platforms. The following tables synthesize the key syntax differences.

Table 3: Syntax for Exploding and Focusing Subject Headings Across Platforms

| Database | Interface | Explode Syntax (Example) | Focus/Major Syntax (Example) |

|---|---|---|---|

| MEDLINE | Ovid | exp Health education/ [12] | *Health education/ [12] |

| Embase, Emcare, APA PsycInfo | Ovid | exp Health education/ [12] | *Health education/ [12] |

| CINAHL | EBSCOhost | MH "Health Education+" [12] | MM "Health Education" [15] |

| Cochrane Library | Cochrane | MeSH descriptor: [Health Education] explode all trees [12] | [mh Education[mj]] [12] |

| ERIC | ProQuest | MAINSUBJECT.EXACT.EXPLODE("Patient Education") [12] | MJMAINSUBJECT.EXACT("Patient Education") [12] |
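The Ovid and EBSCOhost patterns in Table 3 are regular enough to generate programmatically when a strategy is translated between platforms. The sketch below covers only those two interfaces; the function names are hypothetical, and combining explode and focus in a single call is an assumption that goes beyond the separate examples shown in the table.

```python
def ovid_heading(heading, explode=False, focus=False):
    """Format a subject heading in the Ovid style from Table 3."""
    prefix = "exp " if explode else ""
    star = "*" if focus else ""
    return f"{prefix}{star}{heading}/"


def ebsco_heading(heading, explode=False, focus=False):
    """Format a subject heading in the EBSCOhost (CINAHL) style from Table 3."""
    tag = "MM" if focus else "MH"   # MM = major heading, MH = any heading
    plus = "+" if explode else ""   # a trailing + explodes the heading
    return f'{tag} "{heading}{plus}"'


print(ovid_heading("Health education", explode=True))
print(ovid_heading("Health education", focus=True))
print(ebsco_heading("Health Education", explode=True))
print(ebsco_heading("Health Education", focus=True))
```

Generated strings should still be pasted into the live interface and checked, since heading names often differ between MeSH, Emtree, and CINAHL Headings even when the syntax is correct.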

Table 4: Search Field Codes for Comprehensive Literature Searching

| Database | Interface | Title Field | Abstract Field | Subject Heading Field |

|---|---|---|---|---|

| MEDLINE | Ovid | .ti. | .ab. | / (e.g., Health education/) |

| Embase | Ovid | .ti. | .ab. | / |

| CINAHL | EBSCOhost | TI | AB | MH for exact heading, SU for keyword-in-subjects [15] |

| PubMed | --- | [ti] | [ab] | [mh] |
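As a rough illustration of how the field codes differ in practice, the following sketch tags a single textword for a combined title and abstract search on three platforms. The helper name is hypothetical. The Ovid form uses the combined `.ti,ab.` suffix, and the PubMed form uses the combined `[tiab]` tag, which is an assumption here because Table 4 lists only the separate title and abstract codes.

```python
def tag_textword(term, platform):
    """Attach title/abstract field codes to one textword for a given platform."""
    if platform == "ovid":
        return f"{term}.ti,ab."
    if platform == "pubmed":
        return f"{term}[tiab]"
    if platform == "ebscohost":
        return f"(TI {term} OR AB {term})"
    raise ValueError(f"unsupported platform: {platform}")


for platform in ("ovid", "pubmed", "ebscohost"):
    print(platform, "->", tag_textword("pollutant*", platform))
```

Truncation symbols and phrase handling also vary by platform, so a real translation needs more than a change of field code.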

Advanced Application: The WINK Technique for Systematic Keyword Selection

The Weightage Identified Network of Keywords (WINK) technique is a modern methodology that integrates computational analysis with expert insight to enhance the keyword selection process for systematic reviews [7].

Objective: To develop a structured framework for keyword identification that improves the thoroughness and precision of evidence synthesis by analyzing the interconnections among keywords within a specific domain [7].

Materials:

- Bibliographic database (e.g., PubMed/MEDLINE)

- Visualization Software: VOSviewer [7]

- Seed keywords from subject experts

Procedure:

- Initial Search: Conduct a preliminary search using seed keywords derived from the research question and subject expert insight [7].

- MeSH Term Extraction: Utilize tools like "MeSH on Demand" to identify relevant MeSH terms from the results of the initial search [7].

- Network Visualization and Weightage Assignment:

- Extract the MeSH terms and keywords from a robust set of relevant articles.

- Input these terms into VOSviewer to generate a network visualization chart.

- Analyze the networking strength between terms related to different concepts (e.g., Q1 and Q2 concepts) [7].

- Keyword Pruning and Selection: Exclude keywords with limited networking strength, prioritizing those with higher weightage and stronger connections within the network [7].

- Search String Construction: Build the final search string using the high-weightage MeSH terms and keywords identified through the WINK process.

- Validation: Comparative studies have shown that search strings built with the WINK technique can retrieve significantly more articles (e.g., 69.81% more for one research question) compared to conventional approaches [7].
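To make the idea of networking strength more concrete, the sketch below counts how often indexing terms co-occur across a small set of articles and drops terms that fall under a threshold. It is a simplified stand-in for the link-strength figures a tool such as VOSviewer reports, not a reimplementation of WINK [7]: the article data, the function names, and the threshold of 3 are all hypothetical.

```python
from collections import Counter
from itertools import combinations


def total_link_strength(articles):
    """For each term, count its co-occurrences with other terms across articles."""
    strength = Counter()
    for terms in articles:
        for a, b in combinations(sorted(set(terms)), 2):
            strength[a] += 1
            strength[b] += 1
    return strength


def prune(strength, min_strength):
    """Keep only terms whose total link strength reaches the threshold."""
    return sorted(t for t, s in strength.items() if s >= min_strength)


# Hypothetical indexing terms for four articles.
articles = [
    ["Periodontitis", "Diabetes Mellitus", "Inflammation"],
    ["Periodontitis", "Cardiovascular Diseases", "Inflammation"],
    ["Periodontitis", "Diabetes Mellitus"],
    ["Dental Caries", "Fluorides"],
]

strength = total_link_strength(articles)
kept = prune(strength, min_strength=3)
print(strength.most_common())
print(kept)
```

Any threshold of this kind is a judgment call, which is why the pruned list is still reviewed by subject experts before the final string is built.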

Mastering MeSH, Emtree, and CINAHL Headings is a foundational skill for researchers conducting systematic reviews in the health sciences. A protocolized approach that systematically combines controlled vocabulary (to control for synonymy and ambiguity) with a comprehensive textword search (to capture nascent and unindexed concepts) is non-negotiable for achieving high recall and precision. By applying the detailed application notes and experimental protocols outlined in this document—from basic search construction to advanced techniques like exploding and focusing, and even leveraging cutting-edge methods like the WINK technique—researchers and drug development professionals can ensure their literature searches are rigorous, reproducible, and minimize the risk of bias, thereby laying a solid foundation for a high-quality systematic review.

In the realm of evidence-based medicine, systematic reviews are a cornerstone of scientific literature, providing a comprehensive synthesis of existing research on a specific question. The integrity and validity of a systematic review are fundamentally dependent on the completeness of the literature search, which aims to capture as many relevant studies as possible [6]. A critical challenge in constructing a comprehensive search strategy is accounting for the inherent variability in human language. Authors of primary research may describe the same concept using different synonyms, spelling variants (e.g., "behavior" vs. "behaviour"), or acronyms (e.g., "CVA" for "cerebrovascular accident") [17] [18]. Failure to account for these variations can lead to a biased and incomplete set of results, ultimately undermining the review's conclusions. Therefore, the meticulous identification and incorporation of natural language variants, including synonyms, spelling variations, and acronyms, is not merely a technical step but a fundamental principle in conducting rigorous systematic reviews [19] [20].

This document provides detailed application notes and protocols for identifying and handling these language variations within the context of keyword research for systematic reviews. It is structured to guide researchers, scientists, and drug development professionals through practical methodologies, supported by quantitative data and experimental protocols, to enhance the sensitivity and comprehensiveness of their search strategies.

Effective search strategy development relies on understanding the types of language variations and their impact. The primary goal is to maximize sensitivity (retrieving all relevant records) while accepting a trade-off in precision (retrieving only relevant records) to ensure comprehensiveness [6].
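The trade-off can be expressed numerically. In the sketch below, sensitivity is the share of relevant records that the search retrieves and precision is the share of retrieved records that are relevant. The record identifiers are hypothetical, and in a real review the full set of relevant records is unknown, so a gold set of known relevant articles is commonly used as a proxy.

```python
def sensitivity(retrieved, relevant):
    """Proportion of relevant records that the search retrieved."""
    return len(retrieved & relevant) / len(relevant)


def precision(retrieved, relevant):
    """Proportion of retrieved records that are relevant."""
    return len(retrieved & relevant) / len(retrieved)


# Hypothetical record identifiers.
relevant = {"a1", "a2", "a3", "a4"}
retrieved = {"a1", "a2", "a3", "x1", "x2", "x3", "x4", "x5", "x6"}

print(f"sensitivity = {sensitivity(retrieved, relevant):.2f}")
print(f"precision   = {precision(retrieved, relevant):.2f}")
```

Here the search finds three of the four relevant records at the cost of six irrelevant ones, which is the kind of imbalance a systematic review search normally accepts.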

Table 1: Types of Natural Language Variations in Search Strategies

| Variation Type | Definition | Impact on Search | Examples |

|---|---|---|---|

| Synonyms & Related Terms | Different words or phrases used to describe the same concept. | High; crucial for recall. | "heart attack" vs. "myocardial infarction"; "kidney failure" vs. "renal failure" [21] [22] |

| Spelling Variants | Differences in spelling based on regional language conventions. | Medium; can cause relevant studies to be missed. | "tumor" vs. "tumour"; "pediatric" vs. "paediatric" [17] |

| Acronyms & Abbreviations | Shortened forms of phrases or words. | High; extremely common and ambiguous in scientific literature. | "CVA" for "cerebrovascular accident" or "costovertebral angle"; "MI" for "myocardial infarction" or "medical illustrator" [23] [18] |

| Subject Headings | Controlled vocabulary terms (e.g., MeSH, Emtree) assigned by database indexers. | High; tag articles by concept, overcoming keyword limitations [6] [21]. | MeSH term "Renal Insufficiency, Chronic" encompasses "chronic kidney disease," "chronic renal failure," "CKD," and "CRF" [21]. |

Table 2: Impact of Structured Keyword Identification Techniques

| Technique | Description | Reported Efficacy |

|---|---|---|

| Conventional Search (Subject Expert Input) | Relies on keywords and MeSH terms identified by domain experts. | Baseline for comparison [7]. |

| WINK Technique | Uses network visualization to assign weightage to MeSH terms, excluding those with limited networking strength [7]. | Retrieved 69.81% and 26.23% more articles for two sample research questions compared to conventional approaches [7]. |

| Combined Distributional Models | Uses ensemble semantic spaces (Random Indexing + Random Permutation) from clinical and journal article corpora for synonym and abbreviation extraction [24]. | Achieved a recall of 0.39 for abbreviations to long forms and 0.47 for synonyms within the top 10 candidate terms [24]. |

| LLM/BERT Disambiguation | Employs large language models (e.g., ChatGPT) or BERT-based models for acronym and symbol sense disambiguation [18]. | BERT-based models achieved over 95% accuracy in disambiguating acronym senses in clinical notes [18]. |

Experimental Protocols for Identifying Language Variations

Protocol: Building a Gold Set and Extracting Terms

Purpose: To create a foundational set of relevant articles ("gold set") to identify the synonyms, acronyms, and spelling variants used in the existing literature on the topic [20] [22].

Materials:

- Access to major bibliographic databases (e.g., PubMed/MEDLINE, Embase).

- Citation tracking tools (e.g., Scopus, Web of Science).

- Reference management software (e.g., EndNote, Zotero).

Methodology:

- Identify Sentinel Articles: Compile a shortlist of 3-6 highly relevant, authoritative papers fundamental to your research topic through preliminary scoping searches and expert consultation [20] [22].

- Forward and Backward Citation Tracking:

- Backward Tracking: Review the reference lists of the sentinel articles to identify prior key studies.

- Forward Tracking: Use citation databases to find newer articles that have cited the sentinel articles.

- Gold Set Compilation: Combine sentinel articles with the most relevant studies identified through citation tracking to form your "gold set."

- Term Extraction:

- Manual Analysis: Read the titles, abstracts, and full texts of gold set articles to manually extract keywords, synonyms, and acronyms.

- Tool-Assisted Analysis: Use tools like the Yale MeSH Analyzer to automatically extract the MeSH terms assigned to each article in your gold set [20]. This helps identify the controlled vocabulary used for key concepts.

- Validation: The final search strategy must be tested to ensure it retrieves all articles in the gold set.
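The validation step amounts to a set comparison between the gold set and the records the search returns. A minimal sketch, assuming both are available as lists of record identifiers (the identifiers and the function name below are hypothetical placeholders):

```python
def missing_from_search(gold_set_ids, retrieved_ids):
    """Return gold set records that the search strategy failed to retrieve."""
    return sorted(set(gold_set_ids) - set(retrieved_ids))


# Hypothetical identifiers exported from a reference manager and a database.
gold_set_ids = ["1001", "1002", "1003"]
retrieved_ids = ["1001", "1003", "2001", "2002"]

missing = missing_from_search(gold_set_ids, retrieved_ids)
if missing:
    print("Not retrieved, inspect indexing and wording:", missing)
else:
    print("All gold set articles retrieved.")
```

Each missing record is worth opening individually, because its title, abstract, and subject headings usually reveal the term the strategy lacks.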

Protocol: Systematic Keyword Mining and Expansion

Purpose: To systematically generate a comprehensive list of free-text keywords and account for spelling and morphological variations.

Materials:

- Bibliographic databases (PubMed, Ovid platforms).

- Text mining tools (e.g., PubMed PubReMiner, WriteWords word frequency counter) [19].

Methodology:

- Seed Term Search: Execute a preliminary search in a database like PubMed using 2-3 core concepts from your research question.

- Text Mining:

- PubMed PubReMiner: Enter a key phrase to query PubMed and analyze the resulting corpus. The tool provides frequency lists of words, MeSH terms, and authors found in the retrieved citations, helping to identify common synonyms and terminology [19].

- Word Frequency Tools: Use tools like WriteWords to analyze the text of multiple gold set article abstracts, generating a list of the most frequent words and phrases.

- Account for Spelling Variants: Proactively include common spelling variations for identified keywords (e.g., "behavior" OR "behaviour") [20].

- Apply Truncation and Wildcards: Use database-specific symbols to account for word stemming and pluralization.
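Word frequency analysis of this kind is straightforward to approximate locally. The sketch below is not how PubReMiner or WriteWords work internally; it is a small standard-library example with a hypothetical stopword list and two invented abstracts, chosen to show that regional spellings are counted as separate words.

```python
import re
from collections import Counter

# Deliberately tiny stopword list, for illustration only.
STOPWORDS = {"the", "of", "and", "in", "with", "a", "to", "for", "was", "were"}


def word_frequencies(abstracts):
    """Count lowercase words across abstracts, ignoring stopwords."""
    counts = Counter()
    for text in abstracts:
        words = re.findall(r"[a-z]+", text.lower())
        counts.update(w for w in words if w not in STOPWORDS)
    return counts


# Invented abstracts using British and American spellings.
abstracts = [
    "Behaviour change in paediatric patients with tumours.",
    "Behavior therapy for pediatric tumor patients.",
]

freq = word_frequencies(abstracts)
print(freq.most_common(5))
```

Because "behaviour" and "behavior" each appear once rather than twice under a shared spelling, a search using only one form would miss half of this small corpus, which is the reason both variants are combined with OR.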

Protocol: Acronym and Abbreviation Disambiguation

Purpose: To identify the expansions of relevant acronyms and resolve their ambiguities for accurate search formulation.

Materials:

- Access to clinical or biomedical text corpora (e.g., CASI dataset) [18].

- NLP tools or pre-trained models (e.g., BERT-based models, UMLS::SenseRelate) [18] [24].

- Medical dictionaries and terminology resources (e.g., Stedman's Medical Abbreviations) [18].

Methodology:

- Acronym Identification: Use heuristic rule-based and statistical methods to identify acronyms and abbreviations composed of capital letters that appear frequently in a target corpus [18].

- Sense Inventory Creation: For each target acronym, compile a list of all possible expansions (senses) using medical dictionaries, textbooks, and ontologies like the UMLS [18].

- Disambiguation:

- Knowledge-Based Method: Use tools like UMLS::SenseRelate, which calculates a score for each potential sense based on its similarity to the surrounding terms in the text, selecting the sense with the highest score [18].

- Supervised Machine Learning: Train a model, such as BioBERT, on an annotated dataset like the Clinical Acronym Sense Inventory (CASI). The model learns to classify the correct sense of an acronym based on its contextual window (e.g., the 12 preceding and subsequent words) [18].

- Search Integration: In the search strategy, include both the acronym and its most common, relevant long forms connected by the Boolean OR operator.
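The knowledge-based approach can be illustrated with a deliberately crude scoring rule: count how many cue words for each candidate sense appear in the surrounding text and pick the highest score. This is only a sketch of the general idea. It does not reproduce the similarity measures used by UMLS::SenseRelate [18], and the cue words, the example sentence, and the function name are hypothetical.

```python
def disambiguate(context_words, sense_inventory):
    """Pick the sense whose cue words overlap most with the context words."""
    context = {w.lower() for w in context_words}
    scores = {
        sense: len(context & {cue.lower() for cue in cues})
        for sense, cues in sense_inventory.items()
    }
    best = max(scores, key=scores.get)  # ties resolve to the first sense listed
    return best, scores


# Hypothetical sense inventory for the acronym "CVA".
cva_senses = {
    "cerebrovascular accident": ["stroke", "hemiparesis", "infarct", "aphasia"],
    "costovertebral angle": ["tenderness", "flank", "kidney", "percussion"],
}

sentence = "Patient reports flank pain with CVA tenderness on percussion"
best, scores = disambiguate(sentence.split(), cva_senses)
print(best, scores)
```

For search construction, the practical outcome is a decision about which long forms belong in the OR group with the acronym and which senses are likely to add noise.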

Visualization of Workflows

Search Strategy Development Workflow

Search strategy development workflow for systematic reviews

Natural Language Variation Identification

Identifying natural language variations for a core concept

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Handling Natural Language in Systematic Reviews

| Tool / Resource Name | Type | Primary Function | Application in Protocol |

|---|---|---|---|

| Medical Subject Headings (MeSH) [21] [7] | Controlled Vocabulary | Provides a standardized set of concepts and terms for indexing PubMed/MEDLINE. | Used to tag articles by concept, overcoming limitations of free-text keywords. Essential for comprehensive searching [6]. |

| Yale MeSH Analyzer [20] | Web-based Tool | Extracts and analyzes MeSH terms from a set of PubMed records. | Gold set protocol: Rapidly identifies controlled vocabulary terms associated with gold set articles. |

| PubMed PubReMiner [19] | Text Mining Tool | Queries PubMed and provides frequency analysis of words and MeSH in results. | Keyword mining protocol: Helps identify common synonyms, keywords, and terminology used in the literature on a topic. |

| UMLS::SenseRelate [18] | Knowledge-Based NLP Tool | Disambiguates ambiguous terms in biomedical text by assigning UMLS concepts. | Acronym disambiguation protocol: Used for acronym and symbol sense disambiguation based on contextual similarity. |

| BioBERT [18] | Pre-trained Language Model | A BERT-based model pre-trained on large-scale biomedical corpora. | Acronym disambiguation protocol: Can be fine-tuned for high-accuracy acronym sense disambiguation tasks using clinical datasets. |

| VOSviewer [7] | Network Visualization Software | Creates, visualizes, and explores maps based on network data of scientific literature. | Used in the WINK technique to generate network charts for analyzing keyword interconnections and assigning weightage [7]. |

| Ovid MEDLINE Field Guide [19] | Database Documentation | Details all searchable fields within the Ovid MEDLINE database. | Critical for constructing precise search syntax (e.g., .ti,ab. for Title/Abstract) during search strategy refinement. |

In the realm of evidence-based medicine, the systematic review represents the highest standard for synthesizing research findings. The integrity and comprehensiveness of a systematic review are fundamentally dependent on the effectiveness of the initial literature search, a process fraught with the risk of selection bias and incomplete retrieval. This Application Note addresses this critical stage by detailing a structured methodology for building a "Gold Set" of references. A Gold Set is a curator-validated collection of key publications that serves as the foundational corpus for the subsequent, rigorous process of term discovery. The protocols herein are designed to minimize bias and maximize recall, providing researchers, scientists, and drug development professionals with a reproducible framework for constructing a robust search strategy, which is the cornerstone of any high-quality systematic review [7].

Comparative Analysis of Keyword Identification Techniques

The selection of keywords and indexing terms is a pivotal decision point that can determine the success of a systematic review. The table below summarizes the core characteristics of a traditional expert-driven approach versus the more structured Weightage Identified Network of Keywords (WINK) technique [7].

Table 1: Comparison of Keyword Identification Techniques for Systematic Reviews

| Feature | Traditional Expert-Driven Approach | WINK Technique |

|---|---|---|

| Core Methodology | Relies on subject matter experts (SMEs) to suggest keywords based on domain knowledge [7]. | Integrates computational analysis (network visualization) with SME validation to identify and weight terms [7]. |

| Primary Tools | Database thesauri (e.g., MeSH on Demand), expert consultation [7]. | VOSviewer for network chart generation, PubMed/MeSH for term validation [7]. |

| Key Advantage | Leverages deep domain-specific insight and context. | Systematically maps the semantic landscape of a research field, reducing expert bias [7]. |

| Key Limitation | Potential for selection bias and omission of non-obvious or emerging terminology [7]. | Requires access to and familiarity with bibliometric software and analysis. |

| Quantified Efficacy | Serves as the baseline for comparison. | Demonstrated 69.81% and 26.23% more articles retrieved for two sample research questions compared to the conventional approach [7]. |

| Best Application Context | Initial scoping searches, topics with well-established and stable terminology. | Complex, multi-faceted research questions where keyword relationships are not immediately apparent [7]. |

Experimental Protocols

This section provides step-by-step protocols for implementing the two primary methodologies for building a Gold Set.

Protocol 1: Construction of an Initial Gold Set via Expert Curation

3.1.1 Objective: To assemble a preliminary Gold Set of reference articles using the traditional, expert-driven method to establish a baseline for further refinement.

3.1.2 Materials & Reagents:

- Access to bibliographic databases (e.g., PubMed/MEDLINE, Scopus, Embase).

- Reference management software (e.g., EndNote, Zotero, Mendeley).

3.1.3 Methodology:

- Define Research Question: Formulate a clear, structured research question. For the purposes of this protocol, we will use the example: "What is the relationship between oral and systemic health?" [7].

- Engage Subject Matter Experts (SMEs): Convene a panel of at least 2-3 domain experts.

- Brainstorm Seed Keywords: With the SME panel, brainstorm a list of broad seed keywords and concepts. For the example question, this may include: "oral health," "periodontitis," "systemic health," "diabetes," "cardiovascular disease" [7].

- Identify Controlled Vocabulary: Using database tools like "MeSH on Demand" in PubMed, identify the preferred controlled vocabulary terms (e.g., MeSH terms) for the seed keywords [7].

- Execute Preliminary Search: Construct a basic Boolean search string and execute it in the chosen database(s).

- Example String: ((oral health[MeSH Terms]) OR (periodontitis[Title/Abstract])) AND ((((systemic health[Title/Abstract]) OR (systemic diseases[Title/Abstract])) OR (diabetes[Title/Abstract])) OR (cardiovascular disease[Title/Abstract])) [7].

- Screen and Select: Manually screen the results (typically by title and abstract) to identify a core set of 10-20 highly relevant, seminal papers. This collection forms the Initial Gold Set.
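If the preliminary search is to be scripted rather than run in the PubMed interface, the query can be passed to the NCBI E-utilities ESearch endpoint. The sketch below only builds the request URL and sends nothing. The helper name and the `retmax` value are assumptions, and the query is the example string above with its nested parentheses flattened, which leaves the Boolean logic unchanged.

```python
from urllib.parse import urlencode

ESEARCH_URL = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"


def esearch_url(query, retmax=20):
    """Build an ESearch request URL for a PubMed query (no request is sent)."""
    params = {"db": "pubmed", "term": query, "retmax": retmax, "retmode": "json"}
    return ESEARCH_URL + "?" + urlencode(params)


query = (
    "((oral health[MeSH Terms]) OR (periodontitis[Title/Abstract])) AND "
    "((systemic health[Title/Abstract]) OR (systemic diseases[Title/Abstract]) "
    "OR (diabetes[Title/Abstract]) OR (cardiovascular disease[Title/Abstract]))"
)

url = esearch_url(query)
print(url)
```

Saving the exact URL or query string alongside the date of the search supports the documentation that a reproducible review requires.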

Protocol 2: Enhancement of the Gold Set using the WINK Technique

3.2.1 Objective: To expand and validate the Initial Gold Set by applying a systematic, network analysis-based technique to identify high-weightage keywords, thereby ensuring a more comprehensive literature search.

3.2.2 Materials & Reagents:

- The Initial Gold Set from Protocol 1.

- Bibliometric analysis software (e.g., VOSviewer, an open-access tool) [7].

- Database access (PubMed/MEDLINE).

3.2.3 Methodology:

- Data Export: For the articles in the Initial Gold Set, export their full metadata, including titles, abstracts, and keywords/MeSH terms, from the database.

- Network Visualization:

- Import the metadata into VOSviewer.

- Perform a term co-occurrence analysis, using keywords or MeSH terms as the unit of analysis.

- Generate a network visualization map where nodes represent terms and the links between nodes represent their strength of co-occurrence across the literature [7].

- Identify High-Weightage Keywords: In the network map, identify keywords with high link strength and density, indicating they are central to the research topic. Conversely, note keywords with limited networking strength for potential exclusion [7].

- SME Validation: Present the network map to the SME panel to validate the identified key terms and contextualize the findings.

- Build Enhanced Search String: Construct a new, exhaustive Boolean search string incorporating the validated, high-weightage MeSH terms.

- Example Enhanced String for Oral/Systemic Health: This string would incorporate a wide array of systemic health MeSH terms (e.g., cardiovascular diseases[MeSH], diabetes mellitus[MeSH], pregnancy[MeSH], obesity[MeSH]) and oral health MeSH terms (e.g., periodontal diseases[MeSH], chronic periodontitis[MeSH], oral health[MeSH]) [7].

- Final Gold Set Assembly: Execute the enhanced search. The resulting, larger set of relevant articles, after screening, constitutes the Final Enhanced Gold Set, ready for use in term discovery for the full systematic review.

Workflow Visualization

The following diagram illustrates the integrated workflow for building the Gold Set, combining both Protocols 1 and 2.

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table details the key digital tools and platforms required to execute the protocols described in this document.

Table 2: Essential Research Reagents & Digital Solutions for Term Discovery

| Item | Function & Application in Protocol | Example/Source |

|---|---|---|

| Bibliographic Database | Primary source for literature retrieval and metadata export. Essential for both Protocols 1 and 2. | PubMed/MEDLINE [7], Scopus, Embase |

| Reference Management Software | Organizes the Gold Set references, manages citations, and deduplicates results from multiple database searches. | EndNote, Zotero, Mendeley |

| Controlled Vocabulary Tool | Identifies standardized indexing terms (e.g., MeSH) to improve search precision. Used in Protocol 1, Step 4. | MeSH on Demand [7] |

| Bibliometric Analysis Software | Generates network visualization charts to analyze keyword co-occurrence and strength. Core tool for Protocol 2, Step 2. | VOSviewer [7] |

| Boolean Search Interface | The platform within a database where structured search strings are built and executed. Used throughout all protocols. | PubMed Advanced Search Builder |

Building and Executing a Comprehensive Search Strategy

A Step-by-Step Method for Systematic Search Strategy Development

A meticulously developed search strategy is the cornerstone of any high-quality systematic review, serving as the primary determinant of the review's comprehensiveness, reliability, and freedom from bias. A robust strategy ensures the identification of all relevant literature on a specific research question, forming a solid evidence base for subsequent synthesis and conclusion. This document provides detailed Application Notes and Protocols for developing a systematic search strategy, framed within the broader context of conducting rigorous keyword research for systematic reviews. The guidance is tailored for researchers, scientists, and drug development professionals, with the goal of standardizing this critical process and enhancing the methodological quality of reviews.

Key Principles of a Systematic Search

The development of a search strategy is guided by several core principles essential for mitigating bias and ensuring the review's validity.

- Sensitivity vs. Precision: A systematic review search must favor sensitivity (the ability to identify all relevant records) over precision (the ability to exclude irrelevant records). Capturing a proportion of irrelevant records is necessary to ensure comprehensiveness [6].

- Reproducibility: Every step of the search strategy, from the initial terms to the final syntax, must be documented with sufficient detail to allow exact replication by other researchers [6].

- Pre-Specified Protocol: The search strategy should be developed as part of a pre-defined review protocol, which outlines the study methodology, including background, research question, and inclusion/exclusion criteria [25]. Registering this protocol on a platform like PROSPERO reduces the risk of bias and guards against duplicate efforts [25].
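One lightweight way to support the reproducibility principle is to record every executed search in a structured form. The sketch below is only one possible layout: the class, its field names, and all values (including the date and the result count) are hypothetical, and a reporting guideline adopted in the review protocol may call for additional fields.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class SearchRecord:
    """One executed search, with enough detail to rerun it exactly."""
    database: str
    platform: str
    date_searched: str
    search_string: str
    results: int


# All values below are placeholders.
record = SearchRecord(
    database="MEDLINE",
    platform="Ovid",
    date_searched="2024-01-15",
    search_string="exp Health education/ OR health education.ti,ab.",
    results=1234,
)

log_entry = json.dumps(asdict(record), indent=2)
print(log_entry)
```

Appending one such entry per database and per revision of the strategy gives a search history that can be copied directly into the review's appendix.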

Preliminary Steps: Scoping and Question Formulation

Before constructing the search string, foundational work is required to define the review's scope and boundaries clearly.

Formulating a Research Question Using Frameworks

A well-defined, structured research question is the critical first step, as it guides every subsequent stage of the review process [26]. Using a formal framework helps in creating a clear, focused, and answerable question.

Table 1: Common Frameworks for Structuring Research Questions

| Framework | Components | Best Suited For |

|---|---|---|

| PICO [25] [26] | Population, Intervention, Comparator, Outcome | Therapy-related questions; can be adapted for diagnosis and prognosis. |

| PICOTTS [26] | Population, Intervention, Comparator, Outcome, Time, Type of Study, Setting | A more detailed extension of PICO. |

| SPIDER [25] [26] | Sample, Phenomenon of Interest, Design, Evaluation, Research Type | Qualitative and mixed-methods research. |

| PECO [25] | Population, Environment, Comparison, Outcome | Questions about the effect of an exposure. |

| SPICE [25] [26] | Setting, Perspective, Intervention/Exposure/Interest, Comparison, Evaluation | Evaluating services or projects from a specific perspective. |

| ECLIPSE [25] [26] | Expectation, Client group, Location, Impact, Professionals, SErvice | Health policy and management searches. |

Conducting Scoping Searches

Once a preliminary question is formed, conducting scoping searches in relevant databases is recommended [25]. These initial searches help to:

- Identify key papers and seminal works in the field.

- Boost understanding of the topic's terminology and scope.

- Provide a feel for the volume of existing literature, allowing for refinement of the question if it is too broad or too narrow [25].

- Build a "gold set" of relevant references, which can be used to test the performance of the final search strategy [20].

Core Methodology: Developing the Search Strategy

This section outlines the protocol for translating the research question into a formal, executable search strategy.

Identifying Search Terms

Search terms are derived directly from the key concepts within the chosen research framework (e.g., PICO). A comprehensive approach involves identifying two types of terms for each concept.

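- Keywords: These are free-text words and phrases that authors might use in a study's title or abstract. Strategies for capturing variants include listing synonyms and related terms, adding regional spelling variants (e.g., "tumor" OR "tumour"), including acronyms alongside their long forms, and applying truncation and wildcards to cover plurals and other word endings.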

- Index Terms: Also known as subject headings, these are standardized terms from a controlled vocabulary assigned by professional indexers to describe the content of articles (e.g., MeSH in MEDLINE, Emtree in Embase) [6]. Using these terms is crucial because they allow retrieval of relevant articles that may not contain your specific keywords in the title or abstract.

A robust search strategy must include both keywords and index terms for each concept to ensure high sensitivity [6]. Relying solely on one type risks missing relevant studies.

Combining Terms with Boolean Operators

Boolean logic is used to combine the identified terms into a coherent search string.

- OR: Used to combine synonyms, related terms, and both keyword and index terms within the same concept. This broadens the search and increases sensitivity.

- AND: Used to combine different concepts (e.g., Population AND Intervention). This narrows the search to records that contain all specified concepts.

- NOT: Used to exclude specific records (e.g., exclude animal studies). This should be used cautiously as it can inadvertently exclude relevant studies [6].
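The way OR and AND combine terms can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the names `Concept`, `quote`, `concept_block`, and `build_query` are hypothetical, the example terms are illustrative only, and the output assumes PubMed-style field tags (`[mh]` for MeSH headings, `[tiab]` for title/abstract). Other databases use different syntax.

```python
from dataclasses import dataclass, field


@dataclass
class Concept:
    """One framework concept (e.g. the PICO Population) and its search terms."""
    name: str
    keywords: list = field(default_factory=list)     # free-text terms
    index_terms: list = field(default_factory=list)  # controlled vocabulary terms


def quote(term: str) -> str:
    """Wrap multi-word terms in double quotes so they are searched as phrases."""
    return f'"{term}"' if " " in term else term


def concept_block(concept: Concept) -> str:
    """OR together every index term and keyword belonging to one concept."""
    # Assumption: PubMed-style field tags ([mh] = MeSH heading, [tiab] = title/abstract).
    parts = [f"{quote(t)}[mh]" for t in concept.index_terms]
    parts += [f"{quote(k)}[tiab]" for k in concept.keywords]
    return "(" + " OR ".join(parts) + ")"


def build_query(concepts: list) -> str:
    """AND the concept blocks so each record must match every concept."""
    return " AND ".join(concept_block(c) for c in concepts)


# Illustrative concepts only; a real strategy would list many more variants.
population = Concept(
    "Population",
    keywords=["type 2 diabetes", "T2DM"],
    index_terms=["Diabetes Mellitus, Type 2"],
)
intervention = Concept(
    "Intervention",
    keywords=["metformin"],
    index_terms=["Metformin"],
)

query = build_query([population, intervention])
print(query)
```

Keeping each concept as its own parenthesized OR block makes it easy to test one concept at a time and to see which block is responsible when a known relevant article is not retrieved.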

The following diagram illustrates the logical workflow for building a systematic search strategy.

To minimize database-specific bias, it is essential to search multiple bibliographic databases. A minimum of two databases is recommended, though the exact choice should be based on the research topic [26].

Table 2: Key Bibliographic Databases and Specialist Tools

| Resource Name | Type | Primary Function & Characteristics |
|---|---|---|
| MEDLINE (via PubMed/Ovid) [26] | Bibliographic Database | Life sciences and biomedical database using MeSH terms; maintained by the U.S. NLM. |
| Embase [26] | Bibliographic Database | Comprehensive biomedical and pharmacological database with strong coverage of drug studies. |
| Cochrane Central [6] | Bibliographic Database | Specialized register of controlled trials, a key source for interventional reviews. |
| Google Scholar [26] | Search Engine | Provides broad search of scholarly literature but lacks transparency and precision for systematic reviews. |
| Covidence [6] [26] | Review Management Tool | Streamlines the screening, data extraction, and quality assessment phases of a review. |
| Rayyan [26] | Review Management Tool | Aids in the screening phase by allowing collaborative blinding and inclusion/exclusion decisions. |

Searching for Grey Literature

An over-reliance on published literature introduces publication bias, as studies with positive or significant results are more likely to be published [6]. A comprehensive search must therefore include grey literature, which includes:

- Clinical trial registers (e.g., ClinicalTrials.gov)

- Ongoing studies

- Theses and dissertations

- Conference abstracts and proceedings

- Reports from government agencies and institutional repositories [6]

This section details key reagents and software solutions essential for executing a systematic search strategy efficiently and accurately.

Table 3: Research Reagent Solutions for Systematic Searching

| Tool / Resource | Category | Function & Application |
|---|---|---|
| PubMed / MEDLINE [26] | Primary Database | Foundational database for biomedical reviews; uses MeSH for indexing. |
| Embase [26] | Primary Database | Critical for drug development reviews due to extensive pharmacological coverage. |
| EndNote, Zotero, Mendeley [26] | Reference Manager | Import, deduplicate, and manage thousands of search results; essential for organization. |
| Covidence, Rayyan [26] | Screening Tool | Facilitate blinded title/abstract and full-text screening by multiple reviewers. |
| Inciteful.xyz [27] | Scoping Tool | Captures relevant systematic review citations to create a seed set for testing strategy retrieval. |
| PubReMiner [27] | Keyword Identification Tool | Identifies common keywords and MeSH terms from a set of PubMed records. |
| Yale MeSH Analyzer [20] | MeSH Analysis Tool | Extracts and analyzes MeSH terms from a "gold set" of key papers to inform search strategy. |
| 2Dsearch [27] | Grey Literature Tool | Saves search strings for multiple grey literature sites with rudimentary search capabilities. |

Search Strategy Experimental Protocol

Objective

To construct, execute, and validate a sensitive and reproducible search strategy for a systematic review.

Materials

- Computer with internet access.

- Access to selected bibliographic databases (see Table 2).

- Reference management software (e.g., EndNote, Zotero).

- Screening tool (e.g., Covidence, Rayyan) - optional but recommended.

Step-by-Step Procedure

- Protocol Finalization: Confirm the finalized research question, inclusion criteria, and exclusion criteria as per the pre-registered protocol [25].

- Term Generation: For each PICO (or other framework) concept:

- a. List all relevant keywords from scoping searches, including spelling variants, synonyms, and plural forms.

- b. Identify corresponding controlled vocabulary terms (MeSH, Emtree) for each concept using database thesauri or tools like the Yale MeSH Analyzer [20].

- Strategy Assembly (in a test database):

- a. Create concept groups by combining all synonyms (keywords and index terms) for a single concept with the Boolean OR.

- b. Combine the resulting concept groups with the Boolean AND.

- c. Apply necessary search filters (e.g., language, date, study type) with caution.

- Strategy Validation:

- a. Run the assembled search strategy.

- b. Check if the "gold set" of known relevant articles is successfully retrieved [20].

- c. If key articles are missing, analyze the reason: Are missing terms, spelling variations, or alternative index terms needed? Refine the strategy accordingly.

- Search Translation and Execution:

- a. Translate the finalized search strategy for each additional database, adapting the syntax and controlled vocabulary terms as needed [27]. Note that automated translation tools primarily help with syntax, not subject term translation [27].

- b. Run the final search in all selected databases and grey literature sources.

- Results Management:

- a. Export all records from each database into your reference manager.

- b. Perform deduplication using the reference manager's functionality or a dedicated tool.

- c. Export the deduplicated results into a screening tool or spreadsheet for the title/abstract screening phase.

- Documentation:
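The validation and results-management steps of the procedure above can be sketched in Python. This is a rough illustration under stated assumptions, not a substitute for a reference manager: the function names (`check_gold_set`, `record_key`, `deduplicate`) are hypothetical, records are assumed to be plain dictionaries with optional `doi`, `title`, and `year` fields, and the identifiers and DOIs in the example are made up.

```python
import re


def check_gold_set(retrieved_ids: set, gold_ids: set) -> dict:
    """Report which gold-set records the search retrieved and which it missed."""
    found = gold_ids & retrieved_ids
    missing = gold_ids - retrieved_ids
    recall = len(found) / len(gold_ids) if gold_ids else 0.0
    return {"found": sorted(found), "missing": sorted(missing), "recall": recall}


def record_key(record: dict) -> str:
    """Build a matching key: the DOI when present, else normalized title plus year."""
    doi = (record.get("doi") or "").strip().lower()
    if doi:
        return "doi:" + doi
    # Lowercase the title and collapse punctuation/whitespace so trivial
    # formatting differences between databases do not block a match.
    title = re.sub(r"[^a-z0-9]+", " ", (record.get("title") or "").lower()).strip()
    return f"title:{title}|{record.get('year', '')}"


def deduplicate(records: list) -> list:
    """Keep the first record seen for each key, preserving input order."""
    seen = set()
    unique = []
    for record in records:
        key = record_key(record)
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


# Made-up identifiers for illustration.
gold = {"111", "222", "333"}
retrieved = {"111", "333", "999"}
report = check_gold_set(retrieved, gold)
print(report["missing"])  # gold-set records the strategy failed to retrieve

records = [
    {"id": "pm1", "doi": "10.1000/ABC", "title": "Metformin in adults", "year": 2020},
    {"id": "em1", "doi": "10.1000/abc", "title": "Metformin in Adults.", "year": 2020},
    {"id": "em2", "title": "A second trial", "year": 2019},
    {"id": "wos1", "title": "A Second Trial!", "year": 2019},
]
unique_records = deduplicate(records)
print([r["id"] for r in unique_records])
```

Any record listed under `missing` is a prompt to look for an absent keyword, spelling variant, or index term. The key-based matching is deliberately simple: a record that carries a DOI in one database but not in another will not be merged, which is one reason dedicated deduplication tools are still advisable.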

A rigorous, systematic search strategy is a methodical process that requires careful planning, iterative testing, and meticulous documentation. By adhering to the principles and protocols outlined in this document—formulating a structured question, combining keywords and index terms using Boolean logic, searching multiple databases and grey literature, and validating the strategy—researchers can create a foundation for a systematic review that is comprehensive, unbiased, and reproducible. This methodological rigor is paramount for generating reliable evidence to inform scientific discourse and drug development decision-making.

The precision and comprehensiveness of a systematic review are fundamentally dependent on the strategy employed for literature retrieval. A core pillar of this strategy is the selection of appropriate bibliographic databases. Within evidence-based medicine, systematic reviews are a cornerstone, synthesizing scientific evidence to inform clinical practice, guide healthcare policies, and direct future research [28] [7]. An ineffective search that fails to capture a substantial proportion of relevant studies introduces bias and compromises the review's validity and reliability. Consequently, understanding the coverage, strengths, and weaknesses of major databases is not merely a preliminary step but a critical methodological decision. This article provides detailed application notes and protocols for selecting and utilizing databases, framed within the broader context of conducting rigorous keyword research for systematic reviews. The guidance is tailored for researchers, scientists, and drug development professionals who require methodologically sound and efficient approaches to evidence synthesis.

Database Performance and Coverage: A Quantitative Analysis

Choosing databases is not a one-size-fits-all process; it requires an understanding of their relative contributions to finding unique, relevant studies. Relying solely on one or two major databases can lead to missing a significant number of included studies.

Table 1: Unique Contribution of Major Databases to Systematic Reviews

| Database | Percentage of Unique Included References Retrieved | Key Characteristics |
|---|---|---|
| Embase | 7.6% (132 of 1746) [29] | Strong coverage of European and Asian literature, particularly for pharmacology and drug research. |
| MEDLINE | Not specified as highest, but essential [29] | Premier biomedical database from the U.S. National Library of Medicine, uses MeSH thesaurus. |
| Web of Science Core Collection | Contributed unique references [29] | Multidisciplinary, includes conference proceedings, strong citation tracking. |
| Google Scholar | Contributed unique references [29] | Broad coverage of grey literature and open-access sources, requires careful searching. |
| Cochrane Library | Increased coverage beyond PubMed/Embase [30] | Essential for controlled trials and Cochrane reviews. |
| PubMed | Provides substantial coverage, but not alone [30] | Interface for searching MEDLINE, includes publisher-supplied and in-process citations. |

A prospective study analyzing 58 published systematic reviews found that 16% of all included references were found in only a single database, with Embase being the most prolific source of these unique references [29]. This underscores the risk of relying on a single data source. The performance of database combinations can be quantified by their recall—the proportion of all relevant references that the search manages to retrieve.

Table 2: Performance of Database Combinations

| Database Combination | Overall Recall | Reviews with 100% Recall | Key Findings |
|---|---|---|---|
| Embase + MEDLINE + Web of Science + Google Scholar | 98.3% | 72% | Recommended minimum combination for adequate coverage [29]. |
| PubMed + Embase (across four specialties) | 71.5% (average) | Not specified | An average of 28.5% of relevant publications were missed [30]. |
| PubMed + Embase + Cochrane + PsycINFO + CINAHL, etc. | >95% (potential) | Varies by topic | Supplementary databases are essential for comprehensive coverage [30]. |

The evidence suggests that searching only PubMed and Embase may miss, on average, over a quarter of relevant publications, and an estimated 60% of published systematic reviews fail to retrieve 95% of all available relevant references because they do not search an adequate number of databases [29] [30].

Experimental Protocol: Testing Database Coverage for a Specific Review Topic

Objective: To empirically determine the optimal combination of databases for a systematic review on a specific topic, minimizing the risk of missing relevant studies while managing screening workload.

Methodology:

- Define a Gold Standard: Create a small, representative set of 20-30 known relevant studies for the review topic. These can be identified through preliminary scoping searches or expert consultation.

- Develop a Preliminary Search Strategy: Formulate a basic search strategy using the key concepts of the review topic. This strategy should be kept constant to isolate the effect of the database.

- Execute Searches Across Databases: Run the identical search strategy in multiple candidate databases (e.g., MEDLINE, Embase, Cochrane Central, Web of Science, Scopus, PsycINFO, CINAHL).

- Measure Retrieval and Deduplication: For each database, record the total number of results and then identify how many of the gold-standard references are retrieved. Use citation management software to perform deduplication against a reference database containing all records from all tested databases.

- Calculate Performance Metrics:

- Recall: (Number of gold-standard records found in the database / Total number of gold-standard records) x 100.

- Unique Contribution: Number of gold-standard records found only in that database.

- Number Needed to Read (NNR): Total records from the database after deduplication / Number of gold-standard records found; this estimates the screening burden per relevant study found [28].
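The three metrics defined above can be computed with a short Python sketch. It assumes that every record carries an identifier shared across databases, so that set operations can match the same study in different sources. The function names, database labels, record identifiers, and result counts are all hypothetical.

```python
def database_metrics(results: dict, gold: set, deduped_totals: dict) -> dict:
    """Compute recall, unique contribution, and NNR for each database.

    results        -- database name -> set of record IDs it retrieved
    gold           -- set of gold-standard record IDs
    deduped_totals -- database name -> total records after deduplication
    """
    if not gold:
        raise ValueError("The gold-standard set must not be empty.")
    metrics = {}
    for db, retrieved in results.items():
        found = gold & retrieved
        # Everything retrieved by any other database under test.
        elsewhere = set().union(*(ids for name, ids in results.items() if name != db))
        metrics[db] = {
            "recall_pct": 100 * len(found) / len(gold),
            "unique_contribution": len(found - elsewhere),
            # NNR is undefined when the database finds no gold-standard record.
            "nnr": deduped_totals[db] / len(found) if found else None,
        }
    return metrics


def combined_recall_pct(results: dict, gold: set, databases: list) -> float:
    """Recall of a database combination: the union of what each member retrieves."""
    retrieved = set().union(*(results[db] for db in databases))
    return 100 * len(gold & retrieved) / len(gold)


# Made-up example: four gold-standard records, two databases.
gold = {"g1", "g2", "g3", "g4"}
results = {
    "MEDLINE": {"g1", "g2", "x1"},
    "Embase": {"g2", "g3", "x2"},
}
deduped_totals = {"MEDLINE": 200, "Embase": 300}

metrics = database_metrics(results, gold, deduped_totals)
for db, values in metrics.items():
    print(db, values)
print(combined_recall_pct(results, gold, ["MEDLINE", "Embase"]))
```

In this made-up example each database alone reaches 50% recall and contributes one record found nowhere else, while the pair together reaches 75%. The single-database figures are therefore best read alongside the combined recall when choosing which sources to search.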

Expected Outcome: This protocol yields a quantitative basis for selecting the most efficient database combination for a specific review topic, balancing high recall with a manageable screening load.

A Protocol for Systematic Database Selection and Search Execution

Workflow for Database Selection and Keyword Deployment

The following diagram visualizes the logical workflow for selecting databases and developing a comprehensive search strategy, integrating both keyword and index term searching.

Detailed Application Notes for the Protocol