Log-Transformation of Hormone Ratios: A Methodological Guide for Robust Biomarker Analysis in Biomedical Research


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the application of log-transformation to hormone ratio data. It explores the foundational reasons for transforming skewed hormone data, details step-by-step methodological applications, addresses common troubleshooting and optimization challenges, and presents validation frameworks for comparing analytical approaches. By synthesizing current methodological debates and empirical evidence, this guide aims to equip scientists with the knowledge to implement log-transformations appropriately, enhance the robustness of their statistical analyses, and draw more reliable biological inferences from hormone ratio data.

Why Transform? The Statistical and Biological Rationale for Log-Transforming Hormone Ratios

In endocrine research, the use of hormone ratios has become increasingly prevalent for capturing the joint effect or "balance" of two hormones with opposing or mutually suppressive physiological effects. Commonly studied ratios include testosterone/cortisol, estradiol/progesterone, and testosterone/estradiol, which aim to provide a single integrative marker of hormonal dynamics beyond what can be understood from individual hormone measurements alone [1]. However, hormone data frequently exhibit a fundamental statistical property that complicates analysis: inherent positive skewness in their distributions.

Many hormone concentrations approximate log-normal rather than normal distributions, meaning their logarithmic values are normally distributed while their raw values are not [1]. This distributional asymmetry presents significant methodological challenges for statistical analysis and interpretation, particularly when researchers create ratios from these skewed variables. The combination of skewed numerator and denominator distributions can lead to ratio distributions with marked outliers and steep, unbounded increases as denominator values approach zero, fundamentally undermining the robustness and validity of research findings [1].

This Application Note addresses the critical methodological considerations for working with skewed hormone data and ratios, with particular emphasis on the transformational approaches needed to ensure statistical robustness and biological validity within the context of advanced hormone research and drug development.

Theoretical Foundation: The Statistical Problem with Raw Ratios

Limitations of Raw Hormone Ratios

Raw hormone ratios suffer from several statistical and interpretative problems that have been widely recognized in methodological literature. When hormone levels are measured with error—both from assay imperfections and physiological fluctuations—this noise becomes substantially exaggerated in ratio measures [1].

Key Limitations Include:

- Extreme Sensitivity to Measurement Error: Noise in measured hormone levels is amplified by ratios, particularly when the denominator distribution is positively skewed, which is frequently observed in endocrine data [1].

- Inflation with Small Denominators: As denominator values approach zero, ratio values grow without bound (hyperbolically, as 1/B), creating extreme outliers that disproportionately influence statistical results [1].

- Distributional Abnormalities: Ratio distributions tend to be highly skewed and leptokurtic (heavy-tailed), even when component hormones are normally distributed [1].

- Directional Arbitrariness: The ratio A/B is not linearly related to B/A, and researchers rarely provide biological justification for choosing one directional ratio over another [1].

- Interpretative Ambiguity: Associations between ratios and outcomes may be driven solely by one hormone, additive effects of both, or complex interactions, making mechanistic interpretation difficult [1].

The Measurement Error Amplification Problem

A previously unrecognized limitation of raw hormone ratios is their striking lack of robustness to measurement error. Simulations using both idealized distributions and empirically observed distributions from estrogen and progesterone studies demonstrate that the validity of raw hormone ratios—defined as the correlation between measured levels and underlying effective levels—drops rapidly in the presence of realistic levels of measurement error [1].

Table 1: Impact of Measurement Error on Hormone Ratio Validity

| Condition | Effect on Raw Ratio Validity | Effect on Log-Ratio Validity |
|---|---|---|
| Moderate Measurement Error | Substantial decrease in validity | Minimal impact, remains robust |
| Skewed Denominator Distribution | Dramatic amplification of error impact | Minimal impact from skewness |
| Positively Correlated Hormone Levels | Enhanced noise amplification | May provide more valid measurement than raw ratio |
| Small Denominator Values | Unbounded inflation of ratio values | Grows only linearly in -ln(B), so small denominators do not explode |

Methodological Solution: Log-Transformation of Hormone Data

Theoretical Basis for Logarithmic Transformation

The log-transformation of hormone ratios provides a mathematically sound alternative that addresses the fundamental limitations of raw ratios. The logarithmic transformation converts multiplicative relationships into additive ones, which aligns with the physiological reality that many hormonal effects operate on proportional rather than absolute scales.

The transformation is straightforward:

- Raw Ratio: ( R = A/B )

- Log-Transformed Ratio: ( \ln(R) = \ln(A) - \ln(B) )

This simple transformation yields a variable that captures equal additive but opposing effects of two log-transformed hormones [1]. From a distributional perspective, since hormone levels often naturally follow log-normal distributions, their log-transformed values typically approximate normal distributions, satisfying the distributional assumptions of many parametric statistical tests [1].

Advantages of Log-Transformed Ratios

Distributional Normalization: Log-transformation typically converts skewed hormone distributions to near-normality, reducing the influence of extreme outliers and satisfying the distributional assumptions of parametric statistical methods [1].

Directional Symmetry: Unlike raw ratios where ( A/B \neq B/A ), the log-transformed ratios maintain the relationship ( \ln(A/B) = -\ln(B/A) ). Results using either directional ratio will be identical in magnitude though opposite in sign, eliminating arbitrary choice justification [1].

Robustness to Measurement Error: Simulation studies demonstrate that log-ratios are remarkably robust to measurement error. Their validity remains higher and more stable across samples compared to raw ratios, particularly under conditions of moderate noise with positively correlated hormone levels [1].

Physiological Interpretation: Many biological systems respond to proportional rather than absolute changes in hormone concentrations, making logarithmic transformations more physiologically meaningful than linear models for representing hormone-action relationships.
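
The directional symmetry property can be checked numerically. The following is a minimal sketch using only the Python standard library; the log-normal hormone levels, the outcome variable, and the `pearson` helper are illustrative assumptions, not data or code from the cited studies.

```python
import math
import random

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    return sxy / math.sqrt(sxx * syy)

random.seed(0)
n = 500
# Hypothetical log-normal hormone levels (arbitrary units).
a = [random.lognormvariate(0.0, 0.8) for _ in range(n)]
b = [random.lognormvariate(0.0, 0.8) for _ in range(n)]
# Hypothetical outcome that depends on the log-ratio plus noise.
y = [math.log(ai) - math.log(bi) + random.gauss(0.0, 1.0) for ai, bi in zip(a, b)]

raw_ab = [ai / bi for ai, bi in zip(a, b)]
raw_ba = [bi / ai for ai, bi in zip(a, b)]
log_ab = [math.log(r) for r in raw_ab]
log_ba = [math.log(r) for r in raw_ba]

r_raw_ab, r_raw_ba = pearson(raw_ab, y), pearson(raw_ba, y)
r_log_ab, r_log_ba = pearson(log_ab, y), pearson(log_ba, y)

# Raw ratios: the two directions generally give different magnitudes.
print(f"raw A/B: {r_raw_ab:+.3f}   raw B/A: {r_raw_ba:+.3f}")
# Log-ratios: same magnitude, opposite sign.
print(f"log A/B: {r_log_ab:+.3f}   log B/A: {r_log_ba:+.3f}")
```

Because ln(B/A) is exactly the negative of ln(A/B), the two log-ratio correlations differ only in sign, whereas nothing constrains the two raw-ratio correlations to agree in magnitude.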

Experimental Protocols for Hormone Ratio Analysis

Protocol 1: Log-Transformation of Hormone Ratios

This protocol provides a standardized approach for calculating and analyzing log-transformed hormone ratios from raw concentration data.

Materials and Equipment:

- Raw hormone concentration data (from mass spectrometry or immunoassay)

- Statistical software (R, Python, SPSS, SAS)

- Data visualization software

Procedure:

Data Quality Assessment

- Examine raw distributions of both numerator and denominator hormones

- Identify and document extreme values or potential assay artifacts

- Check for values below limit of detection that may require imputation

Logarithmic Transformation

- Apply natural log transformation to both hormone concentrations: ( \ln(\text{Hormone}_A) ) and ( \ln(\text{Hormone}_B) )

- For concentrations below detection limits, use established imputation methods (e.g., half the detection limit)

- Verify transformation success by examining distribution normality

Ratio Calculation

- Calculate log-ratio as the difference between transformed values: ( \text{LogRatio} = \ln(\text{Hormone}_A) - \ln(\text{Hormone}_B) )

- For directional consistency, establish biological rationale for numerator/denominator assignment

Statistical Analysis

- Proceed with standard parametric tests (t-tests, ANOVA, correlation, regression)

- For regression models: ( Y = \beta_0 + \beta_1(\ln(A) - \ln(B)) + \epsilon )

- Report results with appropriate back-transformation for interpretation

Validation:

- Compare model fit statistics between raw and log-transformed ratio models

- Assess residual plots to verify homoscedasticity

- Conduct sensitivity analyses with different imputation approaches for values below detection limits
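
The steps of Protocol 1 can be sketched in a few lines of NumPy. This is an illustration under stated assumptions rather than a reference implementation: the `log_ratio` helper, the simulated concentrations, the detection limits, and the outcome model are all hypothetical, and half-LOD substitution is only one of several imputation rules that a sensitivity analysis would compare.

```python
import numpy as np

def log_ratio(a, b, lod_a, lod_b):
    """Return ln(A) - ln(B) after substituting LOD/2 for values below the LOD."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    # Simple substitution rule; other imputation methods should be compared.
    a = np.where(a < lod_a, lod_a / 2.0, a)
    b = np.where(b < lod_b, lod_b / 2.0, b)
    return np.log(a) - np.log(b)

rng = np.random.default_rng(42)
n = 300
# Hypothetical concentrations and detection limits (arbitrary units).
hormone_a = rng.lognormal(mean=1.0, sigma=0.7, size=n)
hormone_b = rng.lognormal(mean=0.5, sigma=0.9, size=n)
lr = log_ratio(hormone_a, hormone_b, lod_a=0.5, lod_b=0.3)

# Simulated outcome following Y = beta_0 + beta_1 * (ln A - ln B) + noise,
# with beta_0 = 2.0 and beta_1 = 0.5 chosen purely for illustration.
y = 2.0 + 0.5 * lr + rng.normal(0.0, 1.0, size=n)

# Ordinary least squares fit of Y on the log-ratio.
X = np.column_stack([np.ones(n), lr])
beta, *_ = np.linalg.lstsq(X, y, rcond=None)
residuals = y - X @ beta  # inspect these for homoscedasticity

# Back-transformation: doubling A/B shifts the log-ratio by ln(2).
effect_per_doubling = beta[1] * np.log(2.0)
print(f"beta_1 = {beta[1]:.3f}")
print(f"change in Y per doubling of A/B = {effect_per_doubling:.3f}")
```

Expressing the slope per doubling of the ratio is one convenient back-transformation, since a coefficient on the natural-log scale is otherwise hard to communicate.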

Protocol 2: Comprehensive Ratio Analysis with Component Modeling

This advanced protocol addresses interpretative challenges by simultaneously modeling ratio and component effects.

Procedure:

Preliminary Analysis

- Calculate both raw and log-transformed ratios

- Conduct correlation analysis between ratios and outcome variables

- Perform principal component analysis on log-transformed hormone concentrations

Multiple Regression Framework

- Implement comprehensive regression model: ( Y = \beta_0 + \beta_1\ln(A) + \beta_2\ln(B) + \beta_3(\ln(A) \times \ln(B)) + \epsilon )

- Compare against the constrained model (( \beta_1 = -\beta_2 ) and ( \beta_3 = 0 )), which represents a pure ratio effect

- Use likelihood ratio test to compare constrained vs. unconstrained models

Interpretation Framework

- If ( \beta_1 \approx -\beta_2 ) and ( \beta_3 \approx 0 ), evidence supports pure ratio effect

- If ( \beta_3 ) significant, evidence for interactive effect beyond simple ratio

- If only ( \beta_1 ) or ( \beta_2 ) significant, evidence for single hormone drive

Validation and Sensitivity Analysis

- Bootstrap confidence intervals for ratio and interaction terms

- Cross-validate model performance using train-test splits

- Compare predictive accuracy across different transformational approaches
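
The comparison between the component model and the pure-ratio model in Protocol 2 can be sketched as follows. The data are simulated under a pure ratio effect, and the `rss` helper and all parameter values are assumptions made for illustration. The test shown is a Gaussian likelihood ratio test with two restrictions; for two degrees of freedom the chi-square tail probability has the closed form exp(-x/2), which avoids a dependency beyond NumPy.

```python
import numpy as np

def rss(X, y):
    """Residual sum of squares and coefficients from an OLS fit."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    return float(resid @ resid), beta

rng = np.random.default_rng(7)
n = 400
ln_a = rng.normal(0.0, 0.8, size=n)  # hypothetical ln(A)
ln_b = rng.normal(0.0, 0.8, size=n)  # hypothetical ln(B)
# Simulated under a pure ratio effect: beta_1 = 0.6, beta_2 = -0.6, beta_3 = 0.
y = 1.0 + 0.6 * ln_a - 0.6 * ln_b + rng.normal(0.0, 1.0, size=n)

ones = np.ones(n)
X_full = np.column_stack([ones, ln_a, ln_b, ln_a * ln_b])  # unconstrained
X_ratio = np.column_stack([ones, ln_a - ln_b])             # constrained

rss_full, beta_full = rss(X_full, y)
rss_ratio, beta_ratio = rss(X_ratio, y)

# Likelihood ratio statistic for Gaussian errors. The constrained model imposes
# two restrictions (beta_2 = -beta_1 and beta_3 = 0), so the reference
# distribution is chi-square with 2 degrees of freedom.
lr_stat = n * np.log(rss_ratio / rss_full)
p_value = float(np.exp(-lr_stat / 2.0))  # chi-square(2) survival function

print("unconstrained coefficients:", np.round(beta_full, 3))
print("log-ratio slope (constrained):", round(float(beta_ratio[1]), 3))
print(f"LR statistic = {lr_stat:.3f}, p = {p_value:.3f}")
```

A large p-value here means the data give no reason to prefer the more flexible model over the pure ratio model; a small one points toward unequal component effects or an interaction.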

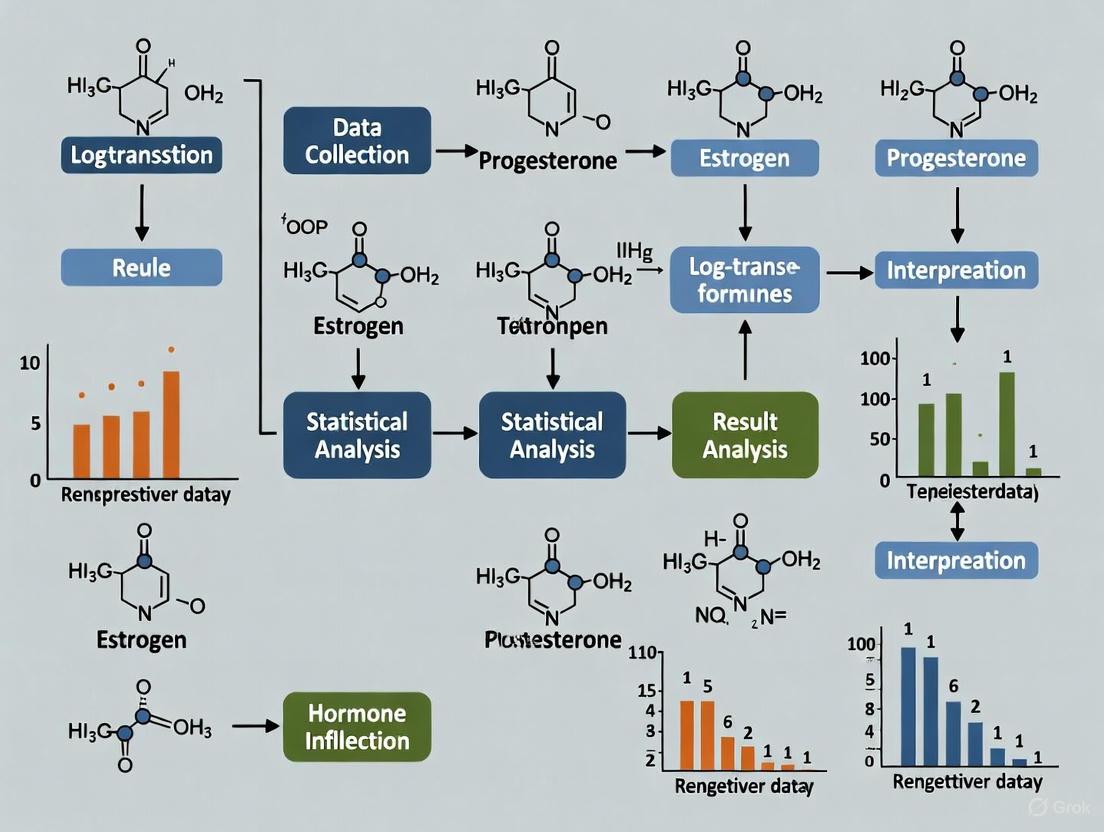

Figure 1: Experimental workflow for comprehensive hormone ratio analysis

Research Reagent Solutions for Hormone Analysis

Table 2: Essential Research Reagents and Materials for Hormone Ratio Studies

| Reagent/Material | Function | Technical Specifications |
|---|---|---|
| ID LC-MS/MS Kits | Gold-standard hormone quantification using isotope dilution liquid chromatography-tandem mass spectrometry | High specificity/sensitivity; minimal cross-reactivity; lower limit of detection: progesterone 0.86 ng/dL, estradiol 1.72 pg/mL [2] |
| Quality Control Materials | Monitor assay precision and accuracy across batches | Should span clinically relevant ranges; commutability with patient samples; long-term stability |
| Automated Sample Preparation Systems | Standardize pre-analytical processing | Liquid handling precision <5% CV; temperature-controlled processing; minimal sample transfer steps |
| Statistical Software Packages | Implement transformation and modeling protocols | R, Python, or specialized packages with bootstrap and cross-validation capabilities |
| Data Visualization Tools | Assess distributions and model diagnostics | Graph creation for distribution assessment; residual plotting; interactive exploratory analysis |

Advanced Analytical Framework: Machine Learning Applications

Recent advances in machine learning provide powerful approaches for modeling complex relationships in hormone data while maintaining interpretability through explainable AI techniques.

Explainable Machine Learning Protocol

Model Development Framework:

- Algorithm Selection: XGBoost or other gradient boosting machines for capturing nonlinear relationships

- Feature Engineering: Include log-transformed hormone values alongside demographic, anthropometric, and metabolic variables

- Interpretability Implementation: SHAP (SHapley Additive exPlanations) values for feature importance quantification [2]

Implementation Procedure:

- Preprocess data using log-transformation of hormone concentrations

- Develop XGBoost model with stratified train-test splits (70/30)

- Compute SHAP values to interpret feature contributions

- Validate model performance using RMSE, MAE, and R² metrics

- Compare feature importance patterns across different population subgroups

Figure 2: Explainable machine learning framework for hormone ratio predictors

Case Application: Progesterone-Estradiol Ratio Modeling

A recent study demonstrated this approach by modeling the log-transformed progesterone-estradiol (P4:E2) ratio in postmenopausal women using NHANES data. The XGBoost model achieved test set performance of RMSE = 0.746, MAE = 0.574, and R² = 0.298. SHAP analysis identified FSH (0.213), waist circumference (0.181), and CRP (0.133) as the most influential contributors, providing data-driven insights into hormonal dynamics [2].

Table 3: Feature Importance in P4:E2 Ratio Machine Learning Model

| Predictor Feature | SHAP Value | Biological Interpretation |
|---|---|---|
| FSH | 0.213 | Reflects hypothalamic-pituitary-gonadal axis feedback regulation |
| Waist Circumference | 0.181 | Represents adipose tissue contribution to hormone biosynthesis and metabolism |
| C-Reactive Protein (CRP) | 0.133 | Indicates inflammatory state influence on hormonal pathways |
| Total Cholesterol | 0.085 | Suggests lipid metabolism interplay with steroid hormone production |
| Luteinizing Hormone (LH) | 0.066 | Indicates gonadal axis regulation of hormonal balance |

The inherent asymmetry of hormone distributions presents significant methodological challenges that require transformational approaches for valid statistical analysis and biological interpretation. Log-transformation of hormone ratios addresses the fundamental limitations of raw ratios by providing distributional normalization, directional symmetry, and robustness to measurement error.

For researchers and drug development professionals implementing hormone ratio analyses, the following evidence-based recommendations are provided:

Routine Implementation of Log-Transformation: Apply natural log transformation to hormone concentrations before ratio calculation as a standard practice in analytical protocols.

Comprehensive Modeling Approach: Implement both ratio and component-interaction models to distinguish true ratio effects from single-hormone drives or complex interactions.

Methodological Transparency: Clearly report transformation approaches and provide biological justification for ratio directionality in publications.

Advanced Analytical Frameworks: Incorporate machine learning with explainable AI techniques for identifying complex, nonlinear relationships in high-dimensional hormone data.

Assay Quality Considerations: Utilize mass spectrometry-based hormone quantification where possible to minimize measurement error that disproportionately affects ratio measures.

The consistent application of these methodological principles will enhance the validity, reproducibility, and biological interpretability of hormone ratio research across basic science, clinical investigation, and drug development contexts.

Analyzing hormone data presents unique statistical challenges that can compromise the validity of research findings if not properly addressed. Hormone ratios, such as the testosterone-to-cortisol (T/C) ratio or estradiol-to-progesterone (EP) ratio, have gained popularity in neuroendocrine literature as a straightforward method for simultaneously analyzing the effects of two interdependent hormones [3]. However, these analyses are associated with significant statistical and interpretational concerns that researchers must carefully consider [3]. The core motivations for implementing specialized statistical approaches stem from three interconnected problems: inherent non-linearity in hormonal relationships, susceptibility to outlier influence, and the consequent degradation of model fit quality.

The fundamental issue with ratio-based analysis lies in the distributional properties and inherent asymmetry of ratios [3]. This asymmetry means that parametric statistical analyses can be affected by the ultimately arbitrary decision of which way around the ratio is computed (i.e., A/B or B/A), potentially leading to different statistical conclusions from the same underlying data. Furthermore, the presence of outliers—data points that deviate significantly from the overall pattern—can have a disproportionate influence on regression models, leading to biased parameter estimates and poor predictive performance [4]. These challenges are particularly pronounced in hormone research where biological variability, assay limitations, and complex feedback mechanisms create data structures that frequently violate the assumptions of traditional statistical methods.

Theoretical Foundations and Methodological Rationale

The Problem of Ratio Asymmetry and Distribution

Hormone ratios inherently possess asymmetric properties that complicate their statistical analysis. The distribution of ratios tends to be skewed, particularly when the denominator variable has a distribution that includes values close to zero [3]. This skewness violates the normality assumption underlying many parametric statistical tests, potentially leading to increased Type I or Type II errors. The arbitrary direction of ratio calculation (A/B vs. B/A) further compounds this problem, as the same biological relationship can yield statistically different results based purely on this computational decision [5].

Logarithmic transformation of hormone ratios addresses these distributional concerns by effectively symmetrizing the ratio distribution. The transformation converts the multiplicative relationship between numerator and denominator into an additive one, making the statistical analysis more robust to the direction of ratio calculation [3] [5]. This approach is particularly valuable when testing hormonal predictors in complex models, such as the three-way interactions examined in ovulatory shift research [5].

Outlier Influence in Regression Models

In nonlinear regression, outliers can significantly distort results, leading to inaccurate parameter estimates and unreliable predictions [4]. The detection and management of outliers is therefore crucial for robust regression analysis. Outliers exert disproportionate influence on regression coefficients, reduce predictive accuracy, produce misleading hypothesis testing results, and negatively impact the quality of statistical measures such as R² and mean squared error (MSE) [6].

The challenge is particularly acute in hormone research due to the complex, nonlinear relationships often observed in endocrine systems. Unlike linear regression, detecting outliers in nonlinear regression is more challenging due to limited diagnostic tools [7]. This limitation has motivated researchers to employ machine learning techniques that can effectively handle large datasets, missing values, and outliers without strict distributional assumptions [7].

Table 1: Statistical Challenges in Hormone Ratio Analysis and Their Consequences

| Statistical Challenge | Impact on Analysis | Common Consequences |
|---|---|---|
| Ratio Asymmetry | Different results from A/B vs. B/A calculation | Inconsistent findings, reduced reproducibility |
| Non-Normal Distribution | Violation of parametric test assumptions | Increased Type I/II errors, biased p-values |
| Outlier Sensitivity | Disproportionate influence on model parameters | Skewed conclusions, reduced predictive accuracy |
| Multicollinearity | Unstable parameter estimates, inflated variance | Difficulty interpreting individual predictor effects |

Analytical Approaches and Protocols

Log-Transformation Protocol for Hormone Ratios

The implementation of log-transformation for hormone ratios follows a systematic protocol designed to normalize distribution and mitigate ratio asymmetry:

Step 1: Data Quality Assessment

- Visually inspect raw hormone values using scatter plots and histograms

- Identify potential assay errors or biological impossibilities

- Document decision rules for data exclusion prior to analysis

Step 2: Ratio Calculation

- Calculate ratios in both directions (A/B and B/A) for comparative purposes

- Address zero values in denominator using minimal value replacement or other appropriate methods

- Document the biological rationale for ratio direction selection

Step 3: Logarithmic Transformation

- Apply natural log transformation to calculated ratios: ln(ratio)

- Verify transformation success through distribution comparison (Q-Q plots, skewness statistics)

- For zero-containing datasets, apply ln(ratio + k), where k is a small constant

Step 4: Analysis Implementation

- Conduct statistical analyses on log-transformed ratios

- Report results with back-transformed interpretations where appropriate

- Include sensitivity analyses comparing transformed and untransformed results

This protocol directly addresses the distributional concerns associated with ratio analysis while providing a more robust foundation for parametric statistical testing [3] [5].
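
The distribution check in Step 3 can be illustrated with a skewness comparison. This is a minimal sketch on simulated log-normal data; the `skewness` helper and the chosen parameters are assumptions for illustration, and real data would additionally need the zero handling described in Step 2.

```python
import numpy as np

def skewness(x):
    """Sample skewness (third standardized moment)."""
    x = np.asarray(x, dtype=float)
    z = (x - x.mean()) / x.std()
    return float(np.mean(z ** 3))

rng = np.random.default_rng(1)
# Hypothetical strictly positive hormone levels.
a = rng.lognormal(0.0, 0.6, size=1000)
b = rng.lognormal(0.0, 0.6, size=1000)

ratio_ab, ratio_ba = a / b, b / a            # Step 2: both directions
log_ab, log_ba = np.log(ratio_ab), np.log(ratio_ba)  # Step 3: ln(ratio)

print(f"skewness raw A/B: {skewness(ratio_ab):.2f}")
print(f"skewness raw B/A: {skewness(ratio_ba):.2f}")
print(f"skewness ln(A/B): {skewness(log_ab):.2f}")
print(f"skewness ln(B/A): {skewness(log_ba):.2f}")
```

Both raw directions are right-skewed, while the two log-ratios are mirror images of each other, so their skewness values have equal magnitude and opposite sign.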

Comprehensive Outlier Detection and Management

A multi-method approach to outlier detection enhances robustness against different types of outliers and influential points:

Visual Inspection Methods

- Generate scatter plots of raw data to identify gross outliers

- Create residual plots after initial model fitting to detect pattern deviations

- Use box plots for univariate outlier identification in each hormone variable

Statistical Detection Methods

- Calculate Studentized residuals: Points with absolute values greater than 2 (or, more conservatively, 3) warrant investigation

- Compute Cook's Distance: Values > 4/n (where n is sample size) indicate influential points

- Apply Hadi's Potential method, which combines leverage and residual information

Robust Regression Implementation

- Apply Least Absolute Deviations (LAD) regression as a resistant alternative to OLS

- Utilize M-Estimation with Huber's T or Tukey's Biweight functions to reduce outlier influence

- Implement Least Trimmed Squares (LTS) regression, which minimizes the sum of the h smallest squared residuals (h < n), so the most extreme observations do not enter the fit

This comprehensive protocol enables researchers to identify and address outliers through removal, transformation, or robust statistical methods that diminish their influence [4] [6].
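
Two of the statistical detection methods listed above, Studentized residuals and Cook's Distance, can be computed directly from an ordinary least squares fit. The sketch below uses internally Studentized residuals and a simulated dataset with one planted outlier; the `ols_diagnostics` helper and the data are hypothetical, and the thresholds are the rule-of-thumb values given in the protocol.

```python
import numpy as np

def ols_diagnostics(X, y):
    """Return internally Studentized residuals and Cook's distances for OLS."""
    n, p = X.shape
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    # Leverages are the diagonal of the hat matrix H = X (X'X)^-1 X'.
    h = np.diag(X @ np.linalg.pinv(X.T @ X) @ X.T)
    s2 = resid @ resid / (n - p)              # residual variance estimate
    student = resid / np.sqrt(s2 * (1.0 - h))
    cooks = student ** 2 * h / (p * (1.0 - h))
    return student, cooks

rng = np.random.default_rng(3)
n = 100
log_ratio = rng.normal(0.0, 1.0, size=n)      # hypothetical log-ratio predictor
y = 1.0 + 0.5 * log_ratio + rng.normal(0.0, 0.5, size=n)
# Plant one gross outlier at a high-leverage position.
log_ratio[0] = 4.0
y[0] = -5.0

X = np.column_stack([np.ones(n), log_ratio])
student, cooks = ols_diagnostics(X, y)

flag_resid = np.flatnonzero(np.abs(student) > 3)  # |Studentized residual| > 3
flag_cook = np.flatnonzero(cooks > 4 / n)         # Cook's Distance > 4/n
print("flagged by Studentized residual:", flag_resid)
print("flagged by Cook's Distance:", flag_cook)
```

Flagged points are candidates for investigation rather than automatic exclusion; the robust regression methods listed above offer an alternative to deleting them.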

Outlier Detection and Management Workflow

Advanced Robust Estimation Techniques

For datasets exhibiting both multicollinearity and outliers, specialized robust estimators provide enhanced protection against both problems simultaneously. The Poisson regression context is particularly relevant for hormone count data or event frequency outcomes:

Poisson Maximum Likelihood Estimator (PMLE) Limitations

- PMLE is highly sensitive to outliers, which can distort estimated coefficients and lead to misleading results [6]

- Multicollinearity among explanatory variables leads to variance inflation, coefficient sign errors, and increased mean squared error [6]

Robust Poisson Two-Parameter Estimator (PMT-PTE)

- Combines transformed M-estimator (MT) with two-parameter estimation

- Simultaneously addresses outlier influence and multicollinearity

- Demonstrates superior performance in scenarios with both problems present [6]

Implementation Protocol

- Diagnose multicollinearity using variance inflation factors (VIF) and condition indices

- Assess outlier presence through the methods described above under Comprehensive Outlier Detection and Management

- Apply PMT-PTE estimation when both conditions are identified

- Compare results with traditional PMLE to quantify improvement

This advanced approach is particularly valuable in hormone research where correlated predictors and unusual observations frequently co-occur [6].
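
The first step of the implementation protocol, diagnosing multicollinearity, can be sketched with a small variance inflation factor (VIF) routine. This covers only the diagnostic step, not the PMT-PTE estimator itself; the `vif` helper and the simulated predictors are assumptions for illustration.

```python
import numpy as np

def vif(X):
    """Variance inflation factor for each predictor column (no intercept column)."""
    X = np.asarray(X, dtype=float)
    n, k = X.shape
    out = np.empty(k)
    for j in range(k):
        target = X[:, j]
        # Regress predictor j on an intercept plus all remaining predictors.
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        beta, *_ = np.linalg.lstsq(others, target, rcond=None)
        resid = target - others @ beta
        r2 = 1.0 - resid @ resid / np.sum((target - target.mean()) ** 2)
        out[j] = 1.0 / (1.0 - r2)
    return out

rng = np.random.default_rng(11)
n = 500
ln_a = rng.normal(size=n)                           # hypothetical ln(A)
ln_b = 0.9 * ln_a + rng.normal(scale=0.3, size=n)   # strongly correlated with ln(A)
age = rng.normal(size=n)                            # unrelated covariate

vifs = vif(np.column_stack([ln_a, ln_b, age]))
for name, value in zip(["ln_a", "ln_b", "age"], vifs):
    print(f"VIF {name}: {value:.2f}")
```

Values well above common rule-of-thumb cutoffs (often 5 or 10) for the two hormone terms, alongside a value near 1 for the unrelated covariate, would indicate that the hormone predictors are the source of the collinearity.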

Table 2: Comparison of Statistical Approaches for Hormone Data Analysis

| Method | Primary Application | Advantages | Limitations |
|---|---|---|---|
| Log-Transformation | Ratio asymmetry, Non-normal distributions | Symmetrizes ratio distribution, Stabilizes variance | Interpretation complexity, Zero value handling |
| Non-Parametric Methods | Non-normal data, Small samples | Distribution-free, Robust to outliers | Reduced statistical power, Limited model complexity |
| Robust Regression (M-Estimation) | Outlier contamination | Reduces outlier influence, Maintains efficiency | Computational complexity, Limited software implementation |
| PMT-PTE Estimator | Multicollinearity + Outliers | Handles both problems simultaneously | Methodological complexity, Emerging validation |

Implementation Framework and Research Reagents

Experimental Design and Data Collection Protocol

Proper experimental design establishes the foundation for robust statistical analysis of hormone data:

Pre-Analytical Phase

- Standardize sample collection procedures to minimize technical variability

- Implement quality control measures for hormone assays

- Determine sample size through power analysis accounting for expected effect sizes and variability

Data Collection and Management

- Record raw hormone values with appropriate precision

- Document all assay characteristics (sensitivity, intra-assay CV, inter-assay CV)

- Create comprehensive metadata including time of collection, participant characteristics, and technical batch information

Quality Assessment Procedures

- Implement blind duplicate samples to assess measurement reliability

- Include control samples with known concentrations across assay runs

- Establish criteria for data exclusion prior to statistical analysis

This systematic approach to data collection minimizes introduction of artifacts that could exacerbate statistical challenges in subsequent analysis.

Computational Tools and Research Reagents

Successful implementation of these advanced statistical methods requires appropriate computational tools and analytical frameworks:

Table 3: Essential Research Reagent Solutions for Advanced Hormone Analysis

| Tool/Category | Specific Examples | Function/Purpose |
|---|---|---|
| Statistical Software | R, Python with statsmodels | Implementation of robust statistical methods |
| Specialized Packages | R: robustbase, MASS; Python: scikit-learn | Access to robust regression and outlier detection methods |
| Visualization Tools | ggplot2, Matplotlib, Seaborn | Data quality assessment and model diagnostic plotting |
| Machine Learning Algorithms | Random Forest, Gradient Boosting | Nonlinear pattern detection without distributional assumptions |

Software Implementation Protocol

- Utilize R robustbase package for M-estimation and robust regression methods

- Apply Python statsmodels with RLM for robust linear modeling

- Employ machine learning algorithms (Random Forest, Gradient Boosting) for comparison with traditional methods [7]

- Implement custom functions for ratio transformation and diagnostic testing

The integration of these computational tools enables comprehensive analysis that addresses the core challenges of non-linearity, outliers, and model fit in hormone research.

Comprehensive Hormone Data Analysis Workflow

Addressing non-linearity, outliers, and model fit deficiencies represents a critical foundation for valid inference in hormone research. The methodological approaches outlined in this document provide researchers with a comprehensive framework for enhancing the robustness and interpretability of their findings. The core motivations for implementing these techniques stem from fundamental statistical properties of hormone data that frequently violate assumptions of traditional analytical methods.

Implementation should follow a systematic process beginning with thorough data quality assessment, proceeding through appropriate transformation and outlier management, and culminating in robust model fitting with comprehensive validation. The log-transformation of ratios addresses distributional asymmetry, while multi-method outlier detection and robust estimation techniques protect against influential observations. Advanced approaches like the PMT-PTE estimator offer solutions for complex scenarios involving both multicollinearity and outlier contamination.

Future methodological development will likely incorporate increasingly sophisticated machine learning approaches that can identify complex nonlinear relationships without strict distributional assumptions [7]. However, regardless of methodological advancement, the fundamental principles of understanding data structure, assessing model assumptions, and implementing appropriate statistical solutions will remain essential for valid hormone research.

The use of hormone ratios, such as testosterone/cortisol or estradiol/progesterone, is a popular methodology in endocrine research to capture the joint effect of two hormones with opposing actions. Despite their prevalence, the statistical foundation for using raw ratios has been widely criticized. A common misconception, or "myth," is that the primary reason for log-transforming hormone ratios is to normalize a skewed distribution. This application note reframes the decision to log-transform, divorcing it from the simple goal of achieving a normal distribution and recentering it on a more critical methodological imperative: enhancing robustness to measurement error. We synthesize recent evidence demonstrating that log-transformation is fundamentally superior for preserving the validity of ratio measures in the presence of the measurement noise inherent to hormonal assays.

The Core Methodological Problem: Measurement Error

A previously unrecognized but critical limitation of raw hormone ratios is their striking lack of robustness to measurement error [1]. Hormone levels are subject to two key sources of noise:

- Assay Imperfection: The inability of laboratory assays to perfectly assess concentrations in a sample.

- Physiological Discrepancy: The difference between circulating levels at the time of sample collection and the effective hormone levels at the site of action.

Raw ratios dramatically amplify this noise, especially when the denominator's distribution is positively skewed—a common feature of endocrine data. Under these conditions, a high frequency of small denominator values can cause the ratio to explode, making the measured value highly sensitive to minor fluctuations and a poor reflection of the underlying biological ratio [1].

Table 1: Key Problems with Raw Hormone Ratios and the Log-Transform Solution

| Aspect | Raw Ratio (A/B) | Log-Transformed Ratio (ln(A/B)) |
|---|---|---|
| Distribution | Often highly skewed and leptokurtic, with outliers [1] [8] | Tends toward a normal, symmetric distribution [8] |
| Robustness to Error | Poor; validity drops rapidly with measurement error [1] | High; validity remains more stable with measurement error [1] |
| Ratio Asymmetry | A/B is not linearly related to B/A; choice of ratio is arbitrary [8] | ln(A/B) = -ln(B/A); the choice is statistically inconsequential [1] [8] |
| Interpretation | Obscures underlying mechanisms; can be driven by complex interactions [1] | Represents additive, opposing effects of two logged hormones [1] |

Experimental Protocols for Ratio Analysis

Protocol 1: Assessing Robustness to Measurement Error via Simulation

This protocol outlines a method to evaluate the performance of raw versus log-transformed ratios under realistic measurement error conditions.

1. Objective: To quantify the decline in validity (correlation between measured and true underlying ratios) for raw and log-transformed ratios as measurement error increases.

2. Materials & Data Input:

- True Hormone Values: A dataset of "true" hormone levels for two hormones (A and B). These can be empirically observed distributions (e.g., from studies of estrogen and progesterone) or idealized distributions simulated to match real-world parameters [1].

- Statistical Software: Capable of running Monte Carlo simulations (e.g., R, Python).

3. Procedure:

- Step 1: Calculate the "true" ratio, ( R_{true} = A/B ), and the "true" log-ratio, ( \ln(R_{true}) ), from the base dataset.

- Step 2: For a range of realistic error levels (e.g., coefficient of variation from 5% to 20%), simulate "measured" hormone values. This is done by adding random noise to the true values of A and B. For example: ( A_{measured} = A_{true} + \epsilon ), where ( \epsilon ) is random noise proportional to the chosen error level. Repeat this process thousands of times (Monte Carlo simulation) [1].

- Step 3: For each simulation, calculate the "measured" raw ratio (( A_{measured}/B_{measured} )) and the "measured" log-ratio (( \ln(A_{measured}) - \ln(B_{measured}) )).

- Step 4: For each error level, compute the validity coefficient: the correlation between all simulated "measured" ratios and the "true" ratio. Plot validity against measurement error for both raw and log-transformed ratios.

4. Expected Outcome: The validity of the raw ratio will drop precipitously as measurement error increases, particularly with a skewed denominator. The validity of the log-ratio will be higher and exhibit significantly greater stability across the same error range [1].
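The four steps of this protocol can be sketched in a few lines of standard-library Python. This is a minimal illustration, not the simulation reported in [1]: the log-normal "true" distributions, their parameters, the sample size, and the rule of redrawing noise until the measured value is positive (so that logarithms stay defined) are all assumptions made for the example.

```python
import math
import random

def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def add_noise(value, cv, rng):
    """Add Gaussian noise with SD = cv * value; redraw until the result is positive."""
    while True:
        noisy = value + rng.gauss(0.0, cv * value)
        if noisy > 0:
            return noisy

def simulate_validity(cv, n=2000, reps=20, seed=1):
    """Mean validity (correlation with the true value) of raw and log ratios."""
    rng = random.Random(seed)
    # Step 1: hypothetical "true" levels; the denominator B is the more skewed one.
    a_true = [rng.lognormvariate(0.0, 0.5) for _ in range(n)]
    b_true = [rng.lognormvariate(0.0, 0.8) for _ in range(n)]
    raw_true = [a / b for a, b in zip(a_true, b_true)]
    log_true = [math.log(r) for r in raw_true]
    raw_validity, log_validity = [], []
    for _ in range(reps):
        # Step 2: simulate measured values at the chosen error level.
        a_meas = [add_noise(a, cv, rng) for a in a_true]
        b_meas = [add_noise(b, cv, rng) for b in b_true]
        # Step 3: measured raw ratio and measured log-ratio.
        raw_meas = [a / b for a, b in zip(a_meas, b_meas)]
        log_meas = [math.log(a) - math.log(b) for a, b in zip(a_meas, b_meas)]
        # Step 4: validity coefficients for this replicate.
        raw_validity.append(pearson(raw_meas, raw_true))
        log_validity.append(pearson(log_meas, log_true))
    return {"raw": sum(raw_validity) / reps, "log": sum(log_validity) / reps}

if __name__ == "__main__":
    for cv in (0.05, 0.10, 0.15, 0.20):
        result = simulate_validity(cv)
        print(f"CV={cv:.2f}  raw={result['raw']:.3f}  log={result['log']:.3f}")
```

Plotting the two validity columns against the error level gives the comparison described in Step 4. A full analysis would use many more replicates and, ideally, empirically observed hormone distributions.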

Protocol 2: Predictive Modeling with Log-Transformed Ratios

This protocol employs machine learning to model a biologically relevant log-transformed hormone ratio, demonstrating a modern application.

1. Objective: To identify key predictors of the log-transformed progesterone-to-estradiol (P4:E2) ratio in postmenopausal women using an explainable machine learning framework [2].

2. Materials & Reagents:

- Study Population: A cohort of postmenopausal women (e.g., n=1902 from NHANES) not using hormone therapy [2].

- Hormone Measurement: Serum samples analyzed via Isotope Dilution Liquid Chromatography-Tandem Mass Spectrometry (ID LC-MS/MS). This is the gold standard, chosen for its high specificity and sensitivity, minimizing the measurement error discussed in Protocol 1 [2].

- Feature Data: Anthropometric (e.g., waist circumference), metabolic (e.g., total cholesterol, CRP), demographic, and dietary data collected via standardized protocols [2].

3. Procedure:

- Step 1: Data Preparation. Calculate the target variable: ( ln(P4:E2) = ln(progesterone) - ln(estradiol) ). Ensure all hormone values are above the assay's limit of detection (LOD) [2].

- Step 2: Model Training. Split the data into training (70%) and testing (30%) sets. Train an XGBoost model (or another suitable algorithm) to predict the log-transformed P4:E2 ratio using the selected features.

- Step 3: Model Interpretation. Calculate SHAP (SHapley Additive exPlanations) values for the trained model. SHAP values quantify the contribution of each feature to the model's prediction for each individual; averaging their absolute values across individuals gives a global ranking of feature importance [2].

- Step 4: Validation. Evaluate model performance on the held-out test set using metrics like R², RMSE (Root Mean Square Error), and MAE (Mean Absolute Error).

4. Expected Outcome: A validated predictive model where the top contributors to the log-transformed P4:E2 ratio (e.g., FSH, waist circumference, CRP) are identified and ranked based on their SHAP values, offering data-driven, interpretable insights into hormonal dynamics [2].
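The target construction in Step 1 and the validation metrics in Step 4 are simple enough to write out directly. The sketch below uses only the Python standard library; the function names and the example numbers are hypothetical, and the model-fitting and SHAP steps are deliberately left out because they depend on third-party packages.

```python
import math

def log_ratio_target(progesterone, estradiol):
    """Step 1: ln(P4:E2) computed as a difference of logs (values must be > 0)."""
    return math.log(progesterone) - math.log(estradiol)

def regression_metrics(y_true, y_pred):
    """Step 4: R^2, RMSE and MAE for predictions on the log-ratio scale."""
    n = len(y_true)
    mean_y = sum(y_true) / n
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return {
        "r2": 1.0 - ss_res / ss_tot,
        "rmse": math.sqrt(ss_res / n),
        "mae": sum(abs(t - p) for t, p in zip(y_true, y_pred)) / n,
    }

if __name__ == "__main__":
    # Hypothetical held-out targets and model predictions (log scale).
    y_test = [1.0, 2.0, 3.0]
    y_hat = [1.0, 2.0, 4.0]
    print(regression_metrics(y_test, y_hat))
```

Because the target is on the log scale, RMSE and MAE describe multiplicative rather than absolute error in the raw P4:E2 ratio, which is worth stating when results are reported.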

Data Presentation & Comparative Analysis

The following table synthesizes quantitative findings from key studies that utilize log-transformation for biomarker analysis, highlighting its application and benefits.

Table 2: Empirical Evidence Supporting Log-Transformation in Biomarker Analysis

| Study Context | Transformation Applied | Key Quantitative Findings | Interpretation & Advantage |

|---|---|---|---|

| Predictive Modeling of P4:E2 Ratio [2] | Natural log-transformed ratio: ( ln(progesterone/estradiol) ) | XGBoost model performance on test set: R² = 0.298, RMSE = 0.746, MAE = 0.574. | Log-transformation created a well-behaved, continuous target variable suitable for powerful machine learning algorithms, enabling the identification of non-linear predictors. |

| Women's Health Initiative (WHI) Hormone Therapy Trials [9] | Log-transformation of cardiovascular biomarkers (LDL-C, HDL-C, etc.). Analysis reported as ratios of geometric means. | CEE vs. Placebo over 6 years: LDL-C ratio of geometric means = 0.89 (95% CI: 0.88-0.91). Interpretation: an 11% reduction. | Using ratios of geometric means (back-transformed from log-scale analyses) provides a symmetric, clinically interpretable effect size that is not skewed by the data's distribution. |

| Methodological Simulation on Hormone Ratios [1] | Comparison of raw ratio vs. log-ratio validity under measurement error. | The validity of the raw ratio dropped rapidly with increasing error, especially with a skewed denominator. The log-ratio's validity was higher and more stable. | Log-transformation is not just a distributional correction but a critical procedure for ensuring the analytical robustness of ratio-based measures. |
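The "ratio of geometric means" reported for the WHI trials is what results from analyzing on the log scale and then exponentiating the difference in means. A minimal sketch of that back-transformation follows; the function names and the example values are hypothetical and are not data from [9].

```python
import math

def geometric_mean(values):
    """exp of the mean of logs; all values must be strictly positive."""
    return math.exp(sum(math.log(v) for v in values) / len(values))

def ratio_of_geometric_means(treated, control):
    """Back-transform the difference in mean log values to a ratio."""
    mean_log_treated = sum(math.log(v) for v in treated) / len(treated)
    mean_log_control = sum(math.log(v) for v in control) / len(control)
    return math.exp(mean_log_treated - mean_log_control)

def percent_change(ratio):
    """Express a ratio of geometric means as a percent change."""
    return (ratio - 1.0) * 100.0

if __name__ == "__main__":
    treated = [2.0, 8.0]   # hypothetical biomarker values
    control = [4.0, 16.0]  # hypothetical biomarker values
    r = ratio_of_geometric_means(treated, control)
    print(r, percent_change(r))
```

Applied to the figure in the table, `percent_change(0.89)` gives the 11% reduction quoted there.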

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for Robust Hormone Ratio Research

| Item | Function / Rationale | Considerations for Protocol |

|---|---|---|

| ID LC-MS/MS [2] | Gold-standard method for quantifying steroid hormones with high specificity and sensitivity, thereby minimizing the fundamental problem of measurement error. | Preferable over immunoassays due to minimal cross-reactivity and higher precision, especially at low concentrations. |

| Standardized Anthropometric Tools [2] | To collect accurate and consistent feature data (e.g., waist circumference) that may be key predictors in models. | Follow established protocols (e.g., NHANES) to ensure measurement reliability and cross-study comparability. |

| Specialized Statistical Software (R, Python) | To perform advanced analyses such as Monte Carlo simulations, log-transformations, and machine learning modeling (XGBoost, SHAP). | Necessary for implementing the robust methodologies described in Protocols 1 and 2. |

| Log-Transformation [1] [2] [9] | A mathematical operation applied to raw data to enhance the robustness and interpretability of ratios and other biomarkers. | This is a foundational "methodological reagent" for modern hormone ratio analysis, not merely an optional data cleanup step. |

Decision Workflow for Hormone Ratio Analysis

[Figure: Systematic decision workflow for the use and transformation of hormone ratios in a research setting.]

The use of ratios to represent the balance between two biological compounds, particularly hormones, is a widespread practice in physiological and clinical research. Ratios such as testosterone/cortisol, estradiol/progesterone (E/P), and testosterone/estradiol are increasingly employed to capture the joint effect of two hormones with opposing or mutually suppressive actions [1]. These ratios are often treated as singular, meaningful indices that summarize a complex biological relationship into a single metric, ostensibly simplifying statistical analysis and interpretation.

However, this convenience comes at a significant methodological cost. The computation and use of simple ratios (A/B) present substantial statistical and interpretative problems that are frequently overlooked in research practice [1]. The arbitrary nature of choosing A/B over B/A, the distortion of distributions, and the amplification of measurement error collectively represent a significant conundrum in endocrine research. This paper examines these problems within the broader context of methodological research on log-transformation of hormone ratios, providing evidence-based protocols for robust ratio analysis.

Statistical Problems with Raw Ratio Computation

Arbitrary Directionality and Interpretative Challenges

The fundamental arbitrariness in deciding whether a hormonal relationship is best represented as A/B or B/A constitutes a primary methodological weakness. The ratio A/B is not linearly related to B/A, meaning that analytical results will vary substantially depending on which formulation is chosen [1]. This decision is rarely justified biologically or statistically in research literature, yet it fundamentally alters analytical outcomes.

Different underlying associations can produce the same observed association between a ratio and an outcome: (a) the association may be driven solely by one hormone in the ratio; (b) it may result from additive effects of both hormones; or (c) it may reflect genuine statistical interactions between them [1]. Using raw ratios often obscures which of these mechanisms is operative, potentially leading to flawed biological interpretations.

Distributional Problems and Outlier Sensitivity

Raw ratio distributions tend to be highly skewed and leptokurtic (heavy-tailed), with marked outliers, even when the component hormones are normally distributed [1]. This problem is exacerbated when the denominator's coefficient of variation (standard deviation divided by the mean) is large, indicating the presence of relatively small denominator values. As denominator values approach zero, ratio values grow without bound (in proportion to 1/B), creating extreme outliers that disproportionately influence statistical models.

Table 1: Comparative Properties of Raw Ratios Versus Log-Transformed Ratios

| Property | Raw Ratio (A/B) | Log-Transformed Ratio (ln(A/B)) |

|---|---|---|

| Distribution | Highly skewed, leptokurtic | Approximately normal |

| Directionality | A/B ≠ B/A | ln(A/B) = -ln(B/A) |

| Measurement Error Robustness | Low; error is amplified | High; robust to error |

| Interpretation | Multiplicative | Additive (difference between logs) |

| Component Relationship | Obscured | Transparent (ln(A) - ln(B)) |

| Outlier Sensitivity | High | Low |

Measurement Error Amplification

A previously unrecognized limitation of raw ratios is their striking lack of robustness to measurement error [1]. Hormone levels are measured with error from multiple sources, including assay imprecision and discrepancies between sampled levels and physiologically effective concentrations. Noise in measured hormone levels becomes substantially exaggerated in ratio calculations, particularly when the denominator distribution is positively skewed—a common occurrence with hormone data.

Simulation studies demonstrate that the validity of raw hormone ratios (correlation between measured levels and underlying effective levels) drops rapidly with realistic measurement error levels [1]. This effect is amplified with skewed denominator distributions and positively correlated hormone levels, common conditions in endocrine research.

Quantitative Evidence: Simulation Studies and Empirical Data

Simulation Studies on Measurement Error

Controlled simulations using both idealized distributions and empirically observed hormone distributions reveal striking differences in robustness between raw and log-transformed ratios. Under realistic error conditions, the validity of raw ratios decreases dramatically, while log-transformed ratios maintain substantially higher and more stable validity across samples [1].

Table 2: Impact of Measurement Error on Ratio Validity (Simulation Findings)

| Error Condition | Raw Ratio Validity | Log-Transformed Ratio Validity | Amplifying Factors |

|---|---|---|---|

| Low Measurement Error | Moderate | High | Skewed denominator |

| Moderate Measurement Error | Low | Moderate-High | Positive correlation between hormones |

| High Measurement Error | Very Low | Moderate | Small denominator values |

| Typical Research Conditions | Rapid decline | Stable | All combined factors |

Under some conditions—particularly with moderate noise and positively correlated hormone levels—log-transformed ratios may provide a more valid measurement of the underlying ratio than the measured raw ratio itself [1].

Empirical Evidence from Hormone Research

In research on the estradiol-progesterone ratio, less than half of the total variance can be accounted for by linear main effects and linear × linear interactions [1]. This indicates that most variance likely arises from more complex interactions of unspecified forms, suggesting that raw ratios capture variance components that resist clear biological interpretation.

Despite these problems, use of hormone ratios continues to grow. A Web of Science search identified 168 papers with "testosterone-cortisol ratio" in title, abstract, or keywords, with 36% published since 2017 [1]. Similarly, 131 papers referenced "testosterone-estradiol ratio" or "estradiol-testosterone ratio," with 37% appearing since 2017 [1].

Experimental Protocols for Robust Ratio Analysis

Protocol 1: Assessment of Ratio Directionality

Purpose: To determine whether A/B or B/A better captures the underlying biological relationship.

Procedure:

- Identify a clinically or biologically validated outcome strongly associated with the hormonal balance (e.g., conceptive status for E/P ratio)

- Calculate both A/B and B/A ratios using the same hormone measurements

- Compute correlations between each ratio formulation and the validation outcome

- Statistically compare correlation strengths using Steiger's Z-test for dependent correlations

- Select the ratio direction with stronger association for subsequent analyses

Validation: Roney (2019) used this approach, finding E/P associated more strongly with conceptive status than P/E, justifying E/P as the preferred formulation [1].

Protocol 2: Log-Transformation of Ratio Data

Purpose: To normalize ratio distributions and reduce sensitivity to measurement error.

Procedure:

- Verify distributional properties of raw hormone values (skewness, kurtosis)

- Apply natural log transformation to each hormone value: ln(A) and ln(B)

- Compute log-ratio as the difference: ln(A) - ln(B) = ln(A/B)

- Verify normalization of resulting distribution (Shapiro-Wilk test, Q-Q plots)

- For analyses requiring absolute scale, back-transform results using exponentiation

Note: Log-transformation requires strictly positive hormone values. For values below the detection limit, apply an established imputation method (e.g., half the detection limit) before transformation.
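The transformation steps and the note on detection limits can be combined in a short helper. This is a sketch under stated assumptions: the function names are hypothetical, a missing reading (`None`) is treated as below the limit of detection, and half the LOD is used only because it is the example imputation rule mentioned in this protocol.

```python
import math

def impute_below_lod(value, lod):
    """Replace a missing or below-LOD reading with LOD / 2 (simple convention)."""
    if value is None or value < lod:
        return lod / 2.0
    return value

def log_ratio(a, b, lod_a, lod_b):
    """ln(A/B) computed as ln(A) - ln(B) after LOD imputation."""
    a = impute_below_lod(a, lod_a)
    b = impute_below_lod(b, lod_b)
    return math.log(a) - math.log(b)

def back_transform(log_value):
    """Return to the raw ratio scale by exponentiation."""
    return math.exp(log_value)

if __name__ == "__main__":
    # Hypothetical paired measurements; None marks a non-detectable value.
    pairs = [(10.0, 5.0), (None, 5.0), (3.0, 0.2)]
    for a, b in pairs:
        lr = log_ratio(a, b, lod_a=2.0, lod_b=1.0)
        print(round(lr, 3), round(back_transform(lr), 3))
```

The proportion of imputed values should be recorded, since a large share of below-LOD readings can distort the lower tail of the log-ratio distribution.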

Protocol 3: Component-Based Analysis as Alternative to Ratios

Purpose: To disentangle the individual contributions of each hormone and their interaction.

Procedure:

- Enter raw or log-transformed levels of each hormone as separate predictors in regression models

- Include a linear × linear interaction term between the two hormones

- Use hierarchical model building to test:

  - Model 1: Hormone A as predictor

  - Model 2: Hormones A and B as additive predictors

  - Model 3: Hormones A, B, and their interaction as predictors

- Compare model fit statistics (AIC, BIC, R²) to determine optimal formulation

- Use simple slopes analysis to interpret significant interactions

Interpretation: This approach clarifies whether observed ratio associations are driven by one component, additive effects, or genuine interaction [1].
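When the hormones are entered in log-transformed form, the relationship between this component-based approach and the log-ratio can be written out explicitly. The notation below (outcome y, coefficients β and γ, error term ε) is introduced here for illustration and does not come from the cited sources.

```latex
\begin{align}
\text{Additive model (Model 2):}\quad
  y &= \beta_0 + \beta_A \ln A + \beta_B \ln B + \varepsilon \\
\text{Log-ratio model:}\quad
  y &= \gamma_0 + \gamma \ln\!\left(\frac{A}{B}\right) + \varepsilon
     = \gamma_0 + \gamma \ln A - \gamma \ln B + \varepsilon
\end{align}
```

The log-ratio model is therefore the additive model with the constraint β_A = −β_B. If the separately estimated coefficients are far from equal and opposite, a single log-ratio predictor misdescribes the data, and the component-based models above are the more informative choice.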

Visualization of Ratio Analysis Methodologies

[Figure: Analytical Decision Pathway for Ratio Computation]

[Figure: Measurement Error Propagation in Ratio Computation]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Materials for Hormone Ratio Research

| Item | Function | Implementation Example |

|---|---|---|

| Mass Spectrometry (ID LC-MS/MS) | Gold-standard hormone quantification with high specificity and sensitivity | Measures progesterone and estradiol with minimal cross-reactivity [2] |

| Log-Transformation Software | Converts skewed distributions to near-normal | R, Python, or specialized statistics packages for ln(A/B) computation |

| Quantitative Hormone Monitor | At-home longitudinal hormone tracking | MIRA device measures E3G, LH, FSH, PdG in urine [10] |

| Contrast Validation Tool | Ensures accessibility of visualizations | Color contrast analyzers (axe DevTools) verify 4.5:1 minimum ratio [11] |

| Distribution Assessment Tools | Evaluates normality and outlier influence | Shapiro-Wilk test, Q-Q plots, skewness/kurtosis measures |

The arbitrary computation of A/B versus B/A ratios represents a significant methodological conundrum in hormone research with implications for statistical conclusion validity and biological interpretation. Evidence demonstrates that raw ratios suffer from distributional abnormalities, directional arbitrariness, and striking sensitivity to measurement error. Log-transformed ratios and component-based analyses offer more robust alternatives that preserve biological meaning while enhancing statistical reliability. The protocols and decision frameworks presented here provide researchers with validated methodologies for navigating the ratio conundrum, promoting more rigorous and interpretable research practices in endocrine science and drug development.

In endocrine research and pharmacology, scientists frequently use hormone ratios (e.g., testosterone/cortisol, estradiol/progesterone) to capture the joint effect or "balance" between two interdependent hormones [8] [1]. These ratios are popular for their straightforward interpretation as an index of hormonal dominance. However, raw ratios suffer from significant statistical and interpretational problems that can compromise research validity [8] [1].

A primary concern is their inherent asymmetry: the ratio A/B is not linearly related to B/A, making statistical results dependent on the arbitrary decision of which hormone serves as numerator or denominator [8]. Furthermore, distributions of raw ratios tend to be highly skewed and leptokurtic, violating assumptions of parametric statistical tests [8] [1]. Perhaps most critically, a previously unrecognized limitation is that raw hormone ratios exhibit a striking lack of robustness to measurement error [1]. In the presence of even moderate assay noise, the validity of raw ratios—the correlation between measured levels and underlying effective levels—drops rapidly, especially when the denominator hormone has a positively skewed distribution [1].

Log-transformation of ratios addresses these concerns while providing a more biologically interpretable metric for research on hormonal balance and pharmacological effects.

Theoretical Foundation: What Log-Transformed Ratios Actually Measure

Mathematical Definition and Biological Interpretation

A log-transformed ratio is fundamentally different from its raw counterpart. Mathematically, the transformation is expressed as:

\[ \ln\left(\frac{A}{B}\right) = \ln(A) - \ln(B) \]

This equation reveals that a log-ratio actually measures the difference between the logarithms of the two component values [8] [1]. In biological terms, this represents the relative dominance or balance between two interacting substances on a multiplicative scale [12].

Whereas raw ratios capture a simple proportion, log-transformed ratios quantify the logarithmic difference between components, which aligns with how many biological systems actually operate [12]. Hormonal effects often follow multiplicative rather than additive patterns, and many biological parameters naturally follow log-normal rather than normal distributions [12].

Addressing Statistical Concerns

Log-transformation of ratios resolves multiple statistical issues inherent to raw ratios:

- Distribution Normalization: Log-transformed ratios typically exhibit more symmetrical, normal-like distributions, even when the component variables or raw ratios are highly skewed [8] [13]. This satisfies the distributional assumptions of many parametric statistical tests.

- Symmetry: The log-ratio (\ln(A/B)) is simply the negative of (\ln(B/A)), making statistical results invariant to the arbitrary choice of numerator and denominator [8] [1]. This ensures consistent findings regardless of ratio orientation.

- Robustness to Measurement Error: Log-transformed ratios demonstrate substantially greater resilience to measurement error compared to raw ratios, maintaining higher validity (correlation with underlying true values) under conditions of realistic assay noise [1].
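The symmetry property is easy to verify numerically. The sketch below simulates hypothetical log-normal hormone levels and an outcome related to their balance, then correlates the outcome with each ratio orientation; the distributions, sample size, and function names are assumptions made for the example.

```python
import math
import random

def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def simulate_example(n=500, seed=0):
    """Hypothetical hormones A and B plus an outcome tied to their balance."""
    rng = random.Random(seed)
    a = [rng.lognormvariate(0.0, 0.6) for _ in range(n)]
    b = [rng.lognormvariate(0.0, 0.6) for _ in range(n)]
    outcome = [math.log(x / y) + rng.gauss(0.0, 1.0) for x, y in zip(a, b)]
    return a, b, outcome

def orientation_check(a, b, outcome):
    """Correlate the outcome with A/B, B/A, ln(A/B) and ln(B/A)."""
    raw_ab = [x / y for x, y in zip(a, b)]
    raw_ba = [y / x for x, y in zip(a, b)]
    log_ab = [math.log(x) - math.log(y) for x, y in zip(a, b)]
    log_ba = [math.log(y) - math.log(x) for x, y in zip(a, b)]
    return {
        "raw_ab": pearson(raw_ab, outcome),
        "raw_ba": pearson(raw_ba, outcome),
        "log_ab": pearson(log_ab, outcome),
        "log_ba": pearson(log_ba, outcome),
    }

if __name__ == "__main__":
    print(orientation_check(*simulate_example()))
```

The two log-ratio correlations are equal in magnitude and opposite in sign, so the choice of numerator changes only the sign of the result. The two raw-ratio correlations generally differ in magnitude, which is the orientation dependence described above.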

Table 1: Comparison of Raw vs. Log-Transformed Ratio Properties

| Property | Raw Ratio (A/B) | Log-Transformed Ratio ln(A/B) |

|---|---|---|

| Distribution | Often highly skewed [8] | More symmetrical, normal-like [8] [13] |

| Symmetry | A/B ≠ B/A [8] | ln(A/B) = -ln(B/A) [8] [1] |

| Measurement Error Robustness | Low; validity drops rapidly with noise [1] | High; maintains validity under noise [1] |

| Mathematical Form | A/B | ln(A) - ln(B) |

| Biological Interpretation | Simple proportion | Multiplicative balance on logarithmic scale |

Quantitative Comparison: Performance Advantages of Log-Transformed Ratios

Statistical Performance Under Measurement Error

Simulation studies demonstrate the superior performance of log-transformed ratios under realistic research conditions. When hormone levels are measured with error—due to both assay limitations and temporal fluctuations—log-transformed ratios maintain significantly higher validity than raw ratios [1].

The validity advantage of log-transformations is particularly pronounced when:

- The denominator hormone has a positively skewed distribution [1]

- Hormone levels are positively correlated [1]

- Moderate to high levels of measurement error are present [1]

Under some conditions with positively correlated hormones and moderate noise, log-transformed ratios may provide a more valid measurement of the underlying raw ratio than the measured raw ratio itself [1].

Predictive Performance in Data Analysis

Empirical comparisons show that log-ratio transformations improve predictive performance in statistical models. In one analysis using compositional data (which shares mathematical properties with hormone ratios), log-ratio transformations consistently outperformed raw features in classification accuracy [14]:

Table 2: Performance Comparison of Ratio Transformations in Classification

| Transformation Type | Mean Accuracy | Performance Notes |

|---|---|---|

| Raw Features | Baseline | Outperformed by all log-ratio transforms [14] |

| CLR (Centered Log-Ratio) | Solid improvement | Better suited when balance and symmetry are important [14] |

| ALR (Additive Log-Ratio) | High accuracy | Great for interpretability with natural baseline [14] |

| PLR (Pairwise Log-Ratio) | 96.7% (highest) | Lowest variability across folds [14] |

| ILR (Isometric Log-Ratio) | Solid improvement | Statistically elegant but less intuitive [14] |

Experimental Protocols and Methodologies

Standard Protocol for Log-Ratio Analysis of Hormonal Data

Protocol Title: Analysis of Hormone Balance Using Log-Transformed Ratios

Principle: This protocol standardizes the process of calculating, transforming, and analyzing hormone ratios to ensure robust and biologically interpretable results in studies of endocrine function.

Materials and Reagents:

- Hormone measurement system (e.g., ELISA, LC-MS/MS)

- Statistical software with log-transformation capabilities

- Data collection templates ensuring complete paired measurements

Procedure:

Hormone Measurement:

- Collect biological samples (saliva, blood, etc.) under standardized conditions

- Assay both hormones of interest (A and B) in the same run to minimize batch effects

- Record absolute concentrations in appropriate units (pg/mL, nmol/L, etc.)

Data Quality Control:

- Identify and address non-detectable values using appropriate imputation methods

- Check for implausible values or measurement errors

- Ensure paired measurements for both hormones are available for all subjects

Ratio Calculation and Transformation:

- Calculate raw ratio: ( R = A/B )

- Apply natural log transformation: ( L = \ln(R) = \ln(A) - \ln(B) )

- Alternative: Calculate directly as difference of log-transformed hormones

Statistical Analysis:

- Assess distribution normality using Shapiro-Wilk or Kolmogorov-Smirnov tests

- Conduct planned analyses (correlation, regression, group comparisons) using log-transformed ratios

- For group comparisons, use lognormal Welch's t-test or nonparametric Brunner-Munzel test [12]

- For paired comparisons, use lognormal ratio paired t-test [12]

Results Interpretation:

- Interpret coefficients in multiplicative terms (e.g., "a one-unit increase in the natural log-ratio corresponds to an e-fold, or approximately 2.72-fold, increase in the A/B ratio")

- Back-transform results when necessary for clinical/biological interpretation

- Report both statistical significance and effect sizes

Notes and Troubleshooting:

- Zeros in the data pose challenges for log transformations; consider appropriate replacement strategies for non-detectable values

- When comparing groups, ensure homogeneity of variance assumptions are met

- For multivariate analyses, consider moderation analysis as an alternative approach to directly test interactive effects [8]

Alternative Method: Moderation Analysis

For researchers seeking to avoid ratio-based metrics entirely, moderation analysis provides a compelling alternative [8]:

Procedure:

- Enter raw or log-transformed levels of both hormones as separate predictors

- Include the linear × linear interaction term between the two hormones

- Test the significance of the interaction term

- Conduct simple slopes analysis to characterize the nature of significant interactions

Advantages: Avoids ratio construction entirely and directly tests for interactive effects between hormones [8].

Limitations: May not capture the complex, non-linear interactions that ratios sometimes reflect [1].

Visualization of Log-Ratio Concepts and Workflows

[Figure: Conceptual Framework for Log-Ratio Interpretation]

[Figure: Experimental Workflow for Log-Ratio Analysis]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Materials for Hormone Ratio Studies

| Material/Reagent | Function/Application | Considerations |

|---|---|---|

| ELISA Kits | Quantification of specific hormone concentrations | Select validated kits with appropriate sensitivity and dynamic range |

| LC-MS/MS Systems | Gold standard for hormone quantification | Provides high specificity but requires specialized equipment |

| Sample Collection Tubes | Standardized biological sample collection | Use appropriate preservatives for stability |

| Statistical Software (R, Python) | Data transformation and analysis | Ensure capability for log-transformations and non-parametric tests |

| Reference Standards | Assay calibration and quality control | Essential for measurement accuracy across batches |

| Log-Transformation Algorithms | Mathematical processing of ratio data | Implement with error handling for zero or negative values |

Log-transformed ratios represent more than just a statistical convenience—they provide a biologically meaningful metric for quantifying the balance between interdependent biological factors. By measuring the logarithmic difference between components, log-ratios align with the multiplicative nature of many physiological processes while overcoming the statistical limitations of raw ratios.

The enhanced robustness to measurement error, distributional improvements, and invariance to ratio orientation make log-transformed ratios superior for research applications in endocrinology, pharmacology, and beyond. When properly implemented through standardized protocols and interpreted within biological context, log-transformed ratios offer a powerful tool for understanding complex biological relationships.

From Theory to Practice: A Step-by-Step Guide to Implementing Log-Transformations

In statistical modeling of endocrine data, logarithmic transformation is a fundamental tool to address skewed distributions and heteroscedasticity (the overproportional increase of variance with growing hormone concentrations) [15]. Hormone data, such as salivary cortisol or testosterone/cortisol ratios, frequently exhibit positive skewness, characterized by a long right tail of high values [15] [3] [16]. Applying a log transformation makes these distributions more symmetric and stabilizes variance across the measurement range; approximate normality and constant variance are key assumptions of parametric statistical tests such as ANOVA and linear regression [15] [16].

The natural logarithm (ln), with base e (≈2.718), and the base-10 logarithm (log10) are the two primary log functions used in scientific research. While mathematically equivalent for modeling purposes—differing only by a multiplicative constant—the choice between them carries important implications for interpretation, convenience, and convention in hormone analysis [17] [18]. This guide provides a detailed framework for selecting and applying the appropriate logarithmic transformation in hormone studies, complete with protocols and analytical workflows.

Mathematical and Practical Comparison of ln and log10

Core Mathematical Relationship

The natural logarithm (ln) and the base-10 logarithm (log10) are functionally identical for purposes of data modeling. They are connected by a constant scaling factor [18]:

ln(X) ≈ 2.303 * log10(X)

This relationship means that the shape of the data distribution after transformation is identical; the only difference is the scale of the resulting values. Consequently, statistical significance tests (e.g., p-values) for models will be the same regardless of which logarithm is used [17].
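The scaling relationship and its consequence for regression slopes can be checked directly. In this sketch the data values and the helper `ols_slope` are hypothetical; the point is only that switching the predictor from ln to log10 rescales the slope by ln(10) ≈ 2.303 and changes nothing else.

```python
import math

LN10 = math.log(10.0)  # approximately 2.302585

def ols_slope(x, y):
    """Slope of the simple least-squares regression of y on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx

def slopes_on_both_scales(hormone, outcome):
    """Fit the same outcome on ln(X) and on log10(X)."""
    slope_ln = ols_slope([math.log(v) for v in hormone], outcome)
    slope_log10 = ols_slope([math.log10(v) for v in hormone], outcome)
    return slope_ln, slope_log10

if __name__ == "__main__":
    hormone = [0.8, 1.5, 3.2, 7.9, 20.4]   # hypothetical concentrations
    outcome = [1.1, 1.9, 2.4, 3.8, 4.1]    # hypothetical outcome scores
    s_ln, s_log10 = slopes_on_both_scales(hormone, outcome)
    print(s_ln, s_log10, s_log10 / s_ln)   # the last value equals ln(10)
```

Whichever base is chosen, it should be reported explicitly, because the numerical value of a coefficient cannot be interpreted without it.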

Key Properties and Interpretative Considerations

Table 1: Comparative Properties of Natural Log and Base-10 Log

| Property | Natural Log (ln) | Base-10 Log (log10) |

|---|---|---|

| Base Value | Base e (≈ 2.718) [17] | Base 10 [17] |

| Interpretation of Unit Change | A one-unit increase in ln(X) corresponds to an e-fold (≈ 2.72-fold) increase in X; for small changes, a difference of 0.01 in ln(X) is approximately a 1% change in X [19] [17]. | A one-unit increase in log10(X) is equivalent to a tenfold increase in X [17]. |

| Coefficient Interpretation | Coefficients can be interpreted directly as approximate proportional differences (e.g., a coefficient of 0.06 suggests a 6% difference) [19]. | Coefficients relate to orders of magnitude. Less intuitive for proportional change. |

| Common Software Syntax | LN() in Excel; log() in R and SAS [18] | LOG10() in Excel (LOG() also defaults to base 10); log10() in R and SAS [18] |

| Typical Application Domain | Economics, medicine, biology, and general scientific research [19] [16] [18] | Engineering and some physical sciences [17] |

The central practical difference lies in interpretation. The natural log is favored in many biological and medical contexts because its coefficients are more directly interpretable as approximate percentage changes [19] [17]. For example, in a linear regression model of the form ln(Y) = a + bX, a one-unit change in X is associated with an approximate b × 100% change in Y when b is small; the exact change is (e^b − 1) × 100%. This property stems from the mathematical fact that for small values of r, ln(1 + r) ≈ r [17].
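How quickly the percentage approximation degrades as the coefficient grows can be seen by comparing it with the exact back-transformation. The function names below are hypothetical; the arithmetic follows from ln(Y) = a + bX.

```python
import math

def approx_percent_change(b):
    """Small-coefficient approximation: b * 100%."""
    return 100.0 * b

def exact_percent_change(b):
    """Exact change in Y per one-unit change in X: (e^b - 1) * 100%."""
    return 100.0 * (math.exp(b) - 1.0)

if __name__ == "__main__":
    for b in (0.06, 0.25, 1.0):
        print(f"b={b:.2f}  approx={approx_percent_change(b):.1f}%  "
              f"exact={exact_percent_change(b):.1f}%")
```

For b = 0.06 the two agree closely (6.0% versus about 6.2%), but for b = 1.0 the approximation gives 100% while the exact change is about 172%, so larger coefficients should be reported using the exact form.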

Application Notes and Experimental Protocols

Protocol 1: Systematic Selection of a Power Transformation

This protocol is adapted from methodology used for analyzing salivary cortisol time series [15] and can be applied to any skewed hormone variable or ratio.

1. Problem Assessment and Preliminary Checks

- Objective: Determine if a log (or other power) transformation is necessary and which is optimal for your data.

- Prerequisites: Check for the presence of zeros or values below the assay's limit of detection (LOD). Logs of zero or negative numbers are undefined [16].

- Handling Low/Zero Values: If such values are rare (<2%), add a small positive constant (e.g., 0.01 or 1/2 the LOD) to all measurements before transformation. If they are common, alternative methods beyond simple logging may be required [16].

2. Data Transformation and Distribution Evaluation

- Procedure:

- Apply candidate transformations to your raw hormone data (X): Raw (X), Square Root (√X), Natural Log (ln(X)), and Base-10 Log (log10(X)).

- For each transformed variable, generate histograms with superimposed normal curves and Q-Q plots.

- Calculate descriptive statistics: skewness (target ~0) and kurtosis.

- Perform a statistical test for normality (e.g., Shapiro-Wilk test).

- Output Evaluation: The optimal transformation produces a distribution that is most symmetric (skewness nearest zero) and has the highest p-value in the normality test. Research on cortisol has shown that the best transformation is not always ln or √X and must be determined empirically [15].

3. Homoscedasticity Assessment

- Procedure: If analyzing the relationship between two variables (e.g., a hormone and a clinical score), create scatter plots of the transformed data.

- Output Evaluation: Look for a "fanning" pattern in the raw data that disappears after transformation, indicating stabilized variance [15].

4. Implementation and Documentation

- Decision: Select the transformation that best achieves normality and homoscedasticity.

- Documentation: Clearly report the chosen transformation (e.g., "Natural log-transformed cortisol values were used in all analyses") and the rationale (e.g., "This transformation effectively normalized the distribution and stabilized variance").
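The distribution checks in steps 1 and 2 of this protocol could be sketched in Python roughly as follows. This is a minimal illustration that assumes NumPy and SciPy are available; the function name, the `offset` parameter (standing in for the small positive constant from step 1), and the simulated cortisol values are all hypothetical and are not taken from the cited study.

```python
import numpy as np
from scipy import stats


def compare_transformations(x, offset=0.0):
    """Return skewness, excess kurtosis, and Shapiro-Wilk p-value per candidate transform.

    `offset` is an optional small positive constant added to every value
    before transforming (hypothetical default: no offset).
    """
    x = np.asarray(x, dtype=float) + offset
    if np.any(x <= 0):
        raise ValueError("All values must be positive after adding the offset.")
    candidates = {
        "raw": x,
        "sqrt": np.sqrt(x),
        "ln": np.log(x),
        "log10": np.log10(x),
    }
    results = {}
    for name, values in candidates.items():
        _, p_value = stats.shapiro(values)
        results[name] = {
            "skewness": float(stats.skew(values)),
            "excess_kurtosis": float(stats.kurtosis(values)),
            "shapiro_p": float(p_value),
        }
    return results


# Simulated, log-normally distributed values used purely for illustration.
rng = np.random.default_rng(42)
cortisol = rng.lognormal(mean=2.0, sigma=0.6, size=200)

summary = compare_transformations(cortisol)
for name, s in summary.items():
    print(
        f"{name:>5}: skew={s['skewness']:+.2f}  "
        f"kurtosis={s['excess_kurtosis']:+.2f}  p={s['shapiro_p']:.3g}"
    )
```

Because ln and log10 differ only by a constant factor, they yield the same skewness and the same Shapiro-Wilk result, which is why the choice between them is interpretative rather than statistical. Histograms and Q-Q plots should still be inspected alongside these numbers.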

Protocol 2: Analysis of Hormone Ratios

The analysis of ratios (e.g., Testosterone/Cortisol or Cortisol/DHEA) is common but introduces specific statistical challenges, including inherent distribution asymmetry [3].

1. Ratio Calculation and Transformation

- Objective: To analyze a hormone ratio while meeting the assumptions of parametric tests.

- Procedure:

- Calculate the ratio R = A/B.

- Apply a log transformation to the ratio R. The choice of ln or log10 is less critical than applying a log transform itself. Using ln is common practice.

- Rationale: Log-transforming a ratio solves two problems simultaneously:

- It converts the inherently asymmetric ratio distribution into a more symmetric one [3].

- It renders the analysis invariant to the direction of the ratio calculation because ln(A/B) = ln(A) - ln(B) = -ln(B/A). Reversing the ratio only flips the sign of the log-transformed values, so the result is statistically equivalent whether you use A/B or B/A [3].

2. Statistical Analysis and Interpretation

- Procedure: Use the log-transformed ratio (ln(R)) as the dependent or independent variable in your general linear model (e.g., regression, ANOVA).

- Interpretation: Back-transform the results for interpretation. The back-transformed mean of ln(R) is the geometric mean of R. The confidence intervals calculated on the ln(R) scale can be back-transformed (exponentiated for ln; 10^ for log10) to obtain a confidence interval for the geometric mean ratio on the original scale [16].
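The ratio protocol can be illustrated with a small sketch that computes the log ratio, back-transforms its mean to a geometric mean, and checks the direction invariance numerically. It assumes NumPy and SciPy; the function name, the t-based confidence interval, and the simulated testosterone and cortisol values are assumptions made for illustration only.

```python
import numpy as np
from scipy import stats


def log_ratio_summary(a, b, confidence=0.95):
    """Summarize ln(A/B): mean on the log scale, geometric mean ratio, and its CI."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    log_ratio = np.log(a) - np.log(b)  # same quantity as np.log(a / b)
    n = log_ratio.size
    mean = log_ratio.mean()
    sem = log_ratio.std(ddof=1) / np.sqrt(n)
    # Two-sided t critical value; a t-based interval is an assumption here.
    t_crit = stats.t.ppf(0.5 + confidence / 2.0, df=n - 1)
    lower, upper = mean - t_crit * sem, mean + t_crit * sem
    return {
        "mean_log_ratio": float(mean),
        "geometric_mean_ratio": float(np.exp(mean)),
        "ci_ratio": (float(np.exp(lower)), float(np.exp(upper))),
    }


# Simulated hormone concentrations in arbitrary units (illustrative only).
rng = np.random.default_rng(0)
testosterone = rng.lognormal(mean=5.0, sigma=0.4, size=50)
cortisol = rng.lognormal(mean=2.5, sigma=0.5, size=50)

tc = log_ratio_summary(testosterone, cortisol)
ct = log_ratio_summary(cortisol, testosterone)

print("T/C geometric mean ratio:", round(tc["geometric_mean_ratio"], 3), tc["ci_ratio"])
print("C/T geometric mean ratio:", round(ct["geometric_mean_ratio"], 3), ct["ci_ratio"])
```

The mean log ratios of the two directions differ only in sign, and the back-transformed geometric means and interval limits are reciprocals of one another, which is the invariance described in the rationale above.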

Visualization of the Transformation Selection Workflow

The following diagram outlines the logical decision process for handling skewed hormone data, from initial assessment to final analysis.

The Scientist's Toolkit: Essential Reagents and Materials

Table 2: Key Research Reagent Solutions for Hormone Analysis

| Item | Function / Application |

|---|---|

| Salivary Collection Kits (e.g., Salivette) | Standardized collection of saliva samples for non-invasive measurement of hormones like cortisol, testosterone, and DHEA [15]. |

| Enzyme-Linked Immunosorbent Assay (ELISA) Kits | High-throughput, antibody-based quantification of specific hormone concentrations in biological fluids (serum, saliva, urine) [15]. |

| Liquid Chromatography-Mass Spectrometry (LC-MS/MS) | Gold-standard method for highly specific and sensitive simultaneous measurement of multiple hormones and their metabolites [15]. |

| Statistical Software (R, SPSS, SAS, Stata) | Platforms for executing data transformation, normality testing (e.g., Shapiro-Wilk), and general linear model analysis [18]. |

| Box-Cox Transformation Procedure | A systematic, data-driven method to identify the optimal power transformation (λ parameter) to normalize a variable, with ln being a special case (λ=0) [15]. |
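As a brief illustration of the Box-Cox entry in the table above, the sketch below estimates λ for a simulated skewed variable using SciPy's `scipy.stats.boxcox`. The data are simulated and the variable names are hypothetical; an estimated λ near 0 would point toward the natural log, whereas a value near 0.5 would point toward a square-root transformation.

```python
import numpy as np
from scipy import stats

# Simulated, strictly positive, right-skewed values (illustrative only).
rng = np.random.default_rng(7)
hormone = rng.lognormal(mean=2.0, sigma=0.6, size=300)

# With no lambda supplied, boxcox returns the transformed data and the
# maximum-likelihood estimate of lambda.
transformed, estimated_lambda = stats.boxcox(hormone)

print(f"Estimated lambda: {estimated_lambda:.3f}")
print(f"Skewness before: {stats.skew(hormone):+.2f}")
print(f"Skewness after:  {stats.skew(transformed):+.2f}")
```

Box-Cox requires strictly positive input, so zeros and values below the limit of detection must be handled before this step.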

The choice between the natural log (ln) and base-10 log (log10) in hormone analysis is primarily one of interpretative convenience and field convention, not statistical necessity. For researchers in endocrinology and drug development, the natural logarithm is generally recommended due to the intuitive interpretation of its coefficients as approximate proportional or percentage changes, aligning with common biological questions [19] [17]. However, the most critical step is not the automatic application of ln, but the systematic evaluation of whether any transformation—and which one—best normalizes the distribution and stabilizes the variance of the specific hormone dataset, as demonstrated in the provided protocols [15] [16]. Adopting this rigorous, data-informed approach ensures the validity of subsequent statistical inferences and enhances the reliability of research findings.

This application note provides a detailed protocol for pre-processing analytical data, with a specific focus on challenges prevalent in biomedical research, such as hormone ratio analysis. The procedures outlined here are designed to transform raw, messy data into a reliable, analysis-ready format. The protocol places special emphasis on handling zeros, missing values, and performing background correction, which are critical steps for ensuring the validity of subsequent statistical analyses, including the log-transformation of hormone ratios. Inconsistent or improper handling of these data issues can introduce significant bias, distort biological interpretations, and lead to non-reproducible findings. By following the standardized workflow and methodologies described herein, researchers can enhance data quality, improve analytical robustness, and facilitate the generation of reliable scientific conclusions.

In data-driven research, the axiom "garbage in, garbage out" is a fundamental principle; the quality of the input data directly determines the validity of the output [20]. Data pre-processing encompasses the techniques used to evaluate, filter, manipulate, and encode raw data to make it suitable for machine learning algorithms and statistical analysis [20]. Its primary goals are to resolve issues like missing values, errors, noise, and inconsistencies, thereby improving overall data quality [20]. In the specific context of hormonal research, where analyses often involve ratios of sex hormones (e.g., estradiol-to-progesterone) and their log-transformations, the initial handling of data is paramount [5].

The log-transformation of hormone ratios is a common practice to normalize distributions and stabilize variance. However, this transformation is highly sensitive to data quality issues. Zeros and missing values in the raw hormone measurements can make log-transformation impossible or mathematically unstable, while uncorrected background noise can lead to biased ratio estimates. Therefore, a rigorous and standardized pre-processing pipeline is not merely a preliminary step but a foundational component of methodology research in this field, directly impacting the falsifiability of scientific theories [5].

Table 1: Typology of Missing Data and Recommended Handling Strategies

| Type of Missing Data | Description | Example in Hormonal Research | Recommended Handling Method |

|---|---|---|---|

| Missing Completely at Random (MCAR) | The missingness is unrelated to any other variables, observed or unobserved. | A hormone sample value is missing due to a random pipetting error or a machine's temporary malfunction. | Deletion or Imputation. Removal is less likely to introduce bias. Imputation via mean/median/mode is also acceptable [21]. |

| Missing at Random (MAR) | The missingness is related to other observed variables but not the unobserved value itself. | Older study participants are systematically more likely to skip a sensitive question about medication use. The missing data is related to the observed variable 'age' [21]. | Advanced Imputation. Methods like Multiple Imputation by Chained Equations (MICE) or model-based imputation are preferred to account for the relationship with other variables. |

| Missing Not at Random (MNAR) | The missingness is related to the unobserved value itself. | Participants with very high levels of a stress hormone are less likely to return for the follow-up test. The missingness is directly related to the unmeasured hormone level [21]. | Sophisticated Modeling. Requires techniques like selection models or pattern-mixture models that explicitly account for the mechanism of missingness. |

Table 2: Methods for Handling Zeros, Outliers, and Background Noise

| Data Issue | Category | Description | Handling Technique |

|---|---|---|---|

| Zeros | True Zero | A zero that reflects a real measurement outcome rather than an error (e.g., a concentration below the detection limit that is reported as zero). | Context-specific handling. May require imputation with a small value (e.g., half the detection limit) prior to log-transformation or use of models that handle censored data. |

| | False Zero | A zero resulting from a data entry error, a failed measurement, or a missing value incorrectly coded as zero. | Treat as a Missing Value. Recode the false zero as NA or NULL and then apply appropriate missing data strategies from Table 1. |

| Outliers | Univariate | A data point that differs significantly from other observations in a single variable. | Identification: Visualization (box plots, scatterplots) or statistical methods (IQR). Handling: Removal, capping, or transformation, depending on the cause [21]. |

| | Multivariate | A combination of values across two or more variables that is unusual. | Identification: Mahalanobis distance. Handling: Investigation to determine if it is an error or a genuine, rare biological state. |

| Background Noise | Technical Noise | Non-biological signal introduced during sample preparation or instrument measurement. | Background Correction: Subtract the signal from negative control samples (e.g., blank buffers) from all experimental samples. |
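The two outlier identification methods named in the table, the IQR rule and the Mahalanobis distance, could be sketched as follows. This is a minimal illustration assuming NumPy; the function names, the conventional multiplier k = 1.5, and all data values are assumptions rather than recommendations from the cited sources.

```python
import numpy as np


def iqr_outlier_mask(x, k=1.5):
    """Flag values lying more than k * IQR below Q1 or above Q3."""
    x = np.asarray(x, dtype=float)
    q1, q3 = np.percentile(x, [25, 75])
    iqr = q3 - q1
    return (x < q1 - k * iqr) | (x > q3 + k * iqr)


def mahalanobis_distances(data):
    """Mahalanobis distance of each row from the sample mean."""
    data = np.asarray(data, dtype=float)
    centered = data - data.mean(axis=0)
    cov_inv = np.linalg.inv(np.cov(data, rowvar=False))
    return np.sqrt(np.einsum("ij,jk,ik->i", centered, cov_inv, centered))


# Univariate example: one value is far from the rest.
values = [4.1, 4.3, 4.0, 4.4, 4.2, 9.5]
print(iqr_outlier_mask(values))

# Multivariate example: two strongly correlated log-hormone variables plus one
# appended pair that breaks the correlation (simulated, illustrative only).
rng = np.random.default_rng(1)
log_e2 = rng.normal(size=100)
log_p4 = log_e2 + 0.1 * rng.normal(size=100)
pairs = np.vstack([np.column_stack([log_e2, log_p4]), [1.0, -1.0]])

distances = mahalanobis_distances(pairs)
print("Most unusual row:", int(np.argmax(distances)))
```

The appended pair is unremarkable on each variable separately but stands out once the correlation between the variables is taken into account, which is the situation the multivariate row describes. Flagged points still need the investigation noted in the table before any removal.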

Experimental Protocols

Protocol 1: Comprehensive Handling of Missing Values

Objective: To systematically identify, classify, and handle missing values in a dataset to minimize bias and prepare data for analysis.

Materials:

- Raw dataset (e.g., hormone concentration measurements)

- Statistical software (e.g., R, Python with pandas/scikit-learn)

Procedure:

- Data Acquisition and Import: Load the raw dataset into your analytical environment. This is the most critical machine learning preprocessing step [20].

- Identification and Quantification: Generate a summary report listing each variable and its count of missing values. Visualize the pattern of missingness using libraries like missingno in Python.

- Classification: Classify the missing data for each variable according to the typology in Table 1 (MCAR, MAR, MNAR). This requires domain knowledge and an investigation of the data collection process.

- Remediation Strategy:

- For MCAR Data: If the proportion is small (e.g., <5%), complete case analysis (removal of rows with missing values) may be acceptable, though it can lead to loss of information [20]. Alternatively, use simple imputation.

- For MAR Data: Employ multiple imputation techniques. For example, use the mice package in R or IterativeImputer in scikit-learn to create several complete datasets, analyze each one, and pool the results.

- For MNAR Data: Consider sophisticated statistical models or conduct sensitivity analyses to understand how the MNAR assumption affects the results.

- Documentation: Meticulously document the proportion of missing data for each variable, the assumed mechanism (MCAR, MAR, MNAR), and the specific imputation method used for each.
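The identification step and the two simple MCAR options from this protocol could look roughly like the sketch below, which assumes pandas and NumPy. The column names, the values, and the `missing_summary` helper are hypothetical. Multiple imputation for MAR data is deliberately not shown, since it involves creating, analyzing, and pooling several datasets.

```python
import numpy as np
import pandas as pd


def missing_summary(df: pd.DataFrame) -> pd.DataFrame:
    """Count and percentage of missing values for each variable."""
    n_missing = df.isna().sum()
    return pd.DataFrame({
        "n_missing": n_missing,
        "pct_missing": 100.0 * n_missing / len(df),
    })


# Small made-up dataset; NaN marks a missing measurement.
df = pd.DataFrame({
    "estradiol": [45.0, np.nan, 60.0, 52.0, 70.0, 48.0],
    "progesterone": [1.2, 0.9, np.nan, 1.5, 1.1, np.nan],
    "age": [31, 45, 28, 39, 52, 36],
})

report = missing_summary(df)
print(report)

# Option 1 (MCAR, small proportion): complete case analysis.
complete_cases = df.dropna()

# Option 2 (MCAR): simple median imputation, column by column.
median_imputed = df.fillna(df.median(numeric_only=True))

print(f"Rows kept by complete case analysis: {len(complete_cases)} of {len(df)}")
```

Single-value imputation of this kind understates uncertainty, so it is best limited to the MCAR case with few missing values, as the protocol indicates.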

Protocol 2: Strategy for Zeros and Background Correction

Objective: To distinguish between and appropriately handle true zeros and false zeros, and to correct for technical background noise.

Materials:

- Raw instrument readings for experimental samples.

- Readings from negative control samples (blanks).

- Information on the detection limit of the assay.

Procedure:

- Background Correction: a. Calculate the average signal intensity from the negative control samples. b. Subtract this average background signal from all experimental sample readings. c. Note: If any corrected value becomes negative or zero, it should be treated as a value below the detection limit and handled as a special case of a "zero" in the next step.