Keyword Clustering for Research: A Scientist's Guide to Accelerating Discovery and Literature Review

Abstract

This guide provides researchers, scientists, and drug development professionals with a comprehensive framework for implementing keyword clustering in scientific research. It covers foundational concepts, practical methodologies for both general and life-sciences-specific applications, advanced optimization techniques, and evaluation strategies. By moving beyond simple keyword searches, you will learn how to systematically map research topics, uncover hidden semantic relationships in literature, and dramatically improve the efficiency and comprehensiveness of your data discovery process, from target identification to literature review.

Beyond the Search Bar: Understanding Keyword Clustering and Its Revolutionary Role in Modern Research

What is Keyword Clustering? Defining SERP-Based vs. NLP-Based Grouping for Scientific Literature

Keyword clustering is an analytical process that involves grouping related keywords or search terms into thematic clusters based on specific measures of similarity. In scientific and bibliometric research, this technique is fundamental for mapping the intellectual structure of a field, identifying emerging topics, and analyzing knowledge domains [1]. The core premise is that by analyzing the relationships between keywords, researchers can uncover latent thematic patterns and conceptual frameworks within large volumes of academic literature. This process transforms disjointed keywords into structured knowledge representations that facilitate comprehensive research topic analysis.

Keyword semantic representation methods in bibliometrics have progressed along the pathway of "co-word matrix to co-word network to word embedding" alongside advancements in text mining technology [2]. These methodological innovations have enabled researchers to move beyond simple frequency counts toward semantic analyses that capture the contextual and relational aspects of scientific terminology. For research topics in scientific domains, effective keyword clustering provides a systematic approach to organizing literature, identifying research gaps, and understanding the interconnectedness of concepts within and across disciplines.

Comparative Analysis of Clustering Methodologies

Fundamental Definitions and Conceptual Frameworks

SERP-Based Keyword Clustering groups keywords by analyzing search engine results pages, operating on the principle that if different keywords return similar URLs in their top search results, they likely share underlying topical relationships and can be addressed with similar content [3] [1] [4]. This method reflects how search engines interpret keyword relationships, making it particularly valuable for understanding competitive landscapes and user intent alignment [5]. The general algorithm involves fetching search results for each keyword, comparing the URLs that appear, and grouping keywords that share sufficient overlapping results based on a customizable threshold [1].

NLP-Based Keyword Clustering utilizes natural language processing and artificial intelligence to group keywords based on their semantic similarity and linguistic relationships [4]. This approach interprets, analyzes, and relates the meanings of different keywords to each other, forming clusters that revolve around the same core concept regardless of search engine behavior. Techniques include word embedding, co-word networks, and semantic+structure integration models that capture linguistic patterns and contextual relationships between terms [2] [4].

Comparative Evaluation of Performance Characteristics

Experimental comparisons across scientific domains demonstrate significant performance differences between methodological approaches. Co-word networks and word embedding techniques display satisfactory performance, while co-word matrices exhibit subpar results [2]. Among network embedding algorithms, LINE and Node2Vec outperform DeepWalk, Struc2Vec, and SDNE in bibliometric applications. However, no single approach is universally superior, so method selection must account for factors such as corpus size and the semantic cohesion of domain keywords [2].

Table 1: Quantitative Comparison of Keyword Clustering Methodologies

| Characteristic | SERP-Based Clustering | NLP-Based Clustering |
|---|---|---|
| Primary Data Source | Search Engine Results Pages (SERPs) | Linguistic corpora & text databases |
| Core Analytical Principle | URL overlap in top search results | Semantic similarity & linguistic patterns |
| Key Performance Metrics | SERP overlap percentage (typically 30-70%) [6] [7] | Semantic coherence scores & cluster purity |
| Typical Cluster Formation | Groups keywords with similar ranking pages | Groups keywords with related meanings |
| Domain Adaptation | Automatically adapts to search engine interpretations | Requires domain-specific tuning of models |
| Processing Limitations | 200-20,000 keywords per operation [3] | Virtually unlimited with sufficient resources |
| Resource Requirements | Higher (requires API calls to search engines) [4] | Lower (primarily computational resources) |

Experimental Protocols for Research Applications

Protocol 1: SERP-Based Keyword Clustering for Literature Mapping

Objective: To identify core research topics and their interrelationships through analysis of search engine results patterns for scientific terminology.

Materials and Reagents:

- Research Reagent Solutions:

- Keyword List: Compilation of relevant scientific terminology from domain literature databases

- SERP API: Application Programming Interface for accessing search engine results (e.g., ValueSerp, Google Custom Search JSON API) [8]

- Clustering Tool/Platform: Software for calculating SERP overlaps and forming clusters (e.g., custom scripts or commercial tools) [3] [7]

- Analysis Environment: Computational environment (R, Python) for statistical analysis and visualization of results

Methodology:

- Keyword Compilation: Extract keywords from scientific databases, literature repositories, or research publications relevant to the target domain. Compile a comprehensive list of candidate terms for analysis [5].

- SERP Data Collection: For each keyword, query the search engine API to retrieve the top 10 organic results (URLs) [1]. Record the complete SERP data including domain URLs, positions, and additional features.

- Similarity Calculation: Compute pairwise similarity between all keywords using Jaccard similarity coefficient or overlap percentage based on shared URLs in their top results [1] [7].

- Cluster Formation: Apply hierarchical clustering or community detection algorithms to group keywords using a predetermined overlap threshold (typically between 30% and 70%) [6] [7].

- Validation and Interpretation: Analyze cluster coherence by examining the thematic consistency of grouped keywords. Validate against known domain taxonomy or expert judgment.
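
The similarity and cluster-formation steps of this protocol can be sketched in a few lines of Python. This is a minimal illustration rather than the method used by any particular tool: the SERP data is a hand-written placeholder (the URLs are hypothetical), and the function names `jaccard` and `cluster_by_serp_overlap` are assumptions introduced here. Grouping is done by single linkage, so two keywords share a cluster whenever a chain of sufficiently overlapping pairs connects them.

```python
from itertools import combinations


def jaccard(urls_a, urls_b):
    """Jaccard similarity between two collections of result URLs."""
    a, b = set(urls_a), set(urls_b)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def cluster_by_serp_overlap(serp_results, threshold=0.3):
    """Group keywords whose top-result URL sets overlap at or above `threshold`."""
    parent = {kw: kw for kw in serp_results}

    def find(kw):
        # Follow parent links to the cluster representative (with path halving).
        while parent[kw] != kw:
            parent[kw] = parent[parent[kw]]
            kw = parent[kw]
        return kw

    # Link every pair of keywords that meets the overlap threshold.
    for kw1, kw2 in combinations(serp_results, 2):
        if jaccard(serp_results[kw1], serp_results[kw2]) >= threshold:
            parent[find(kw1)] = find(kw2)

    clusters = {}
    for kw in serp_results:
        clusters.setdefault(find(kw), []).append(kw)
    return sorted(sorted(members) for members in clusters.values())


# Hypothetical SERP data: keyword -> top organic result URLs (placeholders).
serp_results = {
    "crispr off-target effects": ["u1", "u2", "u3", "u4"],
    "minimizing crispr errors": ["u2", "u3", "u4", "u5"],
    "western blot protocol": ["u6", "u7", "u8", "u9"],
}
clusters = cluster_by_serp_overlap(serp_results, threshold=0.3)
```

In practice the dictionary would be filled from a SERP API response, and the threshold would be tuned by inspecting how cluster coherence changes across the 30-70% range.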

Protocol 2: NLP-Based Semantic Clustering for Conceptual Analysis

Objective: To map the conceptual structure of a research domain through semantic analysis of keyword relationships independent of search engine behavior.

Materials and Reagents:

- Research Reagent Solutions:

- Text Corpus: Domain-specific collection of research publications, abstracts, and related texts

- Semantic Models: Pre-trained or domain-adapted word embedding models (Word2Vec, GloVe, BERT)

- Processing Tools: Natural language processing libraries (NLTK, spaCy, Gensim) for text preprocessing

- Clustering Algorithms: Implementation of machine learning clustering methods (K-means, hierarchical clustering, DBSCAN)

Methodology:

- Corpus Preparation: Compile and preprocess a representative text corpus from the target research domain. Perform standard NLP preprocessing including tokenization, stopword removal, and lemmatization [9].

- Semantic Representation Generation: Convert keywords into vector representations using the selected semantic models, for example the pre-trained or domain-adapted embedding models listed under Materials (Word2Vec, GloVe, BERT).

- Similarity Matrix Construction: Calculate pairwise semantic similarity between all keyword vectors using cosine similarity or other appropriate metrics.

- Cluster Identification: Apply clustering algorithms to group semantically similar keywords. Optimize cluster number using elbow method or silhouette analysis.

- Conceptual Mapping: Interpret and label clusters based on the semantic themes of constituent keywords. Analyze inter-cluster relationships to map conceptual boundaries.
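
The similarity and clustering steps above can be illustrated with a small, dependency-free sketch. The three-dimensional vectors are toy values standing in for real embeddings, and `cosine`, `agglomerate`, and the `min_similarity` cutoff are names and parameters assumed here for illustration. The clustering shown is average-linkage agglomerative clustering, one of the hierarchical options named in the materials list.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def agglomerate(vectors, min_similarity=0.8):
    """Merge the two most similar clusters until no pair reaches `min_similarity`."""
    clusters = [[kw] for kw in vectors]

    def avg_sim(c1, c2):
        # Average-linkage: mean pairwise similarity between two clusters.
        sims = [cosine(vectors[a], vectors[b]) for a in c1 for b in c2]
        return sum(sims) / len(sims)

    while len(clusters) > 1:
        best, pair = -1.0, None
        for i in range(len(clusters)):
            for j in range(i + 1, len(clusters)):
                s = avg_sim(clusters[i], clusters[j])
                if s > best:
                    best, pair = s, (i, j)
        if best < min_similarity:
            break
        i, j = pair
        clusters[i] = clusters[i] + clusters[j]
        del clusters[j]
    return [sorted(c) for c in clusters]


# Toy vectors standing in for real keyword embeddings (hypothetical values).
vectors = {
    "amyloid fibrils": [0.9, 0.1, 0.0],
    "protein aggregation": [0.8, 0.2, 0.1],
    "monetary policy": [0.0, 0.1, 0.9],
    "interest rates": [0.1, 0.0, 0.8],
}
semantic_clusters = agglomerate(vectors, min_similarity=0.8)
```

With real embeddings the vectors have hundreds of dimensions, and a library implementation is preferable for anything beyond a few hundred keywords, since this sketch recomputes all pairwise similarities on every merge.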

Application in Scientific Literature Analysis

Bibliometric Mapping and Research Trend Identification

Keyword clustering enables systematic analysis of publication patterns and knowledge structures within scientific domains. Experimental comparisons across domains including quantum entanglement, immunopathology, monetary policy, and artificial intelligence demonstrate that semantic representation methods significantly impact clustering quality in bibliometric research [2]. By applying keyword clustering to publication data, researchers can identify emerging topics, map interdisciplinary connections, and trace the evolution of research fronts over time. The Microsoft Academic Graph (MAG) field of study hierarchy provides established "evaluation standards" for validating clustering results in specific domains [2].

Research Topic Categorization and Gap Analysis

For thesis research and comprehensive literature reviews, keyword clustering facilitates systematic organization of existing knowledge and identification of underexplored areas. SERP-based clustering reveals how current literature addresses specific research questions, while NLP-based approaches uncover conceptual relationships that may not be apparent through traditional literature review methods [4]. This dual perspective enables researchers to position their work within existing scholarly conversations while identifying novel research directions that bridge conceptual domains.

Table 2: Application Scenarios for Keyword Clustering in Scientific Research

| Research Phase | SERP-Based Applications | NLP-Based Applications |
|---|---|---|
| Literature Review | Identifying core papers addressing related research questions | Mapping conceptual relationships across disparate literature |
| Research Gap Identification | Discovering under-optimized topics in current literature | Revealing unexplored conceptual connections between domains |
| Thesis Structuring | Organizing chapters around established research conversations | Developing novel conceptual frameworks based on semantic analysis |
| Interdisciplinary Research | Finding bridge concepts shared across disciplinary boundaries | Identifying transferable methodologies and theoretical frameworks |
| Research Trend Analysis | Tracking evolution of topical focus over time | Mapping conceptual drift and emergence of new research paradigms |

Implementation Considerations for Research Contexts

Method Selection Criteria

Choosing between SERP-based and NLP-based approaches requires careful consideration of research objectives, domain characteristics, and available resources. SERP-based clustering is particularly valuable when the research goal involves understanding current literature organization and identifying opportunities for contribution within existing scholarly conversations [4] [5]. NLP-based methods excel when the objective is novel conceptual mapping, interdisciplinary exploration, or understanding deep semantic relationships between research concepts [2] [4].

Corpus size significantly influences method selection. For smaller, well-defined domains, NLP approaches can capture nuanced semantic relationships effectively. For large-scale bibliometric analyses spanning multiple disciplines, SERP-based methods provide scalable solutions that reflect how knowledge is currently organized and accessed [3] [2]. Hybrid approaches that combine both methodologies often yield the most comprehensive insights for complex research topics.

Validation and Quality Assessment

Establishing cluster quality requires both quantitative metrics and qualitative validation. Quantitative measures include internal validation metrics (silhouette coefficient, Davies-Bouldin index) and external validation against established taxonomies like the MAG field of study hierarchy [2]. Qualitative assessment involves domain expert evaluation of cluster coherence, interpretability, and practical utility for the research context. For thesis research, validation should ensure that clusters accurately represent the intellectual structure of the field and meaningfully support the research objectives.

In the age of big data, researchers, scientists, and drug development professionals face unprecedented information overload. The volume and complexity of modern scientific data—from genomic sequences and high-throughput screening results to clinical trial data and scientific literature—threaten to overwhelm traditional analysis methods. Cluster analysis serves as a powerful research multiplier by transforming this data deluge into actionable knowledge, enabling the discovery of hidden patterns, relationships, and subgroups within complex datasets without prior hypotheses [10]. This data-driven technique decomposes inter-individual heterogeneity by identifying more homogeneous subgroups, making it particularly valuable for exploring complex biological systems and patient populations in drug development [11].

Core Methodologies: A Quantitative Framework

Cluster analysis encompasses a family of algorithms that group data points based on their similarities. Selecting the appropriate method is crucial for generating valid, reproducible insights.

Table 1: Quantitative Comparison of Major Clustering Techniques [10]

| Algorithm | Primary Objective | Key Considerations | Best for Data Characteristics |
|---|---|---|---|
| K-means Clustering | Group data into a pre-defined number (K) of spherical clusters [10] | Sensitive to initial centroid placement (run multiple times); assumes spherical, equally sized clusters; requires specifying K beforehand; efficient for large datasets. | Well-defined, separated spherical clusters; known or tested cluster number. |
| Model-based Clustering | Identify groups based on specific probability distributions (e.g., Gaussian) [10] | Requires assumptions about data distribution; handles varying cluster shapes and sizes; robust to noise and outliers; can estimate the optimal cluster number. | Data following an assumed statistical distribution; handling noise. |
| Density-based Clustering (e.g., DBSCAN) | Identify clusters of arbitrary shape based on data point density [10] | Finds irregular shapes; robust to outliers; no need to specify cluster count; may struggle with varying densities. | Irregular cluster shapes; noisy data; unknown cluster number. |
| Fuzzy Clustering | Allow data points to belong to multiple clusters with membership scores [10] | Allows partial membership; useful for undefined boundaries; provides membership degrees; more complex to interpret. | Overlapping clusters; uncertain cluster assignments. |

Advanced and Extended Clustering Methods

Beyond the basic models, several advanced techniques address specific analytical challenges:

- Kernel Methods: Transform data into a higher-dimensional space to identify non-linear relationships that are not separable in the original input space [11].

- Deep Learning Clustering: Use deep neural networks to learn meaningful feature representations and cluster structures simultaneously, particularly powerful for high-dimensional data [11].

- Semi-supervised Clustering: Incorporate limited labeled data to guide the clustering process, improving results when some prior knowledge is available [11].

- Clustering Ensembles: Combine multiple clustering solutions to produce a more robust, stable, and accurate consensus result [11].

Experimental Protocols and Workflows

Implementing cluster analysis requires meticulous attention to experimental design and execution. The following protocols ensure rigorous and reproducible outcomes.

General Clustering Workflow

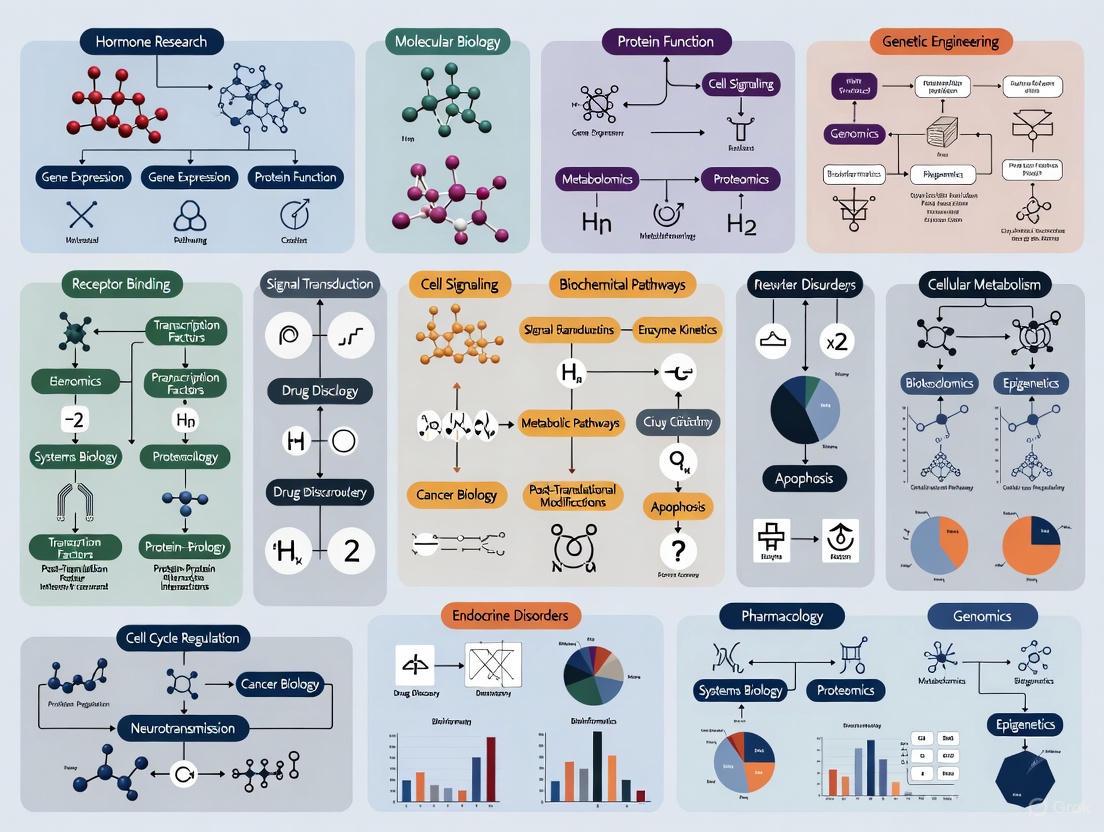

Diagram 1: Generalized clustering workflow for research.

Detailed Protocol for K-means Clustering

K-means clustering is one of the most widely used algorithms due to its simplicity and efficiency [10].

Objective: To partition n observations into k clusters where each observation belongs to the cluster with the nearest mean.

Materials and Reagents:

Table 2: Research Reagent Solutions for K-means Clustering

| Item | Function | Implementation Example |
|---|---|---|
| Quantitative Dataset | Raw input data for clustering | Matrix format (samples x variables) |
| Standardization Tool | Normalizes variables to comparable scales | Z-score normalization function |
| K-means Algorithm | Core computational engine | sklearn.cluster.KMeans or kmeans() in R |
| Distance Metric | Measures similarity between data points | Euclidean distance calculator |
| Cluster Validation Index | Evaluates resulting cluster quality | Silhouette score, Within-cluster Sum of Squares |

Procedure:

- Specify the Number of Clusters (K): Determine the value of K based on domain knowledge or use optimization methods like the elbow method to test a range of potential values [10].

- Initialize Centroids: Randomly select K data points from the dataset as initial cluster centers [10].

- Assign Points to Clusters: Calculate the distance between each data point and all centroids. Assign each point to the cluster whose centroid is closest [10].

- Recalculate Centroids: Compute the new centroid (mean) of each cluster based on all points currently assigned to it [10].

- Iterate Until Convergence: Repeat the assignment and centroid-recalculation steps until cluster assignments stabilize and centroids no longer change significantly between iterations [10].
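
The procedure above maps directly onto code. The following is a plain-Python sketch for illustration only (the function name `kmeans` and its `max_iter` and `seed` parameters are assumptions made here); for real analyses the library implementations listed in the materials table are the better choice, since they add smarter initialization and multiple restarts.

```python
import random


def kmeans(points, k, max_iter=100, seed=0):
    """Partition `points` (a list of equal-length tuples) into k clusters.

    Returns (assignments, centroids), where assignments[i] is the cluster
    index of points[i].
    """
    rng = random.Random(seed)
    # Initialize centroids: pick K distinct data points at random.
    centroids = rng.sample(points, k)
    assignments = None

    for _ in range(max_iter):
        # Assign each point to the centroid with the smallest squared
        # Euclidean distance.
        new_assignments = [
            min(
                range(k),
                key=lambda c: sum((p - q) ** 2 for p, q in zip(point, centroids[c])),
            )
            for point in points
        ]
        # Converged: no point changed cluster since the previous pass.
        if new_assignments == assignments:
            break
        assignments = new_assignments

        # Recalculate each centroid as the mean of its assigned points.
        for c in range(k):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:  # keep the old centroid if a cluster ends up empty
                centroids[c] = tuple(sum(dim) / len(members) for dim in zip(*members))

    return assignments, centroids


# Two well-separated toy groups (hypothetical data).
points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
assignments, centroids = kmeans(points, k=2)
```

Because the result depends on the random starting centroids, running the function with several `seed` values and keeping the solution with the lowest within-cluster sum of squares is a common safeguard.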

Protocol for Data Preparation

Data quality is paramount for successful cluster analysis, as the output is highly sensitive to input data characteristics [10].

Diagram 2: Data preparation protocol flow.

Methods for Handling Missing Data [10]:

- Complete Case Analysis: Use only data points with complete information, removing any with missing values.

- Mean Imputation: Replace missing values with the mean value of that variable across all observations.

- Regression Imputation: Use regression models to predict missing values based on other available variables.

- K-nearest Neighbor Imputation: Estimate missing values using the values from the most similar complete cases.
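
The two simplest strategies in this list, complete case analysis and mean imputation, can be sketched as follows. Missing values are represented as `None`, and the function names are hypothetical; regression and nearest-neighbor imputation need a modeling library and are not shown.

```python
from statistics import mean


def complete_cases(rows):
    """Keep only rows with no missing (None) values."""
    return [row for row in rows if None not in row]


def mean_impute(rows):
    """Replace each missing value with the mean of the observed values in its column.

    Raises statistics.StatisticsError if a column has no observed values.
    """
    columns = list(zip(*rows))
    col_means = [mean(v for v in col if v is not None) for col in columns]
    return [
        [col_means[j] if v is None else v for j, v in enumerate(row)]
        for row in rows
    ]


# Toy dataset: samples x variables, with one missing entry (hypothetical data).
rows = [[1.0, 10.0], [2.0, None], [3.0, 30.0]]
kept = complete_cases(rows)
imputed = mean_impute(rows)
```

Complete case analysis discards the second sample entirely, while mean imputation keeps it at the cost of shrinking the variance of the imputed variable.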

Feature Scaling and Normalization:

- Standardization (Z-score): Transform variables to have a mean of 0 and standard deviation of 1.

- Min-Max Normalization: Scale variables to a fixed range, typically [0, 1].

- Log Transformation: Reduce skewness in highly variable data.
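
Each of these transformations is a one-line formula per variable. The sketch below applies them to a single list of values; the function names are assumptions, the z-score uses the population standard deviation (a sample standard deviation is an equally valid choice), and `log1p` (the logarithm of 1 + x) is used so that zero values are handled.

```python
import math
from statistics import mean, pstdev


def z_score(values):
    """Standardize to mean 0 and standard deviation 1 (requires non-constant data)."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma for v in values]


def min_max(values):
    """Rescale to the range [0, 1] (requires max > min)."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]


def log_transform(values):
    """Compress large values with log(1 + v); intended for non-negative data."""
    return [math.log1p(v) for v in values]


values = [2.0, 4.0, 6.0]
standardized = z_score(values)
rescaled = min_max(values)
logged = log_transform(values)
```

Scaling matters for distance-based methods such as K-means because a variable measured in large units would otherwise dominate the Euclidean distance.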

Visualization and Interpretation Framework

Effective visualization is critical for interpreting clustering results and communicating findings to diverse stakeholders.

Cluster Visualization Techniques

Table 3: Methods for Visualizing and Interpreting Cluster Results [10]

| Technique | Purpose | Implementation Guidance |
|---|---|---|
| Scatterplots | Visualize data points and cluster assignments in 2D/3D space | Color code points by cluster; use PCA for dimensionality reduction |
| Heatmaps | Display similarity matrices or variable means across clusters | Show cluster profiles using color intensity for values |
| Dendrograms | Illustrate hierarchical relationships in clustering results | Display merge distances to show cluster relationships |
| Cluster Profiles | Characterize typical features of each cluster | Calculate and display mean/median values of variables within clusters |
| Dimensionality Reduction (PCA, t-SNE) | Visualize high-dimensional clusters in 2D space | Reveal complex relationships not visible in original data space |

Visualizing High-Dimensional Relationships

Diagram 3: High-dimensional data visualization process.

Application in Research Contexts

Drug Development and Mental Health Research

In mental health research, cluster analysis has proven particularly valuable for identifying patient subgroups based on symptom patterns, treatment responses, or biological markers. This approach helps decompose the heterogeneity of mental disorders into more homogeneous subtypes, potentially enabling more targeted interventions [11]. The methodology supports precision medicine approaches by identifying patient strata that may respond differently to therapeutics.

Research Topic Analysis and Literature Mining

For the specific thesis context of creating keyword clusters for research topics, cluster analysis enables:

- Topic Modeling: Grouping related publications based on keyword co-occurrence patterns

- Research Trend Identification: Detecting emerging thematic areas within scientific literature

- Interdisciplinary Connection Mapping: Revealing relationships between distinct research domains

- Knowledge Gap Discovery: Identifying underexplored areas in the research landscape

Validation and Reporting Standards

Cluster Validation Techniques

Validating clustering results is essential for ensuring robust findings:

- Internal Validation: Assess cluster quality using intrinsic characteristics (e.g., silhouette width, within-cluster sum of squares)

- External Validation: Compare cluster solutions with external benchmarks or known labels when available

- Stability Validation: Evaluate the consistency of clusters across multiple algorithm runs or subsamples of the data
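
As an example of internal validation, the mean silhouette width can be computed directly from its definition: for each point, a is the mean distance to the other members of its own cluster, b is the smallest mean distance to any other cluster, and the width is (b - a) / max(a, b). The sketch below is a plain-Python illustration with a hypothetical function name; singleton clusters are given a width of 0 by convention.

```python
import math


def silhouette_score(points, labels):
    """Mean silhouette width for points (tuples) and their cluster labels."""
    clusters = {}
    for point, label in zip(points, labels):
        clusters.setdefault(label, []).append(point)
    if len(clusters) < 2:
        raise ValueError("silhouette width needs at least two clusters")

    def mean_dist(p, members):
        return sum(math.dist(p, q) for q in members) / len(members)

    widths = []
    for point, label in zip(points, labels):
        own = clusters[label]
        if len(own) == 1:
            widths.append(0.0)  # convention for singleton clusters
            continue
        # a: mean distance to the other members of the point's own cluster
        # (the point's zero distance to itself is included in the sum only).
        a = sum(math.dist(point, q) for q in own) / (len(own) - 1)
        # b: mean distance to the nearest other cluster.
        b = min(mean_dist(point, m) for other, m in clusters.items() if other != label)
        widths.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(widths) / len(widths)


# Toy data (hypothetical): a sensible labeling and a deliberately poor one.
points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
good = silhouette_score(points, [0, 0, 0, 1, 1, 1])
poor = silhouette_score(points, [0, 1, 0, 1, 0, 1])
```

Values close to 1 indicate compact, well-separated clusters, while values near 0 or below suggest overlapping or misassigned clusters. Stability can be probed in the same spirit by recomputing the clustering on subsamples and comparing the resulting labels.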

Reporting Guidelines

Comprehensive reporting is critical for reproducibility and trust in cluster analysis results. The developing TRoCA (Transparent Reporting of Cluster Analyses) guidelines emphasize reporting key methodological aspects [12]:

- Data preprocessing approaches and handling of missing values

- Clustering algorithm settings and hyperparameters

- Criteria for selecting optimal clustering solutions

- Cluster characterization and interpretation methods

- Validation approaches and results

Cluster analysis serves as a powerful research multiplier that enables scientists to transform information overload into structured knowledge. By providing systematic approaches to identify patterns in complex data, these methods accelerate discovery across diverse domains—from patient stratification in drug development to literature mapping in research topic analysis. The rigorous application of the protocols and guidelines presented here ensures that cluster analysis delivers reproducible, actionable insights that multiply research effectiveness in the age of big data.

In the digital age, the foundational step for disseminating scientific research is understanding how target audiences search for information. Search intent—the purpose or reason behind a user's online query—is a critical concept for researchers, scientists, and drug development professionals seeking to ensure their work is discoverable by the right audiences [13] [14]. For a research group publishing a novel study on a kinase inhibitor, success is not just about publication in a high-impact journal, but also about the work being found online by other scientists, potential collaborators, or industry partners. Google and other search engines have refined their algorithms to prioritize content that best satisfies user intent [13]. Consequently, a comprehensive scientific content strategy must be built upon a framework of search intent, ensuring that research outputs are strategically aligned with the specific informational needs of the global scientific community at various stages of inquiry and collaboration.

Defining the Core Classifications of Search Intent

Search intent is traditionally categorized into four main types. For scientific contexts, these classifications align closely with the distinct stages of research, development, and professional engagement.

Informational Intent: The user seeks to learn or find information [13] [14]. This is the most common starting point for scientific inquiry.

- Scientific Context: A researcher is looking for background information, a foundational theory, or a specific experimental method. Their queries often begin with "what," "how," or "why" [14].

- Example Queries: "what is CRISPR-Cas9", "mechanism of action of SGLT2 inhibitors", "how to perform western blot".

- Content Format: Review articles, methodology papers, conference presentation slides, and educational blog posts are ideal for satisfying informational intent [14].

Commercial Investigation (Commercial Intent): The user is in a consideration phase, researching and comparing options before a decision [13] [14].

- Scientific Context: A lab manager or principal investigator is evaluating different technologies, reagents, or software platforms before a purchase. They know what they need but are determining the best solution.

- Example Queries: "best NGS platform 2025", "qPCR machine reviews", "comparison of antibody suppliers".

- Content Format: Product comparison guides, technical specifications sheets, and case studies demonstrating product efficacy effectively serve this intent [14].

Transactional Intent: The user intends to perform an action or make a purchase [13] [14].

- Scientific Context: The user is ready to acquire a reagent, download a software package, register for a conference, or access a specific dataset.

- Example Queries: "buy recombinant protein XYZ", "download Pymol license", "register for AACR annual meeting".

- Content Format: E-commerce product pages, software download links, and conference registration portals are tailored for transactional intent [14].

Navigational Intent: The user aims to find a specific website or online destination [13] [14].

- Scientific Context: A scientist wants to go directly to the PubMed database, a specific journal's homepage (e.g., "Nature website"), or a company's portal (e.g., "Addgene login").

- Example Queries: "NIH grants login", "Springer author guidelines", "UniProt database".

- Content Format: Ensuring your organization's or project's website ranks for its own name is key to satisfying navigational intent.

Table 1: Search Intent Classifications in Scientific Research

| Intent Type | User Goal | Example Scientific Queries | Optimal Content Format |
|---|---|---|---|
| Informational | Acquire knowledge | "role of mitochondria in apoptosis", "protocol for ELISA" | Review articles, method protocols, blog posts |
| Commercial Investigation | Compare options | "best practices for cell line authentication", "HPLC vs FPLC" | Product comparisons, whitepapers, case studies |
| Transactional | Perform an action | "purchase Taq polymerase", "download dataset" | Product pages, software download links, registration forms |
| Navigational | Locate specific site | "PubMed Central", "Cell journal submission" | Homepage, login portals, specific website pages |
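
For a first pass over a long keyword list, the query patterns in these classifications can be turned into a rough rule-based pre-labeler. The cue words below are taken from the example queries in this section, but the cue lists, their priority order, and the function name `guess_intent` are assumptions made for illustration. Such a heuristic will mislabel many queries (brand or site names carry no cue word, for instance), so it is a starting point for manual review rather than a classifier.

```python
# Cue words per intent, checked in this order (an assumed priority).
INTENT_CUES = {
    "transactional": ("buy", "purchase", "download", "register"),
    "commercial": ("best", "review", "reviews", "comparison", "vs"),
    "navigational": ("login", "website", "homepage"),
    "informational": ("what", "how", "why", "protocol", "mechanism", "role"),
}


def guess_intent(query):
    """Return a rough intent label for a search query based on cue words."""
    tokens = query.lower().split()
    for intent, cues in INTENT_CUES.items():
        if any(token in cues for token in tokens):
            return intent
    # Queries without any cue word default to informational, the most
    # common starting point for scientific inquiry.
    return "informational"


queries = [
    "how to perform western blot",
    "qPCR machine reviews",
    "buy recombinant protein XYZ",
    "NIH grants login",
]
labels = [guess_intent(q) for q in queries]
```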

The Role of Search Intent in Keyword Clustering for Research Topics

Keyword clustering is the process of organizing semantically related keywords into groups based on shared search intent and topical relevance [15]. For scientific research, this translates to creating a comprehensive topical map. Instead of creating individual, fragmented pieces of content for each minor keyword variant, clustering allows you to target hundreds of related search terms with a single, authoritative resource [15]. This approach aligns perfectly with how modern search engines like Google operate. Algorithms such as RankBrain and BERT are designed to understand that terms like "CRISPR off-target effects," "minimizing CRISPR errors," and "specificity of CRISPR-Cas9" are conceptually connected [15]. By creating one definitive guide or review article that comprehensively covers a cluster, you signal to search engines that your content is the most relevant and complete resource for that entire research topic, thereby increasing your chances of ranking for all associated terms. This strategy also efficiently avoids keyword cannibalization, where multiple pages on your own site compete for the same search terms [15].

Quantitative Data Analysis of Search Intent

A data-driven approach is essential for validating search intent and effectively clustering keywords. The process involves collecting quantitative data and analyzing it to make informed decisions.

Table 2: Key Quantitative Metrics for Search Intent Analysis

| Metric | Definition | Application in Intent Analysis |
|---|---|---|
| Search Volume | The average monthly searches for a keyword [15]. | Identifies high-interest topics and core terms within a cluster. |
| Click-Through Rate (CTR) | The percentage of users who click on a search result after seeing it. | Indicates how well a search result snippet (title, meta description) matches the perceived intent. |
| Keyword Difficulty | A metric estimating the competition level to rank for a keyword. | Helps prioritize target clusters; informational intent may have lower difficulty than high-value transactional terms. |
| Pogo-sticking | User behavior of quickly returning to search results after clicking a link. | A high rate suggests the content did not satisfy the search intent. |

Descriptive statistics, including measures of central tendency like the mean and median, and measures of variability like standard deviation, provide a crucial first look at your keyword data [16] [17]. For instance, calculating the average search volume for keywords within a potential cluster helps determine the overall traffic potential. A high standard deviation in search volume might indicate that the cluster contains both popular core topics and niche subtopics, which can inform content structure [16] [17].
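
A quick way to obtain these summaries is Python's built-in `statistics` module. The search volumes below are invented for illustration, and `summarize` is a hypothetical helper name.

```python
from statistics import mean, median, stdev


def summarize(volumes):
    """Descriptive statistics for the monthly search volumes in one cluster."""
    return {
        "mean": mean(volumes),
        "median": median(volumes),
        "stdev": stdev(volumes),  # sample standard deviation
    }


# Hypothetical monthly search volumes for one candidate cluster.
cluster_volumes = [1000, 200, 150, 50]
summary = summarize(cluster_volumes)
```

Here the mean (350) sits well above the median (175) and the standard deviation exceeds both, the pattern expected when one high-volume core term is grouped with several niche subtopics.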

Inferential statistics, such as correlation analysis, can be used to identify relationships between different keyword groups [17]. A strong positive correlation between the search volumes of two keyword sets might suggest they are semantically related and could belong to the same broader cluster. Furthermore, hypothesis testing (e.g., t-tests, ANOVA) can be applied to compare the performance (e.g., CTR, time on page) of content pages optimized for different search intents, providing statistical evidence for refining your content strategy [17] [18].
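
The correlation check can be sketched with a direct implementation of the Pearson coefficient. The monthly volume series are invented, and `pearson` is a name chosen here; a statistics package would normally also report a p-value.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length series."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (spread_x * spread_y)  # undefined if either series is constant


# Hypothetical monthly search volumes for two keyword sets over six months.
set_a = [120, 150, 170, 160, 200, 240]
set_b = [60, 80, 85, 90, 100, 125]
r = pearson(set_a, set_b)
```

A high coefficient is only suggestive: two unrelated keyword sets can move together because of shared seasonality (conference dates, academic terms), so the result should be read alongside SERP or semantic evidence.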

Diagram 1: Quantitative Analysis Workflow for Keyword Clustering.

Experimental Protocol: Determining and Clustering by Search Intent

This protocol provides a step-by-step methodology for analyzing and grouping keywords for a research topic.

Objective: To programmatically identify search intent and create semantically coherent keyword clusters for a defined research topic to guide content creation.

Materials & Research Reagents:

- Keyword Research Tool (e.g., Answer Socrates, Semrush): Functions to generate a broad list of related search terms and their volumes [15] [19].

- SERP Analysis Tool: Direct observation of Google search results to classify intent.

- Data Analysis Software (e.g., Python with Pandas, R, SPSS): Used for statistical analysis and, if using advanced methods, for NLP-based clustering [15] [17].

- Keyword Clustering Platform (e.g., Keyword Insights, KeyClusters): Automates the process of grouping keywords based on SERP overlap or semantic similarity [19].

Procedure:

- Keyword Generation: Using your keyword research tool, input 3-5 seed keywords related to your research topic (e.g., "protein aggregation," "amyloid fibrils," "neurodegeneration"). Export a comprehensive list of related keywords and their monthly search volumes into a CSV file [15].

- Initial Intent Grouping: Manually code a subset of the keywords into informational, commercial, transactional, and navigational intent categories. Use this to establish a baseline. Analyze the SERP for each keyword—the types of content ranking (product pages, reviews, blog posts) are the strongest indicator of intent [13] [14].

- Automated Clustering: Upload your keyword CSV to a clustering tool. Select a SERP-based clustering method, which groups keywords for which Google returns similar results, as this most accurately reflects search engine understanding [19].

- Cluster Validation & Refinement: Review the automated clusters.

- Merge clusters that are overly granular and cover the same core topic.

- Split clusters that are too large and contain multiple, distinct subtopics.

- Ensure all keywords within a cluster share the same core search intent [15].

- Content Mapping: Assign each final cluster to a specific piece of content (e.g., a review article, a product page, a technical note). The main keyword in the cluster (often the highest volume term) should be the primary target, with supporting keywords naturally integrated throughout the content [15].
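The content-mapping step above can be sketched in a few lines. The rows below are hypothetical, and choosing the highest-volume term as the primary keyword follows the heuristic stated in the procedure rather than any fixed rule.

```python
from collections import defaultdict

# Hypothetical rows: (keyword, monthly search volume, cluster label)
rows = [
    ("protein aggregation", 1200, "aggregation"),
    ("protein aggregation assay", 300, "aggregation"),
    ("what causes protein aggregation", 150, "aggregation"),
    ("amyloid fibrils", 400, "amyloid"),
    ("amyloid fibril formation", 380, "amyloid"),
]


def map_clusters(rows):
    """Pick a primary keyword per cluster and list the supporting terms."""
    grouped = defaultdict(list)
    for keyword, volume, cluster in rows:
        grouped[cluster].append((keyword, volume))

    plan = {}
    for cluster, items in grouped.items():
        # Highest-volume keyword first
        ranked = sorted(items, key=lambda item: item[1], reverse=True)
        plan[cluster] = {
            "primary": ranked[0][0],
            "supporting": [keyword for keyword, _ in ranked[1:]],
        }
    return plan


content_plan = map_clusters(rows)
for cluster, entry in content_plan.items():
    print(cluster, "->", entry["primary"], "| supporting:", entry["supporting"])
```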

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Search Intent and Keyword Clustering

| Tool / Reagent | Function in the Research Process |

|---|---|

| SERP Analysis | The definitive method for classifying search intent by observing real-world search engine results [13] [14]. |

| Keyword Clustering Tool (e.g., Keyword Insights) | Automates the grouping of semantically related keywords, saving significant time and improving accuracy [19]. |

| Statistical Software (e.g., SPSS, R, Python) | Enables quantitative analysis of keyword data, including descriptive stats and validation of cluster relationships [17] [18]. |

| Natural Language Processing (NLP) | Advanced method to understand semantic relationships between terms beyond simple keyword matching [15]. |

Diagram 2: Experimental Protocol for Keyword Clustering.

Integrating the core principles of search intent into a scientific communication strategy is not merely a technical SEO exercise; it is a fundamental practice for enhancing the visibility and impact of research. By systematically categorizing search queries into informational, commercial, transactional, and navigational intent, and employing a rigorous, data-driven methodology of keyword clustering, researchers and drug development professionals can ensure their vital work efficiently reaches its intended audience. This structured approach ensures that the right content reaches the right researchers at the right stage in their workflow, ultimately accelerating the pace of scientific discovery and collaboration.

The exponential growth of digital scientific information presents a significant challenge for researchers, scientists, and drug development professionals. With global patent filings exceeding 3.5 million annually and continuous expansion of scientific literature in databases like PubMed and Scopus, traditional information retrieval methods have become dangerously inadequate for comprehensive prior art identification and knowledge discovery [20]. This data deluge creates a "researcher's dilemma": how to efficiently extract meaningful technological insights and relationships from millions of dispersed documents.

Clustering methodologies have emerged as powerful computational approaches to address these challenges by automatically grouping similar documents, identifying hidden patterns, and revealing technological relationships that would remain obscured through manual analysis. This Application Note provides detailed protocols for implementing clustering-based strategies across major research databases, enabling professionals to navigate complex information landscapes and accelerate innovation cycles in drug development and scientific research.

Table 1: Key Challenges in Research Databases Addressed by Clustering

| Database | Primary Challenges | Clustering Solution | Impact |

|---|---|---|---|

| Patent Databases | Fragmented classification, multi-jurisdictional coverage, non-patent literature integration | AI-powered novelty search, citation network clustering, visual element similarity recognition | Reduces prior art blind spots by up to 70% compared to ad-hoc approaches [20] |

| PubMed | Disconnected biomedical entities, siloed clinical trials data, terminology variability | Biomedical entity linkage, author name disambiguation, multi-source citation integration | Creates 482 million biomedical entity linkages across 36M+ papers [21] |

| Scopus | Interdisciplinary content complexity, emerging terminology, citation network fragmentation | Keyword extraction algorithms, topic modeling, cross-disciplinary convergence analysis | Identifies research hotspots and emerging trends through bibliometric clustering [22] |

Clustering Fundamentals for Database Analysis

Core Clustering Algorithm Types

Clustering algorithms for database analysis generally fall into three primary categories, each with distinct strengths for specific research applications. Partition-based methods like K-means and Gaussian Mixture Models (GMM) create flat, non-overlapping groups ideal for initial database segmentation. Density-based approaches identify irregularly shaped clusters based on data point concentration, effectively handling noise and outliers common in patent classifications. Hierarchical methods build nested cluster trees through agglomerative (bottom-up) or divisive (top-down) strategies, particularly valuable for exploring citation networks and technological evolution pathways [23] [24].
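To make the partition-based category concrete, here is a deliberately small K-means sketch in plain Python. The two-dimensional points stand in for keyword or document embeddings and are invented for illustration. Seeding the centroids with the first k points is a simplification chosen to keep the example deterministic; production libraries typically use more careful initialization.

```python
def squared_distance(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))


def kmeans(points, k, iterations=20):
    """Toy K-means: returns (labels, centroids)."""
    centroids = [points[i] for i in range(k)]  # simplistic deterministic seed
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: attach every point to its nearest centroid
        labels = [
            min(range(k), key=lambda c: squared_distance(p, centroids[c]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                centroids[c] = tuple(sum(axis) / len(members)
                                     for axis in zip(*members))
    return labels, centroids


# Hypothetical 2-D embeddings forming two visibly separate groups
points = [(0.0, 0.1), (0.2, 0.0), (5.0, 5.1),
          (5.2, 4.9), (0.1, 0.2), (4.9, 5.0)]

labels, centroids = kmeans(points, k=2)
print(labels)
```

The result is a flat, non-overlapping partition, which is the property that distinguishes this family from the density-based and hierarchical methods described above.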

Recent evaluations across multiple domains demonstrate significant performance variations among clustering algorithms. In medical imaging data analysis, GMM achieved 89% median accuracy in classifying time activity curves, substantially outperforming other methods like Fuzzy C-means (83%) and ICA with K-means (81%) [23]. For spatial transcriptomics data, multi-slice clustering methods that integrate information across contiguous tissue sections have shown particular promise for identifying spatially coherent patterns in gene expression [24].

Key Performance Metrics

Validating clustering effectiveness requires multiple quantitative metrics that assess different aspects of performance. Internal validation measures include the silhouette score (cluster cohesion and separation) and the Davies-Bouldin index (average similarity of each cluster to its most similar cluster; lower is better). External validation employs the adjusted Rand index (agreement with ground truth, corrected for chance) and normalized mutual information (information-theoretic similarity) when reference classifications exist [25]. Stability measures assess result consistency across subsamples or parameter settings, which is particularly important for patent trend analysis, where reproducibility is essential.
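As an illustration of internal validation, the sketch below computes a mean silhouette score from scratch using Euclidean distance. The points and label assignments are hypothetical, and scoring singleton clusters as 0 is a common convention assumed here rather than something the cited sources specify.

```python
from math import dist


def silhouette_score(points, labels):
    """Mean silhouette over all points (Euclidean distance)."""
    clusters = set(labels)
    if len(clusters) < 2:
        raise ValueError("silhouette needs at least two clusters")
    scores = []
    for i, p in enumerate(points):
        # a: mean distance to the other members of the point's own cluster
        own = [dist(p, q) for j, q in enumerate(points)
               if labels[j] == labels[i] and j != i]
        if not own:
            scores.append(0.0)  # assumed convention for singleton clusters
            continue
        a = sum(own) / len(own)
        # b: smallest mean distance to the members of any other cluster
        b = min(
            sum(dist(p, q) for q, label in zip(points, labels) if label == c)
            / labels.count(c)
            for c in clusters if c != labels[i]
        )
        denom = max(a, b)
        scores.append((b - a) / denom if denom else 0.0)
    return sum(scores) / len(scores)


points = [(0.0, 0.1), (0.2, 0.0), (0.1, 0.2),
          (5.0, 5.1), (5.2, 4.9), (4.9, 5.0)]
good_labels = [0, 0, 0, 1, 1, 1]   # matches the visible grouping
bad_labels = [0, 1, 0, 1, 0, 1]    # deliberately mixed

good = silhouette_score(points, good_labels)
bad = silhouette_score(points, bad_labels)
print(f"good={good:.2f} bad={bad:.2f}")
```

Scores near 1 indicate tight, well-separated clusters; scores near 0 or below suggest that points sit between clusters or have been assigned to the wrong one.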

For spatial transcriptomics data, comprehensive frameworks like STEAM (Spatial Transcriptomics Evaluation Algorithm and Metric) leverage machine learning classification to evaluate clustering consistency through metrics including Kappa score, F1 score, accuracy, and percentage of abnormal spots [25]. Similar rigorous evaluation is recommended when applying clustering to patent and literature databases.

Application Protocols

Protocol 1: AI-Powered Patent Novelty Search and Clustering

Experimental Background and Objective

Prior art identification through traditional Boolean keyword searching proves increasingly inadequate in global patent landscapes where Asian patent offices now account for over 60% of global filings [20]. This protocol implements an AI-enhanced clustering approach to patent novelty searching that surfaces conceptually relevant prior art regardless of terminology variations or jurisdictional differences.

Research Reagent Solutions

Table 2: Essential Components for AI-Powered Patent Clustering

| Component | Function | Implementation Example |

|---|---|---|

| Patsnap Eureka AI Agent | Automated classification-based searching with intelligent keyword variation | Processes 2 billion+ data points across patents and scientific literature [20] |

| Distributed Patent Keyword Extraction Algorithm (PKEA) | Extracts representative keywords from patent texts for classification | Uses Skip-gram model; outperforms TF-IDF, TextRank, and RAKE in patent classification accuracy [26] |

| Citation Network Analyzer | Maps forward and backward citations to trace technological lineage | Identifies foundational prior art through citation density and pattern analysis [20] |

| Visual Element Similarity Recognition | Computer vision analysis of patent figures and diagrams | Detects shape-based similarity across technical domains for mechanical and design patents [20] |

Step-by-Step Procedure

Data Collection and Preprocessing

- Access global patent databases through platforms like Derwent Innovations Index (covering 65+ million patent documents) or Lens.org [27] [28]

- Export patent sets in plain text format containing titles, abstracts, claims, and citations

- Apply distributed keyword extraction algorithms (PKEA) to identify representative terms, encoding words into real-valued, dense vectors that capture semantic relationships [26]

Multi-Stage Cluster Analysis

- Execute classification-based clustering using concurrent searches across IPC, CPC, USPC, and FI/F-term classification systems [20]

- Perform citation network clustering through backward citation analysis (references cited by applicant/examiner) and forward citation tracking (later patents citing the target)

- Apply visual element clustering for mechanical and design patents using computer vision similarity detection

Result Validation and Synthesis

- Validate comprehensiveness through systematic comparison of clusters against major patent offices and relevant technical databases

- Apply temporal claim evolution tracking to analyze how patent claims transform throughout prosecution

- Generate structured novelty reports with similarity scores and legal status indicators

Protocol 2: Biomedical Knowledge Integration Through PubMed Clustering

Experimental Background and Objective

Biomedical research information remains fragmented across papers, patents, and clinical trials, creating significant barriers to comprehensive therapeutic development. This protocol implements the PubMed Knowledge Graph (PKG 2.0) framework, which connects over 36 million papers, 1.3 million patents, and 0.48 million clinical trials through 482 million biomedical entity linkages [21].

Research Reagent Solutions

Table 3: Essential Components for Biomedical Knowledge Integration

| Component | Function | Implementation Example |

|---|---|---|

| Biomedical Entity Recognizer | Extracts fine-grained entities (genes, drugs, diseases) from literature | Identifies and links equivalent entities across papers, patents, and clinical trials [21] |

| Author Name Disambiguator | Resolves author identity ambiguity across databases | High-performance algorithm addressing name variations and homonyms [21] |

| Multi-Source Citation Integrator | Unifies citation networks across publication types | Integrates 19 million citation linkages between papers, patents, and clinical trials [21] |

| Cross-Database Project Linker | Connects research outputs to funding sources | Links publications to NIH ExPORTER data through 7 million project linkages [21] |

Step-by-Step Procedure

Data Integration and Entity Extraction

- Collect PubMed papers, USPTO patents, and ClinicalTrials.gov records

- Apply fine-grained biomedical entity extraction to identify genes, drugs, diseases, and compounds across all document types

- Implement high-performance author name disambiguation to resolve identity ambiguity

Multi-Dimensional Clustering

- Perform entity-based clustering to group documents referencing similar biomedical concepts

- Execute citation network clustering to identify intellectual lineages and knowledge flows

- Apply project-based clustering to connect research outputs with funding sources and institutional networks

Cross-Domain Knowledge Discovery

- Analyze cluster intersections to identify translational research pathways connecting basic science to clinical applications

- Identify patent-paper clusters to surface commercially relevant basic research

- Detect clinical trial-paper clusters to reveal publication bias or unpublished results

Protocol 3: Technology Trend Analysis Through Statistical Enrichment

Experimental Background and Objective

Traditional citation counting fails to identify scholarly works significantly associated with technological innovation trends. This protocol adapts statistical enrichment methods from genomics to identify publications disproportionately referenced in patents from rapidly evolving technology areas, revealing critical science-technology linkages [29].

Research Reagent Solutions

Table 4: Essential Components for Technology Trend Analysis

| Component | Function | Implementation Example |

|---|---|---|

| Time-Series Trend Identifier | Detects significant innovation trends through patent analysis | Uses negative binomial distribution to model patent counts per IPC classification over time [29] |

| Statistical Enrichment Analyzer | Identifies over-represented scholarly works in patent references | Applies Fisher's exact test with false-discovery rate adjustment (p ≤ 0.001) [29] |

| Cross-Disciplinary Convergence Mapper | Analyzes classification co-occurrence across technical domains | Maps intersections between IPC, CPC, and other classification systems [20] |

Step-by-Step Procedure

Innovation Trend Identification

- Collect patent data for target technology domain (e.g., 60,776 CRISPR patents or 33,489 cyanobacteria patents) [29]

- Count patents per priority year and International Patent Classification (IPC) code

- Model counts using negative binomial distribution to account for over-dispersion

- Identify statistically significant trend changes (p ≤ 10⁻¹⁰ and Δ ≥ 100 patents)

Scholarly Work Enrichment Analysis

- Compile all scholarly works referenced by patents in identified trend IPCs

- For each scholarly work, test if reference frequency is enriched in trend patents versus baseline

- Apply false-discovery rate multiple-testing adjustment (p ≤ 0.001)

- Identify significantly over-represented publications driving innovation trends
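The enrichment test can be sketched with the standard library alone. The code below implements a one-sided Fisher's exact test through the hypergeometric distribution, followed by a Benjamini-Hochberg adjustment. The source specifies only "false-discovery rate adjustment", so the choice of Benjamini-Hochberg is an assumption, and all counts are invented for illustration.

```python
from math import comb


def fisher_enrichment_p(a, b, c, d):
    """One-sided Fisher's exact test for over-representation.

    a: trend patents citing the work     b: trend patents not citing it
    c: baseline patents citing the work  d: baseline patents not citing it
    """
    n = a + b + c + d
    trend_total = a + b
    citing_total = a + c
    upper = min(trend_total, citing_total)
    # Probability of seeing a or more citing patents among the trend patents
    tail = sum(
        comb(citing_total, k) * comb(n - citing_total, trend_total - k)
        for k in range(a, upper + 1)
    )
    return tail / comb(n, trend_total)


def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):          # walk from largest p to smallest
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Hypothetical counts per scholarly work: (a, b, c, d)
works = {
    "work A": (30, 70, 10, 890),
    "work B": (5, 95, 40, 860),
    "work C": (12, 88, 15, 885),
}

raw = [fisher_enrichment_p(*counts) for counts in works.values()]
adj = benjamini_hochberg(raw)
for name, p_adj in zip(works, adj):
    flag = "enriched" if p_adj <= 0.001 else "not enriched"
    print(f"{name}: adjusted p = {p_adj:.3g} ({flag})")
```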

Cross-Domain Cluster Validation

- Validate enriched publications through technical expertise assessment

- Map citation networks around key publications to identify knowledge flows

- Analyze author-inventor relationships to detect direct academic-commercial linkages

Performance Benchmarking

Comparative Analysis of Clustering Approaches

Table 5: Performance Metrics Across Clustering Methodologies

| Clustering Method | Accuracy Domain | Performance Metric | Comparative Advantage |

|---|---|---|---|

| Gaussian Mixture Model (GMM) | Medical Imaging Data | 89% median accuracy in TAC classification [23] | Superior for normally distributed cluster shapes |

| Fuzzy C-Means (FCM) | Medical Imaging Data | 83% median accuracy in TAC classification [23] | Effective for overlapping cluster boundaries |

| ICA + Mini Batch K-means | Medical Imaging Data | 81% median accuracy in TAC classification [23] | Computational efficiency for large datasets |

| AI-Powered Novelty Search | Patent Prior Art | 76% hit rate, 32% recall rate [20] | Significantly outperforms general-purpose AI tools |

| Multi-Slice Clustering | Spatial Transcriptomics | Enables analysis of contiguous tissue sections [24] | Maintains spatial relationships across samples |

Clustering methodologies represent transformative approaches for addressing fundamental challenges in navigating PubMed, Scopus, and patent databases. Through the protocols detailed in this Application Note, researchers and drug development professionals can implement sophisticated clustering strategies that enhance prior art identification, reveal hidden science-technology linkages, and accelerate innovation cycles. As global patent volumes continue growing and biomedical literature expands, clustering technologies will become increasingly essential for extracting meaningful technological intelligence from complex information ecosystems. Future developments in multi-modal clustering that integrate textual, visual, and citation data will further enhance our ability to map and navigate the increasingly complex landscape of scientific and technological knowledge.

Application Notes: Core Concepts and Quantitative Frameworks

This document outlines formal protocols for implementing keyword clustering to establish topical authority in research-intensive fields. The methodologies are designed for researchers, scientists, and drug development professionals to structure digital research outputs systematically, enhancing discoverability and scholarly impact.

Foundational Terminology and Definitions

- Keyword Cluster: A group of keywords that are semantically related and can be served by a single, comprehensive piece of content. This process organizes keywords based on search intent and semantic relevance to avoid self-competition and create authoritative resources [15].

- Topical Authority: The perceived expertise a website or domain earns by extensively covering all facets of a specific topic. This is achieved by creating a network of high-quality, interconnected content based on a clear keyword cluster hierarchy [15].

- Semantic Relationships: The contextual and meaningful connections between words and phrases beyond simple keyword matching. Modern search engines use these relationships, understood through updates like BERT, to group related concepts [15].

- Search Intent: The primary goal a user has when typing a query into a search engine. Clustering must group keywords with identical intent—whether informational, commercial, or transactional—to effectively meet user needs [15].

Quantitative Data on Clustering Approaches and Outcomes

Table 1: Comparison of Keyword Clustering Approaches [19]

| Clustering Approach | Core Methodology | Key Advantage | Key Limitation |

|---|---|---|---|

| SERP-Based | Groups keywords that share ranking pages in Search Engine Results Pages (SERPs). | Reflects how search engines actually understand and group topics. | Highly dependent on the quality and current state of SERP data. |

| NLP-Based (Natural Language Processing) | Uses AI to identify semantic relationships between keywords based on their meaning. | Can uncover non-obvious, contextual relationships between terms. | May not always align with how search engines group topics in practice. |

Table 2: Keyword Clustering Impact Metrics [15]

| Metric | Pre-Clustering State | Post-Clustering State | Change |

|---|---|---|---|

| Content Pieces | 12 blog posts | 4 comprehensive guides | -67% |

| Keywords Targeted per Piece | 1-2 keywords | A cluster of related keywords | ~+500% |

| Organic Traffic | Baseline (Mediocre rankings) | 167% increase | +167% |

Experimental Protocols

Protocol A: Manual Keyword Clustering via Search Intent Analysis

This protocol provides a foundational, manual method for establishing initial keyword clusters.

- Objective: To manually group a keyword set into semantically coherent clusters based on search intent and topical relevance.

- Research Reagent Solutions:

- Methodology:

- Keyword Aggregation: Use a keyword research tool to generate an extensive list of seed keywords and their variants relevant to the research topic (e.g., "protein powder," "whey protein isolate," "protein solubility"). Export this data to a CSV file [15].

- Intent Annotation: Manually analyze and label each keyword with its perceived search intent (Informational, Commercial, Transactional).

- Topical Grouping: Within each intent group, organize keywords into sub-groups based on core topic. Each cluster should have a primary, high-search-volume keyword and semantically related supporting terms [15].

- Content Mapping: Assign each finalized cluster to a single, comprehensive piece of content (e.g., a review article, protocol database, or methodology guide).

Protocol B: AI-Assisted Semantic Clustering

This protocol leverages Large Language Models (LLMs) to scale and enhance the clustering process for larger datasets.

- Objective: To programmatically cluster large keyword volumes (100+ keywords) using an LLM API for improved contextual understanding.

- Research Reagent Solutions:

- Python Scripting Environment: The core platform for script execution [15].

- LLM API (e.g., Anthropic's Claude 3.5 Sonnet): Provides the natural language processing engine. The cited source reports testing this model for this application [15].

- Structured Prompt: A precise set of instructions guiding the AI's clustering logic.

- Methodology:

- Data Preparation: Load the keyword CSV file and partition the data into manageable chunks to comply with API token limits [15].

- API Execution: Feed each keyword chunk to the LLM API with a structured prompt. An example prompt is: "You are an expert SEO analyst. Group these keywords and their monthly search volumes into clear categories based on search intent and topic. Rules: Use clear, concise category names; Group by core topic and user intent; Keep categories focused but not too granular; Use natural search language; Consider search volume patterns; Group similar modifiers together" [15].

- Output Consolidation: Collect the JSON or CSV output from the API and merge the results into a master clustering file.

- Human-in-the-Loop Validation: A domain expert must review and refine the AI-generated clusters to correct any contextual errors.
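The data preparation and consolidation steps can be sketched without committing to any particular vendor SDK. In the snippet below, `call_llm` is a hypothetical callable that accepts a prompt string and returns a JSON string mapping category names to keyword lists; `fake_llm` is a stand-in so the example runs offline. Chunking by keyword count is used as a rough proxy for token limits, and the JSON output instruction is an addition to the example prompt quoted above.

```python
import json

PROMPT_HEADER = (
    "You are an expert SEO analyst. Group these keywords and their monthly "
    "search volumes into clear categories based on search intent and topic. "
    "Return a JSON object mapping each category name to a list of keywords."
)


def chunk(items, size):
    """Yield consecutive slices of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def build_prompt(rows):
    lines = [f"{keyword}, {volume}" for keyword, volume in rows]
    return PROMPT_HEADER + "\n" + "\n".join(lines)


def cluster_keywords(rows, call_llm, chunk_size=50):
    """Send each chunk to the model and merge the per-chunk categories."""
    merged = {}
    for part in chunk(rows, chunk_size):
        reply = call_llm(build_prompt(part))  # expected: a JSON string
        for category, keywords in json.loads(reply).items():
            merged.setdefault(category, []).extend(keywords)
    return merged


def fake_llm(prompt):
    """Offline stand-in: groups keywords by their first word."""
    groups = {}
    for line in prompt.splitlines()[1:]:
        keyword = line.rsplit(",", 1)[0]
        groups.setdefault(keyword.split()[0], []).append(keyword)
    return json.dumps(groups)


rows = [("protein aggregation", 1200),
        ("amyloid fibrils", 400),
        ("protein solubility", 300)]

master = cluster_keywords(rows, fake_llm, chunk_size=2)
print(master)
```

Because each chunk is clustered independently, a real model may name the same category differently across chunks; the human-in-the-loop validation step is where such near-duplicate categories would be reconciled.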

Protocol C: SERP-Based Clustering for Competitive Analysis

This protocol uses the SERP-based clustering method to align content strategy directly with search engine logic.

- Objective: To group keywords based on an analysis of which pages currently rank for them in search results.

- Research Reagent Solutions:

- Methodology:

- Keyword Input: Upload a cleaned list of target keywords to the chosen tool.

- SERP Analysis Execution: The tool programmatically checks the top-ranking URLs for each keyword in the search index.

- Cluster Generation: The algorithm groups keywords that share a critical mass of common ranking URLs.

- Strategic Application: Use the resulting clusters to identify content gaps, optimize existing pages to target entire clusters, and understand the competitive landscape for each topic group.
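The cluster generation step can be approximated as follows. This sketch assumes the top-ranking URLs have already been collected for each keyword; the URLs are placeholders and the `min_shared` threshold is a hypothetical parameter, not a value taken from any specific tool. Keywords are linked whenever they share enough URLs, and linked keywords are merged with a small union-find structure.

```python
def serp_clusters(serps, min_shared=3):
    """Group keywords whose result sets share at least `min_shared` URLs."""
    url_sets = {keyword: set(urls) for keyword, urls in serps.items()}
    keywords = list(url_sets)
    parent = {keyword: keyword for keyword in keywords}

    def find(keyword):
        # Follow parent links to the cluster root, compressing the path
        while parent[keyword] != keyword:
            parent[keyword] = parent[parent[keyword]]
            keyword = parent[keyword]
        return keyword

    for i, first in enumerate(keywords):
        for second in keywords[i + 1:]:
            if len(url_sets[first] & url_sets[second]) >= min_shared:
                parent[find(first)] = find(second)

    groups = {}
    for keyword in keywords:
        groups.setdefault(find(keyword), []).append(keyword)
    return sorted(groups.values(), key=len, reverse=True)


# Placeholder SERP data: keyword -> top-ranking URLs
serps = {
    "amyloid fibril structure": ["u1", "u2", "u3"],
    "amyloid fibril formation": ["u2", "u3", "u4"],
    "protein aggregation assay": ["u5", "u6", "u7"],
    "thioflavin t assay": ["u6", "u7", "u8"],
    "neurodegeneration review": ["u9", "u10", "u11"],
}

clusters = serp_clusters(serps, min_shared=2)
for group in clusters:
    print(group)
```

Merging through union-find makes clusters transitive: two keywords can end up together through a chain of overlaps even if they share no URL directly. Commercial tools may apply stricter rules, so treat this as one possible interpretation of "critical mass".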

Mandatory Visualizations

Keyword Clustering and Content Mapping Workflow

Topical Authority Site Architecture

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Keyword Research and Clustering

| Tool / Reagent | Function | Typical Application in Research |

|---|---|---|

| Keyword Insights | An end-to-end platform for clustering and content workflow. Processes large datasets (up to 200k keywords) and integrates an AI writer [19]. | Enterprise Use: Ideal for large research institutions or projects requiring a complete solution from data processing to content production. |

| KeyClusters | A specialized tool focusing exclusively on SERP-based clustering with a pay-as-you-go pricing model [19]. | Targeted Analysis: Perfect for focused projects where researchers already have keyword data and need reliable, subscription-free clustering. |

| Answer Socrates | A tool for discovering keywords and generating initial semantic clusters based on search intent [15]. | Initial Discovery: Excellent for the early stages of a project to build a foundational list of terms and understand the topic landscape. |

| Python & LLM API | A custom scripting solution using models like Anthropic's Claude for scalable, contextual clustering [15]. | Custom & Scalable Projects: Best for teams with technical expertise needing to cluster large volumes of keywords with high contextual accuracy. |

| ChartExpo | A visualization tool for creating charts (e.g., Likert scales, bar charts) within platforms like Excel and Google Sheets without coding [30]. | Data Presentation: Used to visualize quantitative data from keyword research, such as search volume distribution or cluster performance metrics. |

From Theory to Lab Bench: A Step-by-Step Methodology for Building Effective Research Clusters

Effective keyword discovery is the cornerstone of successful scientific literature retrieval, directly impacting the quality and efficiency of research. For professionals in drug development and biomedical sciences, mastering specialized search terminologies is not merely advantageous but essential. This protocol details a systematic methodology for building comprehensive keyword clusters by leveraging the synergistic power of Medical Subject Headings (MeSH) from PubMed and complementary keyword extraction from Google Scholar. The process is framed within a broader thesis on creating structured keyword clusters for research topics, enabling researchers to conduct more precise, recall-oriented searches that form the foundation for systematic reviews, grant applications, and drug development projects.

Comparative studies have quantitatively demonstrated that search strategies employing MeSH terms achieve significantly higher recall (75%) and precision (47.7%) compared to basic text-word searching (54% recall and 34.4% precision) [31]. This performance advantage makes MeSH an indispensable component of professional search strategies, particularly for complex research tasks requiring comprehensive coverage.

Key Concepts and Definitions

Medical Subject Headings (MeSH)

MeSH is a controlled and hierarchically-organized vocabulary thesaurus developed and maintained by the National Library of Medicine (NLM) [32]. It serves as the standard vocabulary for indexing articles in MEDLINE/PubMed, the NLM Catalog, and other NLM databases, providing a consistent way to retrieve information despite variations in author terminology [33].

Keyword Clusters

Keyword clusters represent organized groups of search terms centered around core research concepts. These clusters typically include:

- Controlled vocabulary (MeSH terms)

- Synonyms and entry terms from MeSH records

- Text-word variations including acronyms, initialisms, and spelling variants

- Related terms from hierarchical structures

Precision and Recall in Information Retrieval

- Recall: The proportion of all relevant documents in a database that are successfully retrieved by a search [31]. Higher recall reduces the risk of missing pertinent literature.

- Precision: The proportion of retrieved documents that are actually relevant to the search question [31]. Higher precision increases search efficiency by reducing irrelevant results.
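Both definitions reduce to simple set arithmetic. The sketch below computes them for a single search; the document identifiers are placeholders, and the relevant set would in practice come from a curated list of known key papers.

```python
def precision_recall(retrieved, relevant):
    """Return (precision, recall) for one search result set."""
    retrieved, relevant = set(retrieved), set(relevant)
    hits = retrieved & relevant
    precision = len(hits) / len(retrieved) if retrieved else 0.0
    recall = len(hits) / len(relevant) if relevant else 0.0
    return precision, recall


# Placeholder identifiers: 8 retrieved records, 5 known relevant papers
retrieved = ["d1", "d2", "d3", "d4", "d5", "d6", "d7", "d8"]
relevant = ["d1", "d2", "d3", "d4", "d9"]

precision, recall = precision_recall(retrieved, relevant)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Here four of the eight retrieved records are relevant (precision 0.50), and four of the five relevant papers were found (recall 0.80).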

Experimental Protocols

Protocol 1: MeSH Term Discovery via MeSH Database

Purpose: To identify and extract relevant MeSH terms for constructing comprehensive keyword clusters.

Methodology:

- Access the MeSH Database: From the PubMed homepage, navigate to "Explore" or "More Resources" and select the "MeSH database" link [34].

- Initial Concept Search: Enter primary research concepts into the search box. For example, search "nerve block" to identify corresponding MeSH terms [34].

- Analyze MeSH Records: Review the displayed MeSH record which includes:

- Scope Note: Definition and contextual usage

- Entry Terms: Synonyms, variations, and related phrases that map to this MeSH term

- Tree Structures: Hierarchical placement showing broader, narrower, and related concepts [33]

- Extract Vocabulary Components: Systematically collect:

- Primary MeSH heading

- All entry terms (natural language synonyms)

- Relevant narrower terms from the hierarchy

- Applicable subheadings for concept qualification

- Implement Search Strategy: Use the PubMed Search Builder to incorporate selected terms into search queries [33].

Technical Notes: MeSH terms are updated annually to reflect changes in medical terminology, with the 2025 version containing 30,956 main headings [35]. The "explode" feature is applied by default, automatically including all more specific terms in the hierarchy beneath your chosen term [33].

Protocol 2: MeSH Term Extraction via MeSH on Demand

Purpose: To automatically identify MeSH terms from existing text such as abstracts or research questions.

Methodology:

- Access Tool: Navigate to the MeSH on Demand website (https://meshb.nlm.nih.gov/MeSHonDemand) [34].

- Input Text: Copy and paste research text (up to 10,000 characters) including abstracts, specific aims, or key paragraphs [34].

- Process Text: Click "Find MeSH Terms" to initiate automatic term identification using Natural Language Processing and the NLM Medical Text Indexer [34].

- Review Results: Examine both highlighted terms within the text and the alphabetically listed MeSH terms displayed alongside the text [34].

- Incorporate into Clusters: Add identified relevant terms to your growing keyword cluster.

Technical Notes: This method is particularly valuable for researchers new to a field or when dealing with emerging terminology that may not be familiar [34].

Protocol 3: Complementary Keyword Discovery via Google Scholar

Purpose: To identify additional text-word variants, emerging terminology, and discipline-specific language not yet incorporated into controlled vocabularies.

Methodology:

- Execute Phrase Search: Conduct initial searches using core concepts enclosed in quotation marks for exact phrase matching (e.g., "hospital acquired infection") [33] [36].

- Apply Advanced Search Features:

- Title Restriction: Use the intitle: operator or the advanced search field to find terms in article titles (e.g., intitle:penicillin) [36]

- Author Search: Apply the author: operator to locate key researchers in the field [37]

- Publication Restriction: Use the source: operator or the publication search field to identify terminology used in specific journals [36]

- Analyze Results:

- Review titles and snippets for alternative terminology

- Identify relevant "Related articles" and "Cited by" references for additional vocabulary [37]

- Examine accessible full-text articles for additional context and terminology

- Extract Text-Word Variants: Systematically collect identified synonyms, acronyms, and related terminology.

Technical Notes: Google Scholar's coverage includes preprints, conference proceedings, and other gray literature that may contain emerging terminology not yet represented in MeSH [37].

Protocol 4: Cluster Integration and Search Strategy Formulation

Purpose: To synthesize discovered terms into organized keyword clusters and construct comprehensive search strategies.

Methodology:

- Organize by Concept: Structure identified terms into conceptual groups corresponding to research question components (e.g., population, intervention, outcome).

- Apply Boolean Logic:

- Combine synonyms within concepts using OR

- Link different concepts using AND

- Exclude irrelevant concepts cautiously using NOT [33]

- Incorporate Both Vocabulary Types:

- Include appropriate MeSH terms with the [mesh] tag

- Add text-words with appropriate field tags such as [tiab] for title/abstract [33]

- Apply Search Enhancements:

- Test and Refine: Execute preliminary searches and review results for relevance, adjusting clusters as needed.
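The integration steps above can be sketched as a small query builder that joins synonyms with OR inside each concept and links concepts with AND. The concept contents are illustrative and should be verified in the MeSH Database before use; the function and dictionary keys are hypothetical names chosen for this example.

```python
def build_query(concepts):
    """Build a PubMed-style Boolean query from keyword clusters.

    Each concept is a dict with optional "mesh" and "text" term lists.
    """
    blocks = []
    for concept in concepts:
        terms = [f'"{term}"[mesh]' for term in concept.get("mesh", [])]
        terms += [f'"{term}"[tiab]' for term in concept.get("text", [])]
        if terms:
            # Synonyms within one concept are alternatives
            blocks.append("(" + " OR ".join(terms) + ")")
    # Different concepts must all be present
    return " AND ".join(blocks)


# Illustrative clusters for an intervention concept and an outcome concept
concepts = [
    {"mesh": ["Nerve Block"],
     "text": ["nerve block", "regional anesthesia"]},
    {"mesh": ["Pain, Postoperative"],
     "text": ["postoperative pain"]},
]

query = build_query(concepts)
print(query)
```

Keeping clusters as data rather than as a hand-typed query string makes the test-and-refine step easier: a term can be added or removed from one concept without rewriting the rest of the strategy.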

Table 1: Quantitative Comparison of Search Method Performance

| Search Method | Recall | Precision | Best Use Cases |

|---|---|---|---|

| MeSH Terms Only | 75% | 47.7% | Comprehensive systematic reviews, drug development background research |

| Text-Words Only | 54% | 34.4% | Emerging topics, recent publications not yet indexed, gene names |

| Combined Approach | Highest | Optimal balance | All professional research contexts requiring both completeness and relevance |

Workflow Visualization

Diagram 1: Keyword discovery workflow integrating MeSH and Google Scholar approaches.

Research Reagent Solutions

Table 2: Essential Digital Tools for Keyword Discovery and Literature Search

| Tool Name | Function | Access Method |

|---|---|---|

| MeSH Database | Identify controlled vocabulary terms, entry terms, and hierarchical relationships | Via PubMed interface under "More Resources" > "MeSH Database" [32] [34] |

| MeSH on Demand | Automatically extract MeSH terms from provided text using NLP | Direct access at https://meshb.nlm.nih.gov/MeSHonDemand [34] |

| PubMed Advanced Search | Construct complex queries combining MeSH and text-words with Boolean operators | PubMed "Advanced" link; uses history and search builder features [33] |

| Google Scholar Advanced Search | Identify emerging terminology and discipline-specific language not in controlled vocabularies | Menu icon > Advanced Search or direct use of operators [36] [38] |

| NCBI Accounts | Save search strategies and create alerts for ongoing keyword discovery | Free registration through NCBI for search persistence [33] |

Expected Outcomes and Validation

Researchers implementing these protocols can expect to develop comprehensive keyword clusters that significantly enhance literature search effectiveness. Validation should include:

- Recall Assessment: Comparison of results against known key papers in the field to ensure comprehensive coverage.

- Precision Evaluation: Monitoring the percentage of relevant articles in initial search results.

- Cluster Completeness: Regular review and expansion of keyword clusters as new terminology emerges.
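The recall and precision checks above reduce to simple set arithmetic. The sketch below assumes you have exported the record identifiers returned by a search, hold a hand-curated list of known key papers, and have manually screened a sample of results; the function names and the identifiers shown are hypothetical placeholders, not real records.

```python
def recall_against_known(retrieved_ids, known_key_ids):
    """Share of known key papers that the search actually retrieved."""
    known = set(known_key_ids)
    if not known:
        raise ValueError("Provide at least one known key paper.")
    return len(known & set(retrieved_ids)) / len(known)


def precision_of_sample(screened):
    """Share of manually screened results judged relevant.

    `screened` maps a record ID to True (relevant) or False (not relevant).
    """
    if not screened:
        raise ValueError("Screen at least one result.")
    return sum(screened.values()) / len(screened)


# Placeholder identifiers for illustration only.
retrieved = ["111", "222", "333", "444"]
known_key_papers = ["111", "333", "555", "666"]
screened_sample = {"111": True, "222": False, "333": True, "444": False}

recall = recall_against_known(retrieved, known_key_papers)
precision = precision_of_sample(screened_sample)
print(f"recall={recall:.2f}, precision={precision:.2f}")
```

Key papers missing from the retrieved set are a useful source of terminology that the current clusters do not yet cover.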

The hierarchical nature of MeSH provides significant advantages for both broadening and narrowing searches [33] [34]. By understanding tree structures, researchers can strategically move up (broader terms) or down (narrower terms) the hierarchy to optimally balance recall and precision for their specific research needs.

Recent updates to MeSH, including the 2025 version, continue to enhance its utility with new terms such as "Scoping Review" and "Plain Language Summaries" reflecting evolving research communication practices [35]. Regular consultation of MeSH update reports ensures researchers maintain current keyword clusters aligned with the most recent vocabulary standards.

Selecting the appropriate clustering method is a critical strategic decision that directly impacts the efficiency and effectiveness of your research topic analysis. The choice between manual, automated, and AI-powered approaches depends on your project's scale, available resources, and required precision. This section provides a systematic comparison and detailed experimental protocols for implementing each method.

Method Comparison and Selection Guidelines

The table below summarizes the core characteristics of the three primary clustering approaches to inform your selection strategy.

Table 1: Keyword Clustering Method Comparison

| Method | Typical Volume | Time Investment | Key Strengths | Primary Limitations |

|---|---|---|---|---|

| Manual Clustering | < 100 keywords [39] | High (hours to days) [39] | Full researcher control, deep understanding of semantic relationships, no tool cost [15] | Not scalable, prone to human error and inconsistency [19] |

| Automated Tool-Based Clustering | 1,000 - 200,000 keywords [19] [4] | Medium (minutes to hours) [40] | High scalability, uses actual SERP data, integrates with content planning [4] [19] | Subscription or usage costs, requires learning and setup [4] |

| AI-Powered & Custom Script Clustering | Flexible, often chunked [15] | Low processing time, high setup time [15] | High customizability, can combine SERP and semantic analysis, leverages advanced LLMs [41] [42] | Highest technical barrier, API costs, prompt engineering required [15] [43] |

Selection Workflow

Use the following decision workflow to identify the optimal method for your research project.

Protocol 1: Manual Clustering for Foundational Analysis

Manual clustering is the foundational protocol, ideal for validating automated results or handling small, highly-specialized keyword sets.

Experimental Protocol

Materials:

- Primary Dataset: List of target keywords and search volumes [15].

- Analysis Software: Spreadsheet software (e.g., Google Sheets, Microsoft Excel).

- Validation Tool: Access to search engine results pages (SERPs) for intent analysis.

Procedure:

- Data Preprocessing: Import your keyword list into a spreadsheet. Standardize formatting and remove duplicates [4].

- Intent Classification: Manually label each keyword's primary search intent [15]. Use the following classifications:

- Informational: Seeking knowledge (e.g., "what is kinase inhibition").

- Commercial: Investigating products/services (e.g., "mass spectrometry vendors").

- Transactional: Ready to purchase or acquire (e.g., "buy CRISPR kit").

- Thematic Grouping: Sort keywords into preliminary thematic clusters based on shared core topics (e.g., "protein purification," "cell culture protocols") [15].

- SERP Validation: For keywords within the same thematic cluster, conduct a spot check of the top 10 SERPs. If results are substantially different, reconsider the grouping [4].

- Cluster Finalization: Create a final list of clusters, assigning a descriptive, researcher-friendly name to each (e.g., "ProteinQuantificationMethods") [15].
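Although this protocol is performed in a spreadsheet, the preprocessing and grouping steps can also be sketched in plain Python for readers who prefer a scripted record of their decisions. The helper names and the example labels are assumptions for illustration; the intent and cluster labels are still assigned by hand, as the protocol requires. Note that lower-casing is a simplification that may be inappropriate for case-sensitive terms such as gene symbols.

```python
import re
from collections import defaultdict


def normalize(keyword):
    """Collapse repeated whitespace, trim, and lower-case a keyword."""
    return re.sub(r"\s+", " ", keyword).strip().lower()


def dedupe(keywords):
    """Standardize keywords and drop blanks and duplicates, keeping first-seen order."""
    seen, cleaned = set(), []
    for keyword in keywords:
        norm = normalize(keyword)
        if norm and norm not in seen:
            seen.add(norm)
            cleaned.append(norm)
    return cleaned


def group_by_cluster(labels):
    """Group manually labelled keywords: {keyword: (intent, cluster)} -> {cluster: [...]}."""
    clusters = defaultdict(list)
    for keyword, (intent, cluster) in labels.items():
        clusters[cluster].append((keyword, intent))
    return dict(clusters)


raw = ["What is kinase inhibition", "  what is  kinase inhibition ", "Buy CRISPR kit", ""]
keywords = dedupe(raw)

# Labels assigned manually by the researcher (hypothetical examples).
labels = {
    "what is kinase inhibition": ("Informational", "Kinase Inhibition"),
    "buy crispr kit": ("Transactional", "CRISPR Reagents"),
}
clusters = group_by_cluster(labels)
print(keywords)
print(clusters)
```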

Protocol 2: Automated Tool-Based SERP Clustering

This protocol leverages specialized software for high-throughput, search-engine-aligned clustering, suitable for large-scale research topic mapping.

Experimental Protocol

Materials:

- Clustering Platform: Access to a dedicated keyword clustering tool (e.g., Keyword Insights, KeyClusters, SE Ranking) [19] [40].

- Input Data: Comprehensive keyword list, ideally with search volumes [4].

- Configuration Settings: Defined project parameters (location, language, clustering threshold).

Procedure:

- Tool Selection and Setup: Select a tool based on required volume, budget, and integration needs (see Table 2). Create a new project and configure location/language settings to match your target audience [4].

- Data Upload: Upload your keyword CSV file(s). Map the keyword and search volume columns as required by the platform [4].

- Parameter Configuration: Adjust clustering parameters. A common starting point is a threshold of 40-50% SERP similarity. Higher thresholds produce smaller, more specific clusters [43] [19].

- Execution and Processing: Initiate the clustering operation. Processing time varies from minutes to hours based on keyword volume and tool capacity [40].

- Output Analysis and Export: Review the generated clusters. Analyze metrics like cluster search volume, intent labeling, and current ranking performance. Export the final clustered list for content planning [39] [19].

Table 2: Automated Keyword Clustering Tools for Research

| Tool Name | Clustering Methodology | Key Feature for Researchers | Pricing Model |

|---|---|---|---|

| Keyword Insights [19] [4] | SERP-based | High-volume processing (up to 200k keywords), integrates with AI writer agent for content drafting [19]. | Subscription from ~$49/month [19] |

| KeyClusters [40] | SERP-based | Pay-per-use model, no subscription; ideal for project-based work [40]. | ~$9 per 1,000 keywords [40] |

| Answer Socrates [40] [15] | Question & Semantic Focus | Excels at finding recursive, long-tail question keywords; generous free plan [40]. | Freemium, Paid from ~$9/month [40] |

| SE Ranking [19] | SERP-based | Integrated within a full SEO suite; good for all-in-one workflow management [19]. | Subscription + ~$4 per 1,000 keywords [19] |

Protocol 3: AI-Powered and Custom Script Clustering

This advanced protocol provides maximum flexibility, using large language models (LLMs) and custom scripts for nuanced, context-aware clustering.

Experimental Protocol

Materials:

- AI Platform/API: Access to an LLM platform (e.g., Team-GPT, OpenAI API, Anthropic Claude) [41] [15].

- Computing Environment: Python environment with necessary libraries (e.g., pandas, scikit-learn) for script-based methods [43].

- Input Data: Cleaned keyword list, preferably with metadata.

Procedure:

A) Using an AI Platform (e.g., Team-GPT):

- Prompt Engineering: Use a structured prompt builder to define the task. Specify your domain (e.g., "drug development"), target audience, and desired output format [41].

- Model Selection: Choose an appropriate AI model (e.g., ChatGPT o3, Claude Sonnet) based on the task's complexity [41].

- Execution and Iteration: Execute the prompt. Review the AI-generated clusters and iteratively refine the prompt or output until satisfied [41].

- Output Management: Save effective prompts and clusters as reusable "custom instructions" for future projects [41].
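For large keyword lists, the prompt engineering step is often combined with chunking so that each request stays within a model's input limits. The sketch below only assembles the prompt text; it deliberately makes no API call, since client libraries and model names vary by platform. The function names, the prompt wording, and the chunk size are all assumptions to adapt to your own setup.

```python
def chunk(items, size):
    """Split a list into consecutive chunks of at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    return [items[i:i + size] for i in range(0, len(items), size)]


def build_prompt(keywords, domain, audience, output_format):
    """Assemble a structured clustering prompt for one chunk of keywords."""
    lines = [
        f"You are assisting with keyword clustering for {domain}.",
        f"Target audience: {audience}.",
        "Group the keywords below into thematic clusters by meaning and intent.",
        f"Return the result as {output_format}.",
        "Keywords:",
    ]
    lines += [f"- {keyword}" for keyword in keywords]
    return "\n".join(lines)


# Hypothetical keyword list and settings for illustration.
keywords = ["kinase inhibitor assay", "protein purification", "cell culture protocol",
            "affinity chromatography", "western blot quantification"]

prompts = [
    build_prompt(part, "drug development", "bench scientists",
                 "a table with columns: cluster name, keyword")
    for part in chunk(keywords, size=2)
]
print(len(prompts))
print(prompts[0])
```

Because each chunk is clustered independently, cluster names may differ between chunks; a final pass to merge equivalent clusters is usually needed.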

B) Using a Custom Python Script:

- SERP Data Acquisition: Acquire SERP data for your keyword list using an appropriate API [43].

- Data Preprocessing: Import the SERP data into a Pandas DataFrame. Filter for page 1 results and compress ranking URLs into a single string per keyword [43].

- Similarity Calculation: Implement a similarity function (e.g., serps_similarity) to compare the overlap and order of URLs between all keyword pairs [43].

- Cluster Assignment: Set a similarity threshold (e.g., 0.4). Group keywords that meet or exceed this threshold into the same cluster [43].

- Validation: Manually validate a sample of the script's output to ensure logical grouping before full implementation.
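The similarity and cluster-assignment steps of pathway B can be sketched as follows. This is a simplified stand-in, not the serps_similarity function referenced in [43]: it scores overlap with a Jaccard ratio and ignores URL order, and it merges keywords transitively (if A matches B and B matches C, all three share a cluster), which is one of several possible grouping rules. The function names and example URLs are hypothetical, and the SERP data is assumed to have been collected already.

```python
from itertools import combinations


def serp_overlap(urls_a, urls_b):
    """Jaccard overlap of two page-1 URL lists (order is ignored in this sketch)."""
    a, b = set(urls_a), set(urls_b)
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def cluster_keywords(serps, threshold=0.4):
    """Group keywords whose SERP overlap meets the threshold, merging transitively."""
    parent = {keyword: keyword for keyword in serps}

    def find(keyword):
        # Follow parent links to the cluster representative, shortening the path.
        while parent[keyword] != keyword:
            parent[keyword] = parent[parent[keyword]]
            keyword = parent[keyword]
        return keyword

    for kw_a, kw_b in combinations(serps, 2):
        if serp_overlap(serps[kw_a], serps[kw_b]) >= threshold:
            parent[find(kw_a)] = find(kw_b)

    clusters = {}
    for keyword in serps:
        clusters.setdefault(find(keyword), []).append(keyword)
    return list(clusters.values())


# Hypothetical page-1 results per keyword (placeholder URLs).
serps = {
    "protein purification": ["https://example.org/a", "https://example.org/b",
                             "https://example.org/c", "https://example.org/d"],
    "purify recombinant protein": ["https://example.org/b", "https://example.org/c",
                                   "https://example.org/d", "https://example.org/e"],
    "cell culture protocol": ["https://example.org/x", "https://example.org/y"],
}

clusters = cluster_keywords(serps, threshold=0.4)
print(clusters)
```

Comparing every pair of keywords grows quadratically with list size, so very large lists may need to be processed in batches or with a more efficient indexing approach.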

The following workflow diagram illustrates the two primary AI-powered pathways.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Keyword Clustering Experiments

| Tool / Resource | Primary Function | Example in Protocol |

|---|---|---|

| Spreadsheet Software | Foundational platform for manual data sorting, labeling, and analysis [39] [15]. | Manual Clustering (Protocol 1) |

| SERP Analysis API | Provides real-time search engine results page data for automated, intent-based clustering [43]. | Custom Script Clustering (Protocol 3B) |

| Dedicated Clustering Platform | Integrated software solution automating the entire SERP-overlap clustering workflow [19] [4]. | Automated Tool Clustering (Protocol 2) |

| Large Language Model (LLM) | AI engine for understanding semantic context and generating clusters based on meaning and intent [41] [15]. | AI Platform Clustering (Protocol 3A) |

| Python Data Science Stack | Custom programming environment for building and executing tailored clustering algorithms [43]. | Custom Script Clustering (Protocol 3B) |

This application note provides a systematic evaluation of three prominent keyword clustering platforms—Keyword Insights, KeyClusters, and Semrush—within the context of academic and scientific research. We detail specific methodologies for implementing these tools to map complex research topics, with a focus on creating a structured, authoritative content framework that aligns with modern search engine algorithms. The protocols are designed to enable researchers, scientists, and drug development professionals to efficiently establish topical authority in their respective fields.

Keyword clustering is an advanced search engine optimization (SEO) technique that involves grouping semantically related search terms that can be effectively targeted with a single, comprehensive piece of content [44]. This methodology marks a significant departure from the obsolete "one keyword, one page" approach, instead empowering a single authoritative document to rank for hundreds or thousands of related search queries [44].

For the research community, this approach provides a structured framework for organizing complex scientific information. It enables the creation of a content architecture that mirrors the conceptual relationships within a research domain, thereby:

- Building Topical Authority: By comprehensively covering all facets of a research topic, institutions and individual researchers can signal their expertise to search engines, establishing themselves as authoritative sources [44].

- Enhancing User Experience: Researchers visiting such a resource find a centralized hub of information, addressing their primary query and anticipated subsequent questions, which increases engagement and reduces bounce rates [44].

- Maximizing Resource Efficiency: Consolidating efforts into fewer, more powerful content assets yields a higher return on investment by attracting traffic from a vast array of related search terms [44].

The underlying mechanism that makes keyword clustering effective is SERP Overlap Analysis [44]. This data-driven method operates on the principle that if two different search queries return a significant number of identical pages in Google's top results, then Google interprets the intent behind those queries as being similar. Consequently, they can be targeted with the same content [44]. Advanced clustering tools automate this analysis at scale, transforming a disorganized list of keywords into a coherent content strategy derived directly from search engine behavior.

Platform Comparison & Selection Guide