Inclusive Recruitment in the Digital Age: Effective EDC Strategies for Engaging Vulnerable Populations in Clinical Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on recruiting vulnerable populations into Electronic Data Capture (EDC) studies. It explores the ethical and scientific imperative for diversity, outlines actionable community-centered and digitally enabled methodologies, addresses common challenges such as mistrust and logistical barriers, and presents data-driven frameworks for validating and optimizing recruitment strategies. By synthesizing current research and real-world case studies, this resource aims to equip clinical teams with the tools needed to build more inclusive, generalizable, and successful research cohorts.

Why Inclusion Matters: The Scientific and Ethical Imperative for Recruiting Vulnerable Populations

In clinical research, the term "vulnerable populations" refers to groups of people who can be harmed, manipulated, coerced, or deceived by unscrupulous researchers because of their limited decision-making ability, lack of power, or disadvantaged status [1]. While race and ethnicity are often discussed, vulnerability extends far beyond these factors to include children, prisoners, individuals with impaired decision-making capacity, and those who are economically or educationally disadvantaged [1].

The ethical inclusion of these populations is crucial: although they are at higher risk of harm or injustice in research, they are also consistently underrepresented and underserved in clinical studies [1]. This underrepresentation creates significant scientific and ethical problems, as it limits the generalizability of research findings and perpetuates health disparities. Excluding vulnerable groups is itself biased and unethical, as it prevents these populations from benefiting from scientific progress and fails to produce research that reflects real-world patient diversity [1].

Within Electronic Data Capture (EDC) research, these vulnerabilities present unique challenges and opportunities. EDC systems, which are web-based software platforms used to collect, clean, and manage clinical trial data in real time [2], can potentially reduce some barriers to participation through decentralized trial designs and remote data collection. However, they may also introduce new challenges related to digital literacy, access to technology, and comfort with electronic systems, particularly for economically or educationally disadvantaged groups [3].

Frequently Asked Questions (FAQs)

Q1: What specific factors beyond race and ethnicity make a population vulnerable in clinical research?

Vulnerability in clinical research stems from multiple interconnected factors that limit an individual's ability to provide fully autonomous, informed consent or protect their own interests. These factors include [1]:

- Impaired decision-making capacity: This includes individuals with cognitive disabilities, neurological conditions, or temporary impairments that affect their ability to understand research risks and benefits.

- Limited power or autonomy: Prisoners, institutionalized individuals, or those in hierarchical relationships (such as students or employees) may face overt or subtle coercion.

- Socioeconomic disadvantage: Individuals with low income, limited education, or inadequate access to healthcare may participate due to economic desperation rather than genuine interest.

- Situational stressors: Pregnant individuals during the perinatal period, people experiencing acute medical crises, or those undergoing significant life changes face additional stressors that can impact decision-making [4].

Q2: How can EDC systems create or exacerbate vulnerabilities in research participation?

EDC systems, while efficient, can introduce specific barriers for vulnerable populations:

- Digital literacy requirements: Elderly, less educated, or technologically inexperienced participants may struggle with EDC interfaces, leading to exclusion or poor engagement [3].

- Access to technology: Economically disadvantaged participants may lack reliable internet access, smartphones, or computers needed for EDC-based trials [3].

- Language and cultural barriers: EDC systems without multilingual support or culturally appropriate interfaces can exclude non-native speakers [2].

- Privacy concerns: Vulnerable groups may have heightened concerns about data security and confidentiality in electronic systems [4].

Q3: What strategies can protect vulnerable populations while promoting their ethical inclusion in EDC research?

Ethical inclusion requires targeted protective measures:

- Enhanced consent processes: Implement multi-stage consent verification, use plain language, and assess comprehension, especially for those with impaired decision-making capacity [1].

- Cultural and linguistic adaptation: Ensure EDC systems support multiple languages and culturally appropriate content [2] [3].

- Technology access support: Provide devices, internet access, or technical assistance to economically disadvantaged participants [3].

- Community engagement: Partner with community organizations representing vulnerable groups to co-design recruitment strategies and materials [4].

- Ongoing monitoring: Implement additional oversight mechanisms for studies involving vulnerable populations [1].

Q4: How can researchers differentiate between fair compensation and undue influence when recruiting vulnerable participants?

This distinction is crucial for ethical recruitment:

- Fair compensation appropriately reimburses for time, travel, and incidental expenses without becoming a primary motivation for participation.

- Undue influence occurs when compensation is so substantial that it persuades participants to take risks they would otherwise refuse.

- Context-specific assessment: Consider the economic circumstances of the population—what constitutes fair compensation may vary, but should never exploit vulnerability [1].

Troubleshooting Guides: Addressing Common Recruitment Challenges

Challenge: Low Enrollment of Economically Disadvantaged Populations

Symptoms: Consistent underrepresentation of low-income participants despite broad recruitment efforts; high dropout rates after initial enrollment.

Diagnostic Steps:

- Assess structural barriers: Evaluate transportation costs, childcare needs, and time off work required for participation [5].

- Review compensation structure: Determine if reimbursement adequately covers expenses and time without becoming coercive [1].

- Evaluate technological requirements: Assess whether the EDC system requires reliable internet access, specific devices, or advanced digital literacy [3].

Solutions:

- Implement decentralized trial elements using EDC systems that support remote participation [2] [5].

- Provide technology assistance, including loaner devices or technical support hotlines [3].

- Offer flexible visit schedules and remote monitoring options to reduce transportation and time burdens [2].

- Structure compensation to cover actual expenses and time without creating undue influence [1].

Challenge: Difficulty Recruiting Participants with Limited Health Literacy

Symptoms: Potential participants express confusion about study purposes; consent forms require repeated explanation; high screening failure rates.

Diagnostic Steps:

- Evaluate communication materials: Assess readability of consent forms, surveys, and study information [3].

- Review EDC interface complexity: Determine if the electronic data capture system uses medical jargon or complex navigation [3].

- Assess comprehension verification: Check if processes exist to confirm understanding of study requirements and consent [1].
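One way to make the readability check on consent forms and study information concrete is to estimate a reading grade level. The sketch below computes the Flesch-Kincaid grade level; the syllable counter is a rough heuristic, and the two sample sentences are invented for illustration rather than taken from any study material.

```python
import re

def count_syllables(word: str) -> int:
    """Rough heuristic: count vowel groups and drop a silent trailing 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)

def flesch_kincaid_grade(text: str) -> float:
    """Flesch-Kincaid grade: 0.39*(words/sentences) + 11.8*(syllables/words) - 15.59."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return 0.39 * len(words) / len(sentences) + 11.8 * syllables / len(words) - 15.59

# Invented sample wording, for illustration only.
plain = "You can stop at any time. We will keep your data safe."
dense = ("Participation necessitates comprehensive longitudinal pharmacokinetic "
         "evaluation notwithstanding potential contraindications.")

for label, text in (("plain", plain), ("dense", dense)):
    print(label, round(flesch_kincaid_grade(text), 1))
```

A score like this is only a screening signal. It does not replace comprehension testing with members of the target population.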

Solutions:

- Simplify EDC interfaces with intuitive navigation, visual aids, and plain language [3].

- Implement multi-media consent processes with video explanations and interactive comprehension checks [1].

- Provide ongoing support through research coordinators who can explain concepts and procedures [3].

- Use adaptive questioning in EDC systems that tailors questions based on previous responses to reduce cognitive burden [3].
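Adaptive questioning can be pictured as simple skip logic: a follow-up question is shown only when an earlier answer makes it relevant. The sketch below is a minimal illustration and not the API of any EDC product; the `Question` class, the question keys, and the prompts are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional

@dataclass
class Question:
    key: str
    prompt: str
    # Receives the answers given so far; None means "always show".
    show_if: Optional[Callable[[dict], bool]] = None

# Hypothetical questionnaire: pain follow-ups appear only after a "yes".
QUESTIONS = [
    Question("has_pain", "Did you have any pain this week? (yes/no)"),
    Question("pain_level", "How bad was the pain, from 1 to 10?",
             show_if=lambda a: a.get("has_pain") == "yes"),
    Question("pain_sleep", "Did pain keep you from sleeping? (yes/no)",
             show_if=lambda a: a.get("has_pain") == "yes"),
    Question("mood", "How was your mood this week? (good/okay/poor)"),
]

def next_question(answers: dict) -> Optional[Question]:
    """Return the next unanswered question that applies, or None when done."""
    for q in QUESTIONS:
        if q.key in answers:
            continue
        if q.show_if is None or q.show_if(answers):
            return q
    return None

# A participant without pain skips straight to the mood question.
print(next_question({"has_pain": "no"}).key)
```

Because irrelevant items are never displayed, a participant who reports no pain answers two questions instead of four.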

Challenge: Underrepresentation of Geographically Isolated Populations

Symptoms: Enrollment concentrated near major medical centers; participants from rural areas consistently underrepresented; high dropout rates due to travel burden.

Diagnostic Steps:

- Map participant distribution: Compare enrollment patterns with disease prevalence across geographic regions [5].

- Identify access barriers: Determine travel time, transportation options, and local infrastructure limitations [5].

- Evaluate EDC capabilities: Assess whether the electronic data capture system supports fully remote or hybrid trial designs [2].

Solutions:

- Implement decentralized clinical trials (DCTs) using EDC systems that enable remote data collection [5].

- Establish satellite research sites or partner with local healthcare providers in underserved areas [4].

- Utilize mobile technologies and bring-your-own-device (BYOD) approaches to reduce geographic barriers [3].

- Combine local in-person recruitment with national online strategies to broaden geographic reach [4].

Quantitative Data: Recruitment Challenges and Strategies

Table 1: Common Recruitment Challenges for Vulnerable Populations in Clinical Research

| Challenge Category | Specific Barriers | Impact on Recruitment | Potential Solutions |
|---|---|---|---|
| Economic Factors | Transportation costs, lost wages, childcare expenses [5] | 60% of oncology trials enroll <5 participants per site [5] | Decentralized trials, expense reimbursement, flexible scheduling [5] |
| Geographic Access | Distance to research sites, limited local infrastructure [5] | 70% of eligible US patients live >2 hours from research centers [5] | Satellite sites, remote monitoring, hybrid trial designs [5] |
| Educational/Cognitive | Health literacy limitations, cognitive impairments [1] [3] | Complex PRO instruments cause cognitive strain and reduce completion [3] | Simplified interfaces, multimedia consent, comprehension checks [1] [3] |
| Technological Access | Limited digital literacy, lack of reliable internet/devices [3] | Digital divide excludes vulnerable populations from ePRO collection [3] | BYOD options, low-tech alternatives, technology training [3] |
| Trust & Historical Factors | Medical mistrust due to historical exploitation [1] | Underrepresentation persists despite recruitment efforts [1] | Community partnerships, transparent communication [4] |

Table 2: Effective Recruitment Strategies for Vulnerable Populations

| Strategy Type | Specific Approaches | Target Populations | Implementation Considerations |
|---|---|---|---|
| Community-Engaged Recruitment | Building trust with community organizations [4], cultural liaisons [4] | Racial/ethnic minorities, low-income groups [4] | Requires time investment, authentic partnerships beyond transactional relationships [4] |
| Digital Adaptation | Multilingual EDC interfaces [2], low-bandwidth compatibility [2] | Non-native speakers, rural populations | Balance technological efficiency with accessibility needs [3] |
| Protocol Flexibility | Remote data collection, flexible scheduling, hybrid visits [5] | Working adults, geographically isolated | Maintain scientific integrity while reducing participation burden [3] |
| Participant Support | Transportation assistance, technology loans, childcare services [5] | Low-income families, single parents | Budget allocation for support services rather than just recruitment materials [5] |
| Simplified Procedures | Plain language consent, streamlined EDC interfaces, reduced visit frequency [3] | Individuals with limited health literacy, cognitive impairments | Balance scientific rigor with participant comfort and comprehension [3] |

Methodologies for Inclusive Recruitment in EDC Research

Layered Recruitment Methodology for Diverse Sample Enrollment

The "Mamma Mia" study successfully recruited a large, diverse sample of pregnant individuals (n=1,953) through a structured methodology that combined multiple approaches [4]:

Preparation Phase:

- Community Partnership Development: Established relationships with both formal organizations (WIC clinics, community health centers) and informal networks (community health workers, peer advocates) embedded within the lived experiences of pregnant individuals from historically marginalized groups [4].

- Recruitment Material Development: Created culturally and linguistically appropriate recruitment materials, including multiple flyer versions tailored to different communities [4].

- Tracking System Implementation: Used REDCap, a secure web-based data collection system, to track recruitment sources and monitor diversity benchmarks [4].

Active Recruitment Phase:

- Multi-Channel Outreach: Implemented both online (social media, digital newsletters, listserv emails) and in-person (community events, healthcare settings) recruitment strategies [4].

- Continuous Monitoring: Held weekly team meetings to review recruitment progress against diversity goals and adjust strategies as needed [4].

- Community-Aligned Messaging: Ensured all recruitment materials and communications reflected cultural values and concerns of target populations [4].

This methodology resulted in successful recruitment of a diverse national sample, meeting internal demographic goals of at least 50% of participants identifying as a race or ethnicity other than White and at least 25% as low-income (household income <$50,000) [4].
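The weekly review of recruitment progress against diversity goals can be sketched as a small report over tracked enrollment records. The benchmark values below come from the study's stated goals; the record fields (`race_ethnicity`, `household_income`, `source`) and the four sample records are hypothetical and do not reflect the study's actual REDCap schema or data.

```python
from collections import Counter

# Goals stated for the study: >=50% non-White, >=25% low-income (<$50,000).
GOALS = {"non_white": 0.50, "low_income": 0.25}
LOW_INCOME_THRESHOLD = 50_000

def benchmark_report(participants: list) -> dict:
    """Compare current enrollment shares with each diversity goal."""
    n = len(participants)
    if n == 0:
        return {}
    shares = {
        "non_white": sum(p["race_ethnicity"] != "White" for p in participants) / n,
        "low_income": sum(p["household_income"] < LOW_INCOME_THRESHOLD
                          for p in participants) / n,
    }
    return {k: {"share": shares[k], "goal": GOALS[k], "met": shares[k] >= GOALS[k]}
            for k in GOALS}

def enrollment_by_source(participants: list) -> Counter:
    """Count enrolled participants per recruitment source."""
    return Counter(p["source"] for p in participants)

# Hypothetical records, for illustration only.
sample = [
    {"race_ethnicity": "Black", "household_income": 32_000, "source": "WIC clinic"},
    {"race_ethnicity": "White", "household_income": 85_000, "source": "social media"},
    {"race_ethnicity": "Hispanic", "household_income": 61_000, "source": "social media"},
    {"race_ethnicity": "White", "household_income": 45_000, "source": "community event"},
]

print(benchmark_report(sample))
print(enrollment_by_source(sample))
```

Reviewing the per-source counts alongside the benchmark shares shows which channels to expand when a goal is not being met.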

Ethical Engagement Protocol for Vulnerable Populations

Based on successful engagement of vulnerable populations, researchers should implement these methodological safeguards [1]:

Comprehensive Vulnerability Assessment:

- Identify potential physical, psychological, social, and economic vulnerabilities during screening [1].

- Assess decision-making capacity using validated tools when appropriate [1].

- Evaluate potential for coercion or undue influence in the recruitment context [1].

Enhanced Informed Consent Process:

- Utilize plain language documents with appropriate reading levels [1].

- Implement multi-stage consent verification with comprehension assessments [1].

- Include independent advocates for participants with impaired decision-making capacity [1].

Ongoing Monitoring and Support:

- Establish regular check-ins to assess continued willingness to participate [1].

- Provide accessible channels for concerns or questions throughout the study [1].

- Implement additional oversight mechanisms for studies involving vulnerable populations [1].

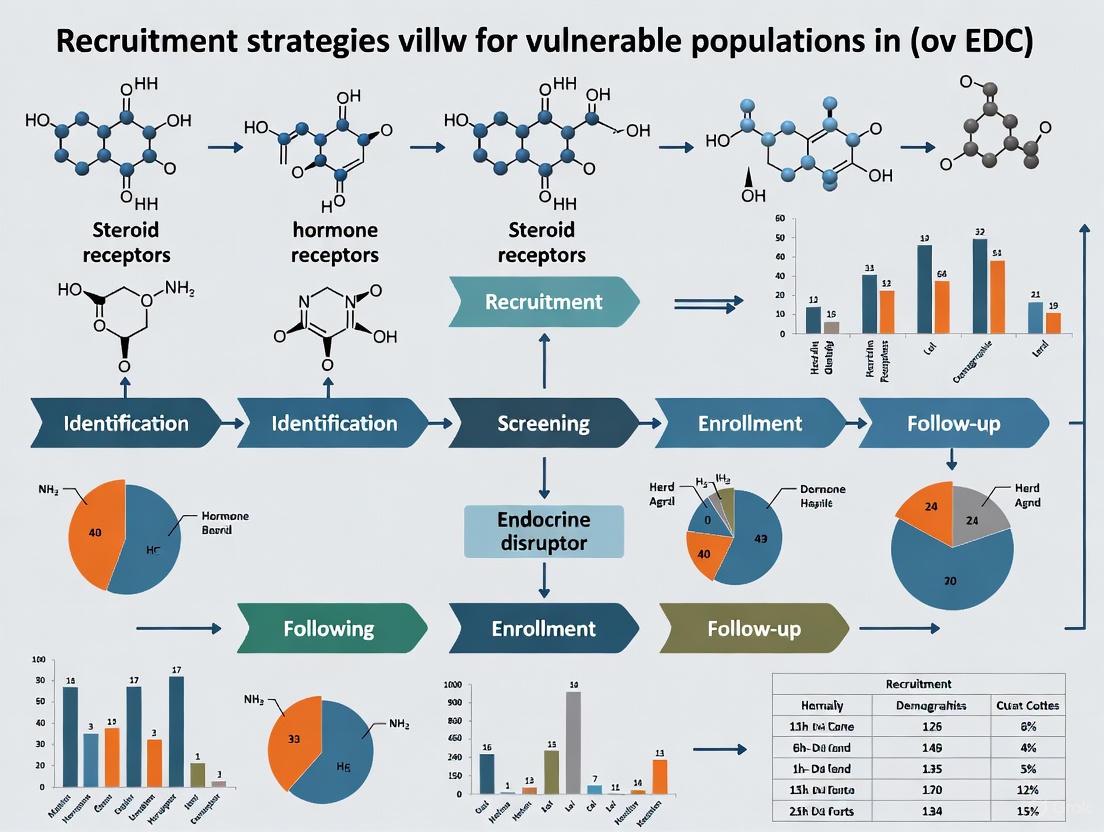

Visualizing Vulnerability Factors in Clinical Research

Vulnerability Factors in Clinical Research

Diagram description: This diagram illustrates the multifaceted nature of vulnerability in clinical research, extending beyond race and ethnicity to include five primary dimensions: impaired decision-making capacity, power imbalances, socioeconomic disadvantage, situational stressors, and health status factors. Each dimension contains specific subfactors that can create vulnerability, demonstrating the complex interplay of elements that researchers must consider when designing inclusive studies.

Research Reagent Solutions: Tools for Inclusive EDC Research

Table 3: Essential Research Tools for Inclusive EDC Studies with Vulnerable Populations

| Tool Category | Specific Solutions | Primary Function | Considerations for Vulnerable Populations |
|---|---|---|---|
| EDC Platforms with Accessibility Features | Castor EDC [2] [3], Medrio EDC [2], OpenClinica [2] | Enable remote data collection with multilingual support, flexible form design | Select platforms with low-bandwidth functionality, mobile compatibility, and intuitive interfaces [2] [3] |
| Electronic Consent (eConsent) Tools | REDCap [4], Custom eConsent modules | Facilitate multimedia consent processes with comprehension checks | Must include accessibility features, multiple language options, and offline capabilities [4] |
| Participant Engagement Systems | Castor eCOA/ePRO [3], TrialKit [2] | Support patient-reported outcomes collection with adaptive questioning | Implement Bring-Your-Own-Device (BYOD) approaches with paper alternatives [3] |
| Community Partnership Frameworks | Structured collaboration protocols [4] | Build trust and ensure cultural relevance of research materials | Require authentic relationship-building beyond transactional arrangements [4] |
| Comprehension Assessment Tools | Quizzes, teach-back methods, decision aids [1] | Verify understanding of research participation and consent | Should be appropriate for various literacy levels and available in multiple formats [1] |
| Data Collection Alternatives | Paper forms, telephone questionnaires, in-person interviews [3] | Ensure participation options for technology-limited individuals | Maintain data quality while offering accessible alternatives to digital platforms [3] |

In the pursuit of rigorous scientific research, recruitment strategy decisions create a critical tension between practical convenience and scientific validity. Researchers frequently utilize homogeneous convenience samples—participant groups that are easily accessible and similar in key demographics—because they are "cheap, efficient, and simple to implement" [6]. Despite these practical advantages, this approach carries significant scientific costs that threaten both the generalizability of findings and the safety of resulting interventions.

This technical support guide examines these threats through the specific lens of Electronic Data Capture (EDC) research involving vulnerable populations. When research aims to develop interventions for broad or diverse populations, homogeneous sampling can produce biased estimates of population effects and obscure critical subpopulation differences [6]. Furthermore, in clinical trials, inadequate representation can lead to incomplete harms reporting, limiting a clinician's ability to accurately balance potential benefits and risks of an intervention [7]. The following sections provide researchers with troubleshooting guidance to identify, address, and prevent these issues in their research programs.

Troubleshooting Guides & FAQs

Troubleshooting Guide: Common Sampling Problems & Solutions

| Problem Symptom | Potential Root Cause | Diagnostic Steps | Recommended Solutions |
|---|---|---|---|
| Limited Generalizability: Research findings fail to translate to real-world populations. | Use of a homogeneous convenience sample that does not reflect target population diversity [6]. | 1. Compare sample demographics with target population demographics. 2. Conduct heterogeneity of treatment effects analysis. 3. Assess external validity through pilot testing. | Either restrict generalizability claims to the clearly defined subgroup that the homogeneous sample represents [6], or implement digital recruitment platforms to increase diversity [8]. |
| Incomplete Harms Data: Adverse events (AEs) are underreported or lack critical context. | Inadequate AE monitoring protocols and unclear definitions, especially in critically ill populations where AEs are difficult to distinguish from natural disease progression [7]. | 1. Audit adherence to CONSORT harms reporting guidelines [7]. 2. Review AE definitions, severity grading, and attribution methods in the protocol. 3. Check for missing denominator data for AE analyses. | Implement and report detailed, protocol-defined AE criteria (severity, attribution) before trial initiation [7]. Ensure consistent application across all sites. |
| Fraudulent Enrollment: Ineligible participants enroll in studies of vulnerable populations. | Efforts to respect participant privacy (e.g., not requiring proof of stigmatized condition) are exploited by individuals motivated by compensation [9]. | 1. Monitor for inconsistent or suspicious participant data. 2. Implement verification steps that balance rigor with respect for privacy. 3. Audit enrollment procedures. | Develop a comprehensive recruitment strategy that combines various tailored elements to verify eligibility while respecting vulnerability and minimizing burden [9] [10]. |
| Poor Recruitment of Underrepresented Groups: Cohort lacks diversity, limiting study validity. | Systemic barriers (distance, clinic-based eligibility), socioeconomic barriers, and lack of trust [8]. | 1. Analyze recruitment source demographics. 2. Solicit feedback from community partners on barriers. 3. Review digital platform accessibility for varying levels of digital literacy. | Engage with community organizations [10] and deploy participant-centric digital health research platforms (DHRPs) designed for broad access and engagement [8]. |
| Inconsistent Data Collection: Data quality suffers across multiple research sites. | Lack of standardized EDC procedures, insufficient training, and poorly configured system validation [11]. | 1. Review EDC system validation documentation. 2. Audit adherence to SOPs for data entry and handling. 3. Check change control records for mid-study modifications. | Establish and maintain written Standard Operating Procedures (SOPs) for system setup, data collection, handling, and change control [11]. Provide ongoing training. |
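The diagnostic step "monitor for inconsistent or suspicious participant data" in the fraudulent-enrollment row can be illustrated with two simple checks. The cited sources do not prescribe specific checks, so both rules below (a repeated contact email, and a reported age that disagrees with the date of birth) are assumptions chosen for illustration, as are the field names and sample records. Flags of this kind are prompts for human review, not grounds for automatic exclusion.

```python
from collections import defaultdict
from datetime import date

def flag_suspicious(records: list, today: date) -> dict:
    """Return {participant_id: [flag, ...]} for records needing human review."""
    flags = defaultdict(list)

    # Check 1 (assumed rule): the same email used by more than one enrollee.
    by_email = defaultdict(list)
    for r in records:
        by_email[r["email"].strip().lower()].append(r["id"])
    for ids in by_email.values():
        if len(ids) > 1:
            for pid in ids:
                flags[pid].append("duplicate_email")

    # Check 2 (assumed rule): reported age differs from date of birth by >1 year.
    for r in records:
        dob = r["date_of_birth"]
        age = today.year - dob.year - ((today.month, today.day) < (dob.month, dob.day))
        if abs(age - r["reported_age"]) > 1:
            flags[r["id"]].append("age_dob_mismatch")

    return dict(flags)

# Hypothetical screening records, for illustration only.
records = [
    {"id": "P1", "email": "a@example.org", "date_of_birth": date(1990, 6, 1), "reported_age": 34},
    {"id": "P2", "email": "A@example.org ", "date_of_birth": date(1985, 1, 1), "reported_age": 25},
    {"id": "P3", "email": "c@example.org", "date_of_birth": date(2000, 3, 9), "reported_age": 24},
]

print(flag_suspicious(records, today=date(2025, 1, 15)))
```

Neither check asks participants for additional proof of a stigmatized condition, which keeps the added burden low.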

Frequently Asked Questions (FAQs)

Q1: What is the core scientific risk of using a homogeneous convenience sample?

The core risk is biased estimation. Estimates of population effects and subpopulation differences derived from such samples are often not reflective of true effects in the target population because the sample poorly represents it. This poor generalizability directly threatens the validity and applicability of your research conclusions [6].

Q2: In clinical trials, how can homogeneous samples lead to safety threats?

Homogeneous samples can mask variable safety profiles across different subpopulations. Furthermore, even in single-group trials, inadequate harms collection and reporting, a common issue, can prevent a true understanding of risks. Studies often fail to adequately describe AE definitions, severity, attribution, and collection procedures, limiting the ability to accurately balance a therapy's benefits and harms [7].

Q3: When working with vulnerable populations, how can I verify eligibility without violating privacy or increasing burden?

This is a complex challenge. Overly burdensome verification can discourage participation, while excessive privacy protection can leave studies "vulnerable to infiltration by ineligible individuals" [9]. The solution is a tailored, multi-faceted strategy developed in collaboration with community partners that respects the unique concerns of the population while incorporating sufficient safeguards for data integrity [9] [10].

Q4: What are the key regulatory and best practice requirements for EDC systems in clinical research?

Key requirements include:

- System Validation: The EDC system must be validated to ensure completeness, accuracy, reliability, and consistent intended performance [11].

- Standard Operating Procedures (SOPs): Maintain written SOPs covering system setup, installation, use, validation, data collection, handling, security, and change control [11].

- Data Traceability: It must always be possible to compare original data observations with processed data [11].

- Training: Personnel must be qualified by education, training, and experience to perform their respective tasks [11].

Q5: How can digital platforms help improve cohort diversity and generalizability?

Digital Health Research Platforms (DHRPs) can minimize traditional barriers to participation like transportation costs, site access, and time commitment. A well-designed, participant-centric platform can successfully recruit and engage individuals from different racial, ethnic, and socioeconomic backgrounds and other groups underrepresented in biomedical research, thereby building more diverse and generalizable cohorts [8].

Experimental Protocols & Methodologies

Protocol for Implementing a Homogeneous Convenience Sample with Clear Generalizability

When a probability sample is not feasible and a homogeneous convenience sample must be used, the following protocol helps clarify and constrain the study's generalizability claims.

Objective: To deliberately limit the sample to a specific sociodemographic subgroup to achieve clearer, albeit narrower, generalizability [6].

Materials: Pre-defined inclusion/exclusion criteria, recruitment materials, EDC system.

Procedure:

- Define the Homogeneous Subgroup: Explicitly define the single sociodemographic factor (e.g., ethnicity, urbanicity, SES) or combination of factors on which the sample will be homogeneous.

- Establish Rigorous Eligibility Criteria: Configure the EDC system to enforce strict screening based on the defined subgroup criteria [11].

- Document the Sampling Frame: Clearly record the method of participant access and recruitment (e.g., single clinic, university population, specific community organization) [6].

- Report Limitations Transparently: In the study manuscript, explicitly state the specific population to which findings can be generalized, based on the homogeneous sample, and acknowledge the unknown generalizability to other populations.
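The first three steps of this procedure can be sketched as a screening function that checks each candidate against an explicit subgroup definition and records why anyone is excluded. This is an illustration under stated assumptions, not the configuration syntax of any EDC system; `SubgroupCriteria`, `screen`, the chosen factors (age range, urbanicity, recruitment site), and the example values are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SubgroupCriteria:
    """Hypothetical definition of the homogeneous subgroup (step 1)."""
    min_age: int
    max_age: int
    urbanicity: str        # e.g. "rural"
    recruitment_site: str  # records the sampling frame (step 3)

def screen(candidate: dict, criteria: SubgroupCriteria) -> tuple:
    """Return (eligible, reasons); reasons lists every failed criterion (step 2)."""
    reasons = []
    if not (criteria.min_age <= candidate["age"] <= criteria.max_age):
        reasons.append("age outside defined range")
    if candidate["urbanicity"] != criteria.urbanicity:
        reasons.append("urbanicity does not match subgroup")
    if candidate["site"] != criteria.recruitment_site:
        reasons.append("recruited outside documented sampling frame")
    return (not reasons, reasons)

# Hypothetical subgroup: rural adults aged 18-45 recruited at a single clinic.
criteria = SubgroupCriteria(min_age=18, max_age=45, urbanicity="rural",
                            recruitment_site="County Clinic A")

print(screen({"age": 30, "urbanicity": "rural", "site": "County Clinic A"}, criteria))
print(screen({"age": 50, "urbanicity": "urban", "site": "County Clinic A"}, criteria))
```

Keeping the exclusion reasons makes it straightforward to report the sampling frame and its limits in the manuscript (step 4).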

Protocol for Comprehensive Harms Monitoring in Clinical Trials

Adherence to this protocol ensures robust collection and reporting of safety data, as recommended by CONSORT guidelines [7].

Objective: To systematically identify, collect, attribute, grade, and report all adverse events (AEs) during a clinical trial.

Materials: Protocol with pre-specified AE definitions, EDC system with AE-specific forms, validated severity grading scales.

Procedure:

- Pre-define AEs: Before trial initiation, define all AEs of interest in the study protocol. Include clear definitions for severity grading (e.g., mild, moderate, severe) and rules for attribution (i.e., the relationship of the AE to the study drug) [7].

- Configure EDC System: Build the EDC module to capture:

- Train Site Personnel: Standardize training for all research staff on AE definitions, grading, attribution rules, and EDC data entry procedures [11].

- Continuous Monitoring: Monitor AE data in real-time using EDC reports. Have a Data and Safety Monitoring Board (DSMB) review cumulative AE data periodically [7].

- Report Results: In the final manuscript, present the absolute risk of each AE type per arm, graded by severity and seriousness. Provide both the number of AEs and the number of patients with AEs, using consistent denominators [7].
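The reporting step distinguishes the number of AEs from the number of patients with AEs and asks for consistent denominators. The sketch below shows that distinction on a handful of invented events. It assumes the denominator is the number of participants in each arm, which a real analysis plan would need to define precisely; the field names and sample data are hypothetical.

```python
from collections import defaultdict

def harms_summary(events: list, arm_sizes: dict) -> list:
    """Summarize AEs per arm, type, and severity.

    events: dicts with patient_id, arm, ae_type, severity.
    arm_sizes: participants per arm, used as the denominator (an assumption).
    """
    n_events = defaultdict(int)
    patients = defaultdict(set)
    for e in events:
        key = (e["arm"], e["ae_type"], e["severity"])
        n_events[key] += 1                   # every occurrence counts as an event
        patients[key].add(e["patient_id"])   # each patient counts once per key

    rows = []
    for key in sorted(n_events):
        arm, ae_type, severity = key
        denominator = arm_sizes[arm]
        rows.append({
            "arm": arm, "ae_type": ae_type, "severity": severity,
            "n_events": n_events[key],
            "n_patients": len(patients[key]),
            "denominator": denominator,
            "absolute_risk": len(patients[key]) / denominator,
        })
    return rows

# Invented events, for illustration only: patient D1 has nausea twice.
events = [
    {"patient_id": "D1", "arm": "drug", "ae_type": "nausea", "severity": "mild"},
    {"patient_id": "D1", "arm": "drug", "ae_type": "nausea", "severity": "mild"},
    {"patient_id": "D2", "arm": "drug", "ae_type": "nausea", "severity": "mild"},
    {"patient_id": "C1", "arm": "placebo", "ae_type": "nausea", "severity": "mild"},
]

for row in harms_summary(events, {"drug": 50, "placebo": 50}):
    print(row)
```

In the drug arm this yields three events in two patients, so the absolute risk is based on two patients out of fifty rather than three events out of fifty.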

Visualizations: Workflows and Logical Diagrams

Sampling Strategy Decision Pathway

The diagram below outlines the logical decision process for selecting a sampling strategy, highlighting the trade-offs between different approaches.

Adverse Event Monitoring Workflow

This workflow details the process for identifying, documenting, and reporting Adverse Events within an EDC system, crucial for ensuring patient safety and data integrity.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key methodological and technological solutions for addressing the challenges associated with sampling and data integrity.

| Item / Solution | Function / Purpose | Key Considerations |
|---|---|---|
| Homogeneous Convenience Sampling | A sampling method that intentionally limits participants to specific sociodemographic subgroups. | Provides clearer, narrower generalizability compared to heterogeneous convenience samples, reducing some forms of bias [6]. |
| Digital Health Research Platform (DHRP) | A participant-centric digital platform for recruitment, enrollment, data collection, and engagement via web/mobile apps. | Effective for increasing access and engagement with diverse, underrepresented populations when designed for varying digital literacy [8]. |
| CONSORT Harms Reporting Checklist | A guideline of 18+ items for standardized reporting of adverse events in clinical trials. | Improves transparency and completeness of safety data; commonly missed items include AE severity grading and attribution definitions [7]. |
| Community Engagement Builder | A tool within a DHRP to facilitate collaboration with community organizations and tailor recruitment. | Critical for building trust and effectively recruiting vulnerable and hard-to-reach populations [8] [10]. |
| Electronic Data Capture (EDC) System | A validated computerized system for collecting, managing, and storing clinical trial data. | Requires SOPs for setup, data handling, system security, and change control to ensure data integrity and regulatory compliance [11]. |
| Standard Operating Procedures (SOPs) | Written, detailed instructions to achieve uniformity in the performance of a specific function. | Essential for consistent EDC system use, data collection, and harms monitoring across all research sites and personnel [11]. |

FAQs on Diversity Action Plans (DAPs) and Regulatory Compliance

What is the current status of the FDA's Diversity Action Plan guidance?

As of 2025, the requirement for submitting Diversity Action Plans remains in effect under the Food and Drug Omnibus Reform Act (FDORA) of 2022 [12]. The FDA released a draft guidance in June 2024, which was temporarily removed in January 2025 and subsequently restored to the FDA website by a court order in February 2025 [13] [14] [12]. The FDA is statutorily required to issue final guidance within nine months of the close of the draft's comment period, with an expected deadline of June 26, 2025 [12]. Sponsors should continue preparing DAPs, as the legal mandate under FDORA is unchanged.

What studies require a Diversity Action Plan?

DAPs are mandated for specific clinical studies [12]:

- Phase 3 or pivotal studies of drugs and biologics.

- Certain medical device trials that are not exempt from Investigational Device Exemption (IDE) regulations.

What are the key components of a Diversity Action Plan?

A DAP must include several core elements [14] [12]:

- Enrollment Goals: Specific targets for underrepresented racial, ethnic, sex, and age groups, disaggregated for the clinically relevant population.

- Rationale for Goals: Justification explaining the significance of these targets for the study's objectives.

- Outreach Strategies: Detailed methods for achieving enrollment and retention, such as community engagement and cultural competency training.

- Program Overview: Context of the disease/condition and the affected populations.

- Progress Updates: Plans for reporting on progress toward enrollment targets.

Troubleshooting Common DAP Implementation Challenges

Challenge: Meeting Enrollment Goals for Underrepresented Populations

Problem: Despite plans, actual enrollment of participants from underrepresented backgrounds remains low.

Solutions:

- Leverage Digital Recruitment Tools: Use Electronic Data Capture (EDC) systems and patient registries to identify and reach diverse populations more effectively [15]. Deploy culturally tailored messaging on platforms like Facebook, which has shown higher click-through and enrollment rates among target groups, such as African American adults [15].

- Implement Multi-Modal Outreach: Combine digital methods (patient portal messages, email) with traditional methods (postal mail) to mitigate the limitations of any single channel. Studies show this approach increases recruitment of Black or African American adults [15].

- Utilize Decentralized Clinical Trial (DCT) Components: Technologies like telemedicine and home health visits, supported by modern EDC systems, reduce geographic and logistical barriers to participation [16] [17].

Challenge: Integrating DAP Strategy with EDC System Workflows

Problem: Diversity goals are not operationally supported by clinical data capture systems.

Solutions:

- Select EDC Systems that Support Flexible and Accessible Trials: Choose platforms with strong support for decentralized trials, mobile data entry, and multilingual interfaces to engage broader populations [2].

- Employ Risk-Based Monitoring: Use EDC capabilities for centralized data review to focus resources on critical data points and issues, improving efficiency and quality without requiring 100% source data verification [18]. This frees up resources that can be redirected to diversity initiatives.

- Ensure Cultural Competence in Digital Tools: Verify that your eConsent modules and patient-facing application components are available in multiple languages and are culturally appropriate [19].

Quantitative Data on Representation Gaps

The following table summarizes documented disparities in clinical trial participation, highlighting why DAPs are necessary [12].

| Population Group | U.S. Population (Approx. %) | Clinical Trial Participation (Approx. %) | Therapeutic Area Examples |
|---|---|---|---|
| Black/African American | 14% | 5-7% | N/A |
| Hispanic/Latino | 18% | <8% | N/A |
| Women | N/A | Varies | Cardiovascular Disease (41.9% participation vs. 49% prevalence) [12] |
| Women | N/A | Varies | Psychiatry (42% participation vs. 60% prevalence) [12] |
| Women | N/A | Varies | Cancer (41% participation vs. 51% prevalence) [12] |
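One way to read the participation and prevalence figures in the table above is as a participation-to-prevalence ratio (PPR): the share of trial participants from a group divided by that group's share of the disease population. The sketch below applies this to the three rows for women; the function name is an assumption, and the inputs are the percentages quoted in the table.

```python
def participation_to_prevalence_ratio(participation_pct, prevalence_pct):
    """Group's share of trial participants divided by its share of the patient population."""
    if prevalence_pct <= 0:
        raise ValueError("prevalence_pct must be positive")
    return participation_pct / prevalence_pct


# (participation %, prevalence %) for women, as quoted in the table above [12].
rows = {
    "Cardiovascular disease": (41.9, 49.0),
    "Psychiatry": (42.0, 60.0),
    "Cancer": (41.0, 51.0),
}

for area, (participation, prevalence) in rows.items():
    ppr = participation_to_prevalence_ratio(participation, prevalence)
    print(f"{area}: PPR = {ppr:.2f}")
```

A ratio below 1 indicates that the group is enrolled at a lower rate than its share of the affected population; all three rows fall below 1, with psychiatry showing the largest gap (0.70).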

Strategic Workflow: Integrating DAPs with EDC-Facilitated Recruitment

The diagram below outlines a strategic workflow for leveraging EDC systems to achieve Diversity Action Plan goals.

Research Reagent Solutions: Essential Tools for DAP Implementation

The following table details key digital tools and their functions in supporting diverse recruitment as part of a DAP strategy.

| Tool / Solution | Primary Function in DAP Support |
|---|---|
| Modern EDC Systems (e.g., Medidata Rave, Veeva Vault) | Centralized, real-time data capture; supports remote monitoring and decentralized trial components to reduce participant burden [16] [2]. |
| eConsent Modules | Facilitate the informed consent process in multiple languages and with multimedia, improving understanding for participants with varying literacy levels or language preferences [2] [19]. |
| Clinical Trial Management Systems (CTMS) | Track recruitment metrics and enrollment demographics in real-time, allowing for quick identification of gaps and adjustment of strategies [19]. |
| Patient Registries & EMR Screening | Enable identification of potential participants from diverse backgrounds directly through electronic medical records and pre-existing research registries [15]. |
| Digital Outreach Platforms | Allow for targeted, culturally tailored recruitment campaigns on social media and other online channels to reach specific underrepresented communities [15]. |

Experimental Protocol: A Method for Testing Culturally Tailored Recruitment Messages

Objective: To optimize digital recruitment materials for engaging specific underrepresented populations.

Background: A one-size-fits-all recruitment message often fails to resonate with diverse communities. This protocol uses an iterative, data-driven approach (A/B testing) to refine outreach [15].

- Hypothesis Generation: Formulate a hypothesis that a recruitment ad with culturally relevant imagery and messaging will yield a higher engagement rate for a target population (e.g., African American adults with a specific condition) compared to a standard, non-tailored ad.

- Material Development:

  - Variable A (Control): Develop a standard recruitment ad using generic imagery and text.

  - Variable B (Test): Develop a tailored ad. This involves:

    - Imagery: Using representative images of the target population.

    - Messaging: Framing the trial's value proposition in a culturally relevant context, potentially emphasizing community benefit and addressing historical mistrust.

    - Trust Elements: Featuring endorsements from trusted community leaders or organizations.

- Deployment: Run both ad sets simultaneously on chosen digital platforms (e.g., Facebook, Instagram), targeting the same demographic and geographic criteria.

- Data Collection & Metrics: Track key performance indicators (KPIs) for a predetermined period, typically:

  - Click-through rate (CTR)

  - Conversion rate (number of individuals who complete a pre-screener or contact form)

- Analysis: Compare the KPIs for Variable A and Variable B. Statistical analysis can determine if the difference in performance is significant.

- Iteration: Implement the winning version as the new standard. Use insights gained to inform the development of further tests for continuous improvement. This method was successfully used in the ADAPTABLE study, which found higher click-per-impression and enrollment yields with tailored ads [15].
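The analysis step of this protocol can be sketched with a two-proportion z-test, a common choice for comparing click-through or conversion rates between two ad variants. This is one possible analysis under the assumption of independent impressions and reasonably large samples, not the method used in the cited study; the function name and the counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion (or click) rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled rate under the null hypothesis that both variants perform equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution via erfc.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Illustrative counts only: clicks out of impressions for each variant.
z, p = two_proportion_z_test(conv_a=40, n_a=2000, conv_b=70, n_b=2000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A positive `z` means Variable B outperformed Variable A. Teams would typically fix the significance threshold and the observation period before launching the test, so that the comparison is not stopped early on a favorable fluctuation.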

Troubleshooting Guide: FAQs on Recruiting and Retaining Vulnerable Populations

FAQ 1: What are the most effective strategies for building trust with vulnerable populations who have historical reasons to mistrust research?

- Issue: Potential participants are wary of research due to past ethical injustices or personal experiences of marginalization.

- Solution: Trust is not built through a single action but through consistent, respectful, and transparent practices.

- Community Engagement: Proactively partner with community organizations and advocacy groups that already have the trust of the population you wish to engage. These groups can act as bridges and legitimize your research efforts [20] [21].

- Trauma-Informed Approach: Adopt a trauma-informed perspective in all interactions. This involves recognizing the potential for past trauma, ensuring physical and emotional safety, and prioritizing participant autonomy and choice throughout the research process [22].

- Transparency: Be clear about the study's goals, potential risks and benefits, and how participants' data will be used and protected. Use plain language in all communications and consent forms, avoiding technical jargon [23] [20].

FAQ 2: How can we reduce participant burden to improve retention in long-term studies?

- Issue: Participants drop out due to the high logistical, financial, or time burden of study participation.

- Solution: Integrate participant-centric design from the very beginning of the trial protocol development [24] [25].

- Decentralized Elements: Incorporate flexible options such as virtual visits, local labs for sample collection, or in-home health services to minimize travel [24].

- Streamlined Technology: Use intuitive, user-friendly digital platforms for data entry (ePRO) and communication. Complex or multiple logins cause frustration and non-compliance [24].

- Flexible Scheduling: Allow participants to schedule visits at times that are convenient for them, including outside of standard business hours where possible [24].

FAQ 3: Our retention rates are low. What proactive strategies can we implement during the study design phase?

- Issue: Retention is treated as an afterthought, leading to reactive and often ineffective measures when participants drop out.

- Solution: Prospective planning for retention is critical. Follow SPIRIT checklist item 18b, which recommends that protocols explicitly describe "plans to promote participant retention and complete follow-up" [25].

- Combined Strategies: Plan for a bundle of retention strategies rather than relying on a single method. The most common combinations involve reminders paired with either monetary incentives or flexible data collection methods [25].

- Motivational Design: Frame the trial experience to support participants' intrinsic psychological needs for autonomy (feeling in control), competence (feeling effective), and relatedness (feeling connected) [26]. This is more effective than relying solely on external controls or payments.

- Budget for Retention: Allocate funds specifically for retention activities, such as conditional monetary incentives, transportation reimbursements, and retention staff time [25].

FAQ 4: How can we ensure our digital tools and platforms are accessible and engaging for all participants?

- Issue: Digital clinical trial platforms are often unintuitive, creating a barrier to participation, especially for those with low tech literacy.

- Solution: Treat the participant's digital experience as a critical retention tool.

- Intuitive User Experience (UX): Design digital interfaces to be as simple and easy to use as a modern consumer app. This includes clear navigation, accessible color schemes, and larger text options [24].

- Multilingual and Culturally Adapted Content: Provide all study materials and software interfaces in the participant's preferred language, using certified translations and being mindful of cultural nuances [24].

- Integrated Support: Build in features like automated reminders for visits and study tasks, as well as easy-to-find contact information for participant support [24] [27].

FAQ 5: What specific ethical safeguards are required when including vulnerable populations?

- Issue: Vulnerable individuals may have limited autonomy, health literacy, or be at risk of coercion.

- Solution: Implement structured safeguards approved by an Institutional Review Board (IRB) or Ethics Committee [23].

- Tailored Informed Consent: The consent process must be comprehensible. Use simplified language, visual aids, and allow ample time for questions. Researchers should verify understanding, not just obtain a signature [23].

- Independent Oversight: The IRB must rigorously evaluate whether involving a vulnerable population is necessary and that all additional protections (e.g., use of a witness during consent, documentation for guardians) are in place [23].

- Non-Coercive Compensation: Ensure that any payment for participation is appropriate and does not become an undue inducement that overrides a person's ability to freely assess the risks [23].

Experimental Protocols for Key Methodologies

Protocol 1: Implementing a Participatory Research Model with Vulnerable Populations

This protocol outlines a method for engaging people with lived experience (PWLE) as co-researchers throughout the research process, fostering equity and trust [20].

Recruitment and Onboarding:

- Recruit PWLE from diverse sources, including existing partnerships, patient advocacy groups, and community organizations. Clearly define their status as paid co-researchers [20].

- Hold initial individual meetings to discuss roles, expectations, authorship, and compensation, ensuring full transparency [20].

Integration into Research Structure:

- Involve PWLE in all research phases: design, methodology, analysis, and dissemination [20].

- Structure the project with a large executive committee that includes PWLE for high-level direction and smaller working groups for specific objectives.

Communication and Meeting Management:

Protocol 2: Integrating Retention-By-Design into Trial Planning

This protocol ensures that participant retention is proactively planned during the trial design stage, as recommended by SPIRIT guidelines [25].

Retention Risk Assessment:

- During protocol development, identify factors that may increase dropout risk (e.g., long duration, high participant burden, vulnerable population).

Strategy Selection and Planning:

- Select a combination of retention strategies based on the risk assessment. Common effective pairs include "reminders with flexible data collection" and "reminders with conditional monetary incentives" [25].

- Detail these strategies explicitly in the trial protocol, including a description and a plan for collecting outcome data from participants who discontinue the intervention [25].

Budgeting and Resource Allocation:

- Secure funding and assign responsibility for the implementation of the chosen retention strategies before the trial begins.

Signaling Pathway: From Historical Mistrust to Ethical Recruitment

The following diagram illustrates the logical workflow and necessary paradigm shift for ethically recruiting vulnerable populations in clinical research.

Research Reagent Solutions: Essential Methodologies and Tools

This table details key methodological approaches and digital tools that function as essential "reagents" for successful and ethical research with vulnerable populations.

| Research 'Reagent' | Function & Purpose | Key Considerations |
|---|---|---|
| Participatory Research Framework [20] | Engages people with lived experience as co-researchers throughout the project to ensure relevance, inclusivity, and empowerment. | Requires dedicated resources, a trained liaison, and flexibility to accommodate participants' capacities. |
| Trauma-Informed Approach [22] | Creates a research environment that prioritizes safety, choice, and empowerment for participants with histories of trauma. | Must be applied at all stages, from recruitment and consent to data collection and follow-up. |
| Digital Participant Portal (e.g., ENGAGE!) [27] | A centralized platform (with eConsent, reminders, virtual visits) that simplifies participation and improves communication. | Critical for reducing burden. Must have an intuitive user experience (UX) and be accessible in multiple languages [24]. |
| Self-Determination Theory (SDT) [26] | A theoretical framework for designing trials that support participant autonomy, competence, and relatedness to boost intrinsic motivation. | Helps move beyond purely financial incentives to create a more engaging and sustainable participant experience. |
| Community-Based Organization Partnerships [10] [21] | Provides a trusted gateway to hard-to-reach populations, lending credibility and cultural competence to the research. | Involve partners early in the design process; this is a collaborative relationship, not just a recruitment channel. |

Technical Support Center: Troubleshooting Guides and FAQs

This technical support center provides targeted guidance for researchers facing the dual challenge of protecting participant privacy while ensuring eligible enrollment in studies using Electronic Data Capture (EDC) systems, particularly when working with vulnerable populations.

Troubleshooting Guide: Common Technical and Methodological Challenges

Issue 1: Low Enrollment from Underrepresented Groups

Problem: Digital recruitment campaigns are failing to reach or enroll sufficient participants from vulnerable or underrepresented populations.

Solution: Implement a multi-faceted digital approach informed by successful case studies.

Methodology: The "All of Us" Research Program reported that 87% of its enrolled participants came from underrepresented groups, a result attributed to combining community collaboration, accessible platform design, and hybrid participation options [8]. Its technical architecture used a highly configurable, low-code approach that supported an open ecosystem for integrating diverse digital health technologies [8].

Technical Implementation:

- Utilize platforms supporting multilingual interfaces and right-to-left text for linguistic diversity [2]

- Implement low-bandwidth compatibility for regions with limited internet infrastructure [2]

- Deploy mobile-first EDC platforms with offline data collection capabilities for areas with connectivity challenges [2]
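As a rough illustration of the multilingual and right-to-left support mentioned above, the sketch below picks an interface language from a participant's ordered preferences and derives the text direction. The function name, the fallback behavior, and the short list of right-to-left language codes are assumptions for illustration; a production platform would rely on its own localization framework.

```python
# Base language codes commonly written right-to-left (illustrative, not exhaustive).
RTL_LANGUAGES = {"ar", "fa", "he", "ur"}


def resolve_ui_settings(preferred, available, default="en"):
    """Pick the first preferred language the platform offers, plus its text direction."""
    chosen = default
    for tag in preferred:
        base = tag.split("-")[0].lower()  # "ar-EG" -> "ar"
        if base in available:
            chosen = base
            break
    return {"language": chosen, "dir": "rtl" if chosen in RTL_LANGUAGES else "ltr"}


# Hypothetical participant preferences and platform languages.
print(resolve_ui_settings(["ar-EG", "en"], {"en", "es", "ar"}))
print(resolve_ui_settings(["vi"], {"en", "es"}))
```

Falling back to a default language keeps the form usable, but a study team may prefer to log unmet language requests so that translation gaps become visible.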

Issue 2: Suspected Fraudulent Enrollments in Digital Recruitment

Problem: Automated bots or ineligible participants are attempting enrollment in fully remote studies.

Solution: Implement layered verification protocols.

Methodology: A vaping cessation RCT recruiting adolescents successfully implemented both automated detection systems and manual verification protocols that identified 960 potentially suspicious entries [28]. They combined this with structured screening for decisional capacity, achieving a 73.7% pass rate [28].

Technical Implementation:

- Deploy automated fraud detection algorithms within your EDC workflow

- Establish manual verification protocols for borderline cases

- Implement role-based access controls to protect verification data [29]
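The layered approach above (automated checks first, manual review for borderline cases) might look like the following sketch. The rules, field names, and the 60-second threshold are hypothetical and are not the criteria used in the cited trial [28]; real screening rules should be defined with the study team and reviewed by the IRB.

```python
from collections import Counter


def screen_entries(entries, min_seconds=60):
    """Label each entry 'pass', 'manual_review', or 'auto_flag'.

    Illustrative rules: an email address used more than once is flagged
    automatically; an implausibly fast screener completion goes to manual review.
    """
    email_counts = Counter(e["email"].strip().lower() for e in entries)
    results = {}
    for e in entries:
        email = e["email"].strip().lower()
        if email_counts[email] > 1:
            results[e["id"]] = "auto_flag"
        elif e["seconds_to_complete"] < min_seconds:
            results[e["id"]] = "manual_review"
        else:
            results[e["id"]] = "pass"
    return results


# Made-up screener submissions.
entries = [
    {"id": 1, "email": "a@example.org", "seconds_to_complete": 240},
    {"id": 2, "email": "A@example.org ", "seconds_to_complete": 200},
    {"id": 3, "email": "b@example.org", "seconds_to_complete": 15},
    {"id": 4, "email": "c@example.org", "seconds_to_complete": 300},
]
print(screen_entries(entries))
```

Routing borderline cases to a person rather than rejecting them outright helps avoid excluding legitimate participants, for example household members who share a device or an email address.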

Issue 3: Participant Drop-off During Electronic Consent Process

Problem: Participants, particularly those with lower digital literacy, abandon the study during the eConsent process.

Solution: Redesign the consent workflow using accessibility principles and plain language.

Methodology: Modern eClinical platforms are incorporating Web Content Accessibility Guidelines (WCAG) conformance as a default requirement, reducing cognitive load and making interfaces usable for people with varying abilities and digital aptitudes [30]. This is particularly crucial for vulnerable populations who may have multiple barriers to participation.

Technical Implementation:

Issue 4: Data Silos Between EHR and EDC Systems

Problem: Site staff must re-enter data from electronic health records (EHR) into EDC systems, increasing burden and error risk.

Solution: Implement interoperability standards for seamless data flow.

Methodology: Industry leaders are adopting "open rails" that use HL7 FHIR to CDISC mappings and the Unified Study Definitions Model (USDM) from the Digital Data Flow initiative to enable data movement from EHR to EDC/eSource with minimal rekeying [30]. This reduces transcription errors and gives site staff time for higher-value activities.

Technical Implementation:

- Select EDC systems with robust API capabilities for EHR integration [2]

- Implement FHIR standards for health data exchange

- Utilize middleware for protocol-to-CDM (Clinical Data Management) transformations
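To make the EHR-to-EDC mapping idea concrete, the sketch below converts a FHIR `Patient` resource (represented as a plain dictionary) into a few demographics fields named after CDISC SDTM DM variables. It is a simplified assumption of what such middleware might do: the function name, the identifier scheme, and the fallback to "U" for unmapped gender values are illustrative choices, and a real mapping would follow the sponsor's data standards and controlled terminology.

```python
# FHIR administrative gender -> SDTM-style SEX code; other values fall back to "U".
SEX_MAP = {"male": "M", "female": "F"}


def fhir_patient_to_dm(patient, study_id, subject_id):
    """Map a FHIR Patient resource (dict) to a minimal demographics record."""
    if patient.get("resourceType") != "Patient":
        raise ValueError("expected a FHIR Patient resource")
    return {
        "STUDYID": study_id,
        "USUBJID": f"{study_id}-{subject_id}",  # hypothetical identifier scheme
        "SEX": SEX_MAP.get(patient.get("gender"), "U"),
        "BRTHDTC": patient.get("birthDate", ""),  # FHIR dates are already ISO 8601
    }


# Made-up example resource.
patient = {"resourceType": "Patient", "id": "example", "gender": "female",
           "birthDate": "1980-04-12"}
print(fhir_patient_to_dm(patient, study_id="STUDY01", subject_id="0001"))
```

Even a small mapping like this removes one manual transcription step; the design choice to reject non-Patient resources early keeps malformed inputs from silently producing incomplete records.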

Issue 5: Privacy Concerns Deterring Participation

Problem: Potential participants express concerns about data privacy and how their health information will be used.

Solution: Implement transparent data practices and privacy-preserving technologies.

Methodology: Leading researchers recommend showing participants, on a single screen, what data is collected, why, for how long, and how to revoke consent [30]. When using AI, privacy-preserving techniques like federated learning allow models to be trained across institutions without centralizing identifiable data [30].

Technical Implementation:

- Implement "privacy by design" in EDC system architecture

- Utilize federated learning approaches for multi-site studies

- Develop clear data governance frameworks with participant-facing explanations

- Implement role-based access controls with "sensitive data" tagging for additional protection [29]
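Role-based access with "sensitive data" tagging can be pictured as a filter applied before a record is shown to a user. The sketch below is a simplified assumption, not the access model of any particular EDC product: the role names, the tagged field names, and the `visible_record` function are hypothetical.

```python
# Fields tagged as sensitive (hypothetical names).
SENSITIVE_FIELDS = {"government_id", "home_address"}

# Whether each role may view sensitive fields (hypothetical roles).
ROLE_CAN_VIEW_SENSITIVE = {
    "verification_officer": True,
    "data_manager": False,
    "community_partner": False,
}


def visible_record(record, role):
    """Return the subset of a record that the given role is allowed to see."""
    if role not in ROLE_CAN_VIEW_SENSITIVE:
        raise PermissionError(f"unknown role: {role}")
    if ROLE_CAN_VIEW_SENSITIVE[role]:
        return dict(record)
    return {k: v for k, v in record.items() if k not in SENSITIVE_FIELDS}


# Made-up participant record.
record = {"participant_id": "P-001", "age_band": "18-24",
          "government_id": "XXXX", "home_address": "redacted example"}
print(visible_record(record, "data_manager"))
```

Denying unknown roles by default, rather than showing them a reduced view, is a deliberate choice here: access should be granted explicitly.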

Frequently Asked Questions (FAQs)

Q: How can we ensure our EDC system is accessible to participants with disabilities? A: Ensure WCAG 2.1 Level AA conformance across all participant-facing surfaces [31]. This includes providing sufficient color contrast (at least 4.5:1 for normal text), not using color as the only means of conveying information, ensuring keyboard navigation, and providing text alternatives for non-text content [32]. Conduct usability testing with people with disabilities.

Q: What specific EDC features support recruitment and retention of vulnerable populations? A: Key features include: multilingual support, mobile-friendly interfaces, offline data collection capability, low-bandwidth functionality, simple navigation, and integration with decentralized trial components like eConsent and ePRO [2]. Platforms like TrialKit offer mobile-first design specifically for resource-limited environments [2].

Q: How can we balance rigorous eligibility verification with privacy protection? A: Implement a risk-based, phased approach to data collection. Collect only essential information initially, with additional verification steps after initial eligibility screening. Use secure, encrypted methods for document transfer and ensure all verification data is stored with appropriate access controls [29].

Q: What are the key regulatory considerations for privacy in EDC systems? A: EDC systems must comply with 21 CFR Part 11 for electronic records and signatures, HIPAA for the privacy of protected health information in the U.S., and GDPR when a study processes personal data of participants in the EU/EEA [2]. Systems should maintain comprehensive audit trails, role-based access controls, and data encryption both in transit and at rest [29].

Q: How can we detect and prevent fraudulent enrollments without compromising legitimate participants? A: Implement layered verification including automated checks for duplicate entries, manual review of suspicious patterns, and confirmation workflows through multiple channels [28]. Balance security with accessibility by providing alternative verification paths for participants with limited technology access.

Quantitative Data on Recruitment and Privacy

Table 1: Digital Recruitment Outcomes in Diverse Populations

| Study/Program | Sample Size | Underrepresented Recruitment | Key Success Factors |
|---|---|---|---|
| All of Us Research Program [8] | 705,719 participants | 87% (613,976) from underrepresented groups | Community collaboration, accessible platform design, hybrid participation options |
| Adolescent Vaping Cessation RCT [28] | 1,681 participants | Reached target age range (13-17) nationwide | Youth advisory board, IRB waiver of parental consent, structured capacity screening |
| Digital Health Research Platform [8] | 705,719 enrolled | 46% racial/ethnic minorities, 8% rural, 31% over 65, 20% low SES | Participant-centric design, multilingual capability, reduced participation barriers |

Table 2: EDC System Features Supporting Privacy and Enrollment

| Feature Category | Specific Capabilities | Privacy and Enrollment Benefits |
|---|---|---|
| Access Controls | Role-based permissions, sensitive data tagging [29] | Protects confidential information while allowing appropriate access |
| Audit Capabilities | Complete change tracking: what, when, who [29] | Ensures data integrity and traceability for regulatory compliance |
| Interoperability | EHR integration, API capabilities, FHIR standards [30] | Reduces data re-entry errors and site burden |
| Decentralized Trial Support | eConsent, ePRO, remote monitoring [2] | Expands geographic reach and reduces participation barriers |
| Security Protocols | Data encryption, two-factor authentication [29] | Protects participant data and builds trust |

Experimental Workflow for Privacy-Preserving Enrollment

The following diagram illustrates a methodological framework for balancing eligibility verification with privacy protection when enrolling vulnerable populations in EDC research:

Research Reagent Solutions: Essential Tools for Digital Research

Table 3: Essential Digital Research Tools for Privacy-Preserving Enrollment

| Tool Category | Specific Solutions | Function in Privacy/Enrollment |
|---|---|---|
| EDC Platforms | Medidata Rave, Oracle Clinical One, Veeva Vault, REDCap [2] | Secure data capture with compliance frameworks for diverse trial designs |
| Mobile Data Collection | TrialKit, Medrio [2] | Enables participation from resource-limited environments with offline capability |
| Accessibility Tools | ANDI, Colour Contrast Analyser, WebAIM [32] | Ensures interfaces are usable by people with diverse abilities |
| Interoperability Standards | HL7 FHIR, CDISC, USDM [30] | Enables data exchange while reducing re-entry errors and site burden |
| Privacy-Preserving Analytics | Federated Learning Systems [30] | Allows collaborative analysis without centralizing identifiable data |
| Fraud Detection | Automated screening algorithms [28] | Identifies suspicious enrollment patterns while protecting legitimate participants |

Building Bridges: Community-Centered and Digitally-Enabled Recruitment Strategies

Community-Based Participatory Research (CBPR) is a collaborative research approach that equitably involves community members, organizational representatives, and researchers in all aspects of the research process [33]. All partners contribute expertise and share decision-making power to combine knowledge with action to improve health outcomes and reduce health disparities [33] [34]. This approach is particularly valuable for Electronic Data Capture (EDC) research focusing on vulnerable populations, as it builds trust, enhances cultural relevance, and improves the validity and sustainability of research outcomes.

CBPR's historical roots lie in the work of Kurt Lewin, who coined the term "action research" in the 1940s, and Orlando Fals Borda, who emphasized avoiding the "monopoly on learning" that results from top-down researcher-community relationships [33] [34]. In the context of EDC research, which utilizes digital systems for collecting, storing, and managing clinical research data [35], CBPR principles ensure that these technological tools are deployed in ways that are accessible, acceptable, and beneficial to vulnerable communities.

Core Principles of CBPR in Digital Research Environments

The integration of CBPR with EDC systems requires adherence to key principles that redefine traditional research relationships [33] [34]:

- Community as a unit of identity: Recognizing that individuals belong to larger, socially constructed communities characterized by shared values, norms, and mutual commitment.

- Co-learning and capacity building: Facilitating collaborative, bidirectional learning between researchers and community partners.

- Balance between research and action: Ensuring projects combine knowledge generation with interventions that yield mutual benefits for all partners.

- Long-term commitment and sustainability: Fostering partnerships that extend beyond a single funding cycle or research project.

The following table contrasts how CBPR approaches differ fundamentally from traditional research methodologies, particularly in the context of EDC systems and recruitment of vulnerable populations:

Table 1: Comparison of Traditional Research and CBPR Approaches in EDC Research

| Research Process Component | Traditional, Non-Patient-Centered Research | Community-Based Participatory Research |
|---|---|---|
| Research Idea/Question | Driven by funding priorities and researchers' academic interests [33]. | Driven by a social justice imperative and community's expressed needs; ideas identified by or with the impacted community [33]. |
| Researcher-Participant Relationship | Minimal relationship based primarily on researcher-participant dynamics; individuals approached without necessarily addressing community priorities [33]. | Relationship developed over time through mutual interest; community members have official status on advisory boards or as co-investigators [33]. |
| Intervention Design | Researchers design interventions based on evidence-based practice and current science [33]. | Communities co-design interventions through participation on advisory boards, reflecting both scientific standards and community knowledge/values [33]. |
| Data Collection & Measures | Researchers choose measures based primarily on psychometric properties from prior studies [33]. | Community provides input on measure selection and/or co-designs locally specific instruments in addition to standard tools [33]. |
| Recruitment & Retention | Relies on clinic-based models that often limit diversity and generalizability of outcomes [8] [36]. | Uses multi-faceted approaches (in-person, digital, print) with community input to overcome systemic and socioeconomic barriers [8] [36]. |
| Dissemination | Research disseminated primarily to academic audiences; advancement of researcher/institutional interests is primary [33]. | Research disseminated in multiple formats across various venues to be accessible to community; community well-being is a priority [33]. |

CBPR-EDC Implementation Framework: Workflows and Processes

Implementing CBPR principles within EDC research requires an iterative, cyclical process that continuously engages community partners. The following workflow diagram illustrates this collaborative process:

Diagram 1: CBPR-EDC Implementation Cycle

The digital architecture supporting CBPR-EDC integration requires specific technical components to facilitate community engagement and data collection. The following diagram outlines this system architecture:

Diagram 2: Digital Platform Architecture for CBPR

Essential Toolkit for CBPR-EDC Research

Successful implementation of CBPR within EDC research requires specific tools and methodologies to ensure equitable participation and technically robust data collection.

Table 2: Essential Research Reagent Solutions for CBPR-EDC Implementation

| Tool Category | Specific Solution/Method | Function in CBPR-EDC Research |
|---|---|---|
| Digital Infrastructure | Participant-Centric Digital Health Research Platform (DHRP) [8] [36] | Provides secure, accessible tools for recruitment, enrollment, multisource data collection, and long-term engagement via web and mobile apps. |
| Community Engagement | Community Engagement Builder (CEB) [8] [36] | Enables customized, community-specific engagement and culturally appropriate communication strategies. |
| Data Collection | Electronic Case Report Forms (eCRFs) [35] | Digital forms for structured patient data collection that can be customized with community input to ensure cultural and contextual relevance. |
| Participant Management | Participant Experience Manager (PXM) [8] [36] | Facilitates the participant journey through the study with tools accessible to different levels of digital access, literacy, and comfort. |
| Data Integration | Research Cloud (RC) & Data Harmonization Tools [8] [36] | Secure cloud environment for storing, harmonizing, and integrating diverse data sources (EHR, genomics, wearables, surveys). |
| Partnership Governance | Community Advisory Boards & Steering Committees [33] | Formal structures for equitable community involvement in oversight, decision-making, and research direction. |
| Capacity Building | Co-Learning & Training Modules [33] [34] | Resources to build research capacity within communities and cultural competency among researchers. |

Experimental Protocols for Recruiting Vulnerable Populations

Protocol: Multi-Modal Recruitment Strategy for Diverse Populations

Objective: To recruit participants from vulnerable and underrepresented populations using a CBPR-informed, digitally-enabled approach.

Methodology:

- Community Partnership Development: Establish formal partnerships with community-based organizations through memoranda of understanding that specify roles, responsibilities, and data governance [33] [34].

- Cultural and Linguistic Adaptation: Co-design recruitment materials with community partners to ensure cultural appropriateness, using plain language and multiple formats (print, digital, video) [8].

- Hybrid Recruitment Implementation:

- Digital Campaigns: Implement targeted social media and online advertising developed with community input [8] [36].

- In-Person Engagement: Utilize community locations and events identified as trustworthy by community partners [8].

- Provider Referrals: Train healthcare providers in partner organizations on referral procedures [8].

EDC System Configuration:

- Implement multi-language support in the EDC interface [8] [36].

- Configure accessibility features for varying levels of digital literacy [8].

- Set up community-specific landing pages within the platform [36].

Protocol: Building Digital Trust and Accessibility in Vulnerable Populations

Objective: To enhance digital research participation among groups with historical distrust of research or limited digital access.

Methodology:

- Digital Access Assessment: Collaboratively assess technology access, literacy, and preferences within the community [8] [36].

- Technical Support Infrastructure:

- Transparency Mechanisms:

EDC System Configuration:

- Implement privacy-preserving technologies with clear data flow visualizations [8] [36].

- Configure role-based access for community partners to appropriate research data [33].

- Develop simplified data visualization tools for community interpretation of findings [36].

Technical Support Center: Troubleshooting Common CBPR-EDC Implementation Challenges

Frequently Asked Questions

Q1: How can we ensure our EDC system is accessible to participants with varying levels of digital literacy?

A: Implement a participant-centric digital health platform that accommodates different digital aptitudes through [8] [36]:

- Simplified user interfaces with progressive disclosure of complex features

- Multiple access pathways (web, mobile, tablet) with synchronized data

- Video tutorials and visual guides co-designed with community partners

- Option for assisted completion with research staff or community health workers

- Accessibility features complying with WCAG 2.2 AA guidelines, including sufficient color contrast (minimum 4.5:1 for standard text) [37] [38]
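The 4.5:1 contrast threshold mentioned above can be checked programmatically during interface design. The sketch below follows the relative luminance and contrast ratio definitions published in WCAG 2.x; the function names are illustrative, and the check covers only color contrast for standard-size text, not the other AA success criteria.

```python
def _linearize(channel_8bit: int) -> float:
    """Convert an 8-bit sRGB channel to its linear value (WCAG 2.x definition)."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """Contrast ratio between two colors, from 1.0 (identical) to 21.0."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa_normal_text(foreground: str, background: str) -> bool:
    """True if the pair meets the 4.5:1 minimum for standard-size text."""
    return contrast_ratio(foreground, background) >= 4.5


if __name__ == "__main__":
    for fg in ("#000000", "#767676", "#777777"):
        ratio = contrast_ratio(fg, "#ffffff")
        print(fg, round(ratio, 2), passes_aa_normal_text(fg, "#ffffff"))
```

On a white background, `#767676` passes and the slightly lighter `#777777` does not, which shows how small color changes can move text across the threshold.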

Q2: What strategies are effective for building trust and sustaining engagement with vulnerable populations through digital platforms?

A: Building trust requires [33] [8] [36]:

- Transparent data governance with community control over data use

- Regular communication through preferred community channels (SMS, email, in-person)

- Cultural and linguistic adaptation of all platform content and interfaces

- Establishing community oversight boards with genuine decision-making power

- Providing ongoing value to participants through regular feedback of findings in accessible formats

- Implementing the "Comparative Insights" microservice to allow participants to view anonymized, aggregate data compared to others with similar demographics [36]
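As a rough illustration of what an aggregate comparison of this kind might compute, the sketch below returns a participant's own value next to the average for their demographic group, and withholds the comparison when the group is too small to report safely. It is not the implementation of the microservice cited above: the field names, the `MIN_GROUP_SIZE` threshold, and both functions are assumptions made for this example.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical threshold: groups smaller than this are not reported,
# so that an "aggregate" cannot reveal an individual's value.
MIN_GROUP_SIZE = 10


def group_averages(records, group_key, value_key, min_size=MIN_GROUP_SIZE):
    """Average value per group, omitting groups with fewer than min_size records."""
    groups = defaultdict(list)
    for rec in records:
        groups[rec[group_key]].append(rec[value_key])
    return {g: round(mean(vals), 1) for g, vals in groups.items() if len(vals) >= min_size}


def comparative_insight(participant, records, group_key, value_key, min_size=MIN_GROUP_SIZE):
    """Return the participant's value and their group's average, or None if suppressed."""
    averages = group_averages(records, group_key, value_key, min_size)
    group = participant[group_key]
    if group not in averages:
        return None  # group too small to report
    return {"your_value": participant[value_key], "group_average": averages[group]}


if __name__ == "__main__":
    cohort = [{"age_band": "65+", "sleep_hours": 7}] * 12 + [{"age_band": "18-39", "sleep_hours": 6}] * 3
    print(comparative_insight({"age_band": "65+", "sleep_hours": 8}, cohort, "age_band", "sleep_hours"))
    print(comparative_insight({"age_band": "18-39", "sleep_hours": 6}, cohort, "age_band", "sleep_hours"))
```

The appropriate suppression threshold is a governance decision and is a natural item to agree with a community oversight board.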

Q3: How can we effectively integrate qualitative community knowledge with quantitative EDC data?

A: Successful integration requires [33]:

- Using EDC systems that support mixed-methods data collection (structured and unstructured data)

- Implementing community participatory analysis sessions where quantitative findings are interpreted through community wisdom

- Designing eCRFs that capture both standardized measures and community-narrative data

- Training community members in basic data interpretation and researchers in community cultural contexts

- Utilizing platform features that allow for annotation and contextualization of quantitative findings

Troubleshooting Guide

Table 3: Common CBPR-EDC Implementation Challenges and Solutions

| Challenge | Potential Causes | Solutions & Troubleshooting Steps |
|---|---|---|
| Low recruitment of target population | Lack of trust in research institutions; digital access barriers; culturally inappropriate materials; inconvenient participation requirements | 1. Co-design recruitment materials with community partners [33]. 2. Implement hybrid participation options (digital and in-person) [8]. 3. Engage trusted community messengers in recruitment [34]. 4. Use community-specific communication channels identified by partners. |
| High participant dropout rates | High participant burden; lack of ongoing engagement; insufficient technical support; limited perceived benefit | 1. Implement reduced-burden EDC designs with staggered data collection [8]. 2. Establish community-based technical support networks [36]. 3. Create regular feedback mechanisms to share findings with participants. 4. Utilize engagement microservices (messaging, dashboards) to maintain connection [36]. |
| Data quality issues | Poorly designed eCRFs; cultural mismatch of measures; inadequate training of data collectors; technical usability problems | 1. Pilot-test eCRFs with community members before full implementation [33]. 2. Adapt standardized measures with community input for cultural relevance [33]. 3. Train community members as data collectors [33]. 4. Conduct usability testing with diverse users before launch [8]. |
| Community partner disengagement | Tokenistic involvement; uncompensated labor; power imbalances in decision-making; capacity limitations | 1. Establish formal memoranda of understanding with compensation for community partner time [33]. 2. Implement shared governance structures with genuine authority [34]. 3. Provide capacity-building resources for community partners. 4. Create rotating leadership roles to distribute responsibility. |

Outcome Assessment and Evidence Base

Research demonstrates that CBPR approaches integrated with participant-centric EDC systems can successfully engage diverse and vulnerable populations. The All of Us Research Program, which utilizes a CBPR-informed digital platform, has recruited 705,719 participants, with 87% (613,976) from populations underrepresented in biomedical research [8] [36]. These include racial and ethnic minorities (46%), rural-dwelling individuals (8%), adults over 65 (31%), and individuals with low socioeconomic status (20%) [8] [36].
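The enrollment figures above can be checked with a few lines of arithmetic. The snippet uses only the numbers quoted in the text; the variable names are illustrative. It also sums the four subgroup percentages. The text does not state their denominator, so the interpretation of that sum is an assumption rather than a reported finding.

```python
total_enrolled = 705_719
underrepresented = 613_976

# Share of all participants who come from underrepresented populations.
share = underrepresented / total_enrolled
print(f"{share:.1%}")  # 87.0%

# Subgroup percentages as quoted in the text.
subgroup_pct = {
    "racial and ethnic minorities": 46,
    "rural-dwelling individuals": 8,
    "adults over 65": 31,
    "low socioeconomic status": 20,
}
subgroup_total = sum(subgroup_pct.values())
print(subgroup_total)  # 105
```

If the four percentages share a denominator, a total above 100 indicates that the categories overlap, which is expected because a participant can belong to more than one underrepresented group.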

Successful implementation relies on the technical architecture described in this guide, particularly the microservices that support appointment management, asynchronous messaging, case management, and comparative insights that provide value back to participants [36]. The flexibility of this digital infrastructure allows adaptation to community-specific needs while maintaining data security and integrity, a critical concern when working with vulnerable populations [8] [35] [36].

Defining the Local Champion and Understanding Their Role

What is a "Local Champion" in the context of community engagement for research?

A Local Champion, often referred to as a "Stakeholder Engagement Champion" in global health research, is a locally-based professional who facilitates meaningful connections between researchers and communities [39] [40]. These individuals possess strong communication skills and deep contextual understanding of the local health system, culture, and stakeholders [39]. They act as bridges, ensuring that research aligns with local priorities and is conducted in a culturally appropriate manner, which is particularly crucial when working with vulnerable populations [39] [41].

How does the role of a Champion differ from traditional community outreach?

Unlike traditional outreach, which is often transactional and event-based, Champions foster genuine partnerships through sustained engagement [42]. Where outreach might involve one-way information dissemination, Champions enable two-way communication, actively bringing community perspectives into the research process and creating opportunities for co-creation of solutions [42] [43]. This distinction is vital for building the trust necessary for successful recruitment and retention of vulnerable populations in research studies [41].

Identification and Selection of Local Champions

What are the key characteristics to look for when identifying potential Local Champions?

The table below summarizes the core competencies and traits of effective Local Champions, drawing from successful implementations in global research settings [39]:

| Characteristic Category | Specific Qualities and Competencies |
|---|---|
| Communication Skills | Proficiency in local language(s), ability to communicate with diverse stakeholders (from community members to policymakers), strong interpersonal skills [39]. |
| Contextual Understanding | Deep knowledge of local socio-economic, cultural, and political context; familiarity with health challenges and affected communities; understanding of health system structures [39]. |
| Personal Attributes | Cultural sensitivity, empathy, collaborative work ethic, willingness to learn, commitment to including marginalized groups [39]. |
| Professional Background | Diverse backgrounds accepted (program managers, researchers, health practitioners); prior experience with stakeholder engagement or health research implementation is valuable [39]. |

What methods are most effective for identifying true local champions?

Research comparing identification methods in healthcare settings suggests several effective approaches [44]:

- Social Network Analysis (Sociometric Approach): Mapping advice-seeking relationships within a community to identify naturally influential members [44].

- Positional Approach: Identifying individuals in formal leadership positions within community structures [44].

- Staff Selection and Peer Nominations: Having existing organization staff or community members nominate respected individuals [44].

- Combined Methods: Using multiple identification techniques together increases the likelihood of finding the most effective champions [44].

A study in low-resource clinic settings found that opinion leaders identified through positional, staff selection, and self-identification methods were significantly positively correlated with those identified through more resource-intensive social network analysis [44].
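A minimal sketch of the sociometric approach, and of comparing its result with a cheaper method, is shown below. It ranks people by how many others name them as a source of advice (in-degree in the advice network) and then measures the agreement between that ranking and a staff-nominated list. The names, the sample data, and the use of Jaccard overlap are assumptions for illustration. The cited study reports correlations rather than this overlap measure.

```python
from collections import Counter


def advice_in_degree(advice_edges):
    """Count how many distinct people name each person as an advice source.

    advice_edges is an iterable of (seeker, advisor) pairs.
    """
    return Counter(advisor for _seeker, advisor in set(advice_edges))


def top_opinion_leaders(advice_edges, k):
    """Return the k most-named advisors, ties broken alphabetically."""
    counts = advice_in_degree(advice_edges)
    ranked = sorted(counts, key=lambda person: (-counts[person], person))
    return ranked[:k]


def jaccard_overlap(list_a, list_b):
    """Share of names that appear in both lists, out of all names in either."""
    a, b = set(list_a), set(list_b)
    return len(a & b) / len(a | b) if a | b else 0.0


if __name__ == "__main__":
    # Hypothetical survey responses: "whom do you go to for health advice?"
    edges = [
        ("ana", "rosa"), ("ben", "rosa"), ("cal", "rosa"),
        ("ana", "dev"), ("ben", "dev"),
        ("cal", "eli"),
    ]
    sociometric = top_opinion_leaders(edges, k=2)
    staff_nominated = ["rosa", "eli"]  # hypothetical staff selection
    print(sociometric)
    print(jaccard_overlap(sociometric, staff_nominated))
```

When the overlap between methods is high in a pilot community, the less resource-intensive method may be sufficient for later sites.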

Building Effective Collaboration Frameworks

How should I structure support for Local Champions to ensure their success?

The RESPIRE program's Stakeholder Engagement Champion Model provides a proven framework for supporting Champions [39] [40]:

Figure 1: Support framework for Local Champions, based on the RESPIRE program model [39] [40].

What specific capacity-building activities are most effective for Champions?

Successful programs incorporate multiple capacity-building approaches [39]:

- Regular peer exchange meetings (monthly virtual meetings for experience sharing)

- Mentorship from experts in community and stakeholder engagement

- Tailored training sessions on fundamental engagement concepts and principles

- Practical skill development for engaging underserved communities and individuals with low literacy levels

- Access to resource repositories with relevant materials and published examples

Trust-Building Strategies with Vulnerable Populations

What specific trust-building strategies have proven effective when working with vulnerable populations through Local Champions?

Research on clinical trials in Ghana identified several evidence-based strategies for building trust with vulnerable populations [41]:

Figure 2: Evidence-based trust building strategies across the research lifecycle for vulnerable populations [41].

How can we address specific trust barriers commonly encountered in vulnerable populations?

Local Champions are particularly effective at addressing specific trust barriers [39] [41]:

- Blood sample misconceptions: In Northern Ghana, some community members believed blood samples were sold or used for ritual purposes. Local Champions with cultural understanding were able to address these concerns through appropriate communication strategies [41].

- Historical medical injustices: Champions can acknowledge and validate communities' lived experiences that shape perceptions of research, demonstrating cultural awareness and humility [42] [41].

- Fear of exploitation: By ensuring community interests are represented throughout the research process, Champions help rebalance power dynamics and prevent tokenistic involvement [39] [43].

Troubleshooting Common Collaboration Challenges

What are the most common challenges in collaborating with Local Champions and how can they be addressed?

| Challenge Category | Specific Problem | Recommended Solutions |
|---|---|---|
| Structural Challenges | Power imbalances between HIC and LMIC researchers [39] | Decentralize decision-making; give Champions autonomy over strategy and resources [39]. |
| Structural Challenges | Limited institutional capacity for engagement [39] | Invest in infrastructure; formalize Champion roles within organizations [39]. |
| Operational Challenges | Increased workload for Champions [39] | Provide adequate compensation; integrate role into job descriptions; secure dedicated funding [39]. |
| Operational Challenges | Managing information overload for Champions [45] | Use centralized digital platforms to streamline communication; prepare for efficient meetings [45]. |
| Relationship Challenges | Tokenistic engagement expectations [43] | Establish clear expectations about meaningful participation from the outset [43]. |
| Relationship Challenges | Failure to "close the feedback loop" [43] | Implement systematic reporting back to stakeholders on how input was used [43]. |

How can we maintain Champion engagement and prevent burnout?

- Provide adequate resources: The RESPIRE program allocated approximately GBP 50,000 per country for engagement activities plus GBP 10,000 for Champion salaries [39].

- Recognize Champions' contributions: Create opportunities for Champions to present their work and disseminate their experiences [39].

- Foster peer support networks: Regular meetings allow Champions to share challenges and solutions, reducing isolation [39].

- Balance autonomy with support: Give Champions freedom to design context-specific strategies while providing central technical support and mentorship [39].

Evaluating Champion Effectiveness and Impact

What metrics should we use to evaluate the effectiveness of Local Champions?

Effective evaluation moves beyond simple enrollment numbers to capture relationship quality and trust building [42]:

- Community partnership metrics: Number and quality of community partnerships formed, diversity of stakeholders engaged [42]

- Process indicators: Attendance at engagement events, demographics reached, materials distributed and understood [42]