Evaluating Reliability in Phase Classification Systems: From Clinical Trials to AI-Driven Medical Imaging

Abstract

This article provides a comprehensive analysis of the reliability, application, and optimization of phase classification systems across biomedical research and clinical practice. It explores foundational principles in established frameworks like clinical trial phases and cancer staging, examines methodological applications in drug development and medical imaging, addresses common challenges in data quality and system selection, and presents comparative evaluations of traditional versus novel, data-driven approaches. Aimed at researchers, scientists, and drug development professionals, this review synthesizes critical insights to guide the selection, implementation, and advancement of robust classification systems essential for research integrity, clinical decision-making, and regulatory success.

Understanding the Bedrock: Core Principles of Biomedical Phase Classification

Defining Phase Classification and Its Critical Role in Biomedical Research

Clinical trials represent the gold standard in medical research, providing a structured pathway for evaluating new treatments, medications, and medical devices from initial laboratory discovery to widespread clinical use [1]. This structured progression through multiple phases is designed to systematically establish the safety and efficacy of investigational compounds while protecting patient welfare. The phase classification system serves as a critical framework that guides decision-making for researchers, regulators, and sponsors throughout the drug development process.

The definition and consistent application of phase classification are fundamental to the reliability of biomedical research outcomes. Without standardized phase definitions, comparing results across studies, synthesizing evidence through systematic reviews, and making informed judgments about a drug's developmental progress would be significantly compromised. The phase classification system enables stakeholders to quickly understand a therapy's stage of development and the quality of evidence supporting it, forming the foundation for evidence-based medicine and regulatory approval decisions.

Standard Phase Classifications and Definitions

The Core Four-Phase Model

Clinical trials are predominantly classified into four main phases (1-4), each with distinct objectives, participant populations, and methodological approaches [1] [2]. These phases build sequentially upon knowledge gained in previous stages, creating a comprehensive development pathway.

Table 1: Core Clinical Trial Phase Classifications

| Phase | Primary Objectives | Participant Population & Size | Typical Duration | Key Outcomes |
|---|---|---|---|---|
| Phase 1 | Assess safety, tolerability, pharmacokinetics, and determine dosage range [1] [2] | 15-100 healthy volunteers or patients [1] [2] | Several months [1] | Maximum tolerated dose, safety profile, pharmacokinetic properties [2] |
| Phase 2 | Evaluate efficacy against specific conditions and further assess safety [1] [2] | 100-300 patients with the target condition [1] [2] | Several months to 2 years [1] | Preliminary efficacy evidence, optimal dosing regimen, common side effects [2] |
| Phase 3 | Confirm efficacy, monitor adverse reactions, compare to standard treatments [1] [3] | 300-3,000 patients across multiple sites [1] [3] | 1-4 years [1] | Substantial evidence of efficacy, safety profile in larger population, risk-benefit assessment [3] |
| Phase 4 | Post-marketing surveillance of long-term effects in general population [1] [2] | Anyone receiving treatment after approval; no set limit [1] [2] | Ongoing/continuous [1] | Identification of rare side effects, long-term risks and benefits, optimal use patterns [2] |

Specialized Phase Classifications

Beyond the core four phases, specialized classifications address specific research needs:

Phase 0 (Exploratory Studies): Also known as human microdosing studies, Phase 0 trials represent an innovative approach to early drug development [2]. These studies administer single, subtherapeutic doses of an investigational drug to a very small number of subjects (typically 10-15) to gather preliminary pharmacokinetic and pharmacodynamic data [2]. Unlike traditional Phase 1 trials, Phase 0 studies are not intended to evaluate therapeutic efficacy or establish a maximum tolerated dose, but rather to determine whether the drug behaves in humans as predicted from preclinical models [2]. This approach enables earlier "go/no-go" decisions in the development pipeline, potentially saving substantial time and resources by quickly eliminating non-viable compounds.

Adaptive and Bayesian Designs: Recent methodological advances have introduced more flexible trial designs that may span traditional phase boundaries [3]. Adaptive designs allow for modifications to trial protocols based on interim data without compromising validity, while Bayesian approaches enable more efficient evaluation of multiple treatments under a single master protocol [3]. These innovative designs represent an evolution in phase classification, particularly notable during the COVID-19 pandemic where platform trials demonstrated enhanced efficiency for rapidly evaluating multiple therapeutic candidates [3].

Quantitative Analysis of Success Rates Across Phases

The progression of drug candidates through clinical trial phases follows a predictable pattern of attrition, with the majority of compounds failing to advance to subsequent phases. Comprehensive analysis of 3,999 compounds developed between 2000-2010 revealed an overall success rate from Phase 1 to marketing approval of approximately 12.8% [4]. This aligns with other studies indicating that only 5-14% of treatments entering clinical trials successfully complete all phases and receive regulatory approval [1]. These statistics highlight the rigorous nature of the development process and the importance of reliable phase classification for accurate success rate benchmarking.

Table 2: Drug Approval Success Rates by Therapeutic Area

| Therapeutic Area (ATC Code) | Success Rate | Key Influencing Factors |
|---|---|---|
| Blood and blood forming organs (B) | Statistically higher success [4] | Well-understood physiology, validated biomarkers |
| Genito-urinary system and sex hormones (G) | Statistically higher success [4] | Disease heterogeneity, clear clinical endpoints |
| Anti-infectives for systemic use (J) | Statistically higher success [4] | External targets (bacteria, viruses), established efficacy models |
| Oncology | Lower than average success [4] | Disease complexity, tumor heterogeneity, toxicity challenges |
| Neurology | Lower than average success [4] | Blood-brain barrier, disease complexity, limited biomarkers |

Success Rates by Drug Characteristics

Drug approval success rates vary substantially based on specific compound characteristics, highlighting how drug features influence developmental trajectories:

Table 3: Success Rates by Drug Modality and Mechanism

| Parameter Category | Specific Characteristic | Approval Success Rate | Notes |
|---|---|---|---|
| Drug Modality | Biologics (excluding mAb) | 31.3% [4] | Higher specificity and potency |
| | Small molecules | Lower than biologics [4] | Broader tissue distribution |
| | Monoclonal antibodies | Intermediate [4] | Target-specific delivery |
| Drug Action | Stimulant | 34.1% [4] | Enhanced predictability |
| | Inhibitor | Intermediate [4] | Varies by target class |
| | Antagonist | Slightly higher than agonist [4] | Better safety profiles |
| Drug Target | Enzyme targets with biologics | High success [4] | Well-characterized pathways |
| | Non-host targets | Higher than host targets [4] | Reduced toxicity concerns |

The variability in success rates across drug characteristics underscores the importance of considering compound features when interpreting phase-specific outcomes. Biologics other than monoclonal antibodies show an approval success rate of 31.3%, drugs with a stimulant mechanism show the highest reported rate at 34.1%, and biologics directed at enzyme targets are also reported to perform well [4]. These differences likely reflect variations in target validation, mechanistic understanding, and safety profiles across categories.

Reliability Assessment of Phase Classification Systems

Methodological Framework for Assessing Reliability

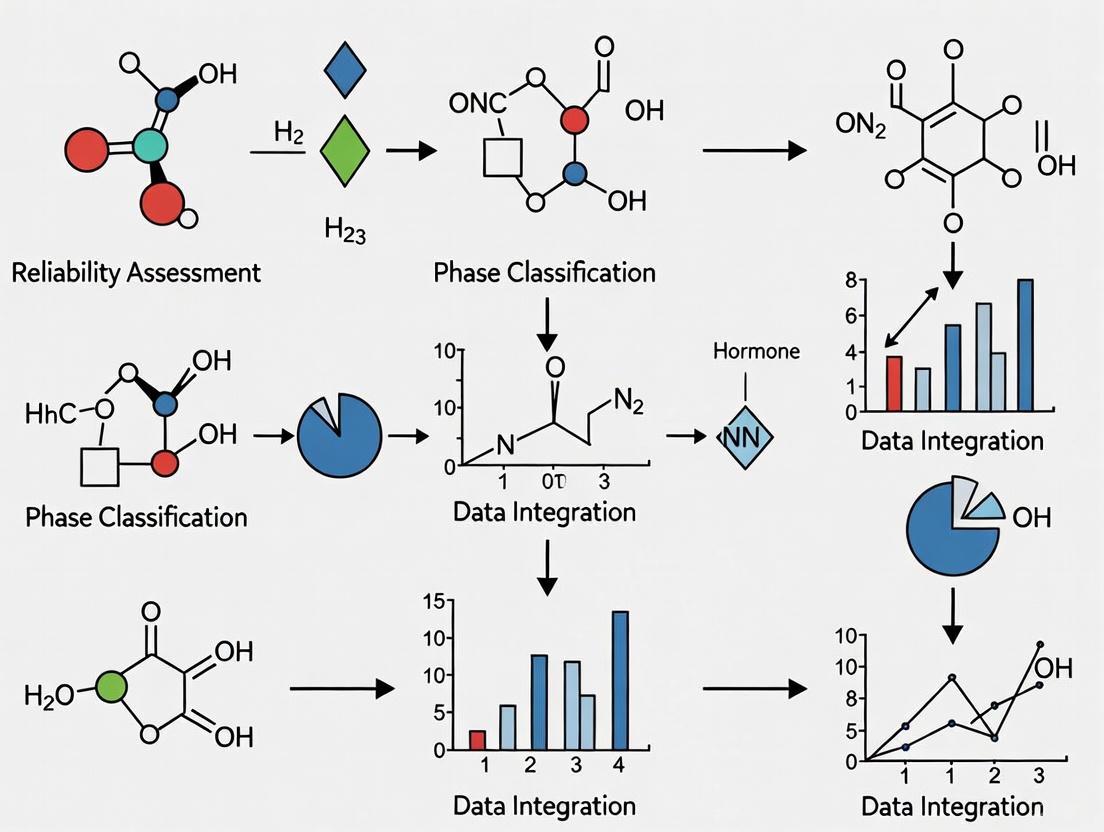

The reliability of phase classification systems can be evaluated using methodologies adapted from systematic review quality assessment and qualitative research. Recent research demonstrates that group discussions among multiple independent raters significantly improve the reliability and validity of qualitative classifications [5]. The following workflow illustrates a robust approach to assessing classification reliability:

Reliability Assessment Workflow

This methodological framework emphasizes the importance of multiple independent assessments followed by structured resolution of discrepancies. Implementation of this approach in systematic reviews has demonstrated that most classification disagreements arise from straightforward errors (such as overlooking information) rather than fundamental interpretive differences [5]. Through structured discussion, approximately 80% of initial discrepancies can be successfully resolved, significantly enhancing classification reliability [5].

Common Threats to Classification Reliability

Several factors can compromise the reliability of phase classification systems in biomedical research:

Ambiguity in Phase Transition Criteria: The boundaries between phases, particularly between Phase 2 and 3, may be blurred in complex adaptive trial designs [3].

Inconsistent Reporting Practices: Primary registries may exhibit variability in how phase information is reported and classified, creating challenges for cross-study comparisons [6].

Interpretive Subjectivity: Without standardized operational definitions, classification of studies, particularly those with non-traditional designs, may vary between assessors [5].

Evidence from methodological research indicates that the most frequent sources of classification discrepancy include simple oversights (missing relevant information in study documentation), interpretive differences (varying application of classification criteria), and ambiguity in source materials [5]. These threats to reliability can be substantially mitigated through the implementation of structured assessment protocols.

Experimental Protocols for Phase Classification Assessment

Inter-Rater Reliability Assessment Protocol

Objective: To quantitatively evaluate the reliability of clinical trial phase classifications across multiple independent raters.

Materials and Reagents:

- Source Dataset: Clinical trial registrations from WHO International Clinical Trials Registry Platform (ICTRP) [6]

- Coding Manual: Operational definitions for each phase classification with decision rules

- Assessment Platform: Secure database for independent rating with audit capability

Methodology:

- Rater Selection: Recruit 5 independent raters with expertise in clinical research methodology [5]

- Training Session: Conduct standardized training on phase classification criteria using exemplars

- Independent Rating: Each rater classifies 100 randomly selected trials from the ICTRP database

- Initial Agreement Calculation: Compute intraclass correlation coefficients (ICC) and percentage agreement [5]

- Structured Discussion: Convene moderated session to discuss discrepancies and resolve through consensus [5]

- Final Classification: Establish reference standard through iterative discussion and evidence review

- Error Analysis: Categorize sources of disagreement (oversight, interpretation, ambiguity) [5]

Validation Measures:

- Calculate pre- and post-discussion agreement metrics

- Assess validity against expert-derived gold standard

- Quantify error rates by category and rater experience level
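The agreement step of this protocol can be sketched in a few lines of Python. This is a minimal illustration, not the procedure used in [5]: the protocol names ICC, whereas the sketch below substitutes Fleiss' kappa (one of the statistics listed alongside ICC for inter-rater reliability) because phase labels are categorical. The function names and the toy ratings are hypothetical.

```python
from collections import Counter
import math


def unanimous_agreement(ratings):
    """Fraction of trials on which every rater assigned the same phase."""
    return sum(len(set(row)) == 1 for row in ratings) / len(ratings)


def fleiss_kappa(ratings):
    """Fleiss' kappa for a list of trials, each a list with one label per rater."""
    n_items = len(ratings)
    n_raters = len(ratings[0])
    if any(len(row) != n_raters for row in ratings):
        raise ValueError("every trial needs the same number of ratings")

    category_totals = Counter()
    observed = 0.0
    for row in ratings:
        counts = Counter(row)
        category_totals.update(counts)
        # Proportion of rater pairs that agree on this trial.
        agreeing = sum(c * c for c in counts.values()) - n_raters
        observed += agreeing / (n_raters * (n_raters - 1))
    observed /= n_items

    # Agreement expected by chance from the overall category proportions.
    expected = sum((c / (n_items * n_raters)) ** 2 for c in category_totals.values())
    if expected == 1.0:
        return math.nan  # undefined when only one category was ever used
    return (observed - expected) / (1 - expected)


# Hypothetical ratings: 4 trials, 3 raters each (the protocol uses 100 trials, 5 raters).
ratings = [
    ["Phase 1", "Phase 1", "Phase 1"],
    ["Phase 2", "Phase 2", "Phase 3"],
    ["Phase 3", "Phase 3", "Phase 3"],
    ["Phase 1", "Phase 2", "Phase 2"],
]
print(unanimous_agreement(ratings))
print(round(fleiss_kappa(ratings), 3))
```

Running the same functions on the pre-discussion and post-discussion ratings would give the paired agreement metrics listed under Validation Measures.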

Systematic Review Quality Assessment Protocol

Objective: To evaluate the impact of phase classification reliability on systematic review conclusions.

Materials:

- AMSTAR 2 Tool: Validated instrument for assessing methodological quality of systematic reviews [7]

- PRISMA 2020 Checklist: Reporting guideline for transparent systematic review documentation [7]

- Sample of Systematic Reviews: 8 systematic reviews from nutrition evidence portfolio [7]

Methodology:

- Review Selection: Identify systematic reviews making clinical recommendations based on phase-classified evidence [7]

- Quality Assessment: Apply AMSTAR 2 tool to evaluate methodological robustness [7]

- Reporting Transparency: Evaluate completeness of phase classification reporting using PRISMA-S checklist [7]

- Reproducibility Assessment: Attempt to reproduce search strategies and study classification within 10% margin of original results [7]

- Bias Assessment: Evaluate potential for interpretation bias in phase-based subgroup conclusions [7]

Outcome Measures:

- Proportion of systematic reviews with critical methodological weaknesses in phase classification

- Percentage reproducibility of original search and classification strategy

- Evidence of interpretation bias in phase-specific conclusions
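The reproducibility criterion in this protocol (results within a 10% margin of the original) reduces to a relative-difference check. The sketch below is one hedged way to express it; the function names and the example counts are hypothetical and are not taken from [7].

```python
def relative_difference(original, reproduced):
    """Absolute difference between two counts, relative to the original count."""
    if original <= 0:
        raise ValueError("original count must be positive")
    return abs(reproduced - original) / original


def is_reproduced(original, reproduced, margin=0.10):
    """True when the reproduced count falls within the margin of the original."""
    return relative_difference(original, reproduced) <= margin


# Hypothetical search yields: (records reported in the review, records retrieved on rerun).
searches = {"review_a": (1200, 1130), "review_b": (1000, 850)}
for name, (original, rerun) in searches.items():
    print(name, f"{relative_difference(original, rerun):.1%}", is_reproduced(original, rerun))
```

The share of reviews for which `is_reproduced` returns `True` corresponds to the "percentage reproducibility" outcome measure.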

Essential Research Reagent Solutions for Phase Classification Research

Table 4: Key Methodological Reagents for Classification Research

| Reagent/Tool | Primary Function | Application Context | Validation Status |
|---|---|---|---|
| AMSTAR 2 Tool [7] | Assess methodological quality of systematic reviews | Evaluation of evidence synthesis reliability | Validated tool with established critical domains |
| PRISMA 2020 Checklist [7] | Ensure transparent reporting of systematic reviews | Standardization of review methodology | Widely adopted reporting guideline |
| PRISMA-S Extension [7] | Assess reporting transparency of search strategies | Evaluation of literature search reproducibility | Specialized extension for search methods |
| ICTRP Database [6] | Source of clinical trial registration data | Large-scale analysis of phase distribution | WHO-maintained global registry |
| Inter-Rater Reliability Metrics [5] | Quantify agreement between multiple classifiers | Reliability assessment of phase assignments | Multiple validated statistics (ICC, kappa) |

These methodological reagents form the foundation of rigorous phase classification research. The AMSTAR 2 tool enables critical appraisal of systematic review methodology, identifying weaknesses in how evidence from different trial phases is synthesized and interpreted [7]. The PRISMA guidelines and their extensions ensure transparent reporting of classification methods, while the ICTRP database provides a comprehensive source of phase classification data across the global research landscape [6]. Finally, standardized reliability metrics allow quantitative assessment of classification consistency across raters and timepoints [5].

The classification of clinical trials into standardized phases provides an indispensable framework for organizing, interpreting, and applying biomedical research evidence. This systematic approach enables stakeholders across the research ecosystem to quickly understand a therapy's stage of development, the quality of evidence supporting it, and the appropriate applications of trial results. The reliability of these classification systems directly impacts the validity of evidence synthesis, resource allocation decisions, and ultimately, the development of safe and effective therapies.

While the core phase definitions remain remarkably consistent across the global research infrastructure, methodological advances in trial design and evolving research paradigms continue to challenge traditional classification boundaries. Maintaining the reliability of these systems requires ongoing methodological vigilance, including structured approaches to classification, transparent reporting practices, and quantitative assessment of inter-rater reliability. As biomedical research continues to evolve toward more efficient and patient-centered approaches, the phase classification system will similarly adapt while maintaining its fundamental role in ensuring the reliable translation of scientific discovery into clinical practice.

The clinical trial process is a meticulously structured sequence designed to answer critical questions about a new medical intervention's safety, efficacy, and optimal use. The established phase classification system, encompassing Phases 0 through 4, provides a standardized framework for researchers, regulators, and sponsors to navigate the complex journey from laboratory concept to approved therapy and beyond. This guide offers a detailed, objective comparison of each clinical trial phase, presenting core objectives, methodologies, and quantitative outcomes to assess the reliability and consistency of this phased system in modern drug development.

Quantitative Comparison of Clinical Trial Phases

The following table summarizes the key design parameters and success rates across the different clinical trial phases, illustrating the progressive nature of therapeutic development.

Table 1: Key Characteristics and Outcomes Across Clinical Trial Phases

| Phase | Primary Objective | Typical Study Participants | Approximate Duration | Key Endpoints | Typical Success Rate (Moving to Next Phase) |
|---|---|---|---|---|---|
| Phase 0 | Exploratory PK/PD analysis; "Go/No-Go" decision [8] | 10-15 healthy volunteers or patients [8] [3] | Several days [8] | Microdose PK, target modulation [8] [3] | Not Applicable (Informational only) |
| Phase I | Establish safety profile and MTD/RP2D [9] [10] [11] | 20-100 healthy volunteers or patients [9] [3] | Several months [9] | Safety, tolerability, DLTs, MTD, PK/PD [9] [10] | ~70% [9] |
| Phase II | Determine preliminary efficacy and further assess safety [9] [12] | Up to several hundred patients with the condition [9] [12] | Several months to 2 years [9] | Efficacy (e.g., ORR, PFS), dose-response, safety [9] [12] | ~33% [9] |
| Phase III | Confirm efficacy, monitor ADRs, compare to standard treatment [9] [11] | 300-3,000 patients with the condition [9] [3] | 1 to 4 years [9] | Efficacy (e.g., PFS, OS), safety vs. comparator [9] [12] | 25-30% [9] |
| Phase IV | Post-marketing safety monitoring and effectiveness in real-world settings [13] [11] | Several thousand patients with the condition [9] [13] | Long-term (many years) [13] | Long-term safety, rare ADRs, effectiveness [13] [14] | Not Applicable (Post-approval) |

Abbreviations: PK: Pharmacokinetics; PD: Pharmacodynamics; MTD: Maximum Tolerated Dose; RP2D: Recommended Phase 2 Dose; DLT: Dose-Limiting Toxicity; ORR: Objective Response Rate; PFS: Progression-Free Survival; OS: Overall Survival; ADR: Adverse Drug Reaction.
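As a worked illustration of how the per-phase rates in the table compound, the sketch below multiplies the Phase I, II, and III transition rates to estimate the chance that a compound entering Phase I reaches approval. It assumes the rates can simply be multiplied (that is, they are treated as conditional probabilities of advancing), which is a simplification; the function name is hypothetical.

```python
from math import prod


def cumulative_success(rates):
    """Probability of clearing every phase, given per-phase transition rates."""
    return prod(rates)


phase_1, phase_2 = 0.70, 0.33          # approximate rates from the table
phase_3_low, phase_3_high = 0.25, 0.30  # range quoted for Phase III

low = cumulative_success([phase_1, phase_2, phase_3_low])
high = cumulative_success([phase_1, phase_2, phase_3_high])
print(f"Estimated Phase I-to-approval success: {low:.1%} to {high:.1%}")
```

Under these assumptions the estimate lands at roughly 6 to 7%, which sits inside the 5-14% range reported for treatments entering clinical trials [1].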

Detailed Experimental Protocols and Methodologies by Phase

Phase 0: Exploratory Microdosing Studies

Objective and Rationale: Phase 0 trials, conducted under an Exploratory IND application, are designed to expedite clinical evaluation by assessing whether an agent modulates its intended target in humans before committing to large-scale trials [8]. They have no therapeutic or diagnostic intent.

Detailed Methodology:

- Dosing Protocol: Participants receive a single, subtherapeutic microdose (less than 1/100th of the dose calculated to yield a pharmacological effect) or a short course of pharmacologically active but subtherapeutic doses [8].

- Key Measurements: Ultrasensitive accelerator mass spectrometry (AMS) or positron emission tomography (PET) is used to track the microdose. For pharmacodynamic studies, serial tumor or surrogate tissue biopsies are analyzed using a validated assay to measure target modulation (e.g., enzyme inhibition) [8].

- Statistical Considerations: The small sample size (typically 10-15 participants) necessitates careful consideration of interpatient variability and the analytical performance of the PD assay. Establishing statistical significance for target modulation is challenging due to this heterogeneity [8].

Phase I: First-in-Human Safety and Dosing

Objective and Rationale: The primary goal is to determine the maximum tolerated dose (MTD) and recommended Phase 2 dose (RP2D), characterize the drug's pharmacokinetic (PK) and pharmacodynamic (PD) profile, and identify the acute side effects [9] [10] [15].

Detailed Methodology:

- Common Trial Designs: The most traditional design is the "3 + 3" cohort expansion design [15].

  - Three participants are enrolled at a starting dose, typically derived from preclinical animal studies.

  - If no Dose-Limiting Toxicities (DLTs) occur, the trial escalates to the next higher dose level.

  - If one DLT is observed, the cohort is expanded by three additional participants (six in total). If no further DLTs occur, escalation continues.

  - If two or more participants in a cohort of 3-6 experience a DLT, escalation stops. The MTD is defined as the highest dose level below the one at which ≥2 of 3-6 participants experience a DLT [15].

- Alternative Designs: To improve efficiency, Accelerated Titration Designs (ATDs) or Continual Reassessment Methods (CRM) may be used. These designs aim to limit the number of participants exposed to subtherapeutic doses and can provide a more precise estimate of the MTD [15].

- Key Measurements: Intensive safety monitoring (vitals, labs, ECGs), PK sampling to determine parameters like AUC and half-life, and, increasingly, PD biomarkers to demonstrate target engagement [10] [15].
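The "3 + 3" escalation rules described above can be simulated with a short sketch. It is illustrative only: the true DLT probability at each dose level is a made-up input, the function name is hypothetical, and the convention of declaring the highest dose the MTD when every level is cleared is an assumption rather than something stated in [15].

```python
import random


def run_three_plus_three(dlt_probs, rng):
    """Simulate one 3 + 3 trial.

    dlt_probs: assumed true DLT probability at each dose level (hypothetical).
    Returns the index of the dose declared the MTD, or None if even the
    lowest dose is too toxic.
    """
    level = 0
    while level < len(dlt_probs):
        # First cohort of three participants at this dose.
        dlts = sum(rng.random() < dlt_probs[level] for _ in range(3))
        if dlts == 1:
            # Exactly one DLT: expand the cohort to six participants.
            dlts += sum(rng.random() < dlt_probs[level] for _ in range(3))
        if dlts >= 2:
            # Two or more DLTs among 3-6 participants: stop; MTD is the dose below.
            return level - 1 if level > 0 else None
        level += 1  # 0/3 or 1/6 DLTs: escalate
    return len(dlt_probs) - 1  # assumption: highest tested dose taken as the MTD


rng = random.Random(42)
probs = [0.05, 0.10, 0.25, 0.45]  # hypothetical dose-toxicity curve
results = [run_three_plus_three(probs, rng) for _ in range(1000)]
for mtd in (None, 0, 1, 2, 3):
    print(mtd, results.count(mtd) / len(results))
```

Repeating the simulation many times, as in the usage lines, shows how often each dose would be selected, which is one way to see why model-based designs such as CRM are said to give a more precise MTD estimate.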

Phase II: Preliminary Efficacy and Safety

Objective and Rationale: Phase II trials are designed to provide initial evidence of the drug's efficacy in a specific patient population and to further evaluate its safety [9] [12].

Detailed Methodology:

- Common Trial Designs:

  - Single-Arm Trials: The most common design, often using Simon's two-stage design to minimize patient exposure to ineffective therapies [12]. In the first stage, a small number of patients are treated. If at least a pre-specified number of responses (e.g., tumor shrinkage) is observed, the trial proceeds to the second stage, where more patients are accrued. If not, the trial is stopped for futility [12].

  - Randomized Phase II Trials: These may compare two or more doses of the same drug or different experimental regimens. While not powered for definitive comparisons, they provide better evidence for selecting a regimen for Phase III [12].

- Key Endpoints:

  - For oncology, the Objective Response Rate (ORR) based on RECIST criteria has been a traditional endpoint [12].

  - Progression-Free Survival (PFS) and other time-to-event endpoints are now commonly used, especially for targeted therapies and immunotherapies where disease stabilization is a key outcome [12].
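The operating characteristics of a two-stage single-arm design can be computed directly from binomial probabilities. The sketch below assumes the common convention that the trial stops after stage 1 if responses are at most `r1` out of `n1`, and declares the drug promising if total responses exceed `r` out of `n`; the design parameters (1/10, 5/29) and response rates (10% uninteresting, 30% target) are illustrative values chosen for this example, not taken from [12].

```python
from math import comb


def binom_pmf(k, n, p):
    """Probability of exactly k responses among n patients."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def binom_cdf(k, n, p):
    """Probability of at most k responses among n patients."""
    return sum(binom_pmf(i, n, p) for i in range(k + 1))


def prob_early_termination(n1, r1, p):
    """Chance the trial stops for futility after stage 1."""
    return binom_cdf(r1, n1, p)


def prob_declare_promising(n1, r1, n, r, p):
    """Chance of more than r total responses, given the trial passed stage 1."""
    n2 = n - n1
    total = 0.0
    for x1 in range(r1 + 1, n1 + 1):
        needed = r + 1 - x1  # further responses required in stage 2
        p_stage2 = 1.0 if needed <= 0 else 1.0 - binom_cdf(needed - 1, n2, p)
        total += binom_pmf(x1, n1, p) * p_stage2
    return total


design = dict(n1=10, r1=1, n=29, r=5)  # illustrative design parameters
print("Early stop if true rate is 10%:", round(prob_early_termination(10, 1, 0.10), 3))
print("False positive rate at 10%:", round(prob_declare_promising(p=0.10, **design), 3))
print("Power at 30%:", round(prob_declare_promising(p=0.30, **design), 3))
```

The early-termination probability under the uninteresting response rate is what limits patient exposure to an ineffective therapy.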

Phase III: Therapeutic Confirmatory Trials

Objective and Rationale: These are large-scale, definitive studies designed to demonstrate and confirm the efficacy of the investigational treatment relative to a standard-of-care control and to collect comprehensive safety data [9] [11].

Detailed Methodology:

- Trial Design: The gold standard is the randomized, controlled, double-blind trial [3] [11].

  - Randomization: Patients are randomly assigned to the investigational arm or the control arm (placebo or standard therapy) to minimize selection bias.

  - Blinding: In double-blind studies, neither the participant nor the investigator knows the treatment assignment, preventing assessment bias [3].

- Key Endpoints: These are often clinically meaningful endpoints such as Overall Survival (OS), PFS, or quality of life measures. The studies are powered to detect a statistically significant difference between the treatment arms [12].
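To make "powered to detect a statistically significant difference" concrete, the sketch below applies the standard normal-approximation formula for comparing two proportions. It assumes a binary endpoint (for example, response versus no response) with equal allocation; time-to-event endpoints such as OS or PFS require event-based calculations that are not shown here. The function name and example rates are hypothetical.

```python
from math import ceil
from statistics import NormalDist


def patients_per_arm(p_control, p_treatment, alpha=0.05, power=0.80):
    """Approximate sample size per arm for a two-sided test of two proportions."""
    effect = p_treatment - p_control
    if effect == 0:
        raise ValueError("the two rates must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = NormalDist().inv_cdf(power)
    variance = p_control * (1 - p_control) + p_treatment * (1 - p_treatment)
    return ceil((z_alpha + z_beta) ** 2 * variance / effect**2)


# Hypothetical scenario: 50% response on standard care, 60% hoped for on the new drug.
print(patients_per_arm(0.50, 0.60))
print(patients_per_arm(0.50, 0.55))
```

Halving the expected treatment difference roughly quadruples the required enrollment, which is consistent with Phase III trials needing hundreds to thousands of participants.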

Phase IV: Post-Marketing Surveillance

Objective and Rationale: To monitor the long-term safety and effectiveness of the drug after it has been approved and is in widespread clinical use [13] [11].

Detailed Methodology:

- Study Types:

  - Observational/Non-Interventional Studies (NIS): Treatment choices follow routine medical practice. Data on safety, tolerability, and effectiveness are collected from thousands of patients in a naturalistic setting, providing real-world evidence [13].

  - Large Simple Trials (LSTs): A hybrid design that randomizes a large number of participants and follows them per routine practice, aiming to combine the internal validity of randomization with the generalizability of real-world care [13].

  - Regulatory-Required Studies: Regulatory agencies like the FDA may mandate specific Phase IV studies (e.g., to investigate a specific safety concern, use in a special population, or a new indication) as a condition of approval [14].

- Key Activities: Continuous spontaneous adverse event monitoring and reporting systems are critical for identifying rare but serious adverse reactions that were undetectable in pre-marketing trials due to their low incidence [13].
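A short calculation shows why rare reactions tend to escape pre-marketing trials. Assuming each patient independently experiences the reaction with the same probability (a simplification), the chance of seeing at least one case among n patients is 1 - (1 - p)^n. The function names and the 1-in-10,000 incidence are hypothetical examples.

```python
from math import ceil, log


def prob_at_least_one(n_patients, incidence):
    """Chance of observing at least one event among n independent patients."""
    return 1.0 - (1.0 - incidence) ** n_patients


def patients_needed(incidence, confidence=0.95):
    """Patients required to observe at least one event with the given confidence."""
    return ceil(log(1.0 - confidence) / log(1.0 - incidence))


incidence = 1 / 10_000  # hypothetical rare adverse reaction
print(f"Phase III scale (3,000 patients): {prob_at_least_one(3_000, incidence):.1%}")
print(f"Patients needed for 95% detection: {patients_needed(incidence):,}")
```

With these illustrative numbers, a 3,000-patient trial has only about a one-in-four chance of observing a single case, while reliable detection needs roughly 30,000 exposed patients, a scale reached only after approval.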

Visualizing the Clinical Trial Development Pathway

The following diagram illustrates the sequential and evaluative nature of the clinical trial process, highlighting key decision points from preclinical research through post-marketing surveillance.

The Scientist's Toolkit: Essential Reagents and Materials

The conduct of clinical trials relies on a standardized set of tools and materials to ensure data quality, patient safety, and regulatory compliance.

Table 2: Essential Materials and Reagents in Clinical Trials

| Item | Primary Function | Application Context |
|---|---|---|
| Investigational Product | The drug, biologic, or device being evaluated. | Administered to participants in all interventional phases (1-3) according to the protocol-defined dose and schedule [10]. |
| Validated Pharmacodynamic (PD) Assay | To quantitatively measure a drug's effect on its molecular target in human tissue. | Critical for Phase 0 trials and increasingly integrated into Phase 1 trials of targeted therapies to demonstrate proof-of-mechanism [8] [15]. |
| RECIST Criteria (Response Evaluation Criteria In Solid Tumors) | Standardized methodology for measuring tumor response in solid cancers. | A key tool for determining efficacy endpoints (e.g., Objective Response Rate) in Phase 2 and 3 oncology trials [12]. |
| Informed Consent Form (ICF) | Document ensuring participants understand the trial's purpose, procedures, risks, and benefits before enrolling. | An ethical and regulatory requirement for all clinical trial phases involving human participants [10] [11]. |
| Electronic Data Capture (EDC) System | Software platform for collecting clinical trial data electronically from investigational sites. | Used from Phase 1 onwards to ensure data accuracy, integrity, and efficient management for analysis and regulatory submission [10]. |
| Serious Adverse Event (SAE) Reporting Forms | Standardized documents for reporting any untoward medical occurrence that results in death, is life-threatening, requires hospitalization, or results in significant disability. | Mandatory for reporting to sponsors, IRBs/ECs, and regulators during all interventional phases (1-4) to ensure continuous safety monitoring [13]. |

The established clinical trial framework of Phases 0 through 4 provides a robust, sequential, and logical pathway for translating scientific discovery into safe and effective therapies. Each phase serves a distinct purpose, from initial exploratory and safety assessments in Phases 0 and I, to preliminary and confirmatory efficacy evaluations in Phases II and III, culminating in long-term safety monitoring in Phase IV. While the system achieves reliability through its rigorous, phased approach to risk mitigation, its effectiveness is contingent on the precise execution of detailed experimental protocols and the use of validated tools and reagents. Understanding the specific objectives, methodologies, and success rates of each phase is fundamental for researchers and drug development professionals to reliably navigate the complex journey of therapeutic development.

For researchers, scientists, and drug development professionals, cancer staging systems provide the essential taxonomic framework that enables systematic investigation of disease progression, therapeutic efficacy, and patient outcomes. These systems establish a common language for describing cancer extent, without which comparative effectiveness research, clinical trial design, and population surveillance would be impossible. The reliability of cancer phase classification systems forms the bedrock of translational cancer research, facilitating the precise communication that allows discoveries to move from laboratory benches to clinical applications and ultimately to global health initiatives.

This comparative analysis examines the anatomical precision, data requirements, and research applications of major staging classifications: the comprehensive TNM system, the surveillance-oriented SEER Summary Stage, and emerging simplified alternatives designed for challenging data environments. Understanding the operational characteristics, validation evidence, and implementation contexts of these systems is fundamental to selecting appropriate methodologies for specific research objectives and resource settings.

The TNM Classification System: The Gold Standard for Anatomical Staging

System Architecture and Principles

The Tumor, Node, Metastasis (TNM) system, maintained through collaboration between the Union for International Cancer Control (UICC) and the American Joint Committee on Cancer (AJCC), represents the global gold standard for cancer staging [16] [17]. Its systematic approach classifies cancer based on three key anatomical domains:

- T (Primary Tumor): Describes the size and local extent of the original tumor using categories T0 (no evidence of primary tumor) to T4 (large size and/or extensive local invasion) [16] [18]

- N (Regional Lymph Nodes): Characterizes the extent of cancer spread to regional lymph nodes, ranging from N0 (no nodal involvement) to N3 (extensive nodal metastasis) [16] [18]

- M (Distant Metastasis): Indicates the presence or absence of distant spread, classified as M0 (no distant metastasis) or M1 (distant metastasis present) [16] [18]

These components combine to form stage groupings (0, I, II, III, IV) that correlate with prognosis and guide therapeutic decisions [19] [20]. The system undergoes periodic evidence-based revisions: the 9th edition for lung cancer, implemented in January 2025, introduces refined N2 subcategories (N2a, single-station involvement, versus N2b, multiple-station involvement) and M1c subdivisions (M1c1, single organ system, versus M1c2, multiple organ systems) [21].
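For research datasets, the three TNM components are often stored as a compact string such as "T2N1M0". The sketch below shows one hedged way to parse such strings into structured fields. It is deliberately simplified: it accepts only the base categories named above plus "Tis" and "X" (cannot be assessed), ignores subcategories such as N2a or M1c1, and does not attempt stage grouping, which is site-specific and defined by the staging manuals. The class and function names are hypothetical.

```python
import re
from dataclasses import dataclass

# Simplified pattern: base categories only, no prefixes or subcategories.
TNM_PATTERN = re.compile(r"^T(is|[0-4X])\s*N([0-3X])\s*M([01])$", re.IGNORECASE)


@dataclass(frozen=True)
class TNM:
    t: str  # "is", "0"-"4", or "X"
    n: str  # "0"-"3" or "X"
    m: str  # "0" or "1"

    @property
    def has_distant_metastasis(self):
        return self.m == "1"


def parse_tnm(text):
    """Parse a string like 'T2N1M0' or 'Tis N0 M0' into a TNM record."""
    match = TNM_PATTERN.match(text.strip())
    if match is None:
        raise ValueError(f"unrecognized TNM string: {text!r}")
    t, n, m = match.groups()
    return TNM(t="is" if t.lower() == "is" else t.upper(), n=n.upper(), m=m)


for raw in ["T2N1M0", "Tis N0 M0", "t4n3m1"]:
    record = parse_tnm(raw)
    print(raw, "->", record, "distant:", record.has_distant_metastasis)
```

Keeping T, N, and M as separate fields lets analyses stratify on any single domain without re-parsing free text.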

Research Applications and Methodological Considerations

The TNM system provides the foundational taxonomy for clinical trial stratification, therapeutic development, and prognostic research. Its precision supports investigation of stage-specific therapeutic responses and enables fine-grained survival analyses [21] [22]. The system's clinical integration means that treatment guidelines worldwide are structured according to TNM classifications, making it indispensable for drug development and comparative effectiveness research.

Experimental Protocol for TNM Validation: The validation of TNM revisions follows a rigorous multinational methodology exemplified by the International Association for the Study of Lung Cancer (IASLC) Staging Project [23]:

- Multicenter Data Collection: Prospective collection of detailed anatomical and outcome data from thousands of patients across multiple international centers

- Statistical Analysis: Multivariable analyses assessing the prognostic performance of proposed T, N, and M categories using overall survival as the primary endpoint

- Internal Validation: Bootstrapping techniques to verify statistical reliability and identify optimal cut points for size categories

- External Review: Peer review by multidisciplinary international experts before implementation

- Clinical Correlation: Integration of pathological and clinical data to ensure anatomical classifications correspond to biological behavior

This evidence-based approach ensures that TNM revisions reflect genuine prognostic differences rather than arbitrary anatomical distinctions.
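
The bootstrapping step can be illustrated with a minimal sketch. It assumes a toy cohort of (tumor size, survival months) pairs, ignores censoring, and scores each candidate cut point by the gap in mean survival; the function names, the data, and the scoring rule are all hypothetical and are not the IASLC methodology.

```python
import random
from collections import Counter
from statistics import mean

def best_cut(cases, candidates):
    """Pick the size cut point with the largest gap in mean survival.

    cases: list of (tumor_size_cm, survival_months) pairs; censoring is ignored.
    """
    def gap(cut):
        small = [months for size, months in cases if size <= cut]
        large = [months for size, months in cases if size > cut]
        if not small or not large:
            return float("-inf")  # a cut that leaves one group empty is unusable
        return mean(small) - mean(large)
    return max(candidates, key=gap)

def bootstrap_cut_stability(cases, candidates, n_boot=500, seed=0):
    """Share of bootstrap resamples in which each candidate cut point wins."""
    rng = random.Random(seed)
    wins = Counter()
    for _ in range(n_boot):
        resample = [rng.choice(cases) for _ in cases]  # sample with replacement
        wins[best_cut(resample, candidates)] += 1
    return {cut: wins[cut] / n_boot for cut in candidates}

# Toy cohort (invented): survival drops sharply for tumors larger than 3 cm.
sizes = [0.5 + 0.25 * i for i in range(24)]
toy_cases = [(s, 60 if s <= 3.0 else 30) for s in sizes]
stability = bootstrap_cut_stability(toy_cases, [2.0, 3.0, 4.0])
```

A cut point that wins in nearly every resample is stable, whereas wins spread evenly across candidates would suggest the boundary is not well supported by the data. A real analysis would replace the mean-survival gap with a censoring-aware statistic.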

System Architecture and Principles

The Surveillance, Epidemiology, and End Results (SEER) Summary Stage system, developed by the National Cancer Institute, employs a simplified conceptual framework optimized for population-level surveillance [17]. Unlike TNM's detailed anatomical focus, SEER Summary Stage categorizes cancer extent using broader categories:

- In situ: Pre-invasive malignant cells confined to the epithelium of origin

- Localized: Cancer confined to the organ of origin

- Regional: Cancer extended beyond the organ of origin to adjacent tissues or regional lymph nodes

- Distant: Cancer has metastasized to remote anatomical sites

- Unknown: Insufficient information for classification

This system prioritizes consistency and abstractability over clinical precision, making it particularly valuable for epidemiological monitoring and health services research where detailed anatomical data may be unavailable [17].
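
As a rough illustration of how these broad categories could be derived from a handful of abstracted facts, the sketch below maps a simplified extent-of-disease record to a summary stage. The record fields and the precedence rules are assumptions made for the example; the official SEER Summary Stage manual contains site-specific coding rules that are not reproduced here.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class ExtentOfDisease:
    """Hypothetical, simplified abstraction record (None = not documented)."""
    invasive: Optional[bool]        # False: cells confined to the epithelium of origin
    beyond_organ: Optional[bool]    # direct extension to adjacent tissues
    regional_nodes: Optional[bool]  # regional lymph node involvement
    distant_spread: Optional[bool]  # metastasis to remote anatomical sites

def summary_stage(e: ExtentOfDisease) -> str:
    # The most advanced documented finding decides the category.
    if e.distant_spread:
        return "Distant"
    if e.beyond_organ or e.regional_nodes:
        return "Regional"
    # Without positive findings, any undocumented field leaves the extent undetermined.
    if None in (e.invasive, e.beyond_organ, e.regional_nodes, e.distant_spread):
        return "Unknown"
    return "Localized" if e.invasive else "In situ"

print(summary_stage(ExtentOfDisease(True, False, True, False)))
```

The small number of inputs is what makes the scheme easy to abstract consistently: four yes/no/unknown facts are enough to assign a category in this simplified version.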

Research Applications and Methodological Considerations

SEER Summary Stage excels in population-level studies examining cancer burden, disparities in stage at diagnosis, and healthcare system performance monitoring. Its simplified categories enable high completeness rates in cancer registry data, facilitating robust epidemiological analyses across diverse settings [17]. The system supports research investigating macro-level determinants of cancer outcomes, though its limited granularity restricts utility for precision medicine applications or targeted therapy development.

Simplified Staging Alternatives for Challenged Data Environments

System Architectures and Principles

Recognizing the implementation challenges of comprehensive staging systems, particularly in resource-limited settings, several simplified alternatives have emerged:

- Condensed TNM (cTNM): Developed by the European Network of Cancer Registries, this system applies generalized size and extension criteria across all tumor sites rather than organ-specific classifications, sacrificing site-specific precision for broader applicability [17]

- Essential TNM (eTNM): A UICC-initiated system designed for settings with incomplete diagnostic information, using simplified T (T0, T1, T2, T3, T4, TX), N (N0, N1, N2, N3, NX), and M (M0, M1, MX) categories with less detailed subclassifications [17]

- Registry-Derived Stage: An Australian approach that synthesizes available clinical, pathological, and administrative data to assign stage when complete formal staging is unavailable, employing algorithms to maximize data utility despite information gaps [17]

Research Applications and Methodological Considerations

These simplified systems enable cancer control research in settings where comprehensive staging implementation is constrained by diagnostic infrastructure, data collection capacity, or workforce limitations. While unable to support precision medicine applications, they provide meaningful data for public health planning, resource allocation, and monitoring of early detection initiatives [17]. Their development represents a pragmatic response to the reality that imperfect staging data may still yield valuable insights for cancer control.

Comparative Analysis: Data Requirements, Applications, and Limitations

Table 1: Comparative Analysis of Cancer Staging Systems

| System Attribute | TNM (9th Edition) | SEER Summary Stage | Simplified Alternatives (cTNM/eTNM) |

|---|---|---|---|

| Primary Application Context | Clinical management, therapeutic trials, prognostic research | Population surveillance, epidemiological research, health services evaluation | Resource-constrained settings, cancer control planning |

| Data Requirements | Detailed anatomical imaging, pathological evaluation, multidisciplinary review | Basic extent-of-disease information from available sources | Limited pathological/imaging data, adaptable to available information |

| Staging Granularity | High (precise anatomical subcategories) | Low (broad extent categories) | Variable (moderate to low) |

| Registry Completeness Rates | Often low (complexity challenges abstraction) | Generally high (simplified categories) | Moderate to high (adapted to local capacity) |

| Prognostic Discrimination | Excellent (validated against survival outcomes) | Moderate (broad category limitation) | Fair to moderate (limited precision) |

| Clinical Trial Utility | High (supports precise patient stratification) | Limited (insufficient for molecularly-targeted trials) | Low (inadequate precision for most trials) |

| Global Implementability | Variable (requires advanced diagnostic resources) | High (adaptable to diverse resource settings) | High (designed for challenged environments) |

| Revision Cycle | Regular evidence-based updates (e.g., 9th edition 2025) | Periodic updates | Irregular, limited validation |

Table 2: Research Context Appropriateness by Study Design

| Research Objective | Optimal Staging System | Rationale | Key Methodological Considerations |

|---|---|---|---|

| Molecular Correlates of Progression | TNM | Precise anatomical characterization enables correlation with molecular alterations | Requires standardized tissue collection protocols and central pathology review |

| Therapeutic Clinical Trials | TNM | Enriches patient populations for targeted interventions, supports regulatory approval | Must adhere to current edition specifications; staging consistency critical across sites |

| Global Cancer Burden Comparisons | SEER Summary Stage | Maximizes data completeness and comparability across diverse healthcare systems | Must account for differential diagnostic intensity between compared populations |

| Health Disparities Research | SEER Summary Stage | Enables identification of system-level factors affecting stage at diagnosis | Stage migration effects may complicate temporal and cross-system comparisons |

| Resource-Limited Setting Control Programs | Simplified (eTNM/cTNM) | Provides actionable data despite infrastructure limitations | Requires validation against local outcomes data; hybrid approaches may maximize utility |

Experimental Protocols for Staging System Validation

Protocol for Prognostic Performance Assessment

Objective: Quantitatively compare the prognostic discrimination of staging systems for specific cancer types using population-based data.

Methodology:

- Cohort Identification: Assemble retrospective cohort with documented cancer extent and vital status

- Stage Assignment: Apply each staging system to all cases using standardized algorithms

- Survival Analysis: Calculate stage-specific observed and relative survival using actuarial methods

- Discrimination Assessment: Compute concordance statistics (Harrell's C-index) for each system

- Model Adjustment: Evaluate staging system performance after adjusting for relevant covariates

Data Elements: Demographic characteristics, diagnostic confirmation, treatment details, follow-up duration, vital status

Analytical Approach: Multivariable Cox proportional hazards models with likelihood ratio tests to compare prognostic discrimination between systems
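
The discrimination step can be made concrete with a small, dependency-free sketch of Harrell's C-index. The follow-up times, event indicators, and stage codes below are invented toy values, and the function does not handle tied event times; a production analysis would normally rely on an established survival package.

```python
def harrell_c(times, events, risk_scores):
    """Harrell's C: share of comparable pairs that the risk score orders correctly.

    times: follow-up time per patient
    events: 1 if death was observed, 0 if censored
    risk_scores: higher value = worse predicted prognosis (e.g. ordinal stage)
    """
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        for j in range(n):
            # A pair is comparable when patient i had an observed event
            # strictly before patient j's follow-up time.
            if events[i] == 1 and times[i] < times[j]:
                comparable += 1
                if risk_scores[i] > risk_scores[j]:
                    concordant += 1.0
                elif risk_scores[i] == risk_scores[j]:
                    concordant += 0.5  # tied scores count as half
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable

# Toy cohort: the same five patients staged with two hypothetical systems.
times = [5, 10, 20, 40, 60]
events = [1, 1, 1, 0, 0]
granular_stage = [4, 3, 2, 1, 1]  # finer categories
broad_stage = [3, 3, 2, 2, 1]     # coarser categories produce more ties

c_granular = harrell_c(times, events, granular_stage)
c_broad = harrell_c(times, events, broad_stage)
```

In this toy cohort the coarser system scores lower only because tied stage values earn half credit, which mirrors the way broad categories limit prognostic discrimination.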

Protocol for Implementation Feasibility and Reproducibility Assessment

Objective: Evaluate the feasibility and reproducibility of staging system implementation across diverse abstractor skill levels and data completeness environments.

Methodology:

- Case Selection: Identify representative case series spanning disease spectrum

- Abstractor Recruitment: Engage participants with varied expertise (registrars, clinicians, trainees)

- Staging Assignment: Participants apply each system to identical cases using source documents

- Quality Assessment: Measure inter-rater reliability (Cohen's κ) and completeness rates

- Time Efficiency: Document time required for staging assignment per system

Evaluation Metrics: Inter-rater agreement, completeness rates, abstraction time, accuracy against reference standard
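
Two of these metrics are simple to compute by hand. The sketch below implements Cohen's κ for two abstractors and a completeness rate, using invented stage assignments; it assumes nominal (unweighted) agreement, although a weighted κ may be more appropriate for ordinal stages.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters labelling the same cases."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same non-empty set of cases")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of the two raters' marginal proportions per category.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical category throughout
    return (observed - expected) / (1.0 - expected)

def completeness_rate(stages):
    """Share of cases with a stage recorded (None marks an unstaged case)."""
    return sum(s is not None for s in stages) / len(stages)

# Invented example: a registrar and a trainee stage the same ten cases.
registrar = ["I", "I", "II", "II", "III", "III", "IV", "IV", "II", "III"]
trainee = ["I", "I", "II", "III", "III", "III", "IV", "IV", "II", "II"]
kappa = cohens_kappa(registrar, trainee)
```

Here the raters agree on 8 of 10 cases (observed agreement 0.80) against a chance agreement of 0.26, so κ = 0.54 / 0.74, or about 0.73.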

Research Reagent Solutions: Essential Methodological Tools

Table 3: Essential Research Resources for Staging System Investigations

| Research Resource | Function in Staging Research | Implementation Considerations |

|---|---|---|

| UICC/AJCC TNM Classification Manual (9th Edition) | Reference standard for anatomical staging criteria | Requires institutional licensing; digital versions facilitate integration with electronic data capture |

| SEER Summary Stage Manual (2018) | Reference standard for surveillance staging | Open access availability enhances implementation across resource settings |

| Structured Data Abstraction Tools | Standardized electronic case report forms for staging data collection | Should incorporate validation rules and logic checks to minimize abstraction errors |

| Cancer Registry Software Platforms | Enable systematic staging data management and quality control | Interoperability with hospital information systems critical for efficient data flow |

| Statistical Analysis Packages (R, SAS, Stata) | Support survival analyses and prognostic model development | Requires customized programming for stage-specific survival estimation |

| Natural Language Processing Algorithms | Automated extraction of staging elements from unstructured clinical narratives | Training with domain-specific corpora improves performance for staging concept identification |

Visualizing Staging System Selection and Relationships

Figure: Staging System Selection Algorithm for Research Applications

Figure: Data Flow from Clinical Sources to Research Applications

The reliability of cancer phase classification systems varies substantially across methodologies, with inherent trade-offs between anatomical precision, abstractability, and implementation feasibility. The TNM system remains indispensable for therapeutic development and precision medicine applications, while SEER Summary Stage provides robust infrastructure for population surveillance and health services research. Simplified alternatives offer pragmatic solutions for challenged data environments, though with constrained prognostic discrimination.

Future staging research should focus on integrating molecular classifiers with anatomical extent data, developing computational approaches for automated staging abstraction, and validating hybrid methodologies that maintain prognostic relevance despite information limitations. The ongoing evolution of these systems will continue to reflect both advances in biological understanding and practical implementation realities across diverse global settings.

The advancement of medical science hinges on the development and validation of robust, reliable classification systems that enable precise diagnosis, inform treatment decisions, and predict patient outcomes. This guide objectively compares two distinct yet equally critical frameworks emerging in their respective domains: artificial intelligence (AI)-driven CT phase classification systems and the Igls criteria for β-cell replacement therapy outcomes. While applied in different clinical contexts—medical imaging and transplant medicine—both frameworks share a common purpose: to replace subjective assessment with standardized, data-driven evaluation. The reliability of such systems is paramount, as it directly impacts their clinical adoption and utility in both patient management and research settings. This analysis examines the architectural methodologies, performance metrics, and validation evidence for each framework, providing researchers and clinicians with a comparative understanding of their operational principles and relative strengths.

AI in CT Phase Classification: Technical Architectures and Performance

Technical Approaches and Methodologies

Automated CT phase classification systems employ diverse deep learning architectures to categorize contrast enhancement phases, a critical prerequisite for accurate diagnostic interpretation and downstream AI applications. The dominant approach utilizes residual neural networks (ResNet), with ResNet-18 being successfully implemented as a shared feature extraction backbone in a two-step classification strategy. This architecture first distinguishes arterial, portal venous, and delayed phases, then further classifies arterial phase images into early or late arterial sub-categories [24]. This cascading refinement strategy has demonstrated superior performance over single-step classification, significantly enhancing accuracy by addressing the subtle feature differences between early and late arterial phases [24].
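
The control flow of such a two-step strategy can be sketched without any deep learning code. The example assumes the shared backbone has already produced two probability dictionaries, one per classification head; the label names and probabilities are hypothetical, and this is not the implementation used in [24].

```python
def argmax_label(probs):
    """Return the label with the highest probability."""
    return max(probs, key=probs.get)

def two_step_phase(coarse_probs, arterial_probs):
    """Cascade: coarse phase first, then refine only arterial predictions.

    coarse_probs: output of the first head (arterial / portal_venous / delayed)
    arterial_probs: output of the second head (early_arterial / late_arterial)
    """
    coarse = argmax_label(coarse_probs)
    if coarse != "arterial":
        return coarse  # the second head is ignored for non-arterial scans
    return argmax_label(arterial_probs)

# Hypothetical head outputs for a single scan.
phase = two_step_phase(
    {"arterial": 0.80, "portal_venous": 0.15, "delayed": 0.05},
    {"early_arterial": 0.30, "late_arterial": 0.70},
)
print(phase)
```

Splitting the decision this way lets the second head specialize in the early-versus-late distinction instead of competing with the easier three-way separation.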

An alternative methodology employs organ segmentation coupled with machine learning, creating a more explainable and computationally efficient pipeline. This approach automatically segments key organs—including liver, spleen, heart, kidneys, lungs, urinary bladder, and aorta—using pre-trained deep learning algorithms, then extracts first-order statistical features (average, standard deviation, and percentile values) from these regions [25]. These features subsequently feed into classifier models such as Random Forests, achieving exceptional accuracy by mimicking the radiologist's logic of assessing enhancement patterns in specific anatomical structures [25].
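
The feature extraction step of this pipeline might look like the sketch below. It assumes the segmentation model has already returned the Hounsfield unit (HU) values inside each organ mask; the organ names follow the text, while the HU numbers and the choice of the 10th, 50th, and 90th percentiles are illustrative assumptions.

```python
from statistics import mean, pstdev, quantiles

def first_order_features(hu_values):
    """Mean, standard deviation and selected percentiles of one organ's HU values."""
    # quantiles(..., n=100) returns 99 cut points; index k-1 is the k-th percentile.
    pct = quantiles(hu_values, n=100, method="inclusive")
    return {
        "mean": mean(hu_values),
        "std": pstdev(hu_values),
        "p10": pct[9],
        "p50": pct[49],
        "p90": pct[89],
    }

def scan_feature_vector(organ_hu):
    """Flatten per-organ features into one named vector for a classifier."""
    return {
        f"{organ}_{name}": value
        for organ, hu in sorted(organ_hu.items())
        for name, value in first_order_features(hu).items()
    }

# Invented HU samples for two organs of one scan.
vector = scan_feature_vector({
    "aorta": [100, 110, 120, 130, 140],
    "liver": [55, 60, 62, 65, 70],
})
```

A vector like this, computed per scan, is the kind of input a tabular classifier such as a Random Forest would consume, and each feature can be traced back to a named organ, which is what makes the approach easier to explain.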

Comparative Performance Data

The table below summarizes the documented performance of various AI approaches for medical image classification across multiple clinical applications:

Table 1: Performance Metrics of AI Classification Systems in Medical Applications

| Application | AI Architecture | Dataset Size | Accuracy | Sensitivity/Specificity | AUC | Citation |

|---|---|---|---|---|---|---|

| Abdominal CT Phase Classification | Two-step ResNet | 1,175 scans (internal); 215 scans (external) | 98.3% (internal); 99.1% (external) | Sensitivities: 95.1%-99.5% across phases | N/R | [24] |

| CT Phase Classification via Organ Segmentation | Organ Segmentation + Random Forest | 2,509 CT images | 99.4% | N/R | >0.999 (average) | [25] |

| Stroke Classification (Hemorrhagic, Ischemic, Normal) | ResNet-18 | 6,653 CT brain scans | 95% | N/R | N/R | [26] |

| Renal Mass Malignancy Classification | Multi-phase CNN | 13,261 CT volumes from 4,557 patients | N/R | Surpassed 6 of 7 radiologists | 0.871 (prospective test) | [27] |

| HCC Diagnosis | CNN | 27,006 patients (7 studies) | N/R | Sensitivity: 63-98.6%; Specificity: 82-98.6% | 0.869-0.991 | [28] |

Abbreviations: AUC: Area Under the Curve; N/R: Not Reported; CNN: Convolutional Neural Network; HCC: Hepatocellular Carcinoma

Experimental Workflow and Research Reagents

The typical development pipeline for an AI-based CT phase classification system involves several methodical stages, as visualized in the following workflow:

Figure 1: AI CT Phase Classification Development Workflow

For researchers developing or implementing such systems, key computational and data resources include:

Table 2: Research Reagent Solutions for AI CT Classification

| Reagent Category | Specific Examples | Function in Research | Citation |

|---|---|---|---|

| Deep Learning Frameworks | PyTorch, TensorFlow | Provides foundation for model development and training | [24] |

| Pre-trained Architectures | ResNet-18, ResNet-50 | Serves as feature extraction backbone via transfer learning | [24] |

| Segmentation Models | Pre-trained organ segmentation algorithms | Enables organ-based feature extraction approach | [25] |

| Data Augmentation Techniques | Random flipping, rotation, translation | Increases dataset diversity and improves model generalization | [26] |

| Feature Selection Algorithms | Boruta, MRMR, RFE | Identifies most predictive features in organ-based approach | [25] |

Igls Criteria for Transplant Outcomes: Standardization in β-Cell Replacement

Framework Definition and Evolution

The Igls criteria establish a standardized classification system for evaluating functional outcomes following β-cell replacement therapy (including pancreas and islet transplantation), addressing a critical need for consistent reporting across centers and enabling meaningful comparison between different therapeutic approaches [29]. First established in 2017 through consensus between the International Pancreas & Islet Transplant Association (IPITA) and the European Pancreas & Islet Transplant Association (EPITA), the criteria define graft function through four hierarchical categories—Optimal, Good, Marginal, and Failure—based on integration of four key parameters: glycated hemoglobin (HbA1c), severe hypoglycemia events, insulin requirements, and C-peptide levels [29].

The system has undergone refinement since its initial introduction, with a 2019 symposium examining its implementation and proposing revisions that would better align with continuous glucose monitoring (CGM) metrics and facilitate comparison with artificial pancreas systems [29]. Subsequent adaptations have emerged for specific patient populations, including modified versions for islet autotransplantation (IAT) where pre-transplant baseline parameters are often unavailable [30].

Classification Criteria and Comparative Performance

The table below outlines the core Igls criteria and its performance in clinical validation studies:

Table 3: Igls Criteria Classification and Validation Outcomes

| Assessment Aspect | Optimal Function | Good Function | Marginal Function | Failure | Citation |

|---|---|---|---|---|---|

| HbA1c | ≤6.5% (48 mmol/mol) | <7.0% (53 mmol/mol) | ≥7.0% (53 mmol/mol) | Baseline | [29] |

| Severe Hypoglycemia | None | None | < Baseline frequency | Baseline | [29] |

| Insulin Requirements | None | <50% of baseline | ≥50% of baseline | Baseline | [29] |

| C-peptide | > Baseline | > Baseline | > Baseline | ≤ Baseline | [29] |

| Treatment Success | Yes | Yes | No | No | [29] |

| Clinical Validation (Cross-sectional study) | N/A | 33% of recipients | 27% of recipients | 40% of recipients | [31] |

| PROMs Validation | N/A | Better well-being outcomes | Greater fear of hypoglycemia, anxiety, diabetes distress | N/A | [31] |
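
The four-parameter logic in the table can be expressed as a short rule-based sketch. The table does not say how to resolve cases whose parameters fall into different columns, so the example assumes that any recipient with C-peptide above baseline who misses the Optimal and Good criteria is classed as Marginal; that fallback, the field names, and the omission of the "< baseline frequency" check are simplifications made for illustration only.

```python
from dataclasses import dataclass

@dataclass
class FollowUp:
    hba1c_pct: float                # glycated hemoglobin, percent
    severe_hypo_events: int         # severe hypoglycemia events at follow-up
    insulin_fraction: float         # follow-up dose / baseline dose (0.0 = none)
    c_peptide_above_baseline: bool  # C-peptide greater than pre-transplant baseline

def igls_category(f: FollowUp) -> str:
    if not f.c_peptide_above_baseline:
        return "Failure"
    no_severe_hypo = f.severe_hypo_events == 0
    if f.hba1c_pct <= 6.5 and no_severe_hypo and f.insulin_fraction == 0.0:
        return "Optimal"
    if f.hba1c_pct < 7.0 and no_severe_hypo and f.insulin_fraction < 0.5:
        return "Good"
    # Assumed fallback: graft still produces C-peptide but misses the criteria above.
    return "Marginal"

def treatment_success(category: str) -> bool:
    """Per the table, only Optimal and Good count as treatment success."""
    return category in {"Optimal", "Good"}

print(igls_category(FollowUp(6.8, 0, 0.3, True)))
```

Encoding the rules this way also makes the category boundaries explicit, which is useful when comparing the original criteria with the modified variants for specific populations.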

Modified Criteria for Islet Autotransplantation (IAT)

For islet autotransplantation settings, where patients typically lack pre-existing diabetes and pre-transplant baselines, several institutions have developed modified criteria:

Table 4: Comparison of IAT-Specific Modified Classification Systems

| Classification System | C-peptide Threshold (Fasting) | HbA1c Requirement | Insulin Independence | Citation |

|---|---|---|---|---|

| Igls Updates | Optimal: Any; Good: ≥0.2 ng/mL; Marginal: ≥0.1 ng/mL; Failed: <0.1 ng/mL | Optimal: ≤6.5%; Good: <7%; Marginal: ≥7% | Required for Optimal only | [30] |

| Chicago Auto-Igls | >0.5 ng/mL for all categories except Failure | Optimal: ≤6.5%; Good: <7%; Marginal: ≥7% | Required for Optimal only | [30] |

| Minnesota Auto-Igls | ≥0.2 ng/mL (≥0.5 ng/mL stimulated) for Optimal/Good/Marginal | Optimal: ≤6.5%; Good: <7%; Marginal: ≥7% | Required for Optimal only | [30] |

| Data-Driven Approach | No predefined thresholds; cluster-based | No predefined thresholds; cluster-based | Not a defining factor | [30] |

Clinical Application Workflow

The practical application of the Igls criteria in clinical practice follows a structured assessment pathway:

Figure 2: Igls Criteria Clinical Application Workflow

Comparative Analysis: Reliability Dimensions Across Frameworks

Methodological Reliability and Validation Evidence

Both classification frameworks demonstrate substantial but distinct validation evidence supporting their reliability:

AI CT Phase Classification relies primarily on technical performance metrics against expert-annotated ground truth. The exceptionally high accuracy rates (98.3-99.4%) reported across multiple studies [24] [25] indicate robust technical reliability. External validation across multiple hospitals with 99.1% accuracy [24] further supports generalizability. The two-step ResNet approach demonstrates that architectural decisions directly impact reliability, with its cascaded classification significantly outperforming single-step models (98.3% vs. 91.7% accuracy) [24].

Igls Criteria validation emphasizes clinical correlation and patient-reported outcome measures. Cross-sectional analysis demonstrates the criteria's ability to differentiate not only metabolic outcomes but also psychosocial status, with "Marginal" function associated with greater fear of hypoglycemia, severe anxiety, diabetes distress, and low mood compared to "Good" function [31]. This validation against patient-reported outcome measures strengthens the clinical reliability of the classification boundaries. The criteria also effectively discriminate long-term outcomes between different transplant modalities (islet vs. pancreas vs. simultaneous pancreas-kidney) [29].

Implementation Challenges and Adaptation

Both systems face implementation challenges that affect their reliability in real-world settings:

AI CT Systems confront data heterogeneity issues, with performance variations across different scanner manufacturers, acquisition protocols, and patient populations. Studies note that models trained on heterogeneous datasets can demonstrate significant performance variations, with accuracy differences exceeding 40% between test sets [32]. Explainability remains a challenge, though approaches utilizing organ segmentation and feature extraction offer more transparent decision pathways compared to end-to-end deep learning models [25].

Igls Criteria face challenges in specific patient populations, particularly islet autotransplantation recipients where pre-transplant baseline values are unavailable [30]. This has necessitated the development of multiple modified criteria (Chicago, Minnesota, Milan protocols) with differing C-peptide thresholds, potentially compromising cross-center comparability. The recent proposal of a data-driven approach without predefined thresholds may address this limitation by identifying natural clusters within patient data [30].
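
The comparability problem is easy to demonstrate with the fasting C-peptide thresholds listed in Table 4. The sketch below checks only whether a value clears each system's minimum for a non-failed graft; it deliberately ignores stimulated C-peptide, HbA1c, and insulin requirements, so it is a simplification for illustration and not a full implementation of any of these criteria.

```python
# Minimum fasting C-peptide (ng/mL) for a graft not to be classed as failed,
# taken from Table 4; each rule returns True when the threshold is met.
NON_FAILURE_RULES = {
    "Igls Updates": lambda c: c >= 0.1,
    "Chicago Auto-Igls": lambda c: c > 0.5,
    "Minnesota Auto-Igls": lambda c: c >= 0.2,
}

def functioning_by_system(fasting_c_peptide_ng_ml):
    """Report, per classification system, whether the graft counts as functioning."""
    return {
        name: rule(fasting_c_peptide_ng_ml)
        for name, rule in NON_FAILURE_RULES.items()
    }

# Hypothetical recipient with a fasting C-peptide of 0.3 ng/mL.
result = functioning_by_system(0.3)
print(result)
```

The same hypothetical recipient counts as having a functioning graft under two systems and a failed graft under the third, which is exactly the kind of discrepancy that complicates pooling outcomes across centers.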

This comparative analysis reveals that while AI-driven CT phase classification and the Igls criteria operate in fundamentally different clinical domains, both represent significant advancements in standardized assessment methodology. The AI framework offers exceptionally high technical accuracy (98.3-99.4%) through sophisticated pattern recognition, potentially streamlining workflow and reducing human error in image interpretation [24] [25]. The Igls criteria provide a multidimensional clinical evaluation that integrates glycemic control, severe hypoglycemia, insulin requirements, and C-peptide, and their categories track both metabolic and patient-reported psychosocial outcomes [29] [31].

For researchers and clinicians, the choice between these frameworks—or their implementation in tandem—depends on the specific clinical question. AI classification excels in tasks requiring rapid, reproducible image analysis, while the Igls criteria offer nuanced assessment of complex clinical outcomes. Both systems continue to evolve, with AI models incorporating test-time adaptation to address data distribution shifts [33], and the Igls criteria expanding to incorporate continuous glucose monitoring metrics [29]. Their parallel development underscores a broader trend in medicine: the pursuit of objective, standardized classification systems that enhance both clinical decision-making and research comparability across institutions and therapeutic approaches.

The Impact of Classification Choice on Data Interpretation and Regulatory Decisions

The selection of a classification system is a critical methodological step that directly shapes scientific interpretation and influences regulatory outcomes. In pharmaceutical development and healthcare policy, this choice determines how drugs are grouped on formularies, influencing patient access, treatment costs, and the direction of clinical research. This guide objectively compares two prominent drug classification systems, the USP Drug Classification (USP DC) and the USP Medicare Model Guidelines (USP MMG), within the broader context of reliability research for phase classification systems. By examining their structures, update cycles, and applications, stakeholders can make informed, evidence-based decisions that enhance the reliability and consistency of drug development and coverage.

Drug classification systems are foundational tools that organize medications into hierarchical categories and classes based on their therapeutic use, pharmacological mechanism, and chemical properties [34]. They create a standardized language for managing drug formularies, which are the lists of prescription drugs covered by a health insurance plan. The reliability of these systems—their accuracy, consistency, and adaptability over time—is paramount. Just as reliability engineering assesses how systems maintain functionality under defined conditions for a specified time [35], the reliability of a classification system is measured by its ability to accurately reflect the evolving therapeutic landscape without introducing disruptive changes that could impede patient care or research continuity.

The United States Pharmacopeia (USP), a nonprofit organization, develops and maintains two primary drug classification systems that are widely used in the United States [36] [34]:

- USP Medicare Model Guidelines (USP MMG): Developed specifically for Medicare Part D plans under a federal mandate.

- USP Drug Classification (USP DC): Designed for non-Medicare Part D plans, including commercial health plans and those offering Essential Health Benefits.

These systems serve as a critical nexus between scientific progress, clinical practice, and regulatory policy. Their structure and revision process directly impact which drugs are readily accessible to patients and how clinical trials are designed and interpreted.

Comparative Analysis of USP MMG and USP DC

A direct comparison of the USP MMG and USP DC reveals significant differences in their design, scope, and operational cadence, which in turn affect their reliability and suitability for different applications.

Table 1: Core Structural and Operational Comparison of USP MMG and USP DC

| Feature | USP Medicare Model Guidelines (MMG) | USP Drug Classification (DC) |

|---|---|---|

| Regulatory Mandate | Developed under the Medicare Modernization Act [34] | No specific federal mandate; created for broader commercial use [34] |

| Intended Market | Medicare Part D plans exclusively [34] | Non-Medicare Part D plans (e.g., commercial, EHBs) [34] |

| Update Cycle | Every 3 years (e.g., MMG v9.0 effective 2024-2026) [36] | Annually (e.g., USP DC 2025 published Jan. 2025) [36] [34] |

| Scope of Drugs | Part D eligible drugs only [34] | More comprehensive, includes outpatient drugs beyond Part D scope (e.g., cough/cold, fertility drugs) [36] [34] |

| Structural Granularity | Two-tiered (Category & Class) [34] | Four-tiered, including Pharmacotherapeutic Groups (PGs) [36] [34] |

| Example Drug Count | Not specified in sources | Over 2,055 example drugs in USP DC 2025 [34] |

The divergent update cycles are a critical differentiator impacting system reliability. The USP DC's annual revision cycle offers higher adaptability, allowing it to incorporate new FDA-approved drugs and emerging clinical evidence more rapidly [36]. In contrast, the MMG's three-year cycle, while ensuring stability for government planning, risks creating a lag between scientific innovation and its reflection in the classification standard. This lag can directly impact data interpretation in longitudinal clinical studies and delay patient access to novel therapies within government programs.

Furthermore, the USP DC's additional layer of Pharmacotherapeutic Groups (PGs) provides superior granularity. For instance, the USP DC 2025 added 65 new PGs, with 34 in the "Molecular Target Inhibitor" class and 24 in the "Monoclonal Antibody/Antibody-Drug Conjugate" class [36]. This level of detail is essential for precise formulary management and accurate data analysis in specialized fields like oncology, where drugs with different molecular targets, while belonging to the same broad class, are not clinically interchangeable.

Experimental Data and Case Studies

Quantitative Analysis of Classification System Application

The impact of classification choice is quantifiable. Analysis of the Alzheimer's disease (AD) drug development pipeline for 2025 provides a clear example of how categories shape the understanding of a therapeutic landscape.

Table 2: Analysis of the 2025 Alzheimer's Disease Drug Development Pipeline by Therapeutic Purpose

| Therapeutic Purpose Category | Percentage of Pipeline (%) | Representative Drug Targets / Mechanisms |

|---|---|---|

| Biological Disease-Targeted Therapies (DTTs) | 30% | Amyloid-beta (Aβ), Tau, Inflammation [37] |

| Small Molecule DTTs | 43% | Inflammation, Synaptic Function, Proteostasis [37] |

| Cognitive Enhancers | 14% | Transmitter receptors, Synaptic plasticity [37] |

| Neuropsychiatric Symptom Ameliorators | 11% | Agitation, Psychosis, Apathy [37] |

| Repurposed Agents | 33% (of total agents) | Varies (e.g., drugs originally for other indications) [37] |

This categorization reveals strategic priorities: roughly 73% of the pipeline (30% biological plus 43% small-molecule DTTs) is dedicated to disease-targeting therapies rather than symptomatic relief. The high proportion of repurposed agents (33%) also highlights a key area where classification systems must be flexible enough to accommodate drugs being investigated for indications other than those for which they were originally developed. A system without the granularity to classify these repurposed agents accurately could obscure promising research trends.

Experimental Protocol: Formulary Comparison and Gap Analysis

To objectively assess the practical impact of classification choice, researchers and formulary managers can employ the following experimental protocol:

Objective: To identify gaps in formulary coverage and differences in drug grouping by comparing the mapping of a specific drug portfolio (e.g., oncology drugs) using both the USP MMG and the USP DC systems.

Materials & Reagents:

- Drug Portfolio List: A comprehensive list of drugs, including National Drug Codes (NDCs) and active ingredients.

- USP MMG v9.0 Alignment File: The official mapping document from CMS.

- USP DC 2025 Database: The freely available Microsoft Excel file or the subscription-based USP DC PLUS with RxNorm mappings [34].

- Database Management Software: (e.g., PostgreSQL, Microsoft Access) to store and cross-reference data.

Methodology:

- Data Import: Load the drug portfolio and both classification system mappings into the database.

- Automated Mapping: Run queries to assign each drug in the portfolio to its corresponding category and class in both the MMG and DC systems.

- Gap Analysis: Identify drugs present in the USP DC that have no corresponding classification in the MMG due to its narrower scope.

- Granularity Comparison: For drugs classified in both systems, compare the level of detail. Specifically, note where the USP DC provides a specific Pharmacotherapeutic Group (PG) while the MMG places the drug in a broader "Miscellaneous" or "Other" class.

- Impact Assessment: Calculate the percentage of the portfolio affected by classification gaps or differences in granularity.

Expected Output: This experiment should yield quantitative data on how well the triennial MMG and the annual DC each cover a modern drug portfolio. Where gaps or coarser groupings are found, the results would show how the choice of system can lead to under-representation of certain drugs on a formulary and create blind spots in data analysis.
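
The mapping, gap analysis, and impact assessment steps can be prototyped in a few lines before committing to a database. In the sketch below the drug identifiers, category names, and class assignments are placeholders invented for the example; they are not actual USP MMG or USP DC entries, and the dictionary layout is an assumed stand-in for the official files.

```python
def gap_analysis(portfolio, mmg_map, dc_map):
    """Compare how two classification mappings cover a drug portfolio.

    mmg_map: drug -> {"category": ..., "class": ...}
    dc_map:  drug -> {"category": ..., "class": ..., "pg": ... or None}
    """
    # Drugs classified in the DC mapping but absent from the MMG mapping.
    gaps = [d for d in portfolio if d in dc_map and d not in mmg_map]
    # Drugs where DC names a specific PG but MMG uses a catch-all class.
    coarser = [
        d for d in portfolio
        if d in dc_map and d in mmg_map
        and dc_map[d].get("pg")
        and mmg_map[d]["class"] in {"Other", "Miscellaneous"}
    ]
    affected = set(gaps) | set(coarser)
    return {
        "gaps": gaps,
        "coarser_in_mmg": coarser,
        "pct_affected": 100.0 * len(affected) / len(portfolio),
    }

# Placeholder data only: not real drugs or real USP classifications.
portfolio = ["drug_A", "drug_B", "drug_C", "drug_D"]
mmg_map = {
    "drug_A": {"category": "Category X", "class": "Other"},
    "drug_C": {"category": "Category X", "class": "Class 1"},
    "drug_D": {"category": "Category Y", "class": "Class 2"},
}
dc_map = {
    "drug_A": {"category": "Category X", "class": "Class 9", "pg": "PG 9a"},
    "drug_B": {"category": "Category Z", "class": "Class 3", "pg": None},
    "drug_C": {"category": "Category X", "class": "Class 1", "pg": "PG 1a"},
    "drug_D": {"category": "Category Y", "class": "Class 2", "pg": None},
}
report = gap_analysis(portfolio, mmg_map, dc_map)
```

With the placeholder data, one drug is missing from the MMG mapping and one is grouped more coarsely, so half of the four-drug portfolio is flagged. The same logic translates directly into SQL joins once the official mapping files are loaded.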

Diagram 1: Formulary Analysis Workflow. This diagram outlines the experimental protocol for comparing classification systems.

Navigating and contributing to drug classification systems requires a specific set of data tools and resources.

Table 3: Essential Research Reagents and Resources for Drug Classification Research

| Tool / Resource | Function / Purpose | Relevance to Classification |
|---|---|---|
| USP DC PLUS (Subscription) | Provides coded identifiers (NDCs, RxCUIs) for linking classification to product and pricing data [34]. | Essential for large-scale, automated analysis of formularies and drug utilization patterns. |
| ClinicalTrials.gov API | Allows programmatic access to trial data for pipeline analysis [37]. | Enables empirical tracking of how new therapeutic agents are defined and categorized in research. |
| RxNorm Database | Provides standardized normalized names for clinical drugs and links to many drug vocabularies. | Serves as a critical terminology bridge for mapping drugs across different classification systems and datasets. |
| DrugBank | A comprehensive bioinformatics and chemoinformatics resource on drugs and drug mechanisms. | Used to verify and research the mechanism of action and therapeutic intent of pipeline agents [37]. |
| Reliability Engineering Models | Models (e.g., Markov) used to assess system performance over time under degradation [35] [38]. | Provides a conceptual framework for evaluating the stability and failure modes of a classification system over its update cycles. |

Logical Framework and Pathways for System Selection

The decision of which classification system to use is not arbitrary. It should be guided by a logical framework that aligns the system's characteristics with the user's primary objectives, whether for regulatory compliance, clinical research, or commercial formulary management.

Diagram 2: System Selection Logic. A decision pathway for choosing between USP MMG and USP DC.

The choice between drug classification systems like the USP MMG and USP DC has a profound and measurable impact on data interpretation and regulatory decisions. The evidence demonstrates that the USP DC, with its annual update cycle and more granular four-tiered structure, offers greater adaptability and precision for dynamic environments like commercial formulary management and contemporary clinical research. Conversely, the USP MMG provides the stability and specific compliance framework required for the federally regulated Medicare Part D program.
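The selection logic summarized above can be expressed as a small lookup. This is only a sketch of the rule of thumb stated in the preceding paragraph; the function name and the use-case keys are hypothetical labels introduced here, and a real decision would weigh more factors than a single primary objective.

```python
def recommend_system(use_case: str) -> str:
    """Map a primary objective to the system the comparison favors."""
    # Keys are illustrative labels, not official terminology.
    recommendations = {
        "medicare_part_d": "USP MMG",       # stability and Part D compliance
        "commercial_formulary": "USP DC",   # annual updates, finer granularity
        "clinical_research": "USP DC",
    }
    try:
        return recommendations[use_case]
    except KeyError:
        raise ValueError(f"no recommendation defined for {use_case!r}") from None


print(recommend_system("medicare_part_d"))
print(recommend_system("clinical_research"))
```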

The reliability of any phased classification system hinges on its design and maintenance. Stakeholders must engage proactively with standards-setting organizations like USP during public comment periods to ensure these vital tools evolve with the scientific landscape [36]. By applying the comparative data, experimental protocols, and logical frameworks presented here, researchers, scientists, and drug development professionals can make strategic, evidence-based classification choices that ultimately enhance the reliability of their work and promote better patient outcomes.

From Theory to Practice: Implementing Classification Systems in Research and Clinics

Clinical trials represent the cornerstone of modern medical research, providing a structured framework for evaluating new treatments, medications, and medical devices. The traditional clinical development pathway proceeds through four sequential phases (I-IV), each serving distinct objectives in establishing a therapeutic agent's safety and efficacy profile [1]. This phased classification system has evolved over decades in response to scientific, ethical, and regulatory developments, creating a standardized language for researchers, regulators, and drug development professionals worldwide [39].

The reliability of this phase classification system rests on its systematic approach to risk management and evidence generation. Each phase functions as a gatekeeping mechanism, requiring candidate therapies to meet increasingly stringent evidence thresholds before progressing further [39]. This structured evaluation process aims to balance scientific rigor with ethical considerations, ensuring that human subjects are not exposed to unnecessary risks while facilitating the development of promising therapies. Understanding the operational specifics of each phase—including their unique objectives, dosage considerations, and population parameters—is fundamental to evaluating the reliability and applicability of this classification framework in contemporary drug development.

Comparative Analysis of Clinical Trial Phases

The established four-phase model represents a progression from initial safety assessment in small groups to post-marketing surveillance in diverse populations. Each phase builds upon knowledge gained in previous stages, with decision points between phases determining whether a drug candidate advances further in development [1]. The following comparative analysis examines the operational parameters across this developmental continuum.

Table 1: Core Characteristics of Clinical Trial Phases

| Phase | Primary Objectives | Typical Population Size | Population Type | Dosage Considerations | Key Endpoints |
|---|---|---|---|---|---|
| Phase I | Assess safety, tolerability, and pharmacokinetics; identify the maximum tolerated dose [1] [39] | 20-100 participants [1] | Healthy volunteers (patients instead when the therapy is toxic, as in oncology) [1] [39] | Dose-escalation designs to determine safe dosage range [1] | Safety/adverse events; pharmacokinetic parameters; maximum tolerated dose [1] |
| Phase II | Evaluate preliminary efficacy and further assess safety profile [1] [40] | 100-300 patients [1] [40] | Patients with the target disease or condition [40] | Uses dose identified in Phase I; may compare multiple doses [40] | Efficacy signals; side effect profile; optimal dosing [1] |
| Phase III | Confirm efficacy, monitor adverse reactions, compare to standard treatments [1] [41] | 300-3,000+ participants [1] [41] | Large patient populations with the condition, often across multiple sites [41] | Optimal dose from Phase II; compared against control treatments [41] | Clinical efficacy on primary endpoints; safety in diverse populations; risk-benefit assessment [41] |
| Phase IV | Monitor long-term safety and effectiveness in real-world settings [1] | Variable, often several thousand [1] | Broad patient populations in real-world clinical practice [1] | Approved dosage under real-world conditions [1] | Rare adverse events; long-term outcomes; effectiveness in broader populations [1] |

Table 2: Success Rates and Timeline Considerations

| Phase | Typical Duration | Reported Success Rate | Regulatory Focus |
|---|---|---|---|
| Phase I | Several months [1] | Overall rather than phase-to-phase: approximately 5-14% of treatments complete all phases and receive approval [1] | Initial human safety, dose-ranging [1] |
| Phase II | Several months to 2 years [1] | ~33% of drugs move from Phase II to Phase III [12] | Preliminary efficacy, continued safety [40] |
| Phase III | 1-4 years [1] | 50-60% chance of approval at Phase III stage [41] | Definitive efficacy evidence for marketing approval [41] |
| Phase IV | Continuous monitoring (no set duration) [1] | N/A (post-approval phase) | Post-marketing safety surveillance [1] |

Experimental Protocols and Methodological Approaches

Phase I Trial Methodologies

Phase I trials employ specialized designs to determine safe dosage ranges and characterize initial human safety profiles. Traditional dose-escalation designs include the 3+3 design, where small cohorts of 3 participants receive increasing dose levels until predetermined toxicity thresholds are reached [42]. Modern approaches have evolved to include model-based designs such as the Continual Reassessment Method (CRM), Bayesian Optimal Interval (BOIN) design, and modified Toxicity Probability Interval (mTPI-2) methods, which use statistical modeling to improve efficiency in identifying the maximum tolerated dose (MTD) [42].
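The cohort-by-cohort logic of the 3+3 design can be written as a short decision function. The thresholds below follow the commonly described version of the rule (escalate on 0 of 3 dose-limiting toxicities, expand to 6 on 1 of 3, stop on 2 or more, and escalate after expansion only if at most 1 of 6 is observed); they are stated here as an assumption, since the text above does not spell them out, and individual protocols may differ.

```python
def three_plus_three_decision(dlts: int, cohort_size: int) -> str:
    """Return the next action at the current dose level.

    dlts: number of dose-limiting toxicities observed so far at this dose.
    cohort_size: 3 for the initial cohort, 6 after expansion.
    """
    if not 0 <= dlts <= cohort_size:
        raise ValueError("dlts must be between 0 and cohort_size")
    if cohort_size == 3:
        if dlts == 0:
            return "escalate"   # no toxicity seen: move to the next dose
        if dlts == 1:
            return "expand"     # ambiguous: treat 3 more at the same dose
        return "stop"           # 2 or more of 3: dose exceeds tolerability
    if cohort_size == 6:
        # After expansion, at most 1 of 6 permits escalation.
        return "escalate" if dlts <= 1 else "stop"
    raise ValueError("cohort_size must be 3 or 6")


print(three_plus_three_decision(0, 3))  # escalate
print(three_plus_three_decision(1, 3))  # expand
print(three_plus_three_decision(2, 6))  # stop
```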

The MTD is typically defined as the highest dose at which the probability of dose-limiting toxicity (DLT) is close to or does not exceed a target toxicity rate (pT), often set at pT = 0.30 for oncology trials [42]. Bayesian statistical frameworks have been developed for sample size planning in Phase I trials, with methods like BayeSize using effect size concepts in dose-finding and operating under constraints of statistical power and type I error rates [42]. These methodologies employ composite hypothesis testing, comparing H0 (none of the doses is the MTD) against H1 (one of the doses is the MTD), to determine appropriate sample sizes under specified statistical constraints [42].
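As a small illustration of the MTD definition, the sketch below picks the highest dose level whose estimated DLT probability does not exceed the target rate. It implements only the "does not exceed" reading of the definition; selecting the dose closest to pT is a separate convention not shown here. The function name and the DLT estimates are hypothetical.

```python
from typing import Optional, Sequence


def select_mtd(dlt_probs: Sequence[float], target: float = 0.30) -> Optional[int]:
    """Return the index of the highest dose with estimated P(DLT) <= target.

    dlt_probs is ordered from lowest to highest dose. Returns None when
    every dose exceeds the target toxicity rate.
    """
    eligible = [i for i, p in enumerate(dlt_probs) if p <= target]
    return max(eligible) if eligible else None


# Hypothetical estimates for four dose levels, invented for illustration.
estimates = [0.05, 0.12, 0.28, 0.45]
print(select_mtd(estimates))          # 2 (third dose level)
print(select_mtd([0.40, 0.55]))       # None
```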

Phase II Trial Design Frameworks

Phase II trials serve as critical "go/no-go" decision points, determining whether a treatment demonstrates sufficient promise to warrant further investigation in large-scale Phase III trials [12]. Single-arm trials with historical controls are commonly employed, particularly in oncology, where objective response rate (ORR) based on RECIST criteria has traditionally been the primary endpoint [12]. Simon's two-stage design is a widely implemented approach that minimizes patient exposure to ineffective agents by incorporating an interim futility analysis after enrollment of a relatively small number of patients (<30) [12].
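The operating characteristics of a Simon two-stage design can be computed directly from binomial probabilities. In the sketch below, the trial stops for futility if at most `r1` responses are seen among the first `n1` patients, and the agent is declared promising only if more than `r` responses are seen among all `n` patients. The specific design (r1/n1 = 1/10, r/n = 5/29) and the response rates (0.10 under the null, 0.30 under the alternative) are illustrative assumptions, not values taken from the studies cited above.

```python
from math import comb


def binom_pmf(k: int, n: int, p: float) -> float:
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def binom_cdf(k: int, n: int, p: float) -> float:
    """P(X <= k) for X ~ Binomial(n, p); zero when k is negative."""
    return sum(binom_pmf(i, n, p) for i in range(0, k + 1))


def simon_two_stage(p: float, r1: int, n1: int, r: int, n: int) -> dict:
    """Operating characteristics at true response rate p."""
    n2 = n - n1
    pet = binom_cdf(r1, n1, p)  # probability of early termination
    promising = 0.0
    for x1 in range(r1 + 1, n1 + 1):  # trial continues to stage 2
        needed = r - x1               # stage-2 responses must exceed this
        tail = 1.0 - binom_cdf(needed, n2, p) if needed >= 0 else 1.0
        promising += binom_pmf(x1, n1, p) * tail
    return {
        "pet": pet,
        "declare_promising": promising,
        "expected_n": n1 + (1 - pet) * n2,
    }


null = simon_two_stage(0.10, r1=1, n1=10, r=5, n=29)
alt = simon_two_stage(0.30, r1=1, n1=10, r=5, n=29)
print(round(null["pet"], 3), round(null["expected_n"], 1))
print(round(null["declare_promising"], 3), round(alt["declare_promising"], 3))
```

Under these assumed inputs, the probability of stopping after the first 10 patients is about 0.74 when the true response rate is 0.10, giving an expected sample size of roughly 15. Here `declare_promising` plays the role of the type I error rate under the null rate and of power under the alternative rate.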

Randomized Phase II trials are increasingly employed to provide more robust efficacy signals and reduce reliance on historical controls, which may introduce bias due to differences in populations, standard-of-care, or assessment methods [12]. Endpoint selection has expanded beyond tumor response to include time-to-event endpoints such as progression-free survival (PFS), particularly for combination therapies and molecularly targeted agents where disease stabilization may be more relevant than tumor shrinkage [12]. Phase II trials also generate insights on adverse event management, treatment tolerability, and optimal regimens for future study [12].

Phase III Trial Design Considerations

Phase III trials employ rigorous methodological approaches to generate definitive evidence for regulatory approval. Randomization is a cornerstone principle, typically implemented through computer-generated algorithms that assign participants to treatment or control groups, often with stratification by key prognostic factors (age, disease severity, biomarkers) to reduce variability and improve statistical power [41]. Blinding procedures—particularly double-blinding where neither investigator nor participant knows the treatment assignment—are implemented to minimize bias in outcome assessment, especially for subjective endpoints [41].
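One common way to implement computer-generated, stratified allocation is permuted-block randomization within each stratum. The sketch below uses that technique as an assumption; the text above does not name a specific algorithm. The stratum labels, block size, arm names, and seed are hypothetical, and a production system would also need allocation concealment and audit logging, which are omitted here.

```python
import random
from collections import Counter


def permuted_block(block_size, arms, rng):
    """Return one shuffled block containing each arm equally often."""
    if block_size % len(arms) != 0:
        raise ValueError("block_size must be a multiple of the number of arms")
    block = list(arms) * (block_size // len(arms))
    rng.shuffle(block)
    return block


def stratified_schedule(strata, blocks_per_stratum, block_size=4,
                        arms=("treatment", "control"), seed=2024):
    """Build an independent permuted-block sequence for every stratum."""
    rng = random.Random(seed)  # fixed seed so the schedule is reproducible
    schedule = {}
    for stratum in strata:
        assignments = []
        for _ in range(blocks_per_stratum):
            assignments.extend(permuted_block(block_size, arms, rng))
        schedule[stratum] = assignments
    return schedule


# Hypothetical strata defined by one prognostic factor (disease severity).
schedule = stratified_schedule(["mild", "severe"], blocks_per_stratum=2)
for stratum, assignments in schedule.items():
    print(stratum, Counter(assignments))
```

Because every block contains each arm equally often, the arms stay balanced within each stratum after every completed block, which is the property that stratification is meant to protect.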

Control groups may utilize placebo controls or active comparators, with selection dependent on ethical considerations and disease context [41]. For severe or life-threatening conditions where withholding effective treatment would be unethical, active-controlled trials are mandatory [41]. Sample size determination is based on power calculations that incorporate expected effect sizes, dropout rates, outcome variability, and target power thresholds (typically 80-90%) [41]. Endpoint specification requires careful pre-definition of clinically relevant, statistically valid primary endpoints that reflect meaningful patient benefit, with hierarchical testing strategies to control type I error when assessing multiple endpoints [41].

Figure 1: Clinical Trial Phase Progression Pathway

Sample Size Determination Methodologies

Statistical Foundations for Sample Size Planning

Sample size determination represents a critical methodological consideration across all trial phases, balancing statistical requirements with practical constraints. For definitive Phase III trials, frequentist approaches traditionally dominate, with sample size chosen to control type I error (typically α=0.05) and achieve specified power (usually 80-90%) to detect a predefined treatment effect size [43]. However, this approach has limitations, particularly in that it does not explicitly incorporate the size of the target population who might benefit from the treatment—a consideration especially relevant in rare diseases or small populations where large trials may be infeasible [43].
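The frequentist calculation can be illustrated with the standard normal-approximation formula for comparing two means with equal allocation, n per arm = 2(z_{1-α/2} + z_{1-β})² σ² / δ². This is a minimal sketch under stated assumptions: a continuous endpoint, a two-sided test, a known common standard deviation, and a simple inflation for dropout. The function name and example inputs are hypothetical, and binary or time-to-event endpoints require different formulas.

```python
from math import ceil
from statistics import NormalDist


def n_per_arm(delta: float, sigma: float, alpha: float = 0.05,
              power: float = 0.80, dropout: float = 0.0) -> int:
    """Participants to enroll per arm for a two-sided two-sample z-test.

    delta: smallest treatment difference worth detecting.
    sigma: assumed common standard deviation of the outcome.
    dropout: anticipated fraction of participants lost to follow-up.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    n_evaluable = 2 * ((z_alpha + z_beta) * sigma / delta) ** 2
    # Inflate so the expected number of completers still meets the target.
    return ceil(n_evaluable / (1 - dropout))


print(n_per_arm(delta=0.5, sigma=1.0))                # 63
print(n_per_arm(delta=0.5, sigma=1.0, power=0.90))    # 85
print(n_per_arm(delta=0.5, sigma=1.0, dropout=0.10))  # 70
```

The example shows how sensitive the result is to the chosen power: moving from 80% to 90% raises the requirement from 63 to 85 participants per arm for the same effect size.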

Decision-theoretic approaches offer an alternative framework that incorporates explicit consideration of the patient horizon—the size of the population who might benefit from the treatment—in sample size determination [43]. These methods aim to maximize the total expected utility, which includes benefits to both trial participants and future patients who will receive the treatment based on trial results [43]. Asymptotic analysis indicates that as the population size N becomes large, the optimal trial size in such frameworks is O(√N), providing mathematical insight into the relationship between population size and efficient trial sizing [43].

Bayesian Methods in Sample Size Determination

Bayesian methods have emerged as valuable approaches for sample size planning, particularly in early-phase trials. For Phase I dose-finding trials, Bayesian designs such as the CRM, BOIN, and mTPI-2 use prior distributions updated with accumulating trial data to guide dose escalation and MTD identification [42]. The BayeSize method employs a Bayesian hypothesis testing framework, using two types of priors—fitting priors (for model fitting) and sampling priors (for data generation)—to conduct sample size calculation under constraints of statistical power and type I error [42].
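The idea these designs share, a prior on the toxicity rate updated as DLT data accumulate, can be shown with a conjugate Beta-binomial update at a single dose. The sketch is not an implementation of CRM, BOIN, mTPI-2, or BayeSize; it illustrates only the updating step. The uniform Beta(1, 1) prior and the observed counts are hypothetical, and the tail-probability helper is restricted to positive integer Beta parameters so that it can use an exact binomial identity instead of numerical integration.

```python
from math import comb


def update_beta(a: int, b: int, dlts: int, n: int) -> tuple:
    """Posterior Beta parameters after observing dlts toxicities in n patients."""
    return a + dlts, b + n - dlts


def beta_mean(a: int, b: int) -> float:
    return a / (a + b)


def beta_tail(a: int, b: int, x: float) -> float:
    """P(p > x) for p ~ Beta(a, b), valid for positive integer a and b.

    Uses the identity P(p <= x) = P(Binomial(a + b - 1, x) >= a).
    """
    m = a + b - 1
    cdf = sum(comb(m, j) * x**j * (1 - x) ** (m - j) for j in range(a, m + 1))
    return 1 - cdf


# Hypothetical data: 1 DLT among 6 patients, starting from a uniform prior.
a_post, b_post = update_beta(1, 1, dlts=1, n=6)
print(a_post, b_post)                             # 2 6
print(beta_mean(a_post, b_post))                  # 0.25
print(round(beta_tail(a_post, b_post, 0.30), 3))  # 0.329
```

With these assumed inputs, the posterior mean DLT rate is 0.25, and roughly a third of the posterior mass still lies above a target rate of 0.30, which is the kind of quantity a model-based design would feed into its escalation rule.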