Direct Measurement vs. Estimation in Drug Development: A Critical Comparison for Enhancing Research Rigor and Success

Abstract

This article provides a critical examination of the methodologies of direct measurement versus estimation across the drug development lifecycle. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of both approaches, their practical applications from discovery to post-market surveillance, and strategies for troubleshooting common pitfalls. By presenting a comparative validation of their impact on data integrity, regulatory success, and return on investment, this review offers a strategic framework for making evidence-based methodological decisions to de-risk development and accelerate the delivery of innovative therapies.

The Pillars of Precision: Defining Direct Measurement and Estimation in Biomedical Research

In menstrual cycle research, the accurate determination of cycle phases is fundamental to investigating how hormonal fluctuations influence physiological and psychological outcomes. The methodological approaches to phase identification fall into two distinct categories: direct measurement through biochemical analysis or imaging, and informed estimation based on assumptions and proxy indicators. This guide compares these core methodologies, providing researchers with the experimental data and protocols needed to select appropriate techniques for their specific scientific objectives.

Defining the Core Methodologies

Direct Measurement

Direct measurement involves quantifying biological variables through objective, empirical methods to precisely identify menstrual cycle phases. This approach provides the highest level of accuracy by directly assessing hormonal concentrations or physiological events. [1] [2]

Informed Estimation

Informed estimation utilizes proxy measures, calculations, and assumptions to infer cycle phases without direct biochemical or imaging confirmation. These methods rely on established patterns and statistical predictions. [1] [3]

Experimental Protocols for Direct Measurement

Quantitative Hormone Monitoring Protocol

The Quantum Menstrual Health Monitoring Study establishes a gold standard protocol for direct hormonal measurement: [2]

Objective: To characterize patterns in urine reproductive hormones (FSH, E13G, LH, PDG) that predict and confirm ovulation, referenced to serum hormones and ultrasound.

Design: Prospective cohort with longitudinal follow-up tracking urinary hormones with serum correlations and ultrasound-confirmed ovulation.

Participants: Three groups - regular cycles (24-38 days), polycystic ovarian syndrome with irregular cycles, and athletes with irregular cycles.

Methods:

- Daily urine samples analyzed with Mira monitor for FSH, E13G, LH, PDG

- Serial ultrasounds for follicular tracking and ovulation confirmation

- Serum hormone measurements for correlation

- Anti-Müllerian hormone levels for ovarian reserve context

- Bleeding patterns tracked via validated Mansfield-Voda-Jorgensen Menstrual Bleeding Scale

Sample Size: 50 participants followed over 3 cycles each (150 total cycles) provide 80% power to detect differences of 0.5 days in estimated ovulation day. [2]

Ovarian Hormone Assessment Protocol

For studies requiring precise hormone documentation: [1] [4]

Ovulation Confirmation:

- Serum progesterone ≥9.5 nmol/L (≥3 ng/mL) during luteal phase

- Alternative: Quantitative Basal Temperature (QBT) tracking validated against LH surge

- Direct LH surge measurement in urine

Cycle Phase Timing:

- Follicular phase: From menses onset through ovulation day

- Luteal phase: From post-ovulation through day before next menses

- Phases confirmed via hormone levels rather than calendar estimates
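The progesterone criterion above can be expressed as a short check. The following is a minimal sketch, assuming serum values may arrive in either ng/mL or nmol/L. The function names are illustrative, and the conversion uses the molar mass of progesterone (about 314.46 g/mol), which is not stated in the protocol itself.

```python
# Molar mass of progesterone (C21H30O2) in g/mol; used only for unit conversion.
PROGESTERONE_MOLAR_MASS = 314.46

# Ovulation-confirmation threshold quoted in the protocol above.
OVULATION_THRESHOLD_NMOL_L = 9.5


def ng_ml_to_nmol_l(ng_per_ml: float) -> float:
    """Convert a serum progesterone value from ng/mL to nmol/L."""
    # ng/mL equals ug/L; dividing by g/mol gives umol/L, and x1000 gives nmol/L.
    return ng_per_ml * 1000.0 / PROGESTERONE_MOLAR_MASS


def ovulation_confirmed(progesterone_nmol_l: float) -> bool:
    """Return True when a luteal-phase serum value meets the threshold."""
    return progesterone_nmol_l >= OVULATION_THRESHOLD_NMOL_L
```

Converting 3 ng/mL this way gives roughly 9.5 nmol/L, which is why the two thresholds in the protocol are treated as equivalent.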

Experimental Protocols for Informed Estimation

Calendar-Based Calculation Method

This approach relies on temporal assumptions without biochemical confirmation: [1] [3]

Standardized Cycle Day Coding:

- Forward-count method: Days 1-10 from menstrual onset

- Backward-count method: Days -1 to -10 preceding next menstruation

- Requires two "bookend" menstrual start dates

Phase Estimation:

- Follicular phase: First 14 days of 28-day model cycle

- Luteal phase: Final 14 days of 28-day model cycle

- Assumes consistent 13.3-day luteal phase (SD=2.1 days)

Limitations: Only 3% of cycle length variance attributable to luteal phase variance; 69% attributable to follicular phase length variation. [1]
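The forward-count and backward-count coding described above can be sketched as follows. This is an illustrative example rather than a validated implementation: the function names are hypothetical, and the ovulation estimate simply subtracts the 13.3-day population-average luteal length from the observed cycle length, which is exactly the assumption the limitation above cautions against applying to individuals.

```python
from datetime import date

# Population-average luteal length quoted above; an assumption for any individual.
ASSUMED_LUTEAL_DAYS = 13.3


def code_cycle_day(day: date, menses_start: date, next_menses_start: date) -> dict:
    """Return forward and backward cycle-day codes for a date between two bookend onsets."""
    if not menses_start <= day < next_menses_start:
        raise ValueError("day must fall within the bookended cycle")
    forward = (day - menses_start).days + 1      # day 1 = first day of menses
    backward = (day - next_menses_start).days    # day -1 = day before next menses
    return {"forward": forward, "backward": backward}


def estimated_ovulation_forward_day(menses_start: date, next_menses_start: date) -> float:
    """Estimate the ovulation day (forward count) from cycle length and the assumed luteal length."""
    cycle_length = (next_menses_start - menses_start).days
    return cycle_length - ASSUMED_LUTEAL_DAYS
```

For a 28-day cycle the estimate falls between forward days 14 and 15. The backward count is only available once the next menstrual onset has been recorded, which is why two bookend dates are required.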

Symptothermal and Proxy Methods

Combining multiple estimation approaches: [1] [3]

Basal Body Temperature (BBT):

- Measures progesterone-mediated thermogenic effect

- Rise of 0.3-0.5°C indicates ovulation occurrence

- Limited for prediction; only confirms post-ovulation

Cervical Mucus Observations:

- Quality changes throughout cycle

- Most fertile quality near ovulation

Cycle Length Assumptions:

- Regular cycles defined as 21-35 days

- Uses population averages for phase timing
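The temperature-shift rule for BBT can be illustrated with a small sketch. The 0.3 °C minimum rise comes from the text above; the six-day baseline and three-day confirmation window are assumptions chosen for illustration, not parameters taken from the cited sources.

```python
from statistics import mean


def detect_bbt_shift(temps_c, baseline_days=6, confirm_days=3, min_rise_c=0.3):
    """Return the index of the first day of a sustained temperature rise, or None.

    A shift is flagged when `confirm_days` consecutive readings all exceed the
    mean of the preceding `baseline_days` readings by at least `min_rise_c`.
    """
    for i in range(baseline_days, len(temps_c) - confirm_days + 1):
        baseline = mean(temps_c[i - baseline_days:i])
        window = temps_c[i:i + confirm_days]
        if all(t - baseline >= min_rise_c for t in window):
            return i
    return None
```

Because the rule needs several elevated readings before it fires, it can only confirm ovulation retrospectively, consistent with the limitation noted above.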

Comparative Experimental Data

Accuracy Metrics for Phase Identification Methods

Table 1: Comparison of Methodological Accuracy for Ovulation Detection

| Method | Gold Standard Reference | Detection Capability | Error Range | Practical Limitations |
|---|---|---|---|---|
| Transvaginal Ultrasound | Direct visualization | Pre-ovulatory follicle growth + ovulation confirmation | ±0 days | Resource-intensive, requires multiple visits |
| Serum Progesterone | Biochemical confirmation | Post-ovulation confirmation (≥9.5 nmol/L) | Laboratory variability | Cannot predict ovulation timing |
| Urinary LH Monitoring | LH surge correlation | Predicts ovulation 24-36 hours prior | ±12-24 hours | Misses anovulatory cycles |
| Quantitative Basal Temperature | Validated against LH surge | Confirms ovulation after occurrence | ±1-2 days | Cannot predict ovulation timing |
| Calendar Calculation | Statistical averages | Estimates based on population norms | ±3-5 days | High individual variability |

Hormonal Correlation Data

Table 2: Validation Data for Quantitative Urinary Hormone Monitoring

| Hormone | Biological Role in Cycle | Correlation with Serum | Pattern for Phase Identification | Clinical Utility |
|---|---|---|---|---|
| Luteinizing Hormone (LH) | Triggers ovulation | r=0.85-0.92 with serum LH | Surge precedes ovulation by 24-36 hours | Prediction of ovulation |
| Pregnanediol Glucuronide (PDG) | Urinary metabolite of progesterone | r=0.79-0.88 with serum progesterone | Rises after ovulation, peaks mid-luteal | Confirmation of ovulation |
| Estrone-3-Glucuronide (E13G) | Urinary estrogen metabolite | r=0.80-0.90 with serum estradiol | Rises through follicular phase, peaks peri-ovulatory | Follicular development tracking |
| Follicle-Stimulating Hormone (FSH) | Follicle development stimulation | r=0.75-0.85 with serum FSH | Early follicular rise, suppressed in luteal phase | Ovarian reserve assessment |

Methodological Workflows

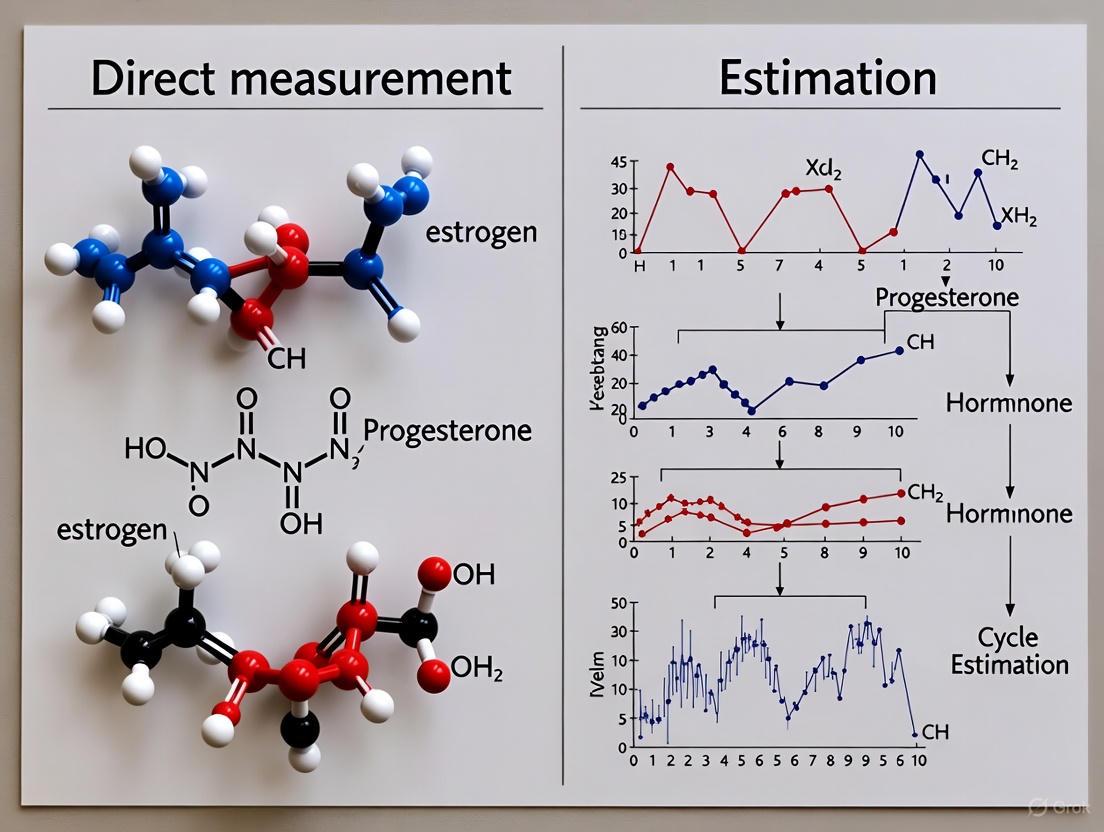

Diagram 1: Methodological pathways for menstrual cycle phase identification showing direct measurement and informed estimation approaches with their respective applications.

Research Reagent Solutions

Table 3: Essential Materials and Methods for Menstrual Cycle Phase Research

| Research Tool | Specific Function | Methodological Category | Key Specifications |
|---|---|---|---|
| Mira Fertility Monitor | Quantitative urine hormone measurement | Direct Measurement | Measures FSH, E13G, LH, PDG with smartphone integration |
| AliveCor KardiaMobile | Electrocardiographic recordings | Direct Measurement | 6-lead ECG for physiological monitoring across cycles |
| Serum Progesterone Assay | Ovulation confirmation | Direct Measurement | Threshold ≥9.5 nmol/L for confirmed ovulation |
| Digital Basal Thermometer | Temperature shift detection | Informed Estimation | Precision ±0.1°C for Quantitative Basal Temperature method |
| Transvaginal Ultrasound | Follicular development tracking | Direct Measurement | Gold standard for ovulation day identification |
| Menstrual Cycle Diary | Symptom and bleeding pattern tracking | Informed Estimation | Structured documentation for cycle characteristics |
| LH Surge Test Kits | Urinary luteinizing hormone detection | Direct Measurement | Predicts ovulation 24-36 hours prior to occurrence |

The distinction between direct measurement and informed estimation represents a fundamental methodological divide in menstrual cycle research. Direct measurement approaches, including quantitative hormone monitoring and ultrasound confirmation, provide precision essential for drug development and mechanistic studies where temporal accuracy is critical. Informed estimation methods, utilizing calendar calculations and proxy indicators, offer practical alternatives for large-scale studies or clinical applications where resource constraints preclude intensive monitoring. The experimental data presented in this guide enables researchers to make evidence-based decisions about methodological approaches based on their specific precision requirements, resource availability, and research objectives. As the field advances, standardized application of these core methodologies will enhance reproducibility and facilitate more meaningful comparisons across menstrual cycle studies.

The selection of a research methodology is a pivotal decision that extends far beyond mere technical preference, directly influencing data integrity, the validity of scientific conclusions, and the financial viability of research-dependent enterprises. Nowhere are these stakes more apparent than in the field of menstrual cycle phase research, which serves as a powerful case study for a broader scientific challenge: the critical trade-offs between direct measurement and estimation-based approaches. In disciplines ranging from women's health to drug development, the choice between these methodological paths carries profound implications for both scientific accuracy and resource allocation.

The menstrual cycle, characterized by complex, dynamic hormonal interactions, presents a particular challenge for researchers. While the acceleration of female-specific research is a welcome development, a concerning trend has emerged wherein assumed or estimated menstrual cycle phases are increasingly used to characterize ovarian hormone profiles [5]. This practice, often proposed as a pragmatic solution for field-based research in elite athlete environments where time and resources are constrained, essentially amounts to guessing the occurrence and timing of critical hormonal fluctuations [5]. Such methodological shortcuts risk significant consequences for understanding female athlete health, training adaptations, performance outcomes, and injury patterns, while simultaneously impacting the efficient deployment of research resources.

This guide provides a comprehensive comparison of methodological approaches in menstrual cycle research, with a specific focus on the rigorous comparison of direct hormonal measurement against emerging estimation techniques, particularly those leveraging wearable devices and machine learning. By synthesizing current evidence, detailing experimental protocols, and presenting quantitative performance data, we aim to equip researchers, scientists, and drug development professionals with the analytical framework necessary to make informed methodological choices that balance scientific rigor with practical constraints.

Methodological Foundations: Direct Measurement vs. Estimation

The fundamental division in menstrual cycle phase determination lies between approaches that directly quantify biological markers and those that infer cycle status through estimation.

The Gold Standard: Direct Measurement

Direct measurement methodologies involve the quantitative assessment of hormonal or physiological biomarkers to pinpoint menstrual cycle phases with high specificity. These approaches are characterized by their high analytical validity and provide the definitive evidence required for establishing causal relationships between hormonal status and physiological outcomes.

Core Physiological Principles: The menstrual cycle is orchestrated by three inter-related cycles: the ovarian cycle (lifecycle of an oocyte), the hormonal cycle (fluctuations in ovarian hormones), and the endometrial cycle (changes in the uterine lining) [5]. For research purposes, the hormonal cycle is most relevant, with a eumenorrheic (healthy) cycle defined by specific parameters: cycle lengths between 21-35 days, nine or more consecutive periods annually, evidence of a luteinizing hormone (LH) surge, and an appropriate progesterone profile during the luteal phase [5]. It is critical to note that regular menstruation and cycle length alone do not guarantee a eumenorrheic hormonal profile, as subtle disturbances like anovulation or luteal phase deficiency can remain undetected without direct measurement [5].

Key Direct Measurement Protocols:

- Urinary Luteinizing Hormone (LH) Detection: Identifies the LH surge that precedes ovulation by approximately 24-36 hours, providing a clear marker of imminent ovulation and peak fertility.

- Serum or Salivary Progesterone Assessment: Confirms ovulation and assesses luteal phase sufficiency through quantitative measurement of progesterone levels, typically sampled 5-7 days post-ovulation.

- Estrogen Metabolite Tracking: Monitors estrone-3-glucuronide (E3G) levels in urine to track follicular development and the estrogen rise preceding ovulation.

- Combined Hormonal Profiling: Utilizes multiple synchronized measurements (e.g., LH, E3G, and pregnanediol glucuronide PdG) throughout the cycle to comprehensively characterize hormonal dynamics [6].

The Emerging Paradigm: Estimation and Prediction

Estimation methodologies attempt to determine menstrual cycle phases through indirect means, ranging from simple calendar-based calculations to sophisticated machine learning algorithms processing physiological data from wearable devices.

Calendar-Based Methods: The simplest estimation approach relies on counting days from the onset of menstruation and applying population-average assumptions about phase timing. This method suffers from significant limitations as it cannot account for inter- and intra-individual variability in cycle length and phase duration, nor can it detect anovulatory cycles or luteal phase defects [5].

Wearable Device-Based Machine Learning: Advanced estimation approaches utilize continuous physiological data from wearable sensors, processed through machine learning algorithms to classify cycle phases. These systems typically monitor parameters including:

- Heart Rate (HR) and Heart Rate Variability (HRV)

- Skin Temperature and Core Body Temperature

- Sleep Metrics

- Electrodermal Activity (EDA)

- Respiratory Rate [7] [8]

The underlying premise is that hormonal fluctuations throughout the menstrual cycle produce detectable changes in these autonomic and physiological parameters, creating signatures that machine learning models can learn to recognize.

Comparative Performance Analysis

Rigorous evaluation of both methodological approaches reveals significant differences in accuracy, reliability, and applicability across research contexts.

Accuracy and Reliability Metrics

Table 1: Performance Comparison of Menstrual Phase Identification Methods

| Methodological Approach | Reported Accuracy | Phase Classification Capability | Key Limitations |
|---|---|---|---|
| Direct Hormonal Measurement | Not applicable (gold standard) | Definitive identification of all phases | Requires participant compliance with sample collection; higher resource burden |
| Machine Learning (Wearable Data - 3 phases) | 87% accuracy, AUC-ROC: 0.96 [7] | Period, Ovulation, Luteal | Reduced performance with irregular cycles |
| Machine Learning (Wearable Data - 4 phases) | 68% accuracy, AUC-ROC: 0.77 [7] | Period, Follicular, Ovulation, Luteal | Challenging to distinguish follicular phase |
| Calendar-Based Estimation | Not validated | Limited to menstruation vs. non-menstruation | Cannot confirm ovulation or detect luteal phase; high error rate |
| minHR + XGBoost Model | Significantly improves luteal phase recall vs. BBT [8] | Luteal phase classification, ovulation prediction | Specialized feature engineering required |

Table 2: Technical and Resource Requirement Comparison

| Parameter | Direct Measurement | Machine Learning Estimation |
|---|---|---|
| Financial Cost | High (assay kits, laboratory analysis) | Moderate (device cost, computational resources) |
| Participant Burden | High (frequent sample collection) | Low (passive data collection) |
| Technical Expertise Required | Laboratory techniques, biochemical analysis | Data science, machine learning, signal processing |
| Data Latency | Hours to days (processing time) | Near real-time (potential for immediate feedback) |
| Scalability | Limited by cost and labor | Highly scalable once model is trained |

Contextual Strengths and Limitations

The performance data reveals that while machine learning approaches show promise, particularly for classifying three main cycle phases, they currently cannot match the precision of direct hormonal measurement for definitive phase identification. The decline in accuracy from 87% for three-phase classification to 68% for four-phase classification highlights the particular challenge in distinguishing the follicular phase from other cycle phases [7]. This limitation is significant for research requiring precise timing of interventions relative to specific hormonal milestones.
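A small sketch may help show why overall accuracy can mask weakness in a single phase. The labels below are toy values invented for illustration and are not data from the cited study; the helper names are likewise hypothetical.

```python
from collections import Counter


def accuracy(true_labels, predicted_labels):
    """Fraction of days whose predicted phase matches the reference phase."""
    correct = sum(t == p for t, p in zip(true_labels, predicted_labels))
    return correct / len(true_labels)


def per_phase_recall(true_labels, predicted_labels):
    """Recall for each phase: correctly labeled days divided by true days in that phase."""
    totals = Counter(true_labels)
    hits = Counter(t for t, p in zip(true_labels, predicted_labels) if t == p)
    return {phase: hits[phase] / totals[phase] for phase in totals}


# Toy example: one follicular day is mislabeled as luteal.
reference = ["period", "follicular", "follicular", "ovulation", "luteal", "luteal"]
predicted = ["period", "luteal", "follicular", "ovulation", "luteal", "luteal"]
overall = accuracy(reference, predicted)
by_phase = per_phase_recall(reference, predicted)
```

In this toy case most days are classified correctly overall, yet only half of the follicular days are recovered, which is the kind of phase-specific gap that a single accuracy figure does not reveal.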

The robustness of direct measurement is particularly valuable for detecting subtle menstrual disturbances, which have been reported in up to 66% of exercising females [5]. These disturbances, including anovulatory cycles and luteal phase deficiency, are often asymptomatic but represent potential precursors to more severe menstrual dysfunction and can profoundly impact research outcomes if undetected.

Emerging evidence suggests that combining multiple physiological parameters improves estimation accuracy. One study demonstrated that using heart rate at the circadian rhythm nadir (minHR) significantly improved luteal phase classification and ovulation prediction, particularly in individuals with high variability in sleep timing, where it outperformed traditional basal body temperature (BBT) tracking by reducing absolute errors in ovulation detection by 2 days [8].

Experimental Protocols and Methodological Implementation

Direct Measurement Protocol: Multi-Hormone Tracking

Objective: To definitively identify menstrual cycle phases through synchronized measurement of key reproductive hormones.

Materials and Reagents:

- Mira Plus Starter Kit or similar urinary hormone analyzer: Quantifies LH, E3G (estrogen metabolite), and PdG (progesterone metabolite) [6]

- Phlebotomy supplies (for serum progesterone verification): Venous blood collection equipment

- Salivary collection kits (as an alternative to serum): Salivettes or similar collection devices

- Electronic hormone data management system: Secure database for tracking results

Procedure:

- Baseline Assessment: Record participant demographics, including age, typical cycle length, and gynecological history.

- Cycle Day Determination: Instruct participants to record first day of menstruation as Cycle Day 1.

- Urinary Hormone Monitoring:

  - Begin daily testing from Cycle Day 7 until menstruation or confirmed ovulation.

  - Collect first morning urine samples for consistency.

  - Analyze samples for LH, E3G, and PdG according to device manufacturer instructions.

- Ovulation Confirmation: Identify LH surge (typically a ≥2.5-fold increase from baseline) with ovulation occurring 24-36 hours post-surge.

- Luteal Phase Verification:

  - Measure serum or salivary progesterone 5-7 days post-ovulation.

  - Confirm sufficient luteal phase with progesterone levels >3 ng/mL in saliva or >5 ng/mL in serum.

- Data Integration: Synchronize all hormonal measurements with cycle day and participant-reported symptoms.
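The surge criterion in the procedure above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the baseline is taken as the median of the first five daily readings, a choice made here for the example and not specified by the protocol, and the function names are hypothetical.

```python
from statistics import median


def detect_lh_surge(lh_values, baseline_days=5, fold_threshold=2.5):
    """Return the index of the first reading at or above fold_threshold x baseline, or None.

    The baseline is the median of the first `baseline_days` readings (an assumption).
    """
    if len(lh_values) <= baseline_days:
        return None
    baseline = median(lh_values[:baseline_days])
    if baseline <= 0:
        return None
    for i in range(baseline_days, len(lh_values)):
        if lh_values[i] / baseline >= fold_threshold:
            return i
    return None


def expected_ovulation_window_hours(surge_hour):
    """Return the (earliest, latest) expected ovulation time, 24-36 hours after the surge."""
    return (surge_hour + 24, surge_hour + 36)
```

If daily testing begins on Cycle Day 7, a surge found at index `i` corresponds to Cycle Day `7 + i`. A cycle in which no reading crosses the threshold returns `None`, which should prompt review for possible anovulation instead of a default phase assignment.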

Quality Control:

- Validate all point-of-care devices against laboratory standards annually.

- Implement duplicate testing for 10% of samples to ensure consistency.

- Establish standard operating procedures for sample collection, storage, and analysis.

Machine Learning Estimation Protocol: Multi-Modal Wearable Data

Objective: To classify menstrual cycle phases using physiological signals from wearable devices through machine learning algorithms.

Materials and Reagents:

- Wrist-worn wearable devices (e.g., Fitbit Sense, EmbracePlus, E4 wristband): Capable of continuous monitoring of HR, HRV, skin temperature, EDA, and accelerometry [7] [6]

- Data preprocessing and analysis platform: Python or R with relevant machine learning libraries (scikit-learn, TensorFlow)

- Cloud computing resources (for large dataset processing): AWS, Google Cloud, or Azure instances

Procedure:

- Data Collection:

  - Recruit participants meeting inclusion criteria (regular cycles, no hormonal contraception).

  - Distribute wearable devices with standardized wearing instructions.

  - Collect continuous physiological data for minimum of two complete menstrual cycles.

  - Implement ground truth validation through urinary LH testing or menstrual bleeding logs.

- Feature Engineering:

  - Extract time-domain features from physiological signals (mean, standard deviation HR, etc.).

  - Calculate circadian rhythm parameters, including heart rate at circadian nadir (minHR) [8].

  - Generate rolling window statistics to capture temporal patterns.

  - Normalize features to account for inter-individual variability.

- Model Training:

  - Implement Random Forest, XGBoost, or neural network architectures.

  - Adopt leave-last-cycle-out or leave-one-subject-out cross-validation approaches.

  - Optimize hyperparameters through grid search or Bayesian optimization.

  - Address class imbalance through techniques like SMOTE or class weighting.

- Model Evaluation:

  - Assess performance using accuracy, precision, recall, F1-score, and AUC-ROC.

  - Generate confusion matrices to identify specific phase misclassification patterns.

  - Validate on held-out test set not used during model development.

Implementation Considerations:

- Ensure sufficient sample size (N>50 cycles) for robust model development.

- Account for inter-individual variability through personalized or subgroup models.

- Address missing data through appropriate imputation strategies.
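Two steps from the protocol above, per-participant normalization and leave-one-subject-out splitting, can be sketched with the standard library alone. This is an illustrative outline, not the pipeline used in the cited studies; the record layout and the `min_hr` field name are hypothetical, and a real study would typically hand the resulting folds to a classifier such as Random Forest or XGBoost.

```python
from statistics import mean, pstdev


def zscore_by_participant(records, feature):
    """Add a per-participant z-score of `feature` to each record (as `<feature>_z`)."""
    by_participant = {}
    for r in records:
        by_participant.setdefault(r["participant"], []).append(r[feature])
    stats = {p: (mean(v), pstdev(v)) for p, v in by_participant.items()}
    normalized = []
    for r in records:
        mu, sd = stats[r["participant"]]
        z = (r[feature] - mu) / sd if sd > 0 else 0.0
        normalized.append({**r, feature + "_z": z})
    return normalized


def leave_one_subject_out(records):
    """Yield (held_out_participant, train_records, test_records) for each participant."""
    participants = sorted({r["participant"] for r in records})
    for held_out in participants:
        train = [r for r in records if r["participant"] != held_out]
        test = [r for r in records if r["participant"] == held_out]
        yield held_out, train, test
```

Splitting by participant keeps every day from a given person on one side of the split, so reported performance reflects generalization to unseen individuals and not memorization of a participant's own baseline.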

Visualization of Methodological Approaches

Direct Measurement vs. Estimation Methodological Workflow

The Researcher's Toolkit: Essential Reagent Solutions

Table 3: Key Research Reagents and Materials for Menstrual Cycle Phase Determination

| Reagent/Material | Primary Function | Application Context | Considerations |
|---|---|---|---|
| Urinary LH Test Kits | Detects luteinizing hormone surge preceding ovulation | Direct measurement approach; ovulation confirmation | Quality varies between brands; sensitivity thresholds important |
| Progesterone Immunoassay Kits | Quantifies progesterone levels in serum/saliva | Direct measurement; luteal phase confirmation | Requires laboratory equipment; salivary less invasive but serum more established |
| Wrist-Worn Wearable Devices | Continuous monitoring of physiological parameters (HR, temp, EDA) | Estimation approach; machine learning feature extraction | Data quality varies; device validation important for research |
| Continuous Glucose Monitors | Tracks interstitial glucose levels | Emerging research on metabolic fluctuations across cycle | Off-label use for research; requires calibration |
| Hormone Data Management Software | Securely stores and analyzes hormonal data | Both approaches; data integration and visualization | HIPAA compliance essential for participant privacy |
| Machine Learning Platforms | Processes wearable data for phase classification | Estimation approach; model training and deployment | Python/R ecosystems most common; cloud computing often needed |

Implications for Research Integrity and Financial Risk

The methodological choice between direct measurement and estimation carries profound implications that extend beyond technical considerations to encompass research validity and financial consequences.

Impact on Data Integrity and Scientific Validity

The use of assumed or estimated menstrual cycle phases represents a fundamental methodological compromise that undermines research validity. As critically noted in recent literature, "Assuming or estimating menstrual cycle phases is neither a valid (i.e., how accurately a method measures what it is intended to measure) nor reliable (i.e., a concept describing how reproducible or replicable a method is) methodological approach" [5]. When researchers substitute measurements with assumptions, they introduce systematic error that can obscure true physiological relationships and potentially lead to erroneous conclusions.

The financial implications of methodological choice manifest across multiple dimensions:

- Direct Costs: Laboratory-based hormonal assays entail substantial per-sample costs, while wearable devices require significant upfront investment but lower marginal costs per additional data point.

- Personnel Resources: Direct measurement approaches demand trained personnel for sample collection, processing, and analysis, whereas estimation approaches require data science expertise for algorithm development and validation.

- Error-Related Costs: Methodological errors resulting from phase misclassification can invalidate entire studies, wasting research investments and delaying scientific progress.

- Opportunity Costs: Resource-intensive direct measurement may limit sample size or study duration, potentially reducing statistical power and generalizability.

Risk Assessment and Mitigation Strategies

Research organizations should adopt structured risk assessment methodologies when evaluating methodological approaches:

Qualitative Risk Assessment: For early-stage research, qualitative evaluation of methodological risks using categorical scales (high, medium, low) can provide rapid insight into the most significant threats to research validity [9]. This approach is particularly valuable for identifying operational challenges and stakeholder concerns.

Quantitative Risk Assessment: For large-scale studies with significant resource allocation, quantitative methods that assign financial values to potential methodological failures enable more rigorous decision-making. Techniques like Monte Carlo simulations can model the probability and impact of different error scenarios [9] [10].
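A Monte Carlo estimate of this kind can be sketched briefly. Every number in the usage note is a placeholder chosen for illustration, not a figure from the cited sources, and the loss model (a uniform loss whenever a study is invalidated) is a deliberately simple assumption.

```python
import random


def simulate_expected_loss(n_trials, p_failure, loss_low, loss_high, seed=0):
    """Monte Carlo estimate of the mean loss per study from methodological failure.

    In each simulated study, failure occurs with probability `p_failure`; when it
    does, the loss is drawn uniformly between `loss_low` and `loss_high`.
    """
    rng = random.Random(seed)  # local generator keeps runs reproducible
    total = 0.0
    for _ in range(n_trials):
        if rng.random() < p_failure:
            total += rng.uniform(loss_low, loss_high)
    return total / n_trials
```

As a usage example, one could compare `simulate_expected_loss(100_000, 0.20, 100_000, 300_000)` for a hypothetical estimation-based design against `simulate_expected_loss(100_000, 0.02, 100_000, 300_000)` for a hypothetical direct-measurement design, then add each design's assumed up-front cost before comparing totals.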

Risk Mitigation Framework:

- Methodological Alignment: Ensure the selected approach matches the research question's precision requirements.

- Validation Protocols: Implement rigorous validation of estimation methods against gold standard measurements in a representative subsample.

- Transparent Reporting: Clearly document all methodological limitations and potential sources of error in publications.

- Resource Allocation Planning: Balance methodological rigor with practical constraints through careful study design and power analysis.

The methodological choice between direct measurement and estimation in menstrual cycle research represents a critical decision point with far-reaching consequences for data integrity, scientific validity, and financial efficiency. While direct hormonal measurement remains the gold standard for definitive phase identification, emerging estimation approaches leveraging wearable technology and machine learning offer promising alternatives for applications where maximum precision is not required.

The current evidence suggests that a contingency-based approach may be most appropriate:

- Direct Measurement should be prioritized for research requiring definitive phase identification, such as clinical trials of hormone-sensitive interventions, investigations of menstrual disorders, and studies establishing causal physiological mechanisms.

- Estimation Approaches may be suitable for large-scale epidemiological research, longitudinal monitoring studies, and applications where participant burden must be minimized, provided their limitations are clearly acknowledged.

Future methodological development should focus on hybrid approaches that combine the efficiency of wearable-based monitoring with targeted direct measurement for validation and calibration. As machine learning algorithms improve and multi-modal sensing capabilities advance, the performance gap between estimation and direct measurement may narrow, but the fundamental distinction between measured and inferred biological states will remain a critical consideration for research integrity.

The high stakes of methodological choice demand rigorous evaluation of options, transparent reporting of limitations, and careful alignment between methodological capabilities and research objectives. By making informed choices grounded in empirical evidence of methodological performance, researchers can optimize both scientific validity and resource utilization in this rapidly evolving field.

The journey of a new drug from concept to market is a meticulously regulated sequence of stages, each serving as a critical gate for evaluating safety and efficacy. This process universally follows a five-stage framework: Discovery and Development, Preclinical Research, Clinical Research (Phases I-III), FDA Review, and Post-Market Safety Monitoring [11] [12]. Within this high-stakes environment, researchers and developers continually face fundamental decisions about how to assess progress and probability of success at each milestone. These decisions pivot on a core methodological choice: whether to rely on direct measurement of empirical data obtained from laboratory experiments and clinical trials or to employ model-based estimation that predicts outcomes using computational frameworks and historical data. The pharmaceutical industry's profound financial risk—with average development costs reaching $2.6 billion and timelines spanning 10-15 years—makes these measurement and estimation decisions crucial for managing attrition rates that see approximately 90% of candidates failing during human trials [11] [13]. This guide objectively compares the performance of these two methodological approaches across the drug development lifecycle, examining how each contributes to the structured quantification of risk, efficacy, and commercial viability.

The Five-Stage Framework: A Comparative Landscape for Measurement and Estimation

The standardized drug development pathway establishes distinct contexts for measurement and estimation, with each stage presenting unique questions that demand different quantitative approaches. The following analysis deconstructs this framework to identify where direct measurement or estimation provides superior insights.

Table: Key Questions and Methodological Approaches Across the Drug Development Lifecycle

| Development Stage | Primary Questions of Interest | Direct Measurement Approaches | Model-Based Estimation Approaches |
|---|---|---|---|
| Discovery & Development | Which compounds show biological activity? What is the binding affinity? | High-throughput screening; In vitro binding assays; Crystallography | Quantitative Structure-Activity Relationship (QSAR); AI-based candidate prediction; Generative adversarial networks (GANs) for molecular design |
| Preclinical Research | What is the compound's toxicity profile? How is it absorbed and metabolized? | In vitro cytotoxicity tests; In vivo animal studies; Histopathological examination | Physiologically Based Pharmacokinetic (PBPK) modeling; Quantitative Systems Pharmacology/Toxicology (QSP/T); Allometric scaling for human dose prediction |
| Clinical Phase I | What is the maximum tolerated dose? What are the pharmacokinetic parameters? | Clinical safety monitoring; Serial blood sampling for concentration measurements; Adverse event documentation | Population PK (PPK) modeling; First-in-Human (FIH) Dose Algorithms; Bayesian hierarchical models for dose escalation |
| Clinical Phase II | Does the drug demonstrate efficacy? What is the optimal dosing regimen? | Clinical endpoint assessment; Biomarker measurement; Randomized controlled trials | Exposure-Response (ER) modeling; Model-based meta-analysis (MBMA); Clinical trial simulation for power calculations |
| Clinical Phase III | Do benefits outweigh risks in larger populations? How do efficacy and safety compare to standard care? | Large-scale randomized controlled trials; Time-to-event analysis; Subgroup analysis | Semi-mechanistic PK/PD modeling; Model-integrated evidence (MIE); Adaptive trial designs with sample size re-estimation |
| FDA Review & Post-Market | Are there rare adverse events? How does the drug perform in real-world use? | Voluntary adverse event reporting; Prescription databases analysis; Active surveillance studies | Virtual population simulation; Bayesian signal detection algorithms; Pharmacoepidemiologic models using real-world data |

Stage 1: Discovery and Development – Early Screening Decisions

In the discovery phase, researchers identify disease targets and screen compounds for potential therapeutic activity [11]. Direct measurement traditionally dominates this stage through high-throughput screening of thousands of compounds against biological targets, with activity measured through in vitro assays that quantify binding affinity, potency, and functional activity. These experimental measurements provide definitive evidence of biological interaction but are resource-intensive and limited to chemical space that can be physically synthesized and tested [12].

Estimation approaches have emerged as powerful alternatives, particularly Quantitative Structure-Activity Relationship (QSAR) modeling, which predicts biological activity based on chemical structure without physical synthesis of every analog [12]. Artificial intelligence and machine learning approaches now accelerate this process further; generative adversarial networks (GANs) can design novel molecular structures with optimized properties, while deep learning models predict binding affinities with increasing accuracy [14]. The comparative performance shows estimation methods dramatically expanding the explorable chemical space while direct measurement provides essential validation for promising candidates.

Stage 2: Preclinical Research – Predicting Human Response from Model Systems

Preclinical research assesses compound safety and biological activity before human testing, requiring extensive laboratory and animal studies [11]. Direct measurement here includes in vitro tests (cell culture toxicity, enzyme inhibition) and in vivo animal studies that measure toxicity, pharmacokinetics (absorption, distribution, metabolism, excretion), and pharmacodynamics (biological effects). These empirical observations form the foundational safety dataset required for regulatory approval to begin human trials [11] [15].

Estimation methodologies bridge the translational gap between animal models and human response. Physiologically Based Pharmacokinetic (PBPK) modeling creates mechanistic frameworks that simulate drug disposition based on physiological parameters, while Quantitative Systems Pharmacology/Toxicology (QSP/T) models biological pathways to predict therapeutic and adverse effects [12]. These estimation approaches incorporate species-specific physiological differences to predict human pharmacokinetics and safe starting doses for clinical trials, complementing direct animal data with human-focused projections.

Stages 3-4: Clinical Research and FDA Review – Quantifying Human Response

The clinical trial phases represent the most resource-intensive portion of development, where methodological choices significantly impact cost and timeline [13]. Direct measurement produces the definitive human evidence through controlled clinical trials: Phase I establishes safety and dosage in 20-100 subjects; Phase II evaluates efficacy and side effects in several hundred patients; Phase III confirms therapeutic benefit and monitors adverse reactions in 300-3,000+ patients [11] [15]. These trials generate empirical measurements of clinical endpoints, safety parameters, and biomarker responses that form the primary evidence for regulatory decisions [15].

Model-informed Drug Development (MIDD) approaches provide estimation frameworks that optimize clinical development. Population PK (PPK) models quantify and explain variability in drug exposure between individuals, while Exposure-Response (ER) analysis characterizes the relationship between drug exposure and efficacy or safety outcomes [12]. These estimation methods enable more informative trial designs, support dose selection, identify subpopulations with different response characteristics, and help extrapolate to untested scenarios. For the FDA review stage, while the regulatory decision itself relies on direct measurement from adequate and well-controlled trials, estimation approaches can support labeling claims and help design post-market requirements [12].
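As a rough illustration of what an exposure-response analysis characterizes, the sketch below evaluates a simple Emax model, E = E0 + Emax * C / (EC50 + C). The function name and all parameter values are hypothetical and are not taken from any study cited here; real ER analyses fit such parameters to trial data and account for between-subject variability.

```python
def emax_response(conc, e0, emax, ec50):
    """Predicted effect at concentration `conc` under a simple Emax model."""
    if conc < 0 or ec50 <= 0:
        raise ValueError("conc must be >= 0 and ec50 must be > 0")
    return e0 + emax * conc / (ec50 + conc)


# Hypothetical parameters, for illustration only
E0, EMAX, EC50 = 5.0, 40.0, 2.0

for c in (0.0, 1.0, 2.0, 8.0):
    effect = emax_response(c, E0, EMAX, EC50)
    print(f"C = {c:4.1f} -> predicted effect = {effect:.1f}")
```

At a concentration equal to EC50 the predicted effect sits halfway between baseline and maximum, which is one reason this parameter is often used when comparing candidate dose levels.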

Stage 5: Post-Market Safety Monitoring – Detecting Rare Events

After approval, drugs enter post-market surveillance where detection of rare or long-term adverse events becomes paramount [11]. Direct measurement occurs through voluntary reporting systems (e.g., FDA's MedWatch), targeted active surveillance, and Phase IV clinical studies conducted as post-approval commitments [11]. These approaches capture real-world safety data but suffer from underreporting, confounding, and limited ability to detect very rare events without enormous sample sizes.

Estimation approaches enhance signal detection through disproportionality analysis of spontaneous reporting databases, Bayesian data mining algorithms that identify unexpected reporting patterns, and pharmacoepidemiologic models that analyze electronic health records and claims data [12]. These methods estimate background incidence rates, adjust for confounding factors, and calculate the probability that observed event frequencies exceed expected levels, providing statistical signals that trigger more focused direct measurement studies.
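One common form of disproportionality analysis is the proportional reporting ratio (PRR), computed from a 2x2 table of spontaneous reports. The following is a minimal sketch, assuming that form and a log-normal approximation for the confidence interval; the report counts are hypothetical, and a real signal-detection workflow would add thresholds and clinical review.

```python
import math


def proportional_reporting_ratio(a, b, c, d):
    """
    a: reports of the event of interest for the drug of interest
    b: reports of all other events for the drug of interest
    c: reports of the event of interest for all other drugs
    d: reports of all other events for all other drugs
    Returns (prr, lower_95, upper_95).
    """
    if min(a, b, c, d) <= 0:
        raise ValueError("all four cell counts must be positive")
    prr = (a / (a + b)) / (c / (c + d))
    # Standard error of log(PRR), as for a risk ratio
    se_log = math.sqrt(1 / a - 1 / (a + b) + 1 / c - 1 / (c + d))
    lower = math.exp(math.log(prr) - 1.96 * se_log)
    upper = math.exp(math.log(prr) + 1.96 * se_log)
    return prr, lower, upper


# Hypothetical report counts, for illustration only
prr, lower, upper = proportional_reporting_ratio(a=20, b=980, c=100, d=98900)
print(f"PRR = {prr:.1f} (95% CI {lower:.1f} to {upper:.1f})")
```

A PRR well above 1 with a lower confidence bound above 1 suggests the event is reported disproportionately often for the drug. It is a statistical signal to investigate, not evidence of causation.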

Quantitative Comparison: Performance Metrics Across Methodologies

The relative value of direct measurement versus estimation varies significantly across development stages, with implications for cost, timeline, and decision quality. The following tables synthesize quantitative performance data from industry studies.

Table: Transition Probabilities and Development Timelines by Stage [13]

| Development Stage | Average Duration (Years) | Probability of Transition to Next Stage | Primary Reason for Failure |
|---|---|---|---|
| Discovery & Preclinical | 2-4 | ~0.01% (to approval) | Toxicity, lack of effectiveness |
| Phase I | 2.3 | 52%-70% | Unmanageable toxicity/safety |
| Phase II | 3.6 | 29%-40% | Lack of clinical efficacy |
| Phase III | 3.3 | 58%-65% | Insufficient efficacy, safety |
| FDA Review | 1.3 | ~91% | Safety/efficacy concerns |
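To see what these stage-level figures imply for a candidate entering Phase I, the clinical transition probabilities can be multiplied together. The sketch below does this for the low and high ends of each range in the table; treating the stages as independent and simply multiplying is a simplifying assumption, not a method taken from the cited source.

```python
from math import prod

# (low, high) transition probabilities from the table above
TRANSITIONS = {
    "Phase I": (0.52, 0.70),
    "Phase II": (0.29, 0.40),
    "Phase III": (0.58, 0.65),
    "FDA Review": (0.91, 0.91),
}


def cumulative_success(transitions):
    """Return (low, high) probability of passing every listed stage."""
    low = prod(lo for lo, _ in transitions.values())
    high = prod(hi for _, hi in transitions.values())
    return low, high


low, high = cumulative_success(TRANSITIONS)
print(f"Phase I entry to approval: {low:.1%} to {high:.1%}")
```

Under these assumptions the overall likelihood of approval for a compound entering Phase I comes out at roughly 8% to 17%, which is broadly in line with the approximately 90% clinical attrition cited earlier.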

Table: Methodological Performance Comparison Across Development Contexts

| Development Context | Direct Measurement Accuracy | Estimation Model Accuracy | Relative Speed | Resource Requirements |
|---|---|---|---|---|
| Target Identification | High (but limited to testable hypotheses) | Moderate-High (depends on training data) | Measurement: Slow; Estimation: Fast | Measurement: High; Estimation: Moderate |
| Toxicity Prediction | High for tested scenarios | Moderate (varies by model) | Measurement: Slow; Estimation: Fast | Measurement: Very High; Estimation: Low |
| Human Dose Projection | Requires clinical trial data | Moderate-High (PBPK/QSAR) | Measurement: Very Slow; Estimation: Fast | Measurement: Extremely High; Estimation: Low |
| Efficacy Determination | High (gold standard) | Moderate (supplemental) | Measurement: Slow; Estimation: Fast | Measurement: Extremely High; Estimation: Low-Moderate |
| Safety Signal Detection | High for common events | Superior for rare events | Measurement: Slow; Estimation: Fast | Measurement: High; Estimation: Low |

Experimental Protocols: Methodologies for Direct Measurement and Estimation

Protocol 1: Direct Measurement of Clinical Efficacy (Phase III Trial)

Objective: To directly measure the superiority of a new drug compared to standard therapy or placebo for the intended indication.

Methodology:

- Design: Randomized, double-blind, controlled trial with parallel groups [15]

- Participants: 300-3,000 patients with confirmed diagnosis of the target disease [15]

- Intervention: Administration of investigational drug versus control (placebo/active comparator)

- Primary Endpoints: Clinically relevant endpoints specific to the disease (e.g., overall survival, progression-free survival, symptom reduction scale)

- Duration: Typically 2-4 years, including enrollment, treatment, and follow-up periods [13]

- Analysis: Intent-to-treat population with pre-specified statistical analysis plan

- Key Measurements: Absolute risk reduction, relative risk reduction, number needed to treat, hazard ratios with confidence intervals

Quality Controls: Good Clinical Practice (GCP) compliance, independent data monitoring committee, centralized endpoint adjudication, validated assessment instruments [11]
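The binary-outcome measures listed under Key Measurements follow directly from event counts in each arm. The sketch below, using hypothetical counts, computes absolute risk reduction, relative risk reduction, and number needed to treat. It uses exact fractions so that rounding the NNT up is not distorted by floating-point error. Hazard ratios require time-to-event data and are not covered here.

```python
import math
from fractions import Fraction


def risk_measures(events_control, n_control, events_treated, n_treated):
    """Return ARR, RRR and NNT for a two-arm trial with a binary endpoint."""
    if n_control <= 0 or n_treated <= 0:
        raise ValueError("arm sizes must be positive")
    cer = Fraction(events_control, n_control)   # control event rate
    eer = Fraction(events_treated, n_treated)   # experimental event rate
    arr = cer - eer
    rrr = arr / cer if cer != 0 else None
    # NNT is only meaningful when the treatment reduces risk
    nnt = math.ceil(1 / arr) if arr > 0 else None
    return {
        "arr": float(arr),
        "rrr": float(rrr) if rrr is not None else None,
        "nnt": nnt,
    }


# Hypothetical trial: 150/1000 events on control, 100/1000 on treatment
result = risk_measures(150, 1000, 100, 1000)
print(result)
```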

Protocol 2: Model-Based Estimation of First-in-Human Dose

Objective: To estimate a safe starting dose for initial human trials using integrated mathematical modeling approaches [12].

Methodology:

- Data Inputs: In vitro potency (IC50, EC50), animal PK/PD data, physicochemical properties, target receptor occupancy models [12]

- Model Framework: Integration of PBPK modeling with quantitative systems pharmacology

- Key Components:

- Allometric Scaling: Predict human clearance and volume of distribution from animal data using species-invariant time methods

- Toxicity Exposure Margin: Calculate human equivalent dose based on no observed adverse effect level (NOAEL) from animal studies with appropriate safety factors

- Pharmacologically Active Dose (PAD): Estimate minimum anticipated biological effect level (MABEL) using target affinity and occupancy models

- Virtual Population Simulation: Generate variability estimates using demographic and pathophysiological data

- Output: Recommended starting dose with proposed escalation scheme

Validation: Comparison to historical compounds with known human response, sensitivity analysis of key parameters, regulatory review of modeling approach [12]
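Two of the components above reduce to short calculations. The sketch below converts an animal NOAEL to a human equivalent dose using body-surface-area conversion factors (Km) and applies a safety factor, then scales clearance with a simple power law. The Km values are the commonly tabulated ones associated with FDA's 2005 starting-dose guidance and should be verified against the current guidance before use. The 0.75 exponent, the 70 kg body weight, the default safety factor of 10, and the example inputs are all assumptions, and simple allometry is used here in place of the species-invariant time methods named in the protocol. MABEL-based and PBPK-based estimates are not represented.

```python
# Body-surface-area conversion factors (Km); assumed values, verify before use
KM = {"mouse": 3, "rat": 6, "monkey": 12, "dog": 20, "human": 37}


def human_equivalent_dose(noael_mg_per_kg, species):
    """Convert an animal NOAEL (mg/kg) to a human equivalent dose (mg/kg)."""
    return noael_mg_per_kg * KM[species] / KM["human"]


def max_recommended_starting_dose(noael_mg_per_kg, species, safety_factor=10.0):
    """Apply a safety factor to the human equivalent dose."""
    if safety_factor <= 0:
        raise ValueError("safety_factor must be positive")
    return human_equivalent_dose(noael_mg_per_kg, species) / safety_factor


def scale_clearance(cl_animal, bw_animal_kg, bw_human_kg=70.0, exponent=0.75):
    """Simple allometric scaling of clearance by body weight."""
    return cl_animal * (bw_human_kg / bw_animal_kg) ** exponent


# Hypothetical inputs: rat NOAEL of 50 mg/kg, rat clearance of 1.0 L/h at 0.25 kg
hed = human_equivalent_dose(50, "rat")
mrsd = max_recommended_starting_dose(50, "rat")
cl_human = scale_clearance(1.0, 0.25)
print(f"HED = {hed:.2f} mg/kg, starting dose = {mrsd:.2f} mg/kg, "
      f"scaled clearance = {cl_human:.1f} L/h")
```

In practice the NOAEL-based and pharmacology-based estimates are compared and the more conservative value is typically carried forward, which is why the protocol lists them as separate components.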

Visualization of Methodological Relationships

The following diagrams illustrate the conceptual relationships and workflow integration between direct measurement and estimation approaches throughout the drug development lifecycle.

Diagram 1: Parallel application of direct measurement and estimation approaches across the five-stage drug development framework. Both methodologies contribute throughout the lifecycle, with varying relative importance at different stages.

Diagram 2: Iterative workflow integrating model-based estimation with direct measurement validation in Model-Informed Drug Development (MIDD). Dashed lines indicate calibration and validation pathways between methodologies.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents, computational tools, and materials essential for implementing both direct measurement and estimation approaches in drug development research.

Table: Research Reagent Solutions for Drug Development Methodology

| Item/Category | Function/Purpose | Application Context |
|---|---|---|
| High-Throughput Screening Assays | Enable parallel testing of thousands of compounds for biological activity | Direct measurement in discovery phase; generates training data for estimation models [12] |
| Animal Disease Models | Provide in vivo systems for evaluating compound efficacy and toxicity | Direct measurement in preclinical research; parameterizes PBPK and QSP models [11] [12] |
| Clinical Biomarker Assays | Quantify biological responses to therapeutic intervention in human subjects | Direct measurement in clinical trials; informs exposure-response models [12] |
| PBPK/PD Modeling Software | Simulate drug disposition and effects using physiological parameters | Estimation approach for predicting human pharmacokinetics and dose selection [12] |
| QSAR Modeling Platforms | Predict compound properties and activity from chemical structure | Estimation method for prioritizing synthesis candidates and optimizing lead compounds [12] |
| Population PK/PD Analysis Tools | Quantify and explain variability in drug exposure and response | Estimation methodology for analyzing sparse clinical data and identifying covariates [12] |
| Clinical Trial Simulation Software | Predict trial outcomes and optimize design parameters using mathematical models | Estimation approach for improving trial efficiency and probability of success [12] |
| AI/ML Algorithm Suites | Identify patterns in high-dimensional data and make predictions from complex datasets | Estimation methodology for target identification, candidate optimization, and biomarker discovery [14] |

The comparison between direct measurement and estimation methodologies reveals a complex landscape where neither approach dominates exclusively. Rather, the most effective drug development strategies intelligently integrate both methodologies according to stage-specific requirements and decision contexts. Direct measurement provides the definitive empirical evidence required for regulatory approval and remains the gold standard for establishing efficacy and safety [11] [15]. Conversely, estimation approaches offer powerful tools for prioritizing resources, optimizing designs, and extrapolating knowledge—particularly through Model-Informed Drug Development (MIDD) frameworks that have demonstrated potential to reduce late-stage attrition rates and compress development timelines [12].

The evolving frontier of drug development methodology points toward increased integration of these approaches, with artificial intelligence and machine learning creating new opportunities to enhance both measurement precision and estimation accuracy [14]. As the industry confronts persistent challenges of rising costs and timelines, the strategic balance between measurement and estimation will increasingly determine research productivity and commercial success. Future methodology research should focus on quantitative frameworks for optimally allocating resources between these approaches across the development lifecycle to maximize the probability of delivering innovative medicines to patients in need.

In scientific research and drug development, the choice between direct measurement and estimation is a fundamental methodological crossroads. While direct measurement generally provides superior accuracy, estimation is frequently employed across various domains, from menstrual cycle phase determination in sports science to cost forecasting in pharmaceutical development. This practice persists even though the risks of estimation, including invalid data, biased conclusions, and misinformed clinical or business decisions, are well documented [5] [16]. This guide compares these approaches by examining the experimental data, methodologies, and practical constraints that drive this methodological selection, providing researchers with evidence-based insights for designing their studies.

Direct Measurement vs. Estimation: A Conceptual and Practical Comparison

Defining the Terms and Their Methodological Rigor

In research, direct measurement involves obtaining empirical data through specific assays, sensors, or calibrated instruments. In contrast, estimation constitutes an "informed best guess" of a value, which can be based either on indirect information (indirect estimation) or on direct measures of the variable of interest (direct estimation) [5]. The core distinction lies in the underlying scientific rigor: estimation, particularly when indirect, inevitably relies on more assumptions than direct measurement. If these assumptions are unreasonable or violated, the estimation becomes invalid [5].

The table below summarizes the core characteristics of each approach.

Table 1: Fundamental Characteristics of Direct Measurement and Estimation

| Characteristic | Direct Measurement | Estimation |
|---|---|---|
| Definition | Obtaining empirical data via specific assays, sensors, or instruments [5]. | An "informed best guess" of a value, often based on indirect information or models [5]. |
| Basis | Empirical observation and data collection. | Assumptions, historical data, and predictive models. |
| Key Strength | High validity and reliability when methodologies are sound [5]. | Pragmatism and resource efficiency, especially when direct measurement is infeasible [5]. |
| Inherent Risk | Can be resource-intensive, time-consuming, and sometimes impractical in field settings [5]. | Lower validity; amounts to "guessing" if underlying assumptions are flawed, with significant implications for downstream conclusions [5]. |

Quantitative Comparison of Outcomes

The choice between these methodologies has tangible consequences for data quality and experimental outcomes. Discrepancies are evident in fields as diverse as physiology and drug development.

Table 2: Comparative Outcomes of Estimation vs. Direct Measurement in Research

| Field of Study | Estimation Approach & Outcome | Direct Measurement Approach & Outcome | Performance Gap / Key Finding |
|---|---|---|---|
| Menstrual Cycle Phase Tracking | Calendar-based estimation: Classifies cycle phases based on counting days from menstruation, assuming a standard hormonal profile [5]. | Hormone level confirmation: Uses urine (luteinizing hormone) or blood/saliva (progesterone) tests to confirm ovulation and luteal phase [5]. | Estimation fails to detect up to 66% of subtle menstrual disturbances (e.g., anovulatory cycles) common in exercising females, leading to misclassification [5]. |
| Menstrual Cycle Phase Classification (Machine Learning) | Feature: "day" (days since menstruation onset) for phase classification and ovulation prediction [8]. | Feature: "day + minHR" (using heart rate at circadian rhythm nadir) for the same tasks [8]. | Adding the direct physiological measure (minHR) significantly improved luteal phase classification and reduced ovulation day detection absolute errors by 2 days in individuals with variable sleep schedules [8]. |
| Drug Development Costing | Estimates based on confidential surveys from large pharmaceutical firms, with assumptions on success rates and discount rates [17]. | Models using publicly available data (e.g., FDA databases, clinical trial registries) and transparent parameters [17] [18]. | Estimated pre-approval cost per approved drug: $2.6 billion (capitalized, from private data) [17] vs. median of $985.3 million (capitalized, from public data) [17]. Methodology and data source dramatically alter estimates. |
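Part of the spread in cost estimates comes from how out-of-pocket spending is capitalized, that is, compounded forward to the approval date at an assumed cost of capital. The sketch below shows how sensitive a capitalized total is to that rate. The outlays, timings, and rates are hypothetical and are not taken from the cited studies, which also adjust for failure rates in ways not modeled here.

```python
def capitalized_cost(outlays, annual_rate):
    """
    outlays: iterable of (amount, years_before_approval) pairs.
    Each outlay is compounded forward to the approval date at `annual_rate`.
    """
    if annual_rate < 0:
        raise ValueError("annual_rate must be non-negative")
    return sum(amount * (1 + annual_rate) ** years for amount, years in outlays)


# Hypothetical out-of-pocket spending in $ millions, for illustration only
outlays = [(50, 10), (100, 6), (250, 3), (30, 1)]

for rate in (0.0, 0.07, 0.105):
    total = capitalized_cost(outlays, rate)
    print(f"rate = {rate:.1%}: capitalized cost = ${total:,.0f} million")
```

The same spending profile yields noticeably different totals as the rate rises, because early-stage outlays are compounded over the longest periods.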

Experimental Protocols for Method Comparison

Protocol 1: Validating Menstrual Cycle Phase Determination

This protocol is designed to quantitatively compare the accuracy of estimated and directly measured menstrual cycle phases.

- Objective: To determine the error rate of calendar-based estimation of menstrual cycle phases against a reference standard of hormonal phase determination.

- Participant Selection: Recruit naturally menstruating participants (cycle lengths 21-35 days) and confirm eumenorrheic status via hormonal assessment [5].

- Experimental Groups:

- Estimation Group: Cycle phases are determined using a calendar-based count, starting from the first day of menstruation [5].

- Direct Measurement Group: Cycle phases are determined via direct hormonal measurement. This requires a combination of urinary luteinizing hormone (LH) surge detection kits to identify ovulation and mid-luteal phase serum or saliva progesterone analysis to confirm a sufficient progesterone rise [5].

- Data Analysis: Calculate the percentage of cycles in the Estimation Group that were misclassified (e.g., an anovulatory cycle classified as ovulatory, or an incorrect phase assignment) compared to the Direct Measurement Group.
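The data analysis step can be sketched as a paired comparison, assuming each cycle carries both a calendar-based label and a hormonally confirmed label. The label names and example data below are hypothetical.

```python
def misclassification_rate(estimated, measured):
    """Percentage of cycles whose estimated label differs from the measured one."""
    if len(estimated) != len(measured) or not estimated:
        raise ValueError("inputs must be non-empty and of equal length")
    mismatches = sum(e != m for e, m in zip(estimated, measured))
    return 100.0 * mismatches / len(estimated)


# Hypothetical labels for five cycles; calendar counting assumes every cycle is ovulatory
estimated = ["ovulatory"] * 5
measured = [
    "ovulatory",
    "anovulatory",
    "ovulatory",
    "luteal phase deficient",
    "ovulatory",
]

rate = misclassification_rate(estimated, measured)
print(f"Misclassified cycles: {rate:.1f}%")
```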

Protocol 2: Benchmarking Machine Learning Models for Cycle Tracking

This protocol evaluates the performance enhancement gained by incorporating a direct physiological measure into a predictive model.

- Objective: To evaluate the performance improvement in luteal phase classification and ovulation prediction when using a direct circadian rhythm measure (minHR) versus a simple calendar feature ("day").

- Data Collection: Under free-living conditions, collect data from participants over multiple menstrual cycles. Data streams must include:

- Self-reported menstruation onset.

- Continuous heart rate (to derive minHR).

- Basal Body Temperature (BBT) (for a traditional comparison) [8].

- Feature Sets & Model Training:

- Develop a machine learning model (e.g., XGBoost).

- Train and test the model using three distinct feature combinations: "day" (estimation), "day + BBT" (semi-direct), and "day + minHR" (direct) [8].

- Performance Metrics: Use nested cross-validation to assess model performance. Key metrics include recall for the luteal phase and the mean absolute error for predicting the day of ovulation [8].
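The two performance metrics, together with the idea of holding out one participant at a time, can be sketched in plain Python. This is an illustration of the evaluation logic only: the classifier itself (e.g., XGBoost) and the inner loop of nested cross-validation are omitted, and the labels and ovulation days are hypothetical.

```python
def luteal_recall(y_true, y_pred, positive="luteal"):
    """Share of truly luteal days that the model also labeled luteal."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    if tp + fn == 0:
        raise ValueError("no positive samples in y_true")
    return tp / (tp + fn)


def mean_absolute_error(true_days, pred_days):
    """Average absolute difference (in days) between true and predicted ovulation."""
    if len(true_days) != len(pred_days) or not true_days:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(t - p) for t, p in zip(true_days, pred_days)) / len(true_days)


def leave_one_group_out(groups):
    """Yield (held_out_group, train_indices, test_indices), one split per participant."""
    for g in sorted(set(groups)):
        train = [i for i, x in enumerate(groups) if x != g]
        test = [i for i, x in enumerate(groups) if x == g]
        yield g, train, test


# Hypothetical predictions, for illustration only
y_true = ["luteal", "luteal", "follicular", "luteal"]
y_pred = ["luteal", "follicular", "follicular", "luteal"]
print(f"Luteal recall: {luteal_recall(y_true, y_pred):.2f}")
print(f"Ovulation MAE: {mean_absolute_error([14, 16, 13], [15, 16, 11]):.1f} days")

for held_out, train_idx, test_idx in leave_one_group_out(["p1", "p1", "p2"]):
    print(held_out, train_idx, test_idx)
```

Splitting by participant rather than by day keeps all of one person's cycles out of the training data, so the reported metrics reflect performance on people the model has not seen.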

The Underlying Drivers: Why Estimation Persists

The reliance on estimation, despite its risks, is driven by a confluence of practical, economic, and technical factors.

- Pragmatism and Resource Constraints: In field-based research, such as studies involving elite athletes, time, financial resources, and participant availability are often severely limited. Researchers may be forced to adopt estimation as a "pragmatic and convenient" way to generate data when direct measurement is logistically prohibitive [5].

- Technical and Methodological Complexity: Some phenomena are inherently difficult to measure directly. For instance, the only definitive way to confirm ovulation is via transvaginal ultrasonic visualisation, a procedure described as "infinitely challenging" outside controlled clinical settings [5]. In such cases, estimation via proxy measures becomes a necessary compromise.

- Data Scarcity and Feasibility: In drug development, comprehensive and transparent cost data for clinical trials is often not publicly available. One analysis noted that only 18% of FDA-approved drugs had publicly available cost data, forcing many analyses to rely on estimations from limited or confidential data sets [17].

- Perceived "Good Enough" Accuracy: For initial screening, triage, or in contexts where extreme precision is not critical, an estimation with a known and acceptable error margin may be deemed sufficient for decision-making, especially if the cost of direct measurement is high.

Visualization of Method Selection and Risks

The following diagram maps the decision pathway and consequences of choosing between estimation and direct measurement, highlighting key risk points.

Essential Research Reagent Solutions

The following table details key materials and tools used in direct measurement methodologies discussed in this guide.

Table 3: Key Reagents and Tools for Direct Measurement Protocols

| Item Name | Function/Application | Key Consideration |
|---|---|---|
| Luteinizing Hormone (LH) Urine Test Kits | Detects the pre-ovulatory LH surge to pinpoint ovulation timing in menstrual cycle research [5]. | Confirms ovulation but does not verify subsequent hormonal support from the corpus luteum. |
| Progesterone Assay Kits (Saliva/Blood) | Quantifies progesterone levels to confirm a sufficient luteal phase post-ovulation [5]. | Saliva offers non-invasive sampling but may have different accuracy profiles compared to serum tests. |
| Wearable Heart Rate Monitors | Enables continuous, free-living collection of heart rate data for deriving direct physiological features like minHR [8]. | Device accuracy and validity for detecting subtle physiological nadirs must be established for research purposes. |
| Clinical Trial Cost Databases (e.g., Medidata, IQVIA GrantPlan) | Provides real-world, per-patient cost data based on negotiated clinical trial contracts for direct cost modeling [18]. | Access is often proprietary; studies using public data (e.g., ClinicalTrials.gov) promote transparency and replicability [17] [18]. |

The tension between estimation and direct measurement is a fundamental aspect of scientific and industrial research. While estimation offers a pragmatic path forward under constraints, the evidence consistently shows that it introduces significant risks of error, bias, and misclassification [5] [16] [8]. Direct measurement, though often more demanding, remains the gold standard for producing valid, reliable, and actionable data. The most robust research strategy involves transparently acknowledging the limitations of estimation when it must be used, employing direct measurement wherever feasible, and leveraging emerging technologies like machine learning that integrate direct physiological measures to enhance accuracy and practicality [8].

In the burgeoning field of female-specific physiology research, precise terminology and rigorous methodological definitions are paramount for generating valid and reliable data. The central thesis of this guide is that the method used to classify menstrual cycle phases, whether direct hormonal measurement or calendar-based estimation, directly dictates the quality and interpretability of research outcomes. This is particularly critical for applications in drug development and sports science, where subtle physiological changes can inform dosing, training protocols, and injury mitigation strategies. This document provides a comparative analysis of key terminologies and methodologies, underpinned by experimental data, to establish a foundational framework for researchers and scientists.

The core challenge lies in the inherent biological variability of the menstrual cycle. A eumenorrheic cycle is not defined by regularity of bleeding alone but by a specific hormonal profile confirming ovulation and adequate luteal phase function [5]. In contrast, the term naturally menstruating should be applied when a cycle length between 21 and 35 days is established through calendar-based counting, but no advanced testing is used to establish the hormonal profile [5]. This distinction is not semantic; it is fundamental. Studies relying on assumptions or estimations rather than direct measurements risk misclassifying phases, especially given the high prevalence (up to 66%) of subtle menstrual disturbances in exercising females, such as anovulatory or luteal phase deficient cycles, which can go entirely undetected without biochemical verification [5].

Key Terminology and Conceptual Framework

A clear understanding of the following terms is essential for designing and interpreting research involving the menstrual cycle.

- Eumenorrhea: A healthy menstrual cycle characterized by cycle lengths ≥ 21 days and ≤ 35 days, resulting in nine or more consecutive periods per year, biochemical evidence of a luteinising hormone (LH) surge, and a correct hormonal profile with sufficient progesterone in the luteal phase [5]. This term should be reserved for situations where menstrual function has been confirmed through advanced testing.

- Naturally Menstruating: A term for individuals who experience regular menstruation with cycle lengths between 21 and 35 days, but whose hormonal profile and ovulatory status have not been confirmed via direct measurement [5]. This classification can only reliably differentiate between days of menstruation and non-menstruation without attributing specific phase names.

- Phase-Transition Probabilities: The likelihood of accurately identifying the shift from one hormonally distinct phase of the menstrual cycle to another (e.g., from the late follicular phase to ovulation). This probability is maximized by direct measurement and significantly reduced when using estimation-based methods.

The following conceptual diagram illustrates the decision pathways and associated outputs for defining a menstrual cycle in a research context.

Comparative Analysis: Direct Measurement vs. Estimation

The methodological approach to phase determination is the single greatest factor influencing data quality. The table below provides a structured comparison of the two paradigms.

Table 1: Comparison of Methodological Approaches for Menstrual Cycle Phase Determination

| Feature | Direct Measurement | Estimation / Assumption |
|---|---|---|
| Core Principle | Phases determined via biochemical or physiological biomarkers. | Phases guessed based on calendar counting or self-report. |
| Key Techniques | Serum hormone analysis (progesterone, oestradiol); urine luteinising hormone (LH) kits; Basal Body Temperature (BBT); circadian rhythm nadir heart rate (minHR) [8] | Counting days from last menstrual period; retrospective questionnaires; assuming fixed phase lengths |
| Validity & Reliability | High; based on objective, measured data. | Low to very low; amounts to guessing and lacks scientific rigour [5] [19]. |
| Ability to Detect Subtle Disturbances | High; can identify anovulatory and luteal phase deficient cycles. | None; these disturbances are asymptomatic and remain undetected [5]. |
| Impact on Data Interpretation | Enables causal links between hormonal status and outcomes. | Conclusions are unreliable and risk significant implications for health and performance guidance [5]. |
| Practical Limitations | More resource-intensive (cost, time, equipment). | Perceived as pragmatic and convenient in field-based research. |

Experimental Evidence Demonstrating the Superiority of Direct Measurement

The theoretical limitations of estimation are borne out in experimental data. A systematic review on ACL injury risk rated the quality of evidence as "low to very low" even among studies that used biochemical verification; without such verification, the evidence would be further compromised. The review concluded it was "inconclusive whether a particular MC phase predisposes women to greater non-contact ACL injury risk," a finding potentially linked to methodological inconsistencies [20].

Conversely, a novel machine learning model utilizing a direct measure of heart rate at the circadian rhythm nadir (minHR) significantly improved luteal phase classification and ovulation day detection compared to models using only calendar day or BBT, particularly in individuals with high variability in sleep timing. The minHR-based model reduced absolute errors in ovulation detection by 2 days compared to the BBT-based model, demonstrating the practical advantage of a robust direct measure [8].

Quantitative Data Synthesis in Menstrual Cycle Research

The choice of methodology directly influences the physiological and cognitive outcomes measured in research. The following tables synthesize quantitative findings from studies that employed direct measurement techniques.

Table 2: Effects of Menstrual Cycle Phase on Physical Performance (Directly Measured Phases)

| Performance Domain | Key Finding (Phase Comparison) | Effect Size / Outcome | Source |
|---|---|---|---|
| Exercise Performance | Trivial reduction in early follicular vs. all other phases. | ES0.5 = -0.06 [95% CrI: -0.16 to 0.04] | Meta-Analysis [21] |
| ACL Injury Risk Surrogates | Inconclusive evidence for a high-risk phase; knee laxity fluctuates. | Association found between knee laxity changes and knee joint loading. | Systematic Review [20] |
| Muscular Strength (BRACTS Intervention) | Significant improvement in strength across all phases in the exercise group. | Cohen's d for grip and quadriceps strength maximal in follicular and mid-cycle phases. | RCT [22] |

Table 3: Effects of Menstrual Cycle Phase on Cognitive Performance (Directly Measured Phases)

| Cognitive Domain | Key Finding (Phase Comparison) | Effect Size / Outcome | Source |
|---|---|---|---|
| Reaction Time | Fastest during ovulation; slowest during mid-luteal phase. | ~30 ms faster during ovulation vs. mid-luteal. | UCL Study [23] |
| Working Memory & Attention | Better performance during pre-ovulatory (high-oestradiol) vs. menstrual phase. | Significant improvement in Digit Span and Trail Making Test B (p < 0.05). | Combined Study [24] |
| Global Cognitive Performance | No systematic robust evidence for significant cycle shifts across multiple domains. | Hedges' g analysis showed no robust differences in speed or accuracy. | Meta-Analysis [25] |
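Tables 2 and 3 report standardized effect sizes (Cohen's d, Hedges' g). As a reading aid, the sketch below shows how these two statistics are conventionally computed for two independent groups. The reaction-time values are hypothetical and are not taken from any cited study, and the function names are illustrative. Within-subject designs, such as repeated testing of the same participants across phases, would need a paired variant.

```python
import math
from statistics import mean, stdev


def cohens_d(group_a, group_b):
    """Standardized mean difference using the pooled sample standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = (
        (n_a - 1) * stdev(group_a) ** 2 + (n_b - 1) * stdev(group_b) ** 2
    ) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / math.sqrt(pooled_var)


def hedges_g(group_a, group_b):
    """Cohen's d scaled by the common small-sample correction 1 - 3 / (4N - 9)."""
    n_total = len(group_a) + len(group_b)
    return cohens_d(group_a, group_b) * (1 - 3 / (4 * n_total - 9))


# Hypothetical reaction times in milliseconds (illustrative only).
mid_luteal_ms = [412, 398, 430, 405, 420]
ovulation_ms = [385, 370, 401, 379, 390]

print(f"d = {cohens_d(mid_luteal_ms, ovulation_ms):.2f}")
print(f"g = {hedges_g(mid_luteal_ms, ovulation_ms):.2f}")
```

Because the correction factor is always below 1, Hedges' g is slightly smaller in magnitude than Cohen's d, and the gap matters most at the small sample sizes typical of phase-verified studies.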

Detailed Experimental Protocols

To ensure reproducibility, detailed methodologies from key cited studies are outlined below.

Protocol 1: Machine Learning Phase Classification Using minHR [8]

- Objective: To develop a machine learning model for classifying menstrual cycle phases and predicting ovulation using heart rate at the circadian rhythm nadir (minHR).

- Population: 40 healthy women (18–34 years) over a maximum of three menstrual cycles.

- Data Collection: Conducted under free-living conditions. minHR was derived from continuous heart rate monitoring.

- Model Development: An XGBoost model was trained and evaluated using nested leave-one-group-out cross-validation.

- Feature Combinations: Three sets were evaluated: 1) "day" (days since menstruation onset), 2) "day + minHR", and 3) "day + BBT".

- Outcome Measures: Performance in luteal phase classification (recall) and ovulation day detection (absolute error).
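The nested leave-one-group-out scheme named in this protocol can be sketched in a few lines. The example below is a minimal illustration under stated assumptions, not the study's pipeline: a one-dimensional k-nearest-neighbour rule on cycle day stands in for XGBoost, the three participants and their luteal onset days are hypothetical, and every function name is invented for the sketch. The point it shows is structural: each participant is held out once for testing, and the hyperparameter (here `k`) is tuned only on the remaining participants.

```python
def logo_splits(indices, groups):
    """Yield (held_out_group, train_indices, held_out_indices), one split per group."""
    indices = list(indices)
    for g in sorted({groups[i] for i in indices}):
        train = [i for i in indices if groups[i] != g]
        held_out = [i for i in indices if groups[i] == g]
        yield g, train, held_out


def knn_predict(train_x, train_y, x, k):
    """Majority vote among the k training points closest to x (stand-in model)."""
    nearest = sorted(range(len(train_x)), key=lambda i: abs(train_x[i] - x))[:k]
    return 2 * sum(train_y[i] for i in nearest) > k


def fit_and_predict(train_idx, test_idx, days, labels, k):
    """Return (true labels, predicted labels) for the test indices."""
    train_x = [days[i] for i in train_idx]
    train_y = [labels[i] for i in train_idx]
    truth = [labels[i] for i in test_idx]
    preds = [knn_predict(train_x, train_y, days[i], k) for i in test_idx]
    return truth, preds


def accuracy(truth, preds):
    return sum(t == p for t, p in zip(truth, preds)) / len(truth)


def luteal_recall(truth, preds):
    """Fraction of true luteal-phase days that were predicted as luteal."""
    actual_pos = sum(1 for t in truth if t)
    true_pos = sum(1 for t, p in zip(truth, preds) if t and p)
    return true_pos / actual_pos if actual_pos else float("nan")


def nested_logo(days, labels, groups, candidate_ks):
    """Outer loop estimates performance; inner loop tunes k on training groups only."""
    results = {}
    for held_out, outer_train, outer_test in logo_splits(range(len(groups)), groups):
        def inner_score(k):
            scores = [
                accuracy(*fit_and_predict(tr, va, days, labels, k))
                for _, tr, va in logo_splits(outer_train, groups)
            ]
            return sum(scores) / len(scores)

        best_k = max(candidate_ks, key=inner_score)
        truth, preds = fit_and_predict(outer_train, outer_test, days, labels, best_k)
        results[held_out] = (best_k, luteal_recall(truth, preds))
    return results


# Hypothetical participants: luteal phase assumed to begin on the listed cycle day.
luteal_start = {"P1": 15, "P2": 16, "P3": 14}
days, labels, groups = [], [], []
for pid, start in luteal_start.items():
    for day in range(1, 29):
        days.append(day)
        labels.append(day >= start)
        groups.append(pid)

results = nested_logo(days, labels, groups, candidate_ks=(1, 3, 5))
for pid, (best_k, recall) in results.items():
    print(pid, "k =", best_k, "luteal recall =", round(recall, 2))
```

Grouping by participant keeps all days from one person on the same side of every split, so the reported recall reflects performance on an unseen individual rather than on unseen days from a familiar one.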

Protocol 2: Systematic Review of ACL Injury Risk Surrogates [20]

- Objective: To examine the effects of the menstrual cycle on neuromuscular and biomechanical surrogates of non-contact ACL injury risk during dynamic tasks.

- Population: Injury-free, eumenorrheic women (18–40 years), with MC phases verified via biochemical analysis and/or ovulation kits.

- Intervention: Participants performed dynamic, high-impact tasks (jump-landing, change of direction).

- Outcome Measures: Kinetic and kinematic data (knee abduction moments, joint loads) collected using 3D motion analysis and/or force plates. Neuromuscular activity via surface electromyography (sEMG).

- Analysis: Comparison of outcome measures across a minimum of two defined MC phases.

Protocol 3: Cognitive Performance Across Cycle Phases [23]

- Objective: To explore how different phases of the menstrual cycle and physical activity level affect cognitive performance.

- Population: 54 naturally menstruating women (18–40 years), categorized by activity level (inactive to elite).

- Phase Determination: Participants were tracked across four key phases (first day of menstruation, late follicular, ovulation, mid-luteal). Ovulation was directly detected.

- Cognitive Tests: Participants completed a battery of computer-based tests measuring reaction time, accuracy, and spatial timing anticipation.

- Analysis: Within-subject comparison of cognitive performance across the four measured cycle phases.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 4: Key Reagents and Materials for Menstrual Cycle Phase Determination Research

| Item | Function / Application in Research |
|---|---|
| Luteinising Hormone (LH) Urine Kits | Detect the pre-ovulatory LH surge, a key marker for confirming ovulation and defining the peri-ovulatory phase. |
| Electrochemiluminescence Immunoassay (ECLIA) | Quantifies serum concentrations of steroid hormones (oestradiol, progesterone, testosterone) with high sensitivity for precise phase classification [24]. |
| Salivary Hormone Profiling Kits | A less invasive alternative to serum sampling for tracking progesterone and oestradiol levels, though results may show higher variability. |
| Basal Body Temperature (BBT) Thermometer | A digital thermometer capable of measuring subtle shifts (0.1°C) in resting body temperature to infer the post-ovulatory progesterone rise. |
| Wearable Heart Rate Monitor | Enables continuous, free-living data collection for deriving circadian-based metrics like minHR, used in advanced phase classification models [8]. |
| 3D Motion Capture System | Quantifies biomechanical surrogates of injury risk (e.g., knee joint angles and moments) during dynamic tasks [20]. |
| Surface Electromyography (sEMG) | Measures neuromuscular activation patterns of key musculature (e.g., quadriceps, hamstrings) during physical performance tests [20]. |

The workflow for a comprehensive study integrating multiple direct measurement tools is complex. The following diagram outlines the sequential phases and key activities for such a research protocol.

The evidence consolidated in this guide unequivocally demonstrates that the validity of research on the menstrual cycle is inextricably linked to the rigor of its methodology. The terminological distinction between eumenorrheic and naturally menstruating is critical for accurately characterizing a study population. For research aiming to establish causal links between hormonal fluctuations and physiological or cognitive outcomes, the use of direct measurement of phase (via LH kits, serum progesterone, or novel biomarkers like minHR) is non-negotiable. While estimation-based approaches may seem pragmatic, they introduce unacceptably high levels of uncertainty and risk generating misleading data, which can have tangible negative repercussions on female athlete health, performance guidance, and drug development outcomes. Future research must prioritize methodological quality, transparent reporting, and the development of more accessible direct measurement tools to advance our understanding of female physiology.

From Theory to Practice: Implementing Measurement and Estimation Across the Development Pipeline

The traditional drug discovery paradigm, characterized by lengthy development cycles and high failure rates, has long relied on estimation-based approaches in its early stages [26] [27]. The process typically spans 10-15 years and costs more than $2 billion per approved drug, and clinical success rates fall from 52% at Phase I to an overall rate of just 8.1% [26]. Attrition is especially severe in Phase II, where approximately 70% of candidates fail due to lack of efficacy, which underscores the critical limitations of indirect estimation methods in predicting biological activity and clinical translatability [28].
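A short calculation makes the scale of this attrition concrete. The sketch below uses only the two success rates quoted above and assumes that both are measured from the same starting point (entry into Phase I); the variable names are illustrative.

```python
phase1_success = 0.52    # Phase I success rate quoted in the text
overall_success = 0.081  # overall clinical success rate quoted in the text

# Implied chance that a candidate which cleared Phase I also clears every later stage.
post_phase1_success = overall_success / phase1_success

# Expected number of candidates entering the clinic per eventual approval.
candidates_per_approval = 1 / overall_success

print(f"Success after Phase I: {post_phase1_success:.1%}")
print(f"Candidates per approval: {candidates_per_approval:.1f}")
```

Under that assumption, fewer than one in six candidates that pass Phase I reach approval, and roughly twelve must enter clinical testing for each drug approved.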

In this context, a paradigm shift is occurring toward direct measurement and holistic biological simulation, mirroring the broader scientific imperative to replace assumptions with validated data [5]. Artificial intelligence (AI) and modern Quantitative Structure-Activity Relationship (QSAR) models are at the forefront of this transformation, moving beyond traditional reductionist approaches that focused narrowly on fitting ligands into protein pockets [29]. Instead, cutting-edge AI-driven drug discovery (AIDD) platforms now integrate multimodal data—including genomics, proteomics, phenotypic data, chemical structures, and clinical information—to construct comprehensive biological representations and enable more direct, predictive assessment of compound behavior before synthesis and testing [26] [29]. This review compares the performance of contemporary computational approaches in target identification and lead optimization, highlighting how AI and QSAR models are reducing reliance on estimation and advancing more direct, measurement-driven discovery.

Technology Comparison: Traditional vs. Modern Computational Approaches

Fundamental Differences in Methodology and Biological Representation

The transition from traditional computational tools to modern AI-driven platforms represents more than a simple technological upgrade—it constitutes a fundamental shift in how biology is conceptualized and modeled in silico.

Traditional QSAR and Molecular Modeling operated on principles of biological reductionism, focusing on discrete molecular interactions. These methods utilized predefined chemical descriptors (molecular weight, logP, etc.) and statistical approaches to establish relationships between chemical structure and biological activity [29]. Structure-based drug discovery assumed that modulating a specific protein target would address disease pathology, with computational efforts centered on narrow-scope tasks like molecular docking and ligand-based virtual screening [29]. While valuable, this reductionist approach often failed to capture the complexity of biological systems, leading to promising compounds that failed in later stages due to unanticipated effects in more complex biological environments.
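To make the traditional approach concrete, the sketch below fits a one-descriptor linear model relating logP to activity by ordinary least squares. It is a deliberately minimal illustration: the descriptor values and activities are made up and perfectly linear, the function name is hypothetical, and real QSAR models use many descriptors and proper validation.

```python
def fit_line(x, y):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical training set: one predefined descriptor (logP) per compound
# and a measured activity (pIC50). Values are illustrative only.
logp = [1.0, 2.0, 3.0, 4.0]
pic50 = [5.0, 5.5, 6.0, 6.5]

slope, intercept = fit_line(logp, pic50)

# Estimate the activity of an untested compound from its descriptor alone.
predicted_pic50 = slope * 2.5 + intercept
print(f"pIC50 = {slope:.2f} * logP + {intercept:.2f}")
print(f"Predicted pIC50 at logP 2.5: {predicted_pic50:.2f}")
```

The prediction is an estimate in the sense used throughout this article: it extrapolates from a statistical relationship in prior data and carries no information about biology that the chosen descriptors do not capture.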

Modern AI-Driven Platforms embrace a holistic, systems biology approach that is largely hypothesis-agnostic. Instead of studying targets in isolation, these platforms use deep learning systems to integrate multimodal data and construct comprehensive biological representations [29]. For example, knowledge graphs can encode billions of relationships between biological entities, while generative models explore vast chemical spaces to identify novel compounds optimized for multiple parameters simultaneously [30] [29]. This approach allows researchers to model complex biological networks and emergent properties rather than focusing solely on single target-ligand interactions, moving from estimation to more direct computational measurement of potential drug behavior.

Table 1: Core Methodological Differences Between Traditional and Modern Approaches

| Aspect | Traditional QSAR/Modeling | Modern AI-Driven Platforms |
|---|---|---|
| Philosophical Basis | Biological reductionism | Systems biology holism |
| Data Utilization | Structured chemical & biological data | Multimodal data (omics, images, text, clinical) |
| Target Approach | Single-target focus | Multi-target, polypharmacology |
| Hypothesis Generation | Human-driven, hypothesis-dependent | AI-driven, hypothesis-agnostic |
| Chemical Exploration | Limited to known chemical space | Billions of virtual compounds via generative AI |
| Validation Approach | Sequential experimental validation | Continuous active learning with experimental feedback |

Performance Metrics and Experimental Validation

Recent studies and industry reports demonstrate significant performance advantages of modern AI platforms across key discovery metrics. These improvements highlight how AI approaches deliver more direct, accurate predictions compared to estimation-based traditional methods.

In target identification, AI platforms have shown remarkable efficiency gains. Insilico Medicine's PandaOmics platform leverages 1.9 trillion data points from over 10 million biological samples and 40 million documents, using natural language processing and machine learning to uncover novel therapeutic targets [29]. This approach has demonstrated the ability to identify 73% more gene-phenotype associations for complex human diseases compared to standard methods [30]. The platform's holistic analysis of multimodal data provides a more direct measurement of target-disease relationships than traditional literature-based estimation.

In lead optimization, generative AI has dramatically compressed design cycles. Exscientia reports in silico design cycles approximately 70% faster and requiring 10× fewer synthesized compounds than industry norms [31]. In one program, a clinical candidate was achieved after synthesizing only 136 compounds, whereas traditional programs often require thousands [31]. This efficiency stems from AI's ability to directly optimize multiple parameters simultaneously—including potency, selectivity, and ADMET properties—rather than relying on sequential estimation and testing.

Table 2: Quantitative Performance Comparison of Discovery Technologies

| Performance Metric | Traditional Methods | Modern AI Platforms | Experimental Evidence |
|---|---|---|---|
| Target Identification Efficiency | Manual literature review & pathway analysis | 73% more gene-phenotype associations identified | Deep neural networks vs. standard methods [30] |
| Hit-to-Lead Timeline | 2-4 years (industry average) | 18 months (Insilico Medicine IPF program) | Novel target to preclinical candidate [32] |
| Compounds Synthesized | Thousands (typical) | 136 compounds (Exscientia CDK7 program) | Clinical candidate achievement [31] |
| Virtual Screening Enrichment | Baseline | 50-fold improvement vs. traditional methods | Integrated pharmacophoric features & protein-ligand data [33] |
| Lead Optimization Cycle | Months per cycle | ~70% faster design cycles | Exscientia platform metrics [31] |
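The virtual screening row is expressed in terms of enrichment. The source does not state how the cited study defined its metric, so the sketch below shows the commonly used enrichment factor as an assumption: the hit rate among the top-ranked fraction of a library divided by the hit rate across the whole library. The library composition is hypothetical and the function name is illustrative.

```python
def enrichment_factor(ranked_is_active, top_fraction):
    """Hit rate in the top-ranked fraction divided by the hit rate in the full library.

    ranked_is_active: booleans ordered from best-scored to worst-scored compound.
    """
    n_total = len(ranked_is_active)
    n_top = max(1, round(n_total * top_fraction))
    actives_total = sum(ranked_is_active)
    if actives_total == 0:
        raise ValueError("library contains no known actives")
    actives_top = sum(ranked_is_active[:n_top])
    return (actives_top * n_total) / (n_top * actives_total)


# Hypothetical screen: 100 compounds, 10 known actives,
# 5 of which the scoring method ranks inside the top 10.
ranking = [True] * 5 + [False] * 5 + [True] * 5 + [False] * 85

print(enrichment_factor(ranking, top_fraction=0.10))
```

A value of 1 means the ranking does no better than random selection; in this toy case the top 10% of the list is five times richer in actives than the library as a whole.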

Experimental Protocols and Methodologies

Modern AI-Driven Workflow for Target Identification

The following diagram illustrates the integrated, multi-modal approach used by leading AI platforms for target identification, representing a significant departure from traditional estimation-based methods: