Content Validity Index (CVI) in Survey Development: A Step-by-Step Guide for Biomedical Researchers

This article provides a comprehensive guide for researchers and drug development professionals on the development and validation of surveys using the Content Validity Index (CVI).

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the development and validation of surveys using the Content Validity Index (CVI). It covers foundational concepts of content validity, detailed methodological steps for CVI calculation and implementation, strategies for troubleshooting common issues, and advanced techniques for instrument validation. By integrating current methodologies, practical Excel tutorials, and examples from recent clinical and biomedical research, this guide serves as an essential resource for ensuring the rigor and credibility of data collection instruments in scientific studies.

Understanding Content Validity: The Cornerstone of Robust Survey Design

Defining Content Validity and its Critical Role in Research

Content validity is a fundamental psychometric property that assesses the extent to which an instrument's items accurately and fully represent the entire domain of the construct being measured [1]. It answers a critical question: Does the content of this measurement tool adequately sample all facets of the concept we intend to measure? Unlike face validity, which merely concerns superficial appearance, content validity requires systematic, rigorous evaluation of test content by subject matter experts to ensure no important components are missing and that all items are relevant and appropriate for the intended purpose [1].

In research contexts, particularly in healthcare, education, and social sciences, content validity serves as the bedrock for drawing valid inferences from data. Without adequate content validity, statistical conclusions drawn from test scores may be inaccurate or misleading, compromising research integrity and practical applications such as clinical assessments, educational testing, and personnel selection [1]. For drug development professionals and researchers, establishing content validity is essential for justifying the use of an instrument for a specific measurement purpose: it ensures that the tool captures the intended construct, whether that is a patient-reported outcome, symptom severity, or treatment effectiveness.

Theoretical Framework and Definitions

Core Components of Content Validity

Content validity primarily evaluates two key aspects of an instrument: relevance and representativeness [1]. Relevance ensures each item appropriately reflects the target construct, while representativeness guarantees the items comprehensively cover the entire conceptual domain. This dual focus distinguishes content validity from other validity forms and establishes its critical role in instrument development.

Content Validity vs. Construct Validity

While both are essential measurement properties, content validity and construct validity serve distinct purposes [1]:

Content Validity focuses specifically on the instrument's content, assessing how well the items sample the domain of interest. It is typically evaluated deductively by defining the construct and systematically selecting items from that domain through expert judgment.

Construct Validity is a broader concept that encompasses content validity as one aspect. It investigates whether the instrument truly measures the theoretical construct it claims to measure by examining the relationship between test scores and other variables, internal structure, and responses to interventions [1].

The relationship between these validities is hierarchical: content validity is a necessary but insufficient condition for construct validity. An instrument can have good content validity yet lack construct validity if it doesn't truly measure the intended psychological construct [1].

Table 1: Key Differences Between Content Validity and Construct Validity

| Feature | Content Validity | Construct Validity |

|---|---|---|

| Definition | Extent to which items reflect the specific concept being measured | Extent to which a test measures the underlying theoretical construct |

| Scope | Narrower; focuses on items and their relationship to content domain | Broader; encompasses content validity and other validity evidence |

| Focus | Relevance and representativeness of items | Meaning of test scores in relation to theoretical framework |

| Evaluation Methods | Expert review, item-domain congruence | Factor analysis, relationships with other variables, experimental interventions |

Quantitative Assessment of Content Validity

Content Validity Index (CVI) Methodology

The Content Validity Index (CVI) is a widely accepted quantitative method for assessing content validity, particularly in healthcare and social science research [2] [3]. The CVI is calculated at two levels: Item-Level CVI (I-CVI) for individual items and Scale-Level CVI (S-CVI) for the entire instrument.

The assessment process typically involves a panel of 3-10 subject matter experts who evaluate each item using a 4-point Likert scale for relevance: 1 = "not relevant," 2 = "somewhat relevant," 3 = "quite relevant," and 4 = "highly relevant" [2]. These ratings are then converted to binary values (0 or 1) for calculation, with ratings of 3 or 4 considered "valid" (coded as 1) and ratings of 1 or 2 considered "not valid" (coded as 0) [2].

Calculation Protocols

I-CVI Calculation: The I-CVI is computed for each item as the proportion of experts giving a rating of 3 or 4 [2]. For example, if 5 out of 6 experts rate an item as 3 or 4, the I-CVI would be 5/6 = 0.83.

S-CVI Calculation: The Scale-Level Content Validity Index can be computed using two approaches [2]:

- S-CVI/Ave: The average of all I-CVIs across the instrument

- S-CVI/UA: The proportion of items that achieve a rating of 3 or 4 by all experts
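The calculations above can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the function name `content_validity_indices` and the ratings matrix are hypothetical, and the only rule taken from the text is that ratings of 3 or 4 count as relevant.

```python
from statistics import mean


def content_validity_indices(ratings):
    """Return (I-CVIs, S-CVI/Ave, S-CVI/UA).

    ratings: one row per item, each row holding one 1-4 relevance
    rating per expert.
    """
    # Dichotomize: ratings of 3 or 4 count as relevant (1), 1 or 2 as not (0).
    binary = [[1 if r >= 3 else 0 for r in item] for item in ratings]
    # I-CVI: proportion of experts who rated the item relevant.
    i_cvi = [sum(row) / len(row) for row in binary]
    # S-CVI/Ave: mean of the item-level indices.
    s_cvi_ave = mean(i_cvi)
    # S-CVI/UA: share of items rated relevant by every expert.
    s_cvi_ua = sum(1 for row in binary if all(row)) / len(binary)
    return i_cvi, s_cvi_ave, s_cvi_ua


# Hypothetical data: 4 items rated by 6 experts.
example_ratings = [
    [4, 4, 3, 4, 3, 4],  # 6 of 6 experts rate 3 or 4
    [3, 4, 4, 2, 3, 4],  # 5 of 6
    [4, 3, 4, 4, 4, 3],  # 6 of 6
    [2, 3, 1, 4, 3, 2],  # 3 of 6
]
i_cvi, s_cvi_ave, s_cvi_ua = content_validity_indices(example_ratings)
print([round(v, 2) for v in i_cvi])             # [1.0, 0.83, 1.0, 0.5]
print(round(s_cvi_ave, 2), round(s_cvi_ua, 2))  # 0.83 0.5
```

In this made-up panel the second item reproduces the 5/6 = 0.83 example, and the gap between S-CVI/Ave (0.83) and S-CVI/UA (0.50) shows how much stricter the universal-agreement variant is.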

Table 2: Content Validity Index Thresholds and Standards

| Number of Experts | Minimum Acceptable I-CVI | Source |

|---|---|---|

| 2 | 0.80 | Davis (1992) |

| 3-5 | 1.00 | Polit & Beck (2006), Polit et al. (2007) |

| 6-8 | 0.83 | Lynn (1986) |

| ≥9 | 0.78 | Lynn (1986) |

For newly developed instruments, a CVI value of ≥0.80 is generally required to confirm that items possess high, clear, and relevant content validity [2]. The S-CVI/Ave should ideally exceed 0.90 for the overall instrument to be considered to have excellent content validity [3].

Experimental Protocols for Content Validation

Instrument Design and Development Phase

The content validation process begins with comprehensive instrument design through a three-step process [3]:

Domain Determination: Clearly define the content domain of the construct through literature review, interviews with target respondents, and focus groups. This step requires precise definition of the construct's attributes, characteristics, boundaries, dimensions, and components.

Item Generation: Develop items that comprehensively sample the identified content domain. Qualitative research methods, including interviews with individuals familiar with the concept, are invaluable for generating instrument items that enrich and expand upon existing literature.

Instrument Construction: Refine and organize items into a suitable format and sequence, collecting finalized items into a usable measurement instrument.

Expert Judgment Phase

This phase involves quantitative and qualitative evaluation by an expert panel [3]:

Expert Panel Selection: Assemble 5-10 content experts with professional or research experience in the field. Including lay experts (potential research subjects) ensures the instrument represents the target population.

Evaluation Process: Experts quantitatively rate each item for relevance, clarity, and comprehensiveness using standardized scales. They also provide qualitative feedback on grammar, wording, sequencing, and scoring.

Data Analysis: Calculate quantitative indices (CVR, I-CVI, S-CVI) and analyze qualitative feedback to refine and improve the instrument.

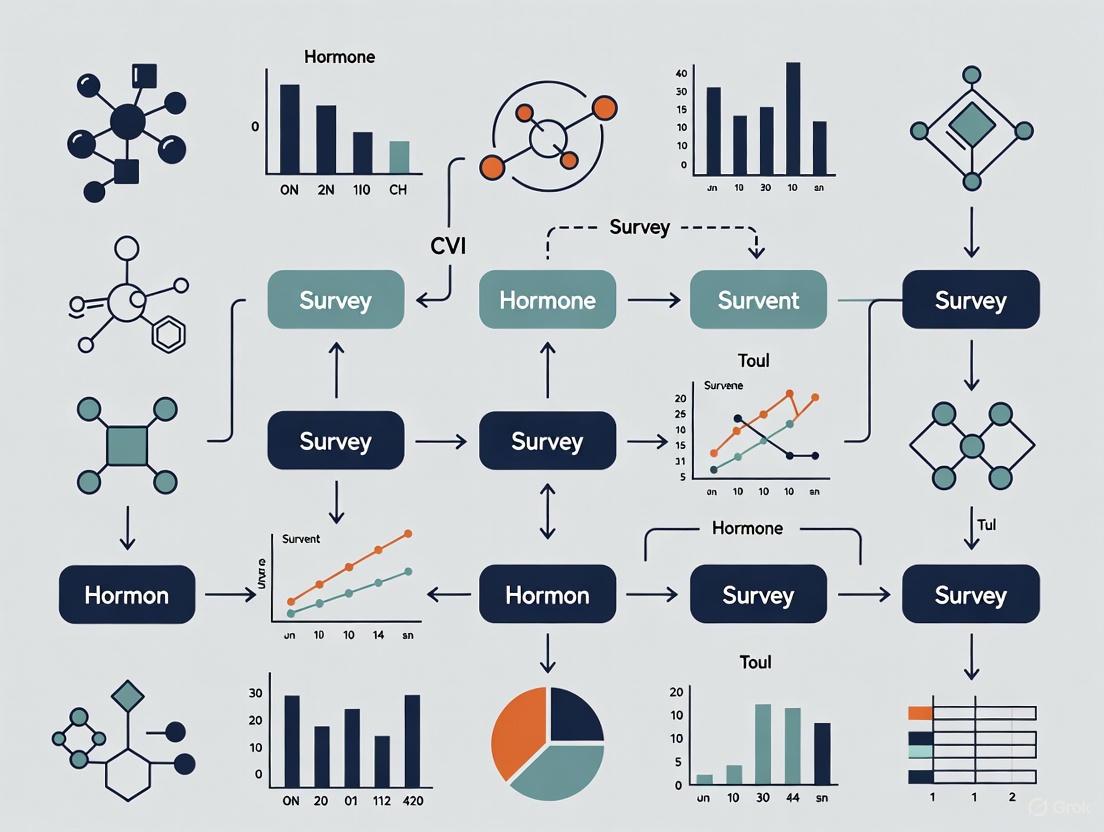

Diagram 1: Content Validity Assessment Workflow

Application in Research Settings

Cross-Cultural Translation and Validation

Content validity methodology is particularly crucial in cross-cultural research and instrument translation. A 2025 study demonstrating the translation of a patient-reported outcome measure for head and neck cancer highlights this application [4]. Researchers used CVI methodology where an expert panel of nurses proficient in both Spanish and English independently reviewed and rated a forward translation for cultural relevance and translation equivalence. The study achieved excellent content validity with average CVI scores of 0.95 for cultural relevance and 0.84 for translation equivalence, with problematic items (CVI <0.59) refined through cognitive interviews with native Spanish-speaking patients [4].

Healthcare and Clinical Research Applications

In healthcare research, content validity is essential for developing patient-centered instruments. A methodological study examining patient-centered communication in oncology wards identified seven dimensions through content validation: trust building, informational support, emotional support, problem solving, patient activation, intimacy/friendship, and spirituality strengthening [3]. From an initial pool of 188 items, the content validation process refined the instrument to the items judged appropriate across these domains, achieving an S-CVI/Ave of 0.93, which indicates excellent content validity [3].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Content Validity Research

| Research Reagent | Function/Purpose | Application Notes |

|---|---|---|

| Expert Panel | Provides subject matter expertise for item evaluation | Select 5-10 experts with minimum 5 years field experience; include both content experts and lay experts from target population |

| 4-Point Likert Scale | Standardized rating system for item relevance | 1=Not relevant, 2=Somewhat relevant, 3=Quite relevant, 4=Highly relevant; prevents neutral responses |

| CVI Calculation Framework | Quantitative assessment of content validity | Computes I-CVI (item-level) and S-CVI (scale-level); requires binary conversion of ratings (1,2=0; 3,4=1) |

| Statistical Software (Excel) | Automated CVI calculation | Uses COUNTIF, AVERAGE functions; reduces human error in manual calculations |

| Cognitive Interview Protocol | Qualitative refinement of problematic items | Identifies issues with wording, comprehension; used for items with CVI <0.59 |

| Content Validity Ratio (CVR) | Assesses essentiality of items | CVR = (Nₑ - N/2)/(N/2); Nₑ=number indicating "essential", N=total experts |
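The CVR formula in the last row of the table can be checked with a short sketch. The function name `content_validity_ratio` and the panel counts are illustrative assumptions; only the formula itself comes from the table.

```python
def content_validity_ratio(n_essential, n_experts):
    """CVR = (Ne - N/2) / (N/2).

    n_essential (Ne): experts rating the item "essential".
    n_experts (N): total experts on the panel.
    """
    if n_experts <= 0 or not 0 <= n_essential <= n_experts:
        raise ValueError("need n_experts > 0 and 0 <= n_essential <= n_experts")
    half = n_experts / 2
    return (n_essential - half) / half


# Hypothetical panel of 10 experts.
print(content_validity_ratio(8, 10))   # 0.6  (8 of 10 say "essential")
print(content_validity_ratio(5, 10))   # 0.0  (exactly half)
print(content_validity_ratio(10, 10))  # 1.0  (unanimous)
```

The ratio runs from -1 (no expert rates the item essential) to +1 (all do), and it is zero when exactly half the panel rates the item essential.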

Advanced Methodological Considerations

Modified Kappa Statistic

Beyond CVI, the modified kappa statistic accounts for chance agreement among experts [3]. This approach calculates the probability of chance agreement and provides a more robust statistical evaluation of content validity. Kappa values >0.74 are considered excellent, while values between 0.60-0.74 are considered good.
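The text does not spell out the formula, so the sketch below assumes the formulation commonly attributed to Polit, Beck, and Owen (2007): the chance probability is P_c = C(N, A) × 0.5^N for A of N experts agreeing, and the modified kappa is (I-CVI − P_c) / (1 − P_c). The function names and the example panel are hypothetical.

```python
from math import comb


def modified_kappa(n_agree, n_experts):
    """Chance-adjusted I-CVI for a single item (assumed formulation)."""
    if n_experts < 1 or not 0 <= n_agree <= n_experts:
        raise ValueError("need n_experts >= 1 and 0 <= n_agree <= n_experts")
    i_cvi = n_agree / n_experts
    # Probability that exactly n_agree experts rate the item relevant by
    # chance, treating each rating as a 50/50 relevant/not-relevant call.
    p_chance = comb(n_experts, n_agree) * 0.5 ** n_experts
    return (i_cvi - p_chance) / (1 - p_chance)


def interpret_kappa(kappa):
    """Apply the cut-offs quoted in the text (>0.74 excellent, 0.60-0.74 good)."""
    if kappa > 0.74:
        return "excellent"
    if kappa >= 0.60:
        return "good"
    return "below the 'good' range"


# Hypothetical item: 5 of 6 experts rate it 3 or 4 (I-CVI = 0.83).
kappa = modified_kappa(5, 6)
print(round(kappa, 2), interpret_kappa(kappa))  # 0.82 excellent
```

Under this assumption the adjustment matters most for small panels, where agreement by chance alone is more likely.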

Universal Agreement vs. Average Agreement

The S-CVI/UA (universal agreement) tends to be more conservative than S-CVI/Ave (average agreement) because it requires all experts to agree on each item [2] [3]. With larger expert panels, S-CVI/UA typically yields lower values, making S-CVI/Ave more practical while still maintaining rigorous standards.

Diagram 2: CVI Calculation Methodology

Content validity remains a foundational requirement for developing psychometrically sound research instruments. Through systematic application of CVI methodology—including expert panel evaluation, quantitative assessment of I-CVI and S-CVI, and rigorous adherence to established thresholds—researchers can ensure their measurement tools adequately represent the constructs under investigation. For drug development professionals and scientific researchers, robust content validation provides the necessary foundation for collecting meaningful, reliable data that can accurately inform clinical decisions, treatment development, and scientific understanding. The protocols and applications outlined in this article provide a comprehensive framework for implementing content validity assessment across diverse research contexts.

In research instrument development, content validity is a fundamental concept that assesses whether the items in a questionnaire or scale adequately represent the entire domain of the construct being measured [3]. Also known as definition validity and logical validity, it answers the question of to what extent the selected sample of items comprehensively covers the content area [3]. Establishing content validity is a critical prerequisite for other forms of validity and must receive the highest priority during instrument development, as an instrument lacking content validity cannot establish reliability [3].

The Content Validity Index (CVI) has emerged as a widely accepted quantitative method for evaluating content validity, particularly in educational, social science, and healthcare research [2]. This metric systematically quantifies expert agreement on item relevance, providing researchers with evidence that their assessment content fairly and adequately represents a defined domain of knowledge or performance [5] [2]. For thesis research in survey development, establishing content validity through CVI represents an essential methodological step that strengthens theoretical foundations and enhances overall data quality.

Understanding I-CVI and S-CVI

The Content Validity Index operates at two distinct but complementary levels: the item level and the scale level.

Item-Level Content Validity Index (I-CVI)

The Item-Level Content Validity Index (I-CVI) represents the proportion of content experts who agree that a specific item is relevant to the construct being measured [2]. Calculated for each individual item in an instrument, I-CVI provides granular information about which items may require revision or elimination. This item-level analysis allows researchers to identify problematic items while retaining well-performing ones, thus enabling targeted instrument refinement.

Scale-Level Content Validity Index (S-CVI)

The Scale-Level Content Validity Index (S-CVI) evaluates the overall validity of the entire questionnaire or scale [2]. It can be calculated using two different approaches:

- S-CVI/Universal Agreement (S-CVI/UA): The proportion of items that achieve a perfect relevance rating from all experts [2]. This method represents the most stringent approach to establishing content validity.

- S-CVI/Average (S-CVI/Ave): The average of all I-CVI values for items in the scale [2]. This approach provides a more comprehensive overview of content validity across the entire instrument.

Research has demonstrated that these two calculation methods can yield different results. One study found that while the S-CVI/UA was low, the S-CVI/Ave was 0.93, indicating good overall content validity despite the difficulty in achieving universal expert consensus on all items [3].

Quantitative Standards and Interpretation

Establishing clear thresholds for acceptable CVI values is essential for rigorous instrument development. The following table summarizes the widely accepted standards based on the number of experts involved in content validation:

Table 1: Content Validity Index Thresholds Based on Number of Experts

| Number of Experts | Acceptable I-CVI Values | Source of Recommendation |

|---|---|---|

| 2 | At least 0.8 | Davis (1992) |

| 3 to 5 | Should be 1 | Polit & Beck (2006), Polit et al., (2007) |

| At least 6 | At least 0.83 | Polit & Beck (2006), Polit et al., (2007) |

| 6 to 8 | At least 0.83 | Lynn (1986) |

| At least 9 | At least 0.78 | Lynn (1986) |

For newly developed instruments, current research recommends a CVI value of ≥ 0.8 to confirm that items possess high, clear, and relevant content validity [2]. The S-CVI/Ave should also meet or exceed this 0.8 threshold for the overall instrument to be considered valid [2].
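The panel-size thresholds in the table can be encoded as a simple lookup. This is a sketch under stated assumptions: the function name `minimum_i_cvi` is hypothetical, and where the table's rows overlap (the "At least 6" row and the "6 to 8" / "At least 9" rows), the sketch follows the more granular Lynn (1986) rows.

```python
def minimum_i_cvi(n_experts):
    """Minimum acceptable I-CVI for a given panel size, per the table."""
    if n_experts < 2:
        raise ValueError("the table starts at 2 experts")
    if n_experts == 2:
        return 0.80  # Davis (1992)
    if n_experts <= 5:
        return 1.00  # Polit & Beck (2006), Polit et al. (2007)
    if n_experts <= 8:
        return 0.83  # Lynn (1986)
    return 0.78      # Lynn (1986), 9 or more experts


for size in (2, 4, 6, 9):
    print(size, minimum_i_cvi(size))
```

A lookup like this makes the panel-size dependence explicit: with five or fewer experts a single dissenting rating already drops an item below the cut-off.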

Experimental Protocol for CVI Assessment

Implementing a systematic content validity study involves a structured two-stage process: instrument design followed by expert judgment [3].

Stage 1: Instrument Design

The initial design phase consists of three critical steps:

Domain Determination: Precisely define the content area related to the variables being measured through comprehensive literature review, interviews with respondents, and focus groups [3]. This step establishes clear boundaries, dimensions, and components of the construct.

Item Generation: Develop individual items that adequately sample from the defined content domain [3]. Qualitative research methods can be particularly valuable for generating items that reflect the lived experience of the target population.

Instrument Formation: Refine and organize items into a suitable format and sequence, creating a usable instrument [3]. This includes attention to grammar, wording, and scoring procedures.

Stage 2: Expert Judgment

The judgment phase involves quantitative evaluation by content experts:

Expert Panel Selection: Convene a panel of 3-10 experts with substantial experience (typically ≥5 years) in the relevant field [2] [3]. Including both content experts and potential respondents ensures professional judgment and population representation [3].

Relevance Rating: Experts independently rate each item using a four-point Likert scale: 1 = "Not relevant," 2 = "Somewhat relevant," 3 = "Quite relevant," and 4 = "Highly relevant" [2].

Data Collection and Analysis: Compile expert ratings and calculate CVI values using standardized computational procedures [2].

The following workflow diagram illustrates the complete CVI assessment process:

Computational Methodology

Calculating CVI values involves a systematic transformation of expert ratings into quantitative validity indices. Microsoft Excel provides an accessible platform for performing these calculations efficiently.

Data Preparation

Create a structured data table with questionnaire items as rows and expert ratings as columns [2]. Record all ratings using the four-point Likert scale (1 = "Not relevant" to 4 = "Highly relevant").

Binary Conversion of Relevance Ratings

Convert the Likert scale ratings to binary values (0 or 1) to facilitate CVI calculation [2]:

Table 2: Binary Conversion Scheme for Expert Ratings

| Likert Scale Rating | Binary Conversion | Interpretation |

|---|---|---|

| 1, 2 | 0 | Not Valid |

| 3, 4 | 1 | Valid |

In Excel, use the formula =IF(B2>=3,1,0) where B2 contains the expert's rating [2]. Apply this formula across all expert columns and item rows.

I-CVI Calculation

Compute the Item-Level CVI using the following procedure:

Count Expert Agreement: For each item, count the number of experts who rated it as valid (binary value = 1) using the formula =COUNTIF(H2:J2,">=1"), where H2:J2 contains the binary values for the three experts [2].

Calculate I-CVI: Divide the number of agreeing experts by the total number of experts using the formula =K2/3, where K2 contains the agreement count [2].

Categorize Items: Classify items as valid or invalid based on established thresholds using the formula =IF(L2>=0.8,"Valid","Invalid"), where L2 contains the I-CVI value [2].

S-CVI Calculation

Compute Scale-Level CVI using two approaches:

S-CVI/Average: Calculate the average of all I-CVI values using Excel's AVERAGE function [2].

S-CVI/Universal Agreement:

- First, identify items where all experts agree (binary value = 1 for all experts) using the formula =IF(AND(H2=1,I2=1,J2=1),1,0) [2].

- Then, calculate the proportion of such items out of the total number of items.

The following diagram illustrates the computational workflow for CVI calculation:

The Researcher's Toolkit: Essential Reagents for CVI Studies

Table 3: Essential Methodological Components for Content Validity Studies

| Research Component | Function | Implementation Example |

|---|---|---|

| Expert Panel | Provide professional judgment on item relevance | 3-10 content experts with ≥5 years field experience [2] [3] |

| Four-Point Likert Scale | Quantify expert ratings of item relevance | 1="Not relevant" to 4="Highly relevant" [2] |

| Binary Conversion Protocol | Transform ordinal ratings into dichotomous validity judgments | Ratings 3-4 → 1 (Valid); Ratings 1-2 → 0 (Not Valid) [2] |

| CVI Calculation Framework | Compute item-level and scale-level validity indices | I-CVI, S-CVI/UA, S-CVI/Ave [2] |

| Threshold Standards | Establish minimum acceptability criteria for validity indices | I-CVI ≥ 0.8; S-CVI/Ave ≥ 0.8 [2] |

| Statistical Software | Automate computation and reduce human error | Microsoft Excel with COUNTIF, AVERAGE functions [2] |

Application in Drug Development Research

For drug development professionals, establishing content validity is particularly crucial when developing patient-reported outcome (PRO) measures, clinician-rated scales, and other instruments used in clinical trials. The CVI framework provides methodological rigor that aligns with regulatory requirements for instrument validation in pharmaceutical research.

In healthcare contexts, content validity ensures that training tools, pre/post-test questionnaires, and research instruments align with evidence-based practices [2]. The patient-centered communication instrument study demonstrates how CVI methodology can be applied to develop culturally appropriate measures for specific patient populations, such as cancer patients in oncology wards [3].

When adapting instruments for cross-cultural use or specific disease populations, content validity assessment becomes an essential first step that informs subsequent translation, cultural adaptation, and psychometric validation processes. The quantitative nature of CVI provides compelling evidence for regulatory submissions regarding the content validity of clinical outcome assessments.

The Content Validity Index, with its item-level (I-CVI) and scale-level (S-CVI) components, provides researchers with a systematic, quantitative method for establishing the content validity of research instruments. Through rigorous expert evaluation and standardized computational procedures, the CVI framework enables drug development professionals, researchers, and scientists to ensure their survey instruments adequately represent the constructs they intend to measure.

By implementing the protocols and methodologies outlined in this article, thesis researchers can strengthen the methodological foundation of their survey development research, producing instruments that demonstrate both quantitative rigor and qualitative relevance to their target domains.

Application Notes

The Role of the Expert Panel in Content Validity

In Content Validity Index (CVI) survey development research, expert panels serve as the definitive reference standard for assessing how well instrument items represent the construct being measured [6]. The panel's primary function is to independently evaluate item relevance, clarity, and comprehensiveness based on a predefined conceptual framework [7]. This judgment-based process ensures that a data collection instrument accurately captures all facets of the concept under investigation, whether it pertains to professional quality of life, drug toxicity, spiritual health, pain, or other phenomena [6]. The methodology is particularly valuable in cross-cultural translation of patient-reported outcome measures, where it systematically identifies problematic items requiring refinement by evaluating both cultural relevance and translation equivalence [8].

Consequences of Inadequate Panel Sizing

Determining the appropriate expert panel size involves balancing methodological rigor with practical constraints. Evidence from diagnostic research indicates substantial heterogeneity in panel composition, with underpowered panels risking unreliable consensus and oversized panels creating administrative burdens without commensurate benefits [9]. In pharmaceutical and healthcare research, insufficient panel sizes may compromise content validity assessments, leading to measurement instruments that fail to detect clinically significant differences in patient outcomes or drug effects. Conversely, properly constituted panels enhance instrument reliability, support regulatory approval processes, and strengthen evidence for drug efficacy claims through psychometrically sound measurement.

Experimental Protocols

Protocol 1: Determining Optimal Panel Size and Composition

Purpose and Principle

This protocol establishes standardized procedures for constituting expert panels with optimal size and composition to ensure robust content validity assessments in pharmaceutical research and survey development.

Materials and Reagents

Table 1: Research Reagent Solutions for Expert Panel Formation

| Item | Function | Specifications |

|---|---|---|

| Expert Recruitment Database | Identifies potential panelists with relevant expertise | Minimum 5 years domain-specific experience; peer-reviewed publications or clinical specialization |

| Qualification Matrix | Standardizes expert selection | Evaluates expertise diversity, methodological experience, and conflict of interest status |

| Four-Point Likert Scale | Quantifies expert ratings | 1=Not relevant; 2=Somewhat relevant; 3=Quite relevant; 4=Highly relevant [2] |

| Content Validity Index (CVI) Calculator | Computes validity metrics | Microsoft Excel with COUNTIF and AVERAGE functions for I-CVI and S-CVI calculations [2] |

Procedure

Define Expertise Requirements: Identify the specific domains of knowledge needed based on the instrument's target construct (e.g., clinical pharmacology, oncology, psychometrics, cross-cultural adaptation).

Establish Panel Size Parameters:

- For most applications, constitute panels of 3-5 experts for focused instrument development [6].

- For high-stakes validation (e.g., regulatory submission), expand to 5-8 experts to enhance reliability [9].

- For complex constructs requiring multiple specialties, consider 8-12 experts with staggered participation through modified Delphi techniques [7].

Recruit and Screen Experts:

- Select experts based on:

- Minimum 5 years of domain-specific experience

- Peer-reviewed publications or clinical specialization in relevant field

- Diversity of perspectives (methodology, clinical practice, cultural background)

- Absence of significant conflicts of interest

Implement Independent Rating:

- Provide experts with the conceptual definition of the construct

- Distribute instrument items with standardized rating scales

- Ensure independent assessment without conferring to prevent groupthink

Calculate Content Validity Metrics:

- Compute Item-Level CVI (I-CVI) for each instrument item

- Calculate Scale-Level CVI (S-CVI) for the overall instrument

- Apply predetermined thresholds (typically I-CVI ≥0.78, S-CVI ≥0.90) for acceptable validity [6]

Protocol 2: Content Validity Assessment Using CVI Methodology

Purpose and Principle

This protocol provides a standardized approach for quantifying content validity through systematic expert evaluation, enabling researchers to refine measurement instruments for drug development research.

Procedure

Expert Panel Briefing:

- Conduct orientation session to review construct definitions and rating criteria

- Distribute rating materials with clear instructions

- Establish timeline for completion (typically 2-3 weeks)

Item Evaluation:

- Experts rate each item using four-point Likert scale for relevance:

- 1 = Not relevant

- 2 = Somewhat relevant

- 3 = Quite relevant

- 4 = Highly relevant [2]

- Additional ratings may be collected for clarity, comprehensiveness, and cultural appropriateness

Data Collection and Processing:

- Compile expert ratings in structured format (e.g., Excel spreadsheet)

- Convert Likert ratings to binary values (3-4 = "valid", 1-2 = "not valid")

- Calculate I-CVI as proportion of experts rating item 3 or 4

- Compute S-CVI/Average as mean of I-CVIs across all items

Item Refinement and Iteration:

- Flag items with I-CVI below acceptable threshold (typically <0.78)

- Conduct cognitive interviews to understand rating discrepancies

- Revise problematic items based on qualitative feedback

- Re-submit revised items for re-evaluation if necessary
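The flagging step can be sketched as follows. The function name `flag_items_for_revision`, the item labels, and the ratings are hypothetical. One assumption deserves attention: the I-CVI is rounded to two decimals before comparison, so that 7 of 9 experts (0.777...) is treated as meeting a 0.78 cut-off, in line with how the value is usually reported.

```python
def flag_items_for_revision(ratings_by_item, threshold=0.78):
    """Return {item_id: I-CVI} for items whose I-CVI falls below threshold."""
    flagged = {}
    for item_id, ratings in ratings_by_item.items():
        # I-CVI: proportion of experts rating the item 3 or 4.
        i_cvi = sum(1 for r in ratings if r >= 3) / len(ratings)
        # Assumption: compare the two-decimal value, as it would be reported.
        i_cvi = round(i_cvi, 2)
        if i_cvi < threshold:
            flagged[item_id] = i_cvi
    return flagged


# Hypothetical ratings from a 9-expert panel.
panel_ratings = {
    "Q1": [4, 4, 3, 4, 3, 4, 4, 3, 4],  # 9 of 9 relevant
    "Q2": [4, 3, 2, 4, 3, 1, 4, 3, 2],  # 6 of 9 relevant
    "Q3": [3, 4, 4, 2, 3, 4, 3, 2, 4],  # 7 of 9 relevant
}
print(flag_items_for_revision(panel_ratings))  # {'Q2': 0.67}
```

Flagged items would then go to cognitive interviews and revision. The threshold parameter should be set according to the panel size rather than left at the default.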

Data Presentation and Analysis

Expert Panel Configuration Parameters

Table 2: Optimal Panel Size Recommendations for Different Research Contexts

| Research Context | Recommended Panel Size | Key Considerations | Supporting Evidence |

|---|---|---|---|

| Diagnostic Accuracy Studies | 3-4 experts (median) | Most common configuration in medical research; balances reliability and feasibility | Analysis of 318 studies showing 55% used 3-4 experts [9] |

| Drug Development and Regulatory Submissions | 5-8 experts | Enhanced rigor for regulatory review; multidisciplinary representation | Methodological guidance for high-stakes validation [9] |

| Cross-Cultural Translation and Adaptation | 6+ experts | Requires language proficiency and cultural expertise alongside clinical knowledge | CVI methodology for PROM translation [8] |

| General Survey Development | 3-5 experts | Cost-effective for most research instruments with acceptable validity | Established CVI methodology guidelines [6] |

Content Validity Index Calculation and Interpretation

Table 3: CVI Thresholds and Decision Rules for Instrument Development

| Metric | Calculation Method | Acceptability Threshold | Interpretation | Implementation Example |

|---|---|---|---|---|

| Item-Level CVI (I-CVI) | Proportion of experts rating item 3 or 4 on relevance scale | ≥0.78 for larger panels (9 or more experts); 1.00 is commonly required for panels of 3-5 experts | Item has acceptable content validity | Item with 4/5 experts rating 3-4: I-CVI=0.80 [2] |

| Scale-Level CVI (S-CVI/Ave) | Average of I-CVIs across all scale items | ≥0.90 | Overall instrument has excellent content validity | Mean of 20 items with average I-CVI=0.92 [8] |

| Scale-Level CVI (S-CVI/UA) | Proportion of items rated 3-4 by all experts | ≥0.80 | High degree of universal expert agreement | 16/20 items universally endorsed: S-CVI/UA=0.80 [2] |

Technical Specifications

Statistical Analysis and Reporting Requirements

Implementation Guidelines for Pharmaceutical Research

For drug development applications, expert panels should include:

- Clinical subject matter experts with direct patient experience in the therapeutic area

- Psychometric specialists skilled in measurement theory and instrument design

- Regulatory science experts familiar with FDA, EMA, or other relevant guidelines

- Patient representation when developing patient-reported outcome measures

- Cross-cultural experts for global clinical trials requiring instrument translation

Panel management should incorporate:

- Blinded independent ratings to prevent dominance by influential members

- Structured feedback mechanisms for item refinement

- Documentation of all decisions for regulatory audit trails

- Iterative review cycles until validity thresholds are achieved

The rigorous application of these expert panel protocols ensures that content validity assessments in pharmaceutical research meet the highest methodological standards, producing measurement instruments capable of generating reliable, regulatory-grade evidence for drug development programs.

Establishing Acceptable CVI Thresholds for Scientific Rigor

Content validity is a critical component in the development of research instruments, ensuring that items adequately represent the construct domain being measured [2]. The Content Validity Index (CVI) has emerged as a widely accepted quantitative method for assessing this validity, particularly in healthcare, social sciences, and educational research [2] [10]. The CVI methodology systematically quantifies expert agreement on item relevance, providing researchers with a rigorous approach to instrument validation [11]. This application note establishes evidence-based thresholds for CVI interpretation and provides standardized protocols for implementation within pharmaceutical and clinical research settings, where measurement precision directly impacts drug development outcomes and patient safety.

The CVI framework operates at two distinct levels: the Item-Level CVI (I-CVI), which assesses individual items, and the Scale-Level CVI (S-CVI), which evaluates the entire instrument [2] [10]. Proper application of CVI methodology requires understanding both statistical thresholds and methodological best practices, from expert panel selection to statistical calculation [12]. The following sections provide comprehensive guidance on establishing psychometrically sound CVI standards for research instrument development.

Quantitative Standards for CVI Interpretation

Established Thresholds for Content Validity

Based on methodological research and widespread application across studies, specific quantitative thresholds have been established for interpreting CVI values. These standards ensure instruments meet minimum psychometric requirements for content validity.

Table 1: Established CVI Thresholds for Instrument Validation

| Index Type | Acceptable Threshold | Ideal Threshold | Key Considerations |

|---|---|---|---|

| I-CVI (Item-Level) | ≥ 0.78 [13] [12] | 1.0 for 3-5 experts [2] | Threshold varies based on number of experts |

| S-CVI/Ave (Scale-Level Average) | ≥ 0.80 [10] [14] | ≥ 0.90 [10] | Most commonly reported S-CVI method |

| S-CVI/UA (Scale-Level Universal Agreement) | ≥ 0.80 [2] | Not commonly used | Very stringent; all experts must agree on all items |

The I-CVI threshold of ≥0.78 is particularly important as it represents excellent agreement after adjusting for chance [12]. For studies with smaller expert panels (3-5 experts), some methodologies recommend a more stringent I-CVI of 1.0, as there is less opportunity for disagreement without compromising validity [2].

CVI Thresholds Based on Expert Panel Size

The acceptable CVI values are influenced by the number of content experts participating in validation. Smaller panels require higher agreement levels to achieve statistical significance.

Table 2: CVI Thresholds Based on Number of Experts

| Number of Experts | Acceptable I-CVI Value | Source Recommendation |

|---|---|---|

| 2 | At least 0.8 | Davis (1992) [2] |

| 3 to 5 | Should be 1 | Polit & Beck (2006), Polit et al., (2007) [2] |

| 6 to 8 | At least 0.83 | Lynn (1986) [2] |

| At least 9 | At least 0.78 | Lynn (1986) [2] |

These thresholds account for the probability of chance agreement, with larger panels allowing for slightly lower agreement rates while maintaining methodological rigor [2] [12]. For pharmaceutical and clinical research applications, where instruments may inform regulatory decisions or clinical trials, adhering to the more conservative thresholds is recommended.
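
The panel-size rules in Table 2 can be expressed as a small lookup. The sketch below is illustrative only: the function names are hypothetical, and the comparison rounds the I-CVI to two decimals on the assumption that the published cut-offs (for example 0.78 for 7 of 9 experts) are themselves rounded values.

```python
def minimum_i_cvi(n_experts: int) -> float:
    """Return the minimum acceptable I-CVI for a panel size, following Table 2."""
    if n_experts < 2:
        raise ValueError("Table 2 starts at a panel of 2 experts")
    if n_experts == 2:
        return 0.80  # Davis (1992)
    if n_experts <= 5:
        return 1.00  # Polit & Beck (2006), Polit et al. (2007)
    if n_experts <= 8:
        return 0.83  # Lynn (1986)
    return 0.78      # Lynn (1986), 9 or more experts


def item_meets_threshold(n_relevant: int, n_experts: int) -> bool:
    """Check one item, given how many experts rated it 3 or 4."""
    # Assumption: compare at two decimals because the thresholds are rounded.
    i_cvi = round(n_relevant / n_experts, 2)
    return i_cvi >= minimum_i_cvi(n_experts)


print(item_meets_threshold(7, 9))  # 7/9 rounds to 0.78
print(item_meets_threshold(4, 5))  # 0.80 against a required 1.00
```

Keeping the thresholds in one function makes it easier to apply the more conservative cut-offs consistently when panel sizes differ between validation rounds.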

Experimental Protocols for CVI Assessment

Protocol 1: Comprehensive CVI Calculation Workflow

This protocol provides a step-by-step methodology for calculating CVI values, adaptable for various research contexts including clinical outcome assessments and patient-reported outcome measures.

Materials and Reagents:

- Expert panel (5+ recommended for clinical trials research)

- 4-point Likert scale rating form (1 = not relevant; 2 = somewhat relevant; 3 = quite relevant; 4 = highly relevant)

- Data collection platform (Microsoft Excel, SPSS, or specialized validation software)

- CVI calculation template

Procedure:

- Expert Panel Recruitment: Select 3-10 content experts with demonstrated expertise in the target domain [10] [11]. For drug development applications, include clinical specialists, methodological experts, and patient representatives where appropriate.

- Item Rating: Distribute the instrument with instructions for experts to rate each item using the 4-point relevance scale [2].

- Data Compilation: Compile expert ratings in a structured format with items as rows and experts as columns.

- Dichotomization: Convert Likert scale ratings to binary values (0 or 1), where ratings of 3 or 4 become "1" (valid), and ratings of 1 or 2 become "0" (not valid) [2].

- I-CVI Calculation: For each item, calculate I-CVI as the number of experts rating it 3 or 4 divided by the total number of experts [2].

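- S-CVI Calculation: Compute S-CVI/Ave as the average of the I-CVIs across all items, and S-CVI/UA as the proportion of items rated 3 or 4 by all experts [2].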

- Threshold Application: Compare calculated CVIs to established thresholds (Table 1) and make item retention decisions [2].
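
The procedure above can be sketched in a few lines of Python for readers who prefer a scripted check alongside a spreadsheet. This is a minimal illustration, not the cited authors' implementation: the function names and the example ratings are hypothetical, and the input is assumed to be a complete matrix with one row per item and one column per expert.

```python
from statistics import mean

RELEVANT_MIN = 3  # ratings of 3 or 4 count as "relevant"


def dichotomize(ratings):
    """Map each 1-4 rating to 1 (relevant) or 0 (not relevant)."""
    return [[1 if r >= RELEVANT_MIN else 0 for r in row] for row in ratings]


def compute_cvi(ratings):
    """Compute I-CVI per item plus S-CVI/Ave and S-CVI/UA for the scale."""
    binary = dichotomize(ratings)
    n_experts = len(ratings[0])
    i_cvi = [sum(row) / n_experts for row in binary]
    s_cvi_ave = mean(i_cvi)
    # Universal agreement: every expert rated the item 3 or 4.
    n_universal = sum(1 for row in binary if sum(row) == n_experts)
    s_cvi_ua = n_universal / len(binary)
    return {"i_cvi": i_cvi, "s_cvi_ave": s_cvi_ave, "s_cvi_ua": s_cvi_ua}


# Hypothetical ratings: 3 items rated by 5 experts.
example = [
    [4, 4, 3, 4, 3],
    [3, 2, 4, 4, 3],
    [1, 2, 3, 2, 1],
]
result = compute_cvi(example)
print(result["i_cvi"])
print(round(result["s_cvi_ave"], 2))
print(round(result["s_cvi_ua"], 2))
```

Returning all three indices from one call keeps the item-level and scale-level figures tied to the same dichotomized matrix, which is the property a manual cross-check should confirm.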

Troubleshooting:

- If I-CVI values fall below thresholds, review qualitative expert feedback, revise problematic items, and consider re-evaluation

- If expert disagreement is high, assess whether panel composition appropriately represents the content domain

- If S-CVI values are inadequate, review instrument framework and theoretical foundation

Protocol 2: Excel-Based CVI Calculation

For researchers without specialized statistical software, Microsoft Excel provides an accessible platform for CVI calculation [2] [15].

Materials and Reagents:

- Microsoft Excel (2016 or later)

- Expert rating data

- CVI calculation template

Procedure:

- Data Setup: Create a table with questionnaire items as rows and expert ratings as columns [2]

- Binary Conversion:

- Select target cell for first binary conversion

- Enter formula: =IF(original_cell>=3,1,0)

- Drag formula to complete binary matrix for all experts and items [2]

- Expert Agreement Count:

- Select cell for first agreement count

- Enter formula: =COUNTIF(binary_range,">=1")

- Drag formula to calculate for all items [2]

- I-CVI Calculation:

- Select cell for first I-CVI value

- Enter formula: =agreement_cell/total_experts

- Drag formula to calculate for all items [2]

- Item Categorization:

- Select cell for validity categorization

- Enter formula: =IF(I-CVI_cell>=0.8,"Valid","Invalid")

- Drag formula to categorize all items [2]

- S-CVI Calculation:

- Calculate S-CVI/Ave: =AVERAGE(I-CVI_range)

- Calculate proportion of items with I-CVI meeting threshold [2]

Validation:

- Verify formula accuracy with test data

- Cross-validate manual calculation for subset of items

- Ensure consistent formatting throughout the worksheet

Research Reagent Solutions for CVI Studies

Table 3: Essential Methodological Components for CVI Assessment

| Research Component | Function in CVI Assessment | Implementation Examples |

|---|---|---|

| Expert Panel | Provides content domain expertise | Clinical specialists, methodological experts, patient representatives [10] [11] |

| Rating Scale | Standardizes relevance assessment | 4-point Likert scale (1=not relevant to 4=highly relevant) [2] [10] |

| Calculation Framework | Quantifies expert agreement | I-CVI, S-CVI/Ave, S-CVI/UA formulas [2] [12] |

| Statistical Software | Automates CVI computation | Microsoft Excel, SPSS, R, specialized validation packages [2] [15] |

| Decision Rules | Guides item retention/revision | Threshold application (I-CVI ≥ 0.78, S-CVI/Ave ≥ 0.80) [2] [12] |

Application in Pharmaceutical and Clinical Research

In drug development contexts, establishing content validity is particularly crucial for clinical outcome assessments (COAs), patient-reported outcomes (PROs), and other measurement instruments used in clinical trials [10] [16]. Regulatory agencies increasingly require demonstrated content validity for instruments supporting labeling claims.

Recent applications in healthcare research demonstrate the utility of rigorous CVI assessment. In nursing research, the Nursing Process Evaluation Tool (NPET) achieved an S-CVI/Ave of 0.88, confirming its validity for assessing AI-generated nursing care plans [10]. Similarly, a medication self-management instrument for older adults maintained adequate content validity, with 83% of items achieving CVI scores above 0.80 for relevance [11].

The CVI methodology has also been successfully applied in specialized clinical contexts. A drug clinical trial participation feelings questionnaire for cancer patients underwent rigorous content validation during development [16], while a shave biopsy training checklist demonstrated a content validity index of 0.76, surpassing the required threshold of 0.62 [17].

Establishing acceptable CVI thresholds is fundamental to scientific rigor in instrument development. The standards outlined in this document—I-CVI ≥ 0.78 and S-CVI/Ave ≥ 0.80—provide empirically supported benchmarks for content validity assessment across research contexts. The protocols and methodologies presented enable consistent application of these standards, particularly in pharmaceutical and clinical research where measurement precision directly impacts development decisions and patient outcomes. As measurement science evolves, these CVI thresholds and methodologies provide a foundation for developing valid, reliable instruments that generate trustworthy scientific evidence.

A Practical Framework for CVI Calculation and Implementation

Content Validity Index (CVI) is a critical psychometric metric used to quantify the degree to which an instrument, such as a questionnaire or survey, adequately measures the construct it intends to assess. Establishing content validity is a fundamental step in instrument development, particularly in pharmaceutical and clinical research where measurement accuracy directly impacts study outcomes and decision-making. The CVI methodology systematically incorporates expert judgment to evaluate item relevance and representativeness, providing quantitative evidence that the instrument's content reflects the targeted domain [18].

This protocol details a standardized approach for calculating CVI, specifically focusing on the transformation of expert Likert scale ratings into binary scores and the subsequent computation of item-level and scale-level validity indices. This process is essential for researchers developing instruments to measure complex constructs in drug development, clinical outcomes assessment, and healthcare research where valid measurement tools are prerequisites for generating reliable data [3].

Theoretical Framework and Key Concepts

Defining Content Validity

Content validity provides evidence about the degree to which elements of an assessment instrument are relevant to and representative of the targeted construct for a particular assessment purpose [18]. Unlike other forms of validity that focus on test scores, content validity evaluates the actual content of the instrument itself [18]. This distinction is particularly important when measuring complex, multi-dimensional constructs common in healthcare research and pharmaceutical development.

Four essential components comprise content validity:

- Domain definition: How the concept measured by an instrument is operationally defined

- Domain representation: The extent to which instrument items cover the entire content domain

- Domain relevance: How pertinent each item is to the target construct

- Appropriateness of test construction: The technical quality of item development and formatting [18]

Content Validity Index (CVI) and Its Components

The CVI methodology quantifies content validity through two primary levels of analysis:

- Item-Level CVI (I-CVI): The proportion of content experts giving an item a relevance rating of 3 or 4 on a 4-point Likert scale [2] [19]

- Scale-Level CVI (S-CVI): The overall validity of the entire instrument, calculated through two approaches: S-CVI/Ave, the average of all I-CVIs, and S-CVI/UA, the proportion of items rated 3 or 4 by all experts

Table 1: Content Validity Index Types and Interpretation

| Validity Index | Definition | Calculation Method | Acceptability Threshold |

|---|---|---|---|

| I-CVI | Content validity index for individual items | Number of experts rating 3-4 / Total number of experts | ≥0.78 for 6+ experts; ≥0.83 for 3-5 experts [3] |

| S-CVI/Ave | Average of all I-CVIs | Sum of all I-CVIs / Total number of items | ≥0.90 for excellent validity [18] |

| S-CVI/UA | Universal agreement on all items | Number of items rated 3-4 by all experts / Total items | ≥0.80 for adequate validity [2] |

Materials and Research Reagents

Essential Research Materials

Table 2: Essential Materials for CVI Assessment

| Material/Resource | Specification | Purpose/Function |

|---|---|---|

| Expert Panel | 3-10+ content experts with domain knowledge | Provide relevance ratings based on subject matter expertise |

| Rating Instrument | 4-point Likert scale (1=not relevant to 4=highly relevant) | Collect expert judgments on item relevance |

| Data Collection Tool | Electronic survey platform or paper forms | Systematically gather expert ratings |

| Statistical Software | Microsoft Excel, SPSS, R | Calculate CVI indices and analyze results |

| Validation Instrument | Questionnaire, scale, or assessment tool | Target of the content validity evaluation |

Expert Panel Selection and Composition

The quality of CVI assessment depends heavily on appropriate expert panel selection. For instrument development in pharmaceutical and clinical research, experts should possess:

- Advanced qualifications (PhD, MD, or equivalent) in the relevant field

- Minimum of 5 years of experience in the target domain [2]

- Research or clinical expertise specifically related to the construct being measured

- Diversity of perspectives to minimize bias

The number of experts should balance practical constraints with methodological rigor. While some researchers recommend 2-3 experts for initial screening [2], others suggest 5-10 experts for robust validation [3]. For high-stakes instruments in drug development, larger panels (8-12 experts) may be warranted to ensure comprehensive evaluation.

Experimental Protocol

The following diagram illustrates the complete CVI calculation workflow from expert rating to final validity determination:

Step-by-Step Calculation Methodology

Step 1: Setting Up the Data Structure

Create a structured data table in Excel or statistical software with the following organization:

- Rows: Individual items from the instrument being validated

- Columns: Expert ratings using the 4-point Likert scale

- Rating Scale: 1 = not relevant; 2 = somewhat relevant; 3 = quite relevant; 4 = highly relevant

Table 3: Example Data Structure for Expert Ratings

| Item Number | Expert 1 | Expert 2 | Expert 3 | Expert 4 |

|---|---|---|---|---|

| Item 1 | 4 | 3 | 4 | 3 |

| Item 2 | 2 | 3 | 1 | 2 |

| Item 3 | 3 | 4 | 4 | 4 |

| Item 4 | 4 | 4 | 3 | 4 |

Step 2: Converting Likert Scale Ratings to Binary Values

Transform the 4-point Likert scale ratings into dichotomous values to facilitate CVI calculation:

- Ratings of 3 ("Quite relevant") or 4 ("Highly relevant") = 1 (Valid)

- Ratings of 1 ("Not relevant") or 2 ("Somewhat relevant") = 0 (Not valid) [2]

Excel Implementation:

- Position cursor in first cell of binary conversion area

- Enter formula: =IF(B2>=3,1,0), where B2 contains the first expert rating

- Drag formula horizontally to apply to all expert columns

- Drag formula vertically to apply to all items [2]

Table 4: Binary Conversion of Expert Ratings

| Item Number | Expert 1 Binary | Expert 2 Binary | Expert 3 Binary | Expert 4 Binary |

|---|---|---|---|---|

| Item 1 | 1 | 1 | 1 | 1 |

| Item 2 | 0 | 1 | 0 | 0 |

| Item 3 | 1 | 1 | 1 | 1 |

| Item 4 | 1 | 1 | 1 | 1 |

Step 3: Counting Expert Agreement Per Item

Calculate the number of experts who rated each item as valid (score of 1):

Excel Implementation:

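- Use COUNTIF function: =COUNTIF(H2:J2,">=1")

- Where H2:J2 represents the range of binary scores for a single item [2]; the range must span one binary column per expert, so the four-expert example in Table 4 needs a four-column range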

Step 4: Calculating Item-Level CVI (I-CVI)

Compute the I-CVI for each item by dividing the number of experts who agreed the item was valid by the total number of experts:

Formula: I-CVI = Number of experts rating item 3 or 4 / Total number of experts [2] [19]

Excel Implementation:

- Formula: =K2/3 (where K2 contains the agreement count and 3 is the number of experts)

- For varying expert numbers, replace 3 with the actual count [2]; the four-expert example in Table 5 divides by 4

Table 5: I-CVI Calculation and Interpretation

| Item Number | Agreement Count | I-CVI Value | Interpretation |

|---|---|---|---|

| Item 1 | 4 | 1.00 | Excellent |

| Item 2 | 1 | 0.25 | Poor (needs revision) |

| Item 3 | 4 | 1.00 | Excellent |

| Item 4 | 4 | 1.00 | Excellent |

Step 5: Categorizing Items as Valid or Invalid

Apply established thresholds to determine whether each item meets content validity standards:

- For panels of 3-5 experts: I-CVI ≥ 0.8 indicates valid item [2]

- For panels of 6+ experts: I-CVI ≥ 0.83 indicates valid item [3]

Excel Implementation:

- Use IF function:

=IF(L2>=0.8,"Valid","Invalid") - Where L2 contains the I-CVI value [2]

Step 6: Calculating Scale-Level CVI (S-CVI)

Compute the overall validity of the entire instrument using two approaches:

S-CVI/Ave (Average Approach):

- Calculate average of all I-CVIs: S-CVI/Ave = Sum of all I-CVIs / Total number of items [2] [19]

- Acceptability threshold: ≥0.90 indicates excellent overall content validity [18]

S-CVI/UA (Universal Agreement Approach):

- Calculate proportion of items that achieve relevance ratings of 3 or 4 from all experts

- Formula: S-CVI/UA = Number of items with I-CVI = 1.00 / Total number of items [2]

- Acceptability threshold: ≥0.80 indicates adequate overall content validity [2]
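
As a worked check of Steps 2 through 6, the short script below runs the four-expert ratings from Table 3 through the same arithmetic. It is a sketch for verification only; the variable names are illustrative and nothing here comes from the cited sources beyond the formulas already stated.

```python
# Ratings from Table 3: one entry per item, one value per expert.
ratings = {
    "Item 1": [4, 3, 4, 3],
    "Item 2": [2, 3, 1, 2],
    "Item 3": [3, 4, 4, 4],
    "Item 4": [4, 4, 3, 4],
}

# Step 2: ratings of 3 or 4 become 1, ratings of 1 or 2 become 0.
binary = {item: [int(r >= 3) for r in row] for item, row in ratings.items()}

# Steps 3-4: agreement count divided by the number of experts.
i_cvi = {item: sum(row) / len(row) for item, row in binary.items()}

# Step 6: scale-level indices.
s_cvi_ave = sum(i_cvi.values()) / len(i_cvi)
s_cvi_ua = sum(1 for v in i_cvi.values() if v == 1.0) / len(i_cvi)

for item, value in i_cvi.items():
    print(f"{item}: I-CVI = {value:.2f}")
print(f"S-CVI/Ave = {s_cvi_ave:.4f}")  # 0.8125
print(f"S-CVI/UA  = {s_cvi_ua:.2f}")   # 0.75
```

The item-level values match Table 5. The single weak item (Item 2, I-CVI = 0.25) is enough to pull S-CVI/Ave to about 0.81 and S-CVI/UA to 0.75, both below the thresholds listed above, which illustrates why item revision usually precedes the scale-level judgment.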

Application in Pharmaceutical and Clinical Research

The CVI methodology has significant applications in drug development and clinical research. In one study validating pediatric pain knowledge instruments in Ghana, researchers calculated I-CVIs ranging from 0.62 to 1.00 for relevance and 0.69 to 1.00 for clarity, with S-CVI/Ave values of 0.87 and 0.89, leading to revision of 5 items and retention of 37 items [19]. This demonstrates how CVI analysis directly informs instrument refinement in multicultural research contexts.

In another example from cancer communication research, investigators developed a patient-centered communication instrument through rigorous content validation. From an initial 188 items, content validity analysis identified seven dimensions with an overall S-CVI/Ave of 0.93, indicating excellent content validity despite a low S-CVI/UA, which the authors attributed to the large number of content experts making universal agreement difficult to achieve [3].

Technical Considerations and Best Practices

Critical Values and Statistical Significance

When using the Content Validity Ratio (CVR) approach, which employs a different calculation method, researchers should consult critical value tables to determine statistical significance. The CVR formula is: CVR = (nₑ - N/2) / (N/2), where nₑ is the number of panelists indicating "essential" and N is the total number of panelists [20]. The minimum acceptable CVR values vary by panel size:

Table 6: Critical Values for Content Validity Ratio (CVR)

| Number of Panelists | Minimum CVR |

|---|---|

| 5 | 0.99 |

| 6 | 0.99 |

| 7 | 0.99 |

| 8 | 0.75 |

| 9 | 0.78 |

| 10 | 0.62 |
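
The CVR formula and the critical values in Table 6 can be combined into a simple check. The following is a minimal sketch with hypothetical function names; the lookup covers only the panel sizes listed in Table 6 and deliberately refuses other sizes rather than interpolating.

```python
# Minimum CVR by panel size, copied from Table 6.
CRITICAL_CVR = {5: 0.99, 6: 0.99, 7: 0.99, 8: 0.75, 9: 0.78, 10: 0.62}


def content_validity_ratio(n_essential: int, n_panelists: int) -> float:
    """CVR = (n_e - N/2) / (N/2)."""
    half = n_panelists / 2
    return (n_essential - half) / half


def meets_critical_cvr(n_essential: int, n_panelists: int) -> bool:
    """Compare an item's CVR with the Table 6 minimum for this panel size."""
    if n_panelists not in CRITICAL_CVR:
        raise KeyError(f"No critical value in Table 6 for {n_panelists} panelists")
    cvr = content_validity_ratio(n_essential, n_panelists)
    return cvr >= CRITICAL_CVR[n_panelists]


print(content_validity_ratio(9, 10))  # (9 - 5) / 5 = 0.8
print(meets_critical_cvr(9, 10))      # 0.8 against a minimum of 0.62
print(meets_critical_cvr(8, 10))      # 0.6 against a minimum of 0.62
```

Because CVR ranges from -1 to 1 and equals 0 when exactly half the panel rates an item "essential", it is not interchangeable with I-CVI; the two indices should be reported and thresholded separately.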

Common Methodological Challenges and Solutions

- Expert disagreement: Use modified kappa statistics to account for chance agreement [18]

- Panel size limitations: Combine quantitative CVI with qualitative expert feedback

- Cross-cultural adaptation: Assess both relevance and clarity separately, as done in the Ghana pediatric pain study [19]

- Complex constructs: Implement multi-stage validation with iterative item refinement
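
For the chance-agreement adjustment mentioned in the first point, one widely used formulation is the modified kappa described by Polit, Beck, and Owen (2007), in which the probability of chance agreement is taken from a binomial distribution with p = 0.5. The sketch below assumes that formulation; whether source [18] uses exactly this form has not been verified here, and the function name is hypothetical.

```python
from math import comb


def modified_kappa(n_agree: int, n_experts: int) -> float:
    """Adjust an I-CVI for chance agreement.

    p_chance = C(N, A) * 0.5**N, where N is the number of experts and
    A is the number rating the item 3 or 4.
    kappa*   = (I-CVI - p_chance) / (1 - p_chance)
    """
    if n_experts < 1 or not 0 <= n_agree <= n_experts:
        raise ValueError("n_agree must lie between 0 and n_experts")
    i_cvi = n_agree / n_experts
    p_chance = comb(n_experts, n_agree) * 0.5 ** n_experts
    return (i_cvi - p_chance) / (1 - p_chance)


# Four of five experts agree: I-CVI = 0.80, kappa* is about 0.76.
print(round(modified_kappa(4, 5), 2))
# Seven of nine experts agree: I-CVI = 0.78, kappa* is about 0.76.
print(round(modified_kappa(7, 9), 2))
```

The adjustment matters most for small panels, where the probability that a given split of ratings arises by chance is comparatively large.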

This protocol provides a comprehensive framework for calculating Content Validity Index through systematic transformation of Likert scale ratings to binary scoring. The step-by-step methodology enables researchers in pharmaceutical development and clinical research to quantitatively assess whether their instruments adequately measure target constructs. Proper implementation of CVI analysis strengthens instrument development, enhances measurement validity, and ultimately contributes to more reliable research outcomes in drug development and healthcare assessment.

By following this standardized approach, researchers can generate robust validity evidence for their instruments, meeting the rigorous methodological standards required in regulatory submissions, clinical trial endpoints, and patient-reported outcome measures.

In research disciplines, including pharmaceutical sciences and clinical outcome assessment development, the Content Validity Index (CVI) is a crucial psychometric measure used to quantify the degree to which a survey or assessment instrument adequately represents the construct it is intended to measure [21]. Establishing content validity provides the foundational evidence that scale items are relevant and representative of the target domain, thereby ensuring that subsequent data collection yields meaningful and interpretable results [2] [21]. The process systematically captures expert judgment, transforming qualitative feedback into quantitative metrics suitable for rigorous scientific evaluation. This article provides researchers with detailed application notes and protocols for calculating CVI using Microsoft Excel, enhancing efficiency, reproducibility, and accuracy in instrument development.

Core CVI Concepts and Quantitative Standards

The evaluation of content validity operates at two primary levels: the Item-level CVI (I-CVI), which assesses individual items, and the Scale-level CVI (S-CVI), which evaluates the entire instrument [2] [21]. The calculation of these indices relies on expert ratings of item relevance, typically collected using a 4-point Likert scale (e.g., 1 = Not relevant, 2 = Somewhat relevant, 3 = Quite relevant, 4 = Highly relevant) [2]. Ratings are subsequently dichotomized, with ratings of 3 and 4 considered "relevant," and 1 and 2 considered "not relevant" for validity calculations [2].

Acceptability thresholds for CVI values are not arbitrary but are based on established scientific consensus and adjust for the number of experts involved [2]. The following table summarizes the key CVI indices and their corresponding benchmarks.

Table 1: Key Content Validity Indices and Acceptability Thresholds

| Index Name | Definition | Calculation | Acceptability Standard |

|---|---|---|---|

| Item-CVI (I-CVI) | Proportion of experts agreeing on an item's relevance [21]. | Number of experts rating item 3 or 4 / Total number of experts | 3-5 experts: 1.00 [2]; 6-10 experts: ≥ 0.78 [21]; ≥ 9 experts: ≥ 0.78 [2] |

| S-CVI/Ave | The average of all I-CVI scores for items on the scale [2] [21]. | Sum of all I-CVIs / Total number of items | ≥ 0.90 is considered excellent [21]. |

| S-CVI/UA (Universal Agreement) | The proportion of items on the scale that achieved a relevance rating of 3 or 4 from all experts [2] [21]. | Number of items with I-CVI = 1.00 / Total number of items | A more conservative measure; no universal threshold, but higher values indicate stronger agreement. |

| Content Validity Ratio (CVR) | Assesses whether an item is deemed "essential" [22] [23]. | (n_e - N/2) / (N/2), where n_e = number of experts rating "essential," N = total experts [22]. | Must exceed a critical value based on the number of experts (see Table 2) [22]. |

Table 2: Lawshe's CVR Critical Values Table [22]

| Number of Experts | Minimum CVR Value |

|---|---|

| 5 | 0.99 |

| 6 | 0.99 |

| 7 | 0.99 |

| 8 | 0.75 |

| 9 | 0.78 |

| 10 | 0.62 |

| 15 | 0.49 |

| 20 | 0.42 |

| 30 | 0.33 |

| 39 | 0.33 |

Experimental Protocol for CVI Assessment

Stage 1: Expert Panel Preparation and Data Collection

- Define the Construct and Domain: Clearly articulate the theoretical construct and its boundaries. This operational definition guides the entire validation process [21].

- Assemble an Expert Panel: Engage a panel of 5-10 Subject Matter Experts (SMEs). Experts should have extensive experience in the field related to the construct [22] [23]. Document their credentials and expertise.

- Develop the Rating Instrument: Create a survey where experts rate each item on a 4-point relevance scale (1=Not relevant, 2=Somewhat relevant, 3=Quite relevant, 4=Highly relevant) [2]. Include space for qualitative feedback on item clarity, wording, and comprehensiveness.

- Collect Ratings: Distribute the rating instrument to the expert panel. Ensure ratings are completed independently to avoid conferring bias.

Stage 2: Excel Data Setup and CVI Computation

- Data Entry: In a new Excel workbook, set up a table where rows represent questionnaire items and columns represent expert ratings. Enter the raw 4-point scale ratings.

- Dichotomize Ratings: Convert the 4-point scale ratings to binary values (1 for relevant, 0 for not relevant).

- Excel Formula: In the first cell of a new "Expert 1 Binary" column, enter: =IF(Original_Rating_Cell>=3,1,0). Drag the fill handle to apply this formula to all items and experts [2].

- Calculate I-CVI:

- Step 1 - Count Agreements: Use COUNTIF to sum the number of experts who rated each item as relevant.

- Excel Formula: =COUNTIF(Binary_Range, ">=1"), where Binary_Range is the range of binary cells for a single item [2].

- Step 2 - Compute Proportion: Divide the agreement count by the total number of experts.

- Excel Formula: =Agreement_Count_Cell / Total_Number_of_Experts [2].

- Categorize Item Validity: Flag items as "Valid" or "Invalid" based on the I-CVI threshold.

- Excel Formula: =IF(I-CVI_Cell>=0.8, "Valid", "Invalid") [2]. The 0.8 threshold can be adjusted based on the number of experts (see Table 1).

- Calculate S-CVI/Ave: Compute the average of all I-CVI values.

- Excel Formula: =AVERAGE(I-CVI_Range) [2].

- Calculate S-CVI/UA:

- Step 1 - Flag Universal Agreement: For each item, check if all experts rated it as relevant.

- Excel Formula: =IF(SUM(Binary_Range)=Total_Number_of_Experts, 1, 0) [2].

- Step 2 - Compute Proportion: Sum the universal agreement flags and divide by the total number of items.

- Excel Formula: =SUM(UA_Flag_Range) / Total_Number_of_Items.
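
Hard-coded ranges and divisors are a common source of error when the panel size changes between validation rounds. The helper below is a hypothetical sketch that builds the Stage 2 formula strings for one worksheet row from the number of experts. It assumes a particular layout that is not prescribed by the protocol: raw ratings start in column B, one blank spacer column follows, then the binary columns, the agreement count, the I-CVI, and the universal-agreement flag.

```python
def col_letter(index: int) -> str:
    """Convert a 1-based column index to an Excel column name (1 -> A, 27 -> AA)."""
    letters = ""
    while index > 0:
        index, rem = divmod(index - 1, 26)
        letters = chr(65 + rem) + letters
    return letters


def row_formulas(row: int, n_experts: int, first_rating_col: int = 2) -> dict:
    """Build the formula strings for one item row under the assumed layout."""
    bin_first = first_rating_col + n_experts + 1  # skip one spacer column
    bin_last = bin_first + n_experts - 1
    count_col = col_letter(bin_last + 1)
    bin_range = f"{col_letter(bin_first)}{row}:{col_letter(bin_last)}{row}"
    return {
        # One dichotomization formula per expert column.
        "binary": [
            f"=IF({col_letter(first_rating_col + i)}{row}>=3,1,0)"
            for i in range(n_experts)
        ],
        "count": f'=COUNTIF({bin_range},">=1")',
        "i_cvi": f"={count_col}{row}/{n_experts}",
        "ua_flag": f"=IF(SUM({bin_range})={n_experts},1,0)",
    }


for name, formula in row_formulas(row=2, n_experts=4).items():
    print(name, formula)
```

With four experts this layout places the binary scores in G:J, the agreement count in K, and the I-CVI in L, so the generated count range and divisor always match the panel size.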

The following workflow diagram illustrates the complete CVI computation protocol in Excel.

For researchers executing a CVI study, the key "reagents" are not only computational tools but also the human and methodological components.

Table 3: Essential Research Reagents for CVI Studies

| Tool/Resource | Function/Role in CVI Protocol |

|---|---|

| Subject Matter Experts (SMEs) | Provide critical judgments on item relevance and representativeness. They are the primary source of validation data [22] [23]. |

| 4-Point Relevance Scale | The standardized metric (1-4) for collecting expert judgments on each item, which is later dichotomized for analysis [2]. |

| Microsoft Excel | The computational platform for data organization, dichotomization, and calculation of I-CVI, S-CVI/Ave, and S-CVI/UA using built-in functions [2]. |

| Structured Rating Form | The instrument (e.g., digital survey) used to present items to experts and collect their ratings in a consistent, organized manner. |

| CVI Threshold Tables | Reference standards (e.g., Lawshe's Table, Polit & Beck thresholds) used to make objective retain/reject decisions for items and the overall scale [2] [22]. |

Manually computing CVI is prone to error and inconsistency. Leveraging Microsoft Excel with a structured protocol, as outlined in this article, provides a systematic, efficient, and reproducible method for establishing content validity. By implementing the specific formulas and workflows—such as IF for dichotomization, COUNTIF for aggregating expert agreement, and AVERAGE for calculating scale-level indices—researchers can ensure the rigor and defensibility of their survey instruments. This robust quantitative foundation is essential for developing high-quality measures that yield trustworthy data in critical research and development fields.

Content validity evidence is a critical component in the development of surveys and measurement instruments, ensuring that items adequately represent the construct domain being measured. The Content Validity Index (CVI) has emerged as the most widely utilized quantitative method for evaluating this psychometric property [24]. The CVI system operates at two distinct levels: the Item-Level CVI (I-CVI), which assesses individual items, and the Scale-Level CVI (S-CVI), which evaluates the entire instrument [25] [24]. Within S-CVI, two primary approaches exist: S-CVI/UA (Universal Agreement) and S-CVI/Ave (Average) [2]. Proper interpretation of these metrics is essential for researchers, scientists, and drug development professionals to make informed decisions about instrument refinement and validation. This application note provides a comprehensive framework for interpreting I-CVI and S-CVI/Ave scores within the context of survey development research.

Quantitative Interpretation Guidelines

Established Standards and Thresholds

The table below summarizes the widely accepted quantitative standards for interpreting I-CVI and S-CVI/Ave scores in instrument development:

Table 1: Interpretation Guidelines for CVI Metrics

| Metric | Score Range | Interpretation | Action Implied | Key References |

|---|---|---|---|---|

| I-CVI | ≥ 0.78 | Excellent | Item should be retained | [24] |

| I-CVI | 0.70 - 0.78 | Requires revision | Item needs modification | [25] |

| I-CVI | < 0.70 | Unacceptable | Item should be eliminated | [25] |

| S-CVI/Ave | ≥ 0.90 | Excellent | Scale has excellent content validity | [24] |

| S-CVI/Ave | 0.80 - 0.89 | Good | May require minor revisions | [2] |

| S-CVI/Ave | < 0.80 | Questionable | Requires significant revision | [2] |

These thresholds provide a systematic approach for evaluating both individual items and the overall instrument. For newly developed instruments, a more conservative approach is often recommended, with I-CVI values of ≥ 0.80 considered necessary to confirm that items possess high, clear, and relevant content validity [2].
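
The decision rules in Table 1 translate directly into two small classification functions. The sketch below is illustrative: the function names and return labels are hypothetical, a value of exactly 0.78 is assigned to the "retain" band (the table lists 0.78 in both bands), and values are rounded to two decimals on the assumption that the published cut-offs are rounded.

```python
def classify_item(i_cvi: float) -> str:
    """Map an I-CVI to the action implied by Table 1."""
    value = round(i_cvi, 2)  # assumption: thresholds are two-decimal values
    if value >= 0.78:
        return "retain"
    if value >= 0.70:
        return "revise"
    return "eliminate"


def classify_scale(s_cvi_ave: float) -> str:
    """Map an S-CVI/Ave to the interpretation given in Table 1."""
    value = round(s_cvi_ave, 2)
    if value >= 0.90:
        return "excellent"
    if value >= 0.80:
        return "good"
    return "questionable"


for score in (1.00, 0.75, 0.50):
    print(score, classify_item(score))
for score in (0.93, 0.85, 0.79):
    print(score, classify_scale(score))
```

Encoding the bands once and applying them to every item reduces the risk of inconsistent retain or revise decisions across a long instrument.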

Comparative Analysis from Recent Studies

Recent validation studies demonstrate the practical application of these standards across various research domains:

Table 2: CVI Values in Recent Instrument Validation Studies

| Instrument | Field | I-CVI Range | S-CVI/Ave | Expert Panel Size | Reference |

|---|---|---|---|---|---|

| Personalized Exercise Questionnaire (PEQ) | Musculoskeletal Disorders | 0.50 - 1.00 | 0.91 | 42 | [25] |

| Musculoskeletal Self-Management Questionnaire (MSK-SMQ) | Persistent MSK Conditions | 0.91 - 1.00 | 0.96 | 91 (3 panels) | [26] |

| Tele-Primary Care Oral Health CIS | Digital Health | N/R | 0.90 | 10 | [27] |

The variability in I-CVI ranges observed in these studies highlights the importance of both item-level and scale-level analysis. For instance, the PEQ development study retained some items with I-CVI as low as 0.50 while achieving an acceptable S-CVI/Ave of 0.91, demonstrating how instruments with varying item-level performance can still achieve adequate overall content validity [25].

Experimental Protocols for CVI Evaluation

Judgmental Validation Protocol

The judgmental validation process involves rigorous evaluation by content experts through the following methodology:

Expert Panel Selection: Recruit 3-10 subject matter experts with demonstrated expertise in the construct domain [25] [2]. For highly specialized domains (e.g., drug development), include professionals with diverse but relevant backgrounds (clinicians, researchers, methodologists).

Rating Procedure: Present experts with the instrument items and a 4-point Likert scale for rating item relevance: 1 = "not relevant," 2 = "somewhat relevant," 3 = "quite relevant," and 4 = "highly relevant" [2] [27].

Data Collection: Utilize structured forms that allow experts to rate each item and provide qualitative feedback on clarity, wording, and appropriateness [25].

Binary Conversion: Convert Likert ratings to binary values (0 or 1), where ratings of 3 or 4 are converted to 1 (relevant), and ratings of 1 or 2 are converted to 0 (not relevant) for CVI calculation [2].
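The binary conversion step can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the ratings are hypothetical, the layout (one row per item, one column per expert) is a convenience, and the helper name `to_binary` is invented for this example.

```python
def to_binary(rating: int) -> int:
    """Map a 4-point relevance rating to 1 (relevant) or 0 (not relevant)."""
    if rating not in (1, 2, 3, 4):
        raise ValueError(f"rating must be 1, 2, 3, or 4; got {rating!r}")
    # Ratings of 3 or 4 count as relevant; ratings of 1 or 2 do not.
    return 1 if rating >= 3 else 0


# Hypothetical ratings: one row per item, one column per expert.
likert = [
    [4, 3, 4],
    [2, 3, 1],
    [1, 2, 2],
]

binary = [[to_binary(r) for r in row] for row in likert]
print(binary)
# prints: [[1, 1, 1], [0, 1, 0], [0, 0, 0]]
```

Rejecting out-of-range values at this stage is a design choice for the sketch; it keeps data-entry errors from silently counting as "not relevant".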

Quantitative Calculation Protocol

The following workflow illustrates the systematic process for calculating and interpreting CVI metrics:

Diagram 1: CVI Calculation and Interpretation Workflow

I-CVI Calculation Methodology: For each item, I-CVI is calculated as the number of experts rating the item 3 ("quite relevant") or 4 ("highly relevant") on the Likert scale, divided by the total number of experts [25]. The formula is expressed as:

I-CVI = (Number of experts rating item as 3 or 4) / (Total number of experts) [2]

S-CVI/Ave Calculation Methodology: S-CVI/Ave is computed as the average of all I-CVI values in the instrument [24] [2]. The formula is expressed as:

S-CVI/Ave = Σ(I-CVI) / (Number of items) [2]
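As a worked illustration of the two formulas, the sketch below computes I-CVI for each item and then S-CVI/Ave as their mean. The ratings are hypothetical (five experts, four items), and the function names `item_cvi` and `scale_cvi_ave` are assumptions introduced for this example rather than part of any cited tool.

```python
from statistics import mean


def item_cvi(ratings: list[int]) -> float:
    """I-CVI: number of experts rating the item 3 or 4, divided by all experts."""
    if not ratings:
        raise ValueError("at least one expert rating is required")
    return sum(1 for r in ratings if r >= 3) / len(ratings)


def scale_cvi_ave(rating_matrix: list[list[int]]) -> float:
    """S-CVI/Ave: mean of the I-CVI values across all items."""
    return mean(item_cvi(row) for row in rating_matrix)


# Hypothetical ratings: one row per item, one column per expert.
ratings = [
    [4, 4, 3, 4, 3],  # 5 of 5 experts rate 3 or 4
    [4, 3, 2, 4, 3],  # 4 of 5
    [3, 2, 2, 4, 1],  # 2 of 5
    [4, 4, 4, 3, 4],  # 5 of 5
]

print([item_cvi(row) for row in ratings])
# prints: [1.0, 0.8, 0.4, 1.0]
print(round(scale_cvi_ave(ratings), 2))
# prints: 0.8
```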

Excel-Based Computational Protocol

For researchers with minimal statistical expertise, Microsoft Excel provides an accessible platform for CVI calculation:

- Data Setup: Create a table with items as rows and expert ratings as columns [2].

- Binary Conversion Formula: Use the Excel formula `=IF(B2>=3,1,0)` to convert Likert ratings to binary values [2].

- I-CVI Calculation: Apply the formula `=COUNTIF(H2:J2,">=1")/3` to calculate I-CVI for each item [2]. In this example the binary values occupy columns H to J, and the divisor 3 is the number of experts.

- S-CVI/Ave Computation: Use `=AVERAGE(L2:L11)` to calculate the scale-level index from all I-CVI values [2].

- Categorization: Implement the formula `=IF(L2>=0.8,"Valid","Invalid")` to automatically flag items requiring revision [2].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Content Validation Research

| Research Reagent | Function/Application | Implementation Considerations |

|---|---|---|

| Expert Panel | Provides judgmental evidence of content relevance | Select 3-10 experts with verified domain expertise; ensure diversity of perspectives [25] |

| Structured Rating Form | Standardizes expert evaluation process | Include 4-point Likert scale for relevance ratings; provide space for qualitative feedback [2] |

| CVI Calculation Template | Automates quantitative validity computation | Excel-based templates with pre-programmed formulas enhance accuracy and efficiency [2] |

| Content Validity Index (CVI) | Quantifies item and scale-level validity | Calculate both I-CVI and S-CVI/Ave for comprehensive assessment [24] |

| Qualitative Feedback Framework | Captures expert insights for item refinement | Implement cognitive interviewing methods to understand expert interpretations [25] |

Advanced Interpretation Considerations

Integration of Quantitative and Qualitative Evidence

Sophisticated interpretation of CVI results requires integrating quantitative metrics with qualitative insights:

- Cognitive Interviewing: Supplement CVI scores with cognitive interviews to understand how experts interpret items and identify potential issues with wording or clarity [25].

- Iterative Refinement: Use the combination of quantitative scores and qualitative feedback in an iterative refinement process, particularly for items with I-CVI scores between 0.70 and 0.78 [25].

- Domain Analysis: Examine patterns across domains or subscales to identify systematic content gaps, even when overall S-CVI/Ave meets thresholds [25].

Statistical Refinements

For advanced applications, researchers should consider:

- Chance Agreement Adjustment: Compute modified kappa statistics (K*) to adjust I-CVI for chance agreement, particularly with smaller expert panels [24].

- Panel Composition Effects: Recognize that S-CVI/UA (universal agreement) tends to produce more conservative estimates than S-CVI/Ave, especially with larger panels [25].

- Essentiality Assessment: Complement relevance assessment with essentiality ratings using the Content Validity Ratio (CVR) for more comprehensive content validation [26].
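The three refinements listed above can be sketched as small functions. The formulas used here are the commonly cited ones and are assumptions about what the referenced sources intend: the modified kappa treats chance agreement as a binomial probability with p = 0.5, S-CVI/UA is the share of items on which every expert agrees, and CVR follows Lawshe's formula. All function names and input values are hypothetical.

```python
from math import comb


def modified_kappa(n_experts: int, n_agree: int) -> float:
    """K*: I-CVI adjusted for chance agreement (binomial chance term, p = 0.5)."""
    if n_experts <= 0 or not 0 <= n_agree <= n_experts:
        raise ValueError("need n_experts > 0 and 0 <= n_agree <= n_experts")
    i_cvi = n_agree / n_experts
    # Probability that exactly n_agree of n_experts rate the item relevant by chance.
    p_chance = comb(n_experts, n_agree) * 0.5 ** n_experts
    return (i_cvi - p_chance) / (1 - p_chance)


def scale_cvi_ua(agree_counts: list[int], n_experts: int) -> float:
    """S-CVI/UA: proportion of items rated relevant by every expert."""
    return sum(1 for a in agree_counts if a == n_experts) / len(agree_counts)


def content_validity_ratio(n_experts: int, n_essential: int) -> float:
    """Lawshe's CVR: (n_e - N/2) / (N/2), ranging from -1 to 1."""
    half = n_experts / 2
    return (n_essential - half) / half


# Hypothetical inputs for illustration.
print(round(modified_kappa(n_experts=5, n_agree=4), 3))
# prints: 0.763
print(scale_cvi_ua(agree_counts=[5, 4, 5, 5], n_experts=5))
# prints: 0.75
print(content_validity_ratio(n_experts=10, n_essential=8))
# prints: 0.6
```

In the first call, an I-CVI of 0.80 from a five-expert panel drops to roughly 0.76 after the chance adjustment, which illustrates why the correction matters most for small panels.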

Proper interpretation of I-CVI and S-CVI/Ave scores requires both adherence to established psychometric thresholds and thoughtful consideration of contextual factors in instrument development. The protocols and guidelines presented in this application note provide researchers, scientists, and drug development professionals with a systematic framework for evaluating content validity evidence. By implementing these methodologies and utilizing the provided research reagents, professionals can enhance the rigor of their survey development processes and ensure their instruments adequately represent the constructs of interest. The integration of quantitative metrics with qualitative insights remains paramount for sophisticated content validity assessment in research and applied settings.

The Content Validity Index (CVI) is a critical quantitative measure in psychometric instrument development, ensuring questionnaire items accurately reflect the construct being measured [2]. This case study details the application of CVI methodology in developing and validating the Drug Clinical Trial Participation Feelings Questionnaire (DCTPFQ) for cancer patients [28]. The process demonstrates rigorous content validation within a broader research framework on survey development, providing a model for researchers and drug development professionals.

Methodological Framework

Theoretical Foundation and Item Generation

The DCTPFQ was developed using a structured, multi-phase methodology combining qualitative and quantitative approaches [28]. The initial phase established a robust theoretical foundation using Meleis's transitions theory and the Roper-Logan-Tierney model to conceptualize the patient experience during clinical trial participation [28].

- Qualitative Data Collection: Researchers conducted semi-structured, open-ended interviews with 10 cancer patients from a clinical trial center to capture authentic experiences [28]. Interviews explored four domains: participative cognition, healthcare resources, subjective experience, and support from relatives and friends [28].

- Initial Item Pool: Through literature review, theoretical framework application, and patient interviews, researchers generated 44 initial items for the questionnaire [28].

Content Validation Procedure

The content validation followed a rigorous multi-step process consistent with established CVI methodology [2] [29].

Table: Content Validation Procedure for DCTPFQ

| Step | Procedure | Participants | Output |

|---|---|---|---|

| 1. Delphi Expert Consultation | Expert rating of item relevance using 4-point Likert scale | Panel of content experts | Initial item reduction and refinement |

| 2. Pilot Testing | Preliminary testing of questionnaire | Target patient population | Assessment of readability and comprehension |

| 3. First CVI Assessment | Calculation of I-CVI and S-CVI/Ave | Expert panel | Identification of non-valid items (I-CVI < 0.78) |

| 4. Questionnaire Modification | Removal, addition, and modification of items | Research team | Improved questionnaire version |

| 5. Second CVI Assessment | Re-calculation of CVI values | Same expert panel | Final validation of all items |

CVI Calculation Protocol

The CVI calculation followed established quantitative methods essential for content validity assessment [2] [29]:

- Expert Panel: A panel of content experts rated each item's relevance using a 4-point Likert scale (1 = not relevant, 2 = somewhat relevant, 3 = relevant, 4 = very relevant) [29].

- Item-Level CVI (I-CVI): Calculated for each item by dividing the number of experts rating it 3 or 4 by the total number of experts [2] [29]. The accepted threshold for item retention was I-CVI ≥ 0.78 [29].

- Scale-Level CVI (S-CVI/Ave): Computed as the average of all I-CVI values, with the acceptable standard set at ≥ 0.90 [29].

For studies with 3-5 experts, some methodologies recommend an I-CVI of 1.00, while with larger panels (≥6 experts), the threshold is typically ≥ 0.83 [2].
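The panel-size rule of thumb in the preceding sentence can be written as a simple lookup. This sketch encodes only that sentence (1.00 for 3 to 5 experts, 0.83 for 6 or more); it does not reproduce the 0.78 cutoff used in the DCTPFQ case study, and the function names and example counts are hypothetical.

```python
def i_cvi_threshold(n_experts: int) -> float:
    """Return the minimum acceptable I-CVI for a given panel size."""
    if n_experts < 3:
        raise ValueError("the rule of thumb covers panels of 3 or more experts")
    # Small panels (3 to 5 experts) require full agreement; larger panels use 0.83.
    return 1.00 if n_experts <= 5 else 0.83


def item_passes(n_agree: int, n_experts: int) -> bool:
    """Check one item's I-CVI against the panel-size-dependent threshold."""
    return n_agree / n_experts >= i_cvi_threshold(n_experts)


# Hypothetical examples.
print(item_passes(n_agree=3, n_experts=4))  # I-CVI 0.75, threshold 1.00
# prints: False
print(item_passes(n_agree=5, n_experts=6))  # I-CVI about 0.833, threshold 0.83
# prints: True
```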

Results and Quantitative Analysis

Content Validity Outcomes

The application of CVI methodology to the DCTPFQ yielded strong content validity evidence:

Table: CVI Results for DCTPFQ Development

| Validation Metric | Initial Pool | After Delphi & Pilot | Final Questionnaire |

|---|---|---|---|

| Number of Items | 44 | 36 | 21 |

| I-CVI Range | Not specified | Not specified | All items ≥ 0.78 |

| S-CVI/Ave | Not specified | Not specified | 0.934 |

| Test-Retest Reliability | - | - | 0.840 |

| Cronbach's Alpha | - | - | 0.934 |

The validation process refined the questionnaire from 44 initial items to a final 21-item instrument structured across four key factors: cognitive engagement, subjective experience, medical resources, and relatives and friends' support [28]. The final questionnaire demonstrated excellent psychometric properties with a Cronbach's alpha of 0.934 and test-retest reliability of 0.840 [28].

Construct Validity Correlations

The DCTPFQ showed significant correlations with established measures, confirming construct validity:

- Fear of Progression Questionnaire—short form (r = 0.731, p < 0.05) [28]

- Mishel's Uncertainty in Illness Scale (r = 0.714, p < 0.05) [28]

The Scientist's Toolkit

Table: Essential Research Reagents for CVI Survey Validation

| Tool/Resource | Function in CVI Validation | Application Example |

|---|---|---|

| Expert Panel | Content experts with domain-specific knowledge to assess item relevance | 12 orthodontic specialists validated functional appliance questionnaire [29] |

| 4-Point Likert Scale | Rating system for expert evaluation of item relevance (1=not relevant; 4=very relevant) | Used in DCTPFQ development for expert ratings [28] |

| I-CVI Calculator | Computational tool to calculate Item-Level Content Validity Index | Excel formulas (=COUNTIF, =AVERAGE) automate CVI calculation [2] |

| S-CVI/Ave Calculator | Tool to compute Scale-Level Content Validity Index as average of I-CVI values | Determines overall questionnaire content validity [2] [29] |

| Delphi Method Protocol | Structured communication technique for gathering expert consensus | Multiple rounds of expert consultation refine questionnaire items [28] |

| Statistical Software (SPSS) | Analyzes reliability and validity metrics beyond content validation | Cronbach's alpha calculation for internal consistency [29] |

This case study demonstrates the systematic application of CVI methodology in developing the DCTPFQ and highlights its role in ensuring content validity for clinical trial assessment tools. The process, which moved from theoretical foundation and expert validation to quantitative CVI assessment, produced a validated, reliable instrument that measures cancer patients' clinical trial participation experiences across four key dimensions. The methodology offers researchers and drug development professionals a replicable framework for developing psychometrically sound questionnaires, which can improve understanding of patient experiences in clinical trials and support more patient-centric drug development practices.

Navigating Common Challenges and Enhancing CVI Outcomes

Within the rigorous framework of content validity index (CVI) survey development research, the Item-Level Content Validity Index (I-CVI) serves as a fundamental quantitative metric for evaluating individual instrument items. Defined as the proportion of experts who rate an item as quite relevant or very relevant (typically ratings of 3 or 4 on a four-point Likert scale), the I-CVI provides critical insight into the relevance and representativeness of each item in measuring the intended construct [21] [30]. Establishing robust content validity is a necessary condition for the accuracy and credibility of research findings, particularly in high-stakes fields like drug development where measurement error can have significant consequences [30].