Beyond the Ratio: Robust Methods to Mitigate Hormone Measurement Error in Research and Drug Development



Abstract

Hormone ratios are widely used in biomedical research to capture the joint effect of hormones with opposing actions, yet their validity is critically threatened by measurement error. This article provides a comprehensive guide for researchers and drug development professionals on the sources and impacts of this error, with a focus on practical methodological solutions. We explore the foundational statistical weaknesses of raw ratios, present robust alternatives like log-ratios and multivariate models, offer troubleshooting strategies for assay and study design, and outline validation frameworks for comparing methodological performance. The goal is to equip scientists with the knowledge to enhance the reliability and interpretability of their findings in endocrinology research.

The Invisible Problem: Why Hormone Ratios Are Inherently Vulnerable to Error

Hormone ratios are a prevalent tool in endocrine research, used to capture the joint effect or "balance" between two hormones with opposing or mutually suppressive actions. Researchers frequently employ ratios like testosterone/cortisol, estradiol/progesterone, and testosterone/estradiol to summarize complex endocrine interactions into a single, manageable variable. Despite their interpretive challenges, the use of hormone ratios has been increasing across numerous research domains [1].

The primary allure of ratios lies in their conceptual simplicity and biological plausibility. When hormones functionally oppose one another—such as cortisol suppressing testosterone activity—their ratio appears to offer an elegant solution for quantifying their net effect. Some researchers argue that specific ratios effectively predict biological outcomes, with Roney (2019) contending, for instance, that the raw estradiol/progesterone ratio serves as a good index of the outcomes of complex hormonal sequences [1].

However, this apparent simplicity masks significant methodological pitfalls that can compromise research validity unless properly addressed.

Theoretical Foundations & Justifications (The "Allure")

Common Justifications for Ratio Use

Researchers typically justify using hormone ratios based on several interconnected theoretical premises:

- Capturing Functional Balance: Ratios aim to quantify the net effect of two hormones with opposing physiological actions, such as the proposed balance between anabolic (testosterone) and catabolic (cortisol) processes [1].

- Summarizing Complex Interactions: In systems like the menstrual cycle, where hormones interact through "complex, temporal sequences," ratios like estradiol/progesterone (E/P) are valued as summary variables that might reflect underlying neurobiological states better than individual hormones [1].

- Predictive Efficacy: Some ratios demonstrate strong empirical associations with outcomes. For example, the E/P ratio reportedly associates more strongly with conception probability than its inverse (P/E) or individual hormones, justifying its use for predicting related behaviors [1].

Biological Plausibility of Ratios

The theoretical foundation for ratios often extends to specific biological mechanisms:

- Mutual Suppression: In some endocrine axes, one hormone directly suppresses the production or action of another. Cortisol can decrease pituitary sensitivity to gonadotropins and inhibit gonadal function, effectively downregulating testosterone production [1].

- Receptor Dynamics: Hormones can modulate each other's effects by regulating receptor availability. Progesterone is known to inhibit estradiol effects by reducing receptor densities, while estradiol may stimulate progesterone receptor proliferation [1].

Critical Methodological Pitfalls

Previously Recognized Statistical Problems

Even before considering measurement error, hormone ratios present several well-documented statistical challenges:

- Distributional Problems: Ratio distributions tend to be highly skewed and leptokurtic with marked outliers, even when component hormones are normally distributed. This is particularly pronounced when the denominator's coefficient of variation is large [1].

- Directional Arbitrariness: The ratio A/B is not linearly related to B/A, yet researchers rarely provide biological justification for choosing one direction over the other, potentially leading to different conclusions from the same data [1].

- Interpretational Ambiguity: An association between a ratio and an outcome could be driven solely by one component hormone, by additive effects of both, or by interactive effects. Using ratios may obscure the actual neurobiological mechanisms [1].

The Measurement Error Problem: A "Striking Lack of Robustness"

A previously unrecognized limitation with profound implications is the extreme sensitivity of raw hormone ratios to measurement error [1] [2].

Hormone levels are inherently subject to multiple sources of error:

- Analytical Error: Assays cannot perfectly assess exact hormone concentrations "in the tube" [1].

- Temporal Discrepancy: Levels at sample collection may not reflect effective physiological concentrations due to pulsatile secretion, circadian rhythms, and other temporal factors [1].

Table 1: Impact of Measurement Error on Ratio Validity

| Condition | Effect on Raw Ratio Validity | Effect on Log-Ratio Validity |
|---|---|---|
| Ideal Measurement (No Error) | High (Baseline) | High (Baseline) |
| Realistic Measurement Error | Drops rapidly | Remains robust |
| Skewed Denominator Distribution | Severely amplified error | Minimal impact |
| Positively Correlated Hormones | Moderate improvement | High and stable validity |
| Overall Performance | Poor robustness | Excellent robustness |

Noise in measured hormone levels becomes substantially exaggerated in ratio calculations, particularly when the denominator's distribution is positively skewed—a common occurrence with hormone data. Simulation studies demonstrate that the validity of raw hormone ratios (correlation between measured and underlying effective levels) drops rapidly with realistic measurement error levels [1].
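The validity comparison can be illustrated with a small simulation. The sketch below is not the code of the cited study. It assumes independent log-normal "true" hormone levels and multiplicative log-normal measurement error, and every parameter value (`sigma_true`, `sigma_err`, the sample size, the seed) is an arbitrary choice for illustration.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def simulate_validity(n=100_000, sigma_true=0.5, sigma_err=0.4, seed=1):
    """Return (raw-ratio validity, log-ratio validity).

    Validity is the correlation between the measured ratio and the ratio
    of the underlying true levels. All distributions are assumptions.
    """
    rng = random.Random(seed)
    true_raw, meas_raw, true_log, meas_log = [], [], [], []
    for _ in range(n):
        # True (effective) hormone levels: independent log-normal draws.
        a = rng.lognormvariate(0.0, sigma_true)
        b = rng.lognormvariate(0.0, sigma_true)
        # Measured levels: true level times log-normal noise.
        a_m = a * rng.lognormvariate(0.0, sigma_err)
        b_m = b * rng.lognormvariate(0.0, sigma_err)
        true_raw.append(a / b)
        meas_raw.append(a_m / b_m)
        true_log.append(math.log(a / b))
        meas_log.append(math.log(a_m / b_m))
    return pearson(meas_raw, true_raw), pearson(meas_log, true_log)


validity_raw, validity_log = simulate_validity()
print(f"raw ratio validity: {validity_raw:.3f}")
print(f"log-ratio validity: {validity_log:.3f}")
```

Under these assumptions the log-ratio validity should come out higher than the raw-ratio validity. Other error models, such as additive noise on a skewed denominator, can be substituted by changing the two "measured levels" lines.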

Troubleshooting Guide: Hormone Ratio Analysis

FAQ 1: My hormone ratio produces extreme outliers that skew my results. What should I do?

Problem: A small number of ratio values are orders of magnitude larger than the rest, creating severe positive skew and potentially dominating statistical analyses.

Solutions:

- Identify the Cause: Check for implausibly small denominator values. As the denominator approaches zero, the ratio grows without bound, so small absolute errors in low denominator values produce very large ratio values.

- Log-Transform: Calculate the ratio as the difference between log-transformed hormones [ln(A) - ln(B)] instead of the raw ratio (A/B). This substantially reduces skew and pulls in extreme values [1].

- Alternative Approaches: Consider using component hormones as separate predictors in regression models, potentially including their interaction term, rather than forcing them into a ratio.

Prevention: Always examine distributions of both component hormones before calculating ratios. Consider establishing minimum detectable values for exclusion.

FAQ 2: I'm getting different results depending on which hormone I put in the numerator vs. denominator. How do I choose?

Problem: The arbitrary decision of which hormone to place in the numerator versus denominator significantly changes analytical outcomes, with no clear biological guidance.

Solutions:

- Theoretical Justification: Base the decision on biological mechanism rather than convenience. If one hormone functionally suppresses the other's action, the suppressed hormone typically belongs in the numerator.

- Empirical Testing: Test which ratio direction (A/B or B/A) more strongly predicts biologically relevant outcomes in validation datasets [1].

- Use Log-Ratios: With log-transformed ratios [ln(A/B)], the choice only affects the sign of the association, not its magnitude or pattern, eliminating this arbitrariness [1].

Prevention: Pre-specify ratio direction in research protocols based on biological rationale, and report sensitivity analyses showing both directions.
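The direction problem can be checked numerically. The following sketch uses simulated data only; the hormone distributions and the outcome (generated here from the raw A/B ratio plus noise) are hypothetical. It shows that A/B and B/A correlate with the outcome at different magnitudes, while ln(A/B) and ln(B/A) give correlations that differ only in sign.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


rng = random.Random(7)
n = 2000
a = [rng.lognormvariate(0.0, 0.6) for _ in range(n)]
b = [rng.lognormvariate(0.0, 0.6) for _ in range(n)]
# Hypothetical outcome that depends on the raw A/B ratio plus noise.
y = [ai / bi + rng.gauss(0.0, 1.0) for ai, bi in zip(a, b)]

r_raw_ab = pearson([ai / bi for ai, bi in zip(a, b)], y)
r_raw_ba = pearson([bi / ai for ai, bi in zip(a, b)], y)
r_log_ab = pearson([math.log(ai / bi) for ai, bi in zip(a, b)], y)
r_log_ba = pearson([math.log(bi / ai) for ai, bi in zip(a, b)], y)

print(f"raw A/B: {r_raw_ab:+.3f}   raw B/A: {r_raw_ba:+.3f}")
print(f"ln(A/B): {r_log_ab:+.3f}   ln(B/A): {r_log_ba:+.3f}")
```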

FAQ 3: My ratio seems to be driven by only one hormone. How do I confirm it captures true balance?

Problem: The ratio appears to reflect variation in only one component hormone rather than capturing their functional balance or interaction.

Solutions:

- Component Correlation: Check correlations between the ratio and each component hormone. A ratio capturing true balance should correlate substantially with both components.

- Regression Decomposition: Enter both individual hormones alongside the ratio in regression models. If the ratio remains significant while individual hormones do not, it suggests the ratio captures unique variance.

- Interactive Models: Test a model containing both hormones and their linear × linear interaction term. Compare this to the ratio model to determine what the ratio actually captures [1].

Prevention: Use alternative statistical approaches like response surface analysis that can model complex interactions without ratio constraints.

FAQ 4: How does measurement error specifically affect my hormone ratio?

Problem: Even modest measurement inaccuracies in hormone assays become dramatically amplified when calculating ratios, potentially compromising study conclusions.

Solutions:

- Assay Validation: Rigorously determine and report the coefficient of variation (CV) for your hormone assays at relevant concentration ranges.

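- Error Propagation Assessment: Recognize that, to a first-order approximation and assuming small, independent errors, the relative error (CV) of a raw ratio is about √(CV₁² + CV₂²), where CV₁ and CV₂ are the CVs of the two assays. The ratio is therefore noisier than either component measured alone.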

- Log-Ratio Preference: Use log-transformed ratios, which are mathematically more robust to measurement error. Under some conditions, measured log-ratios may provide a more valid measurement of the underlying true raw ratio than the measured raw ratio itself [1].

Prevention: Incorporate measurement error considerations into sample size calculations and preferentially use log-ratios.
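As a quick worked example of the first-order propagation rule √(CV₁² + CV₂²), the sketch below combines two assay CVs expressed as fractions. The function name `ratio_cv` and the example CVs are illustrative, and the rule itself assumes small, independent errors.

```python
import math


def ratio_cv(cv_numerator, cv_denominator):
    """Approximate CV of A/B from the component CVs (given as fractions).

    First-order error propagation; assumes small, independent errors.
    """
    return math.hypot(cv_numerator, cv_denominator)


# Hypothetical assays with CVs of 8% and 6%.
combined = ratio_cv(0.08, 0.06)
print(f"approximate ratio CV: {combined:.1%}")
```

With these inputs the combined CV is about 10%, larger than either assay's own CV.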

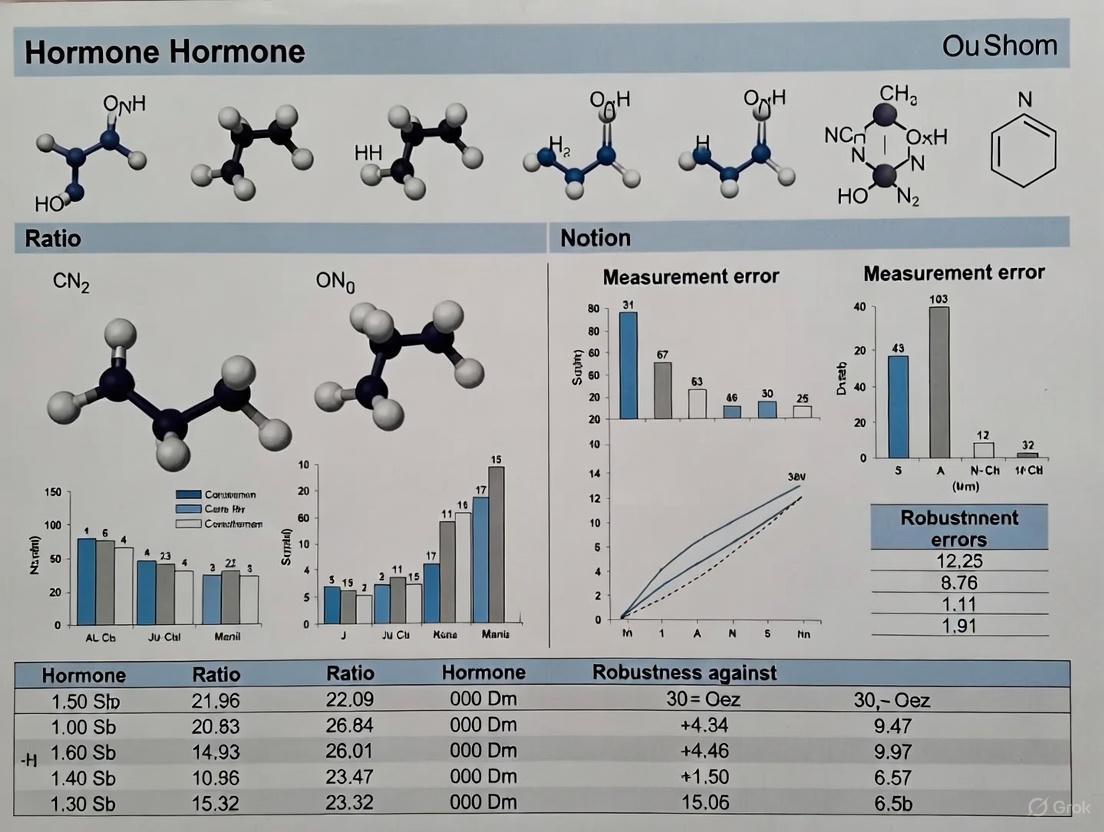

Diagram 1: Measurement Error Impact on Ratio Validity. This flowchart visualizes how measurement error from different sources affects raw versus log-transformed ratios, and how data characteristics like skewness amplify negative consequences for raw ratios [1].

Robust Methodologies & Experimental Protocols

Recommended Protocol: Implementing Log-Transformed Ratios

Purpose: To calculate hormone ratios that are robust to measurement error and distributional problems.

Procedure:

- Screen Data: Examine distributions of both hormone A and hormone B for outliers and extreme skewness.

- Log-Transform: Apply natural log transformation to both hormones:

  - ln_A = ln(hormone_A)

  - ln_B = ln(hormone_B)

- Calculate Log-Ratio: Compute the ratio as the difference between log-transformed values:

  - log_ratio = ln_A - ln_B

- Validate Transformation: Confirm that the resulting log-ratio approximates a normal distribution using normality tests or Q-Q plots.

- Statistical Analysis: Use the log-ratio variable in subsequent analyses (correlations, regression models).

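Interpretation: A one-unit increase in the natural log-ratio multiplies the original hormone ratio A/B by e (approximately 2.72). More generally, a difference of d log-units corresponds to a fold change of exp(d) in the raw ratio.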
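The log-ratio protocol can be sketched in a few lines. The function name, the input checks, and the example concentrations below are illustrative assumptions rather than part of the cited method.

```python
import math


def log_ratio(hormone_a, hormone_b):
    """Return ln(A) - ln(B) for paired, strictly positive measurements."""
    if len(hormone_a) != len(hormone_b):
        raise ValueError("hormone_a and hormone_b must have the same length")
    result = []
    for a, b in zip(hormone_a, hormone_b):
        if a <= 0 or b <= 0:
            raise ValueError("log-ratios require strictly positive concentrations")
        result.append(math.log(a) - math.log(b))
    return result


# Hypothetical paired concentrations (consistent units within each hormone).
testosterone = [12.0, 18.5, 9.3]
cortisol = [310.0, 280.0, 420.0]
values = log_ratio(testosterone, cortisol)

# A one-unit increase in the log-ratio multiplies A/B by e.
fold_change_per_unit = math.exp(1.0)
print(values, fold_change_per_unit)
```

Raising an error on non-positive values is a deliberate choice here: it forces an explicit decision about values at or below the detection limit instead of silently producing undefined logs.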

Recommended Protocol: Component-Plus-Interaction Analysis

Purpose: To determine what drives ratio-outcome associations and avoid interpretational ambiguity.

Procedure:

- Standardize Variables: Convert both hormones to z-scores (mean=0, SD=1) to facilitate coefficient interpretation.

- Specify Regression Model: Test a comprehensive model including:

- Outcome ~ HormoneA + HormoneB + (HormoneA × HormoneB)

- Compare to Ratio Model: Test a separate model containing only the ratio:

- Outcome ~ Ratio_AB

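- Model Comparison: Compare the two models using information criteria (AIC/BIC). Because the ratio-only model is not nested within the component-plus-interaction model, a standard likelihood-ratio test does not apply directly to this comparison; reserve likelihood-ratio tests for nested comparisons, such as the model with versus without the interaction term.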

- Probe Interactions: If the interaction term is significant, use simple slopes analysis or visualization to interpret its nature.

Interpretation: This approach determines whether ratio effects are driven by one component, additive effects, or true interactive effects.
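The comparison in this protocol can be sketched with ordinary least squares and a Gaussian AIC. The example below uses only the standard library so that it is self-contained; in practice a statistics package would normally be used. The data are simulated, and the outcome is deliberately generated from hormone A alone, so all names and parameter values are assumptions for illustration.

```python
import math
import random


def ols_rss(columns, y):
    """Fit y ~ intercept + columns by least squares; return the residual sum of squares."""
    n = len(y)
    X = [[1.0] + [col[i] for col in columns] for i in range(n)]
    p = len(X[0])
    # Augmented normal equations [X'X | X'y], solved by Gauss-Jordan elimination.
    A = [[sum(X[i][r] * X[i][c] for i in range(n)) for c in range(p)]
         + [sum(X[i][r] * y[i] for i in range(n))] for r in range(p)]
    for k in range(p):
        pivot = max(range(k, p), key=lambda r: abs(A[r][k]))
        A[k], A[pivot] = A[pivot], A[k]
        for r in range(p):
            if r != k:
                f = A[r][k] / A[k][k]
                A[r] = [v - f * w for v, w in zip(A[r], A[k])]
    beta = [A[r][p] / A[r][r] for r in range(p)]
    return sum((y[i] - sum(b * x for b, x in zip(beta, X[i]))) ** 2 for i in range(n))


def aic(rss, n, k):
    """Gaussian AIC up to an additive constant; k = number of regression coefficients."""
    return n * math.log(rss / n) + 2 * k


def zscores(v):
    m = sum(v) / len(v)
    s = math.sqrt(sum((x - m) ** 2 for x in v) / (len(v) - 1))
    return [(x - m) / s for x in v]


rng = random.Random(3)
n = 2000
a = [rng.lognormvariate(0.0, 0.5) for _ in range(n)]
b = [rng.lognormvariate(0.0, 0.5) for _ in range(n)]
za, zb = zscores(a), zscores(b)
# Hypothetical outcome driven by hormone A alone.
y = [0.5 * x + rng.gauss(0.0, 1.0) for x in za]

interaction = [p * q for p, q in zip(za, zb)]
aic_full = aic(ols_rss([za, zb, interaction], y), n, 4)
aic_ratio = aic(ols_rss([[p / q for p, q in zip(a, b)]], y), n, 2)
print(f"AIC components + interaction: {aic_full:.1f}")
print(f"AIC raw ratio only:           {aic_ratio:.1f}")
```

A lower AIC for the component model in a case like this indicates that the ratio is standing in for a single-hormone effect rather than capturing balance.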

Experimental Protocol: ELISA-Based Hormone Measurement for Ratio Calculation

Purpose: To accurately measure hormone concentrations from biological samples while minimizing measurement error for subsequent ratio calculation.

Procedure:

- Sample Collection:

Standard Curve Preparation:

ELISA Protocol:

- Bring all reagents, standards, and samples to room temperature before use.

- Add standards and samples to appropriate wells; incubate according to manufacturer specifications.

- Add detection antibody; incubate followed by thorough washing.

- Add enzyme substrate; incubate in darkness until color development.

- Stop the reaction and read optical density (OD) at the specified wavelength (typically 450 nm) [3].

Data Processing:

- Subtract blank OD values from all standards and samples.

- Fit standard curve using 4-parameter logistic (4PL) regression for optimal accuracy across the measurement range [3].

- Interpolate sample concentrations from the standard curve.

- Apply dilution factors to calculate final concentrations.
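The data-processing steps can be sketched as follows, assuming the 4PL parameters have already been estimated from the standards (for example by nonlinear least squares in a curve-fitting tool). The parameter values, OD readings, and dilution factor below are hypothetical, and the parameterization shown is one common form of the 4PL equation.

```python
def four_pl(conc, a, b, c, d):
    """4PL response: a = response at zero dose, d = response at infinite dose,
    c = inflection point (same units as conc), b = slope factor."""
    return d + (a - d) / (1.0 + (conc / c) ** b)


def inverse_four_pl(od, a, b, c, d):
    """Concentration whose predicted response equals `od`."""
    if not min(a, d) < od < max(a, d):
        raise ValueError("OD lies outside the range spanned by the curve")
    return c * ((a - d) / (od - d) - 1.0) ** (1.0 / b)


# Hypothetical fitted parameters and sample values, for illustration only.
params = dict(a=0.05, b=1.2, c=50.0, d=2.5)
blank_od = 0.04
sample_od = 1.14
dilution_factor = 10.0

corrected_od = sample_od - blank_od                 # subtract the blank
in_well = inverse_four_pl(corrected_od, **params)   # interpolate from the curve
final_conc = in_well * dilution_factor              # apply the dilution factor
print(f"final concentration: {final_conc:.1f}")
```

Rejecting ODs outside the curve's asymptotes avoids reporting extrapolated concentrations, which are a common source of extreme denominator values in downstream ratios.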

Quality Control:

Diagram 2: Workflow for Robust Hormone Ratio Analysis. This flowchart outlines the decision process for selecting and validating hormone ratio methodologies, emphasizing robust alternatives to raw ratios [1].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Reagents for Hormone Ratio Research

| Item | Function | Considerations |
|---|---|---|
| High-Sensitivity ELISA Kits | Quantifying specific hormones in biological samples | Prefer kits with low CV% and validated standard curves; select based on expected concentration ranges [3]. |
| Certified Reference Standards | Calibrating assays for accurate absolute concentration | Required for each target analyte; source from validated suppliers with documented purity [4]. |
| Isotopically Labeled Internal Standards | Correcting for matrix effects in MS-based assays | Essential for LC-MS/MS workflows; use chemically identical analogs (e.g., D³-cortisol) [4]. |
| Quality Control Materials | Monitoring assay performance across batches | Include at multiple concentration levels; use for both intra- and inter-assay validation [3] [4]. |
| Automated SPE Systems | Sample purification for complex matrices | Improve consistency and throughput; particularly valuable for steroid hormone panels [4]. |
| LC-MS/MS System | Gold-standard multi-analyte quantification | Enables simultaneous measurement of 10-30+ steroid analytes; provides high specificity via MRM [4]. |
| 4PL Curve Fitting Software | Accurate standard curve interpolation | Superior to linear regression for broad dynamic ranges; available in platforms like GraphPad Prism [3]. |

Hormone ratios offer undeniable allure through their conceptual simplicity and potential to summarize complex endocrine interactions. However, their methodological pitfalls—particularly the striking sensitivity to measurement error—demand careful consideration in research design and analysis [1].

The path forward requires methodological sophistication rather than abandonment of ratio concepts. Researchers should:

- Acknowledge Limitations: Explicitly recognize and address the statistical and measurement challenges of ratios.

- Prefer Robust Methods: Implement log-transformed ratios as standard practice unless strong theoretical reasons support raw ratios.

- Validate Interpretations: Use component-plus-interaction analyses to verify what biological relationships ratios actually capture.

- Report Transparently: Document all methodological decisions regarding ratio calculation and validation procedures.

By adopting these practices, researchers can harness the conceptual value of hormone balance while maintaining the methodological rigor necessary for robust scientific conclusions.

Frequently Asked Questions (FAQs) & Troubleshooting Guides

Biological Noise & Phenotypic Variability

Q1: What is the difference between biological "noise" and "molecular phenotypic variability"?

In scientific literature, noise refers specifically to the intrinsic stochasticity of biochemical reactions (like transcription and translation) that leads to variation in mRNA and protein production between genetically identical cells under the same conditions [5]. Molecular phenotypic variability, which is what we typically measure, is the observed variation in molecular phenotypes (e.g., mRNA/protein abundance) across a cell population. This observed variability arises from a combination of stochastic noise and deterministic regulatory mechanisms that cells use to modulate this noise [5].

Q2: What genomic features are known to influence transcriptional variability?

Several DNA-level features have been linked to modulating transcriptional variability [5]:

- TATA-box promoters: Genes with TATA-box containing promoters show higher levels of transcriptional variability and are often involved in rapid response to environmental stress [5].

- Transcription Factor Binding Sites (TFBSs): Variability increases with the number of TFBSs [5].

- CpG Islands (CGIs): The presence of CGIs in promoter regions and gene bodies is linked to a reduction in transcriptional variability. Genes associated with short CGIs tend to be more variably expressed [5].

- Transcriptional Start Sites (TSSs): Variability decreases with a higher number of TSSs [5].

Measurement Error & Hormone Ratios

Q3: Why are raw hormone ratios particularly problematic for research?

Raw hormone ratios (e.g., testosterone/cortisol, estradiol/progesterone) suffer from a striking lack of robustness to measurement error [2]. Noise in the measured levels of each hormone—arising from imperfect assays or temporal fluctuations—is dramatically exaggerated when one hormone is divided by the other. This problem is especially severe when the distribution of the denominator hormone is positively skewed, which is common for many hormones. This can lead to low validity (the correlation between measured levels and the underlying effective levels) and unreliable research findings [2].

Q4: What is a more robust alternative to using raw hormone ratios?

Using log-transformed ratios (log-ratios) is a much more robust approach. Simulations show that log-ratios maintain higher validity under realistic levels of measurement error and their validity is more stable across different samples. In some conditions, a measured log-ratio can be a more valid measurement of the underlying raw ratio than the measured raw ratio itself [2].

Assay Validation & Experimental Error

Q5: What are the main types of experimental error I should account for in my data?

Experimental error is typically categorized as follows [6] [7]:

Table 1: Types of Experimental Error

| Type of Error | Definition | Examples | How to Minimize |
|---|---|---|---|
| Random Error | Unpredictable variations that cause readings to scatter around the true value. | Fluctuations in instrument readings, biological variation between samples [6]. | Collect more data; use statistical analysis (mean, standard deviation); increase sample size [6]. |
| Systematic Error | A consistent, reproducible error that skews all results in the same direction. | Incorrectly calibrated instruments, consistent timing errors, incorrect measurements [6]. | Careful experimental design and calibration; cannot be corrected statistically after data collection [6]. |
| Human Error | Mistakes made by the experimenter during the procedure. | Adding the wrong concentration of a chemical to a sample [6]. | Thorough preparation; carefully following and double-checking procedures [6]. |

Q6: Which parameters are critical to validate an immunoassay method like ELISA?

A full validation of an in-house immunoassay should investigate the following key parameters [8]:

- Precision: The closeness of agreement between independent test results. This includes repeatability (within-run) and intermediate precision (between-run) [8].

- Trueness: The agreement between the average value from a large series of results and an accepted reference value [8].

- Robustness: The ability of the method to remain unaffected by small, deliberate variations in method parameters (e.g., incubation time, temperature) [8].

- Limits of Quantification (LOQ): The highest and lowest analyte concentrations that can be measured with acceptable precision and trueness [8].

- Selectivity/Specificity: The ability of the method to accurately measure the analyte in the presence of other components that may be expected to be present in the sample matrix [8].

- Parallelism & Dilution Linearity: Demonstrates that a sample can be reliably diluted and still provide an accurate result [8].

- Recovery: The detector response for an analyte added to and extracted from the biological matrix compared to the true concentration [8].

- Sample Stability: The stability of the analyte in the sample matrix under specific storage conditions [8].

Experimental Protocols & Methodologies

Protocol: Method Validation for Immunoassays (Precision)

This protocol is adapted from international validation guidelines [8].

Purpose: To determine the repeatability and intermediate precision of an immunoassay.

Materials:

- Validated assay protocol (calibrators, controls, reagents)

- Sample aliquots of at least two different concentrations (Low and High QC)

- Appropriate microplate reader

Procedure:

- Experimental Design: Over a period of at least 5 days, analyze the Low and High QC samples in replicates of at least 3 per run. Perform one run per day.

- Analysis: Analyze all samples according to the established assay protocol.

- Calculation: Calculate the mean concentration, standard deviation (SD), and coefficient of variation (%CV) for the replicates at each level.

- Within-Run Precision (Repeatability): Calculate the mean, SD, and %CV using the replicate values from a single run.

- Between-Run Precision (Intermediate Precision): Calculate the mean, SD, and %CV using all the replicate values from all runs (e.g., 5 days x 3 replicates = 15 data points per QC level).

Acceptance Criteria: Acceptance criteria depend on the intended use of the assay. For many bioanalytical methods, a %CV of ≤15-20% is often considered acceptable, with stricter criteria (e.g., ≤10-15%) for critical diagnostic markers [8].
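The precision calculations in this protocol can be sketched as below. The QC values are invented for illustration, and the between-run figure follows the simplified pooling described in the procedure (all replicates from all runs treated as one set) rather than a variance-components analysis.

```python
import statistics


def percent_cv(values):
    """Coefficient of variation in percent: 100 * sample SD / mean."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


# Hypothetical Low QC results: 5 runs (one per day) x 3 replicates.
low_qc_runs = [
    [10.1, 9.8, 10.3],
    [9.6, 9.9, 10.0],
    [10.4, 10.2, 10.6],
    [9.7, 10.0, 9.9],
    [10.2, 10.1, 9.8],
]

# Within-run precision (repeatability): one %CV per run.
within_run_cv = [percent_cv(run) for run in low_qc_runs]

# Between-run (intermediate) precision: %CV over all 15 pooled values.
pooled = [value for run in low_qc_runs for value in run]
between_run_cv = percent_cv(pooled)

print([f"{cv:.1f}%" for cv in within_run_cv])
print(f"between-run: {between_run_cv:.1f}%")
```

The same function can be applied to the High QC level, and the resulting CVs can be carried forward into the error propagation estimate for any ratio built from the two assays.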

Protocol: Optimizing Plate Coating for a New In-House ELISA

Purpose: To optimize the conditions for immobilizing an antigen or capture antibody to a microplate.

Materials:

- Polystyrene microplates (e.g., 96-well)

- Coating antigen or capture antibody

- Coating buffers (e.g., phosphate-buffered saline (PBS, pH 7.4) or carbonate-bicarbonate buffer (pH 9.4))

- Plate shaker (optional)

- Microplate washer

Procedure:

- Prepare Coating Solutions: Prepare a dilution series of your antigen or capture antibody (e.g., 0.5, 1, 2, 5, 10 µg/mL) in your chosen coating buffer.

- Coat Plate: Add 50-100 µL of each concentration to individual wells of the microplate. Include wells with coating buffer only as a blank control.

- Incubate: Cover the plate and incubate. Test different conditions: typically for 1-2 hours at room temperature or overnight at 4°C.

- Wash: Remove the coating solution and wash the plate 2-3 times with a wash buffer (e.g., PBS with 0.05% Tween 20).

- Block: Add a blocking buffer (e.g., 1% BSA or 5% non-fat dry milk in wash buffer) to all wells to cover any remaining protein-binding sites. Incubate for 1-2 hours at room temperature.

- Proceed with Assay: After blocking and a final wash, proceed with the standard ELISA steps (adding sample, detection antibodies, substrate, etc.).

- Analysis: The optimal coating concentration is the lowest concentration that yields the maximum signal-to-noise ratio for your target analyte.
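The selection rule in the analysis step can be written as a small helper. The signal-to-noise values below are hypothetical, and the `tolerance` argument (how close to the maximum counts as "maximum" once the curve plateaus) is an assumption added for illustration.

```python
def lowest_near_max(snr_by_conc, tolerance=0.05):
    """Lowest concentration whose S/N is within `tolerance` of the best S/N."""
    best = max(snr_by_conc.values())
    eligible = [conc for conc, snr in snr_by_conc.items()
                if snr >= (1.0 - tolerance) * best]
    return min(eligible)


# Hypothetical S/N (signal OD / blank OD) per coating concentration in µg/mL.
snr = {0.5: 4.0, 1.0: 8.0, 2.0: 11.8, 5.0: 12.0, 10.0: 12.0}
optimal = lowest_near_max(snr)
print(f"suggested coating concentration: {optimal} µg/mL")
```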

Visualization: Pathways, Workflows & Relationships

Diagram: Classification of Experimental Error

Figure 1: A classification of experimental error sources and their mitigation strategies [6] [7].

Diagram: Key Steps in a Sandwich ELISA Workflow

Figure 2: The core workflow for a Sandwich ELISA, a common format for sensitive protein detection [9] [10].

Diagram: Data Processing with Network Filters

Figure 3: A pipeline for denoising biological data using network filters and community detection [11].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Immunoassay Development and Validation

| Item | Function / Description | Key Considerations |
|---|---|---|
| Polystyrene Microplates | Solid surface for immobilizing antigens or antibodies through passive adsorption [10]. | Choose clear for colorimetry, black/white for fluorescence/chemiluminescence. Ensure high protein-binding capacity and low well-to-well variation [10]. |
| Capture & Detection Antibodies | Form the core of a specific immunoassay. The capture antibody binds the analyte, and the detection antibody provides the signal [10]. | For sandwich ELISA, ensure the antibody pair recognizes different, non-overlapping epitopes on the target analyte. Use antibodies from different host species to avoid interference [10]. |
| Enzyme Conjugates | Enzymes linked to detection antibodies to generate a measurable signal. Common examples are Horseradish Peroxidase (HRP) and Alkaline Phosphatase (AP) [9] [10]. | The choice of enzyme determines the available substrates and detection mode (colorimetric, chemiluminescent, fluorescent). |
| Enzyme Substrates | Chemicals converted by the enzyme conjugate into a detectable product (e.g., colored, fluorescent, or luminescent) [9] [10]. | Select based on desired sensitivity and available detection instrumentation (e.g., TMB for colorimetric HRP detection). |
| Blocking Buffers | Solutions of irrelevant proteins (e.g., BSA, casein) used to cover any remaining protein-binding sites on the plate after coating, preventing nonspecific binding of other assay components [10]. | Optimization may be required to find the blocking agent that gives the lowest background for your specific assay. |
| Volatile Buffers & Additives | For LC-MS applications, mobile phases must contain volatile components (e.g., formic acid, ammonium formate/acetate) to prevent contamination and signal suppression in the mass spectrometer [12]. | Avoid non-volatile buffers like phosphate. Use high-purity additives at the lowest effective concentration [12]. |

Q1: What is the primary statistical problem with using raw hormone ratios in research?

The primary problem is that raw hormone ratios suffer from a striking lack of robustness to measurement error [2]. Hormone levels are measured with inherent error from assays and biological variability. When one hormone is divided by another, this noise is not merely passed on but can be dramatically exaggerated. This is especially severe when the denominator hormone has a positively skewed distribution (where most values are low, but a few are very high), which is common for many hormones [2] [13]. The resulting ratio can be a poor reflection of the underlying biological relationship, leading to invalid conclusions.

Q2: Why are skewed distributions in the denominator so problematic for ratios?

A skewed distribution means the variable has a long tail of high values. In the context of a ratio, this creates two major issues:

- Amplification of Error: Small measurement errors in low denominator values cause massive, disproportionate swings in the calculated ratio [2]. A tiny change in a small denominator leads to a large change in the final quotient.

- Non-Normality: Many common statistical tests (like t-tests or Pearson correlation) assume data is normally distributed. Skewed ratio data violates this assumption, which can lead to misleading p-values and confidence intervals [13].
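The amplification effect is easy to see with plain arithmetic. In the sketch below, the same absolute measurement error is applied to a small and a large denominator; all numbers are hypothetical.

```python
def ratio_shift(numerator, denominator, error):
    """Change in A/B when the denominator is under-measured by `error`."""
    return numerator / (denominator - error) - numerator / denominator


# Same numerator and same absolute error (0.1), different denominator sizes.
shift_small_denominator = ratio_shift(10.0, 0.5, 0.1)  # 10/0.4 - 10/0.5
shift_large_denominator = ratio_shift(10.0, 5.0, 0.1)  # 10/4.9 - 10/5.0

print(f"shift with denominator 0.5: {shift_small_denominator:.3f}")
print(f"shift with denominator 5.0: {shift_large_denominator:.3f}")
```

The shift in the ratio is more than a hundred times larger for the small denominator, which is why a positively skewed denominator, with many values near the low end, is so damaging.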

Q3: What is the practical impact of this on my research findings?

Using invalid ratios can lead to false positives (Type I errors) or false negatives (Type II errors) in your statistical analyses. It reduces the validity of your findings and can misdirect future research. One study demonstrated that the correlation between a measured raw ratio and the underlying "true" biological ratio can drop rapidly to unacceptably low levels with realistic amounts of measurement noise [2].

Troubleshooting Guide: Identifying and Correcting Ratio Issues

Table 1: Diagnostic Checklist for Problematic Ratios

| Step | Check | Indicator of a Problem | Solution |
|---|---|---|---|
| 1 | Examine the denominator's distribution | Histogram shows a cluster of low values and a long right tail (positive skew) [13] | Log-transform both hormones and analyze the log-ratio (the difference of the logs) instead of the raw ratio [2] |
| 2 | Check for correlation between numerator and denominator | Numerator and denominator are positively correlated | Consider alternative models (e.g., regression with an interaction term) instead of a ratio |
| 3 | Assess the impact of measurement error | Your assay has a high coefficient of variation (CV) or is known to have cross-reactivity issues [14] | Use log-ratios, which are more robust to this error, or invest in more precise measurement techniques like LC-MS/MS [2] [14] |
| 4 | Evaluate the ratio's distribution | The calculated ratio itself is highly skewed | Use log-transformed ratios for all downstream statistical analyses |
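Steps 1 and 4 of the checklist can be supported by a simple skewness calculation. The sketch below computes the moment-based sample skewness before and after log-transformation. The denominator values are hypothetical, and any cut-off for "too skewed" would be a rule of thumb chosen by the analyst, not a value given in the sources.

```python
import math


def skewness(values):
    """Moment-based sample skewness (third central moment / variance^1.5)."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    return m3 / m2 ** 1.5


# Hypothetical denominator hormone values with a long right tail.
denominator = [1.0, 1.0, 1.0, 2.0, 10.0]
skew_raw = skewness(denominator)
skew_log = skewness([math.log(v) for v in denominator])

print(f"skewness (raw): {skew_raw:.2f}")
print(f"skewness (log): {skew_log:.2f}")
```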

Recommended Workflow for Robust Ratio Analysis

The following workflow outlines the key steps to diagnose ratio problems and apply the correct solution.

Advanced Solutions & Experimental Protocols

Q4: What is the recommended alternative to using raw ratios?

The most robust and recommended alternative is to use log-transformed ratios [2]. This means taking the logarithm of the ratio itself, which equals the difference between the log-transformed numerator and denominator (not the quotient of the two logs):

log(Ratio) = log(Numerator) - log(Denominator)

Log-transformation helps in two key ways:

- It symmetrizes skewed distributions, making the data more normal [13].

- It is far more robust to measurement error. Under some conditions, a measured log-ratio can be a more valid indicator of the underlying biological ratio than the measured raw ratio itself [2].

Q5: How do I implement log-ratios in my experimental analysis?

Follow this detailed protocol for robust analysis:

- Step 1: Data Collection. Measure hormone levels using a high-quality method. Where possible, use LC-MS/MS over immunoassays to minimize cross-reactivity and matrix effects, which are common sources of error [14].

- Step 2: Data Preprocessing. Add a small constant to all hormone measurements if zeros are present (to allow log-transformation), then apply a natural log (ln) or base-10 log (log10) transformation to both the numerator and denominator variables.

- Step 3: Create the Variable. Calculate the log-ratio as the simple difference: log_ratio = log(numerator) - log(denominator).

- Step 4: Statistical Analysis. Use this new log_ratio variable in all subsequent statistical models (e.g., t-tests, regression, ANOVA). The coefficients for the log-ratio will have a multiplicative interpretation.
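To make the multiplicative interpretation concrete, the sketch below regresses a simulated outcome on a natural log-ratio and converts the slope into the expected change in the outcome when the raw ratio doubles. The data, the true slope of 2.0, and the variable names are assumptions for illustration only.

```python
import math
import random


def ols_slope(x, y):
    """Slope of the simple least-squares regression of y on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx


rng = random.Random(11)
n = 5000
numerator = [rng.lognormvariate(1.0, 0.6) for _ in range(n)]
denominator = [rng.lognormvariate(0.0, 0.6) for _ in range(n)]
log_ratio = [math.log(a) - math.log(b) for a, b in zip(numerator, denominator)]

# Hypothetical outcome: 2.0 units per unit of natural log-ratio, plus noise.
outcome = [2.0 * lr + rng.gauss(0.0, 1.0) for lr in log_ratio]

slope = ols_slope(log_ratio, outcome)
# Doubling the raw ratio adds ln(2) to the log-ratio.
effect_of_doubling = slope * math.log(2.0)
print(f"slope per log-unit: {slope:.2f}")
print(f"expected outcome change when the ratio doubles: {effect_of_doubling:.2f}")
```

If base-10 logs are used instead, the same logic applies with a tenfold change per unit and log10(2) in place of ln(2).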

Q6: Are there real-world examples where this approach has been successful?

Yes. In prostate cancer research, the luteinizing hormone to testosterone (LH/T) ratio has been investigated as a predictive biomarker. The analysis of this hormone ratio, which is prone to the statistical issues described, was performed using rigorous statistical modeling and validation (logistic regression, nomograms, bootstrapping) to ensure robust findings [15]. This careful approach underscores the importance of proper methodology when working with hormone ratios.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagent Solutions for Hormone Measurement

| Item | Function in Research | Key Consideration |

|---|---|---|

| LC-MS/MS (Liquid Chromatography-Tandem Mass Spectrometry) | Gold-standard for measuring steroid hormone concentrations with high specificity [14] | Superior to immunoassays by minimizing cross-reactivity; essential for accurate denominator measurement. |

| High-Specificity Immunoassays | Antibody-based measurement of hormone levels. | Prone to cross-reactivity and matrix effects; requires rigorous validation for each study population [14]. |

| Stable Isotope-Labeled Internal Standards | Used in LC-MS/MS to correct for sample preparation losses and ionization variability. | Critical for achieving high accuracy and precision, thereby reducing measurement error. |

| Validated Hormone Standard Curves | Calibrators used to convert instrument signal into concentration values. | Must be traceable to international standards to ensure consistency and comparability across labs and studies. |

| Sample Preparation Kits (e.g., Solid-Phase Extraction) | Purify and concentrate hormones from complex biological matrices like serum or plasma. | Reduces interfering substances that can contribute to measurement error. |

Frequently Asked Questions (FAQs)

What is the core problem with using raw hormone ratios in research? Raw hormone ratios, such as testosterone/cortisol or estradiol/progesterone, suffer from a striking lack of robustness to measurement error. Noise in the measured hormone levels is substantially exaggerated by the ratio calculation, especially when the denominator hormone has a positively skewed distribution. This can rapidly degrade the validity of the ratio—the correlation between the measured value and the underlying biological reality. Using log-transformed ratios is a much more robust alternative. [2]

How can measurement error lead to incorrect biological conclusions in network analysis? In genomics and metabolomics, measurement error can dangerously affect the identification of regulatory networks. When using standard statistical methods like Ordinary Least Squares (OLS) that ignore measurement error, the estimated association parameters (e.g., regression coefficients) become biased and inconsistent. This means they do not converge to the true value even with larger sample sizes. Consequently, statistical tests lose reliability, leading to inflated false-positive rates and erroneous conclusions about which genes or metabolites are associated. [16]

What are the different types of measurement error I should consider? Measurement errors are generally categorized into three types:

- Systematic Errors: Repeatable, predictable errors caused by imperfections in the analyzer or test setup. These can often be characterized and mathematically reduced through calibration. [17]

- Random Errors: Unpredictable fluctuations due to factors like instrument noise or connector repeatability. These can be minimized through techniques like signal averaging but not completely removed. [17]

- Drift Errors: Changes in instrument performance over time after calibration, often due to temperature fluctuations. These can be mitigated by ensuring a stable environment and re-calibrating regularly. [17]

My experiment failed. What is a systematic approach to find the cause? A structured troubleshooting methodology involves six key steps [18]:

- Identify the problem without assuming the cause.

- List all possible explanations, from obvious to less apparent.

- Collect data by checking controls, equipment, reagents, and procedures.

- Eliminate explanations based on the collected data.

- Check with experimentation by designing tests for the remaining causes.

- Identify the cause and implement a fix.

Troubleshooting Guides

Guide 1: Troubleshooting Hormone Ratio Analyses

Problem: A calculated hormone ratio shows a weak or unexpected association with a behavioral or physiological outcome, leading to concerns about the validity of the finding.

| Possible Cause | Diagnostic Checks | Corrective Actions |

|---|---|---|

| High measurement error in denominator hormone [2] | Check the coefficient of variation (CV) for repeated measurements of the denominator hormone. Examine the distribution of the denominator for positive skew. | Switch from using a raw ratio to a log-ratio (e.g., log(hormoneA) - log(hormoneB)), which is more robust to measurement error. [2] |

| Correlated measurement errors [19] | Review the assay methodology. Errors from sample preparation or run batch can be correlated, distorting association networks. | Implement proper experimental designs that allow for quantifying the size of correlated errors. Use statistical methods that account for this error structure. [19] |

| Inappropriate statistical method | Determine if your analysis method (e.g., OLS regression) assumes error-free measurements. | Use measurement error models (e.g., Corrected OLS) that explicitly incorporate error equations for the variables, producing consistent estimators. [16] |
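The first row's diagnostic checks (CV of repeated measurements, positive skew in the denominator) can be computed with the standard library. The sketch below uses hypothetical values; the function names are introduced here for illustration, and the skewness formula is the simple moment-based estimator, one of several in common use.

```python
import math
import statistics

def coefficient_of_variation(replicates):
    """CV (%) of repeated measurements of one sample: 100 * SD / mean."""
    return 100.0 * statistics.stdev(replicates) / statistics.mean(replicates)

def sample_skewness(values):
    """Moment-based skewness m3 / m2**1.5; positive values indicate a right tail."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    return m3 / m2 ** 1.5

# Hypothetical data: three replicate measurements of one sample,
# and denominator-hormone levels across eight participants.
replicates = [4.8, 5.1, 5.3]
denominator = [0.4, 0.5, 0.6, 0.7, 0.9, 1.1, 1.8, 4.2]

cv_percent = coefficient_of_variation(replicates)
skew_raw = sample_skewness(denominator)
skew_log = sample_skewness([math.log(v) for v in denominator])

print(f"CV: {cv_percent:.1f}%")
print(f"skewness raw: {skew_raw:.2f}, after log-transform: {skew_log:.2f}")
```

A high CV together with a strongly right-skewed denominator is the combination the table flags as most damaging for a raw ratio.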

Guide 2: General Experimental Troubleshooting Framework

This framework can be applied to a wide range of experimental failures, from PCR to cell culture.

1. Identify and Define the Problem: Clearly state what went wrong. Example: "No PCR product is detected on the agarose gel, but the DNA ladder is visible." [18]

2. Brainstorm Possible Causes: List every potential source of the problem. For a failed PCR, this includes:

- Reagents: Taq polymerase, MgCl2, dNTPs, primers, DNA template.

- Equipment: Thermocycler block temperature calibration.

- Procedure: Incorrect cycling parameters. [18]

3. Investigate and Collect Data

- Check Controls: Did positive and negative controls work as expected? [18]

- Review Procedures: Compare your lab notebook to the established protocol. [18]

- Inspect Materials: Check expiration dates and storage conditions of reagents. [18]

4. Eliminate and Isolate: Based on your data collection, rule out causes. If the positive control worked, the thermocycler and most reagents are likely fine, pointing to the specific DNA template or primers. [18]

5. Test with Experimentation: Design a targeted experiment. For the PCR example, this could involve running the DNA template on a gel to check for degradation and confirming its concentration. [18]

6. Implement the Solution: Once the root cause is identified (e.g., low DNA template concentration), adjust the protocol and re-run the experiment. Consider preventive measures for the future, such as using a pre-made master mix to reduce pipetting error. [18]

Experimental Protocols & Data

Quantifying the Impact of Measurement Error

The following table summarizes simulation results from regulatory network studies, demonstrating how measurement error biases inference. The "Corrected OLS" method explicitly models measurement error, unlike standard OLS. [16]

Table 1: Impact of 20% Measurement Error on Parameter Estimation (Simulation Results) [16]

| Sample Size (n) | True Coefficient (β) | Avg. Estimated β (Standard OLS) | Avg. Estimated β (Corrected OLS) |

|---|---|---|---|

| 50 | 0.9 | 0.83 | 0.90 |

| 100 | 0.9 | 0.82 | 0.90 |

| 500 | 0.9 | 0.82 | 0.90 |

The attenuation of estimates using Standard OLS is clear and does not improve with larger sample sizes, demonstrating its inconsistency.
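The fact that the bias does not shrink with sample size follows from the classical errors-in-variables result. As a sketch, assume the observed predictor is $X = x + e$, where the measurement error $e$ has variance $\sigma_e^2$ and is independent of the true value $x$ (variance $\sigma_x^2$) and of the other error terms. These symbols are introduced here for illustration and are not taken from [16].

```latex
\hat{\beta}_{\mathrm{OLS}} \;\xrightarrow{\;p\;}\; \lambda\,\beta,
\qquad
\lambda = \frac{\sigma_x^{2}}{\sigma_x^{2} + \sigma_e^{2}} \le 1
```

The attenuation factor $\lambda$ depends only on the ratio of true-score variance to total variance, not on $n$. Collecting more samples therefore tightens the estimate around the attenuated value $\lambda\beta$ rather than around $\beta$.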

Table 2: False Positive Rate (Type I Error) at 5% Significance Level [16]

| Sample Size (n) | Standard OLS | Corrected OLS |

|---|---|---|

| 50 | 10.5% | 5.1% |

| 100 | 15.5% | 5.0% |

| 500 | 28.5% | 4.9% |

Standard OLS fails to control the false positive rate when measurement error is present; the rate inflates dramatically as the sample size increases.

Protocol: Implementing a Corrected Regression for Data with Measurement Error

This protocol outlines the steps to perform a regression analysis that accounts for measurement error in an independent variable, based on the methodology described in BMC Bioinformatics. [16]

1. Model Specification:

- Define the true relationship: $y = \alpha + \beta x + \varepsilon$, where $\varepsilon$ is the biological error.

- Define the measurement equations: $X = x + \epsilon_1$ and $Y = y + \epsilon_2$, where $\epsilon_1$ and $\epsilon_2$ are the measurement errors.

2. Error Variance Estimation:

- Estimate the variance of the measurement error ($\sigma^2_{\epsilon_1}$) for your variable $x$. This can be derived from technical replicates or from the known precision of your assay.

3. Parameter Estimation:

- Use a statistical method that incorporates the measurement error structure. This can be a structural equation modeling (SEM) framework or a specific corrected formula. The core idea is to adjust the standard covariance calculations using the estimated error variance.

4. Inference:

- Calculate standard errors and confidence intervals using the formulas derived from the measurement error model to ensure reliable hypothesis testing.
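Steps 2 and 3 of this protocol can be illustrated with a simple method-of-moments correction, which subtracts the estimated error variance from the observed variance of X before computing the slope. This is a sketch of one estimator of this kind on synthetic data, not necessarily the exact "Corrected OLS" estimator of [16]. The simulation parameters, the use of duplicate measurements, and all function names are assumptions made for illustration. It covers point estimation only; the standard errors in Step 4 are not shown.

```python
import random
import statistics

def covariance(u, v):
    """Sample covariance with an n - 1 denominator."""
    mu, mv = statistics.mean(u), statistics.mean(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / (len(u) - 1)

def error_variance_from_duplicates(rep1, rep2):
    """For duplicates with independent errors, Var(rep1 - rep2) = 2 * sigma_e^2."""
    diffs = [a - b for a, b in zip(rep1, rep2)]
    return statistics.variance(diffs) / 2.0

def naive_slope(X, Y):
    """Standard OLS slope, ignoring measurement error in X."""
    return covariance(X, Y) / statistics.variance(X)

def corrected_slope(X, Y, sigma2_e):
    """Method-of-moments slope: remove the error variance from Var(X)."""
    denom = statistics.variance(X) - sigma2_e
    if denom <= 0:
        raise ValueError("estimated error variance exceeds observed variance")
    return covariance(X, Y) / denom

# Synthetic data following y = alpha + beta * x + eps, X = x + e1, Y = y + e2.
rng = random.Random(42)
n, alpha, beta = 5000, 1.0, 0.9
x_true = [rng.gauss(0, 1) for _ in range(n)]
y_true = [alpha + beta * x + rng.gauss(0, 0.3) for x in x_true]
Y = [y + rng.gauss(0, 0.2) for y in y_true]
X1 = [x + rng.gauss(0, 0.5) for x in x_true]  # first technical replicate
X2 = [x + rng.gauss(0, 0.5) for x in x_true]  # second technical replicate

sigma2_e = error_variance_from_duplicates(X1, X2)
b_naive = naive_slope(X1, Y)
b_corrected = corrected_slope(X1, Y, sigma2_e)
print(f"error variance: {sigma2_e:.3f}")
print(f"naive slope: {b_naive:.3f}, corrected slope: {b_corrected:.3f}")
```

Measurement error in Y alone adds noise but does not bias the slope, which is why only the variance of X is adjusted here. For real analyses, a dedicated measurement error or SEM package is preferable, since it also provides appropriate standard errors.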

Diagram 1: How measurement error impacts the inference pathway, showing correct and incorrect analytical choices.

Diagram 2: A generalizable troubleshooting workflow for laboratory experiments.

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Measurement Quality Assurance

| Item | Function / Application | Key Consideration |

|---|---|---|

| Certified Reference Materials | Provides a ground truth for calibrating instruments and validating assays. | Essential for quantifying and correcting systematic measurement errors. [17] |

| Pre-made Master Mixes | Reduces pipetting steps and variability in reactions like PCR. | Minimizes operator error and improves reproducibility. [18] |

| Stable Control Samples | Used in every assay run to monitor precision and drift over time. | Allows for the quantification of batch-to-batch measurement error. [19] |

| Log-Ratio Transformation | A mathematical approach to analyze two-part compositions like hormone ratios. | More robust to the skewing effects of measurement error than raw ratios. [2] |

| Statistical Software for Measurement Error Models | For implementing corrected estimators (e.g., Corrected OLS, Structural Equation Models). | Necessary for obtaining unbiased parameter estimates when variables contain error. [16] |

Building a Robust Toolkit: Statistical and Practical Alternatives to Raw Ratios

Troubleshooting Guides

Guide 1: Resolving Poor Statistical Results with Hormone Ratios

Problem: Your analysis of raw hormone ratios (e.g., Testosterone/Cortisol, Estradiol/Progesterone) shows unstable results, low validity, or extreme outliers, potentially due to measurement error.

Explanation: Raw hormone ratios suffer from a striking lack of robustness to measurement error, a problem often overlooked in research. Hormone levels inherently contain noise from assay imperfections and discrepancies between sampled levels and physiologically effective levels. This noise is dramatically amplified in a raw ratio, especially when the denominator's distribution is positively skewed, a common feature of hormone data. The validity (the correlation between the measured ratio and the underlying true ratio) drops rapidly as measurement error increases [1] [2].

Solution: Apply a log-transformation to the ratio.

Steps:

- Log-transform the individual hormone concentrations. This step also helps address the typical positive skewness of hormone data, making distributions more normal [1] [20].

- Calculate the log-ratio. Simply subtract the log-transformed denominator from the log-transformed numerator: ln(A/B) = ln(A) - ln(B) [1] [21].

- Use the log-ratio in your statistical models. Proceed with correlation, regression, or other analyses using the log-transformed variable.

Expected Outcome: The log-ratio will be much more robust to measurement error. Its validity remains higher and more stable across samples. In some cases, the measured log-ratio can be a more valid indicator of the underlying biological balance than the measured raw ratio itself [1].

Guide 2: Choosing Between a Ratio and Alternative Models

Problem: You are unsure whether a hormone ratio is the correct model for your research question or how to interpret a significant result.

Explanation: A significant association between a ratio (A/B) and an outcome can be driven by several underlying scenarios, making biological interpretation ambiguous. The effect might be due solely to hormone A, solely to hormone B, to their additive effects, or to a true interactive effect between them. Using a raw ratio also introduces an arbitrary choice, as the results will differ depending on whether you use A/B or B/A [1].

Solution: Use a structured approach to model selection and interpretation.

Steps:

- For Robustness and Symmetry: Start with the log-ratio. It solves the asymmetry problem because ln(A/B) = -ln(B/A), ensuring your results do not depend on an arbitrary choice of numerator and denominator [1] [20].

- To Decompose the Effect: Follow up your ratio analysis by including the two log-transformed hormones as separate predictors in a multiple regression model. This helps clarify if one hormone is driving the effect [1] [20].

- To Test for Interaction: To explicitly test for a synergistic or antagonistic interaction, include an interaction term (Hormone A * Hormone B) alongside the main effects of both hormones in your regression model [1] [20].

Expected Outcome: This multi-step approach provides a more nuanced and interpretable understanding of how the two hormones are jointly associated with your outcome, moving beyond the black box of a simple ratio.
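Written side by side, the three modeling steps form a nested sequence. In this sketch, $y$ is the outcome and the $\beta$ symbols are coefficient names introduced here for illustration. The interaction is written on the log scale for consistency with the other two models, which is an assumption (the step above describes it simply as Hormone A * Hormone B).

```latex
\begin{aligned}
\text{Log-ratio model:} \quad & y = \beta_0 + \beta_R\,(\ln A - \ln B) + \varepsilon \\
\text{Separate predictors:} \quad & y = \beta_0 + \beta_A \ln A + \beta_B \ln B + \varepsilon \\
\text{With interaction:} \quad & y = \beta_0 + \beta_A \ln A + \beta_B \ln B + \beta_{AB}\,(\ln A)(\ln B) + \varepsilon
\end{aligned}
```

The log-ratio model is the special case of the second model with $\beta_B = -\beta_A$. Comparing the two fits therefore shows whether the data support the equal-and-opposite constraint that a ratio imposes, or whether one hormone carries most of the association.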

Frequently Asked Questions (FAQs)

FAQ 1: Why should I log-transform a hormone ratio instead of using the raw values?

Log-transforming a ratio provides three key advantages:

- Robustness to Error: It is significantly less sensitive to measurement error, which is unavoidable in hormone assays. Noise in the data has a much smaller impact on the validity of a log-ratio compared to a raw ratio [1] [2].

- Symmetry: The log-ratio solves the arbitrariness of choosing a numerator or denominator. The results from ln(A/B) are simply the inverse (negative) of ln(B/A), which is not true for raw ratios [1].

- Improved Distribution: Hormone data are often log-normally distributed. Log-transforming the components of a ratio typically results in a more normal distribution of the final variable, which is beneficial for many parametric statistical tests [1] [20] [22].

FAQ 2: What are the practical implications of using log-ratios in drug development research?

In translational and clinical research, using a more robust biomarker leads to more reliable and reproducible findings. For example, a logarithmic model of hormone receptors (log(ER)*log(PgR)/Ki-67) has been validated as a predictive marker for treatment response in hormone receptor-positive breast cancer patients [23]. Employing log-ratios can help in identifying more consistent biomarkers for patient stratification, drug response prediction, and understanding complex endocrine interactions in network analyses [24]. This reduces the risk of building models on statistical artifacts caused by noisy raw ratios.

FAQ 3: My outcome variable is a hormone concentration. Should I log-transform it too?

Yes, it is often recommended to log-transform positive data like hormone concentrations. The primary reason is not necessarily to achieve a normal distribution, but to make additive and linear models more appropriate. A multiplicative relationship on the original scale becomes a linear one on the log scale, which often aligns better with the underlying biology and improves model validity [22].

Experimental Protocols

Protocol 1: Methodology for Simulating Ratio Robustness

This protocol is based on simulations used to demonstrate the fragility of raw ratios [1].

Objective: To quantitatively compare the robustness of raw ratios versus log-ratios under varying degrees of measurement error.

Materials:

- Statistical software (e.g., R, Python)

- A dataset of empirically observed hormone levels (e.g., estradiol and progesterone) or parameters to generate synthetic data with known properties (e.g., positive skewness, positive correlation between hormones).

Steps:

- Obtain/Generate Baseline Data: Use a dataset with measured hormone pairs or generate synthetic data that mimics real-world hormonal distributions (e.g., positively skewed, positively correlated).

- Simulate Measurement Error: Add random, realistic measurement noise to the baseline hormone levels. Systematically vary the level (magnitude) of this noise across multiple simulation runs.

- Calculate Ratios: For each level of simulated noise, calculate both the raw ratio (A/B) and the log-ratio (ln(A) - ln(B)) from the noisy data.

- Assess Validity: Calculate the correlation between the ratios derived from the noisy data and the "true" ratios from the original, clean baseline data. This correlation coefficient is the measure of validity.

Expected Output: A plot showing that as measurement error increases, the validity of the raw ratio drops rapidly, while the validity of the log-ratio remains high and stable.
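The protocol can be sketched with the Python standard library. Several choices below are assumptions made for illustration and are not taken from the cited simulations: true hormone levels are log-normal with a shared component (so they are positively skewed and positively correlated), measurement error is multiplicative (additive on the log scale), and the specific variances are arbitrary.

```python
import math
import random

def pearson(u, v):
    """Pearson correlation of two equal-length sequences."""
    n = len(u)
    mu, mv = sum(u) / n, sum(v) / n
    suv = sum((a - mu) * (b - mv) for a, b in zip(u, v))
    suu = sum((a - mu) ** 2 for a in u)
    svv = sum((b - mv) ** 2 for b in v)
    return suv / math.sqrt(suu * svv)

def simulate_validity(noise_sd, n=20000, seed=1):
    """Return (raw-ratio validity, log-ratio validity) for one noise level."""
    rng = random.Random(seed)
    true_raw, true_log, meas_raw, meas_log = [], [], [], []
    for _ in range(n):
        shared = rng.gauss(0, 1)  # induces positive correlation between A and B
        log_a = 0.5 * shared + 0.6 * rng.gauss(0, 1)
        log_b = 0.5 * shared + 0.6 * rng.gauss(0, 1)
        # Assumed noise model: Gaussian error added on the log scale.
        noisy_log_a = log_a + rng.gauss(0, noise_sd)
        noisy_log_b = log_b + rng.gauss(0, noise_sd)
        true_log.append(log_a - log_b)
        true_raw.append(math.exp(log_a - log_b))
        meas_log.append(noisy_log_a - noisy_log_b)
        meas_raw.append(math.exp(noisy_log_a - noisy_log_b))
    return pearson(true_raw, meas_raw), pearson(true_log, meas_log)

results = {sd: simulate_validity(sd) for sd in (0.0, 0.2, 0.4, 0.6)}
for sd, (raw_v, log_v) in results.items():
    print(f"noise SD {sd:.1f}: raw validity {raw_v:.2f}, log validity {log_v:.2f}")
```

The size of the gap between the two validities depends on the noise model and the chosen parameters, so the settings should be adapted to the assay and hormones under study before drawing conclusions.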

Protocol 2: Implementing a Log-Ratio in a Machine Learning Pipeline

This protocol is adapted from studies using explainable AI to investigate hormonal balances [25].

Objective: To build a predictive model for a log-transformed hormone ratio and identify key influencing factors.

Materials:

- Dataset with hormone measurements (preferably via mass spectrometry), anthropometric, demographic, and metabolic variables [25].

- Python/R environment with libraries like XGBoost and SHAP.

Steps:

- Data Preprocessing: Handle missing values. Standardize or normalize all feature variables. Split data into training and test sets (e.g., 70/30).

- Calculate Target Variable: For each subject, compute the natural log of the hormone ratio: Target = ln(Progesterone / Estradiol) [25].

- Train ML Model: Use a supervised learning algorithm like XGBoost to regress the log-transformed target variable on the features. Optimize hyperparameters via cross-validation.

- Interpret with SHAP: Compute SHAP (SHapley Additive exPlanations) values for the trained model. This reveals the contribution and direction of effect for each feature in predicting the log-ratio.

Expected Output: A validated predictive model and a ranked list of features (e.g., FSH, waist circumference) that are most influential in determining the hormonal balance, providing data-driven biological insights [25].

Table 1: Comparative Performance of Raw vs. Log-Transformed Ratios

This table summarizes the core methodological differences and performance under measurement error, as established in the literature [1] [2] [20].

| Feature | Raw Ratio (A/B) | Log-Transformed Ratio (ln(A/B)) |

|---|---|---|

| Robustness to Measurement Error | Low; validity drops rapidly with noise. | High; validity remains high and stable. |

| Effect of Skewed Denominator | Amplifies error and creates outliers. | Mitigates the impact of skewness. |

| Symmetry (A/B vs. B/A) | Not symmetrical; results are different. | Symmetrical; ln(A/B) = -ln(B/A). |

| Statistical Distribution | Often highly skewed and leptokurtic. | Tends towards a more normal distribution. |

| Biological Interpretation | Can be ambiguous. | Captures equal, opposing effects on a log scale. |

Table 2: Essential Research Reagent Solutions for Hormone Ratio Analysis

| Item | Function/Benefit | Key Consideration |

|---|---|---|

| Mass Spectrometry (e.g., ID LC-MS/MS) | Gold-standard for hormone quantification. High specificity and sensitivity, minimal cross-reactivity [25]. | Preferable over immunoassays for research due to superior accuracy. |

| Hair Samples | Provides a long-term, stable biomarker for hormonal activity, integrating weeks to months of exposure [24]. | Complements acute measurements from blood/saliva. |

| Statistical Software (R/Python) | For implementing log-transformations, simulations, and advanced models (machine learning, network analysis). | Essential for robust and reproducible data analysis. |

| Explainable AI (XAI) Packages (e.g., SHAP) | Interprets complex machine learning models to identify key predictors of a hormonal ratio [25]. | Moves beyond "black box" predictions to generate biological insights. |

Technical Support & Troubleshooting

This section addresses common challenges researchers face when implementing multivariate models to improve the robustness of hormone ratio analysis.

Frequently Asked Questions

Q1: My multivariate model fails to converge when I include all desired interaction terms. What steps should I take?

A: Convergence issues often arise from the high dimensionality of the full interaction model, a problem known as the "curse of dimensionality." The number of potential pairwise interactions increases quadratically with the number of predictors [26]. To resolve this:

- Solution 1: Use a Low-Rank Factorization. Implement methods like survivalFM, which approximates all pairwise interaction effects using a factorized parametrization [26]. Instead of directly estimating each interaction term $\beta_{i,j}$, it uses an inner product between low-rank latent vectors, $\tilde{\beta}_{i,j} = \langle p_i, p_j \rangle$ [26]. This drastically reduces the number of parameters to be estimated, overcoming computational limitations.

- Solution 2: Apply Stronger Regularization. Increase the strength of L2 (Ridge) regularization to penalize overly complex models and steer the optimization towards a solution. Optimize the regularization parameter via cross-validation within your training set [26].

Q2: How can I diagnose if measurement error in my hormone assays is significantly biasing my model's conclusions?

A: Measurement error can severely distort analytical outcomes, a principle known as "Garbage In, Garbage Out" (GIGO) [27].

- Diagnostic Step 1: Conduct a Sensitivity Analysis. Introduce synthetic error into your dataset by adding random noise to your predictor variables. Re-run your analysis and observe the stability of your key parameters (e.g., coefficients for main and interaction effects). Large shifts indicate high sensitivity to measurement error [28].

- Diagnostic Step 2: Validate with Alternative Methods. Use a gold-standard measurement method (if available) on a subset of samples to quantify the error structure. Alternatively, employ methods like Confident Learning to estimate the joint distribution between noisy observed labels and uncorrupted true labels, characterizing the label error in your data [29].
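Diagnostic Step 1 can be sketched as follows: refit the model many times after adding synthetic noise to the predictor, then compare the resulting coefficients with the baseline. The data, the single-predictor model, and the noise levels are hypothetical stand-ins chosen for illustration; a real analysis would substitute its own model and a noise range informed by the assay's precision.

```python
import random
import statistics

def ols_slope(x, y):
    """Slope of a simple least-squares regression of y on x."""
    mx, my = statistics.mean(x), statistics.mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx

def noise_sensitivity(x, y, noise_sd, n_runs=200, seed=0):
    """Refit the slope n_runs times after adding Gaussian noise (SD noise_sd) to x.

    Returns the mean and standard deviation of the refitted slopes.
    """
    rng = random.Random(seed)
    slopes = []
    for _ in range(n_runs):
        x_noisy = [v + rng.gauss(0, noise_sd) for v in x]
        slopes.append(ols_slope(x_noisy, y))
    return statistics.mean(slopes), statistics.stdev(slopes)

# Hypothetical stand-in for an observed dataset.
rng = random.Random(7)
x_obs = [rng.gauss(0, 1) for _ in range(300)]
y_obs = [0.5 * v + rng.gauss(0, 0.5) for v in x_obs]

baseline = ols_slope(x_obs, y_obs)
mean_small, sd_small = noise_sensitivity(x_obs, y_obs, noise_sd=0.1)
mean_large, sd_large = noise_sensitivity(x_obs, y_obs, noise_sd=1.0)
print(f"baseline slope: {baseline:.3f}")
print(f"added noise SD 0.1: mean slope {mean_small:.3f} (SD {sd_small:.3f})")
print(f"added noise SD 1.0: mean slope {mean_large:.3f} (SD {sd_large:.3f})")
```

A coefficient that moves substantially under noise levels comparable to the assay's known imprecision is a sign that the original estimate may already be affected by measurement error.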

Q3: My dataset has a clustered structure (e.g., repeated measurements from the same patient). Which model is more appropriate: MANOVA or a Mixed-Effects Model?

A: While MANOVA can handle multiple dependent variables, it assumes independence of all observations and does not account for data dependence [30]. For clustered or longitudinal data, such as repeated hormone measurements from patients:

- Recommended Solution: Use a Linear Mixed-Effects Model. Mixed-effects models include both fixed effects (the main and interaction effects you are testing) and random effects (to account for variation between clusters, e.g., individual patients). This provides greater validity and higher reproducibility for correlated data by explicitly modeling the data's covariance structure [30]. MANOVA's requirement for independence of observations is often violated in such experimental designs [31].

Troubleshooting Guide: Common Error Messages and Solutions

Table 1: Troubleshooting common implementation errors.

| Error Message / Symptom | Likely Cause | Solution |

|---|---|---|

| Model convergence warnings, "Hessian matrix is singular" | High multicollinearity between predictors or their interaction terms; insufficient data for model complexity. | 1. Check Variance Inflation Factors (VIFs) for main effects.2. Switch to a regularized model (e.g., Ridge regression) or a low-rank interaction model [26].3. Increase sample size if possible. |

| Coefficient estimates are unstable or have implausibly large standard errors when interactions are included. | Measurement error in the predictor variables is amplified in the constructed interaction terms [28]. | 1. Prioritize and use assays with higher precision for key variables.2. Consider measurement error models (e.g., regression calibration) that adjust for known error variances. |

| Clustering results are unstable or not biologically interpretable. | Clustering algorithms (like k-means) are highly sensitive to random measurement error, which can lead to spurious clusters and misclassification [28]. | 1. Pre-process data to smooth or denoise.2. Use a more robust method like Latent Profile Analysis (LPA), a model-based clustering technique that can better handle error in a single variable [28]. |

Experimental Protocols & Workflows

This section provides detailed methodologies for key analytical procedures.

Protocol for Implementing the survivalFM Model

This protocol details the steps for implementing a multivariate survival model with comprehensive pairwise interactions, as applied in the UK Biobank study [26].

1. Software and Package Installation

- The survivalFM R package is required. Installation can typically be performed from CRAN or the author's repository.

- Critical Software Check: Verify that all dependent packages (survival, Matrix) are correctly installed and loaded.

2. Data Preparation and Standardization

- Format your data into a dataframe where each row is an observation (e.g., a patient) and columns include:

- Time-to-event: The duration until the event of interest (e.g., disease onset).

- Event status: A binary indicator (e.g., 1 for event occurred, 0 for censored).

- Predictor variables: Standardize all continuous predictors (e.g., hormone levels, clinical biomarkers) to a mean of 0 and standard deviation of 1. This ensures that regularization penalizes coefficients equally and improves model convergence [26].
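The standardization step can be sketched as below. The values and function names are hypothetical. The sketch also makes one choice not spelled out in the protocol: the mean and standard deviation are estimated on the training data and then reused for held-out data, a common practice to avoid leaking test-set information into the model.

```python
import statistics

def fit_scaler(train_values):
    """Estimate mean and sample SD (n - 1 denominator) from training data."""
    mean = statistics.mean(train_values)
    sd = statistics.stdev(train_values)
    if sd == 0:
        raise ValueError("cannot standardize a constant column")
    return mean, sd

def apply_scaler(values, mean, sd):
    """Convert values to z-scores using previously estimated parameters."""
    return [(v - mean) / sd for v in values]

# Hypothetical hormone levels (arbitrary units).
train = [2.0, 4.0, 6.0, 8.0]
test = [5.0, 9.0]

mean, sd = fit_scaler(train)
train_z = apply_scaler(train, mean, sd)
test_z = apply_scaler(test, mean, sd)  # reuses the training parameters
print(train_z, test_z)
```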

3. Model Training with Cross-Validation

- Split your data into training and validation sets, or use the training set for cross-validation.

- Use the survivalFM() function, specifying the survival formula (e.g., Surv(time, event) ~ .).

- The method incorporates a low-rank factorization for interactions and uses an efficient quasi-Newton optimization algorithm [26].

- Hyperparameter Tuning: Perform k-fold cross-validation (e.g., 10-fold) on the training set to select the optimal value for the L2 regularization parameter and the rank of the factorization k [26]. The optimal values are those that maximize a performance metric like Harrell's C-index.

4. Model Evaluation and Interpretation

- Apply the fitted model to the held-out test set.

- Evaluate performance using metrics of discrimination (C-index), explained variation, and reclassification [26].

- Extract the estimated coefficients for main effects and the factorized interaction matrices to interpret the biological relationships.

Workflow for implementing the survivalFM model.

Protocol for Processing Multivariate Time-Series Data from EHR

This protocol, adapted from COVID-19 EHR processing, is relevant for structuring longitudinal hormone data [32].

1. Environmental Setup

- Use a Conda environment to manage dependencies. Create one with conda create -n hormone_analysis python=3.11 and activate it with conda activate hormone_analysis [32].

- Install required packages: pandas, numpy, scikit-learn.

2. Data Standardization and Cleaning

- Load raw data tables (e.g., from laboratory information systems).

- Standardize Format: Create a table where each row represents a unique patient-record time point. Key columns include:

- PatientID, RecordTime, AdmissionTime, DischargeTime, Outcome [32].

- Subsequent columns contain demographic and laboratory values (e.g., Hormone A, Hormone B) at that time point.

- Clean Data:

- Convert categorical variables (e.g., Gender) to numerical.

- Ensure time columns are in a consistent Y/M/D format [32].

- Remove records with missing critical data (PatientID, RecordTime).

- Drop variables (columns) that are entirely missing or contain no variance.

3. Merging Records and Feature Engineering

- Temporal Merging: Group data by PatientID and RecordTime to combine entries for the same patient on the same day. Calculate the mean of numeric values to create a single daily record [32].

- Calculate Derived Variables: Compute key outcomes and features, such as:

- Length of Stay (LOS): DischargeTime - AdmissionTime [32].

- Hormone Ratios: Calculate ratios (e.g., Hormone A / Hormone B) for each time point.

- Time-Series Features: Create lagged variables or rolling averages to capture temporal dynamics.
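The merging and feature-engineering steps can be sketched with the standard library alone (a pandas groupby would serve the same purpose on larger tables). The records, the column names HormoneA and HormoneB, and the decision to store the ratio as a log-ratio are assumptions made for illustration, the last one in line with this article's recommendation rather than with [32].

```python
import math
from collections import defaultdict
from datetime import date
from statistics import mean

# Hypothetical records: two same-day entries for P1 and one entry for P2.
records = [
    {"PatientID": "P1", "RecordTime": date(2024, 1, 2),
     "AdmissionTime": date(2024, 1, 1), "DischargeTime": date(2024, 1, 5),
     "HormoneA": 10.0, "HormoneB": 2.0},
    {"PatientID": "P1", "RecordTime": date(2024, 1, 2),
     "AdmissionTime": date(2024, 1, 1), "DischargeTime": date(2024, 1, 5),
     "HormoneA": 14.0, "HormoneB": 4.0},
    {"PatientID": "P2", "RecordTime": date(2024, 1, 3),
     "AdmissionTime": date(2024, 1, 3), "DischargeTime": date(2024, 1, 4),
     "HormoneA": 8.0, "HormoneB": 8.0},
]

# Temporal merging: collect all entries for the same patient and day.
grouped = defaultdict(list)
for rec in records:
    grouped[(rec["PatientID"], rec["RecordTime"])].append(rec)

daily = []
for (patient_id, record_time), recs in sorted(grouped.items()):
    a = mean(r["HormoneA"] for r in recs)  # daily mean of numeric values
    b = mean(r["HormoneB"] for r in recs)
    first = recs[0]
    daily.append({
        "PatientID": patient_id,
        "RecordTime": record_time,
        "HormoneA": a,
        "HormoneB": b,
        "LogRatio": math.log(a) - math.log(b),  # derived variable
        "LOS_days": (first["DischargeTime"] - first["AdmissionTime"]).days,
    })

for row in daily:
    print(row)
```

Averaging the hormone values before taking the log-ratio is one possible order of operations; averaging log-transformed values instead gives a geometric-mean-based daily record and may be preferable for strongly skewed data.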

The Scientist's Toolkit

Research Reagent Solutions

Table 2: Essential computational and statistical tools for robust multivariate analysis.

| Tool / Solution | Function in Analysis | Relevance to Hormone Ratio Research |

|---|---|---|

| survivalFM R package | Enables scalable estimation of all pairwise interaction effects in survival models using low-rank factorization [26]. | Models how interactions between different hormone levels jointly influence time-to-event outcomes (e.g., disease onset), moving beyond single ratios. |

| cleanlab Python library | Implements "Confident Learning" to characterize and identify label errors in datasets [29]. | Quantifies and helps correct for misclassification or measurement error in categorical outcomes, improving data quality before modeling. |

| Linear & Generalized Mixed-Effects Models (e.g., lme4 in R) | Models data with clustered or repeated measures by incorporating fixed and random effects [30]. | Correctly accounts for the non-independence of repeated measurements from the same subject, a common feature in longitudinal hormone studies. |

| Latent Profile Analysis (LPA) | A model-based clustering technique that is more robust to random measurement error than k-means [28]. | Identifies distinct patient subtypes based on multi-hormonal profiles, even when assays contain noise. |

| Data Shapley / Beta Shapley | A principled framework to quantify the contribution of each individual training datum to a model's prediction [29]. | Identifies which specific hormone measurements are most influential on a model's output, aiding in outlier detection and data valuation. |

Visualizing Error Propagation in Analytical Pipelines

Understanding how measurement error propagates is crucial for robustness.

Error propagation in ratio versus multivariate models. The diagram illustrates that using a simple ratio amplifies initial measurement error, which is then fed into a model. In contrast, a multivariate model using the original measured values can account for error within a more complex framework, potentially mitigating its impact.

FAQ: Solving Common Problems in Hormone Ratio Analysis

1. My hormone ratio data is highly skewed and has extreme outliers. What should I do? Raw hormone ratios often produce skewed distributions and extreme outliers, especially when the denominator hormone has a positively skewed distribution with values approaching zero [2] [1]. To address this:

- Solution A: Use log-transformation. Calculate your ratio as ln(A/B), which is equivalent to ln(A) - ln(B). This transformation typically creates a more normal, symmetric distribution [1] [20].

- Solution B: Use non-parametric statistical tests that do not assume normality [20].

- Avoid: Using raw ratios in parametric statistical analyses (like t-tests or linear regression) without checking the distributional assumptions.

2. My results change drastically if I calculate A/B instead of B/A. Is this normal? Yes, this is a known limitation of raw ratios. The ratio A/B is not linearly related to B/A, so the choice of numerator and denominator can arbitrarily influence your results [1].

- Solution: Switch to log-transformed ratios. A key advantage of log-ratios is that ln(A/B) = -ln(B/A). This means your results will be consistent in magnitude regardless of which hormone is the numerator; only the sign of the effect changes [1] [33].
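A quick numerical check makes the symmetry point concrete. The data below are synthetic and the setup (log-normal hormone levels, an outcome built from the log-ratio plus noise) is an assumption chosen purely for illustration.

```python
import math
import random

def pearson(u, v):
    """Pearson correlation of two equal-length sequences."""
    n = len(u)
    mu, mv = sum(u) / n, sum(v) / n
    suv = sum((a - mu) * (b - mv) for a, b in zip(u, v))
    suu = sum((a - mu) ** 2 for a in u)
    svv = sum((b - mv) ** 2 for b in v)
    return suv / math.sqrt(suu * svv)

# Synthetic hormone levels and outcome (hypothetical).
rng = random.Random(3)
a = [math.exp(rng.gauss(0, 0.7)) for _ in range(500)]
b = [math.exp(rng.gauss(0, 0.7)) for _ in range(500)]
outcome = [math.log(x) - math.log(y) + rng.gauss(0, 1) for x, y in zip(a, b)]

r_raw_ab = pearson([x / y for x, y in zip(a, b)], outcome)
r_raw_ba = pearson([y / x for x, y in zip(a, b)], outcome)
r_log_ab = pearson([math.log(x / y) for x, y in zip(a, b)], outcome)
r_log_ba = pearson([math.log(y / x) for x, y in zip(a, b)], outcome)

print(f"raw A/B: {r_raw_ab:.3f}   raw B/A: {r_raw_ba:.3f}")
print(f"log A/B: {r_log_ab:.3f}   log B/A: {r_log_ba:.3f}")
```

The two log-ratio correlations are equal in magnitude and opposite in sign (up to floating-point rounding), whereas the two raw-ratio correlations are generally not mirror images of each other.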

3. I'm concerned that measurement error is affecting my ratio. How can I make my analysis more robust? Measurement error (from assay imperfections or biological variability) is a major threat to the validity of hormone ratios. Noise in measured levels can be dramatically exaggerated when forming a ratio [2].

- Solution: Log-ratios are strongly recommended. Simulations show that the validity of a raw ratio—its correlation with the underlying true ratio—drops rapidly with realistic levels of measurement error. Log-ratios are significantly more robust to this noise, maintaining validity much more effectively [2] [1]. Ensuring high-quality measurement techniques, such as LC-MS/MS for steroid hormones to minimize cross-reactivity, is also critical [14].
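The notion of validity used here (the correlation between a measured ratio and the underlying true ratio) can be explored with a short simulation. The sketch below is not the simulation from [2]; it assumes lognormal true levels, additive Gaussian assay noise, and a hypothetical detection floor that keeps measured values positive so that logarithms are defined.

```python
import math
import random

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def measure(true_value, noise_sd, floor, rng):
    """Add Gaussian assay noise, then clamp at an assumed detection floor."""
    return max(true_value + rng.gauss(0.0, noise_sd), floor)

rng = random.Random(42)
n = 20000
# Hypothetical true levels; the denominator B is the more skewed hormone.
true_a = [rng.lognormvariate(0.0, 0.5) for _ in range(n)]
true_b = [rng.lognormvariate(0.0, 0.8) for _ in range(n)]
obs_a = [measure(v, 0.25, 0.05, rng) for v in true_a]
obs_b = [measure(v, 0.25, 0.05, rng) for v in true_b]

true_raw = [a / b for a, b in zip(true_a, true_b)]
obs_raw = [a / b for a, b in zip(obs_a, obs_b)]
true_log = [math.log(r) for r in true_raw]
obs_log = [math.log(r) for r in obs_raw]

# Validity: correlation of each measured index with its true counterpart.
validity_raw = pearson(obs_raw, true_raw)
validity_log = pearson(obs_log, true_log)
print(f"validity of raw ratio: {validity_raw:.2f}")
print(f"validity of log-ratio: {validity_log:.2f}")
```

Varying the noise standard deviation or the skew of `true_b` shows how quickly each index degrades under the chosen assumptions.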

4. What is a better alternative if I want to understand the individual contributions of each hormone? While ratios aim to capture a "balance," they can obscure whether an effect is driven by one hormone, both additively, or by their interaction [1] [20].

- Solution: Use separate terms and an interaction effect in your regression model. Instead of a single ratio term, include:

  - The raw or log-transformed value of Hormone A.

  - The raw or log-transformed value of Hormone B.

  - An interaction term between Hormone A and Hormone B (e.g., A * B).

  This approach allows you to disentangle the unique and joint effects of the two hormones and is often more insightful than a simple ratio [1] [20].
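As a sketch of the separate-terms approach, the code below fits an ordinary least-squares model with two log-hormone main effects and their product. The data are simulated with hypothetical coefficients, and the bare-bones `ols` helper is only a stand-in for a statistics package, which would also report standard errors and p-values.

```python
import random

def solve_linear_system(matrix, rhs):
    """Solve a square linear system by Gaussian elimination with pivoting."""
    n = len(rhs)
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    solution = [0.0] * n
    for i in range(n - 1, -1, -1):
        tail = sum(aug[i][j] * solution[j] for j in range(i + 1, n))
        solution[i] = (aug[i][n] - tail) / aug[i][i]
    return solution

def ols(rows, y):
    """Least-squares coefficients from the normal equations (X'X) b = X'y."""
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    return solve_linear_system(xtx, xty)

rng = random.Random(7)
n = 2000
log_a = [rng.gauss(0.0, 0.6) for _ in range(n)]  # simulated ln(Hormone A)
log_b = [rng.gauss(0.0, 0.6) for _ in range(n)]  # simulated ln(Hormone B)

# Hypothetical generating model: intercept, A, B, and A*B interaction.
true_coefs = [1.0, 0.5, -0.8, 0.3]
design = [[1.0, la, lb, la * lb] for la, lb in zip(log_a, log_b)]
outcome = [sum(c * x for c, x in zip(true_coefs, row)) + rng.gauss(0.0, 0.2)
           for row in design]

estimates = ols(design, outcome)
for name, est in zip(["intercept", "ln_A", "ln_B", "ln_A*ln_B"], estimates):
    print(f"{name:>10}: {est:+.3f}")
```

Because the two main effects are estimated separately, the fit can reveal unequal effect sizes that a single ratio term would force to be equal and opposite.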

Decision Workflow and Method Comparison

The following diagram outlines the key decision points for choosing the right analytical approach for your hormone data.

The table below provides a detailed comparison of the three main statistical approaches for analyzing two interrelated hormones.

| Analytical Approach | Key Advantage | Key Disadvantage | Best Used When... |
|---|---|---|---|
| Raw Hormone Ratio (A/B) | Intuitive and simple to calculate [1]. | Lacks robustness to measurement error; results are not symmetric (A/B ≠ B/A); produces skewed distributions [2] [1]. | A specific, biologically-validated raw ratio is the primary variable of interest [1]. |
| Log-Transformed Ratio (ln(A/B)) | Robust to measurement error; creates symmetric, better-behaved data for analysis; results are consistent in magnitude (ln(A/B) = -ln(B/A)) [2] [1] [33]. | Interpretation is less intuitive (a difference in log-ratios); captures a fixed, additive relationship on a log scale [1]. | The research goal is to robustly measure the "balance" between two hormones, especially with assay noise or skewed data [2]. |
| Separate Terms with Interaction | Unambiguously shows the individual contributions of each hormone and their statistical interaction; avoids the interpretational pitfalls of ratios [1] [20]. | Less useful for directly testing the "balance" hypothesis; requires more complex modeling and potentially a larger sample size. | The goal is to understand how each hormone independently and jointly influences the outcome [20]. |

Experimental Protocol: Implementing a Robust Hormone Ratio Analysis

This protocol guides you from data collection to analysis, emphasizing steps to minimize measurement error.

1. Pre-Analysis Phase: Minimizing Measurement Error at the Source

- Assay Selection: Choose a high-specificity method. For steroid hormones (e.g., testosterone, cortisol), LC-MS/MS is often superior to immunoassays due to less cross-reactivity with other molecules [14].

- Assay Verification: Perform an on-site verification of the assay kit, even if it is commercially sourced. Test its performance, including precision and potential matrix effects, with samples that reflect your specific study population [14].

- Sample Handling: Standardize the timing of sample collection and the storage conditions, and minimize freeze-thaw cycles to reduce pre-analytical variability [14].

2. Data Preparation and Transformation

- Screen for Skewness: Check the distributions of both raw hormone values and any calculated raw ratios. Positive skew is common.

- Apply Log Transformation: For each hormone, create a new log-transformed variable: `Hormone_A_log = ln(Hormone_A)`.

- Calculate Log-Ratios: Create the log-ratio variable: `Log_Ratio = Hormone_A_log - Hormone_B_log`. This is your robust measure of hormonal balance.

3. Statistical Analysis and Interpretation

- For Log-Ratios: Use the `Log_Ratio` variable in your correlational or regression models. A one-unit increase in `Log_Ratio` multiplies the original A/B ratio by e (about 2.72).

- For Separate Terms: In a multiple regression model, include `Hormone_A_log` and `Hormone_B_log` as simultaneous predictors. To test for an interaction, also include a product term `Hormone_A_log * Hormone_B_log`.
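The multiplicative interpretation of a log-ratio coefficient can be written out explicitly. The following worked equations assume a simple linear model with hypothetical coefficients $\beta_0$ and $\beta_1$; they are an illustration of the arithmetic, not a model taken from the cited sources.

```latex
\begin{aligned}
y &= \beta_0 + \beta_1 \ln\!\left(\frac{A}{B}\right) + \varepsilon \\[4pt]
\ln\!\left(\frac{A}{B}\right) + 1 &= \ln\!\left(e \cdot \frac{A}{B}\right)
  &&\Rightarrow\ \text{a one-unit increase multiplies } A/B \text{ by } e \approx 2.718 \\[4pt]
\ln\!\left(2 \cdot \frac{A}{B}\right) - \ln\!\left(\frac{A}{B}\right) &= \ln 2 \approx 0.693
  &&\Rightarrow\ \text{doubling } A/B \text{ changes } y \text{ by } \beta_1 \ln 2
\end{aligned}
```

Reporting the expected change in the outcome per doubling of A/B is often easier to communicate than the change per log unit.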

The Scientist's Toolkit: Essential Research Reagent Solutions

| Tool or Reagent | Function in Hormone Research | Key Considerations |
|---|---|---|
| LC-MS/MS (Mass Spectrometry) | Gold-standard method for measuring steroid hormones with high specificity [14]. | Reduces cross-reactivity issues common in immunoassays. Requires significant expertise and infrastructure [14]. |
| High-Specificity Immunoassays | Measure hormone concentrations using antibody-antigen binding. | Verify performance for your sample matrix. Be aware of cross-reactivity, especially for steroid hormones [14]. |
| Stable Isotope-Labeled Internal Standards | Used in LC-MS/MS to correct for sample-specific losses and ion suppression/enhancement [14]. | Critical for achieving high accuracy and precision in mass spectrometry-based assays. |
| Commercial Quality Control (QC) Samples | Independent samples with known ranges used to monitor assay precision and accuracy over time [14]. | Should be different from the kit manufacturer's controls to independently track performance. |

Troubleshooting Guides

Guide 1: Resolving Poor Predictive Performance of Hormone Ratios

Problem: Your raw estradiol-to-progesterone (E/P) ratio shows weak or inconsistent correlations with key biological outcomes, such as conception probability.

Solution: Implement log-transformation of the ratio.

- Action 1: Check for skewness in your raw hormone data, particularly for progesterone. Positively skewed distributions are common and amplify measurement error in ratios [2] [1].

- Action 2: Calculate the natural log (ln) of the E/P ratio instead of using the raw ratio. The log-ratio is computed as ln(E/P) = ln(E) - ln(P) [1].

- Action 3: Re-run your statistical analysis using the log-transformed ratio. Empirical evidence shows that `ln(E/P)` is a superior predictor of conception risk compared to the raw `E/P` ratio [34].

Justification: Raw ratios are strikingly non-robust to measurement error. Noise in the assay is exaggerated when one hormone is divided by another, especially when the denominator has a skewed distribution. Log-transformation mitigates this effect, leading to a more valid and reliable metric [2] [1].

Guide 2: Addressing Data Interpretation Challenges

Problem: The results of your analysis are difficult to interpret or communicate. The relationship between the raw hormone ratio and the outcome is not intuitive.

Solution: Interpret the exponentiated coefficients from your regression model.

- Action 1: When your outcome variable is log-transformed, the exponentiated regression coefficient represents a ratio of geometric means [35].

- Action 2: For a simple model, `exp(coefficient)` can be interpreted as the factor by which the outcome is multiplied for a one-unit change in the predictor. For example, an exponentiated coefficient of 1.12 indicates a 12% increase in the outcome [35].

- Action 3: Use the log-ratio for statistical testing due to its robustness and then back-transform the results for a more intuitive biological explanation.

Justification: The log-transform linearizes the metric and creates a more normal sampling distribution, making it more suitable for standard statistical tests. The results, however, can be translated back to the original scale for clearer interpretation [35] [33].
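The back-transformation described in this guide is a one-line calculation. The sketch below assumes a regression with a log-transformed outcome; the helper name `percent_change` and the example coefficient are hypothetical and chosen to reproduce the 1.12 example above.

```python
import math

def percent_change(coefficient, delta=1.0):
    """Percent change in the original-scale outcome for a `delta`-unit
    change in the predictor, given a coefficient from a model whose
    outcome variable was log-transformed."""
    return (math.exp(coefficient * delta) - 1.0) * 100.0

beta = math.log(1.12)  # hypothetical coefficient with exp(beta) = 1.12
print(round(math.exp(beta), 2))        # 1.12
print(round(percent_change(beta), 1))  # 12.0
```

The same helper applies to confidence limits: exponentiating the lower and upper bounds of the coefficient gives an interval on the multiplicative scale.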

Frequently Asked Questions (FAQs)

FAQ 1: Why should I use a log-transformed hormone ratio instead of a raw ratio?

You should use a log-transformed ratio primarily to overcome a striking lack of robustness to measurement error inherent in raw ratios [2] [1]. Hormone levels are measured with noise from assays and biological variation. In a raw ratio, this noise is dramatically amplified, especially when the denominator's distribution is positively skewed (a common feature of hormone data). This amplification rapidly reduces the validity of the ratio—its correlation with the underlying true biological value. Log-transformed ratios (e.g., ln[E/P]) are much more robust to this noise, maintaining higher and more stable validity across samples [2] [1]. Furthermore, log-transformed ratios have more symmetrical, normal-like distributions, which is desirable for many statistical analyses [1] [33].

FAQ 2: My colleague insists that the raw E/P ratio is more biologically meaningful. How do I respond?

You can respond with empirical evidence. A 2022 study directly compared hormonal predictors of conception risk and found that the log-transformed E/P ratio was a relatively good predictor, whereas the raw E/P ratio was a relatively poor predictor [34]. While the theoretical "balance" of hormones might be conceptualized as a ratio, the practical application of a raw ratio in statistical models is severely hampered by its statistical properties. The log-ratio more accurately captures the underlying hormonal state that predicts real-world outcomes like conception.

FAQ 3: Are there any other alternatives to using hormone ratios?

Yes, a commonly recommended alternative is to include both hormones as separate predictors in your statistical model.

- Approach: In a regression model, include the main effects of log-transformed estradiol (ln[E]) and log-transformed progesterone (ln[P]), as well as their interaction term (ln[E] * ln[P]) [1].

- Advantage: This approach does not assume that the two hormones have equal but opposite effects, allowing you to disentangle the unique contribution of each hormone and test for a true interactive effect [1].

- Consideration: This method requires more data and can be less powerful for detecting the specific "balance" effect that a ratio is designed to capture. Using the log-ratio and the two-hormone model as complementary analyses is a robust strategy.
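The relationship between the two approaches can be made explicit by writing the log-ratio model as a constrained case of the two-hormone model. The coefficient symbols below are notation introduced for illustration.

```latex
\begin{aligned}
\text{Two-hormone model:}\quad
y &= \beta_0 + \beta_E \ln E + \beta_P \ln P + \beta_{EP}\,(\ln E)(\ln P) + \varepsilon \\[4pt]
\text{Log-ratio model:}\quad
y &= \beta_0 + \beta \ln\!\left(\frac{E}{P}\right) + \varepsilon
   = \beta_0 + \beta \ln E - \beta \ln P + \varepsilon \\[4pt]
\text{Constraints:}\quad
\beta_E &= \beta, \qquad \beta_P = -\beta, \qquad \beta_{EP} = 0
\end{aligned}
```

The log-ratio model spends one parameter where the two-hormone model spends three, which is why it can be more powerful when the equal-and-opposite constraint roughly holds and misleading when it does not.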

Data Presentation: Raw vs. Log-Transformed Ratios

The following table summarizes the core differences between using raw and log-transformed hormone ratios, based on simulation and empirical studies [2] [34] [1].

| Feature | Raw Hormone Ratio (E/P) | Log-Transformed Ratio (ln[E/P]) |
|---|---|---|
| Robustness to Measurement Error | Low; validity drops rapidly with noise [2] [1]. | High; validity remains more stable [2] [1]. |
| Data Distribution | Often highly skewed and leptokurtic [1]. | More symmetrical, approximate normality [1] [33]. |
| Dependence on Skewed Denominator | High; small values in denominator create extreme outliers [2]. | Low; effect of skewed denominator is mitigated. |
| Interpretation of Ratio A/B vs. B/A | Not equivalent; A/B ≠ B/A. Choice of numerator is arbitrary [1]. | Equivalent; ln(A/B) = -ln(B/A). Choice of numerator only changes the sign [1]. |
| Predictive Power for Conception Risk | Relatively poor predictor [34]. | Relatively good predictor [34]. |
| Recommended Use | Not recommended for statistical modeling as a primary metric. | Recommended for statistical testing and modeling. |

Experimental Protocol: Implementing Log-Transformed E/P Ratios

This protocol details the steps for calculating and analyzing log-transformed estradiol/progesterone ratios.

1. Sample Collection & Hormone Assay:

- Collect biological samples (e.g., saliva, blood) according to a standardized schedule aligned with the ovarian cycle phases (e.g., follicular, peri-ovulatory, luteal) [36].

- Assay samples for estradiol (E) and progesterone (P) concentrations using a validated method (e.g., ELISA, mass spectrometry). Record raw concentration values.

2. Data Preprocessing:

- Screen for Outliers: Check raw hormone distributions for extreme outliers that may indicate assay errors.

- Log-Transform Hormone Values: Calculate the natural logarithm (ln) of the raw estradiol and progesterone values to create two new variables: `ln_E` and `ln_P`.

  - Note: This step helps normalize the distributions of the individual hormones before ratio calculation [1].

3. Calculate the Log-Transformed Ratio:

- Compute the log-transformed ratio by subtracting `ln_P` from `ln_E`: `ln_ratio = ln_E - ln_P`
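Steps 2 and 3 can be sketched in a few lines of code. The record layout (dictionaries with keys `"E"` and `"P"`), the function name `add_log_ratio`, and the sample values are hypothetical; the only logic taken from the protocol is `ln_ratio = ln_E - ln_P`.

```python
import math

def add_log_ratio(records):
    """Return copies of the records with ln_E, ln_P and ln_ratio added.

    Each record is a dict holding raw concentrations under the assumed
    keys "E" and "P". Non-positive values cannot be log-transformed, so
    they raise an error instead of being silently dropped.
    """
    out = []
    for rec in records:
        e, p = rec["E"], rec["P"]
        if e <= 0 or p <= 0:
            raise ValueError(f"non-positive hormone value in {rec!r}")
        ln_e, ln_p = math.log(e), math.log(p)
        out.append({**rec, "ln_E": ln_e, "ln_P": ln_p, "ln_ratio": ln_e - ln_p})
    return out

# Illustrative values only; units are whatever the assay reports.
samples = [{"id": 1, "E": 4.0, "P": 2.0}, {"id": 2, "E": 3.0, "P": 12.0}]
rows = add_log_ratio(samples)
for row in rows:
    print(row["id"], round(row["ln_ratio"], 3))
# prints:
# 1 0.693
# 2 -1.386
```

Raising on non-positive values is a deliberate choice in this sketch: values at or below zero usually signal an assay or data-entry problem that should be resolved during outlier screening rather than hidden by the transformation.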

4. Statistical Analysis:

- Use