Beyond the Lab: Unlocking Academic Impact with Google Search Console Keyword Insights


Abstract

This guide provides researchers, scientists, and drug development professionals with a strategic framework for using Google Search Console (GSC) as a powerful tool for academic keyword research. It moves beyond basic setup to demonstrate how to uncover the precise search terms used by peers and practitioners, identify content gaps in your field, optimize existing publications for greater visibility, and validate your findings against other data sources. By translating GSC data into actionable insights, you can significantly increase the discoverability and impact of your research in an increasingly digital landscape.

Laying the Groundwork: Understanding Google Search Console for Academic Visibility

Why GSC is a Non-Negotiable Tool for the Modern Researcher

In the competitive landscape of academic research, particularly in fields like drug development, visibility is paramount. Google Search Console (GSC) provides an unparalleled, data-driven foundation for understanding and optimizing how a research group's digital output is discovered, offering direct insights into the search behavior of the scientific community [1].

Quantitative Data Tables for Research Performance Analysis

The performance data within GSC can be segmented to track specific aspects of a research portfolio's online presence. The following tables summarize key quantitative metrics.

Table 1: Query Performance Analysis. This analysis helps identify which search terms lead others to your work [1] [2].

| Query Type | Primary Use Case | Key Metric | Research Insight |

|---|---|---|---|

| Branded Queries [3] | Track existing reputation | Click-Through Rate (CTR) | Measures recognition of your lab, PI, or key methodologies. |

| Non-Branded Queries [3] | Discover new audiences | Impressions | Reveals organic growth and how new users find your content. |

| Quick-Win Keywords [2] | Prioritize optimization efforts | Average Position (11-20) | Identifies terms on the cusp of page one for rapid ranking gains. |

| Long-Tail Keywords | Target specific, high-intent queries | Clicks | Uncovers highly specific queries that signal deep research interest. |

Table 2: Page Performance & Content Gap Analysis. This assesses which published content (e.g., papers, protocols, lab websites) is most effective at attracting traffic [4] [2].

| Page URL | Clicks | Impressions | Average Position | Top Query | Research Implication |

|---|---|---|---|---|---|

| /publications/paper-2025 | 150 | 4,500 | 12.5 | "mTOR inhibitor resistance" | High interest, but ranking can be improved; a content gap may exist. |

| /protocols/elisa-assay | 45 | 800 | 8.2 | "elisa protocol step-by-step" | Strong performer for a methodology; consider expanding into video. |

| /research/drug-target | 12 | 250 | 34.0 | "new kinase target 2025" | Low visibility; page may not adequately cover the topic or its entities. |

Experimental Protocols for Academic Keyword Research

The following protocols provide a reproducible methodology for leveraging GSC in a research setting.

Protocol 1: Foundational Brand & Non-Brand Traffic Analysis

Objective: To segment and analyze website traffic to measure brand recognition versus organic discovery [3].

Materials:

- Research Reagent Solutions:

- GSC Performance Report: The primary tool for accessing search analytics data [1].

- Branded Queries Filter: An AI-assisted filter within GSC that automatically classifies queries as branded or non-branded [3].

- Data Export Functionality: For downloading data to CSV or Google Sheets for further analysis [5].

Methodology:

- Access: Navigate to the "Performance > Search results" report in GSC [2].

- Segment: Apply the "Branded queries" filter. Observe the breakdown of clicks and impressions [3].

- Compare: Switch the filter to "Non-branded" queries. Note the volume and CTR differences.

- Analyze: A high ratio of branded to non-branded clicks indicates strong brand recognition but potentially limited reach. A growing non-branded segment signals successful content marketing to new audiences [3].

- Document: Use the "Annotations" feature to mark the date of major publications or conference presentations, allowing for retrospective correlation with traffic spikes [6].
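The comparison in the Analyze step can be made explicit with a few lines of Python. This is a minimal sketch, assuming the click and impression totals for each filter have been copied from the report by hand; the function name `segment_summary` and the sample figures are hypothetical and not part of GSC.

```python
def segment_summary(branded, non_branded):
    """Compare branded and non-branded totals copied from the GSC report.

    Each argument is a dict with 'clicks' and 'impressions' counts.
    """
    def ctr(segment):
        # CTR as a percentage; 0.0 when there were no impressions.
        if not segment["impressions"]:
            return 0.0
        return 100.0 * segment["clicks"] / segment["impressions"]

    total_clicks = branded["clicks"] + non_branded["clicks"]
    return {
        "branded_ctr": ctr(branded),
        "non_branded_ctr": ctr(non_branded),
        # Share of all clicks that came from branded queries.
        "branded_click_share": branded["clicks"] / total_clicks if total_clicks else 0.0,
    }


# Hypothetical totals, for illustration only.
summary = segment_summary(
    {"clicks": 300, "impressions": 1500},
    {"clicks": 200, "impressions": 10000},
)
print(summary)
```

A branded click share well above one half would match the "strong recognition, limited reach" pattern described above; tracking the same figure over time shows whether the non-branded segment is growing.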

Protocol 2: Identification of "Quick-Win" Keyword Opportunities

Objective: To systematically identify search queries for which your pages rank just outside the first page of results, allowing for efficient optimization [2].

Materials:

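- Research Reagent Solutions:

- GSC Performance Report: The source of the query-level position and impression data used below.

- Position Filter: Restricts the queries table to a chosen range of average positions.

- Data Export Functionality: For downloading the filtered data for sorting and prioritization.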

Methodology:

- Filter for Position: In the "Performance > Search results" report, scroll to the queries table. Add a filter for "Position" [2].

- Set Range: Filter for queries where the average position is between 11 and 20. This range corresponds to the second page of results, just outside page one [2].

- Export Data: Export this filtered dataset for analysis.

- Prioritize: Sort the exported data by "Impressions" to identify the hidden opportunities with the largest potential audience.

- Optimize: For each high-priority query, inspect the corresponding page. Intentionally incorporate the target query and related entities into the page's title, headings, and body text to strengthen relevance.
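The filter-and-prioritize steps above can also be applied to exported data offline. The sketch below assumes each exported row has already been loaded into a dict with `query`, `impressions`, and `position` keys (hypothetical names); the first three rows reuse figures from Table 2 and the fourth is invented for illustration.

```python
def quick_wins(rows, low=11.0, high=20.0):
    """Return rows whose average position lies in [low, high], largest audience first."""
    hits = [r for r in rows if low <= r["position"] <= high]
    return sorted(hits, key=lambda r: r["impressions"], reverse=True)


rows = [
    {"query": "mTOR inhibitor resistance", "impressions": 4500, "position": 12.5},
    {"query": "elisa protocol step-by-step", "impressions": 800, "position": 8.2},
    {"query": "new kinase target 2025", "impressions": 250, "position": 34.0},
    {"query": "kinase assay troubleshooting", "impressions": 900, "position": 18.1},  # invented row
]

for row in quick_wins(rows):
    print(row["query"], row["impressions"], row["position"])
```

Only the two queries with an average position between 11 and 20 are returned, ordered by impressions, which mirrors the Prioritize step.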

Protocol 3: Advanced Data Extraction via the GSC API

Objective: To overcome the 1,000-row data limitation of the GSC web interface and conduct comprehensive, large-scale keyword analysis [4].

Materials:

- Research Reagent Solutions:

- Google Search Console API: A free programmatic interface with a much higher data limit (up to 50,000 rows per day) [4].

- Search Analytics for Sheets: A freemium Google Sheets add-on that provides a user-friendly interface for the GSC API without requiring coding [4].

- Google Sheets: The platform for data manipulation and analysis.

Methodology:

- Install Tool: Install the "Search Analytics for Sheets" add-on from the Google Workspace Marketplace [4].

- Configure Query: In a new Google Sheet, open the add-on. Select your verified site, date range (e.g., 16 months), and search type (Web) [4].

- Select Dimensions: In the "Group by" section, select "Query" and "Page" to see which queries lead to which pages. For deeper analysis, add "Country" and "Device" [4].

- Retrieve Data: Run the query. The add-on will populate the sheet with up to 10,000 rows of data (free tier), bypassing the web interface's limitation [4].

- Analyze: Use spreadsheet functions to sort, filter, and pivot this enriched dataset to uncover long-tail keywords and content gaps across your entire digital portfolio.
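For groups that prefer to call the API directly rather than through the add-on, the paging logic can be sketched as follows. This is an illustration under stated assumptions, not the add-on's implementation: `build_request` and `fetch_all` are hypothetical helper names, and `run_query` is any callable you supply that sends a request body to the Search Analytics query endpoint and returns the parsed response. The body fields (`startDate`, `endDate`, `dimensions`, `type`, `rowLimit`, `startRow`) follow the Search Console API's query method, which accepts at most 25,000 rows per request.

```python
def build_request(start_date, end_date, start_row=0, row_limit=25000):
    """Build a Search Analytics query body grouped by query and page."""
    return {
        "startDate": start_date,      # "YYYY-MM-DD"
        "endDate": end_date,          # "YYYY-MM-DD"
        "dimensions": ["query", "page"],
        "type": "web",
        "rowLimit": row_limit,        # the API allows at most 25,000 per request
        "startRow": start_row,        # zero-based offset used for paging
    }


def fetch_all(run_query, start_date, end_date, row_limit=25000):
    """Page through results until a page comes back short or empty.

    run_query: callable taking a request body and returning the parsed
    JSON response as a dict (the 'rows' key is absent when no data is left).
    """
    rows, start_row = [], 0
    while True:
        body = build_request(start_date, end_date, start_row, row_limit)
        page = run_query(body).get("rows", [])
        rows.extend(page)
        if len(page) < row_limit:
            return rows
        start_row += row_limit
```

With the google-api-python-client package, `run_query` could wrap `service.searchanalytics().query(siteUrl=..., body=body).execute()`, where `service` comes from `build("searchconsole", "v1", credentials=...)`; authentication is deliberately left out of this sketch.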

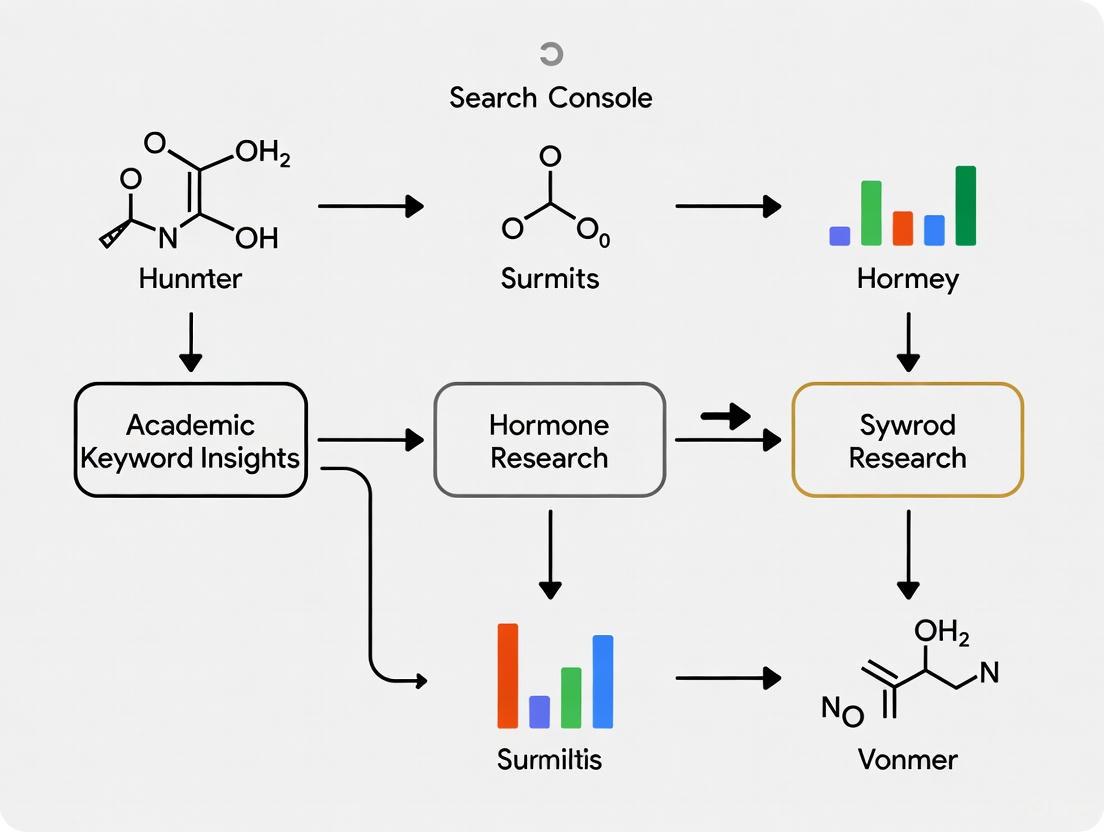

Visualization of Research Workflows

The following diagrams, generated with Graphviz, illustrate the logical workflows for the experimental protocols.

Diagram 1: Brand versus non-brand traffic analysis protocol.

Diagram 2: Quick-win keyword identification and optimization workflow.

Diagram 3: Advanced data extraction via the GSC API for comprehensive analysis.

Table of Contents

- Introduction

- Quantitative Summary of Core Metrics

- Experimental Protocol for Data Extraction

- Data Analysis Workflow

- The Scientist's Toolkit

- Advanced Analysis & Methodological Notes

For researchers, scientists, and drug development professionals, visibility in academic search engines is a critical determinant of impact. Google Search Console (GSC) serves as a primary instrument for measuring this visibility, providing raw data on how a scholarly website appears in Google Search results. This document frames the core metrics of GSC—clicks, impressions, Click-Through Rate (CTR), and Average Position—within a rigorous, academic methodology. Proper interpretation of these metrics, especially in light of recent significant changes to Google's reporting, enables the optimization of academic content to ensure key research outputs are discovered by the intended audience [7] [8] [9]. The protocols herein are designed to integrate GSC data analysis into the scholarly research workflow.

The following table defines the fundamental GSC metrics, their quantitative formulas, and their significance in an academic research context.

Table 1: Core Google Search Console Metrics and Formulae

| Metric | Definition | Formula | Significance in Academic Research |

|---|---|---|---|

| Clicks | The number of times users clicked on a URL from Google Search results to reach your site. [10] | - (Count) | Measures actual engagement and traffic acquisition. Indicates successful translation of search interest into site visitation. [8] [9] |

| Impressions | The number of times a URL appeared in search results viewed by a user, even if below the fold. [10] | - (Count) | Quantifies raw visibility and the potential audience for research content. Post-September 2025, this reflects more accurate, human-centric visibility. [7] [8] |

| Click-Through Rate (CTR) | The percentage of impressions that resulted in a click. [10] | (Clicks / Impressions) * 100 | Evaluates the effectiveness of a search result snippet (title and meta-description) in enticing users to click. [10] |

| Position | The average highest position a site held for a query or page. [10] | - (Average) | Tracks ranking performance. A lower number is better (e.g., position 1 is the top result). It is now calculated only on visible positions (1-20). [8] [10] |
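The CTR formula in the table is simple enough to check by hand, but it helps to compute it consistently when combining several exported rows. The sketch below is an illustration with hypothetical function names; the impression-weighted mean is one reasonable way to combine per-row average positions and is an assumption here, not a documented GSC formula.

```python
def ctr_percent(clicks, impressions):
    """CTR as defined in the table: (Clicks / Impressions) * 100."""
    return 100.0 * clicks / impressions if impressions else 0.0


def weighted_position(rows):
    """Impression-weighted mean of per-row average positions (None if no impressions)."""
    total = sum(r["impressions"] for r in rows)
    if not total:
        return None
    return sum(r["position"] * r["impressions"] for r in rows) / total


# Illustrative figures only.
print(round(ctr_percent(150, 4500), 2))
print(weighted_position([
    {"impressions": 100, "position": 5.0},
    {"impressions": 300, "position": 9.0},
]))
```

Weighting by impressions keeps a rarely shown query from pulling the combined position as strongly as a frequently shown one.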

Experimental Protocol for Data Extraction and Baseline Establishment

Protocol 1: Establishing a Post-September 2025 GSC Baseline

Purpose: To account for Google's discontinuation of the &num=100 parameter and establish a reliable baseline for future trend analysis [7] [8].

Background: In mid-September 2025, Google ceased support for a parameter that allowed automated crawlers to retrieve 100 search results per query. This had artificially inflated impression counts and worsened average position metrics by including data from positions beyond what human searchers typically view. The removal of this "crawler noise" resulted in a sudden, widespread drop in impressions and an improvement in average position, providing a more accurate reflection of genuine search visibility [7] [9].

Materials:

- Access to a verified property in Google Search Console.

- Data annotation system (e.g., spreadsheet, analytics platform).

Methodology:

- Data Annotation: In all reports and datasets, annotate the change with the following or similar text: "Data reported in Google Search Console from 9/13/2025 onwards reflects a change in Google's measurement methodology. The &num=100 parameter was discontinued, removing impressions generated by third-party crawlers and providing a more accurate baseline of human search activity" [7].

- Baseline Definition: Define the two-week period commencing September 13, 2025, as "Baseline Week 0" for all subsequent performance comparisons.

- Historical Data Treatment: For long-term trend analysis spanning the change period (Feb 1 - Sept 12, 2025), consider using a prior-year comparison or applying a normalization factor, as direct comparison is invalid [7].

- Validation: Confirm that click data remained stable throughout the transition period, validating that actual user behavior was unaffected [8] [9].
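The baseline split and the click-stability check can be scripted once daily data has been exported. The following sketch assumes daily rows with `date`, `clicks`, and `impressions` fields; the helper names and the sample figures are hypothetical, and the change date is taken from the protocol above.

```python
from datetime import date

CHANGE_DATE = date(2025, 9, 13)  # first day of the new methodology, per the protocol


def split_by_baseline(daily_rows):
    """Split daily rows into (before, after) the methodology change."""
    before = [r for r in daily_rows if r["date"] < CHANGE_DATE]
    after = [r for r in daily_rows if r["date"] >= CHANGE_DATE]
    return before, after


def mean(values):
    values = list(values)
    return sum(values) / len(values) if values else 0.0


def relative_change(before, after, metric):
    """Fractional change in the daily mean of a metric across the change date."""
    b = mean(r[metric] for r in before)
    a = mean(r[metric] for r in after)
    return (a - b) / b if b else None


# Invented daily figures, for illustration only.
daily = [
    {"date": date(2025, 9, 10), "clicks": 100, "impressions": 5000},
    {"date": date(2025, 9, 11), "clicks": 104, "impressions": 5200},
    {"date": date(2025, 9, 13), "clicks": 101, "impressions": 2600},
    {"date": date(2025, 9, 14), "clicks": 103, "impressions": 2500},
]
before, after = split_by_baseline(daily)
print("clicks:", relative_change(before, after, "clicks"))
print("impressions:", relative_change(before, after, "impressions"))
```

A near-zero change in clicks alongside a large drop in impressions is the pattern the Validation step looks for.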

Protocol 2: Systematic Extraction of Performance Data

Purpose: To methodically extract key performance data from the GSC Search Results report.

Materials:

- Google Search Console access.

- Data export tool (e.g., GSC UI, GSC API, third-party connectors like SE Ranking for large datasets >1000 rows) [10].

Methodology:

- Navigate: Within GSC, select the relevant property and open the Performance report ("Performance > Search results").

- Define Parameters:
  - Date Range: Set a custom range (data is available for the past 16 months) [10].
  - Tab Selection: Analyze data by Query, Page, Country, and Device tabs to isolate specific variables.
  - Filters: Use the "Add filter" button to drill down by specific queries, pages, countries, devices, or search type (Web, Image, Video) [10].

- Data Export:
  - Standard Export: Use the "Export" function within the GSC UI to download data for the current view (limited to 1,000 rows) as a CSV, Google Sheet, or Excel file [10].
  - API Export (High-Volume): For sites with large data sets, use the GSC API to programmatically extract far more than 1,000 rows (up to 25,000 per request), which can be managed via a Google Sheets add-on [10].

Data Analysis Workflow

The following diagram maps the logical workflow from data extraction to academic insight, incorporating the critical methodological change.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Digital Materials for GSC Analysis

| Research Reagent (Tool) | Function / Explanation |

|---|---|

| Google Search Console | The primary source of truth. Provides validated data directly from Google on search performance, indexing status, and technical site health. [10] |

| GSC Performance Report | The core interface for analyzing clicks, impressions, CTR, and position. Allows for data segmentation and is the source for data extraction. [10] |

| URL Inspection Tool | A diagnostic reagent. Provides a deep, page-level analysis of how Google crawls, indexes, and serves a specific URL from a scholarly site. [10] |

| Search Console Insights | A synthesized report. Offers an accessible overview of top content, trending queries, and how audiences discover content across Search and Discover. [11] |

| GSC Application Programming Interface (API) | An automation reagent. Allows for the programmatic extraction of large volumes of GSC data for integration into custom dashboards and advanced analytical models. [10] |

| Data Annotation Flag | A methodological control. A note appended to datasets and reports explaining the September 2025 methodology change, ensuring accurate historical comparison. [7] |

| Third-Party Data Connector (e.g., SE Ranking) | A filtration/purification tool. Used to bypass GSC's 1000-row export limit, enabling comprehensive data analysis for large academic portals. [10] |

Advanced Analysis and Methodological Notes

- Focus on Positions 1-20: The September 2025 update means GSC now primarily tracks visibility within the first 20 search positions. Rankings beyond this range are generally no longer captured, making performance within this "visible zone" the critical focus [7] [8].

- Metric Reliability Hierarchy: For assessing true impact, prioritize metrics in this order: 1) Clicks (direct measure of engagement), 2) CTR (measure of snippet effectiveness), 3) Impressions (measure of potential reach), and 4) Average Position (contextual ranking data) [8] [9]. Clicks remain the most reliable north-star metric [8].

- The "Alligator Effect" Resolution: The phenomenon of rising impressions with flat clicks (resembling an open alligator's mouth) observed from February to September 2025 has been resolved. It is now understood to have been caused by automated crawler activity. The subsequent closure of the "mouth" post-September 12 provides a more accurate baseline for analysis [7] [8].

Setting Up and Verifying Your Academic Profile or Lab Website in GSC

For researchers, scientists, and drug development professionals, an online presence is crucial for disseminating findings, attracting collaboration, and enhancing the impact of their work. Google Search Console (GSC) is an indispensable, free tool that provides unparalleled insights into how an academic profile or lab website performs in Google Search. Properly setting up and verifying your site in GSC is the foundational step in leveraging its data. This process grants you access to sensitive performance metrics and enables actions that affect your site's presence on Google [12]. Within the broader thesis of leveraging GSC for academic keyword research, verification unlocks the data necessary to understand which search queries lead peers to your publications, what research topics are garnering the most attention, and how your site's visibility evolves over time. This data is critical for informing not only your online strategy but also for understanding the reach and impact of your scientific work.

Verification Methods: Protocol and Selection Criteria

Ownership verification in Google Search Console is a mandatory security step to ensure that only legitimate site owners can access sensitive search performance data and manage site settings [12]. A verified owner has the highest level of permissions. For an academic lab, maintaining verification is critical for continuous data collection and monitoring. The following protocol details the available verification methods.

Comparative Analysis of Verification Methods

The choice of verification method depends on your technical access to the website. The table below summarizes the primary methods, their requirements, and their suitability for common academic website platforms.

Table 1: Comparison of Google Search Console Verification Methods

| Verification Method | Technical Requirements | Best For Academic Sites Hosted On | Protocol Notes |

|---|---|---|---|

| HTML File Upload [12] | Upload a unique HTML file to the root directory of your web server. | Custom hosting, departmental servers. | High reliability; requires direct file system access. |

| HTML Tag [12] | Add a unique <meta> tag to the <head> section of your site's homepage. | WordPress (with theme access), custom HTML sites. | Non-intrusive; tag must remain in place permanently. |

| Google Analytics [12] [13] | Use an existing Google Analytics tracking code on your site that you have "Edit" permissions for. | Sites already using Google Analytics. | Streamlined if GA is already configured; requires no code changes. |

| Google Tag Manager [12] [13] | Use an existing Google Tag Manager container snippet on your site that you have "View, Edit, and Manage" permissions for. | Sites managed via GTM. | Simplifies management of multiple scripts and tags. |

| Domain Name Provider [12] | Add a DNS TXT record to your domain's configuration. | University-owned domains, custom domains. | Verifies an entire domain (all subdomains and protocols); most complex but comprehensive. |
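Because the HTML Tag method fails silently if the tag ends up outside `<head>` or is stripped by a theme update, it can be useful to check the homepage source before clicking "Verify". The sketch below uses only Python's standard `html.parser`; the class and function names are hypothetical, and the sample HTML and token are placeholders rather than real verification values.

```python
from html.parser import HTMLParser


class VerificationTagFinder(HTMLParser):
    """Collect google-site-verification meta tag contents found inside <head>."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.tokens = []

    def handle_starttag(self, tag, attrs):
        if tag == "head":
            self.in_head = True
        elif tag == "meta" and self.in_head:
            attributes = dict(attrs)
            if (attributes.get("name") or "").lower() == "google-site-verification":
                self.tokens.append(attributes.get("content"))

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def find_verification_tokens(html):
    """Return the content values of verification meta tags located in <head>."""
    finder = VerificationTagFinder()
    finder.feed(html)
    return finder.tokens


# Placeholder page source; in practice, paste or download your homepage HTML.
sample = (
    "<html><head><title>Lab</title>"
    '<meta name="google-site-verification" content="EXAMPLE_TOKEN">'
    "</head><body><p>Welcome</p></body></html>"
)
print(find_verification_tokens(sample))
```

An empty list suggests the tag is missing or misplaced, which is worth fixing before attempting verification.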

Detailed Experimental Protocol: HTML File Upload Verification

The HTML file upload method is a reliable and straightforward verification technique. The following is a step-by-step protocol.

Protocol 1: Site Verification via HTML File Upload

Research Reagent Solutions:

- Reagent 1: Google Search Console Account: A Google account (for example, Gmail or an institutional Google Workspace account) is required to access the service.

- Reagent 2: Site Access Credentials: FTP, SFTP, or administrative access to the hosting control panel (e.g., cPanel) for the academic website.

- Reagent 3: File Manager/FTP Client: Software such as FileZilla or the hosting provider's web-based file manager.

Methodology:

- Property Creation: Log into Google Search Console. Either add a new property by clicking "Add Property" or select an unverified property. Use the URL-prefix property type (e.g., https://yourlab.university.edu) [12].

- Method Selection: On the verification screen, select "HTML file upload" as your verification method [12].

- File Download: Download the unique, user-specific HTML verification file provided by Google. Do not modify the file's name or content [12].

- File Transfer: Using your file access credentials, upload the downloaded HTML file to the root directory of your website. The root directory is the top-level folder that contains your site's primary files (e.g., index.html).

- Validation Test: Open an incognito browser window and navigate to the full URL of the uploaded file (e.g., https://yourlab.university.edu/google-site-verification-XXXXXXXXXXXX.html). Confirm that a blank page loads without any authentication required [12].

- Verification: Return to the Search Console verification page and click "Verify."

- Data Collection Onset: Note that data collection for your property begins as soon as it is added, but it typically takes a few days for data to become visible in the reports after successful verification [12].


Troubleshooting:

- File Not Found: Ensure the file is in the root directory and the URL is correct. Test the URL in an incognito window [12].

- Incorrect Content: The file must not be altered. Re-download and re-upload the original file from Search Console [12].

- Redirects: Search Console will not follow redirects to a different domain. If your site redirects all traffic (e.g., from http to https), this is supported, but cross-domain redirects will cause verification to fail [12].

Post-Verification: Core GSC Reports for Academic Keyword Insights

Once verified, the Performance Report becomes the primary tool for your academic keyword research. It provides data on clicks, impressions, click-through rate (CTR), and average position for your property [14] [15].

The Performance Report and Branded Query Filter

A powerful new feature for keyword analysis is the branded queries filter. This AI-assisted tool automatically differentiates between:

- Branded queries: Searches that include your lab, PI, or key publication names, including variations and misspellings (e.g., "Smith lab autophagy," "J Biol Chem 2025 kinase inhibitor") [3].

- Non-branded queries: All other searches (e.g., "mechanisms of protein degradation," "new cancer drug targets 2025") [3].

This segmentation is vital for academic research. Branded queries indicate existing recognition and the direct seeking of your work, reflecting your established reputation. Non-branded queries represent organic growth and discovery, showing how new audiences find your content without prior intent, which is crucial for understanding your field's broader interest landscape [3] [14]. This filter is available within the Search results Performance report and as an Insights card, but it is only available for top-level properties with sufficient query volume [3].
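Where the built-in filter is not available for a property, a rough approximation can be made on exported queries with a regular expression. This is only a sketch and not how the AI-assisted filter works: the pattern below targets an imaginary "Smith lab" (including one misspelling) and must be replaced with your own lab, PI, and publication names.

```python
import re

# Hypothetical brand pattern for an imaginary "Smith lab"; adapt to your own names.
BRAND_PATTERN = re.compile(r"\bsm[iy]th\s*lab\b", re.IGNORECASE)


def classify(query):
    """Label a query 'branded' if it matches the brand pattern, else 'non-branded'."""
    return "branded" if BRAND_PATTERN.search(query) else "non-branded"


for q in ["Smith lab autophagy", "smythlab protocols", "mechanisms of protein degradation"]:
    print(q, "->", classify(q))
```

A hand-written pattern will miss variations the built-in filter would catch, so treat the resulting split as an estimate.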

Detailed Experimental Protocol: Analyzing Keyword Performance

Protocol 2: Performance Analysis for Academic Keyword Discovery

Research Reagent Solutions:

- Reagent 1: Verified GSC Property: A successfully verified lab website or academic profile.

- Reagent 2: Data Segmentation Tools: The built-in GSC filters for Queries, Pages, Countries, and Devices.

Methodology:

- Report Navigation: In GSC, navigate to the "Performance > Search results" report.

- Data Extraction and Baseline Measurement:
  - Set the date range to the last 12 months for a comprehensive view.
  - Observe the total clicks, impressions, CTR, and average position from the chart.
  - Note: The chart totals include data from all queries, while the table below may show a lower sum because it omits anonymized queries to protect user privacy [14] [15].

- Branded vs. Non-Branded Segmentation:
  - Click the "+ New" button and select the "Branded queries" filter.
  - Apply the "Branded" filter and record the performance metrics (clicks, impressions).
  - Change the filter to "Non-branded" and record the same metrics.
  - Analysis: Calculate the ratio of branded to non-branded traffic. A high branded ratio suggests strong name recognition, while a high non-branded ratio indicates effective content discoverability for general topics [3].

- Top Query and Page Identification:
  - Click the "Queries" tab to see the top 1,000 queries that trigger impressions for your site [14].
  - Click the "Pages" tab to see which specific pages (e.g., a publication page, a methodology description) receive the most traffic.
  - Analysis: Cross-reference the top queries with the top pages. Identify which content satisfies which user intents.

- High-Impression, Low-CTR Analysis:
  - In the "Queries" tab, sort by "Impressions" (high to low) and then observe the CTR column.
  - Identify queries with a high number of impressions but a low CTR.
  - Analysis: This indicates your page is shown often for this query but not clicked. This is an opportunity to optimize the page's title tag and meta description to be more compelling and relevant to the query [14].
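The high-impression, low-CTR check is easy to automate on exported query data. In the sketch below, the thresholds (1,000 impressions, 2% CTR) are arbitrary illustrations rather than recommended values, and the function name, field names, and sample rows are hypothetical.

```python
def low_ctr_opportunities(rows, min_impressions=1000, max_ctr=2.0):
    """Queries shown often but rarely clicked, largest audience first.

    CTR is computed as a percentage; both thresholds are illustrative.
    """
    selected = []
    for row in rows:
        # Skip low-visibility rows (and avoid dividing by zero).
        if row["impressions"] < max(min_impressions, 1):
            continue
        ctr = 100.0 * row["clicks"] / row["impressions"]
        if ctr <= max_ctr:
            selected.append({**row, "ctr": round(ctr, 2)})
    return sorted(selected, key=lambda r: r["impressions"], reverse=True)


# Invented rows, for illustration only.
queries = [
    {"query": "pd-1 inhibitor clinical trial results", "clicks": 60, "impressions": 6000},
    {"query": "mitophagy assay protocol", "clicks": 150, "impressions": 2000},
    {"query": "protein degradation mechanisms", "clicks": 15, "impressions": 1500},
    {"query": "rare query", "clicks": 0, "impressions": 40},
]
for row in low_ctr_opportunities(queries):
    print(row["query"], row["impressions"], row["ctr"])
```

The queries returned are candidates for title tag and meta description revisions on the pages that rank for them.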

Table 2: Key Metrics in the GSC Performance Report [14] [15]

| Metric | Definition | Interpretation for Academic Research |

|---|---|---|

| Clicks | Count of user clicks from Google Search results to your site. | Direct measure of traffic driven by specific research topics or publications. |

| Impressions | Count of times your property appeared in a search result. | Indicator of the visibility and potential reach of your research content. |

| CTR (Click-Through Rate) | (Clicks / Impressions) * 100. The percentage of impressions that resulted in a click. | Measures how appealing your search snippet is for a given query. |

| Average Position | The average topmost position your site appeared in for searches. | Tracks ranking performance for key academic terms; aim for an average position of 10 or better (a value of 10 or below), which corresponds to page one [14]. |

Data Integrity and Limitations in GSC Reporting

When using GSC for research, it is critical to understand its data processing to draw accurate conclusions. Two primary limitations affect the reported data:

- Privacy Filtering: Queries performed by a very small number of users (anonymized queries) are omitted from the detailed query table to protect user privacy. These clicks are still included in the chart totals, which can cause a discrepancy between the chart and table sums [14] [15].

- Data Row Limits: The Performance report in the web interface shows a maximum of 1,000 rows of data for queries or pages. For extremely large sites, this means not all data rows are shown, focusing instead on the most important ones for your property [15].
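The size of the chart-versus-table discrepancy can be estimated directly, which helps decide how much weight the visible query list deserves. This is a minimal sketch with a hypothetical function name and invented numbers; note that the gap it reports combines both limitations above (anonymized queries and the row limit) and cannot separate them.

```python
def hidden_share(chart_total, table_rows, metric="clicks"):
    """Fraction of the chart total not attributable to rows listed in the table."""
    if chart_total <= 0:
        return 0.0
    visible = sum(row[metric] for row in table_rows)
    return max(chart_total - visible, 0) / chart_total


# Invented figures: the chart reports 1,000 clicks, the table rows sum to 750.
print(hidden_share(1000, [{"clicks": 400}, {"clicks": 350}]))
```

A large hidden share is a reason to treat conclusions drawn from the visible query list with caution.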

The meticulous setup and verification of your academic or lab website in Google Search Console is a critical first experiment in a sustained research program into your digital footprint. By following the detailed protocols for verification and subsequent performance analysis, you transform GSC from a simple webmaster tool into a powerful data source for understanding the scholarly conversation around your work. The insights gleaned from branded versus non-branded query traffic, top-performing pages, and impression patterns provide a quantitative basis for strategically optimizing your online content, ultimately enhancing the dissemination and impact of your scientific research.

For researchers, scientists, and drug development professionals, disseminating findings through publications, securing funding, and tracking the competitive landscape are fundamental to advancing scientific progress. The Google Search Console (GSC) Performance report provides a critical, data-driven portal to understand the search behavior of your target academic audience [1]. This document details a protocol for leveraging this tool to gain academic keyword insights, allowing you to optimize your online scholarly content, from lab websites to publication repositories, for maximum discoverability.

Primary Research Objectives:

- To Quantify Research Visibility: Systematically track how often your academic pages appear in Google Search results (Impressions) for relevant scientific queries [16].

- To Analyze Audience Engagement: Measure how frequently researchers click on your links in search results (Clicks) and the effectiveness of your content's snippets (Click-Through Rate) [14] [16].

- To Identify Strategic Keyword Opportunities: Discover high-value, low-competition scientific queries and content gaps to inform your content creation and optimization strategy [17].

- To Classify Search Intent: Categorize driving queries to ensure content aligns with the academic user's needs, whether informational (seeking knowledge), navigational (seeking a specific site), or transactional (ready to access a resource or tool) [17].
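The intent categories in the last objective can be given a first-pass, rule-based treatment before manual review. The sketch below is a deliberately crude heuristic: the cue words are assumptions chosen for illustration, the names `INTENT_RULES` and `classify_intent` are hypothetical, and real queries will need a richer rule set or manual labeling.

```python
# Illustrative cue words only; extend or replace them for your own field.
INTENT_RULES = [
    ("transactional", ("download", "dataset", "software", "order", "request")),
    ("navigational", ("lab", "homepage", "login", "university")),
]


def classify_intent(query):
    """Return the first intent whose cue word appears in the query, else 'informational'."""
    words = query.lower().split()
    for intent, cues in INTENT_RULES:
        if any(cue in words for cue in cues):
            return intent
    return "informational"


for q in ["download rna-seq dataset", "smith lab autophagy", "mechanisms of protein degradation"]:
    print(q, "->", classify_intent(q))
```

Rules are checked in order, so a query containing both a transactional and a navigational cue is labeled transactional; whether that ordering suits your content is a judgment call.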

Foundational Metrics: The Researcher's Toolkit

The GSC Performance report provides four key quantitative metrics. Understanding their definitions and interrelationships is the first step in the analytical workflow.

Table 2.1: Key Performance Metrics & Definitions

| Metric | Academic Definition | Protocol for Interpretation |

|---|---|---|

| Impressions [16] | Count of link appearances in search results; item must be in view (e.g., not requiring a "see more" click). | High impressions indicate strong page relevance to a query. Low impressions suggest a content gap or poor indexing. |

| Clicks [16] | Count of user clicks from Google Search to your site. | Measures successful audience capture. Compare with impressions to calculate CTR. |

| Click-Through Rate (CTR) [16] | Percentage of impressions resulting in a click: (Clicks / Impressions) * 100. | Low CTR may indicate non-compelling title/meta description or a content-intent mismatch [17]. |

| Average Position [16] | Average topmost ranking position for your site/page across all queries. | A position of 1-10 is ideal (page 1). Positions 11-20 represent "low-hanging fruit" for optimization [17]. |

Diagram 2.1: Logical relationship between key Performance Report metrics, from search query to engagement calculation.

Experimental Protocol: Performance Report Analysis

Access and Data Acquisition

- Access Performance Report: Navigate to Google Search Console and select the relevant property. Click "Search Results" in the left navigation to open the Performance report [14].

- Configure Baseline View: Set the date range to the last 3 months (default) or a period relevant to your research cycle (e.g., since a major publication release). Ensure the "Search" tab is selected [14].

- Data Export: For full quantitative analysis, click the Export button to download the data for external processing and archiving.

Analytical Workflow for Query and Page Analysis

The following workflow outlines a systematic approach to extract meaningful academic insights from the raw performance data.

Diagram 3.1: Core analytical workflow for academic keyword research in the Performance Report.

Procedure Steps:

- Identify High-Value Queries: Navigate to the Queries tab. This shows the exact search terms users entered before visiting your site [14]. Sort the table by Clicks to find your top traffic-driving terms and by Impressions to find terms where you have visibility but may not be capturing clicks.

- Identify Top-Performing Content: Navigate to the Pages tab. This shows which URLs on your site are receiving the most traffic from search [14]. Sort by Clicks to see your most popular content and by CTR to see which pages are most effective at compelling a click.

- Cross-Reference for Intent Analysis: Click on a high-impression query in the Queries tab. The report will refresh to show which Pages are ranking for that specific term [17]. Analyze if the landing page content fully matches the user's likely intent (e.g., a query for "PD-1 inhibitor clinical trial results" should not lead to a page about basic PD-1 biology).

Protocol for Opportunity Identification

This protocol defines specific methodologies for uncovering actionable keyword and content opportunities.

Protocol 3.3.1: Targeting "Low-Hanging Fruit"

- Objective: Identify pages ranking on the second page of results (positions ~11-20) that can be promoted to the first page with minimal effort.

- Procedure: In the Pages tab, review the Average Position column. Export data and filter for pages with an average position between 11 and 20. For these pages, implement strategic internal linking from high-authority site pages and refresh content with additional data or FAQs [17].

Protocol 3.3.2: Optimizing for Click-Through Rate (CTR)

- Objective: Improve the attractiveness of search snippets for queries where you already have strong visibility.

- Procedure: In the Queries tab, sort data by Impressions (descending) and then by CTR (ascending). Identify queries with high impressions but low CTR. For the pages ranking for these queries, optimize the title tag and meta description to be more compelling and accurately reflect the query's intent [17].
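The same screening can be done on exported data. The sketch below is one possible approach, not a prescribed method: the thresholds (`min_impressions`, `max_ctr`), the field names, and the sample rows are all assumptions chosen for illustration and should be tuned to the traffic level of the site in question.

```python
def find_ctr_opportunities(rows, min_impressions=1000, max_ctr=0.02):
    """Return queries with strong visibility but a weak click-through rate."""
    flagged = []
    for r in rows:
        ctr = r["clicks"] / r["impressions"] if r["impressions"] else 0.0
        if r["impressions"] >= min_impressions and ctr <= max_ctr:
            flagged.append({**r, "ctr": ctr})
    # Highest impressions first, then lowest CTR, mirroring the sort in the protocol.
    return sorted(flagged, key=lambda r: (-r["impressions"], r["ctr"]))


# Hypothetical sample rows standing in for an exported Queries report.
sample_queries = [
    {"query": "pd-1 inhibitor clinical trial results", "clicks": 40, "impressions": 5000},
    {"query": "mitophagy assay protocol", "clicks": 90, "impressions": 1200},
    {"query": "autophagy flux measurement", "clicks": 30, "impressions": 2500},
    {"query": "smith lab autophagy", "clicks": 120, "impressions": 300},
]

for row in find_ctr_opportunities(sample_queries):
    print(f'{row["query"]}: {row["ctr"]:.1%} CTR on {row["impressions"]} impressions')
```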

Protocol 3.3.3: Discovering Content Gaps

- Objective: Identify search queries that are not adequately answered by existing site content, revealing topics for new content.

- Procedure: Analyze the Queries tab for terms with significant impressions where the top-ranking landing page is only loosely related or is a general overview page. This mismatch indicates a need to create a new, highly targeted piece of content that directly satisfies the query [17].

The Scientist's Toolkit: Research Reagent Solutions

Table 4.1: Essential "Reagents" for Search Performance Analysis

| Tool / Resource | Function in Analysis | Academic Application Example |

|---|---|---|

| Performance Report (GSC) [1] | Primary data source for search traffic, impressions, CTR, and position. | Core instrument for monitoring organic visibility of a research group's publication list. |

| Query Filter [14] | Isolates data for specific search terms or patterns (e.g., using regex). | Filter for branded (e.g., "Smith Lab autophagy") vs. non-branded (e.g., "mitophagy assay protocol") traffic [18]. |

| URL Inspection Tool (GSC) [1] | Provides detailed crawl, index, and serving information for a specific URL. | Diagnose why a new preprint or publication is not appearing in search results. |

| Date Comparison Tool [14] | Compares performance between two time periods. | Measure the search impact of a news release related to a recent publication. |

| Looker Studio [19] | Data visualization platform for combining GSC and Google Analytics data. | Create a dashboard correlating search clicks (GSC) with on-page engagement (Google Analytics) for key pages. |

Advanced Analysis: Search Console Insights & Data Integration

For a simplified, insight-driven overview, utilize the Search Console Insights report, which integrates directly with the main GSC interface [11] [18]. This report provides automated insights, including:

- "Trending up" and "Trending down" pages and queries, helping you quickly identify growing or waning areas of interest [18].

- Branded vs. Non-branded traffic classification, allowing you to measure brand recognition versus discovery through topical relevance [18].

For a comprehensive understanding of user behavior after the click, integrate GSC data with Google Analytics. While GSC focuses on pre-click search performance, Google Analytics provides data on post-click user interactions (e.g., sessions, engagement rate), helping you attribute conversions to organic search traffic [19].

For research institutions, understanding how you are discovered in search engines is critical for measuring reputation, outreach, and influence. This analysis divides search traffic into two fundamental categories, as defined by Google:

- Branded Queries: Search terms that include your institution's name, variations or misspellings of it, and names of unique services or products (e.g., "Bear University admissions," "Gmail for google.com") [3] [20]. These represent users with prior knowledge of your institution.

- Non-Branded Queries: All other search queries not containing your brand name (e.g., "best colleges in Georgia for engineering," "online master's in public health") [20]. These represent new users in a discovery phase.

Analyzing this segmentation within Google Search Console (GSC) provides direct insight from Google on brand recognition and organic growth potential [3] [21]. This is particularly vital as AI Overviews in search begin to shape how informational queries are answered, making brand trust and citation-level relevance increasingly important [21].

Data from real higher education institutions reveals a consistent performance pattern between these query types. The table below summarizes the core characteristics and performance metrics of branded versus non-branded queries, synthesized from industry analysis [20] [21].

Table 1: Performance Characteristics of Branded vs. Non-Branded Queries

| Characteristic | Branded Queries | Non-Branded Queries |

|---|---|---|

| Example Search Terms | "University of X application deadline," "X neuroscience department" | "best public health PhD programs," "careers with a biology degree" |

| Typical User Intent | Navigational; high intent to engage with a specific institution [20] [22] | Informational or commercial; exploring options, early in decision journey [20] [22] |

| Average Click-Through Rate (CTR) at Position 1 | 35.68% [21] | 28.16% [21] |

| Primary Strategic Value | Measures brand strength, connects with high-intent users, ensures information accuracy [20] | Expands awareness, attracts new applicants, influences early-stage decisions [20] |

| Typical Traffic Ratio (Illustrative) | ~7% (Smaller Institution) to ~26% (Larger Institution) of total clicks [20] | ~93% (Smaller Institution) to ~74% (Larger Institution) of total clicks [20] |

| Impact of AI Overviews | Can increase CTR by +18.68% on average when triggered [21] | 88.1% target informational queries, potentially increasing zero-click searches [21] |

Experimental Protocol: Segmentation Analysis in Google Search Console

This protocol details the methodology for performing a foundational analysis of branded and non-branded query performance.

Principle

Leverage Google Search Console's native filtering and reporting capabilities to segment and analyze search performance data, enabling data-driven decisions about content and brand strategy.

Research Reagent Solutions (The Scientist's Toolkit)

Table 2: Essential Tools for Search Performance Analysis

| Tool / Resource | Function in Analysis |

|---|---|

| Google Search Console | The primary data source, providing direct feedback from Google on queries, impressions, clicks, and rankings [4]. |

| Branded Queries Filter | Native GSC filter that uses an AI-assisted system to automatically classify branded and non-branded traffic [3] [23]. |

| Search Console API | Allows extraction of up to 50,000 rows of data, overcoming the 1,000-row limit of the web interface for large-scale analysis [4]. |

| Looker Studio | A visualization tool for building custom dashboards that can combine GSC data with other sources (e.g., Google Analytics) for deeper insights [19]. |

| Regular Expressions (RegEx) | An advanced method for creating custom query filters within GSC or Looker Studio, useful before the native branded filter was available [21]. |

Procedure

Initial Setup and Data Acquisition

- Property Verification: Ensure you are analyzing a top-level property (e.g., https://university.edu) in Google Search Console, as the branded filter is not available for URL path or subdomain properties [3].

- Access Performance Report: Navigate to the "Search Results" Performance report within GSC.

- Define Data Parameters: Select an appropriate date range (e.g., the last 6 or 12 months) to capture meaningful trends.

Native Filter Application

- Apply Query Filter: Click the "+ NEW" button in the "Query" filter section.

- Select Branded Filter: Choose the new "Branded" filter option from the list. The system will automatically display performance data for queries Google's AI identifies as branded [3] [24].

- Record Metrics: Document the key metrics (clicks, impressions, CTR, average position) for the branded segment.

- Switch to Non-Branded View: Change the filter to "Non-branded" to capture the performance data for discovery queries.

- Export Data: For both segments, use the export function to download the data for further analysis and archiving.

Insights Report Analysis

- Navigate to Insights: Go to the Insights report in GSC.

- Review Traffic Breakdown: Locate the new card that visually breaks down the percentage of total clicks from branded versus non-branded traffic [3] [23]. This provides a high-level overview of your brand's footprint.

Advanced Analysis via API (Optional)

For institutions hitting the 1,000-row data limit in the GSC interface [4]:

- Utilize the GSC API: Use the free Search Console API to request larger datasets (up to 50,000 rows per day) [4].

- Employ Analysis Tools: Use tools like the "Search Analytics for Sheets" add-on to pull this extensive data directly into a spreadsheet for comprehensive analysis, including long-tail keyword opportunities [4].

Workflow Visualization

The following diagram illustrates the logical workflow for conducting this analysis, from setup to strategic insight.

Interpretation and Strategic Application

The data gleaned from this protocol should inform distinct strategic actions:

- Act on Branded Query Performance: Strong performance here indicates healthy brand equity. Ensure landing pages (e.g., for specific program names) are perfectly optimized to convert this high-intent traffic. A decline may signal reputational issues or a successful marketing campaign by a competitor [20].

- Invest in Non-Branded Content: A high volume of non-branded impressions with low clicks indicates a content gap or poor snippet optimization. Create high-quality, authoritative content that directly answers the questions and needs of users in the exploratory phase [20] [25]. This is crucial for building relevance with AI Overviews [21].

- Monitor the Ratio Over Time: Use the Insights report to track the ratio of branded to non-branded traffic. An increasing branded percentage may result from successful offline marketing, while growth in non-branded traffic indicates successful organic reach and discovery [24] [22].
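Where the native branded filter is unavailable, or where an independent check on it is wanted, the branded share of clicks can be approximated from exported query data with a custom regular expression. The sketch below is an assumption-laden illustration: the brand pattern, field names, and sample rows are hypothetical, and a hand-written pattern will not reproduce Google's AI-assisted classification exactly.

```python
import re

# Hypothetical brand pattern: the lab name plus a common misspelling.
BRAND_PATTERN = re.compile(r"\b(smith lab|smithlab|smyth lab)\b", re.IGNORECASE)


def branded_click_share(rows, pattern=BRAND_PATTERN):
    """Return the fraction of total clicks that come from branded queries."""
    total = sum(r["clicks"] for r in rows)
    branded = sum(r["clicks"] for r in rows if pattern.search(r["query"]))
    return branded / total if total else 0.0


# Hypothetical sample rows standing in for an exported Queries report.
sample_rows = [
    {"query": "smith lab autophagy", "clicks": 70},
    {"query": "mitophagy assay protocol", "clicks": 200},
    {"query": "Smyth Lab publications", "clicks": 30},
    {"query": "autophagy flux measurement", "clicks": 100},
]

share = branded_click_share(sample_rows)
print(f"Branded share of clicks: {share:.0%}")
```

Running the same calculation on exports from successive periods gives a simple way to track the ratio over time.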

From Data to Discovery: A Researcher's Methodology for Keyword Mining

For researchers, scientists, and professionals in fields like drug development, Google Search Console (GSC) represents a potent source of public search behavior data. It can provide insights into the dissemination of scientific information, public health inquiry trends, and the terminology used by both specialists and the lay public. However, the platform's inherent 1,000-row data limitation presents a significant barrier to rigorous academic analysis [26] [27]. This constraint means that for any site ranking for more than a thousand queries or possessing over a thousand pages, the data available through the standard interface is incomplete, potentially introducing severe sampling bias into research findings. This application note provides detailed protocols to overcome these limitations, enabling the extraction of comprehensive datasets necessary for robust academic research.

Understanding GSC Data Limitations

The Google Search Console interface and its direct export functions are restricted to a maximum of 1,000 rows of data for any metric in the Performance report, whether for queries, pages, or other dimensions [26] [27]. This data is always ordered by the number of clicks or impressions, meaning that lower-volume, long-tail queries—which are often of significant academic interest—are systematically excluded from view in the standard interface. Furthermore, the provided data is subject to privacy-driven sampling, where certain queries, particularly long-tail queries with low search volume, are anonymized and not reported, leading to discrepancies between page-level and query-level impression totals [27]. GSC data is also limited to a 16-month rolling window and pertains exclusively to Google Search, excluding other search engines [27].

Table 1: Key Google Search Console Data Limitations for Researchers

| Limitation | Description | Impact on Academic Research |

|---|---|---|

| 1,000-Row Limit | Maximum rows viewable or exportable for any metric (queries, pages) [26] [27]. | Incomplete data for large sites; systematic exclusion of long-tail data. |

| Data Sampling | Not all queries are reported; privacy measures hide low-volume terms [27]. | Inaccurate aggregation; missing insights from niche or specialized queries. |

| 16-Month Data History | Performance data is only available for the past 16 months [27]. | Limits longitudinal studies and long-term trend analysis. |

| Single-Source Data | Contains data only from Google Search, not other search engines [27]. | Provides a siloed view of search performance, not the entire search ecosystem. |
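The gap between property-level totals and the sum of reported queries can be quantified directly. The following sketch assumes the total impression count has been read from the property-level summary and that the query rows come from an export that is as complete as possible. If the rows come from a truncated 1,000-row export, the result mixes anonymization with truncation. The function name, field names, and numbers are hypothetical.

```python
def hidden_impression_share(total_impressions, query_rows):
    """Estimate the share of impressions not attributed to any reported query."""
    reported = sum(r["impressions"] for r in query_rows)
    if total_impressions <= 0:
        return 0.0
    # Clamp at zero in case reported rows slightly exceed the stated total.
    return max(0.0, 1 - reported / total_impressions)


# Hypothetical figures: 10,000 total impressions, 6,500 visible at query level.
sample_query_rows = [
    {"query": "egfr inhibitor side effects", "impressions": 4000},
    {"query": "pd-1 combination therapy", "impressions": 2500},
]

share = hidden_impression_share(10_000, sample_query_rows)
print(f"Impressions not attributed to a reported query: {share:.0%}")
```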

Methodologies for Exceeding the 1,000-Row Limit

Several established methodologies allow researchers to bypass the 1,000-row constraint and access a more complete dataset.

Protocol 1: Using the Search Analytics for Sheets Add-on

This method is optimal for researchers who require a user-friendly interface to extract larger datasets without direct API programming.

- Installation: Install the "Search Analytics for Sheets" add-on from the Google Workspace Marketplace [26].

- Setup: Open a new Google Sheet. Access the add-on via Extensions > Search Analytics for Sheets > Open sidebar [26].

- Configuration: In the sidebar:

- Select the desired GSC property (requires prior verification in GSC).

- Set the date range for your analysis.

- Choose the primary dimension for grouping (e.g., query, page).

- Apply any necessary filters (e.g., by country, device).

- Data Extraction: Click to run the query. The add-on can export up to 25,000 rows of data directly into the Google Sheet, significantly surpassing the native UI's limit [26].

Protocol 2: Leveraging the Google Search Console API

For custom applications, large-scale data extraction, or integrating search data into other research tools, the GSC API is the most powerful and flexible solution. It allows for the retrieval of up to 25,000 rows in a single request and enables complex filtering [26].

Table 2: Essential Research Reagent Solutions for GSC Data Extraction

| Research Reagent (Tool/API) | Function in Experimental Protocol |

|---|---|

| Search Analytics for Sheets Add-on | Enables bulk export (up to 25,000 rows) of GSC data into a spreadsheet interface without extensive coding [26]. |

| Google Search Console API | Programmatic interface for executing advanced queries and retrieving large, structured datasets (up to 25,000 rows per request) [26] [28]. |

| Google Cloud Project (BigQuery) | Cloud-based data warehouse required for the Bulk Data Export feature; stores and enables SQL queries on unsampled, long-term search data [29]. |

| Google Looker Studio | Dashboarding solution that connects directly to the GSC API, allowing for the visualization of datasets beyond the 1,000-row limit [26]. |

The following workflow diagram illustrates the strategic decision-making process for selecting the appropriate data extraction methodology based on research requirements.

Protocol 3: Configuring Bulk Data Export to BigQuery

For the most comprehensive, long-term research projects involving very large websites, the Bulk Data Export feature is the definitive solution, as it is not subject to row limits [26]. This protocol establishes a daily, automated export of GSC data into Google BigQuery.

- Prerequisites: A Google Cloud Project with billing enabled and the BigQuery API activated [29].

- Granting Permissions: In the Google Cloud Console IAM & Admin panel, grant the service account search-console-data-export@system.gserviceaccount.com the BigQuery Job User (bigquery.jobUser) and BigQuery Data Editor (bigquery.dataEditor) roles [29].

- Configure Export in GSC: In Search Console, navigate to Settings > Bulk data export. Input your Google Cloud Project ID and choose a dataset name (default is searchconsole). Select a geographic location for your data [29].

- Data Management: The first export occurs within 48 hours of configuration. To manage storage costs, it is a best practice to set a partition expiration time for your dataset in BigQuery [29].
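Once the export is running, the data can be summarized with SQL. The sketch below only builds a query string in Python and does not execute it. It assumes the default dataset name (searchconsole) and the site-level table and column names as I understand them from Google's bulk export documentation (`searchdata_site_impression`, `data_date`, `query`, `clicks`, `impressions`, `sum_top_position`, `is_anonymized_query`). Verify these against the tables actually created in your project. The project ID and function name are hypothetical.

```python
def build_top_queries_sql(project_id, dataset="searchconsole", days=28, limit=1000):
    """Build a BigQuery SQL string summarizing query-level performance."""
    table = f"`{project_id}.{dataset}.searchdata_site_impression`"
    return f"""
SELECT
  query,
  SUM(clicks) AS clicks,
  SUM(impressions) AS impressions,
  -- sum_top_position is zero-based, so add 1 to get the familiar average position
  SAFE_DIVIDE(SUM(sum_top_position), SUM(impressions)) + 1.0 AS avg_position
FROM {table}
WHERE data_date >= DATE_SUB(CURRENT_DATE(), INTERVAL {int(days)} DAY)
  AND is_anonymized_query = FALSE
GROUP BY query
ORDER BY impressions DESC
LIMIT {int(limit)}
""".strip()


# "my-research-project" is a placeholder project ID.
sql = build_top_queries_sql("my-research-project", days=90, limit=5000)
print(sql)
```

The resulting string can be pasted into the BigQuery console or passed to a BigQuery client library. Excluding anonymized rows keeps the output readable, but those rows still count toward site totals.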

Experimental Protocol: Extracting a Multi-Dimensional Dataset via the GSC API

This protocol details the steps to retrieve a combined dataset of queries and pages using the Search Console API, overcoming a major UI limitation where these dimensions cannot be easily merged.

- Objective: Export up to 5,000 rows of data containing both PAGE and QUERY dimensions.

- Authentication: Ensure you have owner-level access to the GSC property and have set up API credentials.

- Request Composition: Structure a JSON request body. The example below filters for pages containing "video" while excluding queries containing "free" [26].

- Execution: Use a client library (e.g., Python, Java) or the API explorer to execute the request with the composed JSON.

- Data Handling: The response will be in JSON format, which can be parsed and converted to CSV or another structured format for analysis [26].
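A request body matching the description in the Request Composition step might look like the following. This is a reconstruction under stated assumptions rather than the original example: the field names follow the Search Analytics query API (`startDate`, `endDate`, `dimensions`, `dimensionFilterGroups`, `rowLimit`), while the date range and the function name are placeholders.

```python
import json


def build_request_body(start_date, end_date, row_limit=5000):
    """Compose a Search Analytics query body combining page and query dimensions."""
    return {
        "startDate": start_date,
        "endDate": end_date,
        "dimensions": ["page", "query"],
        "dimensionFilterGroups": [
            {
                "groupType": "and",
                "filters": [
                    # Keep only pages whose URL contains "video" ...
                    {"dimension": "page", "operator": "contains", "expression": "video"},
                    # ... and drop queries that contain "free".
                    {"dimension": "query", "operator": "notContains", "expression": "free"},
                ],
            }
        ],
        "rowLimit": row_limit,
    }


# Placeholder date range for illustration.
body = build_request_body("2024-01-01", "2024-03-31")
print(json.dumps(body, indent=2))
```

With the Google API Python client, a body like this is typically passed to `service.searchanalytics().query(siteUrl=..., body=body).execute()`. Authentication and the site URL are omitted here.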

The technical workflow for programmatic data extraction, from authentication to final analysis, is outlined in the following diagram.

In the rigorous fields of academic and scientific research, particularly in competitive areas such as drug development, the ability to make data-driven decisions is paramount. Google Search Console (GSC) represents a valuable, yet often underutilized, source of direct market and topic interest intelligence straight from the world's primary search engine. However, the standard web interface for GSC presents a significant data accessibility problem for large-scale sites and research topics: it displays a maximum of only 1,000 rows of data, effectively redacting a vast portion of the available information [4]. This limitation obscures the long-tail of search queries—those highly specific, low-volume terms that are often the most revealing for niche academic fields or emerging scientific concepts. This application note details a methodological framework for employing the Google Search Console API to bypass this interface limitation, enabling researchers to extract the full 50,000 rows of data per day per search type, thus facilitating a more comprehensive and robust analysis for academic keyword insight research [30].

Technical Specifications & Data Limitations

A clear understanding of the GSC API's capabilities and boundaries is the foundation of any valid research methodology. The following table summarizes the key quantitative specifications researchers must incorporate into their project planning.

Table 1: Google Search Console Data Access Limits

| Parameter | Web Interface Limit | API Limit | Notes & Research Implications |

|---|---|---|---|

| Default Row Display | 1,000 rows [4] | N/A | The web interface is unsuitable for large-scale data collection. |

| Daily Row Retrieval | N/A | 50,000 rows per day, per search type (e.g., web, image) [30] | This is a hard limit on the number of data rows (e.g., query/page combinations) returned for a single day's analysis. |

| Maximum Page Size | N/A | 25,000 rows per API request [31] | A single query cannot retrieve more than 25,000 rows, necessitating pagination for full data extraction. |

| Total Query Limit | N/A | 2 queries per second, 1,000 queries per day (documented recommendation); 40,000 QPM, 30M QPD (actual high limits) [31] [4] | Documented quotas are conservative; real-world limits are significantly higher and unlikely to be breached in a research context. |

A critical clarification for research design is that the 50,000-row limit applies on a per-day basis [30]. Therefore, a query for a 30-day date range can potentially access 50,000 rows for each of those 30 days, yielding a theoretical maximum of 1.5 million rows for the period [31]. It is also vital to note that the data is subject to privacy filtering, where detailed data with low impression counts is anonymized and removed. This filtering becomes more pronounced as more dimensions (e.g., country, device) are added to a query, a phenomenon often described as "more detail = less data" [31]. Consequently, studies focusing on granular, long-tail phenomena may observe data incompleteness that is intrinsic to the data source rather than the collection method.

Experimental Protocol: Data Extraction & Pagination

This protocol provides a step-by-step methodology for extracting a complete dataset from the GSC API for a specified date range, ensuring researchers capture all available data up to the daily 50,000-row limit.

Research Reagent Solutions

Table 2: Essential Tools for GSC API Data Collection

| Tool / Component | Function in Protocol | Research-Grade Notes |

|---|---|---|

| Google Search Console API | The primary data source. Provides raw, unsampled search analytics data. | Access requires a verified Google account and appropriate permissions for the website property (e.g., domain, URL prefix). |

| Authentication Library (OAuth 2.0) | Securely authenticates the research application to access the API on behalf of the user. | Libraries are available for Python, R, and other languages common in scientific computing. |

| Programming Environment | Executes the data extraction script. | Python or R are recommended for their robust data manipulation and analysis libraries (e.g., pandas, dplyr). |

| Pagination Logic | A loop in the code that manages multiple API calls to retrieve data in sequential "pages." | Critical for overcoming the 25,000-row-per-request limit. This logic is the core of the full data extraction process. |

| Data Deduplication Routine | A post-processing function to identify and remove duplicate data rows. | Essential for data integrity, as the lack of a secondary sort in the API can cause duplicates across paginated requests [31]. |

Step-by-Step Workflow

The following diagram illustrates the logical flow of the data extraction protocol.

Workflow Diagram Title: GSC API Pagination for Full Data Extraction

Protocol Steps:

Authentication & Query Configuration: Authenticate your application with the GSC API using an OAuth 2.0 flow to obtain a valid access token. Define your core query parameters, such as the date range, dimensions, and search type.

Initialization: Initialize two key variables: currentStartRow = 0 and an empty collection (allData) to consolidate the results.

API Request Loop: Enter a loop and make a request to the GSC API with the current startRow parameter. The API returns data sorted by clicks, descending, with no secondary sort [31].

Data Append & Check: Append the newly fetched data to the allData collection. If the number of rows returned is less than the requested 25,000, you have reached the end of the available dataset, and the loop should terminate.

Pagination & Termination Check: If 25,000 rows were received, increment currentStartRow by 25,000 to retrieve the next "page" of data. The loop must also terminate if the total accumulated rows meet or exceed the 50,000-row daily limit to prevent unnecessary API calls [30].

Data Integrity Post-Processing: After the loop terminates, process the allData collection to remove duplicate rows. The absence of a guaranteed sort order for rows with identical click counts means duplicates can occur between pages [31]. Apply deduplication logic based on a unique combination of dimensions (e.g., query, page, date, country) before proceeding to analysis.
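The protocol steps above can be sketched as a short Python routine. To keep the example runnable without credentials, the API call is abstracted behind a caller-supplied function. `fetch_page` is a hypothetical helper and not part of any Google library. In practice it would wrap a single Search Analytics request using the given start row and row limit. The row shape (`{"keys": [...], ...}`) is assumed to mirror the API response.

```python
def fetch_all_rows(fetch_page, page_size=25000, daily_cap=50000):
    """Page through results until a short page or the daily cap is reached.

    fetch_page(start_row, row_limit) is a caller-supplied (hypothetical)
    function that performs one request and returns a list of row dicts.
    """
    all_data = []
    current_start_row = 0
    while len(all_data) < daily_cap:
        rows = fetch_page(current_start_row, page_size)
        all_data.extend(rows)
        if len(rows) < page_size:
            break  # a short page means no more data is available
        current_start_row += page_size

    # Deduplicate on the dimension keys, keeping the first occurrence.
    seen = set()
    unique = []
    for row in all_data:
        key = tuple(row["keys"])
        if key not in seen:
            seen.add(key)
            unique.append(row)
    return unique


# Stand-in for the real API: 7 distinct rows, one repeated across a page boundary.
_fake_rows = [{"keys": [f"query {i}"], "clicks": 10 - i} for i in range(7)]
_fake_rows.insert(3, _fake_rows[2])  # simulate a duplicate caused by unstable ordering


def fake_fetch(start_row, row_limit):
    return _fake_rows[start_row:start_row + row_limit]


result = fetch_all_rows(fake_fetch, page_size=3, daily_cap=50)
print(len(result), "unique rows")
```

Injecting the fetch function keeps the pagination and deduplication logic testable offline. The small page size in the demonstration exists only to exercise the loop.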

Data Visualization & Analysis Workflow

Once the raw data is acquired, transforming it into actionable academic insights requires a structured analytical workflow.

Analysis Workflow Diagram Title: From Raw GSC Data to Academic Insight

Analysis Protocol Steps:

Data Aggregation: The raw dataset, comprising individual query-page pairs, must be aggregated into meaningful research constructs. This involves:

- Keyword Clustering: Manually or algorithmically group individual queries into thematic topics relevant to the research (e.g., "EGFR inhibitor side effects," "PD-1 combination therapy").

- Metric Summation: For each cluster, sum the clicks and impressions and calculate the average position over the study period. This provides a macroscopic view of topic performance.
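A simple rule-based version of the clustering and summation steps is sketched below. The cluster definitions, field names, and sample rows are hypothetical. Weighting the average position by impressions is a design choice made for this sketch, not something the protocol prescribes, and an unweighted mean would be equally easy to substitute.

```python
from collections import defaultdict

# Hypothetical cluster definitions: cluster name -> substrings that signal it.
CLUSTERS = {
    "EGFR inhibitor side effects": ["egfr"],
    "PD-1 combination therapy": ["pd-1", "pd1"],
}


def assign_cluster(query, clusters=CLUSTERS):
    """Return the first cluster whose keyword appears in the query."""
    q = query.lower()
    for name, keywords in clusters.items():
        if any(k in q for k in keywords):
            return name
    return "Unclustered"


def aggregate_by_cluster(rows):
    """Sum clicks and impressions per cluster; position is impression-weighted."""
    totals = defaultdict(lambda: {"clicks": 0, "impressions": 0, "weighted_pos": 0.0})
    for r in rows:
        t = totals[assign_cluster(r["query"])]
        t["clicks"] += r["clicks"]
        t["impressions"] += r["impressions"]
        t["weighted_pos"] += r["position"] * r["impressions"]
    return {
        name: {
            "clicks": t["clicks"],
            "impressions": t["impressions"],
            "avg_position": t["weighted_pos"] / t["impressions"] if t["impressions"] else None,
        }
        for name, t in totals.items()
    }


# Hypothetical query-level rows.
sample_rows = [
    {"query": "egfr inhibitor rash", "clicks": 10, "impressions": 100, "position": 4.0},
    {"query": "EGFR inhibitor diarrhea management", "clicks": 5, "impressions": 300, "position": 8.0},
    {"query": "pd-1 combination therapy trials", "clicks": 20, "impressions": 400, "position": 3.0},
    {"query": "lab opening hours", "clicks": 2, "impressions": 50, "position": 1.0},
]

summary = aggregate_by_cluster(sample_rows)
for name, stats in summary.items():
    print(name, stats)
```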

Trend Modeling & Pattern Identification: Analyze the aggregated data for significant patterns.

- Temporal Analysis: Model the performance of key topics over time using line charts to identify rising, stable, or declining interest trends [32].

- Correlation Analysis: Use scatterplots to investigate the relationship between different metrics, such as the correlation between a page's average ranking position and its click-through rate [32].
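Alongside a scatterplot, the strength of a linear relationship can be summarized with a Pearson correlation coefficient. The sketch below implements the standard formula using only the standard library. The position and CTR values are invented for illustration and are not drawn from any dataset in this guide.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient for two equal-length numeric sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length with at least 2 points")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical page-level data: average position and click-through rate.
positions = [1.0, 2.0, 3.0, 4.0, 5.0]
ctrs = [0.30, 0.15, 0.10, 0.07, 0.05]

r = pearson(positions, ctrs)
print(f"Correlation between position and CTR: {r:.2f}")
```

A negative coefficient here would be expected, since lower-ranked pages tend to attract fewer clicks. Because the position/CTR relationship is usually non-linear, the coefficient is best read together with the plot rather than on its own.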

Visualization for Academic Communication: Select visualization types that clearly communicate the findings to a scientific audience, ensuring all non-text elements meet a minimum 3:1 contrast ratio for accessibility [33].

- Bar Charts: Ideal for comparing the total clicks or impressions across different keyword clusters or content categories, especially with long category names [32].

- Line Charts: The most effective method for displaying continuous data, such as the progression of impression share for a key topic over a multi-year period [32].

- Scatterplots: Used to highlight the correlation between two variables, such as the number of publications on a drug and its associated search impression volume, to generate hypotheses about real-world impact [32].

By adhering to these detailed application notes and protocols, researchers in the scientific and drug development communities can systematically leverage the full power of the Google Search Console API. This approach transforms a limited marketing tool into a robust source of empirical data for understanding the evolving landscape of public and professional interest in academic topics.

Within the framework of a broader thesis on leveraging Google Search Console for academic keyword research, this protocol provides a detailed methodology for using advanced regular expressions (regex) to isolate long-tail and question-based research queries. This technique enables researchers, scientists, and drug development professionals to systematically identify highly specific, high-intent search traffic, uncovering nascent research trends, unmet scientific curiosities, and potential gaps in the public understanding of complex topics, such as drug mechanisms or disease pathways.

In academic and scientific keyword research, not all search traffic holds equal value. Broad, head-term keywords (e.g., "cancer") are characterized by high search volume and intense competition, but often yield low conversion rates for specific research outputs [34]. Conversely, long-tail keywords are longer, more specific search queries that garner fewer individual searches but, in aggregate, represent the majority of all search traffic [34]. These queries, which include specific question-based formats (e.g., "how does CRISPR Cas9 edit DNA?" or "side effects of mTOR inhibitors"), are critically important because they signal a highly motivated user with precise research intent. Isolating these queries from analytics data allows researchers to:

- Identify Knowledge Gaps: Discover specific questions the academic community and public are asking.

- Guide Content Strategy: Create targeted content that directly addresses nuanced scientific inquiries.

- Track Emerging Trends: Spot early signals of interest in new drug therapies or research methodologies.

Google Search Console (GSC) is an essential tool for this analysis, providing direct data on how a site appears in Google Search results [35]. While GSC recently introduced an AI-assisted branded queries filter [3], isolating specific query patterns like long-tail questions still requires the precision of manual regex filtering.

Theoretical Framework: Characterizing Query Types

The Search Demand Curve

The distribution of search queries follows a power-law distribution, often visualized as a "search demand curve" [34]. The "head" comprises a small number of high-volume, generic keywords, while the "long tail" consists of a vast number of low-volume, specific phrases. For research purposes, the long tail is where targeted engagement and high conversion value are found [36].

Defining Long-Tail and Question Queries

- Long-Tail Keywords: These are defined by their low search volume and specificity, not merely their word count [34]. They are less competitive and often easier to rank for, making them ideal for new research blogs or niche scientific topics.

- Question-Based Queries: A critical subset of long-tail keywords, these often begin with interrogatives like "what," "how," "why," "which," and "where" (e.g., "What is the mechanism of action of SGLT2 inhibitors?"). They represent a direct, unfilled need for information.

Table 1: Comparison of Keyword Types in Scientific Research

| Feature | Head/Short-Tail Keyword | Long-Tail Keyword |

|---|---|---|

| Example | "immunotherapy" | "CAR-T cell therapy side effects in pediatric AML" |

| Search Volume | High | Low |

| Competitiveness | Very High | Low |

| User Intent | Exploratory, Generic | Specific, Informational, Transactional |

| Conversion Value | Lower | Higher |

| Research Insight | General Topic Interest | Specific Knowledge Gap or Emerging Trend |

Experimental Protocol: Isolating Queries with Regex in Search Console

This protocol outlines the step-by-step process for using regular expressions in Google Search Console to filter the Performance report for long-tail and question-based queries.

Research Reagent Solutions

Table 2: Essential Tools for Query Research & Filtering

| Tool / Resource | Function | Specific Application |

|---|---|---|

| Google Search Console | Provides raw data on site performance in Google Search, including queries, impressions, clicks, and position [35] [1]. | The primary source for query data to be filtered. |

| RE2 Regex Engine | The specific syntax for regular expressions used in tools like GSC and Microsoft Clarity [37]. | Defines the pattern-matching rules for filtering. |

| Keyword Research Tool (e.g., Ahrefs, WordStream) | Provides data on search volume and keyword difficulty for query ideas [34] [38]. | Validates the search volume and competitiveness of identified long-tail queries. |

Procedure

Data Acquisition:

- Navigate to the Google Search Console Performance report for your property [35].

- Select the "Search results" tab and ensure you are viewing data for the 'Web' search type over a sufficient time period (e.g., 3 months).

Apply Initial Filter for Queries:

- Click the "+ NEW" button to add a filter.

- Set the filter type to "Query".

- Do not enter a term yet; proceed to the regex step.

Regex Formulation and Application:

- The core of this protocol is the application of specific regex patterns. The following patterns are designed to match queries based on their structure.

- To isolate question-based queries, use the following pattern, which matches queries starting with common question words:

- To isolate long-tail queries, a common approach is to match queries containing a minimum number of words. The following pattern matches queries with four or more words:

- Advanced Combined Filtering: To find long-tail questions specifically, combine the two approaches: filter for questions first and then review the results manually for length, or use a single, more complex pattern that enforces both conditions.
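Patterns of the kind described in this step can be prototyped outside GSC before being pasted into the filter. The expressions below are illustrative assumptions, not the exact patterns from the original protocol. They use only syntax that, to my knowledge, is common to RE2 and Python's `re` module (anchors, alternation, `\b`, `\s`, `\S`, and counted repetition). Python is used here purely as a test harness, and its engine is not RE2.

```python
import re

# Illustrative patterns (assumptions, to be refined against real query data).
QUESTION_PATTERN = r"^(what|how|why|which|where|when|who)\b"
LONG_TAIL_PATTERN = r"^\S+(\s+\S+){3,}$"  # four or more words
LONG_TAIL_QUESTION_PATTERN = r"^(what|how|why|which|where|when|who)(\s+\S+){3,}$"


def classify(query):
    """Report which of the three patterns a query matches."""
    return {
        "question": bool(re.search(QUESTION_PATTERN, query)),
        "long_tail": bool(re.search(LONG_TAIL_PATTERN, query)),
        "long_tail_question": bool(re.search(LONG_TAIL_QUESTION_PATTERN, query)),
    }


# Sample queries, lowercased as they typically appear in exported data.
for q in [
    "how does metformin lower blood sugar",
    "long term side effects of statins",
    "what is crispr",
    "immunotherapy",
]:
    print(q, classify(q))
```

The word-boundary `\b` after the question word prevents false matches on terms that merely begin with the same letters. The combined pattern requires a question word followed by at least three further words.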

Data Analysis and Triangulation:

- After applying a regex filter, analyze the resulting queries for their Impressions, Clicks, and Average Position [3].

- Export the filtered list of queries.

- Use a keyword research tool [34] [38] to check the search volume and keyword difficulty of the identified queries, confirming their status as long-tail keywords.

- Categorize the queries by thematic content (e.g., "drug side effects," "mechanism of action," "protocol questions") to identify central research themes.

Workflow Visualization

The following diagram illustrates the logical workflow for the query isolation and analysis process.

Anticipated Results and Interpretation

Upon successful execution of this protocol, the researcher will obtain a curated list of search queries that are highly specific and often question-based. The quantitative data from GSC should be summarized for clear interpretation.

Table 3: Example Output of Filtered Query Analysis

| Filtered Query | Impressions | Clicks | CTR | Avg. Position | Thematic Category |
|---|---|---|---|---|---|
| "how does metformin lower blood sugar" | 850 | 45 | 5.3% | 4.2 | Mechanism of Action |
| "long term side effects of statins" | 1,200 | 90 | 7.5% | 3.5 | Drug Safety & Efficacy |
| "difference between mRNA and viral vector vaccine" | 2,500 | 210 | 8.4% | 2.8 | Emerging Therapies |
| "protocol for Western blot protein extraction" | 600 | 55 | 9.2% | 5.1 | Laboratory Methods |

Interpretation:

- High CTR at a Moderate Position: A query such as "difference between mRNA and viral vector vaccine" achieving an 8.4% CTR at an average position of 2.8 suggests strong searcher intent and a search snippet that satisfies it.

- Thematic Clustering: The emergence of a cluster of queries around "drug side effects" can directly inform a content creation strategy aimed at addressing patient or clinician concerns.

- Validation: The low search volume (when checked in a keyword tool) for queries like "protocol for Western blot protein extraction" confirms their status as valuable, low-competition long-tail keywords [34].

Technical Notes and Troubleshooting

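- Regex Performance: Complex negative lookahead patterns (e.g., `^((?!hede).)*$` to exclude a term [39]) can be computationally expensive on very large datasets, so use them judiciously when analyzing exported data. Note that the Search Console regex filter uses RE2 syntax, which does not support lookaheads; to exclude a term inside GSC, switch the filter to its "Doesn't match regex" option instead.

- GSC Limitations: The branded queries filter in GSC is powered by an AI-assisted system and cannot be manually defined with regex [3]. The manual method described herein is for the "Filter by query" field.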

- Refining Patterns: The provided regex patterns are a starting point. They may require refinement based on observed data. For instance, the question pattern might be expanded to include other question words relevant to a specific scientific field.

- Data Sampling: For properties with very high traffic, GSC may use data sampling in its reports, which can slightly affect the absolute precision of the results.

In scientific search engine optimization (SEO), 'Striking Distance' keywords represent a high-yield, low-resource opportunity for academic and research institutions. These are search terms for which an institution's web pages already rank just outside the first page of Google Search results, typically between positions 11 and 30 [40].

Moving these keywords to the first page can significantly increase organic traffic, as the click-through rate (CTR) drops substantially after position 10 [40]. For researchers and drug development professionals, systematically targeting these terms is an efficient method to enhance the visibility of publications, project pages, and institutional resources without extensive new content creation [41].

Materials: The Scientist's Toolkit for Keyword Identification

The following tools are essential for identifying and analyzing striking distance keywords. Google Search Console (GSC) is the foundational, free tool for this process, providing direct data from Google on query rankings and site performance [1] [10].

Table 1: Essential Research Reagents for Keyword Identification

| Tool / Solution | Primary Function | Application in Keyword Research |
|---|---|---|
| Google Search Console | Provides direct data on site performance in Google Search [1]. | Core data source for identifying keywords your site already ranks for and their average position [40]. |
| Search Console API / Looker Studio | Allows for advanced data extraction and visualization from GSC [19]. | Bypasses GSC's 1,000-row export limit for large-scale sites and enables custom dashboard creation [10]. |
| Third-Party SEO Platforms | Tools like SE Ranking, Ahrefs, or SEMrush [41] [10]. | Augment GSC data with metrics like keyword difficulty and estimated search volume for prioritization [41]. |
| Spreadsheet Software | Applications like Google Sheets or Microsoft Excel. | Used for manually filtering and analyzing exported GSC data to isolate striking distance keywords [40]. |

Experimental Protocols & Data Analysis

Protocol 1: Isolating Striking Distance Keywords using Google Search Console

This protocol details the step-by-step methodology for extracting a list of striking distance keywords from Google Search Console.

Data Acquisition:

- Log in to Google Search Console and select the relevant property (your website or domain).

- Navigate to the Performance report (or the newer Search Console Insights report, where available) [11] [10].

- Within the report, set a relevant date range (e.g., last 3 months) to gather sufficient data.

- Apply the necessary filter for "Search type: Web" to focus on standard search results.

- Export the performance data using the export function, typically to Google Sheets for ease of manipulation.
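For properties whose data exceed the 1,000-row export limit, the Search Console API route mentioned in the toolkit table can be used to page through results. The sketch below only builds the request bodies for a paginated Search Analytics query; it does not send them. The field names (`startDate`, `endDate`, `dimensions`, `type`, `rowLimit`, `startRow`) and the 25,000-row page size reflect the API as commonly documented and should be checked against the current reference. `build_query_bodies` is a hypothetical helper name, and authentication and the actual HTTP call are deliberately left out.

```python
def build_query_bodies(start_date, end_date, total_rows, page_size=25000):
    """Build one request body per page of results.

    Assumption: the API accepts `rowLimit` up to 25,000 and a zero-based
    `startRow` offset for pagination. Verify against the official reference.
    """
    bodies = []
    for start_row in range(0, total_rows, page_size):
        bodies.append({
            "startDate": start_date,          # YYYY-MM-DD
            "endDate": end_date,              # YYYY-MM-DD
            "dimensions": ["query", "page"],  # one row per query/page pair
            "type": "web",                    # mirrors the 'Web' search type filter
            "rowLimit": min(page_size, total_rows - start_row),
            "startRow": start_row,
        })
    return bodies


# Illustrative date range and row count only.
bodies = build_query_bodies("2024-01-01", "2024-03-31", total_rows=60000)
for body in bodies:
    print(body["startRow"], body["rowLimit"])
```

In practice the total number of rows is not known in advance, so a real client would keep requesting pages until one comes back with fewer rows than requested.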

Data Filtering and Isolation:

- In your spreadsheet, locate the column for "Average position".

- Apply a filter or create a new sheet that shows only rows where the Average position is greater than 10.1 and less than 30.1 [40]. This captures keywords on pages 2 and 3 of the search results.

- The resulting list is your initial dataset of striking distance keywords.
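The same filter can be applied outside a spreadsheet. The following is a minimal sketch using only Python's standard library. It assumes the export has columns named `Query`, `Clicks`, `Impressions`, and `Position`, which may differ from your file, and the sample rows are made up for illustration.

```python
import csv
import io

# Thresholds from the protocol: average position > 10.1 and < 30.1.
MIN_POSITION = 10.1
MAX_POSITION = 30.1


def striking_distance(rows, low=MIN_POSITION, high=MAX_POSITION):
    """Keep rows whose average position falls strictly between the bounds."""
    return [row for row in rows if low < float(row["Position"]) < high]


# Hypothetical export contents; column names and values are assumptions.
SAMPLE_EXPORT = """Query,Clicks,Impressions,Position
preclinical drug assay,12,900,14.3
car-t cell therapy review,40,1500,6.2
kinase inhibitor screening protocol,3,400,27.8
western blot troubleshooting,1,250,41.0
"""

rows = list(csv.DictReader(io.StringIO(SAMPLE_EXPORT)))
candidates = striking_distance(rows)
for row in candidates:
    print(row["Query"], row["Position"])
```

To run it on a real export, replace the `io.StringIO(SAMPLE_EXPORT)` source with an opened CSV file.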

Data Prioritization:

- Sort the filtered list by "Clicks" and "Impressions" to see which near-ranking keywords are already generating some user engagement.

- For a more strategic approach, integrate this list with a third-party tool to overlay search volume and keyword difficulty data, prioritizing high-volume, low-competition terms [41].
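The two prioritization approaches above can be sketched as follows. The `volume` and `difficulty` fields stand in for whatever a third-party tool exports; their names, scales, and the fallback values for missing data are assumptions, and the sample figures are invented.

```python
def by_engagement(rows):
    """Sort by clicks, then impressions, both descending."""
    return sorted(rows, key=lambda r: (r["clicks"], r["impressions"]), reverse=True)


def overlay_external(rows, external):
    """Attach third-party metrics to each row.

    Queries missing from `external` get volume 0 and difficulty 100
    (an assumed worst case) so they sink to the bottom of the ranking.
    """
    merged = []
    for row in rows:
        extra = external.get(row["query"], {})
        merged.append({
            **row,
            "volume": extra.get("volume", 0),
            "difficulty": extra.get("difficulty", 100),
        })
    return merged


def by_opportunity(rows):
    """High search volume first; ties broken by lower difficulty."""
    return sorted(rows, key=lambda r: (-r["volume"], r["difficulty"]))


# Hypothetical striking distance rows and third-party metrics.
gsc_rows = [
    {"query": "preclinical drug assay", "clicks": 12, "impressions": 900},
    {"query": "kinase inhibitor screening protocol", "clicks": 3, "impressions": 400},
    {"query": "adc linker stability", "clicks": 12, "impressions": 1100},
]
external_metrics = {
    "preclinical drug assay": {"volume": 320, "difficulty": 45},
    "kinase inhibitor screening protocol": {"volume": 320, "difficulty": 20},
}

engagement_order = [r["query"] for r in by_engagement(gsc_rows)]
opportunity_order = [
    r["query"] for r in by_opportunity(overlay_external(gsc_rows, external_metrics))
]
print(engagement_order)
print(opportunity_order)
```

The two orderings can disagree, which is the point of the overlay: a query with few clicks today may still be the better target if it has meaningful volume and little competition.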

The following workflow diagram summarizes this keyword identification process:

Data Presentation: Quantitative Metrics for Keyword Analysis

The table below summarizes the key performance indicators (KPIs) available in Google Search Console that are critical for evaluating striking distance keyword opportunities.

Table 2: Key Quantitative Metrics for Striking Distance Keyword Analysis in Google Search Console

| Metric | Definition | Interpretation in Striking Distance Analysis |
|---|---|---|
| Clicks | The number of times users clicked on a link to your site from Google Search results for a specific query [10]. | Indicates a keyword's existing ability to drive traffic, even from a lower position. |
| Impressions | The number of times your URL appeared in search results viewed by a user for a specific query [10]. | Measures a keyword's visibility potential. High impressions with low clicks suggest a CTR problem. |
| Average Position | The highest position your site achieved in search results, averaged across all queries where it appeared [10]. | The primary filter for identifying striking distance keywords (positions 11-30) [40]. |
| Click-Through Rate (CTR) | The percentage of impressions that resulted in a click (Clicks ÷ Impressions) [10]. | A low CTR from a page-2 position highlights a potential opportunity to optimize title tags and meta descriptions. |
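As a worked illustration of the CTR definition in the table, the sketch below computes Clicks ÷ Impressions and flags page-2 queries with a low CTR. The 1% threshold, the 11-20 position range used for "page 2" (which assumes ten results per page), and the sample rows are all assumptions chosen for the example.

```python
def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate as a fraction (Clicks / Impressions)."""
    return clicks / impressions if impressions else 0.0


def low_ctr_on_page_two(rows, max_ctr=0.01):
    """Return queries on page 2 (position > 10 and <= 20) with CTR below max_ctr.

    `max_ctr` is a hypothetical cutoff, not a value from the source.
    """
    return [
        row["query"]
        for row in rows
        if 10 < row["position"] <= 20
        and ctr(row["clicks"], row["impressions"]) < max_ctr
    ]


# Hypothetical rows for illustration.
metric_rows = [
    {"query": "preclinical drug assay", "clicks": 4, "impressions": 900, "position": 14.3},
    {"query": "kinase inhibitor screening protocol", "clicks": 12, "impressions": 400, "position": 12.5},
    {"query": "western blot troubleshooting", "clicks": 1, "impressions": 250, "position": 41.0},
]

flagged = low_ctr_on_page_two(metric_rows)
print(flagged)
```

Queries flagged this way are candidates for title tag and meta description work rather than for new content.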

Optimization Protocols: From Page-Two to Page-One

Once striking distance keywords are identified, targeted optimization is required. The following diagram outlines the logical progression of optimization tactics, from least to most resource-intensive.

Protocol 2: Internal Linking for Authority Flow

Principle: Use internal links from high-authority pages on your site to pass relevance and "link equity" to the page targeting the striking distance keyword, using the keyword as anchor text [42] [40].

Methodology:

- Identify Source Pages: Use Google's `site:` search operator to find pages on your domain that thematically relate to or already mention the target keyword. Example: `site:youruniversity.edu "preclinical drug assay"` [40].

- Insert Links: On the identified source pages, naturally incorporate hyperlinks pointing to the target page. Use the striking distance keyword or a close variant as the anchor text to clearly signal the topic to search engines [42].

- Leverage Topic Clusters: For broad, competitive terms, consider creating a central "pillar" page (e.g., "Cancer Immunotherapy Research") and internally linking to it from related "cluster" pages (e.g., "CAR-T Cell Studies," "Checkpoint Inhibitor Trials") to build collective authority [43].
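When many striking distance keywords need source pages, the `site:` lookups from the first step can be prepared in bulk. This small sketch builds the search string and a corresponding Google search URL for each keyword; the helper names are hypothetical, and the domain is the placeholder used in the example above.

```python
from urllib.parse import urlencode


def site_search_query(domain: str, keyword: str) -> str:
    """Build a site: query with the keyword as an exact phrase."""
    return f'site:{domain} "{keyword}"'


def site_search_url(domain: str, keyword: str) -> str:
    """Build a Google search URL for the site: query (opened manually in a browser)."""
    return "https://www.google.com/search?" + urlencode(
        {"q": site_search_query(domain, keyword)}
    )


# Placeholder domain and illustrative keywords.
keywords = ["preclinical drug assay", "checkpoint inhibitor trials"]
for keyword in keywords:
    print(site_search_url("youruniversity.edu", keyword))
```

The URLs are intended for manual review of candidate source pages, one keyword at a time.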

Protocol 3: On-Page Content Optimization and Refreshing

Principle: Enhance the relevance, comprehensiveness, and user experience of the page ranking for the striking distance keyword to better satisfy user intent and search engine quality criteria [40].

Methodology:

- Analyze Top Competitors: Manually review the pages currently ranking in the top 10 for the target keyword. Identify common content elements, structure, and depth.

- Optimize Page Elements:

- Refresh and Expand Content:

Protocol 4: Targeted External Link Building

Principle: Acquire hyperlinks from other reputable websites to increase the authority and trustworthiness of the target page in the eyes of search engines [42] [40].

Methodology:

- Create Link-Worthy Content: Ensure the target page is a high-quality, authoritative resource that provides unique value, such as original research findings, a comprehensive protocol, or a novel database.

- Outreach and Promotion:

  - Identify relevant academic blogs, industry news sites, or professional associations that would find your content valuable for their audience.

  - Reach out to these sites to suggest your content as a resource.

- Link Reclamation: Use tools to find mentions of your institution or research that do not link back to your site. Contact the site owners to request that they add a link to your relevant page [42].

In the modern academic landscape, the discoverability of your research is just as crucial as its quality. While publication in a peer-reviewed journal is a fundamental step, it does not guarantee that the intended audience will find and engage with your work. The digital pathway to your research often begins not on a journal's website but on a search engine results page. Leveraging data from Google Search Console (GSC) provides a powerful, yet underutilized, method for academic researchers to understand the specific search terms—the queries—that lead the global scientific community to their publications. This process of mapping search queries to your publications allows you to bridge the gap between public search interest and your existing body of work, ultimately amplifying the reach and impact of your research.

Background and Rationale

The Discoverability Crisis in Academic Publishing

A growing "discoverability crisis" exists within scientific literature, where many articles, despite being indexed in major databases, remain undiscovered by potential readers [45]. This occurs because academics primarily discover new research by searching databases using specific key terms. If a publication's title, abstract, and keywords do not incorporate the terminology commonly used by its target audience, it is unlikely to appear in search results, thereby limiting its readership and potential for citation [45]. Research indicates that studies with appealing abstracts are not necessarily discovered if they lack basic search engine optimization [45].

How Search Engines Connect Queries to Content

Google Search operates through three primary stages that are relevant to academic discoverability [46]:

- Crawling: Googlebot crawls and downloads content from web pages, including academic publications.

- Indexing: Google analyzes the text and key content tags (like `<title>` elements and `alt` attributes) of the crawled page to understand its topics and stores this information in its index [46].

- Serving Search Results: When a user enters a query, Google's machines search the index for matching pages and return results based on relevance and quality [46].
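Because indexing draws on elements such as `<title>` and `alt`, it can be useful to check what a publication page actually exposes in them. The sketch below is a minimal audit using Python's built-in `html.parser`; the `TitleAltAudit` class and the sample HTML are hypothetical, and this is not a description of how Google itself processes pages.

```python
from html.parser import HTMLParser


class TitleAltAudit(HTMLParser):
    """Collect the page title and the sources of images lacking alt text."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.images_missing_alt = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True
        elif tag == "img":
            attr_map = dict(attrs)
            # Treat a missing or empty alt attribute as a gap.
            if not (attr_map.get("alt") or "").strip():
                self.images_missing_alt.append(attr_map.get("src") or "")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


# Hypothetical page markup for illustration.
SAMPLE_HTML = """<html><head><title>Preclinical Drug Assay Methods | Example Lab</title></head>
<body><img src="fig1.png" alt="Dose-response curve"><img src="fig2.png"></body></html>"""

audit = TitleAltAudit()
audit.feed(SAMPLE_HTML)
print(audit.title)
print(audit.images_missing_alt)
```

Comparing the extracted title against the queries reported in Search Console is a quick way to see whether the page uses the terminology its audience searches for.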

Google Search Console provides direct insight into how this process performs for your web properties, including institutional pages or lab websites that host your publications.

Application Notes: Leveraging Search Console for Academic Insight

Google Search Console's new Search Console Insights report, integrated directly into the main interface, offers an accessible way for researchers to understand their website's performance in Google Search without needing to be data experts [11]. This tool is instrumental for mapping queries to publications.

Key Features of Search Console Insights for Researchers

The report provides several critical data points for academic analysis:

- Total Clicks and Impressions: Monitor how often your research pages appear in search results (impressions) and how often users click on them (clicks), allowing you to track performance trends over time [11].

- Top Performing Pages: Identify which of your publications or academic profiles are attracting the most traffic from search, helping you understand which research resonates most with your audience [11].

- Top Search Queries: Discover the exact phrases users type into Google that lead them to your work. This is the core data for mapping search interest [11].