Beyond Keywords: Validating Research Terms with Citation Analysis for Comprehensive Discovery

This article provides a strategic framework for researchers, scientists, and drug development professionals to validate and optimize keyword choices through citation analysis techniques.

Abstract

This article provides a strategic framework for researchers, scientists, and drug development professionals to validate and optimize keyword choices through citation analysis techniques. It addresses four core needs: establishing the foundational importance of keyword validation in rigorous research; detailing methodological steps for performing cited reference searches in databases like Scopus, Web of Science, and Google Scholar; troubleshooting common issues like low search sensitivity and poor precision; and presenting comparative data on the performance of different search strategies. By addressing these needs together, the article helps researchers conduct more systematic and comprehensive literature reviews, so that critical evidence is not overlooked in fields like drug discovery and biomedical research.

Why Keyword Validation Matters: The Foundation of Rigorous Research

The Critical Role of Literature Search in Evidence-Based Fields

In evidence-based fields, from medicine to drug development, a systematic literature search is the foundational activity that separates robust, credible research from mere guesswork. It enables the integration of the best available research evidence with clinical expertise and patient values, forming the very core of evidence-based medicine (EBM) [1]. A well-executed literature search helps researchers broaden their knowledge base, critically appraise existing research, and effectively plan original studies [1]. For drug development professionals and scientists, this process is not merely academic: it directly influences research quality, resource allocation, and ultimately patient outcomes. The contemporary challenge lies in the overwhelming volume of scientific publications, with millions of papers published annually, making accurate and efficient literature analysis both more difficult and more essential than ever [2]. This guide compares traditional and modern, data-driven approaches to literature search, demonstrating how citation analysis and strategic keyword validation can significantly enhance research quality and efficiency.

Methodologies and Experimental Protocols for Literature Search

Formulating the Research Question: PICO/T Framework

The initial and most critical step in any literature search is formulating a focused, answerable research question. The PICO/T framework provides a structured, widely-adopted methodology for this purpose, breaking down a clinical or research query into its key components [1] [3] [4].

Experimental Protocol for PICO/T Development:

- P - Population/Problem: Precisely define the group of patients, biological system, or material under investigation. Example: "Patients with metastatic melanoma" or "TiO2-based ReRAM devices".

- I - Intervention/Indicator: Specify the main intervention, diagnostic tool, or material process being studied. Example: "immunotherapy with anti-PD1 antibodies" or "doping with HfO2".

- C - Comparison/Control: Identify the alternative or reference state for comparison. Example: "standard chemotherapy" or "pure ZnO thin films".

- O - Outcome: Determine the measurable or observable effects of interest. Example: "5-year survival rate" or "resistive switching performance".

- T - Time (optional): Define the relevant time frame for outcome measurement. Example: "over 24 months" [1] [3].

After defining the PICO/T components, researchers must generate a comprehensive list of keywords and synonyms for each concept. This can be achieved through brainstorming, consulting domain experts, and performing preliminary searches in resources like PubMed's MeSH database or reference texts such as UpToDate [1]. This protocol ensures the research question is specific, structured, and ready for translation into effective database queries.

Database Selection and Search Execution

No single database covers all published literature, making the choice of multiple, discipline-specific databases a mandatory step for a comprehensive search [4]. The experimental protocol involves selecting relevant databases and constructing sophisticated search strings using Boolean logic.

Quantitative Comparison of Major Literature Databases

| Database Name | Primary Subject Focus | Content Scope & Size | Access Model | Key Strengths |

|---|---|---|---|---|

| PubMed/MEDLINE [1] | Biomedicine, Life Sciences | >39 million citations [5] | Free | Comprehensive, powerful MeSH indexing, free access |

| EMBASE [1] | Biomedicine, Pharmacology | >32 million records, ~8,500 journals | Subscription | Strong European coverage, extensive drug & pharmacology data |

| Web of Science [1] | Multidisciplinary | >171 million records | Subscription | Strong citation analysis tools, covers conference proceedings |

| Cochrane Library [1] | Health Interventions | >5,000 systematic reviews | Subscription/Free | Premier source for pre-appraised evidence and systematic reviews |

| Scopus [1] | Multidisciplinary | Large abstract & citation database | Subscription | Broad coverage, integrated citation analysis |

| Google Scholar [4] | Multidisciplinary | Wide-ranging but non-transparent | Free | Easy access, broad coverage, includes "grey literature" |

Experimental Protocol for Search Execution:

- Database Selection: Choose at least 2-3 relevant databases from the table above to ensure broad coverage and avoid database-specific bias [4].

- Boolean Logic Construction: Combine PICO/T keywords using Boolean operators (AND, OR, NOT).

- Field Tags and Filters: Apply field tags (e.g., [Title/Abstract] in PubMed) to restrict searches to key parts of the citation. After running the search, use database filters for publication date, article type (e.g., Clinical Trial, Review), and species [3].

- Iterative Testing and Translation: Run the search, review results, and refine the strategy iteratively. Translate the final, effective search syntax for each selected database, as functionalities differ [6].
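The Boolean construction step can be sketched in Python. This is a minimal illustration, not a prescribed method: the function name `build_query`, the synonym lists, and the choice to OR synonyms within a concept and AND the concepts together are assumptions made for the example. Only the `[Title/Abstract]` tag comes from the protocol above.

```python
from typing import Dict, List, Optional


def build_query(concepts: Dict[str, List[str]], field_tag: Optional[str] = None) -> str:
    """OR the synonyms within each concept, then AND the concept groups together."""
    groups = []
    for terms in concepts.values():
        parts = []
        for term in terms:
            # Quote multi-word phrases so they are searched as exact phrases.
            text = f'"{term}"' if " " in term else term
            if field_tag:
                text += field_tag
            parts.append(text)
        groups.append("(" + " OR ".join(parts) + ")")
    return " AND ".join(groups)


# Hypothetical synonym lists for three PICO/T concepts.
pico = {
    "population": ["metastatic melanoma", "advanced melanoma"],
    "intervention": ["anti-PD1", "pembrolizumab", "nivolumab"],
    "outcome": ["survival"],
}
print(build_query(pico, field_tag="[Title/Abstract]"))
```

Keeping the concepts in a dictionary makes the translation step easier: the same concept lists can be rendered again with a different field tag or syntax for each database.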

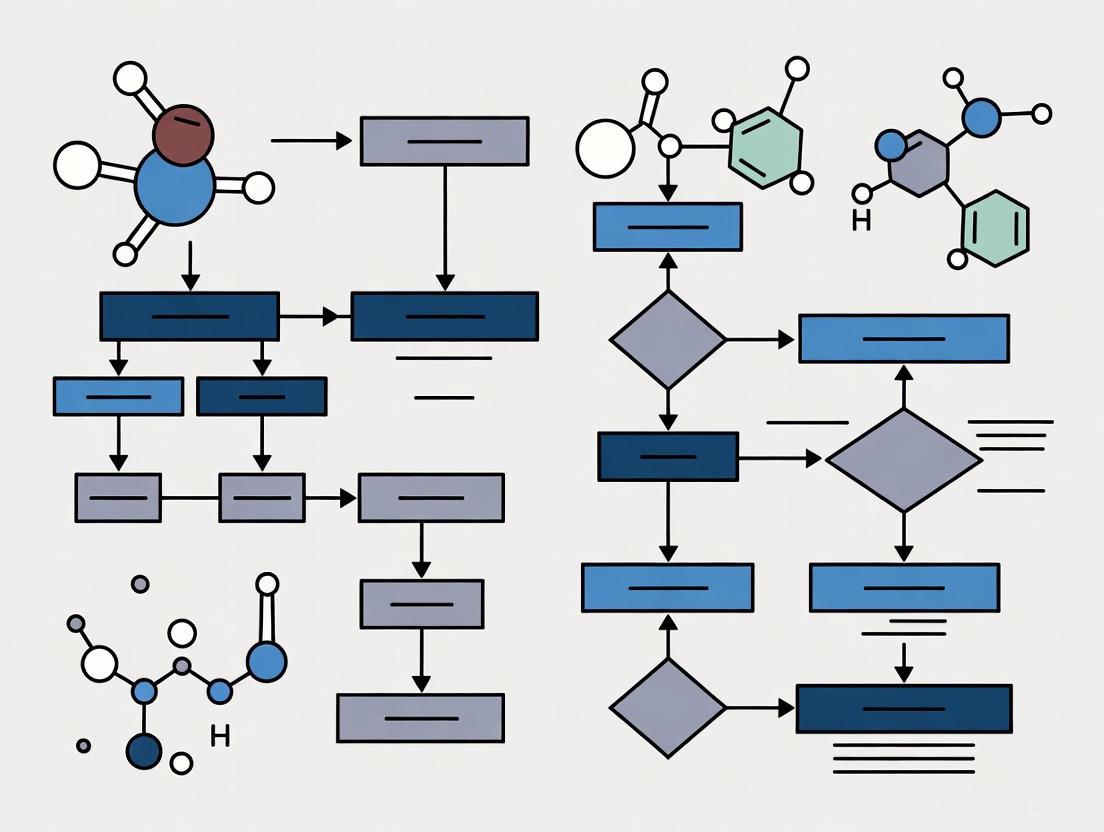

Citation analysis provides a quantitative, data-driven method to validate the completeness of a literature search and identify the most influential works. The following diagram illustrates the integrated workflow of traditional search with modern citation analysis for keyword validation.

Experimental Protocol for Citation Analysis:

- Identify Seminal Papers: After a broad initial search, use tools like Google Scholar, Scinapse, or Web of Science to sort results by citation count. Highly cited papers are typically foundational to the field and must be included [7].

- Map Citation Networks: Use citation mapping features in tools like Scinapse, Semantic Scholar, or VOSviewer to visualize how papers are interconnected. This reveals research clusters and key pathways of intellectual influence [7].

- Analyze Keywords in Context: Extract and analyze the keywords, titles, and abstracts of the identified high-impact papers. This validates whether the initial search terms align with the language used in influential literature, revealing potential gaps or alternative terminology [2].

- Refine and Re-search: Integrate the newly discovered terminology and concepts back into the search strategy. Execute a refined search to ensure the final literature set is both comprehensive and focused on high-quality, relevant evidence.
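The "analyze keywords in context" step can be approximated with a simple term count. The sketch below is an assumption-laden illustration: the titles are invented, the stopword list is deliberately tiny, and `candidate_terms` is a hypothetical helper, not part of any tool named above. It lists words that recur in the titles of high-impact papers but are absent from the current search terms.

```python
import re
from collections import Counter
from typing import Iterable, List, Set, Tuple

STOPWORDS = {"a", "an", "and", "by", "for", "in", "of", "on", "the", "to", "with"}


def candidate_terms(titles: Iterable[str], search_terms: Set[str],
                    top_n: int = 5) -> List[Tuple[str, int]]:
    """Return frequent title words that the current search strategy does not use."""
    known = {w for term in search_terms for w in re.findall(r"[a-z0-9]+", term.lower())}
    counts: Counter = Counter()
    for title in titles:
        # Count each word once per title so one long title cannot dominate.
        words = set(re.findall(r"[a-z0-9]+", title.lower()))
        counts.update(w for w in words if w not in STOPWORDS and w not in known)
    return counts.most_common(top_n)


# Invented titles standing in for the highly cited papers found in step 1.
titles = [
    "Resistive switching in TiO2 memristor devices",
    "Memristor arrays for neuromorphic computing",
    "HfO2 doping and resistive switching performance",
]
print(candidate_terms(titles, {"resistive switching", "TiO2", "ReRAM"}))
```

Words that surface here are only candidates. Each one still needs a manual check before it is added to the refined search.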

Comparative Analysis: Tools and Reagents for Effective Literature Search

Research Reagent Solutions: The Digital Toolkit

Just as a wet-lab experiment requires specific reagents, a rigorous literature search relies on a digital toolkit of software and platforms. The table below details essential "research reagents" for modern evidence-based research.

Essential Digital Toolkit for Literature Search & Analysis

| Tool Category & Name | Primary Function | Key Features | Ideal Use Case |

|---|---|---|---|

| Citation Databases | | | |

| PubMed [1] [5] | Bibliographic Database | MeSH indexing, Clinical Queries, free access | Foundational biomedical literature search |

| EMBASE [1] | Bibliographic Database | In-depth pharmacological data, European journal coverage | Comprehensive drug development research |

| Citation Analysis Tools | | | |

| Google Scholar [7] | Search Engine | "Cited by" tracking, basic citation counts | Quick discovery of influential papers |

| Scinapse [7] | Scholarly Search Engine | Citation graphs, author influence metrics | Deep citation network analysis |

| Semantic Scholar [7] | AI-Powered Search | Influential citation detection, paper recommendations | AI-enhanced discovery and trend analysis |

| Automated Verification | | | |

| SemanticCite [8] | Citation Verification | Full-text semantic analysis, 4-class accuracy rating | Validating citation accuracy in manuscripts/reviews |

| Reference Management | | | |

| Zotero, Mendeley | Reference Management | Bibliographic formatting, PDF management, citation plug-ins | Organizing references and generating bibliographies |

Citation analysis transcends simple counting; it allows researchers to map the intellectual structure of a field. By analyzing co-citation patterns (how often two works are cited together), distinct research communities and trends emerge. The following diagram conceptualizes the structure of a research field as revealed by citation network analysis.

This conceptual network, built from real-world data [2], shows how keyword and citation analysis can automatically identify sub-fields. For instance, in Resistive Random-Access Memory (ReRAM) research, distinct communities were identified focusing on traditional metal oxides, novel materials like graphene and perovskites, and neuromorphic computing applications. The "bursting" node represents a recent paper gaining rapid citations, signaling an emerging trend. Mapping these networks allows researchers to visually contextualize their work, identify collaborators or knowledge gaps, and validate that their search strategy covers all relevant clusters.
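Co-citation counting, as described above, can be sketched in a few lines. The reference lists below are invented, and `cocitation_counts` is a hypothetical helper; dedicated tools such as VOSviewer apply further normalization and clustering that this sketch omits.

```python
from collections import Counter
from itertools import combinations
from typing import Dict, List


def cocitation_counts(reference_lists: Dict[str, List[str]]) -> Counter:
    """Count how often each pair of works appears together in one reference list."""
    pairs: Counter = Counter()
    for refs in reference_lists.values():
        # Sort and deduplicate so (A, B) and (B, A) map to the same key.
        for a, b in combinations(sorted(set(refs)), 2):
            pairs[(a, b)] += 1
    return pairs


# Invented data: each citing paper maps to the works it references.
reference_lists = {
    "paper1": ["A", "B", "C"],
    "paper2": ["A", "B"],
    "paper3": ["B", "C"],
}
for pair, count in cocitation_counts(reference_lists).most_common():
    print(pair, count)
```

Pairs with high counts are the ones a clustering step would tend to place in the same research community.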

Discussion: Integration and Application in Drug Development

The integration of systematic search methodologies with quantitative citation analysis creates a powerful, validated approach to literature review. In fast-paced, high-stakes fields like drug discovery and development, this integrated approach is particularly valuable. The use of Artificial Intelligence (AI) and Machine Learning (ML) in drug discovery—for tasks like virtual screening (VS), quantitative structure-activity relationship (QSAR) modeling, and predicting physicochemical properties—generates massive datasets [9]. A robust literature search is not only crucial for building and training these AI/ML models but also for validating their outputs against the established body of knowledge. Furthermore, AI-powered tools like SemanticCite [8] are now emerging to address the challenge of citation accuracy, using full-text analysis to verify that citations semantically support the claims they reference, thus enhancing overall research integrity.

The critical role of the literature search is thus evolving. It is no longer a passive background activity but an active, iterative, and data-driven process. By framing research questions with PICO/T, executing structured searches across multiple databases, and validating the findings through citation network analysis, researchers and drug development professionals can ensure their work is built upon a comprehensive, unbiased, and robust foundation of evidence. This rigorous approach directly contributes to higher-quality research, more efficient resource allocation, and more reliable scientific progress.

Understanding the Limitations of Traditional Keyword Searching

In academic and scientific research, a thorough literature review is a foundational step, and systematic reviews are regarded as the highest level of evidence for informing new studies and clinical practice [10]. For decades, the primary tool for this task has been traditional keyword searching, a method reliant on lexical matches between search terms and the terms present in database records such as titles and abstracts. However, the escalating volume of scientific publications, numbering in the millions annually, exposes critical vulnerabilities in this approach [2]. This guide compares the performance of traditional keyword searching against an alternative method, citation analysis, as part of a broader argument for validating keyword choices through citation analysis. For researchers, scientists, and drug development professionals, the choice of search methodology directly affects the completeness of evidence, the validity of meta-analyses, and the efficacy of drug development pipelines.

Traditional keyword searching and citation analysis represent two fundamentally different paradigms for information retrieval in research.

Traditional Keyword Searching

This method operates on a lexical level. Researchers construct search strategies using a combination of keywords and database-specific subject headings, aiming to maximize the likelihood of identifying all relevant studies [10]. Its effectiveness is inherently limited by several factors:

- Indexing Inconsistencies: Research instruments and specific methodologies are often not well-indexed as subject headings in major biomedical databases [10].

- Terminological Variability: Authors may use different terms to describe the same outcome or concept across studies, and these terms may not be present in the abstract if the outcome was not the primary focus of the study [10].

- Lack of Semantic Understanding: Traditional search engines historically relied on surface-level text patterns rather than understanding the underlying meaning or intent behind the query [11].

Cited Reference Searching

Cited reference searching is a form of citation analysis that begins with identifying a seminal or validation article for a specific research instrument or methodology. The searcher then uses citation indexes to find newer articles that have cited that original paper [10]. This method is powerful because it leverages the scholarly practice of acknowledging instrument developers by citing the first publication or validation study. It effectively creates a web of related research that is logically connected through the use of a common tool, bypassing the problems of inconsistent terminology.
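The forward-tracking logic can be illustrated on a toy citation index. Everything in this sketch is hypothetical: the `Paper` record, the identifiers, and the in-memory list stand in for a real citation index such as Scopus or Web of Science, whose interfaces are not modeled here.

```python
from typing import List, NamedTuple, Set


class Paper(NamedTuple):
    paper_id: str
    year: int
    references: List[str]


def cited_reference_search(papers: List[Paper], seeds: Set[str],
                           start_year: int, end_year: int) -> List[str]:
    """Return IDs of papers in the date range that cite at least one seed paper."""
    return sorted(
        p.paper_id
        for p in papers
        if start_year <= p.year <= end_year and seeds & set(p.references)
    )


# Invented index: two seed papers and three later papers.
index = [
    Paper("study-2005", 2005, ["instrument-1992", "other-1999"]),
    Paper("study-2010", 2010, ["validation-1997"]),
    Paper("study-2011", 2011, ["other-1999"]),
]
seeds = {"instrument-1992", "validation-1997"}
print(cited_reference_search(index, seeds, 2003, 2012))
# → ['study-2005', 'study-2010']
```

Note that the match depends only on the reference list, so a paper is found even if its title and abstract never name the instrument.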

Table 1: Fundamental Characteristics of Search Methodologies

| Characteristic | Traditional Keyword Searching | Citation Analysis (Cited Reference Search) |

|---|---|---|

| Primary Mechanism | Lexical matching of words in search query to words in database records (titles, abstracts, keywords). | Tracking scholarly citations from a known, seminal paper forward through the literature. |

| Underlying Logic | Finds literature that mentions similar terminology. | Finds literature that uses the same instrument or methodology. |

| Dependency | Consistent and comprehensive reporting of keywords and concepts by authors and indexers. | Scholarly convention of citing original methodological sources. |

| Best Use Case | Topical searches, exploratory research in nascent fields, identifying broad research themes. | Identifying all studies that use a particular assessment instrument or specific methodology for systematic reviews or meta-analyses. |

The following workflow illustrates the typical processes for conducting literature reviews using these two distinct methods, highlighting their different starting points and pathways.

Experimental Protocol: A Controlled Comparison

To quantitatively compare the effectiveness of these two methodologies, we can draw upon a rigorous case study design from the literature [10]. The following protocol outlines the key steps for replicating such a comparative experiment.

Objective

To compare the effectiveness (precision and sensitivity) of traditional keyword searches versus cited reference searches in identifying studies that used a specific healthcare decision-making instrument, the Control Preferences Scale (CPS) [10].

Materials and Reagent Solutions

Table 2: Essential Research Materials for Search Methodology Comparison

| Item Name | Type/Provider | Primary Function in Experiment |

|---|---|---|

| Bibliographic Databases | PubMed, Scopus, Web of Science (WOS) | Provide access to scientific literature and enable structured keyword searches. |

| Full-Text Database | Google Scholar | Provides access to a broader, full-text index of literature for supplementary searching. |

| Seminal Publications | Original CPS introduction [19] and validation [20] studies from 1992 and 1997. | Serve as the known starting points for the cited reference search methodology. |

| Search Interface | Institutional access to database platforms. | Allows for the execution of standardized search strategies and retrieval of results. |

Detailed Workflow

- Define the Gold Standard: The combined results from both keyword and cited reference searches across all databases are aggregated. After removing duplicates and non-English citations, the full text of each unique citation is reviewed to determine if the CPS was indeed used. This final set of confirmed CPS studies serves as the "gold standard" against which each method is measured [10].

- Keyword Search Execution: A standardized keyword search is performed in all four databases (PubMed, Scopus, WOS, Google Scholar). The search is limited to a specific time period (e.g., 2003-2012) and uses exact phrases for the instrument name: "control preference scale" OR "control preferences scale" [10].

- Cited Reference Search Execution: In the databases that support it (Scopus, WOS, Google Scholar), a cited reference search is performed using the two seminal CPS publications as the starting points. The same publication date filters are applied [10].

- Data Analysis: For each search method in each database, two key metrics are calculated [10]:

  - Precision: The percentage of retrieved citations that are actually relevant (i.e., that use the CPS). Precision = (Number of Relevant Articles Retrieved) / (Total Number of Articles Retrieved)

  - Sensitivity (Recall): The percentage of all known relevant articles (the "gold standard" set) that were retrieved by the search. Sensitivity = (Number of Relevant Articles Retrieved) / (Total Number of Relevant Articles in Gold Standard)
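The two metrics defined above translate directly into set operations. The following is a minimal sketch with invented article identifiers; `precision_sensitivity` is a hypothetical helper name, and the numbers are illustrative rather than taken from the case study.

```python
from typing import Set, Tuple


def precision_sensitivity(retrieved: Set[str], gold_standard: Set[str]) -> Tuple[float, float]:
    """Return (precision, sensitivity) as fractions between 0 and 1."""
    relevant_retrieved = retrieved & gold_standard
    # Guard against empty sets to avoid division by zero.
    precision = len(relevant_retrieved) / len(retrieved) if retrieved else 0.0
    sensitivity = len(relevant_retrieved) / len(gold_standard) if gold_standard else 0.0
    return precision, sensitivity


# Invented example: 10 confirmed relevant articles, 4 retrieved, 3 of them relevant.
gold = {f"art{i}" for i in range(10)}
retrieved = {"art0", "art1", "art2", "noise1"}
precision, sensitivity = precision_sensitivity(retrieved, gold)
print(f"precision={precision:.0%}, sensitivity={sensitivity:.0%}")
# → precision=75%, sensitivity=30%
```

The example shows how a search can be precise yet insensitive: most of what it returns is relevant, but most of the relevant set is never returned.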

Results: Quantitative Performance Data

The experimental results from the case study provide clear, quantitative evidence of the performance gap between the two methods [10]. The following table summarizes the key findings.

Table 3: Comparative Performance of Search Methods for Identifying CPS Studies (2003-2012) [10]

| Search Method & Database | Average Precision | Average Sensitivity | Key Interpretation |

|---|---|---|---|

| Keyword Search (Bibliographic DBs) | 90% | 16% | Very accurate when results are found, but misses the vast majority of relevant studies. |

| PubMed | ~90% | ~16% | |

| Scopus | ~90% | ~16% | |

| Web of Science (WOS) | ~90% | ~16% | |

| Keyword Search (Google Scholar) | 54% | 70% | Finds more relevant studies but requires sifting through many irrelevant results. |

| Cited Reference Search | 35% - 75% | 45% - 54% | A consistently sensitive method, finding about half of all relevant studies, with precision varying by the starting article. |

| Scopus (using 1997 article) | 75% | ~50% | Highest precision found in this study. |

| WOS (using 1992 article) | ~40% | ~45% | |

| Google Scholar (using 1997 article) | 63% | ~54% | |

The data reveals a critical trade-off. Keyword searches in curated bibliographic databases are highly precise but suffer from abysmally low sensitivity, missing approximately 84% of relevant studies in this case. Conversely, cited reference searches demonstrate moderate to good sensitivity, consistently identifying about half of all relevant studies, regardless of the database used. This makes cited reference searching a far more comprehensive strategy when the goal is to find all studies using a particular instrument [10].

Discussion and Research Implications

Synthesizing the Evidence

The experimental data lead to a clear conclusion: compared with keyword searching in curated bibliographic databases, cited reference searching is a more sensitive and comprehensive technique for locating studies that employ a specific research instrument or methodology [10]. Relying solely on such keyword searches for a systematic review on this kind of topic would result in a profoundly incomplete evidence base, jeopardizing the validity of any subsequent meta-analysis. The shortcomings of traditional keyword searching are not limited to academic databases; they mirror a broader shift in search, where modern AI-powered systems prioritize understanding semantic meaning and user intent over superficial lexical matching [11] [12].

A Practical Guide for the Researcher

Given these limitations, a synergistic search strategy is essential for rigorous research.

- Use Citation Analysis as a Primary Tool: When conducting a review on studies that use a specific instrument, always begin by identifying the key seminal and validation papers for that instrument. Use these as seeds for cited reference searches in databases like Scopus and Web of Science.

- Use Keyword Searching as a Supplementary Filter: Employ keyword searches to quickly find some relevant articles and to identify potential seminal papers in a new field. They can also help find studies where the instrument use is explicitly mentioned in the title or abstract.

- Embrace a Multi-Method, Multi-Database Approach: As confirmed by research, "using a combination of search strategies, techniques, and multiple databases is recommended for most systematic reviews in order to identify all relevant articles" [10]. Goals, time, and resources should dictate the specific combination of methods and databases used.
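Combining methods and databases means merging overlapping result sets. The sketch below assumes each search exports a list of DOIs and deduplicates on a normalized DOI. The DOIs, source labels, and helper names are invented, and real exports often need fallback matching on title and year when a DOI is missing.

```python
from typing import Dict, Iterable, List


def normalize_doi(doi: str) -> str:
    """Lowercase a DOI and strip common prefixes so duplicates compare equal."""
    doi = doi.strip().lower()
    for prefix in ("https://doi.org/", "http://doi.org/", "doi:"):
        if doi.startswith(prefix):
            doi = doi[len(prefix):]
    return doi


def merge_results(result_sets: Dict[str, Iterable[str]]) -> Dict[str, List[str]]:
    """Map each unique DOI to the list of searches that retrieved it."""
    merged: Dict[str, List[str]] = {}
    for source, dois in result_sets.items():
        for doi in dois:
            sources = merged.setdefault(normalize_doi(doi), [])
            if source not in sources:
                sources.append(source)
    return merged


# Invented exports from two search methods.
results = {
    "keyword:pubmed": ["10.1000/abc", "10.1000/def"],
    "cited-ref:scopus": ["https://doi.org/10.1000/ABC", "10.1000/xyz"],
}
merged = merge_results(results)
print(len(merged), "unique records")
print(merged["10.1000/abc"])
```

Recording which searches found each record also shows what every method contributed uniquely, which helps when deciding how to spend limited screening time.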

For the modern scientist, validating keyword choices through citation analysis is not merely an academic exercise; it is a necessary step in ensuring the integrity and comprehensiveness of the scientific literature review process.

In academic research, particularly within drug development and computational repurposing studies, keyword validation and citation analysis serve as critical methodologies for establishing research credibility and ensuring comprehensive literature discovery. Keyword validation refers to the systematic process of verifying that search terms accurately capture relevant scientific literature, while citation analysis examines reference patterns to identify influential works and research trends. Within drug development, these methodologies enable researchers to navigate vast biomedical literature efficiently, identify repurposing candidates, and validate computational predictions against existing knowledge.

The integration of these approaches addresses fundamental challenges in modern research. With millions of scientific papers published annually [2] and approximately 94% of content receiving no backlinks [13], systematic literature discovery becomes essential. For drug development professionals, rigorous keyword validation ensures that computational drug repurposing predictions undergo proper verification against experimental and clinical evidence [14], while citation analysis helps identify foundational studies and emerging trends within targeted therapeutic areas.

Methodological Framework

Keyword Validation Approaches

Keyword validation employs multiple techniques to ensure search terms comprehensively capture relevant literature. This process begins with concept identification, where researchers break down their topic into core components [15]. For each concept, they develop extensive terminology lists including synonyms, spelling variations, acronyms, and broader/narrower terms. Contemporary approaches increasingly incorporate artificial intelligence to generate additional keyword suggestions, though these require cross-referencing with established literature and database thesauri for validation [15].

Strategic keyword placement within titles, abstracts, and keyword sections significantly enhances discoverability. Research indicates that 92% of studies use redundant keywords in their titles or abstracts, undermining optimal database indexing [16]. Effective validation requires implementing recognizable terminology frequently employed within the target research domain, as papers containing common field-specific terms demonstrate increased citation rates [16].
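A rough check for keyword redundancy is sketched below. It assumes "redundant" means an author keyword that already appears verbatim in the title or abstract; that reading, the helper name `redundant_keywords`, and the sample record are assumptions for illustration. Plain substring matching is crude and will miss inflected or hyphenated variants.

```python
from typing import List


def redundant_keywords(keywords: List[str], title: str, abstract: str) -> List[str]:
    """Return the keywords that already occur verbatim in the title or abstract."""
    text = f"{title} {abstract}".lower()
    return [kw for kw in keywords if kw.lower() in text]


# Invented manuscript metadata.
keywords = ["drug repurposing", "citation analysis", "bibliometrics"]
title = "Citation analysis for drug repurposing"
abstract = "We map the literature on approved compounds."
print(redundant_keywords(keywords, title, abstract))
# → ['drug repurposing', 'citation analysis']
```

Under this reading, a flagged keyword could be swapped for a synonym or related term that the title and abstract do not already supply.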

Table 1: Keyword Validation Techniques and Applications

| Technique | Methodology | Primary Application | Validation Measure |

|---|---|---|---|

| Concept Analysis | Breaking topics into core components and related terms | Initial search strategy development | Coverage assessment across key concepts |

| AI-Assisted Generation | Using language models to suggest related terminology | Expanding beyond researcher familiarity | Cross-referencing with database thesauri |

| Term Frequency Analysis | Identifying commonly used terminology in target field | Optimizing abstract and title content | Database indexing effectiveness |

| Structural Optimization | Strategic placement of key terms in title/abstract | Search engine optimization | Search ranking and discoverability metrics |

Citation analysis employs both quantitative and qualitative approaches to evaluate scholarly impact and research relationships. Quantitative methods primarily utilize citation counts—the total number of times an author's work has been cited—and related metrics like the h-index and average citation rates [17]. Qualitative approaches examine citation networks and contexts, investigating how and why works reference each other to reveal knowledge flows and research trajectories [7].
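Of the quantitative metrics mentioned, the h-index is easy to compute by hand: it is the largest h such that h of an author's papers each have at least h citations. A minimal sketch with invented citation counts follows.

```python
from typing import Iterable


def h_index(citations: Iterable[int]) -> int:
    """Largest h such that h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank
        else:
            break
    return h


print(h_index([10, 8, 5, 4, 3]))   # → 4
print(h_index([25, 8, 5, 3, 3]))   # → 3
```

The second example shows why the h-index is less sensitive to a single highly cited paper than a raw citation total.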

Advanced citation analysis incorporates semantic verification through systems like SemanticCite, which employs AI-powered full-text analysis to verify citation accuracy [8]. These systems utilize multi-class classification schemes (Supported, Partially Supported, Unsupported, Uncertain) that capture nuanced relationships between citations and their sources, addressing the challenge of semantic citation errors that misrepresent referenced materials [8].

Table 2: Citation Analysis Methods and Tools

| Method Category | Specific Metrics | Analysis Tools | Key Applications |

|---|---|---|---|

| Bibliometric Analysis | Citation counts, h-index, impact factor | Web of Science, Scopus, Google Scholar | Research impact assessment, influential paper identification |

| Network Analysis | Co-citation analysis, bibliographic coupling | CiteSpace, VOSviewer | Research front identification, knowledge structure mapping |

| Semantic Analysis | Citation context, claim-source alignment | SemanticCite, scite | Citation accuracy verification, research integrity |

| Content Analysis | Citation classifications, purpose analysis | SciCite, ACL-ARC | Research methodology tracking, knowledge flow |

Experimental Protocols and Workflows

Keyword Validation Protocol

The following workflow illustrates the systematic process for validating keyword effectiveness in literature retrieval, particularly applicable to drug development research:

Figure 1: Keyword Validation and Optimization Workflow

The keyword validation protocol begins with research scope definition, where investigators clearly articulate their literature review objectives [13]. For drug repurposing studies, this typically involves identifying specific drug classes, disease mechanisms, or therapeutic areas of interest. Researchers then identify core concepts and generate comprehensive synonym lists using resources like MeSH (Medical Subject Headings) for PubMed searches [15].

The experimental validation phase involves testing search sensitivity through iterative database queries. Researchers execute preliminary searches using their candidate terms and analyze results for relevance and comprehensiveness. This process includes coverage analysis against known seminal papers—identified through citation analysis—to verify the search strategy captures foundational works [17]. Drug development researchers might further validate keywords by testing whether their searches retrieve articles referenced in clinical trial documentation or known computational repurposing studies [14].
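The coverage analysis against known seminal papers can be sketched as a set comparison. The identifiers and the helper `seed_coverage` are hypothetical. In practice the seed list would come from the citation analysis, and the retrieved IDs from a database export.

```python
from typing import List, Set, Tuple


def seed_coverage(retrieved_ids: Set[str], seed_ids: Set[str]) -> Tuple[float, List[str]]:
    """Return the fraction of seed papers retrieved and the seeds that were missed."""
    found = seed_ids & retrieved_ids
    missing = sorted(seed_ids - retrieved_ids)
    coverage = len(found) / len(seed_ids) if seed_ids else 0.0
    return coverage, missing


# Invented identifiers for four seminal papers and one candidate search result.
seeds = {"pmid:111", "pmid:222", "pmid:333", "pmid:444"}
retrieved = {"pmid:111", "pmid:222", "pmid:333", "pmid:999"}
coverage, missing = seed_coverage(retrieved, seeds)
print(f"coverage={coverage:.0%}, missing={missing}")
# → coverage=75%, missing=['pmid:444']
```

The missed seeds are the useful output: inspecting their titles, abstracts, and index terms suggests which synonyms the candidate search string still lacks.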

The citation analysis protocol employs both computational tools and manual verification to evaluate research impact and knowledge structures:

Figure 2: Citation Analysis Methodology Workflow

The citation analysis protocol begins with clear objective definition, determining whether the analysis aims to identify foundational papers, track research trends, or validate computational predictions [7]. For drug repurposing applications, this typically involves collecting publication data from multiple databases (Web of Science, Scopus, PubMed) to ensure comprehensive coverage [14] [17].

The citation network mapping phase employs tools like CiteSpace or VOSviewer to visualize relationships between publications, authors, and institutions. This process helps identify research fronts and knowledge dissemination patterns. For computational drug repurposing, citation analysis can validate predictions by identifying existing clinical trials or experimental studies that support hypothesized drug-disease relationships [14]. The protocol concludes with semantic context analysis using advanced systems like SemanticCite, which verifies that citations accurately represent source content through full-text examination rather than relying solely on abstracts [8].

Comparative Analysis of Research Tools

Keyword Research and Validation Tools

Table 3: Keyword Research and Validation Tools Comparison

| Tool Name | Primary Function | Key Features | Best For | Limitations |

|---|---|---|---|---|

| Database Thesauri | Standardized terminology | Controlled vocabulary, hierarchical relationships | Systematic reviews, comprehensive searching | Field-specific, requires familiarity |

| AI-Assisted Generators | Keyword suggestion | Rapid expansion, synonym generation | Initial exploration, interdisciplinary topics | Requires validation, potential inaccuracies |

| Google Trends | Search term popularity | Temporal trends, geographic variations | Public health topics, emerging areas | Limited to public searches, general audience |

| Natural Language Processing | Automated term extraction | Tokenization, lemmatization, POS tagging | Large-scale literature analysis [2] | Technical setup, domain adaptation needed |

Table 4: Citation Analysis Tools for Drug Development Research

| Tool Platform | Citation Coverage | Key Metrics | Specialized Features | Drug Development Applications |

|---|---|---|---|---|

| Web of Science | Selective journal coverage | Citation counts, h-index, impact factor | Citation mapping, historical data | Established drug targets, foundational research |

| Scopus | Broader journal coverage | Citation tracking, author profiles | SciVal integration, trend visualization | Emerging areas, interdisciplinary research |

| Google Scholar | Most comprehensive | Citation counts, related articles | Broad coverage, includes grey literature | Comprehensive discovery, early-stage research |

| SemanticCite | Full-text verification | 4-class accuracy classification | Claim-source alignment, evidence snippets | Validation of computational predictions [8] |

| Connected Papers | Reference network | Similar papers visualization | Research front identification | Drug mechanism exploration, target discovery |

Research Reagent Solutions

The following table details essential digital "research reagents"—specialized tools and resources required for implementing robust keyword validation and citation analysis protocols in drug development research.

Table 5: Essential Research Reagent Solutions for Literature Analysis

| Research Reagent | Function | Application Context | Access Method |

|---|---|---|---|

| MeSH (Medical Subject Headings) | Controlled vocabulary thesaurus | PubMed searches, systematic reviews | NIH/NLM website |

| SemanticCite Framework | Full-text citation verification | Validating computational predictions [8] | Open-source implementation |

| Natural Language Processing Pipelines | Automated keyword extraction from titles/abstracts | Research trend analysis [2] | spaCy, NLTK libraries |

| Citation Network Analyzers | Visualization of research relationships | Identifying key papers and emerging trends [7] | CiteSpace, VOSviewer |

| Bibliographic Databases | Comprehensive publication metadata | Cross-disciplinary literature discovery [17] | Web of Science, Scopus |

| ClinicalTrials.gov | Database of clinical studies | Validating drug repurposing candidates [14] | NIH repository |

Integration in Drug Development Research

Within computational drug repurposing, keyword validation and citation analysis form essential components of the validation pipeline. After computational methods predict potential drug-disease relationships, researchers employ validated keyword strategies to conduct comprehensive literature reviews assessing whether supporting evidence exists in biomedical literature [14]. This process often reveals that high-impact repurposing candidates demonstrate citation patterns showing connections across traditionally separate research domains.

Advanced citation verification systems address critical validation challenges in computational drug repurposing. Studies indicate approximately 25% of citations in prestigious science journals contain semantic errors that misrepresent sources [8]. Systems employing full-text analysis rather than abstract-only examination can detect when drug repurposing predictions reference papers that don't actually support the claimed relationships. This rigorous validation approach is particularly important given emerging challenges with AI-generated content, where advanced language models may produce convincing but non-existent citations [8].

For early-stage biotechnology firms focusing on rare diseases, these methodologies provide cost-effective approaches to de-risk drug development portfolios. Citation analysis can identify off-label usage patterns in clinical literature, while keyword validation ensures comprehensive discovery of preclinical evidence supporting repurposing candidates [18]. This integrated approach accelerates the validation of drug development platforms by systematically connecting computational predictions with existing biological knowledge and clinical observations.

How Validated Keywords Improve Discovery and Knowledge Transfer

In the vast and rapidly expanding universe of scientific literature, validated keywords serve as essential navigational tools that directly enhance research discovery and knowledge transfer. For researchers, scientists, and drug development professionals, the precision of keyword selection is not merely an administrative task but a fundamental research competency that significantly impacts the efficiency and effectiveness of scientific literature retrieval and analysis. Within high-stakes fields like pharmaceutical research, where the average likelihood of a drug candidate achieving first approval stands at approximately 14.3%, optimizing information retrieval is crucial for avoiding costly duplication and accelerating innovation [19].

The process of keyword validation moves beyond simple word selection to establish a structured terminology that accurately represents research concepts and contexts. This validation is frequently achieved through citation analysis, which provides a quantitative method to verify that chosen keywords effectively map the intellectual landscape of a scientific domain. When properly validated, keywords transform from simple tags into powerful instruments that connect disparate research, reveal emerging trends, and facilitate the cross-pollination of ideas between disciplines—a capability particularly valuable in the increasingly interdisciplinary field of drug discovery [2] [20].

A direct comparison of search methodologies reveals significant differences in performance characteristics. Research analyzing the effectiveness of various approaches for locating studies using a specific healthcare instrument (the Control Preferences Scale) demonstrated clear trade-offs between precision and sensitivity [10].

Table 1: Performance Comparison of Search Methods Across Databases

| Database | Search Method | Precision (%) | Sensitivity (%) |

|---|---|---|---|

| PubMed | Keyword | 92 | 15 |

| Scopus | Keyword | 91 | 16 |

| Web of Science | Keyword | 88 | 17 |

| Google Scholar | Keyword | 54 | 70 |

| Scopus | Cited Reference | 75 | 45 |

| Web of Science | Cited Reference | 35 | 54 |

| Google Scholar | Cited Reference ('92) | 35 | 51 |

| Google Scholar | Cited Reference ('97) | 63 | 48 |

The data reveal that traditional keyword searching in bibliographic databases offers high precision (88-92%) but low sensitivity (15-17%), missing up to 85% of relevant studies. Conversely, cited reference searching provides moderate sensitivity (45-54%), consistently identifying approximately half of all relevant publications, at the cost of more variable precision (35-75%) [10]. This empirical evidence underscores a critical limitation of relying solely on keyword-based approaches: their incompleteness can lead to significant gaps in literature reviews and meta-analyses, ultimately compromising research quality and decision-making in drug development pipelines.
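
To make the two metrics concrete, the following minimal sketch computes precision (the share of retrieved records that are relevant) and sensitivity (the share of relevant records that were retrieved) from sets of record identifiers. The identifiers and counts are hypothetical toy data, not the figures reported in [10].

```python
def precision_and_sensitivity(retrieved, relevant):
    """Return (precision, sensitivity) as fractions between 0 and 1."""
    retrieved, relevant = set(retrieved), set(relevant)
    true_positives = len(retrieved & relevant)
    precision = true_positives / len(retrieved) if retrieved else 0.0
    sensitivity = true_positives / len(relevant) if relevant else 0.0
    return precision, sensitivity


# Hypothetical toy data: 20 relevant studies exist in total.
relevant_ids = {f"study-{i}" for i in range(20)}
# A keyword search returns 4 records, 3 of them relevant.
keyword_hits = {"study-0", "study-1", "study-2", "other-0"}
# A cited reference search returns 20 records, 10 of them relevant.
cited_hits = {f"study-{i}" for i in range(10)} | {f"other-{i}" for i in range(10)}

for label, hits in [("keyword", keyword_hits), ("cited reference", cited_hits)]:
    p, s = precision_and_sensitivity(hits, relevant_ids)
    print(f"{label}: precision={p:.0%}, sensitivity={s:.0%}")
```

In this toy case the keyword search is more precise but finds fewer of the relevant studies, which mirrors the direction of the trade-off in the table.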

Experimental Protocols for Keyword Validation

The following protocol provides a systematic methodology for validating keyword effectiveness through citation analysis:

- Step 1: Identify Seminal Publications → Select 2-3 foundational papers that introduced key concepts, methodologies, or instruments relevant to the research field [10].

- Step 2: Execute Cited Reference Search → Using multiple databases (Scopus, Web of Science, Google Scholar), retrieve all articles citing these seminal works [10] [21].

- Step 3: Extract and Analyze Keyword Patterns → From the retrieved articles, compile keywords and analyze frequency distributions to identify consistent terminology [2].

- Step 4: Map Conceptual Relationships → Construct keyword co-occurrence networks to visualize relationships between concepts and identify central terms [2].

- Step 5: Validate Terminology Effectiveness → Compare validated keyword sets against traditional keyword search results to quantify improvement in retrieval recall and precision [10].

This protocol leverages the collective intelligence of research communities, operating on the principle that authors who cite foundational work likely employ standardized terminology, thus creating a "crowd-validated" keyword set [20].
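
Step 3 of the protocol can be sketched as a simple frequency count. The example below assumes the citing articles have already been exported as dictionaries with a `keywords` list. The field name, the sample records, and the 50% threshold are illustrative assumptions, not part of the cited method.

```python
from collections import Counter


def keyword_document_frequency(records):
    """Count in how many records each normalized author keyword appears."""
    counts = Counter()
    for record in records:
        # A set ensures a keyword repeated within one record counts once.
        normalized = {
            kw.strip().lower() for kw in record.get("keywords", []) if kw.strip()
        }
        counts.update(normalized)
    return counts


def consistent_terms(records, min_share=0.5):
    """Keywords used by at least `min_share` of records, most frequent first."""
    counts = keyword_document_frequency(records)
    threshold = min_share * len(records)
    return [(kw, n) for kw, n in counts.most_common() if n >= threshold]


# Hypothetical export of articles citing a seminal instrument paper.
citing_articles = [
    {"title": "A", "keywords": ["Shared decision making", "Control Preferences Scale"]},
    {"title": "B", "keywords": ["shared decision making", "patient participation"]},
    {"title": "C", "keywords": ["Patient Participation", "shared decision making ", "oncology"]},
    {"title": "D", "keywords": ["decision aids", "patient participation"]},
]

print(consistent_terms(citing_articles))
```

Normalizing case and whitespace before counting matters here, because the same term often appears with inconsistent capitalization across database exports.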

Keyword Network Analysis Protocol

Advanced keyword validation employs natural language processing and network analysis to structurally map research domains:

- Article Collection: Gather comprehensive bibliographic data through API queries of Crossref and Web of Science using domain-specific search terms [2].

- Keyword Extraction: Process article titles using NLP pipelines (e.g., spaCy's "en_core_web_trf") with lemmatization and part-of-speech tagging to identify meaningful keywords [2].

- Network Construction: Create keyword co-occurrence matrices counting keyword pairs within article titles, then transform into keyword networks where nodes represent keywords and edges represent co-occurrence frequencies [2].

- Community Detection: Apply modularity algorithms (e.g., Louvain method) to identify keyword clusters representing research subfields [2].

- Terminology Validation: Select central keywords from each community based on weighted PageRank scores, creating a validated terminology set that comprehensively maps the research domain [2].
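
The network construction and terminology validation steps can be illustrated with a small, dependency-free sketch. It assumes keywords have already been extracted per title, builds pair counts, and ranks keywords with a plain power-iteration PageRank on the weighted, undirected network. The damping factor, iteration count, and toy keyword lists are assumptions. Community detection (e.g., the Louvain method) is omitted, and a production pipeline would more likely rely on a dedicated graph library.

```python
from collections import Counter, defaultdict
from itertools import combinations


def cooccurrence_counts(keyword_lists):
    """Count how often each unordered keyword pair appears in the same title."""
    pairs = Counter()
    for keywords in keyword_lists:
        for a, b in combinations(sorted(set(keywords)), 2):
            pairs[(a, b)] += 1
    return pairs


def weighted_pagerank(pairs, damping=0.85, iterations=100):
    """Power-iteration PageRank on an undirected, weighted keyword network."""
    neighbors = defaultdict(dict)
    for (a, b), weight in pairs.items():
        neighbors[a][b] = weight
        neighbors[b][a] = weight
    nodes = sorted(neighbors)
    # Total edge weight per node; every node has at least one edge.
    strength = {n: sum(neighbors[n].values()) for n in nodes}
    rank = {n: 1.0 / len(nodes) for n in nodes}
    for _ in range(iterations):
        rank = {
            n: (1 - damping) / len(nodes)
            + damping * sum(rank[m] * w / strength[m] for m, w in neighbors[n].items())
            for n in nodes
        }
    return rank


# Hypothetical keywords extracted from four article titles.
title_keywords = [
    ["kinase", "inhibitor", "cancer"],
    ["kinase", "inhibitor"],
    ["inhibitor", "resistance"],
    ["cancer", "resistance", "inhibitor"],
]

pairs = cooccurrence_counts(title_keywords)
ranks = weighted_pagerank(pairs)
for keyword, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{keyword}: {score:.3f}")
```

In this toy network the keyword that co-occurs with every other term receives the highest score, which is the property the protocol uses to pick central terms.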

Table 2: Research Reagent Solutions for Keyword Validation and Analysis

| Research Tool | Type | Primary Function | Application Context |

|---|---|---|---|

| spaCy NLP Pipeline | Software | Natural language processing with lemmatization and POS tagging | Automated keyword extraction from scientific text [2] |

| Gephi | Software | Network visualization and analysis | Keyword co-occurrence network construction and visualization [2] |

| Web of Science | Database | Bibliographic records with citation data | Cited reference searching and bibliometric analysis [10] [21] |

| Scopus | Database | Comprehensive abstract and citation database | High-precision cited reference searching [10] |

| Google Scholar | Database | Multidisciplinary full-text database | High-sensitivity literature retrieval [10] |

Application in Drug Discovery and Development

The pharmaceutical sector presents a compelling use case for validated keyword strategies, particularly given the complexity and interdisciplinarity of modern drug research and development. The 2025 Alzheimer's disease drug development pipeline alone includes 138 drugs across 182 clinical trials addressing 15 distinct disease processes—from amyloid and tau targets to inflammation, synaptic plasticity, and proteostasis [22]. This conceptual diversity demands precise terminology to ensure effective knowledge transfer between researchers, clinicians, and regulatory professionals.

Validated keyword systems directly enhance drug repurposing efforts, which constitute approximately one-third of the current AD pipeline [22]. By establishing semantic bridges between previously disconnected research domains, validated keywords facilitate the identification of novel therapeutic applications for existing compounds, potentially accelerating development timelines and reducing associated costs. Furthermore, as artificial intelligence assumes an increasingly prominent role in drug discovery—with AI-developed candidates demonstrating an 80-90% success rate in Phase I trials compared to approximately 40% for traditional methods [23]—the quality of underlying data structures, including keyword taxonomies, becomes increasingly critical for model performance.

Keyword Validation Workflow: This process transforms seminal literature into validated terminology through citation analysis and network mapping.

Integrated Framework for Knowledge Discovery

The synergistic combination of keyword and citation-based approaches creates a robust framework for comprehensive knowledge discovery. While keyword searches offer precision for targeted retrieval, and citation searches provide sensitivity for exploratory research, their integration enables both focused and expansive literature discovery [10]. This hybrid approach is particularly valuable for navigating complex, interdisciplinary fields like drug development, where relevant knowledge may be distributed across diverse specialty areas.

Knowledge Discovery Framework: Integrating complementary search methods for comprehensive literature analysis.

The implementation of validated keyword systems creates a positive feedback loop for knowledge transfer: as researchers adopt standardized terminology, literature becomes more easily discoverable; as discoverability increases, citation networks grow more comprehensive; and as citation networks expand, keyword validation becomes increasingly robust. This self-reinforcing cycle ultimately accelerates scientific progress by reducing redundant research and facilitating connections between complementary findings [2] [21].

Validated keywords, authenticated through rigorous citation analysis, fundamentally enhance discovery processes and knowledge transfer within scientific communities—particularly in methodologically complex fields like drug development. By transforming subjective terminology into empirically verified conceptual maps, these keyword systems enable more efficient navigation of increasingly expansive research landscapes. As the volume of scientific publications continues to grow, the systematic validation of keyword choices will become increasingly vital for maintaining the integrity of literature retrieval, the efficacy of knowledge transfer, and ultimately, the pace of scientific innovation itself.

A Practical Framework: Implementing Citation Analysis for Keyword Validation

Seminal publications, often referred to as landmark, pivotal, or classic studies, constitute the foundational literature of any scientific discipline. These works introduced ideas of great importance or influence when first published and have since shaped the direction of research in their fields [24] [25]. For researchers, scientists, and drug development professionals, accurately identifying these works is not merely an academic exercise but a critical step in validating research directions, avoiding duplication of effort, and building upon established knowledge.

Within the context of validating keyword choices through citation analysis research, identifying seminal works takes on additional significance. The terminology used in these foundational papers often becomes the standard lexicon of the field, and understanding their citation networks provides a robust framework for assessing the effectiveness of keyword-based search strategies. This guide objectively compares the performance of various methods and tools for identifying seminal research, providing experimental data to support the selection of optimal approaches for scientific literature discovery.

Defining Characteristics of Seminal Research

Seminal articles possess distinct characteristics that differentiate them from other scientific publications. Understanding these traits is essential for their accurate identification [26]:

- High Citation Frequency: They are referenced repeatedly in the literature, resulting in a substantially higher citation count compared to contemporary works.

- Influential Content: They present novel ideas, methodologies, or breakthrough conclusions that significantly influence the research domain.

- Publication in Prestigious Venues: They are often published in top-tier, field-defining journals.

- Interdisciplinary Impact: Their influence may extend beyond their original discipline, being cited across multiple research fields.

- Association with Esteemed Scholars: They are frequently authored by highly regarded researchers whose bodies of work have shaped the field.

- Historical Significance: Depending on the discipline, they may have been published decades ago, though in fast-moving fields, seminal works may be more recent [26].

Comparative Analysis of Identification Methods

Various methods exist for identifying seminal research, each with distinct strengths, limitations, and appropriate use cases. The table below provides a structured comparison of these primary approaches.

Table 1: Comparison of Methods for Identifying Seminal Research

| Method | Core Mechanism | Key Performance Metrics | Primary Advantages | Notable Limitations |

|---|---|---|---|---|

| Citation Tracking [24] [25] | Analysis of forward and backward citations of a known relevant article. | Retrieval completeness (median 75-88% of included articles in systematic reviews) [27]. | Intuitive; uses expert knowledge of authors; finds thematically similar works. | Inefficient if used manually; requires a starting "query article"; may miss disconnected citation networks [27]. |

| CoCites Method [27] | Ranks publications by their co-citation frequency with one or more "query articles." | High efficiency when original reviews screened >500 titles; accuracy improves with highly-cited query articles [27]. | Efficient, accurate, transparent, and reproducible; does not depend on keyword selection. | Performance depends on citation characteristics of query articles (citation count, topic overlap) [27]. |

| Database Citation Analysis [24] [25] | Using built-in tools in databases (e.g., "Sort by: Cited by") to find frequently cited works. | Large number of "Times Cited" indicates potential seminal status. | Quick and straightforward; provides an immediate quantitative metric. | May favor older papers; citation counts can be field-dependent; requires database access. |

| Keyword-Based Search [16] [2] | Searching literature databases using strategic key terms. | Effectiveness relies on strategic use of common terminology in titles, abstracts, and keywords [16]. | Direct method for exploring a new topic; essential for systematic reviews. | Susceptible to bias from poor keyword choice; misses works using different terminology [27]. |

| Examination of Dissertations & Books [24] [25] | Reviewing literature review sections of dissertations and academic books on the topic. | Qualitative identification of sources authors consider foundational. | Provides expert-curated lists of important works; offers historical and theoretical context. | Time-consuming; not systematically reproducible. |

The experimental data for the CoCites method, derived from a validation study that reproduced the literature searches of published systematic reviews, offers compelling evidence for its efficiency. This method retrieved a median of 75% of the articles included in the original reviews, a figure that rose to 88% when the query articles were highly cited and had significant overlap in their citations [27]. This performance is particularly advantageous when dealing with large volumes of literature, as the method was more efficient than traditional keyword searches when the original review authors had screened more than 500 titles [27].

Experimental Protocols for Validation

To ensure the robustness of any literature search strategy, particularly within a thesis focused on validation, the following experimental protocols can be employed.

Protocol 1: Comparing Seed-Derived and Researcher-Generated Keywords

This protocol tests the hypothesis that keywords derived from seminal works yield more comprehensive search results than a researcher-generated keyword list.

- Identify Seed Articles: Select 2-3 known seminal articles in the target field using a source of known high-quality reviews (e.g., a Cochrane review).

- Extract Keywords: Manually extract the most frequent and salient nouns and noun phrases from the titles, abstracts, and keyword lists of the seed articles.

- Generate Control Keywords: Create a separate list of keywords based on a researcher's a priori knowledge of the field.

- Execute Parallel Searches: Conduct systematic literature searches in a major database (e.g., Scopus or Web of Science) using the two keyword sets independently.

- Define and Measure Outcomes:

- Primary Outcome: The percentage of articles from a pre-defined "gold standard" set (e.g., all articles included in a relevant systematic review) retrieved by each keyword set.

- Secondary Outcome: The total number of unique articles retrieved by each search, used to calculate precision.

- Statistical Analysis: Compare the recall (completeness) of the two methods using appropriate statistical tests.
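
The protocol does not name a specific statistical test. One reasonable option, shown here purely as an assumption, is an exact McNemar test, because both keyword sets are evaluated against the same gold-standard articles and the outcomes are therefore paired. The sketch below computes recall for each set and a two-sided exact p-value from the discordant pairs. All identifiers are hypothetical.

```python
from math import comb


def recall(retrieved, gold):
    """Share of gold-standard articles that a search retrieved."""
    gold = set(gold)
    if not gold:
        raise ValueError("gold standard set must not be empty")
    return len(set(retrieved) & gold) / len(gold)


def exact_mcnemar_p(retrieved_a, retrieved_b, gold):
    """Two-sided exact McNemar p-value for paired retrieval outcomes."""
    a, b, gold = set(retrieved_a), set(retrieved_b), set(gold)
    only_a = len((a - b) & gold)  # gold articles found by search A only
    only_b = len((b - a) & gold)  # gold articles found by search B only
    n = only_a + only_b
    if n == 0:
        return 1.0  # no discordant pairs, so no evidence of a difference
    k = min(only_a, only_b)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


# Hypothetical gold standard of 10 articles from a systematic review.
gold = {f"g{i}" for i in range(10)}
seed_derived_hits = {f"g{i}" for i in range(8)} | {"x1", "x2"}
researcher_hits = {"g0", "g1", "g2", "x3"}

print("recall (seed-derived):", recall(seed_derived_hits, gold))
print("recall (researcher):", recall(researcher_hits, gold))
print("exact McNemar p:", exact_mcnemar_p(seed_derived_hits, researcher_hits, gold))
```

With small gold-standard sets the exact test is preferable to the chi-square approximation, although a difference in recall may still fail to reach conventional significance simply because few articles are discordant.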

Protocol 2: Benchmarking the CoCites Method

This protocol validates the performance of the citation-based CoCites method against a traditional keyword-based search.

- Select Benchmark: Identify a published systematic review or meta-analysis on a relevant topic.

- Define Article Set: Compile the full list of articles included in the qualitative or quantitative analysis of the benchmark review.

- Apply CoCites Method:

- Query Article Selection: From the benchmark article set, select the two most highly-cited articles [27].

- Co-citation Search: Use a tool like Web of Science or Scopus to identify all articles that are co-cited with the query articles. Rank them in descending order of co-citation frequency.

- Citation Search: Find all articles that cite or are cited by the query articles.

- Compare Performance:

- Screen the titles generated by the CoCites method and record how many of the benchmark articles are retrieved.

- Compare the number of titles that needed to be screened with the CoCites method versus the number screened in the original review to retrieve the same set of articles.
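
The co-citation ranking step can be sketched as follows, assuming reference lists for the citing papers have been exported from a citation database. This is an illustration of co-citation counting in general, not the CoCites tool's actual implementation. In particular, counting one co-citation per citing paper and query article is an assumption, and all paper identifiers are hypothetical.

```python
from collections import Counter


def rank_by_cocitation(reference_lists, query_articles):
    """Rank articles by how often they are cited together with a query article.

    reference_lists maps each citing paper to the collection of articles it cites.
    """
    query = set(query_articles)
    cocitations = Counter()
    for references in reference_lists.values():
        refs = set(references)
        cited_queries = refs & query
        if not cited_queries:
            continue  # this paper cites no query article
        for candidate in refs - query:
            cocitations[candidate] += len(cited_queries)
    # Descending co-citation frequency, ties broken alphabetically.
    return sorted(cocitations.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical reference lists of four citing papers.
reference_lists = {
    "P1": {"Q1", "Q2", "A", "B"},
    "P2": {"Q1", "A"},
    "P3": {"Q2", "A", "C"},
    "P4": {"B", "C"},
}

ranking = rank_by_cocitation(reference_lists, ["Q1", "Q2"])
print(ranking)
```

Screening then proceeds down the ranked list, so the number of titles screened before all benchmark articles are found gives the efficiency measure described in the final comparison step.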

Diagram 1: CoCites Method Validation Workflow

Visualization of Search Methodologies and Relationships

Understanding the logical flow and interconnectedness of different search strategies is crucial for developing a robust literature identification protocol. The diagram below maps the relationship between keyword-based and citation-based approaches.

Diagram 2: Literature Search Methodology Relationships

The Scientist's Toolkit: Research Reagent Solutions

The following table details key digital tools and resources essential for conducting effective citation and keyword analysis.

Table 2: Essential Research Tools for Citation and Keyword Analysis

| Tool/Resource Name | Primary Function | Key Utility in Identifying Seminal Works |

|---|---|---|

| Scopus [24] | Abstract and citation database. | Provides "Times Cited" count and allows sorting results by citation frequency, enabling quick identification of highly influential papers. |

| Web of Science [25] | Citation database and research analytics platform. | Offers robust citation reports and analytics, allowing visualization of citation networks by author, institution, and subject category. |

| Google Scholar [24] [25] | Free multidisciplinary search engine. | Shows "Cited by" counts for broad coverage of sources, including articles, theses, and books. Useful for initial discovery. |

| SAGE Navigator [25] | Social sciences literature review tool. | Provides expert-curated key readings and an interactive chronology tool to visualize the development of research over time. |

| CoCites Web Tool [27] | A specialized citation-based search tool. | Implements the CoCites method to find related articles based on co-citation frequency, reducing reliance on keyword selection. |

| Natural Language Processing (NLP) Tools [2] | Automated text processing (e.g., spaCy). | Can be used to extract and analyze keywords from large volumes of literature to identify trending terminology and research communities. |

| ColorBrewer [28] | Online tool for selecting color palettes. | Ensures accessibility and clarity in creating visualizations of citation networks and research trends, important for colorblind readers. |

The objective comparison of methods for identifying seminal research reveals a clear synergy between traditional keyword searches and modern citation analysis techniques. While keyword searches remain a necessary entry point into a new field, their limitations are notable. Citation-based methods, particularly the validated CoCites approach, offer a powerful, efficient, and reproducible alternative or supplement [27].

For researchers validating keyword choices, the recommendation is a hybrid protocol: use an initial, broad keyword search to identify a small set of highly relevant "query articles," then employ citation tracking and the CoCites method to leverage the expert knowledge embedded in citation networks. This methodology mitigates the bias inherent in keyword selection and provides a more objective, data-driven foundation for a comprehensive literature review, ultimately strengthening the validity of the research thesis.

Cited reference searching is more sensitive than traditional keyword searching for identifying all studies that use a particular research instrument [10]. Experimental data show that cited reference searches can identify approximately three times as many relevant studies as keyword searches within the same bibliographic databases [10]. This methodology is particularly valuable for systematic reviews and meta-analyses focused on findings generated with specific assessment tools, directly supporting the validation of keyword choices through rigorous citation analysis.

Database Comparison for Cited Reference Searching

Table 1: Key Database Characteristics for Citation Searching

| Feature | Scopus | Web of Science (WoS) | Google Scholar |

|---|---|---|---|

| Total Records | 90.6+ million [29] | 95+ million [29] | 399+ million [29] |

| Update Frequency | Daily [29] | Daily [29] | Unknown [29] |

| Cited Reference Search | Yes [10] [29] | Yes [10] [29] | Yes (via "Cited by") [10] |

| Citation Analysis | Yes [29] [30] | Yes [29] [30] | No formal analysis [30] |

| Export Records | Yes - en masse [29] | Yes - en masse [29] | Limited (copy/paste) [30] |

| Author Profiles | Algorithm-generated [29] | Algorithm-generated [29] | Author-created [29] |

Table 2: Experimental Performance Metrics (Based on CPS Instrument Search)

| Database & Search Method | Precision (Average) | Sensitivity (Average) |

|---|---|---|

| Keyword Search (Bibliographic DBs) | 90% [10] | 16% [10] |

| Google Scholar Keyword Search | 54% [10] | 70% [10] |

| Cited Reference Search | 35-75% [10] | 45-54% [10] |

Key Strengths & Weaknesses [29] [30]:

- Scopus: Strengths include exportable visualizations for citation reports and broader coverage of Social Sciences and Arts & Humanities compared to WoS. A weakness is that it is owned by a publisher, which may affect content inclusion neutrality.

- Web of Science (WoS): Strengths include covering "journals of influence" and organization name unification. A weakness is difficulty searching unusual author name formats.

- Google Scholar: Strengths include finding more citations than any other database and wider coverage of document types (e.g., theses). Weaknesses include questionable content quality and limited advanced searching features.

Experimental Protocol for Cited Reference Searching

Objective

To identify a comprehensive set of scholarly publications that used a specific research instrument or methodology (e.g., the Control Preferences Scale) for systematic review or citation analysis.

Materials: The Researcher's Toolkit

Table 3: Essential Research Reagents for Citation Searching

| Research Reagent | Function/Purpose |

|---|---|

| Seminal Instrument Publications | The 1-2 original articles describing instrument development and/or validation; serve as the seed for cited reference searches [10]. |

| Bibliographic Databases | Platforms like Scopus and WoS that offer structured cited reference search capabilities [10] [29]. |

| Full-Text Database | Google Scholar, which searches the full text of documents, increasing sensitivity [10]. |

| Reference Management Software | Essential for deduplicating, organizing, and screening the retrieved citations from multiple databases. |

Step-by-Step Methodology

- Identify Seed References: Select one or two seminal publications for the instrument or method. The original description and a key validation study are optimal choices [10].

- Execute Cited Reference Searches:

- In Scopus, search for the seed article by title or DOI and then click on the "Cited by" count to view all citing documents [10].

- In Web of Science, use the "Cited Reference Search" tool to find all articles that cite the seed work [10].

- In Google Scholar, search for the seed article and then click the "Cited by" link to retrieve the list of citing works [10].

- Combine and Deduplicate Results: Export or save results from all databases and use reference management software to remove duplicate records.

- Screen for Relevance: Review the full text of retrieved articles to confirm the instrument was actually used, as citing an article does not guarantee its instrument was applied [10].
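
Reference managers handle the deduplication step, but the underlying logic is simple enough to sketch. The example below assumes each exported record is a dictionary with `source`, `doi`, `title`, and `year` fields. These field names, the DOIs, and the titles are hypothetical. Records are matched on a normalized DOI where available, otherwise on a normalized title plus year.

```python
import re


def record_key(record):
    """Prefer a normalized DOI; fall back to a normalized title plus year."""
    doi = (record.get("doi") or "").strip().lower()
    doi = re.sub(r"^https?://(dx\.)?doi\.org/", "", doi)
    if doi:
        return ("doi", doi)
    title = re.sub(r"[^a-z0-9]+", " ", (record.get("title") or "").lower()).strip()
    return ("title", title, record.get("year"))


def deduplicate(records):
    """Keep the first record per key and remember which databases found it."""
    merged = {}
    for record in records:
        key = record_key(record)
        if key not in merged:
            merged[key] = {**record, "sources": {record["source"]}}
        else:
            merged[key]["sources"].add(record["source"])
    return list(merged.values())


# Hypothetical exports from three databases.
records = [
    {"source": "Scopus", "doi": "10.1000/ABC123",
     "title": "Patient roles in treatment decisions", "year": 2005},
    {"source": "Web of Science", "doi": "https://doi.org/10.1000/abc123",
     "title": "Patient Roles in Treatment Decisions", "year": 2005},
    {"source": "Google Scholar", "doi": None,
     "title": "Decision preferences: a survey", "year": 2010},
    {"source": "Scopus", "doi": "",
     "title": "Decision Preferences - A Survey", "year": 2010},
]

unique = deduplicate(records)
for rec in unique:
    print(rec["title"], sorted(rec["sources"]))
```

One limitation of this sketch: a record exported with a DOI from one database and without a DOI from another will not be merged, so a manual check of near-duplicates is still advisable.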

Workflow Visualization

The following diagram illustrates the sequential protocol for executing a comprehensive cited reference search.

Key Research Implications

The experimental data confirms that cited reference searches are significantly more sensitive than keyword searches for finding studies that use a specific instrument [10]. The choice of seed article impacts precision; using a validation study as the seed can yield higher precision than using the original instrument description article [10].

For a comprehensive and systematic retrieval of literature, a dual-method approach is recommended: use cited reference searches for high sensitivity to find most relevant studies, then supplement them with high-precision keyword searches in selective databases like PubMed to identify additional studies that used the instrument without citing the seminal papers [10]. This methodology provides a robust foundation for validating keyword choices through empirical citation analysis.

The validation of keyword choices through citation analysis is a critical step in ensuring the robustness and comprehensiveness of systematic reviews and research trend analyses in scientific fields, including drug discovery. This guide objectively compares different analytical approaches—manual, bibliometric, and advanced computational methods—for evaluating keyword relevance and usage in retrieved articles. The comparison is framed within the context of validating keyword selections for in silico drug discovery methodologies, providing researchers with data-driven insights to refine their search strategies and improve the quality of literature synthesis.

Comparative Analysis of Keyword Analysis Approaches

The table below summarizes the core characteristics, quantitative performance metrics, and ideal use cases for three predominant methodologies used in keyword analysis [31] [2] [32].

| Analytical Approach | Core Methodology | Typical Outputs | Relative Time Investment | Key Strength | Primary Limitation | Best-Suited Research Goal |

|---|---|---|---|---|---|---|

| Manual Extraction & Analysis | Researchers manually skim full-text articles to extract and categorize keywords or data points according to a pre-defined framework (e.g., PICO) [31] [32]. | Evidence tables, summary tables of study characteristics and findings [31]. | High (Time-consuming) | Handles complex, nuanced contextual information effectively [31]. | Prone to subjective bias and human error; not scalable for very large datasets [2]. | Systematic reviews with a focused scope where deep, contextual understanding is paramount [31]. |

| Bibliometric Performance Analysis | Quantitative statistical analysis of publication indexes and citations from bibliographic databases [2]. | Counts of scientific activities (publications, citations), identification of high-impact journals and authors [2]. | Medium | Cost-effective for identifying influential research and mapping high-level trends over time [2]. | Weak in understanding and classifying specific research structures; relies on limited pre-defined keywords [2]. | Gaining a broad overview of a field's key contributors and the temporal evolution of research interest. |

| Computational & ML-Based Trend Analysis | Uses Natural Language Processing (NLP) to automatically extract keywords from article titles/abstracts, constructing co-occurrence networks to identify research communities [2]. | Keyword co-occurrence matrices, modularized keyword networks, trend predictions of emerging topics [2]. | Low (after initial setup) | Highly scalable, automated, and capable of uncovering hidden thematic relationships in large datasets [2]. | Models may lack generality across different research fields; requires technical expertise to implement [2]. | Analyzing massive volumes of literature to uncover interdisciplinary connections and predict emerging research fronts. |

Experimental Protocols for Keyword Validation

Protocol for Manual Data Extraction in Systematic Reviews

This protocol ensures consistency and minimizes bias when manually extracting and analyzing keyword context from articles [31] [32].

- Develop a Data Extraction Form: Create a standardized form, using tools like Covidence, Excel, or REDCap. The form should include fields for [31] [32]:

  - Article Information: Author, year, title, DOI.

  - Study Methods: Study design, participant recruitment, statistical methods.

  - Interventions & Outcomes: For intervention studies, detail the components, dosage, and timing [32].

  - Keyword Context: Record the primary keywords identified and their contextual usage in the study.

- Pilot the Form: Test the extraction form on a small, randomized sample of included articles. Revise the form to ensure it captures all relevant data unambiguously [31].

- Dual Extraction: At least two reviewers should extract data from each article independently to reduce errors and bias [31] [32].

- Resolve Discrepancies: Reviewers compare their extractions and discuss discrepancies to reach a consensus [31].

- Synthesize Data: Organize the extracted data into evidence tables and summary tables to compare keyword usage and study characteristics across the included literature [31].
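
The dual-extraction and discrepancy-resolution steps above can be supported by a simple comparison script. The following is a minimal sketch, not part of the cited protocol: the field names and reviewer records are hypothetical, and the normalization rule (case and whitespace only) is an assumption that a real review team would adapt to its own form.

```python
from typing import Dict, List, Tuple

# Hypothetical extraction record: form field name -> value entered by one reviewer.
Extraction = Dict[str, str]


def normalize(value: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences are ignored."""
    return " ".join(value.lower().split())


def find_discrepancies(a: Extraction, b: Extraction) -> List[Tuple[str, str, str]]:
    """Return (field, reviewer_a_value, reviewer_b_value) for every field that differs."""
    diffs = []
    for field in sorted(set(a) | set(b)):
        va, vb = a.get(field, ""), b.get(field, "")
        if normalize(va) != normalize(vb):
            diffs.append((field, va, vb))
    return diffs


# Illustrative data only; not taken from any real study.
reviewer_a = {
    "study_design": "Randomized controlled trial",
    "dosage": "10 mg daily",
    "keywords": "kinase inhibitor; resistance",
}
reviewer_b = {
    "study_design": "randomized  controlled trial",
    "dosage": "10 mg twice daily",
    "keywords": "kinase inhibitor; resistance",
}

discrepancies = find_discrepancies(reviewer_a, reviewer_b)
for field, va, vb in discrepancies:
    print(f"{field}: A={va!r} | B={vb!r}")
```

Only the flagged fields then need discussion between reviewers; the script does not decide which value is correct.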

Protocol for Computational Keyword Trend Analysis

This protocol, adapted from keyword-based research trend analysis, provides a systematic, reproducible method for validating keyword relevance at scale [2].

- Article Collection: Use application programming interfaces (APIs) from bibliographic databases (e.g., Crossref, Web of Science) to collect articles based on seed keywords. Filter document types and remove duplicates [2].

- Keyword Extraction:

  - Tokenization & Lemmatization: Process article titles and abstracts using an NLP pipeline (e.g., spaCy's en_core_web_trf model) to split text into words (tokens) and reduce them to their base form (lemmas) [2].

  - Part-of-Speech Tagging: Filter tokens to retain only meaningful words like nouns, adjectives, and verbs [2].

- Research Structuring:

  - Build Co-occurrence Matrix: For each article, create all possible keyword pairs from its title. Aggregate counts across all articles to build a matrix where elements represent the frequency of keyword co-occurrence [2].

  - Construct Keyword Network: Transform the matrix into a network graph (e.g., using Gephi), where nodes are keywords and edges represent co-occurrence strength [2].

  - Network Modularization: Use a community detection algorithm (e.g., Louvain modularity) to identify clusters or "communities" of keywords that represent distinct sub-fields or research themes. This step visually validates if chosen keywords cluster with other relevant terms [2].
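
The extraction and co-occurrence steps above can be illustrated with a short standard-library sketch. It is a simplification under stated assumptions: a regular expression and a small stop-word list stand in for the spaCy tokenization, lemmatization, and part-of-speech filtering described in the protocol, the titles are invented, and no community detection is performed. The function names are hypothetical.

```python
import re
from collections import Counter
from itertools import combinations

# Illustrative stop-word list; the protocol itself filters by part-of-speech tag instead.
STOP_WORDS = {"a", "an", "and", "for", "in", "of", "on", "the", "to", "with"}


def extract_keywords(title: str) -> list[str]:
    """Crude stand-in for NLP extraction: lowercase words minus stop words, no lemmatization."""
    tokens = re.findall(r"[a-z][a-z0-9-]*", title.lower())
    # dict.fromkeys removes duplicates while preserving first-seen order.
    return list(dict.fromkeys(t for t in tokens if t not in STOP_WORDS))


def build_cooccurrence(titles: list[str]) -> Counter:
    """Count how often each unordered keyword pair appears together in one title."""
    counts: Counter = Counter()
    for title in titles:
        keywords = sorted(extract_keywords(title))  # sorting makes each pair order-independent
        counts.update(combinations(keywords, 2))
    return counts


# Invented example titles.
titles = [
    "Kinase inhibitor resistance in lung cancer",
    "Resistance to kinase inhibitor therapy",
    "Biomarkers of lung cancer",
]

cooccurrence = build_cooccurrence(titles)
for pair, n in cooccurrence.most_common(4):
    print(pair, n)
```

The resulting pair counts are the off-diagonal entries of the co-occurrence matrix. Written out as an edge list, they could be loaded into a network tool such as Gephi for the modularization step.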

Diagram 1: Computational keyword validation workflow.

The Scientist's Toolkit: Essential Reagents & Software for Analysis

The table below details key solutions required for implementing the described experimental protocols [31] [2] [33].

| Tool Name | Category | Primary Function in Analysis |
|---|---|---|
| Covidence | Systematic Review Software | Streamlines dual data extraction, highlights discrepancies automatically, and manages the screening and extraction process in a single platform [31]. |
| Microsoft Excel / Google Sheets | Spreadsheet Software | Provides a highly customizable and accessible environment for creating data extraction forms and organizing extracted data [31] [33]. |
| spaCy (en_core_web_trf) | Natural Language Processing (NLP) Library | Performs advanced NLP tasks such as tokenization, lemmatization, and part-of-speech tagging to automatically extract and normalize keywords from text corpora [2]. |
| Gephi | Network Analysis Software | An open-source platform for visualizing and exploring complex networks, used to analyze and modularize keyword co-occurrence networks [2]. |
| RevMan | Systematic Review Software | Cochrane's tool designed for preparing and maintaining systematic reviews, including data collection and meta-analysis [31] [33]. |
| Crossref / Web of Science API | Bibliographic Data Interface | Enables programmable, large-scale collection of scholarly metadata and article information for computational analysis [2]. |

Diagram 2: Example keyword network showing thematic communities.

In the contemporary pharmaceutical landscape, where an explosion of scientific opportunity coexists with unprecedented financial and competitive pressures, the ability to efficiently navigate vast information ecosystems has become a critical competitive advantage [34]. For researchers, scientists, and drug development professionals, constructing a validated keyword list is far more than an academic exercise: it is a fundamental strategic capability that directly impacts R&D efficiency, competitive intelligence, and ultimately, therapeutic innovation. This guide compares methodological approaches for building keyword lists through citation analysis, providing both quantitative comparisons and detailed experimental protocols to help research professionals optimize their information retrieval strategies.

The validation of keyword choices through citation analysis sits at the intersection of information science and pharmaceutical innovation. With the industry's R&D success rates averaging approximately 14.3% (ranging from 8% to 23% across leading companies) and attrition in Phase II studies reaching approximately 66%, the efficiency of knowledge retrieval and prior art analysis has direct implications for resource allocation and strategic decision-making [19]. This guide employs rigorous comparison methodology to evaluate keyword development techniques, examining their performance across critical dimensions including precision, recall, operational complexity, and strategic intelligence value.

Comparative Analysis of Keyword Development Methodologies

Table 1: Quantitative Comparison of Keyword Development Methodologies for Pharmaceutical Research

| Methodology | Precision Rate (%) | Recall Rate (%) | Operational Complexity | Strategic Intelligence Value | Optimal Use Case |
|---|---|---|---|---|---|
| Patent Citation Network Analysis | 88-92 | 78-85 | High | Very High | Competitive intelligence, emerging technology mapping |
| MeSH Term Expansion | 82-87 | 85-90 | Low-Medium | Medium | Comprehensive literature reviews, systematic reviews |
| Entry Term Mapping | 80-84 | 82-88 | Low | Low-Medium | Initial keyword discovery, synonym identification |
| Automatic Term Mapping (ATM) | 75-82 | 88-94 | Very Low | Low | Quick searches, preliminary investigation |
| Bibliometric Analysis | 85-90 | 80-86 | High | High | Research trend analysis, emerging topic identification |

Table 2: Temporal Efficiency Metrics Across Keyword Validation Techniques

| Methodology | Initial Setup Time (Hours) | Ongoing Maintenance | Time to Comprehensive Coverage | Skill Requirements |
|---|---|---|---|---|
| Patent Citation Network Analysis | 12-20 | Medium-High | 4-6 weeks | Expert |
| MeSH Term Expansion | 2-4 | Low | 1-2 days | Beginner-Intermediate |
| Entry Term Mapping | 1-2 | Low | 2-4 hours | Beginner |
| Automatic Term Mapping (ATM) | 0-0.5 | None | Immediate | Beginner |
| Bibliometric Analysis | 8-12 | Medium | 2-3 weeks | Intermediate-Expert |

The comparative data reveals significant trade-offs between methodological approaches. Patent citation network analysis demonstrates superior precision and strategic intelligence value, making it particularly valuable for mapping competitive landscapes and identifying emerging technological fronts [34]. Conversely, Automatic Term Mapping provides immediate results with minimal skill requirements but offers limited strategic value. The choice of methodology should align with specific research objectives, with resource-intensive methods like patent analysis justified for high-stakes strategic decisions, while efficient approaches like MeSH term expansion suffice for general literature reviews.
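
The source does not state how the precision and recall rates in Table 1 were measured. As a hedged illustration, the sketch below applies the standard information-retrieval definitions to one search strategy, using a hypothetical gold-standard set of relevant records; the record IDs and the function name are invented for the example.

```python
def precision_recall(retrieved: set[str], relevant: set[str]) -> tuple[float, float]:
    """Precision = hits / records retrieved; recall = hits / relevant records."""
    hits = len(retrieved & relevant)
    precision = hits / len(retrieved) if retrieved else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical gold standard: five records known to be relevant.
relevant = {"r1", "r2", "r3", "r4", "r5"}
# Hypothetical search output: eight records, four of which are relevant.
retrieved = {"r1", "r2", "r3", "r4", "x1", "x2", "x3", "x4"}

precision, recall = precision_recall(retrieved, relevant)
print(f"precision={precision:.0%}, recall={recall:.0%}")
```

In this invented case the search finds four of the five relevant records (80% recall), but half of what it returns is noise (50% precision), which is the kind of trade-off the tables summarize.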

Experimental Protocols for Keyword Validation

Patent Citation Network Analysis Protocol

Objective: To identify semantically rich keyword terminology through analysis of patent citation networks, capturing both foundational and emerging concepts in pharmaceutical research [34].

Materials and Equipment:

- Specialized patent database access (e.g., DrugPatentWatch, USPTO, EPO)

- Network visualization software (e.g., VOSviewer, CiteSpace)

- Data extraction and normalization tools

Methodology:

- Seed Patent Identification: Select 3-5 core patents in the target therapeutic domain using known drug names, inventor names, or assignee information

- Backward Citation Analysis: Extract all references cited by seed patents, categorizing by document type (patent vs. non-patent literature) and citation purpose

- Forward Citation Tracking: Identify all subsequent patents citing the seed patents, analyzing citation velocity (citations/year) as an indicator of technological influence

- Network Mapping: Construct a citation network with patents as nodes and citations as connections, applying clustering algorithms to identify technological subgroups

- Term Extraction: Extract high-frequency terminology from titles, abstracts, and claims of patents within identified clusters, weighted by citation metrics

- Validation: Correlate extracted terms with known drug targets and mechanisms of action through Orange Book data and scientific literature

This protocol typically requires 12-20 hours of initial setup but generates significantly enhanced keyword lists that capture both established and emerging terminology, with particular strength in identifying technological convergence areas where different fields intersect to create innovation opportunities [34].
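
The forward-citation and term-extraction steps can be sketched in a few lines. This is an illustrative sketch only: the `Patent` record, the patent data, and the weighting rule (summing citation velocity over the patents whose titles contain a term) are assumptions made for the example, and measuring velocity from the filing year rather than the grant or publication year is likewise an assumption.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Patent:
    patent_id: str          # hypothetical identifier
    filing_year: int
    forward_citations: int  # number of later patents citing this one
    title: str


def citation_velocity(p: Patent, current_year: int) -> float:
    """Forward citations per year since filing (minimum one year to avoid division by zero)."""
    return p.forward_citations / max(current_year - p.filing_year, 1)


def weighted_terms(patents: list[Patent], current_year: int) -> Counter:
    """Score each title word by the summed citation velocity of the patents that use it."""
    scores: Counter = Counter()
    for p in patents:
        velocity = citation_velocity(p, current_year)
        for term in set(p.title.lower().split()):
            scores[term] += velocity
    return scores


# Invented seed patents for illustration.
patents = [
    Patent("P1", 2015, 40, "bispecific antibody conjugate"),
    Patent("P2", 2020, 30, "antibody drug conjugate linker"),
    Patent("P3", 2010, 15, "small molecule kinase inhibitor"),
]

scores = weighted_terms(patents, current_year=2025)
for term, score in scores.most_common(3):
    print(term, score)
```

Terms that recur in fast-cited patents rise to the top, which gives a rough ranking of candidate keywords before the validation step against known targets and mechanisms.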

MeSH Term Expansion and Entry Term Mapping Protocol

Objective: To leverage controlled vocabulary systems for comprehensive keyword discovery, accounting for synonyms, acronyms, and semantic variations in pharmaceutical literature [35] [36].

Materials and Equipment:

- PubMed/MEDLINE database access

- MeSH database interface

- Standard spreadsheet software for term organization

Methodology:

- Seed Term Identification: Begin with 2-3 core conceptual terms describing the research focus

- MeSH Database Query: Input each seed term into the MeSH database to identify corresponding controlled vocabulary terms

- Entry Term Extraction: Systematically extract all listed entry terms from relevant MeSH records, capturing synonyms, lexical variants, and related terminology

- Tree Hierarchy Exploration: Navigate both broader and narrower terms within the MeSH hierarchy to identify conceptually related terminology

- Subheading Integration: Consider relevant subheadings (e.g., /drug effects, /therapeutic use) to add conceptual specificity

- Semantic Grouping: Organize extracted terms into conceptual categories based on therapeutic area, biological process, or chemical class

- Validation Check: Verify term coverage against 3-5 known key articles in the field, adding any missing terminology

This low-to-medium-complexity protocol requires 2-4 hours for implementation but substantially improves search comprehensiveness, particularly through its systematic approach to synonym management and vocabulary variant control [35]. The method demonstrates particular strength in capturing international spelling variations (e.g., pediatric vs. paediatric) and acronym expansions (e.g., MRI vs. magnetic resonance imaging).
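
One way to turn the grouped terms into a search string, and to run the validation check against known key articles, is sketched below. The helper names and the record IDs are hypothetical; the sketch assumes PubMed's `[MeSH Terms]` and `[Title/Abstract]` field tags and the common practice of joining synonyms with OR and concepts with AND. It only builds the string and does not query PubMed.

```python
def build_concept_block(mesh_terms: list[str], entry_terms: list[str]) -> str:
    """OR together the MeSH headings and free-text synonyms for one concept."""
    parts = [f'"{t}"[MeSH Terms]' for t in mesh_terms]
    parts += [f'"{t}"[Title/Abstract]' for t in entry_terms]
    return "(" + " OR ".join(parts) + ")"


def build_query(concept_blocks: list[str]) -> str:
    """AND the concept blocks so every concept must be present."""
    return " AND ".join(concept_blocks)


def missing_key_articles(retrieved_ids: set[str], key_article_ids: set[str]) -> set[str]:
    """Validation check: known key articles that the search failed to retrieve."""
    return key_article_ids - retrieved_ids


imaging = build_concept_block(
    ["Magnetic Resonance Imaging"], ["MRI", "magnetic resonance imaging"]
)
population = build_concept_block(["Child"], ["pediatric", "paediatric"])
query = build_query([imaging, population])
print(query)

# Hypothetical record IDs standing in for search results and known key articles.
missed = missing_key_articles({"111", "222"}, {"111", "333"})
print("Key articles not retrieved:", missed)
```

Any key article that is missed points to terminology still absent from the list; its title, abstract, and indexing terms are the natural place to look for the missing synonyms.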

Visualization of Methodological Workflows

Figure 1: Keyword Validation Method Selection Workflow

Figure 2: Patent Citation Analysis Detailed Protocol

Research Reagent Solutions: Keyword Development Tools

Table 3: Essential Research Tools for Keyword Validation and Citation Analysis

| Tool Name | Primary Function | Application in Keyword Development | Access Method |
|---|---|---|---|
| PubMed MeSH Database | Controlled vocabulary authority | Synonym identification, semantic mapping | Public web access |
| DrugPatentWatch | Pharmaceutical patent intelligence | Patent citation network analysis | Subscription required |
| VOSviewer | Bibliometric visualization | Research trend mapping, term clustering | Free software |
| CiteSpace | Citation network analysis | Emerging concept detection, paradigm shift identification | Free software |
| PubMed Clinical Queries | Pre-filtered search results | Methodology-specific term validation | Public web access |
| Web of Science Core Collection | Comprehensive citation data | Cross-disciplinary term extraction | Institutional subscription |

The strategic selection and combination of these tools significantly enhances keyword list quality. For high-stakes strategic projects, the combination of DrugPatentWatch for patent analysis with CiteSpace for visualization provides unparalleled insight into emerging research fronts and competitive intelligence [34] [37]. For general literature reviews, the PubMed MeSH database combined with PubMed Clinical Queries offers an optimal balance of comprehensiveness and efficiency [35] [36]. The integration of multiple tools consistently outperforms single-method approaches, particularly through triangulation of terminology across different information sources and validation of term relevance across distinct methodological frameworks.
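
Triangulation across sources can be made concrete with a small sketch. The term lists below are invented, the source labels are placeholders for whichever tools are actually used, and the rule of keeping terms confirmed by at least two sources is an assumption chosen for illustration rather than a threshold taken from the cited work.

```python
def triangulate(term_lists: dict[str, set[str]], min_sources: int = 2) -> dict[str, list[str]]:
    """Keep terms found by at least `min_sources` sources, with the sources that found them."""
    support: dict[str, list[str]] = {}
    for source, terms in term_lists.items():
        # Normalize case within each source so a term is counted once per source.
        for term in {t.lower() for t in terms}:
            support.setdefault(term, []).append(source)
    return {
        term: sorted(sources)
        for term, sources in sorted(support.items())
        if len(sources) >= min_sources
    }


# Invented candidate terms from three hypothetical sources.
term_lists = {
    "mesh": {"antibody-drug conjugate", "immunoconjugate", "linker"},
    "patents": {"Antibody-Drug Conjugate", "linker", "payload"},
    "bibliometric": {"antibody-drug conjugate", "payload", "bystander effect"},
}

validated = triangulate(term_lists)
for term, sources in validated.items():
    print(term, sources)
```

Terms supported by a single source are not discarded outright in practice; they are simply the ones that most need manual review before entering the final keyword list.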

The comparative analysis demonstrates that methodological selection should be guided by specific research objectives, resource constraints, and strategic requirements. Patent citation network analysis delivers superior strategic intelligence for competitive positioning and emerging technology assessment, justifying its operational complexity for high-value applications [34]. MeSH term expansion provides the optimal balance of efficiency and comprehensiveness for general literature surveillance and systematic review projects [35]. Automatic Term Mapping serves as a valuable rapid assessment tool but should not be relied upon for comprehensive keyword development.