Beyond Citations: A 2025 Framework for Benchmarking Keyword Strategies Against Highly-Cited Research



Abstract

This article provides researchers, scientists, and drug development professionals with a modern framework for aligning SEO keyword strategies with the principles of high-impact scientific publishing. It bridges the gap between academic influence, measured by citation metrics in databases like Web of Science, and digital discoverability. Readers will learn to decode the semantic patterns of highly-cited papers, apply AI-powered tools for keyword discovery, audit and optimize their existing content, and validate their strategy to dominate search visibility in competitive biomedical fields.

The New Frontier: Why Keyword and Citation Benchmarking is Essential for Modern Research Impact

In the evolving landscape of academic research and scientific communication, two distinct paradigms for measuring impact now coexist: traditional citation metrics from established providers such as Clarivate, and digital keyword metrics derived from online search and engagement patterns. Citation metrics have long served as the gold standard for assessing academic influence, whereas digital keyword metrics offer near-real-time insight into how research is discovered and how visible it is. This guide compares the two approaches in the context of benchmarking keyword strategies against highly cited papers, giving researchers, scientists, and drug development professionals actionable methods to enhance the discoverability and impact of their work.

The fundamental distinction lies in their measurement focus: citation metrics quantify scholarly influence through formal citation networks, while keyword metrics capture digital attention through search patterns and online mentions [1] [2]. Understanding their convergence enables researchers to develop more comprehensive dissemination strategies that maximize both academic recognition and practical reach within their scientific domains.

Clarivate provides a suite of established metrics centered on citation analysis, drawn primarily from the Web of Science Core Collection. These metrics address different aspects of scholarly impact:

Journal-Level Metrics

- Journal Impact Factor (JIF): The citations received in a given year by items a journal published in the previous two years, divided by the number of citable items (articles and reviews) it published in those two years. It is criticized for field biases and narrow journal coverage [3] [4].

- Journal Citation Indicator (JCI): A newer, field-normalized metric that calculates the average category normalized citation impact (CNCI) for all citable items published in a journal over the preceding three years [5]. Unlike JIF, JCI applies to all Web of Science Core Collection journals and enables better cross-disciplinary comparison with a world average set at 1.0 [5].

- Eigenfactor Score: Based on the citations received in the JCR year by items the journal published in the previous five years, with each citation weighted by the influence of the citing journal and journal self-citations removed [4].

- Article Influence Score: Determines the average influence of a journal's articles over their first five years after publication [4].
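As a worked illustration of the two-year JIF arithmetic, the sketch below computes a JIF from citation and item counts. All counts are hypothetical, and the function name `journal_impact_factor` is illustrative rather than part of any Clarivate tool; it assumes, as in the standard definition, that the numerator counts citations to all items while the denominator counts only citable items.

```python
def journal_impact_factor(cites_to_y1: int, cites_to_y2: int,
                          citable_items_y1: int, citable_items_y2: int) -> float:
    """Two-year JIF for JCR year Y.

    cites_to_y1 / cites_to_y2: citations received during year Y by anything
    the journal published in Y-1 / Y-2 (the numerator counts all items).
    citable_items_y1 / citable_items_y2: articles and reviews published in
    Y-1 / Y-2 (the denominator counts only citable items).
    """
    denominator = citable_items_y1 + citable_items_y2
    if denominator == 0:
        raise ValueError("JIF is undefined without citable items")
    return (cites_to_y1 + cites_to_y2) / denominator


# Hypothetical journal, JCR year 2024: 700 + 500 citations to its 2023 and
# 2022 content, which comprised 210 + 190 citable items.
print(journal_impact_factor(700, 500, 210, 190))  # 3.0
```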

A core challenge in citation metrics is proper normalization to account for field-specific differences in citation density, publication age, and document type [6]. The JCI and other normalized metrics attempt to address these disparities, though different normalization approaches present trade-offs between field specificity and comparability [6] [5].
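The normalization behind the JCI can be sketched in a few lines: each item's citations are divided by a baseline (the expected citations for items of the same category, publication year, and document type), and the journal's indicator is the mean of those ratios. The baselines below are invented for illustration; Clarivate derives real baselines from the Web of Science, and this sketch ignores details such as items assigned to several categories.

```python
from statistics import mean


def cnci(citations: int, expected_citations: float) -> float:
    """Category normalized citation impact of one item.

    expected_citations: mean citations of all items sharing the item's
    subject category, publication year and document type (hypothetical here).
    """
    return citations / expected_citations


def journal_citation_indicator(items: list[tuple[int, float]]) -> float:
    """Mean CNCI over a journal's citable items from the three-year window."""
    return mean(cnci(cites, baseline) for cites, baseline in items)


# Three hypothetical citable items: (citations, category baseline)
items = [(10, 5.0), (3, 6.0), (4, 8.0)]    # CNCI values 2.0, 0.5, 0.5
print(journal_citation_indicator(items))   # 1.0, i.e. exactly the world average
```

A JCI above 1.0 would indicate citation impact above the world average for comparable items, which is what makes the indicator usable across fields with very different citation densities.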

Table 1: Key Clarivate Citation Metrics Comparison

| Metric | Time Frame | Field Normalized | Coverage | World Average |
|---|---|---|---|---|
| Journal Impact Factor (JIF) | 2 years | No | Journals with JIF only | Varies by field |
| 5-year Journal Impact Factor | 5 years | No | Journals with JIF only | Varies by field |
| Journal Citation Indicator (JCI) | 3 years | Yes | All Web of Science Core Collection | 1.0 |
| Eigenfactor Score | 5 years | Indirectly | Journals with JIF only | Varies by field |
| Article Influence Score | 5 years | Indirectly | Journals with JIF only | 1.0 |

Understanding Digital Keyword Metrics

Digital keyword metrics originate from search engine optimization, social media monitoring, and online content analysis, providing real-time data on search volume, interest, and engagement [2]. These metrics are particularly valuable for understanding initial discovery and visibility of research before formal citations accumulate.

Core Keyword Metrics for Research Visibility

- Search Volume: Measures how often a term is searched in a given timeframe and location, revealing topic interest levels [2].

- Keyword Difficulty: Estimates how challenging it is to rank on the first page of search results for a specific keyword [2].

- Search Intent: Categorizes why people search for something (informational, navigational, commercial, or transactional) [2].

- Volume of Mentions: Tracks how often specific keywords appear online, including social media, forums, and blogs [2].

- Total Reach: Estimates how many people have seen content where a specific keyword is mentioned [2].

- Share of Voice (SOV): Measures the percentage of online conversations a keyword captures compared to competitors [2].
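Share of voice, the last metric in the list, is a simple ratio, and the sketch below computes it from mention counts. The terms and counts are hypothetical, and it assumes SOV is measured against the combined mentions of a fixed set of competing terms, as the definition above reads; commercial monitoring tools may apply their own weighting.

```python
def share_of_voice(mentions: dict[str, int]) -> dict[str, float]:
    """Percentage of all tracked mentions captured by each term."""
    total = sum(mentions.values())
    if total == 0:
        return {term: 0.0 for term in mentions}
    return {term: 100 * count / total for term, count in mentions.items()}


# Hypothetical monthly mention counts for competing therapeutic concepts
mentions = {"CAR-T": 620, "bispecific antibodies": 280, "TCR-T": 100}
for term, pct in share_of_voice(mentions).items():
    print(f"{term}: {pct:.1f}%")
# CAR-T: 62.0%
# bispecific antibodies: 28.0%
# TCR-T: 10.0%
```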

Application to Research Context

For researchers, these metrics help identify which terminology resonates within specific scientific communities and beyond. High search volume for methodological terms may indicate emerging techniques gaining traction, while navigational searches for specific authors or drugs reflect established recognition [2].
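To make the intent categories concrete, here is a deliberately simple rule-based classifier. The cue words and the rule that a bare drug or author name signals navigational intent are assumptions chosen for illustration; keyword tools such as Semrush use their own models, which this sketch does not reproduce.

```python
import re

# Hypothetical cue words; checked in this order, first match wins.
INTENT_CUES = {
    "transactional": {"buy", "order", "price", "quote", "download"},
    "commercial": {"best", "vs", "versus", "compare", "top"},
    "navigational": {"login", "homepage", "pubmed", "clinicaltrials.gov"},
}


def classify_intent(query: str, known_entities: frozenset[str] = frozenset()) -> str:
    """Assign one of four intent labels to a search query."""
    text = query.lower().strip()
    words = set(re.findall(r"[a-z0-9.\-]+", text))
    for intent, cues in INTENT_CUES.items():
        if words & cues:
            return intent
    # A query consisting only of a known author or drug name counts as navigational.
    if text in known_entities:
        return "navigational"
    return "informational"


drugs = frozenset({"pembrolizumab"})
for q in ["buy recombinant IL-2 protein", "pembrolizumab vs nivolumab",
          "pembrolizumab", "mechanism of action of pembrolizumab"]:
    print(q, "->", classify_intent(q, drugs))
# buy recombinant IL-2 protein -> transactional
# pembrolizumab vs nivolumab -> commercial
# pembrolizumab -> navigational
# mechanism of action of pembrolizumab -> informational
```

Keyword rules like these are brittle, so in practice they serve best as a first pass before manual review of ambiguous queries.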

Table 2: Essential Digital Keyword Metrics for Researchers

| Metric | Measurement Focus | Research Application | Tools for Tracking |
|---|---|---|---|
| Search Volume | Frequency of search queries | Identifying trending topics and terminology | Semrush, Ahrefs, Google Keyword Planner |
| Keyword Difficulty | Competition for search ranking | Assessing effort needed for visibility | Semrush, Ahrefs |
| Search Intent | User purpose behind searches | Aligning content with researcher needs | Semrush, manual analysis |
| Volume of Mentions | Online frequency of keyword use | Measuring topic penetration | Brand24, social listening tools |
| Total Reach | Potential audience exposure | Understanding dissemination scope | Brand24, analytics platforms |
| Share of Voice | Comparative visibility | Benchmarking against competing concepts | Brand24, manual calculation |

Experimental Comparison: Search Methodologies for Literature Discovery

A critical intersection between citation and keyword metrics emerges in systematic literature retrieval, where both approaches can be quantitatively compared for effectiveness.

A 2014 study directly compared the effectiveness of keyword searches versus cited reference searches for identifying studies using a specific measurement instrument (Control Preferences Scale) [1] [7]. The methodology provides a robust framework for understanding the complementary strengths of each approach:

Information Sources: The study utilized three bibliographic databases (PubMed, Scopus, Web of Science) and one full-text database (Google Scholar) to represent different coverage and functionality [1] [7].

Search Methods:

- Keyword searches: Used exact phrases "control preference scale" OR "control preferences scale" in title or abstract fields across all databases

- Cited reference searches: Used two seminal publications (the original 1992 instrument introduction and a 1997 validation study) as starting points [1] [7]

Time Frame and Standardization: All searches were limited to a consistent 10-year publication period (2003-2012) to ensure comparability [1] [7].

Effectiveness Measures:

- Precision: Relevant articles as a percentage of all records retrieved

- Sensitivity: Percentage of relevant articles found relative to total unique relevant articles in the combined results [1] [7]
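Both effectiveness measures reduce to set arithmetic once each retrieved record has been screened. In the sketch below, sensitivity uses the study's pooled denominator, that is, all unique relevant records found by any search; the record IDs are hypothetical placeholders, not data from [1] or [7].

```python
def precision(retrieved: set[str], relevant: set[str]) -> float:
    """Relevant records as a percentage of everything a search retrieved."""
    return 100 * len(retrieved & relevant) / len(retrieved) if retrieved else 0.0


def sensitivity(retrieved: set[str], pooled_relevant: set[str]) -> float:
    """Relevant records found, as a percentage of the pooled relevant set."""
    if not pooled_relevant:
        return 0.0
    return 100 * len(retrieved & pooled_relevant) / len(pooled_relevant)


# Hypothetical screening outcome: records judged relevant after review
relevant = {"r1", "r2", "r3", "r4", "r5"}
keyword_hits = {"r1", "r2"}                            # narrow but clean
cited_ref_hits = {"r1", "r3", "r4", "r5", "x1", "x2"}  # broader, some noise

# Pool: every unique relevant record retrieved by at least one search
pooled = (keyword_hits | cited_ref_hits) & relevant

for name, hits in [("keyword", keyword_hits), ("cited reference", cited_ref_hits)]:
    print(f"{name}: precision {precision(hits, relevant):.1f}%, "
          f"sensitivity {sensitivity(hits, pooled):.1f}%")
# keyword: precision 100.0%, sensitivity 40.0%
# cited reference: precision 66.7%, sensitivity 80.0%
```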

Quantitative Results: Precision and Sensitivity Trade-offs

The experimental results demonstrated clear trade-offs between keyword and citation search approaches across different database types:

Keyword Search Performance:

- Bibliographic databases (PubMed, Scopus, WOS): High average precision (90%) but low average sensitivity (16%) [1] [7]

- Google Scholar: Lower precision (54%) but substantially higher sensitivity (70%) than the bibliographic databases [1] [7]

Cited Reference Search Performance:

- Moderate sensitivity across all databases (45-54%) [1] [7]

- Precision varied substantially (35-75%), with Scopus showing the highest precision [1] [7]

- In Scopus and Web of Science, cited reference searching found approximately three times as many relevant studies as keyword searching [1] [7]
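Because the approaches retrieve partly different records, a useful follow-up is to ask how many relevant records each search contributes that no other search found. The sketch below computes those unique contributions; the search names echo the study design, but the record sets are invented for illustration.

```python
# Hypothetical screened hits per search (relevant records only)
relevant_hits = {
    "keyword (Scopus)": {"r1", "r2"},
    "cited reference (Scopus)": {"r1", "r3", "r4", "r5"},
    "cited reference (WOS)": {"r2", "r3", "r6"},
}


def unique_contributions(hits_by_search: dict[str, set[str]]) -> dict[str, set[str]]:
    """Relevant records that each search found and no other search did."""
    result = {}
    for name, hits in hits_by_search.items():
        others = set().union(*(h for n, h in hits_by_search.items() if n != name))
        result[name] = hits - others
    return result


for name, unique in unique_contributions(relevant_hits).items():
    print(f"{name}: {len(unique)} unique -> {sorted(unique)}")
# keyword (Scopus): 0 unique -> []
# cited reference (Scopus): 2 unique -> ['r4', 'r5']
# cited reference (WOS): 1 unique -> ['r6']
```

A search with no unique contributions may still be worth running for its precision, but it adds nothing to recall in a combined strategy.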

Table 3: Experimental Results - Search Method Performance

| Search Method & Database | Precision | Sensitivity | Key Finding |
|---|---|---|---|
| Keyword Search (PubMed, Scopus, WOS; average) | High (~90%) | Low (~16%) | Precise but incomplete |
| Keyword Search (Google Scholar) | Moderate (54%) | High (70%) | Broad but noisy |
| Cited Reference Search (Scopus, 1997 article) | 75% | 54% | Most precise citation approach |
| Cited Reference Search (WOS, 1992 article) | ~40% | ~45% | Moderate precision and sensitivity |
| Cited Reference Search (Google Scholar, 1992 article) | 35% | ~50% | Low precision, moderate sensitivity |
| Cited Reference Search (Google Scholar, 1997 article) | 63% | ~50% | Good balance for full-text search |

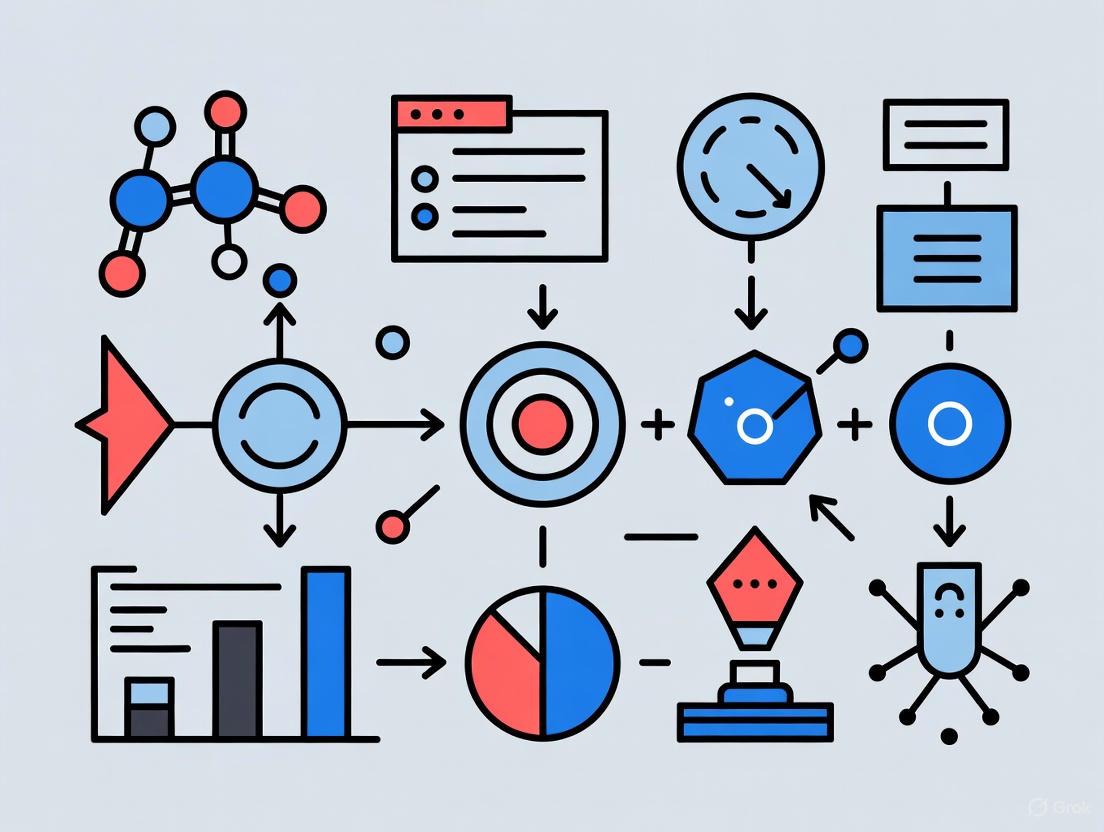

The experimental evidence supports an integrated approach to research discovery and impact assessment. The following workflow visualizes how citation metrics and keyword metrics can be combined in a comprehensive research strategy:

Research Reagent Solutions: Essential Tools for Impact Analysis

Implementing a convergent approach requires specific tools and platforms that enable both citation and keyword analysis. The following table details essential "research reagents" for comprehensive impact assessment:

Table 4: Research Reagent Solutions for Convergent Metrics Analysis

| Tool Category | Specific Solutions | Primary Function | Research Application |
|---|---|---|---|
| Bibliographic Databases | Web of Science Core Collection, Scopus | Citation indexing and analysis | Foundational citation data, journal metrics, cited reference searches |
| Full-Text Databases | Google Scholar | Full-text search and citation tracking | High-sensitivity keyword searches, broad literature discovery |
| Keyword Research Tools | Semrush, Ahrefs, Google Keyword Planner | Search volume and difficulty analysis | Identifying trending terminology, assessing digital competition |
| Social Listening Platforms | Brand24 | Mention volume and reach tracking | Measuring online penetration, share of voice analysis |
| Normalization Platforms | Journal Citation Reports, InCites | Field-normalized citation metrics | Cross-disciplinary comparison, contextual impact assessment |
| AI Research Assistants | Web of Science Research Assistant | Semantic search and literature discovery | Natural language queries, intelligent concept mapping |

The experimental evidence shows that citation metrics and keyword metrics are complementary rather than competing approaches to research impact assessment. Citation metrics provide field-normalized measures of scholarly influence, and cited reference searches achieved moderate sensitivity (45-54%) across databases, whereas keyword searches in bibliographic databases were highly precise (about 90%) but missed much of the relevant literature (about 16% sensitivity) [1] [7]. Combining the two approaches gives researchers more robust strategies both for disseminating their work and for discovering relevant research.

For researchers, scientists, and drug development professionals, this convergence suggests practical applications: using keyword metrics to optimize article titles and abstracts for discoverability while targeting journals with strong citation metrics (particularly field-normalized indicators like JCI) for academic impact [4] [5]. By integrating both approaches throughout the research lifecycle—from literature review to results dissemination—professionals can maximize both the visibility and scholarly recognition of their work in an increasingly competitive and interdisciplinary research landscape.

In the competitive landscape of academic research, Clarivate's Highly Cited Researchers list is a widely recognized benchmark of exceptional scientific influence. The annual list recognizes researchers who have published multiple Highly Cited Papers, that is, papers in the top 1% by citations for their field and publication year, over the past eleven years [8]. For researchers, scientists, and drug development professionals, understanding these selection criteria matters not only for recognition but also for benchmarking effective dissemination strategies. The process combines quantitative citation metrics with rigorous qualitative analysis to address challenges in an increasingly complex scholarly record [8] [9]. This guide compares these criteria, drawing on published data and methodology, to clarify the pathway to this recognition.

Quantitative Foundation: The Core Data-Driven Criteria

The selection process begins with a quantitative analysis of citation data from the Web of Science Core Collection, which Clarivate describes as "the world's most trusted publisher-independent global citation database" [8]. The fundamental building blocks are Highly Cited Papers—those that rank in the top 1% by citations for their field and publication year during an eleven-year rolling window (currently 2014-2024 for the 2025 list) [8] [10].

Essential Science Indicators (ESI) Framework

Analysts at the Institute for Scientific Information (ISI) utilize ESI to categorize and evaluate research across 21 broad fields in the sciences and social sciences [8]. These fields are defined by journal groupings, with multidisciplinary journals like Nature and Science having their papers individually assigned to a field based on cited reference analysis [8]. The methodology focuses exclusively on article and review papers, excluding citations to letters, correction notices, and other document types [8].
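The core selection rule, top 1% by citations within each field and publication year counting only articles and reviews, can be sketched as follows. The dictionary keys, the tie handling (every paper tied at the cutoff is kept), and rounding the 1% cutoff up are assumptions for illustration; ESI's exact thresholding procedure is not reproduced here.

```python
import math
from collections import defaultdict


def highly_cited_papers(papers: list[dict], top_percent: int = 1) -> set[str]:
    """IDs of papers in the top `top_percent` % by citations for their field and year.

    Each paper is a dict with hypothetical keys:
    'id', 'field', 'year', 'doc_type', 'citations'.
    """
    groups = defaultdict(list)
    for p in papers:
        if p["doc_type"] in {"Article", "Review"}:   # letters, corrections etc. excluded
            groups[(p["field"], p["year"])].append(p)

    selected = set()
    for group in groups.values():
        ranked = sorted(group, key=lambda p: p["citations"], reverse=True)
        k = max(1, math.ceil(len(ranked) * top_percent / 100))
        cutoff = ranked[k - 1]["citations"]           # citations of the k-th paper
        selected.update(p["id"] for p in ranked if p["citations"] >= cutoff)
    return selected


# 200 hypothetical 2020 Clinical Medicine articles with 0..199 citations,
# plus a heavily cited letter that must not count.
papers = [{"id": f"p{i}", "field": "Clinical Medicine", "year": 2020,
           "doc_type": "Article", "citations": i} for i in range(200)]
papers.append({"id": "letter1", "field": "Clinical Medicine", "year": 2020,
               "doc_type": "Letter", "citations": 10_000})
print(sorted(highly_cited_papers(papers)))  # ['p198', 'p199']
```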

Table 1: Key Quantitative Metrics for Highly Cited Researcher Selection

| Metric | Description | Data Source | Threshold |
|---|---|---|---|
| Highly Cited Papers | Papers in top 1% of citations for field & year | Web of Science Core Collection | Multiple papers over 11 years |
| Evaluation Window | Rolling citation analysis period | Essential Science Indicators (ESI) | 11 years (2014-2024 for 2025 list) |
| Document Types | Articles and reviews included | Web of Science | Article, Review |
| Research Fields | Broad categories for evaluation | ESI Journal Categorization | 21 fields in sciences/social sciences |
| Cross-Field Impact | Performance across multiple fields | ESI & Additional Analysis | Exceptional performance across several fields |

The 2025 list recognized 7,131 Highly Cited Researcher designations awarded to 6,868 individuals, with some researchers recognized in multiple fields [10] [11]. This represents just 1 in 1,000 of the global research community [8].

Qualitative Refinement: Ensuring Research Integrity

Beyond the initial quantitative triage, Clarivate employs a sophisticated qualitative analysis to address potential manipulation and ensure the recognition reflects genuine, broad scholarly influence [9]. This multifaceted approach has evolved to counter an increasingly "polluted scholarly record" [8].

Exclusion Criteria and Integrity Checks

ISI analysts apply several integrity checks during the refinement process, excluding candidates based on specific patterns that suggest artificial inflation of citation impact [9]. These checks have become increasingly stringent, with exclusions rising from 500 in 2022 to more than 1,000 in 2023 [9].

Table 2: Qualitative Exclusion Criteria in Highly Cited Researcher Selection

| Exclusion Category | Description | Rationale |
|---|---|---|
| Excessive Self-Citation | Self-citation levels exceeding field norms | Prevents artificial inflation of impact metrics [9] |
| Hyper-Authorship | Publication rates straining normative authorship | Questions meaningful contribution to numerous papers [9] |
| Citation Network Manipulation | Over half of citations deriving from co-authors | Indicates narrow influence rather than broad community impact [9] |
| Retracted Publications | Papers retracted for misconduct | Uses Retraction Watch database to identify problematic works [11] |
| Incremental Value Research | Extraordinary volume of recent publications combined with high self-citation | Filters research of potentially low substantive value [9] |

The methodology also considers information from research institutions, national research managers, and collective community groups like For Better Science and PubPeer, even when these sources include anonymous or whistleblower contributions [9]. Furthermore, the list does not count Highly Cited Papers that have been retracted from the Web of Science, particularly when retracted for misconduct reasons such as plagiarism, image manipulation, or fake peer review [11].
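Two of these integrity checks reduce to simple ratios: the share of citations that are self-citations, and the share coming from co-authors, both computed after retracted papers are dropped. The sketch below assumes a hypothetical data layout (one set of author names per citing paper) and a hypothetical 20% self-citation ceiling standing in for "field norms"; only the 50% co-author threshold comes from the criteria above. ISI's qualitative review is not reproduced here.

```python
from dataclasses import dataclass, field


@dataclass
class Paper:
    paper_id: str
    retracted: bool = False
    # One set of author names per paper that cites this one (hypothetical layout)
    citing_author_sets: list[set[str]] = field(default_factory=list)


def screen_candidate(candidate: str, coauthors: set[str], papers: list[Paper],
                     self_cite_ceiling: float = 0.20,   # hypothetical "field norm"
                     coauthor_ceiling: float = 0.50) -> list[str]:
    """Return integrity flags for one candidate researcher."""
    countable = [p for p in papers if not p.retracted]  # retracted papers never count
    citations = [authors for p in countable for authors in p.citing_author_sets]
    if not citations:
        return ["no countable citations"]

    self_share = sum(candidate in a for a in citations) / len(citations)
    coauthor_share = sum(bool(a & coauthors) for a in citations) / len(citations)

    flags = []
    if self_share > self_cite_ceiling:
        flags.append(f"self-citation share {self_share:.0%}")
    if coauthor_share > coauthor_ceiling:
        flags.append(f"co-author citation share {coauthor_share:.0%}")
    return flags


papers = [
    Paper("hcp1", citing_author_sets=[{"A. Lee", "X. Wu"}, {"B. Diaz"},
                                      {"B. Diaz", "Y. Kim"}, {"Z. Rao"}]),
    # Retracted: its co-author-heavy citations are ignored
    Paper("hcp2", retracted=True, citing_author_sets=[{"C. Osei"}] * 10),
]
print(screen_candidate("A. Lee", {"B. Diaz", "C. Osei"}, papers))
# ['self-citation share 25%']
```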

Special Considerations for Mathematics

The field of Mathematics presents unique challenges due to its highly fractionated research domains with specialists working on niche topics, coupled with relatively low average publication and citation rates [9]. These characteristics make the field particularly vulnerable to citation manipulation strategies. In response, Clarivate excluded Mathematics in 2023-2024 and reintroduced it in 2025 with enhanced screening procedures [11]. For the 2025 list, analysts pre-screened Highly Cited Papers in Mathematics to filter out those that would otherwise distort results, leading to 60 researchers being named in this category [11].

Methodological Protocols: From Data to Final List

The complete selection process follows a rigorous workflow that transforms raw citation data into the final Highly Cited Researchers list through multiple stages of quantitative and qualitative assessment.

Experimental Protocol: Selection Workflow

Diagram 1: HCR Selection Workflow

The methodology employs a multi-stage filtration system that begins with the Web of Science Core Collection citation data [8]. Analysts generate a preliminary list based on the presence of multiple Highly Cited Papers over the eleven-year analysis window [9]. This initial candidate list then undergoes rigorous qualitative assessment, including checks for excessive self-citation, hyper-authorship patterns, and narrow citation networks [9]. The final list represents researchers who have demonstrated "significant and broad influence" rather than those with high citation counts derived from limited circles [9].

Author Disambiguation and Affiliation Verification

A critical technical challenge in this process is accurate author identification. Clarivate uses a combination of algorithmic disambiguation and manual expert review to address this issue [11]. The team examines author identifiers, emails, research topics, journal sources, institutional addresses, and co-authorships to distinguish unique individuals [11]. In complex cases involving frequent affiliation changes, analysts may consult original papers (when journals publish full names rather than just initials), author websites, or CVs [11].
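The algorithmic half of author disambiguation can be illustrated with a union-find that merges author records sharing a strong identifier. This is a toy stand-in, not Clarivate's method: it assumes each record carries an optional ORCID-style identifier and email (placeholder values below) and ignores the topic, journal, and co-authorship evidence that the manual review weighs.

```python
def cluster_author_records(records: list[dict]) -> list[list[int]]:
    """Group record indices that share an identifier or email (union-find)."""
    parent = list(range(len(records)))

    def find(i: int) -> int:
        while parent[i] != i:
            parent[i] = parent[parent[i]]   # path halving
            i = parent[i]
        return i

    first_seen: dict[tuple[str, str], int] = {}
    for i, rec in enumerate(records):
        for key in ("orcid", "email"):
            value = rec.get(key)
            if not value:
                continue
            token = (key, value.lower())
            if token in first_seen:
                parent[find(i)] = find(first_seen[token])   # merge clusters
            else:
                first_seen[token] = i

    clusters: dict[int, list[int]] = {}
    for i in range(len(records)):
        clusters.setdefault(find(i), []).append(i)
    return sorted(clusters.values())


records = [
    {"name": "Smith, J", "orcid": "orcid-001", "email": "j.smith@uni-a.example"},
    {"name": "Smith, J", "email": "J.Smith@uni-a.example"},            # same email
    {"name": "Smith, JA", "orcid": "orcid-001", "email": "js@pharma.example"},
    {"name": "Smith, J", "email": "j.smith@other.example"},            # no shared ID
]
print(cluster_author_records(records))  # [[0, 1, 2], [3]]
```

Records left in their own cluster, like the last one here, are exactly the ambiguous cases that would go to manual review of papers, websites, or CVs.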

For affiliation accuracy, Clarivate employs a researcher verification process that combines information from the scholarly record (contact details on Highly Cited Papers across the eleven-year window) with updates from researchers themselves [8] [11]. A primary affiliation is specifically defined as the researcher's "home institution—typically at a location where they reside, conduct the majority of their work as reflected in their publication record and usually hold a primary position" [8]. Research fellowships are not typically recognized as primary affiliations [11].

Practical Implications for Researchers

The Scientist's Toolkit: Research Dissemination Reagents

Table 3: Essential Research Dissemination Toolkit

| Tool/Strategy | Function | Implementation Consideration |
|---|---|---|
| Strategic Keyword Placement | Enhances discoverability in databases | Place common terminology early in abstracts [12] |
| Common Terminology | Increases resonance with search algorithms | Use field-standard terms over specialized jargon [12] |
| Collaboration Networks | Extends research reach and impact | Maintain diverse networks to demonstrate broad influence [9] |
| Citation Ethics | Maintains integrity of citation profile | Avoid excessive self-citation or coordinated citation circles [9] |
| Multidisciplinary Approach | Enables cross-field recognition | Work at intersection of disciplines to expand impact [11] |

The relationship between effective keyword strategies and citation impact represents a crucial connection point for researchers. Studies indicate that strategic keyword placement in titles, abstracts, and keyword sections significantly enhances article discoverability in databases and search engines [12]. This discoverability forms the foundation for potential citations, as "we cannot cite what we do not discover" [12].

Research in ecology and evolutionary biology has revealed that papers whose abstracts contain more common and frequently used terms tend to have increased citation rates [12]. Furthermore, choosing well-suited terms can determine whether a study appears at the top of search results or gets buried beneath other documents [12]. This is particularly important for databases that sort results by relevance, where strategic keyword use can significantly enhance an article's visibility.
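A quick self-audit of keyword placement is to find where each target term first appears in an abstract. The helper below is a hypothetical sketch that matches whole-word phrases case-insensitively; the example abstract and terms are invented.

```python
import re
from typing import Optional


def first_positions(abstract: str, terms: list[str]) -> dict[str, Optional[int]]:
    """1-based word index where each term first appears in the abstract, else None."""
    words = re.findall(r"[\w-]+", abstract.lower())
    positions = {}
    for term in terms:
        target = re.findall(r"[\w-]+", term.lower())
        positions[term] = next(
            (i + 1 for i in range(len(words) - len(target) + 1)
             if words[i:i + len(target)] == target),
            None,
        )
    return positions


abstract = ("Tumor microenvironment remodeling drives resistance to immune "
            "checkpoint inhibitors in melanoma.")
print(first_positions(abstract, ["immune checkpoint inhibitors", "melanoma",
                                 "T cell exhaustion"]))
# {'immune checkpoint inhibitors': 7, 'melanoma': 11, 'T cell exhaustion': None}
```

A term that never appears, or appears only late, is a candidate for moving into the title or the first sentence of the abstract.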

Diagram 2: Keyword Impact Pathway

The relationship between search intent and content strategy further illuminates this connection. Studies have found that content relevance drives organic clicks when users are further along in their research journey and conducting transactional searches, while online authority becomes the key driver when users are at the awareness stage and looking for information [13]. This suggests that the optimal keyword strategy may vary depending on the research domain and where potential citing researchers might be in their investigative process.

The Highly Cited Researchers methodology represents a sophisticated evolution beyond simple citation counting. Through its combination of quantitative thresholds and qualitative integrity checks, the process seeks to identify genuine research influence rather than merely rewarding citation accumulation strategies. For the global research community, understanding these criteria provides valuable insights into effective research dissemination while highlighting the importance of maintaining ethical standards in publication and citation practices.

The continuous refinement of this methodology—including the enhanced screening for mathematical research and more rigorous affiliation verification—demonstrates Clarivate's commitment to addressing an increasingly complex scholarly landscape. As research evaluation continues to evolve, this multi-faceted approach offers a model for balancing quantitative metrics with qualitative assessment to identify truly influential research contributions.

For researchers, scientists, and drug development professionals, success has traditionally been measured by citation counts, Journal Impact Factors (JIF), and the publication of disruptive findings in prestigious journals [14]. In today's data-driven landscape, however, another form of impact is critical for securing funding, attracting talent, and accelerating the translation of research from bench to bedside: online visibility. This guide compares traditional measures of academic influence with modern Search Engine Optimization (SEO) strategies, framing them as complementary but distinct benchmarking tools for the biomedical enterprise. Even within traditional bibliometrics, a single metric captures only part of a work's value: a 2024 study of medical journals found that rankings by disruptive innovation and by academic influence differed by an average of 17.5% for journals and 17.7% for individual papers [14]. Online visibility is a further dimension that citation metrics do not measure, and it is this gap that a dedicated SEO strategy addresses. By applying the rigorous, evidence-based methods familiar from laboratory work, you can build a robust online presence that extends the reach and commercial potential of your scientific discoveries.

Benchmarking Impact: Traditional Academic vs. Modern SEO Metrics

Evaluating the success of research requires a multi-faceted approach. The table below compares the established systems for measuring academic influence with the emerging metrics for gauging online visibility.

Table 1: Comparative Analysis of Academic Influence and SEO Performance Metrics

| Metric Category | Academic Influence Metrics | SEO & Online Visibility Metrics |
|---|---|---|
| Primary Objective | Advance knowledge, secure academic prestige | Drive qualified traffic, generate leads, demonstrate commercial applicability |
| Key Performance Indicators (KPIs) | Journal Impact Factor (JIF), Journal Citation Indicator (JCI), Citation Counts, Disruption Index (Dz) [14] | Organic traffic, keyword rankings for commercial/intent-driven queries, domain authority, conversion rates [15] [16] |
| Target Audience | Peers, academic institutions, specialized journals | Industry partners, investors, patients, policymakers, cross-disciplinary collaborators [17] [16] |
| Content Format | Research papers, reviews, clinical trials | Product pages, technical notes, case studies, educational blogs, webinars [17] [15] |
| Validation System | Peer review, citation networks [14] | Google's E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness), backlinks from authoritative sites [18] [19] [16] |
| Key Finding from Data | Correlation coefficient of ~0.635 between disruption (Dz) and 5-year citation count (CC5) [14] | SEO-optimized resources can rank for thousands of keywords, generating traffic equivalent to thousands of dollars in advertising [15] |

Experimental Protocols: Methodologies for Measuring Impact

Protocol for Analyzing Academic Impact and Disruption

A 2024 study on medical journals provides a replicable methodology for comparing traditional academic impact with levels of disruptive innovation [14].

- Research Object: 114 general and internal medicine Science Citation Index Expanded (SCIE) journals and 15,206 associated research papers from 2018 [14].

- Data Resources: Journal Citation Reports (JCR), Web of Science (WoS), OpenCitations Index of PubMed (POCI), and the H1 Connect peer review database [14].

- Evaluation Indicators:

  - Academic Impact: Measured using Journal Impact Factor (JIF), Journal Cumulative Citations for 5 years (JCC5), and Journal Citation Indicator (JCI) [14].

  - Disruptive Innovation: Quantified using the Disruption Index (Dz) and Journal Disruption Index (JDI), which calculate citation substitution in a paper's citation network [14].

- Analysis: Correlation coefficients (e.g., between JDI and JCC5) and ranking differences were calculated to assess the alignment between impact and innovation [14].
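The disruption measures rest on a count over a paper's citation network. The sketch below implements the widely used classic disruption index, D = (n_i - n_j) / (n_i + n_j + n_k); the Dz and JDI variants used in the 2024 study [14] may differ in detail, and the paper IDs are hypothetical.

```python
def disruption_index(focal: str, references: set[str],
                     later_papers: dict[str, set[str]]) -> float:
    """Classic disruption index of `focal`, ranging from -1 to +1.

    later_papers maps each subsequent paper to the set of IDs it cites.
    n_i: cite the focal paper but none of its references (disruptive signal)
    n_j: cite the focal paper and at least one of its references (consolidating)
    n_k: cite its references but not the focal paper
    """
    n_i = n_j = n_k = 0
    for cited in later_papers.values():
        cites_focal = focal in cited
        cites_refs = bool(cited & references)
        if cites_focal and not cites_refs:
            n_i += 1
        elif cites_focal:
            n_j += 1
        elif cites_refs:
            n_k += 1
    total = n_i + n_j + n_k
    return (n_i - n_j) / total if total else 0.0


later = {"A": {"F"}, "B": {"F"}, "C": {"F", "R1"}, "D": {"R2"}, "E": {"X"}}
print(disruption_index("F", {"R1", "R2"}, later))  # 0.25
```

Paper "E" cites neither the focal paper nor its references, so it does not enter the calculation.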

Protocol for Implementing Life Sciences SEO

SEO in the life sciences is not about "keyword stuffing" but about understanding the unique search patterns of researchers, healthcare professionals, and informed patients [17]. The following workflow outlines this strategic process.

Diagram: Strategic SEO Workflow for Biomedical Organizations. This diagram outlines the continuous cycle of keyword research, content creation, technical optimization, and performance analysis required for effective SEO.

Keyword Research and Selection Methodology

Effective keyword strategy requires a granular approach tailored to the scientific audience, which performs highly specific, technically sophisticated searches, often using Boolean operators [17].

- Leverage Specialized Terminology: Utilize resources like PubMed, Google Scholar, and MeSH (Medical Subject Headings) to identify the precise terminology your target audience uses [17] [20].

- Balance Technical Accuracy and Search Volume: Create a layered keyword strategy that targets both high-search-volume accessible terms and hyper-technical terminology to maximize reach while maintaining scientific credibility [17].

- Employ the KEYWORDS Framework: For systematic keyword selection, apply a structured framework adapted from research methodologies [20]:

  - Key Concepts (Research Domain)

  - Exposure or Intervention

  - Yield (Expected Outcome)

  - Who (Subject/sample)

  - Objective or Hypothesis

  - Research Design

  - Data analysis tools

  - Setting (Conducting site) [20]

- Prioritize Long-Tail Keywords: These are longer, more specific phrases (e.g., "CRISPR-Cas9 T cell immunotherapy clinical trials phase 2") that have lower search volume but higher conversion potential because they indicate clear user intent and are less competitive [17] [21].
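The KEYWORDS framework and the long-tail advice can be combined into a small candidate-list builder. The field names, the example terms, and the four-word threshold for "long-tail" are illustrative assumptions; in practice long-tail status is judged by search volume and specificity rather than word count alone.

```python
from dataclasses import dataclass, fields


@dataclass
class KeywordsFramework:
    """One list of candidate terms per KEYWORDS element (field names are hypothetical)."""
    key_concepts: list[str]
    exposure: list[str]
    yield_outcome: list[str]      # "yield" is a reserved word in Python
    who: list[str]
    objective: list[str]
    research_design: list[str]
    data_analysis: list[str]
    setting: list[str]

    def candidates(self) -> list[str]:
        """All terms in framework order, case-insensitively de-duplicated."""
        seen, out = set(), []
        for f in fields(self):
            for term in getattr(self, f.name):
                if term.lower() not in seen:
                    seen.add(term.lower())
                    out.append(term)
        return out


def is_long_tail(phrase: str, min_words: int = 4) -> bool:
    return len(phrase.split()) >= min_words


kw = KeywordsFramework(
    key_concepts=["immuno-oncology"],
    exposure=["CRISPR-Cas9 T cell immunotherapy"],
    yield_outcome=["overall survival"],
    who=["adults with relapsed lymphoma"],
    objective=["safety"],
    research_design=["phase 2 clinical trial"],
    data_analysis=["Kaplan-Meier analysis"],
    setting=["multicenter"],
)
print([t for t in kw.candidates() if is_long_tail(t)])
# ['CRISPR-Cas9 T cell immunotherapy', 'adults with relapsed lymphoma', 'phase 2 clinical trial']
```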

Technical and E-E-A-T Optimization Protocol

In life sciences, technical SEO and establishing credibility are paramount due to the "Your Money or Your Life" (YMYL) nature of the content [16].

- Implement Scientific Schema Markup: Provide search engines with explicit clues about your content's nature by marking up elements such as the article type (e.g., `MedicalScholarlyArticle`), author credentials, study findings, and chemical compounds [17].

- Optimize for E-E-A-T: Google's Experience, Expertise, Authoritativeness, and Trustworthiness guidelines are critical for healthcare and life sciences SEO [18] [16].

  - Expertise: Have content written or reviewed by credentialed scientists and healthcare professionals [18].

  - Authoritativeness: Earn backlinks from reputable medical websites, journals, and industry associations [18].

  - Trustworthiness: Use SSL security and clear disclaimers, and comply with regulatory standards (FDA, EMA) [17] [18] [19].
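Schema markup is commonly delivered as a JSON-LD script in the page. The sketch below generates one for an article page using schema.org's `MedicalScholarlyArticle` type with standard properties (`headline`, `author`, `datePublished`, `keywords`, `sameAs`). The helper name, the author details, and the DOI are hypothetical, and expressing author credentials through `jobTitle` and `affiliation` is one possible choice, not a requirement.

```python
import json


def article_jsonld(headline: str, authors: list[dict], date_published: str,
                   keywords: list[str], doi_url: str) -> str:
    """Return a <script> tag holding schema.org JSON-LD for a scholarly article."""
    data = {
        "@context": "https://schema.org",
        "@type": "MedicalScholarlyArticle",
        "headline": headline,
        "author": [
            {
                "@type": "Person",
                "name": a["name"],
                "jobTitle": a["job_title"],        # signals credentials/expertise
                "affiliation": {"@type": "Organization", "name": a["affiliation"]},
            }
            for a in authors
        ],
        "datePublished": date_published,           # ISO 8601 date
        "keywords": ", ".join(keywords),
        "sameAs": doi_url,                         # canonical DOI link
    }
    return ('<script type="application/ld+json">\n'
            + json.dumps(data, indent=2) + "\n</script>")


print(article_jsonld(
    "Phase 2 trial of a hypothetical CAR-T therapy in relapsed lymphoma",
    [{"name": "Jane Doe, MD, PhD", "job_title": "Professor of Hematology",
      "affiliation": "Example University Medical Center"}],
    "2025-03-14",
    ["CAR-T", "relapsed lymphoma", "phase 2 clinical trial"],
    "https://doi.org/10.0000/example.12345",
))
```

The generated markup can be checked with a structured-data validator before deployment.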

The Scientist's Toolkit: Essential Research & SEO Reagents

Just as laboratory experiments require specific reagents, successfully bridging academic influence and online visibility requires a set of specialized tools.

Table 2: Essential Solutions for Integrated Academic and Digital Impact

| Tool Category | Specific Tool / Solution | Function & Application |
|---|---|---|
| Academic & Database Tools | PubMed / MeSH [17] [20] | Identifies standardized scientific terminology and high-value keywords from published literature. |
| | Google Scholar [17] | Reveals keyword trends and terminology used in academic abstracts and titles. |
| | H1 Connect / Faculty Opinions [14] | Provides authoritative peer review and validation of key papers in the biomedical field. |
| SEO & Analytics Tools | Ahrefs / Semrush [17] [15] | Conducts competitor keyword analysis, tracks rankings, and evaluates backlink profiles. |
| | Google Search Console [16] | Provides first-party data on a website's organic search performance and striking-distance keywords. |
| | PageSpeed Insights [19] | Analyzes and provides recommendations for improving website loading speed. |
| Content Optimization Framework | KEYWORDS Framework [20] | Provides a systematic, PICO-inspired structure for selecting comprehensive and relevant keywords for research. |
| Regulatory Compliance Guideline | FDA/EMA Regulations [17] [18] | Ensures all online content and claims adhere to strict industry promotional guidelines, building trust. |

Comparative Performance Data Analysis

The relationship between academic influence and potential for online impact is nuanced. Data from a study of 114 medical journals reveals a moderate correlation (coefficient of 0.635) between a paper's disruptive innovation (Dz) and its 5-year citation count (CC5) [14]. However, this same study found a critical divergence: the average difference in rankings based on disruptive innovation versus traditional academic influence was about 17.5% for journals and 17.7% for individual papers [14]. This demonstrates that these two evaluation systems, while related, capture fundamentally different aspects of a research output's value.
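To make these two statistics concrete, the Python sketch below computes a Pearson correlation and a mean rank shift between two scores for the same set of papers. The data are invented. The source does not say which correlation coefficient or rank-difference formula the study in [14] used, so the normalization here (mean absolute rank difference divided by the number of items) is an assumption.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def ranks(values):
    """Rank positions (1 = highest value); ties keep input order."""
    order = sorted(range(len(values)), key=lambda i: -values[i])
    r = [0] * len(values)
    for pos, i in enumerate(order, start=1):
        r[i] = pos
    return r

def mean_rank_shift(a, b):
    """Assumed metric: mean |rank_a - rank_b|, as a fraction of the item count."""
    ra, rb = ranks(a), ranks(b)
    n = len(a)
    return sum(abs(i - j) for i, j in zip(ra, rb)) / (n * n)

# Hypothetical disruption scores (Dz) and 5-year citation counts (CC5) for four papers.
dz = [0.9, 0.1, 0.5, 0.3]
cc5 = [120, 40, 30, 80]
print(f"r = {pearson(dz, cc5):.3f}, mean rank shift = {mean_rank_shift(dz, cc5):.0%}")
```

A shift of 25% here means that, on average, a paper moves one quarter of the list length between the two rankings.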

Furthermore, content optimized for SEO demonstrates clear business value. For instance, one biotech company's SEO-driven resource, "Useful Numbers for Cell Culture," ranks for over 3,000 keywords and has an estimated equivalent advertising value of $7,400 per month [15]. This shows that targeted online content can generate sustained, high-value traffic that complements academic citation.

The translation of biomedical research from an academic achievement to a commercially viable or clinically impactful outcome requires a dual-strategy approach. Relying solely on traditional metrics like the Journal Impact Factor (JIF) is no longer sufficient: the 17.7% average ranking difference between disruptive innovation and traditional academic influence at the paper level [14] means that citation-based rankings alone can overlook innovative work. By benchmarking your digital presence against the same rigorous standards applied in the laboratory (structured protocols for keyword research, technical SEO, and E-E-A-T optimization), you can build a compelling business case for your work. Integrating a strategic SEO framework helps ensure that your research achieves not only academic influence but also the online visibility needed to attract partners, secure investment, and ultimately accelerate the journey toward improving human health.

The digital landscape for scientific dissemination is evolving rapidly. While traditional citation analysis remains a cornerstone for evaluating academic impact, a parallel, complementary framework has emerged from search engine optimization (SEO) to measure the discoverability and contextual relevance of research. This guide benchmarks modern semantic search strategies against traditional keyword-based methods, providing a structured, data-driven comparison for researchers, scientists, and drug development professionals. The objective is to translate proven SEO protocols into the academic context, enabling professionals to enhance the online visibility and resonance of their published work, thereby facilitating evidence synthesis and accelerating scientific impact [12].

The shift from traditional to semantic SEO mirrors a broader trend in information retrieval: a move from simple pattern matching to a sophisticated understanding of meaning and context. This is critically important in scientific fields, where precision and the interconnection of complex concepts are paramount. As one analysis of journal guidelines in ecology and evolutionary biology revealed, restrictive abstract word limits and keywords that merely repeat terms already present in the title or abstract can significantly hinder article discoverability in digital databases [12]. By adopting the strategies compared herein, researchers can systematically optimize their publications to align with how modern search engines and academic databases interpret and rank content.

Core Terminology and Conceptual Frameworks

Defining the Key Concepts

Traditional SEO: An approach to optimization that focuses primarily on keyword manipulation and backlink acquisition. Its core components include keyword optimization (researching and using specific user-search terms), backlinks (inbound links from other websites to improve authority), and on-page SEO (optimizing meta tags, headers, and content on individual webpages) [22]. The primary goal is to achieve high visibility on Search Engine Results Pages (SERPs) by matching a user's query string with keywords on a webpage [23].

Semantic SEO: An evolution of SEO that focuses on understanding and optimizing for the user intent and contextual meaning behind search queries. It involves creating content that answers questions and covers topics comprehensively [22]. Instead of focusing on individual keywords, it uses topic clusters and Natural Language Processing (NLP) to understand the relationships between concepts [22] [24]. The goal is to satisfy user intent completely, making content more resilient to search algorithm updates [22].

Search Intent: The fundamental goal a user has when typing a query into a search engine. Semantic SEO prioritizes understanding and fulfilling this intent, which can be informational (seeking knowledge), navigational (seeking a specific website), or transactional (aiming to purchase) [13] [25]. Aligning content with search intent is crucial for reducing bounce rates and increasing user engagement [24] [26].

Entity-Based Search: A specific implementation of semantic search where an entity—a unique, identifiable person, place, thing, or concept—becomes the fundamental unit of search. Search engines use knowledge graphs to map the relationships between these entities to deliver more accurate and context-aware results [27] [23]. For example, an entity-based system understands the connections between a famous author, the books they've written, and the awards they've won [27].
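The knowledge-graph idea can be illustrated with a minimal triple store in Python. This is a toy sketch, not how any search engine implements its graph. It swaps the author/books/awards example for a research analogue; all entity names and predicates ("authored", "studies", "received") are hypothetical.

```python
from collections import defaultdict

class EntityGraph:
    """Minimal triple store: subject -[predicate]-> object."""

    def __init__(self):
        self._out = defaultdict(list)  # subject -> list of (predicate, object)

    def add(self, subject, predicate, obj):
        self._out[subject].append((predicate, obj))

    def related(self, subject, predicate):
        """Objects reachable from `subject` via one `predicate` edge."""
        return [o for p, o in self._out[subject] if p == predicate]

    def two_hop(self, subject, first, second):
        """Follow `first` edges from subject, then `second` edges from each result."""
        return [o2 for o1 in self.related(subject, first)
                for o2 in self.related(o1, second)]

g = EntityGraph()
g.add("Dr. A", "authored", "Paper 1")
g.add("Dr. A", "authored", "Paper 2")
g.add("Paper 1", "studies", "CRISPR-Cas9")
g.add("Paper 2", "studies", "AAV vector")
g.add("Paper 2", "received", "Award X")

print(g.two_hop("Dr. A", "authored", "studies"))   # topics of Dr. A's papers
print(g.two_hop("Dr. A", "authored", "received"))  # awards linked to Dr. A's papers
```

Because relationships are stored explicitly, a query about a researcher can surface the topics and awards connected to them, even when those words never appear in the query itself.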

The Logical Relationship of Modern SEO Concepts

The following diagram illustrates how semantic principles and entity recognition work together to process user queries and content, moving beyond simple keyword matching.

Comparative Analysis: Semantic vs. Traditional SEO

Quantitative Performance Comparison

The table below summarizes experimental and observational data comparing the performance of traditional and semantic/entity-based SEO strategies.

Table 1: Performance Benchmarking of Traditional vs. Semantic/Entity-Based SEO

| Metric | Traditional SEO | Semantic/Entity-Based SEO | Data Source & Context |
|---|---|---|---|
| Primary Focus | Keyword ranking & backlink acquisition [22] | User intent & contextual meaning [22] | Industry practice analysis [22] [28] |
| Content Relevance Driver | Keyword density & exact match terms [22] [23] | Topic clusters & entity relationships [22] [24] | Industry practice analysis [22] [23] [24] |
| Impact on Information Retrieval (IR) Score | Susceptible to score dilution from poor keyword proximity [29] | Improves IR scores by 5–20% with entity attributes; 25–100%+ with entity-type information [29] | Analysis of search algorithm performance [29] |
| Algorithm Resilience | More susceptible to algorithm updates [22] | More resilient, built on modern AI principles [22] [23] | Industry observation of search engine updates [22] [23] |
| Voice Search Compatibility | Low, due to reliance on short, typed phrases [27] | High, as voice queries are longer and conversational [27] | Analysis of search behavior trends [27] |
| Typical SERP Feature Appearance | Standard organic listings [23] | Higher prevalence in Featured Snippets, People Also Ask, & Knowledge Panels [23] [24] | Analysis of search engine results pages [23] [24] |

Experimental Protocols and Methodologies

Protocol A: Measuring Click-Through Performance by Search Intent

This protocol is derived from a model that analyzes the drivers of organic clicks for different types of searches [13].

- Objective: To determine whether content relevance or online authority is a more significant driver of organic clicks, and how this varies with user search intent.

- Methodology:

  - Data Collection: Gather data for a set of websites across different industries. The dataset must include, for a large sample of search queries:

    - Number of organic clicks for each website.

    - The organic rank of the website on the Search Engine Result Page (SERP).

    - Characteristics of the search query: popularity, competition, specificity, and intent (categorized as informational or transactional).

    - Website characteristics (content relevance, measured by semantic alignment with the query; online authority, measured by domain-level metrics).

  - Model Estimation: Employ a joint model to account for the endogeneity of organic rank and unobserved heterogeneity. This typically involves a two-stage model:

    - Rank Model: Models the website's organic rank as a function of query and website characteristics.

    - Clicks Model: Models the expected organic clicks as a function of the predicted rank, query characteristics, website characteristics, and interaction effects.

- Key Findings from Prior Application: The study found that content relevance is a key driver of organic clicks for transactional searches (users looking to purchase). Conversely, for users at the awareness stage conducting informational searches, online authority is the primary driver of clicks [13]. This highlights the need to align optimization strategies with the user's stage in the customer journey.
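The endogeneity problem behind the two-stage design can be shown with simulated data. This Python sketch substitutes plain two-stage least squares (2SLS) for the study's joint model [13]. It relies on a hypothetical instrument `z` that shifts rank but does not affect clicks directly, and it omits the relevance, authority, and interaction covariates. All coefficients are invented for illustration.

```python
import random

def ols(x, y):
    """Least-squares fit y = intercept + slope * x; returns (slope, intercept)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sxy / sxx
    return slope, my - slope * mx

def simulate(n=20000, seed=0):
    """Synthetic data where unobserved site quality u drives both rank and clicks."""
    rng = random.Random(seed)
    z, rank, clicks = [], [], []
    for _ in range(n):
        zi = rng.gauss(0, 1)  # hypothetical instrument: shifts rank, not clicks directly
        u = rng.gauss(0, 1)   # unobserved quality: improves (lowers) rank, raises clicks
        r = 5 + 1.0 * zi - 0.8 * u + rng.gauss(0, 1)
        c = 100 - 2.0 * r + 3.0 * u + rng.gauss(0, 1)  # true rank effect = -2.0
        z.append(zi)
        rank.append(r)
        clicks.append(c)
    return z, rank, clicks

def two_stage(z, rank, clicks):
    """Stage 1 (rank model): rank on z. Stage 2 (clicks model): clicks on predicted rank."""
    g, a = ols(z, rank)
    predicted_rank = [a + g * zi for zi in z]
    beta, _ = ols(predicted_rank, clicks)
    return beta

z, rank, clicks = simulate()
naive_beta, _ = ols(rank, clicks)
iv_beta = two_stage(z, rank, clicks)
print(f"naive OLS: {naive_beta:.2f}, two-stage: {iv_beta:.2f} (true: -2.00)")
```

The naive regression overstates how much rank costs in clicks because high-quality sites both rank better and attract more clicks. The two-stage estimate recovers the simulated effect.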

Protocol B: Entity Salience Analysis for Content Optimization

This protocol uses automated tools to quantitatively assess how well search engines understand the entities within a piece of content.

- Objective: To measure the contextual understanding of a webpage and identify opportunities to improve entity relevance.

- Methodology:

  - Identify Key Entities: For a given topic, identify the main entities (people, places, concepts) that are central to a comprehensive understanding. Use resources like Google's Knowledge Graph, Wikipedia, or domain-specific ontologies [27] [29].

  - Content Analysis: Run the webpage's content through a natural language processing API, such as Google Cloud's Natural Language API.

  - Metric Extraction: The API returns entity annotations and salience scores. The salience score indicates the importance of each entity to the overall document, ranging from 0.0 to 1.0 [29].

  - Optimization: Revise content to increase the salience of target entities and ensure comprehensive coverage of all related entities identified in the knowledge base.

- Key Findings from Prior Application: This method moves beyond keyword density. By structuring content to mirror the entity relationships found in established knowledge bases like Wikipedia, practitioners can signal topical expertise to search engines. This approach is foundational to achieving visibility in AI-driven search features and knowledge panels [29].
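The Metric Extraction and Optimization steps can be combined into a gap report. This Python sketch runs offline. It assumes the entity names and salience scores have already been pulled from an NLP API response; in Google Cloud's Natural Language API, each entity returned by entity analysis carries a `name` and a `salience` field. The `EntityScore` class, the example entities, and the 0.05 threshold are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class EntityScore:
    name: str
    salience: float  # 0.0 to 1.0, as reported per entity by the NLP API

def salience_gaps(extracted, targets, min_salience=0.05):
    """Split target entities into (missing, weak) relative to the extracted entities.

    missing: targets the API did not detect at all.
    weak:    targets detected but with salience below `min_salience` (assumed cutoff).
    """
    scores = {}
    for e in extracted:
        key = e.name.lower()
        scores[key] = max(scores.get(key, 0.0), e.salience)  # keep best mention
    missing = [t for t in targets if t.lower() not in scores]
    weak = [t for t in targets
            if t.lower() in scores and scores[t.lower()] < min_salience]
    return missing, weak

# Hypothetical API output for a page about genome editing.
extracted = [
    EntityScore("CRISPR", 0.42),
    EntityScore("Cas9", 0.18),
    EntityScore("off-target effects", 0.03),
]
targets = ["CRISPR", "Cas9", "off-target effects", "guide RNA"]
missing, weak = salience_gaps(extracted, targets)
print("missing:", missing)
print("weak:", weak)
```

Missing entities suggest coverage gaps. Weak entities suggest the concept is mentioned but not developed enough for a model to treat it as central.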

The Scientist's Toolkit: Research Reagent Solutions for SEO

For researchers aiming to apply these digital optimization strategies, the following "reagent solutions" are essential.

Table 2: Essential Tools and Materials for SEO & Content Optimization Research

| Tool / Material | Function / Explanation |
|---|---|
| Google Search Console | A diagnostic tool that monitors search traffic, identifies indexing issues, and reveals the actual search queries that lead to a website. Critical for tracking organic performance [22]. |
| Natural Language API (e.g., Google Cloud) | The experimental apparatus for Entity Salience Analysis. It quantitatively measures how a machine learning model interprets the entities and sentiment within a text [29]. |
| Schema.org Vocabulary | A standardized structured-data vocabulary that acts as a "stain." It helps search engines identify specific entities (e.g., ScholarlyArticle, Person for authors, Dataset) on a webpage, enhancing clarity and eligibility for rich results [27] [24]. |
| Content Analysis Platforms (e.g., MarketMuse, Clearscope) | These tools function as assay kits. They analyze top-ranking content for a given topic and provide a "completeness" score, recommending related entities and topics to cover for comprehensive topic authority [22]. |
| Keyword Research Tools (e.g., SEMrush, Ahrefs) | Used for market sizing and competitor analysis. They help identify search query volume, keyword difficulty, and the terms for which competing websites are ranking, informing content strategy [22] [26]. |

Integrated Workflow for Academic Content Optimization

The following diagram synthesizes the core concepts and experimental protocols into a practical workflow for optimizing scientific content.

The experimental data and comparative analysis indicate that semantic and entity-based search strategies offer a more robust and future-proof framework for optimizing content discoverability than traditional keyword-centric methods. The key differentiator is the focus on meaning and user intent over lexical matching [22] [28] [23]. This is particularly relevant for the scientific community, where the accurate and interconnected representation of complex information is critical.

The hybrid approach, leveraging the foundational elements of traditional SEO (such as technical website health) while fully embracing the semantic principles of entity optimization and intent fulfillment, is the most effective path forward [22] [28]. For researchers, this means:

- Crafting titles and abstracts that incorporate common, recognizable terminology to boost indexing [12].

- Structuring content to answer not just a single question, but to cover a topic cluster comprehensively, anticipating related questions a scientist might have [24].

- Using structured data (schema.org) to explicitly define the scholarly entities within their work, from authors and institutions to datasets and chemical compounds [27] [29].
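As an illustration of the structured-data point, this Python sketch builds schema.org JSON-LD for a `ScholarlyArticle` with `Person` authors and `Organization` affiliations, then wraps it in the `<script type="application/ld+json">` tag used to embed it in a page. It covers only authors, institutions, and a DOI. The helper name, the example title, and the DOI `10.1234/example` are hypothetical placeholders.

```python
import json

def scholarly_article_jsonld(title, authors, doi, keywords, date_published):
    """Build a schema.org ScholarlyArticle description as a plain dict.

    authors: list of (name, affiliation) pairs.
    """
    return {
        "@context": "https://schema.org",
        "@type": "ScholarlyArticle",
        "headline": title,
        "datePublished": date_published,
        "keywords": keywords,
        "identifier": {
            "@type": "PropertyValue",
            "propertyID": "DOI",
            "value": doi,
        },
        "author": [
            {
                "@type": "Person",
                "name": name,
                "affiliation": {"@type": "Organization", "name": affiliation},
            }
            for name, affiliation in authors
        ],
    }

doc = scholarly_article_jsonld(
    title="Example title for an AAV gene therapy study",
    authors=[("A. Researcher", "Example University")],
    doi="10.1234/example",  # placeholder DOI
    keywords=["gene therapy", "AAV vectors"],
    date_published="2025-01-15",
)
print('<script type="application/ld+json">\n' + json.dumps(doc, indent=2) + "\n</script>")
```

Generating the markup from the same metadata used for the manuscript keeps the page and the publication record consistent.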

By adopting these protocols, scientists and drug development professionals can ensure their valuable research is not only published but is also discoverable, thereby maximizing its potential for engagement, citation, and real-world impact in an increasingly digital academic landscape.

A Step-by-Step Methodology: Mapping Highly-Cited Paper Themes to Actionable Keyword Clusters

In the competitive landscape of academic research, particularly in fields like drug development, the visibility and impact of scientific work are paramount. A modern researcher's toolkit must, therefore, extend beyond the lab bench to include digital tools that optimize the discoverability of research outputs. This guide provides an objective comparison of essential platforms, from Clarivate's authoritative research intelligence suites to keyword planners and emerging AI SEO platforms. By benchmarking keyword strategies against the patterns of highly-cited papers, researchers and scientists can systematically enhance the reach and influence of their work, ensuring it reaches the right audience in an increasingly digital and AI-driven ecosystem.

Clarivate Research Intelligence Suite

Clarivate provides a suite of tools integral to the modern research workflow, from literature management and discovery to measuring innovation and global research trends.

EndNote 2025

EndNote is a comprehensive reference management solution that has incorporated AI to streamline the research and writing process. Its features are designed to save researchers time and improve accuracy [30].

- AI-Powered Tools: The new "Key Takeaway" feature uses generative AI to extract key insights and takeaways from individual research papers, expediting research discovery [30].

- Journal Matching: The "Find a Journal publishing tool" is an enhanced machine learning tool available directly in the 'Cite While You Write' function. It allows researchers to find the best journal match for their manuscript [30].

- Enhanced Workflow: Features like "Cite from PDF" allow researchers to quickly insert a highlighted quote from a PDF and its corresponding citation with a single click. A redesigned summary panel and improved integration with Web of Science citing articles and related records help curate a more comprehensive reference library [30].

- Platform Foundation: These features leverage the Clarivate Academic AI Platform, a technological backbone designed for consistent deployment of AI capabilities across their portfolio [30].

Global Innovators & G20 Scorecard

Clarivate also produces macro-level, data-driven reports that provide critical benchmarking context for research institutions and governments.

- Top 100 Global Innovators Methodology: This report identifies organizations at the pinnacle of the global innovation landscape. The methodology is based on empirical patent data, evaluating invention strength through a composite score derived from factors like the volume of patented inventions and their global protection scope. Qualification requires at least 500 published inventions since 2000 and 100 granted inventions within the past five years, ensuring a focus on current and significant contributors [31].

- 2025 G20 Research and Innovation Scorecard: This interactive scorecard offers a comprehensive view of the research and innovation capabilities of G20 nations. It highlights key trends such as shifting global research partnerships, the rise of open access, and a growing focus on research aligned with Sustainable Development Goals (SDGs). For example, it details how collaboration between the European Union and Mainland China has more than doubled over the past decade, and how international collaboration in the United Kingdom has grown to 70% of its research output [32].
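The qualification rule for the Top 100 Global Innovators can be written as a simple screen. This Python sketch covers only the two volume thresholds quoted above [31], not the composite invention-strength score. The organizations and their patent counts are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Portfolio:
    """Hypothetical patent counts for one organization."""
    name: str
    published_since_2000: int   # published inventions since 2000
    granted_last_5_years: int   # granted inventions in the past five years

def eligible(p: Portfolio, min_published: int = 500, min_granted: int = 100) -> bool:
    """Volume thresholds described for the Top 100 Global Innovators screen."""
    return p.published_since_2000 >= min_published and p.granted_last_5_years >= min_granted

candidates = [
    Portfolio("Org A", 820, 140),
    Portfolio("Org B", 450, 300),   # fails the published-inventions threshold
    Portfolio("Org C", 1200, 95),   # fails the recent-grants threshold
]
shortlist = [p.name for p in candidates if eligible(p)]
print(shortlist)  # ['Org A']
```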

Keyword Research Tools for Academic Visibility

Keyword research is not dead; it has evolved. It remains a critical roadmap to understanding audience needs and optimizing content for discoverability [33]. For researchers, this means understanding the terms and queries used by peers, funders, and publishers.

Tool Comparison

The following table summarizes key keyword research tools, their primary strengths, and their applicability to the research field.

| Tool Name | Best For | Key Academic Application | Free Plan/Allowance |
|---|---|---|---|
| Google Keyword Planner [34] [35] | Validating search volume and competition; PPC keyword research. | Estimating search volume for public-facing research summaries or lab websites. | Completely free with a Google Ads account [34]. |
| Semrush [34] [36] | Advanced SEO; granular keyword data and competitive analysis. | Analyzing the online presence of research institutions or competitor labs. | Free plan includes 10 reports/day [34]. Paid plans start at ~$140/month [34]. |
| Ahrefs [35] | Competitor keyword analysis and SERP research. | Understanding which keywords drive traffic to leading journals or scholarly websites. | Paid plans start at $129/month [35]. |
| KWFinder [34] | Ad hoc keyword research with unique metrics. | Quick, in-depth analysis of specific keyword opportunities. | Free plan: 5 searches/day [34]. Paid: ~$30/month [34]. |
| Ubersuggest [34] | Content marketing. | Generating ideas for blog posts or articles related to a research field. | Free plan: 3 searches/day [34]. |

Modern Keyword Research Protocol

Effective keyword research in 2025 involves more than just finding high-volume terms. The following protocol, adapted from industry best practices, can be used to benchmark and optimize academic content [33].

- Start with Topics, Then Drill Down: Begin with broad research themes (e.g., "gene therapy") and use tools like Semrush or Google Keyword Planner to discover related queries and long-tail keywords (e.g., "AAV vector gene therapy safety profile") [33].

- Analyze Search Intent: Manually check the search engine results for your target keywords. Determine if the user's goal is informational (seeking knowledge, like "how does CRISPR work"), navigational (finding a specific journal or institution), or transactional (looking to use a service or database). The content type must match this intent [33].

- Cluster Related Keywords: Group semantically related terms so a single piece of content (e.g., a review article) can comprehensively cover a topic and rank for multiple variations. For example, a cluster for "biomarker discovery" might include "cancer biomarker detection techniques," "proteomics biomarker validation," and "genomic biomarkers for personalized medicine" [33].

- Benchmark Against Competitors: Identify research groups or institutions leading in your field. Use SEO tools to analyze the keywords for which their websites and prominent publications rank, identifying content gaps and opportunities [33].
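The clustering step can be prototyped before committing to a commercial tool. This Python sketch groups keywords by word overlap (Jaccard similarity with a naive plural strip). It is a lexical stand-in for the richer semantic signals that dedicated tools use. The 0.15 threshold and the greedy "join the first matching cluster" rule are assumptions chosen for illustration.

```python
import re

def tokens(phrase):
    """Lower-case word tokens with a naive plural strip (biomarkers -> biomarker)."""
    words = re.findall(r"[a-z0-9]+", phrase.lower())
    return {w[:-1] if w.endswith("s") and len(w) > 3 else w for w in words}

def jaccard(a, b):
    """Shared tokens divided by total distinct tokens."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def cluster_keywords(keywords, threshold=0.15):
    """Greedy clustering: each keyword joins the first cluster whose seed
    (first member) overlaps with it at or above `threshold`; otherwise it
    starts a new cluster."""
    clusters = []  # list of (seed_tokens, members)
    for kw in keywords:
        kw_tokens = tokens(kw)
        for seed_tokens, members in clusters:
            if jaccard(kw_tokens, seed_tokens) >= threshold:
                members.append(kw)
                break
        else:
            clusters.append((kw_tokens, [kw]))
    return [members for _, members in clusters]

keywords = [
    "biomarker discovery",
    "cancer biomarker detection techniques",
    "proteomics biomarker validation",
    "genomic biomarkers for personalized medicine",
    "gene therapy",
    "AAV vector gene therapy safety profile",
]
for group in cluster_keywords(keywords):
    print(group)
```

Each resulting group is a candidate for one comprehensive piece of content, with the seed keyword as its primary topic.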

The Rise of AI SEO Platforms

The advent of AI search engines like ChatGPT, Gemini, and Perplexity has given rise to a new discipline: Answer Engine Optimization (AEO). These platforms require new tools to track visibility and brand perception within AI-generated answers [37].

Leading AI SEO Platforms Comparison

The following table compares specialized AI SEO tools that are relevant for tracking the visibility of research institutions, experts, and published work in AI conversations.

| Platform | Primary Function | Relevance to Researchers | Pricing Overview |
|---|---|---|---|
| Rank Prompt [37] | Tracks brand/URL visibility across ChatGPT, Gemini, Claude, and Perplexity. | Monitoring how an institution, principal investigator, or a seminal paper is cited and described by AI assistants. | Affordable plans with unlimited prompt tracking on pro tiers [37]. |
| Profound [37] | Enterprise-level AI perception analytics across core assistants. | For large research institutions or consortia to understand their high-level brand positioning and narrative in the AI ecosystem. | Starts at $499/month (enterprise-focused) [37]. |
| Goodie [37] | Tracks product visibility in AI shopping shelves (e.g., ChatGPT, Amazon Rufus). | Less relevant for fundamental research, but potentially applicable for patented drugs, lab equipment, or commercialized research products. | Not specified in the cited source. |
| Peec AI [37] | Tracks brand discovery across regions and languages in major LLMs. | For global research projects or universities to monitor their international visibility and share of voice in AI search. | Starts at ~€99/month [37]. |

Experimental Protocol for AI Search Visibility

To objectively benchmark visibility in AI search engines, the following experimental protocol can be employed, utilizing platforms like Rank Prompt.

- Define Entities for Tracking: Identify the key entities to track. This could include the name of your research institute (e.g., "Broad Institute"), a well-known project (e.g., "Human Cell Atlas"), a key drug name, or a prominent principal investigator.

- Establish a Baseline: Use the AI SEO platform to run an initial scan across all major AI assistants (ChatGPT, Gemini, Claude, Perplexity) for a set of pre-defined prompts. Example prompts could be: "Who are the leading researchers in [field]?", "What is the most cited paper on [topic]?", or "Tell me about [Your University]'s cancer research."

- Quantify Metrics: Record key metrics from the platform's dashboard, including:

  - Share of Voice: How often your defined entity is mentioned compared to competitors in the AI's response.

  - Citation Frequency: How often your specific publications or institutional URLs are cited as sources by the AI.

  - Positioning & Sentiment: How the AI describes your entity. Is it described as a "leader" or "pioneer," or is the description neutral or inaccurate?

- Implement AEO Strategies: Based on the baseline, implement strategies to improve AI visibility. This can include optimizing institutional web pages with clear, authoritative information, implementing schema.org markup (especially for ScholarlyArticle and Person), and ensuring key publications are openly accessible to be used as training data.

- Monitor and Re-evaluate: Continuously track the defined entities and prompts over a set period (e.g., quarterly). Use the AI SEO platform's dashboard to measure changes in the metrics, correlating them with the implemented strategies to determine effectiveness.
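If raw assistant responses are exported, share of voice can also be computed independently of a platform's dashboard. This Python sketch assumes a specific definition: the tracked entity's mentions divided by all tracked-entity mentions, where a mention means a response containing the entity name as whole words, case-insensitively. Commercial platforms may define the metric differently. The responses and entity names are hypothetical.

```python
import re

def mention_counts(responses, entities):
    """Count how many responses mention each entity (case-insensitive, whole words)."""
    counts = {}
    for entity in entities:
        pattern = re.compile(r"\b" + re.escape(entity) + r"\b", re.IGNORECASE)
        counts[entity] = sum(1 for text in responses if pattern.search(text))
    return counts

def share_of_voice(responses, own, competitors):
    """Own entity's mentions as a fraction of all tracked-entity mentions (assumed definition)."""
    counts = mention_counts(responses, [own] + competitors)
    total = sum(counts.values())
    return counts[own] / total if total else 0.0

# Hypothetical AI answers collected for one baseline prompt set.
responses = [
    "Leading groups include the Example Institute and Acme Lab.",
    "Acme Lab published the most cited paper on this topic.",
    "Researchers at the Example Institute pioneered the method.",
    "Several universities work in this area.",
]
print(mention_counts(responses, ["Example Institute", "Acme Lab"]))
print(f"share of voice: {share_of_voice(responses, 'Example Institute', ['Acme Lab']):.0%}")
```

Running the same prompt set each quarter and comparing the resulting shares gives a simple time series for the Monitor and Re-evaluate step.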

The Scientist's Digital Toolkit: Essential Research Reagents

Just as an experiment requires specific reagents, optimizing research visibility requires a set of digital tools. The following table details these essential "research reagents" for the modern scientist.

| Tool / Resource | Category | Function in the Research Visibility Workflow |
|---|---|---|
| EndNote 2025 [30] | Reference Management | AI-powered tool for managing literature, generating insights, and matching manuscripts to target journals. |
| Web of Science Core Collection | Bibliometric Database | Provides data on highly-cited papers and journal impact for benchmarking research performance. |
| Google Keyword Planner [34] [35] | Keyword Research | Validates search volume and competition for public-facing research content, free of charge. |
| Semrush [34] [36] | SEO Suite | Offers advanced analysis of keyword rankings, backlink profiles, and competitive benchmarking for institutional websites. |
| Rank Prompt [37] | AI SEO Platform | Tracks and benchmarks the visibility of researchers, institutions, and their work across AI answer engines like ChatGPT and Gemini. |
| Schema.org Markup | Technical SEO | A structured data vocabulary added to web pages to help search engines understand and represent scholarly content better. |

Visualizing the Research Visibility Workflow

The following diagram illustrates the integrated workflow for enhancing research discoverability, from foundational literature management to benchmarking performance in traditional and AI search.

Integrated Research Visibility Workflow

The paradigm for research impact is expanding. While citation counts in databases like Web of Science remain a crucial benchmark, digital visibility through traditional search engines and AI-powered answer engines is a new frontier for influence. By integrating the toolkit outlined here—Clarivate's research intelligence, rigorous keyword strategy, and AI SEO platform tracking—researchers and institutions can build a robust, data-driven approach to ensure their work is not only published and cited but also discovered and utilized in an increasingly complex information ecosystem. This holistic approach to research visibility is fast becoming a non-negotiable component of a successful scientific career.

This guide benchmarks methodological frameworks for analyzing academic publications, focusing on the T²K² benchmark for top-k keyword and document extraction. We objectively compare relational (Oracle, PostgreSQL) and document-oriented (MongoDB) database implementations utilizing TF-IDF and Okapi BM25 weighting schemes. Experimental data reveal that a structured, dimensional data warehouse schema (T²K²D²) significantly enhances computational performance for analytical queries. Supported by quantitative results and workflow visualizations, this analysis provides researchers with validated protocols for reproducing benchmark studies and optimizing keyword strategy reverse-engineering.

Reverse-engineering the success of highly-cited research is a cornerstone of scientific strategy. It enables researchers to decode the patterns that contribute to high visibility and impact. This process aligns with a broader thesis on benchmarking keyword strategies, which posits that systematic, data-driven analysis of successful publications can yield reproducible frameworks for enhancing a study's discoverability [12].

Such benchmarking is not limited to content analysis; it also extends to the computational efficiency of the methods used to process and analyze large text corpora. In academic research, the extraction of top-k keywords and documents is a fundamental task for trend identification, event detection, and literature review automation [38]. Therefore, benchmarking the performance of different computational approaches provides critical insights for building efficient research tools. This guide compares specific technological implementations within this domain, providing experimental data on their performance.

Experimental Protocols and Workflow

The core methodology for this comparison is based on the T²K² (Twitter Top-K Keywords) benchmark and its decision-support evolution, T²K²D² [38]. The benchmark is designed to evaluate the performance of different weighting schemes and database systems in processing text analysis queries.

The T²K² Benchmarking Methodology

The benchmark features a real tweet dataset and a set of queries with varying complexities and selectivities. Its data model is generic and can handle any textual document, making it applicable beyond tweets to scientific abstracts and papers [38]. The primary goal is to evaluate systems on their efficiency in computing top-k keywords and documents.

Key Implementation Details:

- Weighting Schemes: The benchmark incorporates two established weighting schemes for text analysis:

  - TF-IDF (Term Frequency-Inverse Document Frequency): The augmented form is used to prevent a bias towards long documents:

    $$\mathrm{TF\text{-}IDF}(t,d,D) = \left[K + (1-K)\,\frac{f_{t,d}}{\max_{t' \in d} f_{t',d}}\right] \times \left(1 + \log\frac{N}{n}\right)$$

    where $f_{t,d}$ is the frequency of term $t$ in document $d$, $N$ is the number of documents in the corpus $D$, $n$ is the number of documents containing $t$, and $K$ is a free parameter set to 0.5 [38].

  - Okapi BM25: A state-of-the-art probabilistic weighting function that builds upon TF-IDF [38].

- Database Systems Tested: The benchmark was instantiated and tested on:

  - Relational Databases: Oracle and PostgreSQL.

  - Document-Oriented Database: MongoDB.

- Schema Design: The benchmark compares two primary schema designs:

  - T²K² Schema: A generic schema for handling textual documents.

  - T²K²D² Schema: A dimensional (star) schema, typical in data warehouses, which is hypothesized to improve performance for analytical queries [38].
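The two weighting schemes and the top-k task can be sketched in memory in Python. This is not the SQL or MongoDB implementation benchmarked in [38]. The augmented TF-IDF follows the formula above with K = 0.5 and a natural logarithm (an assumption, since the base is not stated). For BM25, the source gives no parameters, so the common defaults k1 = 1.2 and b = 0.75 are assumed, along with the non-negative IDF variant log(1 + (N - n + 0.5)/(n + 0.5)). Aggregating a term's weight by summing over documents is also an assumption.

```python
import math
from collections import Counter

K = 0.5  # free parameter of the augmented TF, as stated in the benchmark description

def augmented_tf_idf(term, doc, corpus, k=K):
    """[k + (1-k) * f_td / max_f] * (1 + ln(N / n)); doc is a list of tokens."""
    counts = Counter(doc)
    if term not in counts:
        return 0.0
    tf = k + (1 - k) * counts[term] / max(counts.values())
    n = sum(1 for d in corpus if term in d)  # documents containing the term
    return tf * (1 + math.log(len(corpus) / n))

def bm25(term, doc, corpus, k1=1.2, b=0.75):
    """Okapi BM25 for one term; k1, b and the IDF variant are assumed defaults."""
    f = doc.count(term)
    if f == 0:
        return 0.0
    N = len(corpus)
    n = sum(1 for d in corpus if term in d)
    idf = math.log((N - n + 0.5) / (n + 0.5) + 1)
    avgdl = sum(len(d) for d in corpus) / N
    return idf * f * (k1 + 1) / (f + k1 * (1 - b + b * len(doc) / avgdl))

def top_k_keywords(corpus, k, weight=augmented_tf_idf):
    """Sum each term's weight over all documents; ties are broken alphabetically."""
    scores = Counter()
    for doc in corpus:
        for term in set(doc):
            scores[term] += weight(term, doc, corpus)
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))[:k]

# Toy corpus of tokenized abstracts.
corpus = [
    ["gene", "therapy", "gene"],
    ["gene", "editing"],
    ["crispr", "editing", "safety"],
]
print(top_k_keywords(corpus, 2))
print(top_k_keywords(corpus, 2, weight=bm25))
```

Swapping the `weight` argument compares the two schemes on the same corpus. The benchmark's database implementations compute the same kind of aggregate with queries over stored term frequencies.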

The following diagram illustrates the logical workflow of the T²K² benchmarking process, from data preparation to performance evaluation.

Reverse-Engineering Analytical Workflow

Concurrently, for the reverse-engineering of highly-cited papers themselves, a structured analytical workflow is required. This process involves dissecting a paper's compositional elements to understand the factors driving its high citation count [12]. The workflow below outlines the key steps for this analysis, focusing on the title, abstract, and keywords.

Results: Performance Data and Comparative Analysis

The experimental results from implementing the T²K² and T²K²D² benchmarks provide clear, quantitative data for comparing the different database systems and schemas.

Computational Performance Comparison

The table below summarizes the key findings from the benchmark experiments, which evaluated query response times for top-k keyword and document extraction tasks [38].

Table 1: Benchmark Performance Results for Database Systems and Schemas

| Database System | Schema Type | Weighting Scheme | Performance Summary |
|---|---|---|---|
| Oracle | T²K²D² (Dimensional) | TF-IDF, Okapi BM25 | Superior Performance: Demonstrated fastest query response times when using the star schema for analytical queries. |
| PostgreSQL | T²K²D² (Dimensional) | TF-IDF, Okapi BM25 | Notable Improvement: Showed significant performance gains with the T²K²D² star schema compared to the generic T²K² schema. |
| MongoDB | T²K² (Generic) | TF-IDF, Okapi BM25 | Competitive Performance: Effectively handled the document-oriented workload with the generic schema. |
| All Systems | T²K²D² vs. T²K² | TF-IDF, Okapi BM25 | Schema Impact: The dimensional schema (T²K²D²) consistently provided better performance for complex, analytical queries common in benchmarking and research tasks. |
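To show what a dimensional (star) layout looks like for this workload, the Python sketch below creates a fact table of keyword weights surrounded by keyword, time, and document dimensions, then runs a top-k query filtered on a dimension attribute. SQLite is used only because it ships with Python; it is not one of the benchmarked systems. All table and column names are hypothetical, and the actual T²K²D² schema in [38] may differ.

```python
import sqlite3

def build_star_schema(conn):
    """Hypothetical star schema: one fact table referencing three dimensions."""
    conn.executescript("""
        CREATE TABLE dim_keyword  (keyword_id INTEGER PRIMARY KEY, term TEXT UNIQUE);
        CREATE TABLE dim_time     (time_id INTEGER PRIMARY KEY, year INTEGER);
        CREATE TABLE dim_document (doc_id INTEGER PRIMARY KEY, source TEXT);
        CREATE TABLE fact_keyword (
            doc_id     INTEGER REFERENCES dim_document(doc_id),
            keyword_id INTEGER REFERENCES dim_keyword(keyword_id),
            time_id    INTEGER REFERENCES dim_time(time_id),
            weight     REAL  -- precomputed TF-IDF or BM25 weight
        );
    """)

# Top-k keywords for one year: join facts to dimensions, aggregate, rank.
TOP_K_SQL = """
    SELECT k.term, SUM(f.weight) AS score
    FROM fact_keyword AS f
    JOIN dim_keyword AS k ON k.keyword_id = f.keyword_id
    JOIN dim_time    AS t ON t.time_id = f.time_id
    WHERE t.year = ?
    GROUP BY k.term
    ORDER BY score DESC, k.term
    LIMIT ?
"""

conn = sqlite3.connect(":memory:")
build_star_schema(conn)
conn.executemany("INSERT INTO dim_keyword VALUES (?, ?)",
                 [(1, "crispr"), (2, "biomarker"), (3, "gene therapy")])
conn.executemany("INSERT INTO dim_time VALUES (?, ?)", [(1, 2024), (2, 2025)])
conn.executemany("INSERT INTO dim_document VALUES (?, ?)",
                 [(1, "abstract"), (2, "abstract"), (3, "abstract")])
conn.executemany("INSERT INTO fact_keyword VALUES (?, ?, ?, ?)", [
    (1, 1, 2, 0.75), (1, 2, 2, 0.25), (2, 1, 2, 0.5),
    (2, 3, 2, 1.0), (3, 2, 1, 2.0),  # the 2024 row is excluded by the filter
])
rows = conn.execute(TOP_K_SQL, (2025, 2)).fetchall()
print(rows)
```

The design choice being illustrated: analytical filters (year, source) hit small dimension tables, while the large fact table holds only keys and numeric weights to aggregate.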

Reverse-Engineering Qualitative Findings

Analysis of author guidelines and published articles in ecology and evolutionary biology reveals patterns that inform a strategy for optimizing paper discoverability [12].

Table 2: Patterns in Titles, Abstracts, and Keywords from Publication Analysis

| Element | Finding | Data / Example |
|---|---|---|
| Title | Trend toward longer titles without major citation consequences. | Survey of 5,323 studies in ecology and evolutionary biology [12]. |
| Title | Humorous titles can increase engagement and citation count. | Papers with the highest-humor titles had nearly double the citation count; punctuation (e.g., a colon) can combine humor and description [12]. |
| Abstract | Authors frequently exhaust strict word limits, suggesting guidelines are overly restrictive. | Based on comparing published abstracts with journal author guidelines [12]. |
| Keywords | Redundant keywords are prevalent, undermining optimal indexing. | 92% of surveyed studies used keywords that were redundant with terms already in the title or abstract [12]. |
| Abstract & Keywords | Strategic placement of common terminology is crucial for discoverability. | Common terminology placed at the beginning of the abstract enhances discoverability; using uncommon keywords is negatively correlated with academic impact [12]. |
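Because uncommon keywords are reported to be negatively correlated with impact [12], one rough screen is to check how often each candidate keyword appears in a reference set of abstracts from the target field. The sketch below computes, for each keyword, the share of reference abstracts that contain every word of that keyword, and flags keywords below a threshold. The function names, the 5% default threshold, and the example abstracts and keywords are hypothetical; a real analysis might instead draw on a large field-specific corpus or search-volume data such as Google Trends.

```python
import re

WORD_RE = re.compile(r"[a-z0-9]+(?:-[a-z0-9]+)*")


def word_set(text: str) -> set:
    """Lowercased word tokens; internal hyphens are kept."""
    return set(WORD_RE.findall(text.lower()))


def keyword_commonness(keywords, reference_abstracts) -> dict:
    """Share of reference abstracts that contain every word of each keyword."""
    docs = [word_set(a) for a in reference_abstracts]
    if not docs:
        raise ValueError("reference_abstracts must not be empty")
    return {kw: sum(1 for d in docs if word_set(kw) <= d) / len(docs)
            for kw in keywords}


def flag_uncommon(keywords, reference_abstracts, min_share=0.05) -> list:
    """Keywords whose share falls below a hypothetical threshold."""
    shares = keyword_commonness(keywords, reference_abstracts)
    return [kw for kw, share in shares.items() if share < min_share]


# Invented reference abstracts and candidate keywords.
reference = [
    "Climate change alters species distributions.",
    "Species distribution models under climate change.",
    "Phenotypic plasticity in a warming climate.",
]
keywords = ["climate change", "phenotypic plasticity", "thermogeographic drift"]
print(keyword_commonness(keywords, reference))
print(flag_uncommon(keywords, reference, min_share=0.5))
```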

The Scientist's Toolkit: Research Reagent Solutions

This section details the essential tools and materials, both computational and methodological, required to implement the benchmarking and reverse-engineering protocols described in this guide.

Table 3: Essential Research Reagent Solutions for Keyword Analysis and Benchmarking

| Tool / Solution | Function / Description | Relevance to Experiment |
|---|---|---|
| T²K² / T²K²D² Benchmark | A standardized benchmark suite for evaluating top-k keyword and document processing. | Provides the core experimental framework, data model, and query workload for performance tests [38]. |
| TF-IDF Weighting | A numerical statistic that reflects the importance of a word in a document relative to a corpus. | One of the two core weighting schemes implemented and tested for keyword extraction [38]. |
| Okapi BM25 Weighting | A widely used ranking function derived from the probabilistic retrieval framework, adding term-frequency saturation and document-length normalization. | A more advanced weighting scheme than TF-IDF, used for performance comparison [38]. |
| Relational Database (e.g., PostgreSQL) | A database that stores data in structured tables with rows and columns. | One implementation environment for testing the in-database computation of weighting schemes, favoring the T²K²D² schema [38]. |
| NoSQL Database (e.g., MongoDB) | A document-oriented database designed for storing and retrieving flexible data schemas. | An alternative implementation environment, showing competitive performance with the generic T²K² schema [38]. |
| Structured Abstracts | An abstract format with standardized headings (e.g., Background, Methods, Results). | A methodological tool to maximize the incorporation of key terms and improve article discoverability [12]. |
| Google Trends / Thesaurus | Tools for identifying frequently searched terms and lexical variations. | Aids in selecting common, high-impact terminology for inclusion in titles, abstracts, and keywords [12]. |
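The two weighting schemes in Table 3 can be sketched in a few lines of Python. The example below uses textbook forms: TF-IDF as raw term frequency times ln(N/df), and Okapi BM25 with the common defaults k1 = 1.2 and b = 0.75 and the non-negative IDF variant ln((N - df + 0.5)/(df + 0.5) + 1). Summing per-document weights into a single corpus-level score per term, and the toy tokenized documents, are assumptions for illustration; the benchmark in [38] computes its weights in-database and may aggregate differently.

```python
import math
from collections import Counter


def document_frequencies(docs):
    """Number of documents in which each term occurs at least once."""
    return Counter(term for doc in docs for term in set(doc))


def tf_idf_scores(docs):
    """Corpus-level TF-IDF: sum over documents of tf * ln(N / df)."""
    n, df = len(docs), document_frequencies(docs)
    scores = Counter()
    for doc in docs:
        for term, tf in Counter(doc).items():
            scores[term] += tf * math.log(n / df[term])
    return scores


def bm25_scores(docs, k1=1.2, b=0.75):
    """Corpus-level Okapi BM25 with length normalization and non-negative IDF."""
    n, df = len(docs), document_frequencies(docs)
    avgdl = sum(len(doc) for doc in docs) / n
    scores = Counter()
    for doc in docs:
        # Longer-than-average documents get a larger denominator.
        length_norm = k1 * (1 - b + b * len(doc) / avgdl)
        for term, tf in Counter(doc).items():
            idf = math.log((n - df[term] + 0.5) / (df[term] + 0.5) + 1)
            scores[term] += idf * tf * (k1 + 1) / (tf + length_norm)
    return scores


def top_k(scores, k):
    """Highest-scoring k terms; ties broken alphabetically."""
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))[:k]


# Invented, pre-tokenized toy corpus.
docs = [
    ["kras", "inhibitor", "resistance"],
    ["kras", "g12c", "bypass"],
    ["glp-1", "agonist", "obesity", "agonist"],
]
print(top_k(tf_idf_scores(docs), 3))
print(top_k(bm25_scores(docs), 3))
```

Unlike raw TF-IDF, BM25 saturates repeated occurrences of a term (controlled by k1) and discounts long documents (controlled by b), which is why the two schemes can rank the same corpus differently.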

In the competitive landscape of pharmaceutical research, rapid access to precise information is a strategic imperative rather than a mere convenience. Semantic intent mapping changes how research professionals discover and interact with scientific knowledge. Unlike traditional keyword-based searches that rely on literal word matching, it uses artificial intelligence to infer the meaning and purpose behind a search query [39]. This allows researchers to uncover related questions and long-tail variations of their core queries that might otherwise remain hidden.

For drug development professionals, this capability directly supports competitive intelligence activities. A comprehensively mapped semantic landscape provides insights into emerging research trends, unmet medical needs, and competitors' scientific focus areas [40] [41]. Within a broader effort to benchmark keyword strategies, semantic intent mapping also offers a way to validate research directions against the corpus of highly-cited literature, helping direct investigative resources toward the most promising and best-substantiated avenues of inquiry.

The AI Foundation: How Semantic Understanding Works

Core Technologies

AI-powered semantic intent mapping is built upon sophisticated technological foundations that enable a nuanced understanding of scientific language.

- Natural Language Processing (NLP): NLP encompasses AI techniques for interpreting and generating human language, enabling tools to understand user intent, context, and meaning beyond simple keyword matching [39]. In a research context, NLP algorithms can deconstruct complex scientific queries into their core conceptual components.

- Large Language Models (LLMs): These are AI systems trained on vast textual data to generate and summarize content, answer questions, and personalize search experiences [39]. LLMs power the semantic understanding required to connect related concepts across therapeutic areas and methodological approaches.

- Enterprise Graph: This is a dynamic knowledge model linking people, data, and processes, continuously updated as organizations evolve [39]. For pharmaceutical companies, an Enterprise Graph can map relationships between biological targets, drug compounds, clinical investigators, and research publications, creating a structured network of scientific knowledge.
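The linking idea behind an Enterprise Graph can be sketched as a small labeled, directed graph in plain Python, where nodes are (type, name) pairs and edges carry relation labels. The two-hop query below finds publications reporting on compounds that act on a given target. The class, the relation names, and the publication and investigator nodes are hypothetical; KRAS G12C, sotorasib, and adagrasib are used only as familiar examples. Production systems would typically use a dedicated graph database rather than in-memory dictionaries.

```python
from collections import defaultdict


class KnowledgeGraph:
    """Minimal labeled, directed graph; nodes are (type, name) tuples."""

    def __init__(self):
        self._edges = defaultdict(set)  # node -> {(relation, neighbor)}

    def add(self, src, relation, dst):
        self._edges[src].add((relation, dst))

    def neighbors(self, node, relation=None):
        """Neighbors of `node`, optionally restricted to one relation label."""
        return {dst for rel, dst in self._edges.get(node, set())
                if relation is None or rel == relation}


def publications_for_target(graph, target):
    """Two-hop query: target -> compounds acting on it -> publications on them."""
    pubs = set()
    for compound in graph.neighbors(target, "targeted_by"):
        pubs |= graph.neighbors(compound, "reported_in")
    return pubs


g = KnowledgeGraph()
kras = ("target", "KRAS G12C")
for drug in ("sotorasib", "adagrasib"):
    g.add(kras, "targeted_by", ("compound", drug))
# Hypothetical publication and investigator nodes.
g.add(("compound", "sotorasib"), "reported_in", ("publication", "Paper A"))
g.add(("compound", "adagrasib"), "reported_in", ("publication", "Paper B"))
g.add(("publication", "Paper A"), "authored_by", ("investigator", "Investigator X"))
print(sorted(publications_for_target(g, kras)))
```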

The MUVERA Algorithm in Scientific Search

A notable development in this domain is Google's MUVERA (Multi-Vector Retrieval via Fixed Dimensional Encodings). Unlike single-vector systems that embed a query as one monolithic unit, multi-vector retrieval represents queries and documents as sets of vectors for smaller semantic components, so that relationships between concepts, rather than just word proximity, can be compared [42]. MUVERA's contribution is efficiency: it compresses each set of vectors into a single fixed-dimensional encoding so that standard single-vector search can approximate the multi-vector similarity.

Multi-vector similarity of this kind is measured with Chamfer similarity, which scores how well each query vector is matched by its closest document vector [42]. For researchers, this means that a query about "KRAS inhibitor resistance mechanisms" can, in principle, connect to content about "G12C mutation bypass pathways" even without exact keyword overlap, which may speed up literature review and broaden the discovery of relevant research.
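Chamfer similarity itself is simple to compute exactly: for a query represented by a set of vectors and a document represented by another set, it sums, over each query vector, the largest inner product with any document vector. The pure-Python sketch below computes that exact score on invented two-dimensional vectors. It is not MUVERA, whose contribution is approximating this score efficiently at scale with fixed-dimensional encodings.

```python
def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def chamfer_similarity(query_vecs, doc_vecs):
    """Exact Chamfer similarity: for each query vector, take its best match
    (maximum inner product) among the document vectors, then sum."""
    return sum(max(dot(q, p) for p in doc_vecs) for q in query_vecs)


# Toy 2-D embeddings (invented): each axis stands for one 'concept'.
query = [[1.0, 0.0], [0.0, 1.0]]
doc_a = [[0.9, 0.1], [0.2, 0.8]]   # covers both query concepts
doc_b = [[1.0, 0.0], [1.0, 0.0]]   # covers only the first concept
print(chamfer_similarity(query, doc_a), chamfer_similarity(query, doc_b))
```

Document A scores higher because every query component finds a good match in it, even though document B matches the first component perfectly. Note that the measure is asymmetric: swapping query and document generally changes the score.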

Experimental Protocol for Intent Mapping in Pharmaceutical Research

Data Collection and Query Analysis

The following protocol provides a methodological framework for implementing semantic intent mapping in a pharmaceutical research context, with particular utility for benchmarking studies.

Step 1: Define Core Research Themes

- Identify 3-5 primary therapeutic or methodological focus areas (e.g., "GLP-1 receptor agonists," "CAR-T solid tumors," "AI in clinical trial recruitment").

- For each theme, compile a list of foundational terms and concepts using authoritative sources such as MeSH (Medical Subject Headings) and established ontologies.

Step 2: Gather Audience and Search Data

- Analyze internal search logs from scientific portals and document repositories to understand existing query patterns.

- Extract frequently asked questions from internal team communications, scientific review meetings, and regulatory interactions.

- Utilize competitive intelligence sources to identify knowledge gaps and emerging areas of scientific interest [40].

Step 3: Deploy AI-Powered Keyword Expansion

- Implement AI tools capable of analyzing large datasets of user queries, social media comments, and product reviews to identify patterns and hidden intents that humans might miss [43].

- Input seed keywords into semantic analysis platforms to generate long-tail variations and related questions based on conceptual proximity rather than mere lexical similarity.

Step 4: Classify by Search Intent

- Categorize expanded terms according to the four primary intent types [42] [43]:
  - Informational: Seeking knowledge (e.g., "mechanism of action of bispecific antibodies")
  - Commercial Investigation: Comparing options (e.g., "PD-1 vs. PD-L1 inhibitor efficacy")
  - Transactional: Ready to access resources (e.g., "download clinical trial protocol template")
  - Navigational: Seeking specific destinations (e.g., "Nature Cancer journal site")
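Intent classification is usually done with trained models or LLMs, but a rule-based baseline shows the mechanics of Step 4. The sketch below assigns one of the four intent types using hypothetical cue words checked in a fixed priority order (transactional, then commercial investigation, then navigational) and falls back to informational. The cue lists are illustrative assumptions tuned only to the examples in this section, not a validated taxonomy.

```python
import re

# Hypothetical cue patterns, checked in priority order; anything unmatched
# falls back to "informational". Word boundaries avoid matches such as
# "order" inside "disorder".
INTENT_RULES = [
    ("transactional", re.compile(r"\b(download|request|template|order|access)\b")),
    ("commercial investigation", re.compile(r"\b(vs|versus|compar\w*)\b")),
    ("navigational", re.compile(r"\b(journal|site|portal|homepage)\b")),
]


def classify_intent(query: str) -> str:
    """Return the first intent whose cue pattern matches the lowercased query."""
    text = query.lower()
    for intent, pattern in INTENT_RULES:
        if pattern.search(text):
            return intent
    return "informational"


examples = [
    "mechanism of action of bispecific antibodies",
    "PD-1 vs. PD-L1 inhibitor efficacy",
    "download clinical trial protocol template",
    "Nature Cancer journal site",
]
for q in examples:
    print(f"{classify_intent(q):<26} {q}")
```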

Step 5: Map to Content and Benchmarking Metrics

- Align identified intents with existing internal knowledge assets and external highly-cited publications.

- Establish quantitative metrics for measuring semantic coverage, including:
  - Keyword relevance scores
  - Intent fulfillment ratios
  - Citation density for thematic areas
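These metrics are not defined precisely in the protocol. As one possible operationalization of an intent fulfillment ratio, the sketch below computes, for each intent type, the share of mapped queries that have at least one matching content asset. The input structures, the "at least one asset" criterion, and the asset ID are assumptions for illustration.

```python
from collections import defaultdict


def intent_fulfillment_ratios(mapped_queries, content_index):
    """Per intent type, the share of mapped queries with at least one asset.

    mapped_queries: iterable of (query, intent) pairs.
    content_index:  dict mapping a query to a list of asset IDs (may be empty).
    """
    totals, fulfilled = defaultdict(int), defaultdict(int)
    for query, intent in mapped_queries:
        totals[intent] += 1
        if content_index.get(query):
            fulfilled[intent] += 1
    return {intent: fulfilled[intent] / totals[intent] for intent in totals}


mapped = [
    ("mechanism of action of bispecific antibodies", "informational"),
    ("target validation techniques for kras", "informational"),
    ("download clinical trial protocol template", "transactional"),
]
index = {
    "mechanism of action of bispecific antibodies": ["review-017"],  # invented asset ID
    "download clinical trial protocol template": [],
}
print(intent_fulfillment_ratios(mapped, index))
```

A ratio well below 1.0 for an intent type points to a content gap: researchers are asking questions of that kind that no internal asset currently answers.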

Table 1: Search Intent Classification for Pharmaceutical Research Queries

| Query Example | Intent Type | Therapeutic Context | Target Content Format |
|---|---|---|---|
| "Phase III trial design for Alzheimer's monotherapy" | Informational | Neurology | Clinical trial guidelines, methodology papers |
| "Comparative efficacy of IL-23 vs. IL-17 inhibitors for psoriasis" | Commercial Investigation | Immunology/Dermatology | Review articles, head-to-head trial data |
| "Request safety dataset for NDA submission" | Transactional | Regulatory Science | Template documents, database access |
| "New England Journal of Medicine coronavirus articles" | Navigational | Infectious Disease | Journal portal, specific article links |

Validation Against Highly-Cited Literature

To benchmark the effectiveness of the semantic mapping exercise:

- Select 10-15 seminal papers within the target research domain, identified through citation metrics and expert consultation.

- Analyze the linguistic patterns, terminology, and conceptual frameworks employed in these highly-cited works.

- Measure the alignment between the terminology identified through semantic intent mapping and the language of influential research.

- Calculate a Semantic Alignment Score (SAS) to quantify the degree of conceptual overlap, giving greater weight to long-tail variations that appear in both the mapped intent and the benchmark literature.
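The Semantic Alignment Score is described only qualitatively. One hypothetical formalization weights each mapped term t by its word count w(t), so multi-word long-tail variations count more, and computes SAS = (sum of w(t) over mapped terms found in the benchmark papers) / (sum of w(t) over all mapped terms), giving a value between 0 and 1. The sketch below implements that formula with case-insensitive substring matching, a crude stand-in for the semantic matching the protocol envisions; the mapped terms and benchmark titles are invented.

```python
def term_weight(term: str) -> int:
    """Assumed weighting: one point per word, so long-tail phrases count more."""
    return len(term.split())


def semantic_alignment_score(mapped_terms, benchmark_texts) -> float:
    """Weighted share of mapped terms that also occur in the benchmark papers.

    Matching is case-insensitive substring search, a crude stand-in for
    the semantic matching described in the protocol.
    """
    texts = [t.lower() for t in benchmark_texts]
    total = sum(term_weight(t) for t in mapped_terms)
    if total == 0:
        return 0.0
    matched = sum(term_weight(t) for t in mapped_terms
                  if any(t.lower() in text for text in texts))
    return matched / total


mapped = ["GLP-1", "tirzepatide vs. semaglutide weight loss", "dose titration schedule"]
benchmark = [  # invented titles standing in for highly-cited papers
    "Tirzepatide vs. semaglutide weight loss in adults with obesity",
    "Cardiovascular outcomes of GLP-1 receptor agonists",
]
print(round(semantic_alignment_score(mapped, benchmark), 3))
```

In this example the five-word comparative phrase contributes most of the matched weight, reflecting the protocol's emphasis on long-tail variations shared with the benchmark literature.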

Research Applications and Impact Analysis

Enhancing Competitive Intelligence

When applied systematically, semantic intent mapping provides significant advantages across multiple pharmaceutical R&D functions:

Strategic R&D Planning

- Reveals emerging therapeutic concepts and methodological approaches before they reach peak visibility [41].

- Identifies knowledge gaps in the competitive landscape where research investment may yield differentiated insights.

- Enables more accurate forecasting of paradigm shifts in treatment approaches through analysis of query trend velocities.
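"Query trend velocity" is not defined in the text. One simple, assumed definition is the ordinary least-squares slope of query volume over consecutive periods (for example, monthly counts), which can then be used to rank themes from fastest-rising to fastest-falling. The sketch below implements that definition; the theme names and volumes are invented.

```python
def trend_velocity(volumes) -> float:
    """Ordinary least-squares slope of volume against period index."""
    n = len(volumes)
    if n < 2:
        raise ValueError("need at least two periods")
    mean_x = (n - 1) / 2
    mean_y = sum(volumes) / n
    cov = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(volumes))
    var = sum((i - mean_x) ** 2 for i in range(n))
    return cov / var


def rank_by_velocity(series: dict) -> list:
    """Theme names ordered from fastest-rising to fastest-falling."""
    return sorted(series, key=lambda theme: trend_velocity(series[theme]), reverse=True)


monthly = {  # invented monthly query counts
    "GLP-1 obesity": [10, 20, 30, 40],
    "statin adherence": [50, 48, 47, 45],
}
print({theme: trend_velocity(v) for theme, v in monthly.items()})
print(rank_by_velocity(monthly))
```

A least-squares slope is less sensitive to a single noisy month than the difference between the first and last periods, at the cost of reacting more slowly to a sudden change in direction.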

Clinical Development Optimization

- Uncovers practical implementation questions that may affect trial design and execution.

- Identifies terminology variations used by different clinical communities, improving protocol design and patient recruitment materials.

- Surfaces methodological questions related to endpoint selection and biomarker strategy.

Business Development and Licensing

- Supports analysis of current and, most importantly, future competitive landscapes [40].

- Informs assessment of an asset's differentiation and value proposition relative to marketed products and assets in development [40].

Table 2: Semantic Mapping Impact on Pharmaceutical R&D Functions

| R&D Function | Primary Intent Focus | Key Long-Tail Variations | Impact Metric |
|---|---|---|---|
| Discovery Research | Informational | "Target validation techniques for [pathway]", "Resistance mechanisms to [drug class]" | Increased patentability of discoveries |
| Clinical Development | Commercial Investigation | "[Drug] dosing frequency vs. standard of care", "Biomarker stratification for [therapy]" | Improved clinical trial enrollment rates |
| Medical Affairs | Informational | "Real-world evidence for [drug] in [subpopulation]", "Management of [adverse event]" | Enhanced scientific communication accuracy |

Case Study: Mapping the GLP-1 Agonist Landscape

The metabolic disease area, particularly the glucagon-like peptide-1 (GLP-1) receptor agonist class for type 2 diabetes and obesity, illustrates the value of semantic intent mapping. A traditional keyword approach might focus on terms like "GLP-1 agonist efficacy." Semantic mapping, however, reveals long-tail variations that reflect deeper research intents:

- Mechanistic Insights: "GIP and GLP-1 receptor co-agonism molecular mechanism"

- Clinical Applications: "Cardiovascular outcomes with GLP-1 in non-diabetic patients"

- Comparative Effectiveness: "Tirzepatide vs. semaglutide weight loss maintenance"

- Implementation Questions: "Dose titration schedule for GLP-1 therapy initiation"

This semantically expanded view provides a more comprehensive understanding of the research landscape, revealing both current scientific focus areas and emerging questions that may represent future research directions [44].

Implementation Toolkit for Research Organizations

Technology Infrastructure

Successful implementation of semantic intent mapping requires a structured approach to technology selection and deployment:

AI-Powered Enterprise Search Platforms

- Deploy solutions that leverage large language models and semantic understanding to interpret user intent and deliver personalized, relevant results [39].

- Prioritize platforms with specialized capabilities for processing scientific terminology and conceptual relationships.

- Ensure integration with existing scientific repositories and data systems to create a unified knowledge discovery environment.

Semantic Analysis Tools