Addressing Knowledge Gaps: Strategies for Effective EDC Awareness and Risk Assessment in Clinical Research

Abstract

This article provides a comprehensive framework for researchers and drug development professionals to address the critical challenge of low awareness in Endocrine-Disrupting Chemical (EDC) knowledge assessment. It explores the foundational evidence of knowledge gaps among both public and professional populations, outlines robust methodological approaches for designing and implementing EDC assessment tools, and presents optimization strategies to enhance data quality and participant engagement. Furthermore, it examines validation techniques and comparative analyses of knowledge across demographics, synthesizing key takeaways to improve risk communication, refine educational interventions, and ultimately strengthen the scientific and regulatory approach to EDC safety in biomedical and clinical research.

Understanding the Landscape: Documenting EDC Knowledge Gaps and Their Impact

Endocrine-disrupting chemicals (EDCs) represent a significant public health concern, with scientific studies linking them to diverse adverse outcomes including reproductive disorders, metabolic diseases, neurodevelopmental issues, and hormone-related cancers [1] [2]. Despite the established scientific consensus on their harmful effects, a critical gap persists between the evidence of harm and broader societal awareness. This technical support center is framed within a thesis on the persistently low awareness documented in EDC knowledge assessment research, and provides methodological support for scientists investigating this disconnect. The following sections offer standardized protocols, analytical frameworks, and troubleshooting guides to strengthen experimental designs in this emerging field.

Key Experimental Protocols in EDC Awareness Research

Researchers in this field typically employ cross-sectional study designs using validated scales to quantitatively measure awareness levels. The protocols below detail the primary methodological approaches.

Protocol for Assessing Awareness in Healthcare Professional Cohorts

This protocol is adapted from a 2025 study investigating awareness among Turkish medical students and physicians [1].

- Core Objective: To quantify and compare EDC awareness levels between medical students and physicians, and to examine correlations with general health attitudes.

- Study Design: Cross-sectional, questionnaire-based survey.

- Participant Recruitment:

- Target Cohorts: Medical students and practicing physicians.

- Channels: Institutional email directories, professional contact networks, hospital departments, and student networks.

- Exclusion Criteria: Incomplete survey responses, inconsistent demographic data.

- Sample Size Calculation: Based on an assumed population proportion of p=0.50, a 95% confidence interval, and a 6% margin of error, a minimum of 267 participants is required. Accounting for a 10% attrition rate, the target should be at least 294 individuals.

- Data Collection Instruments:

- Endocrine Disruptor Awareness Scale (EDCA): A 24-item validated instrument using a 1-5 Likert-type scale. It measures three subcategories: General Awareness, Impact, and Exposure and Protection. Scores are interpreted as: 1-1.8 (Very Low), 1.81-2.6 (Low), 2.61-3.4 (Moderate), 3.41-4.2 (High), 4.21-5 (Very High) [1].

- Healthy Life Awareness Scale (HLA): A 15-item scale measuring general health preferences across four subdomains: Change, Socialization, Responsibility, and Nutrition [1].

- Data Analysis Plan:

- Use descriptive statistics (mean ± SD, median [IQR], frequencies, and percentages).

- Apply non-parametric tests (Mann-Whitney U, Kruskal-Wallis) for group comparisons, because the data are often not normally distributed.

- Use Spearman’s rank correlation to assess relationships between variables like age, HLA scores, and EDC awareness.

- Employ linear regression with a backward stepwise method to build a predictive model of EDC awareness.
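The sample-size figures above follow the standard formula for estimating a single proportion, n = z^2 · p(1 - p) / e^2. A minimal Python sketch is shown below. It assumes the 10% attrition allowance is applied multiplicatively (n × 1.10), which reproduces the 294 target; the alternative convention n / (1 - 0.10) gives 297. Function names are illustrative.

```python
import math
from statistics import NormalDist


def proportion_sample_size(p: float = 0.50, confidence: float = 0.95, margin: float = 0.06) -> int:
    """Minimum n to estimate a proportion p within +/- margin (Cochran's formula)."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # two-sided critical value, ~1.96
    return math.ceil(z ** 2 * p * (1 - p) / margin ** 2)


def inflate_for_attrition(n: int, attrition: float, multiplicative: bool = True) -> int:
    """Inflate n for expected attrition.

    multiplicative=True  -> n * (1 + attrition)  (the convention behind the 294 target)
    multiplicative=False -> n / (1 - attrition)  (a slightly more conservative convention)
    """
    adjusted = n * (1 + attrition) if multiplicative else n / (1 - attrition)
    return math.ceil(adjusted)


n_min = proportion_sample_size()
target = inflate_for_attrition(n_min, 0.10)
conservative = inflate_for_attrition(n_min, 0.10, multiplicative=False)
print(n_min, target, conservative)
```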

Protocol for Assessing Awareness and Risk Perception in Public Cohorts

This protocol synthesizes methods from studies involving the general public and vulnerable groups like pregnant women [3] [2].

- Core Objective: To qualitatively and quantitatively explore the public's knowledge, awareness, and risk perceptions of EDCs.

- Study Design: Mixed-methods, combining focus groups and cross-sectional surveys.

- Participant Recruitment:

- Target Cohorts: General public, with potential oversampling of vulnerable groups (e.g., pregnant women, new mothers).

- Inclusion Criteria: Aged 18-65, no formal education in food/environmental chemicals, and residency in the study area.

- Sample Size for Surveys: For a binomial test with alpha=0.05, power=95%, proportion=0.5, and effect size=0.1, approximately 327 completed surveys are needed. Inflate by 15% for non-response [2].

- Data Collection Methods:

- Focus Groups: Recruit 5-10 participants per group, segregated by gender when discussing topics such as fertility. Continue conducting groups until data saturation is reached. Transcribe discussions verbatim for thematic analysis using software such as NVivo [3].

- Structured Questionnaires: Assess sociodemographics, habits, knowledge of EDCs (e.g., recognition of terms like BPA, phthalates), information sources, and readiness to change behavior. Risk scores can be calculated by assigning points to behavioral frequencies and awareness levels [2].

- Data Analysis Plan:

- Qualitative: Thematic analysis to identify emergent themes (e.g., perceived control, severity, similarity heuristics).

- Quantitative: Chi-square tests to analyze relationships between risky behaviors and awareness. Residual analysis with Bonferroni correction for multi-group comparisons.
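The sample-size and chi-square steps of this protocol can be sketched in plain Python. The first function uses the normal approximation for a one-sample test of a proportion and assumes that "effect size = 0.1" means a shift from p0 = 0.5 to p1 = 0.6. Under that assumption it gives 319, slightly below the reported 327, which was presumably obtained with an exact binomial calculation (e.g., in G*Power). The second function computes the Pearson chi-square statistic and adjusted standardized residuals, and flags cells whose residual exceeds a Bonferroni-corrected critical z (alpha divided by the number of cells). Function names and the example counts are illustrative; `scipy.stats.chi2_contingency` can supply the chi-square p-value.

```python
import math
from statistics import NormalDist


def binomial_test_sample_size(p0=0.5, effect=0.1, alpha=0.05, power=0.95):
    """Normal-approximation n for a two-sided one-sample test of H0: p = p0
    against p1 = p0 + effect (assumed interpretation of 'effect size')."""
    p1 = p0 + effect
    z_a = NormalDist().inv_cdf(1 - alpha / 2)
    z_b = NormalDist().inv_cdf(power)
    numerator = z_a * math.sqrt(p0 * (1 - p0)) + z_b * math.sqrt(p1 * (1 - p1))
    return math.ceil((numerator / effect) ** 2)


def chi_square_with_residuals(table, alpha=0.05):
    """Pearson chi-square plus adjusted standardized residuals for an r x c table.

    Returns (chi2, df, residuals, flagged); flagged lists (row, col) cells whose
    |residual| exceeds the Bonferroni-corrected two-sided critical z."""
    rows = [sum(r) for r in table]
    cols = [sum(c) for c in zip(*table)]
    n = sum(rows)
    chi2 = 0.0
    residuals = []
    for i, row in enumerate(table):
        res_row = []
        for j, observed in enumerate(row):
            expected = rows[i] * cols[j] / n
            chi2 += (observed - expected) ** 2 / expected
            # Adjusted residual: (O - E) / sqrt(E * (1 - row share) * (1 - column share))
            se = math.sqrt(expected * (1 - rows[i] / n) * (1 - cols[j] / n))
            res_row.append((observed - expected) / se)
        residuals.append(res_row)
    df = (len(rows) - 1) * (len(cols) - 1)
    n_cells = len(rows) * len(cols)
    z_crit = NormalDist().inv_cdf(1 - (alpha / n_cells) / 2)  # Bonferroni over all cells
    flagged = [(i, j) for i, r in enumerate(residuals) for j, v in enumerate(r) if abs(v) > z_crit]
    return chi2, df, residuals, flagged


print("completed surveys (normal approximation):", binomial_test_sample_size())
print("reported 327 inflated by 15% for non-response:", math.ceil(327 * 1.15))

# Hypothetical counts: rows = aware / not aware, columns = low / medium / high-risk behavior
chi2, df, residuals, flagged = chi_square_with_residuals([[40, 30, 10], [60, 70, 90]])
print(f"chi2 = {chi2:.2f}, df = {df}, cells beyond Bonferroni threshold: {flagged}")
```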

Troubleshooting Guides & FAQs for EDC Awareness Research

Low Participant Awareness Leading to Floor Effects

- Problem: A large proportion of participants score at the bottom of the scale ("Very Low" awareness), creating a floor effect that restricts score variance and limits statistical analysis.

- Solution:

- Pilot Testing: Conduct a small-scale pilot study. If floor effects are detected, incorporate a brief, neutral educational primer within the survey. For example, after an initial knowledge question, provide a standard definition: "If you haven't heard of them, endocrine disruptors are chemical substances found in many products. They mimic hormones in the body and may cause health problems" [2]. This allows for assessment of baseline knowledge and post-information sensitivity.

- Refine Instrument: Use simpler language and concrete examples (e.g., "chemicals in some plastics and cosmetics") instead of technical terms like "endocrine disruptors" in initial screening questions.
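Pilot data can be screened for a floor effect by computing the share of respondents whose mean item score falls in the EDCA "Very Low" band (at or below 1.80). The sketch below flags a floor effect when that share exceeds 15%, a commonly cited rule of thumb for floor and ceiling effects that is assumed here rather than taken from the cited studies. The function name, threshold, and pilot scores are hypothetical.

```python
def floor_effect(mean_scores, cutoff=1.80, threshold=0.15):
    """Return (share_at_floor, flagged) for per-respondent mean scores on a 1-5 scale.

    share_at_floor: proportion of respondents at or below `cutoff`
    (1.80 = upper bound of the EDCA 'Very Low' band).
    flagged: True when that proportion exceeds `threshold` (assumed 15% rule of thumb)."""
    if not mean_scores:
        raise ValueError("no scores supplied")
    at_floor = sum(1 for s in mean_scores if s <= cutoff)
    share = at_floor / len(mean_scores)
    return share, share > threshold


# Hypothetical pilot data: mean EDCA item score for each of ten respondents
pilot = [1.2, 1.5, 1.8, 2.4, 3.1, 1.0, 2.9, 1.6, 3.5, 2.2]
share, flagged = floor_effect(pilot)
print(f"{share:.0%} of pilot respondents in the Very Low band; floor effect flagged: {flagged}")
```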

Recruitment Challenges for General Public Studies

- Problem: Difficulty recruiting a diverse and representative sample of the public, leading to potential selection bias.

- Solution:

- Multi-Channel Strategy: Use convenience sampling through multiple channels: university populations, community centers, public outreach events, and online platforms [3].

- Incentives: Offer modest incentives (e.g., food vouchers, gift cards) to maximize participation, as was done successfully in prior focus groups [3].

- Clear Communication: In recruitment materials, describe the study as about "attitudes towards everyday chemicals" to avoid pre-selecting for only those already concerned about EDCs.

Differentiating Between Awareness and Risk Perception

- Problem: Conflating a participant's awareness of EDCs with their perception of the associated risk.

- Solution:

- Separate Measurement: Design your instrument to measure these constructs independently. The EDCA Scale [1] measures awareness/knowledge. Risk perception should be measured using items from the literature that assess:

- Perceived Severity: How serious are the health effects of EDCs?

- Perceived Susceptibility: How likely are you to be affected by EDCs?

- Experiential Processing: Reliance on feelings or intuition about the risk [4].

- Analysis: Use correlation analysis to understand the relationship between awareness scores and risk perception scores. They are often related but distinct.

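Spearman's rank correlation, also named in the healthcare-professional protocol above, can be computed by ranking each variable, giving tied values their average rank, and taking the Pearson correlation of the ranks. A dependency-free sketch follows; in practice `scipy.stats.spearmanr` also returns a p-value. The example scores are hypothetical.

```python
from statistics import mean


def average_ranks(values):
    """Rank values 1..n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across a run of tied values
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rho as the Pearson correlation of average ranks (handles ties)."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    if sx == 0 or sy == 0:
        raise ValueError("a constant variable has no rank correlation")
    return cov / (sx * sy)


# Hypothetical awareness (EDCA mean) and risk-perception scores for six respondents
awareness = [2.1, 3.4, 2.9, 4.0, 1.8, 3.4]
risk_perception = [2.5, 3.0, 3.2, 4.1, 2.0, 2.8]
print(round(spearman_rho(awareness, risk_perception), 3))
```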

Ensuring Validated and Comparable Metrics

- Problem: Using ad-hoc questions makes it impossible to compare results across studies or with established benchmarks.

- Solution:

- Use Validated Scales: Whenever possible, adopt and properly translate validated scales like the EDCA Scale [1] for healthcare professionals.

- Standardize Questions: For public surveys, use questions adapted from previous national or international surveys to allow for cross-cultural comparison [2].

- Report Consistently: When publishing, report both the total score and subcategory scores (General Awareness, Impact, Exposure/Protection) using the standardized interpretation ranges (Very Low to Very High) [1].
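Reporting total and subcategory scores is straightforward once each item is assigned to its subcategory. The sketch below averages 1-5 item responses per subcategory and overall, then maps each mean to the EDCA interpretation bands. The item-to-subcategory mapping is a hypothetical placeholder; take the real assignment (and whether any items are reverse-scored) from the validated scale's documentation.

```python
from statistics import mean

# Upper bounds of the EDCA interpretation bands on the 1-5 scale; above 4.20 is "Very High"
EDCA_CUTOFFS = [(1.80, "Very Low"), (2.60, "Low"), (3.40, "Moderate"), (4.20, "High")]

# HYPOTHETICAL item-to-subcategory mapping (0-based item indices); replace with the scale's own key
SUBSCALES = {
    "General Awareness": range(0, 8),
    "Impact": range(8, 16),
    "Exposure and Protection": range(16, 24),
}


def edca_band(score):
    """Map a mean score (1-5) to its band. Values between published bounds
    (e.g., 1.805) go to the band whose upper cut-off they do not exceed."""
    if not 1.0 <= score <= 5.0:
        raise ValueError(f"score {score} is outside the 1-5 range")
    for upper, label in EDCA_CUTOFFS:
        if score <= upper:
            return label
    return "Very High"


def score_respondent(items):
    """Return {name: (mean, band)} for each subcategory and for the total scale."""
    if len(items) != 24:
        raise ValueError("expected 24 item responses")
    means = {name: mean(items[i] for i in idx) for name, idx in SUBSCALES.items()}
    means["Total"] = mean(items)
    return {name: (round(m, 2), edca_band(m)) for name, m in means.items()}


responses = [2] * 8 + [4] * 8 + [3] * 8  # one hypothetical respondent
for name, (score, band) in score_respondent(responses).items():
    print(f"{name}: {score} ({band})")
```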

Quantitative Data Synthesis: Awareness Levels Across Cohorts

The table below synthesizes key quantitative findings from recent studies, highlighting the varying levels of awareness across different population groups.

Table 1: EDC Awareness Metrics Across Different Study Populations

| Study Cohort | Sample Size (n) | Awareness Metric | Key Finding | Data Source |
|---|---|---|---|---|
| Physicians | 236 | EDCA Total Score (Mean ± SD) | 3.63 ± 0.6 | [1] |
| Medical Students | 381 | EDCA Total Score (Mean ± SD) | 3.4 ± 0.54 | [1] |
| Pregnant Women & New Mothers | 380 (Planned) | Unfamiliar with EDCs | 59.2% | [2] |
| General Public (Malaysia) | Survey-based | Perceived EDC Risk | Majority perceived activities as "low risk" (19.3% higher than overall risk perception) | [4] |
| Endocrinologists | Subgroup | EDCA Total Score (Mean ± SD) | 3.96 ± 0.56 (vs. 3.59 ± 0.58 for other physicians) | [1] |

Table 2: Correlates of EDC Awareness Identified in Multivariate Analyses

| Factor | Relationship with EDC Awareness | Study Context |
|---|---|---|
| Professional Status | Physicians had significantly higher awareness than medical students (p < 0.001) [1]. | Healthcare Professionals [1] |
| Specialty | Endocrinologists' scores were significantly higher than other specialists (p = 0.003) [1]. | Healthcare Professionals [1] |
| Gender (among Physicians) | Female physicians' awareness was significantly higher than male counterparts (p = 0.027) [1]. | Healthcare Professionals [1] |
| Age | A significant positive correlation was found between age and EDC awareness scores [1]. | Healthcare Professionals [1] |
| Healthy Life Awareness | A significant positive correlation was found with general healthy life awareness (HLA) scores [1]. | Healthcare Professionals [1] |
| Experiential Processing | Public risk perception was heavily influenced by cognitive and affective "experiential" factors [4]. | General Public [4] |

The Scientist's Toolkit: Essential Reagents & Materials

This table outlines key non-laboratory "reagents" – the standardized instruments and tools – required for conducting robust EDC awareness research.

Table 3: Essential Research Instruments for EDC Awareness Assessment

| Item Name | Type | Primary Function | Example Application |
|---|---|---|---|
| Endocrine Disruptor Awareness Scale (EDCA) | Validated Psychometric Scale | Quantifies knowledge and awareness levels across three sub-domains: General Awareness, Impact, and Exposure/Protection. | Core dependent variable in studies with healthcare professionals or educated cohorts [1]. |
| Healthy Life Awareness Scale (HLA) | Validated Psychometric Scale | Assesses general attitudes towards preventive health and healthy living, used to correlate with EDC-specific awareness. | Measuring how general health consciousness relates to specific EDC knowledge [1]. |
| Mutualités Libres/AIM Survey Instrument | Structured Questionnaire | Assesses habits, knowledge, information sources, and readiness for change related to EDCs in the general public. | Adapted for use in studies involving vulnerable groups like pregnant women [2]. |
| Hospital Anxiety and Depression Scale (HADS) | Validated Psychometric Scale | Screens for anxiety and depressive symptoms in community and hospital settings. | Used in correlational studies to investigate links between EDC exposure biomarkers and mental health [5]. |
| Focus Group Protocol | Qualitative Research Tool | A semi-structured guide for facilitating group discussions to explore beliefs, attitudes, and perceived risks in depth. | Eliciting rich, contextual data on public perceptions and the factors influencing risk judgment [3]. |
| Urinary Biomarker Panels (e.g., MBzP, MP) | Biological Assay | Provides objective measures of exposure to specific EDCs (e.g., phthalates, parabens) for correlation with survey data. | Objectively linking internal dose of EDCs to health outcomes (e.g., depressive symptoms) or awareness levels [5]. |

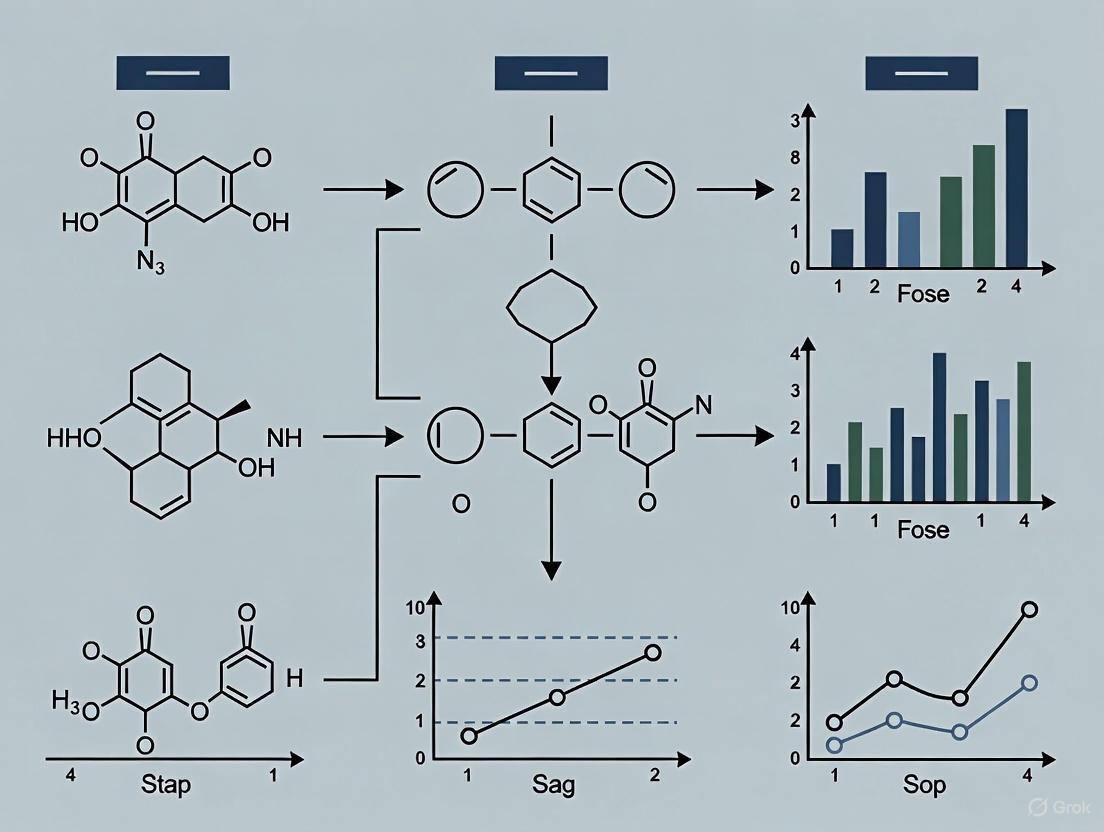

Visualizing the Public Risk Perception Paradigm

Public perception of EDC risk is not solely a function of knowledge. The following diagram models the key psychological factors influencing risk judgment, as identified in qualitative and quantitative studies [3] [4].

FAQs: Assessing Knowledge on Endocrine-Disrupting Chemicals (EDCs)

What constitutes a "low knowledge score" in EDC research? A "low knowledge score" is typically quantified using validated psychometric instruments such as the Endocrine Disruptor Awareness Scale (EDCA). This scale uses a 1-5 Likert system, and mean scores are interpreted as follows: 1-1.80 (Very Low), 1.81-2.60 (Low), 2.61-3.40 (Moderate), 3.41-4.20 (High), 4.21-5.00 (Very High). For context, a 2024 study reported a median General Awareness score of 2.12 among medical students, which falls in the "Low" band and is below the physicians' median of 2.87 [1].

How prevalent is low awareness of EDCs among healthcare professionals? Research indicates a significant awareness gap. A 2024 cross-sectional study with 617 participants found medical students had a median general EDC awareness score of 2.12 (IQR: 1.5), which falls into the "Low" awareness category on the EDCA scale. Physicians performed better with a median score of 2.87 (IQR: 1.63), but this still resides in the "Moderate" range, indicating substantial room for improvement [1].

Why is EDC awareness crucial for drug development and clinical research professionals? Endocrine-disrupting chemicals interfere with hormone action and are associated with chronic diseases including neurodevelopmental, reproductive, and metabolic disorders, as well as some cancers [6]. Understanding EDCs is critical for designing clinical trials that account for these environmental confounders, assessing patient exposure risks, and developing preventive health strategies. The association between EDC exposure and diseases like diabetes, obesity, and decreased fertility is particularly relevant for drug development pipelines [1].

What methodologies are used to assess EDC knowledge gaps? Standardized assessment employs the Endocrine Disruptor Awareness Scale (EDCA), a 24-item validated instrument with a 5-point Likert-type response system. It measures three subcategories: General Awareness, Impact, and Exposure & Protection. Studies typically employ cross-sectional designs with statistical analysis using non-parametric tests (Mann-Whitney U, Kruskal-Wallis) and linear regression to investigate variable relationships [1].

Quantitative Data on EDC Knowledge Scores

Table 1: EDC Awareness Scores Among Medical Populations (2024 Data)

| Population Group | Sample Size | General Awareness Score (Median [IQR]) | Total EDCA Score (Mean ± SD) | Awareness Classification |
|---|---|---|---|---|
| Medical Students | 381 | 2.12 [1.5] | 3.40 ± 0.54 | Low (General Awareness); Moderate (Total) |
| Physicians | 236 | 2.87 [1.63] | 3.63 ± 0.6 | Moderate (General Awareness); High (Total) |
| Endocrinologists | Subset of Physicians | Significantly higher than other specialties | 3.96 ± 0.56 | High (Total) |

Data sourced from a cross-sectional study of Turkish medical students and physicians using the validated Endocrine Disruptor Awareness Scale (EDCA) [1].

Table 2: Factors Associated with EDC Awareness

| Factor | Association with EDC Awareness | Statistical Significance |
|---|---|---|
| Professional Status (Physician vs. Student) | Significantly higher awareness in physicians | p < 0.001 |
| Specialty (Endocrinology) | Significantly higher awareness in endocrinologists | p = 0.003 |
| Gender (Female Physicians) | Significantly higher awareness in female physicians | p = 0.027 |
| Healthy Life Awareness (HLA) Score | Positive correlation with EDC awareness | Statistically Significant |
| Age | Positive correlation with EDC awareness | Statistically Significant |

Analysis of factors influencing EDC knowledge levels from a 2024 study [1].

Experimental Protocols for Knowledge Assessment

Protocol 1: Cross-Sectional Survey Using the EDCA Scale

Objective: To quantify knowledge scores and prevalence of low awareness regarding Endocrine-Disrupting Chemicals in a target professional population.

Materials:

- Validated Endocrine Disruptor Awareness Scale (EDCA) questionnaire

- Healthy Life Awareness Scale (HLA) questionnaire

- Digital survey platform (e.g., via institutional email)

- Statistical analysis software (e.g., IBM SPSS v25.0)

Methodology:

- Participant Recruitment: Recruit a representative sample through institutional channels. Ensure informed consent is obtained digitally.

- Data Collection: Administer the combined EDCA and HLA scales electronically. The EDCA measures three subdomains: General Awareness, Impact, and Exposure & Protection.

- Data Cleaning: Exclude incomplete responses and perform listwise deletion for missing values. Validate demographic data.

- Statistical Analysis:

- Use descriptive statistics (mean, median, standard deviation, IQR) for continuous variables.

- Assess normality of distribution to decide between parametric (t-test, ANOVA) or non-parametric tests (Mann-Whitney U, Kruskal-Wallis).

- Perform Spearman's rank correlation to examine relationships between variables like age, HLA score, and EDCA score.

- Employ linear regression with a backward stepwise method to build a model of factors predicting EDC awareness.

- Interpretation: Classify scores based on EDCA guidelines (1-1.8: Very Low; 1.81-2.6: Low; 2.61-3.4: Moderate; 3.41-4.2: High; 4.21-5: Very High). Report prevalence of low scores (e.g., percentages in "Low" and "Very Low" categories) [1].
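For the non-parametric branch of the Statistical Analysis step, a two-group comparison such as medical students versus physicians can be sketched as a Mann-Whitney U test using the large-sample normal approximation with a tie correction (no continuity correction). This is a simplified illustration; results intended for publication should come from SPSS, R, or `scipy.stats.mannwhitneyu`. The example scores are hypothetical.

```python
import math
from statistics import NormalDist


def mann_whitney_u(a, b):
    """Two-sided Mann-Whitney U test using the normal approximation with tie correction.

    Returns (U for group a, z, p). No continuity correction is applied."""
    n1, n2 = len(a), len(b)
    if n1 == 0 or n2 == 0:
        raise ValueError("both groups need at least one observation")
    # Pool observations, remembering group membership (0 = a, 1 = b)
    pooled = sorted([(v, 0) for v in a] + [(v, 1) for v in b])
    ranks = [0.0] * len(pooled)
    tie_term = 0  # sum of (t^3 - t) over runs of tied values
    i = 0
    while i < len(pooled):
        j = i
        while j + 1 < len(pooled) and pooled[j + 1][0] == pooled[i][0]:
            j += 1
        t = j - i + 1
        tie_term += t ** 3 - t
        for k in range(i, j + 1):
            ranks[k] = (i + j) / 2 + 1  # average 1-based rank of the tied run
        i = j + 1
    r1 = sum(r for r, (_, group) in zip(ranks, pooled) if group == 0)
    u1 = r1 - n1 * (n1 + 1) / 2
    n = n1 + n2
    mu = n1 * n2 / 2
    variance = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    if variance <= 0:
        raise ValueError("all observations are tied; the test is undefined")
    z = (u1 - mu) / math.sqrt(variance)
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return u1, z, p


# Hypothetical EDCA total scores
students = [3.1, 3.4, 2.9, 3.6, 3.2, 3.0, 3.5]
physicians = [3.7, 3.5, 4.0, 3.3, 3.9, 3.8]
u, z, p = mann_whitney_u(students, physicians)
print(f"U = {u:.1f}, z = {z:.2f}, p = {p:.3f}")
```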

Protocol 2: Bibliometric Analysis of Research Trends

Objective: To identify and visualize global research trends, collaborations, and knowledge gaps in the field of EDCs and health.

Materials:

- Bibliographic database (Web of Science Core Collection)

- Analysis and visualization tools (VOSviewer, CiteSpace, R package 'bibliometrix')

Methodology:

- Literature Search: Execute a structured search query using terms such as:

('endocrine disrupting chemical*' OR 'endocrine disruptor*') AND ('child*' OR 'pediatric' OR 'adolescen*') AND ('health' OR 'exposure' OR 'neurodevelopment')

- Inclusion/Exclusion Screening: Apply predefined criteria (e.g., date range, article type, language) to filter records.

- Data Extraction and Analysis:

- Use software to analyze publication outputs, influential countries/institutions, authorship, and journal distributions.

- Perform keyword co-occurrence analysis to identify research hotspots and emerging topics.

- Generate visual network maps of international collaboration.

- Synthesis: Identify under-researched areas and gaps in the scientific literature, which can reflect and inform knowledge gaps among professionals [7].
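The keyword co-occurrence step can be illustrated with a small, dependency-free sketch: each record's keywords are normalized, every unordered keyword pair within a record is counted once, and the most frequent pairs approximate the links that VOSviewer or bibliometrix would draw. The records and the simple lowercase normalization are hypothetical; dedicated tools add further steps (e.g., thesaurus-based merging of synonyms and link normalization) that this sketch omits.

```python
from collections import Counter
from itertools import combinations


def cooccurrence(records, min_count=1):
    """Count how often each pair of keywords appears together in the same record."""
    pairs = Counter()
    for keywords in records:
        # Normalize case/whitespace and de-duplicate within a record
        unique = sorted({k.strip().lower() for k in keywords if k.strip()})
        pairs.update(combinations(unique, 2))
    return [(pair, n) for pair, n in pairs.most_common() if n >= min_count]


# Hypothetical author-keyword lists from three records
records = [
    ["Bisphenol A", "Neurodevelopment", "Children"],
    ["bisphenol a", "Obesity", "children"],
    ["Phthalates", "Neurodevelopment", "Children"],
]
for (k1, k2), n in cooccurrence(records, min_count=2):
    print(f"{k1} <-> {k2}: {n}")
```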

EDC Knowledge Assessment Workflow

The Scientist's Toolkit: Key Reagents & Materials

Table 3: Essential Materials for EDC Biomarker and Knowledge Assessment Research

| Item | Function/Application in Research |
|---|---|
| Validated Surveys (EDCA Scale) | A 24-item instrument to reliably quantify awareness levels across General Awareness, Impact, and Exposure & Protection subdomains [1]. |
| Biomolecular Assay Kits | For quantifying EDC concentrations (e.g., Bisphenol A, phthalate metabolites) or biomarkers of effect (e.g., uric acid, systemic inflammation markers) in human biological samples (serum, urine) [8]. |
| Statistical Analysis Software (e.g., SPSS, R) | To perform descriptive statistics, non-parametric tests, correlation, and regression analyses for both knowledge score data and exposure-health outcome relationships [8] [1]. |
| Bibliometric Software (VOSviewer, CiteSpace) | To analyze global research trends, map scientific collaboration, and identify knowledge gaps in the EDC field through literature data [7]. |
| Mixture Effect Statistical Models (WQS, Qgcomp, BKMR) | Advanced statistical models to assess the combined effect of multiple EDCs acting together on a health outcome, moving beyond single-chemical analysis [8]. |

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: What are the primary challenges in assessing low knowledge levels in research populations? A key challenge is designing validated assessment tools that accurately capture baseline knowledge before an intervention. In cancer prevention research, for instance, a knowledge assessment questionnaire has been used to categorize participants, with scores of 0–4 classified as "low knowledge" [9]. This helps quantify the extent of the awareness gap. A major subsequent challenge is that low awareness (e.g., 3.7% awareness of the European Code Against Cancer in one study) does not automatically translate into motivation to change behavior: only 27.4% of respondents reported increased motivation after being informed [10]. Furthermore, populations with lower education levels may be both less aware and more exposed to risk factors, which complicates intervention strategies [10].

Q2: Our study revealed very low awareness of a key health guideline. How can we structure an effective intervention to bridge this knowledge gap? An effective intervention should be multi-faceted. First, the knowledge content must be evidence-based and clearly communicated, similar to the 12 recommendations of the European Code Against Cancer (ECAC) [10]. Second, the intervention must be designed not only to inform but also to motivate. Since agreement with recommendations (60.6% in one study) is much higher than subsequent motivation to change (27.4%), your protocol should include components that build self-efficacy and address perceived barriers [10]. Finally, dissemination should be targeted, as awareness levels can vary significantly with demographics like education level and living situation [10].

Q3: What methodological considerations are critical when measuring the link between knowledge and subsequent behavior change? Critical considerations include:

- Study Design: Cross-sectional studies can measure association, but longitudinal designs are better for establishing that an increase in knowledge precedes a change in behavior [10].

- Validated Instruments: Use or adapt previously validated questionnaires to ensure your knowledge assessment is reliable [10].

- Confounding Control: Account for socioeconomic factors, as they can influence both knowledge and behavior. For example, logistic regression should be adjusted for variables like education, gender, and age [10].

- Clear Outcome Definitions: Predefine how "knowledge" and "motivation" are quantified. For instance, knowledge can be a score on a questionnaire, while motivation can be measured using Likert-scale responses to specific statements about intent to change [10].
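For a single binary factor (e.g., university education vs. none) and a binary awareness outcome, the unadjusted odds ratio from univariate logistic regression equals the cross-product ratio of the 2x2 table, with a Wald 95% CI of exp(ln OR ± 1.96 · SE), where SE = sqrt(1/a + 1/b + 1/c + 1/d). The sketch below computes this; adjusted ORs (controlling for education, gender, age, etc.) still require a multivariable logistic model. The counts are hypothetical.

```python
import math
from statistics import NormalDist


def odds_ratio_wald(a, b, c, d, confidence=0.95):
    """Odds ratio and Wald CI for a 2x2 table:

                  outcome yes   outcome no
    exposed            a             b
    unexposed          c             d
    """
    if 0 in (a, b, c, d):
        raise ValueError("zero cell: consider a continuity correction or exact methods")
    or_ = (a * d) / (b * c)
    se = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)  # SE of ln(OR)
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    lo, hi = (math.exp(math.log(or_) + s * z * se) for s in (-1, 1))
    return or_, lo, hi


# Hypothetical counts: aware / not aware among university-educated (a, b) and others (c, d)
or_, lo, hi = odds_ratio_wald(a=40, b=360, c=20, d=580)
print(f"OR = {or_:.2f} (95% CI {lo:.2f}-{hi:.2f})")
```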

Q4: How can we address low participant motivation that persists even after successful knowledge transfer? Addressing persistent low motivation requires moving beyond simple information dissemination. Strategies include:

- Tailored Messaging: Develop messages that resonate with specific demographic groups (e.g., segmented by age, gender, or education level) who show lower motivation [10].

- Focus on Benefits: Emphasize the tangible benefits of the recommended actions to enhance personal relevance.

- Behavioral Techniques: Incorporate elements from behavioral science, such as action planning, to help participants overcome the "intention-behavior gap."

Troubleshooting Guides

Problem: Pre-intervention survey shows near-total lack of awareness of the topic.

- Potential Cause: The target population has not been exposed to existing public health campaigns or information channels.

- Solution: Verify the baseline assessment tool is not overly complex. Use this finding to justify the need for your intervention. A very low baseline (e.g., 3.7% awareness) provides a clear and strong rationale for your study [10].

Problem: Knowledge scores improve post-intervention, but no behavioral change is observed.

- Potential Cause: This is a common issue where knowledge is necessary but not sufficient for behavior change. Barriers (e.g., cost, time, access) or low perceived self-efficacy may prevent action.

- Solution: The intervention must be designed to address these barriers directly. Pre-study qualitative research can help identify these barriers. Furthermore, consider measuring "motivation" or "intent to change" as an intermediate outcome, which can be more sensitive than final behavior change in shorter studies [10].

Problem: High dropout rates in the study cohort, particularly in groups with initially low knowledge.

- Potential Cause: Participants with low knowledge may feel the content is not relevant to them or may become disengaged if the material is not accessible.

- Solution: Implement retention strategies such as simplified materials, reminder systems, and incentives. Ensure that the intervention is delivered in a user-friendly and supportive manner to maintain engagement.

Research Data and Protocols

Quantitative Data on Awareness and Motivation

The table below summarizes key quantitative findings from a cross-sectional study on awareness and motivation related to cancer prevention, illustrating the gap between knowledge and action [10].

Table 1: Awareness and Attitudes Towards Cancer Prevention in a Swedish Cohort

| Metric | Population Group | Result | Notes |
|---|---|---|---|
| Awareness of ECAC | Total Sample (N=1520) | 3.7% | Very low baseline awareness [10]. |
| Awareness of ECAC | College/University Education | OR: 2.23 | More likely to be aware [10]. |
| Awareness of ECAC | Males | OR: 0.56 | Less likely to be aware [10]. |
| Awareness of ECAC | Individuals Living Alone | OR: 0.47 | Less likely to be aware [10]. |
| Agreement with ECAC | Total Sample | 60.6% | Majority agreed with recommendations post-exposure [10]. |
| Increased Motivation | Total Sample | 27.4% | Substantially lower than the share agreeing with the recommendations [10]. |

Experimental Protocol: Assessing Knowledge and Motivation

Title: Protocol for a Cross-Sectional Study on Awareness and Motivation in Health Behavior

Background: This protocol is designed to assess baseline awareness of a specific set of health recommendations (e.g., the European Code Against Cancer) and to measure the immediate impact of exposure to these recommendations on motivation to adopt healthier behaviors.

Methodology:

- Participant Recruitment: Recruit a large, randomly selected sample from a general population survey panel. Apply inclusion/exclusion criteria to avoid topic fatigue (e.g., not participating in a similar survey in the last 6 months) [10].

- Data Collection: Administer an online, study-specific questionnaire. The questionnaire should include:

- Demographic questions (age, gender, education, etc.) [10].

- Questions on general attitudes towards health prevention [10].

- A specific question to assess pre-existing awareness: "Had you heard about [the health guidelines] before taking part in this survey?" (Yes/No/Don't know) [10].

- Presentation of the key health recommendations.

- Post-exposure questions using Likert scales (1-5 points) to measure:

- Agreement with the recommendations.

- Whether they learned something new.

- Whether their motivation to improve their lifestyle has increased [10].

- Data Analysis:

- Dichotomize responses for analysis (e.g., combine "Don't know" with "No"; consider scores of 4-5 on the Likert scale as "Yes") [10].

- Use univariate and adjusted logistic regression analyses to identify demographic factors associated with awareness.

- Apply post-stratification weights to the data based on key demographics (gender, age, education) to correct for sample bias and improve generalizability [10].
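The dichotomization and weighting steps can be sketched as follows: Likert responses of 4-5 become "Yes", "Don't know" is merged with "No", and each respondent receives a post-stratification weight equal to the population share of their stratum divided by that stratum's share of the sample. The strata, population shares, and responses below are hypothetical; a full analysis would weight on the cross-classification of gender, age, and education (or use raking) in dedicated survey software.

```python
from collections import Counter


def dichotomize_likert(score):
    """1-5 Likert response -> True ('Yes') for 4-5, False otherwise."""
    return score >= 4


def dichotomize_awareness(answer):
    """'Yes' -> True; 'No' and "Don't know" are combined as False."""
    return answer == "Yes"


def poststrat_weights(strata, population_share):
    """Weight = population share of the stratum / sample share of the stratum."""
    counts = Counter(strata)
    n = len(strata)
    return [population_share[s] / (counts[s] / n) for s in strata]


def weighted_proportion(flags, weights):
    """Weighted share of True flags."""
    return sum(w for f, w in zip(flags, weights) if f) / sum(weights)


# Hypothetical sample of six respondents, stratified by education only
strata = ["university", "university", "university", "university", "no_university", "no_university"]
aware = ["Yes", "No", "Yes", "Don't know", "No", "No"]
motivation = [5, 3, 4, 2, 4, 1]
population_share = {"university": 0.4, "no_university": 0.6}  # assumed census shares

w = poststrat_weights(strata, population_share)
print("weighted awareness:", round(weighted_proportion(map(dichotomize_awareness, aware), w), 3))
print("weighted motivation:", round(weighted_proportion(map(dichotomize_likert, motivation), w), 3))
```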

Visualizing the Research Workflow

The diagram below outlines the logical flow and key assessment points in a study investigating the relationship between awareness, knowledge, and motivation.

Research Workflow and Factors

The Scientist's Toolkit: Key Reagents and Materials

Table 2: Essential Materials for Knowledge and Motivation Assessment Research

| Item Name | Function/Description | Example/Reference |
|---|---|---|
| Validated Questionnaire | A pre-tested survey instrument to reliably measure knowledge levels, attitudes, and behavioral intent. | Study-specific questionnaire adapted from Cancer Awareness Measures [10]. |
| Online Survey Platform | A secure, web-based application for distributing the questionnaire and collecting responses from a large sample. | Use of a managed online survey panel (e.g., Sverigepanelen) [10]. |
| Health Information Stimulus | The standardized evidence-based information given to participants as part of the intervention. | The 12 recommendations of the European Code Against Cancer (ECAC) [10]. |
| Statistical Analysis Software | Software used for performing univariate and multivariate analyses (e.g., logistic regression) on the collected data. | Used for calculating odds ratios (OR) and confidence intervals (CI) [10]. |
| Demographic Data | Background information on participants used for stratification and to control for confounding variables. | Data on gender, age, education, and income [10]. |

Troubleshooting Guide: Common EDC (Electronic Data Capture) System Issues

1. Issue: User receives "Access Denied" when trying to enter data.

- Question: Why can't I access the case report form (CRF) for my site?

- Answer: This is typically a permissions issue. Your user profile may lack the necessary role-based access control (RBAC) for this specific study or site. Please contact your system administrator to verify that your account is assigned to the correct study group and has the 'Data Entry' role [11].

2. Issue: Data validation errors preventing form submission.

- Question: Why does the system keep rejecting my form even after I've filled all required fields?

- Answer: EDC systems perform real-time checks on data format and logic. Go to the "Validation" tab on the form to see specific error codes. Common issues include date formats (must be DD/MM/YYYY), values outside pre-defined ranges, or missing concomitant medication end dates when a start date is recorded. Refer to the study-specific data entry guidelines for allowable values [12].

3. Issue: Inability to electronically sign a completed case report form.

- Question: The "Sign" button is greyed out after I complete the CRF. What should I do?

- Answer: A form cannot be signed if there are unresolved queries or pending data clarifications. Navigate to the "Queries" tab and address all open queries from the Clinical Research Associate (CRA) or data manager. Once all queries are closed, the electronic signature function will become available [12].

4. Issue: Slow system performance during peak hours.

- Question: Why is the EDC system running so slowly today?

- Answer: System performance can be affected by high user traffic, typically during regional business hours (e.g., 9:00 AM - 12:00 PM EST), or by your own internet connection. If your system offers a "Diagnostics" tab, it can show connection status and latency. If problems persist, clear your browser cache or try accessing the system during off-peak hours [11].

5. Issue: Audit trail shows discrepancies I did not enter.

- Question: I see data points in the audit trail that I don't remember entering. Was my account compromised?

- Answer: The audit trail is a secure, time-stamped record of all data changes. Discrepancies can often be traced to automated system updates, such as the import of central laboratory data or the application of protocol-specified logic (e.g., auto-calculation of BMI from height and weight). Check the "Data Source" column in the audit trail to identify the origin of the entry [12].
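To see why system-derived values appear in the audit trail, consider the minimal Python sketch below. The `AuditEntry` fields and the `data_source` labels are hypothetical, since real audit-trail schemas differ by vendor. `derive_bmi` illustrates a protocol-specified derivation that writes a value no site user typed.

```python
from dataclasses import dataclass


@dataclass
class AuditEntry:
    """One audit-trail row (hypothetical schema; real EDC audit trails vary by vendor)."""
    timestamp: str
    field: str
    new_value: str
    user: str
    data_source: str  # assumed labels: "manual", "lab_import", "derived"


def derive_bmi(weight_kg, height_cm):
    """Protocol-style derivation: BMI = weight (kg) / height (m) squared, to 1 decimal."""
    height_m = height_cm / 100
    return round(weight_kg / height_m ** 2, 1)


def not_entered_by(trail, user):
    """Entries that did not come from this user's own manual data entry."""
    return [e for e in trail if not (e.user == user and e.data_source == "manual")]


def group_by_source(trail):
    """Map each data source label to the list of fields it changed."""
    groups = {}
    for e in trail:
        groups.setdefault(e.data_source, []).append(e.field)
    return groups
```

For example, `derive_bmi(70, 175)` returns `22.9`. An entry carrying that value with source `"derived"` would appear in the trail even though the site user only entered weight and height.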

The following table synthesizes key quantitative findings from knowledge assessment research, highlighting disparities across demographic variables. These data underpin the thesis on addressing low awareness in EDC knowledge assessment.

Table 1: Impact of Demographic Variables on Research Outcomes and Knowledge

| Demographic Variable | Key Metric | Findings by Group | Thesis Context: Relevance to EDC Knowledge Gaps |
|---|---|---|---|
| Age | Project Leadership & Output | Researchers aged 50+ show a significant decline in project leadership and output, influenced by retirement policies [13]. | Highlights a risk of knowledge attrition; EDC training programs must capture expertise from senior researchers before retirement. |
| Age | Publication Output (SCI/EI) | The gap in publication output between males and females widens dramatically after age 56, with male output increasing while female output plateaus [13]. | Suggests career stage-specific barriers; mid-to-late career researchers may face unique challenges in adopting new EDC systems. |
| Professional Experience | Advanced Degree Pursuit | Doctoral-level training is a key differentiator for research career trajectories, with PhDs being critical for leading independent investigations [14]. | Emphasizes that methodological depth (from PhD training) is crucial for understanding the principles behind EDC system design, not just their function. |
| Career Stage | Principal Investigator (PI) Rate | The proportion of researchers attaining PI status increases with career age, but gender gaps emerge and evolve, narrowing again post-50 [13]. | Indicates that professional background and seniority directly influence exposure to and authority over clinical data management tools like EDC. |

Experimental Protocol: Assessing EDC System Proficiency

Objective: To quantitatively assess and compare proficiency in Electronic Data Capture (EDC) system usage across researchers of different age groups, educational backgrounds, and professional experiences.

1. Methodology Overview

- Design: A cross-sectional, simulation-based assessment.

- Participants: Stratified sampling of clinical research personnel (e.g., Clinical Research Associates (CRAs), Data Coordinators, Investigators) based on the key demographic variables: Age (Group A: ≤35 yrs, Group B: 36-50 yrs, Group C: >50 yrs), Education (BSc, MSc, PhD), and Professional Background (Academia, Pharmaceutical Industry, CRO).

2. Procedure

- Step 1: Pre-assessment Survey. Collect demographic data and self-reported confidence in using EDC systems on a Likert scale (1-5).

- Step 2: Simulation Module. Participants complete a standardized, timed simulation in a test EDC environment. Tasks are designed to mirror real-world workflows:

  - Data Entry: Enter data from a simulated source document into a CRF.

  - Query Resolution: Identify and respond to automated data validation queries.

  - Audit Trail Navigation: Locate a specific data point change within the audit trail.

  - eCRF Sign-off: Execute the electronic signature process after resolving all queries.

- Step 3: Knowledge Quiz. A multiple-choice quiz tests understanding of underlying principles: Good Clinical Practice (GCP), ALCOA+ principles for data integrity, and 21 CFR Part 11 compliance.

3. Data Analysis

- Primary Endpoints: Total simulation completion time, accuracy score (%), and quiz score (%).

- Statistical Analysis: A multivariate analysis of variance (MANOVA) will be used to determine the influence of age, education, and professional background on the composite of the primary endpoints. Post-hoc tests will identify specific between-group differences [13].
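The endpoint calculations that feed the MANOVA can be sketched in Python as below. This is a hypothetical scoring helper. The record keys (`age`, `correct_items`, `total_items`, `quiz_correct`, `quiz_total`, `completion_min`) are assumptions, and the helper assumes Group A includes participants aged exactly 35 so that every age falls into one stratum. The MANOVA itself would then be fitted in a statistics package, for example R's `manova()` or the `MANOVA` class in Python's statsmodels.

```python
from collections import defaultdict
from statistics import mean


def age_group(age):
    """Assign the protocol's age strata, assuming Group A includes age 35."""
    if age <= 35:
        return "A"
    if age <= 50:
        return "B"
    return "C"


def endpoints(record):
    """Primary endpoints for one participant: (time in minutes, accuracy %, quiz %)."""
    accuracy = 100 * record["correct_items"] / record["total_items"]
    quiz = 100 * record["quiz_correct"] / record["quiz_total"]
    return record["completion_min"], accuracy, quiz


def summarize_by_age(records):
    """Mean endpoint triple per age group, a descriptive step before the MANOVA."""
    groups = defaultdict(list)
    for r in records:
        groups[age_group(r["age"])].append(endpoints(r))
    return {
        g: tuple(round(mean(vals), 1) for vals in zip(*rows))
        for g, rows in sorted(groups.items())
    }
```

Keeping per-participant endpoints separate from the group summary makes it easy to pass the same triples to whichever package runs the MANOVA and post-hoc tests.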

This protocol generates the quantitative data necessary to move beyond anecdotal evidence and precisely identify where EDC knowledge gaps are most pronounced.

EDC Proficiency Assessment Workflow

The following diagram illustrates the logical flow of the experimental protocol for assessing EDC proficiency, from participant recruitment to data analysis.

The Scientist's Toolkit: Essential Reagents for EDC Research

Table 2: Key research reagent solutions for EDC knowledge assessment experiments.

| Item | Function/Description |
|---|---|
| Validated Test EDC System | A mirrored, non-production instance of a commercial EDC system (e.g., Medidata Rave, Oracle Clinical) used to host the simulation module without risk to live study data. |
| Simulated Source Documents | Mock patient records and clinical observation forms designed with intentional errors and ambiguities to test data entry accuracy and query generation skills. |
| Standardized Scoring Algorithm | An automated or semi-automated script to objectively score simulation accuracy and speed, ensuring consistency across all participant assessments. |
| Demographic Data Collection Module | A secure, anonymized electronic survey tool integrated into the assessment platform to consistently capture age, education, and professional background variables. |
| ALCOA+ Principles Framework | The data integrity checklist (Attributable, Legible, Contemporaneous, Original, Accurate, plus Complete, Consistent, Enduring, Available) used as the basis for the knowledge quiz [12]. |

Frequently Asked Questions (FAQs)

1. How do age and career stage realistically impact the ability to learn a new EDC system?

- Answer: While younger researchers may adapt to new software interfaces more quickly, older, more experienced researchers possess a deeper understanding of clinical protocols and data integrity principles (like ALCOA+), which are critical for correct EDC use. The challenge is not cognitive ability but often a lack of targeted training that bridges this experiential knowledge with the new digital tool. Our research aims to design training that leverages these strengths [13].

2. My educational background is in biology, not computer science. Will this put me at a disadvantage in using EDC systems?

- Answer: Not necessarily. EDC systems are designed for clinical research professionals, not software engineers. A background in life sciences provides the critical context for understanding what data is being collected and why, which is more important than knowing how to code. Effective training should focus on translating this domain expertise into efficient system use, emphasizing the workflow rather than the underlying technology.

3. Why is it important to analyze EDC knowledge by demographic variables like age and education?

- Answer: A one-size-fits-all approach to training is inefficient. By identifying specific knowledge gaps associated with particular demographics, organizations can develop targeted, just-in-time training interventions. For example, if data shows mid-career academics struggle with audit trail functions, training can be tailored to address this, thereby improving overall data quality and compliance more effectively than generic tutorials [13].

4. What is the most common source of data entry errors, and is it linked to a specific demographic?

- Answer: Initial findings often point to errors in understanding complex, branching-form logic (e.g., skip patterns) and a lack of familiarity with audit trail functionality. These issues are not confined to one demographic but tend to be more prevalent among users with less formal training on the specific EDC system, regardless of their age or degree, highlighting the universal need for comprehensive, hands-on training [12].

The relationship between an individual's knowledge of a health threat and their subsequent perception of personal illness sensitivity is not always direct. A growing body of research suggests that risk perception is a critical psychological mechanism that translates abstract knowledge into a concrete sense of personal vulnerability [15] [16]. Within the specific context of Endocrine-Disrupting Chemicals (EDCs)—exogenous substances linked to adverse health outcomes such as cancer, infertility, and neurodevelopmental disorders—studies consistently reveal a significant public knowledge gap [17] [2] [18]. This technical support document explores the mediating role of risk perception, providing researchers with methodologies, troubleshooting guides, and essential tools to investigate how knowledge of EDCs, mediated through risk perception, influences perceived illness sensitivity, particularly in populations where low awareness prevails.

Key Concepts and Theoretical Framework

Core Constructs and Their Interrelationships

- Knowledge: Factual understanding of a health threat, its sources, and its consequences. In EDC research, this often refers to awareness of EDCs themselves and their associated health risks [2].

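- Risk Perception: An individual's subjective judgment about the likelihood and severity of a health threat. It is often subdivided into deliberative components (reasoned judgments of probability and severity) and affective components (emotional, feeling-based responses).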

- Illness Sensitivity: In this context, synonymous with perceived illness vulnerability or perceived susceptibility, reflecting concern about personally developing a health condition related to a threat like EDCs [19].

The Mediation Model

The central thesis is that knowledge does not directly determine illness sensitivity. Instead, its effect is mediated through risk perception. Knowledge influences the formation of risk perceptions (both deliberative and affective), which in turn directly shapes an individual's sense of illness sensitivity [15]. This model helps explain why increasing knowledge alone through public health campaigns may not yield corresponding changes in protective behavior or perceived vulnerability; the crucial step of personal risk appraisal must occur.
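In conventional regression notation for a simple mediation model (a standard formulation, not one taken from the cited studies), with $X$ = EDC knowledge, $M$ = risk perception, and $Y$ = illness sensitivity, the paths are:

$$
\begin{aligned}
M &= i_M + a\,X + e_M \\
Y &= i_Y + c'\,X + b\,M + e_Y \\
Y &= i_T + c\,X + e_T \\
c &= c' + a\,b
\end{aligned}
$$

Here $a\,b$ is the indirect effect (knowledge acting through risk perception), $c'$ is the direct effect, and $c$ is the total effect. When all three equations are estimated by ordinary least squares on the same sample, the total effect decomposes exactly into the direct and indirect effects.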

Established Experimental Protocols for Assessing the Mediation Model

Protocol 1: Cross-Sectional Survey with Mediation Analysis

This is a common and efficient design for establishing initial evidence of mediation.

- Objective: To test the hypothesis that risk perception mediates the relationship between EDC knowledge and perceived illness sensitivity (e.g., perceived susceptibility to EDC-related health conditions).

- Methodology:

- Participant Recruitment: Target vulnerable or general population samples (e.g., pregnant women, new mothers, young adults) [18] [2]. Sample size must be calculated to have adequate power for mediation analysis.

- Measures and Instrumentation:

- Knowledge Assessment: Use a structured questionnaire to assess both general awareness ("Have you heard of EDCs?") and specific knowledge (sources of exposure, health effects) [2]. A binary (Yes/No) or Likert scale can be used.

- Risk Perception Assessment: Measure using adapted scales. The Brief Illness Perception Questionnaire (Brief-IPQ) can be adapted for healthy populations [19]. Also assess:

- Comparative Risk: "Compared to others my age, my risk of health problems from EDCs is..." (Higher/Lower/Same) [19].

- Absolute Risk: "How likely are you to experience health issues from EDCs?" (Scale from very unlikely to very likely).

- Illness Sensitivity/Susceptibility Assessment: Measure with direct items, e.g., "I am vulnerable to health problems caused by EDCs," rated on a Likert scale [18].

- Data Analysis:

- Perform hierarchical regression analyses as outlined by Legesse & Wondimu (2023) to test for mediation [15].

- Use statistical software (e.g., SPSS, R) with PROCESS macro to conduct mediation analysis, testing the significance of the indirect effect of knowledge on illness sensitivity through risk perception.
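The minimal, standard-library Python sketch below shows what such a mediation analysis computes. It is not the PROCESS macro itself. It estimates the a path (knowledge to risk perception), the b path and direct effect c' (illness sensitivity regressed on knowledge and risk perception), and the total effect c by ordinary least squares. It then forms an approximate percentile bootstrap confidence interval for the indirect effect a × b. The function names are illustrative, and covariates are omitted for brevity.

```python
import random


def avg(values):
    """Arithmetic mean of a sequence of numbers."""
    return sum(values) / len(values)


def slope(y, x):
    """Simple OLS slope of y regressed on x (intercept included)."""
    mx, my = avg(x), avg(y)
    sxy = sum((a - mx) * (v - my) for a, v in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx


def ols_two(y, x1, x2):
    """OLS slopes of y on x1 and x2 (intercept included) via centred normal equations."""
    m1, m2, my = avg(x1), avg(x2), avg(y)
    s11 = sum((a - m1) ** 2 for a in x1)
    s22 = sum((b - m2) ** 2 for b in x2)
    s12 = sum((a - m1) * (b - m2) for a, b in zip(x1, x2))
    s1y = sum((a - m1) * (v - my) for a, v in zip(x1, y))
    s2y = sum((b - m2) * (v - my) for b, v in zip(x2, y))
    det = s11 * s22 - s12 ** 2
    return (s22 * s1y - s12 * s2y) / det, (s11 * s2y - s12 * s1y) / det


def mediation(x, m, y):
    """Paths for X -> M -> Y: a, b, direct c', total c, and indirect a*b."""
    a = slope(m, x)                # knowledge -> risk perception
    c_prime, b = ols_two(y, x, m)  # illness sensitivity on knowledge + risk perception
    c = slope(y, x)                # total effect of knowledge
    return {"a": a, "b": b, "c_prime": c_prime, "c": c, "indirect": a * b}


def bootstrap_indirect(x, m, y, n_boot=2000, seed=1):
    """Approximate 95% percentile bootstrap CI for the indirect effect a*b."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample participants with replacement
        est = mediation([x[i] for i in idx], [m[i] for i in idx], [y[i] for i in idx])
        estimates.append(est["indirect"])
    estimates.sort()
    lower = estimates[n_boot * 25 // 1000]
    upper = estimates[n_boot * 975 // 1000 - 1]
    return lower, upper
```

An indirect effect is usually judged significant when the bootstrap interval excludes zero. For reporting, validated software is preferable; this sketch only illustrates the mechanics.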

Protocol 2: Qualitative Exploration Followed by Quantitative Validation

A mixed-methods approach provides deeper insight into the constructs before quantitative testing.

- Objective: To gain an in-depth, nuanced understanding of EDC risk perceptions and their determinants in a specific population, and to use these findings to develop a robust quantitative tool.

- Methodology:

- Qualitative Phase:

- Conduct focus groups or semi-structured interviews [17] [18].

- Use open-ended questions to explore awareness, feelings of vulnerability, perceived severity, and factors influencing risk perceptions (e.g., "What are your concerns about chemicals in everyday products?").

- Record, transcribe, and analyze data using thematic analysis with software like NVivo or RQDA to identify key themes [18].

- Quantitative Phase:

- Develop a questionnaire based on the qualitative findings.

- Administer the survey to a larger sample.

- Create a composite risk perception score from the qualitative-derived items, as demonstrated by Axelrad et al. (2018), which combined perceived severity and susceptibility sub-scores [18].

- Statistically validate the score and test the mediation model as in Protocol 1.

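One simple way to build such a composite, sketched in Python below, is to rescale each sub-score to a 0 to 100 range and average the results. This is an assumed scoring rule for illustration, not necessarily the procedure used in the cited study. The 1 to 5 Likert range and the equal weighting of severity and susceptibility are both assumptions.

```python
from statistics import mean


def rescale_0_100(items, low=1, high=5):
    """Map the mean of Likert items (low..high) onto a 0-100 scale."""
    return (mean(items) - low) / (high - low) * 100


def risk_perception_score(severity_items, susceptibility_items):
    """Composite score: unweighted mean of the two rescaled sub-scores (assumed rule)."""
    severity = rescale_0_100(severity_items)
    susceptibility = rescale_0_100(susceptibility_items)
    return round((severity + susceptibility) / 2, 1)
```

For example, `risk_perception_score([5, 5], [4, 4])` returns `87.5`, because severity rescales to 100 and susceptibility to 75.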

The table below summarizes quantitative findings from key studies investigating knowledge and risk perception of environmental health threats.

Table 1: Summary of Key Quantitative Findings from Related Studies

| Study Population | Key Knowledge Finding | Key Risk Perception Finding | Mediation/Moderator Finding |
|---|---|---|---|
| Young Emirati Women (re: Breast Cancer) [19] | N/A (Illness perceptions were measured) | Low individual and comparative risk perception. Higher risk perception in those with family history. | The relationship between illness perceptions and perceived individual risk was mediated by comparative risk. |
| Pregnant Women (re: EDCs) [18] | Low level of knowledge was a determinant of risk perception. | Mean EDC risk perception score was 55.0 ± 18.3 on a 100-point scale. | Age and level of knowledge were confirmed determinants of EDC risk perception. |
| Pregnant Women & New Mothers (re: EDCs) [2] | 59.2% were unfamiliar with EDCs. Low awareness of BPA and phthalates. | N/A (Focused on awareness and knowledge) | N/A |
| General Sample (re: NCDs) [15] | N/A | Risk perception partially mediated the knowledge-intention relationship. | Risk perception components operated as a moderator in the knowledge-intention pathway. |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for EDC Knowledge and Risk Perception Research

| Item | Function in Research | Example/Notes |
|---|---|---|
| Adapted Brief-IPQ [19] | Assesses cognitive and emotional illness representations in healthy populations. | Adapt items to target EDCs (e.g., "How much does exposure to EDCs affect your life?"). |
| EDC Knowledge Questionnaire [2] | Quantifies participant awareness and understanding of EDCs, their sources, and health effects. | Include items on specific EDCs (BPA, phthalates, parabens) and their associated health risks. |
| Risk Perception Score Instrument [18] | Provides a composite score of EDC risk perception by combining perceived severity and susceptibility sub-scores. | Ensures a multi-dimensional and quantifiable measure of the core mediator variable. |
| Semi-Structured Interview Guide [17] [18] | Explores underlying beliefs, feelings, and heuristic processing (e.g., similarity, availability) related to EDC risks. | Allows for in-depth, qualitative data collection to inform hypothesis and questionnaire design. |
| Computer-Assisted Qualitative Data Analysis Software (CAQDAS) [18] | Assists in the systematic coding and thematic analysis of qualitative interview/focus group data. | Software such as RQDA (using R) or NVivo. |
| Statistical Software with Mediation Analysis Capability [15] | Performs complex statistical analyses, including regression-based mediation and moderation analysis. | SPSS with PROCESS macro, R, or Stata. |

Troubleshooting Guides and FAQs

FAQ 1: We found a weak correlation between knowledge and illness sensitivity. Does this invalidate our hypothesis?

- Answer: Not necessarily. A weak direct correlation is often a hallmark of a mediated relationship. Your analysis should proceed to test whether risk perception is a significant mediator. The primary effect of knowledge may be operating through its influence on risk perception, rather than directly on sensitivity [15] [16].

FAQ 2: Participants show high knowledge but low risk perception. What could explain this?

- Answer: This is a common phenomenon, often explained by optimistic bias or unrealistic optimism, where individuals believe they are less at risk than others [19] [15] [16]. Other factors include:

- Lack of personal relevance: The knowledge is abstract and not personalized.

- Affective vs. Deliberative Disconnect: The deliberative, factual knowledge fails to generate an affective (emotional) response necessary for risk perception [16].

- Heuristic Processing: Relying on mental shortcuts like the "availability heuristic" (if a severe case isn't readily recalled, risk is perceived as low) [19].

FAQ 3: How can we improve the internal validity of our risk perception measure?

- Answer:

- Use Multi-item Scales: Avoid single-item measures. Use validated sub-scales for perceived susceptibility and severity [18].

- Pilot Testing: Conduct cognitive interviews to ensure participants interpret questions as intended.

- Measure Different Components: Differentiate between absolute risk, comparative risk, and affective risk to get a fuller picture [19] [16].

FAQ 4: Our mediation analysis shows a significant indirect effect, but the total effect is not significant. Is this a problem?

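- Answer: Not necessarily. Contemporary approaches to mediation do not require a significant total effect before the indirect effect is tested. The total effect can be non-significant when the study lacks power, or when the direct and indirect effects have opposite signs and partly cancel out. Interpret the result by also examining the direct effect. If the indirect effect is significant and the direct effect is not, this is "indirect-only" (full) mediation: risk perception accounts for the relationship between knowledge and illness sensitivity. If the direct effect is significant and opposite in sign to the indirect effect, this is "competitive" mediation, which suggests an additional pathway worth investigating [15].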
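The interpretation above can be made explicit with a small decision rule, sketched in Python below. It follows a commonly used typology of mediation outcomes (indirect-only, complementary, competitive, direct-only, no effect). The function name, its inputs, and the choice of a bootstrap interval for the indirect effect plus a p-value for the direct effect are assumptions for illustration.

```python
def mediation_type(indirect_ci, direct_effect, direct_p, alpha=0.05):
    """Classify a simple mediation result.

    indirect_ci: (lower, upper) bootstrap CI for a*b; significant if it excludes 0.
    direct_effect, direct_p: estimate and p-value for the direct effect c'.
    """
    lower, upper = indirect_ci
    indirect_sig = lower > 0 or upper < 0
    direct_sig = direct_p < alpha
    if indirect_sig and not direct_sig:
        return "indirect-only"
    if indirect_sig and direct_sig:
        indirect_positive = lower > 0
        same_sign = (direct_effect > 0) == indirect_positive
        # Opposite signs can cancel, leaving a non-significant total effect.
        return "complementary" if same_sign else "competitive"
    if direct_sig:
        return "direct-only"
    return "no-effect"
```

For example, `mediation_type((0.10, 0.40), -0.30, 0.01)` returns `"competitive"`, the pattern in which a non-significant total effect can coexist with a significant indirect effect.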

Visualization of the Theoretical Framework and Workflow

The following diagram illustrates the core theoretical model of risk perception as a mediator and a typical mixed-methods research workflow to study it.

Building the Toolkit: Methodologies for Robust EDC Knowledge Assessment

Frequently Asked Questions

What is the first step in developing an EDC knowledge questionnaire? The process begins with a comprehensive literature review to define the construct and identify a pool of potential items. For EDCs, this means grounding the questionnaire in established scientific evidence, such as the Key Characteristics of Endocrine-Disrupting Chemicals, which include interacting with or antagonizing hormone receptors, altering hormone receptor expression, and disrupting signal transduction [20]. Initial items should cover the main exposure routes, such as food, respiration, and skin absorption [21].

How do I ensure my questionnaire's content is relevant and comprehensive? You must establish content validity. This involves assembling a panel of experts (e.g., in endocrinology, toxicology, chemical/environmental specialties, and survey design) to rate each item for its relevance and clarity. This is quantified using the Item-Content Validity Index (I-CVI), where an I-CVI of 0.78 or higher is considered excellent. The average of all I-CVIs, the Scale-Content Validity Index (S-CVI/Ave), should be at least 0.90 for the entire scale [22] [21].

My data is not fitting my expected model during validation. What should I do? This is common. Use a combination of Exploratory Factor Analysis (EFA) and Confirmatory Factor Analysis (CFA). EFA helps uncover the underlying factor structure of your data without preconceived constraints. CFA then tests how well that structure fits. If the model fit is poor (e.g., high RMSEA, low CFI), consult modification indices and cross-loadings to refine the model by removing poorly performing items or allowing correlated errors [23] [22].

What is an acceptable level of reliability for a new questionnaire? For internal consistency, Cronbach's alpha is commonly used. A value of 0.70 or higher is acceptable for a newly developed questionnaire, while 0.80 or higher is preferred for an established instrument [21]. For test-retest reliability, which measures stability over time, the Intraclass Correlation Coefficient (ICC) should be calculated. An ICC above 0.60 is considered good, and above 0.75 is excellent [22].

How can I address the "low awareness" problem in EDC knowledge assessment? The questionnaire must be designed to detect a wide range of knowledge levels. In the analysis, you can define a "low knowledge" category based on score distribution, for instance, participants scoring in the lowest quartile or below a specific cutoff point (e.g., 0-4 on a knowledge assessment) [9]. This allows researchers to identify demographic or socio-professional groups with significant knowledge gaps and tailor interventions accordingly.
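The quartile-based definition can be sketched with Python's `statistics.quantiles`. The function below is hypothetical. By default it flags participants at or below the first quartile; when a fixed cutoff such as 4 is supplied, it flags participants at or below that score instead. Note that `statistics.quantiles` uses the "exclusive" method by default, so quartile cut points may differ slightly from those reported by other statistical software.

```python
from statistics import quantiles


def flag_low_knowledge(scores, cutoff=None):
    """Return one boolean per participant marking 'low knowledge'."""
    if cutoff is None:
        # quantiles(n=4) returns the three quartile cut points; take Q1.
        cutoff = quantiles(scores, n=4)[0]
    return [s <= cutoff for s in scores]
```

For example, `flag_low_knowledge([2, 3, 4, 5, 6, 7, 8, 9])` flags the two lowest scores (Q1 is 3.25 here), while `flag_low_knowledge([2, 3, 4, 5, 6, 7, 8, 9], cutoff=4)` flags the three scores in the 0-4 band.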

Troubleshooting Guides

Problem: Poor Content Validity (Low CVI Scores)

Symptoms: Expert reviewers deem questions irrelevant, unclear, or non-comprehensive. The calculated I-CVI scores are below the 0.78 threshold.

| Resolution Step | Action & Details |
|---|---|
| 1. Reformulate Items | Reword ambiguous questions based on specific expert feedback. Use simpler language and avoid jargon. |
| 2. Review EDC Key Characteristics | Ensure all key domains of EDC action [20] and exposure routes [21] are covered to improve comprehensiveness. |
| 3. Re-pilot with Target Audience | Conduct cognitive interviews with a small sample from your population (e.g., 10 adults) to check for understanding and clarity before returning to experts [21] [22]. |

Problem: Unclear Factor Structure

Symptoms: EFA results show items cross-loading on multiple factors, low factor loadings (<0.40), or a factor structure that doesn't make theoretical sense.

| Resolution Step | Action & Details |
|---|---|
| 1. Check Data Adequacy | Verify that the Kaiser-Meyer-Olkin (KMO) measure is >0.60 and Bartlett's Test of Sphericity is significant before running EFA [21]. |
| 2. Remove Problematic Items | Sequentially remove items with low communalities (<0.20) or low factor loadings. It is desirable to have at least three items per factor [21]. |
| 3. Iterate with CFA | Use CFA on a separate dataset to confirm the structure derived from EFA. Be prepared to make further adjustments based on modification indices [21] [22]. |

Problem: Low Internal Consistency or Reliability

Symptoms: Cronbach's alpha for a knowledge domain or the entire scale is below 0.70. Test-retest ICC values are below 0.60.

| Resolution Step | Action & Details |
|---|---|
| 1. Increase Item Homogeneity | Review and add more items that measure the same specific construct within a domain (e.g., knowledge of EDCs in food). |
| 2. Check for Miskeyed Items | For knowledge scales, verify that the correct answers are accurately defined and that items are not misleading. |
| 3. Re-examine Test Conditions | For low test-retest reliability, ensure the time between test and retest is appropriate (e.g., 2-4 weeks) and that no intervening educational events occurred [22]. |

Experimental Protocols for Key Validation Steps

Protocol 1: Establishing Content Validity

Objective: To quantitatively assess the relevance and clarity of the initial questionnaire items by a panel of experts.

Methodology:

- Expert Panel Assembly: Recruit 5-8 experts with backgrounds in endocrinology, environmental health, survey methodology, and toxicology [21] [22].

- Rating Process: Provide experts with the list of items and the rating scales. They rate each item on relevance (typically a 4-point scale from 1 = "not relevant" to 4 = "highly relevant") and on clarity (e.g., "not clear" to "very clear").

- Quantitative Analysis:

- Calculate the Item-Content Validity Index (I-CVI) for each item: the number of experts rating the item 3 or 4 on the relevance scale (e.g., "quite relevant" or "highly relevant") divided by the total number of experts.

- Calculate the Scale-Content Validity Index (S-CVI/Ave): the average of all I-CVIs.

- Compute the modified Kappa (K*) statistic to account for chance agreement [22].

Success Criteria: I-CVI ≥ 0.78; S-CVI/Ave ≥ 0.90; K* > 0.75 [22].
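The three indices in this protocol can be computed as below. This minimal Python sketch assumes a 4-point relevance scale on which ratings of 3 or 4 count as relevant. For the modified kappa it uses the usual chance-agreement correction, K* = (I-CVI - Pc) / (1 - Pc), where Pc = C(N, A) × 0.5^N for N experts of whom A rate the item relevant. The function names are illustrative.

```python
from math import comb

RELEVANT = (3, 4)  # assumed: ratings of 3 or 4 on a 4-point scale count as relevant


def i_cvi(ratings):
    """Item-level CVI: proportion of experts rating the item as relevant."""
    return sum(r in RELEVANT for r in ratings) / len(ratings)


def modified_kappa(ratings):
    """Modified kappa K* = (I-CVI - Pc) / (1 - Pc), correcting I-CVI for chance agreement."""
    n = len(ratings)
    agree = sum(r in RELEVANT for r in ratings)
    pc = comb(n, agree) * 0.5 ** n  # probability of this agreement arising by chance
    return (agree / n - pc) / (1 - pc)


def s_cvi_ave(item_ratings):
    """Scale-level CVI (averaging method): mean of the item I-CVIs."""
    return sum(i_cvi(r) for r in item_ratings) / len(item_ratings)
```

With six experts rating an item 4, 4, 3, 4, 3, 2, the I-CVI is about 0.83 and K* is about 0.82, which meets the I-CVI and K* criteria listed above.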

Protocol 2: Assessing Construct Validity via Factor Analysis

Objective: To verify that the questionnaire items validly measure the intended theoretical constructs (e.g., knowledge domains).

Methodology:

- Sample Size: Recruit a sample of participants large enough for stable analysis. A common rule is 10 participants per item, or a minimum of 100-300 participants [23] [21].

- Data Collection: Administer the questionnaire to the sample.

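- Exploratory Factor Analysis (EFA):

- Confirm data adequacy (KMO > 0.60 and a significant Bartlett's Test of Sphericity) before extraction [21].

- Retain items with factor loadings of at least 0.40, removing items with low communalities (<0.20) and aiming for at least three items per factor [21].

- Confirmatory Factor Analysis (CFA):

- Test the EFA-derived structure on a separate dataset, evaluate model fit with indices such as RMSEA and CFI, and refine the model using modification indices where needed [21] [22].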

Success Criteria: A clear, interpretable factor structure emerges from EFA, and the CFA model demonstrates a good-to-excellent fit to the data.

Protocol 3: Evaluating Reliability

Objective: To determine the internal consistency and temporal stability of the questionnaire.

Methodology:

- Internal Consistency:

- Calculate Cronbach's alpha for the total scale and for each knowledge domain [21].

- Test-Retest Reliability:

- Administer the same questionnaire to the same group of participants after a suitable time interval (e.g., 2-4 weeks).

- Calculate the Intraclass Correlation Coefficient (ICC) for the total score and subscale scores. A two-way mixed-effects model with absolute agreement is often used [22].

Success Criteria: Cronbach's alpha ≥ 0.70; ICC ≥ 0.60 [21] [22].
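Both statistics can be computed from first principles, as in the standard-library Python sketch below. `cronbach_alpha` uses the standard formula based on item and total-score variances. `icc_a1` implements the two-way, absolute-agreement, single-measure ICC from ANOVA mean squares, treating test and retest as the two measurement occasions. The function names and input layout are assumptions; validated statistical software should still be used for reporting.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha; rows holds one list of item scores per participant."""
    k = len(rows[0])
    item_vars = [variance(col) for col in zip(*rows)]  # variance of each item
    total_var = variance([sum(r) for r in rows])       # variance of total scores
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


def icc_a1(scores):
    """ICC(A,1): two-way model, absolute agreement, single measurement.

    scores holds one list per participant, e.g. [test, retest].
    """
    n, k = len(scores), len(scores[0])
    grand = sum(map(sum, scores)) / (n * k)
    row_means = [sum(r) / k for r in scores]
    col_means = [sum(c) / n for c in zip(*scores)]
    ss_rows = k * sum((mu - grand) ** 2 for mu in row_means)  # between participants
    ss_cols = n * sum((mu - grand) ** 2 for mu in col_means)  # between occasions
    ss_total = sum((v - grand) ** 2 for r in scores for v in r)
    ss_err = ss_total - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_err = ss_err / ((n - 1) * (k - 1))
    return (ms_rows - ms_err) / (ms_rows + (k - 1) * ms_err + k / n * (ms_cols - ms_err))
```

Because absolute agreement penalizes systematic shifts, a retest that is uniformly 2 points higher gives `icc_a1([[10, 12], [12, 14], [15, 17], [8, 10]])` of about 0.82 rather than 1.0.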

The Scientist's Toolkit: Research Reagent Solutions

| Item Name | Function & Application in EDC Questionnaire Research |
|---|---|
| Expert Panel | A group of 5-8 specialists to evaluate content validity, providing quantitative (CVI) and qualitative feedback on item relevance and clarity [21] [22]. |
| Pilot Sample | A small group (n=10-30) from the target population to test face validity, clarity, and estimated completion time before full-scale deployment [21] [22]. |
| Statistical Software (e.g., R, SPSS with AMOS) | Essential for performing Item Response Theory (IRT), Exploratory Factor Analysis (EFA), Confirmatory Factor Analysis (CFA), and calculating reliability coefficients (Cronbach's alpha, ICC) [23] [18] [21]. |
| Key Characteristics of EDCs Framework | A published consensus list of ten mechanistic properties of EDCs (e.g., interacts with hormone receptors, alters hormone production) used to ensure scientific comprehensiveness of knowledge items [20]. |
| Validated KAP Model | A theoretical framework dividing the questionnaire into Knowledge, Attitude, and Practice sections, allowing for a multi-dimensional assessment of the target population [23] [22]. |

Experimental Workflow and Logical Pathway

The following diagram illustrates the end-to-end process for developing and validating a reliable EDC knowledge questionnaire, from initial design to final deployment.

Questionnaire Development and Validation Workflow

Technical Support Center: Troubleshooting Guides and FAQs

This technical support resource addresses common challenges and advanced operational strategies for Research Electronic Data Capture (REDCap) platforms. These questions and solutions are framed within the context of addressing low awareness in EDC knowledge assessment, providing researchers and drug development professionals with practical methodologies to enhance data quality and operational efficiency.

Frequently Asked Questions (FAQs)

Q1: What are the most effective strategies for validating a REDCap project to ensure FDA 21 CFR Part 11 compliance?

A comprehensive validation strategy is crucial for regulated research. The following components form a robust validation framework [24]:

- User Requirements Specification (URS): Document all functional and non-functional requirements, including data entry forms, workflows, and reporting capabilities.

- Risk Assessment: Identify potential threats to data integrity and patient safety, prioritizing modules handling electronic signatures or patient identifiers.

- Functional Testing: Rigorously examine each REDCap module, testing data entry forms, automated calculations, branching logic, and export functions.

- Security Validation: Verify role-based access controls, encryption mechanisms, and audit trails to ensure compliance with HIPAA and GDPR requirements.

- Change Control Process: Implement documented procedures to ensure system updates or modifications do not compromise validation status.

Advanced strategies for 2025 include automated testing tools, continuous validation integrated into the software development lifecycle, and risk-based validation focusing resources on high-risk areas [24].

Q2: How can we overcome EHR integration barriers with REDCap's Clinical Data Interoperability Services (CDIS)?

Barriers to implementing EHR integration often include competing clinical IT priorities, technical setup complexities, and regulatory concerns [25]. The following table summarizes common barriers and their remedies:

| Barrier | Recommended Remedy |
|---|---|
| Competing clinical IT priorities | Secure extramural funding; identify a local clinical champion |
| Technical and networking setup complexity | Engage IT leadership early; maintain regular technical stakeholder calls |
| Regulatory concerns about data access | Emphasize that users only access data already available in EHR; highlight audit trails |
| Researcher understanding of EHR data limitations | Provide informatics professional training and consultations |

As of May 2024, only 77 of the 7,202 institutions using REDCap worldwide (about 1.1%) were using CDIS, indicating a significant awareness and implementation gap [25].

Q3: What methodology can resolve data quality issues in complex, longitudinal REDCap studies?

For longitudinal studies that overwhelm REDCap's built-in Data Resolution Workflow, implement an external data quality pipeline like the "Blackbox" framework [26]:

- Tool Composition: Python-based pipeline requiring these input documents: study assessment schedule, visit range tolerances (± days), REDCap data dictionary, and protocol-specified required measures.

- Key Capability: Utilizes the project's data dictionary to determine required fields across study visits, accurately applying branching logic context to identify true missing data.

- Protocol Deviation Tracking: Automatically identifies and reports missed visits, forms, fields, and incomplete forms as protocol deviations for regulatory compliance.

- Execution Outcome: In initial implementation, identified 1,949 queries with violations occurring between 85-500 days, most resolved through protocol adjustments and branching logic corrections [26].
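The core checks such a pipeline performs can be sketched as follows. This is a hypothetical Python illustration, not the Blackbox code. The data-dictionary layout, field names, and visit labels are assumptions. REDCap stores branching logic as text expressions in the data dictionary, but for simplicity this sketch represents each condition as a Python predicate.

```python
from datetime import date


def within_window(target, actual, tolerance_days):
    """True if the actual visit date falls inside target ± tolerance."""
    return abs((actual - target).days) <= tolerance_days


# Hypothetical, simplified data dictionary: which visits require each field,
# plus an optional branching-logic predicate deciding whether it is shown.
DATA_DICTIONARY = {
    "weight_kg": {"visits": {"baseline", "week12"}, "show_if": None},
    "pregnancy_test": {"visits": {"baseline"}, "show_if": lambda rec: rec.get("sex") == "F"},
}


def missing_required(record, visit, dictionary=DATA_DICTIONARY):
    """List required fields that are empty for this visit, honouring branching logic."""
    missing = []
    for field, spec in dictionary.items():
        if visit not in spec["visits"]:
            continue
        condition = spec["show_if"]
        if condition is not None and not condition(record):
            continue  # field hidden by branching logic, so a blank value is expected
        if record.get(field) in (None, ""):
            missing.append(field)
    return missing


def deviations(record, visit, target, actual, tolerance_days):
    """Collect protocol-deviation messages for one participant visit."""
    issues = [f"missing field: {f}" for f in missing_required(record, visit)]
    if not within_window(target, actual, tolerance_days):
        issues.append(f"{visit} out of window: {(actual - target).days:+d} days")
    return issues
```

For a male participant at baseline, `pregnancy_test` is hidden by branching logic, so only a blank `weight_kg` would be reported as missing. This is the distinction between true missing data and fields that were never displayed.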

Q4: Can REDCap be used for operational efficiency beyond data collection?

Yes, REDCap can automate numerous research operations. One academic medical center transformed these workflows [27]:

| Research Initiative | Prior Workflow | REDCap Automation Solution |
|---|---|---|
| Service Requests | Paper-based (10-15 pages) | One-page digital intake with document repository |
| Protocol Development | Microsoft Word tracking | REDCap checklist with manager completion alerts |
| Rate Quote Requests | Email (easily lost) | Automated system with 6-month follow-up prompts |
| Participant Scheduling | Phone/email coordination | Integrated calendar showing real-time availability |
| Randomization | Delayed staff notification | Automated randomization outcome alerts |

This automation reduced a 27-step startup process to just 4 steps, dramatically improving efficiency [27].

Q5: What specific features support multi-site, multi-language population research in REDCap?

REDCap enables simultaneous data collection and management in multiple languages using a single tool and database [28] [29]. Implementation recommendations include:

- Structured Team Support: Regular team meetings, training, supervision, and automated error-checking procedures.

- Challenge Mitigation: For unstable internet connections in low-resource settings, implement offline data collection strategies with secure syncing when connectivity resumes.

- Digital Skill Gaps: Provide comprehensive training for data collectors with varying technical competencies.

- Error Management: Address incomplete and duplicate records through immediate data access during collection, enabling real-time troubleshooting.
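The error-management point can be made concrete with a small sketch that flags likely duplicate and incomplete records in exported data. It assumes records are exported as dictionaries with a `record_id` key; the matching fields, the `consent` field, and the helper names are hypothetical. Unicode NFC normalization is included because the same name typed on different devices or keyboard layouts can be stored in composed or decomposed form, which matters for scripts with diacritics such as Vietnamese. Accents are not stripped, so "Nguyễn" and "Nguyen" are still treated as different.

```python
import unicodedata
from collections import defaultdict
from typing import Dict, Iterable, List, Sequence, Tuple

Record = Dict[str, str]


def normalize(value: str) -> str:
    """Unify Unicode form, case and whitespace before comparing identifiers."""
    composed = unicodedata.normalize("NFC", value)
    return " ".join(composed.casefold().split())


def find_duplicates(records: Iterable[Record],
                    key_fields: Sequence[str]) -> Dict[Tuple[str, ...], List[str]]:
    """Group record IDs whose normalized key fields match; keep groups of 2+."""
    groups: Dict[Tuple[str, ...], List[str]] = defaultdict(list)
    for rec in records:
        key = tuple(normalize(rec.get(f, "")) for f in key_fields)
        groups[key].append(rec["record_id"])
    return {key: ids for key, ids in groups.items() if len(ids) > 1}


def find_incomplete(records: Iterable[Record],
                    required: Sequence[str]) -> Dict[str, List[str]]:
    """Map each record ID to the required fields that are blank or absent."""
    incomplete: Dict[str, List[str]] = {}
    for rec in records:
        missing = [f for f in required if not rec.get(f, "").strip()]
        if missing:
            incomplete[rec["record_id"]] = missing
    return incomplete
```

Running both checks during collection, rather than only at study close, is what allows the real-time troubleshooting described above.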

Workflow Diagrams for REDCap Implementation

[Figures: Data Quality Assurance Pipeline; REDCap-EHR Integration Process]

Electronic Data Capture (EDC) Systems Comparison

The EDC landscape includes both enterprise commercial systems and academic-focused platforms. This comparison highlights key systems mentioned in recent literature:

| EDC System | Primary Use Case | Key Features | Regulatory Compliance |
|---|---|---|---|
| REDCap | Academic, non-commercial research | Multi-site coordination, survey instruments, branching logic | HIPAA, 21 CFR Part 11, FISMA, GDPR [28] [29] |
| Medidata Rave | Large global trials (oncology, CNS) | Advanced edit checks, AI-powered enrollment forecasting | 21 CFR Part 11, ICH-GCP [30] |
| Veeva Vault EDC | Sponsor-based clinical trials | Cloud-native, drag-and-drop CRF configuration | 21 CFR Part 11, GDPR [30] |
| Castor EDC | Academic & sponsor-backed CROs | Rapid study startup, eConsent, patient-reported outcomes | 21 CFR Part 11, GDPR [30] |
| OpenClinica | Hybrid & multilingual studies | Built-in ePRO, randomization, eConsent | CDISC compliance, 21 CFR Part 11 [30] |

Research Reagent Solutions for EDC Implementation

Essential components for establishing a validated REDCap environment:

| Component | Function | Implementation Example |
|---|---|---|
| Validation Protocol | Documents system performance under all conditions | 8-month median implementation time for CDIS integration [25] |
| Data Quality Pipeline | Identifies data errors in complex studies | Blackbox Python framework identifying 1,949 queries in initial run [26] |
| EHR Mapping Tool | Connects clinical data to research fields | CDIS module extracting 62+ million data points across 243 projects [25] |
| Automated Workflow Templates | Streamlines research operations | REDCap workflow reducing 27-step process to 4 steps [27] |
| Training Curriculum | Addresses digital skill gaps | Multi-language training for population research in Vietnam, Nepal, Indonesia [28] [29] |

Addressing the low awareness in EDC knowledge assessment requires both technical solutions and strategic implementation frameworks. The methodologies presented here—from validation protocols and EHR integration to data quality pipelines and workflow automation—provide researchers with evidence-based approaches to maximize REDCap's capabilities. As REDCap continues evolving with cloud migration, enhanced compliance pathways, and better ecosystem integration planned through 2026 [31], adopting these advanced practices will be crucial for advancing research data management excellence.

Frequently Asked Questions (FAQs) and Troubleshooting Guides

Awareness and Knowledge Assessment

FAQ: Our survey on EDC awareness has low response rates and shows minimal pre-existing knowledge. Is this typical?

Troubleshooting Guide:

- Problem: Low participant awareness skews baseline data.

- Solution: This is a common challenge, as studies consistently show low public awareness of EDCs. A Turkish study found that 59.2% of pregnant women and new mothers were unfamiliar with EDCs [2], and focus groups likewise found low public awareness [17]. Frame your survey to account for this expected knowledge gap. Consider adding a brief, neutral educational primer after the baseline assessment and then re-administering the knowledge items to measure improvement, similar to methodologies used in other studies [2].

FAQ: How can we reliably assess the effectiveness of an EDC educational intervention?

Troubleshooting Guide:

- Problem: Measuring changes in knowledge and behavior.

- Solution: Use a pre-post intervention design with validated scales. The Endocrine Disruptor Awareness scale (EDCA) is a 24-item Likert-scale instrument that measures three dimensions: general awareness, impact, and exposure and protection [1]. Pair it with the Healthy Life Awareness Scale (HLA) to examine associations with general health consciousness [1]. The "Reducing Exposures to Endocrine Disruptors (REED)" study used EDC-specific health literacy (EHL) surveys and Readiness to Change (RtC) metrics to demonstrate intervention efficacy [32].
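For the pre-post comparison, a minimal paired analysis might look like the sketch below. It computes each participant's change in score and an exact two-sided sign test using only the Python standard library. The sign test is a swapped-in choice for illustration (a Wilcoxon signed-rank test is the more common option for Likert-derived scores); the function name `paired_sign_test` is hypothetical, and nothing here reproduces the analyses reported in [1] or [32].

```python
from math import comb
from statistics import mean
from typing import Sequence, Tuple


def paired_sign_test(pre: Sequence[float], post: Sequence[float]) -> Tuple[float, float]:
    """Mean within-person change (post - pre) and exact two-sided sign-test p-value."""
    if len(pre) != len(post) or not pre:
        raise ValueError("pre and post scores must be non-empty and paired one-to-one")
    diffs = [b - a for a, b in zip(pre, post)]
    nonzero = [d for d in diffs if d != 0]  # zero changes carry no sign information
    n = len(nonzero)
    if n == 0:
        return mean(diffs), 1.0
    k = sum(1 for d in nonzero if d > 0)  # participants whose score rose
    tail = min(k, n - k)
    # Under H0 the number of increases follows Binomial(n, 0.5).
    p = 2 * sum(comb(n, i) for i in range(tail + 1)) / 2 ** n
    return mean(diffs), min(1.0, p)
```

Because the sign test uses only the direction of each change, it is robust to the coarse, tied values typical of summed Likert items, at the cost of some power compared with rank-based tests.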

Intervention and Exposure Reduction

FAQ: Our participants feel overwhelmed and don't know how to reduce their EDC exposure. What resources can we provide?

Troubleshooting Guide:

- Problem: Participants lack actionable steps.

- Solution: Implement a structured, multi-faceted intervention. The most promising strategies include [33]:

  - Accessible web-based educational resources.

  - Targeted replacement of known toxic products (e.g., plastics, certain cosmetics).

  - Personalization through one-on-one meetings or support groups.

  The REED study, which includes mail-in urine testing and personalized report-back, successfully increased EHL behaviors and reduced specific phthalate levels [32].

FAQ: Which clinical biomarkers can we track to objectively measure the health impact of reduced EDC exposure?

Troubleshooting Guide:

- Problem: Linking exposure reduction to tangible health outcomes.

- Solution: Although this remains an active area of research, EDC exposure has been significantly associated with a wide range of clinical outcomes. When designing studies, consider tracking biomarkers related to conditions with established links to EDCs [34]:

  - Metabolic Health: Biomarkers for diabetes and obesity.

  - Reproductive Health: Hormone levels related to infertility.

  - Cardiovascular Health: Biomarkers for cardiovascular disease.

  - Inflammation: Inflammatory markers.

Preclinical and Translational Research

FAQ: How do we justify an animal study investigating EDCs and eating behavior?

Troubleshooting Guide:

- Problem: Translating basic research for grant applications or publications.

- Solution: Cite foundational studies. Recent research presented in 2025 found that early-life EDC exposure in rats altered brain pathways related to reward and eating behavior, leading to a higher preference for sugary and fatty foods later in life. This provides a mechanistic explanation for how EDCs can contribute to obesity [35].

Experimental Protocols & Methodologies

Protocol 1: Implementing a Human EDC Reduction Intervention

This protocol is adapted from the "Reducing Exposures to Endocrine Disruptors (REED)" study [32].

Objective: To test the effectiveness of an educational and behavioral intervention in reducing EDC exposure in a cohort of reproductive-aged adults.


Methodology Details:

- Participant Recruitment: Recruit from a large population health cohort (e.g., the Healthy Nevada Project). Target men and women of reproductive age (18-44 years). A sample size of 600 (300 per group) provides robust statistical power [32].

- Baseline Data Collection:

  - Biomonitoring: Use a mail-in urine test kit to measure baseline levels of common EDCs (e.g., BPA, phthalates, parabens).

  - Surveys: Administer validated EDC Health Literacy (EHL) and Readiness to Change (RtC) surveys.

  - Clinical Biomarkers (optional): Include at-home clinical test kits (e.g., Siphox) to track relevant health biomarkers [32].

- Intervention:

  - Intervention Group: Receives a self-directed online interactive EDC curriculum modeled after the Diabetes Prevention Program, plus live counseling sessions for personalized support [32].

  - Control Group: Receives standard care or a minimal intervention.

- Post-Intervention Data Collection: Repeat the biomonitoring and survey administration after the intervention period.

- Analysis: Compare changes in EDC metabolite levels, EHL/RtC scores, and clinical biomarkers between the intervention and control groups.
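The analysis step, and the power claim for 300 participants per group, can be sketched with the Python standard library. All of the following are assumptions for illustration, not the REED study's published analysis plan: change in each urinary metabolite is analyzed on the natural-log scale (so concentrations must be positive), the groups are compared with Welch's t statistic using a large-sample normal approximation for the p-value, and power is approximated for a two-sided z-test with a standardized effect size `d` chosen by the investigator. The function names are hypothetical.

```python
from math import log, sqrt
from statistics import NormalDist, mean, variance
from typing import List, Sequence, Tuple


def two_sample_power(d: float, n_per_group: int, alpha: float = 0.05) -> float:
    """Approximate power of a two-sided two-sample z-test for standardized effect d."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    # Rejections in the opposite tail are ignored (usually negligible).
    return std_normal.cdf(abs(d) * sqrt(n_per_group / 2) - z_crit)


def log_changes(pre: Sequence[float], post: Sequence[float]) -> List[float]:
    """Per-participant change on the natural-log scale; concentrations must be > 0."""
    return [log(b) - log(a) for a, b in zip(pre, post)]


def welch_compare(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Welch t for mean(x) - mean(y), with a large-sample normal two-sided p-value."""
    se = sqrt(variance(x) / len(x) + variance(y) / len(y))
    t = (mean(x) - mean(y)) / se
    p = 2 * (1 - NormalDist().cdf(abs(t)))
    return t, p
```

In use, `x` would be the log changes for the intervention group and `y` those for the control group. Under this approximation, 300 per group gives power of about 0.96 for `d = 0.3` but only about 0.69 for `d = 0.2`, so whether the sample is "robust" depends on the effect size the study expects.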

Protocol 2: Assessing EDC Awareness in a Target Population

This protocol is adapted from cross-sectional studies on EDC awareness among medical professionals and pregnant women [1] [2].

Objective: To quantify the level of EDC awareness and knowledge in a specific population, such as healthcare workers or vulnerable groups.

Methodology Details:

- Study Design: Cross-sectional, questionnaire-based survey.

- Participant Recruitment: Recruit a statistically determined sample size (e.g., 300+ participants) from the target population via institutional channels [1] [2].

- Survey Instruments:

  - Use the validated Endocrine Disruptor Awareness scale (EDCA), a 24-item instrument with three subcategories: general awareness, impact, and exposure and protection. Scores are interpreted on a range from very low to very high [1].

  - Include the Healthy Life Awareness Scale (HLA) to correlate EDC awareness with general health attitudes [1].

  - Collect sociodemographic data and participants' sources of information about EDCs.

- Data Analysis:

  - Use descriptive statistics (mean, median, frequencies) to summarize awareness levels.

  - Employ non-parametric tests (Mann-Whitney U, Kruskal-Wallis) to compare scores between groups (e.g., students vs. physicians, different specialties) [1].

  - Perform linear regression to investigate relationships between variables like age, HLA score, and EDC awareness [1].
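The recruitment and group-comparison steps can be sketched as follows. `sample_size_proportion` applies the standard formula for estimating a proportion to within a chosen margin of error, n = z²p(1−p)/e², with an optional finite-population correction. `mann_whitney_u` computes the U statistic by pairwise comparison with a normal-approximation p-value that omits the tie correction, so it is only approximate when scores contain many ties, as Likert-derived scores often do. Both functions are hypothetical illustrations, not the sample-size or analysis procedures reported in [1] or [2].

```python
from math import ceil, sqrt
from statistics import NormalDist
from typing import Optional, Sequence, Tuple


def sample_size_proportion(p: float = 0.5, margin: float = 0.05,
                           confidence: float = 0.95,
                           population: Optional[int] = None) -> int:
    """n = z^2 p(1-p) / e^2, with an optional finite-population correction."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    n = z ** 2 * p * (1 - p) / margin ** 2
    if population is not None:
        n = n / (1 + (n - 1) / population)
    return ceil(n)


def mann_whitney_u(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """U statistic for x versus y and a two-sided normal-approximation p-value.

    Ties count as 0.5 in U, but the variance omits the tie correction.
    """
    u = sum(1.0 if a > b else 0.5 if a == b else 0.0 for a in x for b in y)
    n1, n2 = len(x), len(y)
    mu = n1 * n2 / 2
    sigma = sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (u - mu) / sigma
    return u, 2 * (1 - NormalDist().cdf(abs(z)))
```

With the worst-case p = 0.5 and a ±5% margin at 95% confidence, the formula gives 385 participants, in line with the "300+" figure above. For the single-predictor case of the regression step, Python 3.10+ offers `statistics.linear_regression`; multivariable models need a package such as statsmodels.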

Table 1: EDC Awareness Levels Across Populations

| Study Population | Sample Size | Key Finding | Awareness Level | Reference |
|---|---|---|---|---|
| Pregnant Women & New Mothers | 380 | 59.2% were unfamiliar with EDCs | Low | [2] |
| Turkish Medical Students | 381 | Median general EDC awareness score | Moderate (2.87/5) | [1] |
| Turkish Physicians | 236 | Median general EDC awareness score | High (2.12/5) | [1] |
| Turkish Endocrinologists | Subset of Physicians | Total EDC awareness score was significantly higher than other specialties. | Very High (3.96/5) | [1] |
| General Public (Focus Groups) | 34 | Awareness of EDCs was low. | Low | [17] |

Table 2: Significant Health Outcomes Associated with EDC Exposure

This table summarizes data from an umbrella review of 67 meta-analyses encompassing 109 health outcomes [34].

| Health Outcome Category | Specific Examples of Significant Associations |
|---|---|
| Cancer | 22 cancer outcomes, including testicular, prostate, breast, and thyroid cancers. |
| Neonatal/Infant/Child | 21 outcomes, including birth weight, neurodevelopmental issues, and childhood obesity. |
| Metabolic Disorders | 18 outcomes, including diabetes, obesity, and metabolic syndrome. |
| Cardiovascular Disease | 17 outcomes related to heart and circulatory system health. |
| Reproductive & Pregnancy | 11 pregnancy-related outcomes and infertility. |
| Other Outcomes | 20 outcomes including renal, neuropsychiatric, respiratory, and hematological effects. |

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Materials for EDC Exposure and Intervention Research

| Item | Function in Research | Example Context |
|---|---|---|
| Mail-in Urine Test Kits | Enables biomonitoring of non-persistent EDCs (e.g., phthalates, phenols) from study participants in their own homes. | Used in the REED study to measure baseline exposure and verify reduction post-intervention [32]. |
| EDC Health Literacy (EHL) Surveys | Validated questionnaires to assess participants' knowledge of EDC sources, health effects, and avoidance strategies. | A critical tool for measuring the educational impact of an intervention [32]. |
| Readiness to Change (RtC) Surveys | Assesses a participant's motivational stage for adopting behaviors to reduce EDC exposure. | Helps tailor intervention strategies and measure behavioral willingness [32]. |
| Endocrine Disruptor Awareness Scale (EDCA) | A validated 24-item scale specifically designed to measure EDC awareness across three subcategories. | Used to assess awareness levels among medical students and physicians [1]. |
| Clinical Biomarker Test Kits (e.g., Siphox) | At-home blood test kits to measure clinical biomarkers (e.g., for metabolic health, inflammation). | Used in the REED study to link EDC reduction to potential improvements in health outcomes [32]. |