A Researcher's Guide to Developing Robust Item Pools for Reproductive Health Behavior Assessment

Abstract

This article provides a comprehensive methodological guide for researchers and biomedical professionals on developing scientifically rigorous item pools for assessing reproductive health behaviors. Covering the full spectrum from foundational domain identification to psychometric validation, the guide synthesizes best practices in scale development with specific applications in reproductive health contexts. It addresses critical challenges including cultural adaptation, ethical considerations with vulnerable populations, and methodological optimization for diverse settings. The content is designed to equip scientists with practical frameworks for creating valid, reliable measurement tools that can accurately capture complex reproductive health constructs and behaviors in both clinical and research environments.

Laying the Groundwork: Defining Constructs and Generating Initial Items for Reproductive Health Assessment

Within the rigorous process of item pool development for reproductive health behaviors research, the initial and most critical phase is the precise establishment of conceptual boundaries. This foundational step ensures that measurement tools are built upon a clearly defined theoretical landscape, which is essential for the validity and reliability of subsequent research findings. In the complex field of reproductive health, where constructs like empowerment, social norms, and sexual health are often multifaceted and overlapping, a lack of conceptual clarity can lead to inconsistent operationalization, confounding results, and an inability to compare findings across studies. This application note provides detailed protocols for delineating these conceptual domains, supported by structured data presentation and visual workflows, to guide researchers, scientists, and drug development professionals in constructing robust item pools.

Conceptual Foundations and Review Protocols

Theoretical Underpinnings and Concept Mapping

A literature review is the primary methodology for clarifying conceptual boundaries and identifying distinct domains. This process must move beyond a simple gathering of definitions to a systematic analysis of how core constructs are applied within the existing research landscape.

Experimental Protocol for a Systematic Concept Analysis

- Objective: To identify and define the key domains of a target construct (e.g., "patient empowerment") and differentiate it from neighbouring concepts.

- Methodology: Implement a keyword-based literature search strategy following a structured process [1].

- Search Strategy: Execute a systematic search in major academic databases (e.g., PubMed). Use a set of predefined keywords related to the core construct and its neighbours (e.g., "patient empowerment," "patient engagement," "patient activation," "patient involvement"). The search should be limited to a relevant timespan (e.g., 1990–2013) to capture the evolution of terminology [1].

- Screening and Selection: Screen articles by title and abstract, then review the full texts for eligibility. The final selection should include articles that provide or imply a definition for the concepts under investigation. In one such review, this process yielded 286 articles [1].

- Data Extraction and Analysis:

- Code each article for the presence of an explicit definition of the concept.

- Analyze the definitions to identify their core components and the nature of the construct (e.g., whether it is described as a process, an emergent state, or a behaviour) [1].

- Map the relationships, similarities, and differences between the concepts based on the analysis of their definitions.
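The coding and tallying steps above can be sketched in a few lines of Python. This is a minimal illustration that assumes a hypothetical record format (one row per article-concept pair, with a flag for an explicit definition and, where present, the construct nature it describes). The example records are invented and do not reproduce the data in [1].

```python
from collections import defaultdict

# Hypothetical coded records: one per (article, concept) pair.
coded_records = [
    {"article": "A1", "concept": "patient empowerment", "explicit": True, "nature": "process"},
    {"article": "A2", "concept": "patient empowerment", "explicit": True, "nature": "emergent state"},
    {"article": "A3", "concept": "patient empowerment", "explicit": False, "nature": None},
    {"article": "A4", "concept": "patient empowerment", "explicit": False, "nature": None},
    {"article": "A1", "concept": "patient involvement", "explicit": True, "nature": "behaviour"},
    {"article": "A5", "concept": "patient involvement", "explicit": False, "nature": None},
]


def definition_summary(records):
    """Per concept: number of articles, % with an explicit definition,
    and the construct natures found among the explicit definitions."""
    tally = defaultdict(lambda: {"n": 0, "explicit": 0, "natures": set()})
    for rec in records:
        entry = tally[rec["concept"]]
        entry["n"] += 1
        if rec["explicit"]:
            entry["explicit"] += 1
            if rec["nature"]:
                entry["natures"].add(rec["nature"])
    return {
        concept: (e["n"], round(100 * e["explicit"] / e["n"], 1), sorted(e["natures"]))
        for concept, e in tally.items()
    }


for concept, (n, pct, natures) in definition_summary(coded_records).items():
    print(f"{concept}: {n} articles, {pct}% explicit, natures={natures}")
```

Comparing the sets of natures across concepts is one simple way to see where neighbouring concepts overlap before drawing the concept map.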

Table 1: Summary of Concept Definition Clarity from a Systematic Review

| Concept Analyzed | Percentage of Articles with an Explicit Definition | Primary Construct Nature Identified in Review |
|---|---|---|
| Patient Empowerment | 42% | Process, Emergent State, or Participative Behaviour [1] |
| Patient Enablement | 30% | Not Specified in Results |
| Patient Engagement | 29% | Not Specified in Results |
| Patient Involvement | 17% | Behaviour [1] |

Result Interpretation: The findings from such a review highlight the significant ambiguity in the field. For instance, one analysis identified three distinct interpretations of "patient empowerment," conceptualized as a process, an emergent state, or a participative behaviour [1]. The resulting concept map, framed across dimensions such as the nature and focus of the concept, provides a visual tool to demarcate boundaries and relationships between seemingly similar terms, thereby informing the structure of the item pool [1].

Qualitative Exploration of Domain Relevance

For novel or under-researched populations, qualitative methods are indispensable for ensuring domains are relevant and grounded in lived experience.

Experimental Protocol for Qualitative Domain Identification

- Objective: To explore the components of a reproductive health construct from the perspective of a specific population (e.g., HIV-positive women, adolescents).

- Methodology: Employ qualitative approaches such as in-depth interviews and focus group discussions [2] [3] [4].

- Participant Recruitment: Use purposive sampling to recruit participants from the target population until data saturation is achieved. Sample sizes vary but may include ~25 participants for individual interviews [3] or smaller focus groups of 7-9 participants [4].

- Data Collection: Conduct semi-structured interviews or facilitated focus groups. Sessions are often audio-recorded and transcribed verbatim. In studies on sensitive topics, ensure a safe and supportive environment and provide opportunities for participants to be accompanied by a staff member or relative [4].

- Data Analysis: Analyze transcripts using conventional content analysis methods. This involves coding the data, grouping codes into categories, and abstracting these categories into broader themes or domains [3]. Software like MAXQDA can be used to manage the data [3].
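The code-to-category-to-domain abstraction can be represented as two lookup tables, and saturation can be tracked by counting how many codes appear for the first time in each successive transcript. This is a sketch under stated assumptions: the codebook is hypothetical (the domain labels are borrowed from Table 2 purely for illustration), and treating "no new codes in later transcripts" as saturation is one common heuristic, not the criterion reported in [3] or [4].

```python
from collections import Counter

# Hypothetical codebook: code -> category -> domain (theme).
CODE_TO_CATEGORY = {
    "fear of transmission": "worries about infecting others",
    "job loss": "economic insecurity",
    "condom negotiation": "safer-sex practices",
}
CATEGORY_TO_DOMAIN = {
    "worries about infecting others": "Disease-related concerns",
    "economic insecurity": "Life instability",
    "safer-sex practices": "Responsible sexual behaviors",
}


def domain_frequencies(coded_transcripts):
    """Count how many transcripts touch each domain."""
    freq = Counter()
    for codes in coded_transcripts:
        freq.update({CATEGORY_TO_DOMAIN[CODE_TO_CATEGORY[c]] for c in codes})
    return freq


def new_codes_per_transcript(coded_transcripts):
    """Number of codes appearing for the first time in each successive transcript."""
    seen, counts = set(), []
    for codes in coded_transcripts:
        fresh = set(codes) - seen
        counts.append(len(fresh))
        seen |= fresh
    return counts


# Codes applied to three hypothetical transcripts, in order of collection.
transcripts = [
    ["fear of transmission", "job loss"],
    ["fear of transmission", "condom negotiation"],
    ["job loss", "condom negotiation"],
]
print(new_codes_per_transcript(transcripts))  # [2, 1, 0]
print(domain_frequencies(transcripts))
```

In practice the codebook lives in the qualitative analysis software; exporting it in this form makes the domain structure easy to carry into item generation.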

Table 2: Example Domains Identified from Qualitative Research with Specific Populations

| Target Population | Qualitative Method | Identified Domains (Examples) |
|---|---|---|
| HIV-Positive Women [3] | Semi-structured interviews & focus group | Disease-related concerns, Life instability, Coping with illness, Disclosure status, Responsible sexual behaviors, Need for self-management support |
| People with Mild to Borderline Intellectual Disabilities [4] | Concept Mapping (Brainstorming & Sorting) | Romantic relationships, Sexual socialization, Sexual health, Sexual selfhood |
| Adolescents and Young Adults [2] | In-depth interviews | Bodily esteem, Voice, Self-efficacy, Future orientation, Social support, Safety |

Quantitative Scale Development and Validation Protocol

Once domains are conceptually defined, the next step is to operationalize them into a measurable instrument. This involves generating items, assessing content validity, and evaluating psychometric properties.

Experimental Protocol for Item Pool Development and Validation

- Objective: To develop and validate a scale that measures the defined construct (e.g., sexual and reproductive empowerment).

- Methodology: A mixed-methods approach is recommended, often following published scale development guidelines [2].

- Item Generation: Create a comprehensive item pool. This is done deductively (from literature and expert input) and inductively (from prior qualitative research). An initial pool may contain over 100 items [2].

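- Content Validity Assessment: Convene a panel of experts (including adolescent and young adult (AYA) health experts and methodologists) to evaluate items for relevance, clarity, and comprehensiveness. Calculate quantitative indices, such as the content validity ratio (CVR) and the content validity index (CVI), from the expert ratings.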

- Cognitive Interviews: Test the items with ~30 individuals from the target population to ensure they are interpreted as intended and are worded at an understandable level. This step leads to the removal or revision of unclear items [2].

- Psychometric Evaluation: Administer the refined item set to a large sample for quantitative validation.

- Construct Validity: Use Exploratory Factor Analysis (EFA) to identify the underlying factor structure. Assess sampling adequacy with the Kaiser-Meyer-Olkin (KMO) index (should be >0.6) and Bartlett's test of sphericity (should be significant) [3].

- Reliability: Assess internal consistency using Cronbach's alpha (α > 0.70 is desired). Evaluate stability via test-retest reliability over a 2-week interval, with an intraclass correlation coefficient (ICC) of >0.7 indicating good stability [3].
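The content validity indices listed in Table 5 (CVR and CVI) reduce to simple proportions of expert ratings. The sketch below uses Lawshe's formula for the CVR and the common item-level and scale-level (averaging) definitions of the CVI, assuming a 4-point relevance scale on which ratings of 3 or 4 count as "relevant". The function names and ratings are hypothetical, and acceptance cut-offs depend on panel size, so none are hard-coded.

```python
from statistics import mean


def content_validity_ratio(n_essential: int, n_experts: int) -> float:
    """Lawshe's CVR = (n_e - N/2) / (N/2); ranges from -1 to +1."""
    half = n_experts / 2
    return (n_essential - half) / half


def item_cvi(ratings: list[int]) -> float:
    """I-CVI: share of experts rating the item 3 or 4 on a 4-point relevance scale."""
    return sum(r >= 3 for r in ratings) / len(ratings)


def scale_cvi_ave(ratings_by_item: dict[str, list[int]]) -> float:
    """S-CVI/Ave: the mean of the item-level CVIs."""
    return mean(item_cvi(r) for r in ratings_by_item.values())


# CVR example: 8 of 10 hypothetical experts rate an item "essential".
print(content_validity_ratio(n_essential=8, n_experts=10))  # 0.6

# CVI example: 5 hypothetical experts rate two draft items for relevance (1-4).
ratings = {
    "item_01": [4, 4, 3, 3, 2],
    "item_02": [4, 4, 4, 4, 4],
}
print({k: item_cvi(v) for k, v in ratings.items()})  # {'item_01': 0.8, 'item_02': 1.0}
print(scale_cvi_ave(ratings))
```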

Table 3: Psychometric Data from Scale Validation Studies

| Scale | Initial Items | Final Items (Subscales) | Cronbach's Alpha (α) | Test-Retest Reliability (ICC) |
|---|---|---|---|---|
| Sexual & Reproductive Empowerment Scale for AYAs [2] | 95 | 23 (7 subscales) | Reported (Implied acceptable) | Not Specified |
| Reproductive Health Scale for HIV-Positive Women [3] | 48 | 36 (6 factors) | 0.713 | 0.952 |
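The statistics reported in Table 3 (Cronbach's alpha, test-retest ICC), together with the KMO index and Bartlett's test called for before EFA, can be computed directly with NumPy and SciPy. This is a minimal sketch on simulated data, not a replacement for a validated psychometrics package. The cited studies do not state which ICC form they used; the two-way random-effects, absolute-agreement, single-measure form ICC(2,1) is an assumption here.

```python
import numpy as np
from scipy.stats import chi2


def cronbach_alpha(scores: np.ndarray) -> float:
    """Internal consistency for a respondents x items score matrix."""
    k = scores.shape[1]
    sum_item_var = scores.var(axis=0, ddof=1).sum()
    total_var = scores.sum(axis=1).var(ddof=1)
    return k / (k - 1) * (1 - sum_item_var / total_var)


def icc_2_1(ratings: np.ndarray) -> float:
    """ICC(2,1): two-way random effects, absolute agreement, single measure.
    `ratings` is subjects x occasions (e.g., test and retest scores)."""
    n, k = ratings.shape
    grand = ratings.mean()
    ss_rows = k * ((ratings.mean(axis=1) - grand) ** 2).sum()
    ss_cols = n * ((ratings.mean(axis=0) - grand) ** 2).sum()
    ss_err = ((ratings - grand) ** 2).sum() - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_err = ss_err / ((n - 1) * (k - 1))
    return (ms_rows - ms_err) / (ms_rows + (k - 1) * ms_err + k * (ms_cols - ms_err) / n)


def kmo(scores: np.ndarray) -> float:
    """Overall Kaiser-Meyer-Olkin measure of sampling adequacy."""
    r = np.corrcoef(scores, rowvar=False)
    inv = np.linalg.inv(r)
    # Partial correlations derived from the inverse correlation matrix.
    partial = -inv / np.sqrt(np.outer(np.diag(inv), np.diag(inv)))
    off_diag = ~np.eye(r.shape[0], dtype=bool)
    r2 = (r[off_diag] ** 2).sum()
    p2 = (partial[off_diag] ** 2).sum()
    return r2 / (r2 + p2)


def bartlett_sphericity(scores: np.ndarray) -> tuple[float, float]:
    """Bartlett's test that the correlation matrix is an identity matrix."""
    n, p = scores.shape
    r = np.corrcoef(scores, rowvar=False)
    stat = -(n - 1 - (2 * p + 5) / 6) * np.log(np.linalg.det(r))
    df = p * (p - 1) / 2
    return float(stat), float(chi2.sf(stat, df))


# Simulated data (not from any cited study): 200 respondents, 5 items
# driven by one latent trait, plus a noisy retest of the total score.
rng = np.random.default_rng(0)
latent = rng.normal(size=(200, 1))
items = latent + 0.5 * rng.normal(size=(200, 5))
test_total = items.sum(axis=1)
retest_total = test_total + rng.normal(size=200)

alpha = cronbach_alpha(items)
icc = icc_2_1(np.column_stack([test_total, retest_total]))
kmo_value = kmo(items)
bartlett_stat, bartlett_p = bartlett_sphericity(items)
print(f"alpha={alpha:.3f} ICC={icc:.3f} KMO={kmo_value:.3f} Bartlett p={bartlett_p:.3g}")
```

The resulting values can then be checked against the thresholds in the protocol above (α > 0.70, ICC > 0.7, KMO > 0.6, significant Bartlett's test).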

Data Presentation and Visualization Standards

Effective presentation of quantitative data is crucial for appraisal and communication. Tables should be self-explanatory.

Table 4: Standards for Presenting Frequency Distributions of Categorical Variables [5]

| Variable Category | Recommended Table Contents | Recommended Graph Types |
|---|---|---|
| Categorical (e.g., Acne scars: Yes/No) | Absolute frequency (n), Relative frequency (%) [5] | Bar chart, Pie chart [5] |
| Discrete Numerical (e.g., Years of education) | Absolute frequency, Relative frequency (%), Cumulative relative frequency (%) [5] | Histogram, Frequency polygon [5] |
| Continuous Numerical (e.g., Height) | Requires categorization into intervals of equal size before frequencies can be calculated and presented [5] | Histogram |
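The table conventions in Table 4 can be sketched in plain Python: a frequency table with absolute, relative, and cumulative relative frequencies, plus equal-width binning for continuous variables. The function names and data are hypothetical; note that the cumulative column is only meaningful for ordered variables, so it would be dropped for unordered categories such as Yes/No.

```python
from collections import Counter


def frequency_table(values):
    """Rows of (value, n, relative %, cumulative %), sorted by value."""
    counts = Counter(values)
    total = len(values)
    rows, cumulative = [], 0
    for value in sorted(counts):
        n = counts[value]
        cumulative += n
        rows.append((value, n, round(100 * n / total, 1), round(100 * cumulative / total, 1)))
    return rows


def equal_width_bins(values, n_bins):
    """Map each continuous value to an equal-width interval (start, end).

    The maximum value is placed in the last interval."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [(lo, hi)] * len(values)
    width = (hi - lo) / n_bins
    bins = []
    for v in values:
        idx = min(int((v - lo) / width), n_bins - 1)
        start = lo + idx * width
        bins.append((round(start, 1), round(start + width, 1)))
    return bins


years_of_education = [12, 12, 16, 9, 12, 16]  # discrete numerical (hypothetical)
heights_cm = [150, 155, 160, 165, 170]         # continuous numerical (hypothetical)
for row in frequency_table(years_of_education):
    print(row)
for row in frequency_table(equal_width_bins(heights_cm, n_bins=2)):
    print(row)
```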

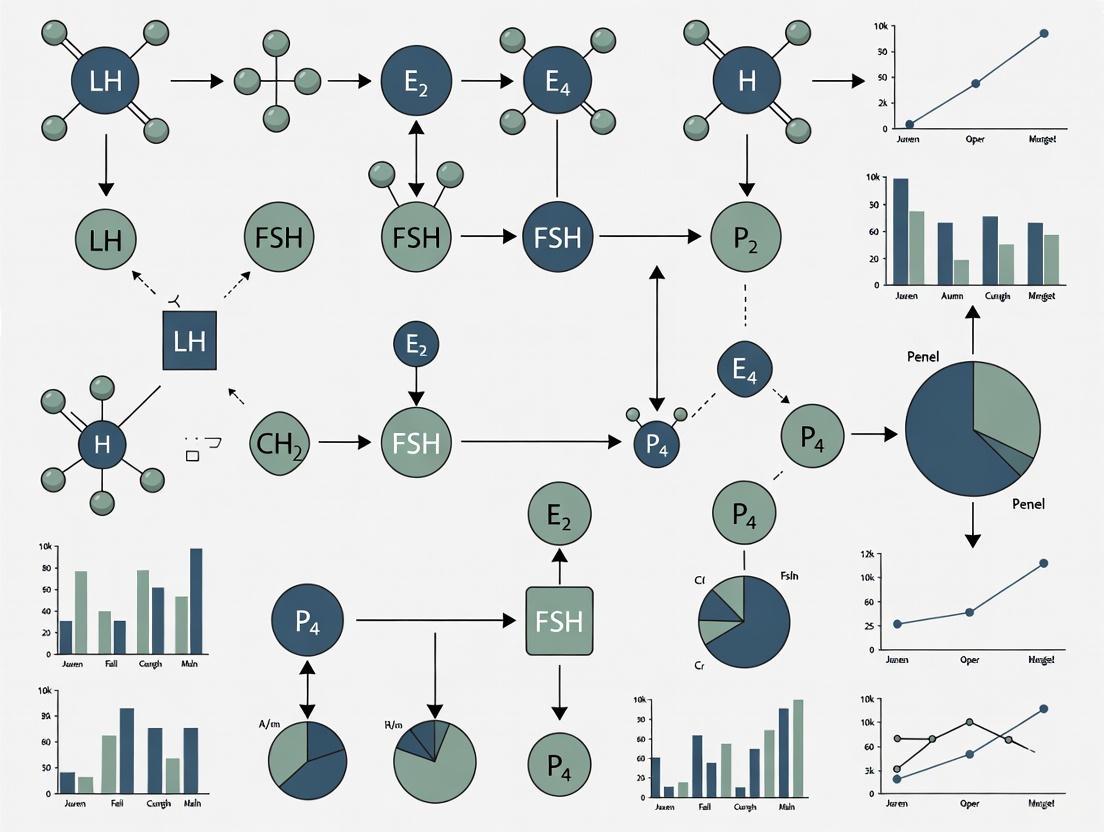

Visual Workflows for Domain Identification and Instrument Development

The following diagrams outline the core protocols described in this document, providing a clear visual workflow for researchers.

Diagram 1: Conceptual Domain Identification Workflow

Diagram 2: Item Pool Development & Validation Protocol

The Scientist's Toolkit: Research Reagent Solutions

Table 5: Essential Reagents for Conceptual and Psychometric Research

| Research Reagent / Tool | Function / Application |
|---|---|
| Academic Databases (e.g., PubMed) | Primary source for executing systematic literature reviews to map the conceptual landscape [6] [1]. |
| Qualitative Data Analysis Software (e.g., MAXQDA) | Facilitates the organization, coding, and thematic analysis of interview and focus group transcript data [3]. |
| Content Validity Indices (CVR & CVI) | Quantitative metrics used to objectively assess the essentiality and clarity of items based on expert ratings, refining the initial item pool [3]. |
| Statistical Software (e.g., R, SPSS) | Performs critical psychometric analyses, including Exploratory Factor Analysis (EFA) and reliability calculations (Cronbach's alpha, ICC) [2] [4]. |
| Concept Mapping Software (e.g., GroupWisdom) | Supports the statistical analysis and visual representation of conceptual structures derived from brainstorming and sorting tasks [4]. |

Integrating Deductive and Inductive Approaches for Comprehensive Item Generation

The development of a robust item pool is a foundational step in creating valid and reliable measurement tools for reproductive health behavior research. The integration of deductive and inductive approaches ensures that scales are both theoretically grounded and contextually relevant to the target population. This methodology is particularly crucial in reproductive health research, where complex, culturally sensitive constructs such as contraceptive self-efficacy, attitudes toward menstrual regulation, and health behaviors must be measured with precision and cultural appropriateness [7] [8].

The deductive approach (theory-driven, "top-down") leverages existing literature, theoretical frameworks, and prior validated instruments to generate items, while the inductive approach (data-driven, "bottom-up") utilizes qualitative data from the target population to identify emergent themes and concepts [7]. When combined, these methods facilitate the creation of comprehensive item banks that capture the full spectrum of a construct while ensuring cultural and contextual relevance, ultimately strengthening the content validity of the resulting instrument [9] [7].

Theoretical Foundation

The conceptual basis for integrating deductive and inductive methods rests on their complementary strengths in capturing both established theoretical constructs and lived experiences. This integration is formalized in a structured process that ensures comprehensive domain coverage.

Table 1: Core Characteristics of Deductive and Inductive Approaches

| Aspect | Deductive Approach | Inductive Approach |
|---|---|---|
| Direction | Top-down | Bottom-up |
| Theoretical Basis | Driven by existing theories, frameworks, and literature | Driven by empirical data from the target population |
| Primary Methods | Systematic literature review, analysis of existing scales [10] [7] | Focus groups, in-depth interviews, observational studies [11] [7] |
| Key Strength | Ensures theoretical consistency and builds on established knowledge [7] | Identifies emergent themes and ensures cultural relevance [11] [12] |
| Common Pitfalls | May miss culturally specific nuances | May lack theoretical grounding |

The integration of these approaches follows the logical sequence set out in the methodological protocol below.

Methodological Protocol

Phase 1: Domain Identification and Conceptual Definition

Before item generation, precisely define the domain and its boundaries through a systematic process:

- Specify the purpose of the construct you intend to measure and confirm that no existing instruments adequately serve the same purpose [7]. If similar tools exist, justify why a new instrument is necessary and how it will differ.

- Develop a preliminary conceptual definition of the domain. For example, in reproductive health, "contraceptive self-efficacy" was defined as beliefs in one's capabilities to perform actions needed to use contraception effectively [8].

- Identify and specify the dimensions of the domain, either a priori (if guided by established theory) or a posteriori (if emerging from data) [7]. In research on endocrine-disrupting chemicals (EDCs), dimensions were defined a priori based on exposure routes: food, respiration, and skin absorption [10].

Phase 2: Integrated Item Generation

Execute deductive and inductive processes concurrently to build a comprehensive item pool.

Deductive Method: Logical Partitioning

The deductive approach, termed "logical partitioning," systematically derives items from existing knowledge [9] [7].

- Conduct a comprehensive literature review of peer-reviewed publications, existing scales, and conceptual frameworks related to your construct. The development of an oral, mental, and sexual reproductive health tool for Nigerian adolescents, for example, began with a structured search in PubMed and ScienceDirect to identify relevant domains and validated instruments [9].

- Extract and adapt items from validated instruments. When developing a self-injection self-efficacy scale, researchers adapted items from the General Self-efficacy Scale and the Condom Use Self-Efficacy Scale, modifying them to be specific to self-injection [8].

- Leverage established theoretical frameworks. The Theoretical Framework of Acceptability (TFA), which includes seven constructs (e.g., affective attitude, burden, self-efficacy), has been used as a deductive basis for generating items to assess intervention acceptability [13].

Inductive Method

The inductive approach grounds the item pool in the lived experiences and language of the target population [7].

- Implement qualitative data collection techniques such as focus group discussions (FGDs) and in-depth interviews (IDIs). A study on unmarried youth in urban Indian slums used these methods to explore SRH needs, revealing gendered differences in information access and structural barriers to care [11].

- Employ thematic analysis of qualitative data using principles of grounded theory and narrative inquiry to identify emergent themes and concepts [11]. This is crucial for understanding local terminology; for instance, research in Nigeria and Côte d'Ivoire found that women perceive "menstrual regulation" and "pregnancy removal" as distinct concepts, which has direct implications for how survey items should be phrased [12].

Phase 3: Item Pool Compilation and Refinement

Synthesize outputs from both approaches into a preliminary item pool.

- Combine and consolidate items from deductive and inductive sources, removing duplicates.

- Ensure comprehensive coverage of all identified domains and subdomains. The initial item pool should be significantly larger than the desired final scale—recommendations suggest it should be at least twice as long, or even up to five times as large [7]. The development of a reproductive health behavior questionnaire for endocrine-disrupting chemicals began with 52 initial items [10].

- Refine item wording for clarity, simplicity, and cultural appropriateness. Items should be worded unambiguously and follow the conventions of normal conversation to minimize respondent burden and "satisficing" (providing merely satisfactory answers) [7]. Fowler's five essential characteristics for item quality should be considered: consistent understanding, consistent administration, clear communication of adequate answers, respondent access to needed information, and respondent willingness to answer [7].
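The compilation steps above (merge, de-duplicate, check domain coverage, compare pool size with the intended final length) can be sketched as follows. The items, the `Item` record, and the helper names are hypothetical; the domain labels simply echo the exposure routes used in [10]. Exact matching after normalization only catches near-verbatim duplicates, so semantically overlapping items still need expert judgment. In the example, a ratio of 1.5 would fall short of the "at least twice as long" guidance [7].

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    text: str
    domain: str
    source: str  # "deductive" or "inductive"


def normalize(text: str) -> str:
    """Lower-case and collapse punctuation/whitespace so trivial variants match."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def compile_pool(deductive, inductive):
    """Merge both sources, keeping the first occurrence of each normalized item text."""
    seen, pool = set(), []
    for item in [*deductive, *inductive]:
        key = normalize(item.text)
        if key not in seen:
            seen.add(key)
            pool.append(item)
    return pool


def check_pool(pool, domains, target_final_length):
    """Return domains with no items and the pool-to-final-scale size ratio."""
    missing = sorted(set(domains) - {item.domain for item in pool})
    return missing, len(pool) / target_final_length


deductive = [
    Item("I avoid heating food in plastic containers.", "food", "deductive"),
    Item("I ventilate my home when using cleaning sprays.", "respiration", "deductive"),
]
inductive = [
    Item("I avoid heating food in plastic containers", "food", "inductive"),
    Item("I check cosmetic labels for parabens.", "skin", "inductive"),
]
pool = compile_pool(deductive, inductive)
missing, ratio = check_pool(pool, ["food", "respiration", "skin"], target_final_length=2)
print(len(pool), missing, ratio)  # 3 [] 1.5
```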

Application in Reproductive Health Research

The integrated approach has been successfully applied across various reproductive health research contexts, demonstrating its versatility and robustness.

Table 2: Applications of Integrated Item Generation in Reproductive Health

| Research Context | Deductive Components | Inductive Components | Key Outcomes |
|---|---|---|---|
| Self-injection Self-efficacy Scale (Uganda) [8] | Items adapted from General Self-efficacy Scale and Condom Use Self-Efficacy Scale | Not explicitly detailed in available excerpt | 3-item unidimensional scale validated to measure confidence in self-injection capabilities |
| SRH Needs of Unmarried Youth (India) [11] | Review of national programs and strategies | FGDs and IDIs with adolescents in slums to understand lived experiences | Identified limited SRH awareness, gendered information access, and structural barriers |
| Reproductive Health Behaviors (South Korea) [10] | Literature review on EDC exposure routes and health impacts | Not explicitly detailed in available excerpt | 19-item tool with 4 factors measuring health behaviors through food, breathing, and skin |
| Theoretical Framework of Acceptability Questionnaire [13] | TFA constructs (affective attitude, burden, etc.); literature-derived items | Stakeholder feedback on comprehensibility and relevance | Generic 8-item questionnaire for assessing healthcare intervention acceptability |

Research Reagent Solutions

The following table details essential methodological components for implementing the integrated item generation approach in reproductive health research.

Table 3: Essential Methodological Components for Item Generation

| Component | Function | Application Example |
|---|---|---|
| Systematic Literature Review Protocol | Provides comprehensive theoretical foundation and identifies existing measures | Identifying validated tools for mental health assessment in adolescent populations [9] |
| Semi-structured Interview Guides | Facilitates exploratory data collection while ensuring coverage of key domains | Exploring terminology and experiences around menstrual regulation [12] |
| Focus Group Discussion Protocols | Elicits group norms, shared terminology, and collective experiences | Understanding SRH information sources and barriers among unmarried youth [11] |
| Theoretical Framework of Acceptability (TFA) | Provides structured construct definitions for deductive item generation | Developing items for affective attitude, burden, and ethicality of health interventions [13] |
| Content Validity Index (CVI) Assessment | Quantifies expert agreement on item relevance and clarity | Expert panel evaluation of items for EDC exposure behavior questionnaire [10] |
| Digital Data Management Tools | Organizes and synthesizes large item pools from multiple sources | Using Excel databases to manage initial item pools during questionnaire development [13] |

The integration of deductive and inductive approaches provides a rigorous methodology for comprehensive item generation in reproductive health behavior research. This synergistic process helps ensure that the resulting instruments are both theoretically sound and contextually relevant, capturing the complex nuances of reproductive health constructs across diverse populations. The protocol outlined in this document, from domain definition through item refinement, offers researchers a structured roadmap for creating psychometrically robust measures that can advance understanding of critical reproductive health behaviors and improve intervention development.

Conducting Systematic Literature Reviews to Inform Theoretical Frameworks

Systematic literature reviews (SLRs) represent a cornerstone of rigorous scientific inquiry, providing a methodical and reproducible framework for synthesizing existing evidence. Within the specific context of a broader thesis on item pool development for reproductive health behaviors research, conducting a high-quality SLR is an indispensable first step. It ensures that the resulting theoretical framework and measurement items are grounded in a comprehensive understanding of the field, accurately reflecting established constructs, identified gaps, and effective methodological approaches [14]. This document outlines detailed application notes and experimental protocols for executing an SLR to inform such a theoretical framework, with specific considerations for the reproductive health research domain.

Protocol for Systematic Literature Review

Preliminary Planning and Registration

The initial phase involves defining the review's scope and objectives, a process critical for ensuring the research remains focused and manageable.

- Defining Research Questions: Formulate clear, focused questions using structured frameworks like PICO (Population, Intervention, Comparison, Outcome) or SPIDER (Sample, Phenomenon of Interest, Design, Evaluation, Research type). For reproductive health behavior item development, key questions may explore the psychometric properties of existing instruments, conceptual definitions of behaviors, or factors influencing behavioral measurement.

- Protocol Registration: Prior to commencing the review, register the detailed protocol with an international prospective register of systematic reviews, such as PROSPERO. This preempts duplication of effort, enhances transparency, reduces bias, and allows for peer feedback on the proposed methods [15] [16]. The protocol should detail the rationale, objectives, and all methodological strategies outlined below.

Search Strategy and Study Selection

A comprehensive, unbiased search strategy is fundamental to the validity of an SLR.

- Electronic Databases: Searches should be performed across multiple, relevant electronic databases to cover the breadth of literature. Key databases for public health and social science research include:

- Ovid-MEDLINE and PubMed for biomedical literature.

- CINAHL for nursing and allied health literature.

- PsycINFO for psychological and behavioral science literature.

- Cochrane Library for systematic reviews and trials.

- Embase for pharmacological and biomedical research.

- Search Syntax: Develop a structured search syntax using a combination of Medical Subject Headings (MeSH) and free-text keywords related to the core concepts. For example, terms related to "reproductive health," "health behavior," "psychometrics," "validation studies," and "item development" should be combined with Boolean operators (AND, OR) [15]. The search strategy of one relevant protocol is reported in [15].

- Eligibility Criteria: Establish clear, pre-defined criteria for including or excluding studies.

- Participants (P): Define the target population (e.g., adolescents, men, women of reproductive age).

- Intervention/Exposure (I): In the context of measurement, this could be the use of a specific theoretical framework or a particular item development method.

- Comparators (C): May include alternative frameworks or measurement approaches.

- Outcomes (O): Primary outcomes would relate to the quality of the theoretical framework or the psychometric properties of the item pool (e.g., content validity, factor structure, reliability) [15].

- Study Designs (S): Specify eligible designs (e.g., instrument development papers, validation studies, qualitative studies exploring constructs, systematic reviews).

- Study Selection Process: The selection process should be conducted by at least two independent reviewers to minimize error and bias. Use systematic review software like Covidence [15] [16] or Rayyan to manage the process. Discrepancies are resolved through discussion or by a third reviewer. The flow of studies is documented using a PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) flowchart [16].
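The dual-reviewer logic above (agreement, conflicts, third-reviewer resolution) can be sketched independently of any particular platform. Covidence and Rayyan handle this internally; the functions below are hypothetical helpers, not the API of either tool, and the decisions are invented.

```python
def reconcile_screening(reviewer_a, reviewer_b):
    """Compare two independent include/exclude decisions per record ID.

    Returns (agreed_include, agreed_exclude, conflicts)."""
    if reviewer_a.keys() != reviewer_b.keys():
        raise ValueError("Both reviewers must screen the same records")
    include, exclude, conflicts = [], [], []
    for record_id in sorted(reviewer_a):
        a, b = reviewer_a[record_id], reviewer_b[record_id]
        if a and b:
            include.append(record_id)
        elif not a and not b:
            exclude.append(record_id)
        else:
            conflicts.append(record_id)
    return include, exclude, conflicts


def apply_third_reviewer(include, exclude, conflicts, third_reviewer):
    """Resolve each conflict with a third reviewer's decision."""
    final_include = sorted(include + [r for r in conflicts if third_reviewer[r]])
    final_exclude = sorted(exclude + [r for r in conflicts if not third_reviewer[r]])
    return final_include, final_exclude


# Hypothetical title/abstract decisions (True = include).
a = {"R1": True, "R2": False, "R3": True, "R4": False}
b = {"R1": True, "R2": False, "R3": False, "R4": True}
inc, exc, conf = reconcile_screening(a, b)
print(conf)  # ['R3', 'R4']
final_inc, final_exc = apply_third_reviewer(inc, exc, conf, {"R3": True, "R4": False})
# Counts of this kind feed the corresponding boxes of a PRISMA flow diagram.
print(f"screened={len(a)} included={len(final_inc)} excluded={len(final_exc)}")
```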

Table 1: Key Information Sources and Search Strategy

| Component | Description | Example for Reproductive Health Behaviors |
|---|---|---|
| Electronic Databases | Multiple bibliographic databases covering the field. | MEDLINE, PsycINFO, CINAHL, EMBASE [15] |
| Search Syntax | Combination of controlled vocabulary and keywords. | (("reproductive health") AND ("behavior" OR "behaviour") AND ("item development" OR "psychometr*" OR "validity")) |
| Eligibility Criteria | Pre-defined rules for inclusion/exclusion. | Population: Adults 18+; Outcome: Reported on factor analysis or content validity of a reproductive health behavior scale. |
| Selection Process | Independent, dual-reviewer screening. | Title/abstract screening followed by full-text review using Covidence software [15]. |
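The Boolean structure of the search syntax in Table 1 (synonyms joined with OR inside each concept block, blocks joined with AND) can be generated programmatically, which keeps the string consistent when terms are added. This is a sketch with a hypothetical helper; it does not add MeSH or field tags, and truncation and phrase rules differ between databases, so the output is a starting point to adapt for each database.

```python
def build_query(concept_groups):
    """OR the synonyms within each concept group, then AND the groups together."""
    blocks = []
    for terms in concept_groups:
        blocks.append("(" + " OR ".join(f'"{term}"' for term in terms) + ")")
    return " AND ".join(blocks)


query = build_query([
    ["reproductive health"],
    ["behavior", "behaviour"],
    ["item development", "psychometr*", "validity"],
])
print(query)
```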

Data Extraction and Management

Data extraction converts the information from included studies into a structured format for synthesis.

- Data Extraction Form: Develop a standardized, piloted data extraction form using a tool like Microsoft Excel, Covidence, or SRDR (Systematic Review Data Repository) [17]. The form should be tailored to the review's objectives.

- Data Items: Extract the following key information, at a minimum:

- Bibliographic details: Author, year, title, journal, DOI.

- Study characteristics: Country, setting, aims, design, methodology.

- Participant characteristics: Sample size, demographics, inclusion/exclusion criteria.

- Intervention/Framework details: Name of theoretical framework, constructs, definitions.

- Item Pool Details: Number of initial items, source of items (e.g., literature, expert input, qualitative work), modification process.

- Outcomes and Results: Psychometric properties reported (e.g., content validity index, Cronbach's alpha, factor loadings, model fit indices) [17].

- Extraction Process: Data extraction should also be performed by two independent reviewers to ensure accuracy. A pilot test on a small sample of studies (e.g., 2-3) helps refine the form.
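A structured record mirroring the data items above makes dual extraction easy to compare field by field. The sketch below assumes a hypothetical `ExtractionRecord` with a subset of the listed fields; the two extractions describe an invented study and are not drawn from any cited work.

```python
from dataclasses import dataclass, field, fields
from typing import Optional


@dataclass
class ExtractionRecord:
    # Bibliographic details
    author: str
    year: int
    title: str
    doi: str = ""
    # Study and participant characteristics
    country: str = ""
    design: str = ""
    sample_size: Optional[int] = None
    # Framework and item pool details
    framework: str = ""
    initial_items: Optional[int] = None
    final_items: Optional[int] = None
    item_sources: list[str] = field(default_factory=list)
    # Psychometric outcomes
    cronbach_alpha: Optional[float] = None
    content_validity_index: Optional[float] = None


def extraction_discrepancies(a: ExtractionRecord, b: ExtractionRecord) -> list[str]:
    """Names of fields on which two independent extractors disagree."""
    return [f.name for f in fields(ExtractionRecord) if getattr(a, f.name) != getattr(b, f.name)]


# Hypothetical extractions of the same (invented) study by two reviewers.
first = ExtractionRecord("Author A", 2020, "Example scale study",
                         initial_items=40, final_items=30, item_sources=["literature", "interviews"])
second = ExtractionRecord("Author A", 2020, "Example scale study",
                          initial_items=40, final_items=28, item_sources=["literature", "interviews"])
print(extraction_discrepancies(first, second))  # ['final_items']
```

Each flagged field is then resolved by discussion, in the same way as screening disagreements.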

Table 2: Essential Data Extraction Fields for Item Pool Development

| Category | Data Field | Purpose |
|---|---|---|
| Study Identification | Citation, Publication Year, Country | Contextualize the evidence and identify geographic/research trends. |
| Theoretical Foundation | Named Theory/Framework, Constructs Defined | Identify commonly used and validated theoretical frameworks in the field. |
| Methodology | Item Generation Method (e.g., literature, interview), Reduction Method | Inform best practices for the item development process. |
| Item Pool | Initial Item Count, Final Item Count, Item Wording | Understand the scope and nature of questions used to measure behaviors. |
| Psychometric Outcomes | Content Validity Index, Internal Consistency, Factor Loadings | Evaluate the quality and robustness of existing measures. |

Quality Assessment and Risk of Bias

Critically appraising the methodological quality of included studies is essential for interpreting the findings.

- Assessment Tools: Select appropriate, validated tools based on study design.

- Randomized Controlled Trials: Cochrane Risk of Bias tool (RoB 2.0) [15].

- Non-Randomized Studies: Risk Of Bias In Non-randomized Studies of Interventions (ROBINS-I).

- Qualitative Research: Joanna Briggs Institute (JBI) Critical Appraisal Checklist [15].

- Measurement Studies: COnsensus-based Standards for the selection of health Measurement Instruments (COSMIN) risk of bias checklist is particularly relevant for reviews informing item pool development.

- Assessment Process: Like screening and extraction, quality assessment should be conducted by two independent reviewers, with disagreements resolved by consensus.

Data Synthesis

Synthesizing the extracted data allows for the development of a coherent theoretical framework.

- Narrative Synthesis: This is often the primary method for reviews informing theory. It involves summarizing and explaining the findings from the included studies, organized by key themes (e.g., theoretical constructs, methodological approaches). The synthesis should explore relationships between studies and provide a critical discussion of the evidence [16].

- Meta-Analysis: If the included studies are sufficiently homogeneous in their populations, interventions, and outcomes, a meta-analysis can be performed to statistically pool quantitative results (e.g., average reliability coefficients) [15]. This may be less common for pure theoretical development but can be useful for quantifying measurement properties.

- Qualitative Evidence Synthesis: If qualitative studies are included, methodologies like meta-ethnography can be used to integrate findings and generate new interpretive insights into the constructs of interest [16].
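As a simple descriptive illustration of "average reliability coefficients", the sketch below computes a sample-size-weighted mean of Cronbach's alpha across studies. This is a deliberate simplification: formal reliability-generalization meta-analyses typically transform the coefficients and fit random-effects models, which this sketch does not do. The function name and the (alpha, n) values are hypothetical.

```python
def weighted_mean_alpha(studies):
    """Sample-size-weighted mean of Cronbach's alpha.

    studies: iterable of (alpha, n) pairs. This is a simplified descriptive
    summary, not a full reliability-generalization meta-analysis."""
    studies = list(studies)
    total_n = sum(n for _, n in studies)
    if total_n <= 0:
        raise ValueError("Total sample size must be positive")
    return sum(alpha * n for alpha, n in studies) / total_n


# Hypothetical (alpha, n) values from three included validation studies.
reported = [(0.71, 100), (0.85, 300), (0.78, 150)]
pooled = weighted_mean_alpha(reported)
low = min(a for a, _ in reported)
high = max(a for a, _ in reported)
print(f"weighted mean alpha = {pooled:.3f} (range {low}-{high})")
```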

Dissemination

The final step is to report the findings in a clear, transparent, and accessible manner.

- Reporting Guidelines: Adhere to the PRISMA 2020 statement [15] to ensure all relevant aspects of the review are reported.

- Outputs: Results should be disseminated through peer-reviewed publications, conference presentations, and reports to relevant stakeholders. The synthesized theoretical framework should be presented clearly, highlighting the evidence supporting each construct and the relationships between them.

Experimental Workflow and Visualization

The following diagram, generated using Graphviz, illustrates the sequential and iterative workflow of a systematic literature review.

The Scientist's Toolkit: Research Reagent Solutions

In the context of a systematic review for theoretical framework development, "research reagents" refer to the essential methodological tools and resources required to execute the review rigorously. The following table details these key components.

Table 3: Essential Research Reagents for Conducting a Systematic Review

| Tool/Resource | Category | Function/Benefit |
|---|---|---|
| Covidence [15] [16] | Software Platform | A web-based tool that streamlines and manages the entire systematic review process, including title/abstract screening, full-text review, data extraction, and quality assessment. |
| PRISMA Checklist & Flow Diagram [15] | Reporting Guideline | An evidence-based minimum set of items for reporting in systematic reviews, crucial for ensuring transparency and completeness. The flow diagram visualizes the study selection process. |
| Cochrane Handbook [15] | Methodological Guide | The definitive guide to the process of preparing and maintaining systematic reviews, providing comprehensive methodological standards. |
| PROSPERO Registry [15] [16] | Protocol Registry | An international database for prospectively registering systematic review protocols, which helps avoid duplication and reduce reporting bias. |
| JBI Critical Appraisal Tools [15] | Quality Assessment | A suite of checklists for critically appraising different types of study designs (e.g., RCTs, qualitative, quasi-experimental) to assess methodological quality and risk of bias. |
| Microsoft Excel / SRDR [17] | Data Extraction Tool | A flexible and widely accessible platform for creating customized data extraction forms and managing synthesized data from included studies. |

Qualitative research methods provide indispensable tools for investigating complex human behaviors, perceptions, and experiences, particularly in sensitive domains such as reproductive health. In-depth interviews and focus group discussions enable researchers to explore the underlying reasons, motivations, and contextual factors that shape reproductive health behaviors, insights that quantitative methods alone often fail to capture [18] [19]. Within the specific context of developing an item pool for reproductive health behaviors research, these methods are particularly valuable for ensuring that assessment instruments are grounded in the lived experiences and conceptualizations of the target population [20].

The fundamental strength of qualitative inquiry lies in its ability to answer "how" and "why" questions about complex phenomena [18] [19]. For reproductive health research, this means exploring how individuals conceptualize reproductive health, what behaviors they consider relevant, and why they engage in specific health practices. This approach is especially crucial for male reproductive health, which has been historically neglected in research and programmatic efforts [20]. As reproductive health encompasses "a state of complete physical, mental, and social well-being and not merely the absence of disease or infirmity in all matters pertaining to the reproductive system" [20], qualitative methods become essential for capturing its multidimensional nature.

Conceptual Foundations of Qualitative Inquiry

Philosophical Underpinnings

Qualitative research operates from philosophical perspectives that differ significantly from quantitative approaches. While quantitative research typically assumes a single objective reality, qualitative research acknowledges multiple dynamic realities constructed through human experience [18]. This epistemological position is particularly relevant for reproductive health behavior research, where cultural, social, and individual factors create diverse perspectives and experiences that cannot be reduced to standardized measures alone.

The pragmatism paradigm often underpins mixed-methods approaches, where qualitative methods are used to explore phenomena before developing quantitative instruments [20]. This sequential exploratory design is especially valuable for item pool development, as it ensures that assessment tools are derived from and responsive to the authentic experiences of the target population rather than solely relying on pre-existing theoretical frameworks.

Methodological Approaches

Several methodological approaches can guide the use of in-depth interviews and focus groups in reproductive health research:

- Phenomenological research focuses on understanding the essence or common meaning of lived experiences [18] [21]. This approach would be valuable for exploring universal aspects of reproductive health experiences across a target population.

- Grounded theory aims to develop theories that are "grounded" in the data itself [18]. This methodology is particularly useful when existing theoretical frameworks regarding reproductive health behaviors are inadequate or non-existent.

- Consensual qualitative research (CQR) emphasizes reaching consensus within a research team throughout the analysis process [21]. This approach enhances objectivity and rigor, making it particularly valuable for controversial or sensitive topics in reproductive health.

Table 1: Key Qualitative Research Approaches for Reproductive Health Studies

| Methodological Approach | Primary Focus | Application in Reproductive Health Research |
|---|---|---|
| Phenomenological Research | Essence of lived experiences | Exploring universal experiences of reproductive health transitions |
| Grounded Theory | Theory development from data | Building theoretical models of health behavior decision-making |
| Consensual Qualitative Research | Team consensus on interpretations | Enhancing objectivity in sensitive topic areas |
| Case Study | In-depth analysis of bounded system | Examining unique reproductive health programs or interventions |
| Narrative Research | Storytelling and personal accounts | Understanding individual reproductive health journeys |

Methodological Protocols: In-Depth Interviews

Protocol Development and Interview Guide Design

Developing a comprehensive interview protocol is fundamental to obtaining rich, relevant data for item pool development. The protocol should balance structure with flexibility, allowing for exploration of unanticipated themes while ensuring coverage of core research topics [22].

The PCO framework (Population, Context, Outcome) provides a useful structure for formulating qualitative research questions [18]. For example: "What are the experiences (Outcome) of men aged 25-40 (Population) regarding reproductive health services in urban primary care settings (Context)?" This formulation ensures questions are simultaneously focused and exploratory.
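To keep question wording consistent across a study team, the PCO elements can be stored as separate fields and assembled into a question. This is a minimal sketch: the class, the template wording, and the extra `topic` field (which is not part of PCO itself) are hypothetical, chosen so the output reproduces the example question above.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PCOQuestion:
    """Population, Context and Outcome elements of a qualitative question.

    `topic` is an added, hypothetical field naming the subject of inquiry.
    """
    population: str
    context: str
    outcome: str
    topic: str

    def render(self) -> str:
        # Template mirrors the worked example in the text; adjust per study.
        return (f"What are the {self.outcome} of {self.population} "
                f"regarding {self.topic} in {self.context}?")

q = PCOQuestion(population="men aged 25-40",
                context="urban primary care settings",
                outcome="experiences",
                topic="reproductive health services")
print(q.render())
```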

Interview guides typically include:

- Opening questions that establish rapport and introduce the topic broadly

- Key questions that directly address the research objectives

- Probing questions to elicit deeper information and clarification

- Closing questions that allow for additional reflections [22]

For reproductive health research, the interview guide should be iteratively refined through pilot testing to ensure questions are culturally appropriate, non-judgmental, and effectively elicit meaningful responses about potentially sensitive topics [20] [22].

Participant Recruitment and Sampling Strategies

Purposive sampling with maximum variation is typically employed in qualitative research to capture a wide range of perspectives [20] [18]. For reproductive health behavior research, this might involve intentionally recruiting participants with diverse demographics, reproductive histories, or health service experiences.
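Maximum variation sampling remains a researcher judgment, but a simple check can show how well a candidate list spreads across the attributes of interest. The sketch below uses a greedy heuristic (an assumption, not a procedure named in the source): at each step it picks the volunteer who adds the most attribute levels not yet represented. The attribute names and the volunteer pool are hypothetical.

```python
def _new_levels(candidate, attributes, covered):
    """Number of (attribute, level) pairs this candidate would add."""
    return sum((a, candidate[a]) not in covered for a in attributes)

def max_variation_sample(candidates, attributes, k):
    """Greedily select up to k candidates that maximise coverage of attribute levels."""
    selected, covered = [], set()
    remaining = list(candidates)
    while remaining and len(selected) < k:
        # max() returns the first candidate among ties, so list order breaks ties.
        best = max(remaining, key=lambda c: _new_levels(c, attributes, covered))
        selected.append(best)
        covered.update((a, best[a]) for a in attributes)
        remaining.remove(best)
    return selected

# Hypothetical volunteer pool
pool = [
    {"id": "P01", "age_band": "20-29", "education": "secondary", "setting": "urban"},
    {"id": "P02", "age_band": "20-29", "education": "secondary", "setting": "urban"},
    {"id": "P03", "age_band": "30-39", "education": "university", "setting": "rural"},
    {"id": "P04", "age_band": "40-45", "education": "primary", "setting": "urban"},
    {"id": "P05", "age_band": "30-39", "education": "university", "setting": "urban"},
]
chosen = max_variation_sample(pool, ["age_band", "education", "setting"], k=3)
print([c["id"] for c in chosen])
```

P02 is skipped because it duplicates P01 on every attribute, which is the behavior maximum variation sampling aims for.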

Sample size in qualitative research is determined by the principle of data saturation, which occurs when new interviews no longer yield novel insights or themes [20] [19]. While exact numbers vary based on the study scope, research suggests that 12-30 participants are often sufficient for in-depth interview studies, though complex topics may require larger samples [20].
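Saturation is ultimately a qualitative judgment, but teams often track it by logging the codes each new interview contributes. The sketch below operationalizes one possible stopping rule, assumed here rather than taken from the source: stop after `run_length` consecutive interviews add no code that has not been seen before. The code labels are hypothetical.

```python
def saturation_point(codes_per_interview, run_length=3):
    """Return the 1-based interview index at which the stopping rule is met.

    Stopping rule (an assumption): `run_length` consecutive interviews
    contribute no previously unseen code. Returns None if never met.
    """
    seen = set()
    consecutive_without_new = 0
    for i, codes in enumerate(codes_per_interview, start=1):
        new_codes = set(codes) - seen
        seen |= new_codes
        consecutive_without_new = 0 if new_codes else consecutive_without_new + 1
        if consecutive_without_new >= run_length:
            return i
    return None

# Hypothetical codes identified in each successive interview
interviews = [
    {"access", "cost", "stigma"},
    {"cost", "partner_communication"},
    {"stigma", "information_sources"},
    {"access", "cost"},
    {"stigma"},
    {"partner_communication", "cost"},
]
print(saturation_point(interviews))
```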

Table 2: Sampling Considerations for Reproductive Health Behavior Research

| Sampling Aspect | Consideration | Application Example |
|---|---|---|
| Strategy | Purposive with maximum variation | Intentionally recruiting men of different ages, education levels, and cultural backgrounds |
| Sample Size | Determined by data saturation | Continuing interviews until no new themes emerge about reproductive health behaviors |
| Inclusion Criteria | Specific to research question | Married men aged 20-45 living in urban areas |
| Recruitment Venues | Multiple relevant settings | Health centers, workplaces, community organizations |
| Ethical Considerations | Privacy and sensitivity | Ensuring confidential environments for discussing sensitive topics |

Data Collection Procedures

In-depth interviews in reproductive health research should be conducted in private settings that ensure confidentiality and comfort [20] [22]. Interviews are typically audio-recorded with participant permission and supplemented by field notes capturing nonverbal cues and contextual observations [19].

Skilled interviewing techniques are particularly important for sensitive reproductive health topics. These include:

- Using neutral, non-judgmental language

- Building rapport while maintaining professional boundaries

- Employing effective probing (e.g., "Can you tell me more about that?") [20]

- Demonstrating cultural sensitivity regarding terminology and taboos

Interviews generally last 25-90 minutes, depending on participant engagement and topic complexity [20] [22]. Transcription should occur shortly after each interview, with careful attention to accuracy and to anonymizing any potentially identifying information.
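Automated redaction can speed up anonymization, but it supplements a careful manual read of each transcript rather than replacing it. The sketch below replaces names the team has flagged for a transcript and any long digit runs that look like phone numbers. The phone pattern and placeholders are assumptions to adapt to local formats.

```python
import re

def redact_transcript(text, names, placeholder="[REDACTED]"):
    """Replace flagged names and phone-number-like digit runs in a transcript.

    names: identifiers flagged by the team (participants, partners, clinics).
    """
    # Replace longer names first so multi-word names are not partly replaced.
    for name in sorted(names, key=len, reverse=True):
        text = re.sub(rf"\b{re.escape(name)}\b", placeholder, text,
                      flags=re.IGNORECASE)
    # Assumed phone pattern: 7+ digits, optionally separated by spaces or hyphens.
    text = re.sub(r"\+?\d(?:[\s-]?\d){6,}", "[PHONE]", text)
    return text

line = "I told Sara at Green Clinic, call 0912 345 6789."
print(redact_transcript(line, ["Sara", "Green Clinic"]))
```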

Methodological Protocols: Focus Group Discussions

Design and Composition Considerations

Focus groups utilize group dynamics to elicit insights that might not emerge in individual interviews. The group setting can encourage participants to explore and clarify their views through discussion with others who have similar experiences [19].

For reproductive health topics, focus group composition requires careful consideration. Homogeneous groups (e.g., similar age, gender, or background) often facilitate more open discussion of sensitive topics [19]. Group size typically ranges from 6 to 8 participants, allowing for diverse perspectives while ensuring all participants can contribute [19].
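The composition guidance above (homogeneous groups of 6 to 8) can be applied mechanically once recruits are stratified. In the sketch below, the stratifying attribute and recruit list are hypothetical. Each stratum is split as evenly as possible into groups within the size bounds, and strata that cannot be split that way are returned for the team to resolve, for example by recruiting more people or merging strata.

```python
from collections import defaultdict

def split_sizes(n, min_size=6, max_size=8):
    """Return group sizes within [min_size, max_size] that sum to n, or None."""
    for g in range(1, n // min_size + 1):
        if min_size * g <= n <= max_size * g:
            base, extra = divmod(n, g)
            return [base + 1] * extra + [base] * (g - extra)
    return None

def form_focus_groups(participants, stratum_key, min_size=6, max_size=8):
    """Group participant ids into homogeneous groups by `stratum_key`."""
    strata = defaultdict(list)
    for p in participants:
        strata[p[stratum_key]].append(p["id"])
    groups, unresolved = [], {}
    for level, ids in strata.items():
        sizes = split_sizes(len(ids), min_size, max_size)
        if sizes is None:
            unresolved[level] = ids  # cannot be split within the size bounds
            continue
        start = 0
        for size in sizes:
            groups.append((level, ids[start:start + size]))
            start += size
    return groups, unresolved

participants = ([{"id": f"A{i:02d}", "age_band": "20-29"} for i in range(14)]
                + [{"id": f"B{i:02d}", "age_band": "30-39"} for i in range(9)])
groups, unresolved = form_focus_groups(participants, "age_band")
for level, ids in groups:
    print(level, len(ids))
print("needs attention:", {k: len(v) for k, v in unresolved.items()})
```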

Focus group guides share similarities with interview guides but place greater emphasis on:

- Stimulating discussion among participants

- Managing group dynamics to ensure balanced participation

- Exploring agreements and disagreements within the group

Moderation Techniques and Data Collection

Effective focus group moderation requires special skills in:

- Establishing ground rules for respectful discussion

- Encouraging participation from all members

- Managing dominant speakers without silencing them

- Exploring group norms and shared experiences [19]

For reproductive health research, moderators must be particularly adept at creating a safe environment for discussing potentially sensitive topics. This may include using appropriate terminology, acknowledging discomfort, and respectfully redirecting inappropriate comments.

Focus groups are typically audio- and video-recorded to capture both verbal content and group dynamics. Co-moderators or observers can document nonverbal communication, participant interactions, and other contextual factors that enrich the data [19].

Data Analysis and Interpretation

Analytical Approaches

Thematic analysis provides a flexible and accessible approach for analyzing qualitative data in reproductive health research. This method involves identifying, analyzing, and reporting patterns (themes) within the data through a process of coding and theme development [23].

The analytical process typically involves:

- Familiarization with the data through repeated reading of transcripts

- Generating initial codes that identify meaningful data segments

- Searching for themes by collating codes into potential themes

- Reviewing themes against the coded extracts and entire dataset

- Defining and naming themes to capture their essence

- Producing the analysis through narrative explanation and illustrative quotes [23]
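Qualitative analysis software handles this bookkeeping in practice, but the structure behind phases 2 to 4 is simple: coded extracts are collated under candidate themes, and each theme is reviewed against how many extracts and participants support it. The extracts, codes, and theme names below are invented for illustration only.

```python
from collections import defaultdict

# Invented coded extracts: (participant_id, code, quote)
extracts = [
    ("P01", "cost_barrier", "The tests are too expensive for me."),
    ("P02", "cost_barrier", "I would go if it were free."),
    ("P02", "shame", "I don't want anyone to see me at the clinic."),
    ("P03", "partner_talk", "We never talk about this at home."),
    ("P03", "shame", "Men here don't ask about these things."),
]

# Searching for themes: collate codes into candidate themes.
code_to_theme = {
    "cost_barrier": "Structural barriers",
    "shame": "Stigma and masculinity norms",
    "partner_talk": "Couple communication",
}

def summarise_themes(extracts, code_to_theme):
    """Count extracts and distinct participants supporting each candidate theme."""
    summary = defaultdict(lambda: {"extracts": 0, "participants": set()})
    uncoded = []
    for pid, code, _quote in extracts:
        theme = code_to_theme.get(code)
        if theme is None:
            uncoded.append(code)  # code not yet assigned to any theme
            continue
        summary[theme]["extracts"] += 1
        summary[theme]["participants"].add(pid)
    return dict(summary), uncoded

summary, uncoded = summarise_themes(extracts, code_to_theme)
for theme, info in sorted(summary.items()):
    print(theme, info["extracts"], len(info["participants"]))
```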

For consensual qualitative research, the analysis emphasizes reaching consensus within the research team through multiple rounds of independent coding and team discussion [21]. This approach enhances the trustworthiness of the analysis, particularly important for sensitive reproductive health topics.
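Consensual qualitative research resolves differences through discussion rather than statistics, but a simple comparison of independent coding can show where discussion is needed. The sketch below compares two coders' code sets segment by segment and lists the disagreements. The percent-agreement figure is an added convenience, not a CQR requirement, and the segment ids and codes are hypothetical.

```python
def compare_coders(coder_a, coder_b):
    """Compare two coders' code sets per transcript segment.

    coder_a, coder_b: dict mapping segment id -> set of codes.
    Returns the share of segments with identical code sets and the
    segments that need consensus discussion.
    """
    segments = sorted(set(coder_a) | set(coder_b))
    to_discuss = {}
    for seg in segments:
        a = coder_a.get(seg, set())
        b = coder_b.get(seg, set())
        if a != b:
            to_discuss[seg] = {"only_a": a - b, "only_b": b - a}
    agreement = 1 - len(to_discuss) / len(segments) if segments else 1.0
    return agreement, to_discuss

coder_a = {"S1": {"cost"}, "S2": {"shame"}, "S3": {"partner_talk", "shame"}}
coder_b = {"S1": {"cost"}, "S2": {"stigma"}, "S3": {"partner_talk", "shame"}}
agreement, to_discuss = compare_coders(coder_a, coder_b)
print(f"{agreement:.2f}", to_discuss)
```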

From Analysis to Item Pool Development

The transition from qualitative analysis to item pool development requires systematic translation of themes and concepts into potential assessment items. This process involves:

- Identifying key constructs emerging from the data

- Developing item stems that reflect participant language and concepts

- Ensuring comprehensive coverage of the domain based on qualitative findings

- Maintaining linguistic and cultural appropriateness [20] [24]
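One way to make this translation auditable is to keep each candidate item linked to the theme it operationalizes and to check that every theme has at least one item. The sketch below assumes hypothetical theme names and item stems, and records whether each item came from the qualitative data or from the literature.

```python
from dataclasses import dataclass, field

@dataclass
class CandidateItem:
    stem: str
    theme: str
    source: str  # "qualitative" or "literature"
    supporting_quotes: list = field(default_factory=list)

def coverage_report(items, themes):
    """Return item counts per theme and the themes that have no item yet."""
    counts = {t: 0 for t in themes}
    for item in items:
        if item.theme in counts:
            counts[item.theme] += 1
    missing = [t for t, n in counts.items() if n == 0]
    return counts, missing

themes = ["Structural barriers", "Stigma and masculinity norms", "Couple communication"]
items = [
    CandidateItem("I avoid reproductive health check-ups because of the cost.",
                  "Structural barriers", "qualitative"),
    CandidateItem("I talk with my partner about family planning.",
                  "Couple communication", "qualitative"),
]
counts, missing = coverage_report(items, themes)
print(counts, missing)
```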

For example, in developing a male reproductive health behavior instrument, qualitative findings about specific health practices, information-seeking behaviors, or service utilization patterns would directly inform potential questionnaire items [20]. The qualitative data provides not only the content for items but also appropriate language and framing that reflects how the target population conceptualizes these issues.

Ensuring Methodological Rigor

Trustworthiness and Quality Criteria

Qualitative research employs distinct criteria for ensuring rigor, often referred to as trustworthiness. Key strategies include:

- Reflexivity: Critical self-appraisal by researchers of their biases, values, and assumptions [18] [23]. Maintaining a reflexive journal enhances transparency about how researcher subjectivity may influence data collection and interpretation.

- Triangulation: Using multiple data sources, methods, or researchers to cross-verify findings [23]. In reproductive health research, this might involve combining interview data with document analysis or observational data.

- Member checking: Returning preliminary findings to participants to verify accuracy and interpretation [23]. This approach is particularly valuable for ensuring cultural and contextual appropriateness in reproductive health studies.

- Detailed documentation: Maintaining comprehensive records of data collection and analytical decisions to create an audit trail [19].
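An audit trail can be as simple as an append-only log of analytic decisions. The sketch below writes one JSON object per line (JSON Lines), which is easy to keep under version control and to read back. The file name, field names, and entries are hypothetical, and the example writes to a temporary directory.

```python
import json
import os
import tempfile
from datetime import datetime, timezone

def log_decision(path, author, decision, rationale):
    """Append one analytic decision to a JSON Lines audit-trail file."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "author": author,
        "decision": decision,
        "rationale": rationale,
    }
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(entry) + "\n")
    return entry

def read_log(path):
    """Read all audit-trail entries in the order they were written."""
    with open(path, encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]

# Usage: write to a temporary file so the example leaves nothing behind.
log_path = os.path.join(tempfile.mkdtemp(), "audit_trail.jsonl")
log_decision(log_path, "RA1", "Merged code 'shame' into 'stigma'",
             "Both codes were applied to the same extracts in team review")
log_decision(log_path, "RA2", "Added candidate theme 'Couple communication'",
             "Recurring pattern noted across several interviews")
entries = read_log(log_path)
print(len(entries), entries[0]["decision"])
```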

Ethical Considerations in Reproductive Health Research

Reproductive health research raises specific ethical considerations that require careful attention:

- Confidentiality and privacy: Particularly important given the sensitive nature of reproductive health topics. This includes secure data storage, careful anonymization, and private data collection settings [20] [22].

- Cultural sensitivity: Recognizing and respecting cultural norms, values, and terminology related to reproductive health [20].

- Power dynamics: Being mindful of potential power imbalances between researchers and participants, especially when discussing personal health topics.

- Emotional safety: Providing appropriate support resources for participants who may experience distress when discussing sensitive reproductive health experiences.

Application to Reproductive Health Behavior Research

Case Examples and Applications

The sequential exploratory mixed-methods design has been successfully applied in various reproductive health instrument development studies:

- Male Reproductive Health Behavior Instrument: A study developed a psychometric instrument for assessing male reproductive health-related behavior using an initial qualitative phase with in-depth interviews to explore men's perceptions and experiences [20]. The qualitative findings directly informed the development of a quantitative assessment tool.

- Women Shift Workers' Reproductive Health Questionnaire: This study employed a similar approach, conducting 21 interviews with women shift workers to generate items for a reproductive health questionnaire [25]. The qualitative phase ensured the instrument addressed relevant concerns specific to this population.

- Adolescent Sexual and Reproductive Health Competency Assessment: This validation study utilized expert interviews and literature review to generate items for assessing healthcare provider competency in adolescent reproductive health services [24].

Table 3: Essential Research Reagents and Tools for Qualitative Reproductive Health Research

| Tool Category | Specific Tools/Resources | Purpose and Application |
|---|---|---|
| Recording Equipment | Digital audio recorders, external microphones | High-quality audio capture in various settings |
| Data Management | Qualitative data analysis software (NVivo, MAXQDA, Dedoose) | Organizing, coding, and analyzing qualitative data |
| Transcription Resources | Transcription software, transcription service partnerships | Converting audio to accurate text transcripts |
| Interview Protocols | Semi-structured interview guides, consent forms | Standardizing data collection while maintaining flexibility |
| Participant Materials | Information sheets, demographic forms, reimbursement protocols | Ethical administration of participant procedures |
| Analysis Framework | Codebooks, thematic frameworks, reflexive journals | Systematic approach to data interpretation |

In-depth interviews and focus groups provide invaluable methodological approaches for developing comprehensive, culturally grounded item pools in reproductive health behavior research. By centering the lived experiences and conceptualizations of the target population, these qualitative methods ensure that subsequent assessment instruments accurately reflect the relevant constructs, language, and concerns of those whose health behaviors we seek to understand and measure.

The rigorous application of these methods—through careful design, skilled data collection, systematic analysis, and attention to ethical considerations—enables researchers to develop instruments with enhanced content validity and cultural relevance. As reproductive health continues to gain recognition as an essential component of overall well-being, particularly for historically neglected populations such as men [20], these qualitative approaches will remain fundamental to creating assessment tools that truly capture the complexity of reproductive health behaviors across diverse contexts.

Ensuring Cultural and Contextual Relevance in Initial Item Formulation

Application Notes: Core Principles and Workflow

The initial formulation of a relevant and comprehensive item pool is a critical first step in developing a high-quality psychometric instrument for reproductive health research. For behaviors that are deeply influenced by socio-cultural norms, such as those in male reproductive health, ensuring the cultural and contextual relevance of these items is not merely beneficial—it is a scientific prerequisite for obtaining valid and reliable data [20]. This protocol outlines a systematic, mixed-methods approach to achieve this goal, framing the process within the broader context of item pool development.

A sequential exploratory mixed-methods design is a particularly robust framework for this task. This design prioritizes an initial qualitative phase to explore and understand the phenomenon within its natural context, followed by a quantitative phase to validate the findings [20]. The core workflow, from conceptualization to a finalized preliminary item pool, is designed to ensure that the instrument is grounded in the lived experiences and language of the target population.

The following diagram illustrates the key stages of this mixed-methods approach for developing a culturally relevant item pool.

Experimental Protocols

Protocol for Qualitative Data Collection and Content Analysis

This protocol details the methodology for the initial qualitative phase, which is foundational for discovering culturally specific concepts and phrasing for the item pool [20].

- 2.1.1. Objective: To explain the target population's perception of reproductive health-related behaviors and to inductively generate initial instrument items.

- 2.1.2. Materials:

  - Audio recording equipment.

  - Interview guide with open-ended questions.

  - Transcribed interview data.

  - Qualitative data analysis software (e.g., NVivo, MAXQDA).

- 2.1.3. Procedure:

  - Participant Selection: Employ purposive sampling with maximum variation to capture a wide range of experiences based on age, education, socioeconomic status, and geographic location [20]. Sample size is determined by data saturation.

  - Data Collection: Conduct semi-structured, in-depth, individual interviews. The interview guide should focus on open-ended questions exploring knowledge, attitudes, practices, and perceived barriers/facilitators related to reproductive health behaviors.

  - Data Management: Transcribe interviews verbatim and verify transcripts for accuracy.

  - Data Analysis: Perform conventional content analysis on the transcribed text. The process involves the following stages, which are rarely linear and often require iterative refinement.

Protocol for Systematic Item Formulation and Integration

This protocol describes the process of translating qualitative findings into a structured preliminary item pool, supplemented by a review of existing literature.

- 2.2.1. Objective: To develop a comprehensive and culturally grounded preliminary item pool for the target instrument.

- 2.2.2. Materials:

  - Finalized themes and dimensions from the qualitative analysis.

  - Access to scientific databases (e.g., PubMed, Scopus, Web of Science).

  - Item formulation guidelines.

- 2.2.3. Procedure:

  - Inductive Item Generation: For each defined theme and sub-dimension from the qualitative analysis, draft a set of candidate items. Use clear, simple, and culturally appropriate language that reflects the terminology used by participants.

  - Deductive Item Completion: Conduct a systematic review of the literature on male reproductive health behaviors [20]. Identify concepts and existing items from validated tools. Use these to supplement the inductively generated items, ensuring comprehensive coverage of all relevant behavioral constructs.

  - Item Refinement and Wording:

    - Write items at an appropriate reading level.

    - Avoid double-barreled questions, jargon, and leading statements.

    - Decide on a consistent response scale (e.g., Likert, frequency).

  - Compile Preliminary Item Pool: Consolidate all inductively and deductively generated items into a single pool. This pool serves as the input for the quantitative validation phase, where it will undergo formal psychometric testing [20].
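The protocol does not name a readability index. As one commonly used option for English-language items, the Flesch Reading Ease score is 206.835 - 1.015 x (words per sentence) - 84.6 x (syllables per word), where higher scores indicate easier text. The sketch below uses a rough vowel-group syllable counter (an approximation), and the formula is calibrated for English only. Translated instruments would need a language-specific index or, better, cognitive pretesting.

```python
import re

def count_syllables(word):
    """Rough English syllable count: vowel groups, minus a silent final 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)

def flesch_reading_ease(text):
    """Flesch Reading Ease for a short English text (higher = easier)."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (206.835
            - 1.015 * (len(words) / len(sentences))
            - 84.6 * (syllables / len(words)))

simple = "I see a doctor."
complex_item = ("Comprehensive reproductive healthcare utilization "
                "necessitates multidimensional evaluation.")
print(round(flesch_reading_ease(simple), 1), round(flesch_reading_ease(complex_item), 1))
```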

Data Presentation

Table 1: Key Considerations for Culturally Relevant Item Formulation

| Principle | Application in Protocol | Rationale |
|---|---|---|
| Linguistic Equivalence | Use terminology and phrases directly sourced from qualitative interviews with the target population [20]. | Ensures items are understood as intended and avoids academic jargon that may be misinterpreted. |
| Conceptual Equivalence | Ensure that the underlying construct of a behavior (e.g., "self-care") has the same meaning and relevance in the target culture [20]. | Prevents measuring different constructs across different cultural groups, which threatens validity. |
| Contextual Embeddedness | Frame items within culturally specific scenarios, norms, and barriers identified during qualitative exploration. | Increases ecological validity and respondent engagement, leading to more accurate responses. |
| Social Desirability Mitigation | Phrase items neutrally to minimize the pressure to respond in a socially acceptable manner. | Reduces bias in responses, providing a more accurate measurement of sensitive or stigmatized behaviors. |

Table 2: Sampling and Data Collection Strategy for Qualitative Phase

| Parameter | Protocol Specification | Justification |
|---|---|---|
| Sampling Method | Purposive sampling with maximum variation [20]. | Captures a wide spectrum of experiences and ensures diversity in the initial item pool. |
| Data Collection Method | Semi-structured, in-depth individual interviews [20]. | Allows for deep exploration of personal views and experiences while maintaining comparability. |
| Sample Size | Determined by data saturation (no new themes emerge) [20]. | Ensures comprehensive concept exploration without unnecessary data collection. |
| Data Analysis | Conventional content analysis [20]. | Provides a systematic, iterative process for identifying and defining core themes and categories from textual data. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Qualitative Item Formulation Research

| Item / Reagent | Function in Protocol |
|---|---|
| Semi-Structured Interview Guide | Ensures consistency across interviews by providing a framework of key questions and probes, while allowing flexibility to explore emergent topics. |
| Qualitative Data Analysis Software (e.g., NVivo) | Facilitates the efficient organization, coding, and analysis of large volumes of textual interview transcript data. |
| Audio Recording Equipment | Captures the interview dialogue accurately for verbatim transcription, preserving the original data for analysis. |
| Informed Consent Forms | Adheres to ethical standards in research by formally documenting the participant's voluntary agreement to take part in the study [20]. |
| Data Saturation Log | A tracking document used by the research team to document the emergence of new themes, determining the point at which no new information is found and data collection can cease [20]. |

From Concepts to Questions: Methodological Approaches for Reproductive Health Item Development

Within the critical field of reproductive health behaviors research, the development of precise and psychometrically sound measurement instruments is foundational to advancing scientific understanding. The process of item pool development, which involves generating a comprehensive set of candidate questions or statements, is a crucial first step in capturing complex, latent constructs such as health literacy, service-seeking attitudes, and resilience [26] [27]. The format of the response scale attached to each item is not merely a presentational detail; it directly influences data quality, participant engagement, and the statistical validity of the resulting scores. This protocol outlines best practices for selecting and implementing response scales, with a specific focus on Likert-type and alternative formats, contextualized for researchers investigating reproductive health behaviors.

Theoretical Foundations of Response Scales

The Likert Scale

Developed by Rensis Likert in 1932, a Likert scale is a unidimensional rating scale used to measure attitudes, perceptions, and opinions [28] [29]. Its primary characteristic is the presentation of a series of statements to which respondents indicate their level of agreement or disagreement. The original scale used an odd number of response options, typically five to seven, which included a neutral midpoint [28]. This format allows researchers to move beyond simple binary (yes/no) responses and capture the intensity of a respondent's feeling, providing more granular data for analysis [29].

Alternative Scaling Methods

While the Likert scale is predominant, other unidimensional scaling methods exist, each with distinct characteristics and applications. Table 1 provides a comparative overview of these major scaling methods.

Table 1: Major Unidimensional Scaling Methods for Survey Research

| Scale Type | Creator & Date | Core Principle | Typical Format | Key Advantage | Key Disadvantage |
|---|---|---|---|---|---|
| Likert Scale [29] | Rensis Likert (1932) | Measures agreement with a series of statements. | 5- to 7-point agreement scale (e.g., Strongly Disagree to Strongly Agree). | Intuitive for respondents; high reliability and adaptability. | Potential for "satisficing" (satisfactory but not optimal answering) [26]. |
| Thurstone Scale [29] | Louis Leon Thurstone (1928) | Judges pre-rate statements; respondents select only statements they agree with. | "Equal-appearing intervals" with pre-assigned values; respondents select agreed statements. | Reduces bias by using pre-rated items. | Time-consuming and labor-intensive to develop. |
| Guttman Scale [29] | Louis Guttman (Mid-20th C.) | Measures extent of attitude using cumulative, hierarchical statements. | Series of statements ordered from least to most extreme; respondent stops when disagreeing. | Produces a single, cumulative score that predicts item responses. | Difficult to construct a perfect cumulative hierarchy of items. |
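How closely a set of items approaches a perfect cumulative hierarchy is usually summarized by Guttman's coefficient of reproducibility, CR = 1 - (errors / (respondents x items)), with values of about 0.90 or higher conventionally taken as acceptable. The sketch below counts errors against the ideal pattern implied by each respondent's total score, which is one of several error-counting conventions. The response data are hypothetical, and items are assumed to be ordered from least to most extreme.

```python
def coefficient_of_reproducibility(responses):
    """Guttman coefficient of reproducibility for 0/1 response patterns.

    responses: list of respondent patterns; items ordered least to most extreme.
    Errors = mismatches with the ideal cumulative pattern for the same total score.
    """
    n_items = len(responses[0])
    errors = 0
    for pattern in responses:
        score = sum(pattern)
        ideal = [1] * score + [0] * (n_items - score)
        errors += sum(p != q for p, q in zip(pattern, ideal))
    return 1 - errors / (len(responses) * n_items)

# Hypothetical agree (1) / disagree (0) responses to four ordered statements
responses = [
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [1, 0, 1, 0],  # not cumulative: contributes 2 errors
    [1, 1, 1, 1],
]
print(coefficient_of_reproducibility(responses))
```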

Protocol for Selecting and Implementing Response Scales

The following workflow outlines a systematic approach for selecting and validating response scales within the item pool development process for reproductive health research.

Figure 1: A systematic workflow for the selection and validation of response scales in research instrument development.

Step 1: Determine the Scaling Goal

The first step is to align the scale with the nature of the information you wish to collect. Likert-type scales can be adapted to measure several dimensions [29]:

- Agreement: The most common use (e.g., Strongly Disagree to Strongly Agree).

- Frequency: To gauge how often a behavior occurs (e.g., Never to Always).

- Importance: To rank the perceived significance of an item (e.g., Not at all Important to Extremely Important).

- Quality: To assess a characteristic (e.g., Poor to Excellent).

- Likelihood: To measure behavioral intentions (e.g., Extremely Unlikely to Extremely Likely).

Step 2: Select the Scale Format and Number of Points

The choice between an odd-numbered scale with a neutral midpoint and a forced-choice (even-numbered) scale depends on the research question and on whether a neutral response is theoretically meaningful.

Likert Scale Point Options: The number of scale points involves a trade-off between granularity and respondent cognitive load.

- 5-point scales are highly recommended for their balance of ease-of-use and reliability, and are particularly suited for unipolar constructs (e.g., measuring degrees of a single quality like satisfaction) [26] [29].

- 7-point scales are often recommended for bipolar constructs (e.g., disagree/agree) as they provide a wider continuum for expression [26].

- Scales with fewer than five points have been shown to have lower reliability [26].

Forced-Choice Scales: Removing the neutral option (e.g., using a 4-point or 6-point scale) forces respondents to take a stance, which can be useful in mitigating central tendency bias. However, this can also lead to frustration or non-response if respondents genuinely hold a neutral view [29].
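The structural difference between these options is whether a midpoint exists: an odd number of points yields one, and an even number forces a side. The sketch below builds an n-point scale coded 1 to n, labeling only the endpoints and, for odd n, the midpoint. The function names and default midpoint label are hypothetical, and intermediate labels are left as `None` for the team to word.

```python
def build_scale(low_label, high_label, n_points, midpoint_label="Neutral"):
    """Return (code, label) pairs for an n-point rating scale coded 1..n."""
    if n_points < 2:
        raise ValueError("a rating scale needs at least two points")
    labels = [None] * n_points
    labels[0], labels[-1] = low_label, high_label
    if n_points % 2 == 1:
        labels[n_points // 2] = midpoint_label  # odd n: a neutral midpoint exists
    return list(enumerate(labels, start=1))

def has_neutral_midpoint(n_points):
    """Even-numbered (forced-choice) scales have no midpoint."""
    return n_points % 2 == 1

print(build_scale("Never", "Always", 5, midpoint_label="Sometimes"))
print(build_scale("Very difficult", "Very easy", 4))
```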

Step 3: Design and Word Items Effectively

The phrasing of the items (statements) is as critical as the response scale itself. Best practices include [26]:

- Simplicity and Clarity: Use simple, unambiguous language. Avoid jargon, especially in populations with varying health literacy levels.

- Avoid Leading or Absolute Language: Do not use intensifying or absolute adverbs such as "very," "extremely," or "absolutely" in item stems, as they can skew responses [28].

- Cultural and Social Sensitivity: Ensure items are not offensive or biased regarding gender, religion, or socioeconomic status. This is paramount in reproductive health research [26].

- Accessibility of Information: Frame questions such that all respondents can reasonably be expected to have access to the information needed to answer accurately [26].
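Some of these wording rules can be pre-screened automatically before expert review and cognitive interviews. The sketch below flags the adverbs named above, the words "and"/"or" (which often signal a double-barreled item), and any terms from a study-specific jargon list. The flags are heuristics for human review, and the jargon list in the example is hypothetical.

```python
import re

# Adverbs named in the wording guidance; extend per study.
INTENSIFIERS = {"very", "extremely", "absolutely"}

def lint_item(stem, jargon=()):
    """Return heuristic wording warnings for one item stem."""
    warnings = []
    words = re.findall(r"[a-z']+", stem.lower())
    for w in words:
        if w in INTENSIFIERS:
            warnings.append(f"intensifier/absolute word: '{w}'")
    # 'and'/'or' often signal a double-barreled item; a human must confirm.
    if {"and", "or"} & set(words):
        warnings.append("possible double-barreled item (contains 'and'/'or')")
    for term in jargon:
        if term.lower() in stem.lower():
            warnings.append(f"possible jargon: '{term}'")
    return warnings

print(lint_item("I discuss contraception and STI testing with my partner.", jargon=["STI"]))
print(lint_item("I know where to get a condom."))
```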

Step 4: Pretest and Validate the Scale

Before full deployment, the draft instrument must be rigorously pretested.

- Cognitive Interviews: Conduct interviews with individuals from the target population (e.g., young adults, refugee women) to assess if items are understood as intended and if the response scale is easy to use. This process was key in refining a resilience scale for people with dementia, leading to the removal of poorly understood items [27].

- Expert Review: Solicit feedback from content experts (e.g., clinicians, public health researchers) and methodological experts to evaluate content validity [30] [31].

- Pilot Survey: Administer the scale to a small sample from your target population to conduct preliminary reliability (e.g., Cronbach's alpha) and factor analysis, which helps in item reduction and verifying the underlying factor structure [26] [30].
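The pilot and expert-review steps reduce to a few standard computations. Cronbach's alpha is k / (k - 1) x (1 - sum of item variances / variance of total scores). The corrected item-total correlation relates each item to the sum of the remaining items and is often used to flag items for removal. For expert review, the item-level content validity index (I-CVI) is a commonly used index, though the source does not name it: it is the proportion of experts rating an item 3 or 4 on a 4-point relevance scale, with 0.78 or higher a frequently cited benchmark when six or more experts rate each item. The pilot data and ratings below are hypothetical.

```python
from statistics import mean, variance

def cronbach_alpha(data):
    """data: list of respondents, each a list of k item scores."""
    k = len(data[0])
    item_var = sum(variance(col) for col in zip(*data))
    total_var = variance([sum(row) for row in data])
    return k / (k - 1) * (1 - item_var / total_var)

def _pearson(x, y):
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5

def corrected_item_total(data):
    """Correlation of each item with the sum of the remaining items."""
    totals = [sum(row) for row in data]
    return [_pearson(col, [t - x for t, x in zip(totals, col)])
            for col in zip(*data)]

def item_cvi(ratings):
    """Share of experts rating an item 3 or 4 on a 4-point relevance scale."""
    return sum(r >= 3 for r in ratings) / len(ratings)

# Hypothetical pilot responses (5 respondents x 4 items, 1-5 Likert codes)
pilot = [[4, 4, 3, 4], [3, 3, 3, 2], [5, 4, 4, 5], [2, 2, 1, 2], [4, 5, 4, 4]]
print(round(cronbach_alpha(pilot), 3))
print([round(r, 2) for r in corrected_item_total(pilot)])
print(round(item_cvi([4, 3, 4, 2, 4, 3]), 2))
```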

Application in Reproductive Health Research

The development of scales in reproductive health requires particular attention to cultural context, sensitivity of topics, and varying levels of health literacy.

- Service Seeking: The Sexual and Reproductive Health Service Seeking Scale (SRHSSS) was developed for young adults using a 3-point Likert-type scale ("I agree correctly," "I don't know," "I disagree incorrectly") to reduce complexity while capturing key attitudes and perceived barriers [30].

- Health Literacy: The Reproductive Health Literacy Scale integrated multiple validated scales, using 4-point Likert formats for its components (e.g., "very difficult" to "very easy") to avoid a neutral midpoint and effectively measure refugees' abilities to find, understand, and use health information [31].

- Resilience: In developing a resilience measure for people with dementia, researchers used cognitive interviews to test a 4-point agreement scale, finding it acceptable and understandable for the population, which led to a refined 37-item pool [27].

Research Reagent Solutions

The following table details key methodological "reagents" essential for the experimental process of developing and validating a response scale.

Table 2: Essential Reagents for Response Scale Development and Validation

| Research Reagent | Function in Scale Development | Exemplar from Reproductive Health Research |
|---|---|---|
| Expert Panel [30] [31] | To establish content validity by assessing the relevance and representativeness of items for the target construct. | An expert panel of psychiatric and gynecological nursing professors evaluated the content validity of the SRHSSS [30]. |
| Focus Group Guide [30] | To generate items inductively from the target population, ensuring the scale reflects lived experiences and domain language. | A focus group with 8 young adults using semi-structured questions informed the item pool for the SRHSSS [30]. |
| Cognitive Interview Protocol [27] | To evaluate face validity, comprehensibility, and appropriateness of items and response options from the participant's perspective. | Used with people with dementia to identify and amend items that were difficult to understand or answer [27]. |
| Pilot Survey Dataset [30] | A dataset collected from a sample of the target population, used for statistical item reduction (e.g., factor analysis) and reliability assessment. | Data from 458 young adults was used for Exploratory Factor Analysis (EFA) and reliability testing of the SRHSSS [30]. |
| Statistical Software (e.g., R) | To perform psychometric analyses such as Factor Analysis (EFA/CFA) and calculate reliability coefficients (e.g., Cronbach's alpha). | Confirmatory Factor Analysis (CFA) was used to test a 4-factor model of behavioral health functioning in a disability claimant population [32]. |

The selection of an appropriate response scale is a critical, evidence-based decision in the development of robust research instruments for reproductive health. By adhering to a structured protocol that emphasizes clear construct definition, careful scale formatting, and iterative validation with the target population, researchers can ensure their tools yield valid, reliable, and meaningful data. This, in turn, strengthens the scientific foundation for understanding and improving sexual and reproductive health outcomes.

Developing Culturally-Adapted Items for Diverse Populations

The development of a valid and reliable item pool is a foundational step in health research, particularly in sensitive domains such as reproductive health. When research involves diverse populations, a rigorous process of cultural adaptation is not merely beneficial but essential for ensuring the conceptual, semantic, and operational equivalence of the instrument. Framed within a broader thesis on item pool development for reproductive health behaviors research, these application notes provide a detailed protocol for the systematic creation and initial validation of culturally-adapted items. This guide synthesizes contemporary methodologies to help researchers generate data that accurately reflects the health constructs of interest across different cultural contexts [9] [25].

Theoretical Framework and Core Principles

The cultural adaptation of research items should be guided by a solid theoretical framework that acknowledges health as a bio-psycho-social construct. This is particularly critical for reproductive health, which is deeply embedded in cultural norms, values, and social structures [25]. The process must extend beyond simple translation to encompass a holistic assessment of the target population's worldview.

The core principle is to achieve equivalence in several dimensions:

- Conceptual Equivalence: Ensuring the health construct (e.g., "sexual and reproductive health") is perceived and defined similarly across cultures.

- Semantic Equivalence: Ensuring the meaning of each item is retained after translation and adaptation.

- Operational Equivalence: Ensuring the method of administration (e.g., self-administered questionnaire, interview) is appropriate and yields comparable data.

This approach aligns with integrated health models, which recognize that domains like reproductive health, mental health, and oral health are deeply interconnected. An instrument developed for Nigerian adolescents, for example, successfully integrated these three domains into a single assessment tool, acknowledging their shared social, economic, and behavioral determinants [9].

Phase 1: Item Generation – A Mixed-Methods Approach

The initial phase aims to generate a comprehensive and relevant item pool. A sequential exploratory mixed-methods design is recommended, as it leverages both qualitative and quantitative data to ensure items are grounded in the lived experiences of the target population [25].

Qualitative Data Collection and Analysis

Objective: To explore the concept of the health behavior and its dimensions from the emic (insider) perspective.

Protocol:

- Participant Selection: Use purposive sampling with maximum variation to recruit individuals from the target population. Key inclusion criteria for a reproductive health study might include being of reproductive age, marital status, and relevant health or occupational experience. For example, a study on women shift workers recruited married women aged 18-45 who had experienced pregnancy and had more than two years of work experience [25].

- Data Collection: Conduct in-depth, semi-structured interviews. Sample questions include: "In your opinion, what are the effects of [health behavior/concept] on your daily life?" and "Can you describe your experiences with...?" Probing questions should be used to elicit richer data [25]. Interviews should continue until data saturation is achieved, which may occur after approximately 20-25 interviews [25].

- Data Analysis: Employ conventional content analysis as described by Graneheim and Lundman [25]. This involves:

  - Transcribing interviews verbatim.

  - Repeated reading to derive meaning units.

  - Condensing meaning units into codes.

  - Grouping codes into sub-categories and categories based on their relationships.

  - Defining the overarching themes or dimensions that constitute the construct.
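Data saturation is usually judged qualitatively. One way to make the stopping decision auditable is to record the set of codes derived from each transcript and stop once a fixed number of consecutive interviews contribute no new codes. This rule is assumed here and is not taken from the cited study [25]. The run length of three, the function name `saturation_point`, and the example codes are all hypothetical.

```python
from typing import Optional


def saturation_point(codes_per_interview: list[set[str]], run_length: int = 3) -> Optional[int]:
    """Return the 1-based interview number at which saturation is reached, or None.

    Saturation is operationalized (an assumption) as `run_length` consecutive
    interviews that contribute no code absent from all earlier interviews.
    """
    seen: set[str] = set()
    without_new = 0
    for number, codes in enumerate(codes_per_interview, start=1):
        if codes - seen:          # at least one previously unseen code
            without_new = 0
        else:
            without_new += 1
            if without_new == run_length:
                return number
        seen |= codes
    return None


# Hypothetical codes assigned to six successive transcripts.
interviews = [
    {"night-shift fatigue", "pregnancy planning"},
    {"pregnancy planning", "employer support"},
    {"night-shift fatigue"},
    {"employer support"},
    {"pregnancy planning"},
    {"partner support"},
]
print(saturation_point(interviews))                # 5
print(saturation_point(interviews, run_length=4))  # None
```

A stricter run length lowers the risk of stopping early, at the cost of more interviews.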

Literature Review and Item Pool Generation

Objective: To deductively generate items based on the qualitative findings and existing scientific literature.

Protocol:

- Structured Literature Review:

  - Conduct a systematic search in electronic databases (e.g., PubMed, ScienceDirect) using keywords related to the health domains of interest.

  - Apply inclusion/exclusion criteria. For instance, include articles that conceptualize or measure the health domains in apparently healthy populations, and exclude those focused on specific diseases or highly unique contexts [9].

  - From the included articles, identify and extract established domains, subscales, and validated measurement items.

- Logical Partitioning (Deductive Method): Use this method to define constructs and generate items that align with predefined concepts from the qualitative work and literature [9]. This ensures theoretical consistency but should be complemented by the qualitative findings to capture new, culturally-specific dimensions.

- Item Pool Compilation: Combine items generated from the qualitative analysis with those adapted from validated tools. Most adapted items should be retained verbatim, which helps preserve their established psychometric properties, while others may be rephrased for clarity and cultural appropriateness. Organize the preliminary pool into logical sections (e.g., socio-demographics, core health domains, service utilization) [9].

Table 1: Sample Item Pool Structure from an Integrated Health Tool Development Study

| Section | Number of Items | Domain / Construct Measured | Example Source |
|---|---|---|---|
| Socio-demographics | 21 | Age, education, family background | Researcher-developed |
| Mental Health | 35+ | Psychological distress (12 items), depression (9 items), generalized anxiety (8 items), suicide ideation (4 items), risk factors (substance use, self-esteem) | PHQ-9, GAD-7, Rosenberg Scale [9] |
| Sexual & Reproductive Health | 11 | Sexual debut, sexual activity status, knowledge | Literature-derived [9] |
| Oral Health | 8 | Oral hygiene practices, self-reported oral problems, oral habits | Literature-derived [9] |
| Service Utilization | 2 | Access to and use of general, dental, and psychiatric services | Researcher-developed [9] |

Phase 2: Content and Face Validity Assessment

This phase ensures the item pool is relevant, clear, and comprehensible to the target population and the expert community.

Quantitative Content Validity

Objective: To statistically determine the essentiality and relevance of each item.

Protocol:

- Expert Panel: Assemble a panel of 10-12 experts from relevant fields (e.g., reproductive health, midwifery, psychology, occupational health, and cultural liaisons) [25].

- Content Validity Ratio (CVR): Ask experts to rate each item on a 3-point scale of "essential," "useful but not essential," or "not necessary." Calculate CVR for each item using the formula:

$$\mathrm{CVR} = \frac{n_e - N/2}{N/2}$$

where $n_e$ is the number of experts rating the item "essential" and $N$ is the total number of experts. For a panel of 10 experts, an item CVR of 0.62 or higher (Lawshe's critical value) is considered acceptable [25].

- Content Validity Index (CVI): Ask the same experts to rate the relevance of each item on a 4-point scale (1 = not relevant, 4 = highly relevant). The item-level CVI is the proportion of experts giving a rating of 3 or 4. An item-level CVI of 0.78 or higher is recommended for acceptance [25].
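The two content validity statistics can be computed per item as sketched below. The sketch assumes the same experts supply both the essentiality judgment and the 1-4 relevance rating. The default cutoffs are 0.62 (the CVR threshold listed for 10 experts in the psychometric metrics table) and 0.78 (the item-level CVI). The function names are illustrative. The worked example shows one practical consequence: with 10 experts, CVR takes only discrete values, so an item needs at least 9 "essential" ratings to clear 0.62.

```python
def content_validity_ratio(n_essential: int, n_experts: int) -> float:
    """Lawshe's CVR = (n_e - N/2) / (N/2); ranges from -1 (no expert says
    "essential") to +1 (every expert says "essential")."""
    if not 0 <= n_essential <= n_experts:
        raise ValueError("n_essential must lie between 0 and n_experts")
    half = n_experts / 2
    return (n_essential - half) / half


def item_cvi(relevance_ratings: list[int]) -> float:
    """Item-level CVI: share of experts rating the item 3 or 4 on the 1-4 relevance scale."""
    return sum(1 for r in relevance_ratings if r >= 3) / len(relevance_ratings)


def retain_item(n_essential: int, relevance_ratings: list[int],
                cvr_min: float = 0.62, cvi_min: float = 0.78) -> bool:
    """Keep an item only if it meets both thresholds (defaults assume a 10-expert panel)."""
    n_experts = len(relevance_ratings)
    return (content_validity_ratio(n_essential, n_experts) >= cvr_min
            and item_cvi(relevance_ratings) >= cvi_min)


# With 10 experts: 9 "essential" -> CVR 0.8 (kept); 8 -> CVR 0.6 (below 0.62).
ratings = [4, 3, 2, 4, 4, 3, 1, 4, 3, 4]   # hypothetical relevance ratings
print(content_validity_ratio(9, 10), item_cvi(ratings))  # 0.8 0.8
print(retain_item(9, ratings), retain_item(8, ratings))  # True False
```

The cutoffs are parameters because Lawshe's critical CVR value depends on panel size.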

Qualitative Face and Content Validity

Objective: To identify problems with wording, formatting, ambiguity, and cultural appropriateness.

Protocol:

- Expert Qualitative Assessment: Experts are invited to comment qualitatively on the grammar, wording, item allocation, and scaling of the items [25].

- Target Population Assessment: Conduct cognitive interviews with 8-10 individuals from the target population. Ask them to verbalize their thought process as they answer each question. Probe for understanding of instructions, items, and response options.

- Item Impact Score: To quantitatively assess face validity, members of the target population can rate the importance of each item on a 5-point scale. The impact score is the mean importance score multiplied by the frequency, that is, the proportion of respondents who rated the item 4 or 5 [25].
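A minimal sketch of the impact score follows. It assumes each respondent gives one 1-5 importance rating per item, and it expresses frequency as a proportion (0 to 1), so scores stay on the 0-5 range. The 1.5 retention cutoff in the example is commonly used in the scale-development literature but is not stated in the source, so treat it as an assumption. The function name and ratings are hypothetical.

```python
def item_impact_score(importance_ratings: list[int]) -> float:
    """Impact score = frequency x mean importance for one item.

    importance_ratings: each respondent's 1-5 importance rating.
    frequency: proportion of respondents who rated the item 4 or 5.
    """
    n = len(importance_ratings)
    frequency = sum(1 for r in importance_ratings if r >= 4) / n
    mean_importance = sum(importance_ratings) / n
    return frequency * mean_importance


ratings = [5, 4, 3, 2, 5, 4, 1, 5, 4, 3]   # hypothetical ratings from 10 respondents
score = item_impact_score(ratings)          # 0.6 x 3.6, about 2.16
print(f"{score:.2f}", score >= 1.5)         # 2.16 True (1.5 cutoff is an assumption)
```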

Table 2: Psychometric Validity and Reliability Metrics from a Sample Study

| Psychometric Property | Method Used | Result Reported | Acceptance Threshold |
|---|---|---|---|
| Content Validity | Content Validity Ratio (CVR) | Items with CVR > 0.64 retained | > 0.62 (for 10 experts) |
| Content Validity | Content Validity Index (CVI) | Item-level CVI > 0.78 | ≥ 0.78 |
| Face Validity | Item Impact Score | Calculated for each item | Higher score indicates greater perceived importance |
| Construct Validity | Exploratory Factor Analysis (EFA) | 5 factors explaining 56.5% of variance [25] | KMO > 0.8; Factor loading > 0.3 [25] |
| Internal Consistency | Cronbach's Alpha | Alpha > 0.92 for the entire tool [25] | > 0.7 |
| Composite Reliability | Composite Reliability (CR) | CR > 0.7 [25] | > 0.7 |
| Stability | Test-retest Reliability | Not explicitly mentioned in results | ICC > 0.7 (suggested) |
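Composite reliability, listed in the table above, is usually computed from the standardized loadings of a single factor. The common formula is CR = (Σλ)² / ((Σλ)² + Σ(1 − λ²)), which assumes uncorrelated error terms. The sketch below implements that formula. The loadings are hypothetical and stand in for CFA output, and the function name is illustrative. The check on loading range assumes positively keyed (or already recoded) items.

```python
def composite_reliability(loadings: list[float]) -> float:
    """Composite reliability for one factor from standardized loadings.

    CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances),
    where each error variance is 1 - loading^2 (uncorrelated errors assumed).
    """
    if any(not 0 < lam <= 1 for lam in loadings):
        raise ValueError("expected standardized loadings in (0, 1]")
    squared_sum = sum(loadings) ** 2
    error_variance = sum(1 - lam ** 2 for lam in loadings)
    return squared_sum / (squared_sum + error_variance)


factor_loadings = [0.72, 0.65, 0.81, 0.58]   # hypothetical CFA output for one factor
cr = composite_reliability(factor_loadings)
print(f"CR = {cr:.2f}", cr > 0.7)            # CR = 0.79 True
```

For a multi-factor instrument, CR is reported separately for each factor.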

Phase 3: Pilot Testing and Psychometric Evaluation

A pilot study is conducted to assess the preliminary reliability and functionality of the instrument, followed by a larger study for robust psychometric evaluation.

Pilot Testing Protocol

Objective: To identify any unforeseen problems with the instrument's administration and to conduct a preliminary reliability analysis.

Procedure:

- Administer the instrument to a small, convenience sample (e.g., n=50) from the target population [25].

- Calculate Cronbach's alpha coefficient to assess internal consistency. A value above 0.7 is generally acceptable. Values above 0.9, as found in one pilot study, are usually read as excellent internal consistency, although very high values can also indicate redundant items [25].

- Check inter-item correlation coefficients; items with correlations below 0.3 may need revision [25].
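The inter-item check can be scripted as sketched below. The source does not say whether the 0.3 rule applies to each pairwise correlation or to an item's average. This sketch assumes the latter and flags items whose mean Pearson correlation with the remaining items falls below the threshold. Corrected item-total correlations are a common alternative. The function names and the data are hypothetical.

```python
from math import sqrt


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation between two equally long response vectors."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for an item with no variance")
    return sxy / sqrt(sxx * syy)


def flag_low_inter_item(responses: list[list[float]], threshold: float = 0.3) -> list[int]:
    """Return indices of items whose mean correlation with the other items is below threshold."""
    n_items = len(responses[0])
    columns = [[row[i] for row in responses] for i in range(n_items)]
    flagged = []
    for i in range(n_items):
        others = [pearson(columns[i], columns[j]) for j in range(n_items) if j != i]
        if sum(others) / len(others) < threshold:
            flagged.append(i)
    return flagged


# Hypothetical pilot data: rows are respondents, columns are four items.
pilot = [
    [1, 1, 1, 3],
    [2, 2, 3, 5],
    [3, 3, 3, 1],
    [4, 4, 4, 4],
    [5, 5, 5, 2],
]
print(flag_low_inter_item(pilot))  # [3]: the fourth item does not track the others
```

Flagged items are candidates for revision rather than automatic deletion, consistent with the guidance above.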