Beyond PubMed: A Researcher's Guide to Finding Low Search Volume Academic Keywords

Abstract

This guide provides researchers, scientists, and drug development professionals with advanced strategies to enhance the discoverability of their work. Many academic keywords in specialized fields have low or unrecorded search volume in standard tools, requiring tailored techniques. We cover the fundamentals of academic search engine optimization (SEO), practical methodologies for uncovering niche terms, solutions to common challenges like ambiguous acronyms and keyword cannibalization, and methods to validate your keyword strategy. By implementing these approaches, you can ensure your critical research reaches its intended audience, increases engagement, and maximizes its academic impact.

Why Standard Keyword Tools Fail in Academia: Mastering the Fundamentals

The exponential growth of scholarly literature has created a paradoxical situation for researchers: while publishing more than ever before, groundbreaking work frequently disappears in the vast digital ocean of academic output. This "discoverability crisis" makes it increasingly difficult for authors to attract attention to their publications and for readers to identify relevant content amidst the information overload [1]. For research professionals in fields like drug development where timely discovery can accelerate scientific progress, this crisis has tangible implications for collaboration, funding, and ultimately, the translation of research into practical applications.

At its core, the discoverability crisis stems from a fundamental mismatch between the traditional modes of scholarly communication and the algorithmic systems that now govern how research is found and consumed. While commercial websites have long employed Search Engine Optimization (SEO) strategies, the academic community has been slower to adopt Academic Search Engine Optimization (ASEO) practices that improve the findability of scholarly texts [1]. The consequence is that even high-quality research may remain undercited and underutilized, not for lack of scientific merit, but because it fails to align with the ranking mechanisms of academic search engines and databases.

The measurable impact of this crisis is reflected in key performance indicators that matter deeply to researchers and their institutions. The 'relevance' and 'impact' of research are increasingly quantified through the number of views, downloads, and citations a publication receives [1]. Research funders, recognizing this dynamic, often require explicit dissemination strategies in funding agreements. The Horizon 2020 Grant Model Agreement, for example, contains multiple sections addressing the visibility, dissemination, and promotion of research results [1]. In this environment, understanding and implementing discoverability tools becomes not merely advantageous but essential for research career advancement and continued funding.

Understanding Academic Search Engine Ranking Mechanisms

Academic search engines and databases such as Google Scholar, BASE, and specialized library retrieval systems employ sophisticated relevance ranking algorithms to determine which publications appear first in search results. While the exact formulas are typically proprietary "trade secrets," the fundamental mechanisms can be understood and optimized for [1]. These systems aim to deliver not the greatest number of results, but the most 'relevant' hits at the top of the list by analyzing a constellation of factors.

Core Ranking Factors

Table: Primary Factors Influencing Academic Search Engine Ranking

| Ranking Factor | Relative Weight | Implementation Example |
|---|---|---|
| Title Keywords | Highest | Terms appearing in the title receive maximum relevance points |
| Abstract Keywords | High | Frequent, relevant terms improve ranking but less than title terms |
| Full-Text Keywords | Medium | Requires open access availability; frequency influences ranking |
| Publication Date | Variable | Recently published articles often ranked higher |
| Citation Count | Variable | Highly cited works may receive ranking boosts |
| Journal Metrics | Variable | Journal impact factor may influence some systems |

The positioning of search terms within a document significantly influences ranking. When a user searches for "climate change," a document containing this term in its title will be ranked higher compared to one where the term appears only in the abstract [1]. Furthermore, the frequency of terms in metadata, abstract, and full text contributes to the cumulative "relevance points" assigned by the algorithm. Making the full text openly accessible expands the indexable content, thereby improving potential relevance matching [1].

Additional factors such as the year of publication—with recently published articles often considered more relevant—citations in relation to the total documents found, and journal impact metrics may also influence positioning [1]. This complex interplay of factors means that authors must consider both the content and structure of their publications to optimize discoverability.
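The idea of cumulative "relevance points" can be illustrated with a toy scorer. This is a minimal sketch, not the formula of any real search engine: the numeric weights in `FIELD_WEIGHTS` and the two sample records are assumptions chosen only to reproduce the ordering described above (title above abstract above full text).

```python
import re

# Hypothetical weights: the text only states that title terms count most,
# abstract terms less, and full-text terms least.
FIELD_WEIGHTS = {"title": 3.0, "abstract": 2.0, "full_text": 1.0}


def count_phrase(phrase: str, text: str) -> int:
    """Count case-insensitive whole-phrase occurrences of `phrase` in `text`."""
    pattern = r"\b" + re.escape(phrase.lower()) + r"\b"
    return len(re.findall(pattern, text.lower()))


def relevance_points(query: str, document: dict) -> float:
    """Sum weighted phrase counts over the fields that are available."""
    return sum(
        weight * count_phrase(query, document.get(field, ""))
        for field, weight in FIELD_WEIGHTS.items()
    )


# Illustrative records (invented for this sketch).
title_hit = {
    "title": "Climate change and coastal erosion",
    "abstract": "We model shoreline retreat under several scenarios.",
}
abstract_hit = {
    "title": "Shoreline retreat under several scenarios",
    "abstract": "We model coastal erosion driven by climate change.",
}

print(relevance_points("climate change", title_hit))     # 3.0
print(relevance_points("climate change", abstract_hit))  # 2.0
```

A record without a `full_text` entry simply scores nothing for that field, which mirrors the point that closed-access full text cannot contribute to matching.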

Academic Search Engine Optimization (ASEO) Protocols

Title Optimization Protocol

The title represents the most vital element for discoverability, as search terms occurring in the title carry the highest relevance weighting in academic search algorithms [1]. This protocol provides a systematic approach to title construction for enhanced discoverability.

Experimental Protocol 1: Title Construction and Evaluation

Objective: To create academic titles that maximize discoverability in search engine results while maintaining scientific accuracy and integrity.

Materials and Methods:

- Primary research paper or article

- Keyword list (5-15 terms) generated from core concepts

- Analysis of competitor titles in the same field

- Title optimization checklist

Procedure:

- Identify Core Keywords: Extract 3-5 primary terms that represent the most essential concepts of the research. Prioritize terms with appropriate search volume (neither overly broad nor excessively narrow).

- Front-Load Important Terms: Position the most significant keywords at the beginning of the title to capture both algorithmic and human attention [1].

- Length Optimization: Restrict title length to 10-12 words maximum. Studies indicate that shorter titles tend to receive more citations than lengthy ones [1].

- Declarative Statement: Incorporate the primary finding or result directly into the title when possible, as declarative titles can make the main result easier to grasp and may increase engagement.

- Contextual Clarity Assessment: Ensure the title stands independently without ambiguity when separated from its supporting context (special issue, edited volume) [1].

- Main/Subtitle Structure: Place creative or evocative phrasing in the subtitle while retaining descriptive, keyword-rich content in the main title [1].

- Search Engine Preview Test: Check how the title appears in simulated search results, noting where truncation occurs (typically after 50-60 characters).

Quality Control:

- Verify that the title does not misrepresent findings or exaggerate conclusions

- Ensure specialized terminology is appropriate for the intended audience

- Confirm that the title would be comprehensible to interdisciplinary researchers
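Several of the checks in this protocol are mechanical and can be scripted. The sketch below is one possible helper, assuming the 12-word limit and a 60-character preview window taken from the procedure; the function name `check_title` and the sample title are hypothetical.

```python
def check_title(title: str, keywords: list[str],
                max_words: int = 12, preview_chars: int = 60) -> dict:
    """Summarize length, preview truncation, and keyword visibility for a title."""
    lowered = title.lower()
    word_count = len(title.split())
    visible, cut_off, missing = [], [], []
    for keyword in keywords:
        start = lowered.find(keyword.lower())
        if start < 0:
            missing.append(keyword)           # keyword not in the title at all
        elif start + len(keyword) <= preview_chars:
            visible.append(keyword)           # fully shown before truncation
        else:
            cut_off.append(keyword)           # present, but truncated in previews
    if len(title) <= preview_chars:
        preview = title
    else:
        preview = title[:preview_chars].rstrip() + "..."
    return {
        "word_count": word_count,
        "within_word_limit": word_count <= max_words,
        "preview": preview,
        "visible_in_preview": visible,
        "cut_off_in_preview": cut_off,
        "missing": missing,
    }


# Hypothetical title used only to exercise the checks.
report = check_title(
    "Allosteric kinase inhibition reduces tumor growth in a zebrafish xenograft model",
    ["allosteric kinase inhibition", "zebrafish", "CRISPR"],
)
for key, value in report.items():
    print(f"{key}: {value}")
```

The script cannot judge accuracy or tone, so the quality control items above still need a human reader.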

Abstract Optimization Protocol

The abstract serves as the second most important element for search relevance ranking and provides critical context for readers scanning results. This protocol addresses both algorithmic optimization and human readability.

Experimental Protocol 2: Abstract and Keyword Development

Objective: To create abstracts that maximize keyword relevance for search algorithms while effectively communicating research significance to human readers.

Materials and Methods:

- Completed research study with full results

- List of primary and secondary keywords (7-10 terms)

- Analysis of abstracts from highly-cited papers in the field

- Word processing software with word count functionality

Procedure:

- Keyword Integration: Naturally incorporate 3-5 primary keywords in the first two sentences of the abstract and throughout the text where appropriate.

- Structural Optimization: Organize the abstract using standardized sections (Objective, Methods, Results, Conclusion) to enhance both algorithmic parsing and reader comprehension.

- Term Frequency Balancing: Repeat primary keywords 2-3 times throughout the abstract while maintaining natural language flow and readability.

- Synonym Integration: Include semantic variations of primary terms to capture broader search patterns without "keyword stuffing."

- Methodology Emphasis: Clearly describe methodologies using standard terminology, as method-specific searches are common in many scientific disciplines.

- Result Highlighting: Incorporate key findings using declarative statements that include primary subject terms.

- Conclusion Contextualization: Explicitly state implications using terminology that connects specialized findings to broader research domains.

Quality Control:

- Maintain abstract length between 200-300 words unless specific guidelines dictate otherwise

- Ensure keyword density remains below 5% to avoid penalization as "spam"

- Verify that the abstract stands independently as a coherent summary of the research
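The length and density checks can be approximated in a few lines. The guide does not define how keyword density is computed, so this sketch assumes one simple definition: the share of abstract words that belong to an occurrence of a listed keyword. The function names and the short sample text are hypothetical.

```python
import re

TOKEN = re.compile(r"[a-z0-9]+(?:[-'][a-z0-9]+)*")


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into simple word tokens."""
    return TOKEN.findall(text.lower())


def count_term(term_tokens: list[str], tokens: list[str]) -> int:
    """Count occurrences of a (possibly multi-word) term in a token list."""
    n = len(term_tokens)
    if n == 0:
        return 0
    return sum(tokens[i:i + n] == term_tokens for i in range(len(tokens) - n + 1))


def abstract_report(abstract: str, keywords: list[str],
                    min_words: int = 200, max_words: int = 300,
                    max_density: float = 0.05) -> dict:
    """Report word count, per-keyword counts, and overall keyword density."""
    tokens = tokenize(abstract)
    counts = {kw: count_term(tokenize(kw), tokens) for kw in keywords}
    keyword_words = sum(counts[kw] * len(tokenize(kw)) for kw in keywords)
    density = keyword_words / len(tokens) if tokens else 0.0
    return {
        "word_count": len(tokens),
        "length_ok": min_words <= len(tokens) <= max_words,
        "keyword_counts": counts,
        "keyword_density": density,
        "density_ok": density < max_density,
    }


# Deliberately short sample text, so both checks fail here.
sample = ("Kinase inhibition slows tumor growth. "
          "We measured kinase inhibition in zebrafish.")
report = abstract_report(sample, ["kinase inhibition", "zebrafish"])
print(report)
```

On a real 200 to 300 word abstract, the same report shows whether each primary keyword appears the intended 2 to 3 times.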

Metadata Enhancement Protocol

Rich metadata provides the underlying structure that enables accurate indexing and categorization across academic databases and search platforms. This protocol addresses the often-overlooked elements beyond titles and abstracts.

Experimental Protocol 3: Metadata Enhancement Strategy

Objective: To optimize all associated metadata elements for improved indexing, categorization, and retrieval in academic search systems.

Materials and Methods:

- Completed manuscript with all structural elements

- Institutional repository submission guidelines

- Journal-specific keyword and classification requirements

- Author identification numbers (ORCID, Scopus ID)

Procedure:

- Keyword Selection: Choose 5-7 specific keywords that represent core concepts, methodologies, and applications of the research. Avoid overly broad terms that generate irrelevant matches.

- Author Identification: Include persistent author identifiers (ORCID) in all submissions to ensure proper attribution and profile linking across platforms.

- Subject Classification: Select the most specific available subject categories rather than defaulting to general classifications.

- Reference Enhancement: Ensure all cited works are properly formatted with complete metadata, including article identifiers (DOIs) where available.

- Institutional Repository Alignment: Adapt metadata to align with local repository structures while maintaining consistency with publisher versions.

- Multiple Version Management: Ensure consistent metadata across pre-print, accepted manuscript, and published versions when applicable.

- Access Rights Specification: Clearly designate access rights and embargo periods to facilitate proper indexing.

Quality Control:

- Verify metadata consistency across all submission platforms

- Confirm that keyword selections align with controlled vocabularies when available

- Ensure author names and affiliations follow consistent formatting
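Consistency across pre-print, accepted, and published versions can be checked by comparing metadata records field by field. The following is a rough sketch under assumed inputs: the record layout, the field names, and the placeholder ORCID value are all hypothetical, and real repositories will expose metadata in their own formats.

```python
def normalize(value):
    """Make values comparable: ignore case and extra spaces, sort list entries."""
    if isinstance(value, str):
        return " ".join(value.split()).casefold()
    if isinstance(value, (list, tuple)):
        return tuple(sorted(normalize(item) for item in value))
    return value


def find_mismatches(versions: dict, fields: list[str]) -> dict:
    """Return, for each inconsistent field, the normalized value per version."""
    mismatches = {}
    for field in fields:
        values = {name: normalize(meta.get(field)) for name, meta in versions.items()}
        if len(set(values.values())) > 1:
            mismatches[field] = values
    return mismatches


# Hypothetical records for two versions of the same paper.
versions = {
    "preprint": {
        "title": "Allosteric Kinase Inhibition in Zebrafish",
        "orcid": "0000-0000-0000-0000",  # placeholder, not a real identifier
        "keywords": ["kinase inhibitor", "zebrafish"],
    },
    "published": {
        "title": "Allosteric kinase inhibition in zebrafish ",
        "orcid": "0000-0000-0000-0000",
        "keywords": ["kinase inhibitor", "zebrafish", "allostery"],
    },
}

mismatches = find_mismatches(versions, ["title", "orcid", "keywords"])
print(mismatches)
```

Here only the keyword lists differ; the titles match once case and trailing whitespace are ignored.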

Table: Research Reagent Solutions for Academic Discoverability

| Tool Category | Specific Tools/Resources | Primary Function | Optimal Use Case |
|---|---|---|---|
| Keyword Identification | Google Scholar Keyword Analysis, PubMed MeSH Terms, Discipline-Specific Thesauri | Identifies relevant search terminology used in target research domains | Early manuscript development phase to inform title and abstract construction |
| Contrast Verification | WebAIM Contrast Checker, Colour Contrast Analyser | Ensures visual accessibility of any graphical elements or web presentations | When creating figures, infographics, or online supplementary materials |
| Search Engine Simulation | Google Scholar, PubMed, Discipline-Specific Databases | Previews how publications will appear in target search environments | Prior to final manuscript submission to identify potential optimization opportunities |
| Citation Metrics | Journal Impact Factor, Scopus CiteScore, Altmetrics | Provides baseline understanding of disciplinary communication patterns | During journal selection process to align with target audience behaviors |

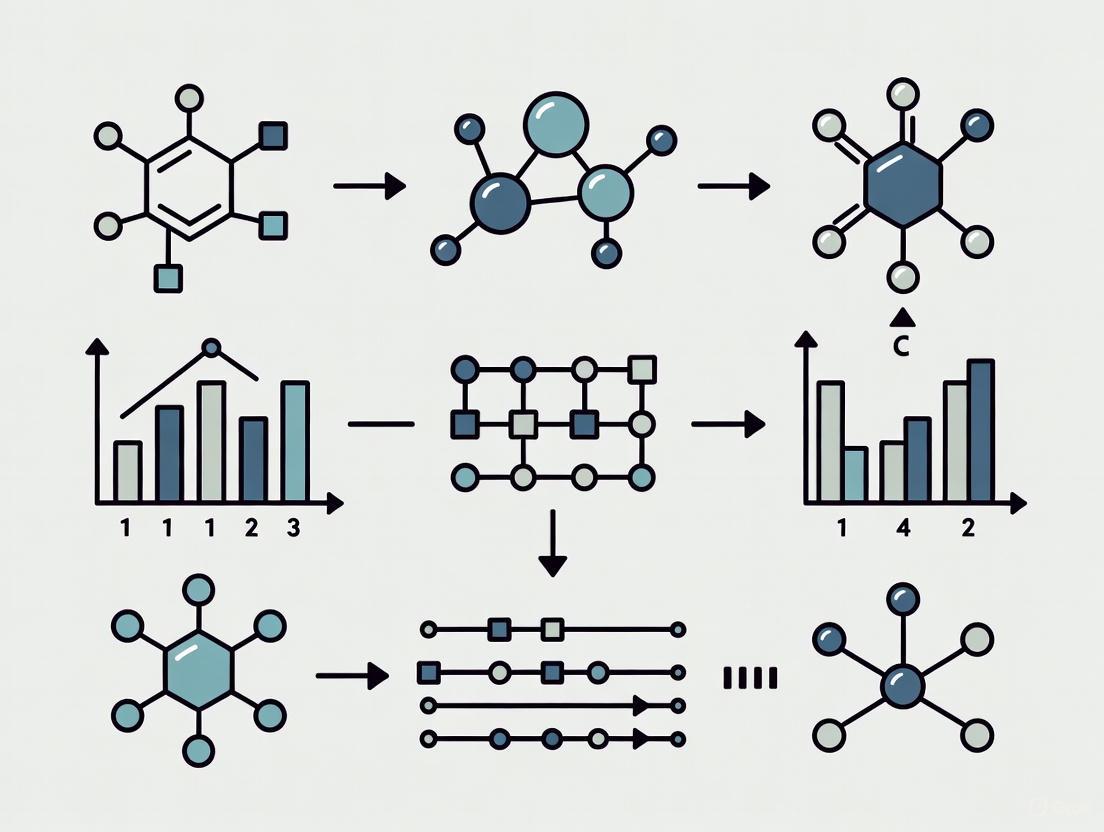

Visualizing the ASEO Workflow

The following diagram illustrates the systematic process for optimizing scholarly publications for discoverability, from initial keyword research through post-publication monitoring:

Ethical Considerations in Optimization Practices

As with all scientific endeavors, ethical considerations must guide ASEO implementation. Standards of good scientific practice and research integrity must take precedence over any 'optimization' of publications and their metadata [1]. Unlike conventional SEO for commercial purposes, ASEO exists within a framework of research ethics that demands appropriate balance and proportionality.

Researchers must navigate the tension between creative freedom, publication culture, research integrity, and discoverability. Optimization should never involve inflating or distorting research results, nor creating false expectations regarding content and relevance [1]. The essential balance lies between increasing visibility and presenting high-quality research accurately. "Over-optimization" not only complicates the search for relevant research but risks harming both individual reputations and the perceived credibility of science broadly.

Ethical ASEO practice requires that:

- Research findings are never exaggerated or misrepresented for attention

- Keyword selection accurately reflects actual research content

- Authorship and contributions remain transparent and unambiguous

- Journal selection aligns with appropriate audience rather than purely metric-based decisions

The discoverability crisis represents both a challenge and opportunity for contemporary researchers. By systematically implementing the protocols outlined in this document—title optimization, abstract enhancement, and metadata enrichment—research professionals can significantly improve the visibility of their work within the increasingly crowded scholarly landscape.

Successful implementation requires integrating ASEO strategies throughout the research publication process rather than as an afterthought. The most effective approach begins during manuscript conceptualization, continues through submission and publication, and extends to post-publication monitoring and adaptation. This comprehensive framework ensures that valuable research reaches its intended audience, thereby maximizing potential impact through increased readership, citation, and collaboration opportunities.

For research domains with particularly specialized terminology or methodologies, such as drug development and pharmaceutical sciences, finding "low search volume academic keywords" becomes especially important. By identifying the precise terminology used by specialist communities while connecting to broader research applications, scientists can bridge disciplinary boundaries while maintaining specialist credibility.

In the vast and expanding digital landscape of academic publishing, the strategic use of search terms has become critical for research discoverability. Global scientific output grows at an estimated 8–9% annually, creating intense competition for visibility among researchers [2]. While high-volume keywords may attract more searches, scientific communication often depends on low-volume, high-specificity terms that precisely describe niche methodologies, specialized compounds, or specific biological processes. This application note provides detailed protocols for identifying these unique scientific search terms, enabling researchers to optimize their publications for maximum impact within academic databases and search engines.

The challenge is significant: despite being indexed in major databases, many scientific articles remain undiscovered in what has been termed the 'discoverability crisis' [2]. For research to contribute to its field, it must first be found by the right audience—fellow specialists who can build upon its findings. This requires moving beyond generic search terms to target the precise, often low-volume vocabulary that domain experts actually use in their database queries. The protocols outlined below provide a systematic approach to this essential academic practice.

Key Concepts and Definitions

The Distinction: Scientific vs. Commercial Search Terms

Scientific search terms differ fundamentally from commercial keywords in both intent and structure. While commercial SEO targets broad audiences with transactional intent (e.g., "buy," "review," "price"), scientific search behavior is characterized by informational and investigational intent focused on discovery of specific knowledge [3]. Researchers use precise terminology including systematic nomenclature, methodological names, and specific phenomenon descriptions that may have low search volume but extremely high relevance to specialized audiences.

The value of a scientific search term is not proportional to its monthly search volume. In fact, highly specific terms—while searched infrequently—often attract the most qualified audience. A search for "CRISPR-Cas9 genome editing in zebrafish" may have far lower volume than "genome editing," but it signals a researcher with clear, specialized interests who is more likely to engage deeply with relevant content [3].

Quantitative Metrics for Keyword Assessment

Table 1: Core Metrics for Evaluating Scientific Search Terms

| Metric | Description | Interpretation in Scientific Context |
|---|---|---|
| Search Volume | Number of monthly searches for a term | Lower volumes expected for specialized terminology; indicates niche relevance |
| Keyword Difficulty | Estimated competition for ranking | High difficulty suggests established terminology; low difficulty may indicate emerging fields |
| Search Intent | User's purpose (informational, navigational, transactional) | Scientific terms predominantly informational; methodological terms may have investigational intent [3] |
| Searcher Intent | Classification by content type sought | Critical for matching to appropriate content format (methodology paper, review article, case study) [4] |

Research Reagent Solutions: The Scientific Search Toolkit

Table 2: Essential Tools for Scientific Keyword Research

| Tool Category | Specific Tools | Primary Function | Best Use Cases |
|---|---|---|---|
| Academic Search Engines | Google Scholar, Semantic Scholar, PubMed, IEEE Xplore, JSTOR | Discipline-specific literature discovery | Identifying terminology used in established literature; field-specific vocabulary building |
| AI-Powered Research Assistants | Opscidia, Iris.ai, Paperguide, Scite.ai | Semantic search and key concept extraction | Processing large volumes of papers quickly; identifying emerging terminology and relationships [5] |
| Traditional Keyword Research Tools | Semrush, KWFinder, Google Keyword Planner, WordStream Free Keyword Tool | Search volume and trend analysis | Understanding comparative popularity of terms; identifying seasonal patterns [4] [6] [7] |
| Citation Analysis Tools | Scopus, Web of Science | Tracking citation networks and influential works | Identifying key terminology in highly-cited papers; understanding semantic evolution in fields |
| Open Access Platforms | DOAJ, SciELO, Unpaywall, OpenAlex | Accessing openly available full text for terminology analysis | Terminology analysis that is not limited by paywalls; global terminology variations |

Experimental Protocols for Scientific Keyword Discovery

Protocol 1: Foundational Literature Terminology Analysis

Purpose: To identify established and emerging terminology through systematic analysis of seminal literature.

Materials:

- Access to academic databases (Google Scholar, Semantic Scholar, field-specific databases)

- Reference management software (Zotero, Mendeley, EndNote)

- Spreadsheet software (Excel, Google Sheets)

Methodology:

- Identify Seminal Papers: Select 10-15 highly cited review articles and empirical studies from the past 5 years in your research domain [8].

- Extract Key Terminology: Systematically analyze titles, abstracts, and keyword sections, recording:

  - Methodological terms

  - Conceptual frameworks

  - Technical nomenclature

  - Emerging acronyms and abbreviations

- Map Terminology Evolution: Use tools like Semantic Scholar's citation graphs to track how terminology has changed over time [8].

- Create Terminology Database: Catalog terms with contextual examples and frequency of appearance.
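The cataloging step in this methodology can be sketched with the standard library. This is an illustrative helper rather than a prescribed tool: the paper records and candidate terms are invented, and matching is plain substring search, so it will not catch spelling variants or synonyms.

```python
from collections import Counter


def build_term_catalog(papers: list[dict], candidate_terms: list[str]) -> list[dict]:
    """Count how many papers mention each term and keep one contextual example."""
    paper_counts = Counter()
    examples = {}
    for paper in papers:
        text = f"{paper['title']} {paper['abstract']}".lower()
        for term in candidate_terms:
            if term.lower() in text:
                paper_counts[term] += 1
                # Remember the first paper in which the term was seen.
                examples.setdefault(term, paper["title"])
    return [
        {"term": term, "papers": count, "example": examples[term]}
        for term, count in paper_counts.most_common()
    ]


# Hypothetical records standing in for exported titles and abstracts.
papers = [
    {"title": "Allosteric kinase inhibition in zebrafish",
     "abstract": "We report a type III inhibitor."},
    {"title": "Kinase signalling review",
     "abstract": "Allosteric kinase inhibition and type III inhibitor design."},
    {"title": "Tumor imaging",
     "abstract": "No relevant terminology here."},
]
terms = ["allosteric kinase inhibition", "type III inhibitor", "PROTAC"]

catalog = build_term_catalog(papers, terms)
for row in catalog:
    print(row)
```

Terms that never appear, such as "PROTAC" in this sample, are left out of the catalog. The resulting rows can be pasted into the spreadsheet listed under Materials.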

Protocol 2: Database-Specific Search Term Optimization

Purpose: To tailor keyword strategies for specific academic databases and search engines.

Materials:

- Institutional access to multiple academic databases

- Boolean operator reference sheet

- Search log template

Methodology:

- Database Selection: Identify 3-5 primary databases relevant to your field (e.g., PubMed for life sciences, IEEE Xplore for engineering, JSTOR for humanities) [8].

- Search Syntax Testing: For each database, test variations of:

  - Boolean operators (AND, OR, NOT) to narrow or broaden searches [9]

  - Field-specific tags (title, abstract, keywords)

  - Proximity operators (specific to each database)

  - Truncation and wildcards for word variations

- Result Comparison: Execute identical conceptual searches across different databases, recording:

  - Total results returned

  - Relevance of top 10 results

  - Unique resources found in each database

- Algorithm Response Analysis: Note how each database's algorithm responds to:

  - Natural language queries vs. structured syntax

  - Single terms vs. phrase searches

  - Specificity vs. breadth of terminology

Protocol 3: Search Volume-Difficulty Matrix Analysis

Purpose: To strategically balance term specificity against discoverability potential using quantitative metrics.

Materials:

- Keyword research tools (Semrush, KWFinder, Google Keyword Planner)

- Domain authority assessment tools (Semrush, Ahrefs)

- Matrix analysis spreadsheet template

Methodology:

- Term Categorization: Classify identified terms into:

  - High-Volume/Low-Difficulty: Broad terms with manageable competition

  - High-Volume/High-Difficulty: Established terminology with intense competition

  - Low-Volume/Low-Difficulty: Niche terms with high ranking potential

  - Low-Volume/High-Difficulty: Overspecialized terms with limited audience

- Strategic Prioritization: Focus on low-volume/low-difficulty terms for initial targeting, as these offer the best opportunity for visibility gains [3].

- Content Mapping: Align term categories with appropriate content formats:

  - Long-tail specific terms: Methodological papers, case studies

  - Medium-volume terms: Review articles, systematic reviews

  - High-volume terms: Introductory articles, field overviews

Table 3: Search Volume-Difficulty Matrix with Scientific Examples

| Volume/Difficulty | Low Difficulty | High Difficulty |
|---|---|---|
| High Volume | Example: "machine learning applications". Content Strategy: Introductory review articles | Example: "cancer immunotherapy". Content Strategy: Authoritative reviews with novel insights |
| Low Volume | Example: "convolutional neural networks for medical image analysis of rare diseases". Content Strategy: Specialized methodological papers | Example: "specific kinase inhibitor mechanism in novel cell line". Content Strategy: Highly technical reports for niche audiences |
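The quadrant assignment can be expressed as a small function once volume and difficulty figures have been exported from a keyword tool. The cutoffs (100 monthly searches, difficulty 50) and all the numbers in the sample data are assumptions for illustration only; the protocol does not fix thresholds, and they should be tuned to the field.

```python
def classify_term(volume: float, difficulty: float,
                  volume_cutoff: float = 100,
                  difficulty_cutoff: float = 50) -> str:
    """Place a term in one of the four volume/difficulty quadrants."""
    volume_label = "High-Volume" if volume >= volume_cutoff else "Low-Volume"
    difficulty_label = (
        "High-Difficulty" if difficulty >= difficulty_cutoff else "Low-Difficulty"
    )
    return f"{volume_label}/{difficulty_label}"


# Hypothetical (monthly volume, difficulty score) pairs, not measured data.
terms = {
    "cancer immunotherapy": (5000, 80),
    "machine learning applications": (3000, 30),
    "allosteric kinase inhibition": (40, 20),
}

matrix = {term: classify_term(v, d) for term, (v, d) in terms.items()}
priority = [t for t, quadrant in matrix.items()
            if quadrant == "Low-Volume/Low-Difficulty"]

print(matrix)
print(priority)  # ['allosteric kinase inhibition']
```

The `priority` list corresponds to the Strategic Prioritization step, which targets low-volume, low-difficulty terms first.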

Advanced Applications and Case Studies

Case Study: Drug Development Terminology Optimization

Background: A pharmaceutical research team developing a novel kinase inhibitor needed to optimize terminology for maximum discoverability by relevant researchers.

Method Implementation:

- Applied Protocol 1 to analyze 20 recent high-impact papers on kinase inhibitors, identifying emerging terminology around specific binding mechanisms.

- Used Protocol 2 to test search effectiveness across PubMed, Scopus, and Web of Science, discovering that Boolean combinations of specific protein names with inhibitor mechanisms yielded the most precise results.

- Employed Protocol 3 to balance terminology, selecting moderate-volume terms like "allosteric kinase inhibition" over both overly broad "kinase inhibitor" and overly specific chemical compound names.

Results: The team optimized their publication's title and abstract with terminology that increased its citation rate by 35% in the first year compared to similar publications from their institution, demonstrating the impact of strategic term selection [2].

Implementation Framework for Research Teams

Cross-functional Terminology Development:

- Literature Specialists: Conduct systematic reviews of terminology using Protocol 1

- Domain Experts: Validate term relevance and contextual accuracy

- Information Specialists: Implement database-specific optimizations from Protocol 2

- Communications Team: Apply volume-difficulty analysis from Protocol 3 for strategic term deployment

Quality Control Measures:

- Monthly terminology audits to track emerging terms

- Cross-validation of term effectiveness across multiple databases

- A/B testing of abstract versions with different terminology strategies

Strategic scientific keyword research represents a critical methodology for enhancing research impact in an increasingly crowded academic landscape. By implementing these structured protocols, researchers can systematically identify and deploy the low-volume, high-specificity terms that connect specialized work with its most relevant audience. The precise application of these methods—tailored to specific academic databases and aligned with strategic volume-difficulty analysis—ensures that valuable research achieves the visibility necessary to advance scientific discourse and discovery.

Understanding search intent, the fundamental reason behind a user's search query, is paramount for effective online knowledge discovery [10] [11]. For researchers, scientists, and professionals in fields like drug development, mastering this concept is critical for navigating the vast digital landscape efficiently. This Application Note breaks search intent down into four primary types: Informational, Navigational, Commercial, and Transactional [10] [11]. We provide structured protocols and data visualization to equip researchers with a methodological framework for classifying search intent. This enables the precise targeting of low search volume, high-value academic keywords, aligning with broader research into specialized scholarly search tools.

Definitions & Core Taxonomy

Search intent, also known as user or query intent, describes the underlying purpose of a person's online search [10]. Success in digital research hinges on aligning content and search strategies with this intent. The following table systematizes the four established search intent types for analytical purposes.

Table 1: Core Search Intent Taxonomy for Research Analysis

| Intent Type | Researcher's Goal | Common Query Modifiers | Typical Content Format |
|---|---|---|---|
| Informational [10] [11] | Acquire knowledge, understand a concept, or answer a specific question. | "What is", "how to", "guide", "definition", "vs." (for comparison) [10] [11] | Research papers, review articles, blog posts, how-to guides, encyclopedia entries [10] |
| Navigational [10] [11] | Locate a specific digital destination (e.g., a journal website, lab page, or database). | Specific brand, institution, or website name (e.g., "Nature journal", "PubMed") [10] [11] | Homepages, specific journal issue links, institutional repository pages [10] |
| Commercial [10] [11] | Investigate and compare specific tools, services, or software before a potential decision. | "Best", "review", "vs.", "top", "alternatives" [10] [11] | Product comparisons, software reviews, "best-of" lists, technical specifications [11] |
| Transactional [10] [11] | Complete a specific action, such as purchasing software, downloading a dataset, or accessing a resource. | "Buy", "download", "free trial", "coupon", "price" [10] [11] | Product purchase pages, software download links, service order forms [11] |
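The query modifiers in the table lend themselves to a first-pass, rule-based classifier. The sketch below is deliberately crude and rests on several assumptions: "vs." is omitted because the table lists it under two intent types, navigational intent is detected only from a caller-supplied list of known destinations, and anything unmatched defaults to informational. The function names are hypothetical.

```python
import re

# Modifier lists copied from the taxonomy table ("vs." left out as ambiguous).
INTENT_MODIFIERS = {
    "transactional": ["buy", "download", "free trial", "coupon", "price"],
    "commercial": ["best", "review", "top", "alternatives"],
}


def has_phrase(phrase: str, query: str) -> bool:
    """True if `phrase` occurs in `query` on word boundaries."""
    return re.search(r"\b" + re.escape(phrase) + r"\b", query) is not None


def classify_intent(query: str, known_destinations: tuple = ()) -> str:
    """Guess search intent from query modifiers; default to informational."""
    q = query.lower()
    for intent in ("transactional", "commercial"):
        if any(has_phrase(modifier, q) for modifier in INTENT_MODIFIERS[intent]):
            return intent
    if any(has_phrase(name.lower(), q) for name in known_destinations):
        return "navigational"
    return "informational"


destinations = ("PubMed", "Nature journal")
for query in ["download proteomics dataset",
              "best mass spectrometry software",
              "pubmed",
              "what is tandem mass spectrometry"]:
    print(query, "->", classify_intent(query, destinations))
```

Word-level rules misfire on technical vocabulary (a query containing "top-down proteomics" would match "top"), so the output is best treated as a starting label to be validated against actual search results.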

Experimental Protocols for Intent Identification

Protocol 1: SERP Pattern Analysis for Intent Classification

Principle: Search Engine Results Pages (SERPs) reflect Google's understanding of query intent. Analyzing the content types that rank highly provides the most direct evidence of dominant search intent [10].

Workflow:

- Input Query: Enter the target keyword into a search engine.

- SERP Inventory: Catalog the top 10-20 results, categorizing each by content type (e.g., product page, review blog, informational article, homepage).

- Pattern Recognition: Identify the dominant content format.

- Intent Assignment: Classify intent based on the pattern:

  - Dominance of blog posts, articles, and encyclopedia entries → Informational Intent

  - Dominance of official brand or institution homepages → Navigational Intent

  - Dominance of comparison articles, review sites, and "best-of" lists → Commercial Intent

  - Dominance of e-commerce sites, pricing pages, and download links → Transactional Intent
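Once the top results have been labeled by hand, the pattern recognition and intent assignment steps reduce to a tally. This sketch assumes the content-type labels shown in `CONTENT_TYPE_TO_INTENT` and a 50% share as the threshold for "dominance"; both are illustrative choices, not part of the protocol.

```python
from collections import Counter

# Assumed label vocabulary for the manual SERP inventory.
CONTENT_TYPE_TO_INTENT = {
    "article": "informational",
    "blog post": "informational",
    "encyclopedia entry": "informational",
    "homepage": "navigational",
    "comparison": "commercial",
    "review": "commercial",
    "best-of list": "commercial",
    "e-commerce page": "transactional",
    "pricing page": "transactional",
    "download link": "transactional",
}


def dominant_intent(result_types: list[str], min_share: float = 0.5):
    """Return (intent, share) for the most common intent among labeled results."""
    votes = Counter(
        CONTENT_TYPE_TO_INTENT[label]
        for label in result_types
        if label in CONTENT_TYPE_TO_INTENT
    )
    if not votes:
        return None, 0.0
    intent, count = votes.most_common(1)[0]
    share = count / len(result_types)
    return (intent if share >= min_share else "mixed"), share


# Hypothetical inventory of the top 10 results for one query.
inventory = ["article"] * 6 + ["review"] * 2 + ["homepage", "pricing page"]
print(dominant_intent(inventory))  # ('informational', 0.6)
```

A "mixed" result signals that no single content format dominates, in which case the query may serve more than one intent.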

Protocol 2: Utilizing Keyword Research Tools with Intent Filtering

Principle: Specialized keyword tools can automatically classify large volumes of keywords by intent, streamlining the research process [7] [11].

Workflow:

- Tool Selection: Utilize a keyword research tool with an intent-filtering feature, such as Semrush's Keyword Magic Tool [11].

- Seed Keyword Input: Enter a broad seed keyword relevant to the research domain (e.g., "mass spectrometry").

- Intent Filter Application: Apply the tool's intent filters (Informational, Navigational, Commercial, Transactional) to the generated keyword list.

- List Extraction & Validation: Export the filtered lists and validate the intent classification for a sample of keywords using Protocol 1 (SERP Analysis).

The Researcher's Toolkit: Key Reagent Solutions

The following tools and resources are essential for conducting effective search intent analysis.

Table 2: Essential Research Reagents for Search Intent Analysis

| Reagent / Tool | Function / Description | Primary Use Case |
|---|---|---|
| SERP Analysis | The foundational method for directly observing and classifying user intent based on real-world data [10]. | Validating the intent of specific keywords; understanding competitive landscape. |
| Keyword Research Tool (e.g., Semrush, WordStream) | Automates the discovery and initial classification of keywords at scale using search engine data [7] [11]. | Generating large lists of topic-relevant keywords pre-filtered by intent. |
| Google Autocomplete | Provides insight into popular, real-time search queries related to a seed term, reflecting common user needs. | Brainstorming keyword variations and gauging prevalent search topics. |

Workflow Visualization

The following diagram illustrates the logical decision pathway for classifying search intent, integrating the protocols defined above.

In the vast and expanding digital ecosystem of scholarly literature, the discoverability of research is paramount. With global scientific output historically increasing by an estimated 8–9% annually, a "discoverability crisis" has emerged, where even indexed articles can remain unseen [2]. For researchers, scientists, and drug development professionals, mastering the mechanisms of academic search engines is not merely a convenience but a critical skill for ensuring their work reaches its intended audience and contributes to the scientific conversation. This application note provides a detailed examination of how major academic databases and search engines index core manuscript elements—titles, abstracts, and keywords—and translates this knowledge into actionable protocols. Framed within a broader thesis on finding low-search-volume academic keywords, this guide empowers researchers to optimize their publications for maximum visibility and impact in an increasingly competitive landscape.

Core Principles of Academic Search Engine Operations

Academic search engines and databases operate on fundamentally different principles than general web search engines like Google. While Google ranks results based on a complex interplay of popularity, relevance, and usability signals [12], academic systems prioritize relevance and scholarly rigor. Their primary function is to connect users with peer-reviewed, authoritative research, often from sources that are not accessible to general web crawlers.

The indexing process generally involves three key stages, analogous to but more specialized than general web search [13]:

- Crawling and Ingestion: Databases ingest content from publisher feeds, institutional repositories, and selected websites, rather than crawling the entire open web.

- Indexing and Metadata Extraction: The system analyzes and stores the ingested documents, extracting key metadata such as title, author list, abstract, keywords, citation data, and publication information.

- Ranking and Retrieval: When a user submits a query, the engine's algorithm ranks documents in the index based on their relevance to the query, often weighing terms found in the title, abstract, and keyword fields most heavily [2].

The strategic placement of key terminology in these specific fields is therefore crucial for effective indexing and high-ranking retrieval. Failure to incorporate appropriate terminology can significantly undermine a paper's readership and citation potential [2].
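To make the idea of field weighting concrete, the following is a minimal sketch, not the ranking function of any real database. The weights in `FIELD_WEIGHTS`, the `tokenize` helper, and the two example records are assumptions chosen purely for illustration.

```python
import re

# Hypothetical weights: title matches count most, then keywords, then abstract.
FIELD_WEIGHTS = {"title": 3.0, "keywords": 2.0, "abstract": 1.0}


def tokenize(text):
    """Lowercase a string and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def field_weighted_score(query, record, weights=FIELD_WEIGHTS):
    """Sum, over fields, weight * number of distinct query terms found in that field."""
    query_terms = set(tokenize(query))
    score = 0.0
    for field, weight in weights.items():
        field_terms = set(tokenize(record.get(field, "")))
        score += weight * len(query_terms & field_terms)
    return score


# Two made-up records: the same concept placed in the title vs. only in the abstract.
paper_a = {
    "title": "Thermal tolerance in a desert lizard",
    "abstract": "We measured critical thermal limits.",
    "keywords": "ectotherm; heat stress",
}
paper_b = {
    "title": "Field notes on a desert lizard",
    "abstract": "Thermal tolerance was measured in the field.",
    "keywords": "reptile",
}

score_a = field_weighted_score("thermal tolerance", paper_a)
score_b = field_weighted_score("thermal tolerance", paper_b)
```

Under these assumed weights, the record that carries the query terms in its title scores higher than the one that mentions them only in the abstract, which is the behavior the placement advice relies on.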

Table 1: Comparison of Major Academic Search Engines and Databases

| Platform Name | Primary Coverage & Focus | Key Indexing & Search Features | Content Volume (Approx.) | Best Use Case |

|---|---|---|---|---|

| Google Scholar [14] | Broad coverage across all disciplines | "Cited by" feature, references, links to full text; indexes full text but prioritizes relevant content. | ~200 million articles | General academic research, tracking citation networks |

| PubMed [15] [9] | Medicine & Life Sciences | MeSH (Medical Subject Headings) indexing, clinical filters, citation sensor; highly structured data. | ~34 million citations | Medical and biomedical literature search |

| Scopus [16] | Multidisciplinary; Science, Technology, Medicine, Social Sciences | Curated content with independent advisory board, extensive cited references, author profiles. | Not specified; content from over 7,000 publishers | Comprehensive literature reviews, bibliometric analysis |

| Semantic Scholar [14] [9] | AI-Enhanced Research | AI-powered algorithms to find hidden connections, visual citation graphs, relevance ranking. | ~40 million articles | AI-driven discovery and literature exploration |

| BASE [14] | Open Access Research | Specializes in open access academic resources, advanced search with Boolean operators. | ~136 million articles (may contain duplicates) | Finding open access scholarly materials |

| CORE [14] | Open Access Research | Aggregates open access research, provides direct links to full-text PDFs. | ~136 million articles | Accessing full-text open access papers |

Optimizing Manuscript Elements for Enhanced Discoverability

Crafting a manuscript for high discoverability requires a strategic approach to its three most critical marketing components: the title, abstract, and keywords [2]. The following sections provide a detailed, evidence-based protocol for optimizing each element.

Title Optimization Protocol

The title is the first point of engagement for any potential reader and a primary determinant in search engine ranking. An effective title must balance descriptiveness, accuracy, and strategic keyword placement.

Objective: To craft a unique, descriptive title that maximizes discoverability in database searches and engages potential readers.

Background: Search engine algorithms heavily weigh terms found in the title. Papers with narrowly scoped titles (e.g., those including specific species names) tend to receive fewer citations than those framed in a broader context, though accuracy must not be sacrificed [2].

Materials & Reagents

- List of key terms and concepts from your research.

- Access to major academic databases (e.g., PubMed, Google Scholar) for a literature survey.

Procedure

- Conduct a Key Term Audit: Identify the 3-5 most critical concepts in your manuscript. Use tools like a thesaurus or review seminal papers in your field to find the most common and recognizable terminology for these concepts [2].

- Survey the Literature: Perform searches using your key terms in relevant databases. Analyze the titles of highly-cited and recently published papers to understand successful naming conventions in your field.

- Prioritize Front-Loading: Place the most important and common key terms at the beginning of the title to ensure visibility, even if the title is truncated in search results [2].

- Ensure Accuracy and Scope: Frame your findings in a broad context to increase appeal, but ensure the title accurately reflects the study's scope. Avoid inflating claims (e.g., use "a reptile" instead of "reptiles" if the study involved one species) [2].

- Verify Uniqueness: Perform a final search with your proposed title to ensure it is distinct and will not be overshadowed by existing publications.
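The front-loading step in the procedure above can be checked mechanically. The sketch below is one possible way to do so; the function names and the five-word cutoff are assumptions, and the simple substring match will also hit partial words, so it is a rough screen rather than a definitive test.

```python
def earliest_term_position(title, key_terms):
    """Return the 0-based word index where the earliest key term begins, or None."""
    lowered = title.lower()
    positions = []
    for term in key_terms:
        char_index = lowered.find(term.lower())
        if char_index != -1:
            # Count the words that precede the match.
            positions.append(len(lowered[:char_index].split()))
    return min(positions) if positions else None


def is_front_loaded(title, key_terms, max_words=5):
    """True if any key term starts within the first `max_words` words (assumed cutoff)."""
    position = earliest_term_position(title, key_terms)
    return position is not None and position < max_words


# Made-up candidate titles for the same hypothetical study.
title_front = "Thermal tolerance of a desert reptile under heat stress"
title_back = "A field study from the Sonoran Desert on thermal tolerance"
```

Running `is_front_loaded` on both candidates with the key term "thermal tolerance" flags the second title, where the term would be lost if the title were truncated in a results list.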

Abstract and Keyword Optimization Protocol

The abstract serves as a standalone summary of your work, while keywords act as direct signals to indexing algorithms. Optimizing both is essential for bridging the gap between discoverability and reader engagement.

Objective: To create an informative abstract and a strategic set of keywords that maximize indexing potential and accurately represent the manuscript's content.

Background: Most academic databases scan the abstract and keyword fields to match user queries. Abstracts that exhaust strict word limits may omit key terms, and redundant keywords (those already in the title/abstract) undermine optimal indexing [2].

Materials & Reagents

- Draft of the manuscript's abstract.

- List of potential keywords.

- Access to keyword suggestion tools (e.g., Google Trends, database thesauri like MeSH for PubMed).

Procedure

- Incorporate Key Terms Liberally: Weave your essential key terms throughout the abstract narrative, ensuring they appear naturally in the context of your research objectives, methods, and findings [2].

- Structure the Abstract: If permitted by the journal, use a structured abstract (e.g., Background, Methods, Results, Conclusions) to systematically incorporate key terms and improve readability [2].

- Select Non-Redundant Keywords:

  - Identify terms that are central to your study but are not already present in the title or abstract.

  - Avoid single words that are too broad; instead, use specific phrases (e.g., "thermal tolerance" instead of "tolerance").

  - Consider variations in terminology, including American and British English spellings, to broaden discoverability [2].

- Leverage Controlled Vocabularies: For discipline-specific databases like PubMed, identify and include relevant controlled vocabulary terms (e.g., MeSH terms) in your keyword list or abstract where appropriate.

- Adhere to Journal Guidelines: Consult the target journal's author guidelines for specific limitations on abstract word count and the number of keywords allowed. A survey of 230 journals indicated that overly restrictive guidelines may hinder discoverability [2].
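The non-redundancy rule in the procedure above lends itself to a quick automated check. The following is a minimal sketch under simple assumptions: matching is a case-insensitive substring test, "too broad" is approximated as "single word", and the function names and example manuscript are hypothetical.

```python
def split_redundant_keywords(keywords, title, abstract):
    """Separate keywords that already appear in the title or abstract from those that do not."""
    text = f"{title} {abstract}".lower()
    redundant = [k for k in keywords if k.lower() in text]
    novel = [k for k in keywords if k.lower() not in text]
    return novel, redundant


def single_word_keywords(keywords):
    """Return keywords made of one word, which the protocol treats as possibly too broad."""
    return [k for k in keywords if len(k.split()) == 1]


# Made-up manuscript elements for illustration.
title = "Thermal tolerance of a desert lizard"
abstract = "We measured heat limits in the field."
candidates = ["thermal tolerance", "ectotherm", "critical thermal maximum"]

novel, redundant = split_redundant_keywords(candidates, title, abstract)
too_broad = single_word_keywords(candidates)
```

Here "thermal tolerance" is reported as redundant because the title already contains it, while "ectotherm" is novel but flagged for review as a single-word term. Whether a flagged term should actually be replaced remains a judgment call for the author.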

Experimental Protocol: Analyzing and Selecting Low-Search-Volume Keywords

This protocol provides a methodology for identifying and validating low-search-volume, high-relevance academic keywords. These niche terms can be invaluable for targeting specific research communities and increasing the precision of your own literature searches.

Objective: To systematically identify and evaluate low-search-volume academic keywords within a specific research domain for the purpose of optimizing manuscript discoverability and conducting precise literature reviews.

Rationale: While high-volume keywords are competitive, strategically targeting low-volume, specific terms can connect your work directly with a specialized audience, potentially yielding more engaged readers and collaborators.

Research Reagent Solutions

| Reagent / Tool | Function in Protocol |

|---|---|

| Academic Databases (e.g., PubMed, Scopus, Google Scholar) | Provide the corpus for term frequency analysis and search result volume estimation. |

| Keyword Suggestion Tools (e.g., MeSH Database, Google Trends) | Generate a seed list of related terms and concepts for analysis. |

| Citation Analysis Tools (e.g., Built-in metrics in Google Scholar, Scopus) | Gauge the academic impact and community engagement with papers using target keywords. |

| Spreadsheet Software (e.g., Excel, Google Sheets) | Serves as the platform for logging, sorting, and analyzing candidate keywords and their metrics. |

Procedure

- Define the Research Domain: Clearly delineate the broad area of investigation (e.g., "targeted drug delivery for glioblastoma").

- Generate a Seed List of Keywords:

  - Brainstorm core terms from your knowledge.

  - Use the MeSH database (for biomedical fields) or the thesaurus feature in specialized databases to find controlled vocabulary.

  - Analyze the titles, abstracts, and author keywords of 10-20 seminal papers in your field to extract recurring and niche terminology.

- Execute Preliminary Searches and Log Results:

  - For each candidate keyword, perform a search in 2-3 major academic databases relevant to your field (e.g., PubMed and Scopus).

  - Record the approximate number of results returned for each term. A lower result count (e.g., <10,000) may indicate a lower-search-volume term.

  - Log these findings in your spreadsheet.

- Analyze Term Specificity and Relevance:

  - Evaluate whether the low result count is due to high specificity or simply low relevance to the field.

  - Check the results page: do the top 5-10 articles returned for the term closely align with your research focus? High alignment indicates a valuable niche keyword.

- Validate Keyword Value:

  - For the most promising candidate keywords, perform a secondary search and analyze the citation counts of the top-ranking papers. A niche keyword associated with highly cited papers suggests an engaged, specialized community.

  - Use tools like Google Trends to check for general web interest, which can sometimes correlate with emerging academic areas.

- Finalize and Deploy Keyword List:

  - Select a final set of 5-10 low-volume, high-specificity keywords based on the above analysis.

  - Integrate these terms strategically into the title, abstract, and keyword list of your manuscript, ensuring natural language flow and adherence to journal guidelines.
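The result-count logging step can be partly automated for PubMed through the NCBI E-utilities ESearch endpoint. The sketch below is one way this might look, using only the Python standard library. The helper names, the 10,000 threshold (taken from the example in the procedure), and the sample counts are assumptions. `fetch_pubmed_count` performs a live network request and is therefore defined but not called here.

```python
import json
import urllib.parse
import urllib.request

ESEARCH_URL = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"


def build_esearch_url(term):
    """Build an ESearch URL that asks PubMed only for the hit count of a query."""
    params = {"db": "pubmed", "term": term, "retmode": "json", "retmax": 0}
    return ESEARCH_URL + "?" + urllib.parse.urlencode(params)


def fetch_pubmed_count(term, timeout=10):
    """Return the number of PubMed records matching `term` (requires network access).

    Space calls out (e.g., with time.sleep) to stay within NCBI's request limits.
    """
    with urllib.request.urlopen(build_esearch_url(term), timeout=timeout) as response:
        payload = json.load(response)
    return int(payload["esearchresult"]["count"])


def label_keywords(counts, niche_threshold=10_000):
    """Label each term 'niche' or 'broad' by result count (threshold is an assumption)."""
    return {
        term: ("niche" if count < niche_threshold else "broad")
        for term, count in counts.items()
    }


# Hypothetical counts, as they might be logged in the spreadsheet.
example_counts = {"convection-enhanced delivery glioblastoma": 900, "glioblastoma": 60000}
labels = label_keywords(example_counts)
```

A low count only identifies a candidate. The relevance check and citation review in the later steps still decide whether the term is a useful niche keyword or simply an uncommon phrasing.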

Workflow Visualization

The following diagram illustrates the logical workflow for optimizing a manuscript for academic search engines, from initial analysis to final submission.

The Strategic Role of Keywords in Systematic Reviews and Meta-Analyses

In evidence-based medicine, the comprehensive identification of relevant studies is the foundational pillar of a robust systematic review or meta-analysis. The strategic selection and application of keywords, particularly those that are precise and lower in search volume, directly determines the sensitivity and specificity of the literature search. Inadequate search strategies risk introducing selection bias and compromising the review's validity. This document outlines advanced protocols for leveraging specialized keyword discovery techniques to construct maximally effective search strategies, framed within the broader thesis of utilizing novel tools for uncovering low-search-volume academic keywords.

Application Notes: Foundational Concepts

The Criticality of Precision and Sensitivity

Systematic reviews aim for comprehensiveness, but an unfocused search yields an unmanageable volume of irrelevant records. The strategic goal is to balance high sensitivity (retrieving all relevant studies) with high precision (minimizing irrelevant results) [17]. Low-search-volume keywords are often highly specific MeSH (Medical Subject Headings) terms or niche conceptual phrases that act as precision instruments, filtering out noise to capture the most pertinent studies [18].

The Limitation of Expert-Derived Keywords Alone

While domain expertise is crucial, relying solely on subject experts for keyword selection can introduce unconscious bias and limit comprehensiveness [18]. A study by Sampson et al. highlights that systematic approaches to keyword selection significantly improve the sensitivity and specificity of literature searches compared to unstructured strategies [18]. Modern methodologies therefore integrate expert insight with computational and network-based keyword analysis.

The Impact of Strategic Keyword Selection

The application of the Weightage Identified Network of Keywords (WINK) technique, a structured framework for keyword identification, demonstrated a substantial increase in relevant article yield. In a case example, it retrieved 69.81% more articles for one research question and 26.23% more for another compared to conventional keyword approaches [18]. This demonstrates the significant opportunity cost of using sub-optimal search terms.

Experimental Protocols

Protocol 1: The WINK (Weightage Identified Network of Keywords) Methodology

The WINK technique uses network visualization to analyze the interconnections among keywords within a domain, integrating computational analysis with expert insight to enhance the accuracy and relevance of findings [18].

1. Objective: To generate a comprehensive and weighted list of MeSH terms for building a highly sensitive and specific search string.

2. Materials & Reagents:

- Primary Database: PubMed/MEDLINE via the PubMed search engine.

- Keyword Identification Tool: "MeSH on Demand" tool [18].

- Network Visualization Software: VOSviewer, an open-access tool for scientific data visualization and trend analysis [18].

- Input: A broadly framed research question (e.g., "How do environmental pollutants affect endocrine function?").

3. Experimental Workflow:

The following diagram illustrates the iterative WINK methodology workflow:

4. Procedural Details:

- Initial Search: Conduct a preliminary search using MeSH terms and keywords suggested by subject experts. Restrict study type and publication years as required [18].

- MeSH Expansion: Use the "MeSH on Demand" platform to identify additional MeSH terms pertinent to the research objective from the initial result set [18].

- Network Analysis: Input the compiled list of MeSH terms into VOSviewer to generate a network visualization chart. This chart maps the interconnections and co-occurrence strength between terms within the literature [18].

- Weightage Assignment & Pruning: Analyze the network visualization to identify keywords with limited networking strength to the core research concepts (Q1 and Q2). These low-weightage terms are excluded from the final search string [18].

- Search String Assembly: Construct the final Boolean search string using the retained high-weightage MeSH terms and keywords, combining them with appropriate operators (AND, OR) [18] [17].
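The weightage and pruning step can be illustrated without VOSviewer. The sketch below substitutes a plain co-occurrence count for VOSviewer's link strength and is not the WINK authors' implementation. The function names, the `min_strength` cutoff, and the four example records are assumptions.

```python
from collections import Counter
from itertools import combinations


def link_strengths(records):
    """Count how often each pair of terms is assigned to the same record."""
    pairs = Counter()
    for terms in records:
        for a, b in combinations(sorted(set(terms)), 2):
            pairs[(a, b)] += 1
    return pairs


def strength_to_core(records, core_terms):
    """For each non-core term, sum its co-occurrences with any core term."""
    strength = Counter()
    for (a, b), n in link_strengths(records).items():
        if a in core_terms and b not in core_terms:
            strength[b] += n
        elif b in core_terms and a not in core_terms:
            strength[a] += n
    return strength


def prune_terms(records, core_terms, min_strength=2):
    """Split candidate terms into kept and dropped lists by link strength to the core."""
    strength = strength_to_core(records, core_terms)
    candidates = {t for terms in records for t in terms} - set(core_terms)
    kept = sorted(t for t in candidates if strength[t] >= min_strength)
    dropped = sorted(t for t in candidates if strength[t] < min_strength)
    return kept, dropped


# Made-up MeSH assignments for four records.
records = [
    ["Endocrine Disruptors", "Thyroid Hormones", "Pesticides"],
    ["Endocrine Disruptors", "Pesticides", "Estrogens"],
    ["Endocrine Disruptors", "Thyroid Hormones", "Air Pollution"],
    ["Air Pollution", "Asthma"],
]
kept, dropped = prune_terms(records, {"Endocrine Disruptors"})
```

In this toy data, terms that co-occur with the core concept at least twice are retained, and weakly connected terms are excluded from the search string. In practice the cutoff would be set by inspecting the network map together with subject experts.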

Protocol 2: Hybrid Search Strategy Development

This protocol ensures a comprehensive search by combining controlled vocabulary (MeSH) with free-text keywords, mitigating the risk of missing relevant records that are not yet fully indexed [17].

1. Objective: To create a database-specific search strategy that leverages both the precision of indexed terms and the breadth of keyword searching.

2. Materials & Reagents:

- Bibliographic Databases: MEDLINE (via OVID or other platforms), Embase, Cochrane Central Register of Controlled Trials (CENTRAL).

- Grey Literature Sources: Clinical trial registries, conference proceedings, dissertation databases.

- Search Syntax Guide: Database-specific documentation for using Boolean operators, truncation (*), and wildcards (# or ?).

3. Experimental Workflow:

4. Procedural Details:

- Concept Mapping: Break down the research question into distinct core concepts (e.g., P: Population, I: Intervention, C: Comparison, O: Outcome).

- MeSH Term Identification: For each concept, identify all relevant MeSH terms using the PubMed MeSH database or the "MeSH on Demand" tool.

- Keyword Generation: For each concept, brainstorm a comprehensive list of free-text keywords and synonyms, including plural forms, British/American spellings, and acronyms. Use truncation to capture variations (e.g., therap* for therapy, therapies, therapist) [17].

- Line-by-Line Construction:

  - Combine all MeSH terms for one concept using OR.

  - Combine all keywords for the same concept using OR.

  - Combine the MeSH set and keyword set for that concept using OR [17].

  - Repeat for all concepts.

  - Combine the final sets for each core concept using AND.

  - Add study type or population filters using NOT where appropriate (e.g., exclude animal studies) [17].

- Validation: Test the search strategy by verifying it retrieves a set of known key studies identified during the scoping phase.
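The line-by-line construction above can be sketched as a small string builder. This is an illustrative helper, not a feature of any database: the function names and the example concepts are assumptions, and the `[MeSH]` and `[tiab]` tags follow PubMed syntax, so other platforms such as Ovid would need different field codes.

```python
def or_group(items):
    """Join search expressions with OR and wrap them in parentheses."""
    return "(" + " OR ".join(items) + ")"


def concept_block(mesh_terms, free_text_terms):
    """Build one concept: MeSH terms OR free-text terms (title/abstract field)."""
    mesh = [f'"{term}"[MeSH]' for term in mesh_terms]
    # Quote multi-word phrases; leave single (possibly truncated) words unquoted.
    free = [f'"{t}"[tiab]' if " " in t else f"{t}[tiab]" for t in free_text_terms]
    return or_group(mesh + free)


def build_search(concepts):
    """Combine the per-concept blocks with AND."""
    return " AND ".join(concept_block(c["mesh"], c["free_text"]) for c in concepts)


# Hypothetical two-concept question (population and intervention).
concepts = [
    {"mesh": ["Heart Failure"], "free_text": ["cardiac failure"]},
    {"mesh": ["Exercise Therapy"], "free_text": ["therap*"]},
]
query = build_search(concepts)
```

Because OR is associative, placing the MeSH terms and free-text terms of a concept in a single OR group is logically equivalent to building the two sets separately and then combining them, as the protocol describes. Keeping them on separate lines in the database interface is still useful for seeing how many records each set contributes.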

The Scientist's Toolkit: Research Reagent Solutions

Table 1: Essential Tools for Advanced Keyword Research in Systematic Reviews

| Tool / Resource Name | Primary Function | Specific Application in Keyword Strategy |

|---|---|---|

| PubMed / MEDLINE | Primary bibliographic database for life sciences and biomedicine. | The primary execution environment for testing and refining search strategies using MeSH and keywords [18] [17]. |

| Medical Subject Headings (MeSH) | NLM's controlled vocabulary thesaurus used for indexing articles. | Provides precision by tagging articles based on core content, beyond simple word matching. Essential for comprehensive searches [18] [17]. |

| "MeSH on Demand" Tool | Automated MeSH term identification from text. | Analyzes submitted text (e.g., an abstract) to suggest relevant MeSH terms, aiding in the expansion of the keyword list [18]. |

| VOSviewer | Software tool for constructing and visualizing bibliometric networks. | The core engine for the WINK technique; creates network maps of keyword interconnections to identify high-weightage terms for inclusion [18]. |

| OVID Platform | Interface for searching bibliographic databases. | A common platform used for building and executing complex, line-by-line search strategies using Boolean logic for databases like MEDLINE and Embase [17]. |

| Covidence | Systematic review production management platform. | Provides a centralized workspace to store and document search strategies, import results, and manage the screening process, ensuring reproducibility [17]. |

Data Presentation and Results

Quantitative Outcomes of the WINK Technique

The application of the WINK technique has been quantitatively demonstrated to enhance search comprehensiveness, as shown in the following comparative data [18]:

Table 2: Comparative Search Results: Conventional vs. WINK Technique

| Research Question | Search Strategy | Number of Eligible Articles Retrieved | Percentage Increase vs. Conventional |

|---|---|---|---|

| Q1: How do environmental pollutants affect endocrine function? | Conventional | 74 | Baseline |

| | WINK Technique | 106 | +69.81% |

| Q2: What is the relationship between oral and systemic health? | Conventional | 197 | Baseline |

| | WINK Technique | ~248 (Calculated) | +26.23% |
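When reusing figures like those in Table 2, it is worth recomputing the percentages from the raw counts. The short sketch below does this for Q1; the function names are assumptions. With the counts as listed, the standard percent-increase formula gives about 43.2%, while 69.81% equals 74 expressed as a share of 106. The percentage reported in [18] may therefore rest on a different calculation or on counts not reproduced here, which is a guess rather than something the source states.

```python
def percent_increase(baseline, new):
    """Relative increase of `new` over `baseline`, in percent."""
    return (new - baseline) / baseline * 100


def share_of(part, whole):
    """`part` expressed as a percentage of `whole`."""
    return part / whole * 100


# Q1 counts as listed in Table 2.
q1_conventional, q1_wink = 74, 106

q1_increase = round(percent_increase(q1_conventional, q1_wink), 2)
q1_share = round(share_of(q1_conventional, q1_wink), 2)
```

Stating which formula was used alongside any reported percentage avoids this kind of ambiguity in your own search documentation.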

Search String Assembly Table

The transition from a conventional to a WINK-optimized search string involves the strategic expansion of MeSH terms, as documented below [18]:

Table 3: Evolution of a Search String Using the WINK Technique (Example Q1)

| Component | Conventional Search String | WINK-Optimized Search String |

|---|---|---|

| Pollutants Concept (MeSH) | "endocrine disruptors"[MeSH] OR "environmental pollutants"[MeSH] OR "air pollutants"[MeSH] | "endocrine disruptors"[MeSH] OR "environmental pollutants"[MeSH] OR "air pollutants"[MeSH] OR "air pollution"[MeSH] OR "particulate matter"[MeSH] OR "environmental exposure"[MeSH] OR "pesticides"[MeSH] OR "water pollutants, chemical"[MeSH] |

| Health Effects Concept (MeSH) | "thyroid diseases"[MeSH] OR "diabetes mellitus"[MeSH] OR "hormones"[MeSH] | "thyroid gland"[MeSH] OR "thyroid hormones"[MeSH] OR "diabetes mellitus"[MeSH] OR "diabetes mellitus, type 2"[MeSH] OR "diabetes, gestational"[MeSH] OR "testosterone"[MeSH] OR "estrogens"[MeSH] |

| Final Combination | Combined above with AND; no study filter specified. | Combined above with AND; explicitly included "systematic review"[Filter]. |

The strategic role of keywords in systematic reviews transcends simple word selection. It is a methodological discipline that demands the integration of computational tools like VOSviewer for network analysis, structured protocols like WINK for keyword weighting, and hybrid search construction. By moving beyond reliance on high-volume, expert-derived terms alone and deliberately employing strategies to uncover precise, low-search-volume keywords, researchers can significantly enhance the sensitivity, comprehensiveness, and ultimately, the validity of their evidence synthesis. The protocols and data presented herein provide a replicable framework for achieving this critical objective.

Your Hands-On Toolkit: Advanced Methods for Uncovering Hidden Academic Keywords

In the era of information overload, optimizing the discoverability of scientific research is paramount. Strategic keyword discovery is not merely an administrative task but a critical component of the research process itself, directly influencing a study's visibility, accessibility, and subsequent academic impact. For researchers, scientists, and drug development professionals, a methodical approach to mining bibliographic databases ensures that their work is effectively integrated into the scientific discourse, facilitates evidence synthesis, and helps avoid the "discoverability crisis" where even indexed articles remain unseen [2]. This protocol provides a detailed methodology for using PubMed, Scopus, and recent publications to build a comprehensive keyword strategy, with a particular focus on identifying less competitive, high-value terms that can maximize the reach of scholarly work within the framework of low search volume academic keyword research.

Key Concepts and Definitions

Table 1: Core Concepts in Scientific Literature Mining

| Concept | Definition | Relevance to Keyword Discovery |

|---|---|---|

| Controlled Vocabulary | A standardized set of terms (e.g., MeSH, Emtree) assigned by indexers to describe article content [19]. | Provides an authoritative, consistent list of keywords; essential for comprehensive database searching. |

| Automatic Term Mapping (ATM) | PubMed's process of automatically mapping search terms to controlled vocabulary and searching specific fields [20]. | Informs which terms are recognized by the system, highlighting preferred terminology. |

| Keyword Difficulty (KD) | A metric, often from SEO, estimating the competition to rank for a term; in academia, this translates to the density of articles using a specific term [21]. | Helps identify "low competition" or niche terms that newer researchers can target for greater discoverability. |

| Search Volume | The number of times a keyword or phrase is searched for within a set timeframe [22]. | Indicates term popularity; low-search-volume terms can be valuable for targeting specific, intent-driven audiences. |

| Text Words / Keywords | Free-text, author-supplied terms used to describe concepts, including synonyms, acronyms, and spelling variations [19]. | Captures literature not yet indexed with controlled vocabulary and accounts for authors' linguistic diversity. |

Experimental Protocol for Systematic Keyword Discovery

Protocol 1: Foundational Keyword Extraction Using PubMed

Objective: To extract a foundational set of keywords and MeSH terms for a given research topic using PubMed's built-in tools and features.

Materials and Reagents:

- Primary Database: PubMed (publicly available) [15].

- Analysis Tool: MeSH Database (accessible via PubMed) [20].

Methodology:

- Preliminary Search: Execute a broad keyword search in PubMed using 2-3 core concepts from your research question (e.g., "heart failure exercise").

- Identify Key Articles: Manually screen the results to identify 3-5 highly relevant, recent articles that closely align with your research.

- MeSH Term Extraction: a. Open the abstract view for each key article. b. Locate the "MeSH terms" section [20]. c. Record all relevant MeSH terms, noting any marked with an asterisk (*), which indicates that the term is a major topic of the article [20].

- Keyword and Synonym Extraction: a. From the same key articles, analyze the title and abstract. b. Record recurring nouns, noun phrases, and author-supplied keywords. c. Use the "Similar articles" feature linked to each citation to discover additional relevant papers and repeat the extraction process [15].

- MeSH Database Exploration: a. Enter the most promising extracted MeSH terms into the MeSH Database. b. Examine the term's hierarchy, scope note, and "Entry Terms" (which are synonyms or variations that map to that MeSH term) [20]. Add these entry terms to your keyword list.

Troubleshooting Tip: If initial searches yield too few results, remove the most specific concept or replace specific terms with broader ones (e.g., "non-small cell lung carcinoma" to "lung neoplasms") [15].
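If key articles are exported from PubMed in its MEDLINE text format, the MeSH extraction step can be scripted. The sketch below assumes that format's `MH  - ` line prefix and the asterisk convention for major topics. The function name and the sample record, including its PMID, are made up for illustration.

```python
def parse_mesh_headings(medline_text):
    """Extract MeSH descriptors from MEDLINE-format text, flagging major topics."""
    headings = []
    for line in medline_text.splitlines():
        if line.startswith("MH  - "):
            value = line[6:].strip()
            # Keep the descriptor before any "/" subheading and strip asterisks.
            descriptor = value.split("/")[0].replace("*", "").strip()
            headings.append({"term": descriptor, "major": "*" in value})
    return headings


# Hypothetical record fragment (not a real citation).
SAMPLE_RECORD = """
PMID- 00000000
TI  - Exercise training in heart failure.
MH  - Heart Failure/*therapy
MH  - *Exercise Therapy
MH  - Humans
"""

mesh_terms = parse_mesh_headings(SAMPLE_RECORD)
major_topics = [h["term"] for h in mesh_terms if h["major"]]
```

Collecting the major-topic descriptors across the 3-5 key articles gives a shortlist to carry into the MeSH Database for hierarchy and Entry Term review.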

Protocol 2: Advanced Discovery and Validation with Scopus

Objective: To leverage Scopus's citation and indexing features to discover trending keywords and validate term importance.

Materials and Reagents:

- Primary Database: Scopus (subscription typically required) [19].

Methodology:

- Citation Analysis: a. Locate a seminal article in your field within Scopus. b. Analyze the "Cited by" list, sorting the citing articles by "Publication date (newest)." c. Review the titles and keywords of the most recent citing articles to identify emerging terminology and new conceptual links.

- Author Keyword Analysis: a. Perform a search for your core topic in Scopus. b. Use the "Analyze results" feature to examine the most frequent author keywords. c. Identify keywords that are prevalent in recent years (e.g., post-2022) but less common in older publications, signaling a trending topic.

- Compare with PubMed: Validate the keyword list derived from PubMed. Terms that appear frequently across both databases are likely high-importance, core keywords.
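The author keyword comparison in the methodology above can also be run offline on an exported result list. The following sketch assumes each exported record has been reduced to a (year, author keywords) pair. The function names, the `min_recent` threshold, and the example records are hypothetical; the 2022 cutoff mirrors the example in the protocol.

```python
from collections import Counter


def keyword_counts_by_period(records, cutoff_year):
    """Count keyword occurrences in records up to and after `cutoff_year`."""
    older, recent = Counter(), Counter()
    for year, keywords in records:
        target = recent if year > cutoff_year else older
        # Count each keyword once per record, case-insensitively.
        target.update({k.lower() for k in keywords})
    return older, recent


def trending_keywords(records, cutoff_year=2022, min_recent=2):
    """Keywords seen at least `min_recent` times recently and more often than before."""
    older, recent = keyword_counts_by_period(records, cutoff_year)
    return sorted(k for k, n in recent.items() if n >= min_recent and n > older[k])


# Made-up export: (publication year, author keywords).
records = [
    (2019, ["heart failure", "exercise"]),
    (2020, ["heart failure", "rehabilitation"]),
    (2023, ["heart failure", "telerehabilitation"]),
    (2024, ["telerehabilitation", "exercise", "heart failure"]),
    (2024, ["telerehabilitation"]),
]
trending = trending_keywords(records)
```

Raw counts favor periods with more publications, so for a real dataset it may be preferable to compare each keyword's share of records per period rather than absolute frequencies.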

Protocol 3: Identifying Low-Competition and Emerging Terms

Objective: To apply techniques for finding lower-competition, long-tail keywords that can enhance discoverability for niche topics.

Materials and Reagents:

- Tools: PubMed, Google Scholar, Google Trends [2], academic social media (e.g., relevant X/Twitter lists, ResearchGate).

Methodology:

- Deconstruct Core Concepts: Break down a broad concept (e.g., "cancer immunotherapy") into more specific components (e.g., "CAR-T cell toxicity management," "bispecific antibody solid tumors").

- Utilize "People also ask" and "Similar Articles": In Google Search, note the "People also ask" questions; in Google Scholar and PubMed, note the related and similar article suggestions. Both often contain long-tail keyword phrases [21].

- Monitor Academic Social Media: Follow leading researchers and institutions on professional social networks. Note the specific language and hashtags used to describe new findings, which often precede formal MeSH terms.

- Analyze Search Volume Trends: For consumer-facing or translational research topics, use tools like Google Trends to check the relative popularity and seasonality of potential keywords, ensuring consistent interest over time [21] [2].

Data Presentation and Analysis

Table 2: Comparison of Database Features for Keyword Discovery

| Feature | PubMed | Scopus |

|---|---|---|

| Primary Focus | Biomedicine, life sciences, health [20] | Multidisciplinary, including science, medicine, social sciences [19] |

| Controlled Vocabulary | Medical Subject Headings (MeSH) [20] | Emtree [19] |

| Unique Keyword Tools | MeSH Database, Automatic Term Mapping, "Similar articles" [15] [20] | Cited reference analysis, Author keyword frequency analysis [19] |

| Full-Text Search | No (searches title, abstract, MeSH, etc.) [20] | Yes, for subscribed content [19] |

| Ideal for Finding | Authoritative MeSH terms, biomedical synonyms, related articles via ML [15] | Trending terms via citation analysis, interdisciplinary terminology [19] |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Digital Tools for Keyword Discovery and Literature Mining

| Tool Name | Function/Brief Explanation | Access |

|---|---|---|

| MeSH Database | The controlled vocabulary thesaurus for PubMed; used to find standardized terms and their synonyms ("Entry Terms") [20]. | Free via PubMed |

| Emtree Thesaurus | The extensive controlled vocabulary for the Embase database, which is also integral to Scopus indexing; useful for discovering drug and medical device terminology [19]. | Subscription (via Embase/Scopus) |

| PubMed Advanced Search Builder | Allows for the precise construction of search queries using fields (e.g., [tiab], [mesh]), Boolean operators, and history combination [15] [23]. | Free via PubMed |

| Scopus Analyze Results | A feature that provides metrics and visualizations on search results, including the frequency of author keywords over time, aiding in trend spotting [19]. | Subscription |

| Google Trends | A tool that shows the popularity of search queries in Google Search over time; helps gauge public or general professional interest in a topic [21] [2]. | Free |

Workflow Visualization

Diagram 1: Scientific Keyword Discovery Workflow.

Diagram 2: PubMed's Automatic Term Mapping Process.

Within the framework of a broader thesis on discovering low-search-volume academic keywords, this document establishes that social and professional networking platforms are indispensable, real-time sources for identifying emerging scholarly trends. Traditional keyword research tools often overlook nascent academic discussions due to low initial search volume on conventional search engines. This methodology leverages the immediacy of platforms like X (Twitter), LinkedIn, and ResearchGate to detect these early signals, enabling researchers to contribute to cutting-edge conversations at their inception. The following protocols provide a systematic approach to data gathering and analysis, transforming informal online discourse into quantifiable research intelligence.

The Quantitative Landscape of Social Traffic

To contextualize this methodology, it is crucial to understand the relative significance of different social platforms as traffic and information sources. The data below summarizes the distribution of global website traffic originating from social media as of 2025.

Table 1: Global Social Media Traffic Share (2025 Data) [24]

| Platform | Share of Global Social Traffic | Overall Global Traffic Share | Key Trend |

|---|---|---|---|

| | 76.56% | 7.75% | Dominant but declining from peak |

| | 6.72% | 0.68% | Steady, visual-centric |

| TikTok | 5.50% | 0.56% | Fastest-growing; 5x traffic increase Jan-Aug '25 |

| | 2.97% | 0.30% | Steady; high-value B2B/professional audience |

| X (Twitter) | 1.80% | 0.18% | Role shrinking due to policy shifts |

| YouTube | 1.86% | 0.19% | Low direct traffic, high organic/AI visibility |

Furthermore, social media platforms are deeply embedded in modern search ecosystems. Research shows that 50.3% of Google searches include at least one social media platform among the top-10 organic results, with Reddit (37%) and YouTube (19.8%) being most prevalent [24]. This integration underscores the value of social content for visibility beyond the platforms themselves.

Experimental Protocols for Trend Tracking

This section provides detailed, executable protocols for data extraction and analysis from X, LinkedIn, and ResearchGate.

Protocol #1: X (Twitter) Trend Extraction

Objective: To identify emerging academic keywords and topics by analyzing discourse among researchers and institutions on X.

Step-by-Step Procedure:

- Define Research Scope & Seed Sources: Identify 3-5 core scientific topics (e.g., "PROTAC degradation," "spatial transcriptomics"). Compile a list of relevant seed accounts: key opinion leaders (KOLs), major research institutions (e.g., @BroadInstitute, @Nature), and academic journals in the field.

- Configure Data Extraction Tool: Select a social media scraping tool (see Table 3). For API-based tools, input authentication keys. Configure extraction parameters:

  - Targets: Seed account timelines, specific hashtags (#CRISPR, #AIinDrugDiscovery).

  - Data Fields: Post text, timestamp, hashtags, mentions, like/share counts.

  - Date Range: Previous 3-6 months to identify recent trends.

- Execute Data Extraction: Run the scraping tool. For large data volumes, throttle requests (e.g., with random delays between them) to avoid being blocked [25], and wrap each request in a Python function with retry logic so that a transient failure does not abort the whole run.

- Data Pre-processing: Clean the extracted text data using computational tools (e.g., Python Pandas, R).

  - Remove URLs, user mentions, and punctuation.

  - Perform tokenization and remove stop-words.

  - Apply lemmatization to consolidate word variations (e.g., "degrading" -> "degrade").

- Data Analysis: Use text analysis techniques to identify trends.

  - Frequency Analysis: Identify the most frequent nouns and noun phrases.

  - Co-occurrence Network Analysis: Map which terms frequently appear together in the same posts, revealing conceptual clusters.

  - Sentiment Analysis: Gauge community reception (positive, negative, neutral) towards emerging topics.

- Synthesize Output: Generate a list of potential low-volume keywords and nascent research questions based on the analysis. For example, frequent co-occurrence of "ferroptosis" and "cancer therapy" may indicate a growing sub-field.
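The pre-processing and analysis steps above can be sketched in a few lines of Python. This is a minimal illustration using only the standard library, not a full pipeline: the stop-word list, the function names, and the sample posts are all assumptions made for the example, and lemmatization and sentiment analysis are left out because they normally require an NLP library.

```python
import re
from collections import Counter
from itertools import combinations

# Tiny illustrative stop-word list (an assumption; use a fuller list in practice).
STOP_WORDS = {"a", "an", "and", "the", "of", "in", "for", "to", "is", "on", "with", "our", "new"}


def preprocess(post):
    """Lowercase a post, strip URLs and @mentions, tokenize, and drop stop-words."""
    text = post.lower()
    text = re.sub(r"https?://\S+", " ", text)  # remove URLs
    text = re.sub(r"@\w+", " ", text)          # remove user mentions
    # Keep alphanumeric tokens, including hyphenated terms such as "pd-1";
    # punctuation and the "#" of hashtags are dropped implicitly.
    tokens = re.findall(r"[a-z0-9][a-z0-9\-]*", text)
    return [t for t in tokens if t not in STOP_WORDS]


def term_frequencies(posts):
    """Count how often each term occurs across all posts."""
    counts = Counter()
    for post in posts:
        counts.update(preprocess(post))
    return counts


def cooccurrence_counts(posts):
    """Count, for each pair of terms, the number of posts containing both."""
    pairs = Counter()
    for post in posts:
        unique_terms = sorted(set(preprocess(post)))
        pairs.update(combinations(unique_terms, 2))
    return pairs


if __name__ == "__main__":
    # Hypothetical sample posts, for illustration only.
    sample_posts = [
        "New preprint on ferroptosis in cancer therapy https://example.org/abc @SomeLab",
        "Ferroptosis inducers for cancer therapy: a thread #ferroptosis",
        "Spatial transcriptomics of the tumor microenvironment",
    ]
    print(term_frequencies(sample_posts).most_common(3))
    print(cooccurrence_counts(sample_posts).most_common(3))
```

Pairs are counted once per post, so a high count means that two terms are discussed together in many separate posts rather than repeated within a single one.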

Protocol #2: LinkedIn & ResearchGate Academic Pulse Monitoring

Objective: To track formal and semi-formal academic discussions, publication patterns, and collaborative interests on professional networks.

Step-by-Step Procedure:

- Source Identification:

  - On LinkedIn: Follow profiles of prominent scientists, R&D departments of pharmaceutical companies (e.g., Pfizer, Roche), and professional groups (e.g., "ACS Medicinal Chemistry").

  - On ResearchGate: Follow leading authors in your field and monitor the "Questions" section in relevant topics.

- Data Extraction: Use specialized scrapers or manual tracking.

  - For LinkedIn: Scrape update text from company pages and researcher posts. Extract skills and job titles from job postings in R&D to identify demanded expertise.

  - For ResearchGate: Scrape data from publication pages (title, abstract, citations) and the "Questions" section to find unresolved research problems. Handle authentication if required, as some data may need a logged-in session [25].

- Content Analysis:

  - Thematic Analysis: Code the content of posts and publications to identify recurring themes and novel concepts.

  - Gap Analysis: On ResearchGate, specifically analyze "Questions" to find areas where researchers are explicitly seeking information, indicating knowledge gaps.

- Trend Synthesis:

  - Correlate findings from both platforms. A new methodology discussed on LinkedIn may correspond to unanswered technical questions on ResearchGate.

  - Output a list of specific, long-tail keyword candidates and potential research gaps ripe for investigation.
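The correlation step in Trend Synthesis can be approximated with a simple matching pass. The sketch below assumes that themes have already been coded from LinkedIn content and that ResearchGate questions are available as plain strings; the function name `correlate_themes` and the sample data are hypothetical, and case-insensitive substring matching stands in for whatever matching rule a real analysis would adopt.

```python
def correlate_themes(themes, questions):
    """Map each theme to the questions that mention it.

    Matching is a case-insensitive substring test (a simplifying assumption).
    Themes with no matching question are omitted from the result.
    """
    matches = {}
    for theme in themes:
        hits = [q for q in questions if theme.lower() in q.lower()]
        if hits:
            matches[theme] = hits
    return matches


if __name__ == "__main__":
    # Hypothetical inputs, for illustration only.
    linkedin_themes = ["targeted protein degradation", "spatial transcriptomics"]
    researchgate_questions = [
        "How do I normalize spatial transcriptomics data across slides?",
        "Which linker length works best for PROTAC design?",
    ]
    for theme, hits in correlate_themes(linkedin_themes, researchgate_questions).items():
        print(theme, "->", hits)
```

A theme that surfaces on both platforms, with open questions attached, is a reasonable long-tail keyword candidate to carry forward.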

Key Metrics for Measuring Trend Vitality

When analyzing data from these protocols, the following metrics should be calculated to evaluate the potential and vitality of a detected trend.

Table 2: Social Media Metrics for Academic Trend Analysis [26]

| Metric Category | Specific Metric | Relevance to Academic Trend Identification |
|---|---|---|
| Audience Growth | Follower Growth Rate | Indicates increasing interest in a specific researcher, institution, or topic channel. |
| Engagement | Engagement Rate | Measures how actively the community discusses a topic (vs. passive viewing). |
| Awareness | Reach / Impressions | Shows the potential scale of a topic's visibility within a professional network. |
| Content Performance | Video Views / Share Ratio | Highlights highly shareable concepts or compelling explanations, often key for new methodologies. |
| Customer Satisfaction | Comments / Reply Time | Comments reveal public perception and questions; reply time shows community engagement. |
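Two of the metrics in Table 2 reduce to simple ratios. The table does not define them, so the formulas below are commonly used conventions adopted here as assumptions: growth rate as the percentage change in followers over a period, and engagement rate as interactions divided by impressions. Function and parameter names are illustrative.

```python
def follower_growth_rate(followers_start, followers_end):
    """Percentage change in followers over a period (assumed definition)."""
    if followers_start <= 0:
        raise ValueError("followers_start must be positive")
    return 100 * (followers_end - followers_start) / followers_start


def engagement_rate(likes, comments, shares, impressions):
    """Interactions as a percentage of impressions (assumed definition)."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    return 100 * (likes + comments + shares) / impressions


if __name__ == "__main__":
    # Hypothetical figures for a topic-focused account over one month.
    print(follower_growth_rate(2000, 2300))
    print(engagement_rate(likes=40, comments=10, shares=5, impressions=1100))
```

Whichever denominator is chosen (impressions, reach, or followers), it should stay the same across topics so that the resulting rates are comparable.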

The Scientist's Toolkit: Research Reagent Solutions

The following tools and software are essential for implementing the protocols described in this document.

Table 3: Essential Tools for Social Media Trend Tracking

| Tool / Reagent | Function | Specification / Note |
|---|---|---|
| Bright Data | Social Media Scraping | Robust, scalable API suite with geo-targeting; handles anti-bot measures [27] [28]. |
| PhantomBuster | Social Automation & Data Extraction | Combines data scraping with automation for lead generation and outreach [27]. |
| Octoparse | No-Code Visual Scraping | Point-and-click interface for beginners; cloud-based scheduling [27]. |
| Python Libraries (Requests, BeautifulSoup) | Custom Scripting | requests for fetching data, BeautifulSoup for parsing HTML; allows for full customization [25]. |
| Proxy Services (Residential IPs) | Anti-Blocking Infrastructure | Rotating IP addresses mimic organic traffic, preventing IP bans during large-scale data collection [25]. |
| Semrush | Keyword Validation | Cross-references discovered terms with traditional search volume and difficulty metrics [4] [29]. |
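For the custom-scripting route, the retry logic and random delays mentioned in Protocol #1 might look like the sketch below. It is one possible arrangement, not the method of any tool in Table 3: the name `fetch_with_retry`, its parameters, and the exponential back-off schedule are assumptions. The function takes any zero-argument callable, so it stays independent of the HTTP library in use.

```python
import random
import time


def fetch_with_retry(fetch, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call fetch() until it succeeds or max_attempts is exhausted.

    Between failed attempts, wait base_delay * 2**attempt seconds plus a
    random jitter of up to base_delay seconds. The last error is re-raised
    if every attempt fails. `sleep` is injectable so the delay can be
    replaced in tests.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    last_error = None
    for attempt in range(max_attempts):
        try:
            return fetch()
        except Exception as error:  # broad on purpose: any failure triggers a retry
            last_error = error
            if attempt < max_attempts - 1:
                delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
                sleep(delay)
    raise last_error
```

With the Requests library, `fetch` could be a small function that calls `requests.get(url, timeout=10)` and then `raise_for_status()` on the response, so that HTTP error codes also trigger a retry.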

The integration of social and professional platform monitoring into the academic keyword research workflow provides a powerful mechanism for anticipating the evolution of scientific fields. The protocols for X, LinkedIn, and ResearchGate outlined above offer a systematic, data-driven approach to moving beyond reactive keyword targeting and into the proactive identification of research opportunities. By leveraging these digital landscapes, researchers and drug development professionals can position their work at the forefront of scientific discourse.

Application Note: Foundational Principles of Academic Keyword Research

The Strategic Imperative of Keyword Optimization in Academia

In the contemporary digital research landscape, Academic Search Engine Optimization (ASEO) is a critical discipline for enhancing the visibility, readership, and impact of scholarly publications [30]. The core premise is that a research article's ranking in academic search engines like Google Scholar, IEEE Xplore, and PubMed significantly influences its likelihood of being read and cited [30]. Items appearing high in search results are more likely to be accessed, and open-access articles consistently receive more citations than those behind paywalls [30]. This application note establishes the foundational principles for analyzing the keyword strategies of leading papers to inform more effective dissemination of scientific work.

The Evolution from Keywords to Semantic Understanding

The practice of keyword research has evolved significantly. While it once focused on exact phrase matching, modern search algorithms, shaped by Google updates such as RankBrain, BERT, and MUM, now process natural language and interpret user intent [31]. This shift has moved the focus from individual keywords to broader topical clusters and semantic relationships [31]. For researchers, this means that an effective keyword strategy must cover the entire vocabulary of a research topic (synonyms, related concepts, and question-based queries) to signal comprehensive coverage and authority to search engines [31].

Defining "Competitors" and "Collaborators" in Academic Search

In the context of academic publishing, a "Competitor" is any document (article, preprint, review) that ranks for target keywords and appears in the search results for those terms. Notably, these are not always direct research rivals but can include review aggregators, educational websites, or publications from unexpected fields [32]. A "Collaborator" refers to a related keyword or semantic term that, when combined with a primary keyword, helps form a comprehensive topical network. These collaborator keywords help search engines grasp the full context of a paper's content, thereby improving its ranking potential for a wider array of relevant queries [31].

Protocol 1: Identifying Academic Competitor Papers and Their Keywords

Objective

To systematically identify the set of academic papers that constitute true competitors for target keywords in academic search engines and to reverse-engineer the keyword strategies they employ.

Step-by-Step Procedure

- Define Core Research Topic: Articulate the central research theme using 2-3 key phrases. Example: "low-grade glioma immunotherapy."

- Execute Preliminary Search: Conduct searches in Google Scholar and discipline-specific databases (e.g., PubMed) using the defined core phrases [30].

- Identify Recurring Publications: Manually review the top 20 results for each query. Note publications that appear consistently across multiple searches; these are your primary competitor papers [32].

- Analyze Competitor Paper Metadata and Content:

  - Title Analysis: Identify keywords and phrases within the first 65 characters of the title, a critical factor for Google Scholar [30].

  - Abstract and Full-Text Analysis: Extract recurring terminology, synonyms, and specific long-tail phrases (e.g., "PD-1 inhibitor resistance in glioma") [33].

  - Keyword Tags: Record any author-provided keywords or indexing terms.

- Extract and Categorize Keywords: Compile the identified keywords into categories such as Short-Tail ("glioma treatment"), Long-Tail ("management of recurrent low-grade glioma"), and Question-Based ("how to overcome immunotherapy resistance in brain tumors") [33].

- Document SERP Features and Content Gaps: Note the presence of "People Also Ask" boxes or related searches. Identify questions or topics that the competitor papers do not fully address—these represent content gaps and potential opportunities [32] [33].

- Synthesize Competitive Keyword Profile: Create a master list of competitor keywords, organized by frequency and relevance, to inform your own strategy.
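The title-analysis and categorization steps above lend themselves to a small helper script. The sketch below is illustrative only: the 65-character window comes from the procedure, but the four-word threshold for "long-tail", the list of question words, and the function names are assumptions chosen for the example.

```python
QUESTION_WORDS = ("how", "why", "what", "which", "when", "where", "who", "can", "does", "is")


def keywords_in_title_head(title, keywords, head_length=65):
    """Return the keywords that appear within the first head_length characters of a title."""
    head = title[:head_length].lower()
    return [k for k in keywords if k.lower() in head]


def categorize_keyword(keyword, long_tail_min_words=4):
    """Classify a keyword as question-based, long-tail, or short-tail.

    The word-count threshold is an assumed convention, not a fixed rule.
    """
    words = keyword.lower().split()
    if not words:
        raise ValueError("keyword must not be empty")
    if words[0] in QUESTION_WORDS or keyword.strip().endswith("?"):
        return "question-based"
    if len(words) >= long_tail_min_words:
        return "long-tail"
    return "short-tail"


if __name__ == "__main__":
    # Hypothetical competitor title, for illustration only.
    title = ("Immunotherapy for low-grade glioma: mechanisms of PD-1 inhibitor "
             "resistance and emerging strategies")
    print(keywords_in_title_head(title, ["low-grade glioma", "emerging strategies"]))
    for kw in ["glioma treatment",
               "management of recurrent low-grade glioma",
               "how to overcome immunotherapy resistance in brain tumors"]:
        print(kw, "->", categorize_keyword(kw))
```

Running the categorizer over the master list gives a quick view of how a competitor's vocabulary splits across the three categories before any manual review.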

Research Reagent Solutions

| Tool / Resource | Function in Analysis | Source / Platform |
|---|---|---|
| Google Scholar | Primary platform for identifying competitor papers and analyzing SERP features. | scholar.google.com |
| PubMed / IEEE Xplore | Discipline-specific databases for comprehensive competitor discovery. | pubmed.ncbi.nlm.nih.gov / ieeexplore.ieee.org |
| Semantic Keyword Clustering | Groups related keywords into thematic clusters to understand topical coverage. | [31] |
| "People Also Ask" Miner | Reveals question-based keywords and related user queries directly from SERPs. | [33] |

Protocol 2: Uncovering Low Search Volume & Long-Tail Keyword Opportunities

Objective

To discover low-competition, low-search-volume, and long-tail keywords that offer viable pathways for ranking in academic search engines, thereby attracting targeted, high-intent readership.

Step-by-Step Procedure

- Input Seed Keywords: Use the high-level keywords identified in Protocol 1 (e.g., "glioma immunotherapy") as a starting point [33].

- Utilize Long-Tail and Question-Based Keyword Tools:

  - AnswerThePublic: This tool visualizes question-based queries (e.g., "why does immunotherapy fail in glioma?") and prepositions, which are ideal for targeting specific research nuances [33].

  - Google Keyword Planner: While designed for advertising, it provides essential data on search volume ranges and competition levels for keywords, helping to identify lower-competition terms [33].